Abstract

Although semantic processing has traditionally been associated with brain responses maximal at 350–400 ms, recent studies reported that words of different semantic types elicit topographically distinct brain responses substantially earlier, at 100–200 ms. These earlier responses have, however, been obtained using insufficiently precise source localisation techniques, therefore casting doubt on reported differences in brain generators. Here, we used high-density MEG-EEG recordings in combination with individual MRI images and state-of-the-art source reconstruction techniques to compare localised early activations elicited by words from different semantic categories in different cortical areas. Reliable neurophysiological word-category dissociations emerged bilaterally at ~150 ms, at which point action-related words most strongly activated frontocentral motor areas and visual object-words occipitotemporal cortex. These data now show that different cortical areas are activated rapidly by words with different meanings and that aspects of their category-specific semantics are reflected by dissociating neurophysiological sources in motor and visual brain systems.

Introduction

The latency and automaticity of semantic processes in language perception have been the topic of scrutiny and debate in cognitive neuroscience. An electrophysiological response most frequently considered an index of semantic processing is the N400, a negative potential peaking at around 400 ms after stimulus presentation, which is reliably evoked by semantic incongruities between word-pairs or critical words embedded in sentences1. Recent evidence suggests, however, that semantic information provided by words and their contexts is retrieved far earlier, in the “pre-N400” time domain, that is, within the first 200 ms after the critical stimulus word can first be recognized. This evidence came from eye-tracking and psychophysiological studies that found early effects for words embedded in congruent and incongruent sentence contexts2,3. In addition, early brain responses (< 200 ms) differed between words with different meanings. Interestingly, differential brain activation reflecting semantic differences sometimes seemed to emerge in modality-specific areas, so that, for example, action-related words sparked particularly strong activity in motor systems and object words activated inferior temporal or occipital areas most profoundly. As word groups with different meanings, well matched for psycholinguistic properties such as length, were found to elicit differential electrical and neuromagnetic activity already within 200 ms, it has been suggested that semantic access is an early process, starting within the first 200 ms4,5,6,7,8,9,10,11. A number of these studies found differences in the scalp topography of event-related potentials and used those as a basis for suggesting dissociations in neuronal generators. 
However, it has been argued that a mere difference in scalp distribution may sometimes be explained by rescaling all or a selection of the underlying generators12,13,14, so that firm conclusions on any double dissociation between the activation of different sets of cortical generators – for example in the processing of action- and object-related words – require source reconstruction in the cortex rather than topographic voltage mapping. Recent work indeed suggested such word-type specific differential cortical generators based on distributed source localisation methods applied to word-elicited EEG and MEG surface topographies. In an event-related potential (ERP) study, Shtyrov et al. (2004)15 employed an auditory paradigm whereby brain responses to English action verbs semantically related to arm- and leg-actions (pick vs. kick) were recorded. Topographical differences between brain responses to arm- and leg-related words were evident already at 140–180 ms, with relatively stronger focal dorsal activity for leg- and relatively stronger lateral activity for hand-related items. In a similar MEG study, Finnish words typically used to speak about mouth and leg actions, respectively, evoked differential responses in inferior and superior frontocentral cortex at around 140–170 ms16. In both cases, meticulous psycholinguistic matching of stimuli allows topographical differences to be confidently attributed to the differing semantic relationships of words to the effectors of the body. Interestingly, the topographical differences in brain activation observed between these word types reflected, to a degree, aspects of their meaning, as leg-words activated areas consistent with dorsal leg-representation in the motor system and arm/face words activated inferior/lateral areas close to motor regions controlling the arm and face. 
In the visual modality, similar findings were obtained in EEG17, with topographical differences between words of different semantic sub-categories arising around 200 ms. This semantic mapping of action-related words onto sensorimotor brain systems consistent with body part representations has been cross-validated with a range of methods, including fMRI and TMS18,19,20,21,22,23. Importantly, the rapid onset of this activation and the covert, passive nature of stimulus presentation in some of the studies15,16 indicate that the observed semantic motor mapping can be elicited without the subjects' active attention towards stimulus words and that it may occur in a time-window preceding conscious mental imagery processes.

At this stage, we may conclude that there is strong support for category-specific semantic activation at early latencies, within 200 ms of perceiving a word, and even that it is the sensory and motor areas that become involved, which may reflect the processing of specific perceptual or action-related aspects of the meaning of the critical stimulus words11,24,25,26. However, there are significant caveats to the earlier work which relate to the source localisation methods applied. For example, some studies performed source analysis only at the group level but provided signal-space evidence for category-specific differential activation, thus making themselves subject to the criticisms previously mentioned against signal-topography approaches12,13,14. In signal space, amplitude differences between sources may give rise to apparent topographical differences due to the multiplicative nature of variances in source currents at recording sites. An increase in source strength produces a proportionately similar increase of the voltage at every site, whereas ANOVA, which is additive in nature, assumes that a constant voltage should be added to each location. Significant interactions can also arise from noisy data or activity in the baseline period, which is assumed to be the ‘true zero’ of the EEG recordings. Other studies did perform statistics in source space, but used relatively crude source localisation methods – based, for example, on average brains or even sphere models of the cortex – so that the danger of mislocalisation and incorrect conclusions about any possible topographical differences cannot be ignored. While the very nature of the EEG/MEG inverse problem prevents any firm conclusions from two-dimensional surface recordings on the three-dimensional sources of neurophysiological activity in the human brain, it can, however, be claimed that, for any convincing inference, the best possible source localisation technique available should be employed. 
We therefore here utilise concurrent recordings from 306 MEG sensors and 70 EEG electrodes and localised sources, taking into account pre-stimulus noise levels and co-variances in the data and individual brain anatomy, to obtain semantic-category specific neuroanatomically-constrained source statistics calculated over a group of healthy experimental participants. We recorded brain responses elicited by matched written words of different semantic categories (object-related, action-related and abstract) in a passive reading task and investigated semantic effects in the most prominent early peak of word-evoked brain activation. We predicted specific source-activation advantages for action-related words in frontal motor and premotor areas as opposed to posterior visual-cortex specificity for object words; no specific prediction was made for the abstract word stimuli that served as fillers. Our results show area-specific cortical activations reflecting word-category specific semantic processing within 150 ms of stimulus onset.
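The scaling argument made above (a change in source strength multiplies the voltage at every site, whereas an additive model expects a constant offset) can be illustrated with a toy calculation. The sketch below is only an illustration under stated assumptions: the three-site topography and the doubling of source strength are invented numbers, the function names are hypothetical, and normalising each topography by its vector length is shown as one commonly used remedy rather than as a step of the present analysis.

```python
import math


def offset_free_differences(cond_a, cond_b):
    """Site-wise differences between two conditions after removing the
    constant (mean) offset. A purely additive model expects all zeros."""
    diffs = [b - a for a, b in zip(cond_a, cond_b)]
    mean_diff = sum(diffs) / len(diffs)
    return [d - mean_diff for d in diffs]


def vector_normalise(topography):
    """Divide a topography by its vector length (root sum of squares)."""
    norm = math.sqrt(sum(v * v for v in topography))
    return [v / norm for v in topography]


# Hypothetical scalp topography of ONE generator at three recording sites.
topography = [1.0, 2.0, 4.0]
weak = [1.0 * v for v in topography]    # condition with a weaker source
strong = [2.0 * v for v in topography]  # same source, doubled strength

# Raw data: the residual differences are non-zero, which an additive ANOVA
# would read as a condition-by-site interaction despite identical generators.
raw = offset_free_differences(weak, strong)

# After vector normalisation the two profiles coincide (up to rounding).
scaled = offset_free_differences(vector_normalise(weak),
                                 vector_normalise(strong))

print(raw)
print(scaled)
```

The point of the sketch is only that a pure amplitude change can mimic a topographical difference in signal space, which is why the comparison is better made on reconstructed sources.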

Results

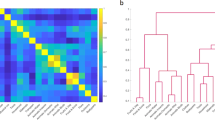

For an unbiased estimate of the overall time course of word-elicited cortical activity, we calculated the global signal-to-noise ratio (SNR) across all stimuli for all 376 EEG/MEG channels for all subjects (at each time point, the signal was divided by the standard deviation of the baseline interval). This, in line with earlier investigations reviewed above, revealed the most prominent peak at ~ 150 ms (see Figure 1). Consequently, with a focus on early semantic effects which commonly manifest themselves in short-lived transient activations27, our analysis focused on an epoch capturing this peak (140–160 ms). At this time point, all words appear to evoke widespread activation in perisylvian regions, including superior temporal cortex and the inferior frontal gyrus, along with prominent activity in the occipital lobe and extrasylvian parts of temporal, parietal and frontal cortices.

Figure 1. Top diagram: Global signal-to-noise-ratio (SNR) curve calculated from 376 MEG and EEG sensors for all words together (time point 0 = stimulus onset). As can be seen, brain activation exhibited its absolute maximum between 140 and 160 ms, which was chosen as the time window of interest (TWOI). The passive task employed did not produce any prominent N400 response. Bottom diagram: MNE source reconstruction for the TWOI calculated for the brain response to all words. Note that activity predominates in occipitotemporal areas, as words were presented visually, and is present in widespread cortical areas at this early latency.

For the purposes of quantifying activation of neural generators underlying recorded activity, ROIs were anatomically defined based on the Desikan-Killiany Atlas subdivisions of the brain28 (please see Supplementary Fig. S1 for more information) and average amplitudes of source activation were calculated using the anatomically-constrained distributed L2 Minimum-Norm Estimation approach29.

As previous investigations suggested the importance of motor and executive systems in precentral and adjacent inferior prefrontal cortex for action word processing and that of inferior higher visual areas in temporal and occipital cortex for object-related words, a primary statistical analysis focused on source activations in these a priori defined frontocentral (FC) and temporo-occipital (TO) regions (design: Region (FC, TO) × Hemisphere (2) × Word Category (action, object, abstract)).

A general Word Category effect revealed different response patterns for semantic categories (F [2, 32] = 8.458, p < .001), and, most importantly, an interaction between the factors Region and Word Category (F [2, 32] = 3.882, ε = 1.000, p < .035) revealed differential activation of motor and visual systems by action, object and abstract words. Planned comparison tests showed significantly stronger responses to action words compared with abstract words in bilateral FC cortex (t [16] = 2.836, p < .02) and strongest responses to object words in bilateral TO cortex relative to both action (t [16] = 2.189, p < .05) and abstract words (t [16] = 3.148, p < .01). The Word Category effects in FC and TO regions are depicted in Figure 2. When entering data about just action and object words into a separate three-way ANOVA (2 Regions × 2 Hemispheres × 2 Word Categories), the interaction remained significant (F [1, 16] = 6.970, ε = 1.000, p < .02).
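For readers less familiar with the notation, the 16 degrees of freedom in the planned comparisons correspond to 17 participants minus one, as expected for a within-subject (paired) contrast. The following is a minimal sketch of such a paired t statistic; it assumes the comparisons were ordinary paired t-tests on per-participant ROI amplitudes, which the text does not spell out, and both the function name `paired_t` and the four-value example are invented for illustration and are not data from the study.

```python
import math
import statistics


def paired_t(cond_a, cond_b):
    """Paired t statistic and degrees of freedom for two equally long
    lists of per-participant values (same participants, same order)."""
    if len(cond_a) != len(cond_b):
        raise ValueError("both conditions need one value per participant")
    diffs = [a - b for a, b in zip(cond_a, cond_b)]
    n = len(diffs)
    mean_diff = statistics.mean(diffs)
    # Standard error of the mean difference (sample SD, n - 1 denominator).
    se = statistics.stdev(diffs) / math.sqrt(n)
    return mean_diff / se, n - 1


# Hypothetical amplitudes for four participants (NOT values from the study).
toy_action = [3.0, 5.0, 7.0, 9.0]
toy_abstract = [1.0, 2.0, 3.0, 4.0]
t_value, df = paired_t(toy_action, toy_abstract)
print(f"t[{df}] = {t_value:.3f}")
```

With 17 participants the same function would return 16 degrees of freedom, matching the values reported above.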

Figure 2. Activation for each semantic category in the frontocentral (FC) and temporo-occipital (TO) regions bilaterally.

The FC region was taken from a combination of inferior frontal (BA 44) and precentral ROIs and the TO region was a combination of fusiform and secondary visual cortex ROIs. The y-axis shows mean source activation (nanoampere-metres [nAm]) in the FC and TO regions for each semantic category.

Although the superiority of action words compared with object words failed to reach significance in bilateral FC areas (p > .15), data from the right hemisphere alone changed this picture. In the right-hemispheric precentral gyrus, action words evoked significantly greater activity than both object words (t [16] = 2.784, p < .015) and abstract words (t [16] = 2.295, p < .04). However, the same trend in the left hemisphere did not pass the significance threshold. Scrutinising the TO regions in more detail showed that bilateral fusiform cortex replicated the superiority of object words over both action (t [16] = 3.442, p < .005) and abstract items (t [16] = 2.910, p < .01), whereas occipital areas (BA18/19) revealed only a significantly stronger response to visual object words than to abstract words (t [16] = 3.032, p < .01). Bar-graphs reflecting activation in each of these individual regions can be seen in Supplementary Materials (Fig. S2).

In addition to this theory-driven approach, an exploratory analysis confirmed significant effects of Word Category across the brain, with a particular strength for action words in frontal (FC) cortex and for object words in temporo-occipital (TO) cortex. No region of the brain was most strongly activated by abstract words, which evoked levels of activation indistinguishable from action and object words in multimodal cortices including dorsolateral prefrontal cortex, temporal pole and angular gyrus. Please see Supplementary Materials for details of this exploratory investigation.

Discussion

As category-specific findings from previous studies could not be confidently localised due to methodological weaknesses, the current work attempted to clarify and build on this literature by investigating early semantic effects with state-of-the-art methodological procedures. A neurophysiological investigation using simultaneous MEG and EEG recordings showed that brain responses to written words were maximal at 150 ms after their onset. At this early time point, an a priori designed theory-based source analysis showed semantic category effects in multiple regions across the cortex (effects further corroborated by a secondary exploratory analysis reported in Supplementary Materials). Brain responses to action words tended to dominate in inferior frontal regions, with clearest category specificity for action words appearing in the IFG and (right) precentral cortex and for visually-related object words in temporo-occipital brain regions, including the fusiform gyrus and visual cortex. These effects indicate early semantic access to single words which occurs long before the “N400” response traditionally ascribed to semantic processes1.

In both auditory and visual modalities, well-matched words of different semantic types have previously been shown to activate different sets of cortical generators within one fifth or one quarter of a second4,5,6,7,8,9,10,11,15,16,17,21,30,31,32,33. However, as discussed in the introduction, much evidence was based on signal space statistical analysis (as well as insufficiently precise source localisation using standard surfaces and/or even statistically unsupported group-average solutions) so that their conclusions on the existence and localisation of brain generators specific to semantic categories are, in principle, still questionable. This necessitated addressing the inverse problem with more advanced source reconstruction methods. Although, by definition, the inverse problem can never be fully resolved, it is important to provide best possible guesses about the cortical topographies of category-specific semantic processes by using cutting edge analysis techniques and by taking into account additional disambiguating information, including especially a definition of the source space based on individuals' precise neuroanatomy and the use of complementary information provided by MEG and EEG.

This was realised in the current study, which has the following methodological advantages over previous investigations. Alongside high temporal resolution (sampling rate of 1 kHz), the data resolution in the spatial domain was improved through concurrent use of 376 neurophysiological sensors of 3 different types: magnetometers, planar gradiometers and EEG electrodes. This high-density multi-modal coverage, in combination with co-registration of participants' EMEG data to their structural MRI scans and use of individual cortical surfaces for calculating sources of activation (a feature absent in previous studies reporting similar early semantic findings), allows for far greater spatial precision than the algorithms used in earlier literature which employed a spherical model15,16 or a standardised brain surface31,32. In the localisation of neural source generators, noise levels in the 50 ms baseline period of the epoch were taken into account by using a noise covariance matrix, in addition to the conventional baseline subtraction procedure used previously. Group-level statistics were explored at the level of individually-computed source spaces rather than signal-space analysis, following the projection of individual brains into a group-specific average cortical surface computed from T1 structural images of each participant: consequently, the pitfalls reported in signal-topography approaches12,13,14 were avoided. Therefore, whilst corroborating the early automaticity of semantic differentiation reported by previous studies, the precision of the source localisation employed in the current study reveals the association of action words with inferior frontal and precentral gyrus and the linkage of visual object-related words to posterior occipitotemporal regions. 
These links had earlier been established in neurometabolic imaging, although these slow methods are unable to address the question whether any activation revealed might indeed reflect cognitive processes following upon and therefore potentially epiphenomenal to, word comprehension. Neurophysiological methods, as they have been applied here, are necessary to separate the earliest neurophysiological indexes of word comprehension and their underlying cortical sources from later brain indexes potentially related to memory encoding or access, wider contextual association, or second-thought-type “simulation”. The present results indicate that a surprisingly early process of motor vs. visual systems activation corresponds to the semantic access to action and object schemas triggered immediately by upcoming action and object words respectively (in the sense of an instantaneous involuntary “simulation”).

As the pattern of category-specificity here revealed by neurophysiological imaging at early latencies is strongly consistent with other findings about semantic category specificity reported in the neuropsychological and brain imaging literature, these results can now be put in a larger context. As mentioned, the somatotopic activation of precentral/motor regions bilaterally by action-related language has been robustly reported in neuroimaging15,16,17,18,19,20,21,22,23,34. Furthermore, patient studies have also associated damage to inferior frontal and motor regions, in left and right hemispheres, with deficits in action-word processing35,36,37,38,39,40,41. In contrast, words with visual properties, such as living things and objects, evoke activity in more posterior occipitotemporal brain regions and tend to be impaired by damage to the same regions24. Congruent with the specific activation of sensorimotor areas in the processing of action and object words and the crucial relevance of these areas for processing of these word types, the present study showed the most pronounced early activation of anterior frontal region (notably pars opercularis and precentral gyrus) to action words (most pronounced, in this case, in the right hemisphere) and the most remarkable temporo-occipital activity (fusiform gyrus, occipital lobe) to visually-related object words. The mutual cross modality convergence now confirms and substantiates the source localisation results, which, in principle, even though cutting edge methods were used, cannot overcome the principled limitation dictated by Helmholtz' inverse problem. The cross validation of the present methods adds substantial plausibility and support for these source localisations. 
Together, the neurometabolic, neuropsychological and neurophysiological findings provide support for a model of semantics according to which Hebbian principles of associative learning (whereby synaptic changes occur between cell groups frequently active together, thus strengthening communication between them) are a major driving force for binding together word-form circuits and (in the case of object-related nouns) object-related perceptual schemas stored in visual systems or (for action-related word types) action schemas laid down in the cortical motor system. After such learning has taken place, the distributed semantic circuits, or cell assemblies, are, as a whole, functionally important for conceptual retrieval42,43. These cell assemblies, evident as ‘memory traces’ for real words44, would become quickly activated in core perisylvian language regions during word-presentation, with semantic representations in sensorimotor areas almost instantly ignited in addition due to the strong cortico-cortical connections binding assemblies. Semantic access therefore may occur instantaneously as part of the cell assembly ignition process, within ¼ of a second or even 150 ms7,27,42.

In the study, abstract words were employed as a ‘filler’, comparison condition to reduce participants' focus on action- and object-related words. Though not therefore a focus of the study, we observed that they produced significantly lower activity than action but not object words in frontocentral cortex and lower activity than object but not action words in the temporo-occipital cortex. In comparison to the experimental word groups, the abstract words employed (containing exemplars such as ‘fraud’ and ‘strive’) lacked strong associations with actions, the effectors of the body, or visual forms (see Supplementary Table 1). Therefore, unlike action- or object-related words, they were not strongly associated with any one region of the brain, though they evoked similar levels of activation in multimodal areas. Previous authors have suggested that representation of many of the most abstract function words (for example those critical in adding meaning to sentences, such as ‘is’, ‘the’, ‘it’) is strongly reserved to core left perisylvian language regions42, given their complete lack of definitive referential links with objects or actions in the world. However, many abstract words, like those employed here, are content words which do describe concepts, ideas and states of being. Whilst some studies have pointed to inferior frontal and anterior temporal cortices in abstract word processing45,46, theorists have also suggested that these words may, to an extent, be grounded in sensorimotor systems in a similar way to concrete words through association with the sensorimotor world in metaphors and situations in context25,26,47. As our abstract words were a conglomeration of nouns and verbs with differing associations to situated smells, feelings, sights, sounds, tastes and actions, their weaker association with frontal motor areas and posterior visual areas than concrete action and object words respectively is consistent with the theory purported above. 
In addition, although in order to know what abstract concepts such as ‘freedom’ and ‘beauty’ are it is necessary to relate these terms to real situations, actions and objects, the variability of such instantiations of freedom and beauty is so great that a correlation approach predicts detachment of any individual action or object schema from the word representation; therefore, some “disembodiment” of abstract concepts is evident due to this lack of strong correlations. Rather, an either-or function over a divergent set of typical examples needs to be stored and will form the basis of the abstract concept. This “disembodiment” approach dictated by neurobiologically-founded Hebbian-type learning provides a tentative explanation for why abstract words activated multimodal cortices – to a similar degree as concrete ones did – but seemed detached from any specific action or object schemas implemented as sensorimotor circuits in motor or visual systems26.

Another strength of the current research, alongside the methodological improvements, lies in the meticulous matching of psycholinguistic variables such as word length and frequency, bigram and trigram frequencies, which are known to modulate brain activity48. Significant differences in their semantic properties set our semantic categories apart from each other: object words were significantly more related to colour and form and the visual world and action words to actions and greater physiological arousal of the body49. A potential confound, however, does exist with lexical/grammatical class (what part of speech a word belongs to), which, alongside semantics, differs between stimulus groups. Visual objects typically belong to the grammatical category of nouns whilst action words are typically verbs. Some authors have suggested that grammatical category, rather than semantic differences, might be the underlying determinant of brain differences50,51. Dissociations between nouns and verbs have been observed in patients35,36,37 and with neurometabolic and neurophysiological measures5,6,7,8,52,53,54. However, due to the difficulty of controlling for this grammatical-semantic confound, these aforementioned studies are open to the interpretation that semantic differences, rather than grammatical category per se, drive the differential topographies which emerge for nouns and verbs. This is indeed indicated by careful attempts to disentangle semantics and grammatical category. Pulvermüller et al.8, for example, reported differences in the neurophysiological brain response to action and object nouns, but not in neural activation evoked by action nouns and action verbs. Though one study did report a grammatical category effect between abstract nouns and verbs55, this is a minority finding, as a semantic interpretation of brain differences is strongly advocated by reviews41,56,57. 
It is noteworthy that the majority of abstract items used in the present study, approximately 66%, were classed as verbs or had more frequent usage as verbs than nouns (many of the remaining 33%, though more frequent as nouns, were additionally used as verbs). Given that the action word category (strongly verb dominated) significantly dissociated from abstract words in the frontal cortex (bilateral superior frontal cortex, pars opercularis and right precentral gyrus), the data suggest differentiation on the basis of action-relatedness, a semantic variable in which action words were significantly higher than abstract words (t [16] = 8.168, p < .001). Further electrophysiological research with a focus on short, early time-windows and controlled stimulus sets might, however, address this issue directly.

In conclusion, the present study looked for word-category differences in an early neurophysiological response, peaking at 150 ms, which arose when participants passively read three semantic types of words. With greater methodological precision, including simultaneous EEG and MEG recordings through 376 channels, co-registration to structural MRI scans of individual participants and cutting-edge distributed source localisation techniques, we were able to localise different brain activation topographies to action-related, object-related and abstract words, with local cortical activation reflecting semantic properties of their related concepts. The most pronounced motor-system activations to action words and the most pronounced visual-system activations to object words were seen within 150 ms, which shows that meaning features of words are already specifically reflected in the brain response at such early latency. These results demonstrate the early time course of both sensorimotor and abstract semantic information processing in the brain and lead to a novel perspective on the brain mechanisms of language understanding which are manifest before the classic N400 response emerges.

Methods

Participants

Participants (mean age: 25 ± standard error [SE]: 1.23) were 17 healthy monolingual native British-English speakers, all neurologically-normal and free of psychotropic medication at the time of the study. They had normal or corrected-to-normal vision. All were right-handed (mean handedness quotient of 90 ± SE 2.77 on the Edinburgh Handedness Inventory58) and of above-average intelligence as determined by the Cattell Culture-Fair IQ test59 (mean: 115.9 ± SE 3.62). The group consisted of 11 men and 6 women, all of whom gave informed consent for testing and were remunerated for their time. Ethical approval was obtained from the Cambridge Psychology Research Ethics Committee (CPREC 2008.64) prior to testing.

Stimuli

Experimental words were matched for length, letter bigram and trigram frequency and number of orthographic neighbours and presented alongside 120 length-matched hash-mark strings which acted as a low-level visual baseline. Prior to the study, words had been semantically rated by 10 native speakers (see Pulvermüller et al.8, for procedural details and Table S1 in supplementary methods) on a range of semantic variables, in accordance with which groups of 120 action-related (e.g. “knead”, “jog”, “chew”), 120 object-related (e.g. “hawk”, “cheese”, “axe”) and 120 abstract (e.g. “faze”, “fluke”, “ail”) words were selected. Table S2 in Supplementary materials gives details of their psycholinguistic and semantic properties and of statistical differences between the three semantic categories. As we focused on the contrast between action and object words, statistical contrasts between these two semantic categories are also given. The experimental stimuli themselves can be found in Table S3 in Supplementary Materials.

Procedure

Following EMEG preparation and completion of the Cattell Culture Fair test59 and the Edinburgh Handedness Inventory58, participants were comfortably seated and requested to stay as still as possible inside the MEG dewar, avoiding all unnecessary movements. They were asked to focus on a central fixation cross and to attend to the stimuli, which were presented tachistoscopically for 150 ms each in a random order, in light grey font on a black background, with an inter-stimulus interval of 2500 ms. This passive reading task was split into 3 blocks of approximately 7 minutes each. Please see Supplementary Fig. S3 for a depiction of this task.

After the task, participants completed a word recognition test containing both novel distractor and experimental words, in order to check that they had attended to the task. They performed above chance (average hit rate: 82% [STD: 8.6%]), indicating their attention to the words and compliance with the task.

EMEG recording and preprocessing

EEG caps (EasyCap, Falk Minow Services, Herrsching-Breitbrunn, Germany) had 70 Ag/AgCl electrodes arranged according to the extended 10%/10% system. Magnetoencephalographic (MEG) data were recorded from 306 channels (204 planar gradiometers and 102 magnetometers). Both electroencephalograms [EEG] and magnetoencephalograms [MEG] were collected simultaneously in a magnetically and acoustically shielded MEG booth (IMEDCO, etc). 5 magnetic coils, attached to the EEG cap, allowed for constant recording of head position and were digitised using the Polhemus Isotrak digital tracker system (Polhemus, Colchester, VT, USA). The positions of EEG electrodes and three cardinal landmarks (nasion, left and right pre-auricular points) were also digitised to assist with anatomical co-registration with MRI scans, along with additional points distributed over the scalp. Eye movements were monitored by four EOG electrodes, placed laterally to each eye (horizontal EOG) and above and below the left eye (vertical EOG), in order to later reject trials interrupted by blinks.

Data were preprocessed offline using MaxFilter software (Elekta Neuromag, Helsinki) and the signal-space separation method60, which minimises external noise and sensor artefacts. Preprocessing also involved spatio-temporal filtering, head-movement compensation to correct for between-block movements, and identification and rejection of bad EEG/MEG channels. Using the MNE Suite 2.7 software package (Martinos Centre for Biomedical Imaging, Charlestown, MA, USA: http://www.nmr.mgh.harvard.edu/martinos/flashHome.php), data were band-pass filtered between 0.1 and 30 Hz and epoched into segments of 500 ms starting 50 ms prior to stimulus onset; the period from −50 to 0 ms was used for baseline correction. Individual noise covariance was computed for the baseline period in each dataset, to be used in source estimation later. Epoch rejection criteria were set at 150 μV (EEG and EOG channels) and at 2000 fT/cm and 3500 fT (gradiometers and magnetometers, respectively), and epochs were averaged within each semantic category for each individual dataset. Overall signal strength of the combined ERPs/ERFs was quantified as the global signal-to-noise ratio (SNR), which was calculated for all words over all sensors for all participants. This was computed by dividing the amplitude at each time-point by the standard deviation of the baseline period (the first 50 ms) and then computing the square root of the sum of squares across all sensors. The 140–160 ms time-window chosen for analysis was clearly identifiable by a prominent peak in the grand-average global SNR.
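The global SNR computation described above can be illustrated with a minimal sketch. It is not the authors' code: the function name `global_snr`, the nested-list data layout, and the use of the population standard deviation are assumptions made for illustration only (the text does not say which estimator was used).

```python
import math
import statistics


def global_snr(evoked, n_baseline):
    """Global SNR per time-point for an averaged response.

    evoked     -- list of per-sensor time series, all of equal length
                  (hypothetical layout: evoked[sensor][sample])
    n_baseline -- number of leading samples that form the baseline
    """
    normalised = []
    for sensor in evoked:
        # Standard deviation of this sensor's baseline period
        # (population SD is an assumption of this sketch).
        sd = statistics.pstdev(sensor[:n_baseline])
        if sd == 0:
            raise ValueError("baseline has zero variance")
        # Divide the amplitude at each time-point by the baseline SD.
        normalised.append([value / sd for value in sensor])

    n_times = len(evoked[0])
    # Square root of the sum of squares across all sensors.
    return [
        math.sqrt(sum(channel[t] ** 2 for channel in normalised))
        for t in range(n_times)
    ]
```

Normalising each sensor by its own baseline variability makes EEG electrodes, gradiometers and magnetometers unit-free, so they can be combined into one curve despite their different physical units. The latency of the largest value in the returned list would correspond to the kind of peak used to select an analysis window.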

MRI acquisition and EMEG source analysis

For source localisation, anatomically constrained distributed L2 minimum norm source estimations for combined EEG/MEG data were computed using MNE Suite 2.7 and Freesurfer 4.3 software. For each subject, the source space was restricted to the cortical surface as computed from high-resolution structural T1 scans acquired with a 3 T Siemens Tim Trio MRI scanner (parameters of the MPRAGE sequence were as follows: field-of-view 256 mm × 240 mm × 160 mm, matrix dimensions 256 × 240 × 160, 1 mm isotropic resolution, TR = 2250 ms, TI = 900 ms, TE = 2.99 ms, flip angle 9°). A 3-shell boundary-element model (BEM) for each subject, using inner and outer skull and skin surfaces, was created using a watershed algorithm. The original triangulated cortical surface was re-sampled to a grid by decimating the cortical surface with an average distance between vertices of 5 mm, which resulted in 10242 vertices (5120 triangles) in each hemisphere. Dipole sources (located at all vertices simultaneously) were computed with a loose orientation constraint of 0.2, with depth weighting and with a regularization of the noise-covariance matrix of 0.1. Whole-brain source estimates for each stimulus type were computed for each subject. These source estimates from individual brains were then morphed to a cortex averaged across all subjects, which was inflated and used to display grand-average source activations for words and individual semantic categories.

Mean amplitudes of the source currents within ROIs (defined in accordance with the Desikan-Killiany Atlas subdivisions of the brain as implemented in Freesurfer software28) were calculated and examined statistically at several levels of analysis. Primarily, a theory-led approach investigated potential differences between inferior frontal and motor (Frontocentral) and posterior inferior temporal and occipital regions (Temporo-Occipital), where previous metabolic neuroimaging studies had reported clear differences between activation patterns elicited by action- and object-related words21,34,46. The Frontocentral ROIs selected included BA 44 (pars opercularis), the gyrus incorporated within premotor cortex and the precentral gyrus. The Temporo-Occipital ROIs included fusiform gyrus and BA 18 and 19, which were collapsed into one ROI given their relatively small size. Activation across the whole time-course (−50 to 450 ms) can be seen for Frontocentral ROIs in Fig. S4 and for Temporo-Occipital ROIs in Fig. S5, although it is not the focus of the analysis here.
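As a rough illustration of the ROI measure, the sketch below averages source amplitudes over the vertices of one ROI and over the samples that fall inside the 140–160 ms window. The function name `roi_window_mean`, the `source_estimate[vertex][sample]` layout, the inclusive window bounds and the sample times in the test data are all hypothetical; the sampling grid and the exact averaging order used in the study are not stated in the text.

```python
def roi_window_mean(source_estimate, roi_vertices, times_ms, window_ms=(140, 160)):
    """Mean source amplitude within one ROI and one time window.

    source_estimate -- hypothetical layout: source_estimate[vertex][sample]
    roi_vertices    -- indices of the vertices belonging to the ROI
    times_ms        -- latency in ms of each sample, relative to stimulus onset
    window_ms       -- (start, stop) of the analysis window, inclusive (assumed)
    """
    start, stop = window_ms
    # Samples whose latency falls inside the analysis window.
    columns = [i for i, t in enumerate(times_ms) if start <= t <= stop]
    if not columns or not roi_vertices:
        raise ValueError("empty ROI or empty time window")

    # Pool every (vertex, sample) pair in the ROI and window, then average.
    values = [source_estimate[v][i] for v in roi_vertices for i in columns]
    return sum(values) / len(values)
```

One such value per participant, ROI, hemisphere and word category would give the kind of table that a repeated-measures ANOVA takes as input.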

An ANOVA was conducted with the factors Region (2 levels, Frontocentral and Temporo-Occipital, with data averaged across the 2 frontal and 2 temporo-occipital ROIs) × Hemisphere (2 levels) × Word Category (3 levels: action, object and abstract words). In order to corroborate findings, a secondary exploratory global brain analysis looked for semantic category effects in 23 ROIs from the Freesurfer software28 (see Supplementary Fig. S2), which were grouped into 4 lobes so that activity in each lobe could be investigated independently. Word category effects were also explored within individual regions, and t-tests were conducted between semantic categories when significant differences were found; this secondary analysis is available in Supplementary Materials. In all statistical analyses, the Huynh-Feldt correction was applied to correct for sphericity violations wherever appropriate. Corrected p values are reported throughout.

References

Kutas, M. & Federmeier, K. D. Thirty years and counting: finding meaning in the N400 component of the event-related brain potential (ERP). Annu. Rev. Psychol. 62, 621–647 (2011).

Sereno, S. C., Brewer, C. C. & O'Donnell, P. J. Context effects in word recognition: evidence for early interactive processing. Psychol. Sci. 14, 328–333 (2003).

Penolazzi, B., Hauk, O. & Pulvermüller, F. Early semantic context integration and lexical access as revealed by event-related brain potentials. Biol. Psychol. 74, 374–388 (2006).

Brown, W. S. & Lehmann, D. Verb and noun meaning of homophone words activate different cortical generators: a topographic study of evoked potential fields. Exp. Brain Res. 2, 159–168 (1979).

Brown, W. S. & Lehmann, D. Linguistic meaning related differences in evoked potential topography: English, Swiss-German and imagined. Brain Lang. 11, 340–353 (1980).

Preissl, H., Pulvermüller, F., Lutzenberger, W. & Birbaumer, N. Evoked potentials distinguish between nouns and verbs. Neurosci. Lett. 197, 81–83 (1995).

Pulvermüller, F., Lutzenberger, W. & Birbaumer, N. Electrocortical distinction of vocabulary types. Electroencephalography and Clin. Neurophys. 94, 357–370 (1995).

Pulvermüller, F., Lutzenberger, W. & Preissl, H. Nouns and verbs in the intact brain: evidence from event-related potentials and high-frequency cortical responses. Cereb. Cortex 9, 498–508 (1999).

Hinojosa, J. A., Martin-Loeches, M., Muñoz, F., Casado, P., Fernández-Frías, C. & Pozo, M. A. Electrophysiological evidence of a semantic system commonly accessed by animals and tools categories. Cog. Brain. Res. 12, 321–328 (2001).

Martin-Loeches, M., Hinojosa, J. A., Gómez-Jarabo, G. & Rubia, F. An early electrophysiological sign of semantic processing in basal extrastriate areas. Psychophysiology 38, 114–124 (2001).

Hoenig, K., Sim, E.-J., Bochev, V., Herrnberger, B. & Kiefer, M. Conceptual flexibility in the human brain: dynamic recruitment of semantic maps from visual, motor and motion-related areas. J. Cog. Neurosci. 20, 1799–1814 (2008).

McCarthy, G. & Wood, C. C. Scalp distribution of event-related potentials: an ambiguity associated with analysis of variance models. Electroencephalography and Clin. Neurophys. 62, 203–208 (1985).

Urbach, T. P. & Kutas, M. The intractability of scaling scalp distributions to infer neuroelectric sources. Psychophysiology 39, 791–808 (2002).

Dien, J. & Santuzzi, A. M. Application of repeated measures ANOVA to high-density ERP datasets: a review and tutorial. In: T. C. Handy (Ed.), Event-Related Potentials: A Methods Handbook (pp. 57–82). Cambridge, MA: MIT Press (2005).

Shtyrov, Y., Hauk, O. & Pulvermüller, F. Distributed neuronal networks encoding category-specific semantic information as shown by the mismatch negativity to action words. Eur. J. Neurosci. 19, 1083–1092 (2004).

Pulvermüller, F., Shtyrov, Y. & Ilmoniemi, R. Brain signatures of meaning access in action word recognition. J. Cog. Neurosci. 17, 884–892 (2005).

Hauk, O. & Pulvermüller, F. Neurophysiological distinction of action words in fronto-central cortex. Hum. Brain Map. 21, 191–201 (2004).

Hauk, O., Johnsrude, I. & Pulvermüller, F. Somatotopic representation of action words in human motor and premotor cortex. Neuron 41, 301–307 (2004).

Tettamanti, M. et al. Listening to action-related sentences activates fronto-parietal motor circuits. J. Cogn. Neurosci. 17, 273–281 (2005).

Aziz-Zadeh, L. & Damasio, A. Embodied semantics for actions: findings from functional brain imaging. J. Physiol. Paris 102, 35–39 (2008).

Hauk, O., Shtyrov, Y. & Pulvermüller, F. The time course of action and action-word comprehension in the human brain as revealed by neurophysiology. J. Physiol. Paris 102, 50–58 (2008).

Kemmerer, D., Gonzalez-Castillo, J., Talavage, T., Patterson, S. & Wiley, C. Neuroanatomical distribution of five semantic components of verbs: Evidence from fMRI. Brain Lang. 107, 16–43 (2008).

Boulenger, V., Hauk, O. & Pulvermüller, F. Grasping ideas with the motor system: Semantic somatotopy in idiom comprehension. Cereb. Cortex 19, 1905–1914 (2009).

Martin, A. The representation of object concepts in the brain. Annu. Rev. Psychol. 58, 25–45 (2007).

Barsalou, L. W. Grounded cognition. Annu. Rev. Psychol. 59, 617–645 (2008).

Kiefer, M. & Pulvermüller, F. Conceptual representations in mind and brain: theoretical developments, current evidence and future directions. Cortex 48(7), 805–825 (2012).

Pulvermüller, F., Shtyrov, Y. & Hauk, O. Understanding in an instant: neurophysiological evidence for mechanistic language circuits in the brain. Brain Lang. 110, 81–94 (2009).

Desikan, R. S. et al. An automated labelling system for subdividing the human cerebral cortex on MRI scans into gyral based regions of interest. Neuroimage 31, 968–980 (2006).

Lin, F. H., Witzel, T., Ahlfors, S. P., Stufflebeam, S. M., Belliveau, J. W. & Hamalainen, M. S. Assessing and improving the spatial accuracy in MEG source localization by depth-weighted minimum-norm estimates. Neuroimage 31(1), 160–171 (2006).

Pulvermüller, F., Assadollahi, R. & Elbert, T. Neuromagnetic evidence for early semantic access in word recognition. Eur. J. Neurosci. 13, 201–205 (2001).

Hauk, O. et al. [Q:] When would you prefer a SOSSAGE to a SAUSAGE? [A:] At around 100 msec. ERP correlates of orthographic typicality and lexicality in written word recognition. J. Cog. Neurosci. 18, 818–832 (2006a).

Hauk, O., Davis, M. H., Ford, M., Pulvermüller, F. & Marslen-Wilson, W. D. The time course of visual word recognition as revealed by linear regression analysis of ERP data. Neuroimage 30, 1383–1400 (2006b).

Moscoso del Prado Martin, F., Hauk, O. & Pulvermüller, F. Category-specificity in the processing of colour-related and form-related words: an ERP study. Neuroimage 29, 29–37 (2006).

Pulvermüller, F., Kherif, F., Hauk, O. & Nimmo-Smith, I. Distributed cell assemblies for general lexical and category-specific semantic processing as revealed by fMRI cluster analysis. Hum. Brain Map. 30, 3837–3850 (2009b).

Miceli, G., Silveri, M. C., Villa, G. & Caramazza, A. On the basis for the agrammatics' difficulty in producing main verbs. Cortex 20, 207–220 (1984).

Daniele, A., Giustolisi, L., Silveri, M. C., Colosimo, C. & Gainotti, G. Evidence for a possible neuroanatomical basis for lexical processing of nouns and verbs. Neuropsychologia 32, 1325–1341 (1994).

Damasio, H. et al. Neural correlates of naming actions and of naming spatial relations. Neuroimage 13, 1053–1064 (2001).

Bak, T. H., O'Donovan, D. G., Xuereb, J. H., Boniface, S. & Hodges, J. R. Selective impairment of verb processing associated with pathological changes in Brodmann areas 44 and 45 in the Motor Neurone Disease-Dementia-Aphasia syndrome. Brain 124, 103–120 (2001).

Cotelli, M. et al. Action and object naming in frontotemporal dementia, progressive supranuclear palsy and corticobasal degeneration. Neuropsychology 20, 558–565 (2006).

Boulenger, V. et al. Word processing in Parkinson's disease is impaired for action verbs but not for concrete nouns. Neuropsychologia 46, 743–756 (2008).

Kemmerer, D., Rudrauf, D., Manzel, K. & Tranel, D. Behavioural patterns and lesion sites associated with impaired processing of lexical and conceptual knowledge of action. Cortex 48(7), 826–848 (2012).

Pulvermüller, F. Words in the brain's language. Behav. Brain Sci. 22, 253–336 (1999).

Pulvermüller, F. & Fadiga, L. Active perception: sensorimotor circuits as a cortical basis for language. Nat. Rev. Neurosci. 11, 351–360 (2010).

Pulvermüller, F. & Shtyrov, Y. Language outside the focus of attention: the mismatch negativity as a tool for studying higher cognitive processes. Prog. Neurobiol. 79, 49–71 (2006).

Binder, J. R., Westbury, C. F., McKiernan, K. A., Possing, E. T. & Medler, D. A. Distinct brain systems for processing concrete and abstract concepts. J. Cog. Neurosci. 17, 905–917 (2005).

Papagno, C., Capasso, R. & Miceli, G. Reversed concreteness effect for nouns in a subject with semantic dementia. Neuropsychologia 47, 1138–1148 (2009).

Lakoff, G. & Johnson, M. Metaphors We Live By. Chicago, IL: University of Chicago Press (1980).

Hauk, O., Davis, M. H., Kherif, F. & Pulvermüller, F. Imagery or meaning? Evidence for a semantic origin of category-specific brain activity in metabolic imaging. Eur. J. Neurosci. 27, 1856–1866 (2008).

Moseley, R. L., Carota, F., Hauk, O., Mohr, B. & Pulvermüller, F. A role for the motor system in binding abstract emotional meaning. Cereb. Cortex 22, 1634–1647 (2012).

Shapiro, K. A., Shelton, J. & Caramazza, A. Grammatical class in lexical production and morphological processing: evidence from a case of fluent aphasia. Cogn. Neuropsychol. 17, 665–682 (2000).

Bedny, M., Caramazza, A., Pascual-Leone, A. & Saxe, R. Typical neural representations of action verbs develop without vision. Cereb. Cortex 22, 286–293 (2012).

Warburton, E. et al. Noun and verb retrieval by normal subjects: studies with PET. Brain 119, 159–179 (1996).

Perani, D. et al. The neural correlates of verb and noun processing: a PET study. Brain 122, 2337–2344 (1999).

Shapiro, K. A., Moo, L. R. & Caramazza, A. Cortical signatures of noun and verb production. Proc. Natl. Acad. Sci. USA 103, 1644–1649 (2006).

Yu, X., Po Law, S., Han, Z., Zhu, C. & Bi, Y. Dissociative neural correlates of semantic processing of nouns and verbs in Chinese – a language with minimal inflectional morphology. Neuroimage 58, 912–922 (2011).

Cappa, S. F. & Pulvermüller, F. Language and the motor system. Cortex 48(7), 785–787 (2012).

Vigliocco, G., Vinson, D. P., Druks, J., Barber, H. & Cappa, S. F. Nouns and verbs in the brain: a review of behavioural, electrophysiological, neuropsychological and imaging studies. Neurosci. Biobehav. Rev. 35, 407–426 (2011).

Oldfield, R. C. The assessment and analysis of handedness: the Edinburgh inventory. Neuropsychologia 9, 97–113 (1971).

Cattell, R. B. & Cattell, A. K. S. Test of "g": Culture fair, scale 2, form A. Champaign (IL): Institute for Personality and Ability Testing (1960).

Taulu, S. & Kajola, M. Presentation of electromagnetic multichannel data: the signal space separation method. J. App. Physics 97, 124905–124905 (2005).

Acknowledgements

The authors would like to thank Lucy MacGregor, Matthias Schultz and Olaf Hauk for their advice and assistance at different stages of MEG/EEG recording and analysis and Clare Cook and Bettina Mohr for their assistance with the stimulus list. This work was supported by the Medical Research Council (core programme MC_US_A060_0034, U1055.04.003.00001.01 to F. P., core programme MC_A060_5PQ90 to Y. S.).

Author information

Contributions

Data collection and analysis were run by R. Moseley; the manuscript was written and the figures were prepared by R. Moseley. F. Pulvermüller and Y. Shtyrov provided theoretical and practical advice throughout and made edits and written contributions to the manuscript.

Ethics declarations

Competing interests

The authors declare no competing financial interests.

Rights and permissions

This work is licensed under a Creative Commons Attribution-NonCommercial-NoDerivs 3.0 Unported License. To view a copy of this license, visit http://creativecommons.org/licenses/by-nc-nd/3.0/

About this article

Cite this article

Moseley, R., Pulvermüller, F. & Shtyrov, Y. Sensorimotor semantics on the spot: brain activity dissociates between conceptual categories within 150 ms. Sci Rep 3, 1928 (2013). https://doi.org/10.1038/srep01928

DOI: https://doi.org/10.1038/srep01928