Abstract

Humans actively use behavioral synchrony, such as dancing and singing, when they intend to form affiliative relationships. Such advanced synchronous movement occurs even unconsciously when we hear rhythmically complex music. A foundation for this tendency may be an evolutionary adaptation for group living, but the evolutionary origins of human synchronous activity are unclear. Here we show the first evidence that a member of our closest living relatives, a chimpanzee, spontaneously synchronizes her movement with an auditory rhythm: after training to tap illuminated keys on an electric keyboard, one chimpanzee spontaneously aligned her tapping with the sound when she heard an isochronous distractor sound. This result indicates that sensitivity to and a tendency toward synchronous movement with an auditory rhythm exist in chimpanzees, although humans may have expanded them into unique forms of auditory and visual communication during the course of human evolution.

Introduction

Compared to other primate species, humans are known to have an advanced ability to synchronize their movements to external rhythms. In many cultures across the world, behavioral synchrony occurs in activities such as marching, dancing and singing1. From an early age, we can capture and interpret a beat in a rhythmic pattern2; this allows us to sing and dance in time to music. It has been suggested that such behavioral synchrony may function to socially bind individuals together into a larger whole3, a view that is supported by behavioral experiments4. However, little is known about the evolutionary origins of such synchronous activity, so it is important to compare behavioral synchronization abilities and their effects on social relationships across primate species.

There is little evidence that non-human primates spontaneously synchronize their movement to external rhythms such as human dancing, although it has been shown that chimpanzees spontaneously match behaviors, as in mimicry of others' facial expressions5,6. Furthermore, such behavioral matching is known to increase affiliation in capuchin monkeys7. These findings suggest that non-human primates as well as humans have some tendency to adjust their behavior to match external movements and influence social impressions of others, but whether non-human primates are sensitive to external rhythm is unclear. Here we investigated whether behavioral synchrony to external auditory rhythms exists in our closest relatives, chimpanzees.

A recent study indicates that monkeys have difficulty synchronizing finger tapping with auditory and visual rhythms8; great apes, however, are known to chorus synchronously9,10 and to drum on hollow or dead trees in the so-called “rain dance” displayed shortly before rainfall or during thunderstorms11, suggesting possible sensitivity to auditory rhythms. Hence we expect that at least great apes have some predilection for behavioral synchrony, whether or not it falls within the range of the definition of behavioral synchrony in humans. The present experimental study is a first contribution toward a better understanding of the emergence of humans' unique communicative ability of synchronous movement to auditory rhythms.

Although the chimpanzees had never been trained to respond to any rhythm, they had participated in several studies that included auditory stimuli. For example, one study investigated conditional position discrimination of visual and auditory stimuli, in which chimpanzees were required to discriminate between human and chimpanzee voices12. Another study, on the effect of multimodal representation, presented tones of different pitch following visual stimuli to the chimpanzees13. However, none of the previous studies required the chimpanzees to adjust their movements to any auditory rhythm.

We introduced an electric keyboard to three chimpanzees (Figure 1) and trained them to tap two keys (i.e., “C4” and “C5”, see Figure 2) alternately 30 times (see supplementary Video_S1). Each key to be tapped was illuminated, and if a chimpanzee tapped this key (e.g., “C4”), sound feedback was given and the other key (e.g., “C5”) was immediately illuminated, so it was unlikely that the visual stimuli affected the chimpanzees' tapping rhythm. When the chimpanzees tapped the two keys in alternation a total of 30 times, they received positive auditory feedback (a chime) and a reward (Figure 2). If they tapped the illuminated keys 30 times with fewer than 3 errors on two consecutive trials (i.e., less than 10% errors overall), they proceeded to a test session. In a test session, a distractor sound (i.e., an ISI of 400 ms, 500 ms, 600 ms or random, in the key of “G”, or no sound) was played while the chimpanzees tapped the keyboard for three 30-tap trials (90 taps in total). The chimpanzees received rewards whenever they completed 30 key taps, regardless of their responses to the distractor.

Results

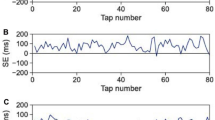

Synchronized tapping with 600 ms-ISI auditory stimulus onset in Ai: The main result is that one of the three chimpanzees (“Ai”) showed spontaneous and significant synchronized tapping to the 600 ms-ISI distractor sound over 540 taps in total (Rayleigh test with unspecified mean direction, n = 540, P < 0.05, Dunn-Sidák-corrected)14 (Figure 3c, see also supplemental video_S2). In contrast, no such consistent relationship was found in the no-sound condition when the tapping data were compared with the 600 ms sound sequence (Rayleigh test with unspecified mean direction, n = 540, P = 0.839) (Figure 3a). The same analysis for the Random-ISI condition also failed to show any consistent relationship between taps and the stimulus sound (Rayleigh test with unspecified mean direction, n = 540, P = 0.36992) (Figure 3b). Moreover, with the 600 ms-ISI sound beat, her tapping was phase-matched (Rayleigh test specifying a mean direction of zero (identical phase), n = 540, P < 0.05, Dunn-Sidák-corrected). These results indicate that Ai's tapping was generally aligned to the 600 ms-ISI beats, which resembles the response of human tapping to music15. In other words, her tapping was phase-matched; it cannot be explained as tapping that was random with regard to the distractor sound (cf. the no-stimulus condition) or as a simple response to external sound (cf. the random condition). We did not find statistical significance in any other condition or in the other subjects (Figure 4).
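The phase-matching test reported above (a Rayleigh test with a specified mean direction, often called the V-test) can be sketched as follows. This is a minimal illustration in plain Python under stated assumptions, not the MATLAB code used in the study: the function name `v_test` and the sample phases are hypothetical, phases are given in radians with 0 marking stimulus onset, and the P value uses the usual one-sided normal approximation.

```python
import math

def v_test(phases, mu0=0.0):
    """V-test: is the sample concentrated around the specified direction mu0?

    phases : tap phases in radians (0 = stimulus onset)
    Returns (V, u, p), where p is a one-sided normal approximation.
    """
    n = len(phases)
    c = sum(math.cos(a) for a in phases)
    s = sum(math.sin(a) for a in phases)
    resultant = math.hypot(c, s)               # length of the summed unit vectors
    mean_dir = math.atan2(s, c)                # sample mean direction
    v = resultant * math.cos(mean_dir - mu0)   # projection onto mu0
    u = v * math.sqrt(2.0 / n)
    p = 0.5 * math.erfc(u / math.sqrt(2.0))    # 1 - Phi(u)
    return v, u, p

# Hypothetical phases clustered around stimulus onset
near_onset = [0.1, -0.1, 0.2, -0.2, 0.05, -0.05] * 10
print(v_test(near_onset))
```

Taps clustered around zero phase give a small P value, whereas taps clustered in antiphase give a P value near 1, which is what distinguishes this test from the Rayleigh test with unspecified mean direction.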

In order to further investigate whether Ai's synchronized tapping in the 600 ms-ISI condition could be explained by random responding to auditory stimuli, we calculated the relative phase (−0.5 to 0.5) of each tap onset and compared the 600 ms-ISI condition with the Random condition (Figure 5(a)). Because “accurate” taps should occur around zero, we compared the absolute values of the relative phases in the two conditions. If Ai tapped more accurately in the 600 ms-ISI condition than in the Random condition, the absolute values in the 600 ms-ISI condition should be smaller than those in the Random condition. As predicted, there was a significant difference between the 600 ms-ISI condition (mean = 0.21) and the Random condition (mean = 0.25) (paired t-test, t = 2.826, d.f. = 359, P < 0.05, Dunn-Sidák-corrected). This indicates that Ai tapped around the onset of the sound more frequently in the 600 ms-ISI condition than in the Random condition. However, when we compared the frequency of “accurate” taps, defined as those with a relative phase between −0.1 and 0.1 (i.e., within 10% of the sound interval before or after sound onset), between the 600 ms-ISI condition and the Random condition using a Monte Carlo test involving 10,000 simulations, the difference did not survive Dunn-Sidák adjustment of the significance level. This may be because the accuracy of Ai's tapping was relatively weak compared with previous studies in humans17, although the adjusted significance threshold (i.e., P = 0.00568) was also severe. We did not find any significant difference from tapping in the Random condition at the other tempi or in the other subjects, which is consistent with the circular analysis (Figure 5(a), (b), (c)).
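The relative-phase measure and the adjusted significance level used in this comparison can be sketched as follows. This is an illustrative reading rather than the analysis code of the study: the function names are hypothetical, the phase is computed within the stimulus interval that contains the tap, and the number of comparisons `m = 9` is an assumption (the text does not state it), chosen because it reproduces the reported threshold of 0.00568.

```python
from bisect import bisect_right

def relative_phase(tap_ms, onsets_ms):
    """Relative phase of a tap in [-0.5, 0.5); 0 means the tap falls on an onset.

    onsets_ms must be sorted; the tap must lie inside the stimulus sequence.
    """
    k = bisect_right(onsets_ms, tap_ms) - 1          # last onset at or before the tap
    if k < 0 or k + 1 >= len(onsets_ms):
        raise ValueError("tap falls outside the stimulus sequence")
    frac = (tap_ms - onsets_ms[k]) / (onsets_ms[k + 1] - onsets_ms[k])
    return frac - 1.0 if frac >= 0.5 else frac       # late half counts as "before next onset"

def dunn_sidak_alpha(alpha, m):
    """Per-comparison significance level for m comparisons."""
    return 1.0 - (1.0 - alpha) ** (1.0 / m)

onsets = [0, 600, 1200, 1800]                        # hypothetical 600 ms-ISI sequence
print(abs(relative_phase(630, onsets)))              # tap 30 ms after an onset
print(round(dunn_sidak_alpha(0.05, 9), 5))           # m = 9 is an assumption
```

Because the phase is defined per interval, the same function applies to the Random condition, where successive intervals differ in length.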

Why was Ai's tapping synchronized to the auditory rhythm when the others' was not? Because we did not find any other consistent relationship or accurate tapping to the auditory beat in the other two chimpanzees, we further examined the tapping data to see whether Ai's tapping differed in character from that of the other two chimpanzees. We calculated median ITIs and SDs in all conditions for all three chimpanzees (Table 1) and found that Ai's tapping rate was more stable than that of the other two chimpanzees and generally close to 600 ms, which is one of the distractor sound intervals. Thus, such a sustained relationship may have facilitated Ai's tapping to resonate with the distractor sound. A previous study in humans also reported that tapping onsets became synchronized with an auditory distractor beat if its rate was close to the original tapping rate17. So the fact that Ai's tapping was aligned to the auditory rhythm closest to her original tapping rate resembles the characteristics of human tapping. Additionally, Ai has experienced a greater variety of auditory stimuli in everyday life than the other two chimpanzees because she has lived much longer than they have. Thus, a greater amount of experience with a variety of auditory stimuli may have affected Ai's performance, although all chimpanzees had taken part in the same previous experiments using auditory stimuli and live together in the same physical and social environment.
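The ITI summary behind this comparison can be sketched as below. The function name and the tap times are hypothetical, and the use of the sample standard deviation is an assumption, since the text does not say which SD estimator was used.

```python
import statistics

def iti_summary(tap_onsets_ms):
    """Median and SD of inter-tap intervals (ITIs) for one tap sequence."""
    itis = [later - earlier
            for earlier, later in zip(tap_onsets_ms, tap_onsets_ms[1:])]
    # Sample SD (n - 1 denominator) is an assumption, not stated in the text.
    return statistics.median(itis), statistics.stdev(itis)

# Hypothetical tap onsets (ms) with a tapping rate a little below 600 ms
median_iti, sd_iti = iti_summary([0, 580, 1170, 1750, 2350])
print(median_iti, round(sd_iti, 2))
```

A median close to one of the distractor intervals combined with a small SD is the pattern described for Ai.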

Discussion

Overall, our results show that Ai spontaneously synchronized her tapping to the ISI-600 ms auditory beat. This is the first behavioral evidence that chimpanzees are sensitive to isochronous auditory stimuli and can synchronize their movements to these stimuli without explicit training. Hearing the ISI-600 ms sound beat, Ai spontaneously and accurately aligned her tapping to the beat even though she had never been required to pay attention to auditory stimuli. The analyses of the No-stimulus and Random conditions suggest that her synchronized tapping cannot be explained away as random tapping or as merely responding to sound. Although we did not find a negative mean asynchrony (mean difference from the sound onset in the 600 ms-ISI condition = 4.06, SD = 151.78), which commonly appears when humans try to tap in synchrony with auditory rhythms16, we observed that Ai seemed to adjust her tapping to be in time with the beat (see supplemental video_S2 for an example). When hearing the 600 ms-ISI auditory rhythm, Ai sometimes slowed her tapping to match the onset of the auditory distractor, even though this delayed her reward. Together with the fact that the distractor stimulus that affected her tapping (i.e., 600 ms) was close to her spontaneous tapping rate (i.e., 578.5 ms, the median tapping rate in the No stimulus condition; see also Table 1), Ai's synchronized tapping resembles to some extent human tapping in response to an isochronous auditory distractor16.

However, several questions about behavioral synchrony in chimpanzees arise from the current experiment. For example, we did not find flexible alignment of Ai's tapping to other auditory rhythms, whereas humans can intentionally synchronize their tapping to various rates in a range from 200 ms to 1800 ms17. Additionally, the accuracy of Ai's tapping was relatively weak, and the lack of evidence of negative asynchrony makes it unclear whether Ai had a clear intention17 to entrain her movement to auditory rhythms. Moreover, it is also unclear whether Ai's synchronized tapping was truly auditory-motor entrainment, because the keyboard produced sound when keys were tapped and it is possible that Ai aligned the sound she produced with the auditory stimulus sound. Thus, differences in synchronized tapping between chimpanzees and humans should be clarified extensively in further studies in order to place Ai's synchronized tapping in the context of previous human tapping studies.

Concerning the evolutionary development of behavioral synchrony in humans, recent studies suggest that only animals that are able to imitate sound, such as humans and parrots, have evolved an ability to move to an auditory beat: this is called the “vocal mimicry hypothesis”18,19. Supporting this hypothesis, researchers have demonstrated that several bird species known for complex vocal learning can, like humans, synchronize their movements to external auditory and visual rhythms18,19,20. Because several lines of evidence are lacking for Ai's synchronized tapping, as described above, the current data do not definitively falsify the “vocal mimicry hypothesis”, and it is possible that Ai's movement was qualitatively different from auditory-motor entrainment in humans and vocal-mimicking birds. Although she clearly aligned her movements to the 600 ms-ISI beat, which reflects a basic attraction of rhythmic movement to auditory rhythms, further investigation of chimpanzees' behavioral synchrony to various rhythms in different modalities appears warranted. By comparing great apes, humans and other complex vocal learning species, we believe that the evolutionary origins of humans' widespread use of behavioral synchrony such as dance and music will be clarified.

Methods

Subjects

We tested three chimpanzees (Ai: a 36-year-old female, Ayumu: a 12-year-old male and Cleo: a 12-year-old female), who live in a social group of 14 individuals in an environmentally enriched outdoor compound connected to the experimental room by a tunnel21. The care and use of the chimpanzees adhered to the 2002 version of the Guide for the Care and Use of Laboratory Primates published by the KUPRI. The experimental protocol was approved by the Animal Welfare and Animal Care Committee of the KUPRI and the Animal Research Committee of Kyoto University. All procedures adhered to the Japanese “Act on Welfare and Management of Animals”.

Apparatus and Stimuli: In order to get the chimpanzees to tap repeatedly, we introduced an electric keyboard (Casio LK208). The keyboard had a function to illuminate keys assigned to a certain MIDI channel, so we wrote the sequence of keys to be tapped, described below, in a MIDI file and assigned it to one MIDI channel (i.e., “midi channel 1”), and assigned other sounds such as the positive feedback sound to another MIDI channel (i.e., “midi channel 2”). If an illuminated key was tapped, the illumination immediately switched to the next key, so it is unlikely that the rhythm of tapping was generated by the illumination. The outputs of the keyboard were sent to a desktop computer through a USB cable and recorded in real time in Cubase 6 (available from http://www.steinberg.net/en/products/cubase/) at a sampling frequency of 44.1 kHz. Cubase is a computer program for music recording and production and has also been used in human tapping studies. The distractor sound was also made and played using Cubase, and the signal was sent from the same desktop computer to speakers (Inspire T10). In the distractor stimulus, each auditory pulse was presented for 200 ms.

Training using an electric keyboard: At the start of a training trial, a “G” key was lit as a start button. When the chimpanzees tapped “G”, training started and a “C4” key was lit. If the chimpanzees tapped “C4”, the illumination immediately switched to a “C5” key (see Figure 2 and Supplementary video_S1). If they followed the illumination and tapped “C4” and “C5” alternately a set number of times, a chime (positive sound feedback) was played and they were given a reward. We started training with 4 taps, and if the chimpanzees passed the criterion on two consecutive trials, the number of required taps was increased until they were able to tap 30 times in total (criteria: no mistakes for the 4-tap and 8-tap training, two mistakes for 16 taps and 24 taps, three mistakes for 30 taps, on two consecutive trials). When the chimpanzees passed training for 30 taps, they proceeded to a test phase.
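The staged training criterion described above can be sketched as a small state machine. The names `STAGES` and `final_stage` are hypothetical, and the listed mistake counts are read here as the maximum number of mistakes allowed per trial; this reading is an assumption, since the introduction describes the 30-tap criterion as fewer than 3 errors.

```python
# (required taps, maximum mistakes allowed) per training stage, as listed in the text.
# Treating the mistake counts as inclusive upper bounds is an assumption.
STAGES = [(4, 0), (8, 0), (16, 2), (24, 2), (30, 3)]

def final_stage(mistakes_per_trial, stages=STAGES, needed_in_a_row=2):
    """Replay per-trial mistake counts and return the index of the stage reached.

    A return value of len(stages) means the last stage was passed,
    i.e. the subject would proceed to the test phase.
    """
    stage, streak = 0, 0
    for mistakes in mistakes_per_trial:
        if stage == len(stages):
            break                                   # training already completed
        _, allowed = stages[stage]
        streak = streak + 1 if mistakes <= allowed else 0
        if streak == needed_in_a_row:               # criterion met on consecutive trials
            stage, streak = stage + 1, 0
    return stage

# Hypothetical training history: one failed trial at the 24-tap stage
print(final_stage([0, 0, 0, 0, 2, 1, 3, 2, 2, 3, 3]) == len(STAGES))
```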

Test phase 1 (Conditions: No stimulus, 400 ms-ISI, 500 ms-ISI and 600 ms-ISI): We first conducted a synchronized tapping experiment for the No stimulus, ISI-400 ms, ISI-500 ms and ISI-600 ms conditions. In the test phase, one session consisted of one training trial followed by three test trials. The procedure for a training trial was exactly the same as that for tapping 30 times in the training phase. The three test trials were also exactly the same as a training trial, except that while the chimpanzees were tapping the keyboard, an auditory distractor for one of the four conditions (No stimulus, 400 ms-ISI, 500 ms-ISI and 600 ms-ISI) was played. The chimpanzees were rewarded whenever they completed 30 taps following the lit keys, regardless of the distractor sound. The same distractor sound was played throughout a session. We conducted four sessions per day, one for each of the four conditions, and the order of the four conditions was randomized every day. We tested for six days, so we collected six sets of three trials for each condition (thus, 540 taps in total for each condition).
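The session structure and the resulting tap count can be sketched as follows. The function names are hypothetical and the shuffling method is an assumption; the text states only that the order of the four conditions was randomized every day.

```python
import random

CONDITIONS = ["No stimulus", "400 ms-ISI", "500 ms-ISI", "600 ms-ISI"]

def make_schedule(days=6, seed=None):
    """One session per condition per day, in a freshly shuffled order each day."""
    rng = random.Random(seed)
    schedule = []
    for _ in range(days):
        order = CONDITIONS[:]          # copy so the master list is untouched
        rng.shuffle(order)
        schedule.append(order)
    return schedule

def taps_per_condition(days=6, test_trials=3, taps_per_trial=30):
    """Taps collected for one condition across the whole test phase."""
    return days * test_trials * taps_per_trial

print(taps_per_condition())            # 6 days x 3 test trials x 30 taps = 540
```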

Test phase 2 (condition: random ISI): After the first experiment, we conducted a second experiment using the random ISI condition. The procedure was the same as in the first experiment, but only one session was conducted per day. In the random ISI stimulus, seven ISIs (i.e., 200 ms, 300 ms, 400 ms, 500 ms, 600 ms, 700 ms and 800 ms) were randomly sequenced. We again tested for six days and collected 540 taps.
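A random-ISI distractor of this kind could be generated as in the sketch below. The function name is hypothetical, and drawing each interval independently and uniformly from the seven values is an assumption; the text does not say whether the sequence was sampled with replacement or built from shuffled blocks.

```python
import random

ISI_CHOICES_MS = [200, 300, 400, 500, 600, 700, 800]

def random_isi_onsets(n_pulses, seed=None):
    """Onset times (ms) of n_pulses pulses separated by randomly chosen ISIs."""
    rng = random.Random(seed)
    onsets, t = [0], 0
    for _ in range(n_pulses - 1):
        t += rng.choice(ISI_CHOICES_MS)   # independent uniform draw (assumption)
        onsets.append(t)
    return onsets

print(random_isi_onsets(8, seed=1))
```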

Analysis

The data were imported into MATLAB using the MIDI toolbox and only the tap onset times were analyzed. We used MATLAB with the CircStat Toolbox for circular analysis and SPSS 13.0 for other statistical analyses such as t-tests. For circular analysis, we transformed all ITIs (inter-tap intervals) from all data onto a circular scale (in degrees: −180° to +180°; in radians: −π to π) with all stimulus beats aligned at 0° (Figure 3).
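The circular transformation and the Rayleigh test with unspecified mean direction can be sketched in plain Python as follows. This is not the MATLAB code used in the study: the function names and tap times are hypothetical, the stimulus is assumed to be isochronous with its first beat at a known time, and the P value uses a standard closed-form approximation that may differ slightly from toolbox output.

```python
import math

def taps_to_angles(tap_onsets_ms, isi_ms, first_beat_ms=0.0):
    """Map tap onsets to angles in [-pi, pi), with every stimulus beat at 0."""
    angles = []
    for t in tap_onsets_ms:
        frac = ((t - first_beat_ms) / isi_ms) % 1.0   # position within the beat cycle
        if frac >= 0.5:
            frac -= 1.0                               # late taps count as "before next beat"
        angles.append(2.0 * math.pi * frac)
    return angles

def rayleigh_test(angles):
    """Rayleigh test for non-uniformity; returns (z, approximate p)."""
    n = len(angles)
    c = sum(math.cos(a) for a in angles)
    s = sum(math.sin(a) for a in angles)
    big_r = math.hypot(c, s)                          # resultant length, 0..n
    z = big_r ** 2 / n
    p = math.exp(math.sqrt(1 + 4 * n + 4 * (n ** 2 - big_r ** 2)) - (1 + 2 * n))
    return z, p

# Hypothetical taps scattered closely around a 600 ms beat
taps = [0, 610, 1190, 1805, 2395, 3010, 3590, 4200]
print(rayleigh_test(taps_to_angles(taps, isi_ms=600)))
```

A small P value indicates only that the taps are not uniformly distributed around the beat cycle; whether they cluster at the beat itself requires the test with a specified mean direction.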

Monte Carlo simulation: Because we conducted 6 trials for each condition, we randomly selected one trial sequence and re-paired it with the 600 ms-ISI distractor sequence. A Monte Carlo test with 10,000 such simulated experiments yielded a distribution of the number of “accurate” taps falling within 60 ms before or after the onset of the sound, from which we computed the P value of Ai's actual data with the 600 ms-ISI stimuli. We expected that the number of “accurate” taps with the 600 ms-ISI stimuli would be higher than in the Random condition. However, the P value (i.e., P = 0.033, one-tailed) did not survive Dunn-Sidák adjustment of the significance level.
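One possible reading of this procedure is sketched below. The function names are hypothetical, and the resampling scheme (drawing comparison trials with replacement and scoring them against a 600 ms beat grid starting at 0 ms) and the add-one correction in the P value are assumptions; the description above does not fix these details.

```python
import random

def count_accurate(taps_ms, isi_ms=600.0, window_ms=60.0):
    """Number of taps within window_ms of the nearest beat of an isochronous grid."""
    count = 0
    for t in taps_ms:
        offset = t % isi_ms
        if min(offset, isi_ms - offset) <= window_ms:
            count += 1
    return count

def monte_carlo_p(observed_trials, null_trials, n_sim=10_000, seed=None):
    """One-tailed P value: how often resampled trials match or beat the observed count."""
    rng = random.Random(seed)
    observed = sum(count_accurate(trial) for trial in observed_trials)
    hits = 0
    for _ in range(n_sim):
        # Re-pair randomly chosen comparison trials with the 600 ms grid (assumption)
        simulated = sum(count_accurate(rng.choice(null_trials))
                        for _ in observed_trials)
        if simulated >= observed:
            hits += 1
    return (hits + 1) / (n_sim + 1)      # add-one correction avoids P = 0

# Hypothetical data: three on-beat trials against one off-beat comparison trial
print(monte_carlo_p([[0, 600, 1200]] * 3, [[300, 900, 1500]], n_sim=999, seed=0))
```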

References

McNeill, W. H. Keeping together in time: Dance and drill in human history. Cambridge, MA: Harvard University Press. (1995).

Phillips-Silver, J. & Trainor, L. J. Feeling the beat: Movement influences infant rhythm perception. Science. 308, 1430 (2005).

Haidt, J., Seder, J. P. & Kesebir, S. Hive psychology, happiness and public policy. J. Legal Stud. 37, S133–S156 (2008).

Wiltermuth, S. S. & Heath, C. Synchrony and cooperation. Psychol Sci. 20, 1–5 (2009).

Anderson, J. R., Myowa-Yamakoshi, M. & Matsuzawa, T. Contagious yawning in chimpanzees. Biol Lett. 271, S468–S470 (2004).

Myowa-Yamakoshi, M., Tomonaga, M., Tanaka, M. & Matsuzawa, T. Imitation in neonatal chimpanzees (Pan troglodytes). Developmental Science. 7, 437–442. (2004).

Paukner, A., Suomi, S., Visalberghi, E. & Ferrari, P. Capuchin monkeys display affiliation toward humans who imitate them. Science. 325, 880–883 (2009).

Zarco, W., Merchant, H., Prado, L. & Mendez, J. C. Subsecond timing in primates: comparison of interval production between human subjects and rhesus monkeys. J Neurophysiol. 102, 3191–3202 (2009).

de Waal, F. B. M. The communicative repertoire of captive bonobos (Pan paniscus) compared to that of chimpanzees. Behaviour 106, 183–251 (1988).

Geissmann, T. Duet songs of the siamang, Hylobates syndactylus: I. Structure and organization. Primate Rep. 56, 33–60 (2000).

Van Lawick-Goodall, J. The Quest for Man. Phaidon, London, p.131–169 (1975).

Martinez, L. & Matsuzawa, T. Visual and auditory conditional position discrimination in chimpanzees (Pan troglodytes). Behav Processes. 82, 90–94.

Ludwig, V., Adachi, I. & Matsuzawa, T. Visuoauditory mappings between high luminance and high pitch are shared by chimpanzees (Pan troglodytes) and humans. Proc Natl Acad Sci USA. 108, 20661–20665 (2011).

Fisher, N. I. Statistical Analysis of Circular Data (Cambridge University Press, 1993).

Toiviainen, P. & Snyder, J. S. Tapping to Bach: Resonance-based modeling of pulse. Music percept. 21, 43–80 (2003).

Repp, B. H. Does an auditory distractor sequence affect self-paced tapping? Acta Psychol. 121, 81–107 (2006).

Repp, B. H. Sensorimotor synchronization: A review of the tapping literature. Psychon Bull Rev. 12, 969–992 (2005).

Patel, A. D., Iversen, J. R., Bregman, M. R. & Schulz, I. Experimental evidence for synchronization to musical beat in a nonhuman animal. Curr Biol. 19, 827–830 (2009).

Schachner, A., Brady, T. F., Pepperberg, I. M. & Hauser, M. D. Spontaneous motor entrainment to music in multiple vocal mimicking species. Curr Biol. 19, 831–836 (2009).

Hasegawa, A., Okanoya, K., Hasegawa, T. & Seki, Y. Rhythmic synchronization tapping to an audio-visual metronome in budgerigars. Sci Rep. 1, 1–8 (2011).

Matsuzawa, T., Tomonaga, M. & Tanaka, M. Cognitive development in chimpanzees. Tokyo, Japan: Springer. (2006).

Acknowledgements

This study and the preparation of this manuscript were financially supported by Grants-in-Aid for Scientific Research from the Japanese Ministry of Education, Culture, Sports, Science and Technology (MEXT); the Japan Society for the Promotion of Science (JSPS) (20-1240, 16002001, 19300091, 20002001, 23220006, 24000001, 24700260); and by MEXT Grants-in-Aid for the global COE program (A06 and D07). We thank Dr. James Anderson for his help with the manuscript. We thank the staff of the Language and Intelligence Section for their help and invaluable comments. We also thank the Center for Human Evolution Modeling Research at the Primate Research Institute for daily care of the chimpanzees.

Author information

Contributions

Y.H., M.T. and T.M. designed the experiment and discussed the data. Y.H. conducted the experiment, analyzed the data and wrote the paper. M.T. and T.M. also supervised and financially supported the experiment.

Ethics declarations

Competing interests

The authors declare no competing financial interests.

Electronic supplementary material

Supplementary Information

Supplemental Video_S1

Supplementary Information

Supplemental Video_S2

Rights and permissions

This work is licensed under a Creative Commons Attribution-NonCommercial-NoDerivs 3.0 Unported License. To view a copy of this license, visit http://creativecommons.org/licenses/by-nc-nd/3.0/

About this article

Cite this article

Hattori, Y., Tomonaga, M. & Matsuzawa, T. Spontaneous synchronized tapping to an auditory rhythm in a chimpanzee. Sci Rep 3, 1566 (2013). https://doi.org/10.1038/srep01566

DOI: https://doi.org/10.1038/srep01566
