Abstract

Ideally, neuronal functions would be studied by performing experiments with unconstrained animals whilst they behave in their natural environment. Although this is not feasible currently for most animal models, one can mimic the natural environment in the laboratory by using a virtual reality (VR) environment. Here we present a novel VR system based upon a spherical projection of computer generated images using a modified commercial data projector with an add-on fish-eye lens. This system provides equidistant visual stimulation with extensive coverage of the visual field, high spatio-temporal resolution and flexible stimulus generation using a standard computer. It also includes a track-ball system for closed-loop behavioural experiments with walking animals. We present a detailed description of the system and characterize it thoroughly. Finally, we demonstrate the VR system’s performance whilst operating in closed-loop conditions by showing the movement trajectories of the cockroaches during exploratory behaviour in a VR forest.

Introduction

A traditional approach for studying neuronal mechanisms is to securely fix, anesthetize and/or dissect animals to allow stable electrophysiological recordings to be made whilst they are presented with simplified sensory stimuli. More complex stimuli with naturalistic spatio-temporal structures, introduced as an improvement upon the experimental paradigm1,2,3,4,5,6,7, are now acknowledged to be essential for gaining a more complete understanding of sensory processing. In addition to the stimulus properties, sensory processing is also known to be modulated by the behavioural state of an animal (see e.g.8,9,10,11,12,13,14). Therefore, it would be beneficial to carry out experiments in minimally constrained conditions and in naturalistic sensory environments, where animals can affect the environment through their own actions (e.g. walking).

There are numerous examples of neurophysiological experiments in awake but constrained animals. One example of such a controlled experiment is the classical opto-motor paradigm used to study the visual behaviour of tethered insects when they are either walking on a track-ball, or flying attached to a torque-meter15. Because experiments in freely moving animals are still mostly limited to the larger animal models (see eg16,17,18), virtual reality (VR) environments have been recently developed and introduced to the experimental set-ups to provide more naturalistic but well controlled settings19,20,21,22,23,24,25,26.

We present a novel VR system that is based on a commercial data projector and a custom add-on fish-eye lens that projects images to the inner surface of a sphere. It also includes a track-ball to enable closed loop experiments in walking animals. The entire system is computer controlled for flexible stimulus control and easy integration with electrophysiological and behavioural experiments.

Results

The goal of this study was to develop a virtual reality (VR) system suitable for studying the processing of visual information in animal models that possess a fast visual system with a vast visual field, such as flying day-active insects. Therefore, the following design requirements were set: (1) coverage of a large field of view; (2) spatial resolution better than 1° when observed from the animal’s point of view27; (3) image update rates above 100 Hz and no flicker below 200 Hz; (4) bright illumination up to day-light levels; (5) contrast performance within the range of natural environments; (6) flexible stimulus generation software that runs on a standard computer; (7) implementation of closed-loop behavioural paradigms.

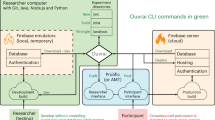

The VR environment that we developed is a spherical projection system, where a data projector is used to display computer generated images on the inner surface of the sphere (Fig. 1a,b). To achieve the high image refresh rate required for animal models with fast vision, the projector was modified to operate at 360 Hz in greyscale mode (see Methods). Previously described mirror-based projection systems20,21,28 were used as a starting point for the optical design (Fig. 1c,d). However, they were found to be unsuitable for the system because of the large projection angle, the drawing capability requirements and the mirror blocking a large part of the visual field. We were not able to verify whether these limitations were specific to our sub-optimal mirror design or are inherent to the mirror-based approach, because the earlier descriptions of such systems20,21,28 do not provide any simulated or measured data about the drawing capability or image quality on the projection surface. Instead of a mirror-based approach, a fish-eye type lens projection was selected, which to our knowledge has not been described earlier for spherical projection systems (see also29 for an early account of lens-based projection onto a cylindrical screen). The fish-eye lens can be used to project images over the whole spherical surface when placed at the top (Fig. 1e). The animal observes the VR environment from the centre of the sphere, which covers 65 % of the full visual field equidistantly (−135 to 135 degrees in azimuth and −64 to 57 degrees in elevation; Fig. 2a). It should be noted that the areas used for the instrumentation overlap with locations that animals often cannot see (such as below and behind them).
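The 65 % coverage figure can be checked from the stated azimuth and elevation limits. The following is a minimal sketch, assuming the projection area is an azimuth/elevation rectangle on an ideal sphere viewed from its centre; the function name is illustrative and not part of the VR software.

```python
import math


def covered_fraction(az_min_deg, az_max_deg, el_min_deg, el_max_deg):
    """Fraction of the full sphere covered by an azimuth/elevation rectangle."""
    az_span = math.radians(az_max_deg - az_min_deg)
    # Solid angle of a latitude band limited in azimuth:
    # az_span * (sin(el_max) - sin(el_min)).
    band = math.sin(math.radians(el_max_deg)) - math.sin(math.radians(el_min_deg))
    return az_span * band / (4.0 * math.pi)


# Limits quoted in the text: -135..135 deg azimuth, -64..57 deg elevation.
fraction = covered_fraction(-135, 135, -64, 57)
print(f"covered fraction of the visual field: {fraction:.2f}")
```

Under these assumptions the result is close to 0.65, in agreement with the value given in the text.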

VR system and projection optics.

(a) 3D model of the VR system showing the projector with add-on lens, spherical projection surface, track ball, microscope and micromanipulator. (b) Photograph of a behavioural experiment with the forest scene; the cockroach is on a track ball, tethered to a metal wire, and is circling the forest edge. (c) Ray tracing simulations of the projection optics design with a flat mirror and an aspherical angular amplification mirror20,21,28; the mirror blocks ca. 45 degrees of the visual field. (d) Design with two aspherical mirrors28 for angular amplification, which blocks a smaller part of the visual field than in (c). (e) The fish-eye lens design.

Projection area and spatial resolution.

(a) Projection area shown in grey rectangle covers ca. 65 % of the full visual field. (b) Pixel size at the projection surface as a function of elevation; squares represent width and circles height of each pixel; grey squares represent the simulated RMS spot size of the add-on optics. (c) Simulated spots at the projection surface as a function of elevation for a point-like white light source; geometric and chromatic aberrations are seen as separation of red, green and blue colours.

As a measure of the spatial resolution of the VR, the sizes of individual pixels were measured on the projection surface. As observed from the animal’s perspective, the pixels were found to be ca. 0.8 degree squares at low elevations but became horizontally compressed towards higher elevations (Fig. 2b). This is attributable to the projection geometry where, at the extreme, 600 pixels are compressed to the ring surrounding the lens. However, it should be noted that the geometrical mapping of the image rendering software displays images correctly on the surface in spite of the varying pixel density. At the highest elevations the pixels were also found to be vertically stretched, which can be attributed to the small incidence angle of the projecting light that caused partial reflection (Fig. 2b). The drawing performance of the projection optics was also simulated with optic design software to investigate its possible contribution to the observed image quality on the projection surface. In such a simulation, the image of an infinitely small point of white light on the projection surface is calculated, which provides information on the spatial resolution of the optics and possible optical aberrations. The results showed that the drawing performance decreased from the ca. 0.28° root-mean-square (RMS) spot diameter at the equator to ca. 0.5° towards the lower and higher elevations (Fig. 2b,c). The attained resolution is an order of magnitude worse than the ∼60 µm diffraction limited theoretical performance boundary for the design. The simulations showed that the performance was reduced by the geometrical and chromatic aberrations, which changed from spherical aberration at the low elevations to predominantly astigmatism at the equator and higher elevations (Fig. 2c). 
These aberrations were difficult to correct completely through the lens optimization because the distance from the lens to the surface of the sphere varies as a function of elevation. However, the drawing performance of the add-on lens still exceeds the projector’s pixel resolution over most of the visual field, so that the projector acts as the limiting factor for the spatial resolution in the system (Fig. 2b).
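The conversion between a pixel size measured on the projection surface and its angular size seen by the animal can be sketched as follows. This is an illustration only: it assumes the animal's eye sits exactly at the centre of the 400 mm sphere (Methods) and that a pixel is a small flat patch viewed head-on; the function names are hypothetical.

```python
import math

SPHERE_RADIUS_MM = 400.0 / 2.0  # 400 mm sphere diameter (from Methods)


def angular_size_deg(size_mm, distance_mm=SPHERE_RADIUS_MM):
    """Angle subtended at the sphere centre by a patch of the given width."""
    return math.degrees(2.0 * math.atan(size_mm / (2.0 * distance_mm)))


def size_for_angle_mm(angle_deg, distance_mm=SPHERE_RADIUS_MM):
    """Width on the surface that subtends the given angle at the centre."""
    return 2.0 * distance_mm * math.tan(math.radians(angle_deg) / 2.0)


# A ca. 0.8 degree pixel corresponds to a patch a little under 3 mm wide.
pixel_width_mm = size_for_angle_mm(0.8)
print(f"0.8 deg pixel width on the surface: {pixel_width_mm:.2f} mm")
```

The same conversion can be used to compare calliper measurements against the 1° design requirement.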

The surface brightness and contrast of the displayed images were measured using a spectrometer coupled to a fibre optic probe (see Methods). The spectrum of the greyscale images reflected from the projection surface shows several distinct peaks over the visible range and represents the “spectral fingerprint” of the projector lamp (Fig. 3a). The maximum surface brightness, i.e. the maximum irradiance of the projection surface, was measured to be 4.0 W/m², which corresponds roughly to the irradiance on the earth’s surface during a cloudy day. Surface brightness was found to vary as a function of elevation, which was expected because of the increasing pixel density at the higher elevations (Fig. 3b). The stimulus brightness was also measured for increasing input signal levels, which resulted in a typical nonlinear relation, the gamma curve (Fig. 3c). The input versus brightness curve was linearised using gamma correction, and an additional software calibration was applied that partially corrected the elevation effect in brightness. It should be noted that any remaining smooth and moderate brightness gradients are not expected to significantly affect visual behaviours (see e.g.7 for optic flow processing). The Michelson contrasts of the projected images were characterized by measuring the irradiance values for bright patches of various sizes on a dark background (and vice versa). The contrast was very high, with values close to 1, for small bright patches on the dark background and decreased steadily with an increasing ratio of bright vs. dark areas, until saturating at ca. 0.2 (e.g. 0.65, 0.35 and 0.2 for bright to dark area ratios of 1/10, 1/3 and 2/3, respectively; Fig. 3d). This decrease in contrast is attributable to the light integrating nature of the diffusely reflecting spherical projection surface, which is an unavoidable consequence of the spherical projection geometry. 
Importantly, the measured image contrasts are still well within the range of naturally occurring contrasts30.
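The gamma linearisation described above can be illustrated with a lookup table. This is a minimal sketch, assuming a pure power-law response with the gamma value of 2.56 reported for the fit in Fig. 3c; it ignores any black-level offset and the additional elevation calibration, and the function names are hypothetical rather than taken from the VR software.

```python
GAMMA = 2.56  # gamma value of the fitted curve (Fig. 3c)


def build_gamma_lut(gamma=GAMMA, levels=256):
    """Map each desired linear level to the input level that should be sent."""
    top = levels - 1
    return [round(top * (level / top) ** (1.0 / gamma)) for level in range(levels)]


def displayed_fraction(input_level, gamma=GAMMA, levels=256):
    """Assumed projector model: relative irradiance for a given input level."""
    return (input_level / (levels - 1)) ** gamma


lut = build_gamma_lut()
# Requesting half of the maximum brightness needs a much higher input level.
half_input = lut[128]
print(half_input, round(displayed_fraction(half_input), 3))
```

With the table applied, equal steps in the requested level give approximately equal steps in irradiance, up to the 8 bit quantisation.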

Characterization of the surface brightness and contrast.

(a) Spectrum of the grey scale image at the projection surface for 100% brightness full field stimulus. (b) Projection surface irradiance as a function of elevation for 100% brightness full field stimulus. (c) Projection surface irradiance as a function of input intensity level; line shows fit of the gamma curve with the gamma value of 2.56. (d) Contrast as a function of the area of the 100% brightness stimulus patch on a dark background or dark patch on a 100% bright background.

The temporal performance of the VR system was characterized by monitoring the projected stimuli with a photodiode. Full field stimuli of intermediate and full brightness were presented as ca. 6 ms long sequences of three experimenter controlled frames, each followed by a ca. 2 ms white segment controlled by the projector hardware (Fig. 4a; see Methods). Fast intensity fluctuations within each frame are caused by the digital encoding of the light intensity that is implemented in the projector hardware. However, this fast fluctuation takes place on a microsecond time scale, which is too fast to be perceived by any known visual system, and becomes integrated in the visual system as a constant intensity level. The power spectrum of the data was calculated for the full field stimulus of maximum brightness, which revealed a 120 Hz flicker attributable to the uncontrollable white segment (Fig. 4b). Data from the stimulus with intermediate brightness showed a further increase in the flicker (Fig. 4a), which leads to corresponding increases in the power spectrum at 120 Hz and its harmonics (Fig. 4b). Note that the digital implementation of the light intensity encoding leads to a signal analogous to digital white noise, which gives rise to flat power spectra.

Measurements of the stimulus intensity flickering.

(a) Light intensity fluctuations measured for the full field stimuli with brightness of 100% (top) and 50% (bottom). Coloured bar represents ca. 2 ms colour segments used to encode the sequential image frames and white corresponds to white segment; note that intensity varies from dark to full brightness. (b) Power spectra of the 0% (black), 50% (blue) and 100% (red) brightness full field stimuli show large power peaks at 120 Hz and its harmonics that are associated with the white segment.

Intracellular in vivo recordings were carried out in the photoreceptors of the blowfly (Calliphora vicina), an insect with one of the fastest known visual systems31,32,33. Our logic was that if their photoreceptors do not respond to the rapid fluctuations in the light intensity attributable to the digital light intensity encoding or the 120 Hz flicker associated with the white segment, the VR can be considered to be suitable for any animal model with fast vision. Step-like full field stimuli from darkness to varying brightness levels were used to elicit photoreceptor responses. No response to the rapid light intensity encoding fluctuations was observed but, after the initial transient response, the photoreceptors’ membrane potentials were locked to the 120 Hz flicker (Fig. 5a). The flicker responses were largest at intermediate brightness values, which resulted from the proportionally larger intensity fluctuations in the stimulus (Fig. 5b). To address this problem, the 2nd generation version of the same projector model was used, in which the manufacturer had removed the white segment by modifying the projector’s firmware (see Methods). Intracellular photoreceptor recordings made using the new projector model to present the stimulus showed no 120 Hz ripple in the step responses (Fig. 5d,e). The differences in the voltage waveforms between the two projectors were attributable to the reduced background light and higher brightness of the stimuli in the measurements with the 2nd generation model. Analysis of the power spectrum of responses to steady light using the older and the new projector model also showed that the peak in the photoreceptor voltage response power related to the 120 Hz flicker had decreased by ca. four orders of magnitude (Fig. 5c,f). Therefore, it can be concluded that the developed VR equipped with the 2nd generation projector model enables stimulation of even the fastest visual systems at a high 360 Hz frame rate and without flicker artefacts.

120 Hz flicker is detected by the blowfly photoreceptors.

(a) Photoreceptor voltage responses with the 1st generation projector for full field step stimuli from darkness to varying fractions of the maximum brightness; each trace is an average of 10 trials; stimulus is shown below. (b) Photoreceptor voltage response (black) and photodiode signal (red) with the 1st generation projector for full field stimulus of constant 100% (top) and 18% (bottom) brightness; photodiode signal off-set and scale are arbitrary. (c) Power spectra of the photoreceptor voltage responses to full field stimuli with 100% (black) and 18% (red) brightness; the 120 Hz peak and its harmonics are clearly visible. (d)–(f) Similar measurements as in (a)–(c), but with the 2nd generation projector; scales in (d) and (e) are as in (a) and (b), respectively; note that the residual flicker detected by the photodiode is too fast to be seen by the photoreceptors.

Finally, cockroaches (Periplaneta americana) were used as an animal model to demonstrate the suitability of the VR system for behavioural studies with walking animals. Although it is not an animal with fast vision, the cockroach was selected because it has robust walking behaviour on the track-ball and it was readily available in the laboratory. The visual system of cockroaches is too slow for them to detect the 120 Hz flicker34. Therefore, the VR system with the 1st generation projector installed was used for these experiments. The cockroaches were tethered from the pronotum and placed on a track-ball system, which enabled them to navigate in the VR forest (Fig. 1b; Supplementary video S1). Although the track-ball weight exceeded the optimal weight for the cockroach35, they had no difficulties in rotating the nearly frictionless track-ball. Each experiment started in the middle of the forest (Fig. 6a,b). The trees were disproportionately small compared to a cockroach, but they can still be considered as concrete visual objects. The cockroaches remained active in the VR for extended periods of time, typically showing bursts of walking activity interspersed with periods of inactivity (Fig. 6c,d). While approaching a tree the cockroaches would typically antennate towards it, presumably trying to establish tactile contact with the visual object (Supplementary video S1). Typically, they avoided the trees by walking around them, but occasionally they continued to approach a tree and eventually walked through it, possibly because of the lack of tactile feedback from the antennae. This outcome was not prevented by the software. This behaviour can be seen in the track plots (Fig. 6a,b) and in the histograms of cockroach distance to the tree centre (Fig. 6e,f). Note that the second individual in particular spent a lot of time close to the tree trunk (Fig. 6f). 
At a larger scale the cockroaches fixated on the darkness surrounding the forest and headed towards the forest edge along a fairly straight route (Fig. 6a,b). Once at the edge (Fig. 1b), they typically started to follow it, often walking around the perimeter of the virtual world several times (Fig. 6a,b; Supplementary video S2). None of the cockroaches returned to the forest after they had found the dark forest edge, which is not surprising considering their innate preference for dark areas in the environment.

Cockroach exploratory behaviour in the VR environment.

(a) Cockroach no. 1 and (b) no. 2 exploring the virtual forest; red crosses mark 20 s time progression; circles mark the locations of the trees; the world is dark outside the forest (see also Supplementary videos; note that the videos are not from the data shown in the figure). (c) and (d) Movement speed for the trajectories shown in (a) and (b), showing bouts of activity followed by periods of inactivity. (e) and (f) Histograms of the distance to the tree centre for the data in (a) and (b); note that the trees were rectangles, with their larger dimension shown in the histogram as a grey bar.

Discussion

We have successfully developed a VR system for animal models that have fast vision, such as day-active insects. The system provides good spatio-temporal performance and covers most of the relevant parts of the visual field, which is known to be essential for the optic flow dependent behaviours in insects15,26,36. The spherical VR also has equidistant projection geometry, which removes the need for the real-time perspective distortion corrections required with e.g. flat computer screens26; such corrections could become a limiting factor for the spatio-temporal performance in a high frame rate system such as the one presented here. The VR system can also produce stimuli with brightness equivalent to a cloudy day and contrasts comparable to the natural environment. Finally, it is controlled with a standard computer and software that provide flexible stimulus generation and control possibilities. These technical features, coupled with demonstrable success in both behavioural and electrophysiological experiments (see also37), allow us to conclude that the system is suitable for presenting animals with behaviourally relevant and naturalistic stimuli. Further details of the design, including manufacturing instructions, are available from the authors upon request to enable system replication.

A temporal flicker problem was identified during the system characterization, and electrophysiological recordings from blowfly photoreceptors verified that the flicker is detectable by animals with very fast vision. However, this problem was avoided by replacing the original projector with the 2nd generation model, which is commercially available from the projector manufacturer. Because the 1st generation projector was already fully adequate for the cockroach and several other animal models used in the laboratory, we decided not to replace it with the newer model but instead to build a second VR system based on the 2nd generation model for the animals with faster vision (work in progress). The main remaining limitation of the developed VR system is the inability to generate colour stimulation, which was sacrificed to increase the frame rate to 360 Hz. However, many visual behaviours are not dependent upon colour information (e.g. optic flow processing15,36,38), and colour filters can be used in the lens assembly for simple full field manipulation of the spectral content of the stimuli.

Other approaches have been described in the literature for implementing VR environments, but a detailed comparison to our system is not possible because of the lack of quantitative data on the performance of these VR systems in the published results. A LED-based virtual reality system has been presented that also covers a large fraction of the visual field and in which the stimulus is controlled through a modified VGA graphic card7 (see also39). Its main advantages are the flicker free image with high brightness but, on the other hand, its disadvantages include a limited spatial resolution, as well as requirements for complex custom instrumentation and control software. It should also be noted that our VR system already achieves day-light level brightness, which can be increased further by using the 2nd generation projector, which has a brighter lamp.

Data projector based VR systems that include a track-ball system have also been described for rodents20,21. However, their temporal performance is too low for animals with fast vision, which would see the typical 60 Hz frame rate as high contrast flicker (see also19). In addition, based on our simulations, the mirror based projection optics used in these systems is expected to produce lower image quality than the novel fish-eye design of our VR system. Therefore, the lens based design is expected to provide significant improvement in the image quality of spherical VR systems in general. A VR system was also recently reported that uses two custom DLP projector engines to create a panoramic field of view with back-projecting spherical surfaces22. It is reported to produce greyscale frame rates of up to 8000 frames/s in a binary mode; however, this corresponds to only 60 Hz frame rate when 8 bit intensity encoding is used as in our system. It also provides stimulation options for other sensory modalities but, for the visual stimulation, our system provides more flexible stimulus control and comparable spatio-temporal performance with a much simpler and more cost efficient implementation.

Our future method development work will focus on implementing multisensory stimulation possibilities into the VR. For example, an olfactometer will be included to enable stimulation of the olfactory and mechanosensory pathways. The VR based approach will be used extensively in our future work to replicate traditional arena or maze experiments in the VR, as well as to implement more complex experiments enabled by the easy real-time manipulation of the visual environment (e.g. a visual object that the animal has fixated can be easily moved to a different location or modified in appearance). Importantly, the behaving animals can be constrained in the VR to enable simultaneous electrophysiological measurements, which can be used to investigate the neuronal mechanisms behind the behaviours.

Methods

VR design

The system involves the projection of computer generated images onto the inner surface of a spherical projection screen using a data projector in combination with a fish-eye lens (Fig. 1a). An animal is placed in the centre of the sphere, so that from the animal’s viewpoint the screen covers the visual field equidistantly. A sphere with 400 mm diameter was built from glass fibre by laminating the fibres on top of a positive half-sphere mould. The two halves of the sphere were attached to each other and sections from the resulting full sphere were subsequently removed from the top and the bottom to accommodate the lens and the animal holder, respectively (Fig. 1a). In addition, a 90° section was removed from the back to provide access for a stereo microscope and micromanipulators required for the electrophysiology. The inner surface of the sphere was polished and coated with barium sulphate based paint (ODP97-1.0, Gigahertz-Optic GmbH) that forms a high-quality white Lambertian surface. This reduces directional reflections and increases surface brightness.

A fish-eye type add-on lens was designed to be used in conjunction with the projector’s own lens (together called the projection optics). ZEMAX® (Zemax Development Corp) software was used for the optical design and optimization. The optical properties of the projector lens were measured to allow it to be replaced by a thin lens equivalent in the optics design. At the image source, the projection optics was optimized to use the approximately 10.5 mm diameter circle of the projector’s digital micromirror device (DMD), which corresponds to a circle of 600 pixels in diameter. This allows the system to take full advantage of the available resolution of the SVGA projector. The designed add-on lens assembly includes one catalogue singlet (KXP-189, Newport Corp.), a plane mirror, two custom doublets and two custom singlets (Fig. 7a,b). The effective focal length of the projection optics is 4.1 mm. The lens assembly also includes slots for placing optical filters (Fig. 7a), such as neutral density and colour filters.

Fish-eye lens and trackball schematics.

(a) Schematic cross section of the add-on fish-eye lens assembly; colour filter slot is highlighted in grey colour. (b) Custom lenses in the assembly shown in more detail; R refers to surface curvature of the spherical lens. (c) Section through a 3D wire model of the track-ball. Pressure port at the lower part is for coupling of the air supply to provide low friction air cushion; one of the two sensors is shown in the cross section.

Animals with fast vision, such as flying insects, have a flicker fusion rate that exceeds the frame rate of commercial data projectors27,32,33. A moderately priced digital light processing (DLP) type data projector was selected for the VR system (DepthQ®, Lightspeed Design Inc). It can produce a 120 Hz frame rate with 24 bit colour reproduction and SVGA resolution. The colour filter was removed from the projector’s image engine to harness the colour production for tripling the frame rate of 8 bit greyscale images to 360 Hz. Custom software was developed to control the image production, based on the open-source Ogre3d graphic rendering engine (http://www.ogre3d.org/). The software runs on a standard computer with a Windows operating system (Microsoft Inc.). The rendering is based on a cube map with six cameras for each frame. The VR environment is created as a cube of specific size, where each camera is facing one of the inner faces of the cube. A geometrical transformation is included in the compiled VR control files that subsequently morphs the cube into a sphere. In addition, the control files include functional data that can be used to control aspects of the VR environment, such as moving objects or changing the viewpoint within the VR. The pixels that are not “seen” by the projection optics and the ones that would project to the sectors removed from the sphere or directly to the animal are set to black. The software creates greyscale images with 8 bit intensity encoding that are displayed sequentially on the colour channels in the order of blue, green and red to produce the 360 Hz frame rate. It should be noted that in the 1st generation projector model the hardware implemented white channel always follows the red channel and its brightness is automatically determined, based on the intensities of the three colour channels. 
The white segment is eliminated in the 2nd generation special model that was recently made available by the projector manufacturer (result of an independent development project; courtesy of Anthony Leonardo, Janelia Farm Research Campus, HHMI and Lightspeed Design Inc.).
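The channel-sequential encoding described above can be sketched as packing three consecutive greyscale frames into the blue, green and red channels of one projector frame. This is a minimal illustration rather than the authors' Ogre3d implementation: frames are plain nested lists, the output pixel layout of (red, green, blue) tuples is an assumption, and the function names are hypothetical.

```python
def pack_greyscale_triplet(first, second, third):
    """Pack three 8 bit greyscale frames into one RGB frame.

    The projector shows the channels in the order blue, green, red, so the
    earliest frame goes to blue and the latest to red.
    Returns rows of (red, green, blue) tuples (assumed pixel layout).
    """
    if not (len(first) == len(second) == len(third)):
        raise ValueError("frames must have the same number of rows")
    packed = []
    for row_b, row_g, row_r in zip(first, second, third):
        if not (len(row_b) == len(row_g) == len(row_r)):
            raise ValueError("frames must have the same number of columns")
        packed.append([(r, g, b) for b, g, r in zip(row_b, row_g, row_r)])
    return packed


def effective_frame_rate(projector_hz=120, frames_per_rgb_frame=3):
    """Greyscale frame rate obtained from the colour frame rate."""
    return projector_hz * frames_per_rgb_frame


# Three 1x2 greyscale frames in presentation order.
rgb_frame = pack_greyscale_triplet([[10, 11]], [[20, 21]], [[30, 31]])
print(rgb_frame, effective_frame_rate())
```

With the 120 Hz colour frame rate stated in the text, three greyscale frames per colour frame give the 360 Hz greyscale rate.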

A track-ball was designed according to the previously described approach using computer mice and built to permit closed-loop behavioural experiments with walking animals (Fig. 7c)20,21,40,41,42,43. It is placed on a heater platform that can control the temperature inside the VR system within a range of 20–40 °C (Fig. 1b). The track ball includes an air-supported hollow Styrofoam sphere with 93 mm diameter and 4.8 g weight (Fig. 7c), which is placed in the centre of the projection sphere (Fig. 1a,b). The track-ball chassis is milled from PVC plastic. The animal is tethered and placed on top of the nearly frictionless ball. The ball is heavier than what would be optimal for matching its inertia to that of e.g. a cockroach35, but this has not led to difficulties in rotating the ball. Rotations of the ball are measured by two image sensors disassembled from optical computer mice (AMU45EU, Targus Group International Inc.). The image sensors (ADNS-2610) are integrated into the track ball chassis and connected to the VR controlling computer with the USB cables of the computer mice. The sensor frame rate is 1500 frames/s, the resolution is ca. 0.078 degrees and the maximum speed and acceleration are 0.3 m/s and 2.5 m/s², respectively. This performance is sufficient for typical experiments with walking insects (Fig. 7c,d), except for the maximum speed, which may be exceeded e.g. during fast escape responses (∼1 m/s in cockroach). In such cases, the measured movement and the subsequent VR control would be inaccurate. Rotations of the ball are streamed to the memory buffers of the mice and polled by the VR software through the raw input interface of the MS Windows operating system at every vsync event associated with the frame rate update. The rotation values are transformed into control commands for the VR, from which the difference between two frames is calculated and used to move the VR scene accordingly. 
In addition to the closed-loop control, the track-ball can also be used in the open-loop mode, where animal movements are measured but not used to control the VR environment.
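The conversion from sensor readings to a movement of the virtual viewpoint can be sketched as below. The ball diameter, sensor resolution and maximum speed are taken from the text; everything else is an assumption made for illustration, in particular the sensor layout (one sensor behind the animal and one at 90° to its side, both on the ball equator), the sign conventions and all function names. It is not the authors' control code.

```python
import math

BALL_RADIUS_MM = 93.0 / 2.0  # 93 mm ball diameter (from the text)
DEG_PER_COUNT = 0.078        # ca. sensor resolution (from the text)
MAX_SPEED_MM_S = 300.0       # 0.3 m/s sensor limit (from the text)


def counts_to_mm(counts):
    """Convert sensor counts to distance travelled on the ball surface."""
    return BALL_RADIUS_MM * math.radians(counts * DEG_PER_COUNT)


def update_pose(pose, rear_counts, side_counts, closed_loop=True):
    """Advance the virtual pose (x_mm, y_mm, heading_rad) by one frame.

    ASSUMED layout: rear_counts = (dx, dy) from a sensor behind the animal,
    side_counts = (dx, dy) from a sensor 90 degrees to its side.
    """
    forward = counts_to_mm(rear_counts[1])
    sideways = counts_to_mm(side_counts[1])
    # Both sensors see yaw as horizontal motion; average the two readings.
    yaw = math.radians(0.5 * (rear_counts[0] + side_counts[0]) * DEG_PER_COUNT)
    movement = (forward, sideways, yaw)
    if not closed_loop:
        # Open loop: movement is measured but the scene is not updated.
        return pose, movement
    x, y, heading = pose
    heading += yaw
    x += forward * math.cos(heading) - sideways * math.sin(heading)
    y += forward * math.sin(heading) + sideways * math.cos(heading)
    return (x, y, heading), movement


def exceeds_speed_limit(forward_mm, sideways_mm, frame_interval_s):
    """True if the step implies a speed above the sensor's stated maximum."""
    return math.hypot(forward_mm, sideways_mm) / frame_interval_s > MAX_SPEED_MM_S


new_pose, step = update_pose((0.0, 0.0, 0.0), rear_counts=(0, 100), side_counts=(0, 0))
print(new_pose, step)
```

Flagging steps that exceed the speed limit is one simple way to mark the stretches, such as fast escape responses, where the measured movement would be unreliable.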

Technical measurements

Pixel diameters on the projection surface were measured at varying elevations along the 135 degree azimuth. The measurements were done manually using a calliper and repeated several times to increase their reliability (N = 6). Contrast and surface brightness measurements were made with a calibrated spectrometer (USB4000 UV-VIS, Ocean Optics Inc.). Spectrum and surface irradiances with varying brightness levels were measured using the full field stimuli. For the contrast measurements, a white patch stimulus of varying size on a black background or a black patch stimulus on a white background was used. The fibre optic probe (NA = 0.22) of the spectrometer was positioned either 20 or 154 mm from the surface of the sphere, or in the centre of it, for measuring the size of pixels, contrasts and brightnesses, respectively. The contrasts, $c$, were calculated according to the Michelson formula $c = (I_s - I_b)/(I_s + I_b)$, where $I_s$ and $I_b$ are the surface irradiances of the stimulus and the background, respectively.
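The Michelson formula above translates directly into a small helper. The sketch below is illustrative; the function name and the example irradiance values are made up and are not measurements from this study.

```python
def michelson_contrast(stimulus_irradiance, background_irradiance):
    """Michelson contrast c = (Is - Ib) / (Is + Ib)."""
    total = stimulus_irradiance + background_irradiance
    if total <= 0:
        raise ValueError("the summed irradiance must be positive")
    return (stimulus_irradiance - background_irradiance) / total


# Hypothetical irradiances in W/m^2, chosen only to illustrate the formula.
example_contrast = michelson_contrast(3.0, 1.0)
print(example_contrast)
```

For a dark patch on a bright background the same formula returns a negative value, so the magnitude can be taken when the two stimulus polarities are compared.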

Stimuli were also measured with a photodiode. The photodiode signals were amplified and saved to a computer with a 50 kHz sampling rate. Because the photodiodes were only used to monitor the temporal dynamics of the stimulus intensity, they were not calibrated, and their output values are therefore in arbitrary units. The data acquisition in all measurements was carried out with a data acquisition card (PCIe-6259, National Instruments Corp.) and a custom LABVIEW® (National Instruments Corp.) program. Power spectral densities were estimated in MATLAB® (Mathworks Inc.) using Welch’s method with a Hamming window.
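Welch's method averages the periodograms of overlapping, windowed segments of the signal. The sketch below is a plain Python stand-in for the MATLAB analysis, written only to show the steps; the segment length, the 50% overlap, the per-segment mean removal and the synthetic test signal are assumptions, since the text does not state the parameters that were used.

```python
import cmath
import math


def hamming(n):
    """Symmetric Hamming window of length n."""
    return [0.54 - 0.46 * math.cos(2.0 * math.pi * k / (n - 1)) for k in range(n)]


def welch_psd(signal, fs, seg_len=64, overlap=0.5):
    """One-sided power spectral density; returns (frequencies_hz, psd)."""
    window = hamming(seg_len)
    step = max(1, int(seg_len * (1.0 - overlap)))
    # Normalise by the sampling rate and the window power.
    scale = 1.0 / (fs * sum(w * w for w in window))
    n_bins = seg_len // 2 + 1
    acc = [0.0] * n_bins
    n_segments = 0
    for start in range(0, len(signal) - seg_len + 1, step):
        seg = signal[start:start + seg_len]
        mean = sum(seg) / seg_len
        tapered = [(s - mean) * w for s, w in zip(seg, window)]
        # Direct DFT of the windowed segment (slow but dependency-free).
        for k in range(n_bins):
            coeff = sum(x * cmath.exp(-2j * math.pi * k * n / seg_len)
                        for n, x in enumerate(tapered))
            acc[k] += abs(coeff) ** 2 * scale
        n_segments += 1
    if n_segments == 0:
        raise ValueError("signal is shorter than one segment")
    psd = []
    for k, p in enumerate(acc):
        p /= n_segments                  # average over segments
        if 0 < k < seg_len / 2:          # fold negative frequencies in,
            p *= 2.0                     # except at DC and Nyquist
        psd.append(p)
    freqs = [k * fs / seg_len for k in range(n_bins)]
    return freqs, psd


# Synthetic example: a 6250 Hz sinusoid sampled at 50 kHz, as a stand-in
# for an uncalibrated photodiode trace.
FS = 50000.0
trace = [math.sin(2.0 * math.pi * 6250.0 * n / FS) for n in range(512)]
freqs, psd = welch_psd(trace, FS)
peak_hz = freqs[psd.index(max(psd))]
```

The frequency resolution is the sampling rate divided by the segment length, so longer segments resolve finer spectral detail at the cost of fewer averages and a noisier estimate.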

Animal experiments

Blowflies (Calliphora vicina) were grown in a laboratory stock that is regularly refreshed with animals obtained from Blades Biological Ltd, UK. All data presented were collected from female flies. Flies were fixed on a piece of microscopy glass with a mixture of paraffin and beeswax. Legs and antennae were removed and the wounds sealed with wax to prevent drying. A small hole was cut in the dorsal part of the cornea of the left eye for the electrode insertion. Sharp glass capillary microelectrodes were manufactured using a laser puller (P-2000, Sutter Instrument Company Inc.). An Ag/AgCl reference electrode was placed in a small opening cut in the fly’s abdomen. The glass capillary electrodes were driven into the eye with a custom piezo manipulator (courtesy of Sensapex Ltd.). Experiments were performed on cells with a dark resting potential below −60 mV and an input resistance of at least 15 MΩ (ref. 44). The photoreceptors were stimulated using full-field flashes of varying brightness. The experiments shown in Fig. 5a–c were performed using the 1st generation projector that is integrated into the VR system and those in Fig. 5d–f with the 2nd generation model projecting onto a planar screen coated with the same paint as the spherical screen. Measurements were made from four animals (N = 12 cells) and data were acquired at a 4 kHz sampling rate.

Adult male American cockroaches (Periplaneta americana) from a laboratory stock were used for the behavioural experiments. The tip of a wire holder was fixed to the pronotum of a carbon dioxide-anesthetized cockroach with a mixture of beeswax and resin. The cockroach was mounted on the track ball and care was taken to adjust the animal’s posture and elevation from the ball so that it could move as normally as possible. Each cockroach was allowed to recover from the anaesthesia and adapt to the experimental set-up for at least 15 minutes prior to starting the experiment, which was found to be sufficient time for the full recovery of normal activity level. The animals were not exposed to any stimuli during this time (i.e. VR was dark). The cockroaches were able to navigate freely in the virtual forest environment, including the black surround (Fig. 1b). The forest was evenly lit without any shadows under the trees.

References

Karmeier, K., van Hateren, J. H., Kern, R. & Egelhaaf, M. Encoding of naturalistic optic flow by a population of blowfly motion-sensitive neurons. J. Neurophysiol. 96, 1602–1614 (2006).

Niven, J. E., Vähäsöyrinki, M., Juusola, M. & French, A. S. Interactions between light-induced currents, voltage-gated currents and input signal properties in Drosophila photoreceptors. J Neurophysiol 91, 2696–706 (2004).

Passaglia, C., Dodge, F., Herzog, E., Jackson, S. & Barlow, R. Deciphering a neural code for vision. Proc. Natl. Acad. Sci. U. S. A. 94, 12649–12654 (1997).

Reinagel, P. How do visual neurons respond in the real world? Curr. Opin. Neurobiol. 11, 437–442 (2001).

Vinje, W. E. & Gallant, J. L. Sparse coding and decorrelation in primary visual cortex during natural vision. Science 287, 1273–1276 (2000).

Felsen, G. & Dan, Y. A natural approach to studying vision. Nat. Neurosci. 8, 1643–1646 (2005).

Lindemann, J. P. et al. FliMax, a novel stimulus device for panoramic and highspeed presentation of behaviourally generated optic flow. Vision Res. 43, 779–791 (2003).

Maimon, G., Straw, A. D. & Dickinson, M. H. Active flight increases the gain of visual motion processing in Drosophila. Nat. Neurosci. 13, 393–399 (2010).

Chiappe, M. E., Seelig, J. D., Reiser, M. B. & Jayaraman, V. Walking modulates speed sensitivity in Drosophila motion vision. Curr. Biol. 20, 1470–1475 (2010).

Ramirez, J. M. & Pearson, K. G. Alteration of bursting properties in interneurons during locust flight. J. Neurophysiol. 70, 2148–2160 (1993).

Schmidt, M. F. & Konishi, M. Gating of auditory responses in the vocal control system of awake songbirds. Nat. Neurosci. 1, 513–518 (1998).

Rind, F. C., Santer, R. D. & Wright, G. A. Arousal facilitates collision avoidance mediated by a looming sensitive visual neuron in a flying locust. J. Neurophysiol. 100, 670–680 (2008).

Rosner, R., Egelhaaf, M. & Warzecha, A. K. Behavioural state affects motion-sensitive neurones in the fly visual system. J. Exp. Biol. 213, 331–338 (2010).

Longden, K. D. & Krapp, H. G. State-dependent performance of optic-flow processing interneurons. J. Neurophysiol. 102, 3606–3618 (2009).

Borst, A., Haag, J. & Reiff, D. F. Fly motion vision. Annu. Rev. Neurosci. 33, 49–70 (2010).

O'Keefe, J. & Dostrovsky, J. The hippocampus as a spatial map. Preliminary evidence from unit activity in the freely-moving rat. Brain Res. 34, 171–175 (1971).

Lee, A. K., Epsztein, J. & Brecht, M. Head-anchored whole-cell recordings in freely moving rats. Nat. Protoc. 4, 385–392 (2009).

Fotowat, H., Harrison, R. R. & Gabbiani, F. Multiplexing of motor information in the discharge of a collision detecting neuron during escape behaviors. Neuron 69, 147–158 (2011).

Gray, J. R., Pawlowski, V. & Willis, M. A. A method for recording behavior and multineuronal CNS activity from tethered insects flying in virtual space. J. Neurosci. Methods 120, 211–223 (2002).

Holscher, C., Schnee, A., Dahmen, H., Setia, L. & Mallot, H. A. Rats are able to navigate in virtual environments. J. Exp. Biol. 208, 561–569 (2005).

Harvey, C. D., Collman, F., Dombeck, D. A. & Tank, D. W. Intracellular dynamics of hippocampal place cells during virtual navigation. Nature 461, 941–946 (2009).

Taylor, G. K. et al. New experimental approaches to the biology of flight control systems. J. Exp. Biol. 211, 258–266 (2008).

Strauss, R., Schuster, S. & Gotz, K. G. Processing of artificial visual feedback in the walking fruit fly Drosophila melanogaster. J. Exp. Biol. 200, 1281–1296 (1997).

Schuster, S., Strauss, R. & Gotz, K. G. Virtual-reality techniques resolve the visual cues used by fruit flies to evaluate object distances. Curr. Biol. 12, 1591–1594 (2002).

Fry, S. N., Rohrseitz, N., Straw, A. D. & Dickinson, M. H. TrackFly: virtual reality for a behavioral system analysis in free-flying fruit flies. J. Neurosci. Methods 171, 110–117 (2008).

Luu, T., Cheung, A., Ball, D. & Srinivasan, M. V. Honeybee flight: a novel ‘streamlining' response. J. Exp. Biol. 214, 2215–2225 (2011).

Land, M. F. Visual acuity in insects. Annu. Rev. Entomol. 42, 147–177 (1997).

Chahl, J. S. & Srinivasan, M. V. Reflective surfaces for panoramic imaging. Appl. Opt. 36, 8275–8285 (1997).

Götz, K. G. Course-control, metabolism and wing interference during ultralong tethered flight in Drosophila melanogaster. J. Exp. Biol. 128, 35–46 (1987).

Laughlin, S. B. Form and function in retinal processing. Trends Neurosci. 10, 478–483 (1987).

Hardie, R. C. & Raghu, P. Visual transduction in Drosophila. Nature 413, 186–93 (2001).

Howard, J., Dubs, A. & Payne, R. The dynamics of phototransduction in insects. Journal of Comparative Physiology a-Sensory Neural and Behavioral Physiology 154, 707–718 (1984).

Juusola, M., Kouvalainen, E., Jarvilehto, M. & Weckström, M. Contrast gain, signal-to-noise ratio and linearity in light-adapted blowfly photoreceptors. J. Gen. Physiol. 104, 593–621 (1994).

Heimonen, K., Salmela, I., Kontiokari, P. & Weckström, M. Large functional variability in cockroach photoreceptors: optimization to low light levels. J. Neurosci. 26, 13454–13462 (2006).

Weber, T., Thorson, J. & Huber, F. Auditory behavior of cricket. I. Dynamics of compensated walking and discrimination paradigms on the Kramer treadmill. J Comp Physiol A 141, 215–232 (1981).

Egelhaaf, M. et al. Neural encoding of behaviourally relevant visual-motion information in the fly. Trends Neurosci. 25, 96–102 (2002).

Piironen, A., Weckström, M. & Vähäsöyrinki, M. Ultra-small and customizable multichannel electrodes for extracellular recordings. J. Neurophysiol. (2011).

Borst, A. & Haag, J. Neural networks in the cockpit of the fly. J. Comp. Physiol. A. Neuroethol Sens. Neural Behav. Physiol. 188, 419–437 (2002).

Reiser, M. B. & Dickinson, M. H. A modular display system for insect behavioral neuroscience. J. Neurosci. Methods 167, 127–139 (2008).

Seelig, J. D. et al. Two-photon calcium imaging from head-fixed Drosophila during optomotor walking behavior. Nat. Methods 7, 535–540 (2010).

Gotz, K. G. & Wenking, H. Visual control of locomotion in the walking fruitfly. J. Comp. Physiol. A. Neuroethol Sens. Neural Behav. Physiol. 85, 235–266 (1973).

Buchner, E. Elementary movement detectors in an insect visual-system. Biol Cybern 24, 85–101 (1976).

Hedwig, B. & Poulet, J. F. Mechanisms underlying phonotactic steering in the cricket Gryllus bimaculatus revealed with a fast trackball system. J. Exp. Biol. 208, 915–927 (2005).

Weckström, M., Hardie, R. C. & Laughlin, S. B. Voltage-activated potassium channels in blowfly photoreceptors and their role in light adaptation. J Physiol 440, 635–57 (1991).

Acknowledgements

We would like to thank LudoCraft Oy for developing the custom rendering engine, Lightspeed Design Inc. for the help with the projector modifications and Sensapex Ltd. for supplying the piezo micromanipulators. We are also thankful to Matti Weckström and Jeremy Niven for their insightful comments on the manuscript. The work was supported by the Finnish Graduate School of Neuroscience (AP), Biocenter Oulu Graduate School (AH), Academy of Finland grants 118480 (MV) and 129762 (MV) and Sigrid Juselius Foundation (MV).

Author information

Contributions

MV conceived the VR design concept; MA performed optics design, ML and TT carried out instrumentation design and manufacturing; JT contributed to software development and made technical measurements; AP performed electrophysiological and AH behavioural experiments; JT, AP, AH, MA and MV wrote the manuscript.

Ethics declarations

Competing interests

The authors declare no competing financial interests.

Electronic supplementary material

Supplementary Information

Supplementary video 1

Supplementary video 2

Rights and permissions

This work is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 3.0 Unported License. To view a copy of this license, visit http://creativecommons.org/licenses/by-nc-sa/3.0/

About this article

Cite this article

Takalo, J., Piironen, A., Honkanen, A. et al. A fast and flexible panoramic virtual reality system for behavioural and electrophysiological experiments. Sci Rep 2, 324 (2012). https://doi.org/10.1038/srep00324

