Abstract

Human emotional reactions to stimuli delivered through different sensory modalities are a topic of interest for many disciplines, from Human-Computer Interaction to the cognitive sciences. Several databases of emotion-eliciting stimuli are available, each tested on a large number of participants. Interestingly, stimuli within one database are always of the same type. In other words, to date, no data have been obtained and compared from distinct types of emotion-eliciting stimuli in the same participants. This makes it difficult to use different databases within the same experiment, limiting the complexity of experiments investigating emotional reactions. Moreover, whereas the stimuli and the participants’ ratings of the stimuli are available, physiological reactions of participants to the emotional stimuli are often recorded but not shared. Here, we test stimuli delivered through a visual, auditory, or haptic modality in a within-participant experimental design. We provide the results of our study in the form of a MATLAB structure including basic demographics on the participants, each participant’s self-assessment of his/her emotional state, and his/her physiological reactions (i.e., skin conductance).

Design Type(s) | stimulus or stress design |

Measurement Type(s) | Change in Emotional State • Galvanic Skin Response |

Technology Type(s) | Self-Report of Personality • Sensor Device |

Factor Type(s) | |

Sample Characteristic(s) | Homo sapiens |

Machine-accessible metadata file describing the reported data (ISA-Tab format)

Background & Summary

The study of human emotions is a fascinating and cross-disciplinary field of research. In the past 20 years, interest in human emotions has extended from the realm of psychology to other disciplines such as neuroscience1, product and experience design2, and computer science3,4.

Although different theories of emotion have been proposed over the years5,6, there seems to be a common understanding that emotional states are characterized by physiological and cognitive responses to clearly identifiable stimuli7. As such, whether we investigate emotions to understand the human mind or to teach an automated system how to be more “humane”, emotional investigation is, in the great majority of cases, based on: (i) delivering emotional stimulation and (ii) measuring cognitive and physiological reactions.

By emotional stimulation, we mean an external stimulus that is able to elicit an emotional reaction. In the literature, the most common way to elicit emotions is by delivering visual stimuli8. The International Affective Picture System (IAPS)9 and the Geneva Affective Pictures Database (GAPD)10 are examples of collections of visual stimuli (pictures) often used to elicit emotions. The Affective Norms for English Words (ANEW)11 and the Affective Norms for English Texts (ANET)12 are also visual (although not pictorial) collections of emotion-eliciting stimuli.

Auditory stimuli are often used as an alternative to visual stimuli (e.g., the International Affective Digital Sounds, IADS)13. While the IADS includes mainly short audio clips, long segments of musical pieces have also been used to elicit emotional reactions14,15.

It is important to note that, while IADS stimuli always have well-defined semantics (indeed, it is not hard to imagine the source of the IADS stimuli when listening to them), classical music pieces differ in that their emotional value is to be found within the features of the composition itself, such as its tempo and tonality16,17. Audio-visual stimulation has also been used to elicit emotions. A number of studies have been performed using short movies as emotional stimuli18, and a few standardized databases of emotional films and clips are now available19,20.

Alongside the more common audio-visual stimuli, less conventional and less standardized stimuli have been used in the literature, such as olfactory21,22 and haptic23–25 stimuli.

Whatever stimulus is used to elicit an emotion, it is expected to affect participants’ cognitive and physiological state. To measure the impact of an emotional stimulus, a number of self-assessment questionnaires have been used in the literature. Notable examples are the Self-Assessment Manikin (SAM)26 and the Geneva Emotion Wheel (GEW)27.

Together with self-assessment measures, participants’ physiological and bodily reactions to emotional stimuli are often recorded28. In neuroscience, such exploration is often performed through brain imaging29. Within computer science, where gaining access to brain activations might be cumbersome, attention is often directed towards autonomic nervous system responses. This approach, although rather generic, allows bodily variations caused by emotional stimulation to be recorded using relatively little hardware (measuring tools)30,31. Such responses include parameters related to the human vascular system (blood volume pulse, heart rate), participants’ reaction times to startle reflexes, variation in skin and body temperature, and variation in the skin conductance (SC) of a light electric current. The latter measure directly correlates with autonomic sudomotor nerve activation, and is therefore an indirect measure of palm sweating, which is in turn related to an increase in the arousal of a subject. SC is arguably the most used physiological parameter to investigate participants’ emotional activation32,33.

Here, we describe a database (Data Citation 1) reporting SC activations and SAM self-assessments from 100 participants in response to a variety of emotional stimuli. The emotional stimuli are communicated through three different sensory modalities, namely: 20 audio stimuli, 20 visual stimuli, and 10 haptic stimuli. Of the 20 audio stimuli, 10 were selected from the IADS, and the other 10 were instrumental musical pieces that had never been tested before. Similarly, 10 visual stimuli came from the IAPS, while the other 10 were abstract works of art. The 10 haptic stimuli were obtained from previous work on using mid-air haptic stimulation for eliciting emotions25. Notably, the choice of including abstract visual stimuli was motivated by the fact that, in both the haptic and audio modalities, it is possible to elicit emotions with stimuli that have no obvious semantics34. We therefore tested whether this holds true for the selected stimuli in the visual modality.

This database is the first that allows SAM ratings for given stimuli to be compared directly with SC responses in 3 different sensory modalities. Although far from being a comprehensive database of emotional stimuli, it offers the possibility to compare ratings and physiological activation within subjects for stimuli in 3 different sensory modalities. In addition, it validates 30 stimuli that do not have immediate meaning for the participant, assessing “abstract” emotional stimulation on a large number of participants for the first time.

Methods

Participants

One hundred healthy volunteers participated in the experiment (mean age=26.88 years; SD=9.11; range: 18 to 71 years; 61 females). All but 9 participants reported being right-handed. Pre-screening allowed only participants with normal (or corrected-to-normal) vision, with no history of neurological, psychological, or psychiatric disorders, and with no tactile or auditory impairments to take part in the experiment. Participants were recruited from the University of Sussex, were naïve as to the purpose of the experiment, were paid for their participation, and gave written informed consent. The study was conducted in accordance with the principles of the Declaration of Helsinki and was approved by the University of Sussex ethics committee (Sciences & Technology Cross-Schools Research Ethics Committee, Ref: ER/EG296/1).

Acquisition setup and procedure

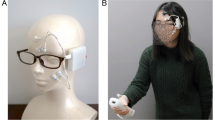

Participants were invited to sit comfortably in front of a computer screen. We placed SC recording electrodes (GSR Electrodes, Shimmer®) on the index and ring fingers of participants’ left hand (as in ref. 35), as shown in Fig. 1.

Figure 1. (a) Schematic representation (left) and picture (right) of the box containing the mid-air haptic device. (b) Schematic representation (left) and picture (right) of the experimental setup. (c) Schematic representation of the experimental procedure. The participant is first asked to relax for about 5 min; this is followed by a 60-second break before the start of the experiment; a three-second countdown precedes the stimulus; the stimulus is presented; the SAM questions are presented one after another in a randomized order; after the questions have been answered, the countdown for the next stimulus starts. The whole procedure is repeated throughout the experiment.

A variable amount of time (approximately five minutes) was allowed for participants to relax, get ready for the experiment, and familiarize themselves with the experimental setup. In particular, during this time, participants were first shown by the experimenter how to correctly place their right hand over the haptic device, and were then asked to repeatedly put their right hand on it, memorizing the position of the hand so that they could replicate it throughout the experiment. Moreover, participants were invited to find a comfortable position for the left hand, which they were instructed not to move for the duration of the experiment.

At the beginning of the experiment, participants were asked again to relax for 60 s, so that the SC signal could reach baseline. At the start of the experiment, SC recording was triggered and kept running until the end of the experiment. The delivery of each emotional stimulus was marked as an emotional trigger in the data log to help us interpret the meaning of the SC signal.

Emotional stimuli were presented in a randomized order. In particular, stimulus presentation was completely randomized, rather than block randomized, to avoid any sensory-modality-related scale bias (i.e., participants adopting different scales depending on the sensory modality). Before each stimulus, a three-second countdown appeared in the centre of the screen, followed by the stimulus. When a haptic or auditory stimulus was presented, a sentence was simultaneously displayed on the screen (either “playing audio” or “playing haptics”), informing participants of the sensory modality of the stimulus. After each stimulus, participants were asked to rate it with their right hand using the original version of the SAM26 (see Self-Assessment rating, below), thus rating the stimuli according to their arousal, valence, and dominance26. The dimensions of the SAM were presented one after another in a randomized order. After the last SAM question had been answered, a new countdown started, marking the beginning of a new trial. At the beginning of each countdown, participants positioned their right hand comfortably on a black Plexiglas box containing a mid-air haptic device (Ultrahaptics®), as they had learned during the familiarization. The box was open on the upper side, and a soft support for the wrist was attached to the edge closest to the participant, so that participants could comfortably place their right hand over the aperture, with the palm completely exposed to the mid-air haptic device at a distance of 20 cm (Fig. 1). Notably, the design of the box allowed participants to easily position their hand above the device in a standardized manner. Moreover, the experimenter assisted the participant throughout the experiment and repeated the trials in case of wrong positioning of the hand.
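As an illustration of complete (rather than block) randomization, the following minimal sketch draws one trial sequence for a participant. It is not the presentation software used in the study; the stimulus identifiers, the `make_session` helper, and the seed are hypothetical and only the stimulus counts and SAM dimensions come from the text.

```python
import random

# Hypothetical stimulus IDs: 20 audio, 20 visual, 10 haptic (counts from the text).
STIMULI = ([("audio", i) for i in range(20)]
           + [("visual", i) for i in range(20)]
           + [("haptic", i) for i in range(10)])
SAM_DIMENSIONS = ("valence", "arousal", "dominance")


def make_session(seed):
    """Return a fully randomized trial list for one participant."""
    rng = random.Random(seed)
    order = STIMULI[:]
    rng.shuffle(order)  # complete randomization: modalities are interleaved, not blocked
    trials = []
    for modality, stim in order:
        questions = list(SAM_DIMENSIONS)
        rng.shuffle(questions)  # SAM question order is re-drawn on every trial
        trials.append({"modality": modality, "stimulus": stim, "sam_order": questions})
    return trials


session = make_session(seed=1)
```

Because all 50 stimuli are shuffled together, a haptic trial can follow a visual or an auditory one, which is what prevents participants from settling on a separate rating scale for each modality.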

Stimuli

Auditory stimuli

The auditory stimuli comprise ten sounds from the IADS database and ten instrumental extracts from various compositions (see Table 1). The ten sounds from the IADS were selected according to their SAM scores on valence and arousal reported in previous works13. IADS sounds were selected so that two sounds had high arousal and high valence ratings, two had low arousal and low valence ratings, two had low arousal and high valence ratings, two had high arousal and low valence ratings, and two were defined as neutral (low arousal and mid-valence ratings). The ten instrumental sounds were rated for the first time in this work, and constitute an original contribution to the state of the art. Musical pieces were considered an “abstract” form of stimulation in our study. In fact, instrumental pieces do not convey immediate meaning to the listeners, and the emotional content of the piece is instead related to features within the composition (e.g., tonality, tempo, etc.)16,17. The inclusion of classical music pieces in our database was motivated by the recent interest in the link between musical pieces and emotional reactions14–17. All the auditory stimuli were presented to participants by means of a pair of headphones (Beat Studio, Monster); the volume of the auditory stimuli did not exceed the 90 dB limit (IADS #275, scream). The duration of all the selected IADS auditory stimuli was 6 s, apart from one lasting 5 s (see Table 1). The duration of the abstract-classic auditory stimuli varied according to the musical phrase (see Table 1).

Visual stimuli

The twenty visual stimuli comprise ten pictures from the IAPS database and ten abstract pictures (see Table 1). The ten pictures from the IAPS were selected according to their SAM scores on valence and arousal reported in previous works3. In particular, two pictures were selected having high arousal and high valence ratings, two having low arousal and low valence ratings, two having low arousal and high valence ratings, two having high arousal and low valence ratings, and two defined as neutral (low arousal and mid-valence ratings). The ten abstract pictures were arbitrarily selected from the work of renowned artists; thus their arousal, valence, and dominance scores were assessed for the first time in the current work. The choice of abstract art as emotional stimulus was inspired by the body of literature on emotions, art, and aesthetics8,36. By including works of abstract art in our experiment, we test emotional visual stimuli that, like the instrumental pieces in the acoustic realm, do not relate to an obvious meaning. The data reported here might serve to further explore the connection between aesthetic and emotional reactions. All the visual stimuli were presented in the centre of a monitor screen (26 inches), placed at a distance of about 40 cm from participants, with the centre aligned at participants’ eye level. The visual stimuli were displayed for 15 s.

Haptic stimuli

Ten haptic stimuli were delivered by means of a mid-air haptic device developed by Ultrahaptics Ltd (http://ultrahaptics.com/). This mid-air haptic technology allows the creation of tactile sensations through focused ultrasound waves, resulting in sensations that can be described as dry rain or puffs of air37. The haptic device comprises a 16×16 array of ultrasonic transducers; each transducer can be activated individually, in sequence, or simultaneously with other transducers, thus creating unique patterns varying in location, intensity, frequency, and duration38. The ten different haptic stimuli used in the present experiment are presented in the form of haptic patterns. These patterns were selected from a list of haptic patterns created and previously validated by Obrist and colleagues25. These patterns vary in location on the user’s palm (16 different locations specified in a 4×4 grid), intensity (three levels: low, medium, high), frequency (five options, range: 16–256 Hz), and duration (200–600 ms). Please note that such patterns were designed for the right hand of the user, and were therefore delivered to the right hand of the participant regardless of hand dominance.

Self-Assessment rating

Participants’ ratings of their own emotional reaction were recorded using the Self-Assessment Manikin (SAM) (see Fig. 2), a non-verbal pictorial assessment technique evaluating emotional reaction along three components: Valence (whether the elicited emotion is positive or negative), Arousal (how “activating” the elicited emotion is), and Dominance (whether the participant feels “in control” of the emotion), which are often identified as the main descriptors of all emotional activations26. The SAM was first proposed by Bradley and Lang24, and reflects the idea that emotions as we know them (e.g., fear, joy, anger, calm) can be represented in a two-dimensional space that has Valence and Arousal as its main orthogonal axes. Although discussing the different theories of emotion is beyond the scope of this paper, it is worth considering the advantage of using the SAM approach as compared to a questionnaire reflecting a categorical model of emotions (e.g., the Geneva Emotion Wheel). In fact, compared to a categorical approach to emotions27, this approach allowed us to bypass the semantic implications and idiosyncrasies that participants could have had in naming the emotion they were feeling39, focusing instead on the assessment of their own emotional state. Rating scales were displayed on the computer screen. Below each rating scale, a horizontal bar of the same length as the five SAM pictorial representations was presented, with a cursor at the centre of the bar. Participants had the five different manikins as a visual reference for each emotional dimension (i.e., arousal, valence, and dominance). Participants could move the cursor along the bar by means of a mouse operated with their right hand. The rating scales range from 0 to 100, in 1-point steps, where 0 corresponds to the extreme left manikin, and 100 to the extreme right manikin26. A continuous visual analogue scale was used to allow for more accuracy in the parametric data analysis and more sensitivity to change in the assessment40. No time limit was given to the participants to answer the SAM.

Skin conductance recording and features extraction

Skin conductance (SC) response was measured with a Shimmer3 GSR+ Unit wireless device (Shimmer Sensing, Dublin). Two 8 mm snap-style finger electrodes (GSR electrodes, Shimmer Sensing) with a constant voltage (0.5 V) were attached to the intermediate phalanges of participants’ left index and ring fingers. The SC recording device was connected wirelessly to a PC to digitize data through the ShimmerCapture software. The gain parameter was set at 10 μS/V and the A/D resolution was 12 bit, allowing responses ranging from 2 to 100 μS to be recorded.

Each recording was analysed using the MATLAB Ledalab toolbox. As a first step, data were downsampled and cleaned of artefacts (see Technical Validation). Features were extracted via continuous decomposition analysis41 (CDA). CDA divides the SC signal into a phasic and a tonic component, making it easier to extract features related to specific events (triggers). Event-related features obtained with CDA are shown in Table 2, which has been adapted from the original table presented at www.ledalab.de. Please note that while the trigger eliciting the event-related features was set at the beginning of the stimulus, the time window considered to compute event-related features encompassed the whole duration of the stimulus plus 4 s (for a discussion of the duration of this time window, please refer to Usage Notes and Limitations of the database, below). Standard trough-to-peak (TTP) features were also obtained from the raw signal, as well as global measures (mean and maximum deflection, see Table 2).
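The stimulus-dependent response window described above can be sketched as follows. This is an illustrative reconstruction, not Ledalab code: the `response_windows` function, the toy trigger trace, and the 2 Hz sampling rate are assumptions, and a single sample coded 1 is assumed to mark each stimulus onset.

```python
def response_windows(trigger, durations_s, sampling_hz, extra_s=4.0):
    """Return (start, end) sample indices for each stimulus onset.

    trigger      -- per-sample codes; 1 is assumed to mark a stimulus onset
    durations_s  -- stimulus durations in seconds, in presentation order
    extra_s      -- time added after stimulus offset (4 s in the text)
    """
    onsets = [i for i, code in enumerate(trigger) if code == 1]
    if len(onsets) != len(durations_s):
        raise ValueError("one duration is needed per trigger")
    windows = []
    for onset, duration in zip(onsets, durations_s):
        length = round((duration + extra_s) * sampling_hz)
        end = min(onset + length, len(trigger))  # clip at the end of the recording
        windows.append((onset, end))
    return windows


# Toy trace sampled at 2 Hz (hypothetical): onsets at samples 2 and 30.
trigger = [0] * 80
trigger[2] = 1
trigger[30] = 1
windows = response_windows(trigger, durations_s=[6, 15], sampling_hz=2)
```

With a 6 s sound the window spans 10 s, while a 15 s picture yields a 19 s window, so event-related features are computed over intervals of different length depending on the stimulus.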

In general, features of the SC response have repeatedly been related to emotional responses (and particularly to high-arousal responses)31–33. Emotional states characterized by high arousal correlate with the activation of a fight-or-flight response. Such a response is regulated by the autonomic nervous system (and in particular by the sympathetic system), which in turn activates the sudomotor nerve, triggering the release of sweat from the sweat glands in the skin of the hand. An example of an SC signal is shown in Fig. 3.

Data Records

SC recordings and self-assessment ratings collected during the experiment are organized in a single MATLAB data structure available at (Data Citation 1). This data structure also includes demographic information on each participant, as well as baseline SC recordings collected prior to the experiment. The quality of the SC recording is also available as a further structure field (see Technical Validation). Moreover, the “features” field of the structure contains the SC features extracted using Continuous Decomposition Analysis (CDA) with the MATLAB toolbox Ledalab.

multisensory_emotions.m

The fields of the MATLAB data structure multisensory_emotions.m are explained below. MATLAB structures allow data to be organized hierarchically; the hierarchical design of the structure is shown in Fig. 4.

- multisensory_emotions.stim = 50×6 MATLAB array. This field provides information about the stimuli that were delivered to participants during the experiment. Its columns represent, respectively: the stimulus identification number, the modality of delivery (either audio, video, or haptic), the name of the stimulus (IAPS/IADS identification number, artwork title, etc.), the duration of the stimulus/presentation time, the author/composer (when known), and finally the database on which the stimulus was tested (in case of further expansion of the present database by other parties in future studies, see below: Usage Notes and Limitations of the database).

- multisensory_emotions.su: the .su field provides information about one particular participant. The .su field is the basic layer of the structure. The number of items at this level of the structure is 100, the same as the number of participants who took part in the experiment. The .su field is, in turn, divided into different sub-fields:

.su.demographic = 1×4 MATLAB vector. The .demographic field provides demographic information on one particular participant. The vector contains, respectively: the participant’s ID number, the gender of the participant, the hand dominance, and the age of the participant.

.su.SAM = 50×5 MATLAB array. The .SAM field provides information about the ratings of each stimulus, as well as about the order in which the stimuli were presented to the participant. The array columns are, respectively, ratings on (1) valence, (2) arousal, and (3) dominance. Column 4 represents the stimulus identification number. The type of stimulus can be looked up by matching this number with the stimulus identification number in multisensory_emotions.stim. Please note that knowing which stimulus was delivered to the participant at any given moment is crucial for correctly interpreting the SC signals. Column 5 marks the presentation order of the stimuli.

.su.SC: the .SC field gives access to the skin conductance data collected during the experiment. SC data are in turn divided into 7 sub-fields:

.SC.raw: n×2 MATLAB array containing the n samples collected throughout the whole experiment and 2 columns. The first column represents the skin conductance value (μS) collected with the Shimmer3 GSR+ Unit. The second column represents the presentation of the stimulus as SC trigger. The moment of the stimulus presentation is marked as 1, while all other samples are 0.

.SC.raw_all: nX2 MATLAB array containing the n samples collected throughout the whole experiment and 2 columns. The first column represents skin conductance value (μS) collected with the Shimmer3 GSR+ Unit. The second column represents the presentation of the stimulus as SC trigger. The moment of the stimulus presentation is marked as 1 and the presentation of each SAM question on arousal is marked as 4, on valence as 5, on dominance as 6.

.SC.clean: n×2 MATLAB matrix containing the n samples collected throughout the whole experiment and 2 columns. The data in .SC.clean have been downsampled by a factor of 4 to allow for faster reading and processing of the SC trace. Moreover, data in this sub-field have been cleaned using the Ledalab artefact correction toolbox (see Technical Validation below).

.SC.baseline: 1×n MATLAB vector, including the SC recordings from the participant prior to the beginning of the experiment.

.SC.quality: single value from 0 to 2, where 0 represents unusable data, 1 represents a partially complete recording, and 2 represents a complete recording.

.SC.features: 50X12 MATLAB array. The columns represent the 12 features extracted using the Ledalab toolbox for MATLAB (see Technical Validation for more information on the features extracted). The rows represent the stimuli arranged by “stimulus number” (therefore not presentation order). Missing values are reported as NA in the MATLAB table. Each of the 12 features is reported and explained in Table 2.

.SC.responsiveness: single value, either 0 or 1. It is meant to indicate whether the participant showed any phasic response throughout the experiment. The absence of phasic responses to all the stimuli (.SC.responsiveness=0) marks the participant as a possible non-responder. A non-responder is an individual with low skin conductance, whose SC recordings do not show emotional responses even when these are present (as shown by SAM ratings).
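To make the relationship between these fields concrete, the sketch below mirrors a tiny part of the structure with plain Python dictionaries. It does not load the published file: the two participants, their ratings, and the stimulus names are invented, and the helper functions are hypothetical. Only the column layout of .su.SAM and the meaning of .SC.quality and .SC.responsiveness follow the descriptions above.

```python
# Hypothetical miniature of the structure: two stimuli, two participants.
stim = {
    1: {"modality": "audio", "name": "stimulus A"},
    2: {"modality": "haptic", "name": "stimulus B"},
}
participants = [
    {"id": 1, "quality": 2, "responsiveness": 1,
     # rows: valence, arousal, dominance, stimulus ID, presentation order
     "SAM": [[70, 40, 55, 2, 1], [20, 80, 30, 1, 2]]},
    {"id": 2, "quality": 0, "responsiveness": 0,
     "SAM": [[50, 50, 50, 1, 1], [60, 30, 65, 2, 2]]},
]


def usable(participant, min_quality=1):
    """Keep recordings rated at least min_quality that show phasic responses."""
    return participant["quality"] >= min_quality and participant["responsiveness"] == 1


def ratings_in_presentation_order(participant):
    """Attach the stimulus modality to each SAM row, sorted by column 5."""
    rows = sorted(participant["SAM"], key=lambda row: row[4])
    return [
        {"modality": stim[row[3]]["modality"],
         "valence": row[0], "arousal": row[1], "dominance": row[2]}
        for row in rows
    ]


selected = [p["id"] for p in participants if usable(p)]
first_trial = ratings_in_presentation_order(participants[0])[0]
```

Matching column 4 of the SAM array against the stimulus table is what links each rating, and each trigger in the SC trace, to a sensory modality.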

Together with the MATLAB structure, we also provide an R list (multisensory_emotions_R.rda, Data Citation 1). The R list architecture is equivalent to the one shown in Fig. 4. R is an open source software downloadable at https://cran.r-project.org/ and hence enables anyone interested in our dataset to access and use it for future studies.

Technical Validation

A post-doctoral level researcher with over 5 years of experience in the field of behavioural research acquired data from all 100 participants. Participants were invited to take a comfortable position at the beginning of the experiment, and were asked not to move their left hand during the whole experiment to avoid movement artefacts in the SC signal.

Participants’ reliability and SAM ratings

While the quality of the SC signal can be assessed by checking the number of obtained samples and artefacts, it can be harder to determine whether participants answered the self-assessment questionnaires with due attention. To investigate the reliability of our data, we compared the ratings obtained for the 10 IAPS and 10 IADS stimuli in our experiment with the values of valence, arousal, and dominance indicated in the IAPS and IADS manuals9,13. Results showed high and significant correlations between our data and the expected values from the two manuals (IADS: r=0.86, p<0.01; IAPS: r=0.79, p<0.01). We also checked whether the distribution of responses for each stimulus and each SAM dimension approximated normality. Table 3 (available online only) shows average ratings±standard deviation for each stimulus in the arousal, valence, and dominance dimensions. Table 4 (available online only) additionally shows results from a normality test (Kolmogorov–Smirnov test) and the skewness and kurtosis of the distributions.
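The comparison with the manuals can be illustrated with a plain Pearson correlation. This is a minimal sketch assuming a Pearson coefficient was used (the text reports r but does not name the estimator); the numbers are made up and are not values from the database or from the IAPS/IADS manuals. It also shows why ratings collected on a 0–100 slider can be correlated directly with normative ratings on a 9-point scale.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson product-moment correlation of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / sqrt(var_x * var_y)


# Made-up numbers, NOT values from the database or the manuals.
manual_1_to_9 = [2.0, 3.5, 5.0, 6.5, 8.0]        # normative ratings, 9-point scale
observed_0_100 = [15.0, 40.0, 45.0, 70.0, 90.0]  # mean slider ratings

r = pearson_r(manual_1_to_9, observed_0_100)

# Linearly rescaling the slider to 1..9 leaves r unchanged.
rescaled = [1 + 8 * v / 100 for v in observed_0_100]
r_rescaled = pearson_r(manual_1_to_9, rescaled)
```

Since the coefficient is invariant to linear rescaling, no conversion between the two rating scales is needed before computing it.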

Skin conductance signal processing

From each participant, we obtained two SC traces. The first trace was collected in the absence of stimulation for about 60 s (.SC.baseline). The second trace was collected for the whole duration of the experiment.

Both traces were examined using the Ledalab MATLAB toolbox. Ledalab allows each loaded trace to be visually inspected and corrected for movement artefacts. Before artefact correction, the signal was downsampled (by a factor of 8) to allow for faster processing of the data. The movement artefacts were identified after careful visual inspection, and corrected by fitting a spline. An example of such a correction can be seen in Fig. 5.
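Downsampling by an integer factor can be sketched as below. Block averaging is used here as one common choice; the text does not state which downsampling method was applied in Ledalab, so this is an assumption, and the `downsample_mean` helper and the sample values are hypothetical.

```python
def downsample_mean(signal, factor):
    """Average consecutive blocks of `factor` samples; drop a trailing partial block."""
    if factor < 1:
        raise ValueError("factor must be a positive integer")
    usable = len(signal) - len(signal) % factor
    return [sum(signal[i:i + factor]) / factor for i in range(0, usable, factor)]


raw = [10.0, 10.2, 10.4, 10.6, 11.0, 11.0, 11.2, 11.2, 12.0]  # hypothetical μS values
clean = downsample_mean(raw, factor=4)
```

Averaging within each block also attenuates high-frequency noise, which is one reason it is often preferred over simply keeping every n-th sample.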

The SC signal ranged between 10.01 μS and 28.34 μS (mean: 15.55 μS, SD: 1.67 μS; values computed on SC traces with a quality rating of one or more, see .SC.quality). The collected SC values are in line with typical SC levels in humans, with baseline SC usually lower than the SC recorded during the experiment29. Downsampled and artefact-corrected data are available at .SC.clean. Raw data at full sampling (512 Hz) are available at .SC.raw.

Skin conductance habituation

The skin conductance signal is known to change over time31,32. In particular, the tonic component of the signal (Fig. 3) is expected to vary41 over relatively long periods of time. In contrast, the phasic component of the signal, if correctly extracted from the raw data, is expected to be tied only to the eliciting events (that is, the emotional stimuli). However, it is important to consider that the effect of the triggering event on the SC signal might also decrease after several presentations of emotional stimuli, as the participant could habituate to the emotional stimulation itself. In other words, by being emotionally stimulated repeatedly, a participant could grow accustomed to the emotional impact of the eliciting stimuli, and therefore not be as scared, moved, or aroused by a stimulus as he/she would have been had the stimulus been presented at the beginning of the experiment. We used Spearman rank-order correlation to assess whether the position that a stimulus held in the sequence of trials (that is, whether the stimulus was presented first, second, third, etc.) correlated with lower (or higher) values of particular phasic features. Results are shown in Table 5. As the results in the table show, correlations were low but nonetheless present, and users will have to take this into account when using the database.
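A Spearman rank-order correlation between presentation position and a phasic feature can be computed as the Pearson correlation of the ranks. The sketch below is a generic implementation with average ranks for ties, not the analysis script used for Table 5; the amplitude values are invented to mimic a habituating response.

```python
def average_ranks(values):
    """Rank values from 1, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1  # mean of ranks i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks


def spearman_rho(x, y):
    """Spearman correlation: Pearson correlation of the ranks."""
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = sum((a - mx) ** 2 for a in rx) ** 0.5
    sy = sum((b - my) ** 2 for b in ry) ** 0.5
    return cov / (sx * sy)


# Made-up example: a phasic feature that shrinks over trials (habituation).
position = [1, 2, 3, 4, 5, 6]
amplitude = [0.9, 0.7, 0.8, 0.4, 0.4, 0.1]
rho = spearman_rho(position, amplitude)
```

A negative coefficient indicates that later trials tend to produce smaller responses; in this toy example the effect is far stronger than the low correlations reported for the database.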

Usage Notes and Limitations of the database

The proposed database is available at (Data Citation 1) and can be used for several different applications. These data are of clear interest for different fields investigating human emotional reactions and automatic emotion recognition, from psychology to computer science. We strongly encourage the use of the stimuli contained in this database for experiments where emotional stimulation is needed, and particularly when emotional stimulation is not delivered through “traditional” means (such as pictures). In particular, the emotional ratings and reactions to haptic stimuli constitute a first, albeit small, strongly validated database for affective haptics. Abstract art pieces and instrumental pieces are likewise unconventional stimuli that are validated here. At the same time, SC traces and features could be used to train automatic systems to recognize human emotions, given the sensory modality through which the stimulus has been delivered. Furthermore, the relationship between SAM ratings, SC features, and the sensory modality involved in the stimulation could open new paths for the study of how emotions are communicated and interpreted by humans.

In order to facilitate access to the data within the database, a MATLAB function and its equivalent R function (SCR_statistics.m and SCRstatistics.R) have been prepared. These functions are available at (Data Citation 1). They compute the mean and standard deviation of the selected SCR features across all participants, for each stimulus. Furthermore, they allow the user to select the participants over which the descriptive statistics are computed, based on the quality of their SC recordings.
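The kind of summary these functions produce can be sketched as follows. This is not the code of SCR_statistics.m or SCRstatistics.R, whose interfaces are not described here; `feature_statistics`, the dictionary layout, and all values are hypothetical, with `None` standing in for a missing feature value.

```python
from statistics import mean, stdev

# Hypothetical miniature: 3 participants x 2 stimuli x 1 feature.
participants = [
    {"quality": 2, "features": [[0.30], [0.10]]},
    {"quality": 1, "features": [[0.50], [None]]},
    {"quality": 0, "features": [[9.99], [9.99]]},  # unusable recording
]


def feature_statistics(participants, feature_index, min_quality=1):
    """Per-stimulus (mean, SD) of one feature over participants of sufficient quality."""
    kept = [p for p in participants if p["quality"] >= min_quality]
    n_stimuli = len(kept[0]["features"]) if kept else 0
    stats = []
    for s in range(n_stimuli):
        values = [p["features"][s][feature_index] for p in kept]
        values = [v for v in values if v is not None]  # skip missing values
        sd = stdev(values) if len(values) > 1 else float("nan")
        stats.append((mean(values) if values else float("nan"), sd))
    return stats


stats = feature_statistics(participants, feature_index=0)
```

Filtering on recording quality before averaging keeps unusable traces from distorting the per-stimulus descriptive statistics.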

However, we would also like to point out some of the limitations of the current database, so as to help future users interpret the results of their analyses. First, this database is far from being complete and comprehensive of all the possible stimuli available in the three different sensory modalities. The number of conditions tested strongly reduced the number of stimuli we could use, as we wanted to keep the experiment to a reasonable duration. Any generalization of the data contained in this database should therefore be limited to the stimuli tested here. Importantly, we encourage other scientists to expand the database themselves while maintaining the same experimental design, in which multisensory stimuli for eliciting emotions are delivered to users in a within-subjects experimental design. To facilitate the inclusion of further stimuli and participants’ responses in the database, we developed an R-based graphical user interface which allows other researchers to merge their new data into the R list. The graphical user interface is available at (Data Citation 1) and has been developed using the open source R package shiny. This interface provides information about the format required to integrate the data and allows a database to be included by following a series of guided steps, even when the SC or the SAM fields are missing.

Second, SCRs were computed over different timeframes: the response window in which SCRs were considered depends on the duration of the stimulus. This choice was motivated by the fact that, for the haptic and auditory (abstract) stimuli, any truncation of the stimulus would have resulted in an incomplete pattern or melody and would therefore most likely have induced frustration in the participant. Finally, our last concern relates to the haptic stimuli used. Although the positioning of the hand was relatively constant across trials, and was facilitated by the foam support on top of the haptic device, the size of the hand differed across participants. As a result, the haptic stimuli may have fallen on slightly different locations on the hand depending on the participant. Therefore, haptic stimulation may not have been identical across participants, whereas audio and visual stimulation was.
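To make the consequence of a duration-dependent response window concrete, the following Python sketch extracts a window whose length follows the stimulus duration and computes a simple peak-rise feature within it. This is an illustration under stated assumptions, not the authors' processing pipeline: the sampling rate, the optional `tail_s` parameter, the function names, and the peak-rise feature itself are hypothetical.

```python
def response_window(onset_s, duration_s, fs_hz, tail_s=0.0):
    """Return (start, stop) sample indices of a stimulus-locked window.

    The window runs from stimulus onset to stimulus offset, plus an optional
    tail. `tail_s` is a hypothetical parameter added for illustration.
    """
    start = round(onset_s * fs_hz)
    stop = round((onset_s + duration_s + tail_s) * fs_hz)
    return start, stop


def peak_rise(trace, onset_s, duration_s, fs_hz, tail_s=0.0):
    """Largest rise of the SC trace above its value at stimulus onset."""
    start, stop = response_window(onset_s, duration_s, fs_hz, tail_s)
    segment = trace[start:stop]
    if not segment:
        raise ValueError("response window lies outside the recorded trace")
    return max(segment) - segment[0]


if __name__ == "__main__":
    fs_hz = 10                      # assumed sampling rate, illustration only
    trace = [0.0] * 100             # 10 s of synthetic, flat skin conductance
    trace[25] = 1.0                 # small deflection 2.5 s into the recording
    trace[70] = 2.0                 # larger deflection 7.0 s into the recording
    short = peak_rise(trace, onset_s=2.0, duration_s=1.0, fs_hz=fs_hz)
    long_ = peak_rise(trace, onset_s=2.0, duration_s=6.0, fs_hz=fs_hz)
    print(f"1 s stimulus: {short}, 6 s stimulus: {long_}")
```

In this synthetic trace the longer window captures a later, larger deflection that the shorter window cannot contain. For the same reason, features obtained for stimuli of different durations should be compared with care.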

Additional information

How to cite this article: Gatti, E. et al. Emotional ratings and skin conductance response to visual, auditory and haptic stimuli. Sci. Data 5:180120 doi: 10.1038/sdata.2018.120 (2018).

Publisher’s note: Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

References

Panksepp, J. Affective neuroscience: The foundations of human and animal emotions (Oxford University Press, 2004).

Hassenzahl, M. Experience design: Technology for all the right reasons. Synthesis Lectures on Human-Centered Informatics 3, 1–95 (2010).

Lang, P. J., Bradley, M. M. & Cuthbert, B. N. International affective picture system (IAPS): Instruction manual and affective ratings. The center for research in psychophysiology, University of Florida, A-6 (1999).

Brave, S. & Nass, C. Emotion in human–computer interaction. Human-Computer Interaction 53, 53–68 (2003).

Kreibig, S. D. Autonomic nervous system activity in emotion: A review. Biological psychology 84, 394–421 (2010).

Marsella, S., Gratch, J. & Petta, P. Computational models of emotion. A Blueprint for Affective Computing-A sourcebook and manual 11, 21–46 (2010).

Rodríguez‐Torres, R. et al. The lay distinction between primary and secondary emotions: A spontaneous categorization? International Journal of Psychology 40, 100–107 (2005).

Noy, P. & Noy‐Sharav, D. Art and emotions. International Journal of Applied Psychoanalytic Studies 10, 100–107 (2013).

Lang, P. & Bradley, M. M. The International Affective Picture System (IAPS) in the study of emotion and attention. Handbook of emotion elicitation and assessment 29 (2007).

Dan-Glauser, E. S. & Scherer, K. The Geneva affective picture database (GAPED): a new 730-picture database focusing on valence and normative significance. Behavior research methods 43, 468 (2011).

Bradley, M. M. & Lang, P. J. Affective norms for English words (ANEW): Instruction manual and affective ratings. Technical report C-1, the center for research in psychophysiology, University of Florida 1, 1–45 (1999).

Bradley, M. M. & Lang, P. J. Affective Norms for English Text (ANET): Affective ratings of text and instruction manual. Technical Report. D-1, University of Florida, Gainesville, FL 1, 1–45 (2007).

Lang, P. J., Bradley, M. M. & Cuthbert, B. N. International affective picture system (IAPS): Technical manual and affective ratings. NIMH Center for the Study of Emotion and Attention 1, 39–58 (1997).

Husain, G., Thompson, W. F. & Schellenberg, E. G. Effects of musical tempo and mode on arousal, mood, and spatial abilities. Music Perception: An Interdisciplinary Journal 20, 151–171 (2002).

Vuoskoski, J. K., Gatti, E., Spence, C. & Clarke, E. F. Do visual cues intensify the emotional responses evoked by musical performance? A psychophysiological investigation. Psychomusicology: Music, Mind, and Brain 26, 179–192 (2016).

Parncutt, R. Major-minor tonality, Schenkerian prolongation, and emotion: A commentary on Huron and Davis (2012). Empirical Musicology Review 7, 118–137 (2013).

Fernández-Sotos, A., Fernández-Caballero, A. & Latorre, J. M. Influence of tempo and rhythmic unit in musical emotion regulation. Frontiers in computational neuroscience 10, 80 (2016).

Gross, J. J. & Levenson, R. W. Emotion elicitation using films. Cognition & emotion 9, 87–108 (1995).

Carvalho, S., Leite, J., Galdo-Álvarez, S. & Gonçalves, O. F. The emotional movie database (EMDB): A self-report and psychophysiological study. Applied psychophysiology and biofeedback 37, 279–294 (2012).

Baveye, Y., Bettinelli, J. N., Dellandréa, E., Chen, L. & Chamaret, C. A large video database for computational models of induced emotion. In Affective Computing and Intelligent Interaction (ACII), 2013 Humaine Association Conference on, 13–18 (IEEE: New York, NY, USA, 2013).

Alaoui-Ismaïli, O., Robin, O., Rada, H., Dittmar, A. & Vernet-Maury, E. Basic emotions evoked by odorants: comparison between autonomic responses and self-evaluation. Physiology & Behavior 62, 713–720 (1997).

Chrea, C., Grandjean, D., Delplanque, S., Cayeux, I., Le Calvé, B., Aymard, L. & Scherer, K. R. Mapping the semantic space for the subjective experience of emotional responses to odors. Chemical Senses 34, 49–62 (2009).

Salminen, K., Surakka, V., Lylykangas, J., Raisamo, J., Saarinen, R., Raisamo, R. & Evreinov, G. Emotional and behavioral responses to haptic stimulation. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems, 1555–1562 (ACM: New York, NY, USA, 2008).

Tsetserukou, D., Neviarouskaya, A., Prendinger, H., Kawakami, N. & Tachi, S. Affective haptics in emotional communication. In Affective Computing and Intelligent Interaction and Workshops, 2009. ACII 2009. 3rd International Conference on, 1–6 (2009).

Obrist, M., Subramanian, S., Gatti, E., Long, B. & Carter, T. Emotions mediated through mid-air haptics. In Proceedings of the 33rd Annual ACM Conference on Human Factors in Computing Systems 2053–2062 (2015).

Bradley, M. M. & Lang, P. J. Measuring emotion: the self-assessment manikin and the semantic differential. Journal of behavior therapy and experimental psychiatry 25, 49–59 (1994).

Bänziger, T., Tran, V. & Scherer, K. R. The Geneva Emotion Wheel: A tool for the verbal report of emotional reactions. Poster presented at ISRE 149, 271–294 (2005).

Nasoz, F., Alvarez, K., Lisetti, C. L. & Finkelstein, N. Emotion recognition from physiological signals using wireless sensors for presence technologies. Cognition, Technology & Work 6, 4–14 (2004).

Mauss, I. B. & Robinson, M. D. Measures of emotion: A review. Cognition and Emotion 23, 209–237 (2009).

Martyn Jones, C. & Troen, T. Biometric valence and arousal recognition, Proceedings of the 19th Australasian conference on Computer-Human Interaction: Entertaining User Interfaces, November 28–30 (2007).

Haag, A., Goronzy, S., Schaich, P. & Williams, J. Emotion recognition using bio-sensors: First steps towards an automatic system. In Tutorial and research workshop on affective dialogue systems 36–48 (2004).

Lang, P. J. Emotion’s response patterns: The brain and the autonomic nervous system. Emotion Review 6, 93–99 (2014).

Kreibig, S. D. Autonomic nervous system activity in emotion: A review. Biological psychology 84, 394–421 (2010).

Vi, C. T., Ablart, D., Gatti, E., Velasco, C. & Obrist, M. Not just seeing, but also feeling art: Mid-air haptic experiences integrated in a multisensory art exhibition. International Journal of Human-Computer Studies 108, 1–14 (2017).

Gatti, E., Caruso, G., Bordegoni, M. & Spence, C. Can the feel of the haptic interaction modify a user's emotional state? In World Haptics Conference (WHC) 247–252 (2013).

Silvia, P. J. Cognitive appraisals and interest in visual art: Exploring an appraisal theory of aesthetic emotions. Empirical studies of the arts 23, 119–133 (2005).

Obrist, M., Seah, S. A. & Subramanian, S. Talking about tactile experiences. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems (CHI '13), 1659–1668 (ACM: New York, NY, USA, 2013).

Carter, T., Seah, S. A., Long, B., Drinkwater, B. & Subramanian, S. UltraHaptics: multi-point mid-air haptic feedback for touch surfaces. In Proceedings of the 26th annual ACM symposium on User interface software and technology (UIST '13), 505–514 (ACM: New York, NY, USA, 2013).

Stearns, D. C. & Parrott, W. G. When feeling bad makes you look good: Guilt, shame, and person perception. Cognition & emotion 26, 407–430 (2012).

Kindler, C. H., Harms, C., Amsler, F., Ihde-Scholl, T. & Scheidegger, D. The visual analog scale allows effective measurement of preoperative anxiety and detection of patients’ anesthetic concerns. Anesthesia & Analgesia 90, 706–712 (2000).

Benedek, M. & Kaernbach, C. A continuous measure of phasic electrodermal activity. Journal of neuroscience methods 190, 80–91 (2010).

Data Citations

Gatti, E., Calzolari, E., Maggioni, E., & Obrist, M. Figshare https://doi.org/10.6084/m9.figshare.c.3873067.v2 (2018)

Acknowledgements

This project has received funding from the European Research Council (ERC) under the European Union’s Horizon 2020 research and innovation programme under grant agreement No 638605.

Author information

Contributions

E.G. designed the experimental set-up, coded the software for the data collection, wrote the main manuscript text, analysed the data and prepared the dataset. E.C. collected the data and initialized the data analyses. E.M. supported the data collection and the initialization of the data analyses. M.O. collaborated in the design of the experimental set-up and supported the data collection. All the authors have contributed to and reviewed the manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/. The Creative Commons Public Domain Dedication waiver http://creativecommons.org/publicdomain/zero/1.0/ applies to the metadata files made available in this article.

