Abstract

Limb-driven control allows for direct control by using residual limb movements rather than unnatural and complex muscle activation. Existing limb-driven methods simultaneously learn a variety of possible motions, ranging from a residual limb to entire arm motions, from human templates by relying on linear or nonlinear regression techniques. However, the map between a low-dimensional residual limb movement and high-dimensional total limb movement is highly underdetermined. Therefore, this complex, high-dimensional coordination problem cannot be accurately solved by treating it as a data-driven black box problem. Here we address this challenge by introducing the residual limb-driven control framework synergy complement control. Firstly, the residual limb drives a one-dimensional phase variable to simultaneously control the multiple joints of the prosthesis. Secondly, the resulting prosthesis motion naturally complements the movement of the residual limb by its synergy components. Furthermore, our framework adds information on contextual tasks and goals and allows for seamless transitions between these. Experimental validation was conducted using subjects with preserved arms employing an exo-prosthesis setup, and studies involving participants with and without limb differences in a virtual reality setup. The findings affirm that the restoration of lost coordinated synergy capabilities is reliably achieved through the utilization of synergy complement control with the prosthesis.

Similar content being viewed by others

Main

Humans have replaced lost or non-developed body limbs for millennia with technical counterparts, namely prostheses. The first powered upper limb prosthesis dates back to a patent from Germany in 1915 (refs. 1,2). Since then, many powered mechanical systems have been developed to replace limbs that have been amputated, and the mechanics of these systems have continuously been improved. Since the emergence of myoelectric upper limb prostheses around 1950 (refs. 3,4,5), substantial efforts have been made to improve and enhance the operability of such systems6 by using myoelectric controls with sequential control (SEQ), which meet today’s commercial standards7,8,9,10,11,12. SEQ often involves a finite state machine, which allows the user to select and control one joint at a time using direct electromyography (EMG)-based proportional control. The joints that are not in use are locked, and a muscle co-contraction causes the change to the next joint13. However, due to the limitations of myoelectric control, such as muscle fatigue, electrode displacement and difficulties in decoding complex patterns or dealing with coordinated joint movements14, an interest in limb-driven control concepts has emerged. Here, the residual limb (RL) movement, rather than muscle activation measurements, is used as a continuous control input for the device. Several RL-driven methods exist for upper limb prostheses that are still considered to be basic and primarily focus on simulation, virtual reality (VR) or single degrees of freedom (d.f.) elbow coordination (for example, refs. 15,16,17). These methods share one fundamental idea at their core: learning the coordination between the upper and lower arm for a wide variety of possible motions based on captured human templates into a single model instead of multiple models. To accomplish this, regression techniques are applied. These techniques involve linear regression such as principal components analysis17,18,19 or nonlinear regression such as artificial neural networks, radial basis function networks or locally weighted regression15,16,17,19,20,21,22,23,24,25,26,27. However, the map between a low-dimensional upper arm movement and high-dimensional total limb movement is highly underdetermined, which results in accuracy problems and may even lead to jerky prosthetic motions17. The authors in ref. 17 revealed another critical limitation of current approaches, which is the lack of environmental context. With some exceptions such as ref. 27, current approaches are not able to systematically deal with changing tasks or targets. More context and autonomy are required for limb-driven methods to be useful for amputated participants. Therefore, there is still a need for alternative methods17.

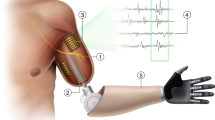

In this Article, we present a new RL-driven method called synergy complement control (SCC) that is based on a whole new hypothesis and overcomes the issues of previous methods: amputated subjects are able to control a task-dependent, multi-dimensional coordinated prosthesis motion via a low-dimensional motion phase and together with task and target detection. Concretely, we introduce synergy complements, which we define as the synergistic-complementary prosthesis movement to the RL movement. The synergy complements are controlled by a single-dimensional phase variable, which is driven by the RL, and together result in a natural motion corresponding to an unimpaired limb. SCC can inherently deal with complex motions that have multiple d.f., and it can process changes in tasks and target locations utilizing proper intention detection. Figure 1 shows the workflow of the SCC method.

Results

RL–prosthesis coordination

In this paragraph, we illustrate how SCC works on the basis of the results of an unimpaired participant using an exo-prosthesis28 (Supplementary Fig. 5). Figure 2 shows an image sequence with the corresponding time series for the RL–prosthesis coordination while it performed an exemplar reaching movement. The movement was computationally designed. For explanation purposes, we divided the time series into six periods. In period 1, the user sat in the pose and did not move (Fig. 2, period 1). In period 2, the RL moved forwards (cf. measured RL position lx) while the phase variable ψ started to drop. Consequently, the end effector adapted its position py and orientation αx to follow the synergy complementary motion. In period 3, the user stopped moving the RL, which caused ψ, py and αx to remain constant (Fig. 2). This shows that only the RL drove the prosthesis movement. In period 4, the user continued to move the RL, leading to a further drop in ψ. Correspondingly, the prosthesis was lifted and rotated until it finally reached the goal pose in period 5 (Fig. 2, period 5). In contrast, we show the final configuration with the SCC turned off (Fig. 2, top right) In period 6, the movement was reversed. The RL moved backward, causing ψ to increase again, which drove the prosthesis back to the initial configuration. In period 6, the user pulled the RL beyond its initial position. In this case, the phase variable remained at ψ = 1. This ensured that the prosthesis would stay in its initial position.

Time series for the measured RL’s position lx, the phase variable ψ (equation (15)), the end effectors’ position py and the end effectors’ orientation αx (angle around the x axis).

Reaching and reach-and-grasp tasks

In this paragraph, we present the results of three unimpaired participants using an exo-prosthesis to accomplish reaching and reach-and-grasp tasks (see Methods for more details). We used the SCC method in two purely reaching tasks (intransitive tasks) (Fig. 3) and three reach-and-grasp tasks, the latter of which involve hand–object interaction (transitive tasks) (Fig. 4). All movements were learned from human templates.

Figure 3 shows an image sequence for the template movement and the corresponding ‘cyborg’ movement in each reaching task. The first image shows the starting pose, the third image shows the final pose and the second image shows an intermediate state. The ‘block light source’ goal (Fig. 3a) is to protect the eyes from the centre light, which originates from direction o while the hand keeps a distance of ~0.1 m from the face. This task was reliably executed with a 100% success rate (four out of four trials) at a duration that was close to an average human’s duration (Fig. 5a). The ‘stop gesture’ goal (Fig. 3b) sends a signal to an agent, which approaches at velocity v, to stop by extending the arm and presenting the palm in his direction. This task was also reliably executed with a 100% success rate (four out of four trials) at a duration that was close to an average human’s duration (Fig. 5a). This shows that, with the proposed SCC method, lost reaching abilities can be reliably recovered. Note that the reaching tasks were pre-selected before execution and did not involve intention recognition.

The data are presented as median values (the central line of each box) along with the 25th and 75th percentiles (the bottom and top edges of each box) as well as minima and maxima (the whiskers attached to each box). a, Box plots for the time it took for the hand to move from the starting position to the time at which it touched the object (transitive tasks) or the time at which it reached its final goal pose (intransitive tasks). b, Box plots for the duration of the entire task sequence (see Supplementary Fig. 1 for details).

Figure 4 shows an image sequence for the template movement and the corresponding representative ‘cyborg’ movement for each reach-and-grasp task. The goal of all three tasks was for the subject to reach and grasp the object (that is, the apple (Fig. 4a), the bottle (Fig. 4b) or the book (Fig. 4c)) at location xg from direction o without colliding with the table. All three objects were reliably grasped, each with a 100% success rate (four out of four trials). The durations are shown in Fig. 5a. This shows that, based on the proposed SCC method, more complex grasping tasks can be reliably achieved. The accompanying video attachment shows the full experiment of representative trials.

Inter-task transitioning

In this subsection, we show the behaviour of inter-task transitioning, which we tested with an unimpaired participant (see Methods for more details). Supplementary Fig. 2 shows the experimental setup, the task sequence and the time series for the inter-task transition experiment. The ‘cyborg’ was seated in front of a table. A book (task 1), an apple (task 2) and a bottle (task 3) were placed on top of the table. The cyborg had to grasp these objects. We applied the same template motions as in Fig. 4. We used eye-tracking glasses to track the cyborg’s gaze, and a motion tracking system to keep track of the objects. The user was instructed to grasp the objects in any order by following the task sequence (Supplementary Fig. 2, bottom box). The user could activate a task transition request (TTR) by looking at the desired object for 1 s. A TTR was only accepted in this work if the RL was in the starting position (that is, phase variable ψ = 1). A valid TTR triggered a context transition in which both the task-related RL reference motion and the task-related dynamic movement primitive (DMP) with the goal pose pg were loaded.

For explanation purposes, the procedure was divided into five periods. In period 1, the cyborg was in the starting pose and did not move. He then moved his gaze onto the book, which caused a TTR to grasp the book. In period 2, he reached for the book. This caused the phase variable ψ to drop, and the end effector adapted its position py and orientation αx accordingly. In period 3, the user closed the prosthetic hand via the myo armband, which was attached to his lower left arm (see Methods for more details). In period 4, he returned to the starting pose while holding the book with his prosthetic hand. In period 5, he placed the book back in its original location and returned to the starting pose to complete the task sequence. Next, he focused on the apple, repeating the same procedure. All three objects were reliably grasped following this sequence, and each had a 100% success rate (four out of four trials). The durations are shown in Fig. 5b. The results show that the proposed SCC method allows users to transition between different tasks seamlessly.

Goal changes within a task

The following experiments are performed with a subject with preserved arm wearing an exo-prosthesis (more details can be found in Methods). Supplementary Fig. 3 shows the experimental setup, task sequence and time series for this experiment. The experimental setup was the same as in the inter-task transitioning experiment. Another human template motion was used in this experiment: a ball was grasped from the table. The red-coloured ball was placed at the default goal xg,def. The blue- and yellow-coloured balls were placed at different goals (xg1 and xg2, respectively). The positions were measured by a motion capture system in a global frame, and by computation subsequently transformed to the exo-prosthetic base frame (see Supplementary Information for details). For explanation purposes, the procedure was divided into five periods. In period 1, the cyborg was in the starting pose and did not move. He then moved his gaze onto the red ball, which caused a TTR (that is, the corresponding RL reference motion uref and the DMP with the goal pose xg,def were loaded). In period 2, the user reached for the red ball, causing the phase variable ψ to drop, and the prosthesis reached the default goal position. In period 3, the user grasped the ball with the prosthetic hand. In period 4, he placed the ball in the ball bucket, and then he returned to the starting position (period 5). Next, he focused on the blue ball (located in the middle). The goal was updated to xg1, and the procedure was repeated. This experiment shows that the proposed SCC method can be generalized to new goal poses.

User study in VR

We conducted a confirmatory study with a participant with limb difference and six unimpaired participants in an extended VR setup (more details can be found in Supplementary Information). The participants were asked to grasp an apple on the table, grasp a bottle on the table, grasp a book with goal pose 1 (horizontally on the shelf), grasp a book with goal pose 2 (right to goal pose 1 and vertically on the shelf), grasp a hat on the table, perform a greet gesture, grasp a plate on the table and perform a stop gesture.

For quantitative performance assessment and comparison between SCC and SEQ, we evaluate three metrics:

-

The ‘time to completion’ measures the task completion speed; that is, the lower its value, the faster the task is completed.

-

The ‘gaze focus index’ indicates the level of a participant’s gaze focus. The lower its value, the higher the gaze focus.

-

The ‘torso tilting magnitude’ captures the extent of torso movements. The higher this value, the more pronounced the torso tilts.

The metrics are calculated for each task repetition performed with both control methods (Fig. 6a (participant with limb difference) and Fig. 6b (unimpaired participants)).

The data are presented as median values (the central line of each box) along with the 25th and 75th percentiles (the bottom and top edges of each box) as well as minima and maxima (the whiskers attached to each box). Gaze focus index (shaded with ‘N/A’) does not exist for tasks Greet and Stop. a, Evaluation metrics from all repetitions performed by a participant with limb difference. b, Evaluation metrics from all repetitions performed by six unimpaired participants.

In general, tasks were completed substantially faster with SCC than with SEQ, where one exception task ‘hat’ shows comparable performance. Additionally, SCC exhibits notably improved gaze focus across all tasks compared with SEQ, with one exception task ‘apple’ that exhibits a similar value for both SCC and SEQ. Moreover, SCC demonstrated lower torso tilting magnitude than SEQ across various tasks, except for task ‘hat’. The reason for similar performance is that the hat was located at the farthest distance the participants may reach, while only minimal joint switching was required in SEQ.

Noticeably, the participant with limb difference exhibits lower variability among task repetitions with SEQ than the unimpaired participants as she has been an experienced user of a state-of-the-art prosthesis. It is also worth noting that an unimpaired participant faced challenges using SEQ and has managed only ~2.5 repetitions with SEQ. In Fig. 6b, one is able to see that several data points in SEQ’s time to completion are located far away from the 75th percentile, indicating very long time duration; this reflects the challenges that this participant faced. In contrast to the large variability and unreliability of SEQ, the performance variations among task repetitions are much smaller with SCC. This demonstrates the SCC’s capability of maintaining consistent performance. These facts suggest that SCC requires little learning to use and, therefore, the difference in individual’s learning abilities does not play an essential role in using SCC.

Discussion

Existing limb-driven methods simultaneously learn a wide range of possible motions, ranging from an RL to entire arm motions, from human templates by relying on linear or nonlinear regression techniques15,16,17,19,20,21,22,23,24,25,26. However, the map between a low-dimensional RL movement and high-dimensional total limb movement is highly underdetermined. Thus, these methods often result in inaccurate, jerky prosthetic motions that are unnatural and often delayed. Moreover, most approaches lack contextual information. In this work, we introduced the SCC framework, which is based on a whole new hypothesis and fundamentally overcomes these problems: amputated subjects are able to control a task-dependent, multi-dimensional coordinated prosthesis motion via low-dimensional limb motion using a single-dimensional motion phase and together with task and target detection.

Results on the exemplar reaching movement showed that the RL purely drives the prosthetic movement. EMG-based control, which is accompanied by non-robust EMG measurements14, was omitted for prosthetic arm motion generation. However, this does not mean that EMG measurements are or should be avoided entirely. For example, in this work, the prosthetic hand was able to grasp objects as a result of an EMG measurement (Supplementary Table 1). Although the SCC framework focused on trajectory tracking, future work shall extend SCC to adapt the impedance setting of the prostheses and the grasping force. EMG measurements will play a central role here29.

The unimpaired participants solved the reaching tasks using SCC with a 100% success rate at a duration that was close to human performance (Figs. 5a,b and 6b). When SCC is used, the accuracy problem is reduced to a simple tracking problem and fully governed by controller performance. Therefore, SCC is more accurate than existing methods that output inaccurate prosthetic positions because of the inherent underdetermination in the learning approach. Moreover, in contrast to current approaches, SCC introduces no control delay between RL movement and the complementary prosthesis motion generation. The RL position directly controls the prosthesis motion only with the dynamics of the DMP in between. The unimpaired participants solved all reach-and-grasp tasks with a 100% success rate. It is crucial to note that the trajectory generated by SCC is twice differentiable, as explicitly outlined in equations (21) and (23). On the other hand, the trajectory generated by SEQ is only once differentiable, as shown in equation (29) in Supplementary Information. This confirms that the SCC method reliably generates smooth complementary synergy movements in complex grasping tasks. However, the unimpaired participants reached objects (Fig. 5a) and completed whole tasks (Fig. 5b) slower than they would do naturally. The bulky exo-prosthetic system may have prevented the subjects from performing the reaching movement more quickly. The longer time taken to complete the whole task may be also explained by the time-consuming grasping procedure (see the accompanying video attachment). Extended algorithms that result in faster task completion should be implemented in the future. A further key advantage of SCC is that it can adapt to changing goals and transitions between tasks, as is shown in Fig. 6a,b. The comparison with the SEQ mode indicates that reaching tasks and reach-and-grasp tasks can be solved much faster with SCC. In case of the ‘grasp apple’ task with the SEQ method, the time for the ‘start-to-object’ (Fig. 5a) is similar to other control modes, but higher for the entire task. This is because our participants’ strategy was to first move the prosthesis near the object (as the starting configuration of the prosthesis allowed this) and then to position the prosthesis to grasp the object using the time-consuming SEQ approach.

Furthermore, the pilot study with the amputated participant (Supplementary Fig. 4) confirms that the SCC method also works with potential users. The higher standard deviation in case of the ‘grasp apple’ tasks is probably due to the hand-on-object detection algorithm and the handling of the amputated participant with the VR system (Fig. 5a). The time was stopped as soon as the distance between the heel of the virtual prosthesis hand and the centre of the object fell below a certain threshold. In the case of the apple, the subject found this threshold at different rates. We note that a coloured marking of the heel of the virtual prosthesis might have facilitated the participant’s coordination. In the case of the book task, however, the performance remained almost constant. The confirmatory study revealed a small variability of SCC among task repetitions (Supplementary Figs. 4 and 6). This demonstrates the SCC’s capability of maintaining consistent performance.

Torso tilting is a crucial factor when reaching for objects beyond one’s natural reach. Notably, the preservation of a focused gaze on the target object, despite torso movement, underscores the adaptability and efficacy of our control approach in real-world scenarios. Furthermore, the findings derived from the confirmatory study highlight a notable distinction (Fig. 6a,b): the scattering of torso tilting magnitude exhibits a considerably higher magnitude for the SEQ method when compared with our SCC. This observation accentuates the efficacy of SCC in streamlining the reach-and-grasping process for users, also achieved through a reduction in torso movements and, thus, the user’s physical effort. Moreover, the participants’ gaze focus was improved by SCC compared with SEQ, as told by the confirmatory study (Fig. 6a,b). This signifies that SCC facilitates users to focus on tasks.

A limitation of all RL-driven prosthetic control methods is that the prosthesis cannot be controlled if no RL motion is involved. For example, the lower arm cannot be positioned without moving the RL. In such situations, the probably simplest solution would be using classical SEQ mode based on EMG as a default. Alternatively, we could leverage EMG signals to be fused with RL motion and thus have kinodynamic and muscle input, which could substantially improve as both the causal muscle input and motion output would be used for driving the prosthesis. This might also apply for the situations where the intention recognition does not work. In future work, SCC shall be validated on amputated participants wearing a prosthesis to investigate whether the physical impacts of the prosthesis to the limb influence the overall results. Furthermore, the intention detection remains a challenge not only for intransitive tasks but also for unstructured and cluttered environments. In future work, the intention recognition algorithms will be extended with three-dimensional vision-based scene understanding and other sensing interfaces30, and their performance will be evaluated for practical life situations. Moreover, the SCC method will be extended to manipulate more modalities such as impedance29. Also DMP’s feature of adjusting the starting pose will be evaluated in future experiments.

In summary, this work has presented a new, robust RL-driven control method, called SCC, that can cope with the disadvantages of existing methods. We experimentally validated the key advantages of SCC, such as its reliability (that is, its high success rates when performing tasks) and its ability to adapt to goal updates, using a novel prosthesis with four d.f. Furthermore, a pilot and confirmatory study with amputated participants and unimpaired participants indicate that this method is beneficial for prosthesis wearers in the future.

Methods

This section focuses on explaining the functionality of SCC and delves into the general and fundamental concepts of experimental user studies. Detailed implementations of SCC and experimental setup, along with accompanying data, can be found in Supplementary Information, which includes Supplementary Figs. 1–6 and Supplementary Table 1 for visual reference. Additionally, cross-references within the text direct readers to relevant sections containing further explanations and details.

Concept

SCC can be explained in seven steps, which are shown in Fig. 1. In the first step, we use a full hand–arm motion xH(t) for task T that was generated from experiments with unimpaired subjects that perform daily living tasks. In this work, we set two intransitive tasks in which the subjects had to lift their right arm to perform a ‘stop’ and ‘block light source’ gesture. Furthermore, we included three transitive tasks that involved hand–object interactions such as grasping an apple, a book and a bottle. In the second step, we divide the intact motion xH(t) into amputation-specific motions xA(t) and xB(t) such that

where the operator ⊕ denotes the tensor product. Thus, we obtain a motion corresponding to the RL xA(t) and the corresponding synergy complement xB(t). Throughout this work, we demonstrate our method for the case of transhumeral amputation. However, our method can also be transferred to other amputation degrees and limbs such as legs. In the third step, motions xA(t) and xB(t) are scaled to the individual cyborg via the scaling program S, resulting in the individualized motions xA,s(t) and xB,s(t). In the fourth step, the individualized reference motion xB,s(t) is decoded into a phase-based prosthetic motion program p(ψ) in which ψ is the phase variable. Furthermore, ψ(t) is anchored to the RL reference motion xA,s(t) by sample-based allocation resulting in \({{{{\mathbf{u}}}}}_{{{{\rm{ref}}}}}^{{\prime} }\in \{\ f\;| {{{{\mathbf{x}}}}}_{{{{\rm{A,s}}}}}(t)\equiv f(\psi (t)),\forall t\}\). The resulting ‘cyborg’ motion is

The goal is to minimize the error

based on a policy optimizer. For the specific implementation, we use DMP equations (19) and (21). A DMP is a differential equation system that can be used to model trajectories. In the fifth step, the measured RL position \({{{{\mathbf{u}}}}}^{{\prime} }:= {{{\mathbf{l}}}}({{{\mathbf{m}}}}(t))\in {{\mathbb{R}}}^{3}\) (which depends on muscle activation m(t)) is matched to the task-specific reference trajectory \({{{{\mathbf{u}}}}}_{{{{\rm{ref}}}}}^{{\prime} }\) online, and a continuous phase variable \(\psi ({{{{\mathbf{u}}}}}^{{\prime} })\in [0,1]\) (equation (15)) is generated online. A value of ψ = 1 means that \({{{{\mathbf{u}}}}}^{{\prime} }\) corresponds to the starting position of \({{{{\mathbf{u}}}}}_{{{{\rm{ref}}}}}^{{\prime} }\), while ψ = 0 means that \({{{{\mathbf{u}}}}}^{{\prime} }\) reached the end position of \({{{{\mathbf{u}}}}}_{{{{\rm{ref}}}}}^{{\prime} }\). The task-specific prosthetic motion program p(ψ) is generated online using the DMP, where ψ is the input. Thus, p(ψ) is fully driven by l(m(t)). We further consider an intention decoder for seamless transition between tasks. In step 6, the user’s intention is decoded on the basis of measurements such as eye tracking and motion tracking. It outputs the task that is intended to be solved as well as the goal pose that is related to the corresponding object. If the user switches to a different task or goal, the corresponding reference motion \({{{{\mathbf{u}}}}}_{{{{\rm{ref}}}}}^{{\prime} }\) and DMP are loaded. In summary (step 7), this framework leads to a cyborg movement xC that corresponds to a natural synergistically coordinated movement of an entire arm.

Synergy dataset

We took the human template motions from the dataset in ref. 31. This dataset contains multi-modal measurements such as the Cartesian marker trajectories of shoulder–arm movements from six right-handed, male, unimpaired human subjects (age 30 ± 5.81 years). The subjects performed 30 table-top activities that would be useful in daily life (in ref. 32, we analysed this dataset for kinematic and muscular synergies). In this work, we used two intransitive tasks (in which no objects were involved) and three transitive tasks (that involved hand–object interactions) for validation purposes. In the intransitive tasks, the subjects lifted their right arm from a relaxed pose on the table to perform common gestures (‘block light source’ and ‘stop gesture’). The transitive tasks involved hand–object interactions such as ‘grasp an apple’, ‘grasp a bottle’ and ‘grasp a book from the shelf’. These tasks are suitable for demonstrating our proposed method for two reasons. First, they involve complex arm movements with multiple d.f. (including the upper arm, forearm and wrist rotation). Second, the prosthetic hand can grasp objects (that is, an apple, a bottle and a book). We scale the captured template motions xA(t) and xB(t) (Supplementary Fig. 5) to individual subjects by using a parameterized kinematics model. In this work, we use arm segment length as the kinematic parameter. However, note that the parameterization step is not limited to arm lengths but can be extended to further modalities such as bone diameter.

SCC

Kinematic synergy identification

The core of kinematic synergy identification methods is to find a mapping from a given joint space \({{\mathbb{R}}}^{L}\) to a more compact representation in a lower-dimensional space \({{\mathbb{R}}}^{{L}^{{\prime} }}\) (ref. 32). For the sake of argument, let us consider the existence of a template human with measured joint state qt that can be consistently scaled to an arbitrary unimpaired human. For example, if the mathematical tool for finding the synergy space is linear principal components analysis, the goal is to find a transformation matrix \({{{W}}}\in {{\mathbb{R}}}^{L\times {L}^{{\prime} }}\) that maps the reference synergy coordinate vector \({{{{\mathbf{v}}}}}_{{{{\rm{t}}}}}\in {{\mathbb{R}}}^{{L}^{{\prime} }}\) to the joint angle vector \({{{{\mathbf{q}}}}}_{{{{\rm{t}}}}}\in {{\mathbb{R}}}^{L}\) such that

where \({\bar{{{{\mathbf{q}}}}}}_{{{{\rm{t}}}}}={{{\rm{const.}}}}\) is the temporal mean of qt. Through this method it is possible to identify kinematic synergies across various tasks in unimpaired subjects32 and even design controllers in the synergy space \({{\mathbb{R}}}^{{L}^{{\prime} }}\) for robotic tools such as exoskeletons33.

Cyborg system

For an ideal cyborg system, the prosthesis shall complement the RL motion to generate the synergistic arm motion of the template human such that

where \({{{{\mathbf{q}}}}}_{{{{\rm{c}}}}}={\left[{{{{\mathbf{q}}}}}_{{{{\rm{l}}}}}{{{{\mathbf{q}}}}}_{{{{\rm{p}}}}}\right]}^{\top }\) denotes the joint vector of the cyborg system. This vector is composed of the RL joint vector ql and the matching joint vector of the prosthesis qp, that is, we assume that the reference human and the resulting cyborg system match at least regarding their kinematics.

Kinematic synergy complements

For the cyborg and the template human to be compatible with each other, let us assume that the template human is divided into parts A and B, corresponding to the junction between residual and prosthesis in the cyborg system, respectively. This allows to stack the motion states at the junction for the effective cyborg equation. In previous works on limb-driven prosthesis control, the control input u is assumed to be the RL position ql such as in ref. 21. The system can then be written as

With this, the human would be able to directly control the prosthesis configuration

as the joint-space synergy complement of ql = u. Herein, the superscript hash symbol denotes a pseudo-inverse of the respective matrix. However, since ql is not directly measurable, this approach is not applicable without external measurement devices such as exoskeletons or visual tracking systems. Therefore, we transform the problem into Cartesian space as follows. Differentiating equation (5) and left multiplication with the respective Jacobian Jc(qc) and Jt(qt) leads to

When stacking the equations of the Cartesian RL velocity \({\dot{{{{\mathbf{x}}}}}}_{{{{\rm{l}}}}}\) and equation (8), we get

where the Cartesian space human-driven RL movement acts as control input \({{{{\mathbf{u}}}}}^{{\prime} }\in {{\mathbb{R}}}^{6}\). With this, we can deduce the Cartesian-level differential synergy complement of xl to be

where \({{{{\mathbf{P}}}}}_{{{{\rm{SCC}}}}}={{{{\mathbf{J}}}}}_{{{{\rm{t}}}}}({{{{\mathbf{q}}}}}_{{{{\rm{t}}}}}){{{{W}}}}{{{{{W}}}}}_{{{{\rm{A}}}}}^{\;\#}{{{{\mathbf{J}}}}}_{{{{\rm{A}}}}}^{\#}({{{{\mathbf{q}}}}}_{{{{\rm{A}}}}})\) denotes the according synergy complement projector, generating prosthesis motions directly from commanded limb movements. However, we can identify at least four challenges that prevent us from applying equation (10). First, its differential nature requires the template Jacobian Jt(qt) to be coupled to the control input u so that the full synergy complement \({\dot{{{{\mathbf{x}}}}}}_{{{{\rm{p}}}}}\) can be calculated online. Second, the choice of the pseudo inverses makes this approach dependent on interpretation. Third, synergies cannot generalize per se to new goals and do not allow for dynamic trajectory generation. Fourth, the static nature of the presented synergy formalism so far makes transition between tasks during execution impossible. In turn, we propose to extend the useful, however, rather limited static synergy mappings in the sense of equation (4) and transform them to a dynamic and more flexible formulation. Specifically, we fuse a new SCC-based interpretation of synergies with state-of-the-art phase-based trajectory learning and generalization approaches.

Kinematic synergy sequencing

Our previous results32 indicate that, instead of coding all synergies into a single system in the sense of equation (4), one could reduce the number of necessary synergies to describe meaningful arm motions down to \({L}^{{\prime} }=1\). This deeper one-dimensional structure in the synergy space appears to be evident, when analysing one specific task class only. The \({L}^{{\prime} }=1\) dimensional (task-aware) synergies exist in respective (sub-)manifolds and not in a single L-dimensional one. Task sequencing is then achieved by switching the weight vector wi along the task-encoding sequence (note that, in equation (6), W is a matrix). Therefore—in contrast to muscle synergies34 that are mere anatomical properties or postural hand synergies35 that were also used for robot hand design36,37 and control38—sequencing of joint-level motion segments driven by one-dimensional segment synergies can be used to encode entire tasks such as drinking from a bottle. For static synergies a single motion segment driven by a particular segment synergy becomes

where the ith synergy coordinate \({v}_{{{{\rm{t,i}}}}}\in {\mathbb{R}}\) essentially takes the role of an independent phase variable ψ ∈ [0, 1]. To transition from one motion segment to the next, the weight vector wi is then simply switched synchronously to the limit cycle of ψ and \({\bar{{{{\mathbf{q}}}}}}_{{{{\rm{t}}}},i}\) is updated accordingly.

Analogue to equation (6), the phase-based reinterpretation is brought into the SCC representation

allowing one to express the coupling between the residual input u and the according prosthesis motion through ψ as

Now, due to the first and second challenge, we bring equation (13b) to the Cartesian level using the forward kinematics map t(⋅) ∈ SE(3) and \({{{{\mathbf{u}}}}}^{{\prime} }={{{\mathbf{t}}}}({{{\mathbf{u}}}})\).

Solving equation (14b) requires knowing the synergy submatrices wA and wB and the inverse kinematics map \({{{{\mathbf{t}}}}}^{-1}({{{{\mathbf{u}}}}}^{{\prime} })\), which is hardly achievable under real conditions and real humans.

In contrast, we leverage the known human template motion xA(t) as follows: First, it is encoded into an SCC-compatible phase-based representation xA(Ψ). Then, instead of using equation (14b), ψ is estimated by solving the optimization problem

Equation (15) can be efficiently solved even in real time from the phase-parameterized template human xA(Ψ) and the Cartesian-level measurement of the RL \({{{{\mathbf{u}}}}}^{{\prime} }\), then finally driving the prosthesis position \({{{{\mathbf{x}}}}}_{{{{\rm{p}}}}}({\psi }^{* }({{{{\mathbf{u}}}}}^{{\prime} }))\).

However, the third and fourth challenge remain unsolved yet. Neither are velocity or acceleration encoded, nor is it possible to change the goal or even a task online. For this, we generalize the encoding process beyond the static version discussed so far. Specifically, we fall back on the well-known idea of encoding originally time-series data into a phase-based dynamical system. As a well-known implementation we chose DMPs39 to encode the human template complement xB(t) into its matching phase-based DMP representation \({{{{\mathbf{x}}}}}_{{{{\rm{B}}}}}({\psi }^{* }({{{{\mathbf{u}}}}}^{{\prime} }))\), driven by the human residual motion \({{{{\mathbf{u}}}}}^{{\prime} }\). Finally, we arrive at the dynamic reference for the prosthesis \(({{{{\mathbf{x}}}}}_{{{{\rm{p}}}}}(t),{\dot{{{{\mathbf{x}}}}}}_{{{{\rm{p}}}}}(t),{\ddot{{{{\mathbf{x}}}}}}_{{{{\rm{p}}}}}(t))=\left({{{{\mathbf{x}}}}}_{{{{\rm{B}}}}}({\psi }^{* }({{{{\mathbf{u}}}}}^{{\prime} })),{\dot{{{{\mathbf{x}}}}}}_{{{{\rm{B}}}}}({\psi }^{* }({{{{\mathbf{u}}}}}^{{\prime} })),{\ddot{{{{\mathbf{x}}}}}}_{{{{\rm{B}}}}}({\psi }^{* }({{{{\mathbf{u}}}}}^{{\prime} }))\right.\) driving the underlying motion control system described next. As shown in equations (19) and (21), we also employ DMPs’ well-known ability to generalize to new goals or transfer between task instances.

Motion control

The prosthesis hand pose is defined as

where \({{{{\mathbf{r}}}}}_{{{{\rm{p}}}}}\in {{\mathbb{R}}}^{3}\) denotes the position vector and Φ ∈ SE(3) the orientation vector. The prosthesis motion control law is defined as a basic operational space controller on joint torque-level

where rP,d denotes the desired Cartesian position of the prosthetic hand (cf. Supplementary Fig. 1b), respectively. \({{{\mathbf{\upphi }}}}\in {{\mathbb{R}}}^{3}\) is the orientation difference in the Euler angle representation, and \({{{{{J}}}}}_{{{{\rm{\omega }}}}}\in {{\mathbb{R}}}^{3\times 3}\) is the Jacobian between the Euler angle velocities and the angular velocities40. The diagonal matrices Kt and \({{{{{K}}}}}_{{{{\rm{r}}}}}\in {{\mathbb{R}}}^{3\times 3}\) denote the translational and rotational stiffness matrices, respectively. \({{{{D}}}}\in {{\mathbb{R}}}^{4\times 4}\) is the damping matrix. Furthermore, \({\hat{{{{\mathbf{g}}}}}}_{{{{\rm{p}}}}}\) and \({\hat{{{{{\mathbf{\uptau }}}}}}}_{{{{\rm{f,p}}}}}\) denote the gravitational effect estimate and the frictional effect estimate, respectively.

The exoskeleton compensated for its own weight as well as the weight of the prosthesis. The feed-forward control law, which was applied to the exoskeleton, is defined as

where \({\hat{{{{\mathbf{g}}}}}}_{{{{\rm{exo}}}}}({{{\mathbf{\uptheta }}}})\) and \({\hat{{{{\mathbf{\uptau }}}}}}_{{{{\rm{f}}}},{{{\rm{exo}}}}}\) denote the gexo(θ) estimates and the τf,exo estimates, respectively.

In the experiments with SCC, the exoskeleton was controlled in gravity compensation mode (equation (18)), and the prosthesis followed the control law that is defined in equation (17). The control parameters of equation (17) were empirically determined on the basis of a full simulation of the exo-prosthetic system. They were chosen to be Kt = 125 N/mI3×3 and Kr = 25 N/radI3×3, where \({{{{{I}}}}}_{3\times 3}\in {{\mathbb{R}}}^{3\times 3}\) denotes a identity matrix.

Human template encoding

For the specific implementation, we used the DMP framework from refs. 39,41 to generate a desired position \({{{{{\mathbf{r}}}}}}_{{{{\rm{P,d}}}}}(t)\in {{\mathbb{R}}}^{3}\) and orientation a(t) (which is expressed as a quaternion throughout this manuscript) to feed the controller in equation (17). A DMP is essentially a nonlinear system that can be written as39

where r0 and \({{{{\mathbf{r}}}}}_{{{{\rm{g}}}}}\in {{\mathbb{R}}}^{3}\) are the starting position and goal position, respectively. The positive definite and diagonal matrices Ap and \({{{{{B}}}}}_{{{{\rm{p}}}}}\in {{\mathbb{R}}}^{3\times 3}\) denote the stiffness and damping, respectively. A forcing term is defined as

where \({{{\varOmega }}}_{k}(\psi )=\exp (-{h}_{k}{(\psi -{c}_{k})}^{2})\) is a Gaussian function. hk and ck are the Gaussian function’s width and centre, respectively. The parameter vector \({{{{\mathbf{\upxi }}}}}_{k}\in {{\mathbb{R}}}^{3}\) is learned for a desired trajectory based on linear regression (cf. ref. 39). In this work, the hand position trajectories rB,s of task T (rB,s were the positional component of xB,s in Fig. 1), and they were extracted from the synergy dataset (‘Synergy dataset’ section).

The quaternion-based orientational DMP can be written as39,41

where the output orientation is expressed as the unit quaternion a = a0 + na1 + ma2 + ka3, and 1, n, m and k denote the quaternion basis. The starting quaternoin and the goal quaternion are denoted by a0 and ag, respectively. The positive, definite, and diagonal stiffness and damping matrices are denoted by Ao and \({{{{{B}}}}}_{{{{\rm{o}}}}}\in {{\mathbb{R}}}^{3\times 3}\), respectively. \({{{\mathbf{\upomega }}}}\in {{\mathbb{R}}}^{3}\) denotes the angular velocity, and fo(ψ) (cf. equation (20)) is a nonlinear forcing term. The operators \(\bar{\square }\), *, \(\log (\cdot )\) and \(\exp (\cdot )\) denote the quaternion conjugation, product, logarithm and exponential function, respectively. Furthermore, we introduced the following operators:

where b = 0 + mb1 + nb2 + kb3 denotes a non-real quaternion, and \({{{\mathbf{z}}}}={({z}_{1},{z}_{2},{z}_{3})}^{\top }\). To integrate equation (22), we used the following formula41

where δt denotes the time step. Corresponding to the learned position, the orientation of the hand’s trajectory aB,s in task T is learned (aB,s is the orientation component of xB,s in Fig. 1).

We note that, on the policy level, the accuracy corresponds to the offline learning stage performance of a DMP, that is, how good are the trajectories of the intact hand–arm approximated by the DMP. On the task level, the accuracy only depends on the identification of the actual goal (ag and rg). Another potential source of accuracy errors may arise if the prosthesis user deviates from the template motion, as seen in upper arm or torso movements (refer to Supplementary Video 2 at 02:48 min). In such instances, SCC can effectively compensate for local inaccuracies. However, if there are substantial global deviations, such as the upper arm moving in a completely different direction, this may be interpreted as the user’s intentional choice not to execute the task and decide for another task. However, the systematic analysis of this dynamic cognitive process and its interplay with SCC would need to be handled by appropriate online intention decoding and adaptation, which is beyond the scope of this work.

Participants and experimental protocol

We conducted two studies. In study 1, one female subject aged 58 years with transradial arm amputation of the right arm (>1 year) and three male subjects aged 33.3 ± 0.58 (mean ± standard deviation) years with preserved arm participated in the experiments. In study 2, one female participant, 23 years old, born with an undeveloped right forearm, and seven male participants aged between 22 and 32 years, without limb differences participated in the experiments. All subjects gave their written informed consent before participation. No participant received financial or other compensation. The experiments took place at the Munich Institute of Robotics and Machine Intelligence at the Technical University Munich. All the experiments were conducted according to the principles in the Declaration of Helsinki. Guidelines for study procedures were provided by the Ethic Commission of the Technical University Munich. The experimental protocol can be found in Supplementary Information. Note for data collection we have utilized Simulink 2017b, EtherLab 1.5 and Vicon Nexus 2.15, while MATLAB 2017b and 2021a was used for data analysis.

Reporting summary

Further information on research design is available in the Nature Portfolio Reporting Summary linked to this article.

Data availability

All experimental data collected during the user study in virtual reality can be accessed for download at https://doi.org/10.6084/m9.figshare.25368253 (ref. 42).

Code availability

We have included MATLAB scripts to reproduce Fig. 6 in this manuscript, as well as relevant Supplementary Figs. 1–6 in Supplementary Information. Additionally, a ‘Read me’ file is provided for additional guidance. These can be accessed for download at https://doi.org/10.6084/m9.figshare.25368253 (ref. 42).

References

Childress, D. S. Historical aspects of powered limb prostheses. Clin. Prosthet. Orthot. 9, 2–13 (1985).

Borchardt, M., Hartmann, K., Leymann, R. & Schlesinger, S. Ersatzglieder und Arbeitshilfen: Für Kriegsbeschädigte und Unfallverletzte (Springer, 2013).

Sauter, W. in Amputationschirurgie und Rehabilitation 373–391 (Springer, 1985).

Battye, C. K., Nightingale, A. & Whillis, J. The use of myo-electric currents in the operation of prostheses. J. Bone Joint Surg. 37-B, 506–510 (1955).

Berger, N. & Huppert, C. R. The use of electrical and mechanical muscular forces for the control of an electrical prosthesis. Am. J. Occup. Ther. 6, 110–114 (1952).

Haddadin, S. Prothese. Patent DE 10 2016 107 615 B3 (2017).

Vujaklija, I., Farina, D. & Aszmann, O. New developments in prosthetic arm systems. Orthop. Res. Rev. ume 8, 31–39 (2016).

Lenzi, T., Lipsey, J. & Sensinger, J. W. The RIC arm—a small anthropomorphic transhumeral prosthesis. IEEE/ASME Trans. Mechatr. 21, 2660–2671 (2016).

Toledo, C. et al. A comparison of direct and pattern recognition control for a two degree-of-freedom above elbow virtual prosthesis. In Proc. 2012 Annual International Conference of the IEEE Engineering in Medicine and Biology Society 4332–4335 (IEEE, 2012).

Alshammary, N. A., Bennett, D. A. & Goldfarb, M. Synergistic elbow control for a myoelectric transhumeral prosthesis. IEEE Trans. Neural Syst. Rehab. Eng. 26, 468–476 (2018).

Lauretti, C., Davalli, A., Sacchetti, R., Guglielmelli, E. & Zollo, L. Fusion of m-imu and emg signals for the control of trans-humeral prostheses. In Proc. 2016 6th IEEE International Conference on Biomedical Robotics and Biomechatronics (BioRob) 1123–1128 (IEEE, 2016).

Garcia-Rosas, R., Tan, Y., Oetomo, D. & Manzie, C. On-line synergy identification for personalized active arm prosthesis: a feasibility study. In Proc. 2018 Annual American Control Conference (ACC) 514–519 (IEEE, 2018).

Abayasiri, R. A. M., Madusanka, D. K., Arachchige, N., Silva, A. & Gopura, R. Mobio: a 5 dof trans-humeral robotic prosthesis. In Proc. 2017 International Conference on Rehabilitation Robotics (ICORR) 1627–1632 (IEEE, 2017).

Castellini, C. et al. Proceedings of the first workshop on peripheral machine interfaces: going beyond traditional surface electromyography. Front. Neurorobot. 8, 22 (2014).

Iftime, S., Egsgaard, L. & Popović, M. Automatic determination of synergies by radial basis function artificial neural networks for the control of a neural prosthesis. IEEE Trans. Neural Syst. Rehab. Eng. 13, 482–489 (2006).

Farokhzadi, M., Maleki, A., Fallah, A. & Rashidi, S. Online estimation of elbow joint angle using upper arm acceleration: a movement partitioning approach. J. Biomed. Phys. Eng. 7, 305–314 (2017).

Legrand, M., Merad, M., de Montalivet, E., Roby-Brami, A. & Jarrassé, N. Movement-based control for upper-limb prosthetics: is the regression technique the key to a robust and accurate control? Front. Neurorobot. 12, 41 (2018).

Vallery, H. & Buss, M. Complementary limb motion estimation based on interjoint coordination using principal components analysis. In Proc. 2006 IEEE International Conference on Control Applications Vol. 1–4, 933–938 (2006).

Legrand, M. Upper limb prostheses control based on user’s body compensations. Sorbonne Université https://tel.archives-ouvertes.fr/tel-03360709 (2021).

Kaliki, R. R., Davoodi, R. & Loeb, G. E. Evaluation of a noninvasive command scheme for upper-limb prostheses in a virtual reality reach and grasp task. IEEE Trans. Biomed. Eng. 60, 792–802 (2013).

Kaliki, R. R., Davoodi, R. & Loeb, G. E. Prediction of distal arm posture in 3-d space from shoulder movements for control of upper limb prostheses. Proc. IEEE 96, 1217–1225 (2008).

Merad, M., Roby-Brami, A. & Jarrassé, N. Towards the implementation of natural prosthetic elbow motion using upper limb joint coordination. In Proc. 2016 6th IEEE International Conference on Biomedical Robotics and Biomechatronics (BioRob) 821–826, IEEE (2016).

Merad, M. et al. Can we achieve intuitive prosthetic elbow control based on healthy upper limb motor strategies? Front. Neurorobot. 12, 1 (2018).

Popovic, M. & Popovic, D. Cloning biological synergies improves control of elbow neuroprostheses. IEEE Eng. Med. Biol. Mag. 20, 74–81 (2001).

Mijovic, B., Popović, M. B. & Popović, D. B. Synergistic control of forearm based on accelerometer data and artificial neural networks. Braz. J. Med. Biol. Res. 41, 389–97 (2008).

Alshammary, N., Bennett, D. & Goldfarb, M. Efficacy of coordinating shoulder and elbow motion in a myoelectric transhumeral prosthesis in reaching tasks. In 2016 IEEE International Conference on Robotics and Automation (ICRA) 3723–3728 (2016).

Mick, S. et al. Shoulder kinematics plus contextual target information enable control of multiple distal joints of a simulated prosthetic arm and hand. J. Neuroeng. Rehab. 18, 3 (2021).

Toedtheide, A., Fortunić, E. P., Kühn, J., Jensen, E. R. & Haddadin, S. A wearable force-sensitive and body-aware exoprosthesis for a transhumeral prosthesis socket. IEEE Trans. Robot. 39, 2203–2223 (2023).

Ajoudani, A., Tsagarakis, N. & Bicchi, A. Tele-impedance: teleoperation with impedance regulation using a body-machine interface. Int. J. Robot. Res. 31, 1642–1656 (2012).

Ficuciello, F., Migliozzi, A., Laudante, G., Falco, P. & Siciliano, B. Vision-based grasp learning of an anthropomorphic hand-arm system in a synergy-based control framework. Sci. Robot. 4, eaao4900 (2019).

Averta, G. et al. U-Limb: a multi-modal, multi-center database on arm motion control in healthy and post-stroke conditions. GigaScience 10, giab043 (2021).

Hu, T., Kuehn, J. & Haddadin, S. Identification of human shoulder-arm kinematic and muscular synergies during daily-life manipulation tasks. In Proc. 7th IEEE International Conference on Biomedical Robotics and Biomechatronics (Biorob) 1011–1018 (IEEE, 2018).

Kühn, J., Hu, T., Schappler, M. & Haddadin, S. Dynamics simulation for an upper-limb human-exoskeleton assistance system in a latent-space controlled tool manipulation task. In Proc. IEEE International Conference on Simulation, Modeling, and Programming for Autonomous Robots (SIMPAR) (2018).

d’Avella, A., Portone, A., Fernandez, L. & Lacquaniti, F. Control of fast-reaching movements by muscle synergy combinations. J. Neurosci. 26, 7791–7810 (2006).

Santello, M., Flanders, M. & Soechting, J. F. Postural hand synergies for tool use. J. Neurosci. 18, 10105–10115 (1998).

Palli, G. et al. The dexmart hand: mechatronic design and experimental evaluation of synergy-based control for human-like grasping. Int. J. Robot. Res. 33, 799–824 (2014).

Catalano, M. et al. Adaptive synergies for the design and control of the pisa/iit softhand. Int. J. Robot. Res. 33, 768–782 (2014).

Wimböck, T., Jahn, B. & Hirzinger, G. Synergy level impedance control for multifingered hands. In Proc. 2011 IEEE/RSJ International Conference on Intelligent Robots and Systems 973–979 (IEEE, 2011).

Hoffmann, H., Pastor, P., Park, D. & Schaal, S. Biologically-inspired dynamical systems for movement generation: automatic real-time goal adaptation and obstacle avoidance. In Proc. 2009 IEEE International Conference on Robotics and Automation 2587–2592 (IEEE, 2009).

Schindler, W. F. & Natale, C. Interaction Control of Robot Manipulators: Six Degrees-of-Freedom Tasks (Springer, 2003).

Ude, A., Nemec, B., Petrić, T. & Morimoto, J. Orientation in cartesian space dynamic movement primitives. In Proc. 2014 IEEE International Conference on Robotics and Automation (ICRA) 2997–3004 (IEEE, 2014).

Kühn, J. et al. The experimental data of the synergy-complement control for transhumeral prostheses. figshare https://doi.org/10.6084/m9.figshare.25368253 (2024).

Acknowledgements

The authors greatly acknowledge the funding of this work by the Alfried Krupp von Bohlen und Halbach Foundation (S.H. and J.K.), the Lighthouse Initiative Geriatronics by StMWi Bayern (Project X, grant no. 5140951) (T.H., E.J. and S.H.), the research project SOFTPRO (ICT 688857) through the European Union H2020 programme (E.P.F., E.J., T.H., A.T. and S.H.), and the research project AI.D (16ME0539K) through the Federal Ministry of Education and Research of the Federal Republic of Germany (T.H. and S.H.).

Funding

Open access funding provided by Technische Universität München.

Author information

Authors and Affiliations

Contributions

The concepts were developed by J.K. and S.H. J.K. implemented and conducted the experiments with the exo-prosthesis and the pilot study. A.T. assisted J.K. in the experiments with the exo-prosthesis, while T.H. assisted J.K. in the pilot study and conducted the confirmatory experiments. J.K., S.H. and T.H. interpreted the results. The exo-prosthetic system was mechatronically designed and developed by A.T. and electronically by E.P.F., while J.K. contributed to the communication architecture and software integration. J.K. and S.H. conceptualized, J.K. wrote and S.H. and T.H. edited the manuscript. E.J. contributed to the introduction and the discussion. All of the authors read the paper.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Peer review

Peer review information

Nature Machine Intelligence thanks Marco Santello, and the other, anonymous, reviewer(s) for their contribution to the peer review of this work.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary information

Supplementary Information

Experimental setup and methods, study I and II with experimental protocols and extended results, and Supplementary Figs. 1–6 and Table 1.

Supplementary Video 1

Methods.

Supplementary Video 2

Results of reaching and reach-and-grasp tasks.

Supplementary Video 3

Results of task transitions, goal changes, and SCC versus SEQ.

Supplementary Video 4

Results of study I (pilot study).

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Kühn, J., Hu, T., Tödtheide, A. et al. The synergy complement control approach for seamless limb-driven prostheses. Nat Mach Intell 6, 481–492 (2024). https://doi.org/10.1038/s42256-024-00825-7

Received:

Accepted:

Published:

Issue Date:

DOI: https://doi.org/10.1038/s42256-024-00825-7