Abstract

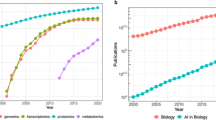

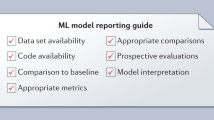

Machine learning (ML) has become an essential asset for the life sciences and medicine. We selected 250 articles describing ML applications from 17 journals sampling 26 different fields between 2011 and 2016. Independent evaluation by two readers highlighted three results. First, only half of the articles shared software, 64% shared data and 81% applied any kind of evaluation. Although crucial for ensuring the validity of ML applications, these criteria were met more often by publications in lower-ranked journals. Second, the authors’ scientific backgrounds strongly influenced how technical aspects were addressed: reproducibility and computational evaluation methods were more prominent with computational co-authors, and experimental proofs more prominent with experimentalists. Third, 73% of the ML applications resulted from interdisciplinary collaborations comprising authors from at least two of the three disciplines: computational sciences, biology and medicine. The results suggest that collaborations between computational and experimental scientists generate more scientifically sound and impactful work by integrating knowledge from both domains. Although scientifically more valid solutions and collaborations involving diverse expertise did not correlate with impact factors, such collaborations provide opportunities for both sides: computational scientists gain access to novel and challenging real-world biological data, increasing the scientific impact of their research, and experimentalists benefit from more in-depth computational analyses that improve the technical correctness of their work.

Acknowledgements

Thanks to T. Karl and I. Weise (both TUM) for invaluable help with technical and administrative aspects of this work. Thanks to the TUM Graduate School (in particular Z. Zhang) for organizing the summer school; to the TUM (in particular H. Keidel and W. Herrmann) for substantial support on several levels, including financing the summer school; and to the Weizmann Institute, Tel Aviv University, Technion and Hebrew University for financial and general support. Thanks also to D. Cremers (TUM), M. Linial (IAS Israel, Hebrew University) and Y. Ofran (Bar-Ilan University) for their enlightening talks; to PubMed for providing easy access to published articles and supporting automatic access; and to the maintainers of Biopython for providing excellent code to access various databases and process biological data. Last, but not least, thanks to all maintainers of public databases and to all experimentalists who enabled this analysis by making their data publicly available. This work was supported by grant no. 640508 from the Deutsche Forschungsgemeinschaft (DFG).

Author information

Contributions

M.L. and K.S. performed the major part of the data analysis and of writing the manuscript. M.L. created and adapted the predefined list of articles. K.S. generated figures and performed statistical tests. L.C. assisted in finding interesting correlations in the data by performing complex analyses and statistical tests, and in generating figures. M.L., K.S., L.C., Y.F., P.H., E.K., A.M., K.Q., A.R., S.S., A.S., L.S. and A.D.-W. participated in the summer school where the idea for this work was developed, were involved in agreeing on the goals and analysis methods of this work, were involved in data analysis by collecting data from the predefined list of articles, and assisted in writing the manuscript. M.L., K.S. and A.M. collected the data for 2018. N.B.-T., M.Y.N., D.R. and B.W.S. supervised the work over the entire time and proofread the manuscript. D.A. provided valuable comments, especially regarding statistical analysis, and was involved in manuscript writing. T.H. and B.R. initiated and supervised the summer school where the idea for this project was developed. T.H. provided important comments to refine the analysis and contributed to manuscript writing. B.R. supervised and guided the work over the entire time and proofread the manuscript. All authors read and approved the final manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

About this article

Cite this article

Littmann, M., Selig, K., Cohen-Lavi, L. et al. Validity of machine learning in biology and medicine increased through collaborations across fields of expertise. Nat Mach Intell 2, 18–24 (2020). https://doi.org/10.1038/s42256-019-0139-8

DOI: https://doi.org/10.1038/s42256-019-0139-8
