Abstract

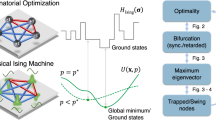

Various kinds of Ising machines based on unconventional computing have recently been developed for practically important combinatorial optimization. Among them, the machines implementing a heuristic algorithm called simulated bifurcation have achieved high performance, where Hamiltonian dynamics are simulated by massively parallel processing. To further improve the performance of simulated bifurcation, here we introduce thermal fluctuation to its dynamics relying on the Nosé–Hoover method, which has been used to simulate Hamiltonian dynamics at finite temperatures. We find that a heating process in the Nosé–Hoover method can help simulated bifurcation escape from local minima of the Ising problem, and hence lead to improved performance. We thus propose heated simulated bifurcation and demonstrate its performance improvement by numerically solving instances of the Ising problem with up to 2000 spin variables and all-to-all connectivity. The proposed heated simulated bifurcation is expected to be accelerated by parallel processing.

Introduction

Unconventional computing with special-purpose hardware devices for solving combinatorial optimization problems has attracted growing interest due to practical importance. Many combinatorial optimization problems can be mapped onto finding ground states of Ising spin models1,2, which is referred to as the Ising problem. Special-purpose hardware devices for the Ising problem are called Ising machines. Ising machines utilizing natural phenomena have been developed, such as quantum annealers3,4,5,6,7,8 with superconducting circuits9, coherent Ising machines with pulse lasers10,11,12,13,14, oscillator-based Ising machines15,16,17,18,19, and other Ising machines with various systems, such as stochastic nanomagnets20, gain-dissipative systems21, spatial light modulators22, memristor Hopfield neural networks23, and spin torque nano-oscillators24,25.

Ising machines have also been implemented with special-purpose digital processors26,27,28,29,30,31,32 using simulated annealing (SA)33 and other algorithms. Among such algorithms, simulated bifurcation (SB) is a recently proposed heuristic algorithm34. SB originates from numerical simulations of Hamiltonian dynamics with bifurcation that is a classical counterpart of quantum adiabatic bifurcation in nonlinear oscillators35,36, which itself has been studied actively37,38,39,40,41,42,43,44,45. Simulation-based approaches such as SB allow one to deal with dense spin–spin interactions with high precision, which might be challenging for physical implementations. Also, the classical dynamics can be simulated efficiently, unlike quantum dynamics. SB can be accelerated by parallel processing with, e.g., field-programmable gate arrays (FPGAs)34,46,47,48, because of its capability of simultaneous updating of variables. Recently proposed variants of SB have achieved faster and more accurate optimization49 than the original SB.

To further improve the performance of SB, here we introduce thermal fluctuation to SB. SA can yield high-accuracy solutions by modeling thermal fluctuation33, while quantum annealing utilizes quantum fluctuation3. These fluctuations can assist escape from local minima of the Ising problem and lead to higher solution accuracy. Our method is based on the Nosé–Hoover method50,51, which enables simulations of Hamiltonian dynamics at finite temperatures52. Unlike SA, the Nosé–Hoover method does not use random numbers, that is, it is deterministic, and thus the simplicity of SB is preserved. We find that simplified dynamics with only a heating process can improve the performance of SB, where an ancillary dynamical variable in the Nosé–Hoover method is replaced by a constant. We numerically demonstrate this improvement by solving instances of the Ising problem with up to 2000 spin variables and all-to-all connectivity, which corresponds to the Sherrington–Kirkpatrick (SK) model introduced in studies of spin glasses53,54,55. The SK model has been widely used to measure the performance of Ising machines12,14,28,29,30,32,34,49,56. The proposed heated SB is also suitable for massively parallel implementations with, e.g., FPGAs.

Results and discussion

SB with thermal fluctuation

First, we briefly explain the Ising problem and SB. The Ising problem is to find N Ising spins si = ±1 minimizing a dimensionless Ising energy,

where Jij represents the interaction between si and sj (Jij = Jji and Jii = 0). SB has two recent variants, ballistic SB (bSB) and discrete SB (dSB)49. Both bSB and dSB are based on the following Hamiltonian equations of motion,

where xi and yi are respectively the positions and momenta corresponding to si, the dots denote time derivatives, a(t) is a control parameter, and a0 and c0 are constants. The forces due to the interactions, fi, are given by

where sgn(xj) is the sign of xj. Time evolutions of xi are calculated by solving Eqs. (2) and (3) with the symplectic Euler method52, where the positions xi are confined within |xi| ≤ 1 by perfectly inelastic walls at xi = ±1, that is, if |xi| > 1 after each time step, xi and yi are set to xi = sgn(xi) and yi = 0. With increasing a(t) from zero to a0, bifurcations to xi = ±1 occur, and the signs si = sgn(xi) yield a solution to the Ising problem. A solution at the final time is at least a local minimum of the Ising problem49. Ballistic behavior in bSB leads to fast convergence to a local or approximate solution, while the discretized fi in dSB enable higher solution accuracy with a longer time.
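As a minimal sketch of one such time step, the following Python code assumes the equations of motion used in the SB literature (ref. 49), namely dxi/dt = a0 yi and dyi/dt = −[a0 − a(t)] xi + c0 fi, with fi = Σj Jij xj for bSB and fi = Σj Jij sgn(xj) for dSB. The function names, the use of plain Python lists, and the convention sgn(0) = +1 are illustrative assumptions, not the authors' implementation.

```python
def sign(v):
    """Return +1.0 for v >= 0 and -1.0 otherwise (sgn(0) = +1 is an assumption)."""
    return 1.0 if v >= 0.0 else -1.0


def sb_step(x, y, J, a_t, dt, c0, a0=1.0, discrete=False):
    """One symplectic-Euler step of bSB (discrete=False) or dSB (discrete=True).

    The form of the equations of motion is assumed from the SB literature.
    """
    n = len(x)
    # dSB feeds the signs of the positions into the force; bSB uses the positions.
    src = [sign(v) for v in x] if discrete else x
    f = [sum(J[i][j] * src[j] for j in range(n)) for i in range(n)]
    # Symplectic Euler: update the momenta from the old positions first ...
    y_new = [y[i] + (-(a0 - a_t) * x[i] + c0 * f[i]) * dt for i in range(n)]
    # ... then update the positions from the new momenta.
    x_new = [x[i] + a0 * y_new[i] * dt for i in range(n)]
    # Perfectly inelastic walls at x = +1 and x = -1.
    for i in range(n):
        if abs(x_new[i]) > 1.0:
            x_new[i] = sign(x_new[i])
            y_new[i] = 0.0
    return x_new, y_new
```

Because every xi and yi is updated from the state at the previous time step only, the loops over i are independent of each other, which is the property that makes the update suitable for parallel hardware.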

Here we apply the Nosé–Hoover method50,51,52 with a finite temperature T to Eqs. (2) and (3), obtaining

where ξ is an ancillary variable playing the role of thermal fluctuation, and M is a parameter (mass). The variable ξ controls an instantaneous temperature defined by

to be a given T as follows. When Tinst is smaller than T, \(\dot{\xi }\) is negative according to Eq. (8), which drives ξ toward negative values. Then |yi| increases owing to the last term in Eq. (7), and thus Tinst increases and approaches T, which can be regarded as heating. Conversely, when Tinst > T, cooling occurs.
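As a sketch only, one form of the thermostatted equations that is consistent with this description is the standard Nosé–Hoover construction applied to a kinetic term \(a_0 y_i^2/2\). Both the kinetic term and the normalization of \(T_{\rm inst}\) are assumptions made here for illustration:

\[
\dot{x}_i = a_0 y_i, \qquad
\dot{y}_i = -\left[a_0 - a(t)\right] x_i + c_0 f_i - \xi y_i, \qquad
\dot{\xi} = \frac{N}{M}\left(T_{\rm inst} - T\right), \qquad
T_{\rm inst} = \frac{1}{N}\sum_{i=1}^{N} a_0 y_i^2 .
\]

In this form, \(T_{\rm inst} < T\) gives \(\dot{\xi} < 0\), and a negative ξ turns the term \(-\xi y_i\) into a force that increases \(|y_i|\), matching the heating mechanism described above.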

We found that SB gives better solutions when ξ is kept negative by negative initial ξ and large M (leading to \(\dot{\xi }\simeq 0\)). This observation suggests that the heating can improve SB but the cooling is unnecessary. This may be because increased |yi| by the heating can lead to escape from local minima of the Ising problem. Furthermore, small \(|\dot{\xi }|\) due to large M above implies that constant ξ can play a similar role, and then we found that ξ replaced by a negative constant −γ (γ > 0) can yield even higher performance. The constant γ is regarded as a rate of the heating.

Thus, in this paper, we propose SB with a heating term, which we call heated bSB (HbSB) and dSB (HdSB), as follows,

We numerically solve Eqs. (10) and (11) by discretizing the time by \({t}_{k+1}={t}_{k}+\Delta t\) with a time interval Δt, and by calculating \({x}_{i}\left({t}_{k+1}\right)\) and \({y}_{i}\left({t}_{k+1}\right)\) from xi(tk) and \({y}_{i}\left({t}_{k}\right)\) in each time step. Note that the symplectic Euler method is not applicable to Eqs. (10) and (11), because these equations are no longer Hamiltonian equations owing to the term γyi52. We empirically found that solution accuracy can be improved by the same update as in previous bSB and dSB49 followed by an update corresponding to the term γyi. This ordering results in nonzero momenta due to the heating and can prevent the variables from getting stuck at the walls. See “Methods” for a detailed algorithm.

In the following, we compare heated SB with previous SB by solving instances of the Ising problem with all-to-all connectivity, where Jij are randomly chosen from ±1 with equal probabilities (corresponding to the SK model). The control parameter a(t) is linearly increased from 0 to a0. The constant parameters are set as a0 = 1 and

where c1 is a parameter tuned around 0.5, which is based on random matrix theory34,49. xi and yi are initialized by uniform random numbers in the interval (−1,1).
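The setup described above can be sketched in Python as follows. The scaling c0 = c1 √((N − 1)/Σij Jij²) is an assumption borrowed from the convention of the SB literature (it reduces to c1/√N for ±1 couplings), and all function names are hypothetical.

```python
import math
import random


def random_sk_instance(n, rng):
    """Symmetric coupling matrix with J_ij = +1 or -1 at random and J_ii = 0."""
    J = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1, n):
            J[i][j] = J[j][i] = rng.choice((-1.0, 1.0))
    return J


def coupling_scale(J, c1):
    """Assumed form of c0: c1 * sqrt((N - 1) / sum_ij J_ij^2)."""
    n = len(J)
    sum_sq = sum(v * v for row in J for v in row)
    return c1 * math.sqrt((n - 1) / sum_sq)


def linear_ramp(k, n_steps, a0=1.0):
    """Control parameter a(t_k), increased linearly from 0 to a0."""
    return a0 * k / n_steps


def random_initial_state(n, rng):
    """Positions and momenta drawn uniformly between -1 and 1."""
    x = [rng.uniform(-1.0, 1.0) for _ in range(n)]
    y = [rng.uniform(-1.0, 1.0) for _ in range(n)]
    return x, y
```

Passing an explicit `random.Random(seed)` object as `rng` keeps each trial reproducible while still allowing many independent trials with different seeds.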

Performance for a 2000-spin Ising problem

We first solve a benchmark instance called K2000, which is a 2000-spin instance of the Ising problem with all-to-all connectivity12,30,34,49. K2000 is often expressed as a MAX-CUT problem. The MAX-CUT problem is given by weights wij with wij = wji, and the following cut value C is maximized,

where in Eq. (14), C has been related to EIsing [Eq. (1)] by wij = −Jij. Thus the MAX-CUT problem can be reduced to the Ising problem.
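The reduction can be checked numerically with a short Python sketch. It assumes the usual forms EIsing = −(1/2) Σij Jij si sj and C = (1/2) Σij wij (1 − si sj)/2, under which wij = −Jij gives C = −EIsing/2 − (1/4) Σij Jij; the function names are illustrative.

```python
def ising_energy(J, s):
    """Assumed Ising energy: -(1/2) * sum over all ordered pairs of J_ij s_i s_j."""
    n = len(s)
    return -0.5 * sum(J[i][j] * s[i] * s[j] for i in range(n) for j in range(n))


def cut_value(J, s):
    """Cut value with weights w_ij = -J_ij: total weight of edges joining unlike spins."""
    n = len(s)
    return sum(-J[i][j] for i in range(n) for j in range(i + 1, n) if s[i] != s[j])


def cut_from_energy(J, s):
    """The same cut value obtained from the Ising energy."""
    return -0.5 * ising_energy(J, s) - 0.25 * sum(sum(row) for row in J)
```

For example, for a triangle with all Jij = −1 (unit weights) and spins (+1, −1, +1), two of the three edges are cut, and both functions give a cut value of 2.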

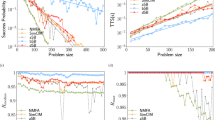

To confirm the effect of the heating, we calculate the instantaneous temperature Tinst in Eq. (9) and the cut value C in Eq. (14) at every time step. Figure 1a, b shows typical examples of time evolutions of Tinst. Here parameters are the ones optimized in advance, which are explained later. For bSB, Tinst rapidly decreases owing to collisions with the perfectly inelastic walls, while Tinst for HbSB is kept much higher owing to the heating, as expected. For dSB, Tinst is also higher than for bSB, because the discretized forces fi in Eq. (5) increase energy, violating conservation of energy49. In comparison between HbSB and dSB, Tinst for HbSB is higher than that for dSB around the end of the evolution. HdSB shows Tinst similar to that of dSB, implying that the heating does not alter the dynamics of dSB.

Fig. 1. a, b Instantaneous temperatures Tinst. c, d Instantaneous cut values C. Here, bSB, dSB, HbSB, and HdSB denote ballistic SB, discrete SB, heated bSB, and heated dSB, respectively. Time is represented by time steps. Parameters are Δt = 0.7 and c1 = 0.6 for bSB, Δt = 1.1 and c1 = 0.6 for dSB, Δt = 1.1, c1 = 0.9 and γ = 0.5 for HbSB, and Δt = 1.1, c1 = 0.7 and γ = 0.06 for HdSB.

Figure 1c, d shows the last parts of typical time evolutions of C. (These final C are around the middle of the distributions over many trials, not the best ones, as we will see in Fig. 2.) For bSB, C is almost constant for the last 1000 time steps, while C for HbSB continues to fluctuate until nearly the end of the evolution owing to the heating. The fluctuation for HbSB looks similar to the fluctuations for dSB and HdSB.

Fig. 2. a For bSB and HbSB. b For dSB and HdSB. (SB means simulated bifurcation, and b, d, and H denote ballistic, discrete, and heated, respectively.) The dashed vertical line indicates the best known cut value 33337. Insets show magnifications around the best known cut value. Here the number of time steps is Ns = 10000, and parameters Δt, c1, and γ are the same as in Fig. 1.

Next, we solve K2000 in \(10^{4}\) trials with random initial xi and yi. In one trial, C is evaluated every 100 time steps, and the best value is output. Figure 2 shows examples of cumulative distributions of C, where the number of trials giving cut values lower than C is normalized by the total number of trials, \(10^{4}\). For bSB, the majority of trials result in one of a few values of C between 33200 and 33300. On the other hand, dSB, HbSB, and HdSB yield broader distributions, owing to fluctuations (higher instantaneous temperatures) in their dynamics. It is notable that the heating changes the distribution of bSB to one similar to the distributions of dSB and HdSB, and that the heating greatly improves bSB. The insets in Fig. 2 show magnifications around the best known cut value, Cbest = 33337 (ref. 49). The distributions for dSB and HdSB almost overlap, indicating similar performance of dSB and HdSB. The insets also show that HbSB leads to more trials resulting in C close to Cbest than dSB and HdSB, suggesting the highest performance of HbSB.

We then evaluate the average and maximum C in the \(10^{4}\) trials and the probability P of obtaining Cbest. Here, P is estimated by dividing the number of trials obtaining Cbest by the total number of trials. Also, using P and the number of time steps, Ns, we calculate the number of time steps required to find Cbest with a probability of 99%, which we call step-to-solution S, given by

Step-to-solution is a useful measure of performance of an algorithm32. (Time-to-solution is often used to measure performance of Ising machines28,31,32,49, but it depends on not only algorithms but also implementations on hardware devices. Time-to-solution equals step-to-solution multiplied by a computation time for one time step.) Here the parameters Δt, c1, and γ are set such that S is minimized. For bSB, instead, average C is maximized, because we found P = 0 for bSB and could not estimate S.
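A minimal Python sketch of this measure is given below. It assumes the commonly used form S = Ns ln(1 − 0.99)/ln(1 − P), which counts the steps of the repeated independent trials needed to succeed at least once with 99% probability. The function name and the handling of the edge cases P = 0 and P ≥ 0.99 are assumptions.

```python
import math


def step_to_solution(p_success, n_steps, target=0.99):
    """Steps needed to hit the best known value at least once with probability `target`."""
    if p_success <= 0.0:
        # No successful trial was observed, so S cannot be estimated.
        return math.inf
    if p_success >= target:
        # Assumed convention: a single run of n_steps already suffices.
        return float(n_steps)
    return n_steps * math.log(1.0 - target) / math.log(1.0 - p_success)
```

Under this form, a success probability of P = 0.9 per trial requires two trials to reach 99%, so S equals twice Ns.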

Figure 3a shows average and maximum C as functions of Ns. For large Ns, average C for dSB, HbSB, and HdSB are larger than that for bSB. Cbest is reached by the three SBs other than bSB. Figure 3b shows that, for large Ns, P is highest for HbSB, followed by HdSB, dSB, and bSB, in that order.

Fig. 3. a Average (lines) and maximum (symbols) cut values C as functions of the number of time steps, Ns. The horizontal dashed line indicates the best known cut value Cbest = 33337. The maximum C for dSB, HbSB, and HdSB coincide with Cbest for Ns ≥ 800. b Probabilities for obtaining Cbest, P. c Step-to-solution S. The black cross is step-to-solution obtained from previously reported data for dSB49. Parameters Δt, c1, and γ here are the same as in Fig. 1. SB means simulated bifurcation, and b, d, and H denote ballistic, discrete, and heated, respectively.

Figure 3c shows S as functions of Ns. Each SB has a minimum of S at a certain Ns, and in the following we compare S minimized with respect to Ns. The black cross represents a value obtained from previously reported data for dSB49. The present value of S for dSB is smaller than the previous value, because in this study the parameters Δt and c1 are optimized for K2000, whereas they were not in the previous study. In Fig. 3c, at optimal Ns, S for HbSB is the smallest. Compared with dSB, S is reduced by 32.7% for HbSB and 19.7% for HdSB. These results demonstrate that the heating improves the performance. Although dSB performs better than bSB, bSB is improved much more by the heating than dSB, and the resulting HbSB shows higher performance than HdSB.

Performance for 100 instances of a 700-spin Ising problem

Finally, we examine the performance for instances other than K2000 by solving 100 instances of the Ising problem with 700 spin variables and all-to-all connectivity32,49. For these instances, reference solutions for estimating step-to-solution were obtained by SA with sufficiently long annealing times and many iterations, which are expected to be close to optimal solutions49. Here each instance is solved in \(10^{4}\) trials, and C (or EIsing) is evaluated at the last time step. We set the parameters to the values optimized for K2000.

Figure 4 shows the medians of S for the 100 instances32,49 as functions of Ns. S by dSB, HbSB, and HdSB are much smaller than S by bSB, because larger fluctuations in these three SBs might assist escape from local minima, as suggested in Figs. 1 and 2 for K2000. Besides, HbSB results in the smallest S among the four SBs. In comparison with dSB, HbSB reduces S by 9.55%. This result demonstrates that HbSB can improve the performance for not only K2000 but also the other instances. The reason for the highest performance of HbSB is left for future work.

Fig. 4. The medians of step-to-solution S for the 100 instances are shown as functions of the number of time steps, Ns. Parameters Δt, c1, and γ are the same as in Fig. 1. SB means simulated bifurcation, and b, d, and H denote ballistic, discrete, and heated, respectively.

Conclusions

We have demonstrated that SB can be improved by introducing fluctuation with a heating term, which has been obtained by replacing an ancillary dynamical variable in the Nosé–Hoover method by a constant rate of heating. We have compared previous and heated SBs by solving all-to-all connected 2000-spin and 700-spin instances of the Ising problem (the SK model), and have found that HbSB gives better step-to-solution than bSB, dSB, and HdSB. This result indicates that the heating is effective, especially for bSB.

Since the proposed heated SB shares the simple dynamics with previous SB, we expect that heated SB will be accelerated by massively parallel processing implemented by, e.g., FPGAs. This study also implies that further improvements of SB will be possible by simple physics-inspired modifications like the heating term introduced here. For example, fluctuations in Hamiltonian dynamics can also be modeled by stochastic methods57.

Methods

Heated simulated bifurcation

First, the symplectic Euler method52 is formally applied to the terms other than γyi in Eqs. (10) and (11),

where fi are calculated from xi(tk) with Eqs. (4) and (5), and the variables with the tildes denote temporary variables used within a time step. Then, the perfectly inelastic walls work as

where the functions g(x) and h(x,y) are given by

Finally, we include the heating term, referring to the usual Euler method, as

Equations (16)–(19) and (22) are numerically solved, where the variables are represented as single precision floating-point real numbers.
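The three stages above (symplectic Euler update, inelastic walls, heating) can be sketched in Python as follows. This is an illustration under stated assumptions rather than the authors' code: the equations of motion are taken in the form dxi/dt = a0 yi and dyi/dt = −[a0 − a(t)] xi + c0 fi + γ yi, the heating increment is assumed to use the momentum at the start of the step (the usual explicit Euler choice, which leaves a nonzero momentum after a wall collision), and Python floats are double precision rather than the single precision used in the paper. All names are hypothetical.

```python
import random


def sign(v):
    """Return +1.0 for v >= 0 and -1.0 otherwise (sgn(0) = +1 is an assumption)."""
    return 1.0 if v >= 0.0 else -1.0


def heated_sb_step(x, y, J, a_t, dt, c0, gamma, a0=1.0, discrete=False):
    """One heated-SB step: symplectic Euler, inelastic walls, then heating."""
    n = len(x)
    # HdSB uses the signs of the positions in the force; HbSB uses the positions.
    src = [sign(v) for v in x] if discrete else x
    f = [sum(J[i][j] * src[j] for j in range(n)) for i in range(n)]
    x_next, y_next = [0.0] * n, [0.0] * n
    for i in range(n):
        # Symplectic Euler for every term except the heating term.
        y_tmp = y[i] + (-(a0 - a_t) * x[i] + c0 * f[i]) * dt
        x_tmp = x[i] + a0 * y_tmp * dt
        # Perfectly inelastic walls: clip the position and zero the momentum.
        if abs(x_tmp) > 1.0:
            x_tmp, y_tmp = sign(x_tmp), 0.0
        x_next[i] = x_tmp
        # Heating by explicit Euler, using y at the start of the step (assumption).
        y_next[i] = y_tmp + gamma * y[i] * dt
    return x_next, y_next


def heated_sb(J, n_steps, dt, c0, gamma, rng, a0=1.0, discrete=False):
    """Run heated SB with a linear ramp of a(t) and return the spins sgn(x_i)."""
    n = len(J)
    x = [rng.uniform(-1.0, 1.0) for _ in range(n)]
    y = [rng.uniform(-1.0, 1.0) for _ in range(n)]
    for k in range(n_steps):
        a_t = a0 * k / n_steps
        x, y = heated_sb_step(x, y, J, a_t, dt, c0, gamma, a0, discrete)
    return [int(sign(v)) for v in x]
```

A trial could then be run as `heated_sb(J, 1000, 1.1, c0, 0.5, random.Random(0))`, where `J` and `c0` are supplied by the caller. Setting `gamma=0.0` recovers the unheated bSB or dSB update in this sketch.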

Data availability

The data that support the findings of this study are available from the corresponding author upon reasonable request.

Code availability

The code used in this work is available from the corresponding author upon reasonable request.

References

Barahona, F. On the computational complexity of Ising spin glass models. J. Phys. A: Math. Gen. 15, 3241–3253 (1982).

Lucas, A. Ising formulations of many NP problems. Front. Phys. 2, 5 (2014).

Kadowaki, T. & Nishimori, H. Quantum annealing in the transverse Ising model. Phys. Rev. E 58, 5355–5363 (1998).

Farhi, E., Goldstone, J., Gutmann, S. & Sipser, M. Quantum computation by adiabatic evolution. Preprint at https://arxiv.org/abs/quant-ph/0001106 (2000).

Farhi, E. et al. A quantum adiabatic evolution algorithm applied to random instances of an NP-complete problem. Science 292, 472–475 (2001).

Das, A. & Chakrabarti, B. K. Colloquium: Quantum annealing and analog quantum computation. Rev. Mod. Phys. 80, 1061–1081 (2008).

Albash, T. & Lidar, D. A. Adiabatic quantum computation. Rev. Mod. Phys. 90, 015002 (2018).

Hauke, P., Katzgraber, H. G., Lechner, W., Nishimori, H. & Oliver, W. D. Perspectives of quantum annealing: methods and implementations. Rep. Prog. Phys. 83, 054401 (2020).

Johnson, M. W. et al. Quantum annealing with manufactured spins. Nature 473, 194–198 (2011).

Wang, Z., Marandi, A., Wen, K., Byer, R. L. & Yamamoto, Y. Coherent Ising machine based on degenerate optical parametric oscillators. Phys. Rev. A 88, 063853 (2013).

Marandi, A., Wang, Z., Takata, K., Byer, R. L. & Yamamoto, Y. Network of time-multiplexed optical parametric oscillators as a coherent Ising machine. Nat. Photonics 8, 937–942 (2014).

Inagaki, T. et al. A coherent Ising machine for 2000-node optimization problems. Science 354, 603–606 (2016).

Yamamoto, Y. et al. Coherent Ising machines–optical neural networks operating at the quantum limit. npj Quantum Inf. 3, 49 (2017).

Honjo, T. et al. 100,000-spin coherent Ising machine. Sci. Adv. 7, eabh0952 (2021).

Wang, T. & Roychowdhury, J. Oscillator-based Ising machine. Preprint at https://arxiv.org/abs/1709.08102 (2017).

Wang, T. & Roychowdhury, J. Unconventional Computation and Natural Computation. UCNC 2019. Lecture Notes in Computer Science Vol. 11493, 232–256 (Springer, 2019).

Chou, J., Bramhavar, S., Ghosh, S. & Herzog, W. Analog coupled oscillator based weighted Ising machine. Sci. Rep. 9, 14786 (2019).

Mallick, A. et al. Using synchronized oscillators to compute the maximum independent set. Nat. Commun. 11, 4689 (2020).

Vaidya, J., Kanthi, R. S. S. & Shukla, N. Creating electronic oscillator-based Ising machines without external injection locking. Sci. Rep. 12, 981 (2022).

Sutton, B., Camsari, K. Y., Behin-Aein, B. & Datta, S. Intrinsic optimization using stochastic nanomagnets. Sci. Rep. 7, 44370 (2017).

Kalinin, K. P. & Berloff, N. G. Global optimization of spin Hamiltonians with gain-dissipative systems. Sci. Rep. 8, 17791 (2018).

Pierangeli, D., Marcucci, G. & Conti, C. Large-scale photonic Ising machine by spatial light modulation. Phys. Rev. Lett. 122, 213902 (2019).

Cai, F. et al. Power-efficient combinatorial optimization using intrinsic noise in memristor Hopfield neural networks. Nat. Electron. 3, 409–418 (2020).

Houshang, A. et al. A spin Hall Ising machine. Preprint at https://arxiv.org/abs/2006.02236 (2020).

Albertsson, D. I. et al. Ultrafast Ising machines using spin torque nano-oscillators. Appl. Phys. Lett. 118, 112404 (2021).

Yamaoka, M. et al. A 20k-spin Ising chip to solve combinatorial optimization problems with CMOS annealing. IEEE J. Solid-State Circuits 51, 303–309 (2016).

Tsukamoto, S., Takatsu, M., Matsubara, S. & Tamura, H. An accelerator architecture for combinatorial optimization problems. FUJITSU Sci. Tech. J. 53, 8–13 (2017).

Aramon, M. et al. Physics-inspired optimization for quadratic unconstrained problems using a digital annealer. Front. Phys. 7, 48 (2019).

Okuyama, T., Sonobe, T., Kawarabayashi, K. & Yamaoka, M. Binary optimization by momentum annealing. Phys. Rev. E 100, 012111 (2019).

Yamamoto, K. et al. STATICA: A 512-spin 0.25M-weight annealing processor with an all-spin-updates-at-once architecture for combinatorial optimization with complete spin–spin interactions. IEEE J. Solid-State Circuits 56, 165–178 (2021).

Patel, S., Chen, L., Canoza, P. & Salahuddin, S. Ising model optimization problems on a FPGA accelerated restricted Boltzmann machine. Preprint at https://arxiv.org/abs/2008.04436 (2020).

Leleu, T. et al. Scaling advantage of chaotic amplitude control for high-performance combinatorial optimization. Commun. Phys. 4, 266 (2021).

Kirkpatrick, S., Gelatt, C. D. & Vecchi, M. P. Optimization by simulated annealing. Science 220, 671–680 (1983).

Goto, H., Tatsumura, K. & Dixon, A. R. Combinatorial optimization by simulating adiabatic bifurcations in nonlinear Hamiltonian systems. Sci. Adv. 5, eaav2372 (2019).

Goto, H. Bifurcation-based adiabatic quantum computation with a nonlinear oscillator network. Sci. Rep. 6, 21686 (2016).

Goto, H. Quantum computation based on quantum adiabatic bifurcations of Kerr-nonlinear parametric oscillators. J. Phys. Soc. Jpn. 88, 061015 (2019).

Nigg, S. E., Lörch, N. & Tiwari, R. P. Robust quantum optimizer with full connectivity. Sci. Adv. 3, e1602273 (2017).

Puri, S., Andersen, C. K., Grimsmo, A. L. & Blais, A. Quantum annealing with all-to-all connected nonlinear oscillators. Nat. Commun. 8, 15785 (2017).

Zhao, P. et al. Two-photon driven Kerr resonator for quantum annealing with three-dimensional circuit QED. Phys. Rev. Appl. 10, 024019 (2018).

Goto, H., Lin, Z. & Nakamura, Y. Boltzmann sampling from the Ising model using quantum heating of coupled nonlinear oscillators. Sci. Rep. 8, 7154 (2018).

Kewming, M. J., Shrapnel, S. & Milburn, G. J. Quantum correlations in the Kerr Ising model. N. J. Phys. 22, 053042 (2020).

Onodera, T., Ng, E. & McMahon, P. L. A quantum annealer with fully programmable all-to-all coupling via Floquet engineering. npj Quantum Inf. 6, 48 (2020).

Goto, H. & Kanao, T. Quantum annealing using vacuum states as effective excited states of driven systems. Commun. Phys. 3, 235 (2020).

Kanao, T. & Goto, H. High-accuracy Ising machine using Kerr-nonlinear parametric oscillators with local four-body interactions. npj Quantum Inf. 7, 18 (2021).

Goto, H. & Kanao, T. Chaos in coupled Kerr-nonlinear parametric oscillators. Phys. Rev. Res. 3, 043196 (2021).

Tatsumura, K., Dixon, A. R. & Goto, H. FPGA-based simulated bifurcation machine. In 2019 29th International Conference on Field Programmable Logic and Applications (FPL), 59–66 (IEEE, New York, 2019).

Zou, Y. & Lin, M. Massively simulating adiabatic bifurcations with FPGA to solve combinatorial optimization. In Proceedings of the 2020 ACM/SIGDA International Symposium on Field-Programmable Gate Arrays (FPGA ’20), 65–75 (ACM, New York, 2020).

Tatsumura, K., Yamasaki, M. & Goto, H. Scaling out Ising machines using a multi-chip architecture for simulated bifurcation. Nat. Electron. 4, 208–217 (2021).

Goto, H. et al. High-performance combinatorial optimization based on classical mechanics. Sci. Adv. 7, eabe7953 (2021).

Nosé, S. A molecular dynamics method for simulations in the canonical ensemble. Mol. Phys. 52, 255–268 (1984).

Hoover, W. G. Canonical dynamics: Equilibrium phase-space distributions. Phys. Rev. A 31, 1695–1697 (1985).

Leimkuhler, B. & Reich, S. Simulating Hamiltonian Dynamics (Cambridge University Press, 2004).

Sherrington, D. & Kirkpatrick, S. Solvable model of a spin-glass. Phys. Rev. Lett. 35, 1792–1796 (1975).

Parisi, G. Infinite number of order parameters for spin-glasses. Phys. Rev. Lett. 43, 1754–1756 (1979).

Parisi, G., Ritort, F. & Slanina, F. Critical finite-size corrections for the Sherrington–Kirkpatrick spin glass. J. Phys. A: Math. Gen. 26, 247–259 (1993).

Oshiyama, H. & Ohzeki, M. Benchmark of quantum-inspired heuristic solvers for quadratic unconstrained binary optimization. Sci. Rep. 12, 2146 (2022).

Andersen, H. C. Molecular dynamics simulations at constant pressure and/or temperature. J. Chem. Phys. 72, 2384 (1980).

Acknowledgements

We thank K. Tatsumura, R. Hidaka, Y. Hamakawa, M. Yamasaki, and Y. Sakai for valuable discussion.

Author information

Contributions

T.K. and H.G. conceived the idea, and developed the code. T.K. performed the numerical simulations presented here. T.K. and H.G. wrote the manuscript. H.G. supervised this project.

Ethics declarations

Competing interests

T.K. and H.G. are inventors on Japanese, US, and Chinese patent applications related to this work filed by Toshiba Corporation (no. P2021-036688, filed 8 March 2021, no. 17/461452, filed 30 August 2021, and no. 202111002962.7, filed 30 August 2021, respectively). The authors declare no other competing interests.

Peer review

Peer review information

Communications Physics thanks the anonymous reviewers for their contribution to the peer review of this work.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Kanao, T., Goto, H. Simulated bifurcation assisted by thermal fluctuation. Commun Phys 5, 153 (2022). https://doi.org/10.1038/s42005-022-00929-9
