Abstract

We examined how age and exposure to different types of COVID-19 (mis)information affect misinformation beliefs, perceived credibility of the message and intention-to-share it on WhatsApp. Through two mixed-design online experiments in the UK and Brazil (total N = 1454) we first randomly exposed adult WhatsApp users to full misinformation, partial misinformation, or full truth about the therapeutic powers of garlic to cure COVID-19. We then exposed all participants to corrective information from the World Health Organisation debunking this claim. We found stronger misinformation beliefs among younger adults (18–54) in both the UK and Brazil and possible backfire effects of corrective information among older adults (55+) in the UK. Corrective information from the WHO was effective in enhancing perceived credibility and intention-to-share of accurate information across all groups in both countries. Our findings call for evidence-based infodemic interventions by health agencies, with greater engagement of younger adults in pandemic misinformation management efforts.

Introduction

Background to the problem and policy needs

Since December 2019, when the SARS-CoV-2 virus was first reported in Wuhan, China, information about the COVID-19 pandemic has swamped online platforms in parallel with the global spread of the virus itself. An undesirable outcome of this phenomenon has been a surge in misinformation, broadly understood as false information that is shared without intent to harm (Wardle and Derakshan, 2017). An examination of fact-checked claims found misinformation with misleading content, false context, manipulated content, fabricated content, and imposter content (Brennen et al., 2020). Various manifestations of misinformation including conspiracy theories, fake testimonies claiming to be sourced from doctors in Wuhan, and promotion of pseudoscientific cures for COVID-19 such as garlic quickly went ‘viral’ amplified by social media (Mian and Khan, 2020). Worryingly, misinformation about the actions or policies of public authorities and international bodies like the World Health Organization (WHO) comprised the largest category of claims (39%) (Brennen et al., 2020).

As the pandemic spread globally, becoming increasingly political in the process, the spectrum of COVID-19 misinformation further diversified, with pernicious impacts (Motta et al., 2020). An analysis of more than 100 million tweets demonstrated how waves of misinformation, spread by human and automated agents, preceded COVID-19 outbreaks in various countries (Gallotti et al., 2020). Conspiracy theories about the radiation from 5G towers spreading COVID-19 fueled anger leading to violence directed at telecommunication masts and engineers (Jolley and Paterson, 2020). About 1700 instances of discrimination against Asian minority communities in the United States were documented in the first few months of the pandemic even as political leaders characterized COVID-19 as the “Chinese virus” (BBC, 2020; Van Barvel et al., 2020). Aside from stoking violence and stigma, there were concerns that online misinformation might lead to risky health behaviors and a lack of adherence to personal protective measures (Islam et al., 2020).

Tackling misinformation has since become a key challenge for the global health response against COVID-19. The WHO has characterized the explosion of online information as an “infodemic” and launched EPI-WIN (WHO, 2020), an online informational resource related to COVID-19 with a dedicated “myth busters” section designed to debunk COVID-19 misinformation circulating on social media. Member countries such as the UK and Brazil followed suit by instituting their own political mechanisms to track and tackle online misinformation related to COVID-19 (GOV.UK, 2020; Ricard and Medeiros, 2020). Given the ubiquity of COVID-19 misinformation and its impact, scientists from disciplines as varied as communication studies, public health and information science have thus far generated empirical evidence related to: (1) analyses of misinformation content and message characteristics (Brennen et al., 2020), (2) analyses of the volume and diffusion of misinformation (Kouzy et al., 2020), and (3) interventions to tackle misinformation (Pennycook et al., 2020).

While we now grasp substantially more about the nature of COVID-19 misinformation, what we lack is a deeper understanding of how individuals respond to this misinformation. In this paper, we examine, through the lens of social and behavioral sciences, how social media users of different age groups process and respond to COVID-19 misinformation. We are particularly interested in how individuals respond to exposure to various forms of (mis)information (ranging from full falsities to partial falsities and full truths), which in this paper we refer to as ‘shades of truth’. Once exposed to these ‘shades of truth’, we then examine the effectiveness of corrective information, as disseminated by agencies like the WHO, on social media users of different age groups. Our study was conducted in the UK and Brazil: two countries with not only the severest COVID-19 burden in their respective continents (ECDC, 2020; AS-COA, 2020) but also ones where WhatsApp, a known vector of misinformation spread, is the most popular messaging application (Statista, 2020a; Statista, 2020b).

Despite the descriptive nature of most health misinformation studies (Nan et al., 2020), a new line of research has emerged recently, using experimental approaches to understand how health communicators can effectively combat misinformation and mitigate misperception among vulnerable populations (van der Meer and Jin, 2020; Vraga et al., 2020). Grounded in a new theoretical framework of misinformation debunking and corrective communication in public health crises (Van der Meer and Jin, 2020), this study further examines linkages between individual characteristics and health misinformation susceptibility, impacts of different forms of viral (mis)information on social media users’ responses to health messages, and the effectiveness of corrective communication in mitigating the harm of health misinformation. We present below our rationale surrounding each of the theoretical considerations and the resultant research questions.

Age and susceptibility to misinformation

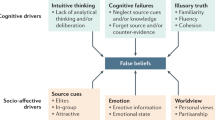

Research investigating the relationship between age and misinformation has been ongoing for more than 30 years. However, consensus about whether older or younger adults are more vulnerable to misinformation has yet to be reached. Findings from a recent study of political misinformation behavior on Facebook showed that adults aged 65 or older shared links to fake domains seven times more often than young adults (Guess et al., 2019). A similar study using Twitter revealed that users aged 50 or more accounted for 80% of fake news shared (Grinberg et al., 2019). In concert with anecdotal evidence about prolific misinformation sharing behavior via WhatsApp among older adults (Bangkok Post, 2019; Quartz Africa, 2019; Walcott, 2020), academics have speculated about the potential vulnerability of older adults to COVID-19 misinformation (Vijaykumar, 2020). These concerns are not unfounded and find support in historic evidence from research on the ‘misinformation effect’, which suggests that our ability to recall past events can be affected by exposure to false or misleading information about the event. In effect, misinformation pollutes our memory of original events with details that do not exist, effectively making our memory malleable (Loftus and Hoffman, 1989). Reviews of psychological studies have revealed that older adults are more vulnerable to misinformation than younger adults (Wylie et al., 2014) and, more worryingly, possess greater confidence in false memories (Jacoby and Rhodes, 2006). 
Brashier and Schacter (2020) summarize three explanations that are often invoked to explain this increased vulnerability to misinformation, especially in the social media age where fake news is rampant: (i) decline of cognitive function with age places a strain on abilities related to cognitive functioning and abstract reasoning, (ii) older adults’ tendency to forget the source of the original misinformation (called source monitoring) (Dehon and Brédart, 2004; Mitchell and Johnson, 2009), which makes fact-checks less effective for this group, and (iii) that older adults are relatively new to digital technologies and thus possess limited capabilities to differentiate between accurate and misinforming content, especially when images and text arrive in various ‘shades of truth’ (Nightingale et al., 2017).

The relationship between age and vulnerability to misinformation is however contested by other streams of evidence. For instance, older and younger adults were found to be equally vulnerable to the illusory truth effect because older adults are able to invoke their extensive knowledge base while evaluating false claims (Brashier et al., 2017). Illustrating this point, one study found that the ability to distinguish between true and fake headlines increased with age; notwithstanding their apparent online gullibility due to low digital literacy levels, older adults are more resilient to scams offline (Brashier and Schacter, 2020). While older adults might be able to distinguish between true and fake information on a one-off basis, their vulnerability increases when repeatedly exposed to misinformation (as can happen on social media) (Brashier and Schacter, 2020). However, the problem of digital illiteracy is not limited to older adults alone. Although young adults are prolific social media users, researchers have described their ability to evaluate the quality of online information as “bleak” (Stanford Health Education Group, 2019).

In this study, we investigate how age is associated with differential psychological and behavioral responses to varied ‘shades of truth’, embedded in COVID-19 (mis)information, reaching WhatsApp users in the UK and Brazil. The following research questions were explored:

RQ1.1: To what extent is age associated with misinformation beliefs related to COVID-19?

RQ1.2: To what extent is age associated with perceived credibility of COVID-19 information on WhatsApp?

RQ1.3: To what extent is age associated with intention-to-share COVID-19 information on WhatsApp?

Forms of misinformation circulating online

Online communication platforms are flooded with misinformation in various forms. Two broad categories of misinformation exist: (1) Completely false information, which is based on Tan et al.’s (2015) definition of misinformation as “explicitly false” information as falsified by expert consensus; and (2) Partially false (or Incomplete) information, which may contain a mixture of correct, unverified, and objectively false information or rumors (Southwell et al., 2018). For example, in the context of mental health, exercising is a complementary therapy for depression treatment; however, when it is presented as an alternative therapy to standard medical treatment, it becomes incomplete information for correct treatment, thus misleading and even causing negative health consequences such as antidepressant rejection among patients (Glazer, 2013).

The intentions driving the spread of misinformation can range from (a) efforts to make sense of ambiguous and uncertain situations through rumors (DiFonzo and Bordio, 2007), (b) “honest mistakes” made in spreading inaccurate information about a complex situation without intending to mislead (Freelon and Wells, 2020; Hameleers, 2020), or (c) intentionally designing or spreading falsehood in order to harm others (Freelon and Wells, 2020; Hameleers, 2020). Regardless of the types and intentions underlying misinformation, its spread has damaging consequences, especially in public health crisis situations (Van der Meer and Jin, 2020).

In this study, we investigate how exposure to different types of (mis)information (operationalized by varied ‘shades of truth’ embedded in COVID-19 (mis)information) might affect psychological and behavioral responses among WhatsApp users in the UK and Brazil.

RQ2.1: To what extent does exposure to different types of (mis)information affect beliefs in COVID-19 misinformation?

RQ2.2: To what extent does exposure to different types of (mis)information affect perceived credibility of COVID-19 information?

RQ2.3: To what extent does exposure to different types of (mis)information affect intention-to-share COVID-19 information?

Efficacy of corrective information

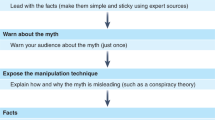

Existing empirical results regarding the efficacy of corrective efforts against misinformation are mixed, although the complexity of debunking misinformation has been acknowledged. Corrections may be ineffective or even backfire by strengthening falsehoods (Lewandowsky et al., 2012). Meta-analyses of existing research on corrective interventions have revealed several successful strategies. Those which combine retraction with an alternative explanation are reported to be most effective compared to fact-checking and appeals to credibility (Walter and Murphy, 2018). Corrections with factual elaboration (e.g., detailing not only that there was misinformation but also why) seemed to be more effective compared to warnings of the possible presence of misinformation and social discrediting of the misinformation source (Blank and Launay, 2014).

Extant research provides mixed findings of the role of the source of corrective information on the effectiveness of debunking strategies. Critiquing the credibility of the misinformation itself was found to be far more important than who was providing the corrective information (Walter and Tukachinsky, 2020). On the other hand, in times of crisis or public emergency, sources of corrective information can make a difference. Expert sources have been found to be especially helpful in enhancing the efficacy of correction attempts (Vraga and Bode, 2018). For example, the inclusion of source information in the correction message was found to be important for correcting misinformation-based beliefs about the Zika virus (Vraga and Bode, 2018). Van der Meer and Jin (2020) further demonstrated that, compared to social peers, both national news media and government health agencies (e.g., the CDC) are more effective in correcting misinformation-based beliefs about a hypothetical infectious disease outbreak.

When compared with experimental conditions where misinformation remains uncorrected, correction helped reduce individuals’ belief in misinformation (Walter and Murphy, 2018; Blank and Launay, 2014). However, corrections do not necessarily result in a complete reversion of “people’s attitudes and beliefs to their baseline levels” (Vraga and Bode, 2018). Because misinformation can persist even after a correction, it is important to enhance individuals’ media literacy (e.g., news media literacy interventions) and their source criticism skills (e.g., information vetting) (Lu and Jin, 2020) to reduce their vulnerability toward misinformation.

In this study, we investigate the extent to which corrective information where WHO is included as the source can affect psychological and behavioral responses to COVID-19 (mis)information (with varied ‘shades of truth’ embedded) among WhatsApp users in the UK and Brazil.

RQ3.1: To what extent does corrective information from the WHO reduce COVID-19 misinformation beliefs?

RQ3.2: To what extent does corrective information from the WHO strengthen perceived credibility of the corrective COVID-19 information?

RQ3.3: To what extent does corrective information from the WHO affect intention-to-share of the corrective COVID-19 information?

Methods

We conducted two randomized online experiments in the UK and Brazil to address the aforementioned RQs. In this section, we first provide an overview of the study design, specifying how the independent variables (IVs) and their respective levels were operationalized. We then describe the experimental stimuli, procedures, and the participant profile before detailing the key outcome variables we measured.

Design

Both experiments were based on a 2 (age) × 3 (shades of truth) × 2 (exposure) mixed experimental design. Among the three IVs, age and ‘shades of truth’ were the between-subject factors while the two-time exposure was the within-subject factor. Specifically:

A. Age (IV1) was comprised of two levels: 18–54 years of age and 55 years of age and above. This grouping was based on initial ANOVA analysis which identified sets of homogeneous groups, namely (i) 18–34 and 35–54, and (ii) 55–64 and 65 and above, which were then condensed into two groups (18–54 and 55 and above).

B. ‘Shades of truth’ (IV2), henceforth referred to as (mis)information type, was comprised of three levels: full falsity, partial falsity, and full truth. Full falsity refers to a message containing completely inaccurate information relating to a remedy using garlic to cure COVID-19. Partial falsity refers to a message containing a mixture of accurate and inaccurate information. Full truth contained completely accurate information (i.e., an absence of misinformation) regarding the question of whether garlic can be used to cure COVID-19.

C. Exposure (IV3) was comprised of two levels: (i) Exposure 1: initial exposure to a randomly-assigned (mis)information type (corresponding to one of the three levels of IV2 as described above); and (ii) Exposure 2: further exposure to corrective information from the WHO. Exposure was used to assess participants’ responses to different ‘shades of truth’ of COVID-19 (mis)information and whether corrective information received later on might correct or sustain their initial responses to the (mis)information to which they were exposed earlier. The stimuli for both exposures were designed to look like WhatsApp forwards (see examples in Appendix 1).
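
To make the structure of the 2 × 3 × 2 mixed design concrete, the following minimal sketch enumerates the between-subject cells and the resulting measurement occasions. The level labels are taken from the description above; the variable and function names are illustrative only and are not part of the study materials.

```python
from itertools import product

# Factor levels as named in the design description.
AGE_GROUPS = ["18-54", "55+"]                                   # IV1, between-subject
INFO_TYPES = ["full falsity", "partial falsity", "full truth"]  # IV2, between-subject
EXPOSURES = ["exposure 1", "exposure 2"]                        # IV3, within-subject


def between_subject_cells():
    """Each participant belongs to exactly one age x (mis)information-type cell."""
    return list(product(AGE_GROUPS, INFO_TYPES))


def measurement_schedule():
    """Every between-subject cell is measured at both exposures."""
    return [
        (age, info, exposure)
        for (age, info) in between_subject_cells()
        for exposure in EXPOSURES
    ]


if __name__ == "__main__":
    for cell in between_subject_cells():
        print(cell)
    print(len(between_subject_cells()), "between-subject cells,",
          len(measurement_schedule()), "cell-by-exposure measurements")
```

Because exposure is a within-subject factor, the number of participant groups is set by the two between-subject factors only; each group then contributes two sets of outcome measurements.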

Stimuli for exposure 1: (mis)information type

We created the stimuli by extracting specific elements from two kinds of existing WhatsApp messages already in circulation in the UK and Brazil: the first contained COVID-19 misinformation falsely attributed to UNICEF (ABC, 2020) and the second claimed the remedial powers of garlic (iNews, 2020; Poynter, 2020), representing two misinformation features embedded in all three stimuli in our study: (1) false attribution to a health authority (i.e., the WHO) and (2) garlic as a claimed COVID-19 cure. All three stimuli thus contained: (1) constant element: claimed source (#WHO), (2) manipulated levels according to our operational definition of (mis)information type with three varying ‘shades of truth’ (full falsity, partial falsity, full truth), and (3) constant element: a final sentence requesting the recipient to share the message.

The stimulus for exposure 2: corrective information

A stimulus with corrective information was designed for participants’ second exposure in the study. We used an infographic from the WHO’s EPI-WIN myth busters website that addressed the misinformation about garlic as a cure for COVID-19. The infographic included the WHO logo to accredit the information it contained.

Procedure

Participant recruitment

Participants in both the UK and Brazil were recruited through Qualtrics’ panel of survey respondents, who accessed the survey through a link. A quota sampling technique was used to obtain an even distribution across the three stimuli presented at Exposure 1 and across age groups. Data collection commenced on May 26, 2020, and concluded on June 4 and June 9 in the UK and Brazil, respectively. To be eligible, participants had to be a minimum of 18 years of age and users of WhatsApp. We obtained informed consent from all participants prior to the start of the online survey, which was approved by the faculty ethics committee at Northumbria University.

Experimental design

To start with, all participants were asked a series of questions capturing their knowledge of COVID-19. As seen in Fig. 1, participants were then randomly assigned one of three messages based on the three ‘shades of truth’ of COVID-19 related (mis)information (full falsity, partial falsity, and full truth), presented in the form of a WhatsApp forward set in the wireframe of a smartphone. The nature of the message was not revealed until participation had ended, when it was disclosed in a briefing statement. The initial exposure lasted a minimum of 90 s (Brazil: M = 114.79, SD = 51.46; UK: M = 109.10, SD = 45.10). Subsequently, participants were asked to respond to questions related to their belief in the assigned message, their perceived credibility of the information, and their intention-to-share the information. All participants were then presented one corrective message (same across three different initial-exposure groups) from the WHO for a minimum of 90 s (Brazil: M = 114.16, SD = 60.93; UK: M = 113.24, SD = 58.69), after which they again responded to the same set of questions listed above. Brazilian participants took between 5 and 40 min to complete the study, whilst the UK participants took between 4 and 21 min. This study design is represented in Fig. 1.

Experiments were based on a 2 (age) × 3 (‘shades of truth’) × 2 (exposure) mixed experimental design. Age (IV1) was comprised of two levels: 18–54 years of age and 55 years of age and above. ‘Misinformation Type’ (IV2) was comprised of three levels: full falsity, partial falsity, and full truth. Exposure (IV3) was comprised of two levels: (i) Exposure 1: initial exposure to a randomly-assigned (mis)information type (corresponding to one of the three levels of IV2 as described above); and (ii) Exposure 2: further exposure to corrective information from the WHO.

Measures

Our study first assessed participants’ pre-exposure knowledge of COVID-19. Then, after Exposures 1 and 2 we measured a set of outcome variables to gauge how participants’ responses (i.e., their beliefs in the misinformation about garlic as a cure for COVID-19, perceived credibility of the message, and their intention-to-share the message) might differ as a function of the three IVs, individually or jointly.

COVID-19 knowledge

We measured participants’ knowledge based on their identification of five facts about COVID-19 from the WHO website as true or false: (1) “The most common symptoms of Coronavirus (COVID-19) are fever, tiredness, and dry cough”, (2) “The time between catching the Coronavirus (COVID-19) and beginning to have symptoms of the disease ranges from 1 to 14 days”, (3) “Coronavirus (COVID-19) is mainly transmitted through contact with respiratory droplets than through the air”, (4) “Antibiotics are not effective in preventing or treating Coronavirus (COVID-19)”, and (5) “Wearing multiple masks is not effective against Coronavirus (COVID-19)”. A knowledge index was created for participants from each country.
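
The text does not specify how the knowledge index was scored. One plausible reading, consistent with the reported means lying between 0 and 5, is a count of correctly classified statements. The sketch below illustrates that reading only: the scoring rule, the answer key (all five statements treated as true because they are described as facts from the WHO website), and all names are assumptions rather than the authors' documented procedure.

```python
# Assumption: every statement is keyed as "true" because the text describes
# all five as facts taken from the WHO website.
ASSUMED_ANSWER_KEY = [True, True, True, True, True]


def knowledge_index(responses, answer_key=ASSUMED_ANSWER_KEY):
    """Count how many true/false responses match the answer key (0 to 5)."""
    if len(responses) != len(answer_key):
        raise ValueError("one response is required per statement")
    return sum(1 for given, correct in zip(responses, answer_key) if given == correct)


if __name__ == "__main__":
    # Hypothetical respondent who misclassifies the third statement.
    print(knowledge_index([True, True, False, True, True]))
```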

Misinformation belief

Focusing on the widely-circulated misinformation about garlic as a COVID-19 cure, we assessed participants’ belief in this particular misinformation (after exposure 1) and corrective information (after exposure 2) by asking their perceived accuracy of the statement “Garlic can cure me of the coronavirus (COVID-19)”, using a 5-point Likert-type scale (1 = completely inaccurate, 5 = completely accurate) (Carey et al., 2020). UK participants were asked to rate the perceived accuracy of the misinformation message following exposure to misinformation (M = 2.16, SD = 1.48) and corrective information (M = 2.21, SD = 1.49). Brazilian participants also reported perceived accuracy following misinformation (M = 1.96, SD = 1.43) and corrective information (M = 1.91, SD = 1.35) using the same items.

Message credibility

Message credibility was assessed after Exposures 1 and 2 by using three items (Appelman and Sundar, 2016)—“Accurate”, “Believable”, and “Authentic”—on a 5-point Likert-type scale (1 = very poorly, 5 = very well). After Exposure 1, participants were asked to evaluate the (mis)information message they just read (UK: α = 0.95, M = 2.53, SD = 1.44; Brazil: α = 0.95, M = 1.95, SD = 1.25), and then again after Exposure 2 about the corrective information message (UK: α = 0.96, M = 3.27, SD = 1.45; Brazil: α = 0.97, M = 2.85, SD = 1.53).
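
The α values reported for the credibility scale are Cronbach's alpha, a measure of internal consistency. As a reminder of how it is computed, the sketch below applies the standard formula, α = k/(k − 1) × (1 − Σ item variances / variance of summed scores), to three items. The ratings are invented for illustration and are not study data; the function name is hypothetical.

```python
from statistics import variance


def cronbach_alpha(item_scores):
    """Cronbach's alpha for a list of items, each a list with one rating per respondent."""
    k = len(item_scores)
    if k < 2:
        raise ValueError("at least two items are required")
    n = len(item_scores[0])
    if any(len(item) != n for item in item_scores):
        raise ValueError("every item needs one rating per respondent")
    # Summed scale score for each respondent.
    totals = [sum(item[i] for item in item_scores) for i in range(n)]
    item_variance_sum = sum(variance(item) for item in item_scores)
    return (k / (k - 1)) * (1 - item_variance_sum / variance(totals))


if __name__ == "__main__":
    # Hypothetical 1-5 ratings from five respondents (not study data).
    accurate = [4, 2, 5, 1, 3]
    believable = [5, 2, 4, 1, 3]
    authentic = [4, 3, 5, 2, 3]
    print(round(cronbach_alpha([accurate, believable, authentic]), 3))
```

Values close to 1, such as those reported above, indicate that the three items move together closely across respondents.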

Intention-to-share

A six-item measure of intention-to-share information was applied to ask how likely participants were to share what they saw at Exposures 1 and 2 with “friends”, “immediate family”, “extended family”, “colleagues”, “strangers”, and “Nobody” (reverse-coded), using a 5-point Likert-type scale, ranging from 1 = “highly unlikely” to 5 = “highly likely”. Participants were asked to indicate their likelihood to share the WhatsApp forward containing (mis)information at Exposure 1 (UK: α = 0.88, M = 2.39, SD = 1.28; Brazil: α = 0.88, M = 1.93, SD = 1.10) and the one containing corrective information at Exposure 2 (UK: α = 0.88, M = 2.85, SD = 1.26; Brazil: α = 0.92, M = 2.71, SD = 1.36).
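
Reverse coding on a 1 to 5 scale maps a rating x to 6 − x, so that a high score on the “Nobody” item contributes a low intention-to-share. The sketch below shows this step and then averages the six items. Averaging is an assumption suggested by the reported means falling on the 1 to 5 scale; the text does not state how the composite was formed, and the names and ratings are illustrative.

```python
SCALE_MIN, SCALE_MAX = 1, 5


def reverse_code(score):
    """Flip a rating on the 1-5 scale (1 <-> 5, 2 <-> 4, 3 stays 3)."""
    return SCALE_MIN + SCALE_MAX - score


def intention_to_share(ratings, reversed_items=("nobody",)):
    """Mean of the item ratings after reverse coding the listed items.

    Assumption: the composite is an unweighted mean of the six items.
    """
    adjusted = [
        reverse_code(score) if item in reversed_items else score
        for item, score in ratings.items()
    ]
    return sum(adjusted) / len(adjusted)


if __name__ == "__main__":
    # Hypothetical respondent (not study data).
    example = {
        "friends": 4,
        "immediate family": 5,
        "extended family": 3,
        "colleagues": 2,
        "strangers": 1,
        "nobody": 2,
    }
    print(round(intention_to_share(example), 2))
```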

Data analyses

Descriptive statistics and t-tests were used to generate the participant profile and knowledge scores, respectively. Given the mixed experimental design, effects of the IVs on the outcome measures were analyzed using general linear models (GLM) with repeated measures for misinformation belief, message credibility, and intention-to-share. We first assessed the main effects of age, (mis)information type (shades of truth), and exposure to examine how each of them individually affected the outcomes and then investigated two-way or three-way interaction effects.
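
The repeated-measures GLM itself is not reproduced here. As a descriptive aid for reading the interaction effects, note that in a design with one two-level between-subject factor and one two-level within-subject factor, the interaction corresponds to a difference between groups in their mean change from Exposure 1 to Exposure 2. The sketch below computes that contrast for invented ratings; it is not the authors' analysis, it omits (mis)information type, and the data and names are hypothetical.

```python
from statistics import mean


def mean_change(pairs):
    """Average within-person change, given (exposure_1, exposure_2) rating pairs."""
    return mean(after - before for before, after in pairs)


def age_by_exposure_contrast(younger, older):
    """Difference in mean change between the older and the younger group.

    A value far from zero is the descriptive signature of an
    age x exposure interaction; it is not a significance test.
    """
    return mean_change(older) - mean_change(younger)


if __name__ == "__main__":
    # Hypothetical belief ratings on a 1-5 scale (not study data).
    younger = [(3, 2), (2, 2), (4, 3)]
    older = [(1, 2), (2, 2), (1, 1)]
    print(mean_change(younger), mean_change(older))
    print(age_by_exposure_contrast(younger, older))
```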

Findings

Our survey involved 725 participants in the UK and 729 in Brazil (total N = 1454). Both the UK and Brazil samples were majority male (~66% and 59%, respectively). Nearly 59% of participants in the UK and 55% in Brazil were educated at the undergraduate level or higher. A detailed demographic breakdown is available in Fig. 2.

Participants’ knowledge of COVID-19 was relatively high in the UK (M = 4.15, SD = 0.93) and medium in Brazil (M = 3.25, SD = 0.67). Older adults were significantly more knowledgeable than younger adults in both the UK [M = 4.43, SD = 0.74 vs. M = 3.85, SD = 1.01, p < 0.05] and Brazil [M = 3.29, SD = 0.63 vs. M = 3.19, SD = 0.70, p < 0.05].

Our analysis yielded findings on the effects of age, (mis)information type, and exposure on misinformation belief (RQs 1.1, 2.1, 3.1), perceived credibility (RQs 1.2, 2.2, 3.2), and intention-to-share (RQs 1.3, 2.3, 3.3) (Table 1). In the following section, for each outcome variable, we present the detected main effects of the three IVs and then significant interaction effects, if any, for the UK and then Brazil. For each outcome, we begin with a narrative description of findings, follow with a graphical representation of mean scores, and end with a tabulated summary of main and interaction effects. A full list of means and standard deviations (SDs) by sub-group and for the study population is available in a tabulated format in Appendix 2.

Misinformation belief in the UK

Main effects

Means and effect sizes for misinformation belief are presented in Fig. 3 and Table 1 below. Age had a significant effect on participants’ misinformation belief (RQ 1.1). Younger adults (18–54 age group) believed the focal misinformation of this study (i.e., garlic can cure COVID-19) to be significantly more accurate than older adults (55+ age group). (Mis)information type and the exposure did not have significant effects on participants’ belief in the focal misinformation (RQs 2.1 and 3.1, respectively).

Interaction effects

We detected a significant interaction between age and exposure to corrective information. Misinformation belief increased among older adults following exposure to corrective information compared to the first exposure to misinformation.

Misinformation belief in Brazil

Main effects

Age had a significant effect on misinformation belief (RQ 1.1), with younger adults believing the misinformation message to be more accurate than older adults. (Mis)information type had a significant effect on misinformation belief (RQ 2.1). Surprisingly, misinformation beliefs were stronger in the full-truth group compared to the full-falsity group. Exposure did not have a significant effect on misinformation belief (RQ 3.1).

Interaction effects

We found two significant interaction effects. The first was between age and exposure to corrective information. Misinformation belief among older adults was reduced after exposure to corrective information, although this reduction was not significant at the Bonferroni-corrected alpha level (0.025), despite a trend towards significance (p = 0.033). The second significant interaction was between (mis)information type and exposure to corrective information. Surprisingly, the misinformation belief of participants in the full-truth group increased following exposure to corrective information as compared to after the initial exposure.
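
A Bonferroni correction divides the family-wise alpha by the number of comparisons. The corrected threshold of 0.025 reported above is consistent with a family-wise alpha of 0.05 split across two comparisons; those two inputs are an inference from the reported threshold, not values stated in the text. The sketch below shows why p = 0.033 falls short of the corrected threshold while passing an uncorrected 0.05 criterion.

```python
def bonferroni_alpha(family_alpha, n_comparisons):
    """Per-comparison significance threshold under a Bonferroni correction."""
    if n_comparisons < 1:
        raise ValueError("n_comparisons must be at least 1")
    return family_alpha / n_comparisons


def is_significant(p_value, family_alpha=0.05, n_comparisons=2):
    """Compare a p-value with the Bonferroni-corrected threshold.

    Assumption: family_alpha = 0.05 and two comparisons, which reproduces
    the 0.025 threshold reported in the text.
    """
    return p_value < bonferroni_alpha(family_alpha, n_comparisons)


if __name__ == "__main__":
    print(bonferroni_alpha(0.05, 2))
    print(is_significant(0.033))                   # corrected threshold
    print(is_significant(0.033, n_comparisons=1))  # uncorrected threshold
```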

Perceived credibility in the UK

Main effects

Means and effect sizes for perceived credibility are presented in Fig. 4 and Table 2 below. Age had a significant main effect on participants’ ratings of message credibility (RQ 1.2), with the younger adults perceiving received WhatsApp messages as more credible than older adults. (Mis)information type had a significant effect on overall message credibility (RQ 2.2). Specifically, participants in the full-truth group rated the messages they were exposed to as significantly more credible than those in the full-falsity and partial-falsity groups. Exposure to corrective information also had a significant effect on message credibility (RQ 3.2), with the corrective information rated as significantly more credible than the original exposure to (mis)information.

Interaction effects

We found two significant interaction effects. First, there was a significant two-way interaction effect between age and exposure to corrective information on message credibility. While both younger and older adults reported the corrective information to be more credible than the (mis)information exposure, the effect for younger adults was smaller than that of older adults. Second, (mis)information type was found to interact with exposure to corrective information. The corrective information was perceived to be significantly more credible than all three (mis)information exposures.

Perceived credibility in Brazil

Main effects

Age had a significant main effect on overall message credibility (RQ 1.2), with younger adults perceiving the messages they were exposed to as more credible than older adults. (Mis)information type also had a significant effect on overall message credibility. Specifically, participants in the full-falsity group rated the stimuli they were exposed to as significantly less credible than both the partial-falsity and full truth groups. Exposure to corrective information had a significant effect on message credibility ratings (RQ 3.2), as the corrective information stimulus was rated as being significantly more credible than the (mis)information stimuli to which participants were initially randomly assigned.

Interaction effects

Three significant interaction effects were detected. The first significant interaction effect was found between age and exposure to corrective information on ratings of message credibility. The message in the corrective information stimulus was rated as significantly more credible than the message of the (mis)information stimuli during the initial message exposure for both age groups. We also found a significant interaction effect between (mis)information type and exposure to corrective information. The corrective information stimulus was perceived to be significantly more credible than all three (mis)information stimuli. Age and (mis)information type were also found to significantly interact on scores of message credibility. Younger adults in the full falsity and partial falsity groups rated the stimuli that they were exposed to as more credible than older adults in those groups.

Intention-to-share in the UK

Main effects

Means and effect sizes for intention-to-share are presented in Fig. 5 and Table 3 below. Overall, younger adults were significantly more likely to share the WhatsApp messages they were exposed to than older adults (RQ 1.3). (Mis)information type had a significant main effect on intention-to-share (RQ 2.3). Specifically, participants in the full-truth group were significantly more likely than those in the full-falsity group to share the messages to which they had been exposed. Exposure to corrective information was also found to have a significant main effect on intention-to-share the corrective information message (RQ 3.3). Participants were significantly more likely to share the corrective information than the (mis)information message they were randomly assigned in their initial exposure.

Interaction effects

We found three significant interaction effects. First, we found a significant two-way interaction between age and exposure to corrective information on intention-to-share the forwarded message. While both younger and older adults reported that they would be significantly more likely to share the corrective message than the (mis)information stimulus they were randomly assigned to read earlier, the effect among younger adults was smaller than for older adults. Lastly, (mis)information type and exposure to corrective information were found to exert a significant interaction effect. While participants exposed to all three (mis)information types reported that they were significantly more likely to share the corrective information compared to the (mis)information, the effect was greatest among the partial falsity and the full-truth groups.

Intention-to-share in Brazil

Main effects

Age had a significant main effect on intention-to-share (RQ 1.3), with younger adults reporting a significantly greater likelihood than older adults of sharing the messages viewed in the study. (Mis)information type was found to have a significant main effect on intention-to-share (RQ 2.3). Specifically, participants in the full truth condition were significantly more likely to share the message stimuli they had been exposed to compared to the full-falsity group. There was also a significantly higher likelihood of sharing corrective information as opposed to (mis)information (RQ 3.3).

Interaction effects

A significant interaction effect was found between age and exposure to corrective information. Both younger and older adults were significantly more likely to share corrective information compared to (mis)information, with the effect being more pronounced in the younger age group. (Mis)information type also interacted significantly with exposure to corrective information. Consistent with the findings for age, participants across all three (mis)information groups reported that they were significantly more likely to share corrective information compared to all (mis)information types. Lastly, we found a significant interaction effect between age and (mis)information type on intention-to-share. There was a significant effect of age on both the full-falsity and partial-falsity misinformation conditions with younger adults being more likely to share the message stimuli than older adults.

Discussion

The UK and Brazil, which have reported the highest numbers of COVID-19 fatalities in Europe and South America, are home to 27.6 million and 99 million WhatsApp users, respectively (Johns Hopkins University and Medicine, 2020; Iqbal, 2020). These users, straddling the age spectrum, have been at risk of exposure to online COVID-19 misinformation circulating in various forms. Building on a growing body of research in misinformation studies, we sought to examine the extent to which age, exposure to different types of misinformation, and corrective information affect WhatsApp users’ misinformation belief, perceived credibility, and intention-to-share COVID-19 information. While our study did not set out to statistically compare the two countries, given their obvious cultural and socio-political differences, contrasting them provides a useful rubric for discussion.

Overall, we found (1) significant effects of age on all three outcomes across both countries, (2) significant effects of misinformation type on all outcomes with the exception of misinformation belief in the UK, and (3) significant effects of corrective information on all outcomes in both countries except misinformation belief in the UK. We now discuss the theoretical, methodological, and practical implications of these findings for public health research and communication.

Age

While levels of belief in misinformation were low-to-medium in all groups, younger adults were significantly more likely than older adults to believe misinformation and also more likely to share it. This was found in both the Brazil and UK studies, suggesting that older people are potentially more adept than younger adults at differentiating between information of varying accuracy. These findings contrast with prior research suggesting that older adults are more likely than younger adults to share political misinformation (Guess et al., 2019), and with previous studies in psychology and advertising (Wylie et al., 2014; Jacoby and Rhodes, 2006). However, they reinforce findings from a 50-state survey by Baum and colleagues (2020), which found that younger adults were more likely than older adults to believe misleading claims about COVID-19.

Our findings also support previous studies indicating that older adults are able to deploy their more extensive general knowledge to critically evaluate new information (Umanath and Marsh, 2014). The higher levels of COVID-19 knowledge observed among the older adults in our study are consistent with this theory. Another explanation lies in the nature of the misinformation we tested, a claim about the curative powers of garlic for COVID-19, which is less likely to generate an emotional response than, for example, a conspiracy theory. Rather, its appraisal is more likely driven by new knowledge that older adults would continue to acquire (Brashier and Schacter, 2020), especially during an evolving health crisis like COVID-19.

Despite these encouraging signs, we observed that belief in fully or partially false messages increased after older participants were exposed to corrective information, particularly in the UK group. This counterintuitive phenomenon, the backfire effect, has been seen in other contexts, such as vaccination messaging (Pluviano et al., 2019), and is thought to arise because corrections enhance participants’ familiarity with the claim. This paradoxical outcome was also reported in a study of older adults (Skurnik et al., 2005), in which repeated correction entrenched and magnified beliefs in false claims. In the absence of relevant prior knowledge to aid with judging misinformation, repeated exposure to misinformation may also give rise to an ‘illusion of truth’ effect amongst older people (Brashier et al., 2017). It is possible that these unintended effects of repeated misinformation featured in our experimental design, since participants were exposed to some form of information about garlic and COVID-19 at least four times (once during each of the two exposures, and twice while reading the question stems that followed these stimuli). This effect was not observed among younger adults in the UK or in any age group in the full-truth condition.

(Mis)information type

Individuals’ responses to health (mis)information differ as a function of varied “shades of truth”. In both the UK and Brazil, participants rated the full-truth messages as more credible, and reported greater intention to share them, than the full-falsity or partial-falsity messages. These findings demonstrate participants’ critical message evaluation abilities and are consistent with recent research from Argentina which revealed healthy skepticism among social media users, who can differentiate between facts and misinformation (Wagner, 2020). In addition, we learned that users correctly identified accurate information irrespective of its stated source, which may have implications for so-called imposter messages. As such, message credibility could be driven more by an evaluation of the message than by the source of information. This may have been bolstered by systematic efforts by the Brazilian and UK governments to combat misinformation. For example, the Brazilian Health Ministry launched a fact-checking initiative by creating a WhatsApp number for community members to contribute health messages for verification, the results of which are published on its website (Ministério da Saúde, 2020). Of the 84 messages about COVID-19 that have been fact-checked so far, only five have been found to be true. The fact-checked messages include misinformation about a range of food items (such as hot water, garlic water, and even bat soup) that could supposedly prevent the disease. In addition, the Oswaldo Cruz Foundation unit in Brasilia (Fiocruz Brasilia) launched a social media campaign in March 2020 to raise awareness of the importance of verifying information and to discourage users from sharing fake news. In the UK, the government launched a Rapid Response Unit within the Counter Disinformation Cell to track and tackle online misinformation (GOV.UK, 2020). Extensive media coverage of COVID-19 misinformation could also have created greater public awareness of the problem. A study conducted among news audiences in the UK found that they easily identified misinformation of the kind tested in this study but were more concerned about misinformation or unclear and confusing communications about COVID-19 guidelines coming from the UK government (Soo et al., 2020).

Our study also echoes earlier misinformation research (Southwell et al., 2018; Freelon and Wells, 2020; Hameleers, 2020) suggesting that partially true messages can be particularly dangerous in entrenching misinformation belief and may trigger further sharing based on individuals’ false impression that such information is correct. These characteristics of (mis)information need to be further elucidated to create a taxonomy of misinformation circulating both online and offline.

Corrective information

Across the UK and Brazil, we found that corrective information from the WHO reduced misinformation beliefs in most sub-groups, and significantly increased perceived credibility and intention-to-share across all sub-groups. These findings suggest that corrective information from public health authorities is critical in (1) sustaining previously held accurate information, (2) debunking misinformation, and (3) intervening against harmful misinformation-sharing. They also correspond with evidence-based fact-checking practice that appeals to credibility (Van der Meer and Jin, 2020; Walter and Murphy, 2018). Furthermore, by using an infographic as the vehicle of truth-telling, our study opens the door to a new line of research exploring the role of modality in health communication (Lee et al., 2018) as it relates to risk communication during health crises like COVID-19 (Jin et al., 2017). Insights from such research may help to optimize the effectiveness of corrective information in debunking health misinformation by going beyond text-based warnings or factual elaboration (Van der Meer and Jin, 2020).

Theoretical and practical implications for health and risk communication

Our findings have several theoretical implications for behavioral scientists and for health and risk communication researchers in public health. To begin with, we emphasize the need to consider the role of individual factors like age both in vulnerability to misinformation and in people’s responses to corrective interventions. Health misinformation, especially misinformation about infectious disease outbreaks (Vijaykumar et al., 2015; Vijaykumar et al., 2019), brings unique mitigation challenges, especially in a social-mediated and highly competitive media environment (Van der Meer and Jin, 2020). Based on our findings, previous empirical evidence of age-related misinformation vulnerability (e.g., that older people are more vulnerable to misinformation) does not seem to apply to the outbreak misinformation context.

Because the viral spread of misinformation is driven by sharing behaviors, we suggest that future research identify the specific cognitive factors (e.g., misinformation beliefs and perceived credibility) that are activated by misinformation exposure and tend to influence sharing behaviors. As corrective information has been shown, by and large, to reduce misinformation beliefs and to enhance perceived credibility and intention-to-share, we also suggest greater attention to testing the efficacy of communication interventions by health agencies using scientifically robust study designs. Such efforts will help create an evidence base of strategies to combat misinformation, which could prove valuable in future infodemics.

For health and risk communication practitioners, our study highlights the need to actively engage with younger adults given their increased vulnerability to misinformation belief, lower levels of knowledge, and increasing susceptibility to COVID-19. Equally, communications reaching older adults will need to be calibrated in terms of frequency and content in order to avoid reinforcing beliefs in misinformation that they receive on social media platforms like WhatsApp. Our findings also clearly speak to the need for public health organizations to incorporate audience segmentation activities as part of their infodemic management strategies. Ongoing initiatives (e.g., EPI-WIN) have made a promising start by creating targeted communication materials for different audience groups. They might need to bolster these efforts by identifying cross-cutting demographic segments through audience segmentation so that risk communication can be better tailored to suit specific informational and belief sensitivities (Adams et al., 2017). Public libraries are emerging as potential stakeholders who could contribute to efforts to disseminate accurate information and combat misinformation, as they could provide a range of population groups with tools to verify the quality of information (Coward et al., 2018; Young et al., 2020; Ale, 2020). As such, risk communication practitioners working in the context of disease outbreaks need to understand audience demographics and tailor communication efforts in a way that addresses informational and belief sensitivities. Furthermore, our findings bolster calls from previous research (Nyhan et al., 2014) to highlight the importance of pre-testing messages. This important step will help health agencies evaluate the effectiveness of these messages before making them available for public consumption, and help avoid unintended backfire effects of myth-busting activities. Otherwise, the investment of monetary and human resources may yield sub-optimal returns.

Our study has several limitations. First, our experiment involved a single misinformation claim about the curative powers of garlic for COVID-19, even though social media is replete with numerous claims and other types of misinformation. A broader study involving different types of misinformation (conspiracy theories, hoaxes, rumors, etc.), each arriving in different ‘shades of truth’, would be a valuable addition to the misinformation literature. Using digital platforms to deliver randomized content would increase the feasibility of testing more varied types of misinformation. Second, our misinformation and corrective information stimuli were designed in the form of a WhatsApp forward that participants viewed on a computer or a smartphone. While this reduced ecological validity, our decision to proceed with this mode of stimuli presentation was driven by an ethical consideration aimed at minimizing any prospect of the stimuli being circulated in the real world. We partially addressed this limitation by using misinformation content that was already in circulation in the UK and Brazil. Lastly, in order to pre-empt practical challenges in survey recruitment, we oversampled the 55+ age group, as a result of which our study sample is not representative of the overall population in the UK and Brazil.

In conclusion, our findings provide several practical takeaways for public health policymakers, first responders, and communicators. Effective health communication, especially in times of infectious disease outbreaks, requires not only disseminating accurate information but also preparing to pre-bunk and/or de-bunk misinformation, drawing on evidence-based research on misinformation characteristics and anticipated misinformation vulnerability among at-risk populations. A robust understanding of how different population groups in disease-stricken regions and countries consume and react to information with varied ‘shades of truth’ can significantly enhance the effectiveness of public health policy, mobilize individuals to take the correct protective action recommended by authorities, and even activate user-generated fact-checking systems to provide additional social media shields against the spread of health misinformation. The counterintuitive findings regarding age (i.e., older adults, at least as evidenced among the Brazilian and UK participants in our study, seem to be more vigilant in discerning the actual ‘shade of truth’ and taking precautionary actions) provide new opportunities for enabling older adults to take an active role in misinformation preparedness despite the challenge of digital literacy (Stanford History Education Group, 2019), which takes different forms among older and younger adults.

We recommend that misinformation researchers work with organizations like the WHO to evaluate misinformation correction campaigns using larger and more representative samples and to develop more effective corrective message strategies. Doing so will contribute to a better understanding of demographic mediators and enable purposive cross-country comparisons that clarify the role of culture and context in viral misinformation effects and identify the best approaches to combating misinformation via persuasive corrective communication efforts.

Data availability

The data that support the findings of this study are available from the corresponding author upon reasonable request.

References

ABC (2020) UNICEF warns of scam coronavirus messages circulating through social media. https://abcnews.go.com/Health/unicef-warns-scam-coronavirus-messages-circulating-social-media/story?id=69486041. Accessed 12 Jan 2021

Adams RM, Karlin B, Eisenman DP et al. (2017) Who participates in the Great ShakeOut? Why audience segmentation is the future of disaster preparedness campaigns. Int J Environ Res Public Health 14(11):1407–1419. https://doi.org/10.3390/ijerph14111407

Ale V (2020) A library-based model for explaining information exchange on Coronavirus disease in Nigeria. Ianna J Interdiscipl Stud 2(1):1–11

Appelman A, Sundar SS (2016) Measuring message credibility: scale construction and validation with news stories. Journal Mass Commun Quart 93(1):59–79

AS-COA (2020) The Coronavirus in Latin America. https://www.as-coa.org/articles/coronavirus-latin-america. Accessed 12 Jan 2021

Bangkok Post (2019) Anti-fake news centre pins blame on elderly. https://www.bangkokpost.com/thailand/general/1806984/anti-fake-news-centre-pins-blame-on-elderly. Accessed 12 Jan 2021

Baum MA, Ognyanova K, Chwe H (2020) The State of the Nation: A 50-State Covid-19 Survey Report #14: Misinformation and Vaccine Acceptance. http://www.kateto.net/covid19/COVID19%20CONSORTIUM%20REPORT%2014%20MISINFO%20SEP%202020.pdf. Accessed 12 Jan 2021

BBC (2020) Coronavirus: What attacks on Asians reveal about American identity. https://www.bbc.co.uk/news/world-us-canada-52714804. Accessed 12 Jan 2021

Blank H, Launay C (2014) How to protect eyewitness memory against the misinformation effect: a meta-analysis of post-warning studies. J Appl Res Memory Cogn 3(2):77–88. https://doi.org/10.1016/j.jarmac.2014.03.005

Brashier NM, Schacter DL (2020) Aging in an era of fake news. Curr Direct Psychol Sci 1–8. https://doi.org/10.1177/0963721420915872

Brashier NM, Umanath S, Cabeza RM et al. (2017) Competing cues: older adults rely on knowledge in the face of fluency. Psychol Aging 32(4):331–337

Brennen JS, Simon F, Howard PN et al. (2020) Types, sources, and claims of Covid-19 misinformation. Reuters Institute 7:1–13

Carey JM, Chi V, Flynn DJ et al. (2020) The effects of corrective information about disease epidemics and outbreaks: evidence from Zika and yellow fever in Brazil. Sci Adv 6(5):eaaw7449. https://doi.org/10.1126/sciadv.aaw7449

Coward C, McClay C, Garrido M (2018) Public libraries as platforms for civic engagement. Technology & Social Change Group, University of Washington Information School, Seattle, https://digital.lib.washington.edu/researchworks/bitstream/handle/1773/41877/CivLib.pdf. Accessed 12 Jan 2021

Dehon H, Brédart S (2004) False memories: young and older adults think of semantic associates at the same rate, but young adults are more successful at source monitoring. Psychol Aging 19(1):191–197

DiFonzo N, Bordia P (2007) Rumor, gossip and urban legends. Diogenes 54(1):19–35. https://doi.org/10.1177/0392192107073433

ECDC (2020). COVID-19 situation update for the EU/EEA and the UK, as of 11 October 2020. https://www.ecdc.europa.eu/en/cases-2019-ncov-eueea. Accessed 12 Jan 2021

Freelon D, Wells C (2020) Disinformation as political communication. Polit Commun 37(2):145–156. https://doi.org/10.1080/10584609.2020.1723755

Gallotti R, Valle F, Castaldo N et al. (2020) Assessing the risks of ‘infodemics’ in response to COVID-19 epidemics. Nat Human Behav 4:1285–1293. https://doi.org/10.1038/s41562-020-00994-6

Glazer W (2013) Scientific journalism: the dangers of misinformation: journalists can mislead when they interpret medical data instead of just reporting it. Curr Psychiatry 12(6):33–35

GOV.UK (2020). Government cracks down on spread of false coronavirus information online. https://www.gov.uk/government/news/government-cracks-down-on-spread-of-false-coronavirus-information-online. Accessed 12 Jan 2021

Grinberg N, Joseph K, Friedland L et al. (2019) Fake news on Twitter during the 2016 US presidential election. Science 363(6425):374–378

Guess A, Nagler J, Tucker J (2019) Less than you think: prevalence and predictors of fake news dissemination on Facebook. Sci Adv 5(1):eaau4586. https://doi.org/10.1126/sciadv.aau4586

Hameleers M (2020). Separating truth from lies: Comparing the effects of news media literacy interventions and fact-checkers in response to political misinformation in the US and Netherlands. Inform Commun Soc 1–17. https://doi.org/10.1080/1369118X.2020.1764603

iNews (2020). Coronavirus WhatsApp scam: message claiming to have Covid-19 cure is hoax–here’s what to do if you get it. https://inews.co.uk/news/health/coronavirus-whatsapp-scam-message-covid-19-cure-hoax-explained-398599. Accessed 12 Jan 2021

Iqbal M (2020) WhatsApp Revenue and Usage Statistics (2020). https://www.businessofapps.com/data/whatsapp-statistics/#:~:text=Looking%20at%20WhatsApp%20users%20by,and%20the%20US%20in%20third. Accessed 12 Jan 2021

Islam MS, Sarkar T, Khan SH et al. (2020) COVID-19–related infodemic and its impact on public health: a global social media analysis. Am J Trop Med Hygiene 103(4):1621–1629

Jacoby LL, Rhodes MG (2006) False remembering in the aged. Curr Direct Psychol Sci 15(2):49–53

Jin Y, Austin L, Guidry J et al. (2017) Picture this and take that: Strategic crisis visuals and visual social media (VSM) in crisis communication. In: Duhé S (ed.) New Media Public Relat. 3rd edn. Peter Lang Publishing Group, New York

Johns Hopkins University and Medicine (2020) Mortality Analyses. Mortality in the most affected countries. https://coronavirus.jhu.edu/data/mortality. Accessed 12 Jan 2021

Jolley D, Paterson JL (2020) Pylons ablaze: Examining the role of 5G COVID‐19 conspiracy beliefs and support for violence. Br J Soc Psychol 59(3):628–640. https://doi.org/10.1111/bjso.12394

Kouzy R, Abi Jaoude J, Kraitem A et al. (2020) Coronavirus goes viral: quantifying the COVID-19 misinformation epidemic on Twitter. Cureus 12(3):e7255. https://doi.org/10.7759/cureus.7255

Lee YI, Jin Y, Nowak G (2018) Motivating influenza vaccination among young adults: the effects of public service advertising message framing and text versus image support. Soc Market Quart 24(2):89–103. https://doi.org/10.1177/1524500418771283

Lewandowsky S, Ecker UK, Seifert CM et al. (2012) Misinformation and its correction: continued influence and successful debiasing. Psychol Sci Public Interest 13(3):106–131. https://doi.org/10.1177/1529100612451018

Loftus EF, Hoffman HG (1989) Misinformation and memory: the creation of new memories. J Experiment Psychol 118(1):100–104

Lu X, Jin Y (2020) Information vetting as a key component in social-mediated crisis communication: an exploratory study to examine the initial conceptualization. Public Relat Rev 46(2):101891. https://doi.org/10.1016/j.pubrev.2020.101891

Mian A, Khan S (2020) Coronavirus: the spread of misinformation. BMC Med 18(1):1–2

Ministério da Saúde (2020) Acha que está com sintomas da COVID-19? https://antigo.saude.gov.br/fakenews/. Accessed 12 Jan 2021

Mitchell KJ, Johnson MK (2009) Source monitoring 15 years later: What have we learned from fMRI about the neural mechanisms of source memory? Psychol Bullet 135:638–677. https://doi.org/10.1037/a0015849

Motta M, Stecula D, Farhart C (2020) How right-leaning media coverage of COVID-19 facilitated the spread of misinformation in the early stages of the pandemic in the US. Canadian J Polit Sci 53:1–8

Nan X, Wang Y, Thier K (2020) Health Misinformation. In: Thompson T, Harrington N (eds) The Routledge Handbook of Health Communication. Routledge

Nightingale SJ, Wade KA, Watson DG (2017) Can people identify original and manipulated photos of real-world scenes? Cogn Res 2 (30). https://doi.org/10.1186/s41235-017-0067-2

Nyhan B, Reifler J, Richey S et al. (2014) Effective messages in vaccine promotion: a randomized trial. Pediatrics 133(4):e835–e842. https://doi.org/10.1542/peds.2013-236

Pennycook G, McPhetres J, Zhang Y et al. (2020) Fighting COVID-19 misinformation on social media: experimental evidence for a scalable accuracy-nudge intervention. Psychol Sci 31(7):770–780

Pluviano S, Watt C, Ragazzini G et al. (2019) Parents’ beliefs in misinformation about vaccines are strengthened by pro-vaccine campaigns. Cogn Proces 20(3):325–331

Poynter (2020) FALSE: COVID-19 can be cured with an infusion of garlic, lemon and jambu (an Amazonian herb, used as a spice in Northern Brazil). https://www.poynter.org/?ifcn_misinformation=covid-19-can-be-cured-with-an-infusion-of-garlic-lemon-and-jambu-an-amazonian-herb-used-as-a-spice-in-northern-brazil. Accessed 12 Jan 2021

Quartz Africa (2019) WhatsApp is the medium of choice for older Nigerians spreading fake news. https://qz.com/africa/1688521/whatsapp-increases-the-spread-of-fake-news-among-older-nigerians/. Accessed 12 Jan 2021

Ricard J, Medeiros J (2020) Using misinformation as a political weapon: COVID-19 and Bolsonaro in Brazil. The Harvard Kennedy School Misinform Rev 1(2). https://doi.org/10.37016/mr-2020-013

Skurnik I, Yoon C, Park DC et al. (2005) How warnings about false claims become recommendations. J Consumer Res 31(4):713–724

Soo N, Morani M, Cushion S (2020) Research suggests UK public can spot fake news about COVID-19, but don’t realise the UK’s death toll is far higher than in many other countries. https://blogs.lse.ac.uk/covid19/2020/04/28/research-suggests-uk-public-can-spot-fake-news-about-covid-19-but-dont-realise-the-uks-death-toll-is-far-higher-than-in-many-other-countries/. Accessed 12 Jan 2021

Southwell BG, Thorson EA, Sheble L (2018) Misinformation and mass audiences. Vol. 307. University of Texas Press

Stanford History Education Group (2019) Evaluating Information: The Cornerstone of Civic Online Reasoning. https://stacks.stanford.edu/file/druid:fv751yt5934/SHEG%20Evaluating%20Information%20Online.pdf. Accessed 12 Jan 2021

Statista (2020a) Share of WhatsApp users in the United Kingdom (UK) 2018, by age group. https://www.statista.com/statistics/611208/whatsapp-users-in-the-united-kingdom-uk-by-age-group/. Accessed 12 Jan 2021

Statista (2020b) Usage of selected mobile messaging apps among smartphone owners in Brazil in August 2018 and 2019. https://www.statista.com/statistics/798131/brazil-use-mobile-messaging-apps/. Accessed 12 Jan 2021

Tan AS, Lee CJ, Chae J (2015) Exposure to health (mis) information: Lagged effects on young adults' health behaviors and potential pathways. J Commun 65(4):674–698. https://doi.org/10.1111/jcom.12163

Umanath S, Marsh EJ (2014) Understanding how prior knowledge influences memory in older adults. Perspect Psychol Sci 9(4):408–426

Van Bavel JJ, Baicker K, Boggio PS et al. (2020) Using social and behavioural science to support COVID-19 pandemic response. Nat Human Behav 4:460–471. https://doi.org/10.1038/s41562-020-0884-z

Van der Meer TGLA, Jin Y (2020) Seeking formula for misinformation treatment in public health crises: The effects of corrective information type and source. Health Commun 35(5):560–575. https://doi.org/10.1080/10410236.2019.1573295

Vijaykumar S (2020) Covid-19: older adults and the risks of misinformation. https://blogs.bmj.com/bmj/2020/03/13/covid-19-older-adults-and-the-risks-of-misinformation/. Accessed 12 Jan 2021

Vijaykumar S, Jin Y, Nowak G (2015) Social media and the virality of risk: the risk amplification through media spread (RAMS) model. J Homeland Security Emergency Manag 12(3):653–677

Vijaykumar S, Jin Y, Pagliari C (2019). Outbreak communication challenges when misinformation spreads on social media. RECIIS (Online):39–47. https://doi.org/10.29397/reciis.v13i1.1623

Vraga EK, Bode L (2018) I do not believe you: how providing a source corrects health misperceptions across social media platforms. Inform Commun Soc 21(10):1337–1353. https://doi.org/10.1080/1369118X.2017.1313883

Vraga EK, Bode L, Tully M (2020) Creating news literacy messages to enhance expert corrections of misinformation on Twitter. Commun Res https://doi.org/10.1177/0093650219898094

Wagner MC (2020). When it comes to scientific information, WhatsApp users in Argentina are not fools. https://firstdraftnews.org/latest/when-it-comes-to-scientific-information-whatsapp-users-in-argentina-are-not-fools/. Accessed 12 Jan 2021

Walter N, Murphy ST (2018) How to unring the bell: a meta-analytic approach to correction of misinformation. Commun Monogr 85(3):423–441. https://doi.org/10.1080/03637751.2018.1467564

Walter N, Tukachinsky R (2020) A meta-analytic examination of the continued influence of misinformation in the face of correction: How powerful is it, why does it happen, and how to stop it? Commun Res 47(2):155–177

Wardle C, Derakhshan H (2017) Information disorder: toward an interdisciplinary framework for research and policy making. Council of Europe Report. https://tverezo.info/wp-content/uploads/2017/11/PREMS-162317-GBR-2018-Report-desinformation-A4-BAT.pdf. Accessed 12 Jan 2021

Walcott R (2020) WhatsApp aunties and the spread of fake news. https://wellcomecollection.org/articles/Xv3T1xQAAADN3N3r. Accessed 12 Jan 2021

WHO (2020) EPI-WIN: WHO Information Network for Epidemics. https://www.who.int/teams/risk-communication. Accessed 12 Jan 2021

Wylie LE, Patihis L, McCuller LL (2014) Misinformation effect in older versus younger adults. In: Toglia MP, Ross DF et al. (eds) The elderly eyewitness in court, pp. 38–66

Young JC, Boyd B, Yefimova K, Wedlake S (2020) The role of libraries in misinformation programming: a research agenda. J Librarianship Inform Sci https://doi.org/10.1177/0961000620966650

Acknowledgements

We would like to thank Dr. Aarti Sahasranaman for reviewing and providing feedback on the initial and final proofs of this manuscript, and all the survey respondents for participating in our survey experiments. This study was supported by the WhatsApp Social Science and Misinformation Research Award.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Vijaykumar, S., Jin, Y., Rogerson, D. et al. How shades of truth and age affect responses to COVID-19 (Mis)information: randomized survey experiment among WhatsApp users in UK and Brazil. Humanit Soc Sci Commun 8, 88 (2021). https://doi.org/10.1057/s41599-021-00752-7

DOI: https://doi.org/10.1057/s41599-021-00752-7