Abstract

The NIH is the major federal biomedical research funding agency within the United States, and NIH funding has become a priority in institutional decisions on faculty recruitment, salary, promotion, and tenure. The implicit assumption is that well-funded investigators will maintain their funding success; however, our analysis of NIH awardees from 2000 to 2015 suggests that regardless of how well funded an investigator is, their research portfolio exhibits “regression to the mean,” matching the typical NIH funding profile within just 10–15 years. Thus, outperformance in past funding is not a strong predictor of future outperformance in funding success. This study indicates that faculty performance should not be solely judged upon grant success but should include other institutional mission priorities such as provision of clinical care, education, and service to community/profession.

Introduction

The National Institutes of Health (NIH) funds most of the federal biomedical research within the United States. The investigator-initiated R01 (research project) grant is the original and continues to be the primary NIH grant mechanism. Over recent decades, particularly at medical schools, the R01 has been treated as a measure of faculty value. Success in R01 funding is the predominant factor in faculty recruitment, salary, promotion, tenure, resources, and space allocation. Schools of Medicine (SOMs)/Academic Medical Centers (AMCs) compete to increase their rankings by increasing their number of R01 awards. Increasingly, SOM/AMC strategic plans value the recruitment of Principal Investigators (PIs) who hold multiple R01s. This strategy provides institutions an immediate boost in funding totals and experienced researchers. However, these well-funded hires typically require higher salaries, large recruitment packages, and disproportionate space allocations. Institutions are thus predicting a long-term return on investment (ROI), on the assumption that these recruits will continue to enjoy funding success. Thus far, there have been few data analyzing whether this assumption holds, and our analysis indicates that it may not. We show that well-funded PIs “revert to the mean” of the overall NIH R01 funding profile in just 10–15 years. Thus, balanced recruitment of, investment in, and development of young and existing PIs are likely to be a crucial factor in long-term success of an institution.

Methods

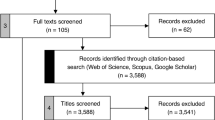

NIH grant data were exported directly from the public repository of funding information maintained by the NIH, the NIH Online Reporter Tool (https://projectreporter.nih.gov/reporter.cfm), and the Palgrave Dataverse site (https://doi.org/10.7910/DVN/GECEVA). All R01s from the category of “NIH US Schools of Medicine” for each fiscal year listed in the results were analyzed (average R01s excluding supplements/year = 14,676; range 12,870–16,128). For analysis of funding of non-R01 grants, U01s, P01s, P30s, and P50s were exported from the database for each fiscal year analyzed (average = 1,696/year; range 1,489–1,870). To determine the number of R01s per investigator, the NIH Contact Person ID was used to identify discrete NIH investigators, and the number of R01s awarded to that ID, excluding supplements, was then counted. In the case of multi-PI grants, sole credit for the R01 was given to the contact PI.
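The counting step described above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' code: the record layout, the field order, and the `PI-00x` identifiers are hypothetical and do not reflect the actual NIH Reporter export schema.

```python
from collections import Counter

# Hypothetical award records: (contact_pi_id, activity_code, is_supplement).
# Field names and values are illustrative, not the NIH Reporter export schema.
awards = [
    ("PI-001", "R01", False),
    ("PI-001", "R01", False),
    ("PI-001", "R01", True),   # supplement: not counted as a separate R01
    ("PI-002", "R01", False),
    ("PI-003", "R01", False),
    ("PI-003", "P01", False),  # non-R01 mechanism: ignored in this count
]

def r01s_per_investigator(records):
    """Count non-supplement R01s credited to each contact PI ID."""
    counts = Counter()
    for pi_id, activity, is_supplement in records:
        if activity == "R01" and not is_supplement:
            counts[pi_id] += 1
    return counts

def portfolio_profile(counts):
    """Share of R01-funded investigators holding 1, 2, 3, ... R01s."""
    sizes = Counter(counts.values())
    total = sum(sizes.values())
    return {n: sizes[n] / total for n in sorted(sizes)}

per_pi = r01s_per_investigator(awards)
profile = portfolio_profile(per_pi)
```

Because each grant is credited only to the contact PI, a multi-PI award appears once in `awards` and contributes to a single investigator's count.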

In the study of funding amount, the total cost of the grant (direct + indirect costs + any administrative or other supplements) was summed for each award. To normalize for inflation over the 15-year period analyzed, award values were normalized to 2015 dollars using the NIH Biomedical Research and Development Price Index’s published inflation rates. For visualization purposes in the graphs, the total cost of the grants was converted into R01 equivalents, with one R01 equivalent defined as the approximate total cost of a $250,000 modular R01 plus a simulated 50% indirect cost rate. Thus, one R01 equivalent was funding up to $375,000 and two R01 equivalents was from $375,001 to $750,000, etc.
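The binning into R01 equivalents can be illustrated as follows. This is a sketch under stated assumptions: the function names are hypothetical, and the inflation factor is passed in as a plain number because the actual Biomedical Research and Development Price Index values are not reproduced here.

```python
import math

# $250,000 modular direct costs plus a simulated 50% indirect rate, per the text.
R01_EQUIVALENT = 375_000

def to_2015_dollars(amount, cumulative_inflation_factor):
    """Scale an award to 2015 dollars.

    cumulative_inflation_factor is a hypothetical input: the ratio of the
    2015 price level to the award-year price level. Real values would come
    from the NIH Biomedical Research and Development Price Index.
    """
    return amount * cumulative_inflation_factor

def r01_equivalents(total_cost_2015):
    """Bin a total cost: up to $375,000 -> 1, $375,001 to $750,000 -> 2, etc."""
    if total_cost_2015 <= 0:
        return 0
    return math.ceil(total_cost_2015 / R01_EQUIVALENT)
```

Rounding up with `math.ceil` reproduces the bin edges given in the text, so an award of exactly $375,000 counts as one R01 equivalent and $375,001 counts as two.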

NIH average funding profiles were generated directly from the NIH Reporter data for each fiscal year. To allow for error calculation, we bracketed each probe year (2000, 2005, 2010, and 2015) with an additional year on each side (i.e., the funding profile shown for 2000 is an average of the profiles from 1999, 2000, and 2001). To estimate the number of investigators with no R01, we generated three estimations to calculate a prediction range for unfunded investigators. The first used the NIH published data on the number of discrete R01-equivalent applications for each year, from which we then subtracted the number of applications for non-R01 grants and funded R01s to estimate the number of unfunded R01 applications. An obvious source of potential error with this method of estimation is the necessary assumption that most investigators are not submitting multiple R01s in the same fiscal year. This estimate was converted to a percentage by summing the number of discrete applications with the total number of R01-funded investigators, to approximate a total number of investigators desiring NIH R01 funding. For the second approach, we directly counted how many of the individuals that had funding during our four probes lost that funding during the 15-year analysis time frame (total discrete investigators in our cohort was 24,866). Third, we compared our estimates with a recent NIH publication of unfunded investigators (Lauer, 2018). All three estimation methods yielded similar values for the percentage of unfunded investigators.
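The bracketing of each probe year can be sketched as a three-year window average. The numbers below are invented for illustration, and the use of the sample standard deviation as the error measure is an assumption; the text does not state which error statistic was used.

```python
from statistics import mean, stdev

# Hypothetical share of funded investigators holding a single R01 in each
# bracketing year; these values are illustrative, not taken from the study.
single_r01_share = {1999: 0.74, 2000: 0.73, 2001: 0.75}

def bracketed_estimate(values_by_year, probe_year):
    """Average a probe year with the years before and after it.

    Returns (mean, sample standard deviation) over the three-year window.
    """
    window = [values_by_year[y] for y in (probe_year - 1, probe_year, probe_year + 1)]
    return mean(window), stdev(window)

avg, spread = bracketed_estimate(single_r01_share, 2000)
```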

To graph the mean outperformance of cohorts, the proportion of investigators above the NIH average funding profile at each interval of 5, 10, and 15 years was generated (i.e., the area between the curves). In the special case of the 1 R01 cohort, where every investigator was below the mean, the negative area between the R01 cohort and the NIH mean was used.

Results

The NIH average investigator award profile

To test whether past award success is predictive of future success, it is important to compare “success” against the average. To do this, we extracted each R01 awarded to a US SOM/AMC in 2000, 2005, 2010, and 2015 from the web-based NIH Reporter tool (https://projectreporter.nih.gov/reporter.cfm). We counted how many individual investigators, identified by NIH Contact Person ID, held 1, 2, 3, or more R01s in any given year. This PI grant portfolio profile was remarkably stable over the 15-year time frame: 73.8 ± 2.2% (range 71–78%) of R01-funded investigators held a single R01; 20.8 ± 1.7% (range 18–23%) held two R01s; 4.3 ± 0.5% (range 3–5%) held three R01s; and only 0.9 ± 0.2% of investigators held four or more R01s in any given year (Fig. 1a). This yields a mean of 1.33 ± 0.03 R01s per R01-funded investigator and shows that “highly funded” R01 awardees are exceptionally rare.

Fig. 1. a Proportion of investigators in each year holding the number of R01s. b Average number of R01s per investigator with investigator cohorts of one, two, three, four, or five or more R01s in 2000 (blue) and the average number of R01s held by each group in 2015 (red). The dotted line represents the average number of R01s held NIH-wide in 2015 (0.52 R01s/investigator). c Proportion of investigators holding R01-equivalent funding level in each year, adjusted for inflation represented in 2015 dollars. d Average number of R01 equivalents per investigator with investigator cohorts of one, two, three, four, or five or more R01 equivalents in 2000 (blue) and the average number of R01 equivalents held by each group in 2015 (red). The dotted line represents the average number of R01 equivalents held NIH-wide in 2015 (0.89).

However, this is an incomplete picture of typical PI funding status, as there are also unfunded investigators who are seeking R01 funding. To estimate the number of investigators without an R01, we used published NIH data on the number of unique R01 applicants in each year and subtracted from that the number of PIs with funding in that year. In addition, we directly measured unfunded rates in our cohort analysis groups (see below). Our unfunded investigator observations for SOM/AMCs ranged from 53% to 66% of investigators between FY2000 and 2015. This closely approximates recently published data from the NIH, which estimated an unfunded investigator rate for all NIH investigators in FY2017 of 66% (Lauer, 2018). Thus, if we incorporate the unfunded investigators into the average awards, the corrected average number of R01s across all investigators drops to 0.56 ± 0.06 per investigator.
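One way to read this correction is as a weighted mean in which unfunded investigators contribute zero R01s. The sketch below is an interpretation of the arithmetic, not necessarily the authors' exact calculation; the function name is hypothetical, and the inputs are the 1.33 mean and the 53–66% unfunded range quoted in the text.

```python
def mean_across_all(mean_among_funded, unfunded_fraction):
    """Mean R01s per investigator once unfunded investigators (0 R01s) are included."""
    if not 0.0 <= unfunded_fraction <= 1.0:
        raise ValueError("unfunded_fraction must be between 0 and 1")
    return mean_among_funded * (1.0 - unfunded_fraction)

low = mean_across_all(1.33, 0.66)   # highest unfunded rate reported in the text
high = mean_across_all(1.33, 0.53)  # lowest unfunded rate reported in the text
```

Under this reading, the two ends of the unfunded range give roughly 0.45 and 0.63 R01s per investigator, which brackets the reported corrected average of 0.56.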

Do highly funded investigators consistently outperform the NIH average?

SOM/AMC recruitment and promotion practices generally assume that current multi-R01 awardees will maintain multiple grants in the future, providing ROI. We tested this assumption over 5-, 10-, and 15-year time intervals. Many consumer industries examine ROI over a shorter time frame than 15 years, with industry standards ranging from 3 to 5 years. However, 5 years is an exceedingly short time frame to consider ROI in academic fields. At our own institution, the average length of employment for our current faculty is 12.2 years (12.2 ± 9.5 years, n = 1,209 faculty). Therefore, we evaluated grant success over the similar time frame of 10–15 years.

For the period from FY2000 to 2015, we sorted all investigators funded in FY2000 into cohorts consisting of single R01 holders, two R01 holders, etc. Each cohort was then assessed for the average number of grants they held 15 years later. Investigators that started with one R01 held an average of only 0.29 R01 awards after 15 years (Fig. 1b), substantially lower than the 0.52 R01/investigator average observed in FY2015. Those with two, three, or four R01 awards in 2000 did hold slightly above the NIH average after 15 years but still averaged less than one R01 per investigator (Fig. 1b, 0.53 for two R01 holders, 0.68 for three, and 0.69 for four). Even the most highly funded cohort, which started with 5 or more R01s in FY2000, held an average of just 1.1 R01s after 15 years. Thus, regardless of the number of R01s held initially, all cohorts significantly decreased their funding over time. Even the most highly funded cohort (5+ R01s) outperformed the average by only 0.58 R01s per PI after 15 years.
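The cohort follow-up described above can be sketched as a grouping by start-year R01 count. This is an illustrative implementation with invented data; the function name, the investigator labels, and the choice to treat anyone missing from the end-year data as holding zero R01s are assumptions.

```python
from collections import defaultdict

def cohort_means(start_counts, end_counts, top_bin=5):
    """Group investigators by start-year R01 count (capped at top_bin) and
    return the mean R01 count each group holds in the end year.

    Investigators absent from end_counts are treated as holding 0 R01s.
    """
    totals = defaultdict(int)
    members = defaultdict(int)
    for pi_id, n_start in start_counts.items():
        if n_start < 1:
            continue  # cohorts are defined only for investigators funded at the start
        cohort = min(n_start, top_bin)
        totals[cohort] += end_counts.get(pi_id, 0)
        members[cohort] += 1
    return {c: totals[c] / members[c] for c in sorted(members)}

# Hypothetical per-investigator R01 counts, for illustration only.
counts_2000 = {"A": 1, "B": 1, "C": 2, "D": 2, "E": 6}
counts_2015 = {"B": 1, "C": 1, "E": 2}

means = cohort_means(counts_2000, counts_2015)
```

In this toy data set the key `5` stands for the 5+ cohort, and each cohort's mean falls below its starting level because investigators who lost all funding pull the average down.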

For a more detailed analysis of these funding reduction trends, we plotted the percentage of investigators in each cohort that ended with 0, 1, 2, 3, 4, or 5+ R01s after 5, 10, or 15-year intervals (Fig. 2a–c). This showed that the striking reduction in average funding is partly due to a high proportion of investigators who no longer had R01 funding at 5, 10, or 15-year time spans. After 15 years, ~60% of investigators initially with 2+ R01s had no funding and 79% in the single R01 cohort had no R01 funding (Fig. 2a). These loss rates were also present in the 10-year intervals, with ~40% of 2+ R01 and 70% of single R01 holders without R01 funding (Fig. 2b). Even in only 5-year intervals, 20–30% of 2+ R01 holders and a disheartening 55% of single R01 holders had no R01 funding (Fig. 2c). Thus, although single R01 holders have a higher risk of losing all funding, the majority of investigators in all cohorts had complete R01 funding loss within 15 years, regardless of their initial funding level.
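The per-cohort breakdown of end states can be sketched in the same style. Again, this is a hypothetical illustration with invented counts rather than the authors' code; the 5+ bin is represented by the key `5`.

```python
from collections import Counter, defaultdict

def end_state_distribution(start_counts, end_counts, top_bin=5):
    """For each start-year cohort, return the fraction of investigators
    ending with 0, 1, ..., top_bin (meaning top_bin or more) R01s."""
    tallies = defaultdict(Counter)
    for pi_id, n_start in start_counts.items():
        if n_start < 1:
            continue  # only investigators funded at the start form cohorts
        cohort = min(n_start, top_bin)
        end_bin = min(end_counts.get(pi_id, 0), top_bin)
        tallies[cohort][end_bin] += 1
    result = {}
    for cohort, tally in tallies.items():
        size = sum(tally.values())
        result[cohort] = {k: tally[k] / size for k in range(top_bin + 1)}
    return result

# Hypothetical per-investigator R01 counts, for illustration only.
counts_2000 = {"A": 1, "B": 1, "C": 1, "D": 1, "E": 2, "F": 2}
counts_2015 = {"A": 1, "E": 2}

dist = end_state_distribution(counts_2000, counts_2015)
```

Here `dist[1][0]` is the share of the single R01 cohort with no R01 at the end of the interval, the quantity reported as 79% for the 15-year window in the text.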

Fig. 2. a Investigator funding longevity over a 15-year time frame (from 2000 to 2015). Graph shows investigator cohorts that held one (red line), two (green), three (purple), four (orange), or five or more (blue) R01 awards in 2000 with their funding profile 15 years later. The black line shows NIH average profile in 2015. b Investigator funding longevity over 10-year time frames (average of two time frames 2000–2010 and 2005–2015). c Investigator funding longevity over 5-year time frames (average of three time frames, 2000–2005, 2005–2010, 2010–2015). d Investigator funding longevity over a 15-year time frame (from 2000 to 2015) in inflation-adjusted total award dollars in R01 equivalents ($375,000 bins). Graph shows investigator cohorts that held one (red line), three (green), five (purple), seven (orange) or nine or more (blue) R01 equivalents in 2000 with their funding profile 15 years later, adjusted for inflation represented in 2015 dollars. The black line shows NIH average profile in 2015. e Investigator funding longevity over 10-year time frames in inflation-adjusted total award dollars in R01 equivalents. f Investigator funding longevity over 5-year time frames in inflation-adjusted total award dollars in R01 equivalents.

This analysis demonstrated that the proportion of investigators starting with 1, 2, 3, 4, or 5+ R01s in 2000 exhibited a striking regression towards the NIH mean funding over 10–15 years (Fig. 2a–c). The single R01 cohort matches the mean distribution within 5 years and as a cohort underperforms over 10–15 years (Fig. 3). The two to four R01 cohort outperforms the mean over the 5-year (37% of investigators are above the mean) and 10-year time frame (21% of investigators are above the mean), as might be expected, given that most R01s are funded for 5 years. However, even within the well-funded two to four R01 cohorts, only 5–10% of investigators have funding levels greater than the NIH mean after 15 years. Thus, the long-term performance of the two to four R01 holders is only slightly in excess of the NIH mean. The highest funded cohort, those with 5+ R01s, which is 0.2% of NIH investigators, also regresses toward the mean, with only 22% of PIs having funding greater than the NIH mean after 15 years (Fig. 3). Although these very highly funded investigators outperform over longer time periods than other cohorts, it may be very difficult to predict which individuals would outperform the NIH average over time.

Overall, we conclude that the R01 profiles of all investigator cohorts, regardless of the number of R01s held at the start, are nearly indistinguishable from the NIH investigator mean profile over 10–15 years. Thus, over any reasonable PI employment half-life, R01 funding success regresses to the mean.

Do multiple R01 PIs convert their R01s to programmatic grants?

One possible explanation for the dramatic drop-off in R01 funding over relatively short time periods is that well-funded investigators convert their multiple R01s into single, larger program or center grants. To assess this possibility, we analyzed NIH dollars per investigator, summing award amounts of R01s, program project grants (P01s), center grants (P30s and P50s), and cooperative agreement research projects (U01s). We excluded small research (R03s), exploratory/developmental research award mechanisms (R21s), and training grants (T32s). For comparison purposes, we converted the total cost (direct, indirect, and supplements) into R01 equivalents: one R01 equivalent was defined as a $375,000 module (i.e., a nominal $250,000 direct costs plus 50% Facilities & Administration rate). Thus, one R01 equivalent in 2015 is funding up to $375,000, two R01 equivalents is funding between $375,001 and $750,000, etc. As biomedical research inflation has increased the size of R01 budgets over time, we normalized for inflation using the NIH Biomedical Research and Development Price Index published rates and normalized R01 equivalents to 2015 dollars. The results showed that the proportion of investigators with one, two, three, etc., R01-equivalent funding changed little over the last 15 years (Fig. 1c). The largest number of investigators each year had two R01 equivalents. This is probably due to increased numbers of non-modular grants over time, as purchasing power of a modular grant decreased.

If highly funded investigator cohorts were converting multiple R01s into fewer large grants, they should consistently outperform the NIH total dollars per investigator mean over time. However, comparing the profile of total dollars in R01 equivalents closely recapitulated our analysis of absolute R01 numbers. Investigator cohorts with one and two R01 equivalents have lower average funding rates than the NIH mean after 15 years, and 3+ R01-equivalent cohorts only slightly exceed the NIH mean (Fig. 1d). Only a tiny subset of highly funded investigators outperforms the total dollar mean over the long term (Fig. 2d–f). Thus, we conclude that conversion of multiple R01s to fewer, but larger, program grants is not a significant factor, as the total dollars awarded also regressed to the mean across all cohorts.

Caveats

There are several caveats to these analyses. We restricted our analysis to SOMs/AMCs, so other institutional types may have different over/underperformance ratios. We also did not separate investigators based on demographics, although a recent study suggests early stage and minority investigators may be at even higher risk than we have identified based on funding level (Nikaj et al. 2018). In addition, we cannot reliably estimate how many investigators retired during the analysis time frames. Given that our data track ~25,000 discrete PIs of R01s over this 15-year time frame, it would be nearly impossible to determine whether each PI had retired during this time. Retirement and/or departure from academic science is also unlikely to be completely independent from loss of NIH funding, as investigators that lose all of their NIH funding are anecdotally more likely to retire than investigators that currently have NIH grants. However, we addressed the retirement caveat directly by examining only the grant award profile of the subset of investigators that had at least one R01 at the start and at the end of our analysis windows. By definition, these are active investigators. We recognize that this analysis will overestimate award success, as the non-retired unfunded investigator has been excluded in this segmentation. In this analysis, we were also unable to include the 5+ R01 holders, because only four investigators that held five or more R01s in 2000 still had at least one R01 in 2015. Regardless of the starting number of R01s held (1, 2, 3, or 4+), all groups had funding close to the 1.33 R01s per investigator average of all funded NIH investigators 15 years later (Fig. 4a). As with the analysis of the total R01 awardees, these continuously funded awardees also exhibited regression toward the NIH-wide average profile (Fig. 4b, c).

The analyses on the single and multi-R01 holding cohorts of continuously funded investigators showed that the one R01 cohort was indistinguishable from the NIH average, and of those with two to four R01s in FY2000 only 16% of investigators (range 13–21%) outperformed the mean in FY2015 (Fig. 4). Thus, even when we restrict the analysis to cohorts of actively funded individuals, who by definition have not retired, their funding regresses towards the NIH mean over the same time frames. This indicates that retirement of well-funded investigators is not a driving factor explaining regression to the mean in NIH-wide well-funded investigators.

Fig. 4. a In an investigator cohort that held at least one R01 in both 2000 and 2015, the average number of R01s per investigator with investigator cohorts of one, two, three, or four R01s in 2000 (blue) and the average number of R01s held by each group in 2015 (red). The dotted line represents the average number of R01s held by R01-funded investigators NIH-wide in 2015 (1.33 R01s/investigator). b Investigator funding longevity after 15 years (2000–2015) in a cohort of investigators that had at least one R01 in both 2000 and 2015. Investigator cohorts that held one (red line), two (green), three (purple), or four (orange) R01 awards in 2000 and their funding profile 15 years later. Black line is the NIH average in 2015 of investigators that held at least one R01 in both 2000 and 2015. c In an investigator cohort that held at least one R01 in both 2000 and 2015, investigators beginning with 1, 2, 3, or ≥4 R01s in 2000 (blue) and the percent of R01s each cohort held in 2015 (red).

Another caveat is the treatment of multi-PI R01s (MPIs). MPI R01s were initiated by the NIH in 2006; therefore, in the early years of the data analyzed (2000 and 2005), there were no MPIs to consider. By 2010, 5% of all funded NIH R01s were MPIs, rising to 15% in 2015. For the present analysis, all grants, including MPIs, were credited solely to the contact PI. This conforms to Blue Ridge Institute for Medical Research ranking procedures and how the NIH tracks awards. Thus, we counted each grant only once in our analysis. We acknowledge that some investigators classified as “unfunded” may be MPIs on one or more awards. In addition, we are also unable to track foundation, National Science Foundation, and Department of Defense funding as these organizations do not provide the same award transparency as NIH through its NIH Reporter tool. However, these sources provide substantially fewer biomedical research award dollars than the NIH, and those sources are also not counted towards an institution’s Blue Ridge Rankings.

Discussion

Our data demonstrate that regression to the mean occurs with NIH grant success, as is characteristic of long-term performance in a wide variety of settings. One example is financial investing, where data over decades show that actively managed funds and individual fund managers do not outperform the risk-adjusted average (indexes) over long periods of time (Malkiel, 1973; Bogle, 1992; Carhart, 1997; Murstein, 2003). Indeed, this is distilled in the phrase the Securities and Exchange Commission (SEC) requires of all mutual fund prospectuses: “…past performance is no guarantee of future results…”. Despite this caution and data, the notion persists that previously high performing fund managers will continue to “beat” the market average! Similarly, our analysis of NIH funding data shows that any cohort of investigators (i.e., low or highly funded) will regress toward the typical NIH funding profile over a relatively short time frame (10–15 years). Thus, an institution is unlikely to “beat the market” over any significant period of time by recruiting faculty based solely on past grant success.

These regression to the mean findings are also pertinent to analyses showing that a small number of investigators receive a disproportionate share of NIH grant expenditures (Katz and Matter, 2017; Kuo, 2017). These data were congruent with anecdotal impressions that subsets of individuals receive preferential treatment from the NIH. It is true that a higher proportion of funding goes to a subset of investigators. However, if there were systemic bias towards the same group of investigators, we would predict that highly funded investigators would disproportionately sustain their funding over time. To the contrary, our analyses demonstrate that all cohorts of investigators regress toward the mean at very similar rates. As a result, we find no indication of significant systemic, long-term NIH award bias toward a group of highly funded investigators.

Blue chip recruits (e.g., 3+ R01 holders) are usually costlier in terms of salaries, seed packages, and space allocations. Our data show that current high-yield investigators are highly unlikely to continue to perform at the same high level. Only a tiny fraction of PIs will sustain such funding success over the short, medium, or long term. Very highly funded investigators are as likely to have decreased funding as less well-funded investigators are to have increased grant funding, which is the very definition of regression to the mean. Therefore, incurring institutional “costs” (salaries, seed commitments, and space allocations) that are disproportionate to projected long-term performance is statistically risky. From an ROI perspective, considerations other than past funding performance, including faculty service, clinical activities, mentorship of junior faculty, educational commitments, and public trust, should be part of institutional strategies for recruitment and for support of existing faculty. In conclusion, our data suggest that the SEC warning on financial funds also appears to apply to faculty grant awards: “Past performance is no guarantee of future returns.”

Data availability

NIH grant data were exported directly from the public repository of funding information maintained by the NIH, the NIH Reporter (https://projectreporter.nih.gov/reporter.cfm), and the Palgrave Dataverse site (https://doi.org/10.7910/DVN/GECEVA).

References

Bogle JC (1992) Selecting equity mutual funds. J Portf Manag 18:94–100

Carhart MM (1997) On persistence in mutual fund performance. J Financ 52:57–82

Katz Y, Matter U (2017) On the biomedical elite: inequality and stasis in scientific knowledge production. Digital access to scholarship at Harvard. Available via: https://cyber.harvard.edu/publications/2017/07/biomedicalelite. Accessed 7 Dec 2017

Kuo M (2017) Relatively few NIH grantees get lion’s share of agency’s funding. News from Science. Available via: https://www.sciencemag.org/news/2017/07/relatively-few-nih-grantees-get-lion-s-share-agency-s-funding. Accessed 17 Nov 2017

Lauer M (2018) How many researchers, revisited: a look at cumulative investigator funding rates. NIH Nexus (2018/03/07). Available via: https://nexus.od.nih.gov/all/2018/03/07/how-many-researchers-revisited-a-look-at-cumulative-investigator-funding-rates/. Accessed 2 Apr 2018

Malkiel BG (1973) A Random Walk Down Wall Street. W. W. Norton & Co., New York, NY

Murstein BI (2003) Regression to the mean: one of the most neglected but important concepts in the stock market. J Behav Financ 4:234–237

NIH Research Portfolio Online Reporting Tools (RePORT), National Institutes of Health. Available via: https://projectreporter.nih.gov/reporter.cfm

Nikaj S, Roychowdhury D, Lund PK, Matthews M, Pearson K (2018) Examining trends in the diversity of the U.S. National Institutes of Health participating and funded workforce. FASEB J. https://doi.org/10.1096/fj.201800639

Ranking Tables of National Institutes of Health (NIH) Award Data 2006–2017. Blue Ridge Institute for Medical Research. Available via: https://www.brimr.org/NIH_Awards/NIH_Awards.htm

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Prasad, J.M., Shipley, M.T., Rogers, T.B. et al. National Institutes of Health (NIH) grant awards: does past performance predict future success?. Palgrave Commun 6, 54 (2020). https://doi.org/10.1057/s41599-020-0432-5

DOI: https://doi.org/10.1057/s41599-020-0432-5