Abstract

In academia, faculty are bound by three pillars of scholarship: Teaching, Research and Service. Academic promotion and tenure depend on metrics of assessment for these three pillars. However, what is and is not acceptable as “service” is often nebulous and left to the discretion of internal committees. With evolving requirements by funders to demonstrate wider impacts of research, we were keen to understand the financial and non-financial incentives for academic faculty to engage in knowledge translation and research utilization. Between November 2017 and February 2018, 52 faculty from one School of Public Health (SPH) were interviewed. Data were analyzed using ATLAS.ti and framework analysis. The appeal of incentives varied according to personal values, previous experiences, relevance of research to decision-making, individual capacities, and comfort, ranging from instinctive support to reflexive resistance. Discussions around types of incentives elicited a plethora of ideas within four categories: (a) Monetary Support, (b) Professional Recognition, (c) Academic Promotion, and (d) Capacity Enhancement. However, concerns included adverse incentives, disadvantaging suboptimally-equipped faculty, risk of existing efforts going unnoticed, vaguely defined evaluation metrics, and the impacts on promotion given that engagement activities often occur outside of the traditional grant cycle. With a shift in funder requests to demonstrate greater social return on their research investments, as well as renewed global attention to research, science and evidence for decision making, SPHs such as this one are likely to be concerned about the implications of an enhanced “service” pillar on the other two pillars: teaching and research. The role of incentives in enhancing academic engagement with policy and practice is therefore neither simple nor universally ideal.
A tempered approach that considers the various professional aspirations of faculty, the capacities required, organizational culture of values around specific discovery sciences, funder conditions, as well as alignment with the institution’s mission is critical. Deliberations on incentives lead to a larger debate on how we shift the culture of academia beyond incentives for individuals who are engagement-inclined to institutions that are engagement-ready, without imposing on or penalizing faculty who are choice-disengaged.

Introduction

Boyer famously stated that “our outstanding universities and colleges remain, in my opinion, among the greatest sources of hope for intellectual and civic progress in this country. I’m convinced that for this hope to be fulfilled, the academy must become a more vigorous partner in the search for answers to our most pressing social, civic, economic, and moral problems” (Boyer, 1996b). With his call for the “scholarship of integration” in mind, this paper examines the role of incentives for the policy-inclined “service” pillar of academic scholarship. The topic emerged from the first phase of a larger study which aimed to understand the role that academic faculty at one leading School of Public Health play in engaging with, and potentially influencing, evidence-informed decision making (EIDM). In response to a social network analysis and survey administered in Phase I, where the role of incentives for policy engagement was raised as a priority, we embarked on interviews to better understand values, perceptions, suggestions and caveats of incentives for academic appointments and promotions (A&P). The results of our data analysis are contextualized within the role of higher education institutes (HEIs) in influencing EIDM and within the context of relevant literature elaborated in the background section of the paper. HEIs, policymakers at all levels of government, and others who seek to comprehend evidence-based public policy will find practical implications of the study and relevant applications for their work in the discussion.

Background

Barriers to knowledge translation and exchange

Studies investigating facilitators and barriers to research utilization by policymakers are rising rapidly in number, with Oliver et al.’s (2014) systematic review yielding 145 studies. However, empirical scholarship on factors positively or negatively affecting knowledge translation and exchange (KTE) or integrated knowledge translation (IKT) between researchers and decision-makers is scarcer (CHSRF, 1999; Jacobson et al., 2004; Lavis et al., 2003), with Jacobson et al. (2004) calling for “more investigation of the factors that promote or impede engagement in knowledge transfer”. Given that the environment and the conditions for successful knowledge exchange vary across organizational contexts, of particular interest were the factors affecting academic researchers in the endeavor. Lack of appropriate institutional incentives appeared amongst the most commonly cited barriers (Fraser, 2004; Jacobson et al., 2004; Jessani et al., 2016; Mitton et al., 2007), with 36% indicating it as a barrier in a study by McVay et al. (2016).

Funder response to incentives as a barrier

While the provision and understanding of incentives within academia are still nascent, funder models for supporting such activities and incentivizing researchers in IKT have begun to emerge in the last decade. A study comprising 26 research funders from High Income Countries (McLean et al., 2018) found that 20 (77%) of these funders acknowledge (I)KT as part of their core mission. While the manifestation of this priority through dedicated human resources and funding oftentimes varied as a result of each agency’s interpretation of IKT integration, the importance of IKT for funders indicates a desire to ensure research impact. For instance, the report on Increasing the Economic Impact of Research Councils (referred to as the Warry report) recommended that UK Research and Innovation (UKRI) (formerly the UK Research Councils) intensify their commitment to demonstration of social and economic impact, leverage their role to influence university (I)KT behavior, and expand researcher incentives for participation in (I)KT (Warry, 2006).

In response, the Research Excellence Framework (REF) in the UK states that research should demonstrate “benefits to the economy, society, public policy, culture or quality of life” (HEFCE, 2009). Consequently, UKRI included a section on Pathways to Impact in research proposals as a condition of funding (UKRI, 2018). Individual research councils developed Follow-on Funding Schemes (AHRC, 2018; NERC, 2017) to complement the initiatives, in recognition that research outcomes, intended as well as unanticipated, often appear several years after the completion of the grant.

The academic environment and implications for incentives

In academia, faculty are bound by three pillars of scholarship: teaching, research, and service. Academic promotion and tenure depend on metrics of assessment for these three pillars as determined by university Appointments and Promotion (A&P) committees. While the traditional incentive system provides space for IKT activities under the third pillar, what is and is not acceptable as “service” is often nebulous and left to the discretion of these committees.

The discussion on academic incentives has most often centered on knowledge production (Wangenge-Ouma et al., 2015; Wilsdon et al., 2015) rather than knowledge translation and exchange. Activities described under “faculty engagement” are generally recognized as part of knowledge translation and exchange and therefore embedded within the “service” pillar of academia. Scholars have explored this notion in terms of associated concepts of “knowledge exchange” (Johnson, 2020; Tang and Chau, 2020), “engaged scholarship” (Nkhoma, 2020; Renwick et al., 2020), as well as “service”. However, scholars note that methods to recognize, evaluate, and reward academic engagement need to be enhanced (Brownson et al., 2006; Denis and Lomas, 2003; Jacobson et al., 2004; Lomas, 2007; Longest Jr and Huber, 2010; McVay et al., 2016; Oliver et al., 2014; Stamatakis et al., 2013) but offer few ideas for what these could be.

Given that research is often bound by grant and funding cycles, it is perhaps not surprising that academic incentives to date have revolved around quantitative metrics, serving to adjudicate performance (as defined by the university) and outputs of research embedded in and affiliated with individual grants; for example, publishing in peer-reviewed journals, securing peer-reviewed grants, and presenting at conferences (Coburn, 1998; Denis and Lomas, 2003; Lavis et al., 2003; Wilsdon et al., 2015). None of these accurately recognizes the true impacts of academic research (Kothari et al., 2011; Pain et al., 2011; Wilsdon et al., 2015) or adequately satisfies the evidence and communication needs of potential research users (Fraser, 2004; Mok, 2013). However, when it comes to KT, several activities and outcomes may manifest pre-grant, such as relationship building, navigating the context of the decision-maker, understanding decision-maker preferences and values, and designing research that is policy relevant (Elliott and Popay, 2000; Kothari and Wathen, 2013; Ross et al., 2003; Sibbald et al., 2009), as well as several years post-grant, such as policy and practice influence, paradigmatic contributions, demand for more research, institutionalization of relationships, future collaborations, and a new communal identity (Kothari and Wathen, 2013; Oliver et al., 2014; Wilsdon et al., 2015).

Lack of structures or processes recognizing the importance of these precursors and successors to a research study may offer an explanation as to why there are limited formal incentives or rewards for them in the academic arena. Difficulty in developing appropriate indicators—particularly for bi-directional and transformative aspects of the IKT process—provides an additional challenge (Kothari et al., 2011; Oliver et al., 2014; Pain et al., 2011). Irrespective of the challenges, however, universities and Higher Education Institutes (HEIs) in general have been dedicating attention to the case for revisiting incentives. Within the context of some Schools of Public Health (SPHs), scholars indicate that it is amongst the top priorities for the institution (Brownson et al., 2006; Jessani et al., 2018; Longest Jr and Huber, 2010).

The context in the USA

In the USA, even though collaborations between SPHs and government public health agencies have been emphasized for the past 30 years (IOM, 1988) and followed with recommendations (O’Neil et al., 1993; Sorensen and Bialek, 1991), the impact of the three aforementioned reports has not been accompanied by optimization of incentives demonstrating impact beyond traditional academic outputs. There have also been fewer examples of successful collaborations between SPHs and public health agencies than expected (Gordon et al., 1999; HRSA, 2005; Schieve et al., 1997). We find this not only disheartening but also rather surprising given our own experiences within a SPH in the USA. If indeed collaborations can be improved, we were keen to understand the role that incentives (individual, institutional, direct and indirect) may play within this context.

The academic environment at the Johns Hopkins School of Public Health

The Johns Hopkins School of Public Health (JHSPH), founded in 1916, comprises approximately 700 full-time faculty across 10 departments and a student body of 2650 at the time of writing this paper (Johns Hopkins Bloomberg School of Public Health, 2018a). Based in Baltimore, USA, it has unique access to several state-government agencies and is in close proximity to the state capital of Maryland, as well as the nation’s capital, Washington, DC. JHSPH also enjoys a unique history of international engagement that is reflected in its faculty, as well as research foci. The majority of the faculty fall under one of two tracks: Professorial or Non-Professorial (Scientist). Positions for distinguished professionals, referred to as “Professors of the practice”, were introduced more recently in recognition of practical experience or expertise of public health practitioners and their value to academic scholarship. JHSPH also has an Office of Public Health Practice and Training which was established in 2008 to promote the application of research to practice at the School. The Office has been spearheading the role of “public health practice” in the School’s Appointments and Promotion (A&P) process.

Study aims

This paper explores one aspect of a larger study on engagement between academic faculty at JHSPH and government decision-makers (Jessani et al., 2018a, 2018b) within which the issue of incentives appeared as the primary concern. Given the extensive interest in the issue of academic incentives for KT within academia, we sought to add to the scholarship in this area with the following aims in mind:

- Investigate the financial and non-financial rewards and awards practices that are in place to incentivize academic faculty to engage in knowledge translation and research utilization at one SPH.

- Understand faculty perspectives and ideas around incentives and structures related to engagement.

- Provide recommendations for SPH leadership.

While this paper draws on research from one HEI, we believe that it is representative of the experiences across HEIs.

Methods

While guidance regarding criteria for A&P exists within organizational policies and documents at JHSPH, these focus primarily on metrics for teaching and research, and less so for service. For the service pillar, metrics around rewards and incentives for engagement activities are opaque and imprecise. The traditional academic incentive system is similar to that within other HEIs thereby leaving non-traditional activities subject to wider interpretation and adjudication. Furthermore, we found no standard reference to or definition of IKT or KTE across the SPH although there has been a groundswell of attention and focus on advocacy in recent years. The disconnect or gap between faculty activities and the promotion metrics was best evaluated through engaging with faculty reflection. For this reason, we embarked on a set of interviews with a subset of faculty who had participated in phase I.

Respondent selection and data collection

Our initial study population was a sample of 211 faculty from one School of Public Health (SPH) in the USA; Phase I consisted of a network mapping and analysis of academic faculty relationships with government decision-makers. This phase is described elsewhere (Jessani et al., 2018). Based on the results of Phase I, a subsample of faculty was chosen for Phase II using the following criteria: Highly engaged: faculty who had 5 or more contacts with decision-makers at any one government level and/or were in the top 10 percentile of those with the most connections across all four government levels (n = 49); Non-engaged: faculty with 0 or 1 contacts with decision-makers (n = 57).
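The selection rule above can be illustrated with a short sketch. It is not the study's actual procedure: the faculty identifiers and contact counts are hypothetical, the four government levels are represented only as four unnamed counts, and the way the "top 10 percentile" cut-off is computed (total contacts of the faculty member ranked at the top 10% boundary) is an assumption about how that criterion might be operationalized.

```python
from math import ceil

def classify_faculty(contacts, top_share=0.10, high_per_level=5, low_total=1):
    """Assign each faculty member to a Phase II selection group.

    contacts: dict mapping a (hypothetical) faculty id to a list of four
    contact counts, one per government level.
    """
    if not contacts:
        return {}
    totals = {fid: sum(levels) for fid, levels in contacts.items()}
    # Assumed cut-off: total contacts of the person at the top-10% rank boundary.
    ranked = sorted(totals.values(), reverse=True)
    k = max(1, ceil(top_share * len(ranked)))
    cutoff = ranked[k - 1]

    groups = {}
    for fid, levels in contacts.items():
        total = totals[fid]
        if total <= low_total:
            # 0 or 1 contacts with decision-makers
            groups[fid] = "non-engaged"
        elif max(levels) >= high_per_level or total >= cutoff:
            # 5+ contacts at any one level and/or among the most connected
            groups[fid] = "highly engaged"
        else:
            groups[fid] = "not selected"
    return groups

# Hypothetical example data, for illustration only.
example = {
    "F01": [6, 0, 0, 0],
    "F02": [1, 0, 0, 0],
    "F03": [2, 2, 2, 2],
    "F04": [0, 0, 0, 0],
    "F05": [1, 1, 1, 0],
}
groups = classify_faculty(example)
for fid in sorted(groups):
    print(fid, groups[fid])
```

In this sketch, faculty who meet neither criterion fall into a "not selected" group, which reflects that only the two extremes of the Phase I network were invited to Phase II.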

The semi-structured interview (SSI) guide was revised based on results from Phase I of this study (Jessani et al., 2018), as well as non-participant faculty review of the instrument. Faculty engagement in the initial phase of this study was defined as ‘interaction, communication, outreach or exchange that was active or underway’ during the period of study. This same definition was carried over into Phase II given the respondents’ familiarity with the study and the definition used. Questions relevant to this paper explored incentives for engagement, reasons for engagement or non-engagement as they pertain to incentives, individual as well as institutional factors that affect engagement, the role of researchers in bringing evidence to bear on decision-making, reflections on SPH initiatives in addressing “practice” relevant opportunities, and advice for peers and SPH leadership. Throughout data collection, analysis and reporting we utilized the Canadian Institutes of Health Research definition for KT and KTE: “a dynamic and iterative process that includes synthesis, dissemination, and exchange and ethically-sound application of knowledge to improve the health of populations, provide more effective health services and products, and strengthen the health care system”. We also collected socio-demographic information including age, gender, and academic qualifications along with some organizational information in order to contextualize variation in responses.

Eligible faculty were contacted a maximum of two times. Interviews were conducted either in-person (36), over video Skype (12), or over telephone (4) over a 3-month period from November 1, 2017 to February 5, 2018. Interviews lasted between 30 and 75 min and were audio-recorded with verbal participant consent, and transcribed verbatim.

Data analysis

Varied interpretations of the collected data were shared and discussed by the study team in an attempt to discern any individual biases and determine data saturation. Predetermined categories associated with the interview questions served as the initial framework for codebook development, followed by inductive analysis of emerging themes. Data were coded using ATLAS.ti 8 (ATLAS.ti Scientific Software Development GmbH, 2017). A sample of transcripts was co-coded by three members of the study team to establish inter-coder reliability. Codes were extracted for content and multiple responses for a single participant were then consolidated in Excel. A framework analysis approach (Gale et al., 2013) was used to articulate themes.
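The consolidation step described above (multiple coded responses per participant merged into one cell per theme) can be sketched as follows. This is an illustration only, not the study team's workflow, which used ATLAS.ti and Excel: the participant identifiers and excerpts are placeholders, the function name is hypothetical, and the four column names are borrowed from the incentive categories reported in this paper rather than from the actual codebook.

```python
from collections import defaultdict

# Column headings borrowed from the paper's four incentive categories (assumption).
THEMES = [
    "Monetary Support",
    "Professional Recognition",
    "Academic Promotion",
    "Capacity Enhancement",
]

def build_framework_matrix(coded_segments, themes=THEMES):
    """Consolidate coded excerpts into a participant-by-theme matrix.

    coded_segments: iterable of (participant_id, theme, excerpt) tuples.
    Returns a dict of rows; each row maps every theme to a single string
    in which that participant's excerpts are joined with "; ".
    """
    cells = defaultdict(lambda: defaultdict(list))
    for participant, theme, excerpt in coded_segments:
        if theme not in themes:
            raise ValueError(f"Unknown theme: {theme}")
        cells[participant][theme].append(excerpt)
    # One row per participant, one cell per theme (empty string if no excerpt).
    return {
        participant: {theme: "; ".join(cells[participant][theme]) for theme in themes}
        for participant in sorted(cells)
    }

# Placeholder segments, for illustration only.
segments = [
    ("P01", "Monetary Support", "placeholder excerpt 1"),
    ("P01", "Monetary Support", "placeholder excerpt 2"),
    ("P02", "Academic Promotion", "placeholder excerpt 3"),
]
matrix = build_framework_matrix(segments)
for participant, row in matrix.items():
    print(participant, row)
```

Keeping one row per participant and one column per theme mirrors the matrix layout that framework analysis relies on for comparing responses within and across cases.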

Ethics approval for the study was granted by the Johns Hopkins Bloomberg School of Public Health Institutional Review Board (#00006968). All respondents provided verbal consent to participate.

Results

Respondent overview

We had a positive response from 70/106 (66%) eligible faculty. A total of 52/70 (74%) faculty from all 10 departments ultimately participated (see Table 1). Respondents consisted of faculty from the professorial as well as scientist tracks. Those under “Other” included senior research associates (1), research associates (6), instructors (2), and one Professor Emeritus. JHSPH faculty in leadership positions refer to Associate Dean, Department Chair/Deputy Chair, Academic Program Directors, as well as Center/Institute Directors.

Upon embarking on the interviews, we noted that categorization of faculty as “engaged” or “non-engaged”, while useful in Phase I of the study, was misleading in Phase II because we gained a better understanding of each respondent’s current, as well as past, engagement that influenced their perspectives. We therefore discontinued these distinctions in our analysis.

Are incentives the answer?

When exploring whether incentives were the natural answer to encouraging more engagement between academics and decision makers, we heard several varying perspectives. We begin with opposition to incentives followed by support, noting that even though faculty often provided one particular stance, they were also willing to discuss other perspectives on the issue.

Arguments against incentives

Several faculty were vehement that, despite the challenges, the intrinsic reward of a KT engagement outweighs the need for incentives and that public health research carries a moral imperative for researchers to ensure that it has societal impact. In addition to the moral and ethical perspectives, one faculty member positioned it as a civic duty as noted in Table 2. This would suggest that the willingness to influence decision-makers and policy or practice decisions surpasses traditional views of researcher roles and supersedes partisan ideology for some, as articulated here: “I will talk to the most conservative institute and the progressive caucus. I will talk to whomever I can”. Professor, Department of Health Policy and Management.

For such faculty, as long as the motivations to engage were altruistic in nature, then responses indicated ‘where there is a will there is a way’. They note that time, funding, and other barriers may well be overcome if one had the desire to make such an impact. For others, the hesitancy to think of policy influence as tied to incentives was rooted in the assertion that this was the mission of the School and therefore implicit and embedded in all their work. It would be worth noting however that such perspectives were dominant amongst more senior faculty involved in more policy-relevant, operational, or implementation research in comparison to those involved in the basic sciences—regardless of academic track.

For faculty whose disciplinary focus was more on “basic sciences”, the assertion was that their research was either irrelevant to policy or far removed from it and therefore universal requirements embedded in incentives would be inappropriate. Furthermore, there was an impression amongst some that engaging with decision-makers was beyond the remit of a researcher. In addition, it felt deeply uncomfortable for faculty who see such engagement as contrary to the impartial and apolitical role of researchers whose “job is to do the science, provide the evidence and somebody else should take the baton and pass it along”. Assistant Professor, Department of Epidemiology. Others expressed concern about reputational risk if indeed they were required to expand their role into external engagement. We include a quote from faculty familiar with this perception in Table 2.

Arguments in support of incentives

Respondents who had been reticent or uncertain about engagement felt that incentives to engage would serve as a signal of importance, as well as a motivator that would not hinder their core activities as academics. Moreover, they viewed it as a form of encouragement to venture into rather uncertain territory. For faculty who already engage with the decision-making process, the sentiment in support of incentives highlighted not a lack of motivation but rather insufficient recognition or reward for efforts already underway, and that there was a need to enhance existing efforts. Lastly, the disconnect between the school’s mission to have greater social impact and the inadequate recognition of research translation into public health benefit was another reason for reconsideration of the incentive structures by several faculty. Faculty however noted that not all research is immediately relevant for policy or practice uptake.

Immediate reactions “for”, or “against” academic incentives were often not as straightforward. Respondents nuanced their initial responses as the ideas, practicalities, and implications of what these could mean unfolded further. Those strongly opposed to incentives noted that their experience, seniority and research focus perhaps afforded them a different perspective and were considerate of the contexts of other colleagues who may have differing opinions.

Caveats, cautions, and concerns: implications of establishing new incentives

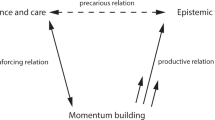

As previously noted, not all respondents were keen on developing new incentives for engagement. However, of those who were supportive of incentives within the A&P process, some raised the potential for unintended consequences, which we summarize in Fig. 1. There was concern that attempts to meet new requirements for promotion would create a response contrary to that intended, particularly for those unprepared or ill-equipped to respond to the incentives. This may result in penalizing faculty for underperformance. Another troubling issue was that of efforts going unnoticed, undocumented, and ultimately unrewarded. Faculty described how this could be the case in instances where valiant attempts at influencing policy or practice gain little or no traction, are required to remain anonymous, or are prohibited direct attribution.

The next section outlines the types of incentives that were discussed.

Types of incentives

There was a recognition that “The SPH has been trying to reward faculty more for their practice activities… But the incentives for promotion remain the same. Getting into Op-ed pieces takes time and if that were to impact your ability to continue to get grant support then it would have an adverse effect on your academic career”. Professor, Department of Environmental Science and Engineering.

Regardless of their support of or opposition to incentives, all faculty provided ideas on the variety of facilitators that they would consider incentives. These were as follows and elaborated on below: (a) Monetary Support, (b) Professional Recognition, (c) Academic Promotion, and (d) Capacity Enhancement. Most of these were rooted in their own experiences while some were more theoretical in nature.

A. Monetary support

“Influencing policy can be an expensive hobby and is generally done pro-bono and there are competing demands”. Professor, Department of Health Policy and Management. The cost of engaging with decision makers evolved into discussions around monetary incentives and rewards that revealed four different types: (i) Grants and Awards, (ii) Discretionary funding, (iii) Salary Relief, and (iv) Funder requirements and restrictions. These are elaborated in Fig. 2. Individual remunerative rewards for any efforts or achievements in these activities, however, did not emerge.

B. Professional recognition

Recognition of faculty efforts and successes in policy engagement through the SPH newsletter and website were the most common mediums mentioned and genuinely appreciated. Positive outcomes of institutional recognition included improved perceptions of highlighted faculty amongst peers, admiration for their impact, desire for partnerships and learning, as well as requests by students for supervision or class enrollment.

However, even though the majority of respondents highlighted that the SPH’s support has actually permitted innovative and fruitful partnerships, more leniency and support would be welcomed in order for faculty to be able to capitalize on unexpected opportunities; to attend a high-profile summit, for example, in the middle of a teaching semester.

One concern raised was that oftentimes worthy achievements do not get recognized, perhaps because not all can be showcased. Another faculty member noted that there was inconsistency in how influence was valued: “If your research is paid attention to by the President or Congressmen then it looks like that’s more prestigious than Baltimore Health Department”. Research Associate, Department of Epidemiology.

While there was acknowledgment that many activities must remain undisclosed, such as in this example, “I have worked with governments where the entire engagement … is predicated on the fact you don’t say anything about it”, Associate Professor, Department of Epidemiology, some faculty noted that, “Although [they] did not necessarily get promoted within the school for that, I think this has definitely changed [their] notoriety as an expert [internally]”. Research Associate, Department of Mental Health. Awards for significant impact (as defined by a committee) were one idea to bring such successes to the fore.

Conversely, however, too much recognition was viewed as ‘self-promotion’, ‘attention-seeking’ or ‘glorification.’ With respect to external recognition as a result of engagement with decision-makers, faculty mentioned opportunities to serve on scientific and advisory committees, invitations for consultancies, funding for more research, and offers for employment, all of which contribute to career enhancement. This leads to an important question raised by several faculty: “recognition by whom?”

C. Academic promotion

While professional recognition was greatly valued, faculty cautioned that achievements in engagement and policy or practice do not hold as much currency as traditional metrics for academic promotion likely because “It takes a lot of time to engage with decision-makers and the [decision-making] process and it doesn’t produce papers, it doesn’t produce grant proposals and that de-incentivizes it”. Assistant Scientist, Department of International Health. Several faculty expressed frustration noting that “to get promoted, if I could have an article in a top-tier journal, or a high-visibility congressional testimony, the article is of much higher value. The testimony is more unique, I think in some ways its more valuable, but not in the academic world”. Professor, Department of Health Policy and Management.

Respondents acknowledged that the existing A&P process may under-recognize efforts, or even penalize faculty efforts as reflected here: “There are many papers I am not even the co-author on, I drew the table, the analysis, the data, the rewriting but I didn’t get the [academic] credit for it”. Associate Scientist, Department of International Health. Some faculty have experienced significant disregard for such activities from their peers, noting that “I was at a faculty meeting and someone said something like ‘[advocacy] is the way we advance people who don’t have a lot of publications’ and it was incredibly offensive”. Associate Professor, Department of Environmental Science and Engineering.

Ambiguity in perspectives on faculty engagement, deliberations on the value of such activities, and uncertainty about how achievements are weighted in the A&P process created a fair amount of angst. There was therefore an almost universal request to further discussions on the ‘practice portfolio’ of one’s CV and to enhance valuation of engagement activities in the A&P process. Several ideas emerged, including: “Having a recommendation from a policymaker as a substitute to having recommendation letters from peers”. Assistant Professor, Department of Epidemiology.

D. Capacity enhancement

Upon reviewing the extensive list of seminars, speakers and discussions on the topic that the SPH had offered in the prior years, respondents were impressed with the diversity and quantity of capacity enhancement activities. A recurring reflection was that few were targeted at enhancing faculty capacity in networking, relationship building, communication, advocacy etc.; a key driver of their apprehension to engage:

“We communicate best with scientists but there is also communicating with non-scientists and media and those are very different arts and there can be more training on how to do this, how to communicate with decision-makers, how to present data…” Professor, Department of Epidemiology.

Incentivized workshops for some of these essential skills were one idea that emerged. Faculty also reflected on the value of being embedded in policy and practice organizations for “sabbaticals or maybe having fellowships for those who are interested in engaging, giving faculty time to work with a policymaking agency and get some exposure into that…” Assistant Scientist, Department of International Health. In reference to peers who have joined academia from settings where their experiences were rooted in “real world” public health policy and practice, several faculty lamented that “we’re not making strategic use of a lot of senior faculty who’ve had incredible breadth of experience”. Professor, Department of Environmental Science and Engineering. These experiences have contributed to their ability to leverage relationships, navigate policy arenas, create student opportunities, and enhance their curricula, among other things. Ideas to bridge this gap therefore ranged from more presentations or dialogs with such experienced faculty from across the various departments, to embedding opportunities for less experienced faculty into grants to shadow peers at policy events, to developing guidance and mentorship such as the “Program in Applied Vaccine Experiences [which places] interns at UNICEF and WHO to actually see the policy decision process”. Professor, Department of Epidemiology.

Metrics for engagement

Although incentives related to the A&P process were the ones that received significant support due to implications for career enhancement, faculty were wary of how intangible engagement activities would be measured. The time it takes to create relationships, deliberate on policy ideas, testify in front of Congress, manage political sensitivities, enhance partner capacities, serve on advisory boards, attend stakeholder meetings, and create (what are oftentimes non-attributable or anonymous) products were all examples of activities that are difficult to quantify, let alone document. Two such concerns were articulated here:

“…two problems with giving credit for engagement: One is that depending on the department, having it valued the same way, people don’t know how to count that. And second, engagement doesn’t always lead to a public document or record that shows that it happened, it’s sort of taking your word for it”. Associate Professor, Department of Epidemiology.

“It’s very time consuming. And the payoff is uncertain. You just don’t know what contact will make a difference and which ones will not… Whereas with writing papers, even though they all don’t get published, you write those 14 pages and there is a paper (at conclusion)”. Professor, Department of Health Policy and Management.

Even if there were a way to quantify some of these activities, faculty noted that such metrics should be met with caution: “If you can count the number of times your research changes a law at the federal or state level—it’s not a common outcome for a requirement for promotion or anything else. It’s a very high bar to set. It’ll be analogous to saying how many lives have you saved this year and if you haven’t you aren’t promoted—this doesn’t make sense” Professor, Department of Health Policy and Management.

Likewise, inability to trace the pathway to indirect impact also raised concern: “I know of metrics that measure how much your paper is getting read or cited, but I don’t know about objective quantifiable metrics for how much impact your research, your paper has had on policy change”. Assistant Professor, Department of Epidemiology.

If not incentives, then what?

While incentives could be one lever used to encourage current faculty to engage with policy and practice, four additional ideas emerged. These were to (a) create a different academic track that would attract faculty with appropriate experience, inclination and skills; (b) modify recruitment practices to include critical skills; (c) outsource KT activities; and (d) create internal structures that serve as KT platforms.

On the first, there was a recognition that while there are now positions with the title “Professors of practice”, it would be important to “create career paths for faculty who… want to transition their careers into things that are more practice/regulatory/advocacy types of roles”. Professor, Department of Environmental Science and Engineering. A track that allows for faculty at all stages of their practice careers to join the SPH and contribute to EIDM would be one such option. On the second, a focus on actively seeking engagement skills and abilities at the recruitment stage rather than, or in addition to, at the retention phase of staff development was also suggested.

Given the comments earlier on the contested role of researchers as advocates, the inadequate skills amongst existing faculty, and the lack of time to engage in decision-making, some faculty suggested that such endeavors should be contracted out to professional knowledge brokers, and advocacy or communication experts as a third option. These could be external to the institution or co-opted into a more multidisciplinary project team such as within the International Vaccine Access Center. “It’s a steep learning curve if you have not been in the government world. It would be great if there was funding for communications, policy help…like an in-house team that you could hire, contract through, or consult with on these issues. This is why we need to bring a policy person on board”. Research Associate, Department of Mental Health. This has the added advantage of assisting faculty to maintain their impartial profile, as well as respect their funder restrictions on advocacy and lobbying.

Lastly, as an alternative to hiring external communication teams, there was an argument for better leveraging the University’s Office of Communication and Public Affairs, as well as the Office of External Affairs, to assist with media relations and advocacy, even though collaborations seem to have resulted in mixed experiences. Better leveraging internal University Research Centers and Institutes as Knowledge Translation Platforms was also lauded. For example, “the Center for Humanitarian Health—because of the nature of their work are more involved in policy decision making processes” Professor, Department of Epidemiology.

Institutional structures to support individual efforts

There was a recognition that irrespective of individual efforts in these areas and whether there are incentives or outsourcing of KT activities, institutional support mechanisms would be integral to success in this endeavor. In addition to ideas noted above under the section on Capacity Enhancement, respondents requested more clarification from the institution on permissible activities, management of conflicts of interest, and balancing faculty versus institutional stances on issues. Faculty also requested opportunities for more support, advice and guidance from experienced peers in a more formalized manner, perhaps through enhancing the mentoring programs or holding ‘lightning talks’ around engagement in departmental meetings. This was coupled with an emphasis on the role of SPH leadership in providing a unified message around faculty engagement with decision making processes and people. Lastly, faculty would welcome the opportunity for interaction with peers across departments in order to foster cross-departmental learning, build multi-disciplinary partnerships, and diminish existing silos so as to enhance synergistic growth. Several of the ideas stemmed from experiences with advocacy and lobbying as well as the creation of a Center for Advocacy in late 2016.

Discussion

Our study sought to understand the types of incentives faculty from one SPH would welcome to enhance engagement between academics and decision-makers, with the aim of promoting Knowledge Translation and Exchange and ultimately enhancing Evidence-informed decision-making (EIDM). We discovered varying perspectives on the value of incentives, the types of incentives, concerns and caveats, as well as alternate or parallel solutions to the conundrum of whether academic incentives are the answer. Types of incentives included Monetary Support, Professional Recognition, Academic Promotion, and Capacity Enhancement. However, respondents raised concerns about fostering adverse incentives, disadvantaging suboptimally-equipped faculty, and the risk of existing efforts going unnoticed. Furthermore, the currently vaguely defined methods to evaluate “practice” and engagement led to further unease.

The SPH comprises 10 departments that vary from Molecular Biology to Health Policy and Management. This variation in foci, by nature, attracts a variation in research topics, faculty responsiveness to policy engagement, and values with respect to the roles of researchers. Although many JHSPH staff are immensely engaged with influencing policy and practice (the COVID-19 pandemic, for instance, is a perfect example), the metrics for judging these contributions were elusive. This was apparent in the interviews we conducted. We found that while some faculty were keen on incentives to enhance the potential for engagement with decision-makers (whether in the policy or practice field), others were surprisingly hesitant or outright opposed to the introduction of incentives. This was unexpected given the results of a preceding phase of this study within the same context whereby respondents highlighted “incentives” as a priority (Jessani et al., 2018).

In reflecting on this, it would seem that values, beliefs and ideological stances on what constitutes the “activist-academic” perhaps help delineate three typologies of faculty within the SPH: The choice-disengaged—those who oppose incentives for activities that surpass the (perceived) role of a researcher (Gordon et al., 1999; McAneney et al., 2010); The choice-engaged—those who consider engagement integral to their roles as researchers (Askins, 2009) and therefore consider incentives anathema to engagement (Macfarlane and Cheng, 2008; Merton, 1973); The suboptimally-engaged—those who are keen to engage more and therefore support incentives as a method for encouraging and rewarding faculty engagement.

In an institution with such varying perspectives, it is challenging to devise an optimal set of incentives. For the choice-disengaged and incentive-opposed faculty, it would behoove us to note that within this typology there were faculty motivated to respond for different reasons: some who work in the basic sciences believed incentives to be irrelevant, or at worst a distraction to their focus on pure discovery-based work; others, similar to McAneney’s results (2010), supported the importance of dissemination but believed that the research results would diffuse in other ways. In the case of the former, following guidelines similar to the UK REF (HEFCE, 2009) would be helpful as it denotes very clearly that not all academics are impelled to engage in ‘impactful’ research. For the latter, as well as in general, the SPH should seek to understand the role current incentives play in forming this position and consider some of the alternatives to incentives that we discuss further down.

The majority of those deemed suboptimally-engaged but in favor of incentives consisted of the willing but unable (those with fewer skills or less experience), the willing but distracted (junior faculty still building their reputations) (Stephan and Levin, 1992; Zuckerman and Merton, 1972), as well as the able but under-resourced (such as those without funding or time). While the existence of seminars and presentations on engagement-relevant skills was acknowledged, for these faculty, as well as students, it would behoove the SPH to respond to the request for practical Capacity Enhancement in order to better prepare public health professionals to engage beyond academia.

For the choice-engaged who were simultaneously incentive-opposed, there is concern that incentives may diminish the avid social and moral motivations that drive their willingness to engage with the decision-making process. Such faculty are often doing translational research that naturally has implications for policy and practice; engagement therefore becomes a natural part of the research process. Many also had the privilege of being more advanced in their careers with perhaps less concern about meeting traditional academic metrics for promotion. As Askins (2009) surmised, an incentive would be redundant as it is “just what they do”. However, given that more exploration around incentives provided a wide variety of possibilities, it is possible that initial implicit interpretations of the word “incentive” resulted in such diametrically opposed opinions that were tempered as the interviews progressed, and that this typology of faculty may not necessarily be categorically opposed to incentives, just to certain kinds of incentives.

Our study unveiled four types of incentives that faculty surmised would be helpful to enhance the willingness, opportunity and success of engagement with decision-makers. Within Monetary Support there appeared four subtypes (Grants and Awards, Discretionary Funding, Salary Relief, and Funder Requirements and Restrictions), all of which aimed at facilitating pursuit of activities and programs aligned with the research, with none suggesting personal financial gain (Friedman and Silberman, 2003). The types of activities that faculty suggested funding for also address time and staff (Goering et al., 2003; Gordon et al., 1999; Martens and Roos, 2005; McAneney et al., 2010; McVay et al., 2016) as well as the inability to pursue engagement at various stages of the research process (Lomas, 2007). Given that historically scientists have not had to demonstrate the relevance or greater societal impact of their research (Godfrey et al., 2010), the REF in the UK (HEFCE, 2009) is one example of a funder-imposed requirement that raised divergent perspectives amongst researchers (Pain et al., 2011). The appreciation of and demand for Follow-on Funding (AHRC, 2018; NERC, 2017) grants by some researchers indicate that funding models may not only serve to incentivize more KT behaviors and activities (Godfrey et al., 2010), but also overcome the financial concerns so often cited by researchers as barriers to IKT (CHSRF, 1999). However, the reflexive outrage of scientists at the REF was embedded in a reaction to the 25% weight apportioned to such activities (Pain et al., 2011), which leads to the natural question of not only whether research should be assessed this way but, if so, what an appropriate proportion is and how it is measured.

While external recognition that manifests as career advancement opportunities for faculty is perhaps out of the realm of SPH control, internal Professional Recognition that veers more along the lines of acknowledgment and appreciation for efforts is perhaps the easiest of the four for an SPH to consider. Academic Promotion was perhaps the most contentious of the incentives, with consistently diverging opinions on how the A&P process needs to evolve in order to consider engagement and influence activities under what the SPH refers to as “practice”. A review of the promotion guidelines, as well as interview responses, confirms that, similar to other HEIs (Wangenge-Ouma et al., 2015), research grants, publications, and teaching activities held the most currency for promotion. However, those who felt that the promotion system was opaque and nebulous supported more clarity on content, metrics and process for appraising “practice” activities. Concerns about metrics for appraisal were raised equally amongst the choice-engaged and the suboptimally-engaged. Results from the study echo concerns raised by others (Penfield et al., 2014; Ross et al., 2003; Wilsdon et al., 2015) that several engagement activities “suffer” from one or more of the following: they (a) occur outside of the research timeline, (b) have impacts that are time-lagged, (c) cannot be made public, and (d) cannot be attributed to one’s efforts alone. Many of these transcend mere transactions to being more relational and perhaps even transformational, and hence unquantifiable.

Developing metrics for social returns on investment (SROI) (Penfield et al., 2014) therefore seems like an ominous endeavor that risks focus on “what is measurable at the expense of what is important” (Wilsdon et al., 2015). Again, the UK Higher Education Funding Council for England provisional methodology of assessment takes these complexities into consideration and could perhaps serve as a model for adaptation. However, Pain et al. (2011) caution that the metrics developed by such innovative funding models still fail to recognize the bi-directional possibility of impact, as well as the role of relationships, particularly since “what takes place in effective knowledge co-production is a more diverse and porous series of smaller transformative actions that arise through changed understanding among all of those involved”.

This study revealed that perhaps the deliberations on incentives lead to a larger debate altogether on how we shift the culture of academia beyond incentives for individuals who are engagement-inclined to institutions that are engagement-ready, without imposing on or penalizing faculty who are choice-disengaged. Our study, as well as others, has demonstrated the importance of institutional context with respect to faculty ability to be active beyond the walls of the university (Ayah et al., 2014; Bingley, 2002; Hansen, 2008; Moher et al., 2018; Rabbani et al., 2016). We need to think perhaps about “measured incentives” that ensure that an HEI can encourage engagement without compromising the core reputation of academics as unbiased and apolitical in their research. Whether this is possible, and in what instances, requires deeper exploration. Kothari and Wathen (2013), in particular, encourage consideration of outcomes as transformational but also caution against positivity bias: the assumption that all such efforts will lead to good outcomes.

Engagement-readiness, however, will only be possible if indeed there is a commitment from the institution to recognize the importance of KT and EIDM in advancing the relevance of an HEI for policy and practice, and consequently raising its profile through embedding KT in strategic plans (Jacobson et al., 2004; Rabbani et al., 2016), creating a dedicated faculty track in practice, attracting and retaining relevant skilled staff through differentiated hiring criteria and processes (Jacobson et al., 2004; Lavis et al., 2003; Rosse et al., 1991; Siegel et al., 2003; Volmink et al., 2018), reimagining competency-based selection of students (Volmink et al., 2018), revisiting pedagogical approaches to facilitate student acquisition of KT-relevant knowledge and skills (Jacobson et al., 2004), particularly for those in the DrPH program, and establishing mechanisms for recognition and promotion of successes in these areas. There has already been some advancement in some of these areas, as evidenced by the SPH’s new 2019–2023 strategic plan (Johns Hopkins Bloomberg School of Public Health, 2018b), which includes partnerships and advocacy as two of the five goals, as well as by seminars hosted by the Office for Public Health Practice and Training designed to build knowledge and skills in engagement through real-world scenarios.

With a shift in funder requests to demonstrate greater social return on their research investments, as well as renewed global attention to research, science and evidence for decision making (as a result of crises such as the 2008 financial crisis, pandemic outbreaks such as H1N1 and COVID-19, and climate change-related fires in Australia), HEIs such as this SPH are likely going to be concerned about the implications of an enhanced “service” pillar on the other two pillars: teaching and research. Some scholars argue that teaching is already suffering with a greater emphasis on research, even without the added emphasis on translation (Mok, 2013; Shin and Harman, 2009). HEIs are also likely concerned about how this shift will affect their competitive edge if the ranking rubrics are not also revisited in parallel: adjusting rankings processes away from measuring inputs and outputs (Deem et al., 2008; Mok, 2013) that favor recruitment, retention and promotion of “star faculty” researchers (Mok, 2013; Shin and Harman, 2009; Stromquist, 2007) and instead toward longer-term value-added measurements. However, while these types of changes might ultimately encourage increased engagement with policymakers, they risk simply replacing one bias with another (Fowles et al., 2016).

Strengths and limitations

While others have reflected on incentives for faculty engagement with decision makers (Jessani et al., 2016; Nkhoma, 2020), these studies were embedded in African countries where linkages between academia and practice and “service” are more intertwined, and the role of international donors is more pronounced. Furthermore, the study from Malawi (Nkhoma, 2020) focused more on community engagement, in contrast to this paper on policy engagement, which understandably may have different motivations. This is the only study we are aware of from a high-income country that has explored in depth the perspectives of a variety of faculty on the issue of, types of, and structures for academic incentives in the American context. The use of different modes for our interviews (video Skype, telephone) allowed us to expand reach to faculty based elsewhere or traveling during the study period. In addition, the qualitative data collection process elicited increased reflections on a topic that some faculty (especially non-engaged faculty/faculty from basic science departments) had not had the opportunity to consider before. Furthermore, while the content of the instruments would not be directly generalizable due to the context specificity of the study, the constructs should have transferability across HEIs deliberating incentives for faculty engagement with decision-makers.

While our study has several strengths, we note that by virtue of being the second phase of an ongoing study, we were bound to recruitment of a subset of participants from the initial phase. We recognize the potential for social desirability bias and mitigated this by reframing questions, returning to them at a later point, or using probes to allow for as much flexibility as possible (Harvey, 2011).

Implications for policy and practice

Taking into consideration the various deliberations above, and Boyer’s call for scholarship to be reconsidered (1996a), we outline suggestions for the variety of organizations implicated in this study, with SPHs as our primary focus. These can be found in Fig. 3. We hope that this will spur discussions and deliberations across SPHs, Higher Education Institutes (HEIs), and health profession associations, in the USA as well as abroad.

Conclusion

Knowledge translation and exchange (KTE) activities are increasingly being viewed as a valuable use of time and skills for faculty in the Public Health sciences, especially to promote Evidence-informed decision-making. However, exploration of academic incentives for faculty engagement with the decision-making process—whether through relationships with decision-makers, serving on advisory boards considering evidence as an input to decision-making processes, or engaging in direct advocacy—was not straightforward. The appeal of incentives varied according to personal values, previous experiences, relevance of research to decision-making, and individual capacities and comfort ranging from instinctive support to reflexive resistance. Discussions around types of incentives elicited a plethora of ideas within four different categories, which we’ve distilled into recommendations for SPH leadership. Similarly, the implementation of incentive structures revealed several ideas as well as caveats—particularly around measurement of such activities and the impacts on promotion given that KTE activities often occur outside of the traditional grant cycle. The role of incentives in enhancing academic engagement with policy and practice is therefore neither simple nor universally ideal. A tempered approach that considers the various professional aspirations of faculty, the capacities required, culture of values around specific discovery sciences or funder conditions, as well as alignment with the institution’s mission is critical.

Data availability

The datasets used and/or analyzed during this study are available from the corresponding author on reasonable request.

References

AHRC (2018) Arts and Humanities Research Council (AHRC) follow-on funding for impact and engagement. http://www.fundit.fr/en/calls/ahrc-follow-funding-impact-and-engagement

Askins K (2009) ‘That’s just what I do’: placing emotion in academic activism. Emotion Space Soc 2(1):4–13

ATLAS.ti Scientific Software Development GmbH (2017) ATLAS.ti [computer software]. Scientific Software Development GmbH, Berlin

Ayah R, Jessani N, Mafuta EM (2014) Institutional capacity for health systems research in East and Central African schools of public health: knowledge translation and effective communication. Health Res Policy Syst 12(1):20

Bingley A (2002) Research ethics in practice. In: Bondi L (ed) Subjectivities, knowledges, and feminist geographies: the subjects and ethics of social research. Rowman & Littlefield, pp. 208–222

Boyer EL (1996a) From scholarship reconsidered to scholarship assessed. Quest 48(2):129–139. https://doi.org/10.1080/00336297.1996.10484184

Boyer EL (1996b) The scholarship of engagement. Bullet Am Acad Arts Sci 49(7):18–33. https://doi.org/10.2307/3824459

Brownson RC, Kreuter MW, Arrington BA, True WR (2006) Translating scientific discoveries into public health action: how can schools of public health move us forward? Public Health Rep 121(1):97–103

CHSRF (1999) Issues in linkage and exchange between researchers and decision makers. Canadian Health Services Research Foundation, Ottawa, Canada

Coburn AF (1998) The role of health services research in developing state health policy. Health Affairs 17(1):139–151

Deem R, Mok KH, Lucas L (2008) Transforming higher education in whose image? Exploring the concept of the ‘world-class’ university in Europe and Asia. Higher Educ Policy 21:83–97

Denis JL, Lomas J (2003) Convergent evolution: the academic and policy roots of collaborative research. J Health Services Res Policy 8(suppl_2):s1–s6

Elliott H, Popay J (2000) How are policy makers using evidence? models of research utilisation and local NHS policy making. J Epidemiol Community Health 54(6):461–468

Fowles J, Frederickson HG, Koppell JG (2016) University rankings: evidence and a conceptual framework. Public Admin Rev 76(5):790–803

Fraser I (2004) Organizational research with impact: working backwards. Worldviews Evidence‐Based Nurs 1(suppl_1):s52–s59

Friedman J, Silberman J (2003) University technology transfer: Do incentives, management, and location matter? J Technol Transf 28(1):17–30

Gale NK, Heath G, Cameron E, Rashid S, Redwood S (2013) Using the framework method for the analysis of qualitative data in multi-disciplinary health research. BMC Med Res Methodol 13(117):1–8

Godfrey L, Funke N, Mbizvo C (2010) Bridging the science-policy interface: a new era for South African research and the role of knowledge brokering. South African J Sci 106(5-6):44–51

Goering P, Butterill D, Jacobson N, Sturtevant D (2003) Linkage and exchange at the organizational level: a model of collaboration between research and policy. J Health Services Res Policy 8(2_suppl):14–19

Gordon AK, Chung K, Handler A, Turnock BJ, Schieve LA, Ippoliti P (1999) Final report on public health practice linkages between schools of public health and state health agencies. J Public Health Manag Practice 5(3):25–34

Hansen ER (2008) Practical feminism in an institutional context. In: Moss P, Al-Hindi KF (eds) Feminisms in geography: rethinking space, place, and knowledges. Rowman & Littlefield, Plymouth, pp. 230–236

Harvey WS (2011) Strategies for conducting elite interviews. Qualit Res 11(4):431–441

HEFCE (2009) Research excellence framework: second consultation on the assessment and funding of research. Higher Education Funding Council for England, London

HRSA (2005) Public health workforce study (Study commissioned by Bureau of Health Professions, Health Resources and Services Administration). US Department of Health and Human Services, Rockville

IOM (1988) The future of public health. National Academy Press, Institute of Medicine Committee for the Study of the Future of Public Health, Washington, D.C.

Jacobson N, Butterill D, Goering P (2004) Organizational factors that influence university-based researchers’ engagement in knowledge transfer activities. Sci Commun 25(3):246–259

Jessani NS, Babcock C, Siddiqi S, Davey-Rothwell M, Ho S, Holtgrave DR (2018a) Relationships between public health faculty and decision-makers at four governmental levels: a social network analysis. Evidence Policy 14(3):499–522

Jessani N, Kennedy C, Bennett SC (2016) Enhancing evidence-informed decision-making: strategies for engagement between public health faculty and policymakers in Kenya. Evidence Policy 13(2):225–253

Jessani NS, Siddiqi S, Babcock C, Davey-Rothwell M, Ho S, Holtgrave DR (2018b) Factors affecting engagement between academic faculty and decision-makers: Learnings and priorities for a school of public health. Health Res Policy Syst 16(65):1–15

Johns Hopkins Bloomberg School of Public Health (2018a) Student body diversity for the Johns Hopkins Bloomberg School of Public Health

Johns Hopkins Bloomberg School of Public Health (2018b) The power of public health: a strategic plan for the future FY2019-2023. Johns Hopkins Bloomberg School of Public Health, Baltimore, USA

Johnson M (2020) The knowledge exchange framework: Understanding parameters and the capacity for transformative engagement. Stud Higher Educ 1–18. https://doi.org/10.1080/03075079.2020.1735333

Kothari A, MacLean L, Edwards N, Hobbs A (2011) Indicators at the interface: managing policymaker-researcher collaboration. Knowledge Manag Res Practice 9:203–214

Kothari A, Wathen CN (2013) A critical second look at integrated knowledge translation. Health Policy 109(2):187–191

Lavis JN, Robertson D, Woodside JM, McLeod CB, Abelson J (2003) How can research organizations more effectively transfer research knowledge to decision makers? Milbank Quart 81(2):221

Lavis J, Ross S, McLeod C, Gildiner A (2003) Measuring the impact of health research. J Health Services Res Policy 8(3):165–170

Lomas J (2007) The in-between world of knowledge brokering. BMJ 334(7585):129–132

Longest Jr B, Huber G (2010) Schools of public health and the health of the public: enhancing the capabilities of faculty to be influential in policymaking. Am J Public Health 100(1):49

Macfarlane B, Cheng M (2008) Communism, universalism and disinterestedness: re-examining contemporary support among academics for Merton’s scientific norms. J Acad Ethics 6(1):67–78

Martens PJ, Roos NP (2005) When health services researchers and policy makers interact: tales from the tectonic plates. Healthcare Policy 1(1):72–84

McAneney H, McCann JF, Prior L, Wilde J, Kee F (2010) Translating evidence into practice: a shared priority in public health? Soc Sci Med 70(10):1492–1500

McLean RKD, Graham ID, Tetroe JM, Volmink JA (2018) Translating research into action: an international study of the role of research funders. Health Res Policy Syst 16(44):1–15

McVay AB, Stamatakis KA, Jacobs JA, Tabak RG, Brownson RC (2016) The role of researchers in disseminating evidence to public health practice settings: a cross-sectional study. Health Res Policy Syst 14(42):1–9

Merton RK (1973) The normative structure of science. In: Storer NW (ed) The sociology of science: theoretical and empirical investigations. University of Chicago Press, pp. 267–280

Mitton C, Adair CE, McKenzie E, Patten SB, Perry BW (2007) Knowledge transfer and exchange: review and synthesis of the literature. Milbank Quart 85(4):729

Moher D, Naudet F, Cristea IA, Miedema F, Ioannidis JP, Goodman SN (2018) Assessing scientists for hiring, promotion, and tenure. PLoS Biol 16(3):1–20

Mok KH (2013) The quest for an entrepreneurial university in East Asia: impact on academics and administrators in higher education. Asia Pacific Educ Rev 14:11–22

NERC (2017) Innovation follow-on call: enabling innovation in the UK and developing countries—announcement of opportunity. https://nerc.ukri.org/innovation/together/opportunities/

Nkhoma N (2020) Faculty members’ conceptualization of community-engaged scholarship. J Higher Educ Outreach Engagement 24(1):73–96

Oliver K, Lorenc T, Innvær S (2014) New directions in evidence-based policy research: a critical analysis of the literature. Health Res Policy Syst 12(34):1–11

O’Neil EH, Shugars DA, Bader JD (1993) Health professions education for the future: schools in service to the nation. Pew Health Professions Commission, San Francisco

Pain R, Kesby M, Askins K (2011) Geographies of impact: power, participation and potential. Area 43(2):183–188

Penfield T, Baker MJ, Scoble R, Wykes MC (2014) Assessment, evaluations, and definitions of research impact: a review. Res Eval 23(1):21–32

Rabbani F, Shipton L, White F, Nuwayhid I, London L, Ghaffar A, Abass F (2016) Schools of public health in low and middle-income countries: an imperative investment for improving the health of populations? BMC Public Health 16(941):1–12

Renwick K, Selkrig M, Manathunga C, Keamy R (2020) Community engagement is…: revisiting Boyer’s model of scholarship. Higher Educ Res Dev 39:1–15

Ross S, Lavis J, Rodriguez C, Woodside J, Denis JL (2003) Partnership experiences: involving decision-makers in the research process. J Health Services Res Policy 8(Suppl 2):26–34. https://doi.org/10.1258/135581903322405144

Rosse JG, Miller HE, Barnes LK (1991) Combining personality and cognitive ability predictors for hiring service-oriented employees. J Business Psychol 5(4):431–445

Schieve LA, Handler A, Gordon AK, Ippoliti P, Turnock BJ (1997) Public health practice linkages between schools of public health and state health agencies: results from a three-year survey. J Public Health Manag Practice 3(3):29–36

Shin JC, Harman G (2009) New challenges for higher education: global and Asia-Pacific perspectives. Asia Pacific Educ Rev 10:1–13

Sibbald SL, Singer PA, Upshur R, Martin DK (2009) Priority setting: what constitutes success? A conceptual framework for successful priority setting. BMC Health Services Res 9(43). https://doi.org/10.1186/1472-6963-9-43

Siegel DS, Waldman DA, Atwater LE, Link AN (2003) Commercial knowledge transfers from universities to firms: improving the effectiveness of university–industry collaboration. J High Technol Manag Res 14(1):111–133. https://doi.org/10.1016/S1047-8310(03)00007-5

Sorensen AA, Bialek RG (1991) The public health faculty/agency forum: linking graduate education and practice: final report. University Press of Florida, Gainesville

Stamatakis KA, Norton WE, Stirman SW, Melvin C, Brownson RC (2013) Developing the next generation of dissemination and implementation researchers: insights from initial trainees. Implement Sci 8:29. https://doi.org/10.1186/1748-5908-8-29

Stephan PE, Levin SG (1992) Striking the mother lode in science: the importance of age, place, and time. Oxford University Press, Oxford

Stromquist NP (2007) Internationalization as a response to globalization: radical shifts in university environments. Higher Education 53(1):81–105

Tang H, Chau C (2020) Knowledge exchange in a global city: a typology of universities and institutional analysis. Eur J Higher Educ 10(1):93–112

UKRI (2018) Pathways to impact. https://www.ukri.org/innovation/excellence-with-impact/pathways-to-impact/

Volmink JA, Bruce J, de Holanda Campos H, de Maeseneer J, Essack S, Green-Thompson L, Wolvaardt G (2018) Reconceptualising health professions education in South Africa. (Consensus Study). Academy of Science of South Africa (ASSAf), Pretoria, South Africa

Wangenge-Ouma G, Lutomiah A, Langa P (2015) Academic incentives for knowledge production in Africa: case studies of Mozambique and Kenya. In: Cloete N, Maassen P (eds) Knowledge production and contradictory functions in African higher education (1st edn). African Minds, pp. 124–147

Warry P (2006) Increasing the economic impact of research councils: advice to the Director General of Science and Innovation, DTI, from the Research Council Economic Impact Group. Research Council Economic Impact Group

Wilsdon J, Allen L, Belfiore E, Campbell P, Curry S, Hill S, Viney I (2015) The metric tide: report of the independent review of the role of metrics in research assessment and management. HEFCE, London

Zuckerman H, Merton RK (1972) Age, aging, and age structure in science. In: Riley MW, Johnson M, Foner A (eds) A sociology of age stratification. Russell Sage Foundation, New York, pp. 292–356

Acknowledgements

The authors thank the leadership at JHSPH for their support, as well as all the participants for their time and insights. We express gratitude to Prof. David R. Holtgrave and Dr. Melissa Davey-Rothwell for their support and invaluable contributions to various aspects of the study and to Prof. Janet DiPietro for her guidance and manuscript review. The study was conducted through support provided by The Lerner Center for Public Health Promotion at the Johns Hopkins Bloomberg School of Public Health. The content is solely the responsibility of the authors and does not necessarily represent the official views of the funder. This study was approved by the Institutional Review Board of the Johns Hopkins Bloomberg School of Public Health (#00006968). Respondents provided verbal consent to participate.

Author information

Contributions

NJ designed the study in collaboration with two other colleagues acknowledged above. NJ, BL, and AVN were involved in data collection. All authors were involved in the data analysis and interpretation. All authors contributed to the development of the manuscript, and reviewed and approved the final version.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note: Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Jessani, N.S., Valmeekanathan, A., Babcock, C.M. et al. Academic incentives for enhancing faculty engagement with decision-makers—considerations and recommendations from one School of Public Health. Humanit Soc Sci Commun 7, 148 (2020). https://doi.org/10.1057/s41599-020-00629-1
