Abstract

Policy studies suggest that evidence-informed policymaking (EIPM) requires framing and persuasion strategies, and an investment of time to form alliances and identify the most important venue. However, this advice is very broad and often too abstract. In-depth case studies help make this advice more concrete. To understand the engagement strategies of influential policy actors, this case study examines the Ontario Poverty Reduction Strategy, a large-scale provincial policy touted as “evidence-based.” The study is based on interviews with elite policy advisors (n = 19) serving in different stages of the policymaking process. It shows that the elite advisors effectively used persuasion tactics, networking and longevity strategies to counteract a volatile political context and competing policy priorities. In light of the findings, this paper provides practical recommendations on how evidence producers can emulate such success in different contexts: understand formal and informal processes, master and exercise political acuity, and strategically establish networks with a diverse group of policy actors in order to effectively frame and communicate evidence.

Introduction

Children’s socio-economic status (SES) remains the most powerful predictor of their educational opportunities and outcomes (e.g., PISA, 2010; Campaign 2000, 2013; People for Education, 2013). It has long been known that children in poverty are at a significantly increased risk of suffering poorer outcomes in health and education, and are more likely to continue living in poverty as adults. The Organisation for Economic Co-operation and Development (OECD) has stated that, “Failure to tackle the poverty and exclusion facing millions of families and their children is not only socially reprehensible, but it will also weigh heavily on countries’ capacity to sustain economic growth in years to come” (OECD, 2005).

In the last several decades, the global push for policy solutions to reduce child poverty has been met with an era of increasing pressures for better policy outcomes and government accountability. As a result, there has been a prioritization of evidence utilization in social policy reform agendas worldwide. However, the evidence on effective policy approaches to addressing poverty is often mixed and conflicting, adding further challenges in proposing solutions to an immensely complex problem. In short, while the case for reducing child poverty is not a difficult one to make, the issue of poverty reduction is a policy quagmire, contending with ambiguous evidence and dissenting views on effective approaches within complex political and institutional contexts. Nevertheless, the pursuit of using evidence to inform policies has drawn considerable interest from public policymakers, academics, practitioners, and other stakeholders in nearly every area of the social services sector, leading to focused efforts in this endeavor. For instance, this culture of using evidence has led to shifts in the policy engagement activities of particular stakeholder organizations (e.g., from advocacy to research efforts), in the attempt to establish and maintain legitimacy in the policy arena (Laforest, 2013).

Recent policy studies suggest that evidence-informed policymaking (EIPM) requires framing and persuasion strategies, and an investment of time to form alliances and identify the most important venue (e.g., Weible et al., 2012; Oliver et al., 2014; Cairney, 2016). However, this advice is very broad and often too abstract. To understand the engagement strategies of influential policy actors, this case study examines the Ontario Poverty Reduction Strategy (OPRS), a large-scale provincial policy touted as “evidence-based.” It is based on interviews with elite policy advisors (n = 19) serving in different stages of the policymaking process. This in-depth examination of the role of evidence in the OPRS and the interplay of macro and micro domains of influence sheds light on the multitude of influencing factors and the complex dynamics that pervade different facets of the policymaking process. It illuminates how poverty reduction organizations have worked to engage in developing poverty reduction policies by adapting to a shifting policy culture that prioritizes “policy-relevant” evidence. The focus of this paper is on how external stakeholders invited to advise and shape the OPRS have obtained and maintained policy influence by constructing their organizational and individual strategies around this endeavor.

Findings show that the elite advisors effectively used persuasion tactics, networking and longevity strategies to counteract a volatile political context and competing policy priorities. This paper provides practical recommendations and examples of how evidence producers can emulate such success in different contexts through: understanding formal and informal aspects of the policymaking process; strategically establishing common short-term and long-term goals with a diverse group of policy actors; and effectively communicating persuasive evidence-based narratives.

What influences evidence use in policy formulation?

The process of policy formulation entails setting priorities and identifying courses of action, largely constructed through socio-political processes. In reality, contextual forces such as politics and ideology, as well as budgetary and resource availability and constraints, are what shape policy decisions and outcomes, not necessarily the best available evidence. Moreover, personal beliefs and values, political-economic factors such as elections and recession, pressure from advocacy groups, and institutional culture and constraints are some of the major dynamics at play in influencing how evidence is used to inform policy (e.g., Lindblom and Cohen, 1979; Lavis, 2006; Belkhodja and Landry, 2007; Oliver et al., 2014; Cairney, 2015).

In spite of this, there is frequently an inherent assumption by researchers in the knowledge translation/utilization/mobilization field that with the availability, accessibility, and use of high-quality evidence, good policy decisions will result. This rationalistic view has been criticized as over-simplistic, as the reality of policy development is that it is non-linear, varied and complex. Fafard (2012), for example, argues that researcher and external stakeholder disappointments regarding policy decisions based on politics and ideology rather than scientific knowledge are reflective of a lack of understanding of the theory of government decision-making and of the role and nature of politics in policy formulation.

The quality of public policy decisions is largely dependent on factors outside of the content of the evidence itself, including the skills and abilities of policymakers to analyze and apply the best available evidence to the policy problem (Davies, 2012; Cappe et al., 2010). Oliver (2006) notes that, “science can identify solutions to pressing public…problems, but only politics can turn most of these solutions into reality” (p. 201). Consequently, effective policy development is not simply a matter of good technical design or applying evidence to shape policy. It is also essential to consider the processes through which policy is formulated (Gilson and Raphaely, 2008). In their use of evidence, policymakers must interpret meaning and implications as they pertain to specific policy problems and decisions. They must assess the quality and credibility of evidence, based primarily on their professional norms and training, prior knowledge, objectives and parameters for evidence use, as well as whether it is politically and economically conducive to their goals (Bogenschneider and Corbett, 2011; Caplan, 1979; Weiss and Bucuvalas, 1980; Tseng, 2012).

In this way, the use of evidence is invariably affected by broad contextual factors that are heavily influenced by the socio-political climate. While there are a multitude of models and frameworks to explain the policy process, there are no models that comprehensively illustrate the complex role of evidence within the policy process, including how and why particular sources and types are influential. Weiss’ influential work on research utilization (Weiss 1977; Weiss 1979) delineates various ways in which research evidence is used to inform policymaking. It highlights the complexity of the process, emphasizing that it is non-linear and influenced by multifarious, complex interactions that cannot be captured by clear-cut models. In particular, she explicates the “enlightenment” or tacit, indirect function of research evidence as one information source among many that informs policy decisions. Moreover, Weiss (1977) points out that instead of a taken-for-granted independent variable, research utilization should be understood as malleable: steered by contextual shifts in the dominant discourse and sharing reciprocal influence with policy. Parkhurst (2017) further illuminates how contextual factors come into play in evidence-to-policy utilization, focusing in particular on the political realm of decision-making. As he put it, “Political decisions take place within contextually specific institutional structures that direct, shape or constrain the range of possible policy choices and outcomes” (p. 9).

Weiss and Parkhurst have provided clarification on important elements of the policymaking process and the role of evidence, adding valuable insight into rarely considered aspects of major influencing elements. However, these contributions are limited, as they do not explicitly examine the strategies undertaken by influential policy actors to frame evidence in ways that would appeal to political considerations and policymaker heuristics. Other areas that are not addressed in detail by these frameworks include the diversity of different policy actors (individuals and organizations) in their understandings and approaches to evidence use, and their varying degrees of influence within the numerous levels and areas of government. As well, what must be considered are the different norms in the many areas and levels of government that determine what, how and why evidence is used, and how this interplays with the broader political context. Adding to the complexity is the influence of networks, the dominant public discourse and sudden shifts in the policy environment that call for abrupt changes in policymakers’ attention. Furthermore, there are differential notions of what qualifies as “evidence” both within and between policy actor groups. While the predominant assumption in the evidence utilization literature is that research is evidence, in reality, policymakers include and value different types of information in what they deem as “evidence,” beyond scholarly research. Establishing the differential ways in which evidence is defined by the individuals who use it—rather than assuming that groups of policy actors work with the same understandings—is an important initial step to understanding effective evidence use in policymaking. Moreover, it is necessary to consider why different forms of information might be useful to particular actors in order to understand how to effectively ‘‘push’’ or mobilize evidence for policy development.

How policymakers define “evidence”

Broadly conceived, evidence can be defined as, “an argument or assertion backed by information” (Cairney, 2016, p. 3). Research or scientific evidence refers to information that is systematically collected using established methods, sometimes including a hierarchy of scientific methods, with, for instance, meta-analyses or randomized controlled trials published in prestigious, peer-reviewed journals at the top (Nutley et al., 2013). This is the definition that is widely used by producers of scholarly research. However, policymakers’ more general definition of evidence encompasses a wide spectrum of “evidence” that is opinion-based, such as public consultations. This may be attributed to policymakers’ concerns with why, how and for whom a particular policy action would work and under what conditions. In particular, policymakers must factor in cost-benefit analyses, potential unintended consequences, public opinion, timing of elections, and so on, as part of their decision-making deliberations. Therefore, they are likely to be interested in a wide variety of information, including opinion (e.g., public consultations and polls), distributional effects, market-based considerations (political-economic cost/benefit analysis) as well as ethics. In their interviews with key education policy staff, Nelson et al. (2009) found that policymakers even conceptualized “research” as including empirical findings, personal experiences, data and constituent input.

Caplan (1979) suggested that a major barrier to the effective use of evidence in policy formation is the fundamental differences between researchers and policymakers in how they define evidence. Furthermore, Tseng (2012) argues that it is particularly important to not only understand how individuals in different policy roles understand evidence, but also why they hold these views, as critical components to understanding evidence use. Indeed, user perception has been found to be the essential mediating factor between evidence characteristics and use, rather than content (Gabbay et al., 2003; Hennink and Stephenson, 2005; Huberman, 1994; Hutchinson, 1995; Weiss and Bucuvalas, 1980b). Beyond simply examining the attributes or characteristics of the evidence content itself, conceptualizing evidence utilization and exchange as they relate to how and why policy actors value evidence, influenced by the socio-political context, helps to illuminate the importance of heuristics in EIPM processes.

Numerous external influencers affect the ways in which policymakers use and are influenced by evidence, adding further complexity to understanding how evidence can be used effectively for policy development. Not only does the nature of the political environment play a fundamental role, the differential stages of the policy process within which policy actors are situated in their particular roles are also critical influencing factors.

Politics and processes

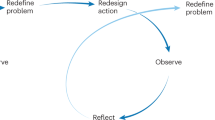

Theories such as Kingdon’s (1984) Multiple Streams Analysis, Jones and Baumgartner’s Punctuated Equilibrium Theory (e.g., Jones and Baumgartner, 2012), and Jenkins-Smith and Sabatier’s Advocacy Coalition Framework (e.g., Jenkins-Smith and Sabatier, 1993) illuminate policymaking in the context of a complex environment, in which key policy actors await politically opportune times to enact policy change. Simultaneously, however, these actors can influence events and the dominant discourse in creating the “right” conditions through persuasive evidence use to appeal to different audiences with the support of strong networks. While they may not be able to control the tides of the policy environment and the broader contextual factors at play, they can learn to “swim through the currents” by strategically navigating the shifting conditions.

In order to understand the role of key policy actors in influencing policy change through evidence use, it is important to distinguish between the stages of policymaking and thus, the differential ways in which actors in each stage influence the process. This is an area that has been neglected in the literature, with typically vague depictions of the policymaking process and the role of influential policy actors as a nebulous process influenced by homogeneous groups of actors. The assumption tends to be that particular groups of actors, such as bureaucrats, advocates and academics, use evidence and influence policy in the same ways, without consideration to the particular stage and area of the policy process in which they are involved. Furthermore, individuals within policy actor groups are diverse in their perspectives, values, agendas, heuristics, and approaches to evidence utilization.

The literature essentially fails to distinguish between two broad groups that use evidence in different ways to influence policy, contingent upon their roles and the stages of policy within which they work. The evidence-informed policymaking literature tends to address the technical, “how to” area of policy development: in other words, the second-order stage, after the decision has been made to move forward with a given policy. Policy actors in this stage are typically governmental staff involved with developing the parameters of the given policy, including programming and implementation logistics for the execution of an established policy. They often work in collaboration with technical advisory committees, composed of practitioners in the field who advise on the technical areas of implementation such as best practices. In short, the second-order stage is characterized as technical, formal, and publicly official, and evidence use in this stage is essentially for policy development. In this study, advisors in this stage are referred to as “official advisors, second-order” (OA-SO).

Prior to this stage is the “first-order,” or “whether to” stage of the process, when actors advise on whether or not the policy in question should be established in the first place. Within this stage, high-level decisions are established in a complex, elusive process, with different actors using evidence in numerous ways, informally and unofficially. Actors advising in this stage are non-governmental actors, typically with close personal and prior professional relationships with decision-makers, essentially serving as unofficial, trusted advisors. In short, actors in this stage of the policy process are characterized as elite, close-knit and unofficial, and are hereafter referred to as “informal advisors, first-order” (IA-FO).

Thus, the ways in which evidence is used within these broad stages differ in their purposes, functions and actors involved. This paper focuses on clarifying the process of evidence-informed policymaking through examining the ways in which evidence was used to develop the OPRS in these two distinct stages. Rather than a discussion of the OPRS as an evidence-based policy success story, this case study aims to clarify the role of evidence within the policymaking process.

Case study selection: The Ontario Poverty Reduction Strategy (OPRS)

In a time of global economic downturn, the OPRS was developed in 2008 through the Cabinet Committee on Poverty Reduction, established in 2007 by the former Premier of Ontario, Dalton McGuinty. The OPRS was a cross-governmental, large-scale, and long-term initiative, with fifteen provincial ministries involved in this unprecedented commitment to reduce child poverty in Ontario by 25% in 5 years. From the outset, the central focus of the poverty reduction strategy was on children and youth, with an emphasis on education, social supports, and health care for low SES children and youth. With “smarter government” as a key priority area, the OPRS was publicized as an evidence-based strategy (Ontario Government, 2008).

Methodological issues with measurements of outcomes are discussed in the 2015 report on the OPRS (https://www.ontario.ca/page/poverty-reduction-strategy-2015-annual-report). However, Phase I (2008–2013) shows progress overall. The following chart (Fig. 1) in this annual report shows general data on the impact of the OPRS through the 2008 recession, in comparison with two previous recessions in 1981 and 1989.

The data were measured using Statistics Canada’s after-tax low-income cut-off (LICO), and show the impact of the OPRS on child poverty reduction, in contrast to the previous recessions, when there was no poverty reduction strategy. As the chart illustrates, the child poverty rate increased significantly subsequent to the 1981 and 1989 recessions. In 1984, 3 years after the 1981 recession began, the child poverty rate was 17.4% higher than the most recent low point, and 3 years into the 1989 recession (1992), the child poverty rate was 30.5% higher than the most recent low point. These rates contrast with the child poverty rates subsequent to the 2008 recession: the rate was 7.1% below the previous low point after 2 years (2010), and 3 years into the recession (2011), it was 6.1% above this low point (Ontario Government, 2015).
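The comparisons above express each rate relative to a pre-recession low point. A minimal sketch of that arithmetic follows, assuming the percentages are relative changes against the low point rather than percentage-point differences; the report may define them differently. The function name and the input rates are hypothetical and are not OPRS data.

```python
def percent_vs_low_point(rate, low_point):
    """Return how far `rate` sits above (+) or below (-) `low_point`,
    expressed as a percentage of the low point."""
    return (rate - low_point) / low_point * 100.0


# Hypothetical rates chosen only to illustrate the arithmetic (not OPRS data).
low_point = 10.0     # assumed pre-recession low in the child poverty rate
later_rate = 11.74   # assumed rate three years into a recession

change = round(percent_vs_low_point(later_rate, low_point), 1)
print(f"{change:+.1f}% relative to the low point")
```

A positive result indicates a rate above the low point and a negative result a rate below it, which matches the "higher than" and "below" wording used in the comparison.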

These data suggest the effectiveness of an evidence-informed policy initiative in mitigating the effects of recession on child poverty rates. Thus, the OPRS was selected as an optimal case for analysis in examining the role of evidence in addressing a complex policy issue in a period of fiscal uncertainty. Given its evidence-based claims, the OPRS served as an ideal case to examine how evidence can drive such policies forward in a challenging political context.

Research design

Data from interviews with external stakeholders serving as policy advisors (hereafter, referred to as policy intermediary actors) provided in-depth illumination into the political and systemic conditions under which evidence was used to inform the OPRS. The data also illustrate how the policy window for evidence utilization provided opportunities and constraints to these advisors in engaging with the policy development process.

Data selection process

The original interview selection plan for this phase was purposive sampling of the external advisors to the lead office for the OPRS. A list of 54 high-level external advisors was provided by the Ontario Public Service’s treasury board secretariat. The focus of this study was on external stakeholders’ perspectives on the evidence-to-policy process and their strategies for influence. However, in order to include internal perspectives about the OPRS process, nine OPRS policymakers were contacted for interviews (randomly selected senior level policy analysts, advisors and managers from the poverty reduction strategy office), of which two were obtained.

The first interview was with external advisor Michael Mendelson, senior scholar at the Caledon Institute for Social Policy. Mendelson’s descriptions of the distinct roles of advisors in the first and second-order stages of the policymaking process led to the restructuring of the study, to separately examine each stage. The initial approach was to examine the policymaking process in general, as these stages are not clearly distinguished in the EIPM literature. As an influential actor in both areas, however, Mendelson offered insights about the unique roles and contributions of the policy actors in each stage that provided clarity to an oft-muddled area. Mendelson provided information about and connections to the first-order interviewees (n = 9), as information about this elite, high-level and unofficial group was not publicly available.

Participants

Nineteen interviews were conducted in total. However, there were 15 individual participants, as four of the individuals advised in both groups. These four participants provided separate answers to the interview questions, particular to the relevant stage of the process. Thus, 19 total interviews were obtained, with 12 second-order interviews and seven first-order interviews.
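The relationship between interviews and individual participants can be checked with a short tally. This is only an illustrative sketch using the counts reported above; the variable names are hypothetical.

```python
# Counts as reported in the Participants section.
first_order_interviews = 7    # IA-FO interviews
second_order_interviews = 12  # OA-SO interviews
advised_in_both = 4           # individuals interviewed once for each stage

# Each dual-role advisor contributes two interviews but is one person.
total_interviews = first_order_interviews + second_order_interviews
individual_participants = total_interviews - advised_in_both

print(total_interviews, individual_participants)
```

The tally reproduces the 19 interviews and 15 individual participants stated in the text.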

The basic participant profiles are listed in Table 1. Names are provided for the four participants who consented to be named (two of whom insisted on the importance of naming experts) and pseudonyms were assigned to the others.

Data collection

Interviews were semi-structured and ranged from 45–120 min in length, the majority conducted face-to-face, with three telephone interviews and one videoconference due to participants’ travel schedules. Participation was voluntary, and no compensation was provided. All interviews were audio-recorded and transcribed verbatim for use in data analysis. Two sets of interviews were conducted: with first (IA-FO) and second-order (OA-SO) advisors. The interview questions were based on a general framework for all participants, applicable to both the establishment and development stages of the OPRS. However, follow-up questions at times focused on the specific policy stage if there was a need for clarification on particular responses. Questions for the policymakers were slightly different as well, focusing primarily on external advisor recruitment and evidence use from an internal perspective.

The four interviewees who were involved in both the IA-FO and OA-SO groups were asked to separately address the questions in terms of their experiences in both stages of the process, and their responses were correspondingly separated into the respective phases for the data analysis.

Data analysis

Qualitative content analysis was utilized to analyze the interview data, chosen for its systematic, flexible approach (a combination of concept-driven and data-driven), and the reduction of data within a coding frame that addresses the research question (Schreier, 2014; Holsti, 1969). Rather than a strict adherence to this approach, however, the coding frame was developed based on emerging themes that were unaccounted for throughout the data analysis. The EIPM literature informed the coding framework, but was open to adjustments based on emerging themes from the interview data.

Using qualitative content analysis, systematic abstractions for meaning were drawn with linkages between sections of data. Double coding was conducted to validate the coding framework by repeating the categorization of data sections. Extracting sections that were directly relevant to the research question mitigated the risk of personal bias interfering with understanding the data. This data-driven approach validated the coding frame. The relative flexibility of the qualitative content analysis approach worked to align the coding frame with the data, centered on deriving contextually located meaning (Schreier, 2014).

The coding framework was developed based on elements of the qualitative content analysis that were most suitable to the study. First, separate general coding categories for the IA-FO and OA-SO interview data were established based on the interview questions and EIPM literature. As mentioned, however, the framework was open to revision based on emerging themes from in-depth reviews of the complete transcripts (N = 19). The subsumption approach was then used to generate data-driven sub-categories. This entailed reviewing the data for relevant concepts, subsuming sections into sub-categories and creating new sub-categories when necessary, until saturation was reached and new sub-categories were no longer found (Mayring, 2010).
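The subsumption step described above can be pictured as a simple loop. The sketch below is an illustration only and not the procedure used in the study: it assumes a coder has already judged which concept each transcript segment expresses, and the segment identifiers and concept labels are hypothetical.

```python
def subsume(coded_segments):
    """Place each segment under its concept's sub-category, opening a new
    sub-category the first time a concept appears."""
    subcategories = {}  # concept -> list of segment ids (insertion-ordered)
    first_seen = {}     # concept -> position at which it first appeared
    for position, (segment_id, concept) in enumerate(coded_segments):
        if concept not in subcategories:
            subcategories[concept] = []    # create a new sub-category
            first_seen[concept] = position
        subcategories[concept].append(segment_id)  # subsume the segment
    return subcategories, first_seen


# Hypothetical coder judgments: (segment id, concept).
coded_segments = [
    ("s1", "networks"),
    ("s2", "personal values"),
    ("s3", "networks"),
    ("s4", "narrative"),
    ("s5", "personal values"),
    ("s6", "networks"),
]

subcategories, first_seen = subsume(coded_segments)

# A rough saturation signal: segments reviewed since the last new sub-category.
last_new = max(first_seen.values())
segments_since_last_new = len(coded_segments) - 1 - last_new
print(list(subcategories), segments_since_last_new)
```

In practice, saturation is a judgment made by the analyst across full transcripts; the counter here only indicates how one might track when new sub-categories stop appearing.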

Prior to finalizing the main codes in the framework, a pilot phase, or trial coding, was conducted to ensure that any shortcomings would be amended as necessary. Three IA-FO transcripts and three OA-SO transcripts were randomly selected and reviewed for the pilot (N = 6; approximately one-third of all the transcripts), each representing interviewees from diverse backgrounds (bureaucrats, academics, and advocates in each group). As only minimal revisions were involved, the main analysis was undertaken after the first trial. This entailed segmentation of the transcripts into units of coding, and subsequently, assigning the units to the categories in the coding frame. Although coding was conducted using the established framework, the approach was less rigid throughout the main analysis process, as the coding framework remained open to change as appropriate.
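The pilot selection can be sketched as a random draw of three transcripts from each advisor group. This is a minimal illustration under stated assumptions: the transcript identifiers and the seed are hypothetical, and the sketch does not reproduce the additional requirement that the pilot transcripts cover bureaucrats, academics, and advocates.

```python
import random

# Hypothetical transcript identifiers; group sizes follow the reported counts.
ia_fo_transcripts = [f"IA-FO-{i}" for i in range(1, 8)]    # 7 first-order
oa_so_transcripts = [f"OA-SO-{i}" for i in range(1, 13)]   # 12 second-order

rng = random.Random(2024)  # fixed seed (assumed) so the draw is repeatable

# Draw three transcripts without replacement from each group.
pilot = rng.sample(ia_fo_transcripts, 3) + rng.sample(oa_so_transcripts, 3)

pilot_share = len(pilot) / (len(ia_fo_transcripts) + len(oa_so_transcripts))
print(pilot, f"{pilot_share:.1%} of all transcripts")
```

Six of 19 transcripts is a little under one-third, consistent with the proportion described for the pilot.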

The development of the coding framework and the subsequent analysis served to provide a strong description of the interview data, as it was driven by both concepts and data through an iterative process. The analysis was deepened through ‘‘axial coding’’ (Corbin and Strauss, 2008), as connections were drawn through relationships between themes. The entire process was completed separately for the IA-FO and OA-SO interview transcripts. Key findings from the analysis are presented in the next section, culminating in the policy recommendations section for policymakers and external evidence producers seeking social policy change.

Key findings

The case of the OPRS demonstrates that working towards effective EIPM requires understanding policy processes and the different capacities for evidence use throughout. In addition to this knowledge, the degree to which evidence can influence policy change is contingent upon policy actors’ abilities to navigate complex and shifting political contexts. This includes distinguishing between policy development stages and the area-specific opportunities and constraints for evidence use. The fluidity of evidence influence and use throughout the establishment and development process for the OPRS has important implications for evidence producing policy stakeholders.

To begin, a brief description of how the IA-FOs and OA-SOs distinguished themselves in the OPRS is provided, illuminating how evidence use is determined by the stage in the policy development process. Subsequently, the discussion of key findings is structured to illuminate how broad recommendations for increasing EIPM can work pragmatically through the concrete examples provided by the interviewees. The broad themes that emerged from the analysis are: the connection between policymaker heuristics and receptivity to evidence; the importance of networks and how to maintain them; and the fundamental role of persuasive narrative in communicating evidence.

Based on these findings, recommendations for evidence producing and using policy stakeholders are provided with a brief discussion of future research directions.

First and second-order advisors

The selection criteria for the IA-FO and OA-SO were similar, albeit in different degrees and variations, for instance in terms of political considerations, networks, and credibility. Politically, one of the roles for some of the IA-FO members was to assist with selling the OPRS to their respective constituencies and networks. In this way, the IA-FO had a more direct and active role in the political aspect of the process, whereas the diverse OA-SO representation was described as more symbolic, representative of different organization types and evidence perspectives. As discussed, the two groups have distinct network roles and characteristics, as the IA-FO connections were largely direct, informal and personal, while the OA-SO network was largely related to organizational reputation and political considerations. This is reflective of the ways in which evidence was used, as the IA-FO engaged with it through dialog and debate, influenced by their own values-based agendas. The unofficial, close-knit, informal and covert nature of the group allowed for evidence use in this way. The OA-SO networks, by contrast, were professional, based on their organizational affiliations and evidence contributions rather than personal relationships with policymakers. Thus, evidence use was contingent upon the stage of the OPRS development process, in terms of the target audience, objectives, framing and communication strategies.

Connecting policymaker heuristics and evidence receptivity

While areas such as politics, systemic issues, and knowledge mobilization are prevailing considerations in the EIPM literature, the ‘‘human’’ element is rarely included in these discussions. This is in spite of the key role it plays in steering policy directions, as psychology, character traits, moral values, heuristics, and emotional aspects of key people pervasively influence how information is processed and decisions are made. Policymakers, for instance, use evidence in particular ways to achieve their objectives, and evidence is also used to inform and shape their policy agendas (along with political and other considerations)—based on the aforementioned human elements.

The significance of heuristics and evidence use/receptivity is more relevant in the first-order policymaking stage, particularly with respect to the morally driven motivations of decision-makers, and the personal policy stories that are not widely known, yet are critical drivers of policy change. The personal convictions and values of decision-makers infiltrated every facet of the first-order stage of OPRS development. All of the IA-FO interviewees cited these as crucial, yet largely neglected aspects of the evidence-informed policymaking discourse. Not only does evidence inform and therefore shape decision-makers’ policy motivations and objectives, it is also used (framed and communicated) to further these agendas. The study’s participants emphasized that key decision-makers at the time, Mr. Dalton McGuinty (former Premier of Ontario) and Mr. Greg Sorbara (Minister of Finance in the initial planning stage), had personal convictions to address child poverty through policy. In fact, these personal convictions, perhaps more so than compelling evidence or political conditions, were a deciding factor in moving forward with the OPRS, and hence were manifested in how evidence was used to push the agenda.

People inevitably filter and use evidence through their lenses and biases, which are influenced by personal values, habits, beliefs, and emotions. This was most evident in the first-order stage of the OPRS, as the decision-makers were frequently described as having a pre-existing moral imperative to reduce poverty in Ontario. IA-FO interviewees referred to this as something that was “ingrained” or “within them.” The policy stories that are often attached to personal, private experiences, and events were said to be the underlying reason behind policymakers’ use of evidence in working towards policy change. Nevertheless, as mentioned, the factors influencing evidence utilization are not isolated variables, but rather, work conjunctively in complex and dynamic ways.

The interview data reveal that the OPRS policy intermediary actors were aware of the policy motives and objectives of their colleagues, including decision-makers. When the interviewees spoke about the “right people” being in place, they were referring to the moral incentives of key leaders to reduce poverty. For instance, Charles Pascal (special advisor to the Premier) highlighted the significance of key leaders’ values and deeply held beliefs in facilitating policy change:

[Greg Sorbara] had it in his soul to reform child welfare, as well as social welfare…he’s Finance Minister—somewhat unusual… You had somebody who really believed that we’ve gotta do something… You have [IA-FO1], who comes from a family of social activists, and he’s a change agent, and he’s passionate…

Mr. Sorbara’s deeply held beliefs superseded his expected role to “say no to everything” as head of the public treasury. As he explained,

I didn’t get into politics to figure out a way to make the rich richer and the well endowed more well endowed. My pre-disposition was that government needs to intervene in the lives of those who are struggling. That’s one of its missions.

However, carrying out this mission required political acuity to navigate the political realities:

I had decided from fairly early on that the theme of the budget in 2007 was going to be about assisting vulnerable populations. That was on my mind. And the trick was to make sure that was consistent with the Premier’s thinking, that the Cabinet was supportive of it and that the caucus would be supportive of it. And then it would be my job, after the budget, to sell it to the people.

In response to general questions about the enabling factors for the OPRS, the interviewees promptly and ardently described the human factor—in other words, the values driving the policy objectives—which was essential to every aspect of the OPRS process. It was emphasized that the evidence would not have been examined in the first place had it not been for the political will to address poverty reduction.

In response to whether the OPRS was a political move, IA-FO1 maintained that in fact, McGuinty was taking a serious political risk by pushing for big spending on poverty reduction during the threat of recession. He reiterated that McGuinty’s personal moral convictions moved him to strategize on how to accomplish political uptake for the OPRS and affected his approach to evidence use throughout the process: “It was definitely not political… [Mr. McGuinty] is a deeply moral guy.”

According to the interview data, “human” motivations and heuristics steered evidence use and ultimately acted as a critical and pervasive influence to enable the OPRS. Indeed, evidence serves the important function of informing people, who will have different interpretations of it, motivations for using it, and methods for applying it.

When it comes to policy establishment, there are numerous elements at play. As the interview data reveal, OPRS policymakers were influenced by a complex interplay of their own psychology, heuristics, and moral agendas, as well as the political and economic climate, their advisors, the evidence presented to them, and the roles and responsibilities of their particular positions. These powerful influences must be understood as working simultaneously to determine the establishment of the OPRS. The interview data show that possessing this knowledge was fundamental to the success of the policy intermediary actors in their evidence engagement strategies. While many of these factors are difficult or impossible to control, the data revealed how OPRS advisors were able to use evidence in particular ways to navigate resistance and capitalize on favorable conditions. Networks and persuasion tactics are discussed next as powerful tools for evidence use that policy intermediary actors employed to move the OPRS forward.

Network significance and maintenance

Building and maintaining a network of key policymakers and stakeholders was cited by all of the interviewees as essential to obtaining status as policy intermediary actors. Whether or not they or their organizations were well-established, high-profile experts, all of the interviewees revealed that they were proactive in their efforts to maintain regular communications with policymakers. For instance, when asked how he became involved in advising on the OPRS, OA-SO2 (an academic) responded,

That’s more me being proactive, not necessarily them. Well, there’s a relationship. They’ll contact me on some issues and then I’ll also contact them and pitch them on certain ideas and ways of doing things.

In describing how her organization became involved in informing the OPRS, OA-SO5 revealed that they target policy efforts at all levels of government, and particularly at the municipal and community levels where the policies and ground-level poverty reduction evidence interact. Multi-level participation is one way in which the organization simultaneously establishes a policy presence and a relationship with government. As she explained,

We start to go have conversations with government. So things that we are doing municipally, we sit on many municipal committees related to issues where the thinkers and the decision-makers are at. We go to budget consultations. We take a very active role in going to those sessions that the government makes available to say, “We’re here as an organization and this is what we see.”

All of the external OA-SOs described different ways in which their established policy presence in both the governmental and public spheres prompted invitations to participate as advisors to the OPRS. For OA-SO2, it was his academic work that established him as an expert in particular areas of poverty reduction, coupled with his initiatives to reach out to government and communicate different policy options. Similarly, OA-SO1’s work had established her as an expert for decades, and her public presence in news and social media contributed to her high policy currency. While interviewees felt that news and social media presence helped to initiate connections with government, more direct strategies, such as engaging in policy-related events and even cold calling policymakers for meetings, were considered to be far more effective engagement tactics.

In addition to creating and building relationships, three of the interviewees also mentioned the importance of maintaining existing relationships, particularly in light of the sensitive political nature of government-stakeholder relations. OA-SO3, for example, described his organization’s politically sensitive approach to working with government on developing policy, in the interest of retaining positive relations:

We have informal political pressure that we impose on ourselves because we don’t want to burn the bridges that we have. The ability for us to get things done is based almost entirely on our relationships with decision-makers. We can make all the noise in the world, get all the media coverage for our reports that we want, but unless people like at [the Legislative Assembly of Ontario] or at City Hall are reading what we’re doing, then it’s not really worth it.

Interviewees considered relationship maintenance with policymakers to be a foundational precursor to advocating for evidence use. As OA-SO3 explained,

You kind of build those relationships. That means that you can start to try to push other policy items that are not on their agenda now, but you’ve kind of got those relationships, so you can actually push it a little bit more.

Building on positive relationships with government to effectively move policy conversations forward in particular directions was echoed by OA-SO5. When asked whether her organization feels free to push back against government policy action, she said, “We don’t want to compromise relationships. It’s way better to try to change things from inside the system.”

The responses from OA-SO3 and OA-SO5 are representative of the external OA-SO interviewees’ discussions on strategic relationship building and maintenance around political pressures. Interviewees felt that they were significantly more influential in developing the OPRS because of their positive, collaborative work internally, with government, as opposed to advocacy from the outside. In fact, four of the external OA-SO interviewees said that relationship building and maintenance with policymakers is formally built into the policy work of their organizations. One aspect of maintaining positive relationships was providing advance notice to policymakers about upcoming publications. For instance, OA-SO3 discussed how relationship maintenance with policymakers led to opportunities for policy engagement:

A lot of my job is to actually just do the relationship stuff down at [the Legislative Assembly of Ontario]. I have a bunch of people that I just go and meet with across a whole bunch of Ministries at semi-regular intervals and we update each other and work out what we can work together on. So with a report like the one we just released, it’s more just a kind of a heads up: This is what we did. Here are some of the things that we found. Have you thought about doing—and hopefully just getting them to start thinking about it. So we’ll do research that we know will be unpopular with decision-makers, but there’s a process that we go through to make sure that they don’t read it on the front page of The Star. We do tell them ahead of time; give them advanced copies, if appropriate, all that kind of stuff, so that they can be prepared for it. We don’t have to be best friends with them all the time, but we do have to make sure that we are not putting people offside.

Part of OA-SO3’s approach was to provide policymakers with the space and time to gather a response to publications of evidence that might compromise the policy agenda. This is illustrative of how the OA-SOs generally described their approaches to maintaining positive relations with policymakers regardless of whether their evidence was in agreement with the policy agenda or not.

When asked why certain individuals or organizations that produce high-quality evidence might not be invited to advise on public policy or the OPRS, the OA-SO interviewees generally agreed that such producers are simply not equipped to engage effectively with policymakers. Interviewees generally felt that beyond high-quality evidence alone, strong political acuity, networks and/or resources were required for status as OPRS policy intermediary actors. Moreover, OA-SO2 said there is a reciprocal need to improve evidence exchange through policy dialog between external groups and government leaders. In particular, he referred to certain advocacy groups to illuminate this point:

It’s actually something that I noticed in Ontario, not just in Ontario, everywhere really, is that government would meet with advocates. The advocates would give their position and the government would sit there and nod and not say anything and then they’d walk away, and then they’d have the 400 reasons why what the advocates were saying won’t work or is wrong. So they never say it to their face and have a discussion; and part of the reason is that the advocates don’t know enough to give the government people the space to say it. To have that kind of dialog, you have to have a safe zone.

This “safe zone” was repeatedly referred to in different ways by the OA-SO interviewees, in reference to the key facilitators to meaningful policy dialog informed by evidence. When externals take on an abrasive approach (for various reasons), and when externals feel that government is not receptive to the evidence they contribute (again, for various reasons), the doors are mutually closed for productive policy engagement.

Purposeful communication

Deliberate, strategic planning for public presence as experts in poverty reduction issues was a high priority for external OA-SO interviewees. All of the interviewees agreed with the notion that there is high-quality evidence that is not used for policy, because efforts to “mobilize” it tend to be low, for various reasons (e.g., lack of capacity, skill, or incentives). Six of the external OA-SO interviewees discussed their respective organizations’ prioritization of mobilization and networking plans for evidence use as a policy engagement strategy, specifically through social media and news media coverage. Although social media was considered to be an important tool for policy engagement, it did not replace direct consultation with key policymakers as a more definitive marker for influence. OA-SO3 explained how it serves as part of an integrated plan for policy involvement:

When you are trying to influence decision-makers, you’ve always got your primary plan, which is to go and talk to them about it. I guess that’s how you really get things done; but you can build momentum through these other communication channels as well. We got good coverage in The Star; there was a good social media part that went with that, as well as going up to [the Legislative Assembly of Ontario] to talk to all the relevant people about it. It’s all part of an integrated thing where you are trying to get as much momentum behind what you’re doing as possible.

The external OA-SO interviewees’ targeted and varied approaches to informing policy (e.g., informing the public through social and news media, participating in consultations, and setting up conversations with decision-makers) together served to drive the OPRS development forward. All of the external OA-SO interviewees representing NGOs discussed how their proactive engagement was a result of an organizational objective to become active and influential participants in shaping provincial social policy generally, and specifically the OPRS.

Although most of the committee and consultation opportunities were said to be available through government invitation, based on pre-existing relationships and reputation, three of the interviewees felt that an effective way to work towards such invitations is to push evidence to policymakers for review. In spite of their organizations being well-established in the policy arena, the OA-SO interviewees said this did not make them immune to changes in the political currents.

Consequently, rather than taking for granted that they will be called upon for policy advice, they felt it was necessary to consistently and purposefully bring attention to the work of their respective organizations, to retain their status. When OA-SO6 was asked for her opinion on why certain organizations, including her own, seem to be consistently selected as policy advisors on poverty reduction issues, she responded,

I think it’s somewhat reputation, for sure. I think also paying attention to…the shifts in government and then, not always being invited, but certainly looking at who the key decision-makers are and getting recommendations in front of them.

Proactively bringing evidence to the attention of policymakers was a significant part of the organizational activities of the external OA-SOs—even those who had established relationships with government. Thus, networking with policymakers through evidence push strategies was one major way in which external OA-SO interviewees approached policy engagement.

Persuasion through narrative

Cairney (2015) explains that attention to policy problems is based on how they are framed or defined by stakeholders who, in the competition for attention, use evidence and persuasion tactics to sway policy decisions. This strategic way in which evidence is framed to push agendas applies to policymakers and external stakeholders alike, both within and between the many sub-groups and individuals in these broad categories.

According to the interviewees, political acuity was integral to effective evidence framing, which was cited as the key driver of success behind the OPRS. Evidence framing was utilized as a persuasion tool in every aspect of the policy process, in multiple ways and directions. Depending on the area and individuals involved, there were distinctive objectives and functions for its use. For instance, a neglected area in the EIPM literature is how internal policy actors use evidence within government to sway their policymaker colleagues in particular policy directions. This is briefly discussed, as it provides important insight for external stakeholders as well. It illuminates how evidence producers’ appeals to policymakers must take into consideration the different perspectives and uses of evidence within government, contingent upon factors such as internal politics and individuals’ heuristics. The interview data showed how effective policy intermediary actors understood this aspect of the policy process and used different framing approaches to speak to different audiences, even within government.

The successful inception of the OPRS was largely attributed to effective communications strategies used to sell it, essentially framing the evidence in a politically appetizing way. Charles Pascal highlighted one particularly important strategy that used evidence to frame the issue in order to draw public support for the OPRS. To help circumvent public opposition to investing in poverty reduction in the midst of an economic downturn, poverty reduction was framed as critical to achieving economic improvement. As Pascal explained,

We wanted to make the argument that it’s not that you can’t afford it; it’s that you can’t afford not to.…We funded a study by the Ontario Association of Food Banks that had partnerships with Don Drummond [noted Canadian economist and former chief economist for the Toronto Dominion Bank] and the Center for Competitiveness and Prosperity at the [University of Toronto] and we hired an economist who was actually formerly retired from the Toronto Star to write the thing and the study was called The Cost of Poverty. And we looked at what poverty cost to Ontario and Canada on an annual basis… we had a model that was validated up and down. It was totally innovative…and we came up with an astronomical price tag. Poverty costs Ontario 18 billion dollars a year—something like that. That ended up on the front page of the newspapers; it ended up on the radio. It got tons of traction and it became the talking point around poverty. I would say there was lots in the lead up to it, but I would say that that study was a game changer in terms of making an evidence-based case for why this is so important.

Here, Pascal highlights how evidence was effectively used for policy change through framing evidence around a strong economic imperative, supported by a network of influential experts and mobilized through news media connections. The interviewees spoke extensively about the purposeful involvement of particular individuals in their networks to inform and communicate the new evidence frame. This led to the paradigm shift that was necessary to gain public approval for the OPRS, making conditions amenable for decision-makers to move forward with it. To garner public buy-in from the government’s end, poverty issues were framed as matters of concern to the majority. Through changing the frame, poverty issues became important to the middle class. In IA-FO1’s words,

It’s all about the framing… can you turn the evidence into something saleable? By creating a middle class issue, we will solve the low-income issue. That’s the strategy. This doesn’t work in every field at every moment. You know, it worked in transit. Transit became saleable once we reframed it from a poverty issue to a middle-income issue…Change the frame and you create a new political space, which you will own.

Working towards political approval for the OPRS through a framing shift required long-term investment of efforts and resources by many different stakeholders. The successful establishment of the OPRS was contingent upon wide public approval and stakeholder support rather than an imposition of a policy agenda. The economic imperative narrative was coupled with a social and moral justification for the OPRS. The general consensus among the OA-FO interviewees was that the key priority area focused on children in order to optimize support and mitigate push back. Although poverty is arguably an issue of income distribution, rather than an issue that is particular to children, framing and marketing the OPRS as children-focused was cited as a political tactic to enable its establishment.

Although Premier McGuinty was decidedly in favor of the OPRS, the IA-FO interviewees admitted that he would likely not have been able to push for it had it not been for the effective, strategic evidence-based framing to diminish the political risk. As Pascal explained,

Governments are naturally risk averse. They are unrelentingly committed to not making mistakes. Where did McGuinty get the courage to put down two billion dollars against the backdrop of an eighteen billion dollar deficit? Where did that come from? Well, it came from… the storytelling. I call it evidence-based storytelling that we did in terms of the media; thus reducing the amount of courage it took for Mr. McGuinty to do it… We intentionally raised the voter’s consciousness through the evidence-based storytelling.

In light of the poor economic climate with the threat of recession, it was critical to build a narrative that would generate public buy-in for the costly OPRS. It would not have been sufficient to simply have a leader with the political will to drive it forward through a difficult economic period.

Another framing strategy to win public approval was to emphasize the contributions of exhaustive consultations with many different stakeholders, with evidence from a wide range of sources. IA-FO1 said, “The OPRS in 2008 was a negotiated settlement. It was not government releasing a strategy. We co-developed that strategy.” Establishing strategic partnerships within and between government and external stakeholders to co-develop the OPRS was fundamental to political uptake. According to IA-FO1, it was important for supporters to feel they had been active participants in collaboratively developing the OPRS and legitimizing their perspectives as valued forms of evidence.

Evidence was framed and communicated in multiple directions. Not only did evidence producers frame evidence for policymakers and public opinion, policymakers also framed evidence to garner uptake from the public as well as internally, to their colleagues. IA-FO1 illuminated this point with an example of a policy “solution” within the OPRS that was tactically framed to generate wide support:

There were a lot of people against full day kindergarten, including a lot of the civil service who wanted to invest that in childcare… I have a good sense of what’s going to work. Some of this stuff, if you get into a policy quagmire, don’t try and fight yourself out of the quicksand. Change the frame…the child benefit was changing the frame on a quagmire of social assistance and claw backs. Full-day kindergarten was changing the frame on the childcare quagmire.

As IA-FO1’s point illustrates, exercising political acuity in framing evidence was required for dealing with internal governmental staff as well as external stakeholders. “Selling policy” to internal policymakers is rarely discussed in the literature, but it is an important way in which evidence is used and framed to build support from the inside. According to the FOPAs, it was a critical piece of the policy process that was required for the OPRS to move forward.

As a policy intermediary actor, Michael Mendelson experienced the effectiveness of this approach firsthand with some of his own work on poverty reduction. He described how changing the packaging and communication strategy on the same evidence resulted in completely different outcomes for policy influence. Specifically, he included detailed and pragmatic strategies, gave it a catchier title, and partnered with someone at a high-profile think tank:

Actually, I did a few pieces on the Canada Job Grant, and it was all ignored. And then I realized that I wasn’t explaining it right and so I had to do a longer piece, and I did a longer piece with…[a consultant from a prominent public policy think tank]. That was an important paper because it got people’s attention. So, this is where I added benefit, because I was like, “We can sell this. We can sell an economic message…” So, what we needed at the time, because we were in a recession: “…it’s about kids; it’s about breaking the cycle, so it’s a wise investment of dollars, because we don’t have many… and, it’s the right thing to do.”

Mendelson’s experience with this document demonstrates the effectiveness of strategic persuasion tactics through framing and communications strategies. Although the evidence had already been published, it was ignored until it was framed in particular ways, to appeal to the political context of the time. Framing and communicating evidence in this way helped to create the necessary political conditions for policymaker receptivity to policy change and simultaneously established these stakeholders as policy intermediary actors. Using political acuity to frame and communicate evidence in different ways was a predominant theme that emerged from the SOPA interview data.

Multiple policy languages

Framing for a wide audience required using “multiple policy languages,” cited by interviewees as a critical skill for gaining policy influence. In broad terms, this entailed framing the same evidence in ways that simultaneously reflected both economic and social welfare incentives, emphasizing particular areas depending on the audience. OA-SO3 provided the following example of his organization’s approach to framing for policy uptake:

By being able to talk to [policymakers] about the cost savings that they might get by doing one particular thing, it also means that I can talk to them about why they should do it from an equity perspective, as well. So you have to be able to talk more than one language.

This approach illuminates the need for evidence producers to be proficient in multiple policy languages using flexible framing tactics for policymakers, who are swayed by different appeals. However, this skill is not only necessary when engaging with policymakers. All of the OA-SOs described how important it was to use multiple policy languages for developing networks with potential partners and supporters for their poverty reduction policy objectives. As OA-SO6 put it,

We need to think about multiple audiences. The fact that the business community is one audience; there are not-for-profit organizations… So you’ve got to really think about: “What are the different audiences?” And then, “how do you position this in a way that has resonance and that you can move it forward?”

While positioning and effective framing for wide and diverse audiences were discussed as fundamental to the work of all the interviewees, a complete shift in issue foci to align with the current policy agenda was also necessary at times.

Building a portfolio of “policy-relevant” expertise

Through aligning their work to “policy-relevant” issues, policy intermediary actors simultaneously maintained their networks with key policymakers and created a persuasive narrative around their evidence in the endeavor to push their agendas.

For instance, when the policy intermediary actors were asked how the foci of their work were determined, six of the interviewees said it was a combination of responding to issues on the government agenda, and working to move certain policy conversations forward. OA-SO3 provided an example of how his organization shifted the focus of their policy evidence production to match the public policy agenda:

Traditionally, the work at [my organization] was much more focused on things like health care and housing, which are important determinants of health, but because there was so much work going on in the province and elsewhere around income and income security, we decided that we needed to pivot our work a little bit more to match the prevailing policy directions… So, we’ve been building a little bit more of a portfolio of reports and research that actually have clearer links to income.

Simultaneously, as he mentioned, the organization worked on establishing a portfolio of evidence that demonstrated explicit connections to a policy priority issue from an angle determined by government (in this case: income and income security vs. health care and housing). The other three OA-SO interviewees from research-producing organizations also shared that their organizations work to establish a portfolio of expertise on policy issues prioritized by policymakers as they emerge. In the case of the OPRS, the overall objectives of government and external organizations aligned, in terms of reducing poverty in Ontario. However, as the approaches and foci were varied, the external OA-SOs shifted the frames of their respective organizations’ work to connect with the priority areas of the government. Nevertheless, as mentioned, the external OA-SOs did not base their work or frame evidence solely on the policy priorities of the government. Rather, they sought to integrate government policy efforts with the policy objectives of their respective organizations, primarily through re-framing and balancing responsive work with bringing evidence and attention to other issue areas.

For instance, when asked whether it would be more accurate to view his organization’s work as contributing to setting the policy agenda, or whether the policy agenda contributes to the direction of his organization’s work, OA-SO3 responded:

Both. We have goals within our organization where every year we try to get one new policy idea onto the agenda and get some traction around it and measure our success based on that—well, part of our success based on that. So we do try to set the direction for some things, but we do also respond to things as they come up…So we try to strike a balance, and one way that we try to do that is often by working with governments on issues that are of relevance to them.

While the policy intermediary actors worked closely with government to ensure their work would be relevant to them, they did not compromise on the integrity of the evidence. The common thread in OA-SOs’ policy engagement strategies was to frame and communicate the evidence in particular ways rather than changing their policy objectives or attempting to fit the evidence to governmental policy directions. Drawing on their knowledge of the policy process, they found ways to use evidence that would resonate with policymakers.

Discussion

The case of the OPRS demonstrates that when evidence is framed in particular ways, it has the potential and power to sway policy decisions—even through challenging political and economic conditions such as the threat of recession. Nevertheless, well-framed evidence cannot, by itself, determine policy. Conceptualizing how evidence is used in policymaking is complex, and cannot be considered apart from the heuristics and beliefs of policymakers, and the balance of power through influential networks.

The heuristics of key policy actors encompass numerous factors, such as personal motivations and agendas, who the evidence “sellers” are and whether they are amenable to the policy proposals being pitched, how they collaborate with other policy actors, and so on. The ways in which evidence is used are utterly dependent upon the policy objectives and, consequently, on how individuals interpret it, all within the broader political context and the associated political opportunities and constraints. Moreover, policies are inextricably linked to people’s morals and are essentially values-driven. Thus, the OPRS emerged out of a window of opportunity created by a complex interplay of evidence being used in different ways, by particular people, pervasively throughout the process.

Implications for policy stakeholders

The interviewees employed political acumen to constantly shift and re-focus the ways in which they used evidence to further their policy missions, and in so doing, simultaneously drove policy change forward with evidence and established themselves as influential policy actors. External stakeholders can more effectively bring evidence to the policy table when they understand how policy processes work and are able to navigate evidence through the political circumstances.

Furthermore, the human element of decision-making is a powerful aspect of the policy process that determines the course of evidence use. As the OPRS case shows, personal values, moral imperatives, and ingrained beliefs require particular attention in future studies of evidence-informed policymaking. According to Cairney et al. (2016), new evidence of a policy solution necessitates successful persuasion, preceded by a shift in policy prioritization (that is, in large part, steered by these moral considerations). The authors explain that some forms of evidence can be utilized to encourage a shift in policy attention that is already occurring as a result of social or economic crises. The OPRS case lends support to this perspective, illuminating the shortcomings of the predominant literature, which tends to focus on a singular point of decision-making in the policy process rather than the broader context. A long-term, macro perspective of the process will help evidence producers advance EIPM through more strategic ways of approaching participation.

Knowledge about the different policy development stages (first- and second-order) can also help external stakeholders make effective connections. For instance, given the FOPAs’ proximity to decision-makers, powerful influence on shaping policy, and relative accessibility, connecting with IA-FOs may be a more effective alternative to attempting to reach the decision-makers directly.

In short, in order to be effective participants in EIPM, external stakeholders must recognize the complex dynamics between the multitude of micro and macro factors at play. Not only must they be able to navigate through these elements, they must also use them to their advantage in their policy engagement strategies.

Conclusion

This study focused on examining the contextual conditions and strategic approaches that were favorable or limiting to evidence-informed policymaking in the OPRS. Findings contribute insight into why and how particular stakeholders were able to engage in informing the OPRS, and thus how evidence was used in different stages and areas in the process.

Evidence use had an important role in enabling the right conditions for an OPRS that was deliberately and explicitly evidence informed. For instance, evidence was framed and communicated by external stakeholders in particular ways to sway policymakers by appealing to their values, emotions, and political agendas. The policymakers, in turn, also framed and communicated evidence to justify the OPRS and garner political uptake, both internally and externally. This case demonstrates how evidence producers can proactively work to create a window of opportunity for evidence-informed policy change rather than awaiting its opening.

Future research

A number of questions are raised in light of the findings and should be considered for future research. For instance, a more in-depth investigation into policymakers’ uses of evidence internally, within government, to counteract dissenting views on policy change would add important insight into the policy process. This insight would help stakeholders better understand how to frame evidence in a way that is politically appetizing, to appeal to a wide audience of policymakers.

It would also be worthwhile to explore how the trend towards evidence-informed policy has changed organizational and policymaking norms, and as a result, how this has affected social policy and community organizations’ work with their target populations. This would add important insight into the ways in which vulnerable populations might be inadvertently impacted by the evidence-informed policy movement through shifting organizational priorities. It would contribute to critical discussions about how evidence is defined and valued by diverse policy stakeholders and how people living in poverty are actually impacted by normative EIPM practices.

Data availability

The datasets generated during and/or analyzed during the current study are not publicly available due to the potential for individual privacy to be compromised, but are available from the corresponding author on reasonable request.

References

Belkhodja O, Landry R (2007) The Triple-Helix collaboration: Why do researchers collaborate with industry and the government? What are the factors that influence the perceived barriers? Scientometrics 70(2):301–332

Bogenschneider K, Corbett TJ (2011) Evidence-based policymaking: Insights from policy-minded researchers and research-minded policymakers. Routledge, New York, NY

Cairney P (2015) How can policy theory have an impact on policy making? Teaching Public Administration 33(1):22–39

Cairney P (2016) The Politics of Evidence‐Based Policymaking. Palgrave Pivot, London

Cairney P, Oliver K, Wellstead A (2016) To bridge the divide between evidence and policy: Reduce ambiguity as much as uncertainty. Public Administration Review 76(3):399–402

Campaign 2000 (2013) Strengthening families for Ontario’s future: 2012 Report Card on Child Poverty. Family Service Toronto, Ontario

Caplan N (1979) The two-communities theory and knowledge utilization. Am Behav Sci 22(3):459–470

Cappe M, Fortin P, Mendelson M, Richards J (2010) Stand up for good government, MPs. Caledon Commentary, August. Caledon Institute of Social Policy, Ottawa

Corbin J, Strauss A (2008) Basics of Qualitative Research. Sage Publications, Los Angeles

Davies P (2012) The State of Evidence-Based Policy Evaluation and its Role in Policy Formation. National Institute Economic Review 219(1):R41–R52

Fafard P (2012) Public Health Understandings of Policy and Power: Lessons from INSITE. Journal of Urban Health 89(6):905–914

Gabbay J, le May A, Jefferson H, Webb D, Lovelock R, Powell J, Lathlean J (2003) A case study of knowledge management in multiagency consumer-informed communities of practice: Implications for evidence-based policy development in health and social services. Health 7(3):283–310

Gilson L, Raphaely N (2008) The terrain of health policy analysis in low and middle income countries: a review of published literature 1994–2007. Health Policy Plan 23(5):294–307

Hennink M, Stephenson R (2005) Using research to inform health policy: barriers and strategies in developing countries. J Health Commun 10(2):163–180

Holsti OR (1969) Content analysis for the social sciences and humanities. Addison-Wesley, Reading, MA

Huberman M (1994) Research utilization: The state of the art. Knowl Policy 7(4):13–33

Hutchinson JR (1995) A multimethod analysis of knowledge use in social policy research use in decisions affecting the welfare of children. Sci Commun 17(1):90–106

Jenkins-Smith H, Sabatier PA (1993) The Study of the Public Policy Process. In: Sabatier PA, Jenkins-Smith H (eds) Policy change and learning: An advocacy coalition approach. Westview Press, Boulder, CO, p 1–9

Jones B, Baumgartner FR (2012) From there to here: Punctuated equilibrium to the general punctuation thesis to a theory of government information processing. Policy Stud J 40(1):1–20

Kingdon JW (1984) Agendas, Alternatives, and Public Policies. Little, Brown, Boston

Laforest R (2013) Fighting poverty provincial style. In: Young SP (ed) Evidence-based policy-making in Canada: a multidisciplinary look at how evidence and knowledge shape Canadian public policy. Oxford University Press, Don Mills, pp 150–164

Lavis JN (2006) Research, public policymaking, and knowledge‐translation processes: Canadian efforts to build bridges. J Contin Educ Health Prof 26(1):37–45

Lindblom CE, Cohen DK (1979) Usable knowledge: Social science and social problem solving. vol. 21. Yale University Press, New Haven, CT

Mayring P (2010) Qualitative Inhaltsanalyse. In: Mey G, Mruck K (eds) Handbuch Qualitative Forschung in der Psychologie. VS Verlag für Sozialwissenschaften

Nelson SR, Leffler JC, Hansen BA (2009) Toward a research agenda for understanding and improving the use of research evidence. Northwest Regional Educational Laboratory (NWREL), Portland, OR. http://www.nwrel.org/researchuse/report.pdf

Nutley SM, Powell AE, Davies HTO (2013) What counts as good evidence. Alliance for Useful Evidence, London

OECD (2005) Combating poverty and social exclusion through work. Policy Brief. OECD, Paris

Oliver TR (2006) The politics of public health policy. Annu Rev Public Health 27:195–233

Oliver K, Lorenc T, Innvær S (2014) New directions in evidence-based policy research: a critical analysis of the literature. Health Res Policy Syst 12(1):34

Ontario Government (2008) Breaking the cycle: Ontario’s Poverty Reduction Strategy. http://www.children.gov.on.ca/htdocs/English/documents/breakingthecycle/Poverty_Report_EN.pdf

Ontario Government (2015) Poverty Reduction Strategy 2015 annual report. https://www.ontario.ca/page/poverty-reduction-strategy-2015-annual-report

Parkhurst J (2017) The politics of evidence: From evidence-based policy to the good governance of evidence. Routledge, New York, NY

People for Education (2013) Poverty and inequality. People for Education, Toronto. http://www.peopleforeducation.ca/wp-content/uploads/2013/07/poverty-and-inequality-2013.pdf

PISA O (2010) Results: Overcoming social background: equity in learning opportunities and outcomes. vol II. OECD Publishing, Paris

Schreier M (2014) Qualitative content analysis. In: Flick U (ed) The Sage handbook of qualitative data analysis. Sage, London, pp 170–183

Tseng V (2012) The uses of research in policy and practice. Social policy report. vol. 26, No. 2. Society for Research on Child Development, Ann Arbor, MI

Weible CM, Heikkila T, Sabatier PA (2012) Understanding and influencing the policy process. Policy Sci 45(1):1–21

Weiss CH (1977) Research for policy’s sake: The enlightenment function of social research. Policy Anal 3(4):531–545

Weiss CH (1979) The many meanings of research utilization. Public Adm Rev 39(5):426–431

Weiss CH, Bucuvalas MJ (1980a) Social science research and decision-making. Columbia University Press, New York, NY

Weiss CH, Bucuvalas MJ (1980b) Truth tests and utility tests: decision-makers’ frames of reference for social science research. Am Sociol Rev 45(2):302–313

Ethics declarations

Competing interests

The author declares no competing interests.

Additional information

Publisher's note: Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Sohn, J. Navigating the politics of evidence-informed policymaking: strategies of influential policy actors in Ontario. Palgrave Commun 4, 49 (2018). https://doi.org/10.1057/s41599-018-0098-4

DOI: https://doi.org/10.1057/s41599-018-0098-4
