Abstract

Numbers and letters are the fundamental building blocks of our everyday social interactions. Previous studies have focused on determining the cortical pathways shaped by numeracy and literacy in the human brain, partially supporting the hypothesis of distinct perceptual neural circuits involved in the visual processing of the two categories. In this study, we aim to investigate the temporal dynamics of number and letter processing. We present magnetoencephalography (MEG) data from two experiments (N = 25 each). In the first experiment, single numbers, letters, and their respective false fonts (false numbers and false letters) were presented, whereas in the second experiment, numbers, letters, and their respective false fonts were presented as strings of characters. We used multivariate pattern analysis techniques (time-resolved decoding and temporal generalization), testing the strong hypothesis that the neural correlates supporting letter and number processing can be logistically classified as categorically separate. Our results show a very early dissociation (~ 100 ms) between numbers and letters when each is compared to false fonts. Number processing can be dissociated with similar accuracy when presented as isolated items or strings of characters, while letter processing shows dissociable classification accuracy for single items compared to strings. These findings reinforce the evidence indicating that early visual processing can be differently shaped by the experience with numbers and letters; this dissociation is stronger for strings compared to single items, thus showing that combinatorial mechanisms for numbers and letters could be categorically distinguished and influence early visual processing.

Introduction

Numbers and letters are fundamental building blocks of our everyday social interactions. Humans constantly mix alphanumeric codes in their everyday language experience. Such skills depend on the acquisition of literacy and numeracy, which are fundamental steps in standard education. They are formally acquired in the first years of primary school, so experience with alphanumeric codes develops in a similar developmental window. While literacy acquisition shapes the visual human brain circuitry1,2,3, evidence on numeracy modulating a functionally independent brain substrate is still debated. In the present study, we evaluate if the human brain responses to visually presented alphabetic and numerical symbols can be categorically classified in time during early visual processing, potentially providing strong evidence for the hypothesis that literacy and numeracy recruit functionally distinct neuronal processes.

A large number of studies have focused on defining the brain regions and the neural biomarkers related to number and letter processing. When focusing on the spatial dimension, Shum et al.4 isolated an inferior temporal region in the right hemisphere that responded preferentially to numbers using iEEG (intracranial electroencephalography). In an fMRI study, Park et al. reported opposite hemispheric recruitment for numbers and letters5: strings of numbers recruited mainly the right lateral occipital regions, while strings of consonants recruited more the left mid-fusiform and the inferior temporal regions. Critically for our study, this spatial dissociation has been related to early visual processing, by taking advantage of temporally-detailed techniques. In a follow-up ERP study, Park et al.6 observed a division of labor for the two hemispheres concerning the location of the N100 component (mainly between 140 and 170 ms), more evident in left-lateralized electrodes for letters and more right-lateralized for numbers. Although it is not possible to determine an unambiguous brain origin for these ERP effects, this is indirect evidence for the recruitment of different brain sources for the two types of visual stimuli (because of the biophysical fact that different potential field distributions on the scalp indicate different spatial configurations of intracranial current sources; McCarthy and Wood7). Strings of letters also elicited larger P200 responses “suggesting that the visual cortex is tuned to selectively process combinations of letters, but not numbers, further along in the visual processing stream”. In this last study, the authors also explored the neural response to single stimuli compared to strings of stimuli, and no reliable difference was observed for single numbers compared to single letters in the ERP sensor-level responses.
Importantly, such strong experiential influence of reading and mathematics on the human visual system would mature during primary school and would be later evident in adolescence and adulthood8.

A different line of studies, however, indicates a larger overlap in the visual mechanisms at work for numbers and letters. On this view, numbers would recruit populations of neurons bilaterally, providing evidence against a separate right-hemispheric specialization for numbers. Along these lines, Grotheer et al.9 observed a bilateral preferential response for numbers in the inferior temporal gyrus (ITG) when compared with letters, false numbers, or everyday objects, in an fMRI study. More critically for our study, this bilateral hemispheric recruitment was also related to early visual processing. In a MEG study, Carreiras et al.10 reported source-level evidence of bilateral recruitment for strings of numbers at around 150 ms compared to other alphabetic string conditions (consonant strings, words, and pseudowords), which mainly showed activation in the left hemisphere. These studies suggest that rather than one specific hemisphere or brain area, numeracy and literacy are complex processes that recruit an extended set of highly overlapping brain regions, whose lateralization would not be so clear-cut. In other words, similar visual processing neural mechanisms (especially in the left hemisphere) could be recruited for numbers or letters, and the related neural activity could not be easily dissociated. Consistently, Aurtenetxe et al.11 did not report reliable differences in a MEG experiment (both at the sensor and at the source level) between visual perception of numbers and letters. When presented in isolation, single numbers elicited larger evoked responses compared to single letters at ~ 150 ms. This response was, however, evident in the same occipital and left temporal brain areas, potentially reflecting the recruitment of the same neural substrates.
To shed more light on this dissociation, here we focused on a multivariate pattern analysis (MVPA) temporal classification approach, testing the strong hypothesis that the neural mechanisms supporting letter and number visual processing can be logistically classified as categorically separate early in time (i.e., before ~ 200 ms). Since the recruitment of distinct hemispheric neural resources has not been disambiguated at the spatial level, we here focus on trial-by-trial variability of the neural responses across time and evaluate whether such variability can successfully account for categorically distinct neural processes for letters and numbers.

MVPA techniques, such as time-resolved decoding12,13,14,15 and temporal generalization16, focus on how cognitive processes unfold over time, providing evidence on the temporal evolution of the processing of specific types of stimuli and on how well this processing can be classified relative to other types. The neural classification of the processing dynamics shaped by numeracy and literacy has not yet been explored with these methods. Unlike classical evoked activity analysis, which examines brain activity averaged per condition and participant, MVPA techniques explore the fine-grained trial-by-trial variability and evaluate how accurately this variability in the neural responses to different stimuli can be classified into separate categories. If number and letter processing recruit similar neural mechanisms, we would expect chance-level classification accuracy in our study; on the other hand, if the neural dynamics for these two types of stimuli are different, their classification accuracy should be significantly higher than chance. Time-resolved decoding could provide evidence on the time intervals in which number and letter processing are more dissociable; temporal generalization will provide evidence on how stimulus-specific neural activity could generalize across time intervals.

The present study addressed two experimental questions. First, can the neural correlates of the visual perception of a specific class of stimuli (either numbers or letters) be successfully classified as compared to (i) visually similar but not meaningful stimuli (false fonts) and (ii) the other stimulus category (i.e., letters and numbers, respectively)? Second, is this classification modulated by whether such stimuli were presented in isolation or in a string (see refs. 4,6)?

We used sensor-level MEG to compare the neural representations for numbers, letters, and the relative false fonts (i.e., for both numbers and letters). Participants were presented with visual stimuli (either single characters in Experiment 1 or strings of characters in Experiment 2) and required to perform a low-level visual task (pressing a button whenever a dot appeared on the screen). We selected a low-level visual task to avoid participants getting involved in highly specific cognitive operations that could be qualitatively different for numbers and letters.

Methods

Participants

The univariate evoked analysis of the data used in this article has been previously published in Ref.11. However, the multivariate analysis presented in this paper is unpublished and novel. Twenty-five participants (mean age: 24 years \(\pm\, 3\), all right-handed) were considered in the analysis (three rejected). The participants were recruited via the BCBL participant recruitment system (web Participa). All the participants had normal or corrected-to-normal vision and were free from any neurological or psychological disorder. The ethical committee and the scientific committee of the Basque Center on Cognition, Brain and Language (BCBL) approved the experiment and methods (following the principles of the Declaration of Helsinki). All participants gave their written informed consent following guidelines approved by the Research Committees of the BCBL.

Experimental procedure

All the participants took part in two experiments performing a visual detection task (i.e., white dot detection). Both experiments were presented using Psychtoolbox17 and the experimental paradigm is illustrated in Fig. 1. In the first experiment (Fig. 1a,c), participants were presented with Numbers (1–9), nine capital Letters (i.e., A, C, D, F, L, P, S, U, and V), and their corresponding false fonts (i.e., false-Number font and false-Letter font). The false fonts were created using a process similar to Shum et al.4, keeping angles, curves, and the number of pixels as similar as possible. False fonts were unrecognizable. The stimuli were presented in the center of the screen (placed ~ 1 m away from the participant’s eyes) in a white-colored Arial font on a gray background. Each of the nine stimuli in a condition (i.e., Numbers, Letters, false-Number font, false-Letter font) was repeated 22 times, resulting in a total of 198 trials per condition. Each trial started with a 500 ms baseline period, followed by a stimulus presented for 500 ms. A variable interstimulus interval (ISI, 1000–1500 ms) was introduced between trials. The participants were asked to blink during the interstimulus interval to minimize eye-blink artifacts. To keep the participants focused and attentive to the experimental manipulations, catch trials (10% of total trials) consisting of a white dot in the center of the screen were introduced. Participants were asked to press a button whenever the dot appeared. These catch trials were not included in any subsequent analyses.
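As an illustration of the trial counts described above, the following minimal Python sketch builds a randomized trial list for Experiment 1. It is not the Psychtoolbox script used in the study: the false-font labels, the function name `build_trials`, and the reading of "10% of total trials" as 10% of stimulus plus catch trials are assumptions made for the example.

```python
import random

# Stimulus sets from the text; false-font labels are hypothetical placeholders.
CONDITIONS = {
    "Numbers": [str(d) for d in range(1, 10)],
    "Letters": list("ACDFLPSUV"),
    "FalseNumbers": [f"fn{i}" for i in range(1, 10)],
    "FalseLetters": [f"fl{i}" for i in range(1, 10)],
}
N_REPETITIONS = 22      # repetitions per individual stimulus
CATCH_FRACTION = 0.10   # assumed: catch trials / (stimulus + catch trials)


def build_trials(seed=0):
    """Return a shuffled list of trial dictionaries for one session."""
    rng = random.Random(seed)
    trials = [
        {"condition": cond, "stimulus": stim, "catch": False}
        for cond, stims in CONDITIONS.items()
        for stim in stims
        for _ in range(N_REPETITIONS)
    ]
    # Solve n_catch / (n_stim + n_catch) = CATCH_FRACTION for n_catch.
    n_catch = round(len(trials) * CATCH_FRACTION / (1 - CATCH_FRACTION))
    trials += [
        {"condition": "Catch", "stimulus": "dot", "catch": True}
        for _ in range(n_catch)
    ]
    rng.shuffle(trials)
    for trial in trials:
        # Variable interstimulus interval between 1000 and 1500 ms.
        trial["isi_s"] = rng.uniform(1.0, 1.5)
    return trials


trials = build_trials()
per_condition = sum(t["condition"] == "Numbers" for t in trials)
print(len(trials), per_condition)
```

Under these assumptions, nine stimuli repeated 22 times give 198 trials per condition, and the four conditions plus catch trials give 880 trials in total.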

In the second experiment (Fig. 1b,d), stimuli were strings created from combinations of 5–6 Letters or, separately, from Numbers (1–9). Phonotactically legal pseudowords were used instead of consonant strings to reduce any effect of context, vocabulary, or prior probabilities in the experiment. The pseudowords were the following: BOIRA, DOCHAS, ASIMA, MODRO, DOBECA, TEPOR, PLETAR, TOLAS, EGALO.

MEG data recordings

MEG data were acquired in a magnetically shielded room (to avoid environmental magnetic interference) using the whole-head MEG system (MEGIN-Elekta Neuromag, Finland) installed at BCBL (https://www.bcbl.eu/en/infrastructure-equipment/meg). The MEG system consists of 102 sensor triplets arranged in a helmet configuration; each triplet comprises one magnetometer and two planar gradiometers, for a total of 102 magnetometers and 204 gradiometers. The head position and movement were continuously monitored with four head position indicator (HPI) coils during the experiment. The location of the HPI coils was defined relative to three fiducial points (nasion, left preauricular (LPA) and right preauricular (RPA) points) using a 3D head digitizer (Fastrak Polhemus, Colchester, VA, USA). This process is critical for correcting movement artifacts (movement compensation) during data acquisition. The MEG data were recorded continuously with a bandpass filter (0.01–330 Hz) and a sampling rate of 1 kHz. Eye movements were monitored with pairs of electrodes placed above and below the eye (vertical electrooculogram, VEOG) and on the external canthus of both eyes (horizontal electrooculogram, HEOG).

Similarly, an electrocardiogram (EKG) was recorded using two electrodes (in bipolar montage) placed on the right side of the abdomen and below the left clavicle of the participant. The continuous MEG data were preprocessed offline to suppress external electromagnetic interference using the temporal Signal-Space-Separation (tSSS)18 method implemented in Maxfilter software (MaxFilter 2.1). Head movements were also compensated, and bad channels were repaired, within the MaxFilter algorithms. All the analyses were carried out in Matlab version 2014B.

Data preprocessing

MEG data were further preprocessed using the Fieldtrip toolbox for EEG/MEG analysis19. The data were corrected for SQUID jumps and muscle artifacts using automatic threshold-based methods implemented in the Fieldtrip toolbox. Independent component analysis (ICA) was used to remove the eye-blink (EOG) and electrocardiogram (EKG) artifacts from the MEG data (using the ‘runica’ algorithm implemented in Fieldtrip). On average, two components were removed for every participant; these components were visually inspected before rejection. The data were then bandpass filtered in the range of 1–35 Hz, demeaned, and segmented into epochs ranging from 100 ms before to 500 ms after the presentation of the stimuli. MEG data were then downsampled to 200 Hz. The data were baseline corrected using the 100 ms preceding stimulus onset.
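The epoching, downsampling, and baseline-correction steps can be sketched in a few lines of NumPy. This is an illustrative sketch only, not the Fieldtrip pipeline used in the study: the function name `epoch_and_baseline` is hypothetical, the input is assumed to be already filtered and artifact-corrected, and plain decimation stands in for a proper resampling routine (tolerable here only because the data are assumed to be low-passed at 35 Hz, well below the new Nyquist frequency of 100 Hz).

```python
import numpy as np

FS_RAW = 1000                  # Hz, acquisition sampling rate
FS_TARGET = 200                # Hz, sampling rate after downsampling
T_MIN, T_MAX = -0.100, 0.500   # epoch limits relative to stimulus onset (s)


def epoch_and_baseline(data, onsets, fs=FS_RAW, fs_target=FS_TARGET):
    """Cut epochs, downsample, and subtract the pre-stimulus mean.

    data   : (n_channels, n_samples) continuous, already filtered recording
    onsets : iterable of stimulus-onset sample indices
    returns: epochs (n_trials, n_channels, n_times) and the time axis in s
    """
    pre = int(round(-T_MIN * fs))    # samples before onset
    post = int(round(T_MAX * fs))    # samples after onset
    epochs = np.stack([data[:, o - pre:o + post] for o in onsets])
    step = fs // fs_target
    epochs = epochs[:, :, ::step]    # plain decimation (see assumption above)
    times = (np.arange(epochs.shape[-1]) * step - pre) / fs
    # Baseline correction: remove the mean of the pre-stimulus samples.
    baseline = epochs[:, :, times < 0].mean(axis=-1, keepdims=True)
    return epochs - baseline, times


# Synthetic example: 4 channels, 5 s of noise with an offset, 3 onsets.
rng = np.random.default_rng(0)
data = rng.standard_normal((4, 5000)) + 3.0
epochs, times = epoch_and_baseline(data, [500, 1500, 2500])
print(epochs.shape, times[0], times[-1])
```

With a 600 ms epoch at 1 kHz and a decimation factor of 5, each epoch contains 120 time points, which is the temporal resolution at which the classifiers described next would operate.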

Time-resolved decoding

Time-resolved decoding (also known as temporal decoding) was used to discriminate the neural responses to Numbers, Letters, and their false fonts. Three decoding models were generated in both experiments: (a) Numbers versus False-Number fonts; (b) Letters versus False-Letter fonts; (c) Numbers versus Letters. The data were fed to the classifier separately for each model, and decoding was performed with a linear discriminant analysis (LDA) classifier implemented in the MVPA Light toolbox20. In cognitive neuroscience, a range of different classifiers, such as LDA, logistic regression, and linear support vector machines (SVM), have been extensively used for MVPA analyses. Despite their different formulations, these classifiers belong to a common statistical framework; in practice, they make distinct assumptions about the data but often lead to very similar results21,22,23. The classification was performed separately at each time point in both experiments. We used k-fold cross-validation (k = 5) to limit the influence of overfitting on the performance estimates and used the area under the ROC curve (AUC) to evaluate classification performance.
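To make the procedure concrete, the following NumPy sketch trains a two-class LDA at each time point with fivefold cross-validation and scores it with the AUC. It is a simplified stand-in for the MVPA Light implementation, not a reproduction of it: the function names, the fixed shrinkage value of 0.1, the stratified fold construction, and the synthetic data are all assumptions made for the example.

```python
import numpy as np


def fit_lda(X, y, shrinkage=0.1):
    """Two-class LDA on X (n_trials, n_features); returns weights and bias."""
    X0, X1 = X[y == 0], X[y == 1]
    m0, m1 = X0.mean(axis=0), X1.mean(axis=0)
    centered = np.vstack([X0 - m0, X1 - m1])
    cov = centered.T @ centered / (len(centered) - 2)   # pooled covariance
    # Shrink toward a scaled identity so the matrix stays invertible.
    target = np.trace(cov) / cov.shape[0] * np.eye(cov.shape[0])
    cov = (1 - shrinkage) * cov + shrinkage * target
    w = np.linalg.solve(cov, m1 - m0)
    b = -w @ (m0 + m1) / 2
    return w, b


def auc(scores, y):
    """Area under the ROC curve via pairwise comparisons (ties count half)."""
    diff = scores[y == 1][:, None] - scores[y == 0][None, :]
    return ((diff > 0).sum() + 0.5 * (diff == 0).sum()) / diff.size


def stratified_folds(y, k, rng):
    """Split trial indices into k folds with both classes in every fold."""
    per_class = [np.array_split(rng.permutation(np.flatnonzero(y == c)), k)
                 for c in (0, 1)]
    return [np.concatenate([per_class[0][i], per_class[1][i]])
            for i in range(k)]


def time_resolved_decoding(epochs, y, k=5, seed=0):
    """epochs: (n_trials, n_channels, n_times); y: 0/1 labels.

    Returns the cross-validated AUC at each time point.
    """
    rng = np.random.default_rng(seed)
    folds = stratified_folds(y, k, rng)
    n_times = epochs.shape[-1]
    scores = np.zeros((k, n_times))
    for i, test_idx in enumerate(folds):
        train_idx = np.concatenate([f for j, f in enumerate(folds) if j != i])
        for t in range(n_times):
            X_t = epochs[:, :, t]            # sensor pattern at this time point
            w, b = fit_lda(X_t[train_idx], y[train_idx])
            scores[i, t] = auc(X_t[test_idx] @ w + b, y[test_idx])
    return scores.mean(axis=0)


# Synthetic example: the two classes differ only from time index 10 onward.
rng = np.random.default_rng(1)
y = np.repeat([0, 1], 40)
epochs = rng.standard_normal((80, 10, 30))
epochs[y == 1, :, 10:] += 1.0
auc_curve = time_resolved_decoding(epochs, y)
print(auc_curve.round(2))
```

In this toy example the AUC hovers around 0.5 before the simulated effect and rises sharply once the classes differ, which is the pattern the decoding curves in the Results are meant to reveal.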

We also used an ‘undersampling’ approach to balance the classes before feeding the data into the classifier. This model learning process was repeated twenty (20) times for each subject to yield a stable decoding pattern. To evaluate the statistical significance of decoding across time, one-tailed cluster-corrected sign permutation tests13,14,24,25 were applied to the AUC values obtained from the classifier, with a cluster-defining threshold (i.e., alpha) of p < 0.05, a cluster-alpha threshold of p < 0.05, and 50,000 permutations, taking 0.50 as the chance level. To evaluate the decoding differences across experiments, the difference in AUC for each condition (Experiment 2–Experiment 1) was computed. One-tailed cluster-corrected sign permutation tests were then applied to these differential AUC values, with a cluster-defining threshold (i.e., alpha) of p < 0.05, a cluster-alpha threshold of p < 0.05, and 50,000 permutations, taking 0.00 as the chance-level difference. The graphical representation of the data analysis pipeline has been included as Supplementary Fig. 1. Our statistical strategy is to first compare numbers and letters independently with the relative false font condition and then compare directly numbers vs. letters.
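The two steps described above, class balancing and the cluster-corrected sign permutation test, can be sketched as follows. This is a hedged illustration rather than the code used in the study: the function names are hypothetical, the cluster statistic is assumed to be the sum of t-values within a cluster, the cluster-defining threshold is expressed as the one-tailed critical t for 24 degrees of freedom (about 1.711 for p < 0.05 with N = 25), and only 1000 permutations are drawn to keep the example fast.

```python
import numpy as np


def undersample(y, rng):
    """Return trial indices with both classes cut to the smaller class size."""
    idx0, idx1 = np.flatnonzero(y == 0), np.flatnonzero(y == 1)
    n = min(len(idx0), len(idx1))
    keep = np.concatenate([rng.choice(idx0, n, replace=False),
                           rng.choice(idx1, n, replace=False)])
    return np.sort(keep)


def cluster_masses(tvals, threshold):
    """Sum t-values within each run of consecutive supra-threshold samples."""
    masses, spans, start = [], [], None
    for i, above in enumerate(np.append(tvals > threshold, False)):
        if above and start is None:
            start = i
        elif not above and start is not None:
            masses.append(tvals[start:i].sum())
            spans.append((start, i))       # end index is exclusive
            start = None
    return masses, spans


def sign_permutation_cluster_test(auc, chance=0.5, t_threshold=1.711,
                                  n_perm=1000, alpha=0.05, seed=0):
    """One-tailed test of AUC > chance; auc has shape (n_subjects, n_times).

    Returns a list of ((start, end), p_value) for significant clusters.
    """
    rng = np.random.default_rng(seed)
    x = auc - chance
    n = x.shape[0]

    def tstat(d):
        return d.mean(axis=0) / (d.std(axis=0, ddof=1) / np.sqrt(n))

    observed, spans = cluster_masses(tstat(x), t_threshold)
    null = np.zeros(n_perm)
    for p in range(n_perm):
        # Randomly flip the sign of each subject's whole time course.
        signs = rng.choice([-1.0, 1.0], size=(n, 1))
        masses, _ = cluster_masses(tstat(x * signs), t_threshold)
        null[p] = max(masses, default=0.0)
    pvals = [(1 + (null >= m).sum()) / (1 + n_perm) for m in observed]
    return [(s, p) for s, p in zip(spans, pvals) if p < alpha]


# Synthetic group data: 25 subjects, above-chance decoding at samples 15-29.
rng = np.random.default_rng(2)
group_auc = 0.5 + 0.05 * rng.standard_normal((25, 40))
group_auc[:, 15:30] += 0.08
print(sign_permutation_cluster_test(group_auc))
```

The same function could in principle be applied to the across-experiment contrast by passing the AUC differences with `chance=0.0`.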

Temporal generalization

Time-resolved decoding helps to understand how cognitive processes unfold over time; however, this method does not inform about the temporal organization of different stages of information processing in the human brain. To do so, the temporal generalization method is recommended, which measures the ability of a classifier to generalize across time16. This method provides a way to understand how mental representations are manipulated and transformed in a given cognitive process. In our experiment, the same pre-processing steps (including down-sampling to 200 Hz) and the same linear discriminant analysis (LDA) classifier with fivefold cross-validation were used, as explained in the previous section. Here a classifier is trained on each time point in the data and then tested over all the available time points, separately for each experimental contrast. The classifier output results in a square matrix per block per participant, where each point reflects the generalization strength of the classifier trained at a given time point and tested at another one. To evaluate the presence of a group-level effect, a cluster-based permutation test was used with a cluster-defining threshold (i.e., alpha) of p < 0.05, a cluster-alpha threshold of p < 0.05, and 10,000 permutations. Our statistical strategy is to first compare numbers and letters independently with the relative false font condition and then compare directly numbers vs. letters.
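The train-at-one-time, test-at-all-times logic can be illustrated with the NumPy sketch below. As with the earlier decoding example, this is a simplified stand-in and not the MVPA Light implementation: the function names, the shrinkage value, the fold construction, and the synthetic data are assumptions made for illustration.

```python
import numpy as np


def fit_lda(X, y, shrinkage=0.1):
    """Two-class LDA with a shrinkage-regularized pooled covariance."""
    X0, X1 = X[y == 0], X[y == 1]
    m0, m1 = X0.mean(axis=0), X1.mean(axis=0)
    centered = np.vstack([X0 - m0, X1 - m1])
    cov = centered.T @ centered / (len(centered) - 2)
    target = np.trace(cov) / cov.shape[0] * np.eye(cov.shape[0])
    cov = (1 - shrinkage) * cov + shrinkage * target
    w = np.linalg.solve(cov, m1 - m0)
    return w, -w @ (m0 + m1) / 2


def auc(scores, y):
    """Area under the ROC curve via pairwise comparisons (ties count half)."""
    diff = scores[y == 1][:, None] - scores[y == 0][None, :]
    return ((diff > 0).sum() + 0.5 * (diff == 0).sum()) / diff.size


def temporal_generalization(epochs, y, k=5, seed=0):
    """Return an (n_times, n_times) AUC matrix: rows = training time,
    columns = testing time. epochs: (n_trials, n_channels, n_times)."""
    rng = np.random.default_rng(seed)
    per_class = [np.array_split(rng.permutation(np.flatnonzero(y == c)), k)
                 for c in (0, 1)]
    folds = [np.concatenate([per_class[0][i], per_class[1][i]])
             for i in range(k)]
    n_times = epochs.shape[-1]
    tg = np.zeros((n_times, n_times))
    for i, test_idx in enumerate(folds):
        train_idx = np.concatenate([f for j, f in enumerate(folds) if j != i])
        X_train, X_test = epochs[train_idx], epochs[test_idx]
        for t_train in range(n_times):
            w, b = fit_lda(X_train[:, :, t_train], y[train_idx])
            # Apply the classifier from t_train to held-out data at all times.
            scores = np.einsum("nct,c->nt", X_test, w) + b
            for t_test in range(n_times):
                tg[t_train, t_test] += auc(scores[:, t_test], y[test_idx]) / k
    return tg


# Synthetic example: one sustained class difference from time index 10 onward.
rng = np.random.default_rng(3)
y = np.repeat([0, 1], 40)
epochs = rng.standard_normal((80, 10, 30))
epochs[y == 1, :, 10:] += 1.0
tg = temporal_generalization(epochs, y)
print(tg.shape, tg[10:, 10:].mean().round(2), tg[:10, :10].mean().round(2))
```

The diagonal of the resulting matrix corresponds to time-resolved decoding, while off-diagonal entries indicate whether a pattern learned at one latency is also informative at another; in this toy case the sustained pattern yields a square block of high generalization.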

Results

Temporal decoding

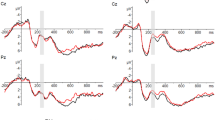

First, time-resolved decoding was computed for the three contrasts (i.e., Numbers versus False Number fonts; Letters versus False Letter fonts; and Numbers versus Letters), which were plotted separately for Experiment 1 and Experiment 2. Figure 2a shows the decoding in Experiment 1. The decoding curve for Numbers versus False Number fonts is reliably different from the chance level starting at 105 ms, reaching a peak decoding strength of 0.64 at 225 ms after the presentation of the stimuli. The decoding curve for Letters versus False Letter fonts is significantly different from the chance level starting at 110 ms, reaching a peak decoding of 0.58 at 220 ms after the presentation of the stimuli. Finally, the decoding curve for Numbers versus Letters is reliable starting at 110 ms, reaching a peak of 0.55 at 160 ms after the presentation of the stimuli.

Time-resolved decoding (mean ± standard error (SE)) for (a) single characters for all three comparison conditions, i.e., Numbers versus False Number fonts; Letters versus False Letter fonts; and single Numbers versus single Letters (Experiment 1), and (b) the same contrasts for strings of characters (Experiment 2). The colored lines show the statistically significant different time points using a cluster-based permutation test (p < 0.05).

In Experiment 2 (Fig. 2b), the decoding curve for the Number strings versus False Number font strings differs from chance starting at 95 ms, reaching a peak decoding strength of 0.66 at 210 ms after the presentation of the stimuli. The decoding curve for the Letter strings versus the False Letter font strings is reliable starting at 120 ms, reaching a peak decoding of 0.68 at 215 ms after the presentation of the stimuli. Finally, the decoding curve for the Number strings versus the Letter strings is significant starting at 140 ms, reaching a peak of 0.60 at 420 ms after the presentation of the stimuli.

Further, to evaluate the difference between Experiment 1 (single characters) and Experiment 2 (strings), all three comparisons were plotted together. Figure 3a shows the decoding curves for Numbers versus false Numbers in Experiments 1 and 2. The grey line shows the time points at which the two curves differ significantly. The difference between the curves lasts for 40 ms (180–220 ms), and the difference in peak decoding strength is nearly 2%. The difference between decoding curves for Letters versus false Letters in Experiments 1 and 2 emerges at 165 ms and lasts up to 500 ms, with a peak decoding strength difference of almost 10% (Fig. 3c). The difference between decoding curves for Numbers versus Letters in Experiments 1 and 2 emerges at 175 ms and lasts up to 250 ms, and then emerges again at 285 ms, lasting up to 500 ms, with a peak decoding strength difference of nearly 5% (Fig. 3b).
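The reported across-experiment differences can be related to the peak AUC values given earlier in this section with a short calculation. The sketch below assumes that the percentages refer to differences between the peak AUC values of the two experiments expressed in percentage points; the text does not state this explicitly, and the differences could instead refer to the peak of the difference curve.

```python
# Peak AUC values as reported in the text: (Experiment 1, Experiment 2).
peaks = {
    "Numbers vs. False Numbers": (0.64, 0.66),
    "Letters vs. False Letters": (0.58, 0.68),
    "Numbers vs. Letters": (0.55, 0.60),
}

# Difference in peak AUC (Experiment 2 - Experiment 1), in percentage points.
differences = {
    name: round((exp2 - exp1) * 100) for name, (exp1, exp2) in peaks.items()
}

for name, diff in differences.items():
    print(f"{name}: {diff} percentage points")
```

Under this reading, the three contrasts differ by 2, 10, and 5 percentage points respectively, in line with the values stated above.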

Time-resolved decoding (mean ± standard error (SE)) for Numbers versus False Number fonts (Left); Letters versus False Letter fonts (Middle); and Numbers versus Letters (Right) for single characters (Experiment 1) and strings of characters (Experiment 2). The colored lines show the statistically significant different time points using a cluster-based permutation test (p < 0.05). The grey line shows the statistically significant difference (Experiment 2–Experiment 1) between single characters (Experiment 1) and strings of characters (Experiment 2).

Temporal generalization

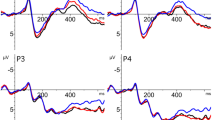

Temporal generalization matrices for Numbers versus False Number fonts in strings (Experiment 2) show broadly similar generalization along the diagonal compared to single Numbers versus false Number fonts (Experiment 1). The generalization for single characters starts at 90 ms, becomes strongest between 200 and 300 ms, and is sustained up to 500 ms. For the strings (Experiment 2), generalization starts at 90 ms and peaks between 200 and 400 ms. The contrast between generalization matrices (Experiment 2–Experiment 1) does not show statistically significant differences along the diagonal (upper row of matrices in Fig. 4). Interestingly though, the classifier trained at around 200 ms can generalize and successfully classify data from 200 to 400 ms.

Temporal generalization plots for Numbers versus False Number font in single characters i.e., Experiment 1 (first row first column); Letters versus False Letter fonts (second row first column); and Numbers versus Letters (third row first column). The second column represents similar comparisons to first column but for the string of characters (Experiment 2). The third column represents the difference between single and string of characters (Experiment 2–Experiment 1). The black colored outline shows the boundaries of statistically significant different time points using a cluster-based permutation test (p < 0.05).

For single (Experiment 1) Letters versus False Letter fonts, the generalization starts from 85 ms and peaks between 200 and 300 ms, whereas for the strings (Experiment 2), generalization starts as early as 45 ms and peaks from 200 to 500 ms. The diagonal generalization plot is stronger in the strings compared to the single characters. The generalization difference (Experiment 2–Experiment 1) starts at 160 ms, peaks between 200 and 300 ms, and appears to be sustained up to 500 ms (middle row of matrices in Fig. 4).

For single (Experiment 1) Numbers versus Letters, the generalization starts from 105 ms and peaks between 200 and 300 ms. This generalization is weaker compared to the other two comparisons, i.e., Numbers versus False Numbers and Letters versus False Letters. For strings (Experiment 2), the generalization starts from 100 ms, first peaks between 150 and 250 ms, and then peaks again between 300 and 500 ms. The generalization difference (Experiment 2–Experiment 1) starts at 155 ms and peaks between 300 and 500 ms. Also, the classifier trained close to 200 ms can generalize when tested from 250 to 450 ms (lower row of matrices in Fig. 4).

Discussion

In these two experiments, we report logistically dissociable temporal dynamics for processing numbers and letters in the human brain. We observed significant decoding accuracy both for numbers and letters compared to their respective false fonts and for numbers compared directly to letters. We also observed that the decoding accuracy was strongly modulated by whether the stimuli were presented in isolation (i.e., single characters, Experiment 1) or as a string (i.e., a string of characters, Experiment 2), and this was especially true for letters.

Concerning our first experimental question (are numbers and letters functionally dissociable?), our results indicate that numbers and letters are processed differently both when presented as single/isolated items and when presented in a string. When presented in isolation, the neural representation of numbers (vs. visually similar false fonts) is stronger (i.e., it triggered higher decoding accuracy) than that of letters (vs. false letter fonts). When the stimuli are a string of similar characters, however, the decoding accuracy for letters is stronger in magnitude compared to the numbers (vs. false fonts). For both single items and strings, the direct classification of number vs. letter processing showed lower decoding accuracy than the individual comparisons with false fonts (Fig. 2). The temporal generalization results tell a similar story. Number and letter representations were stronger when compared to their respective false fonts, but when compared across categories (i.e., number versus letter), the generalization patterns were slightly weaker. It thus appears that one important component triggering such neural dissociation (letters or numbers vs. their respective false fonts), even in such an early time window (~ 100 ms), is the culturally determined visual familiarity with the visual input. When directly comparing the two types of stimuli (letters vs. numbers), the neural dissociation is weaker, probably because a relevant portion of the neural processes at work for those familiar stimuli is shared at the source level (Aurtenetxe et al.11).

Importantly, however, our results highlight that number and letter processing is affected by whether these characters were presented in isolation or as a string (Fig. 3). Number processing is only weakly affected by the presentation mode (either single or strings). The difference between the two presentation modes was only present around the peak decoding and was also short-lived (~ 40 ms, Fig. 3a). On the other hand, letters were strongly affected by the presentation mode. Letters presented as strings of characters were highly decodable (~ 10% higher peak decoding accuracy, compared to single letters): this difference across experiments started from 165 ms and was sustained for the whole window of interest (Fig. 3c). Along these lines, it is worth remarking that decoding accuracy was higher for numbers than for letters when stimuli were presented in isolation (Fig. 2a), while it was higher for letters than for numbers when stimuli were strings (Fig. 2b). This is mainly due to the large across-experiment decoding difference observed for letters. Finally, numbers vs. letters decoding was stronger in Experiment 2 compared to Experiment 1 (Fig. 3b), even if decoding accuracy for the two classes of items compared to the respective false fonts was more similar in this second experiment (Fig. 2b).

The temporal generalization plots (in Fig. 4) confirm the pattern of results discussed for temporal decoding. Here, however, we can appreciate (i) the generalization patterns across different time points and consequently, (ii) if some neural mechanisms emerging across time can be functionally dissociated. Concerning the first point, the generalization plots for strings of letters indicate the presence of a recurrent neural process that can generalize from the activity ~ 200 ms in the diagonal to later activity ~ 350 ms, an effect that is not evident for single letters (second row in Fig. 4). Such an effect is not present for numbers (first row in Fig. 4). Concerning the second point, when comparing numbers and letters directly (third row in Fig. 4), there is evidence for two time intervals (early ~ 200 ms and later ~ 400 ms across the diagonal) reflecting independent processing steps, that are present for strings and not for single items.

Based on these findings, it emerges that the combinatorial mechanisms for numbers and letters are key for triggering dissociable neural patterns for the two categories of stimuli, at least when participants are involved in a low-level perceptual task. The presentation of numbers either in isolation or in a string does not drastically change the quality of neural processes at work. This is probably associated with the fact that numbers in isolation and strings of numbers are processed similarly at the more abstract level, after initial visual processing: a number in isolation identifies a numerical value as much as a string of numbers, the only difference being the quantity identified. On the other hand, letters in isolation elicit different neural processes compared to letter strings (pseudowords in our experiment). In other words, seeing isolated alphabetic stimuli, such as “a”, “c” and “t”, does not trigger the same cognitive processes as a word such as “cat”, or a pseudoword such as “tac” (as in Experiment 2). Such stimuli elicit early letter-specific neural activity (~ 200 ms) that is then reactivated later in time (~ 350 ms), probably feeding hierarchically more abstract neurocognitive processes at ~ 400 ms that have been classically associated with language-specific lexical/semantic analysis of the printed stimuli. In fact, phonotactically legal pseudowords can elicit the activation of a larger cohort of orthographically and phonologically similar words (neighbors) in the human mental lexicon, as compared to real words26. Park et al.6 observe “that the visual cortex is tuned to selectively process combinations of letters, but not numbers, further along in the visual processing stream”. The present results are in line with this observation.
It is possible that stimulating more abstract mental operations (with specific arithmetic tasks) would trigger later neural activity also for numbers and that such activity could be dissociable for single compared to strings of numbers. Even so, it is important to observe that such activity is not automatically elicited in our low-level detection task, while this is the case for strings of letters. Thus, the present data speak for a clear neural dissociation between numeracy and literacy, mainly when presenting the stimuli in strings, but not so much when numbers and letters are presented in isolation.

Previous studies4,10,11 mainly focused on the spatial lateralization effect between number and letter processing. Park et al.6 investigated the time course of the dissociation between visually-presented letters and numbers and reported highly similar temporal effects to the ones we observed in the present analysis. A hemispheric dissociation between the ERP waveforms evoked by numbers and letters at the occipital-temporal electrodes was observed. Specifically, letters elicited significantly greater N1 amplitudes (~ 150 ms) in the left hemisphere, while numbers elicited significantly greater N1 amplitudes in the right hemisphere (especially for strings). However, this evidence of a different hemispheric sensitivity for the two types of stimuli does not preclude that both hemispheres are involved in the processing of numbers and letters. One potential explanation for the hemispheric dissociation observed by Park et al.6 could be that one of the two categories of stimuli is more complex to process, hence triggering higher neurocognitive demands for the same neural mechanisms at work for the two categories of stimuli. Research by Shum et al.4 and by Carreiras et al.10 showed a right hemisphere involvement for numbers, but no clear dissociation in the recruitment of the left hemisphere during early visual processing. Univariate evoked analysis of the present data11 did not provide clear support for either the spatial hemispheric or the temporal dissociation. The classical univariate analysis would not be suited for dissociating different sources of variability, since the individual brain responses are averaged across multiple trials and the fine-grained trial-by-trial variability would be lost.
We used a machine learning approach16,27 to explore the trial-by-trial variability of the neural responses to numbers and letters and thus evaluated the stronger hypothesis that the neural responses to numbers and letters can be logistically classified into separate categories. This analysis revealed, across time points, that the functional response to numbers and letters can be accurately decoded mainly for strings, thus showing when the neural activity becomes number- or letter-specific. Given the early decoding for numbers vs. letters observed in the present study (starting ~ 100 ms), we conclude that the presentation of number and letter strings recruits dissociable visual processing resources in the human brain. Our results do not address hemispheric lateralization, but based on previous evidence4,11 we propose that the two hemispheres (and especially the left one) are recruited for both letters and numbers, with more fine-grained functional dissociations in the micro-neural visual pathways recruited by the two categories of stimuli.
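The logic of classifying single trials at each time point can be illustrated with a minimal sketch on simulated data. This is not the analysis pipeline used in the present study: the simulated sensor pattern, the function names, the parameter values, and the nearest-centroid classifier (a simple stand-in for whichever classifier is used in practice) are all illustrative assumptions, chosen so that the example runs with the Python standard library alone.

```python
import random


def simulate_trials(n_trials, n_sensors, n_times, onset, effect, rng):
    """Simulate data[trial][sensor][time] with Gaussian noise (hypothetical data).

    From time index `onset` onward, class-1 trials carry a sensor pattern
    that alternates in sign across sensors (+effect, -effect, ...).
    """
    data, labels = [], []
    for i in range(n_trials):
        label = i % 2
        trial = []
        for s in range(n_sensors):
            sign = 1.0 if s % 2 == 0 else -1.0
            trial.append([
                rng.gauss(0.0, 1.0)
                + (sign * effect if label == 1 and t >= onset else 0.0)
                for t in range(n_times)
            ])
        data.append(trial)
        labels.append(label)
    return data, labels


def centroid(rows):
    """Mean feature vector of a list of equally long feature vectors."""
    return [sum(col) / len(rows) for col in zip(*rows)]


def sq_dist(a, b):
    """Squared Euclidean distance between two feature vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def decode_time_point(features, labels, n_folds):
    """Cross-validated accuracy of a nearest-centroid classifier."""
    n = len(labels)
    # Trials 2k and 2k+1 have different labels and share a fold,
    # so every fold contains both classes in equal numbers.
    fold_of = [(i // 2) % n_folds for i in range(n)]
    correct = 0
    for fold in range(n_folds):
        train = [i for i in range(n) if fold_of[i] != fold]
        test = [i for i in range(n) if fold_of[i] == fold]
        cents = {
            c: centroid([features[i] for i in train if labels[i] == c])
            for c in (0, 1)
        }
        for i in test:
            pred = min(cents, key=lambda c: sq_dist(features[i], cents[c]))
            correct += int(pred == labels[i])
    return correct / n


def time_resolved_decoding(data, labels, n_folds=5):
    """Train and test a separate classifier at every time point."""
    n_times = len(data[0][0])
    accuracies = []
    for t in range(n_times):
        # Feature vector for one trial: all sensor values at time t.
        features = [[sensor[t] for sensor in trial] for trial in data]
        accuracies.append(decode_time_point(features, labels, n_folds))
    return accuracies


rng = random.Random(0)
data, labels = simulate_trials(
    n_trials=100, n_sensors=10, n_times=20, onset=10, effect=1.0, rng=rng
)
accuracy = time_resolved_decoding(data, labels)
for t, acc in enumerate(accuracy):
    print(f"time index {t:2d}: accuracy = {acc:.2f}")
```

In this simulation, accuracy should stay near chance (0.5) before the simulated onset and rise afterwards, which is the sense in which a decoding time course indicates when the neural pattern becomes category-specific. Because the class difference alternates in sign across sensors, it would cancel if sensors were simply averaged together, whereas the classifier uses the whole sensor pattern on each trial.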

The present study thus provides experimental evidence on the effects of literacy vs. numeracy that can stimulate further research. First, we have reported a significant difference in decoding patterns between strings of letters and strings of numbers. Second, we reinforce the idea that early neural activity of the human cortex is fine-tuned to selectively process combinations of letters (as compared to single letters) along the visual processing stream. Third, we reported such dissociations in a low-level visual detection task, where no differential task demands for numbers or letters can explain the observed dissociations. Future research, possibly involving intracranial recordings, should further explore the differential role of the visual pathways in the two hemispheres, thus clarifying the spatial dissociation supporting the functional dissociation we report.

Data availability

The raw data can be accessed from the OpenNeuro repository (https://openneuro.org/datasets/ds002712/versions/1.0.1). Because of the size of the preprocessed dataset and the limited public storage options available, only the raw data have been uploaded. However, the full preprocessed dataset is available upon request to Dr. Nicola Molinaro (n.molinaro@bcbl.eu) or Dr. Sanjeev Nara (sanjeev.nara@math.uni-giessen.de). The full dataset can then be shared through the private BCBL-secured institutional servers, which are temporarily available for large data transfers.

References

Dehaene, S. et al. How learning to read changes the cortical networks for vision and language. Science 330(6009), 1359–1364. https://doi.org/10.1126/science.1194140 (2010).

Dehaene, S., Cohen, L., Morais, J. & Kolinsky, R. Illiterate to literate: Behavioural and cerebral changes induced by reading acquisition. Nat. Rev. Neurosci. 16(4), 234–244. https://doi.org/10.1038/nrn3924 (2015).

Caffarra, S., Lizarazu, M., Molinaro, N. & Carreiras, M. Reading-related brain changes in audiovisual processing: Cross-sectional and longitudinal MEG evidence. J. Neurosci. 41(27), 5867–5875. https://doi.org/10.1523/JNEUROSCI.3021-20.2021 (2021).

Shum, J. et al. A brain area for visual numerals. J. Neurosci. 33(16), 6709–6715. https://doi.org/10.1523/JNEUROSCI.4558-12.2013 (2013).

Park, J., Hebrank, A., Polk, T. A. & Park, D. C. Neural dissociation of number from letter recognition and its relationship to parietal numerical processing. J. Cogn. Neurosci. 24(1), 39–50. https://doi.org/10.1162/jocn_a_00085 (2012).

Park, J., Chiang, C., Brannon, E. M. & Woldorff, M. G. Experience-dependent hemispheric specialization of letters and numbers is revealed in early visual processing. J. Cogn. Neurosci. 26(10), 2239–2249. https://doi.org/10.1162/jocn_a_00621 (2014).

McCarthy, G. & Wood, C. C. Scalp distributions of event-related potentials: An ambiguity associated with analysis of variance models. Electroencephalogr. Clin. Neurophysiol. 62(3), 203–208. https://doi.org/10.1016/0168-5597(85)90015-2 (1985).

Park, J. A neural basis for the visual sense of number and its development: A steady-state visual evoked potential study in children and adults. Dev. Cogn. Neurosci. 30, 333–343. https://doi.org/10.1016/j.dcn.2017.02.011 (2018).

Grotheer, M., Herrmann, K. H. & Kovács, G. Neuroimaging evidence of a bilateral representation for visually presented numbers. J. Neurosci. 36(1), 88–97. https://doi.org/10.1523/JNEUROSCI.2129-15.2016 (2016).

Carreiras, M., Monahan, P. J., Lizarazu, M., Duñabeitia, J. A. & Molinaro, N. Numbers are not like words: Different pathways for literacy and numeracy. Neuroimage 118, 79–89. https://doi.org/10.1016/j.neuroimage.2015.06.021 (2015).

Aurtenetxe, S., Molinaro, N., Davidson, D. & Carreiras, M. Early dissociation of numbers and letters in the human brain. Cortex 130, 192–202. https://doi.org/10.1016/j.cortex.2020.03.030 (2020).

Cichy, R. M., Pantazis, D. & Oliva, A. Resolving human object recognition in space and time. Nat. Neurosci. 17(3), 455–462. https://doi.org/10.1038/nn.3635 (2014).

Nara, S. et al. Temporal dynamics of neural processing of facial expressions and emotions. BioRxiv. https://doi.org/10.1101/2021.05.12.443280 (2021).

Nara, S. et al. Temporal uncertainty enhances suppression of neural responses to predictable visual stimuli. Neuroimage 239, 118314. https://doi.org/10.1016/j.neuroimage.2021.118314 (2021).

Grootswagers, T., Wardle, S. G. & Carlson, T. A. Decoding dynamic brain patterns from evoked responses: A tutorial on multivariate pattern analysis applied to time series neuroimaging data. J. Cogn. Neurosci. 29(4), 677–697. https://doi.org/10.1162/jocn_a_01068 (2017).

King, J. R. & Dehaene, S. Characterizing the dynamics of mental representations: The temporal generalization method. Trends Cogn. Sci. 18(4), 203–210. https://doi.org/10.1016/j.tics.2014.01.002 (2014).

Brainard, D. H. The psychophysics toolbox. Spat. Vis. 10(4), 433–436. https://doi.org/10.1163/156856897X00357 (1997).

Taulu, S. & Simola, J. Spatiotemporal signal space separation method for rejecting nearby interference in MEG measurements. Phys. Med. Biol. 51(7), 1759–1768. https://doi.org/10.1088/0031-9155/51/7/008 (2006).

Oostenveld, R., Fries, P., Maris, E. & Schoffelen, J.-M. FieldTrip: Open source software for advanced analysis of MEG, EEG, and invasive electrophysiological data. Comput. Intell. Neurosci. 2011, 1–9. https://doi.org/10.1155/2011/156869 (2011).

Treder, M. S. MVPA-Light: A classification and regression toolbox for multi-dimensional data. Front. Neurosci. 14, 289. https://doi.org/10.3389/fnins.2020.00289 (2020).

Varoquaux, G. et al. Assessing and tuning brain decoders: Cross-validation, caveats, and guidelines. Neuroimage 145, 166–179. https://doi.org/10.1016/J.NEUROIMAGE.2016.10.038 (2017).

Lebedev, M. A. & Nicolelis, M. A. L. Brain–machine interfaces: Past, present and future. Trends Neurosci. 29(9), 536–546. https://doi.org/10.1016/J.TINS.2006.07.004 (2006).

Hastie, T., Tibshirani, R. & Friedman, J. The Elements of Statistical Learning (Springer, 2009).

Dima, D. C. & Singh, K. D. Dynamic representations of faces in the human ventral visual stream link visual features to behaviour. BioRxiv 6, 1–45. https://doi.org/10.1101/394916 (2018).

Maris, E. & Oostenveld, R. Nonparametric statistical testing of EEG- and MEG-data. J. Neurosci. Methods 164(1), 177–190. https://doi.org/10.1016/j.jneumeth.2007.03.024 (2007).

Kutas, M. & Federmeier, K. D. Thirty years and counting: Finding meaning in the N400 component of the event-related brain potential (ERP). Annu. Rev. Psychol. 62, 621–647. https://doi.org/10.1146/annurev.psych.093008.131123 (2011).

King, J. R., Gramfort, A., Schurger, A., Naccache, L. & Dehaene, S. Two distinct dynamic modes subtend the detection of unexpected sounds. PLoS ONE 9(1), 85791. https://doi.org/10.1371/journal.pone.0085791 (2014).

Acknowledgements

This research was supported by the Basque Government through the BERC 2018–2021 program and by the Spanish State Research Agency through BCBL’s Severo Ochoa excellence accreditation CEX2020-001010-S and the project BES-2016-077560 funded by the Spanish Ministry of Economy and Competitiveness (MINECO). SN acknowledges the support from “The Adaptive Mind,” funded by the Excellence Program of the Hessian Ministry of Higher Education, Science, Research and Art. MC was supported by “la Caixa” Foundation (ID 100010434), under the agreement HR18-00178-DYSTHAL, and by the Agencia Estatal de Investigación PID2021-122918OB-I00. NM was supported by the Spanish Ministry of Science, Innovation and University (Grants PSI2015-65694-P, RTI2018-096311-B-I00), the Agencia Estatal de Investigación (AEI), the Fondo Europeo de Desarrollo Regional (FEDER) and by the Basque government (Grant PI_2016_1_0014). HR was supported by the Economic and Social Research Council (ESRC) funded Business and Local Government Data Research Centre under Grant ES/S007156/1. SN thanks the BCBL lab research staff for their valuable support.

Author information

Contributions

S.N.: Conceptualization, Methodology, Software, Data curation, Writing—original draft. H.R.: Methodology, Visualization, Writing—review & editing, Funding acquisition. M.C.: Methodology, Supervision, Writing—review & editing. N.M.: Conceptualization, Methodology, Validation, Supervision, Funding acquisition, Project administration, Writing—review & editing.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher's note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Nara, S., Raza, H., Carreiras, M. et al. Decoding numeracy and literacy in the human brain: insights from MEG and MVPA. Sci Rep 13, 10979 (2023). https://doi.org/10.1038/s41598-023-37113-0

DOI: https://doi.org/10.1038/s41598-023-37113-0
