Abstract

Can our brain perceive a sense of ownership towards an independent supernumerary limb, one that can be moved independently of any other limb and provides its own independent movement feedback? Following the rubber-hand illusion experiment, a plethora of studies have shown that the human representation of “self” is very plastic. But previous studies have almost exclusively investigated ownership towards “substitute” artificial limbs, which are controlled by the movements of a real limb and/or limbs from which non-visual sensory feedback is provided on an existing limb. Here, to investigate whether the human brain can own an independent artificial limb, we first developed a novel independent robotic “sixth finger.” We allowed participants to train using the finger and examined whether it induced changes in the body representation using behavioral as well as cognitive measures. Our results suggest that, unlike with a substitute artificial limb (as in the rubber hand experiment), it is more difficult for humans to perceive a sense of ownership towards an independent limb. However, ownership does seem possible, as we observed clear tendencies of changes in the body representation that correlated with the cognitive reports of the sense of ownership. Our results provide the first evidence to show that an independent supernumerary limb can be embodied by humans.

Introduction

The representation of our body in the brain is plastic. Even relatively short multi-sensory (usually visuo-haptic) stimulations can induce an illusion of ownership, and embodiment in general, towards artificial limbs and bodies1 including human-looking limbs: rubber hands2,3, virtual avatars4,5,6, as well as robotic limbs and bodies7,8,9,10,11,12,13,14. However, most previous studies on ownership have been conducted in the context of “limb substitution,” where the rubber, virtual, or robotic limb replaces an already-existing limb. Even studies that have explored the sense of ownership towards additional limbs, such as extra hands15, a tail16, an arm17, or a finger18,19, have utilized additional limbs that were controlled by existing limbs. It remains unclear whether the human brain can own an independent additional limb, which can be controlled independently of any other limb and provide independent non-visual feedback, and how such a limb affects the body representations in the brain.

Ownership of a limb is traditionally believed to require two conditions to be satisfied. First, ownership requires a congruent multi-sensory stimulation, a condition that was the key discovery of the rubber hand illusion by Botvinick and Cohen2. This and subsequent studies by other researchers have shown that the vision of an artificial limb (like the rubber hand) being touched and the haptic perception of the same touch from the real limb that is touched simultaneously are sufficient for the induction of the illusion of ownership toward the artificial limb. However, an independent additional limb, by definition, does not have a replacement limb for providing this feedback. On the other hand, recent studies in virtual reality suggest that first-person perspective alone may be sufficient to induce ownership towards a limb20,21, though these again have been observed only during limb/body replacement. Second, it is believed that the illusion of ownership occurs only with artificial limbs that conform to the so-called “body model,” a representation of the body in our brain22. What constitutes the body model, however, remains unclear. Multiple studies on this issue23,24,25,26,27,28,29,30 have reported diverse results that are probably best explained by the functional body model hypothesis1, which hypothesizes that the entity a brain can embody is determined by whether it is recognized to sufficiently afford actions that the brain has learnt to associate with the limb it substitutes. This would, however, suggest that ownership of a new independent additional limb will, by definition, be difficult to induce, since the limb will not have been associated with any actions. Overall, the previous studies therefore suggest that bodily ownership of an independent limb should be difficult to achieve.

To verify whether bodily ownership is possible for an independent limb, in this exploratory study we developed a robotic independent “sixth finger” system. Our robotic finger can be attached to a participant’s hand and is operated by a “null-space” electromyography (EMG) control strategy that enables the participant to operate the robotic finger. A feedback pin on the robotic finger enables the participant to sense its movement independently of any other fingers or body parts. Our participants used the robotic finger in a cued finger habituation task in two conditions: one when they had control of the robotic finger (controllable condition) and the other when they could not control the robotic finger (the random condition). Following the habituation (see Fig. 1C for the sequence of experiments), we evaluated whether and how the use of fingers in the two conditions affected the participant’s perception of their innate hand by using cognitive questionnaires (to measure changes in perceived levels of ownership and agency towards the robotic finger as well as body image of their hand) and behavioral measures collected in two test experiments that measured possible changes in their “body schema”31,32 and their “body image”33.

The robotic sixth finger setup and experiment. (A) The 3D-printed robotic sixth finger actuated by a servo motor in the chassis. The entire arrangement is designed to be strapped onto the hand of participants, near their left little finger. The right photo is a six-fingered glove worn by a participant with the robotic finger attached. (B) Schematic of the habituation task. In task 1, participants wore a glove with LEDs fixed on the tip of the glove fingers. They bent the finger corresponding to the LED that lit up. In task 2, a tablet PC on the desk displayed a piano-like key under each of the five fingers (including the robotic finger, and except the thumb). The participants pressed the key that lit up. Both tasks were aligned with the beats of popular music that were played in the headphones of the participants. (C) The experiment paradigm. The participants worked in two conditions: controllable and random. In each condition, they started with two pre-tests to measure specific body representations (explained later in the text), followed by the habituation, then two post-tests, and finally ending with a questionnaire (see Fig. 2) to assess their cognitive percept. The photos in (A,B) were taken by Yui Takahara.

Results

The independent robotic sixth finger system

Our robotic sixth finger system consists of a three-phalanged, one-degree-of-freedom plastic finger (6 cm in length) and a tactile stimulator in the form of a sliding plastic pin. The finger was actuated by a servo motor (ASV-15-MG, Asakusa Gear Co., Ltd.) (Fig. 1A) that enabled the robotic finger to flex like a real human finger. The robotic finger was connected to the stimulator by a crank-pin link such that the flexing of the robotic finger made the pin slide. The entire arrangement was designed to be strapped onto the hand of participants, near their left little finger, such that the tactile stimulator stimulated the side of their palm, below the little finger, when the robotic finger flexed (see Fig. 1A). The robotic finger was operated using electromyography (EMG) recorded from four wrist/finger muscles (flexor carpi radialis muscle, flexor carpi ulnaris muscle, extensor carpi radialis muscle, and extensor digitorum muscle) in their left forearm. We used the Delsys Trigno Wireless EMG System (sampling rate, 2000 Hz) for the EMG recording. The recorded EMG signals were read into the Arduino Mega 2560, utilizing the Simulink Support Package for Arduino Hardware.

Two salient features distinguish our robotic finger from most previous developments of supernumerary limbs:

1. Kinematically independent control: We developed an intuitive “null space” control scheme for the robotic finger that enables the participant to move the robotic finger independently of, and if required simultaneously with, any other finger and in fact any other limb of their body. Details of the control are provided in the Methods section. The participants were given instructions on how to control the robotic finger, and even first-time users could control it intuitively. We have attached a movie to show the robotic finger in motion (Supplementary movie 1).

2. Independent haptic sensory feedback: The sliding pin stimulator incorporated with the finger provides haptic feedback of the movement of the robotic finger on the side of the palm, ensuring sensory independence from any other limb of the body.

Embodiment questionnaire

To quantify the cognitive change in perception of the finger and hand following the habituation with the robotic finger, we asked participants to answer questionnaires about the sense of agency (Q1, Q3, Q5), the sense of ownership (Q4, Q6), and body image (Q2, Q7) using a seven-level Likert scale after habituation to the use of the robotic finger. Results showed significant differences in the questionnaire scores between the controllable and random conditions for the items related to the sense of agency (FDR-corrected p < 0.05 for multiple comparisons by the Wilcoxon paired signed-rank test; Q1: T = 153, PSdep = 0.972, Q3: T = 113.5, PSdep = 0.861, Q5: T = 171, PSdep = 1), but not for the items related to the sense of ownership (Q4: T = 69, Q6: T = 62.5) and to body image (Q2: T = 93.5, Q4: T = 69) (Fig. 2). Control questions to confirm whether participants performed the habituation tasks correctly also showed no significant difference between the conditions (Q8: T = 5, Q9: T = 30, Q10: T = 12) (Fig. 2). Questionnaire scores for each of the controllable and random conditions are shown in Supplementary Fig. 1.
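As an illustration of the statistics named above, the following is a minimal sketch in plain Python, not the authors' analysis code. It assumes that T is the sum of the ranks of the positive paired differences with zero differences dropped, that PSdep counts tied pairs as one half, and that the FDR correction is the Benjamini-Hochberg procedure; none of these conventions is stated in the text. The first two assumptions are at least consistent with the reported values: 171 = 18 × 19/2 is the rank sum if all 18 differences are positive, and 0.972 is approximately 17.5/18. All function names are hypothetical.

```python
from typing import List, Sequence, Tuple


def signed_rank_sums(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float]:
    """Return (W_plus, W_minus), the rank sums of positive and negative differences.

    Assumed convention: zero differences are dropped and tied absolute
    differences share their average rank.
    """
    diffs = [a - b for a, b in zip(x, y) if a != b]
    order = sorted(range(len(diffs)), key=lambda i: abs(diffs[i]))
    ranks = [0.0] * len(diffs)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of tied absolute differences.
        while j + 1 < len(order) and abs(diffs[order[j + 1]]) == abs(diffs[order[i]]):
            j += 1
        avg_rank = (i + j) / 2 + 1  # mean of the 1-based ranks i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg_rank
        i = j + 1
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    w_minus = sum(r for r, d in zip(ranks, diffs) if d < 0)
    return w_plus, w_minus


def ps_dep(x: Sequence[float], y: Sequence[float]) -> float:
    """Probability of superiority for paired data; ties count one half (assumption)."""
    wins = sum(1.0 for a, b in zip(x, y) if a > b)
    ties = sum(0.5 for a, b in zip(x, y) if a == b)
    return (wins + ties) / len(x)


def benjamini_hochberg(p_values: Sequence[float]) -> List[float]:
    """Benjamini-Hochberg adjusted p-values, returned in the input order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    for rank in range(m, 0, -1):  # walk from the largest p-value down
        idx = order[rank - 1]
        running_min = min(running_min, p_values[idx] * m / rank)
        adjusted[idx] = running_min
    return adjusted


if __name__ == "__main__":
    # Made-up ratings for five participants, purely for illustration.
    controllable = [7, 6, 5, 6, 7]
    random_cond = [3, 6, 4, 2, 1]
    print(signed_rank_sums(controllable, random_cond))
    print(ps_dep(controllable, random_cond))
```

A question would then be called significant when its adjusted p-value falls below 0.05. Obtaining the p-value itself from T requires the signed-rank null distribution, which is left to a statistics package.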

Difference in the subjective questionnaire ratings between the two habituation conditions. The participants answered seven embodiment-related questions and three control questions on a seven-point Likert scale in the controllable and random conditions. The plots show the difference in the rated scores between the two conditions across the participants. In each box plot, the thick line indicates the median, and the bottom and the top lines of the box show the first and the third quartiles, respectively. The whiskers extend to the minimum and maximum points of the data, or to 1.5 times the interquartile range if the minimum and maximum points exceed it. The diamond markers show the data points considered as outliers, which lie beyond the whisker range. Asterisks show statistical significance (FDR-corrected p < 0.05 for multiple comparisons by the Wilcoxon paired signed-rank test).

Behavioral tests

We performed two behavioral tests to examine possible changes in body schema (reaching test) and body image (finger localization test) before and after habituation to the robotic finger. In the reaching test, participants were instructed to reach with their index finger to a target line while avoiding an obstacle that blocked the shortest path from the starting location of the index finger to the target location (see Fig. 3A). Body schema is said to be the internal representation of the body in the brain that is used for motor control31,34, and hence we expected an increase in the avoidance if the hand representation in the body schema was larger after the habituation task with the robotic finger.

Measurement of body representation changes. (A) Schematic of the reaching test. A rectangular-shaped obstacle was placed in front of the participant's left arm and a tablet PC was placed to the right of it. A target bar and an indicator were displayed on the screen. (B) Schematic of the finger localization test. An open box (without the front and back faces) and a PC standing behind it were placed in front of a participant. A target bar was displayed on the screen. The participants touched the target bar with either their index or little finger.

In the finger localization test, participants were instructed to point to a line visible above a box on the table, with their occluded index and little finger, below the box top (see Fig. 3B). Body image is believed to be the representation of our body in the visual space, and we expected that any change in their body image related to their hand would lead to an error in the localization of the fingers by the participants, relative to the presented lines.

Results obtained in the behavioral tests, however, did not show any significant difference between the controllable and random conditions in the pre- to post-habituation change of any of these measures (Fig. 4; p > 0.05 by the Wilcoxon paired signed-rank test, \({\upmu }_{\mathrm{length}}^{\mathrm{reaching}}\): T = 78, \({\upsigma }_{\mathrm{length}}^{\mathrm{reaching}}\): T = 57, \({\upmu }_{\mathrm{end}}^{\mathrm{reaching}}\): T = 78, \({\upsigma }_{\mathrm{end}}^{\mathrm{reaching}}\): T = 57, \({\upmu }_{\mathrm{end}-\mathrm{start}}^{\mathrm{reaching}}\): T = 78, \({\upsigma }_{\mathrm{end}-\mathrm{start}}^{\mathrm{reaching}}\): T = 77, \({\upmu }_{\mathrm{index}}^{\mathrm{localization}}\): T = 69, \({\upsigma }_{\mathrm{index}}^{\mathrm{localization}}\): T = 58, \({\upmu }_{\mathrm{little}}^{\mathrm{localization}}\): T = 93, \({\upsigma }_{\mathrm{little}}^{\mathrm{localization}}\): T = 93, \({\upmu }_{\mathrm{index}}^{\mathrm{localization}}-{\upmu }_{\mathrm{little}}^{\mathrm{localization}}\): T = 53).

Results of behavioral measures (top, reaching test; bottom, localization test). The box plots show the data in the same style as in Fig. 2.

Correlation between cognitive and behavioral measures

However, these non-significant differences at the population level may result from a large variance in the questionnaire scores and behavioral measures across participants. Therefore, we next evaluated the correlation between the questionnaire scores and behavioral measures across individuals. In a preliminary analysis, we observed that part of the data might be outliers (Smirnov-Grubbs test, \(\mathrm{\alpha }\)=0.01) that could distort estimates of the correlation. Therefore, we performed bootstrap sampling to calculate the distribution of correlation values and determine the upper and lower limits within which 95% of the correlation values were distributed, which serve as a confidence interval of the correlation values.
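The bootstrap procedure described above can be sketched as follows. This is an illustrative outline rather than the analysis actually run: the text does not say which correlation coefficient was used, how many resamples were drawn, or how the interval limits were picked, so Pearson's r, 2000 resamples, and a simple percentile interval are all assumptions, and the function names are hypothetical.

```python
import random
from math import sqrt
from typing import List, Sequence, Tuple


def pearson_r(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation; returns NaN if either variable is constant."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return float("nan")
    return sxy / sqrt(sxx * syy)


def bootstrap_correlation_interval(
    x: Sequence[float],
    y: Sequence[float],
    n_resamples: int = 2000,   # assumed; not given in the text
    coverage: float = 0.95,
    seed: int = 0,
) -> Tuple[float, float, float]:
    """Return (lower, median, upper) of the bootstrapped correlation values."""
    rng = random.Random(seed)
    n = len(x)
    values: List[float] = []
    for _ in range(n_resamples):
        # Resample participants with replacement, keeping each (x, y) pair intact.
        idx = [rng.randrange(n) for _ in range(n)]
        r = pearson_r([x[i] for i in idx], [y[i] for i in idx])
        if r == r:  # drop degenerate resamples that produced NaN
            values.append(r)
    values.sort()
    tail = (1.0 - coverage) / 2.0
    lower = values[int(tail * (len(values) - 1))]
    upper = values[int((1.0 - tail) * (len(values) - 1))]
    median = values[len(values) // 2]
    return lower, median, upper
```

Under this sketch, a correlation is treated as significant when the interval between `lower` and `upper` excludes zero. Resampling whole participants limits the influence of any single outlying participant on the interval, since each resample includes that participant only some of the time.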

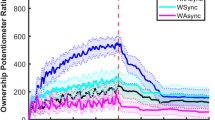

Results showed significant correlations (as per the bootstrap analysis) between the standard deviation in localization of the little finger (\({\upsigma }_{\mathrm{little}}^{\mathrm{localization}}\)) and the question related to whether the robotic finger was perceived as a part of their body (Q4; median, 0.579), as well as between \({\upsigma }_{\mathrm{little}}^{\mathrm{localization}}\) and the question of how much the robotic finger was perceived as their own finger (Q6; median, 0.577). Note that positive correlations represent an increase of the measured variables with the increased difference in rating (controllable minus random) related to a specific question. These results suggest that the participants’ level of uncertainty in localizing the little finger increased with the level of ownership they perceived cognitively toward the robotic finger.

Discussion

In this exploratory study, we examined whether and to what extent humans can embody an “independent limb,” a limb that humans can move independently of any other limbs in their body and from which they receive movement feedback independently of any other limbs. For this purpose, we developed a robotic finger setup that attaches to the hand of a human participant and can be moved independent of the other fingers of the hand, ensuring independence at the level of movement kinematics. Furthermore, we provided the participants with independent feedback corresponding to the finger movement via a tactile pin on the side of the palm.

In addition to a questionnaire to judge perceived changes in ownership, agency, and body image, we used two test experiments to evaluate possible changes in body representation after the use of the robotic finger. The first reaching test evaluated changes in the body schema, the representation of our body in internal coordinates that are used for motor control31,32,34. Specifically, we evaluated changes in the representation of the width of the hand in the body schema. We expected the length of movement trajectory, and/or the distance between the start and end points of the reach to change in order to avoid the obstacle, in the case that the hand width is perceived to change. The second finger localization test was designed to analyze changes in the body image, the representation of our body in visual space35,36. Here again, we expected the position of the index or little finger to change or become uncertain if the perceived visual hand width or perceived visual location of the little finger changed. However, we did not observe any significant change in the values of the behavioral measures or the questionnaire answers except ones related to agency.

While the illusion of ownership towards a replaced rubber hand can be induced within a few minutes, our results suggest that ownership of an additional limb is much harder for the human brain to achieve. Although our EMG-finger interface is very intuitive and all our participants could operate the robotic finger almost immediately, participants’ reports of ownership did not differ between the controllable and random conditions even after an average of 51 min of habituation across participants in our experiment. This difficulty in perceiving ownership agrees with the functional body model hypothesis1. However, we observed clear tendencies of changes in body representation in the form of a clear correlation between the uncertainty in the position of the little finger and two questions that represent the ownership felt by the participants (Fig. 5). Controllability of the finger may have played a part in the slow embodiment of the robotic finger. While our EMG co-contraction-based control is very intuitive and robust (see Supplementary movie 1) and we tuned the music speed so as to ensure the participants were able to successfully follow the beats, the use of the new finger was still arguably more difficult than the use of their real fingers. In any case, our results give probably the first behavioral evidence to suggest that the human body can embody a truly independent supernumerary limb.

Bootstrap distributions of correlation values between the differences in questionnaire scores across the controllable and random conditions and the post- minus pre-habituation differences in behavioral measures. The thick black lines indicate zero correlation, and the gray shades indicate the range within which 95% of the bootstrapped correlation values were distributed for each combination of question and behavioral measure. The asterisks indicate the pairs whose 95% bootstrapped correlation range does not include zero correlation.

Our questionnaire scores suggest that while the ownership felt towards our robotic finger was limited, participants clearly felt a sense of agency towards it. Our results thus again show the distinction between agency and ownership37,38,39. But is the robotic finger treated as a tool rather than an additional limb? Previous studies have shown that humans can embody tools. Tools are additional to the human body by definition and are also known to change the body schema31,32 and body image of the user33. However, one critical aspect in which tool embodiment differs from limb embodiment is the lack of ownership, which is prominent only with limbs. Hence, the correlated changes we observed between the behavioral and ownership measures suggest that our robotic finger tended to be considered as an additional limb by the participants.

In this study we could not clarify the role of haptic feedback in the embodiment. Our experiment consisted of two participant groups, with and without feedback in the random condition, and our initial plan was to investigate also the embodiment differences between these groups. However, at least for the behavioral measures we considered in this study, we did not find a significant difference between the two groups. This may be due to two reasons. First, the feedback pin in the current design may not be conspicuous enough. Second, participants reported some haptic sensation from the robotic finger due to its movement dynamics, even in the absence of the feedback pin. In fact, due to the absence of differences, we were able to combine the data during our analysis. Further studies are required to clarify the effects of feedback on the embodiment of additional limbs.

Methods

Participants

Eighteen male participants (mean age 26.6 years, range 20–39 years, all right-handed) with normal or corrected-to-normal vision participated in this study. This number is similar to the participant numbers in previous similar studies1,38 of embodiment. It corresponds to an effect size of 0.7, alpha = 0.05, and power = 0.77 using the G*Power 3.1 software40,41. Each participant gave written informed consent before participating. The procedure was approved by the institutional review board of The University of Electro-Communications. Four participants who did not complete the experiments due to scheduling issues (one participant) or technical issues with the robot (three participants) are not included in the eighteen. All methods were performed in accordance with the relevant guidelines and regulations.

Independent control of the robotic sixth finger

Human joints are actuated by a redundant set of muscles and antagonist muscles. The difference between the antagonist flexor and extensor muscles provides the torque to actuate a joint while the co-activation, or the common part of their activations, lets us increase the impedance of the joints irrespective of the applied torque. In this study, it was critical for us that the participants moved their six fingers (the five real and one robotic finger) independently. We achieved this by isolating and utilizing the co-activation component of the muscle activations, which did not contribute to the motion of any of the real fingers, to actuate the robotic finger. The co-activation component in the muscle was isolated as follows.

Participants in our experiment started with a calibration session in which they were presented with a sinusoidally moving cursor and asked to repeatedly stiffen their hand and wrist (without moving their fingers) between relaxed and completely stiff states, coordinating their change of stiffness with the cursor: relaxing when it was at the base of a trough and stiffening maximally when it was at the top of a peak of the sinusoid. We recorded the EMG from the two flexors (\({E}_{f1}\), \({E}_{f2}\)) and two extensors (\({E}_{e1}\), \({E}_{e2}\)) through the calibration session. Following previous studies42, we assumed a linear joint-muscle model where the finger movement torque (\(\tau \)) is a consequence of the muscle tension and is correlated with the EMG, acting on the joint through a moment arm. As the participants do not move their wrist or fingers, the torque output is zero through the calibration period. Overall, these assumptions can be written as

where \(\beta\) represents the linear weights that include the moment arm and the calibration constant between the EMG and muscle tension. \(\beta\) was calculated using the root mean square fit on the flexor and extensor EMGs (after a third-order Butterworth filter of cutoff frequency 8 Hz) through the calibration session.

With the \(\beta\), one can subsequently estimate the co-activation at any time instance as

where min() represents the minimum function. The joint angle of the robotic finger (\(\theta\)) was then decided as

where \({\theta }_{bent}\) and \({\theta }_{extended}\) are the bent and extended positions of the finger. \(\alpha \) was adjusted for each participant by the experimenter during the calibration session to determine the minimum \(Ec\) that can be recognized as the co-activation.
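The control equations themselves are not reproduced in this text, so the following sketch shows only one plausible reading of the surrounding description and should not be taken as the authors' exact formulation. It assumes that the filtered EMG amplitudes are weighted by \(\beta\), that the co-activation \(Ec\) is the minimum of the weighted flexor and extensor sums, and that the finger switches between \({\theta }_{bent}\) and \({\theta }_{extended}\) when \(Ec\) crosses the threshold \(\alpha\). The function names, the split of \(\beta\) into flexor and extensor weights, and the example numbers are hypothetical.

```python
from typing import Sequence


def co_activation(
    flexor_emg: Sequence[float],
    extensor_emg: Sequence[float],
    beta_flexor: Sequence[float],
    beta_extensor: Sequence[float],
) -> float:
    """Common part of the weighted flexor and extensor activity (assumed form).

    Inputs are taken to be rectified, low-pass filtered EMG amplitudes.
    """
    flexor_drive = sum(b * e for b, e in zip(beta_flexor, flexor_emg))
    extensor_drive = sum(b * e for b, e in zip(beta_extensor, extensor_emg))
    # Only activity present in BOTH muscle groups counts as co-activation.
    return min(flexor_drive, extensor_drive)


def finger_angle(
    e_c: float, alpha: float, theta_bent: float, theta_extended: float
) -> float:
    """Bend the robotic finger only while co-activation exceeds the threshold."""
    return theta_bent if e_c > alpha else theta_extended


if __name__ == "__main__":
    beta_f, beta_e = [1.0, 1.0], [1.0, 1.0]  # placeholder weights
    # Co-contraction: both groups active, so the robotic finger bends.
    stiff = co_activation([0.5, 0.25], [0.25, 0.25], beta_f, beta_e)
    print(finger_angle(stiff, alpha=0.3, theta_bent=90.0, theta_extended=0.0))
    # Pure flexion: no extensor activity, so co-activation is zero.
    flex = co_activation([1.0, 1.0], [0.0, 0.0], beta_f, beta_e)
    print(finger_angle(flex, alpha=0.3, theta_bent=90.0, theta_extended=0.0))
```

Taking the minimum is what makes the command independent of ordinary finger movement in this reading: activating only the flexors or only the extensors leaves the common component near zero, whereas stiffening the wrist raises both and crosses the threshold.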

Experimental procedure

Habituation task

After adjusting the control parameters in the above calibration, participants performed a habituation task to bend the fingers of the left hand (Fig. 1B). The robotic finger was attached on the side of the little finger of the participant’s left hand, and the six-fingered glove was worn over it. Markers were attached to the glove to track the movement of each finger.

Participants were presented with music through a pair of headphones and were required to bend one of the six fingers in a pseudo-random order, as indicated by visual cues. The visual cues were presented aligned with the beats of the music. The participant worked in one of two habituation tasks:

Task 1

Ten participants performed a finger bending task synchronized with the music beats. The participants sat on a chair in front of a desk and put their elbows on the desk with their palms facing toward them. Light emitting diodes (LEDs) were fixed to the tips of the fingers of the glove worn by the participants. The LEDs lit up to indicate which finger was to be bent (see Fig. 1B left).

Task 2

Eight participants performed a finger tapping task synchronized with the music beats. Piano-like keys were displayed on a tablet PC placed on the desk in front of the participants, and one of the keys lit up to indicate which finger was to be bent. The participants were asked to hold their fingers over the keys, one above each, and touch the keys on the display by bending the corresponding finger (see Fig. 1B right).

We worked with these two different habituation tasks so as to explore possible effects due to the functionality of the tasks on embodiment since we expected that the tapping may be recognized as a more functional task than the bending1,43. However, we subsequently combined the two task groups since there were no noticeable distinctions. We confirmed that there were no statistical differences in any of the embodiment scores between the controllable and random conditions (see below) for the two task groups in the formal data (FDR-corrected p > 0.05 for multiple comparisons, the Wilcoxon rank-sum test for the scores of each question (Q1- Q7) between the two task groups).

The habituation task (either task 1 or task 2) required participants to perform 10 runs on music A (lasting 1 min) and music B (2 min). One participant, however, did 15 runs of each, and one did not perform music B. When there were technical problems with the robotic finger, the tasks were repeated after the robotic finger was fixed until the required number of runs was collected (data measured with such technical problems were discarded). Overall, across the participants, the mean duration of the habituation task was 51 min (s.d., 13 min).

Participants practiced the above tasks under two conditions: controllable and random. In the controllable condition, the robotic finger was linked to their EMG and could be manipulated voluntarily. In the random condition, the robotic finger was flexed at a predetermined random time and could not be controlled by the participant. The participants were however asked to still co-contract the wrist muscles, as with the controllable condition, when they wanted to bend the robotic finger. The order of the two conditions was randomized across the participants.

All participants were provided with haptic feedback with the stimulus pin in the controllable conditions. In the random conditions, eight participants were provided with haptic feedback using the stimulus pin, which was synchronized with the robotic finger movement. The remaining participants worked without the stimulus pin in the random condition. We initially planned this procedure also to examine the role of haptic feedback in the process of embodiment. However, subsequently we decided to combine the results from the two random conditions because participants reported haptic feedback due to the shifting of the weight of the finger when it moved, even when the stimulus pin was absent.

Pre- and post-habituation tests

We utilized two behavioral tests to examine the changes in perception caused by the habituation task. Body representation refers to perception, memory, and cognition related to the body and is updated continuously by sensory input44. Ownership may be measured via skin conductance variations in response to a threatening stimulus45, hand temperature46, or somatosensory evoked potentials47. These measures are noisy especially in tasks like ours that involve movement. On the other hand, several studies have shown that embodiment and/or ownership are often correlated with changes in body representations leading to changes in proprioceptive drift2,22 and visual localization of the real body48. Proprioceptive drift measures changes in the so-called “body schema” while the visual tests measure changes in the “body image” of the participant, but in the context of limb replacement. Here motivated by these studies, our experiments were designed to test the body schema (reaching test) and body image (finger localization test) to verify the embodiment of the independent limb.

The tests were conducted both before (pre) and after (post) habituation and were performed with the EMG sensors and the robotic finger removed. In order to avoid loss of any induced feeling of embodiment, the participants were blindfolded after the habituation task session and instructed to keep the hand afloat to prevent tactile contact between the habituation task session and the test sessions.

Reaching test

The reaching test required the participants to sit on a chair in front of the table. They placed their left hand on the table. A tablet PC (VAIO Z VJZ13AIE) placed in front of them on the table displayed a horizontal “target bar” extending from the left and across the center of the screen (see Fig. 3A), which the participants had to reach and touch with their left index finger. A cardboard box was placed on the table in front of the left hand such that it blocked the direct path of the left hand to the target bar on the tablet. The box forced the participants to curve their hand trajectory to avoid colliding with the box when they touched the target bar.

A colored square was presented on the right side of the tablet (Fig. 3A). The color of the square changed randomly (red, green, blue, or black). The duration of the color presentation was 500 ms for red, green, and blue and 3 s for black. The participants were instructed to move their left hand and touch the target as soon as they observed the black color of the square.

Participants performed 10 reach trials each in the pre- and post-habituation test sessions. We tracked the reach trajectories using a motion tracking marker attached to their index fingernail with MAC3D System (Motion analysis Corp.) to analyze whether and how the change in the features of the reach trajectories can be attributed to changes in the perceived width of the hand.
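To make the trajectory features concrete, here is a small sketch of how a path length and a start-to-end distance could be computed from tracked marker positions and then summarized over the trials of a session. It is an illustration under assumptions: the text does not give the exact feature definitions, so the function names, the use of straight-line segments between samples, and the sample standard deviation are all hypothetical choices.

```python
from math import dist
from statistics import mean, stdev
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]


def path_length(trajectory: Sequence[Point]) -> float:
    """Sum of straight-line distances between consecutive marker samples."""
    return sum(dist(p, q) for p, q in zip(trajectory, trajectory[1:]))


def start_end_distance(trajectory: Sequence[Point]) -> float:
    """Straight-line distance between the first and the last marker sample."""
    return dist(trajectory[0], trajectory[-1])


def summarize(values: Sequence[float]) -> Tuple[float, float]:
    """Mean and sample standard deviation of one feature across trials."""
    return mean(values), stdev(values)


def session_summary(trials: Sequence[Sequence[Point]]) -> Tuple[float, float]:
    """Mean and s.d. of the path length over the reach trials of one session."""
    lengths: List[float] = [path_length(t) for t in trials]
    return summarize(lengths)
```

With such summaries for the pre- and post-habituation sessions, the change attributed to habituation would be the post value minus the pre value, computed separately in the controllable and random conditions.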

Finger localization test

The finger localization test required the participants to sit on a chair in front of a table. They inserted their left hand between an open box top and the table surface while keeping the palm down and the fingers near each other so that their hand and fingers were not visible to the participants. A tablet PC was placed vertically, with the screen facing the participants, behind but adjacent to the box such that only a part of the screen was above the box top and was visible to the participants (Fig. 3B).

The participants were presented with a vertical target bar on the tablet screen, such that the part of the target above the box top was visible to them, while the part below the box top was not. The participants were instructed to touch the target line below the box top (without their hand being visible) with either their index or little finger. The name of the finger to be used was shown beside the target line. The participants performed 10 touches with each finger, both in the pre- and post-habituation test sessions. The index and little finger touch locations were recorded and analyzed for differences across conditions. The lateral distance between the target line and the position touched by the instructed finger defined the “drift” of the finger.
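The drift measure and its session-level summary can be sketched as below. This is a hedged illustration: it assumes the drift is taken as a signed lateral offset (touch position minus target position along the horizontal axis) and that the mean and sample standard deviation over the touches of one finger give the localization bias and uncertainty. The sign convention, the units, and the function names are assumptions, not details given in the text.

```python
from statistics import mean, stdev
from typing import Sequence, Tuple


def drift_summary(touch_x: Sequence[float], target_x: float) -> Tuple[float, float]:
    """Mean and sample s.d. of the signed lateral drift over one finger's touches."""
    drifts = [x - target_x for x in touch_x]
    return mean(drifts), stdev(drifts)


def habituation_change(
    pre: Tuple[float, float], post: Tuple[float, float]
) -> Tuple[float, float]:
    """Post minus pre change in (mean drift, drift s.d.)."""
    return post[0] - pre[0], post[1] - pre[1]


if __name__ == "__main__":
    # Made-up touch positions in millimetres, for illustration only.
    pre = drift_summary([101.0, 99.0, 100.5, 98.5], target_x=100.0)
    post = drift_summary([104.0, 96.0, 103.0, 97.5], target_x=100.0)
    print(habituation_change(pre, post))
```

An increase in the second element of the change corresponds to greater uncertainty in where the finger is felt to be, even when the mean drift is unchanged.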

Embodiment questionnaires

The questionnaire answers were recorded after the post-habituation tests by asking the participants to rate seven items written in Japanese on a seven-level Likert scale ranging from 1 (I did not feel that at all) to 7 (It felt extremely strong). The items of the questionnaire (translated to English) were:

- Q1: During the task of the artificial finger manipulation, the artificial finger moved when I wanted to move it.

- Q2: During the task of the artificial finger manipulation, the six-fingered hand began to look normal.

- Q3: During the task of the artificial finger manipulation, moving the artificial finger was as easy as moving the actual finger.

- Q4: During the task of the artificial finger manipulation, I felt that the artificial finger was a part of my hand.

- Q5: During the task of the artificial finger manipulation, I felt that I was moving the artificial finger by myself.

- Q6: During the task of the artificial finger manipulation, I felt that the artificial finger was my finger.

- Q7: My hand is (narrowed/normal/widened) after removing the artificial finger.

For Q7, “narrowed” was assigned a score of 1 and “widened” a score of 7.

In addition to these embodiment questionnaires, participants were also asked the following three questions to confirm whether they had performed the habituation task correctly:

- Q8: I play music video games regularly.

- Q9: During the task of the artificial finger manipulation, I was able to bend all my fingers except the artificial finger while following the rhythm.

- Q10: During the task of the artificial finger manipulation, all LEDs on the fingertips were visible well.

For habituation task 2, an additional instruction was appended to Q10 asking participants to regard “all LEDs on the fingertips” as “keys corresponding to fingers.”

Lastly, participants were also asked to report any interesting or strange feelings or experiences they had during the experiment.

Based on the answers to these additional questions, all participants were considered to have performed the tasks correctly, and none was removed from the data analysis.

Data analysis

Reaching test

The motion tracking data were smoothed with a fourth-order Butterworth filter (7 Hz) for each marker's trajectory using Cortex (Version 7). The coordinates were then rotated so that the X and Y axes followed the edge of the obstacle (see Fig. 3A). The peak Y-coordinate recorded in the time course of the index finger in each reach was taken as the end of the reach. We hypothesized that a change in perceived hand width would lead to a change in obstacle avoidance, and therefore analyzed three representative measures of obstacle avoidance: the total length of each reach, the X coordinate of the end point, and the difference between the end point and start point of the trajectories on each trial (see Fig. 3A). The differences in the mean and standard deviation of these three measures between the pre- and post-habituation test sessions gave us measures of obstacle avoidance for each participant, defined as \({\mu }_{length}^{reaching}\) and \({\sigma }_{length}^{reaching}\), \({\mu }_{end}^{reaching}\; \mathrm{ and } \; {\sigma }_{end}^{reaching}\), and \({\mu }_{end-start}^{reaching}\; \mathrm{ and } \; {\sigma }_{end-start}^{reaching}\), respectively.
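As an illustration only, the three reach measures and their pre/post changes could be computed along the following lines. This is a minimal sketch, not the authors' analysis code: the function names are hypothetical, each trajectory is assumed to be a list of (x, y) samples that has already been filtered and rotated, the end-minus-start difference is assumed to be taken along the X axis, and the change is assumed to be post minus pre.

```python
import math
from statistics import mean, stdev


def reach_measures(trajectory):
    """Return (path_length, end_x, end_minus_start_x) for one reach.

    trajectory: list of (x, y) samples, assumed already filtered and rotated.
    """
    # End of the reach: the sample with the peak Y coordinate.
    end_idx = max(range(len(trajectory)), key=lambda i: trajectory[i][1])
    path = trajectory[: end_idx + 1]
    # Total length: sum of distances between consecutive samples up to the end.
    length = sum(math.dist(p, q) for p, q in zip(path, path[1:]))
    start_x, end_x = path[0][0], path[-1][0]
    # Assumption: the end-minus-start difference is measured along X.
    return length, end_x, end_x - start_x


def session_change(pre_reaches, post_reaches):
    """Change (assumed post minus pre) in the mean and SD of each measure."""
    pre = list(zip(*(reach_measures(t) for t in pre_reaches)))
    post = list(zip(*(reach_measures(t) for t in post_reaches)))
    names = ("length", "end", "end-start")
    return {
        name: (mean(b) - mean(a), stdev(b) - stdev(a))
        for name, a, b in zip(names, pre, post)
    }
```

Calling `session_change` with the 10 pre-habituation and 10 post-habituation trajectories of one participant would yield one (mean change, SD change) pair per measure, corresponding to the six reaching quantities defined above.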

Finger localization test

We calculated the finger drift by subtracting the horizontal position touched by the instructed finger from that of the target line (see Fig. 3B). The changes in the mean and standard deviation of these values between the pre- and post-habituation tests were analyzed, which gave us measures of spatial drift for each participant, defined as \({\mu }_{index}^{localization}\), \({\sigma }_{index}^{localization}\), \({\mu }_{little}^{localization}\), \({\sigma }_{little}^{localization}\). We also estimated the hand width without the thumb by \({\mu }_{index}^{localization}-{\mu }_{little}^{localization}\).
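The drift computation can be sketched as follows. This is an illustrative sketch under stated assumptions rather than the authors' code: the function names are hypothetical, each trial is assumed to be stored as a (target_x, touched_x) pair in a common horizontal coordinate, and the change is assumed to be post minus pre.

```python
from statistics import mean, stdev


def drifts(trials):
    """Drift per trial: target position minus touched position (horizontal)."""
    return [target_x - touched_x for target_x, touched_x in trials]


def drift_change(pre_trials, post_trials):
    """Change (assumed post minus pre) in the mean and SD of the drift."""
    pre, post = drifts(pre_trials), drifts(post_trials)
    return mean(post) - mean(pre), stdev(post) - stdev(pre)


def hand_width_estimate(mu_index, mu_little):
    """Hand width without the thumb: index drift change minus little drift change."""
    return mu_index - mu_little
```

For one participant, `drift_change` would be called once with the index finger trials and once with the little finger trials, and the two resulting mean changes passed to `hand_width_estimate`.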

Bootstrap estimation of correlation between questionnaire scores and behavior measures

To estimate correlations between questionnaire scores and behavioral measures across individual participants while suppressing the influence of outliers, we applied bootstrap sampling to the questionnaire scores and behavioral measures. The bootstrap procedure was as follows. First, pairs of a questionnaire score and a behavioral measure were resampled with replacement to the original sample size (N = 18). Second, a Spearman’s rank correlation coefficient was calculated from the 18 resampled pairs. Third, these steps were repeated 1000 times, and the resulting distribution of correlation values was used to define the confidence interval containing 95% of the values, treating the bottom and top 2.5% of the estimates as statistical outliers. We performed this procedure for every combination of the seven questionnaire scores and eleven behavioral measures, yielding 77 distributions of correlation values. We then identified the pairs of a questionnaire item and a behavioral measure whose confidence interval did not include zero, that is, whose lower bound was above zero or whose upper bound was below zero. These pairs were considered to show a significant correlation.
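The three bootstrap steps above can be sketched as follows for a single questionnaire item and a single behavioral measure. This is a minimal standard-library illustration, not the authors' implementation: the function names and the fixed random seed are hypothetical, tied values receive average ranks, and resamples in which one variable happens to be constant (so that the correlation is undefined) are redrawn, which is an assumption not stated in the text.

```python
import math
import random


def average_ranks(values):
    """Rank values from 1..n, giving tied values the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        rank = (i + j) / 2 + 1  # mean of the 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = rank
        i = j + 1
    return ranks


def spearman(xs, ys):
    """Spearman's rho as the Pearson correlation of ranks; None if undefined."""
    rx, ry = average_ranks(xs), average_ranks(ys)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    vx = sum((a - mx) ** 2 for a in rx)
    vy = sum((b - my) ** 2 for b in ry)
    if vx == 0 or vy == 0:
        return None
    return cov / math.sqrt(vx * vy)


def bootstrap_ci(scores, measures, n_boot=1000, seed=0):
    """95% percentile interval of Spearman's rho over resampled pairs."""
    if spearman(scores, measures) is None:
        raise ValueError("correlation undefined: one variable is constant")
    rng = random.Random(seed)  # hypothetical fixed seed, for reproducibility
    pairs = list(zip(scores, measures))
    rhos = []
    while len(rhos) < n_boot:
        sample = rng.choices(pairs, k=len(pairs))  # resample with replacement
        rho = spearman(*zip(*sample))
        if rho is not None:  # assumption: redraw degenerate resamples
            rhos.append(rho)
    rhos.sort()
    cut = int(0.025 * n_boot)  # drop the bottom and top 2.5% of estimates
    return rhos[cut], rhos[-cut - 1]


def is_significant(ci):
    """A pair counts as significant when the interval excludes zero."""
    low, high = ci
    return low > 0 or high < 0
```

Running `bootstrap_ci` for each of the 7 × 11 score and measure combinations and passing each interval to `is_significant` would reproduce the selection rule described above.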

References

Aymerich-Franch, L. & Ganesh, G. The role of functionality in the body model for self-attribution. Neurosci. Res. 104, 31–37. https://doi.org/10.1016/j.neures.2015.11.001 (2016).

Botvinick, M. & Cohen, J. Rubber hands “feel” touch that eyes see. Nature 391, 756 (1998).

Longo, M. R., Schüür, F., Kammers, M. P. M., Tsakiris, M. & Haggard, P. What is embodiment? A psychometric approach. Cognition 107, 978–998 (2008).

Slater, M., Perez-Marcos, D., Ehrsson, H. H. & Sanchez-Vives, M. V. Inducing illusory ownership of a virtual body. Front. Neurosci. 3, 214–220 (2009).

Slater, M., Spanlang, B., Sanchez-Vives, M. V. & Blanke, O. First person experience of body transfer in virtual reality. PLoS ONE 5, e10564 (2010).

Hagiwara, T., Ganesh, G., Sugimoto, M., Inami, M. & Kitazaki, M. Individuals prioritize the reach straightness and hand jerk of a shared avatar over their own. iScience https://doi.org/10.1016/j.isci.2020.101732 (2020).

Nishio, S., Watanabe, T., Ogawa, K. & Ishiguro, H. Body Ownership Transfer to Teleoperated Android Robot. Social Robotics 398–407 (Springer, 2012).

Alimardani, M., Nishio, S. & Ishiguro, H. Humanlike robot hands controlled by brain activity arouse illusion of ownership in operators. Sci. Rep. 3, 2396 (2013).

Aymerich-Franch, L., Petit, D., Ganesh, G. & Kheddar, A. Embodiment of a humanoid robot is preserved during partial and delayed control. In: 2015 IEEE International Workshop on Advanced Robotics and its Social Impacts, Lyon (France), 1–3 July (2015).

Aymerich-Franch, L., Petit, D., Ganesh, G. & Kheddar, A. The second me: seeing the real body during humanoid robot embodiment produces an illusion of bi-location. Conscious. Cogn. 46, 99–109. https://doi.org/10.1016/j.concog.2016.09.017 (2016a).

Aymerich-Franch, L., Petit, D., Kheddar, A. & Ganesh, G. Forward modelling the rubber hand: illusion of ownership modifies motor-sensory predictions by the brain. R. Soc. Open Sci. 3, 160407. https://doi.org/10.1098/rsos.160407 (2016b).

Aymerich-Franch, L., Petit, D., Ganesh, G. & Kheddar, A. Non-human looking robot arms induce illusion of embodiment. Int. J. Soc. Robot. 9, 479–490. https://doi.org/10.1007/s12369-017-0397-8 (2017a).

Aymerich-Franch, L., Petit, D., Ganesh, G. & Kheddar, A. Object touch by a humanoid robot avatar induces haptic sensation in the real hand. J. Comput-Mediat. Comm. 22, 215–230. https://doi.org/10.1111/jcc4.12188 (2017b).

Suzuki, Y., Galli, L., Ikeda, A., Itakura, S. & Kitazaki, M. Measuring empathy for human and robot hand pain using electroencephalography. Sci. Rep. 5, 15924. https://doi.org/10.1038/srep15924 (2015).

Ehrsson, H. How many arms make a pair? Perceptual illusion of having an additional limb. Perception 38, 310–312 (2009).

Steptoe, W., Steed, A. & Slater, M. Human tails: ownership and control of extended humanoid avatars. IEEE Trans. Vis. Comput. Graph. 19, 583–590 (2013).

Iwasaki, Y. & Iwata, H. A Face Vector—The point instruction type interface for manipulation of an extended body in dual-task situations. In Proceedings of 2018 IEEE International Conference on Cyborg and Bionic Systems (CBS2018) (2018).

Llorens-Bonilla, B., Parietti, F. & Asada, H. H. Demonstration-based control of supernumerary robotic limbs. In 2012 IEEE/RSJ International Conference on Intelligent Robots and Systems, 3936–3942. https://doi.org/10.1109/IROS.2012.6386055 (2012).

Kieliba, P., Clode, D., Maimon-Mor, R. O. & Makin, T. R. Robotic hand augmentation drives changes in neural body representation. Sci. Robot. 6, eabd7935. https://doi.org/10.1126/scirobotics.abd7935 (2021).

Kokkinara, E., Kilteni, K., Blom, K. J. & Slater, M. First person perspective of seated participants over a walking virtual body leads to illusory agency over the walking. Sci. Rep. 6, 28879. https://doi.org/10.1038/srep28879 (2016).

Burin, D., Liu, Y., Yamaya, N. & Kawashima, R. Virtual training leads to physical, cognitive and neural benefits in healthy adults. Neuroimage 222, 117297. https://doi.org/10.1016/j.neuroimage.2020.117297 (2020).

Tsakiris, M. & Haggard, P. The rubber hand illusion revisited: visuotactile integration and self-attribution. J. Exp. Psychol. Hum. Percept. Perform. 31, 80–91 (2005).

Haans, A., Ijsselsteijn, W. A. & de Kort, Y. A. W. The effect of similarities in skin texture and hand shape on perceived ownership of a fake limb. Body Image 5, 389–394 (2008).

Guterstam, A. & Ehrsson, H. H. Disowning one’s seen real body during an out-of-body illusion. Conscious. Cogn. 21, 1037–1042 (2012).

Pavani, F. & Zampini, M. The role of hand size in the fake-hand illusion paradigm. Perception 36, 1547–1554 (2007).

Pavani, F., Spence, C. & Driver, J. Visual capture of touch: out-of-the-body experiences with rubber gloves. Psychol. Sci. 11, 353–359 (2000).

Austen, E. L., Soto-Faraco, S., Enns, J. T. & Kingstone, A. Mislocalizations of touch to a fake hand. Cogn. Affect. Behav. Neurosci. 4, 170–181 (2004).

Ehrsson, H. H., Spence, C. & Passingham, R. E. That’s my hand! Activity in premotor cortex reflects feeling of ownership of a limb. Science 305, 875–877 (2004).

Costantini, M. & Haggard, P. The rubber hand illusion: sensitivity and reference frame for body ownership. Conscious. Cogn. 16, 229–240 (2007).

Lloyd, D. M. Spatial limits on referred touch to an alien limb may reflect boundaries of visuo-tactile peripersonal space surrounding the hand. Brain Cogn. 64, 104–109 (2007).

Cardinali, L. et al. Tool-use induces morphological updating of the body schema. Curr. Biol. 19, 1157 (2009).

Maravita, A. & Iriki, A. Tools for the body (schema). Trends Cogn. Sci. 8, 79–86 (2004).

Sposito, A., Bolognini, N., Vallar, G. & Maravita, A. Extension of perceived arm length following tool-use: Clues to plasticity of body metrics. Neuropsychologia 50, 2187–2194 (2012).

Ganesh, G., Yoshioka, T., Osu, R. & Ikegami, T. Immediate tool incorporation processes determine human motor planning with tools. Nat. Commun. 5, 4424 (2014).

Iriki, A., Tanaka, M. & Iwamura, Y. Coding of modified body schema during tool use by macaque postcentral neurons. NeuroReport 7, 2325–2330 (1996).

Canzoneri, E. et al. Tool-use reshapes the boundaries of body and peripersonal space representations. Exp. Brain Res. 228, 25–42 (2013).

Qu, J., Ma, K. & Hommel, B. Cognitive load dissociates explicit and implicit measures of body ownership and agency. Psychon. Bull. Rev. 28, 1567–1578. https://doi.org/10.3758/s13423-021-01931-y (2021).

Braun, N. et al. The senses of agency and ownership: a review. Front. Psychol. 9, 535. https://doi.org/10.3389/fpsyg.2018.00535 (2018).

Shibuya, S., Unenaka, S. & Ohki, Y. Body ownership and agency: task-dependent effects of the virtual hand illusion on proprioceptive drift. Exp. Brain Res. 235, 121–134. https://doi.org/10.1007/s00221-016-4777-3 (2017).

Faul, F., Erdfelder, E., Lang, A. G. & Buchner, A. G*Power 3: a flexible statistical power analysis program for the social, behavioral, and biomedical sciences. Behav. Res. Methods 39, 175–191 (2007).

Faul, F., Erdfelder, E., Buchner, A. & Lang, A. G. Statistical power analyses using G*Power 3.1: tests for correlation and regression analyses. Behav. Res. Methods 41, 1149–1160 (2009).

Tee, K. P., Franklin, D. W., Kawato, M., Milner, T. E. & Burdet, E. Concurrent adaptation of force and impedance in the redundant muscle system. Biol. Cybern. 102, 31–44. https://doi.org/10.1007/s00422-009-0348-z (2010).

Cardinali, L. et al. Grab an object with a tool and change your body: Tool-use-dependent changes of body representation for action. Exp. Brain Res. 218, 259–271. https://doi.org/10.1007/s00221-012-3028-5 (2012).

Wen, W. et al. Goal-directed movement enhances body representation updating. Front. Hum. Neurosci. 10, 329. https://doi.org/10.3389/fnhum.2016.00329 (2016).

Armel, K. C. & Ramachandran, V. S. Projecting sensations to external objects: evidence from skin conductance response. Proc. Biol. Sci. 270, 1499–1506. https://doi.org/10.1098/rspb.2003.2364 (2003).

Moseley, G. L., Gallace, A. & Spence, C. Bodily illusions in health and disease: physiological and clinical perspectives and the concept of a cortical “body matrix”. Neurosci. Biobehav. Rev. 36, 34–46 (2012).

Heydrich, L. et al. Cardio-visual full body illusion alters bodily self-consciousness and tactile processing in somatosensory cortex. Sci. Rep. 8, 9230. https://doi.org/10.1038/s41598-018-27698-2 (2018).

Erro, R., Marotta, A., Tinazzi, M., Frena, E. & Fiorio, M. Judging the position of the artificial hand induces a “visual” drift towards the real one during the rubber hand illusion. Sci. Rep. 8, 2531. https://doi.org/10.1038/s41598-018-20551-6 (2018).

Acknowledgements

The authors would like to thank Yui Takahara for technical assistance with the experiments. This research was supported by JST ERATO Grant Number JPMJER1701 (Inami JIZAI Body Project), and JSPS KAKENHI Grant Number 15K12623.

Author information

Contributions

GG and YM developed the concept; YS and GG developed the finger design and control; KU, GG, and YM developed the experiment design and protocol; KU collected and analyzed the data; KU, GG, and YM wrote the paper. All authors reviewed the final manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher's note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Umezawa, K., Suzuki, Y., Ganesh, G. et al. Bodily ownership of an independent supernumerary limb: an exploratory study. Sci Rep 12, 2339 (2022). https://doi.org/10.1038/s41598-022-06040-x

DOI: https://doi.org/10.1038/s41598-022-06040-x
