Abstract

Several early childhood obesity prediction models have been developed, but none for New Zealand's diverse population. We aimed to develop and validate a model for predicting obesity in 4–5-year-old New Zealand children, using parental and infant data from the Growing Up in New Zealand (GUiNZ) cohort. Obesity was defined as body mass index (BMI) for age and sex ≥ 95th percentile. Data on GUiNZ children were used for derivation (n = 1731) and internal validation (n = 713). External validation was performed using data from the Prevention of Overweight in Infancy Study (POI, n = 383) and Pacific Islands Families Study (PIF, n = 135) cohorts. The final model included: birth weight, maternal smoking during pregnancy, maternal pre-pregnancy BMI, paternal BMI, and infant weight gain. Discrimination accuracy was adequate [AUROC = 0.74 (0.71–0.77)], and remained so when validated internally [AUROC = 0.73 (0.68–0.78)] and externally on PIF [AUROC = 0.74 (0.66–0.82)] and POI [AUROC = 0.80 (0.71–0.90)]. Positive predictive values were variable but low across the risk threshold range (GUiNZ derivation 19–54%; GUiNZ validation 19–48%; and POI 8–24%), although more consistent in the PIF cohort (52–61%), all indicating high rates of false positives. Although this early childhood obesity prediction model could inform early obesity prevention, high rates of false positives might create unwarranted anxiety for families.

Introduction

Worldwide, an estimated 38 million children under the age of 5 years are above the body mass index (BMI) cut-off for what is considered a healthy weight1. In New Zealand, 14.9% of 4–5-year-olds have obesity, and 2.9% have extreme obesity2, with Māori and Pacific children experiencing disproportionately higher levels of obesity than children from other ethnic groups2. Childhood obesity tracks into later life3, even in the very young; having a BMI or weight-for-length at or above the World Health Organization (WHO) 85th percentile before the age of 18 months predicts obesity at 6 years of age4. Such findings highlight the importance of early intervention.

There are a number of potentially modifiable risk factors associated with early childhood obesity, including maternal smoking during pregnancy5,6,7, high maternal pre-pregnancy BMI5,6,7, excessive gestational weight gain5,6, high birth weight5,7, rapid infant weight gain5,7, high-protein infant formula7, and poor infant sleep5,7. Despite the identification of potentially modifiable risk factors, early childhood obesity interventions have reported inconsistent results8, although a recent meta-analysis of four trials, including one based in New Zealand, reported that early intervention reduced infants’ BMI z-scores by 18–24 months9. However, health professionals report they lack the knowledge required to confidently identify obesity risk in young children10,11,12,13. Providing health professionals with a tool that enables accurate prediction of an infant’s risk of obesity could serve to increase the effectiveness of early childhood obesity interventions, through enabling timely intervention. Importantly, any such model would need to be accurate enough to warrant telling families what could be worrying information for them14. In particular, a prediction model with a low positive predictive value (PPV, i.e. the probability that those considered to be at risk by the model will actually go on to develop obesity) would likely create considerable unwarranted anxiety.

Internationally, several prediction models have been developed to determine risk of early childhood obesity15, but to date no such model has been developed for the ethnically diverse New Zealand population. Prediction models should be developed and validated using participant data that are representative of the population in which the model will be used16. Thus, we aimed to develop, internally validate, and externally validate a prediction model for obesity at 4–5 years of age for New Zealand children. Additionally, because the prevalence of severe childhood obesity is of increasing concern, and children with severe obesity tend to have poorer clinical outcomes than those with less severe forms17, we also aimed to derive and validate models for severe childhood obesity in the same population.

Methods

Primary study population

The derivation and internal validation samples were obtained using data from the Growing Up in New Zealand (GUiNZ) study, a longitudinal, prospective birth cohort that recruited 6853 children via their pregnant mothers with an estimated due date between 25 April 2009 and 25 March 2010, who were resident in the greater Auckland, Counties-Manukau, and Waikato District Health Board regions of New Zealand18. The cohort characteristics and recruitment strategy have been described elsewhere18. The generalizability of the recruited cohort to all current New Zealand births has also been demonstrated, especially with regard to ethnicity and socio-demographics19. Multiple data collection waves have occurred from pregnancy and throughout the pre-school period. Sociodemographic information, including parental education, ethnicity, and socioeconomic deprivation, was collected during pregnancy, as were maternal pre-pregnancy BMI and paternal BMI. Consent for linkage to routine birth records was also sought from parents to complement parent-reported size at birth measures18. Socioeconomic status was determined using the NZDep2006 (refs 18,20), an area-level measure of deprivation, which provides scores ranging from 1 (least deprived areas) to 10 (most deprived areas)20. At ages 2 and 4 years, trained GUiNZ researchers obtained height and weight measurements from the children using standardised protocols during face-to-face home interviews21.

Populations for external validation

Two populations were used for external validation of the prediction models. External validation is vital to determine the generalisability of a prediction model to populations that are reflective of, but not identical to, the population used to derive the model22. Prevention of Overweight in Infancy (POI) was a randomised controlled trial of interventions to prevent overweight during infancy23. In brief, 802 mothers and infants living in Dunedin (New Zealand) were recruited between 2009 and 2012. Pregnant women were eligible to participate if they were over 16 years of age, could communicate in English or the indigenous Māori language, and were planning to live in the Dunedin area for the following 2 years23. Anthropometric measurements were taken by trained researchers when the children were 5 years old24. The majority of children in POI (79%) were of New Zealand European ethnicity23.

The Pacific Islands Families (PIF) study recruited newborn infants of Pacific Island ethnicity born at Middlemore Hospital (Auckland, New Zealand) between March and December 200025. Infants were considered to be of Pacific Island ethnicity if at least one parent identified themselves as such. Participants joined the study at their 6-week follow-up, when 1376 eligible mothers (1398 infants) consented to and completed an interview. Children’s heights and weights were measured by trained researchers using standardized equipment at approximately 4 years of age26.

Outcome

Children's BMI measures were transformed into z-scores as per WHO standards28,29. The primary outcome for the childhood obesity model was a binary outcome of obesity at age 4–5 years, which was defined as a BMI z-score ≥ 1.645 (or ≥ 95th percentile for age and sex)30.

A further two models were derived to predict severe childhood obesity. The outcome for the first was the likelihood of severe childhood obesity at age 4–5 years, defined as a BMI z-score ≥ 1.974 (i.e. ≥ 120% of the 95th percentile for age and sex); for the second model, it was defined as a BMI z-score ≥ 2.326 (or ≥ 99th percentile for age and sex)17.
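
The correspondence between the percentile and z-score cut-offs above follows from the standard normal distribution. The following is a minimal Python sketch, assuming BMI z-scores have already been computed against the WHO standards; the function names are illustrative and are not part of the study's SPSS/SAS analysis.

```python
from statistics import NormalDist

# Standard normal distribution, used to map between z-scores and percentiles.
STANDARD_NORMAL = NormalDist(mu=0.0, sigma=1.0)


def percentile_to_z(percentile: float) -> float:
    """Return the z-score corresponding to a percentile given on a 0-100 scale."""
    return STANDARD_NORMAL.inv_cdf(percentile / 100.0)


def z_to_percentile(z: float) -> float:
    """Return the percentile (0-100 scale) corresponding to a z-score."""
    return 100.0 * STANDARD_NORMAL.cdf(z)


def meets_cutoff(bmi_z: float, cutoff: float = 1.645) -> bool:
    """Binary outcome: True when the BMI z-score is at or above the cut-off."""
    return bmi_z >= cutoff


if __name__ == "__main__":
    print(round(percentile_to_z(95), 3))  # 95th percentile cut-off
    print(round(percentile_to_z(99), 3))  # 99th percentile cut-off
    print(meets_cutoff(1.80))             # hypothetical child with z = 1.80
```

The same `meets_cutoff` helper can be reused for the two severe-obesity definitions by passing `cutoff=1.974` or `cutoff=2.326`, the values stated in the text.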

Model development

Participants from GUiNZ with complete maternal and paternal data were randomly split into derivation (70%) and validation (30%) cohorts. A large number of categorical and continuous variables were selected from GUiNZ (Supplementary Table S1). The associations between categorical variables and obesity in childhood were examined using Chi-square tests. For continuous variables, univariable logistic regressions were used. All parameters displaying an association with p < 0.10 were selected for the next step.
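
A random 70/30 split of this kind can be sketched as follows. This is an illustration only: the exact randomisation procedure is not described in the text, and the seed, the function name, and the use of integer participant identifiers are assumptions.

```python
import random


def split_cohort(participant_ids, derivation_fraction=0.70, seed=2009):
    """Randomly partition participants into derivation and validation groups.

    The seed is a hypothetical value, fixed only so the split is reproducible.
    """
    ids = list(participant_ids)
    rng = random.Random(seed)
    rng.shuffle(ids)
    n_derivation = round(derivation_fraction * len(ids))
    return ids[:n_derivation], ids[n_derivation:]


if __name__ == "__main__":
    derivation, validation = split_cohort(range(1000))
    print(len(derivation), len(validation))
```

Every participant ends up in exactly one of the two groups, so the validation cohort plays no part in model derivation.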

Pairwise associations between all continuous variables were examined using Pearson's correlation coefficients, with high collinearity defined as |r| ≥ 0.5 (ref. 31). Similarly, associations between categorical variables were examined to identify cases of high collinearity based on the phi (φ) coefficient, defined as |φ| ≥ 0.5. Whenever high collinearity between two variables was identified, one of the two was eliminated, primarily based on its practicality for use in routine clinical practice. The remaining parameters were selected for further investigation. In addition, two-way interactions between all remaining variables were examined, and those that were statistically significant at p < 0.05 were also selected.
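
The collinearity screen for continuous variables can be illustrated with a short Python sketch. The variable names and values in the usage example are hypothetical, and the choice of which variable in a flagged pair to drop remains a manual judgement, as described above.

```python
from itertools import combinations
from math import sqrt


def pearson_r(x, y):
    """Pearson's correlation coefficient for two equal-length sequences."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / sqrt(var_x * var_y)


def collinear_pairs(columns, threshold=0.5):
    """Return (name_a, name_b, r) for every pair with |r| >= threshold."""
    flagged = []
    for (name_a, a), (name_b, b) in combinations(columns.items(), 2):
        r = pearson_r(a, b)
        if abs(r) >= threshold:
            flagged.append((name_a, name_b, round(r, 3)))
    return flagged


if __name__ == "__main__":
    # Hypothetical toy data, not taken from GUiNZ.
    toy = {
        "birth_weight": [1, 2, 3, 4, 5],
        "birth_length": [2, 4, 6, 8, 10],
        "maternal_age": [2, 1, 2, 1, 2],
    }
    print(collinear_pairs(toy))
```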

Ethnicity in GUiNZ was self-reported, and participants were able to select multiple ethnic groups with which they identified, and to self-prioritise their main ethnicity. Children’s expected ethnicity was also reported by their mothers19, but for a number of reasons, maternal prioritised ethnicity was used to represent children’s ethnicity in this study. Firstly, paternal demographic information is not always reliably available during pregnancy. Secondly, previous work on GUiNZ data has demonstrated that children’s reported ethnicity can vary by parent32. Lastly, maternal contributions to overweight or obesity in offspring, or risk factors such as high birth-weight, have been shown to be equal to33,34 or greater than35,36 that of paternal contributions. Ethnicity was defined using a hierarchical system of classification, such that if multiple ethnicities were selected, the participant was assigned to a single category37. Given the small numbers of participants in the categories ‘Other’ and ‘MELAA’ (Middle Eastern, Latin American and African), these were combined as ‘Other ethnicities’.

Model discrimination was defined as the model's ability to adequately differentiate those with a high risk of an event from those with a low risk38, and it was estimated using the area under the receiver operating characteristic curve (AUROC). The following thresholds to assess model discrimination based on AUROC were adopted: poor (< 0.60), possibly useful (≥ 0.60 and < 0.70), acceptable (≥ 0.70 and < 0.80), excellent (≥ 0.80 and < 0.90), and outstanding (≥ 0.90)38,39.
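
As an illustration of what the AUROC measures, the sketch below computes it as the proportion of (case, non-case) pairs in which the case received the higher predicted risk, with ties counted as half, and then applies the descriptive bands listed above. This pairwise formulation is a standard equivalent of the area under the ROC curve; it is not necessarily how SPSS or SAS computes it internally, and the function names are illustrative.

```python
def auroc(outcomes, risks):
    """AUROC as the probability that a case is ranked above a non-case.

    outcomes: iterable of 0/1 values; risks: matching predicted risks.
    """
    cases = [r for y, r in zip(outcomes, risks) if y == 1]
    non_cases = [r for y, r in zip(outcomes, risks) if y == 0]
    wins = 0.0
    for case_risk in cases:
        for non_case_risk in non_cases:
            if case_risk > non_case_risk:
                wins += 1.0
            elif case_risk == non_case_risk:
                wins += 0.5
    return wins / (len(cases) * len(non_cases))


def discrimination_label(auc):
    """Map an AUROC value to the descriptive bands adopted in the text."""
    if auc < 0.60:
        return "poor"
    if auc < 0.70:
        return "possibly useful"
    if auc < 0.80:
        return "acceptable"
    if auc < 0.90:
        return "excellent"
    return "outstanding"


if __name__ == "__main__":
    # Hypothetical toy data: two non-cases followed by two cases.
    value = auroc([0, 0, 1, 1], [0.10, 0.40, 0.35, 0.80])
    print(value, discrimination_label(value))
```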

Model calibration was defined as its goodness of fit or extent to which the model correctly estimated the absolute risk38, and was determined using the Hosmer–Lemeshow test39. A p value < 0.05 was considered evidence of poor model calibration.
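
For reference, the Hosmer–Lemeshow statistic is conventionally obtained by sorting participants into G groups by predicted risk and comparing observed with expected numbers of events in each group. The formulation below is the standard textbook one; the number of groups (often G = 10) is an assumption here, as the text does not state what was used.

```latex
\hat{C} \;=\; \sum_{g=1}^{G} \frac{\left(O_g - E_g\right)^2}{E_g \left(1 - E_g / n_g\right)},
\qquad
E_g \;=\; \sum_{i \in g} \hat{p}_i ,
\qquad
\hat{C} \;\overset{\text{approx.}}{\sim}\; \chi^2_{G-2}
```

Here O_g is the observed number of children with obesity in group g, n_g is the group size, and \hat{p}_i is the predicted risk for child i. A small p value indicates that observed and expected counts disagree, which is why p < 0.05 is read as evidence of poor calibration.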

In addition, the following parameters were obtained to assess model accuracy and predictive capacity: sensitivity, specificity, positive predictive value (PPV), and negative predictive value (NPV).
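
The four accuracy measures reported in Table 3 (sensitivity, specificity, PPV, and NPV) all derive from a two-by-two classification of predicted against observed outcomes at a chosen risk threshold. The sketch below is a minimal illustration; it assumes the threshold is applied directly to the predicted risk, whereas the study reports percentile-based thresholds, and all names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ConfusionCounts:
    true_positives: int = 0
    false_positives: int = 0
    false_negatives: int = 0
    true_negatives: int = 0


def count_outcomes(risks, outcomes, threshold):
    """Cross-classify participants flagged at `threshold` against outcomes."""
    counts = ConfusionCounts()
    for risk, has_outcome in zip(risks, outcomes):
        flagged = risk >= threshold
        if flagged and has_outcome:
            counts.true_positives += 1
        elif flagged and not has_outcome:
            counts.false_positives += 1
        elif not flagged and has_outcome:
            counts.false_negatives += 1
        else:
            counts.true_negatives += 1
    return counts


def _ratio(numerator, denominator):
    """Return numerator/denominator, or NaN when the denominator is zero."""
    return numerator / denominator if denominator else float("nan")


def accuracy_measures(c):
    """Sensitivity, specificity, PPV, and NPV from confusion counts."""
    return {
        "sensitivity": _ratio(c.true_positives, c.true_positives + c.false_negatives),
        "specificity": _ratio(c.true_negatives, c.true_negatives + c.false_positives),
        "ppv": _ratio(c.true_positives, c.true_positives + c.false_positives),
        "npv": _ratio(c.true_negatives, c.true_negatives + c.false_negatives),
    }


if __name__ == "__main__":
    # Hypothetical toy data.
    counts = count_outcomes([0.1, 0.3, 0.6, 0.9], [0, 1, 0, 1], threshold=0.5)
    print(accuracy_measures(counts))
```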

Using the derivation cohort, selected variables were included in a logistic regression model. Models were progressively modified with the addition and/or removal of parameters. This step was repeated multiple times, with model discrimination and calibration assessed at each step, until the most parsimonious model with the best discrimination possible was obtained, while accounting for a predictor's usability in routine practice. Using the method described by Moons et al.22, the model was then internally and externally validated, with the same equation applied to the GUiNZ internal validation cohort, and POI and PIF external validation cohorts.
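
Applying "the same equation" to another cohort means evaluating the fitted logistic regression equation, with its coefficients held fixed, on each new participant. The sketch below shows the mechanics only. The coefficient values and predictor units are HYPOTHETICAL placeholders chosen for illustration; the actual estimates are those reported in Table 2.

```python
from math import exp

# HYPOTHETICAL coefficients and units, for illustration only.
# They are NOT the fitted values from the study.
EXAMPLE_COEFFICIENTS = {
    "intercept": -8.0,
    "birth_weight_kg": 0.50,
    "maternal_smoking": 0.40,       # 1 = smoked during pregnancy, 0 = did not
    "maternal_bmi": 0.08,
    "paternal_bmi": 0.07,
    "infant_weight_gain_kg": 0.60,
}


def predicted_risk(predictors, coefficients=EXAMPLE_COEFFICIENTS):
    """Logistic model: risk = 1 / (1 + exp(-(b0 + sum(b_i * x_i))))."""
    linear_predictor = coefficients["intercept"] + sum(
        coefficients[name] * value for name, value in predictors.items()
    )
    return 1.0 / (1.0 + exp(-linear_predictor))


if __name__ == "__main__":
    # Hypothetical infant.
    infant = {
        "birth_weight_kg": 3.6,
        "maternal_smoking": 0,
        "maternal_bmi": 27.0,
        "paternal_bmi": 28.0,
        "infant_weight_gain_kg": 5.5,
    }
    print(round(predicted_risk(infant), 3))
```

Because the coefficients are frozen at the derivation step, any loss of discrimination or calibration in the validation cohorts reflects how well the equation transfers, not refitting.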

Analyses were performed in SPSS v25 (IBM Corp, Armonk, NY, USA) and SAS v9.4 (SAS Institute, Cary, USA). All tests were two-tailed, with statistical significance set at p < 0.05 and without adjustment for multiple comparisons. There was no imputation of missing data.

Ethics approval

This study solely involved the use of anonymized data. Ethics approval for GUiNZ was granted by the Ministry of Health Northern Y Regional Ethics Committee, New Zealand18; for POI by the New Zealand Lower South Regional Ethics Committee, New Zealand27; and for PIF by the Auckland branch of the National Ethics Committee, New Zealand26. This study was performed in accordance with all appropriate institutional and international guidelines and regulations for research. Written informed consent was obtained from a parent or legal guardian of all participants from the individual studies.

Disclosure

The authors have no financial or non-financial conflicts of interest to disclose that may be relevant to this work. The funders had no role in study design, data analysis or interpretation, decision to publish, or preparation of this manuscript.

Results

GUiNZ

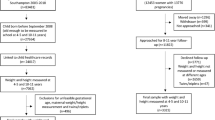

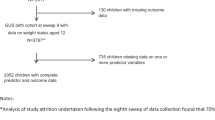

The selected derivation cohort consisted of 1731 participants, while the selected internal validation cohort consisted of 713 participants (Supplementary Figure S1). The number of participants that we ultimately used was reduced mostly due to the lack of paternal and/or infancy weight gain data (Supplementary Figure S1), which were identified as key parameters during variable selection. Of note, Supplementary Table S2 shows that there were some differences between included and excluded GUiNZ participants. In particular, the maternal ethnicity and sociodemographic status of participants differed, with more participants with mothers of Māori or Pacific ethnicity and/or from areas of greater socioeconomic deprivation in the excluded group. The exclusion of Māori and Pacific mother-infant pairs, primarily due to missing paternal data, is likely to have introduced some bias. However, ethnic-specific models (New Zealand European, Māori, and Pacific) were also derived, and yielded no clear advantage in comparison to the overall model (data not shown). For those included participants, the prevalence of obesity was similar in the GUiNZ derivation and validation cohorts (15.8% and 16.1%, respectively), while other demographic factors were also similar (Table 1).

Model predictors

The final prediction model included maternal pre-pregnancy BMI, paternal BMI, birth weight, maternal smoking during pregnancy, and infant weight gain as significant independent predictors of childhood obesity (Table 2).

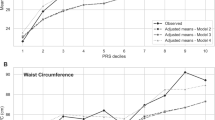

Derivation

Discrimination accuracy was acceptable for the derivation model [AUROC = 0.74 (0.71, 0.77), p < 0.0001], and there was no evidence of poor model calibration (p value for Hosmer–Lemeshow test = 0.27). Table 3 shows the sensitivity, specificity, PPV, and NPV of the derivation model at various risk thresholds ranging from 0.20 to 0.95. PPVs ranged from 18.6 to 54.0% (Table 3).

Internal validation

Discrimination accuracy was acceptable for the internal validation model [AUROC = 0.73 (0.68, 0.78), p < 0.0001]. There was no evidence of poor model calibration (p for Hosmer–Lemeshow test = 0.75). Model accuracy parameters are shown in Table 3. Similar to the derivation model, PPVs ranged from 19.0 to 47.7% (Table 3).

External validation

The demographic information for each of the external validation cohorts is provided in Table 1. Notably, the prevalence of childhood obesity was 7.0% in the POI cohort and 51.1% in the PIF cohort (Table 1).

Discrimination accuracy was excellent for the POI cohort [AUROC = 0.80 (0.71, 0.90), p < 0.0001] and acceptable for PIF [AUROC = 0.74 (0.66, 0.82), p < 0.0001]. There was no evidence of poor model calibration (all p > 0.05 for Hosmer–Lemeshow test).

Table 3 provides the sensitivity, specificity, PPV, and NPV of the external validation models at various percentile risk thresholds ranging from 0.20 to 0.95. PPVs were particularly low for POI, ranging from 7.8 to 23.5%, but higher and more consistent for PIF, ranging from 51.9 to 60.5% (Table 3).
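
PPV depends on outcome prevalence as well as on sensitivity and specificity, which matters when comparing cohorts with obesity prevalences of 7.0% and 51.1%. The sketch below applies Bayes' theorem to show the size of this effect. The sensitivity and specificity of 0.70 are hypothetical round numbers chosen for illustration and are not values from Table 3; only the two prevalences come from the text.

```python
def ppv_from_prevalence(sensitivity, specificity, prevalence):
    """Positive predictive value via Bayes' theorem."""
    true_positive_rate = sensitivity * prevalence
    false_positive_rate = (1.0 - specificity) * (1.0 - prevalence)
    return true_positive_rate / (true_positive_rate + false_positive_rate)


if __name__ == "__main__":
    # Hypothetical test characteristics, identical for both cohorts.
    sensitivity, specificity = 0.70, 0.70
    for cohort, prevalence in (("POI", 0.070), ("PIF", 0.511)):
        ppv = ppv_from_prevalence(sensitivity, specificity, prevalence)
        print(cohort, round(100 * ppv, 1))
```

With these illustrative inputs, the same test yields a PPV of roughly 15% at the POI prevalence and roughly 71% at the PIF prevalence, so a large gap in PPV between the two cohorts would be expected even if the model classified equally well in both.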

Severe childhood obesity prediction

Both models with severe childhood obesity as the outcome included 2408 participants in their derivation cohorts (Supplementary Table S3). The predictors included for each model and their associations with the outcome are reported in Supplementary Tables S4 and S5. Discrimination accuracy was acceptable for the model predicting severe childhood obesity at BMI ≥ 120% of the 95th percentile [AUROC = 0.75 (0.72, 0.78); Supplementary Table S6] and at BMI ≥ 99th percentile [AUROC = 0.76 (0.72, 0.80); Supplementary Table S7]. There was no evidence of poor calibration for either derivation model (both p > 0.05 for Hosmer–Lemeshow test) or for the validation models (all p > 0.05 also), with the exception of the POI validation model for severe childhood obesity at BMI ≥ 99th percentile (p = 0.002).

The sensitivity, specificity, PPV, and NPV of the derivation and validation models for severe childhood obesity are reported in Supplementary Tables S6 and S7. PPVs for both derivation models were low, ranging from 15.0 to 47.9% for the model predicting severe childhood obesity at BMI ≥ 120% of the 95th percentile (Supplementary Table S6), and 9.0 to 33.1% at BMI ≥ 99th percentile (Supplementary Table S7). The PPVs for the internal validation model predicting severe childhood obesity at BMI ≥ 120% of the 95th percentile were similarly low to those of the derivation model (14.2–51.0%, Supplementary Table S6), as were the PPVs for the model at BMI ≥ 99th percentile (7.8–34.5%, Supplementary Table S7). Regarding external validations, the PPVs for both POI models were particularly poor (5.2–16.7%, Supplementary Table S6; 0–8.7%, Supplementary Table S7), although markedly higher for the PIF models (38.3–55.5%, Supplementary Table S6; 41.5–66.7%, Supplementary Table S7).

Discussion

New Zealand has a high prevalence of early childhood obesity, with one in three children starting school already overweight or with obesity2. Furthermore, New Zealand’s population is an ethnically diverse one40, with obesity rates inequitably distributed across ethnicities. For example, 30.2% of Pacific Island children have obesity by the age of four, versus 12.7% of New Zealand European children2. We have developed a model to attempt to predict likelihood of obesity at 4–5 years based on infancy data, starting with a cohort that is representative of the New Zealand birth population19. The derivation model, internal validation model, and two external validation models all produced AUROCs that were either acceptable or excellent. However, despite the encouraging AUROCs, PPVs were almost invariably low across the risk threshold range, indicating high rates of false positives. In addition, derivation and validation of two models to predict severe childhood obesity produced similar findings.

The performance of our childhood obesity prediction model was comparable with previous international early childhood obesity or overweight prediction models, with derivation AUROCs ranging from 0.67 to 0.87 (ref. 15). Our final model used five predictors: birth weight, maternal pre-pregnancy BMI, paternal BMI, infancy weight gain, and maternal smoking during pregnancy. The inclusion of infancy weight gain data in early childhood obesity prediction regression models has been suggested to produce higher AUROC values than models solely reliant on predictors available at birth15. Our model produced a higher AUROC than one previous regression model supplemented by infancy weight gain data, but was lower than two others15. However, our infancy weight gain data were obtained using only two time points: birth and anywhere between 6 and 12 months. It is possible that data collected at more regular intervals would have improved our model’s discrimination.

Our model’s discrimination capacity was excellent for the POI cohort, while it remained acceptable for the PIF cohort. This may be explained by differences between the populations. POI was a cohort consisting of mostly New Zealand Europeans, with a low prevalence of obesity at 7.0%. By comparison, the PIF cohort included only Pacific Island children, with a markedly higher prevalence of obesity at 51.1%. However, of particular relevance, there were also substantial contrasts in PPVs when the model was applied to these external validation cohorts. For example, the highest PPV achieved when the model was applied to the POI cohort was 23.5% compared to 60.5% in the PIF cohort. In actual numbers, at the 95th probability percentile threshold (where the PPV was highest), 52 of 69 participants in the PIF cohort who did develop obesity were correctly identified as being likely to do so (sensitivity = 75.4%), while 34 were incorrectly identified as at risk. By contrast, at the same threshold, the model correctly identified only 4 of 27 participants that went on to develop obesity in the POI cohort (sensitivity = 14.8%), with 13 false positives. Choosing a lower risk threshold would only serve to increase the rate of false positives. Of note, derivation and validation of models to predict severe childhood obesity according to two definitions (known to be associated with poorer long-term clinical outcomes compared to less severe obesity17) did not substantially improve PPVs for any included cohort.
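
The percentages quoted above can be reproduced directly from the reported counts, which makes the contrast between the two cohorts concrete. The helper name below is illustrative; the counts are those given in the text.

```python
def summarise(true_positives, with_obesity, false_positives):
    """Sensitivity and PPV (as percentages) from the counts quoted in the text."""
    flagged = true_positives + false_positives
    return {
        "sensitivity_pct": round(100 * true_positives / with_obesity, 1),
        "ppv_pct": round(100 * true_positives / flagged, 1),
    }


if __name__ == "__main__":
    pif = summarise(true_positives=52, with_obesity=69, false_positives=34)
    poi = summarise(true_positives=4, with_obesity=27, false_positives=13)
    print("PIF", pif)  # {'sensitivity_pct': 75.4, 'ppv_pct': 60.5}
    print("POI", poi)  # {'sensitivity_pct': 14.8, 'ppv_pct': 23.5}
```

In the PIF cohort, 52 of the 86 children flagged went on to develop obesity; in the POI cohort, only 4 of the 17 flagged did.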

Risk thresholds should be determined following consideration of a number of factors, including sensitivity and specificity of the model, financial cost of any proposed intervention, and any potential risks of intervening or not intervening in misclassified cases15. For obvious reasons, the weight given to these individual aspects will vary markedly depending on the condition in question, whether it is a relatively benign condition vs a disease with a very high mortality rate (e.g. pancreatic cancer). In this study, the observed rates of false positives across the risk threshold continuum indicate that in New Zealand’s multi-ethnic population, a large number of children would be incorrectly classified as at risk of childhood obesity. There is a large social stigma associated with childhood obesity41, and a high number of false positives (i.e. children being wrongly labelled as at risk of developing obesity) could create considerable unwarranted anxiety for many families, and thus should be avoided. In addition, screening for obesity may be unethical in the absence of proven treatments for early childhood obesity. Nonetheless, a recent meta-analysis reported that randomised controlled trials on interventions to prevent early childhood obesity conducted in Australia and New Zealand (n=4) resulted in lower BMI z-scores at 18–24 months9.

There has been some research into the acceptability of early childhood obesity prediction to families. In New Zealand, parents and other caregivers were hypothetically accepting of communication regarding early childhood obesity prediction, although many anticipated feeling upset or worried in response14. UK parents were also hypothetically accepting of such communication, but were fearful of judgement from health professionals and others42. One study has explored parental views of actual risk communication43, reporting that some parents did not trust the prediction and believed the risk was not likely to be relevant for their baby43. Of note, some of the health professionals delivering the risk communication also expressed similar beliefs43. Indeed, parental awareness of current childhood obesity in older children is not necessarily associated with action to reduce the child’s weight44. Furthermore, there is some evidence that in the long-term, young children considered to have overweight by their parent gain more weight than children not considered to have overweight45,46,47. In one study, this was regardless of the child’s initial weight status (i.e. normal weight or overweight)45. Therefore, accurate parental perception of children’s weight status could even be detrimental to children’s long-term weight outcomes. Nonetheless, it is important to note that all three of these studies were observational in design, and no intervention was offered to children considered to have overweight (as would be the case if a prediction model like ours were to be used in clinical practice). Furthermore, a prediction model does not require parents to perceive their child as having a current weight issue, but rather to consider them at risk of developing obesity in the future. Therefore, use of an early childhood obesity prediction model could have different long-term outcomes to the previously mentioned longitudinal studies. A randomised controlled trial on the use of a prediction model (with lower false positive rates than ours), alongside supportive intervention, would be needed to assess if this was the case.

Our study has several limitations. Parental anthropometric and infancy weight gain data were missing for many participants in all cohorts, meaning these otherwise suitable participants were excluded from the final analysis. While the overall GUiNZ cohort was representative of New Zealand's population19, the exclusion of participants with missing data (in particular missing paternal data) meant that a greater proportion of children from lower socioeconomic backgrounds and/or with mothers of Māori or Pacific ethnicity were excluded. Thus, this exclusion of participants should be considered when interpreting the applicability of the model to the wider New Zealand population. Also, parental BMI was based on self-reported data and was potentially inaccurate48, although a New Zealand study found no differences between self-reported and measured BMI in adults49. In addition, our model was derived using historical data that might not be reflective of current trends. Nonetheless, all GUiNZ infants were born between 2009 and 2010 (ref. 18), and the prevalence of obesity in 4–5-year-old New Zealand children has not increased since 2010 (regardless of ethnicity or socioeconomic status)2. Furthermore, the prevalence of obesity among New Zealand adults has been stable since 2012/13, with only a slight rise since 2011/12 (ref. 50). Taken together, this suggests that the prevalence of obesity among children included in our derivation cohort is likely to be reflective of current early childhood obesity trends in New Zealand children, and at least two of the models’ parameters (maternal pre-pregnancy BMI and paternal BMI) are also likely to be closely reflective of current trends. Additionally, while BMI ≥ 95th percentile is a commonly used threshold for obesity in children, it is acknowledged that BMI does not directly measure body fat30. Thus, it cannot be considered a definitive diagnostic tool for obesity, which is defined by the WHO to be “abnormal or excessive fat accumulation that may impair health”51. Nonetheless, its practicality means it is a commonly used tool for diagnosis of obesity in clinical practice30; in New Zealand for example, BMI (z-score) is a key tool to identify children with weight issues in the Ministry of Health's B4 School Check, a nationwide programme that offers free health and development assessments for all children at 4 years of age52. Strengths of our study include the internal and external validations, and all children’s anthropometric measurements being taken using consistent protocols by trained professionals, which reduces the potential for error.

In conclusion, we have derived and validated an early childhood obesity prediction model using a cohort that is representative of the diversity of the contemporary New Zealand birth population, although children of Māori and Pacific mothers and those from lower socioeconomic backgrounds were under-represented in our selected subset. While AUROCs for the derivation and validation models were at least acceptable, PPVs were generally low, so high rates of false positives could create considerable unwarranted anxiety for many families. This would be a particular issue in a context where there remains uncertainty regarding what constitutes a successful intervention to prevent early childhood obesity. Thus, we are hesitant to recommend the use of our model in clinical practice.

Data availability

The study data cannot be made available in a public repository, due to the strict conditions of the ethics approvals of the original studies from which our data were derived. However, the anonymised data on which this manuscript was based could be made available to other investigators upon bona fide request, and following all the necessary approvals (including ethics) of the detailed study proposal and statistical analyses plan. Any queries should be directed to Dr José Derraik (j.derraik@auckland.ac.nz).

References

UNICEF, WHO, The World Bank. Levels and Trends in Child Malnutrition: Key Findings of the 2020 Edition of the Joint Child Malnutrition Estimates. https://apps.who.int/iris/bitstream/handle/10665/331621/9789240003576-eng.pdf (2020).

Shackleton, N. et al. Improving rates of overweight, obesity and extreme obesity in New Zealand 4-year-old children in 2010–2016. Pediatr. Obes. 13, 766–777 (2018).

Singh, A. S., Mulder, C., Twisk, J. W., van Mechelen, W. & Chinapaw, M. J. Tracking of childhood overweight into adulthood: A systematic review of the literature. Obes. Rev. 9, 474–488 (2008).

Smego, A. et al. High body mass index in infancy may predict severe obesity in early childhood. J. Pediatr. 183, 87–93.e81 (2017).

Woo Baidal, J. A. et al. Risk factors for childhood obesity in the first 1,000 days: A systematic review. Am. J. Prev. Med. 50, 761–779 (2016).

Robinson, S. M. et al. Modifiable early-life risk factors for childhood adiposity and overweight: An analysis of their combined impact and potential for prevention. Am. J. Clin. Nutr. 101, 368–375 (2015).

Larqué, E. et al. From conception to infancy—early risk factors for childhood obesity. Nat. Rev. Endocrinol. 15, 456–478 (2019).

Redsell, S. A. et al. Systematic review of randomised controlled trials of interventions that aim to reduce the risk, either directly or indirectly, of overweight and obesity in infancy and early childhood. Matern. Child Nutr. 12, 24–38 (2016).

Askie, L. M. et al. Interventions commenced by early infancy to prevent childhood obesity—the EPOCH Collaboration: An individual participant data prospective meta-analysis of four randomized controlled trials. Pediatr. Obes. 15, e12618 (2020).

Redsell, S. A. et al. Preventing childhood obesity during infancy in UK primary care: A mixed-methods study of HCPs’ knowledge, beliefs and practice. BMC Fam. Pract. 12, 54 (2011).

Isma, G. E., Bramhagen, A.-C., Ahlstrom, G., Östman, M. & Dykes, A.-K. Obstacles to the prevention of overweight and obesity in the context of child health care in Sweden. BMC Fam. Pract. 14, 143 (2013).

Redsell, S. A. et al. UK health visitors’ role in identifying and intervening with infants at risk of developing obesity. Matern. Child Nutr. 9, 396–408 (2013).

Bradbury, D. et al. Barriers and facilitators to health care professionals discussing child weight with parents: A meta-synthesis of qualitative studies. Br. J. Health Psychol. 23, 701–722 (2018).

Butler, É. M. et al. Acceptability of early childhood obesity prediction models to New Zealand families. PLoS One 14, e0225212 (2019).

Butler, É. M., Derraik, J. G. B., Taylor, R. W. & Cutfield, W. S. Prediction models for early childhood obesity: Applicability and existing issues. Horm. Res. Paediatr. 90, 358–367 (2019).

Grobbee, D. E. & Hoes, A. W. Clinical Epidemiology: Principles, Methods, and Applications for Clinical Research (Jones and Bartlett Publishers, 2009).

Kelly, A. S. et al. Severe obesity in children and adolescents: Identification, associated health risks, and treatment approaches: A scientific statement from the American Heart Association. Circulation 128, 1689–1712 (2013).

Morton, S. M. B. et al. Cohort profile: Growing Up in New Zealand. Int. J. Epidemiol. 42, 65–75 (2013).

Morton, S. M. B. et al. Growing Up in New Zealand cohort alignment with all New Zealand births. Aust. N. Z. J. Public Health 39, 82–87 (2015).

Salmond, C., Crampton, P. & Atkinson, J. NZDep2006 Index of Deprivation (Department of Public Health, 2007).

Morton, S. M. B. et al. Growing Up in New Zealand: A Longitudinal Study of New Zealand Children and Their Families. Now We Are Four: Describing the Preschool Years (Growing Up in New Zealand, 2017).

Moons, K. G. et al. Risk prediction models: II. External validation, model updating, and impact assessment. Heart 98, 691–698 (2012).

Fangupo, L. J. et al. Impact of an early-life intervention on the nutrition behaviors of 2-y-old children: A randomized controlled trial. Am. J. Clin. Nutr. 102, 704–712 (2015).

Meredith-Jones, K. et al. Physical activity and inactivity trajectories associated with body composition in pre-schoolers. Int. J. Obes. 42, 1621–1630 (2018).

Paterson, J. et al. Cohort profile: The Pacific Islands Families (PIF) Study. Int. J. Epidemiol. 37, 273–279 (2008).

Rush, E. C., Paterson, J., Obolonkin, V. V. & Puniani, K. Application of the 2006 WHO growth standard from birth to 4 years to Pacific Island children. Int. J. Obes. 32, 567–572 (2008).

Taylor, B. J. et al. Prevention of Overweight in Infancy (POI.nz) study: A randomised controlled trial of sleep, food and activity interventions for preventing overweight from birth. BMC Public Health 11, 942 (2011).

de Onis, M. et al. Development of a WHO growth reference for school-aged children and adolescents. Bull. World Health Organ. 85, 660–667 (2007).

WHO Multicentre Growth Reference Study Group. WHO Child Growth Standards based on length/height, weight and age. Acta Paediatr. Suppl. 450, 76–85 (2006).

Barlow, S. E. Expert committee recommendations regarding the prevention, assessment, and treatment of child and adolescent overweight and obesity: Summary report. Pediatrics 120(Suppl 4), S164–S192 (2007).

Dormann, C. F. et al. Collinearity: A review of methods to deal with it and a simulation study evaluating their performance. Ecography 36, 27–46 (2013).

Atatoa Carr, P., Kukutai, T., Bandara, D. & Broman, P. Is ethnicity all in the family? How parents in Aotearoa/New Zealand identify their children. In Mana Tangatarua: Mixed Heritages, Ethnic Identity and Biculturalism in Aotearoa/New Zealand (eds. Rocha, Z. L. & Webber, M.) 53–75 (Routledge, 2017).

Naess, M., Holmen, T. L., Langaas, M., Bjorngaard, J. H. & Kvaloy, K. Intergenerational transmission of overweight and obesity from parents to their adolescent offspring—the HUNT study. PLoS One 11, e0166585 (2016).

Aris, I. M. et al. Modifiable risk factors in the first 1000 days for subsequent risk of childhood overweight in an Asian cohort: Significance of parental overweight status. Int. J. Obes. 42, 44–51 (2018).

Derraik, J. G. B. et al. Paternal contributions to large-for-gestational-age term babies: Findings from a multicenter prospective cohort study. J. Dev. Orig. Health Dis. 10, 529–535 (2019).

Pomeroy, E., Wells, J. C., Cole, T. J., O’Callaghan, M. & Stock, J. T. Relationships of maternal and paternal anthropometry with neonatal body size, proportions and adiposity in an Australian cohort. Am. J. Phys. Anthropol. 156, 625–636 (2015).

New Zealand Ministry of Health. HISO 10001:2017 Ethnicity Data Protocols (Ministry of Health, 2017).

Alba, A. C. et al. Discrimination and calibration of clinical prediction models: Users’ guides to the medical literature. JAMA 318, 1377–1384 (2017).

Hosmer, D. W. Applied Logistic Regression 3rd edn. (Wiley, 2013).

Research New Zealand. Special Report on the 2013 Census of New Zealand’s Population and Dwellings (Research New Zealand, 2014).

Pont, S. J. et al. Stigma experienced by children and adolescents with obesity. Pediatrics 140, e20173034 (2017).

Bentley, F., Swift, J. A., Cook, R. & Redsell, S. A. “I would rather be told than not know”—a qualitative study exploring parental views on identifying the future risk of childhood overweight and obesity during infancy. BMC Public Health 17, 684 (2017).

Rose, J. et al. Proactive Assessment of Obesity Risk during Infancy (ProAsk): A qualitative study of parents’ and professionals’ perspectives on an mHealth intervention. BMC Public Health 19, 294 (2019).

Butler, É. M. et al. Parental perceptions of obesity in school children and subsequent action. Child. Obes. 15, 459–467 (2019).

Robinson, E. & Sutin, A. R. Parental perception of weight status and weight gain across childhood. Pediatrics 137, e20153957 (2016).

Robinson, E. & Sutin, A. R. Parents’ perceptions of their children as overweight and children’s weight concerns and weight gain. Psychol. Sci. 28, 320–329 (2017).

Gerards, S. M. P. L. et al. Parental perception of child’s weight status and subsequent BMIz change: The KOALA birth cohort study. BMC Public Health 14, 291 (2014).

Gorber, S. C., Tremblay, M., Moher, D. & Gorber, B. A comparison of direct vs self-report measures for assessing height, weight and body mass index: A systematic review. Obes. Rev. 8, 307–326 (2007).

Kruse, K. BMI: A Comparison Between Self-Reported and Measured Data in Two Population-Based Samples [Technical report] (Health Promotion Agency Research and Evaluation Unit, 2014).

New Zealand Ministry of Health. Annual Data Explorer 2018/19: New Zealand Health Survey [Data File]. https://minhealthnz.shinyapps.io/nz-health-survey-2018-19-annual-data-explorer/ (2019).

World Health Organization. Obesity and overweight. https://www.who.int/en/news-room/fact-sheets/detail/obesity-and-overweight (2020).

New Zealand Ministry of Health. Well Child/Tamariki Ora Programme Practitioner Handbook: Supporting Families and Whānau to Promote Their Child’s Health and Development—Revised 2014 (Ministry of Health, 2013).

Funding

This work was conducted for A Better Start-National Science Challenge, which is funded by the New Zealand Ministry of Business, Innovation and Employment (MBIE).

Author information

Contributions

J.G.B.D., É.M.B., S.M.B.M., E.-S.T., M.G., R.W.T., and W.S.C. conceptualised the study; J.G.B.D., W.S.C., É.M.B., A.P., S.M.B.M., B.M.S., and R.W.T. contributed to study design; A.P., S.M.B.M., C.G.W., K.L., E.-S.T., R.W.T., and J.G.B.D. worked on data curation; J.G.B.D. analysed the data with input from A.P., B.M.S., and É.M.B.; É.M.B. and J.G.B.D. drafted the manuscript with critical input from all other authors; all authors and collaborators have approved the final version of the manuscript and agreed to its publication.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher's note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Butler, É.M., Pillai, A., Morton, S.M.B. et al. A prediction model for childhood obesity in New Zealand. Sci Rep 11, 6380 (2021). https://doi.org/10.1038/s41598-021-85557-z

DOI: https://doi.org/10.1038/s41598-021-85557-z
