Abstract

Procedure-related cardiovascular implantable electronic device (CIED) infections have high morbidity and mortality, highlighting the urgent need for infection prevention efforts to include electrophysiology procedures. We developed and validated a semi-automated algorithm based on structured electronic health record data to reliably identify CIED infections. A sample of CIED procedures entered into the Veterans Health Administration Clinical Assessment Reporting and Tracking program from FY 2008–2015 was reviewed for the presence of CIED infection. This sample was then randomly divided into training (2/3) and validation (1/3) sets. The training set was used to develop a detection algorithm containing structured variables mapped from the clinical pathways of CIED infection, and the algorithm's performance was evaluated in the validation set. Of a cohort of 5,753 CIED procedures, 2,107 unique procedures underwent manual review, and 97 CIED infections (4.6%) were identified. Variables strongly associated with true infections included presence of a microbiology order, billing codes for surgical site infections, and post-procedural antibiotic prescriptions. The combined algorithm to detect infection demonstrated a high c-statistic (0.95; 95% confidence interval: 0.92–0.98), sensitivity (87.9%), and specificity (90.3%) in the validation data. Structured variables derived from clinical pathways can guide development of a semi-automated detection tool to surveil for CIED infection.


Introduction

Cardiovascular implantable electronic devices (CIEDs), such as pacemakers and implantable cardioverter defibrillators, are increasingly used as the population ages1,2. Procedure-related CIED infections are associated with high morbidity, mortality, and medical costs. Mortality rates for deep infections involving leads implanted into the heart approach 20%, and the estimated cost exceeds $50,000 per infection3,4,5. CIED infections are an increasing concern for cardiologists, infection control departments, and patient safety programs because the rate of procedure-related infections is trending upward1. This rising infection rate is compounded by the overall increase in CIED placements6. Given this trend, developing and implementing effective infection prevention programs in the electrophysiology suite is crucial for minimizing morbidity and mortality among CIED recipients.

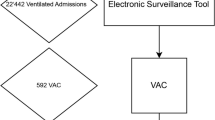

Surveillance with audit and feedback is a cornerstone of, and critical first step in, infection prevention (Fig. 1)7. Surveillance programs measure infections as they occur; once infections and clusters are found, this information can be used to identify operational and patient-centered targets for prevention initiatives. Surveillance programs can improve outcomes in several ways. They can be used to measure the effectiveness of novel infection prevention measures in real time8 and to provide feedback that encourages providers to adopt evidence-based prevention practices9. Surveillance systems can also improve care through the observer, or Hawthorne, effect10. The Surgical Care Improvement Project (SCIP) infection metric bundle is an example of a surveillance and reporting system that led to major improvements in surgical care and a reduction in post-operative infections11. However, because the electrophysiology laboratory is a procedural area that straddles inpatient and outpatient settings, it is not covered by surgical site infection (SSI) quality improvement initiatives12.

Given limited resources and the increasing dissemination of electronic health records (EHRs), a promising strategy for expanding surveillance to uncovered procedural areas is the development of tools that leverage clinical data warehouses to measure and track infections. These tools could be used as stand-alone systems or in a semi-automated process that augments manual review and triages it toward the cases with the highest probability of an adverse event7,13. Thus, we sought to develop and validate a tool for CIED infection surveillance using structured data elements from the Veterans Health Administration (VA)’s Corporate Data Warehouse (CDW) to triage cases for manual review.

Methods

Study overview

This study used structured data elements along the diagnostic and treatment pathway of CIED infection management from the VA EHR, as captured in the CDW, to develop an algorithm to detect infections. We used a limited definition of structured data, focusing on diagnostic testing orders, laboratory results, and pharmacy and billing data that are structured and standardized across all EHRs, to ensure broad potential applicability of the detection algorithm. A sample of cardiac procedures collected as part of the VA Clinical Assessment Reporting and Tracking (CART) quality program underwent manual review for the presence of infection; these cases were used in the development and validation of the semi-automated tool.

Ethical considerations

Given the retrospective nature of the study, waiver of consent was obtained. The Veterans Health Administration Boston Healthcare System Institutional Review Board and the University of Colorado Denver Anschutz Medical Campus Multiple Institution Review Board (covering the University of Colorado Denver and its affiliates: Children’s Hospital Colorado, Denver Health and Hospital Authority, University of Colorado Hospital, and the VA Eastern Colorado Health Care System) approved this study and waived the need for informed consent as part of study approval prior to data collection and analysis. The study was also approved by the VA CART-Electrophysiology (EP) program. All methods were carried out in accordance with relevant guidelines and regulations.

Cohort development

Our cohort was a subset of CIED procedures captured by the CART program. CART is a national quality initiative integrated into the VA electronic medical record, which collects data about clinical outcomes, co-morbidities, and procedural details, but does not include measurement of infections. CART reporting is mandatory for all cardiac catheterization procedures and optional for EP procedures, including device implantations and revisions. Approximately 20% of EP device procedures performed across the national VA healthcare system are captured by CART-EP. Multiple clinical and procedural variables are captured and combined with other data from the VA CDW to create a single national data repository14,15.

CIED procedures, including implantations and revisions of permanent pacemakers, implantable cardioverter defibrillators, biventricular pacemaker-implantable cardioverter-defibrillators, and biventricular pacemakers, entered into the CART-EP program from October 2007 to September 2015 were considered for inclusion (N = 5753). Given the volume of CIED procedures performed and the relatively low prevalence of CIED infections, an enhanced sampling approach was undertaken; details of the sampling process are described in prior published work14,15 and in Supplementary Methods S1.

After development of the cohort, a sample of cases underwent manual review by a trained clinician (AA, PM, WBE) to identify CIED infections that occurred within 90 days of the index procedure. Reviewers applied standard cardiac device infection definitions based on clinical symptoms, microbiology results, and/or clinician diagnosis, following recommendations from the Centers for Disease Control and Prevention National Healthcare Safety Network and multi-society guidelines16,17,18. Further details on cohort development and the manual review process, including how infections were measured and defined as well as the other variables collected, are available in Supplementary Methods S1 and S2 and related references14,15,17.
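The 90-day surveillance window used during manual review reduces to a date comparison. The following is a minimal sketch, assuming each procedure and each candidate infection event carries a calendar date; the helper name `within_surveillance_window` and the convention that the procedure day counts as day 0 are illustrative assumptions, not the study's programming.

```python
from datetime import date, timedelta

# Surveillance window from the text: infections within 90 days of the index procedure.
SURVEILLANCE_DAYS = 90

def within_surveillance_window(procedure_date: date, event_date: date,
                               window_days: int = SURVEILLANCE_DAYS) -> bool:
    """Hypothetical helper: True if event_date is on or after the index
    procedure (day 0) and no more than window_days after it."""
    return procedure_date <= event_date <= procedure_date + timedelta(days=window_days)

index = date(2014, 3, 1)
print(within_surveillance_window(index, date(2014, 5, 30)))  # day 90
print(within_surveillance_window(index, date(2014, 5, 31)))  # day 91
```

Whether boundary days are inclusive is a design choice that any real implementation would need to match to the surveillance definition in use.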

Diagnostic and treatment pathways and selection of potential identifying variables

Diagnostic variables

CIED infections can be diagnosed and treated in several ways (Fig. 2); these interventions generate orders and results that can potentially be leveraged for retrospective infection detection17. Many infections are first identified because of fever, which is recorded in vital signs data as a continuous numeric variable. Microbiologic testing generates an order, which is a structured data element in the EHR, and a result, which is unstructured and not standardized. Microbiologic cultures can be obtained from the site of the device (e.g., wound cultures) or from blood, to diagnose systemic infection such as endocarditis. Thus, there are four potential flags associated with microbiologic testing to diagnose CIED infections: 1) a blood culture order, 2) a wound culture order, 3) a positive wound culture result, and 4) a positive blood culture result. Microbiologic testing is central both to infection diagnosis and to most infection surveillance definitions. Positive cultures can also identify the organism causing the infection and guide antibiotic treatment. Diagnosis of CIED infections may also involve imaging, such as echocardiography (often transesophageal), because echocardiographic findings are part of how endocarditis is defined. Mechanisms for ordering echocardiography and reporting its results are highly variable and not standardized across the VA EHR.
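The four microbiology flags can be derived from a per-procedure list of cultures. This is a minimal sketch, assuming a hypothetical `MicroRecord` structure (not a CDW table) in which a culture result has already been abstracted to a boolean; in the study, only orders were structured, so flags 3 and 4 would require manual or text-based abstraction.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional

@dataclass
class MicroRecord:
    """One culture linked to a procedure (hypothetical structure)."""
    specimen: str                  # "blood" or "wound"
    growth: Optional[bool] = None  # True/False if a result was abstracted, None if unavailable

def microbiology_flags(records: List[MicroRecord]) -> Dict[str, bool]:
    """Derive the four candidate microbiology flags for one procedure."""
    return {
        # Flags 1 and 2: culture orders, which are structured in the EHR.
        "blood_culture_order": any(r.specimen == "blood" for r in records),
        "wound_culture_order": any(r.specimen == "wound" for r in records),
        # Flags 3 and 4: culture results, which are unstructured and need abstraction.
        "wound_culture_positive": any(r.specimen == "wound" and r.growth is True for r in records),
        "blood_culture_positive": any(r.specimen == "blood" and r.growth is True for r in records),
    }

flags = microbiology_flags([MicroRecord("blood", growth=False), MicroRecord("wound", growth=True)])
print(flags)
```

Treating a missing result (`None`) as "not positive" keeps the order flags usable even where results cannot be retrieved.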

Treatment variables

Treatment of CIED infections generally involves incision and drainage of fluid collections, which may generate a structured procedure code; antimicrobial therapy, which generates an antimicrobial order; and, in many cases, removal of the CIED with later replacement, which generates a subsequent Current Procedural Terminology (CPT) code. Treatment also often involves clinical consultation, typically with infectious diseases or cardiology. Consultation is recorded in various places throughout the EHR (clinical notes, orders, consult section) and is therefore not a standardized data element.

Development of the surveillance algorithm

Based on diagnostic and treatment pathways, we identified potentially relevant variables to develop the infection detection algorithm using structured data elements stored in CDW. Administrative billing codes, which are highly structured, were also considered for potential inclusion. Specifically, we used the Inpatient and Outpatient tables to obtain CPT and International Classification of Diseases, Ninth and Tenth Revision (ICD-9 and ICD-10) codes (Supplementary Methods S2), pharmacy tables for drug order data, Microbiology tables for laboratory orders, and vitals tables. These variables were classified as diagnostic of infection, treatment of infection, or billing for infection-related healthcare utilization. Unstructured variables, such as microbiology results, echocardiography completion, and consultation orders, were not considered for inclusion in the final identification model because of programming complexity and high facility-level variation in the text data; including these types of variables was felt to limit generalizability. However, these manually extracted variables were evaluated in a sensitivity analysis to determine whether they would improve algorithm performance in EHRs where they are available and searchable. In the sensitivity analysis, microbiology results were further sub-classified into positive cultures with a likely infecting organism (probable pathogen) and positive cultures likely representing contamination.
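The mapping from structured fields to diagnostic, treatment, and billing flags can be sketched as follows. This is a minimal illustration, not the study's CDW query: the function and flag names are hypothetical; the 72-hour antibiotic window and the SSI code family 998.x come from this paper; and the fever cut-off of 38.0 °C, the restriction of inputs to the surveillance window, and dotted ICD-9 code strings are labeled assumptions.

```python
from datetime import datetime, timedelta
from typing import Dict, List, Optional

# Hypothetical mapping of flag names to the three categories used in the text.
FLAG_CATEGORY = {
    "any_micro_order": "diagnostic",
    "fever": "diagnostic",
    "antibiotic_after_72h": "treatment",
    "ssi_code_998": "billing",
}

def structured_flags(procedure_time: datetime,
                     micro_order_times: List[datetime],
                     max_temp_c: Optional[float],
                     antibiotic_order_times: List[datetime],
                     icd9_codes: List[str],
                     fever_threshold_c: float = 38.0) -> Dict[str, bool]:
    """Derive illustrative binary flags for one procedure.

    Assumptions (not from the study): inputs are already restricted to the
    90-day window, ICD-9 codes are stored with a decimal point, and fever
    means a maximum temperature >= fever_threshold_c.
    """
    cutoff = procedure_time + timedelta(hours=72)
    return {
        "any_micro_order": any(t >= procedure_time for t in micro_order_times),
        "fever": max_temp_c is not None and max_temp_c >= fever_threshold_c,
        "antibiotic_after_72h": any(t > cutoff for t in antibiotic_order_times),
        "ssi_code_998": any(code.startswith("998.") for code in icd9_codes),
    }

def flags_by_category(flags: Dict[str, bool]) -> Dict[str, List[str]]:
    """Group the flags that are set by diagnostic/treatment/billing category."""
    grouped: Dict[str, List[str]] = {}
    for name, is_set in flags.items():
        if is_set:
            grouped.setdefault(FLAG_CATEGORY[name], []).append(name)
    return grouped

t0 = datetime(2015, 1, 10, 8, 0)
flags = structured_flags(
    procedure_time=t0,
    micro_order_times=[t0 + timedelta(days=20)],
    max_temp_c=38.4,
    antibiotic_order_times=[t0 + timedelta(hours=24), t0 + timedelta(days=21)],
    icd9_codes=["998.59"],
)
print(flags_by_category(flags))
```

Note that an antibiotic order placed within the first 72 hours (for example, prophylaxis) does not set the treatment flag on its own.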

Statistical analysis

Demographic and clinical characteristics of CIED patients by infection status were compared. Chi-squared tests were used to compare categorical variables and Mann–Whitney Wilcoxon tests were used for continuous variables.
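For binary identifiers, the chi-squared comparison reduces to a 2×2 table of flag status by infection status. The sketch below computes Pearson's statistic without continuity correction, using only the standard library; the paper does not state whether a continuity correction was applied, so this choice is an assumption. The example counts are the culture-order figures reported in Table 2 (89/97 infections vs 453/2010 controls).

```python
import math
from typing import Tuple

def chi_squared_2x2(a: int, b: int, c: int, d: int) -> Tuple[float, float]:
    """Pearson chi-squared test (no continuity correction) for the table
        [[a, b],    # infected: flag present, flag absent
         [c, d]]    # uninfected: flag present, flag absent
    Returns (statistic, p-value) with 1 degree of freedom."""
    n = a + b + c + d
    row1, row2 = a + b, c + d
    col1, col2 = a + c, b + d
    stat = n * (a * d - b * c) ** 2 / (row1 * row2 * col1 * col2)
    # With 1 df, P(chi2 > x) = P(|Z| > sqrt(x)) = erfc(sqrt(x / 2)).
    p = math.erfc(math.sqrt(stat / 2))
    return stat, p

# Any wound or blood culture order: 89/97 infections vs 453/2010 uninfected controls.
stat, p = chi_squared_2x2(89, 97 - 89, 453, 2010 - 453)
print(f"chi2 = {stat:.1f}, p = {p:.2e}")
```

The p-value falls far below 0.0001, consistent with Table 2. In practice, `scipy.stats.chi2_contingency(table, correction=False)` gives the same statistic.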

To build and test the infection surveillance algorithm, we randomly split the data into a training set of approximately two-thirds of the observations and a validation set of the remaining one-third19. Only electronically available structured variables (e.g., microbiology orders rather than results) were considered for inclusion in the final tool, to ensure scalability and portability across EHRs. Based on univariate associations with infection status and clinical reasoning, the following were evaluated for inclusion in the flagging model: presence of any microbiology order; blood culture order; wound culture order; repeat procedure status; fever; any antibiotic post-procedure; an antibiotic post-procedure from a limited list of agents commonly used to treat CIED infections (Supplementary Note S3); an SSI billing code (998.x); other CPT or ICD infection codes (categorized as general, material, or procedural infection codes); and elective status of the procedure, which is routinely entered into the CART database at the time of the procedure.
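A reproducible two-thirds/one-third random split can be sketched as follows. This is a simple unstratified split at the procedure level with a fixed, arbitrary seed (both assumptions); the paper does not say whether the split was stratified by infection status or performed at the patient level, which matters here because 2,107 procedures came from 2,068 patients.

```python
import random
from typing import List, Sequence, Tuple, TypeVar

T = TypeVar("T")

def train_validation_split(items: Sequence[T], train_frac: float = 2 / 3,
                           seed: int = 2019) -> Tuple[List[T], List[T]]:
    """Randomly assign about train_frac of items to training, the rest to validation."""
    rng = random.Random(seed)        # fixed seed (arbitrary) so the split is reproducible
    shuffled = list(items)
    rng.shuffle(shuffled)
    n_train = round(len(shuffled) * train_frac)
    return shuffled[:n_train], shuffled[n_train:]

procedure_ids = list(range(2107))    # one ID per manually reviewed procedure
train, validation = train_validation_split(procedure_ids)
print(len(train), len(validation))
```

Splitting by patient rather than by procedure would keep repeat procedures from the same patient out of both sets at once.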

We used binary logistic regression to model the probability of infection in the training data and selected the best combination of identifiers with the R function bestglm20 based on the Akaike information criterion (AIC), an estimate of the relative quality of statistical models fitted to the same data. We then fit the selected model to the training data using the glm method in the caret package21 (3-fold cross-validation repeated 5 times, the twoClassSummary summary function, and SMOTE sampling to account for the rare outcome) and validated it in the test (validation) data. We used Youden’s method to choose the probability threshold defining positive infection status and to estimate model performance measures22. To assess model quality and robustness, we evaluated calibration with the givitiR package; a model is well calibrated if its predicted probabilities closely match the observed proportions of the outcome23. Goodness of fit of a logistic regression model can also be expressed by pseudo-R² statistics; we calculated Nagelkerke’s pseudo-R² for the logistic regressions in the training and test data using the selected predictors. This statistic compares the log likelihood of the full model with that of the intercept-only (baseline) model and is rescaled to cover the full possible range of R² values (0 to 1)24,25. We also explored a second modelling approach, elastic net regression accounting for hospital-level correlation, using the glmnet method in the caret package with a grid search over alpha and lambda. All analyses were completed using SAS software version 9.4 (SAS Institute, Cary, NC) and R (version 3.5.0)26.
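Two of the post-fit steps described above can be illustrated without R: choosing the cut-off that maximizes Youden's J (sensitivity + specificity − 1), and computing Nagelkerke's pseudo-R² as the Cox–Snell R² divided by its maximum attainable value. This is a minimal standard-library sketch on toy data, not a re-implementation of pROC, caret, or DescTools. It assumes both outcome classes are present and that fitted probabilities lie strictly between 0 and 1.

```python
import math
from typing import Sequence, Tuple

def youden_threshold(probs: Sequence[float], labels: Sequence[int]) -> Tuple[float, float, float]:
    """Return (cutoff, sensitivity, specificity) maximizing J = sens + spec - 1.
    A case is called positive when its predicted probability is >= cutoff."""
    n_pos = sum(labels)
    n_neg = len(labels) - n_pos
    best = (-1.0, 0.0, 0.0, 0.0)  # (J, cutoff, sens, spec)
    for t in sorted(set(probs)):
        tp = sum(1 for p, y in zip(probs, labels) if p >= t and y == 1)
        tn = sum(1 for p, y in zip(probs, labels) if p < t and y == 0)
        sens, spec = tp / n_pos, tn / n_neg
        if sens + spec - 1 > best[0]:
            best = (sens + spec - 1, t, sens, spec)
    return best[1], best[2], best[3]

def nagelkerke_r2(probs: Sequence[float], labels: Sequence[int]) -> float:
    """Nagelkerke pseudo-R^2 of fitted probabilities vs an intercept-only model."""
    n = len(labels)
    ll_model = sum(math.log(p) if y == 1 else math.log(1 - p) for p, y in zip(probs, labels))
    p0 = sum(labels) / n  # intercept-only model predicts the overall event rate
    ll_null = sum(math.log(p0) if y == 1 else math.log(1 - p0) for y in labels)
    cox_snell = 1 - math.exp(2 * (ll_null - ll_model) / n)
    max_cox_snell = 1 - math.exp(2 * ll_null / n)  # rescaling so a perfect model reaches 1
    return cox_snell / max_cox_snell

probs = [0.9, 0.8, 0.35, 0.3, 0.2, 0.1]   # toy fitted probabilities
labels = [1, 1, 1, 0, 0, 0]
print(youden_threshold(probs, labels))
print(round(nagelkerke_r2(probs, labels), 3))
```

Ties in J are resolved toward the lowest cut-off here, which favors sensitivity; other tie-breaking rules are equally defensible.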

Results

The VA CART-EP database captured 5,753 CIED procedures from FY 2008–2015 at 39 VA medical centers. Of these, 2,107 procedures among 2,068 patients were manually reviewed (Table 1). The majority of patients were male (N = 2024/2068, 97.9%) and Caucasian (N = 1784/2068, 86.3%). Patients in the cohort had a high rate of medical comorbidities; among the most prevalent were tobacco use (N = 1041, 50.3%) and diabetes (N = 977, 47.2%). Among manually reviewed cases, 97 CIED infections in 95 patients were identified within the 90-day surveillance window. Full baseline demographic details are shown in Table 1, and additional information is available in Table 1 of a related reference15.

Univariate analysis identified several variables that were significantly associated with true CIED infection (Table 2). Diagnostic variables included presence of a wound or blood culture order (89/97 infections vs 453/2010 uninfected controls, p < 0.0001). Wound culture orders (55/97 vs 144/2010, p < 0.0001) and blood culture orders (78/97 vs 425/2010, p < 0.0001) were each also associated with true infection. Therapeutic flags associated with true infection included an order for a drug from the limited list of antibiotics placed more than 72 hours post-procedure (94/97 vs 884/2010, p < 0.0001). Billing codes for SSI (73/97 vs 111/2010, p < 0.0001; list of codes in Supplementary Methods S2) and general infection codes (34/97 vs 109/2010, p < 0.0001) were significant identifiers; material infection codes and CPT infection codes were not. A summary of antibiotic treatment regimens against identified culprit pathogens of CIED infection cases is shown in Supplementary Table S4.

Sensitivity analysis of unstructured data elements

In the CART-EP manual review data, the presence of any positive microbiologic result (50/97 vs 49/2010, p < 0.0001), growth from a wound culture (41/97 vs 14/2010, p < 0.0001), and growth in a blood culture (18/97 vs 40/2010, p < 0.0001) were also potential identifiers. Identification of a probable infecting organism (e.g., Staphylococcus spp.) was also a potential identifier (50/97 vs 24/2010, p < 0.0001). Infectious diseases consultations were also positively associated with true CIED infections (44/97 vs 78/2010, p < 0.0001). However, these unstructured and variable data elements were all excluded from the final detection algorithm because of their limited potential for inclusion in an operational tool.

The final set of surveillance variables selected in the training set and included in the surveillance algorithm is presented in Table 3 and Fig. 3. Among these, the variables with the highest odds ratios for identifying CIED infection were an order for an antibiotic from the limited list placed more than 72 hours post-procedure (OR 17.76, 95% CI 4.11–76.79, p < 0.001), fever (OR 16.94, 95% CI 1.28–224.41, p = 0.032), a billing code for SSI (OR 10.56, 95% CI 4.97–22.43, p < 0.001), and the presence of any microbiology order (OR 8.85, 95% CI 3.93–19.89, p < 0.001).
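Odds ratios from a logistic regression are the exponentiated coefficients, and a Wald 95% CI is symmetric on the log scale. Assuming the reported intervals are Wald intervals (the paper does not state the method), the coefficient and its standard error can be recovered from a published CI. This helps explain why the fever CI is so wide. A minimal sketch with hypothetical function names:

```python
import math
from typing import Tuple

Z_975 = 1.959963984540054  # 97.5th percentile of the standard normal

def odds_ratio_ci(beta: float, se: float) -> Tuple[float, float, float]:
    """Odds ratio and Wald 95% CI from a logistic coefficient and its standard error."""
    return math.exp(beta), math.exp(beta - Z_975 * se), math.exp(beta + Z_975 * se)

def coef_from_ci(lower: float, upper: float) -> Tuple[float, float]:
    """Recover (beta, se) from a Wald 95% CI, which is symmetric on the log scale."""
    log_lo, log_hi = math.log(lower), math.log(upper)
    return (log_lo + log_hi) / 2, (log_hi - log_lo) / (2 * Z_975)

# Reported 95% CIs from Table 3.
for name, lo, hi in [("any microbiology order", 3.93, 19.89), ("fever", 1.28, 224.41)]:
    beta, se = coef_from_ci(lo, hi)
    print(f"{name}: OR ~ {math.exp(beta):.2f}, SE(log OR) ~ {se:.2f}")
```

The recovered point estimates agree with the reported ORs to within rounding, and the standard error for fever is roughly three times that for a microbiology order. This pattern is consistent with fever being a rare flag that contributes little information to the estimate.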

When combined in a surveillance tool, the algorithm demonstrated high sensitivity and specificity in both the training set (84.38% and 93.58%, respectively) and the validation set (87.88% and 90.25%, respectively). The c-statistic for the algorithm in the training sample was 0.95 (95% CI, 0.92–0.98; Table 4). The negative predictive value (NPV) was 99.20% in the training set and 99.36% in the validation set, and the positive predictive value (PPV) was 38.85% (training) and 30.21% (validation; Table 4). Compared with the observed infection rates in the CART-EP data, the algorithm overestimated true infections (10.0% estimated vs 4.6% observed in the training set; 13.3% estimated vs 4.6% observed in the validation set). The pseudo-R² values for the training and validation models were 57.9% and 58.4%, respectively (Supplementary Fig. S5). We also investigated elastic net regression with the same set of candidate identifiers, accounting for correlation of observations within a hospital, but this model did not significantly improve either calibration or estimation.
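The low PPV alongside a very high NPV follows from applying sensitivity and specificity to a rare outcome (Bayes' theorem). The sketch below plugs in the reported sensitivities, specificities, and the 4.6% prevalence. The function name is hypothetical, and the sketch assumes 4.6% prevalence in both sets, as reported.

```python
from typing import Dict

def predictive_values(sens: float, spec: float, prevalence: float) -> Dict[str, float]:
    """Expected PPV, NPV, and proportion of procedures flagged, via Bayes' theorem."""
    true_pos = sens * prevalence
    false_pos = (1 - spec) * (1 - prevalence)
    true_neg = spec * (1 - prevalence)
    false_neg = (1 - sens) * prevalence
    flagged = true_pos + false_pos
    return {
        "ppv": true_pos / flagged,
        "npv": true_neg / (true_neg + false_neg),
        "flagged": flagged,
    }

for label, sens, spec in [("training", 0.8438, 0.9358), ("validation", 0.8788, 0.9025)]:
    v = predictive_values(sens, spec, prevalence=0.046)
    print(f"{label}: PPV {v['ppv']:.1%}, NPV {v['npv']:.2%}, flagged {v['flagged']:.1%}")
```

These values reproduce the reported NPVs and come within rounding of the reported PPVs. The expected proportion flagged (about 10.0% and 13.3%) also matches the "estimated" infection rates above. This suggests, although the text does not say so explicitly, that the overestimate reflects the share of procedures the tool flags.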

Discussion

Electronic, automated, and semi-automated surveillance are important emerging tools in the epidemiologist’s arsenal as clinical data warehouses become increasingly available for improving bedside clinical care27. From a large sample of VA CIED procedures, we found that a combination of clinically oriented variables from CIED infection diagnostic and treatment pathways and administrative billing codes had clinically useful sensitivity and specificity for flagging true cases of CIED infection (Fig. 4). Algorithms that use structured data elements to retrospectively identify cases with a true infection can expand infection surveillance to clinical care areas with limited infection prevention and surveillance coverage. Although the PPV was limited (~30%), the NPV was very high (99%), indicating that procedures not flagged by the tool were very unlikely to have an infection. This flagging system could form the foundation of a surveillance program for measuring post-procedural CIED infections, as part of a semi-automated process that expedites manual review of the cases most likely to have an infection; because only structured data elements are used, the tool has the potential to be easily implemented across many EHR systems.

Fig. 4. Use of electronic identifier flags in detecting CIED infection cases. Made using https://www.meta-chart.com/venn#. *Three CIED infection cases either did not have an antibiotic prescription or had antibiotics started within 72 hours of the index procedure; of these three, two had a microbiology order and a positive culture, and one had a microbiology order, a positive culture, and a billing code for SSI. **Positive growth on blood or wound culture.

Electronically augmented surveillance has great promise for expanding prevention to uncovered clinical areas, including procedural areas such as the EP laboratory, and outpatient care. However, the best methodology to develop and implement these tools is an active area of research. Here, we first developed a list of potential identifiers based on clinical diagnostic and therapeutic pathways and then mapped these potential identifiers to structured data elements in the EHR. A similar method of developing detection tools based on standard clinical processes could be used to expand detection to other areas with limited surveillance. In addition, EHR systems and clinical practice patterns may vary by institution; thus, subsets of the elements identified here could be combined into institution-specific algorithms28. Future iterations could include advanced data extraction strategies, such as natural language processing and machine learning techniques, to further improve the operating characteristics of the algorithm.

Prior work on CIED infection detection is limited. One prior single-center study evaluated the utility of billing codes for measuring rates of CIED infection29; our data from a large, multicenter national sample suggest that this strategy may have high specificity but low sensitivity. In our analysis, 27.6% of true-positive cases would not have been flagged by a system relying on billing codes alone. Our finding about the limited utility of billing codes alone is similar to work in cardiac surgery, which also found that coding data had limited value for measuring post-surgical infections (PPV < 26%)30,31,32. The limited sensitivity of coding data is compounded by recent data suggesting that ICD-10 codes may have even lower predictive value than ICD-9 codes33.

We expanded on prior research into semi-automated electronic surveillance tools by including structured variables, available in commonly used EHRs, that could be leveraged for CIED infection identification. By combining these variables, we developed and validated an electronic surveillance tool with excellent specificity and sensitivity, high NPV, and reasonable PPV. The tool raised the proportion of reviewed cases with a true infection from 4–5% (the baseline prevalence) to 30–40% (the PPV). The high NPV of the tool highlights the importance of the absence of clinical identifiers in ruling out cases that do not have an infection; this NPV can be leveraged to greatly streamline a manual review process.
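The change in yield can also be expressed as review workload. The expected number of charts reviewed per true infection found is the reciprocal of the yield. A minimal arithmetic sketch using the reported 4.6% prevalence and the validation PPV of 30.21%; the function name is hypothetical:

```python
def charts_per_true_infection(yield_fraction: float) -> float:
    """Expected charts reviewed per true infection found, i.e. 1 / yield."""
    if not 0 < yield_fraction <= 1:
        raise ValueError("yield_fraction must be in (0, 1]")
    return 1 / yield_fraction

review_all = charts_per_true_infection(0.046)       # review every procedure (prevalence 4.6%)
review_flagged = charts_per_true_infection(0.3021)  # review only flagged procedures (validation PPV)
print(f"{review_all:.1f} vs {review_flagged:.1f} charts per infection found")
```

The trade-off is the infections among unflagged procedures. With a validation sensitivity of 87.88%, about 12% of true infections would not be routed to review.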

Prior work demonstrates that limited time and resources are a major barrier to implementing surveillance in outpatient and procedural areas34,35. A semi-automated system that is easy to program and dramatically reduces chart review has the potential to bypass some of these implementation challenges and facilitate expansion of surveillance. The substantial improvement in PPV markedly increases the yield of a manual review process and reduces the time and resources needed for infection detection. In addition, although the tool flagged some uninfected cases, it was calibrated to optimize case ascertainment and to triage and minimize the burden of manual review, not to replace it entirely. A combined automated and manual approach to infection detection is a powerful tool for generating accurate data not only on infection rates but also on quality and process metrics. Identifying actionable patient safety metrics that promote improvements in infection prevention strategies is an important part of efforts to avert additional procedure-related infections. Critical changes to patient safety and processes could not be identified or implemented without the information generated during a detailed manual review and root cause analysis.

When considering implementation and adaptation to other settings of care, it is useful to consider how each type of structured data element affected the probability that an adverse event occurred. For example, post-procedure antimicrobial prescriptions were highly sensitive but not specific. This lack of specificity is driven by the breadth of CIED infection pathogens and the diverse set of antimicrobials that can be selected to treat them15. Using a limited set of antibiotics (for example, training the algorithm to detect only antimicrobials directed toward common bacterial pathogens) might improve specificity. The cost would be potentially missing CIED infections caused by unusual, and potentially more severe, pathogens. Another approach to enhancing the PPV of the tool might be to use combinations of identifiers, such as drug-bug matches (i.e., specific antibiotic prescriptions that correspond to particular microbiology results), as a discrete variable. However, this would add programming complexity and simultaneously reduce sensitivity. In our sample, approximately half of infections did not have positive blood or wound microbiology. Requiring a microbiology result would therefore miss roughly half of the cases, and layering results with interaction terms would miss even more. In addition, we focused on structured data flags because of the excellent NPV of these variables and to ensure generalizability to a wide variety of EHRs. However, typically unstructured data, such as information contained in clinical notes, may be used to improve PPV in future iterations.

The limitations of this study were largely due to the dataset used to create and validate the algorithm and to the difficulty of operationalizing clinical variables as electronic identifiers. We relied on CART-EP data to develop and validate the algorithm, and the selection process for these CIED procedures may limit the generalizability of our findings to other VA CIED procedures and to procedures performed outside the closed VA healthcare system. Because we relied on data available in VA records, CIED infections occurring outside the VA system may not have been captured. However, we manually reviewed all scanned outside-hospital records, and infections documented there were included as cases in the study. If these cases did not have clinical variables entered into the VA EHR, they would have been counted as false negatives in the development and assessment of the tool, and this would be reflected in the model's operating characteristics. Further, prior studies demonstrate that the majority of patients return to the closed VA healthcare system for subsequent procedural care.

Regarding operational challenges, we found that wound culture results showed excellent promise for infection detection. However, because this variable is free text and not standardized, it cannot easily be measured by an automated system across multiple sites with highly variable documentation practices.

Finally, healthcare systems and practices are not static but constantly evolving. Automated infection surveillance algorithms that leverage clinical pathways, including this CIED infection flagging tool, will therefore require ongoing adaptation and updates as novel therapeutics are introduced and diagnostic strategies evolve. Detection algorithms must thus move toward a learning health system model, with continual modification and adaptation to maintain their utility36.

Conclusions

Surveillance structures for CIED infections are absent in many institutions, partly because of limited resources for detection and monitoring35. This study demonstrates that electronic surveillance tools based on structured data elements can be developed with high sensitivity and specificity. Such tools have the potential to extend measurement with audit and feedback to clinical areas not served by traditional surveillance programs. Future improvements could include strategies to enhance detection of variables primarily captured in clinical notes.

References

Greenspon, A. J. et al. 16-year trends in the infection burden for pacemakers and implantable cardioverter-defibrillators in the United States 1993 to 2008. J Am Coll Cardiol 58, 1001–1006, https://doi.org/10.1016/j.jacc.2011.04.033 (2011).

Bradshaw, P. J., Stobie, P., Knuiman, M. W., Briffa, T. G. & Hobbs, M. S. Trends in the incidence and prevalence of cardiac pacemaker insertions in an ageing population. Open Heart 1, e000177, https://doi.org/10.1136/openhrt-2014-000177 (2014).

Sohail, M. R. et al. Incidence, Treatment Intensity, and Incremental Annual Expenditures for Patients Experiencing a Cardiac Implantable Electronic Device Infection: Evidence From a Large US Payer Database 1-Year Post Implantation. Circ Arrhythm Electrophysiol 9, https://doi.org/10.1161/CIRCEP.116.003929 (2016).

Sohail, M. R., Henrikson, C. A., Braid-Forbes, M. J., Forbes, K. F. & Lerner, D. J. Mortality and cost associated with cardiovascular implantable electronic device infections. Arch Intern Med 171, 1821–1828, https://doi.org/10.1001/archinternmed.2011.441 (2011).

Greenspon, A. J., Eby, E. L., Petrilla, A. A. & Sohail, M. R. Treatment patterns, costs, and mortality among Medicare beneficiaries with CIED infection. Pacing Clin Electrophysiol 41, 495–503, https://doi.org/10.1111/pace.13300 (2018).

Greenspon, A. J. et al. Trends in permanent pacemaker implantation in the United States from 1993 to 2009: increasing complexity of patients and procedures. J Am Coll Cardiol 60, 1540–1545, https://doi.org/10.1016/j.jacc.2012.07.017 (2012).

Gastmeier, P. & Behnke, M. Electronic surveillance and using administrative data to identify healthcare associated infections. Curr Opin Infect Dis 29, 394–399, https://doi.org/10.1097/QCO.0000000000000282 (2016).

Borer, A. et al. Prevention of infections associated with permanent cardiac antiarrhythmic devices by implementation of a comprehensive infection control program. Infect Control Hosp Epidemiol 25, 492–497, https://doi.org/10.1086/502428 (2004).

Powell, B. J. et al. A refined compilation of implementation strategies: results from the Expert Recommendations for Implementing Change (ERIC) project. Implement Sci 10, 21, https://doi.org/10.1186/s13012-015-0209-1 (2015).

Chen, L. F., Vander Weg, M. W., Hofmann, D. A. & Reisinger, H. S. The Hawthorne Effect in Infection Prevention and Epidemiology. Infect Control Hosp Epidemiol 36, 1444–1450, https://doi.org/10.1017/ice.2015.216 (2015).

Awad, S. S. Adherence to surgical care improvement project measures and post-operative surgical site infections. Surg Infect (Larchmt) 13, 234–237, https://doi.org/10.1089/sur.2012.131 (2012).

Branch-Elliman, W. A Roadmap for Reducing Cardiac Device Infections: a Review of Epidemiology, Pathogenesis, and Actionable Risk Factors to Guide the Development of an Infection Prevention Program for the Electrophysiology Laboratory. Curr Infect Dis Rep 19, 34, https://doi.org/10.1007/s11908-017-0591-8 (2017).

Falen, T., Noblin, A. M., Russell, O. L. & Santiago, N. Using the Electronic Health Record Data in Real Time and Predictive Analytics to Prevent Hospital-Acquired Postoperative/Surgical Site Infections. The health care manager 37, 58–63, https://doi.org/10.1097/hcm.0000000000000196 (2018).

Asundi, A. et al. Prolonged antimicrobial prophylaxis following cardiac device procedures increases preventable harm: insights from the VA CART program. Infect Control Hosp Epidemiol 39, 1030–1036, https://doi.org/10.1017/ice.2018.170 (2018).

Asundi, A. et al. Real-world effectiveness of infection prevention interventions for reducing procedure-related cardiac device infections: Insights from the veterans affairs clinical assessment reporting and tracking program. Infect Control Hosp Epidemiol, 1–8, https://doi.org/10.1017/ice.2019.127 (2019).

Bongiorni, M. G. et al. How European centres diagnose, treat, and prevent CIED infections: results of an European Heart Rhythm Association survey. Europace 14, 1666–1669, https://doi.org/10.1093/europace/eus350 (2012).

Baddour, L. M. et al. Update on cardiovascular implantable electronic device infections and their management: a scientific statement from the American Heart Association. Circulation 121, 458–477, https://doi.org/10.1161/CIRCULATIONAHA.109.192665 (2010).

CDC. Surgical Site Infection (SSI) Event, http://www.cdc.gov/nhsn/PDFs/pscManual/9pscSSIcurrent.pdf (2015).

Dobbin, K. K. & Simon, R. M. Optimally splitting cases for training and testing high dimensional classifiers. BMC Med Genomics 4, 31, https://doi.org/10.1186/1755-8794-4-31 (2011).

McLeod, A. I. & Xu, C. bestglm: Best subset GLM and Regression Utilities. R package version 0.37, https://CRAN.R-project.org/package=bestglm (2018).

Kuhn, M. et al. caret: Classification and Regression Training. R package version 6.0-81. https://CRAN.R-project.org/package=caret (2018).

Robin, X. et al. pROC: an open-source package for R and S+ to analyze and compare ROC curves. BMC Bioinformatics 12, 77, https://doi.org/10.1186/1471-2105-12-77 (2011).

Nattino, G., Finazzi, S., Bertolini, G., Rossi, C. & Carrara, G. givitiR: The GiViTI Calibration Test and Belt. R package version 1.3 (2017).

Nagelkerke, N. J. D. A Note on a General Definition of the Coefficient of Determination. Biometrika 78, 691–692, https://doi.org/10.2307/2337038 (1991).

Signorell, A. et al. DescTools: Tools for Descriptive Statistics. R package version 0.99.32 (2020).

R Core Team. R: A language and environment for statistical computing. R Foundation for Statistical Computing, Vienna, Austria, https://www.R-project.org/ (2018).

Branch-Elliman, W. et al. Cardiac Electrophysiology Laboratories: A Potential Target for Antimicrobial Stewardship and Quality Improvement? Infect Control Hosp Epidemiol 37, 1005–1011, https://doi.org/10.1017/ice.2016.116 (2016).

de Bruin, J. S., Seeling, W. & Schuh, C. Data use and effectiveness in electronic surveillance of healthcare associated infections in the 21st century: a systematic review. J Am Med Inform Assoc 21, 942–951, https://doi.org/10.1136/amiajnl-2013-002089 (2014).

Boggan, J. C. et al. An Automated Surveillance Strategy to Identify Infectious Complications After Cardiac Implantable Electronic Device Procedures. Open Forum Infect Dis 2, ofv128, https://doi.org/10.1093/ofid/ofv128 (2015).

Jhung, M. A. & Banerjee, S. N. Administrative coding data and health care-associated infections. Clin Infect Dis 49, 949–955, https://doi.org/10.1086/605086 (2009).

Schweizer, M. L. et al. Validity of ICD-9-CM coding for identifying incident methicillin-resistant Staphylococcus aureus (MRSA) infections: is MRSA infection coded as a chronic disease? Infect Control Hosp Epidemiol 32, 148–154, https://doi.org/10.1086/657936 (2011).

Calderwood, M. S., Kleinman, K., Murphy, M. V., Platt, R. & Huang, S. S. Improving public reporting and data validation for complex surgical site infections after coronary artery bypass graft surgery and hip arthroplasty. Open Forum Infect Dis 1, ofu106, https://doi.org/10.1093/ofid/ofu106 (2014).

Mainor, A. J., Morden, N. E., Smith, J., Tomlin, S. & Skinner, J. ICD-10 Coding Will Challenge Researchers: Caution and Collaboration may Reduce Measurement Error and Improve Comparability Over Time. Med Care 57, e42–e46, https://doi.org/10.1097/MLR.0000000000001010 (2019).

Cato, K. D., Liu, J., Cohen, B. & Larson, E. Electronic Surveillance of Surgical Site Infections. Surg Infect (Larchmt) 18, 498–502, https://doi.org/10.1089/sur.2016.262 (2017).

Branch-Elliman, W., Gupta, K. & Elwy, A. in IDWeek 2019.

Agency for Healthcare Research and Quality. About Learning Health Systems, https://www.ahrq.gov/learning-health-systems/about.html (2019).

Acknowledgements

This work would not be possible without help and assistance from the VA Clinical Assessment Reporting and Tracking program. The work was supported by American Heart Association Institute for Precision Cardiovascular Medicine Award # 17IG33630052. WBE is supported by NIH NHLBI 1K12HL138049-01.

Author information

Contributions

A.A. and W.B.E. designed the study and wrote the manuscript. M.S. and A.B. performed all statistical analyses. A.A., W.B.E. and P.M. performed manual data collection. H.M., M.S., M.H., K.G. commented on the research design, analysis and manuscript. All authors read and approved the final manuscript.

Ethics declarations

Competing interests

AA is an investigator for studies funded by Gilead Sciences and Merck since the completion of this study. WBE is a consultant for DLA Piper, LLC. All other authors declare that they have no competing interests.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Asundi, A., Stanislawski, M., Mehta, P. et al. Development and Validation of a Semi-Automated Surveillance Algorithm for Cardiac Device Infections: Insights from the VA CART program. Sci Rep 10, 5276 (2020). https://doi.org/10.1038/s41598-020-62083-y
