Abstract

Various mental disorders are accompanied by some degree of cognitive impairment. Particularly in neurodegenerative disorders, cognitive impairment is the phenotypical hallmark of the disease. Effective, accurate and timely cognitive assessment is key to early diagnosis of this family of mental disorders. Current standard-of-care techniques for cognitive assessment are primarily paper-based and need to be administered by a healthcare professional; they are additionally language- and education-dependent and typically suffer from a learning bias. These tests are thus not ideal for large-scale pro-active cognitive screening and disease progression monitoring. We developed the Integrated Cognitive Assessment (referred to as CGN_ICA), a 5-minute computerized cognitive assessment tool based on a rapid visual categorization task, in which a series of carefully selected natural images of varied difficulty are presented to participants. Overall, 448 participants, across a wide age range and with different levels of education, took the CGN_ICA test. We compared participants’ CGN_ICA test results with a variety of standard pen-and-paper tests, such as the Symbol Digit Modalities Test (SDMT) and the Montreal Cognitive Assessment (MoCA), that are routinely used to assess cognitive performance. CGN_ICA had excellent test-retest reliability, showed convergent validity with the standard-of-care cognitive tests used here, and was shown to be suitable for micro-monitoring of cognitive performance.

Introduction

Brain disorders can cause deficiency in cognitive performance. In particular, in neurodegenerative disorders, cognitive impairment is the phenotypical hallmark of the disease. Neurodegenerative disorders, including dementia and Alzheimer’s disease, continue to represent a major economic, social and healthcare burden1. These diseases remain underdiagnosed or are diagnosed too late2, resulting in less favorable health outcomes. Current routinely used approaches to cognitive assessment, such as the Mini Mental State Examination (MMSE)3, Montreal Cognitive Assessment (MoCA)4, and Addenbrooke’s Cognitive Examination (ACE)5, are primarily paper-based, language- and education-dependent, and need to be administered by a healthcare professional (e.g. a physician). These tests are therefore not ideal tools for wide pro-active screening of cognitive impairment, which can be crucial to earlier diagnosis.

Several studies have emphasized the importance of early diagnosis2,6,7,8,9 and its role in driving better treatment and improvement of cognition and quality of life10. Therefore, developing new tools for effective, accurate and timely cognitive assessment is key to tackling this family of brain disorders.

Growing attention has been drawn to changes in the visual system in connection with dementia and cognitive impairment11,12,13,14,15,16. Previous studies have linked visual function abnormalities with Alzheimer’s disease and other types of cognitive impairment17,18,19. All parts of the visual system may be affected in Alzheimer’s disease, including the optic nerve, retina, lateral geniculate nucleus (LGN) and the visual cortex19. Therefore, visual dysfunction can predict cognitive deficits in Alzheimer’s disease19,20. The human motor cortex21,22 and the oculomotor system23,24 have also been shown to be affected in Alzheimer’s disease.

We therefore developed a rapid visual categorization test that measures a subject’s accuracy and reaction times, engaging both visual and motor cortices as well as oculomotor function. Categorization accuracies and reaction times are then summarized to assess participants’ cognitive performance. The proposed integrated cognitive assessment (CGN_ICA) test is designed to target cognitive domains and brain areas that are affected in the initial stages of cognitive disorders such as dementia, ideally before the onset of memory symptoms. Thus, as opposed to solely focusing on working memory, the test engages the retina, the visual cortex and the motor cortex, all of which have been shown to be affected pre-dementia or in the early stages of the disease21,25,26,27,28,29,30,31. The CGN_ICA’s focus on speed and accuracy of processing visual information32,33,34,35 is in line with the latest evidence suggesting that simultaneous object perception deficits are related to reduced visual processing speed in amnestic mild cognitive impairment36. Additionally, the proposed test is self-administered and is intrinsically independent of language and culture, making it well suited to large-scale pro-active cognitive screening and cognitive monitoring.

This study aims to assess CGN_ICA’s convergent validity with the routinely used standard pen-and-paper cognitive tests, its test-retest reliability, and whether the proposed test is suitable for micro-monitoring of cognitive performance.

Material and Methods

CGN_ICA test description

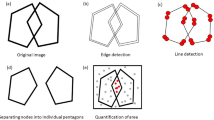

The CGN_ICA test is a rapid visual categorization task with backward masking33,37,38. One hundred natural images (50 animal and 50 non-animal) with various levels of difficulty were presented to the participants. Each image was presented for 100 ms, followed by a 20 ms inter-stimulus interval (ISI), followed by a dynamic noisy mask (for 250 ms), followed by the subject’s categorization of the image as animal vs. non-animal (Fig. 1). On the iPad, participants responded by tapping on the left or right side of the screen; on the Raspberry Pi, they responded by pressing one of two pre-assigned keys on a keyboard (‘F’ vs. ‘J’). Images were presented at the center of the screen at a visual angle of 7 degrees. For more information about rapid visual categorization tasks, refer to Mirzaei et al.33.

The CGN_ICA test pipeline. One hundred natural images (50 animal and 50 non-animal) with various levels of difficulty are presented to the participants. Each image is presented for 100 ms, followed by a 20 ms inter-stimulus interval (ISI), followed by a dynamic noisy mask (for 250 ms), followed by the subject’s categorization of the image as animal vs. non-animal. A few sample images are shown for demonstration purposes.

The CGN_ICA test starts with a separate set of 10 introductory images (5 animal, 5 non-animal) to familiarize participants with the task. These images are excluded from further analysis. If participants perform above chance (>50%) on these 10 images, they continue to the main task. If they perform at chance level (or below), the test instructions are presented again, and a new set of 10 introductory images follows. If they perform above chance in this second attempt, they progress to the main task; otherwise, the test is aborted. Participants were excluded for this reason only in experiment 2: three of 61 participants had their test aborted, leaving the 58 participants reported for experiment 2 in Table 1.
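The gating rule for the introductory images can be sketched as a small decision function. This is only an illustration of the logic described above, not the CGN_ICA implementation: the function name, its arguments and the returned labels are hypothetical, and a second attempt that is not above chance is treated as a failed attempt.

```python
def practice_outcome(correct_first, correct_second=None, n_images=10):
    """Decide what happens after the introductory images.

    correct_first / correct_second: number of correct responses in the
    first and (if taken) second introductory round. Names and return
    labels are hypothetical, chosen for this sketch only.
    """
    chance_level = n_images / 2  # 50% correct on a two-choice task

    if correct_first > chance_level:
        return "main_task"
    if correct_second is None:
        # First round at or below chance: show instructions again
        # and present a new set of introductory images.
        return "repeat_practice"
    if correct_second > chance_level:
        return "main_task"
    # Assumption: anything not above chance on the second round aborts.
    return "aborted"
```

For example, `practice_outcome(5, 8)` describes a participant who was at chance on the first round and passed the second, so the function returns `"main_task"`.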

Scientific rationale behind the CGN_ICA test

The CGN_ICA test takes advantage of millions of years of human evolution: the human brain’s strong reaction to animal stimuli39,40,41,42. Human observers are very good at recognising whether briefly flashed novel images contain the image of an animal, and previous work has shown that the underlying visual processing can be performed quickly38,43. The strongest categorical division represented in the human higher-level visual cortex (known as the inferior temporal cortex) appears to be that between animates and inanimates. Several studies have shown this in human and non-human primates38,39,40,44,45. Studies also show that, on average, it takes about 100 ms to 120 ms for humans to differentiate animate from inanimate stimuli46,47,48. Following this rationale, in the CGN_ICA test the images are presented for 100 ms, followed by a short inter-stimulus interval (ISI) and then a dynamic mask. A shorter ISI can make the animal detection task more difficult, whereas a longer ISI reduces the usefulness of the task for testing purposes, as it may not allow less severe cognitive impairments to be detected. The dynamic mask is used to remove (or at least reduce) the effect of recurrent processes in the brain49,50,51,52,53. This makes the task more challenging by reducing the ongoing recurrent neural activity that could boost a subject’s performance, and leaves less room for the resilient brain to compensate for the subtle ongoing neurodegeneration in the early stages of the disease.

Participants

As shown in Table 1, we conducted four different experiments; in total, 448 volunteers took part in this study. The study was conducted according to the Declaration of Helsinki and approved by the local ethics committee at Royan Institute. Informed consent was obtained from all participants.

Inclusion criteria were age above 18 years, normal or corrected-to-normal vision, and no severe upper limb arthropathy or motor problems that could prevent participants from completing the tests independently. For each participant, information about age, education and gender was also collected.

Stimulus set

We used a set of 100 grayscale natural images, half of which contained an animal. The images varied in their level of difficulty. In some images the head or body of the animal was clearly visible to the participants, making it easier to detect. In other images the animals were further away or otherwise presented in cluttered environments, making them more difficult to detect. A few sample images are shown in Fig. 1. Grayscale images were used to remove the possibility of common forms of color blindness affecting participants’ results. Furthermore, color images can facilitate animal detection based solely on color, without the shape of the stimulus being fully processed. This could have made the task easier and less suitable for detecting less severe cognitive dysfunctions.

To construct the mask, a white noise image was filtered at four different spatial scales, and the resulting images were thresholded to generate high-contrast binary patterns. For each spatial scale, four new images were generated by rotating and mirroring the original image, yielding a pool of 16 images. The noisy mask used in the CGN_ICA test was a sequence of 8 images chosen randomly from the pool, with each spatial scale appearing twice in the dynamic mask.
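The selection of the 8 mask frames from the pool of 16 can be sketched as follows. The sketch covers only the sampling step, not the filtering or thresholding of the noise images, and it rests on two assumptions that the text does not state: the two frames drawn for a given spatial scale are distinct images, and the order of the 8 frames is shuffled. The function name and the (scale, variant) index representation are hypothetical.

```python
import random


def build_mask_sequence(n_scales=4, n_variants=4, per_scale=2, rng=None):
    """Return a list of (scale_index, variant_index) pairs for one mask.

    The pool holds n_scales * n_variants images (16 here); each spatial
    scale contributes exactly per_scale frames (2 here), giving 8 frames.
    """
    if rng is None:
        rng = random.Random()

    sequence = []
    for scale in range(n_scales):
        # Assumption: pick distinct rotated/mirrored variants per scale.
        variants = rng.sample(range(n_variants), per_scale)
        sequence.extend((scale, variant) for variant in variants)

    # Assumption: the presentation order of the frames is random.
    rng.shuffle(sequence)
    return sequence
```

Passing a seeded generator, for instance `build_mask_sequence(rng=random.Random(0))`, makes the sequence reproducible across runs.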

Reference pen-and-paper cognitive tests

Montreal Cognitive Assessment (MoCA)

MoCA4 is a widely used screening tool for detecting cognitive impairment, typically in older adults. The MoCA test is a one-page 30-point test administered in approximately 10 minutes.

Mini-Mental State Examination (MMSE)

The MMSE3 test is a 30-point questionnaire that is used in clinical and research settings to measure cognitive impairment. It is commonly used to screen for dementia in older adults and takes about 10 minutes to administer.

Addenbrooke’s Cognitive Examination–Revised (ACE-R)

The ACE54,55 was originally developed at the Cambridge Memory Clinic as an extension to the MMSE. ACE-R is a revised version of ACE that includes the MMSE score as one of its sub-scores. The ACE-R5 assesses five cognitive domains: attention, memory, verbal fluency, language and visuospatial abilities. On average, the test takes about 20 minutes to administer and score.

Symbol Digit Modalities Test (SDMT)

The SDMT is designed to assess speed of information processing, and takes about 5 minutes to administer56. A series of nine symbols are presented at the top of a standard sheet of paper, each paired with a single digit. The rest of the page contains symbols with an empty box next to them, in which participants are asked to write down the digit associated with this symbol as quickly as possible. The outcome score is the number of correct matches over a time span of 90 seconds.

California Verbal Learning Test–2nd edition (CVLT-II)

The CVLT-II test57,58 begins with the examiner reading a list of 16 words. Participants listen to the list and then report as many of the items as they can recall. After that, the entire list is read again followed by a second attempt at recall. Altogether, there are five learning trials. The final score, which is out of 80, is the summation of all the correct recalls. As in the brief international cognitive assessment for multiple sclerosis (BICAMS) battery59, we only used the learning trials of the CVLT-II, which takes about 10 minutes to administer.

Brief Visual Memory Test–Revised (BVMT-R)

The BVMT-R test assesses visuo-spatial memory60,61. In this test, six abstract shapes are presented to the participant for 10 seconds. The display is then removed from view and participants are asked to draw the stimuli with pencil on paper. There are three learning trials, and the primary outcome measure is the total number of points earned over the three learning trials. The test takes about 5 minutes to administer.

Experiments

We conducted four different experiments, as summarized in Table 1. The first three experiments were designed to measure the CGN_ICA correlation with a wide range of routinely used reference cognitive tests. The goal was to investigate whether the speed and accuracy of visual processing in a rapid visual categorization task is correlated with subject’s cognitive performance.

In the first and second experiments, we aimed to test CGN_ICA’s ability to assess cognitive performance in older adults. Therefore, we used MoCA and/or ACE-R as reference cognitive tests, both of which are routinely used to screen for mild cognitive impairment (MCI) and dementia in older adults. In the first experiment, 212 volunteers participated; the CGN_ICA test was delivered via a Raspberry Pi (RaPi) platform, a small single-board computer attached to a keyboard and an LCD monitor, and MoCA was used as the reference cognitive test. The second experiment had 58 participants; the CGN_ICA was delivered via iPad, and both MoCA and ACE-R were used as reference tests.

The third experiment had SDMT, BVMT-R and CVLT-II as the reference cognitive tests, measuring speed of information processing, visuo-spatial memory and verbal learning, respectively. These three tests together form the BICAMS battery, which requires about 15 to 20 minutes to administer and is primarily used to detect cognitive dysfunction in younger adults who may suffer from multiple sclerosis (MS). A total of 166 participants took part in this experiment. Forty-four of them were selected for a re-test as part of a second visit to assess CGN_ICA test-retest reliability. Participants for the re-test session were selected at random, while keeping the age range, level of education, and gender ratio similar to those of the participants in the first session. The CGN_ICA was delivered via an iPad platform.

All the pen-and-paper cognitive tests were administered by a healthcare professional. The order in which the CGN_ICA and the reference cognitive tests were administered was randomized.

Finally, experiment 4 was designed to study whether the CGN_ICA test had a learning bias if taken multiple times at short intervals. Learning bias is defined as an improvement in test score that results from learning the test through repeated exposure to it. Twelve young volunteers participated in this experiment. For convenience, the CGN_ICA was delivered remotely via a web platform. Participants took the CGN_ICA test every other day for two weeks.

Accuracy, speed, and CGN_ICA summary score calculations

Preprocessing

We used the boxplot method to remove outlier reaction times before computing the CGN_ICA score. The boxplot is a non-parametric method for describing groups of numerical data through their quartiles, and it allows outliers in the data to be detected. Following this approach, reaction times greater than q3 + w × (q3 − q1) or less than q1 − w × (q3 − q1) are considered outliers, where q1 is the lower quartile and q3 is the upper quartile of the reaction times, and w is the ‘whisker’ length (w = 1.5).
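The boxplot rule can be illustrated with a short sketch using only the Python standard library. The quartile convention is an assumption: the text does not state how q1 and q3 were computed, and the ‘inclusive’ method used here may give slightly different quartiles from those of other software on small samples. The function name is hypothetical.

```python
import statistics


def remove_rt_outliers(reaction_times, w=1.5):
    """Drop reaction times outside [q1 - w*IQR, q3 + w*IQR]."""
    # Assumption: quartiles follow Python's 'inclusive' interpolation rule.
    q1, _median, q3 = statistics.quantiles(
        reaction_times, n=4, method="inclusive"
    )
    iqr = q3 - q1
    lower, upper = q1 - w * iqr, q3 + w * iqr
    return [rt for rt in reaction_times if lower <= rt <= upper]


# Illustrative reaction times in ms (made-up values, not study data).
example_rts = [400, 420, 450, 470, 500, 520, 2500]
cleaned_rts = remove_rt_outliers(example_rts)
```

In this made-up example, q1 = 435 ms and q3 = 510 ms, so the upper fence is 622.5 ms and only the 2500 ms response is discarded.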

Accuracy is defined as the number of correct categorizations divided by the total number of images, multiplied by 100.

Speed is defined based on the participant’s reaction times in the trials in which they responded correctly.

RT: reaction time

e: Euler’s number (≈2.71828)

Speed is inversely related to participants’ reaction times; the higher the speed, the lower the reaction time. The reason for defining the above formula for speed, instead of using the raw reaction times, was to have a more intuitive and standardized score to report to clinicians, scaled from 0 to 100.

The CGN_ICA summary score is a simple combination of accuracy and speed, defined as follows:

Statistical analysis

Within the manuscript, convergent validity and test-retest reliability for the CGN_ICA test are assessed with Pearson’s correlation. P-values for Pearson’s correlation are based on a Student’s t distribution. Calculations were done using MathWorks’ Statistics and Machine Learning Toolbox (https://www.mathworks.com/help/stats/index.html).
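For readers who want to reproduce this kind of analysis outside MATLAB, the sketch below computes Pearson’s r and the t statistic on which its p-value is conventionally based, t = r·sqrt((n − 2)/(1 − r²)) with n − 2 degrees of freedom. It is an illustration rather than the toolbox routine used in the study; the function names are hypothetical, and the final conversion of t to a p-value (which needs the Student’s t cumulative distribution) is left to a statistics library.

```python
import math


def pearson_r(x, y):
    """Pearson correlation between two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def correlation_t_statistic(r, n):
    """t statistic for testing r against zero, with n - 2 degrees of freedom."""
    return r * math.sqrt((n - 2) / (1 - r * r))


# Plugging in a reported pair of values: r = 0.96 with n = 44 participants.
t_retest = correlation_t_statistic(0.96, 44)
```

With r = 0.96 and n = 44, the statistic is roughly 22 on 42 degrees of freedom, which is far into the tail of the t distribution.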

To measure the dependency of the cognitive tests on level of education, we used explained variance, defined as the square of Pearson’s correlation between participants’ cognitive scores and their level of education (i.e. number of years). Here, statistical significance was obtained by a permutation test (10,000 permutations of participants). To formally assess statistical independence, we used a non-parametric independence test proposed by Gretton and Gyorfi62, based on 10,000 bootstrap resamples of participants.
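A permutation test of explained variance can be sketched as below. This is a generic version under stated assumptions, not the authors’ code: education values are shuffled across participants to build the null distribution, the p-value uses the common (hits + 1)/(permutations + 1) correction, and the function names and example numbers are made up for illustration. The Gretton and Gyorfi independence test is not reproduced here.

```python
import math
import random


def pearson_r(x, y):
    """Pearson correlation between two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def explained_variance_permutation_test(scores, education, n_perm=10_000, seed=0):
    """Return (explained variance, permutation p-value)."""
    rng = random.Random(seed)
    observed = pearson_r(scores, education) ** 2

    shuffled = list(education)
    hits = 0
    for _ in range(n_perm):
        # Break any score-education link by permuting participants.
        rng.shuffle(shuffled)
        if pearson_r(scores, shuffled) ** 2 >= observed:
            hits += 1

    # Assumption: add-one correction so the p-value is never exactly zero.
    return observed, (hits + 1) / (n_perm + 1)


# Made-up scores and years of education, for illustration only.
demo_scores = [10, 12, 15, 18, 20, 23, 25, 28]
demo_education = [6, 8, 9, 12, 12, 14, 16, 18]
demo_r2, demo_p = explained_variance_permutation_test(
    demo_scores, demo_education, n_perm=2000
)
```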

Finally, we used a single-factor analysis of variance (ANOVA) to compare average CGN_ICA scores across sessions for participants who had taken the CGN_ICA test every other day for two weeks. The goal was to see whether the mean CGN_ICA scores differed significantly on any given day.
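The F statistic of a single-factor ANOVA can be computed as in the following sketch, where each group holds the scores from one test day. It treats the days as independent groups, as a single-factor ANOVA does, and it allows groups of unequal size. The function name and the example numbers are hypothetical and are not study data.

```python
def one_way_anova_f(groups):
    """Return (F, df_between, df_within) for a list of score lists."""
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    grand_mean = sum(sum(g) for g in groups) / n_total
    group_means = [sum(g) / len(g) for g in groups]

    # Variability of the group means around the grand mean.
    ss_between = sum(
        len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, group_means)
    )
    # Variability of individual scores around their own group mean.
    ss_within = sum(
        (x - m) ** 2 for g, m in zip(groups, group_means) for x in g
    )

    df_between, df_within = k - 1, n_total - k
    f_value = (ss_between / df_between) / (ss_within / df_within)
    return f_value, df_between, df_within


# Three made-up "days" with three scores each.
f_demo, df_b_demo, df_w_demo = one_way_anova_f([[1, 2, 3], [2, 3, 4], [3, 4, 5]])
```

With eight test days, the between-group degrees of freedom are 8 − 1 = 7.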

Results

Convergent validity with the standard-of-care cognitive tests

A key requirement for a clinically useful cognitive assessment test is to establish validity and a correlation with an existing recognized neuropsychological test that is routinely used in clinical practice. Here in three different experiments (see Table 1, experiments 1 to 3), we show that the CGN_ICA test is significantly correlated with six standard neuropsychological tests (Fig. 2 and Table 2).

The CGN_ICA test score is significantly correlated with a wide range of standard cognitive tests. Participants took the CGN_ICA test along with one or more standard cognitive tests (see Table 1). Each scatter plot shows the CGN_ICA score (y axis) vs. one of the standard cognitive tests (x axis). Each blue dot indicates an individual; the lines are the result of fitting a linear regression to the data in each plot. For each plot, the number of participants who took the tests and the platform on which the CGN_ICA was taken are written above the scatter plot. ‘r’ and ‘p’ in the top-right corner of each plot show the Pearson correlation between the two tests and the p-value of the correlation, respectively.

Given the variability in subjects’ demographics, such as age, gender, and level of education, a statistically significant correlation typically above 0.4 (sometimes > 0.3) with reference cognitive tests is considered within the acceptable range for convergent validity (i.e. construct validity)63,64,65. To give a few examples, convergent validity for ACE-R is shown with a correlation of −0.32 with the clinical dementia scale5; for CogState (a computerized cognitive battery), convergent validity is shown by correlations that vary between 0.11 and 0.53 with reference pen-and-paper cognitive tests64,66,67. Similarly, cerebrospinal fluid (CSF) and blood biomarkers68,69 have correlations in the range of 0.4 to 0.6 with standard cognitive tests, such as MoCA.

We show that the CGN_ICA score is significantly correlated with MoCA, tested on two different hardware platforms (RaPi and iPad). The CGN_ICA correlation with MoCA varies from 0.46 to 0.55 (Fig. 2D and E), which is within the range for determining construct validity.

The CGN_ICA test had a slightly higher correlation with ACE-R (r = 0.60, p < 10⁻⁶) than with MoCA. ACE-R provides a more comprehensive assessment of cognitive abilities and takes longer to administer and score (~20 minutes). It comprises five subsections, assessing attention, memory, fluency, language, and visuospatial abilities. The CGN_ICA correlations with ACE-R (Fig. 2F) and with its sub-sections are shown in Table 2. A subject’s MMSE score can also be extracted from the ACE-R test (see Table 2). MoCA and ACE-R are typically used to screen for MCI and dementia in older adults.

MMSE is shown to be less sensitive in detecting cognitive impairment4,5 compared to MoCA or ACE-R. Therefore, a smaller correlation with MMSE (r = 0.33), and a higher correlation with MoCA and ACE-R (r = 0.55 and 0.60) is of interest.

In addition, we compared CGN_ICA against another set of tests, including SDMT, BVMT-R and CVLT-II (Fig. 2A–C, and Table 2) that are more often used in younger individuals to assess cognitive performance. For example, all these three tests are included as part of larger battery of tests that assess cognitive impairment in individuals with MS, such as the ‘minimal assessment of cognitive function in MS’ (MACFIMS) and the ‘brief international cognitive assessment for MS’ (BICAMS).

CGN_ICA had the highest correlation with SDMT (r = 0.80, p < 10⁻⁷), which is a pen-and-paper test mostly measuring the speed of information processing. CVLT-II, measuring verbal learning, and BVMT-R, measuring visual memory, had correlations of 0.66 and 0.54 with the CGN_ICA score, respectively. SDMT has been shown to be more sensitive than CVLT-II and BVMT-R in detecting cognitive impairment in patients with MS59,70; therefore, CGN_ICA’s higher correlation with SDMT (compared to CVLT-II and BVMT-R) is of interest.

It is worth noting that a correlation of one is not desirable between the CGN_ICA test and any of these cognitive tests, as none of these standard tests are considered the ground truth (or gold standard) in detecting cognitive impairments.

The majority of cognitive tests (Table 2) were more correlated with the accuracy component of the CGN_ICA test, except for SDMT and CVLT-II, both of which had a significantly higher correlation with speed than with accuracy (p < 0.001; bootstrap resampling of subjects).

Each reference cognitive test used in this study (shown in Table 2) measured different domains of cognition. The CGN_ICA score had significant correlations with all of these tests, suggesting that it can be effectively used as one integrated test to provide insights about different cognitive domains (e.g. speed of processing, memory, verbal learning, attention, and fluency).

CGN_ICA shows excellent test-retest reliability

One of the most critical psychometric criteria for the applicability of a test is its reliability, that is, how little its results deviate when the same instrument is used multiple times under similar circumstances.

To assess the reliability of the CGN_ICA test, a subgroup of 44 participants from experiment 3 (see Table 1) took the CGN_ICA test for a second time after about five weeks (±15 days). Test-retest reliability was measured by computing the Pearson correlation between the two CGN_ICA scores [Fig. 3; Pearson’s r = 0.96 (p < 10⁻⁷)]. R values for test-retest correlation are considered adequate if > 0.70 and good if > 0.80 (Anastasi, 1988).

How much of the CGN_ICA score is explained by education?

People with higher levels of education tend to score better in the standard pen-and-paper tests than age-matched peers who fall into the same cognitive category. This makes ‘the level of education’ a confounding factor for cognitive assessment.

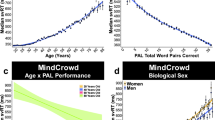

We were interested to see how much of the CGN_ICA score is explained by education in comparison to other cognitive tests. To this end, we computed the Pearson’s correlation between participants’ test scores and their level of education (in years). Explained variance is defined as the square of this correlation, and indicates how much of the variance of these test scores can be explained by education (Fig. 4).

Dependency of standard-of-care cognitive tests on education. Bars indicate how much of the scores reported in each test are explained by education [explained variance = (Pearson’s r)²]. Stars show statistical significance, indicating that a significant proportion of the variance of the test score is explained by education. Statistical significance is obtained by permutation test (10,000 permutations). Error bars are standard errors of the mean (SEM) obtained by 10,000 bootstrap resamples of subjects. P-values smaller than 0.01 (after Bonferroni correction for multiple comparisons) are considered significant. ‘ns’ means not significant. The results for CGN_ICA (RaPi platform) are based on 212 subjects (Experiment 1 in Table 1); results for CGN_ICA (iPad platform) are based on the combined data from Experiments 2 and 3 (224 participants in total), all of whom took the CGN_ICA on an iPad. The results for MoCA are based on the combined data from Experiments 1 and 2, in both of which participants took MoCA (270 participants in total). ACE-R and MMSE results are based on the data from Experiment 2. Results for SDMT, BVMT-R and CVLT-II are based on the data from Experiment 3.

We find that a significant proportion of the variance of all the standard cognitive assessment tests is explained by education, whereas the CGN_ICA test does not show a significant relationship with education (Fig. 4). In Fig. 4, we report the CGN_ICA test results separately for the Raspberry Pi platform (Experiment 1: 212 participants) and the iPad platform (Experiments 2 and 3: 58 + 166 = 224 participants).

Furthermore, we formally tested whether the CGN_ICA score is independent of education using a non-parametric test of independence62. In experiment 1 (i.e. CGN_ICA taken on RaPi), the statistical test of independence was positive, showing that CGN_ICA score is independent of education (based on 10,000 bootstrap resampling of subjects).

The CGN_ICA test has no learning bias

One problem with many existing cognitive tests is that they have a learning bias, meaning that a subject’s test performance improves with repeated exposure to the test as a result of learning the task, without any change in their cognitive ability. A learning bias reduces the reliability of a test if it is used repeatedly, for example when monitoring performance over time. An ideal test for early diagnosis of cognitive disorders and monitoring cognitive performance would show no ‘learning bias’.

The currently available pen-and-paper tests, such as MoCA, MMSE and Addenbrooke’s Cognitive Examination (ACE), are not appropriate for micro-monitoring of cognitive performance because if identical questions are repeated, healthy participants and those with mild impairment can easily learn the test and improve upon their previous scores – as a result of learning rather than any improvement in their cognitive performance.

To investigate whether CGN_ICA might be appropriate for such micro-monitoring, we recruited 12 young individuals with high capacity for learning [University students, aged 20 to 36], and asked them to take the test every other day for two weeks (8 days in total). The CGN_ICA was delivered remotely via a web platform.

The test data indicate that even in subjects with a high capacity to learn, no learning bias was detected (Fig. 5). The CGN_ICA score does not increase monotonically, and comparing the mean of the CGN_ICA scores across these days, no significant difference was observed (ANOVA, F(7) = 0.62; P-value = 0.73).

No significant effect of learning in repeated exposure to the CGN_ICA test. We find no learning bias when the test is taken multiple times. Twelve healthy participants (age range = [20, 36]) took the CGN_ICA test every other day for over two weeks (ANOVA, F(7) = 0.62; P-value = 0.73). Of these 12 participants, 7 completed all the sessions (8 days), and the rest took the test on at least the first three days.

Discussion

Early diagnosis is a major focus of current scientific research71,72. There is currently no available cognitive screening tool that can detect early phenotypical changes prior to the emergence of memory problems and other symptoms of dementia. The vast majority of cognitive tests rely on the patient’s capacity to read and write, and more educated individuals can often “second-guess” them. All of these standard tests require a clinician or a healthcare professional to administer them, thus adding a considerable cost to the procedure.

We demonstrated that the combination of speed and accuracy of visual processing in a rapid visual categorization task can be used as a reliable measure to assess individual’s cognitive performance. The proposed visual test has significant advantages over the conventional cognitive tests because of its efficient administration, shorter duration, automatic scoring, language and education independency, potential for medical record or research database integration, and the capacity for micro monitoring of cognitive performance given the absence of a “learning bias”. Thus, we suggest CGN_ICA as a practical tool for routine screening of cognitive performance.

Potential use of CGN_ICA for early detection of dementia

Because of the high compensatory potential of the brain, symptoms of chronic neurodegenerative diseases, such as Alzheimer’s disease (AD), Parkinson’s disease (PD), Huntington’s disease (HD), and vascular and frontotemporal (FTD) dementias, occur 10–20 years after the beginning of the pathology9. Late stages of these disorders are characterized by massive neuronal death that is irreversible. Therefore, any therapeutic treatment given late in the course of the disease will most likely fail to affect disease progression in any meaningful way. This is illustrated by recent failures of anti-AD therapies in late-stage clinical trials2,73, which further emphasizes the importance of developing screening tests capable of detecting such diseases in their early asymptomatic stage.

The CGN_ICA aims at early detection of cognitive dysfunction by targeting brain functions that are affected in the initial stages of neurodegenerative disorders (e.g. dementia), specifically before the onset of memory symptoms. Given the decade-long lag between tissue damage and memory deficits in dementia, the CGN_ICA instead examines the visuo-motor pathway. Studies in the past 20 years reveal that all parts of the visual system may be affected in Alzheimer’s disease, including the optic nerve, retina, lateral geniculate nucleus (LGN) and the visual cortex19. In particular, in the early stages of the disease, brain areas associated with the visuo-motor pathway are affected, beginning with the retina26,27,28,30, the visual cortex25,29,30 and the motor cortex21,31; together, these areas are therefore more effective places to look for the impact of early-stage neurodegeneration than memory alone. The CGN_ICA focuses on cognitive functions such as speed and accuracy of processing visual information, which have been shown to engage a large volume of cortex while being a predictor of people’s cognitive performance33,34,35; thus, monitoring the performance and functionality of these areas together can be a reliable early indicator of disease onset.

Suitability for remote and frequent cognitive assessment

Remote monitoring, or home-based online assessment, is beneficial for patients, clinicians and researchers. Home-based assessment offers patients a more comfortable, low-stress setting. In addition, researchers and clinicians gain a time-efficient and convenient assessment instrument that enables a valid and reliable evaluation of individuals’ cognitive performance. Furthermore, online assessment allows researchers to collect data from a large number of participants in a short time period.

Given that the CGN_ICA test is self-administered and that it does not suffer from a learning bias, it can be used remotely and frequently to track changes in individuals’ cognitive performance over time. This makes the test even more useful for early diagnosis, by allowing the test to be used longitudinally, in a design wherein individuals are compared against their own baseline.
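As an illustration of what comparing an individual against their own baseline could look like in practice, the following minimal Python sketch expresses each follow-up score as a z-score relative to that person’s first few sessions. It is not the CGN_ICA scoring method: the function name `change_from_baseline`, the choice of three baseline sessions, the use of a z-score and the example scores are all assumptions made for illustration.

```python
from statistics import mean, stdev


def change_from_baseline(scores, n_baseline=3):
    """Express follow-up scores as z-scores relative to a person's own baseline.

    scores: test scores for one individual, in chronological order.
    n_baseline: number of initial sessions treated as the baseline
                (an assumed value, not taken from the study).
    """
    if n_baseline < 2:
        raise ValueError("need at least two baseline sessions to estimate spread")
    if len(scores) <= n_baseline:
        raise ValueError("need at least one follow-up session after the baseline")

    baseline = scores[:n_baseline]
    mu = mean(baseline)
    sigma = stdev(baseline)  # sample standard deviation of the baseline sessions
    if sigma == 0:
        raise ValueError("baseline scores are identical; z-score is undefined")

    # Negative values mean the follow-up score is below the person's own baseline.
    return [(s - mu) / sigma for s in scores[n_baseline:]]


# Hypothetical scores for one participant: three baseline sessions, two follow-ups.
z = change_from_baseline([70, 72, 74, 71, 66])
print(z)
```

In this hypothetical example the final session lies three baseline standard deviations below the participant’s own mean, which is the kind of within-person change a longitudinal design is meant to surface. Any threshold for flagging such a change would need to be validated clinically.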

Conclusion and Future Directions

The CGN_ICA is designed to be an easy-to-use, versatile and practical measurement tool for studies of dementias and other conditions that affect cognitive function, as it allows simple, sensitive and repeatable collection of an overall score of a subject’s cognitive ability. The CGN_ICA platform is being further developed to employ artificial intelligence (AI) to improve its predictive power, utilizing patterns in participants’ reaction times. The AI platform will allow for accurate classification of participants as cognitively healthy or cognitively impaired by comparing their CGN_ICA test profile with a large dataset of individuals with validated clinical status, from which the AI platform has “learned”. The AI engine will be able to improve its accuracy over time by learning from new data points that are incorporated into its training datasets.

Data Availability Statement

The data generated during this study are included in this published article. Commercially non-sensitive raw data can be made available upon reasonable request from the corresponding author.

References

2018 Alzheimer’s disease facts and figures. Alzheimers Dement. J. Alzheimers Assoc. 14, 367–429 (2018).

Sperling, R. A., Jack, C. R. & Aisen, P. S. Testing the Right Target and Right Drug at the Right Stage. Sci. Transl. Med. 3, 111cm33–111cm33 (2011).

Folstein, M. F., Folstein, S. E. & McHugh, P. R. “Mini-mental state”: a practical method for grading the cognitive state of patients for the clinician. J. Psychiatr. Res. 12, 189–198 (1975).

Nasreddine, Z. S. et al. The Montreal Cognitive Assessment, MoCA: a brief screening tool for mild cognitive impairment. J. Am. Geriatr. Soc. 53, 695–699 (2005).

Mioshi, E., Dawson, K., Mitchell, J., Arnold, R. & Hodges, J. R. The Addenbrooke’s Cognitive Examination Revised (ACE-R): a brief cognitive test battery for dementia screening. Int. J. Geriatr. Psychiatry 21, 1078–1085 (2006).

Pasquier, F. Early diagnosis of dementia: neuropsychology. J. Neurol. 246, 6–15 (1999).

Leifer, B. P. Early diagnosis of Alzheimer’s disease: clinical and economic benefits. J. Am. Geriatr. Soc. 51 (2003).

Prince, M., Bryce, R. & Ferri, C. World Alzheimer Report 2011: The benefits of early diagnosis and intervention. (Alzheimer’s Disease International, 2011).

Sheinerman, K. S. & Umansky, S. R. Circulating cell-free microRNA as biomarkers for screening, diagnosis and monitoring of neurodegenerative diseases and other neurologic pathologies. Front. Cell. Neurosci. 7 (2013).

Dubois, B., Padovani, A., Scheltens, P., Rossi, A. & Dell’Agnello, G. Timely diagnosis for Alzheimer’s disease: a literature review on benefits and challenges. J. Alzheimers Dis. 49, 617–631 (2016).

Katz, B. & Rimmer, S. Ophthalmologic manifestations of Alzheimer’s disease. Surv. Ophthalmol. 34, 31–43 (1989).

Mendez, M. F., Tomsak, R. L. & Remler, B. Disorders of the visual system in Alzheimer’s disease. J Clin Neuroophthalmol 10, 62–69 (1990).

Holroyd, S. & Shepherd, M. L. Alzheimer’s disease: a review for the ophthalmologist. Surv. Ophthalmol. 45, 516–524 (2001).

Jackson, G. R. & Owsley, C. Visual dysfunction, neurodegenerative diseases, and aging. Neurol. Clin. 21, 709–728 (2003).

Kirby, E., Bandelow, S. & Hogervorst, E. Visual impairment in Alzheimer’s disease: a critical review. J. Alzheimers Dis. 21, 15–34 (2010).

Valenti, D. A. Alzheimer’s disease: visual system review. Optom.-J. Am. Optom. Assoc. 81, 12–21 (2010).

Bell, M. A. & Ball, M. J. Neuritic plaques and vessels of visual cortex in aging and Alzheimer’s dementia. Neurobiol. Aging 11, 359–370 (1990).

Iseri, P. K., Altinas, Ö., Tokay, T. & Yüksel, N. Relationship between cognitive impairment and retinal morphological and visual functional abnormalities in Alzheimer disease. J. Neuroophthalmol. 26, 18–24 (2006).

Tzekov, R. & Mullan, M. Vision function abnormalities in Alzheimer disease. Surv. Ophthalmol. 59, 414–433 (2014).

Cronin-Golomb, A., Corkin, S. & Growdon, J. H. Visual dysfunction predicts cognitive deficits in Alzheimer’s disease. Optom. Vis. Sci. Off. Publ. Am. Acad. Optom. 72, 168–176 (1995).

Ferreri, F. et al. Motor cortex excitability in Alzheimer’s disease: a transcranial magnetic stimulation follow-up study. Neurosci. Lett. 492, 94–98 (2011).

Bak, T. H. Why patients with dementia need a motor examination (BMJ Publishing Group Ltd 2016).

Pirozzolo, F. J. & Hansch, E. C. Oculomotor reaction time in dementia reflects degree of cerebral dysfunction. Science 214, 349–351 (1981).

Hutton, J. T., Johnston, C. W., Shapiro, I. & Pirozzolo, F. J. Oculomotor programming disturbances in the dementia syndrome. Percept. Mot. Skills 49, 312–314 (1979).

Armstrong, R. The visual cortex in Alzheimer disease: laminar distribution of the pathological changes in visual areas V1 and V2. in Visual cortex (eds Harris, J. & Scott, J.) 99–128 (Nova science, 2012).

Paquet, C. et al. Abnormal retinal thickness in patients with mild cognitive impairment and Alzheimer’s disease. Neurosci. Lett. 420, 97–99 (2007).

Berisha, F., Feke, G. T., Trempe, C. L., McMeel, J. W. & Schepens, C. L. Retinal Abnormalities in Early Alzheimer’s Disease. Invest. Ophthalmol. Vis. Sci. 48, 2285–2289 (2007).

Lu, Y. et al. Retinal nerve fiber layer structure abnormalities in early Alzheimer’s disease: Evidence in optical coherence tomography. Neurosci. Lett. 480, 69–72 (2010).

Brewer, A. A. & Barton, B. Visual cortex in aging and Alzheimer’s disease: Changes in visual field maps and population receptive fields. Front. Psychol. 1 (2012).

Chang, L. Y. L. et al. Alzheimer’s disease in the human eye. Clinical tests that identify ocular and visual information processing deficit as biomarkers. Alzheimers Dement, https://doi.org/10.1016/j.jalz.2013.06.004 (2013).

Bak, T. H. Why patients with dementia need a motor examination. J. Neurol. Neurosurg. Psychiatry jnnp-2016-313466, https://doi.org/10.1136/jnnp-2016-313466 (2016).

Khaligh-Razavi, S.-M. & Habibi, S. System for assessing mental health disorder. UK Intellect. Prop. Off. (2013).

Mirzaei, A., Khaligh-Razavi, S.-M., Ghodrati, M., Zabbah, S. & Ebrahimpour, R. Predicting the human reaction time based on natural image statistics in a rapid categorization task. Vision Res. 81, 36–44 (2013).

Zhang, R. et al. Novel object recognition as a facile behavior test for evaluating drug effects in AβPP/PS1 Alzheimer’s disease mouse model. J. Alzheimers Dis. JAD 31, 801–812 (2012).

Mudar, R. A. et al. Effects of age on cognitive control during semantic categorization. Behav. Brain Res. 287, 285–293 (2015).

Ruiz-Rizzo, A. L. et al. Simultaneous object perception deficits are related to reduced visual processing speed in amnestic mild cognitive impairment. Neurobiol. Aging 55, 132–142 (2017).

Vanrullen, R. & Thorpe, S. J. The time course of visual processing: from early perception to decision-making. J. Cogn. Neurosci. 13, 454–461 (2001).

Bacon-Macé, N., Macé, M. J. M., Fabre-Thorpe, M. & Thorpe, S. J. The time course of visual processing: Backward masking and natural scene categorisation. Vision Res. 45, 1459–1469 (2005).

Kiani, R., Esteky, H., Mirpour, K. & Tanaka, K. Object Category Structure in Response Patterns of Neuronal Population in Monkey Inferior Temporal Cortex. J. Neurophysiol. 97, 4296–4309 (2007).

Kriegeskorte, N. et al. Matching Categorical Object Representations in Inferior Temporal Cortex of Man and Monkey. Neuron 60, 1126–1141 (2008).

Connolly, A. C. et al. The representation of biological classes in the human brain. J. Neurosci. Off. J. Soc. Neurosci. 32, 2608–2618 (2012).

Khaligh-Razavi, S.-M. & Kriegeskorte, N. Deep Supervised, but Not Unsupervised, Models May Explain IT Cortical Representation. PLoS Comput Biol 10, e1003915 (2014).

Thorpe, S. J. The Speed of Categorization in the Human Visual System. Neuron 62, 168–170 (2009).

Naselaris, T., Stansbury, D. E. & Gallant, J. L. Cortical representation of animate and inanimate objects in complex natural scenes. J. Physiol.-Paris 106, 239–249 (2012).

Khaligh-Razavi, S.-M., Henriksson, L., Kay, K. & Kriegeskorte, N. Fixed versus mixed RSA: Explaining visual representations by fixed and mixed feature sets from shallow and deep computational models. J. Math. Psychol (2016).

Liu, H., Agam, Y., Madsen, J. R. & Kreiman, G. Timing, timing, timing: fast decoding of object information from intracranial field potentials in human visual cortex. Neuron 62, 281–290 (2009).

Carlson, T., Tovar, D. A., Alink, A. & Kriegeskorte, N. Representational dynamics of object vision: The first 1000 ms. J. Vis. 13, 1 (2013).

Cichy, R. M., Pantazis, D. & Oliva, A. Resolving human object recognition in space and time. Nat. Neurosci. 17, 455–462 (2014).

Lamme, V. A. F. & Roelfsema, P. R. The distinct modes of vision offered by feedforward and recurrent processing. Trends Neurosci. 23, 571–579 (2000).

Lamme, V. A. F., Zipser, K. & Spekreijse, H. Masking Interrupts Figure-Ground Signals in V1. J. Cogn. Neurosci. 14, 1044–1053 (2002).

Breitmeyer, B. G. & Ogmen, H. Recent models and findings in visual backward masking: A comparison, review, and update. Percept. Psychophys. 62, 1572–1595 (2000).

Fahrenfort, J. J., Scholte, H. S. & Lamme, V. A. F. Masking Disrupts Reentrant Processing in Human Visual Cortex. J. Cogn. Neurosci. 19, 1488–1497 (2007).

Rajaei, K., Mohsenzadeh, Y., Ebrahimpour, R. & Khaligh-Razavi, S.-M. Beyond Core Object Recognition: Recurrent processes account for object recognition under occlusion. bioRxiv 302034 (2018).

Mathuranath, P. S., Nestor, P. J., Berrios, G. E., Rakowicz, W. & Hodges, J. R. A brief cognitive test battery to differentiate Alzheimer’s disease and frontotemporal dementia. Neurology 55, 1613–1620 (2000).

Hodges, J. R. & Larner, A. J. Addenbrooke’s Cognitive Examinations: ACE, ACE-R, ACE-III, ACEapp, and M-ACE. In Cognitive Screening Instruments 109–137 (Springer, 2017).

Smith, A. Symbol digit modalities test. (Western Psychological Services Los Angeles, CA, 1982).

Delis, D. C., Kramer, J. H., Kaplan, E. & Ober, B. A. CVLT-II: California verbal learning test: adult version. (Psychological Corporation, 2000).

Stegen, S. et al. Validity of the California Verbal Learning Test–II in multiple sclerosis. Clin. Neuropsychol. 24, 189–202 (2010).

Benedict, R. H. et al. Brief International Cognitive Assessment for MS (BICAMS): international standards for validation. BMC Neurol. 12, 55 (2012).

Benedict, R. H., Schretlen, D., Groninger, L., Dobraski, M. & Shpritz, B. Revision of the Brief Visuospatial Memory Test: Studies of normal performance, reliability, and validity. Psychol. Assess. 8, 145 (1996).

Benedict, R. H. Brief visuospatial memory test–revised: professional manual. (PAR, 1997).

Gretton, A. & Györfi, L. Nonparametric independence tests: Space partitioning and kernel approaches. In International Conference on Algorithmic Learning Theory 183–198 (Springer, 2008).

Duffy, C. J. De novo classification request for Cognivue. Cognivue submission number: DEN130033, date of de novo: June 24, 2013. 25 (2013).

Hammers, D. et al. Validity of a brief computerized cognitive screening test in dementia. J. Geriatr. Psychiatry Neurol. 25, 89–99 (2012).

Lam, B. et al. Criterion and Convergent Validity of the Montreal Cognitive Assessment with Screening and Standardized Neuropsychological Testing. J. Am. Geriatr. Soc. 61, 2181–2185 (2013).

Charvet, L. E., Shaw, M., Frontario, A., Langdon, D. & Krupp, L. B. Cognitive impairment in pediatric-onset multiple sclerosis is detected by the Brief International Cognitive Assessment for Multiple Sclerosis and computerized cognitive testing. Mult. Scler. J. 1352458517701588, https://doi.org/10.1177/1352458517701588 (2017).

Mielke, M. M. et al. Performance of the CogState computerized battery in the Mayo Clinic Study on Aging. Alzheimers Dement. J. Alzheimers Assoc, https://doi.org/10.1016/j.jalz.2015.01.008 (2015).

Yu, S. et al. Potential biomarkers relating pathological proteins, neuroinflammatory factors and free radicals in PD patients with cognitive impairment: a cross-sectional study. BMC Neurol. 14, 113 (2014).

Meng, Y. et al. A correlativity study of plasma APL1β28 and clusterin levels with MMSE/MoCA/CASI in aMCI patients. Sci. Rep. 5 (2015).

Langdon, D. W. et al. Recommendations for a brief international cognitive assessment for multiple sclerosis (BICAMS). Mult. Scler. J. 18, 891–898 (2012).

Robinson, L., Tang, E. & Taylor, J.-P. Dementia: timely diagnosis and early intervention. BMJ 350, h3029 (2015).

Nordberg, A. Dementia in 2014: Towards early diagnosis in Alzheimer disease. Nat. Rev. Neurol. 11, 69 (2015).

Pillai, J. A. & Cummings, J. L. Clinical trials in predementia stages of Alzheimer disease. Med. Clin. 97, 439–457 (2013).

Acknowledgements

We thank Mark Phillips and Giulia Paggiola for reviewing the paper prior to submission. We also thank Jonathan El-Sharkawy, Johannes Bausch, and Jean Maillard for help with developing the software needed for data acquisition. We are also grateful to Mohammad Arbabi for his help with subject recruitment.

Author information

Contributions

S.K.R. wrote the manuscript. C.K. gave comments on the manuscript. S.K.R., S.H., M.S., H.M., M.K., S.M.N., and E.S. helped with data acquisition. S.K.R., S.H., S.M.N. and C.K. helped with devising the protocol for the experiments. S.K.R., M.S., H.M. and M.K. analyzed the data.

Ethics declarations

Competing Interests

Dr. Khaligh-Razavi, Dr. Habibi, and Dr. Kalafatis serve as directors at Cognetivity Ltd. The other authors declare no competing interests.

Additional information

Publisher’s note: Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Khaligh-Razavi, SM., Habibi, S., Sadeghi, M. et al. Integrated Cognitive Assessment: Speed and Accuracy of Visual Processing as a Reliable Proxy to Cognitive Performance. Sci Rep 9, 1102 (2019). https://doi.org/10.1038/s41598-018-37709-x

DOI: https://doi.org/10.1038/s41598-018-37709-x
