Abstract

We propose a scalable synaptic circuit that realizes spike timing dependent plasticity (STDP) and is compatible with randomly spiking neurons. The feasibility of the circuit was examined by circuit simulation using the BSIM 4.6.0 model. A distinguishing feature of the circuit is the use of floating-gate integrators that provide a compact implementation of biologically plausible relaxation time scales. This relaxation occurs on the basis of charge tunneling that mainly relies upon area-independent tunnel barrier properties (e.g. barrier width and height) rather than capacitance. The circuit simulations feature (i) weight-dependent STDP that spontaneously limits the synaptic weight growth and (ii) competitive synaptic adaptation within both unsupervised and supervised frameworks with randomly spiking neurons. The estimated power consumption is merely 34 pW, perhaps meeting one of the most crucial principles (power-efficiency) of neuromorphic engineering. Finally, a means of fine-tuning the STDP behavior is provided.

Introduction

For nearly three decades, the brain and its information processing principles have been a benchmark in building artificial intelligence (AI) that enables recognition tasks by means of very-large-scale integration (VLSI) technology—often referred to as neuromorphic engineering1. Attention to this has recently been boosted with regard to remarkably growing demands for hardware-based AI systems. The early attempts mostly revolved around realizing scalable replicas of biological spiking units (neurons)2,3 and their application to front-end sensors, e.g. silicon retinas4. These early attempts were then followed by a number of spiking neuron models with different degrees of biological plausibility, complexity, and tunability5,6,7,8,9,10,11, enriching available neuron models. Essential to neuromorphic engineering for AI are memory and learning that are believed to involve synaptic weight modification in support of feature abstraction. Spike timing dependent plasticity (STDP) is a seminal learning rule that describes the causality of postsynaptic spiking in a time domain12,13,14,15. Frequently, neuromorphic engineers benchmark the STDP in view of, mostly, its capability of temporal learning (real-time learning) and compatibility with neuromorphic systems16,17,18,19,20.

The STDP relates the long-lasting change of synaptic weight w to the temporal order between pre- and postsynaptic spike times (Δt = t post − t pre); long-term potentiation (LTP) is induced when the presynaptic spike precedes the postsynaptic one, and long-term depression (LTD) in the opposite case. For convenience, the former configuration of spikes is denoted by pre-post, and the latter post-pre. Mathematically, the STDP is often simplified as

where s pre and s post are pre- and postsynaptic state variables that exponentially decay with Δt at likely different time constants (τ + and τ −), defining the degree of LTP and LTD, respectively. A + and A − define the maximum weight change. They can be either constant or reliant upon the current weight, which causes a significant difference in synaptic weight evolution through a learning period21,22,23,24.
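As a reference point, one commonly used pair-based form that is consistent with the definitions above is sketched here. It is an assumption about the general shape of the rule rather than a verbatim restatement of (1); in particular, writing s pre and s post as pure exponentials of Δt presumes a single isolated spike pair.

```latex
\[
\Delta w =
\begin{cases}
A_{+}\, s_{\mathrm{pre}} = A_{+}\exp\left(-\Delta t/\tau_{+}\right), & \Delta t > 0 \quad (\text{pre-post, LTP}),\\[4pt]
-A_{-}\, s_{\mathrm{post}} = -A_{-}\exp\left(\Delta t/\tau_{-}\right), & \Delta t < 0 \quad (\text{post-pre, LTD}).
\end{cases}
\]
```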

A typical strategy for realizing the STDP in a neuromorphic circuit is to deploy two leaky integrators for pre and postsynaptic state variables (s pre and s post) in conjunction with an analog or digital memory unit to store the evaluated synaptic weight. This general framework has been applied to various synaptic circuit designs thus far; a good review is given by Bamford et al.17. Table 1 summarizes several previous STDP circuits that are capable of real-time scale operation. Notably, the state variables are often realized by (i) leaky voltage integrators using a standalone capacitor or the gate capacitor of a transistor (switched-capacitor integrators)17,25,26, (ii) current-starved inverter27, and (iii) operational transconductance amplifier (OTA)-based integrator19,28. Scaling down a metal-oxide-semiconductor field-effect transistor (MOSFET) in the integrator (particularly channel length below 100 nm) causes a significant rise in subthreshold leakage current29,30,31, and thus a large decrease in the relaxation time of the integrator with a given capacitor. For temporal learning, the relaxation time is a priori preferred to be comparable to that of the biological counterpart in favor of energy-efficient learning, sacrificing unnecessarily fast response. In this regard, needs for higher capacitance to compensate for the subthreshold leakage—maintaining the biologically plausible relaxation time—perhaps limit further scaling down. A workaround is to adopt digital technologies as done by Vogelstein et al.32; a random access memory (RAM) was deployed to store discrete state variable values, and their updates were evaluated in a programmable manner using a microcontroller unit (MCU). Upon every spiking event, the MCU scans the entire RAM and updates the synaptic weights according to the STDP rule. As such, this digital implementation readily offers flexibility in designing the STDP model, hence can serve as a convenient platform in combination with hardware neurons. 
A possible disadvantage, however, is that the weight values in the RAM are updated serially, which is time consuming and hinders a large network with many connections (synapses) from real-time interaction with physical environments.

Another important aspect of synaptic circuit design is synaptic weight storage. Ideally, each synaptic unit has a long-lasting analog weight value in a desired range. A common strategy is to use a standalone capacitor that enables current integration and consequently outputs analog voltage17,19,28. However, it is challenging to achieve ideal weight storage because information is lost over time. The charge loss (poor retention) caused by leakage in the subthreshold MOSFET is generally a downside of this common strategy. As a workaround, long-term storage is offered by a bistability circuit that drives the capacitor voltage to one of two stable states26 or by a RAM that stores binary weight values25. Regarding the latter approach, the stored weight value is not necessarily binary. An analog-to-digital converter can be used to digitize the value that is subsequently stored in a RAM as mentioned above32, though the memory capacity restricts the precision of the weight. Floating-gate (FG) based synaptic circuits, which offer both long-lasting storage and analog-type weight representation33, may meet these requirements. A gate voltage in a floating-gate MOSFET (FG-MOSFET) controls the charge on the FG, which can be maintained in the standby state and which alters the channel conductance. Following the original proposal, the design was refined by Ramakrishnan et al.27 and Brink et al.34, offering a viable solution to VLSI synapse design. Other than these mainstream strategies, an emerging approach offers the feasible use of resistive RAM (RRAM for short, popularly referred to as a memristive device) based on novel materials as a memory bit35,36,37,38,39,40,41. RRAM exhibits nonvolatile resistive switching between multinary states (though not for all types of RRAM). Additionally, an RRAM array (particularly a passive crossbar array) is highly scalable, meeting the design rule of 4F^2 for the passive array.

In this work, we propose a VLSI-compatible synaptic circuit for spiking neural networks, which captures the pair-based STDP behavior13. This synaptic circuit was designed by adopting 65 nm CMOS technology, and its feasible operation was examined by using the BSIM 4.6.0 model42 (a built-in model in LTspice IV) with foundry parameters. The circuit employs three FG-MOSFETs whose function is two-fold: two FG-based leaky integrators realize the pre and postsynaptic state variables (s pre and s post), and an additional FG-MOSFET stores the weight value. The first two FG-MOSFETs differ in retention time from the last one; the charge on the FG in each of them is released at a biologically plausible rate, whereas the last one needs to be of long retention. For this storage FG-MOSFET, the detailed balance between charge injection into and ejection out of the FG limits the growth of synaptic weight, leaving the coefficients A + and A − in (1) dependent upon synaptic weight. Finally, we pay attention to the competitive adaptation of synaptic weight within unsupervised and supervised frameworks and to the detailed kinetics of the adaptation by phase-plane analysis. The proposed synaptic circuit appears to host such synaptic functions.

Results

FG synaptic circuit

The FG synaptic circuit is shown in Fig. 1. This circuit realizes the pre and postsynaptic state variables (s pre and s post), which are determined by spiking history. Note that only the nearest-spike interaction between pre and postsynaptic spikes is considered43. Evaluating the state variables follows two steps: (i) introducing a continuously varying function that outputs each state variable and (ii) sampling the value upon an incoming spike. The pre and postsynaptic state variables are parameterized by V s_pre and V s_post, respectively. A positive weight change in LTP is dictated by the presynaptic state variable while a negative change in LTD is dictated by the postsynaptic state variable, and thereby it is intuitive to endow V s_pre and V s_post with different polarities (here V s_pre ≤ 0 and V s_post ≥ 0). For the postsynaptic state variable, the subcircuit in Fig. 1a (leaky integrator) takes up the first task (introduction of a state variable function), and that in Fig. 1c (sampling) samples the current value only if a presynaptic spike is applied. For the presynaptic state variable, the subcircuits in Fig. 1b and d introduce a state variable function and sample the current value, respectively.

Proposed synaptic circuit. Leaky integration is realized on the FG of M2 and M11, which is incorporated into the state variable generators (a) and (b) for post and presynaptic variable, respectively. Sampling subcircuits (c) and (d) read out the current post and presynaptic variable, respectively, and relay them to (e) the storage subcircuit. This storage subcircuit converts V m to synaptic weight that is parameterized by V w.

Each leaky integrator comprises a FG-MOSFET (TJ1 + M2 and TJ4 + M11 for the post and presynaptic state variable, respectively) and voltage divider. Notably, for the postsynaptic state variable, the integrator has two stages for non-inverting voltage transfer characteristic (VTC). Each FG-MOSFET is a tunnel junction (TJ)-MOSFET stack in conjunction with an auxiliary capacitor (C1 and C3 for the post and presynaptic state variable, respectively) that is used for precisely initiating the desired charge relaxation. Each integrator piles up charge on the FG upon incident spikes in balance with charge relaxation (ejection) so that the channel conductance of the FG-MOSFET varies accordingly.

The sampling subcircuit for each variable outputs nonzero voltage only in the presence of a counter spike, i.e. sampling s pre and s post needs a post and a presynaptic spike, respectively. The nonzero output from the subcircuit reads the current state variable (V s_pre and V s_post) of different polarities and relays it to the weight storage subcircuit.

The synaptic weight is memorized on the FG of the FG-MOSFET (TJ2 + TJ3 + M10). V s_pre and V s_post are applied to TJ3 and TJ2, respectively, and charge is accordingly integrated on the FG, outputting V w in combination with the voltage divider M9. The auxiliary capacitor C2 is used as for the integrators. V w is subsequently sampled by a presynaptic spike and applied to the membrane of the postsynaptic neuron, raising the membrane potential.

Note that the circuit parameters in Tables 2 and 3 were used for the simulations unless otherwise stated. The subcircuit-wise synaptic circuit operation is fully detailed in Supplementary Information.

Plasticity induction

The STDP behavior of the proposed circuit was first identified in a time domain for two preliminary cases (causal and anti-causal cases) using the circuit parameters listed in Tables 2 and 3. The former indicates a synapse subject to a single presynaptic spike that precedes a postsynaptic spike train (pre-post), whereas the latter indicates the opposite order (post-pre), as plotted in Fig. 2a and b, respectively. The first pre and postsynaptic spikes abruptly raise the corresponding FG voltage by approximately 180 and 135 mV, respectively (Fig. 2c and d). The amplitude and width of each spike were 0.5 V and 30 μs, respectively. Note that V FG_post has a larger relaxation time than V FG_pre, which endows the STDP behavior in a timing-difference (t post − t pre) domain with a larger depression window than potentiation window as will be discussed below. V FG_pre and V FG_post were subsequently sampled by the following opposite spike trains, resulting in the state variables V s_pre and V s_post, respectively (Fig. 2e and f). The sampled values were respectively applied to the tunneling junctions TJ3 and TJ2, causing the change in V m as shown in Fig. 2g and h. Notably, only V s_pre and V s_post larger in magnitude than a certain threshold contribute to the V m change (Fig. 2g and h). This threshold is defined as the voltage enabling the injection of one elementary charge for 30 μs; no noticeable change in V m results from voltage below this threshold. Given the difference in size for TJ2 and TJ3 (Table 3), the threshold voltage also differs: 0.35 V and −0.33 V for TJ2 and TJ3, respectively. The synaptic weight V w eventually varied with V m, which captures the synaptic plasticity upon the temporal order of pre and postsynaptic spikes (Fig. 2i and j). It is noted that the causal spike order (Fig. 2a) results in an increase in V w, i.e. potentiation, while the anti-causal order results in a decrease in V w, i.e. depression.

Simulated transient voltage characteristics during plasticity induction for (a) causal and (b) anti-causal spike patterns. The transient FG voltage following the first spike for both causal and anti-causal cases is plotted in (c) and (d), respectively. Given the spike pattern, the state variable is continuously elicited as shown in (e) and (f) and consequently alters the storage FG voltage (V m) [(g) and (h)], respectively. Eventually, synaptic weight V w evolves as plotted in (i) and (j), indicating potentiation and depression, respectively.

The proposed synaptic circuit with the same circuit parameters exhibits an STDP behavior in a timing-difference domain as plotted in Fig. 3. The reference (initial) weight (V w0) was approximately 151 mV. The LTD window is wider than the LTP window; fitting the plot to (1) yields LTD and LTP time constants of approximately 76.5 and 16.8 ms, respectively. The STDP behavior can be tweaked by means of circuit parameters as detailed in Supplementary Information.

STDP behavior in a timing-difference domain. The change in synaptic weight (ΔV w) was evaluated with a single pair of pre and postsynaptic spikes (different Δt). The circles indicate the simulation data, which are fitted to the simplified mathematical formula in (1) (solid red lines). The fitting results in τ + and τ − of 16.8 and 76.5 ms, respectively. The reference (initial) synaptic weight (V w0) was 151 mV that results from a V ctrl of 0.51 V.
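The fitted window can be evaluated numerically with a short sketch. It assumes the simplified exponential pair-based form and uses the fitted time constants quoted above (16.8 and 76.5 ms); the amplitudes `a_plus` and `a_minus` are hypothetical placeholders set to 1, since the fitted amplitudes are not given in the text, and the function name is illustrative only.

```python
import math

TAU_PLUS_MS = 16.8   # fitted LTP time constant quoted in the text
TAU_MINUS_MS = 76.5  # fitted LTD time constant quoted in the text


def stdp_window(dt_ms, a_plus=1.0, a_minus=1.0):
    """Weight change for one spike pair, with dt_ms = t_post - t_pre.

    a_plus and a_minus are placeholder amplitudes (assumed, not fitted values).
    """
    if dt_ms > 0:   # pre-post order: potentiation
        return a_plus * math.exp(-dt_ms / TAU_PLUS_MS)
    if dt_ms < 0:   # post-pre order: depression
        return -a_minus * math.exp(dt_ms / TAU_MINUS_MS)
    return 0.0      # coincident spikes are left undefined by the rule; 0 is a choice


# Normalized window sampled at a few timing differences (ms)
samples = {dt: stdp_window(dt) for dt in (-100, -50, -10, 10, 50, 100)}
```

With equal placeholder amplitudes, the depression branch at −50 ms retains a much larger fraction of its peak than the potentiation branch at +50 ms, which reflects the wider LTD window.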

Weight dependence of STDP

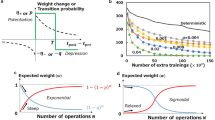

It is important to delimit synaptic weight such that the uncontrolled growth is avoided. To do so, the weight change for each pair of pre and postsynaptic spikes needs to depend upon the current weight in a way that the increase declines with the increase of the current weight. In fact, the weight dependence of STDP has been demonstrated to be of importance in its functionalities on a network scale, such as selectivity development21, temporal correlation encoding22, receptive field stability23, and synaptic weight distributions24. The proposed circuit spontaneously leads to weight-depending STDP given (i) the detailed balance governing the charge transfer into and out of the FG in the storage element (TJ2 + TJ3 + M10) and (ii) output saturation in the VTC of M9 + M10 (see Supplementary Information). Given the detailed balance of charge transfer, the more electrons the FG keeps, the more negatively the FG (V m) is charged and thus the more likely the potential configuration repels further electron injection (see Supplementary Information). Consequently, the lower V m for the moment, the more likely that a decrease in V m by the next pre-post spike pair tends to be small, relating ΔV m to the current synaptic weight. Besides, the common node (V m) for both LTP and LTD couples the weight-depending LTP and LTD such that ΔV m for LTD (i.e. ΔV m > 0) also depends upon the current V m. The lower V m (<0) for the moment, the larger ΔV m (ΔV m > 0) tends to be caused by a post-pre spike pair. Alongside the detailed balance of charge dynamics, the VTC of the storage subcircuit—outputting voltage in 0–0.5 V as addressed in Supplementary Information—underpins the weight-depending LTP, particularly, limited growth of synaptic weight below a V w of 0.5 V. Additionally, the VTC restricts V w (≥0) such that the LTD that outweighs the LTP cannot lead to negative output (V w < 0), V w = 0 instead—implying no synaptic transmission.

In support of the weight-depending STDP, ΔV w when Δt = ±1 ms was evaluated with V w (Fig. 4). As such, ΔV w significantly depends upon V w due to the aforementioned two factors that simultaneously (but relatively) contribute to the weight-dependence. The gray region in Fig. 4a indicates the weight change that is dominantly impeded by the detailed balance insomuch as the VTC in the given V w region is far from both output saturation regions (see Supplementary Information). In contrast, the VTC output saturation outweighs the detailed balance outside the gray region, resulting in ΔV w falling to zero in the vicinity of the two poles (0 and 0.5 V).

Simulated weight-depending STDP behavior of the proposed synaptic circuit. (a) Dependence of ΔV w on V w when the synaptic circuit is subject to a single pair of pre and postsynaptic spikes with Δt = ±1 ms. In the gray region, ΔV w is mostly governed by the detailed balance of charge transfer via the FG in the storage element, whereas out of the gray region the VTC of M9 + M10 mainly determines the weight dependence. (b) Schematic of bidirectionally wired neurons (N 1 and N 2) that fire correlated spikes with 1 ms Δt every 50 ms. (c) Consequent synaptic bifurcation.

This weight-depending STDP was applied to a preliminary system in which two neurons (N 1 and N 2) were bidirectionally coupled through the proposed synaptic circuit (Fig. 4b). A pair of spikes (N 1’s spike preceding N 2’s by 1 ms) was elicited every 50 ms (20 Hz). The two synapses accordingly adjusted their weight in the given circumstances as shown in Fig. 4c. The persistent stimulation clearly bifurcates the two synapses from the initial weight (ca. 151 mV) with regard to the temporal order of spikes; the synapse from N 1 to N 2 encountered spike pairs that support LTP (Δt = 1 ms) while the other underwent LTD given that Δt = −1 ms. Additionally, the synaptic weight saturation reflects the weight-depending STDP. As shown in Fig. 4a, a pair of spikes (Δt = 1 ms) causes a noticeable rise in V w as long as the current V w is below approximately 0.45 V. By all rights, this value (0.45 V) is supposed to be the maximum V w. However, the actual maximum V w is around 0.27 V. This inconsistency arises from the interaction between the postsynaptic spike in a pair and the presynaptic spike in the next pair, which meets the LTD condition (Δt = −49 ms). Given the wide time window for LTD (Fig. 3), Δt of −49 ms is sufficient for a notable decrease in V w. In this regard, the maximum V w is determined mainly by the weight dependence of LTP, but in combination with LTD caused by the wide LTD time window.
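The origin of the −49 ms interval can be checked with a small sketch of the pairing protocol for the N 1 → N 2 synapse. It assumes nearest-spike pairing and the exponential window with the fitted time constants (16.8 and 76.5 ms); the weight-dependent amplitudes are not modeled, and the function name is hypothetical.

```python
import math

TAU_PLUS_MS = 16.8    # fitted LTP time constant quoted in the text
TAU_MINUS_MS = 76.5   # fitted LTD time constant quoted in the text
PERIOD_MS = 50.0      # one spike pair every 50 ms (20 Hz)
PAIR_DT_MS = 1.0      # N1 (pre) leads N2 (post) by 1 ms


def nearest_spike_intervals(n_pairs, period_ms=PERIOD_MS, pair_dt_ms=PAIR_DT_MS):
    """Return (ltp_dts, ltd_dts), each a list of t_post - t_pre in ms."""
    pre = [k * period_ms for k in range(n_pairs)]
    post = [t + pair_dt_ms for t in pre]
    # Each postsynaptic spike pairs with the presynaptic spike just before it.
    ltp_dts = [post[k] - pre[k] for k in range(n_pairs)]
    # Each presynaptic spike (from the second pair on) pairs with the
    # postsynaptic spike of the previous pair, giving a negative interval.
    ltd_dts = [post[k - 1] - pre[k] for k in range(1, n_pairs)]
    return ltp_dts, ltd_dts


ltp_dts, ltd_dts = nearest_spike_intervals(4)
ltp_factor = math.exp(-ltp_dts[0] / TAU_PLUS_MS)   # decay factor at +1 ms
ltd_factor = math.exp(ltd_dts[0] / TAU_MINUS_MS)   # decay factor at -49 ms
```

Under these assumptions the exponential factor at −49 ms is still roughly half of its peak value, so every stimulation period contributes a non-negligible depression alongside the potentiation.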

The weight dependence, e.g. the initial synaptic weight (V w0) and the upper and lower limits of weight, can be tweaked by means of the control signal V ctrl, and thus the desired STDP behavior can readily be achieved. This issue is addressed in Supplementary Information.

Competition between synapses

The feasibility of the proposed synaptic circuit was further validated for a small network within the unsupervised and supervised learning frameworks. The test network was composed of two presynaptic (N 1 and N 2) and a single postsynaptic neuron (N 3) as shown in the inset of Fig. 5a. The presynaptic neurons were Poisson neurons that spike following a renewal process (Poisson process). The procedure for Poisson spike generation can be seen in ref.44. The postsynaptic neuron was assumed to be a point neuron and realized by employing the Stein model45 in which the following subthreshold membrane potential u m holds:

where τ m denotes the relaxation time constant of the membrane and was set to 10 ms. N u and N s mean the number of presynaptic neurons (here two) and the total number of spikes from each presynaptic neuron. The superscript indicates presynaptic neuron label such that \({V}_{{\rm{w}}}^{i}(t)\) and \({t}_{j}^{i}\) mean the synaptic weight for the presynaptic neuron (i = 1 or 2) and j th spiking time for the same presynaptic neuron, respectively. Thus, the Dirac delta function samples the synaptic weight in response to spiking, and the sampled value—multiplied by constant α (set to 0.3)—is a rise in u m upon spiking. Once the threshold for spiking (90 mV) is reached, the neuron fires a spike and subsequently resets u m to zero. The parameters in Tables 2 and 3 were used for these simulations.
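The membrane dynamics described here can be sketched as an event-driven update. The sketch assumes the subthreshold equation has the leaky-integrator form \(d{u}_{{\rm{m}}}/dt=-{u}_{{\rm{m}}}/{\tau }_{{\rm{m}}}+\alpha {\sum }_{i=1}^{{N}_{{\rm{u}}}}{\sum }_{j=1}^{{N}_{{\rm{s}}}}{V}_{{\rm{w}}}^{i}(t)\delta (t-{t}_{j}^{i})\), which is inferred from the definitions above rather than quoted. The function name and the event format are hypothetical, and weights are expressed in mV.

```python
import math

TAU_M_MS = 10.0       # membrane relaxation time constant (from the text)
ALPHA = 0.3           # scaling constant for the sampled weight (from the text)
THRESHOLD_MV = 90.0   # spiking threshold (from the text)


def simulate_stein(events, tau_m=TAU_M_MS, alpha=ALPHA, threshold=THRESHOLD_MV):
    """Event-driven leaky integration with reset.

    events: iterable of (time_ms, weight_mV) presynaptic spikes, where the
    weight is V_w sampled at the spike time. Returns postsynaptic spike times.
    """
    u = 0.0
    t_last = None
    out_spikes = []
    for t, w in sorted(events):
        if t_last is not None:
            u *= math.exp(-(t - t_last) / tau_m)   # passive decay between events
        u += alpha * w                             # jump of alpha * V_w per spike
        t_last = t
        if u >= threshold:                         # fire and reset to zero
            out_spikes.append(t)
            u = 0.0
    return out_spikes


# Two coincident inputs at the initial weight (about 151 mV) reach threshold,
# whereas a single input does not.
single = simulate_stein([(0.0, 151.0)])
paired = simulate_stein([(0.0, 151.0), (0.0, 151.0)])
```

With these parameters a single presynaptic spike at the initial weight raises u m by about 45 mV, so near-coincident inputs are needed to reach 90 mV until a weight grows to roughly 0.3 V.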

Unsupervised competition between two presynaptic neurons. (a) Synaptic evolution (adaptation) under temporal spiking configuration of two Poisson neurons (N 1 and N 2) and single postsynaptic neuron (N 3) is plotted. The schematic of the network is depicted in the inset. N 1 and N 2 fire Poisson spikes at 5 Hz, and N 3 spikes accordingly. (b) Phase plane analysis on synaptic bifurcation on the trials in (a)—indicated by different colors. The winning synapse was chosen at random. The same parameters as tabulated in Tables 2 and 3 were used.

First, we considered the synaptic weight evolution in response to uncorrelated Poisson spikes from N 1 and N 2 and the induced postsynaptic spikes from N 3 (unsupervised learning). N 1 and N 2 were assumed to spike at 5 Hz. N 3 receives the presynaptic spikes via the synaptic circuits, and the membrane potential consequently evolves. The change of V w1 (for N 1 → N 3) and V w2 (for N 2 → N 3) in these circumstances is displayed in Fig. 5a. The evolution can be divided into two phases, a simultaneous increase in weight in the first place (ca. 0–75 s) and the subsequent synaptic bifurcation. In the first phase, N 1 and N 2 together elicit spikes from N 3, and their contribution is likely equal given the same spiking rate. That is, synaptic association is dominant over competition such that both synapses are reinforced given the causal order of pre-postsynaptic spikes for both synapses. Through this phase, each synaptic weight becomes sufficiently high to evoke a postsynaptic spike without association, which is then followed by competition, implying transition to the bifurcation phase (t > 75 s). The competition takes place at random as follows; (i) in view of the high synaptic weight one of N 1 and N 2 is solely able to evoke a postsynaptic spike, (ii) the causal correlation between either N 1 or N 2 (chosen at random) and N 3 is consequently established, which reinforces the chosen synapse, and (iii) in contrast, the unchosen synapse is subject to uncorrelated pre and postsynaptic spiking. The uncorrelated spiking probabilistically makes the anti-causal effect dominant over the other in light of the larger time windows for LTD than LTP (see Fig. 3). Thus, the synapse falls behind in the competition.
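Uncorrelated presynaptic input of this kind can be generated with a minimal sketch of a homogeneous Poisson process, drawing exponentially distributed inter-spike intervals. This is one standard construction and not necessarily the procedure of ref. 44; the function name, the 100 s duration, and the seed are illustrative assumptions.

```python
import random


def poisson_spike_train(rate_hz, duration_s, rng):
    """Spike times (s) of a homogeneous Poisson process on [0, duration_s)."""
    times = []
    t = rng.expovariate(rate_hz)        # first inter-spike interval
    while t < duration_s:
        times.append(t)
        t += rng.expovariate(rate_hz)   # independent exponential intervals
    return times


rng = random.Random(0)                  # fixed seed for reproducibility
train_n1 = poisson_spike_train(5.0, 100.0, rng)   # N1 at 5 Hz
train_n2 = poisson_spike_train(5.0, 100.0, rng)   # N2 at 5 Hz, independent of N1
```

Because the two trains are drawn independently, any causal pairing with the postsynaptic neuron arises only through the synapses, as in the unsupervised setting described above.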

The weight evolution from the initial value is better visualized on the V w1 − V w2 phase plane in Fig. 5b. This phase plane analysis helps us readily predict the dynamics of V w1 and V w2 changes and important states such as null-clines and fixed points (if they exist). Each arrow on the plane denotes a vector field \((d{V}_{{\rm{w}}1}/dt)\overrightarrow{i}+(d{V}_{{\rm{w}}2}/dt)\overrightarrow{j}\) at a given (V w1, V w2) point. The vector indicates ΔV w1/Δt and ΔV w2/Δt from the current states (V w1(t), V w2(t)): ΔV w1/Δt = [V w1(t + Δt) − V w1(t)]/Δt and ΔV w2/Δt = [V w2(t + Δt) − V w2(t)]/Δt. V w1(t + Δt) and V w2(t + Δt) were statistically evaluated with (V w1(t), V w2(t)) that were subject to Poisson presynaptic spikes at 5 Hz for 2 s (Δt = 2 s). This field evaluation was repeated over all nodes on the plane, resulting in the phase plane. The datasets in Fig. 5a are re-plotted on the phase plane, where their evolutions are in good agreement with the vector fields. Notably, the phase plane is symmetric with respect to the diagonal given that N 1 and N 2 spike at the same rate (5 Hz). Thus, the opposite trajectories were observed at random with equal probability.
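The finite-difference evaluation of the vector field can be sketched as follows. The circuit simulation itself is not reproduced: `toy_step` is a hypothetical stand-in with made-up winner-take-all dynamics, and the number of trials, the grid spacing, and all names are assumptions chosen for illustration.

```python
import random


def estimate_field(simulate_step, v1, v2, dt_s=2.0, n_trials=50, seed=0):
    """Mean (dV_w1/dt, dV_w2/dt) at node (v1, v2) over repeated stochastic runs."""
    rng = random.Random(seed)
    d1 = d2 = 0.0
    for _ in range(n_trials):
        new1, new2 = simulate_step(v1, v2, dt_s, rng)
        d1 += (new1 - v1) / dt_s   # finite difference for V_w1
        d2 += (new2 - v2) / dt_s   # finite difference for V_w2
    return d1 / n_trials, d2 / n_trials


def toy_step(v1, v2, dt_s, rng):
    """Placeholder dynamics (NOT the circuit): the larger weight grows, plus noise."""
    drift = 0.01 * (v1 - v2)
    new1 = v1 + (drift + rng.gauss(0.0, 0.001)) * dt_s
    new2 = v2 + (-drift + rng.gauss(0.0, 0.001)) * dt_s
    return new1, new2


# Evaluate the field on a grid of nodes spanning 0 to 0.5 V on both axes.
nodes = [0.05 * k for k in range(11)]
field = {(a, b): estimate_field(toy_step, a, b) for a in nodes for b in nodes}
```

In an actual evaluation, `toy_step` would be replaced by a 2 s run of the synaptic circuits under Poisson input starting from the given weights.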

Following is synaptic weight evolution upon time-varying spiking rate for the same simple network. In this simulation, only one of N 1 and N 2 fired spikes within a time bin of 200 ms, and the next spiking neuron was chosen at random. The firing rate was the same for all time bins (20 Hz). Likewise, the synaptic evolution encounters two phases. Alternating input spikes between N 1 and N 2 enhance the activity of N 3 in the first place; therefore, both weight values initially slightly increase. When one presynaptic neuron takes the lead at random, the corresponding pre and postsynaptic spiking pattern establishes a strong causal correlation outweighing the other synapse, consequently reinforcing the chosen synapse. As a result, the highly probable anti-causal spike pairing for the unchosen synapse weakens the synapse. Similar to the case shown in Fig. 5b, two types of trajectories (Fig. 6b) were observed at random with equal probabilities.

Comparison between unsupervised and supervised adaptation cases. (a) Unsupervised competition in the same network as the inset in Fig. 5(a). One of N 1 and N 2 was randomly chosen every time bin (width: 200 ms), and the chosen neuron was given a spiking rate of 20 Hz. Sampled synaptic evolution trajectories are plotted on the phase plane in (b)—each color denotes each sample. The two opposite types of trajectories were observed at random at the equal probabilities. (c) Supervised adaptation by deploying a bias neuron (N sup) that spikes at 80 Hz in sync with N 1. With the aid of N sup, N 1 always wins N 2 as seen on the phase plane in (d). For N sup in sync with N 2, which makes N 2 win N 1, data are shown in (e) and (f).

We finally justified the feasible use of the proposed synaptic circuit for supervised learning. To this end, an additional presynaptic neuron (N sup) was deployed, which maintained a synaptic weight (V w_sup) of 50 mV and spiked at 50 Hz in sync with one of N 1 and N 2 (Fig. 6c and e). N sup is termed the bias. Similar to the previous simulation, the activity (20 Hz) randomly toggled between N 1 and N 2 every 200 ms. A schematic of presynaptic spiking patterns in conjunction with a spiking pattern of N sup in sync with N 1 and N 2 is depicted in Fig. 6c and e, respectively. This bias, as its name indicates, biases the vector field on the phase plane towards the side on which the weight of the out-of-sync neuron vanishes as plotted in Fig. 6d and f. Therefore, supervised learning can be achieved using the bias.

Effect of MOSFET variability on STDP

MOSFET variability is likely to be a technical issue, particularly for analog circuits. Bearing this in mind, we address the effect of such variability on STDP and the consequent selectivity evolution with regard to the robustness of the proposed synaptic circuit. MOSFET variability includes random dopant fluctuation (RDF) and line-edge roughness (LER)46,47. The former causes threshold voltage (V th) fluctuation that likely follows a normal distribution centered at the ideal V th value (for invariant dopant density) with a standard deviation σ Vt given by Pelgrom’s model48: \({\sigma }_{{V}_{{\rm{t}}}}={A}_{{\rm{RDF}}}/\sqrt{LW}\), where A RDF, L, and W denote a proportionality constant, channel length, and channel width, respectively. In this work, A RDF was set to 1.27 × 10^−9 V·m, conferring 15 mV in σ Vt on the smallest MOSFETs (60 nm × 120 nm) in line with ref.47. Additionally, LER was taken into account by allowing random variation in MOSFET channel length; the length for each MOSFET was drawn from a normal distribution with a standard deviation of \({A}_{{\rm{LER}}}/\sqrt{W}\) in which A LER was set to 1.04 × 10^−12 m^(3/2). This LER effect results in approximately 3 nm standard deviation for a channel width of 120 nm in line with ref.47.
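The two standard deviations quoted above follow directly from the stated constants, as the short sketch below shows. The sampling helper is an illustrative assumption about how one Monte Carlo instance might be drawn; the nominal threshold voltage of 0.4 V is a hypothetical placeholder, not a value from the text.

```python
import math
import random

A_RDF = 1.27e-9    # V*m, proportionality constant for RDF (from the text)
A_LER = 1.04e-12   # m^(3/2), proportionality constant for LER (from the text)


def sigma_vth(length_m, width_m):
    """Pelgrom-type standard deviation of the threshold voltage (V)."""
    return A_RDF / math.sqrt(length_m * width_m)


def sigma_length(width_m):
    """Standard deviation of the channel length due to LER (m)."""
    return A_LER / math.sqrt(width_m)


def sample_mosfet(vth_nominal, length_m, width_m, rng):
    """Draw one (V_th, L) instance; vth_nominal is a hypothetical input."""
    vth = rng.gauss(vth_nominal, sigma_vth(length_m, width_m))
    length = rng.gauss(length_m, sigma_length(width_m))
    return vth, length


s_vt = sigma_vth(60e-9, 120e-9)   # about 0.015 V for the smallest MOSFET
s_l = sigma_length(120e-9)        # about 3e-9 m for W = 120 nm
vth_i, length_i = sample_mosfet(0.4, 60e-9, 120e-9, random.Random(1))
```

Taking L = 60 nm and W = 120 nm reproduces both the 15 mV and the 3 nm figures, which suggests that 120 nm refers to the channel width.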

Given these possible causes of variability, 200 pairs of synaptic circuits were generated and subjected to STDP and selectivity evolution identification. In Fig. 7a, the 200 STDP behaviors (gray curves) are overlaid on the ideal one (red curve), which is identical to Fig. 3. Despite the variability, the spike-timing effect (LTP and LTD for Δt > 0 and Δt < 0, respectively) is validated as a whole apart from a few exceptions. Alongside this spike-timing effect, the distribution of initial synaptic weight is of concern in selectivity evolution. The probability density function (PDF) of initial weight from 200 circuits is well fitted by a normal distribution function as plotted in Fig. 7b.

Effect of MOSFET variability including RDF and LER on STDP. (a) STDP behavior for 200 Monte Carlo simulation results (gray) and no variability (red). Each behavior was obtained with a different set of MOSFET parameters. (b) Distribution of initial synaptic weight, plotted from 200 Monte Carlo runs. (c) Synaptic evolution for 200 circuits given random MOSFET mismatch. The protocol in use was identical to that in Fig. 6(e) in attempt to lead V w2 to potentiation. (d) The distribution of V w1 and V w2 at 100 s.

Eventually, selectivity evolution was identified for 200 pairs of circuits using the supervised learning scheme depicted in Fig. 6e—N sup in sync with N 2 to let V w2 win V w1. The data are plotted in Fig. 7c that reveals V w2 outweighing V w1 as a whole albeit scattered. Additionally, variation in initial synaptic weight can be ascertained in Fig. 7c. Figure 7d displays a PDF for V w1 and V w2 values at 100 s. It is noticeable that the potentiation probability of V w2 is higher than V w1 in support of the supervised learning.

Discussion

The FG-based leaky integrators in the proposed synaptic circuit alleviate the area overhead for real-time scale operation in favor of scalability10. The FG-based leaky integrator may offer an advantage over the switched-capacitor integrator for deep submicron technology where the subthreshold leakage through the short channel29,30,31 is in need of high capacitance to enable real-time scale operation. To back the scalability of the FG-based integrator (e.g. TJ1 + C1 + M2 in Fig. 1) in part, its relaxation time was evaluated for different capacitances (C FG) and barrier thicknesses (t tun) (Fig. 8). Here the FG-based integrator was subject to a single spike (0.5 V amplitude and 30 μs width). The relaxation time τ relax was defined as a time period during which the FG voltage amplitude falls below the half of its peak value. As such, τ relax is remarkably susceptible to t tun to the extent that almost two orders of magnitude change in τ relax is managed by merely 30% change in t tun while a rise in C FG by approximately one order of magnitude increases τ relax by less than two orders of magnitude (Fig. 8a). Figure 8b shows a C FG-t tun relationship for a τ relax of 0.5 s, indicating that 1.3 nm t tun needs merely 2 fF C FG for real-time scale operation.
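The definition of τ relax used above (time for the FG voltage amplitude to fall to half of its peak) can be applied to a sampled waveform with a short helper. The sketch below is generic: the function name is hypothetical, and the exponential test waveform is only a stand-in, since tunneling-driven relaxation need not be exponential.

```python
import math


def time_to_fraction(times, values, fraction=0.5):
    """Time after the peak at which |value| first falls to fraction * peak.

    Uses linear interpolation between samples; returns None if the level
    is never reached within the record.
    """
    mags = [abs(v) for v in values]
    i_peak = max(range(len(mags)), key=mags.__getitem__)
    target = fraction * mags[i_peak]
    for i in range(i_peak + 1, len(mags)):
        if mags[i] <= target:
            m0, m1 = mags[i - 1], mags[i]
            t0, t1 = times[i - 1], times[i]
            frac = (m0 - target) / (m0 - m1)   # m0 > target >= m1 here
            return t0 + frac * (t1 - t0) - times[i_peak]
    return None


# Stand-in waveform: 180 mV peak decaying exponentially with a 0.5 s constant.
ts = [k * 1e-3 for k in range(3001)]
vs = [0.18 * math.exp(-t / 0.5) for t in ts]
tau_relax = time_to_fraction(ts, vs, fraction=0.5)   # close to 0.5 * ln 2 s
```

The same helper with a different `fraction` covers other threshold-based definitions of a decay time.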

The same applies to the synaptic weight storage (TJ2 + TJ3 + C2 + M10), although the expected relaxation time is much longer. Hereafter, this relaxation time is termed the retention time. A thicker tunnel barrier is desirable for better retention; however, charge injection through a thick tunnel barrier is difficult because the tunneling probability decays exponentially with barrier thickness. To be precise, the spike (0.5 V amplitude and 30 μs width) employed throughout the simulations cannot drive tunneling through a tunnel barrier thicker than 1.8 nm. Thus, we chose 1.75 nm, which offers the retention of V m programmed at different levels as shown in Fig. 9a. The retention time τ ret was defined as the time period during which |V m| declines by 10%. τ ret depends upon the programmed V m level and is typically in the 70–100 s range (Fig. 9b). This retention is perhaps insufficient for long-term memory. However, it appears feasible for synaptic competition (bifurcation) to terminate within the retention time, as identified in Fig. 5a. In addition, if memory retention is of significance for a particular application, the weight can be stored as binary numbers25,26,32.
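The exponential dependence on barrier thickness mentioned above can be made explicit with the textbook WKB estimate for a rectangular barrier. This is an illustrative approximation only: the effective mass m* and barrier height φ_B are symbols introduced here, the field-induced tilt of the barrier is ignored, and this is not necessarily the tunneling model used in the circuit simulations.

```latex
T \;\approx\; \exp\!\left(-2\,\kappa\,t_{\mathrm{tun}}\right),
\qquad
\kappa \;=\; \frac{\sqrt{2\,m^{*}\,\phi_{B}}}{\hbar},
\qquad
\frac{T(t_{\mathrm{tun}}+\Delta t)}{T(t_{\mathrm{tun}})} \;\approx\; \exp\!\left(-2\,\kappa\,\Delta t\right)
```

In this picture, each increment Δt in barrier thickness suppresses the tunneling probability by a fixed factor, which is consistent with the trade-off between retention and ease of charge injection described above.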

(a) Time-dependent change in V m that was initially set to different values. (b) Retention time τ ret for different initial V m values. The retention time was defined as the time period during which |V m| decreases by 10%. The storage element in Fig. 1 was re-sketched in the inset.

The reliability of FG-MOSFETs is an important issue since the tunnel barrier is typically so thin that the programming voltage causes a high electric field across the barrier, which often brings on dielectric breakdown. Dielectric breakdown is often parameterized by charge-to-breakdown (QTB)49,50,51. QTB increases as the operating voltage decreases; for silicon oxide layers (<3 nm) at 2.5 V, it was shown to exceed 106 C/cm2 under constant voltage stress52,53,54. In our simulations, a single spike (0.5 V) to TJ1 and TJ4 drives <46 μC/cm2 and <4 μC/cm2, respectively. For TJ2 and TJ3, the value is below 1 μC/cm2. Therefore, the operating conditions partly support high endurance.

The observed variation in synaptic evolution due to MOSFET variability would seemingly be unacceptable in a deterministic system without error-tolerance. However, taking deep learning as a benchmark55, even a deterministic learning algorithm such as back-propagation needs to involve stochasticity at the beginning (initially random weight values) and during training (regularization) for better training56. Likewise, the stochasticity shown in the STDP behavior likely provides the network with high entropy (Shannon information) that allows a large number of representations. In line with deep neural networks, spiking neural networks may in general be error-tolerant, such that the stochastic STDP shown in Fig. 7d may be acceptable to the extent that the stochasticity does not lead to faulty results. Nevertheless, the degree of error-tolerance varies with the architecture, learning rule, neuron model, etc., which is beyond the scope of this work. Thus, we leave this question open for the time being.

Energy efficiency is an important principle of neuromorphic engineering. The proposed synaptic circuit is energy-efficient owing to the subthreshold operation of most MOSFETs in the circuit. The circuit theoretically consumes approximately 34 pW, and this power is almost identical to the standby power. Namely, the standby power consumption dominates over the synaptic operational power consumption. Akin to the STDP behavior, the power consumption is also susceptible to MOSFET variability; the PDF of power consumption follows a normal distribution centered at 34 pW with a standard deviation of approximately 12 pW. This is fairly comparable to previous reports, for instance, 60 pW in the work by Bamford et al.17 and 37 pW by Cruz-Albrecht et al.28.

The temperature resilience of the proposed synaptic circuit should also be taken into consideration. In practice, the circuit temperature varies (mostly increases) due mainly to power dissipation on the chip and/or ambient temperature change. To assess the temperature resilience, we varied the circuit temperature from 0 to 60 °C and acquired the STDP behavior at each temperature. The simulation results reveal that the STDP behavior is preserved as a whole in the given temperature range in spite of variation in detail (Fig. 10a). The detail is addressed in Supplementary Information. Further, the power consumption increases with circuit temperature, reaching approximately 86 pW at 60 °C as shown in Fig. 10b. The increase is due mainly to the subthreshold operation of the MOSFETs in the circuit: the channel current is thermally activated, so that more power is consumed at higher temperature.

Given the significantly low power operation of the synaptic circuit, the power consumption and consequent temperature increase are unlikely to be sufficiently high to heat the circuit above 60 °C even on the synaptic array level. As a benchmark, a recent central processing unit (Intel i7-6700K) under maximum load consumes approximately 100 W and its core reaches approximately 70 °C when cooled by air. Thus, we believe that the temperature of the synaptic array with air-cooling stays well below this benchmark given the extremely low power consumption of a single synaptic unit.

The estimated circuit area is approximately 40 µm2; the layout is shown in Fig. S4 in Supplementary Information. When implemented in a crossbar array, the area is reduced to 24 µm2 because the unit synaptic circuits in the same row and column can share the capacitors. Other synaptic circuit designs are nicely overviewed in ref.20. Notably, the three capacitors take up a considerable portion of the area (ca. 35%), so that the use of a high-k dielectric material in place of SiO2 is one way to reduce the circuit area.
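To put the per-synapse power and area figures quoted above on the array level, the arithmetic can be sketched as follows. This is a rough estimate under stated assumptions, not a result from the paper: the array size of one million synapses is hypothetical, power variations between synapses are assumed to be independent, and neurons, routing and peripheral circuits are ignored.

```python
import math

# Per-synapse figures taken from the text.
P_UNIT_PW = 34.0       # nominal power, pW
P_UNIT_STD_PW = 12.0   # standard deviation of power, pW
P_UNIT_60C_PW = 86.0   # power at 60 degC, pW
A_UNIT_UM2 = 24.0      # unit area in a crossbar array, um^2

def array_budget(n_synapses, p_unit_pw=P_UNIT_PW,
                 p_std_pw=P_UNIT_STD_PW, a_unit_um2=A_UNIT_UM2):
    """Return (mean power in uW, std of total power in uW, area in mm^2)."""
    mean_uw = n_synapses * p_unit_pw * 1e-6            # pW -> uW
    # Assumption: independent per-synapse variations add in quadrature.
    std_uw = math.sqrt(n_synapses) * p_std_pw * 1e-6
    area_mm2 = n_synapses * a_unit_um2 * 1e-6          # um^2 -> mm^2
    return mean_uw, std_uw, area_mm2

N = 1_000_000  # hypothetical array size (assumption)
mean_uw, std_uw, area_mm2 = array_budget(N)
hot_uw, _, _ = array_budget(N, p_unit_pw=P_UNIT_60C_PW)
print(f"{N} synapses: {mean_uw:.1f} uW nominal, {hot_uw:.1f} uW at 60 degC, "
      f"{area_mm2:.1f} mm^2")
```

Under these assumptions, a million synapses would dissipate about 34 µW nominally (about 86 µW at 60 °C) over roughly 24 mm2, which is more than six orders of magnitude below the 100 W of the CPU benchmark.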

Conclusion

We proposed a synaptic circuit for STDP, which potentially fulfills competitive synaptic adaptation (selectivity) with randomly spiking neurons at significantly low expense (area overhead and power consumption). This potential of the proposed circuit is mainly owed to the FG integrator used for the state variables and synaptic weight storage, which is expected to outperform capacitor-based integrators, particularly in the deep-submicron regime. In this study, the STDP was viewed as the reinforcement of causality of postsynaptic spiking. In this regard, the circuit simulation highlighted the spontaneous evolution of synaptic weight with regard to causality reinforcement in a random manner (unsupervised learning) and in a deliberate manner (supervised learning with the aid of bias).

References

Mead, C. Neuromorphic electronic systems. Proc. IEEE 78, 1629–1636 (1990).

Mead, C. Analog VLSI and neural systems. (Addison-Wesley, 1989).

Mahowald, M. & Douglas, R. A silicon neuron. Nature 354, 515–518 (1991).

Liu, S. Silicon retina with adaptive filtering properties. Analog Integr. Circuits Signal Process. 18, 243–254 (1999).

Asai, T., Kanazawa, Y. & Amemiya, Y. A subthreshold MOS neuron circuit based on the Volterra system. IEEE Trans. Neural Netw. 14, 1308–1312 (2003).

Wijekoon, J. & Dudek, P. Compact silicon neuron circuit with spiking and bursting behaviour. Neural Netw. 21, 524–534 (2008).

van Schaik, A. et al. A log-domain implementation of the Mihalas-Niebur neuron model. Proc. IEEE Int. Symp. Circuits Syst. (2010).

Indiveri, G. et al. Neuromorphic silicon neuron circuits. Front. Neurosci. 5 (2011).

Lim, H. et al. Relaxation oscillator-realized artificial electronic neurons, their responses, and noise. Nanoscale 8, 9629–9640 (2016).

Kornijcuk, V. et al. Leaky integrate-and-fire neuron circuit based on floating-gate integrator. Front. Neurosci. 10 (2016).

Saighi, S., Bornat, Y., Tomas, J., Le Masson, G. & Renaud, S. A library of analog operators based on the Hodgkin-Huxley formalism for the design of tunable, real-time, silicon neurons. IEEE Trans. Biomed. Circuits Syst. 5, 3–19 (2011).

Markram, H. Regulation of synaptic efficacy by coincidence of postsynaptic APs and EPSPs. Science 275, 213–215 (1997).

Bi, G. & Poo, M. Synaptic modifications in cultured hippocampal neurons: dependence on spike timing, synaptic strength, and postsynaptic cell type. J. Neurosci. 18, 10464–10472 (1998).

Sjöström, P., Turrigiano, G. & Nelson, S. Rate, timing, and cooperativity jointly determine cortical synaptic plasticity. Neuron 32, 1149–1164 (2001).

Froemke, R. & Dan, Y. Spike-timing-dependent synaptic modification induced by natural spike trains. Nature 416, 433–438 (2002).

Indiveri, G. Neuromorphic bistable VLSI synapses with spike-timing dependent plasticity. Adv. Neural Inf. Process Syst. 15, 1091–1098 (2002).

Bamford, S., Murray, A. & Willshaw, D. Spike-timing-dependent plasticity with weight dependence evoked from physical constraints. IEEE Trans. Biomed. Circuits Syst. 6, 385–398 (2012).

Tanaka, H., Morie, T. & Aihara, K. A CMOS spiking neural network circuit with symmetric/asymmetric STDP function. IEICE Trans. Fund. Electron. Commun. Comput. Sci. E92-A, 1690–1698 (2009).

Bofill-i-Petit, A. & Murray, A. Synchrony detection and amplification by silicon neurons with STDP synapses. IEEE Trans. Neural Netw. 15, 1296–1304 (2004).

Azghadi, M., Iannella, N., Al-Sarawi, S., Indiveri, G. & Abbott, D. Spike-based synaptic plasticity in silicon: design, implementation, application, and challenges. Proc. IEEE 102, 717–737 (2014).

Song, S., Miller, K. & Abbott, L. Competitive Hebbian learning through spike-timing dependent synaptic plasticity. Nature Neurosci. 3, 919–926 (2000).

Rubin, J., Lee, D. & Sompolinsky, H. Equilibrium properties of temporally asymmetric Hebbian plasticity. Phys. Rev. Lett. 86, 364–367 (2001).

Billings, G. & van Rossum, M. Memory retention and spike-timing-dependent plasticity. J. Neurophys. 101, 2775–2788 (2009).

Gutig, R., Aharonov, R., Rotter, S. & Sompolinsky, H. Learning input correlations through nonlinear temporally asymmetric Hebbian plasticity. J. Neurosci. 23, 3697–3714 (2003).

Arthur, J. & Boahen, K. Learning in silicon: timing is everything. Adv. Neural Inf. Process. Syst. 18, 75–82 (2005).

Indiveri, G., Chicca, E. & Douglas, R. A VLSI array of low-power spiking neurons and bistable synapses with spike-timing dependent plasticity. IEEE Trans. Neural Netw. 17, 211–221 (2006).

Ramakrishnan, S., Hasler, P. & Gordon, C. Floating gate synapses with spike-time-dependent plasticity. IEEE Trans. Biomed. Circuits Syst. 5, 244–252 (2011).

Cruz-Albrecht, J., Yung, M. & Srinivasa, N. Energy-efficient neuron, synapse and STDP integrated circuits. IEEE Trans. Biomed. Circuits Syst. 6, 246–256 (2012).

Roy, K., Mukhopadhyay, S. & Mahmoodi-Meimand, H. Leakage current mechanisms and leakage reduction techniques in deep-submicrometer CMOS circuits. Proc. IEEE 91, 305–327 (2003).

Rao, R., Srivastava, A., Blaauw, D. & Sylvester, D. Statistical analysis of subthreshold leakage current for VLSI circuits. IEEE Trans. Very Large Scale Integr. (VLSI) Syst. 12, 131–139 (2004).

Xue, J., Li, T., Deng, Y. & Yu, Z. Full-chip leakage analysis for 65 nm CMOS technology and beyond. VLSI Journal 43, 353–364 (2010).

Vogelstein, R. et al. Spike timing-dependent plasticity in the address domain. Adv. Neural Inf. Process Syst. 15, 1147–1154 (2003).

Hasler, P., Diorio, C., Minch, B. & Mead, C. Single transistor learning synapses. Adv. Neural Inf. Process Syst. 7, 817–824 (1995).

Brink, S., Nease, S. & Hasler, P. Computing with networks of spiking neurons on a biophysically motivated floating-gate based neuromorphic integrated circuit. Neural Netw. 45, 39–49 (2013).

Jo, S. et al. Nanoscale memristor device as synapse in neuromorphic systems. Nano Lett. 10, 1297–1301 (2010).

Jeong, D., Kim, I., Ziegler, M. & Kohlstedt, H. Towards artificial neurons and synapses: a materials point of view. RSC Adv. 3, 3169 (2013).

Kavehei, O. et al. An associative capacitive network based on nanoscale complementary resistive switches for memory-intensive computing. Nanoscale 5, 5119 (2013).

Lim, H., Kim, I., Kim, J., Seong Hwang, C. & Jeong, D. Short-term memory of TiO2-based electrochemical capacitors: empirical analysis with adoption of a sliding threshold. Nanotechnology 24, 384005 (2013).

Kornijcuk, V. et al. Multiprotocol-induced plasticity in artificial synapses. Nanoscale 6, 15151–15160 (2014).

Saïghi, S. et al. Plasticity in memristive devices for spiking neural networks. Front. Neurosci. 9, 51 (2015).

Prezioso, M., Merrikh Bayat, F., Hoskins, B., Likharev, K. & Strukov, D. Self-adaptive spike-time-dependent plasticity of metal-oxide memristors. Sci. Rep. 6, 21331 (2016).

Dunga, M. et al. BSIM4.6.0 MOSFET Model. (University of California).

Pfister, J. & Gerstner, W. Triplets of spikes in a model of spike timing-dependent plasticity. J. Neurosci. 26, 9673–9682 (2006).

Dayan, P. & Abbott, L. Theoretical Neuroscience. (MIT Press, 2014).

Stein, R. A theoretical analysis of neuronal variability. Biophys. J. 5, 173–194 (1965).

Bernstein, K. et al. High-performance CMOS variability in the 65-nm regime and beyond. IBM J. Res. Develop. 50, 433–449 (2006).

Ye, Y., Liu, F., Chen, M., Nassif, S. & Cao, Y. Statistical modeling and simulation of threshold variation under random dopant fluctuations and line-edge roughness. IEEE Trans. Very Large Scale Integr. (VLSI) Syst. 19, 987–996 (2011).

Pelgrom, M., Duinmaijer, A. & Welbers, A. Matching properties of MOS transistors. IEEE J. Solid-State Circuits 24, 1433–1439 (1989).

Rosenbaum, E., King, J. & Hu, C. Accelerated testing of SiO2 reliability. IEEE Trans. Electron Devices 43, 70–80 (1996).

Schuegraf, K. & Hu, C. Reliability of thin SiO2. Semicond. Sci. Technol. 9, 989 (1994).

Stathis, J. Physical and predictive models of ultrathin oxide reliability in CMOS devices and circuits. IEEE Trans. Device Mater. Rel. 1, 43–59 (2001).

Wann, C. & Hu, C. High endurance ultrathin tunnel oxide for dynamic memory applications. Proc. Int. Electron Devices Meeting, 867 (1995).

Stathis, J. & DiMaria, D. Reliability projection for ultrathin oxides at low voltage. IEDM Tech. Dig. 167–170 (1998).

Vogel, E. et al. Reliability of ultrathin silicon dioxide under combined substrate hot-electron and constant voltage tunneling stress. IEEE Trans. Electron Devices 47, 1183–1191 (2000).

LeCun, Y., Bengio, Y. & Hinton, G. Deep learning. Nature 521, 436–444 (2015).

Krizhevsky, A., Sutskever, I. & Hinton, G. Conference on Neural Information Processing Systems (NIPS) 25 (2012).

Acknowledgements

This work was supported by a Korea Institute of Science and Technology grant (grant no. 2E27331). This work was also supported in part by the Future Semiconductor Device Technology Development Program (10048490) funded by the Ministry of Trade, Industry & Energy and the Korea Semiconductor Research Consortium.

Author information

Contributions

D.S.J. and V.K. designed the circuit and experiments. V.K. conducted the circuit simulations and analyzed the data. H.L., V.K., and I.K. wrote the code together. J.P., W.L., J.C., and B.J.C. developed analysis tools. This manuscript was written by D.S.J. All authors contributed to the discussion.

Ethics declarations

Competing Interests

The authors declare that they have no competing interests.

Additional information

Publisher's note: Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Kornijcuk, V., Lim, H., Kim, I. et al. Scalable excitatory synaptic circuit design using floating gate based leaky integrators. Sci Rep 7, 17579 (2017). https://doi.org/10.1038/s41598-017-17889-8

DOI: https://doi.org/10.1038/s41598-017-17889-8
