Abstract

For cochlear implant users, combined electro-acoustic stimulation (EAS) significantly improves performance. However, many more users have no functional residual acoustic hearing at low frequencies. Because tactile sensation operates in the same low-frequency range (<500 Hz) as the acoustic hearing in EAS, we propose electro-tactile stimulation (ETS) to improve cochlear implant performance. In ten cochlear implant users, a tactile aid that converted voice fundamental frequency into tactile vibrations was applied to the index finger. Speech recognition in noise was compared for cochlear implants alone and for the bimodal ETS condition. On average, ETS improved speech reception thresholds by 2.2 dB over cochlear implants alone. Nine of the ten subjects showed a positive ETS effect ranging from 0.3 to 7.0 dB, which was similar to the previously reported EAS benefit. The comparable results indicate that similar neural mechanisms underlie both the ETS and EAS effects. The positive results suggest that the complementary auditory and tactile modes could also be used to enhance performance for normal-hearing listeners and automatic speech recognition for machines.

Introduction

Users of modern cochlear implants perform well in speech recognition tasks in quiet, but are limited in pitch-related tasks1,2,3. Electric pitch perception is limited by the electrode-to-nerve interface, which currently does not provide access to low-frequency spiral ganglion neurons that are located in either the core of the auditory nerve bundle or the distal side of the internal auditory canal4. For those with residual acoustic hearing at lower frequencies, electro-acoustic stimulation (EAS) is an effective approach to access these low-frequency neurons5. The EAS combination of unintelligible low-frequency acoustic hearing and electric stimulation has been shown to provide a super-additive effect that improves speech recognition in noise6,7,8,9. However, the benefits of EAS are not readily available for those without any functional low-frequency acoustic hearing. Although penetrating electrodes have been previously proposed to directly access the low-frequency cells, mismatches between the hard electrodes and the soft tissue limit their immediate clinical application10. Here we consider an alternative strategy, namely, electro-tactile stimulation (ETS), which uses tactile vibrations to provide the low-frequency acoustic information.

Historically, tactile aids have competed with cochlear implants for providing auditory rehabilitation for those with profound hearing loss11,12,13,14. Modern advances in cochlear implants have now phased out the use of tactile aids. However, there are several reasons for reconsidering tactile aids as a complementary mode to cochlear implants. First, tactile sensation is a low-frequency channel that operates in the same range (<500 Hz) as the acoustic frequencies in the EAS approach15. Second, tactile stimulation has been shown to convey some acoustic information that can benefit speech recognition, lipreading, and even word acquisition16,17,18,19. Third, it is especially interesting to note that tactile stimulation that converts voice pitch into vibration patterns improves discrimination of speech intonation contrasts20, 21, an approach that is similar to the demonstrated role of fundamental frequency in the EAS benefits22, 23.

Results

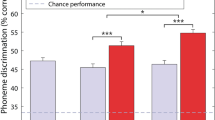

Here we extracted the fundamental frequency of speech sentences and converted it into tactile vibrations that were delivered to the index finger of ten cochlear implant users. We compared speech recognition in noise with cochlear implants alone and with the additional tactile stimulation. On average (Fig. 1A), the speech reception threshold was 13.1 dB for the cochlear implant alone condition, which was significantly worse than the 10.9 dB for the bimodal ETS condition (effect size = 2.2 dB; paired t-test, t(9) = 2.00, p < 0.05). On an individual basis (Fig. 1B), all subjects except Subject 1, who performed worse with the additional tactile stimulation (−1.2 dB), showed improved performance in the bimodal ETS condition, ranging from 0.3 dB (Subject 2) to 7.0 dB (Subject 10).
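The group comparison above rests on a paired t statistic computed from the per-subject threshold differences. The sketch below shows that arithmetic in plain Python. The function name `paired_t` and the constant names are illustrative and not taken from the study, and the individual thresholds are not reproduced here, so no data are included. The critical values are standard t-table entries for 9 degrees of freedom; the reported t(9) = 2.00 exceeds the one-tailed 5% criterion (1.833) but not the two-tailed one (2.262), so the reported p < 0.05 is consistent with a one-tailed test, although the text does not state which was used.

```python
import math

# Standard t-table critical values for df = 9 at the 5% level.
T_CRIT_ONE_TAILED_DF9 = 1.833
T_CRIT_TWO_TAILED_DF9 = 2.262


def paired_t(cond_a, cond_b):
    """Return (mean difference, t statistic, degrees of freedom).

    cond_a and cond_b hold one value per subject, in the same subject order,
    e.g. thresholds in dB for two listening conditions.
    """
    if len(cond_a) != len(cond_b) or len(cond_a) < 2:
        raise ValueError("need two equally long samples with at least 2 pairs")
    diffs = [a - b for a, b in zip(cond_a, cond_b)]
    n = len(diffs)
    mean_d = sum(diffs) / n
    # Unbiased sample variance of the paired differences.
    var_d = sum((d - mean_d) ** 2 for d in diffs) / (n - 1)
    if var_d == 0:
        raise ValueError("differences are constant; t is undefined")
    std_err = math.sqrt(var_d / n)
    return mean_d, mean_d / std_err, n - 1
```

With ten subjects, `paired_t(ci_srts, ets_srts)` would return 9 degrees of freedom, and the resulting t can be compared against the constants above.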

Figure 1. (A) Average speech reception threshold (SRT) between the cochlear implant only condition (CI: open bar) and the combined electro-tactile stimulation (ETS: filled bar). The error bars represent one standard deviation of the mean. The asterisk indicates a significant improvement of the ETS condition over the CI condition. (B) Individual enhancement in terms of the SRT difference between the CI and ETS conditions, ranked from low (Subject 1) to high (Subject 10).

Discussion

Comparison with electro-acoustic stimulation

For EAS, the amount of potential improvement is known to depend on the quality of the low-frequency hearing. Under optimal EAS conditions simulated in normal-hearing subjects, low-frequency acoustic sounds can improve speech reception threshold by 10–15 dB24. A similar effect has also been observed when using only the voice fundamental frequency23, 25. For actual EAS users, the enhancement effect was reduced to 1–5 dB26,27,28,29, likely due to impairments in residual acoustic hearing30. The present 2.2 dB ETS effect is within the range previously reported in actual EAS users.

Underlying mechanisms

The similar range of improvement for both ETS and EAS suggests the involvement of similar underlying mechanisms. First, ETS and EAS both utilize the same low-frequency range (<500 Hz). Second, compared with the auditory mode, the tactile mode produces similar intensity discrimination of 1–3 dB31 and gap detection of 10 ms32 at comfortable levels. However, tactile frequency discrimination is more than one order of magnitude worse (~20%) than the difference limen of 1% or less in acoustic hearing33, 34. In other words, tactile stimulation should only be considered a spectrally impaired channel for auditory information, with a psychophysical capacity similar to that of actual EAS users. Third, tactile information is known to integrate with auditory information throughout the auditory pathway from the cochlear nucleus to the auditory cortex35, 36. Finally, tactile stimulation affects auditory perception from sound detection and discrimination to speech recognition and even tinnitus generation37,38,39,40. These bimodal interactions are likely the neural basis underlying the present ETS effect.

Design considerations for electro-tactile stimulation

In order to provide full-spectrum information for speech recognition, previous tactile aids had over-ambitious goals41, with designs having multiple contacts and complex stimulation patterns42. In contrast, the present ETS results suggest that tactile aids should be designed with different goals when integrated with cochlear implants. Due to the limited tactile capacity and the proven fundamental frequency advantage, tactile aids only need to provide low-frequency information to convey voice pitch with matched tactile capacity. For instance, for speakers with a fundamental frequency over 200 Hz, such as some females and children, the tactile aid can transpose the fundamental frequency to a lower frequency range (e.g., <200 Hz) that is the most sensitive to touch43, while providing similar enhancement of cochlear implant performance as shown in previous EAS studies25. Alternatively, the temporal patterns of the fundamental frequency can instead be converted into spatial patterns44. Because vibrotactile and electrotactile modes have both shown similar perceptual capacity45, future studies may consider the delivery of electrotactile stimulation as an integrated tactile aid and cochlear implant option. Finally, tactile aids can be incorporated in future human and machine interface systems46,47,48.
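The transposition idea mentioned above can be sketched as a simple frequency mapping. The text does not specify how the fundamental frequency would be shifted, so the octave-down rule below is only one possible choice, and the function name `transpose_f0` and the default ceiling of 200 Hz (taken from the "<200 Hz" example in the text) are assumptions for illustration.

```python
def transpose_f0(f0_hz, ceiling_hz=200.0):
    """Shift a fundamental frequency down by whole octaves until it is below ceiling_hz.

    Octave shifting is an assumed rule for this sketch, not a method from the study.
    A value of 0 (or less) is treated as "unvoiced" and returned as 0.0.
    """
    if ceiling_hz <= 0:
        raise ValueError("ceiling_hz must be positive")
    if f0_hz <= 0:
        return 0.0
    while f0_hz >= ceiling_hz:
        f0_hz /= 2.0  # one octave down
    return f0_hz
```

Under this rule a 250-Hz voice would be presented at 125 Hz, while a 150-Hz voice would pass through unchanged. Other mappings, such as a fixed frequency ratio, would preserve the pitch contour equally well.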

Methods

Subjects

Ten cochlear implant subjects participated in this study, including 7 females and 3 males, with ages ranging from 35 to 82 years. The subjects used either a Nucleus device (Cochlear Ltd., Sydney, Australia) or a Clarion device (Advanced Bionics Corp., Valencia, CA). They had over one year of experience with their respective devices and performed well on HINT sentences in quiet (82 ± 5% correct recognition scores). The subjects had unaided air-conduction thresholds greater than 80 dB HL at octave frequencies from 125 Hz to 8000 Hz. All subjects signed an informed consent form approved by the University of California Irvine Institutional Review Board (IRB) and were paid for their participation in the study. The IRB approved the experimental protocol used in the present study, ensuring compliance with federal regulations, state laws, and university policies.

Stimuli

Figure 2 illustrates the experimental setup. A computer was used to control the stimulus generation, calibration, and delivery through custom Matlab programs and a 24-bit external USB sound card at a 44.1 kHz sampling rate (Creative Labs Inc., Milpitas, CA). Auditory stimulation was delivered via a GSI 61 audiometer and speaker (Grason-Stadler Inc., Eden Prairie, MN). The subjects were seated in a soundproof booth at a distance of 1 meter from the speaker. Stimuli were presented at each subject's most comfortable level, which ranged from 65 to 75 dB SPL across subjects.

A tactile transducer (Tactaid Model VBW32, Audiological Engineering Corp., Somerville, MA) was used to deliver tactile stimulation. The tactile transducer was powered by an amplifier (Crown Audio, Elkhart, IN) and attached to the index fingertip of the subject's non-dominant hand using electrical tape. The subjects rested their arms on a desk and were asked to place their hand palm-side up to keep the vibration intensity consistent. A 250-Hz sinusoid was used to calibrate the tactile stimulation, with the maximum output of the tactile stimulator adjusted to 2.5 volts, which served as the 0 dB reference. The most comfortable level of tactile stimulation ranged from −20 dB to −10 dB relative to the maximum output across the subjects.
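To make the level range above concrete, the following sketch converts a level in dB relative to the 2.5-volt maximum into a drive voltage. It assumes the dB values are voltage (amplitude) ratios, i.e. 20·log10(V/V_ref), which the text does not state explicitly; the names `MAX_OUTPUT_VOLTS` and `db_to_volts` are illustrative.

```python
MAX_OUTPUT_VOLTS = 2.5  # calibrated maximum output, used as the 0 dB reference


def db_to_volts(level_db, ref_volts=MAX_OUTPUT_VOLTS):
    """Convert a level in dB re ref_volts to volts.

    Assumes an amplitude ratio: level_db = 20 * log10(volts / ref_volts).
    """
    return ref_volts * 10 ** (level_db / 20.0)


# Comfortable range reported across subjects: -20 dB to -10 dB re maximum output.
comfortable_range_volts = (db_to_volts(-20.0), db_to_volts(-10.0))
```

Under this assumption, the comfortable range corresponds to roughly 0.25 to 0.79 volts, or 10% to about 32% of the maximum output amplitude.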

IEEE sentences49 were used as the target stimuli while speech-spectrum-shaped noise was used as the masker. Due to the limited bandwidth of tactile sensation15, only the fundamental frequency of the IEEE sentences was extracted and delivered to the tactile transducer. The method of fundamental frequency extraction was described previously22, 50. To deliver ETS, the unprocessed IEEE sentences were presented to the cochlear implants while the fundamental frequency was delivered simultaneously to the tactile transducer.
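The extraction method actually used is the one described in the cited references and is not reproduced here. As a rough illustration of the general idea only, the sketch below estimates the fundamental frequency of a short frame by autocorrelation peak picking and then synthesizes a sinusoid that follows the frame-wise track, which is one way a pitch contour could be turned into a vibration signal. The function names, the 80–500 Hz search range, and the convention that 0.0 marks a frame without pitch are all assumptions for this sketch.

```python
import math


def estimate_f0(frame, fs, fmin=80.0, fmax=500.0):
    """Estimate F0 (Hz) of one frame from the largest autocorrelation peak.

    Only lags corresponding to fmin..fmax are searched. Returns 0.0 when no
    positive autocorrelation peak is found (treated here as "no pitch").
    """
    lag_min = max(1, int(fs / fmax))
    lag_max = min(int(fs / fmin), len(frame) - 1)
    best_lag, best_r = 0, 0.0
    for lag in range(lag_min, lag_max + 1):
        # Unnormalized autocorrelation at this lag.
        r = sum(frame[n] * frame[n + lag] for n in range(len(frame) - lag))
        if r > best_r:
            best_lag, best_r = lag, r
    return fs / best_lag if best_lag else 0.0


def synthesize_vibration(f0_track, fs, frame_len):
    """Return a sinusoid whose frequency follows the frame-wise F0 track.

    Phase is accumulated across frames so the waveform stays continuous;
    frames with F0 = 0.0 produce silence.
    """
    phase, out = 0.0, []
    for f0 in f0_track:
        for _ in range(frame_len):
            out.append(math.sin(phase) if f0 > 0 else 0.0)
            phase += 2.0 * math.pi * f0 / fs
    return out
```

A practical system would add voicing detection and smoothing of the track; both are omitted here to keep the example short.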

Procedures

A one-down, one-up adaptive procedure was used to measure the speech reception threshold51. Speech reception threshold was defined as the signal-to-noise ratio at which the subject achieved 50% correct responses. Therefore, lower speech reception thresholds meant better performance.
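A one-down, one-up track lowers the signal-to-noise ratio after each correct response and raises it after each incorrect one, so it converges on the 50% point. The sketch below simulates such a track. The starting level, step size, number of reversals, and the rule of averaging the reversal points are assumptions for illustration, since the text gives none of these details; `respond` is a hypothetical callback standing in for the listener.

```python
def run_staircase(respond, start_snr=20.0, step_db=2.0, n_reversals=8, max_trials=200):
    """Simulate a one-down, one-up adaptive track and return the estimated SRT in dB.

    respond(snr) must return True for a correct response at that SNR.
    The estimate is the mean SNR at the reversal points (an assumed rule).
    """
    snr = start_snr
    last_direction = 0  # -1 = SNR was lowered, +1 = raised, 0 = no trial yet
    reversals = []
    for _ in range(max_trials):
        if len(reversals) >= n_reversals:
            break
        # Correct response: make the task harder; incorrect: make it easier.
        direction = -1 if respond(snr) else 1
        if last_direction != 0 and direction != last_direction:
            reversals.append(snr)  # the track changed direction at this SNR
        last_direction = direction
        snr += direction * step_db
    if not reversals:
        raise ValueError("no reversals occurred; threshold cannot be estimated")
    return sum(reversals) / len(reversals)
```

For an idealized listener who is correct whenever the SNR is at least 11 dB, the track started at 20 dB with 2-dB steps alternates between 10 and 12 dB, and the reversal average is 11 dB.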

References

Zeng, F. G., Rebscher, S., Harrison, W., Sun, X. & Feng, H. H. Cochlear Implants: System Design, Integration and Evaluation. IEEE Rev Biomed Eng. 1, 115–142, doi:10.1109/RBME.2008.2008250 (2008).

Wilson, B. S. et al. Better speech recognition with cochlear implants. Nature 352, 236–238, doi:10.1038/352236a0 (1991).

Clark, G. M. The multichannel cochlear implant for severe-to-profound hearing loss. Nat. Med. 19, 1236–1239, doi:10.1038/nm.3340 (2013).

Fayad, J. N., Don, M. & Linthicum, F. H. Jr. Distribution of low-frequency nerve fibers in the auditory nerve: Temporal bone findings and clinical implications. Otol Neurotol 27, 1074–1077, doi:10.1097/01.mao.0000235964.00109.00 (2006).

von Ilberg, C. et al. Electric-acoustic stimulation of the auditory system. New technology for severe hearing loss. ORL. J. Otorhinolaryngol. Relat. Spec. 61, 334–340, 27695 (1999).

Kong, Y. Y., Stickney, G. S. & Zeng, F. G. Speech and melody recognition in binaurally combined acoustic and electric hearing. J. Acoust. Soc. Am. 117, 1351–1361, doi:10.1121/1.1857526 (2005).

Ching, T. Y., Incerti, P. & Hill, M. Binaural benefits for adults who use hearing aids and cochlear implants in opposite ears. Ear Hear. 25, 9–21, doi:10.1097/01.AUD.0000111261.84611.C8 (2004).

Dorman, M. F., Gifford, R. H., Spahr, A. J. & McKarns, S. A. The benefits of combining acoustic and electric stimulation for the recognition of speech, voice and melodies. Audiol. Neurootol. 13, 105–112, doi:10.1159/000111782 (2008).

Gantz, B. J. & Turner, C. W. Combining acoustic and electrical hearing. Laryngoscope 113, 1726–1730, doi:10.1097/00005537-200310000-00012 (2003).

Middlebrooks, J. C. & Snyder, R. L. Auditory prosthesis with a penetrating nerve array. J Assoc Res Otolaryngol 8, 258–279, doi:10.1007/s10162-007-0070-2 (2007).

Weisenberger, J. M. & Miller, J. D. The role of tactile aids in providing information about acoustic stimuli. J. Acoust. Soc. Am. 82, 906–916, doi:10.1121/1.395289 (1987).

Levitt, H. Cochlear prostheses: L’enfant terrible of auditory rehabilitation. J. Rehabil. Res. Dev. 45, ix–xvi (2008).

Working Group on Communication Aids for the Hearing-Impaired. Speech-perception aids for hearing-impaired people: current status and needed research. J. Acoust. Soc. Am. 90, 637–683, doi:10.1121/1.402341 (1991).

Carney, A. E. et al. A comparison of speech discrimination with cochlear implants and tactile aids. J. Acoust. Soc. Am. 94, 2036–2049, doi:10.1121/1.407477 (1993).

Verrillo, R. T. Psychophysics of vibrotactile stimulation. J. Acoust. Soc. Am. 77, 225–232, doi:10.1121/1.392263 (1985).

Bernstein, L. E., Demorest, M. E., Coulter, D. C. & O’Connell, M. P. Lipreading sentences with vibrotactile vocoders: performance of normal-hearing and hearing-impaired subjects. J. Acoust. Soc. Am. 90, 2971–2984, doi:10.1121/1.401771 (1991).

Brooks, P. L., Frost, B. J., Mason, J. L. & Chung, K. Acquisition of a 250-word vocabulary through a tactile vocoder. J. Acoust. Soc. Am. 77, 1576–1579, doi:10.1121/1.392000 (1985).

Lynch, M. P., Eilers, R. E., Oller, D. K. & Lavoie, L. Speech perception by congenitally deaf subjects using an electrocutaneous vocoder. J. Rehabil. Res. Dev. 25, 41–50 (1988).

Cowan, R. S. et al. Perception of sentences, words, and speech features by profoundly hearing-impaired children using a multichannel electrotactile speech processor. J. Acoust. Soc. Am. 88, 1374–1384, doi:10.1121/1.399715 (1990).

Rothenberg, M. & Molitor, R. D. Encoding voice fundamental frequency into vibrotactile frequency. J. Acoust. Soc. Am. 66, 1029–1038, doi:10.1121/1.383322 (1979).

Hnath-Chisolm, T. & Kishon-Rabin, L. Tactile presentation of voice fundamental frequency as an aid to the perception of speech pattern contrasts. Ear Hear. 9, 329–334, doi:10.1097/00003446-198812000-00009 (1988).

Carroll, J., Tiaden, S. & Zeng, F. G. Fundamental frequency is critical to speech perception in noise in combined acoustic and electric hearing. J. Acoust. Soc. Am. 130, 2054–2062, doi:10.1121/1.3631563 (2011).

Zhang, T., Dorman, M. F. & Spahr, A. J. Information from the voice fundamental frequency (F0) region accounts for the majority of the benefit when acoustic stimulation is added to electric stimulation. Ear Hear. 31, 63–69, doi:10.1097/AUD.0b013e3181b7190c (2010).

Chang, J. E., Bai, J. Y. & Zeng, F. G. Unintelligible low-frequency sound enhances simulated cochlear-implant speech recognition in noise. IEEE Trans. Biomed. Eng. 53, 2598–2601, doi:10.1109/TBME.2006.883793 (2006).

Brown, C. A. & Bacon, S. P. Achieving electric-acoustic benefit with a modulated tone. Ear Hear. 30, 489–493, doi:10.1097/AUD.0b013e3181ab2b87 (2009).

Cullington, H. E. & Zeng, F. G. Comparison of bimodal and bilateral cochlear implant users on speech recognition with competing talker, music perception, affective prosody discrimination, and talker identification. Ear Hear. 32, 16–30, doi:10.1097/AUD.0b013e3181edfbd2 (2011).

Gifford, R. H. et al. Cochlear implantation with hearing preservation yields significant benefit for speech recognition in complex listening environments. Ear Hear. 34, 413–425, doi:10.1097/AUD.0b013e31827e8163 (2013).

Rader, T., Fastl, H. & Baumann, U. Speech perception with combined electric-acoustic stimulation and bilateral cochlear implants in a multisource noise field. Ear Hear. 34, 324–332, doi:10.1097/AUD.0b013e318272f189 (2013).

Turner, C. W., Reiss, L. A. & Gantz, B. J. Combined acoustic and electric hearing: preserving residual acoustic hearing. Hear. Res. 242, 164–171, doi:10.1016/j.heares.2007.11.008 (2008).

Gifford, R. H., Dorman, M. F. & Brown, C. A. Psychophysical properties of low-frequency hearing: implications for perceiving speech and music via electric and acoustic stimulation. Adv. Otorhinolaryngol. 67, 51–60, doi:10.1159/000262596 (2010).

Gescheider, G. A., Bolanowski, S. J. Jr., Verrillo, R. T., Arpajian, D. J. & Ryan, T. F. Vibrotactile intensity discrimination measured by three methods. J. Acoust. Soc. Am. 87, 330–338, doi:10.1121/1.399300 (1990).

Gescheider, G. A., Bolanowski, S. J. & Chatterton, S. K. Temporal gap detection in tactile channels. Somatosens. Mot. Res. 20, 239–247, doi:10.1080/08990220310001622960 (2003).

Mahns, D. A., Perkins, N. M., Sahai, V., Robinson, L. & Rowe, M. J. Vibrotactile frequency discrimination in human hairy skin. J. Neurophysiol. 95, 1442–1450, doi:10.1152/jn.00483.2005 (2006).

Wier, C. C., Jesteadt, W. & Green, D. M. Frequency discrimination as a function of frequency and sensation level. J. Acoust. Soc. Am. 61, 178–184, doi:10.1121/1.381251 (1977).

Shore, S. E. Plasticity of somatosensory inputs to the cochlear nucleus–implications for tinnitus. Hear. Res. 281, 38–46, doi:10.1016/j.heares.2011.05.001 (2011).

Kayser, C., Petkov, C. I., Augath, M. & Logothetis, N. K. Integration of touch and sound in auditory cortex. Neuron 48, 373–384, doi:10.1016/j.neuron.2005.09.018 (2005).

Ito, T., Tiede, M. & Ostry, D. J. Somatosensory function in speech perception. Proc. Natl. Acad. Sci. USA 106, 1245–1248, doi:10.1073/pnas.0810063106 (2009).

Wilson, E. C., Reed, C. M. & Braida, L. D. Integration of auditory and vibrotactile stimuli: effects of frequency. J. Acoust. Soc. Am. 127, 3044–3059, doi:10.1121/1.3365318 (2010).

Young, G. W., Murphy, D. & Weeter, J. Haptics in Music: The Effects of Vibrotactile Stimulus in Low Frequency Auditory Difference Detection Tasks. IEEE Trans Haptics (2016).

Levine, R. A. Somatic (craniocervical) tinnitus and the dorsal cochlear nucleus hypothesis. Am. J. Otolaryngol. 20, 351–362, doi:10.1016/S0196-0709(99)90074-1 (1999).

Cowan, R. S., Alcantara, J. I., Whitford, L. A., Blamey, P. J. & Clark, G. M. Speech perception studies using a multichannel electrotactile speech processor, residual hearing, and lipreading. J. Acoust. Soc. Am. 85, 2593–2607, doi:10.1121/1.397754 (1989).

Cowan, R. S. et al. Role of a multichannel electrotactile speech processor in a cochlear implant program for profoundly hearing-impaired adults. Ear Hear. 12, 39–46, doi:10.1097/00003446-199102000-00005 (1991).

Rothenberg, M., Verrillo, R. T., Zahorian, S. A., Brachman, M. L. & Bolanowski, S. J. Jr. Vibrotactile frequency for encoding a speech parameter. J. Acoust. Soc. Am. 62, 1003–1012, doi:10.1121/1.381610 (1977).

Hnath-Chisolm, T. & Medwetsky, L. Perception of frequency contours via temporal and spatial tactile transforms. Ear Hear. 9, 322–328, doi:10.1097/00003446-198812000-00008 (1988).

Blamey, P. J. et al. Perception of amplitude envelope variations of pulsatile electrotactile stimuli. J. Acoust. Soc. Am. 88, 1765–1772, doi:10.1121/1.400197 (1990).

Mroueh, Y., Marcheret, E. & Goel, V. Deep multimodal learning for audio-visual speech recognition. Proc. IEEE Conference Acoustics Speech Signal Processing (ICASSP), 2130–2134 (2015).

Feng, X., Richardson, B., Amman, S. & Glass, J. On using heterogeneous data for vehicle-based speech recognition: a DNN-based approach. Proc. IEEE Conference Acoustics Speech Signal Processing (ICASSP), 4385–4389 (2015).

Rutkowski, T. M. & Mori, H. Tactile and bone-conduction auditory brain computer interface for vision and hearing impaired users. Journal of Neuroscience Methods 244, 45–51 (2015).

Rothauser, E. H. et al. I.E.E.E. recommended practice for speech quality measurements. IEEE Trans Aud Electroacoust 17, 227–246 (1969).

Stickney, G. S., Assmann, P., Chang, J. & Zeng, F. G. Effects of cochlear implant processing and fundamental frequency on the intelligibility of competing sentences. J. Acoust. Soc. Am. 122, 1069–1078, doi:10.1121/1.2750159 (2007).

Zeng, F. G. et al. Speech recognition with amplitude and frequency modulations. Proc. Natl. Acad. Sci. USA 102, 2293–2298, doi:10.1073/pnas.0406460102 (2005).

Acknowledgements

This work was supported by NIH Grants 1R01-DC008858, 1R01-DC015587, 4P30-DC008369 (F.G.Z.) and NSF Grant of China #30670697 (J.H.).

Author information

Contributions

J.H. and F.G.Z. are responsible for all phases of the research. B.S. and P.L. helped design the experiment and edit the manuscript. F.G.Z. and J.H. wrote the manuscript.

Ethics declarations

Competing Interests

F.G.Z. is a scientific founder of Nurotron Biotechnology and SoundCure.

Additional information

Publisher's note: Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Huang, J., Sheffield, B., Lin, P. et al. Electro-Tactile Stimulation Enhances Cochlear Implant Speech Recognition in Noise. Sci Rep 7, 2196 (2017). https://doi.org/10.1038/s41598-017-02429-1

DOI: https://doi.org/10.1038/s41598-017-02429-1
