Abstract

Despite abundant accessible traffic data, research on traffic flow estimation and optimization still faces a trade-off between the level of detail and the completeness of measurements. A dataset of city-scale continuous vehicular trajectories with the finest resolution and full coverage, known as holographic traffic data, would be a breakthrough, for it could reproduce every detail of the traffic flow evolution and reveal personal mobility patterns within the city. Owing to the high coverage of Automatic Vehicle Identification (AVI) devices in Xuancheng city, we constructed one month of continuous trajectories for about 80,000 vehicles per day, with accurate intersection passing times and no travel path estimation bias. We present a set of traffic flow data resampled from the holographic trajectories, covering the whole city and including stationary average speed and flow data at 5-minute intervals and dynamic floating car data (FCD).

Measurement(s) | speed |

Technology Type(s) | Interpolation Imputation Technique |

Sample Characteristic - Location | Xuancheng City Prefecture |

Background & Summary

The hologram technology1 uses continuous media to record the optical information of objects, whose three-dimensional light field can be reproduced afterward. Analogously, in this paper, the holographic data of the traffic flow is defined as the global information of all vehicles’ dynamics, i.e., the trajectory of each vehicle in the traffic flow. The ability to reproduce accurate traffic flow on a city-wide scale has significant implications for real-world traffic control, path planning, and decision-making.

Therefore, trajectory reconstruction is essential, considering the limitations of directly observed data. The most intuitive way to obtain trajectory data might be object recognition from a high-angle camera, as in the well-known NGSIM dataset2. However, further data enhancement procedures are needed to overcome the measurement error, such as data filtering3,4 and traffic dynamics-based model calibration5. Considering the price and the installation coverage, high-angle cameras are better suited to local scenarios. In contrast, FCD has the advantage of spatial-temporal coverage and the ability to track individual trajectories, which makes it better suited to city-wide scenarios. Such FCD can be generated by various mobile sensors, such as GPS, RFID, or automated vehicles’ built-in sensors. The challenge is then to reconstruct the trajectories of the non-equipped vehicles in the traffic flow. Using the “first-in-first-out” principle at signalized intersections and traffic wave theory, one can reconstruct the trajectory of each vehicle from the partial observation of the floating cars6. With the development of connected and automated vehicles (CAV), the method can also be used in mixed traffic flow of human-driven vehicles and CAVs7,8. However, the accuracy of the reconstructed data depends on the sampling rate of the floating cars, and this rate changes during the day, which makes the data uncertain.

On the other hand, an AVI9 device captures the identity and the timestamp of each vehicle passing a specific checkpoint on the road. With the growing number of traffic cameras, AVI detectors are installed at almost every intersection in Chinese cities. One can therefore obtain timestamped location sequences of all vehicles from the widely distributed AVI detectors on the road network.

With such comprehensive identified traffic data, it is possible to generate the holographic trajectories by enriching the details of traffic flow dynamics. This paper presents a method to reconstruct vehicle trajectories from discrete series of AVI observations. Based on the reconstructed trajectories, we propose a traffic flow data sampling method that simulates the detection process from both the Eulerian and Lagrangian views of traffic flow observation, such as traffic count detection by loop detectors and real-time position detection by floating cars.

Moreover, the proposed methods are implemented in Xuancheng, China. With 97% of intersections equipped with AVI devices, the system captures almost every vehicular movement on the road network, daily producing 4 million records. In this case, Xuancheng might be known as the first city empowered with the insight of all-field round-the-clock vehicular trips. Considering the risk of personal information leaking, researchers are encouraged to collect cross-sectional aggregating data and limited vehicular trajectories through a supervised interactive virtual traffic measurement service.

Such resampled traffic data could support a variety of transportation-related research. For instance, 1) consistent multi-source detection data could be resampled from the holographic dataset for data fusion research; 2) mobility patterns could be identified from fully sampled individual trip data; 3) the optimal deployment of traffic detectors could be tested by placing custom virtual detectors on the data platform.

Methods

The AVI technology is widely used in traffic enforcement cameras to automatically identify vehicles involved in traffic violations10, saving considerable manual effort in recognizing license plates from raw images. Generally, active AVI detection identifies and records every vehicle passing the checkpoint11, even those not involved in traffic violations. Thus, each vehicle on the road network generates a trajectory made up of a series of identification records, known as license plate recognition (LPR) data12.

However, in the early days, the AVI deployment coverage and license recognition accuracy were not sufficient to obtain precise travel paths. Hence, some studies focused on macroscopic profiles of the traffic flow, such as origin-destination (OD) reconstruction13,14 and speed profile estimation15. With the significant development of dynamic AVI technology and the wide deployment of AVI cameras, it has become possible to reconstruct the closed travel chain using successive LPR records16,17. Moreover, deep learning algorithms such as graph neural networks (GNN) have been employed in recent research to reduce uncertainties in identifying vehicles18,19.

Although the above methods provide plausible solutions to trip reconstruction, path estimation errors are introduced by limited AVI coverage. The estimation accuracy mainly depends on the coverage rate, i.e., the proportion of AVI-equipped intersections in the whole road network. A higher coverage of AVI-equipped intersections implies that fewer trip paths need to be reconstructed. With the benefit of this high coverage, we can obtain promising results from simple and effective reconstruction algorithms.

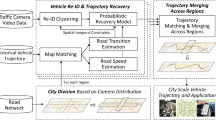

Therefore, the generic workflow for generating the holographic trajectories and the related resampled data is depicted in Fig. 1. Two main procedures (P1 & P2) turn the discrete raw LPR data into continuous trajectories through the workflow. Trip measurement turns the partial observable LPR data into segmental trip data with certain paths on a constructed full-sensing road network (FSRN). Then trajectory reconstructing interpolation is applied to each segment to form the holographic traffic flow data20. Finally, one can run virtual traffic detection (P3) on holographic trajectories and resample various traffic flow data.

Road network description

To avoid path estimation error, the trajectory reconstruction is conducted on a well-defined road network onto which the LPR data are mapped. This paper describes the physical road network (PRN) as a directed graph, denoted as \({G}^{\ast }({N}^{\ast },{S}^{\ast })\). The other related notation is listed in Table 2. To guarantee no path estimation bias, there should be at most one trip path for any series of LPR records, i.e., m(as, t) ∈ {0, 1}. Let \({N}^{A}\) be the set of the AVI-equipped intersections. It is clear that \({N}^{A}\subseteq {N}^{\ast }\). In the ideal circumstance that \({N}^{A}={N}^{\ast }\), all the trip paths on the physical network can be observed.

When \({N}^{A}\subset {N}^{\ast }\), it is still possible to capture all of the trips, as long as the following full-sensing condition is satisfied.

Definition 0.1 (Full-sensing road network (FSRN)) A full-sensing road network (FSRN) is a road network graph in which, among all the paths between any two different AVI-equipped intersections, there is no more than one path through non-AVI-equipped intersections.

It guarantees that the path between two consecutive LPR records is determined. Details of the full-sensing theorem are in Appendix A. This theorem demonstrates that it is unnecessary to deploy an AVI detector on each intersection to get the full-sensing condition.
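The full-sensing condition can be checked mechanically on a small graph. The sketch below is one possible reading of Definition 0.1, not the authors' implementation: for every ordered pair of AVI-equipped nodes it counts the simple paths whose interior nodes are all non-AVI-equipped, and it requires that count to be at most one. The function names, the adjacency-dictionary layout, and the toy graph are assumptions made for illustration; the toy graph is not the network of Fig. 2.

```python
from itertools import permutations


def count_unobserved_paths(adj, avi, src, dst):
    """Count simple paths src -> dst whose interior nodes are all non-AVI.

    Direct edges (no interior node) are not counted, which is an
    assumption about how Definition 0.1 should be read.
    """
    count = 0
    stack = [(src, {src})]
    while stack:
        node, visited = stack.pop()
        for nxt in adj.get(node, ()):
            if nxt == dst:
                if node != src:          # path has at least one interior node
                    count += 1
            elif nxt not in avi and nxt not in visited:
                stack.append((nxt, visited | {nxt}))
    return count


def is_full_sensing(adj, avi):
    """True if every ordered AVI pair has at most one unobserved path."""
    return all(count_unobserved_paths(adj, avi, a, b) <= 1
               for a, b in permutations(avi, 2))


# Hypothetical toy network: E and G are not AVI-equipped.
avi_nodes = {"B", "C", "D"}
network = {"B": ["E", "C"], "E": ["D"], "C": ["D"]}
ambiguous = {"B": ["E", "G", "C"], "E": ["D"], "G": ["D"], "C": ["D"]}

print(is_full_sensing(network, avi_nodes))    # one hidden path B-E-D
print(is_full_sensing(ambiguous, avi_nodes))  # two hidden paths B-E-D and B-G-D
```

In the second graph the records at B and D alone cannot tell B-E-D from B-G-D, which is exactly the ambiguity the full-sensing condition rules out.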

Let an LPR record be \({{\boldsymbol{a}}}_{I}=({t}_{I},I)\), containing the timestamp and the indicator of a vehicle passing Node I. Then the record of the trip \({r}_{I,J}\) consists of a series of spatial-temporal locations, i.e., \({{\boldsymbol{a}}}_{I,J}=\{{{\boldsymbol{a}}}_{I},{{\boldsymbol{a}}}_{A},...,{{\boldsymbol{a}}}_{J}\}\). For example, for the consecutive LPR records \(\{{{\boldsymbol{a}}}_{B},{{\boldsymbol{a}}}_{D}\}\) in Fig. 2, the path \({r}_{B,D}=\{B,E,D\}\) can be determined despite the missing detection.

Generally speaking, if the PRN fails the full-sensing condition, the challenge is to construct an FSRN according to the locations of AVI-equipped intersections. The idea is to extract an FSRN from the physical network by eliminating some road segments and intersections. A trip on the PRN is then divided into on-FSRN parts and off-FSRN parts. For instance, a trip \({r}_{A,I}=\{A,B,J,K,F,I\}\) in Fig. 2 would be divided into \({r}_{A,B}=\{A,B\}\), \({r}_{B,F}=\{B,J,K,F\}\), and \({r}_{F,I}=\{F,I\}\), where \({r}_{B,F}\) is the off-FSRN part. Furthermore, the closed traffic zone is constructed to keep the off-FSRN parts within a particular area.

Definition 0.2 (Closed traffic zone) A closed traffic zone is an area bounded by FSRN road segments, and for any non-FSRN segments in the zone, their connected segments are also within the zone area.

In this way, a trip on the physical road network might be represented by several parts on FSRN separated by staying or mobility within the traffic zones. The trip \({r}_{A,I}\) mentioned above could be represented by inter-zone movements \({r}_{A,B}\), \({r}_{F,I}\), and inner-zone activity \({r}_{B,F}\). Related details can be found in Appendix B.

To obtain vehicular movements at as high a resolution as possible from a road network with fixed AVI locations, the challenge is to minimize the area of the traffic zones by constructing a suitable sensing network under the constraint of the full-sensing criterion. Additionally, the more intersections are equipped with AVI, the more closely the FSRN resembles the PRN and the more detailed the captured activities, i.e., \({N}^{A}\to {N}^{\ast }\), \(FSRN\to PRN\). The current sensing network of Xuancheng city is shown in Fig. 3. In Xuancheng city, the AVI installation rate among the intersections is 97%.

Despite such an almost ideal trip observation in Xuancheng, trajectory reconstruction remains a problem of interpreting the observed passing times at the upstream and downstream ends of a road segment. For trajectories, the turning direction at each intersection can be easily inferred from downstream LPR records, while the exact lane can hardly be recognized. Consider a series of AVI records from the network in Fig. 2, \({{\boldsymbol{a}}}_{A,F}=\{{{\boldsymbol{a}}}_{A},{{\boldsymbol{a}}}_{B},{{\boldsymbol{a}}}_{C},...,{{\boldsymbol{a}}}_{F}\}\). We can infer that the vehicle passed straight through Intersection B because \({s}_{A,B}\) and \({s}_{B,C}\) are in the same direction. Similarly, the vehicle was most likely in the right-turning stream when passing Intersection C. The lane-level information, unfortunately, lacks confidence because of complicated circumstances such as shared left-turn and through lanes and occasional detection errors by the AVI cameras. Thus, the traffic flow dynamics are described by the turning stream at each intersection, rather than by different lanes on the road segments21. For vehicular dynamics within the road segment \({s}_{I,J}\) of the trip, the trajectory \(x(t)\) between \({{\boldsymbol{a}}}_{I}\) and \({{\boldsymbol{a}}}_{J}\) can be calculated as follows:

Since traffic flow dynamics are adapted to the stream level, a vehicular location on the FSRN at time t contains the linear reference of the road-segment upstream end and the turning direction. A direction is described as u(t), representing the traffic stream from the current segment to the next segment of a trip. u(t) during the trip from I to O is described as follows,

where \(\{I,A,B\}\) denotes the direction from segment \({s}_{I,A}\) to segment \({s}_{A,B}\) during \(({t}_{I},{t}_{A})\). Note that the last observed segment is \({s}_{\ast ,O}\). The turning direction \(\{\ast ,O,P\}\) is inferred from the traffic stream, and the trajectory on the downstream segment \({s}_{O,P}\) is beyond the scope of reconstruction.

To sum up, for an observation \({{\boldsymbol{a}}}_{I,O}\) on the FSRN, there is a determined trip path \(\left({r}_{I,O}\right)\), even where I and O are not adjacent. One can therefore infer the segment-level location of the vehicle, denoted as s(t).

Thus, the network-wide stream-level continuous trajectory is represented as \(\{s(t),u(t),x(t)\}\), while the segment-level trajectory is represented by s(t). For instance, assume that each segment in Fig. 2 is 400 m long and that a vehicle moves at 20 m/s on the path {B, C, F}. Then the trajectory records with a 10-second time step are shown in Table 1. Note that at t = 20 s, the vehicle is at intersection C, which is both the downstream end of BC (x = 400) and the upstream end of CF (x = 0).
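The worked example above can be reproduced with a few lines of code. This is a minimal sketch under the stated assumptions (constant speed, equal segment lengths); the function name and the row layout `(t, segment, x)` are chosen here for illustration and do not claim to match the column layout of Table 1.

```python
def trajectory_records(path, seg_len, speed, step):
    """Return (t, segment, x) rows for a vehicle moving at constant speed.

    x is the distance in metres from the upstream end of the segment.
    At an interior intersection the vehicle is reported twice: at the
    downstream end of the previous segment and at the upstream end of
    the next one.
    """
    segments = [path[i] + path[i + 1] for i in range(len(path) - 1)]
    total = seg_len * len(segments)
    rows = []
    t = 0
    while speed * t <= total:
        dist = speed * t
        idx = min(dist // seg_len, len(segments) - 1)
        x = dist - idx * seg_len
        if x == 0 and idx > 0:
            rows.append((t, segments[idx - 1], seg_len))
        rows.append((t, segments[idx], x))
        t += step
    return rows


# Path {B, C, F}, 400 m segments, 20 m/s, 10 s time step.
for row in trajectory_records(["B", "C", "F"], seg_len=400, speed=20, step=10):
    print(row)
```

At t = 20 s the sketch emits both `(20, 'BC', 400)` and `(20, 'CF', 0)`, matching the note about intersection C.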

Trip measurement

As shown in the workflow (Fig. 1), a trip dividing algorithm is required to obtain trip-based spatial-temporal series. The basic procedure is to determine whether two consecutive records belong to the same trip. This paper uses the travel time of a vehicle passing two consecutive AVI-equipped intersections \({n}_{i}\) and \({n}_{i+1}\) as a spatial-temporal accessibility criterion. Here the index of the intersection indicates its position in the trip sequence.

where \({l}_{i,i+1}\) is the length of segment \({s}_{{n}_{i},{n}_{i+1}}\), \({v}_{min}\) is the minimal travel speed, and \({t}_{{n}_{i}}\) is the passing time of record \({{\boldsymbol{a}}}_{{n}_{i}}\). \(H=1\) indicates that records \({{\boldsymbol{a}}}_{{n}_{i}}\) and \({{\boldsymbol{a}}}_{{n}_{i+1}}\) belong to one trip, while \(H=0\) means at least one staying behavior between \({{\boldsymbol{a}}}_{{n}_{i}}\) and \({{\boldsymbol{a}}}_{{n}_{i+1}}\).
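As a hedged illustration of the accessibility criterion, the sketch below assumes that \(H=1\) when the observed travel time \({t}_{{n}_{i+1}}-{t}_{{n}_{i}}\) does not exceed \({l}_{i,i+1}/{v}_{min}\), and \(H=0\) otherwise. This form is inferred from the symbol definitions above; the function name and the numerical values are hypothetical.

```python
def same_trip(t_up, t_down, length_m, v_min):
    """Return H: 1 if the two records can belong to one trip, else 0.

    Assumed criterion: the travel time between the two records must be
    non-negative and no longer than length_m / v_min.
    """
    travel_time = t_down - t_up
    return 1 if 0 <= travel_time <= length_m / v_min else 0


# Hypothetical values: a 400 m segment and a minimal travel speed of 2 m/s,
# so the travel time threshold is 200 s.
print(same_trip(t_up=0, t_down=90, length_m=400, v_min=2.0))    # within threshold
print(same_trip(t_up=0, t_down=1800, length_m=400, v_min=2.0))  # vehicle stayed somewhere
```

Splitting a vehicle's record sequence wherever H = 0 yields the trip-based series used in the following steps.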

It is common to reconstruct vehicular trajectories at signalized intersections using traffic wave theory. These studies5,6,7,8 assume that the time at which a vehicle passes the intersection is observable. However, as shown in Fig. 2, not every passing point in trip r can be recorded by AVI detectors, as in \({r}_{B,D}=\{B,E,D\}\). In other words, the observation may be a subset of the trip records, i.e., \({{\boldsymbol{a}}}^{o}=\{{{\boldsymbol{a}}}_{{n}_{i}}| {n}_{i}\in {N}^{A}\},{{\boldsymbol{a}}}^{o}\subseteq {\boldsymbol{a}}\).

When a trip path contains non-AVI-equipped intersections, the following algorithm is introduced to infer the possible passing times. It considers the non-AVI passing points and the accessibility criterion in Eq. 4 (details in Appendix C). The idea is that one can use Eq. 4 to judge accessibility on segment \({s}_{{n}_{k},{n}_{k+1}}\) between the green light phases \({\tau }_{k}=[{g}_{k}^{start},{g}_{k}^{end}]\) and \({\tau }_{k+1}=[{g}_{k+1}^{start},{g}_{k+1}^{end}]\).

For trip \(r=\{{n}_{i},{n}_{i+1},\ldots ,{n}_{k},\ldots ,{n}_{j}\}\), we can iteratively search the accessible downstream green light phases into a set \({T}_{k}\), as depicted in Fig. 4a. The downstream search runs for \(i+1\le k\le j\) and generates the potential passing graph \({P}_{i,j}(T,E)\), in which each edge connects two consecutive passing phases. Among the accessible phase nodes in layer \({T}_{j}\), we pick the candidates \({T}_{j}^{\ast }\) whose phase contains the observed passing time, i.e., \({t}_{j}\in [{g}_{j}^{start},{g}_{j}^{end}]\) (black dots in Fig. 4b). The other phase nodes and their connecting edges (dotted lines in Fig. 4b) are then removed from the graph as follows.

By updating candidates of phases and edges from the downstream end to the upstream end, we can trim the graph into an accessible passing graph \({P}_{i,j}^{\ast }\left(T,E\right)\) for the path from node ni to nj. Then the passing moments could be determined with the speed-density information given by the leading and following vehicles, as mentioned in Appendix D.
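The forward search and backward trimming can be sketched as follows. This is an illustrative simplification, not the algorithm of Appendix C: each layer holds the green phases of one intersection, a whole phase is treated as a possible release interval, and accessibility is tested with a travel time window bounded by `v_min` and by a hypothetical `v_max` that is added here as an assumption. All names and signal timings are invented for the example.

```python
def accessible(tau_a, tau_b, length, v_min, v_max):
    """Can a vehicle released during tau_a pass during tau_b?"""
    earliest = tau_a[0] + length / v_max   # fastest arrival
    latest = tau_a[1] + length / v_min     # slowest arrival
    return tau_b[0] <= latest and tau_b[1] >= earliest


def passing_graph(t_i, t_j, greens, lengths, v_min, v_max):
    """Build the potential passing graph, then trim it backward.

    greens[k] lists the green phases (start, end) of the k-th downstream
    intersection; lengths[k] is the segment length leading to it.
    Returns (kept phase indices per layer, kept edges per segment).
    """
    layers = [[(t_i, t_i)]] + greens       # layer 0 is the upstream record
    reach = [{0}]
    edges = []
    for k in range(len(layers) - 1):       # forward search
        layer_edges = {
            (a, b)
            for a in reach[k]
            for b in range(len(layers[k + 1]))
            if accessible(layers[k][a], layers[k + 1][b],
                          lengths[k], v_min, v_max)
        }
        edges.append(layer_edges)
        reach.append({b for _, b in layer_edges})
    # Candidates in the last layer must contain the observed time t_j.
    keep = {b for b in reach[-1]
            if layers[-1][b][0] <= t_j <= layers[-1][b][1]}
    kept = [keep]
    for k in range(len(edges) - 1, -1, -1):  # backward trimming
        edges[k] = {(a, b) for a, b in edges[k] if b in keep}
        keep = {a for a, _ in edges[k]}
        kept.insert(0, keep)
    return kept, edges


# Hypothetical trip B -> E -> D with a record at B (t = 0 s) and D (t = 50 s).
greens = [
    [(10, 40), (70, 100), (130, 160)],   # green phases at E (not AVI-equipped)
    [(30, 60), (90, 120), (150, 180)],   # green phases at D
]
kept, edges = passing_graph(0, 50, greens, [400, 400], v_min=5.0, v_max=20.0)
print(kept)   # phase indices that survive in each layer
print(edges)  # surviving edges between consecutive layers
```

An empty result in every layer corresponds to the disconnected case, in which no sequence of green phases is consistent with the two observed passing times.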

Note that AVI detectors might fail to recognize a small portion of the passing vehicles because of poor visual conditions. For instance, assuming a missing observation \({{\boldsymbol{a}}}_{A}\) on trip \({\boldsymbol{a}}=[{{\boldsymbol{a}}}_{B},{{\boldsymbol{a}}}_{A},{{\boldsymbol{a}}}_{D}]\) in Fig. 2, the passing-time inference algorithm would be applied to path \([{n}_{B},{n}_{E},{n}_{D}]\), since it is the only path between B and D without any AVI-equipped intersections. If the signals at E did not fit, the situation would cause a trip chain disconnection \(\left({P}_{i,j}^{\ast }=(\phi ,\phi )\right)\). Otherwise, it would be a false match. Therefore, the accuracy of AVI detection is important to the trip measurement.

Vehicular trajectories reconstruction

A traffic stream consists of the vehicles making the same turning movement on a road segment. The dynamics within a stream can be described as stop-and-go waves caused by the signal cycles at the downstream end.

A demonstration of vehicular trajectories in the traffic stream is shown in Fig. 5. The green and red bars at \(x={x}_{j}\) represent the green and red phases in the signal cycles. Furthermore, the wave speed is determined by the queuing state and the releasing state of the traffic flow, i.e.,

where qm is the capacity, km is the density under capacity, and kj is the jammed density. In order to calculate vehicular trajectories in Eq. 1, such as the 5 vehicles in Fig. 5, the solution of v(t) is formulated as a piecewise function.
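For orientation, the sketch below computes a wave speed from the three quantities just defined. It assumes the standard kinematic-wave relation, in which the speed of the interface between the queuing state (density \({k}_{j}\), zero flow) and the releasing state (density \({k}_{m}\), flow \({q}_{m}\)) is the ratio of the flow difference to the density difference. Whether this matches the paper's exact formulation is an assumption, and the numerical values are hypothetical.

```python
def queue_discharge_wave_speed(q_m, k_m, k_j):
    """Wave speed between the jammed state (k_j, 0) and capacity state (k_m, q_m).

    Units: q_m in veh/h, k_m and k_j in veh/km, result in km/h.
    A negative value means the wave travels upstream.
    """
    return (q_m - 0.0) / (k_m - k_j)


# Hypothetical single-lane values.
w = queue_discharge_wave_speed(q_m=1800.0, k_m=30.0, k_j=150.0)
print(w)  # -15.0 km/h, i.e. the discharge wave moves upstream
```

The negative sign reflects that the start-up wave propagates backward through the queue after the signal turns green.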

To obtain the solutions, a backward trajectory reconstruction procedure is proposed for each passing vehicle, calculating from the downstream to the upstream of the traffic flow. Hence, the reconstruction begins at the last signal period and iterates over the signal cycles. In other words, v(t) is calculated from \({v}_{j}\) to \({v}_{1}\). Each iteration starts with the observations of the vehicles passing in the current period and those remaining from the previous iteration, and results in new reconstruction states for these vehicles. For instance, Iteration 2 in Fig. 5 contains the remaining vehicles (veh3, 4) and the passing vehicle(s) (veh2). At the end of the iteration, veh4’s trajectory has been constructed, while the trajectories of (veh2, 3) remain unfinished and are passed to Iteration 3. The key is to distinguish queued vehicles from non-queued ones. We can then complete the trajectories of the non-queued vehicles, leaving the queued ones to the subsequent iterations. Details of the reconstruction method are in Appendix 4.

Virtual traffic flow detection

With the fully reconstructed trajectories, the holograph of city-scale mobility can be acquired. Note that such a high-resolution individual mobility dataset carries a high risk of personal information being abused. Thus, direct access to the generated raw trajectories is restricted. As an alternative, numerical traffic flow detection is applied. In reality, traffic flow can be observed from both Eulerian and Lagrangian perspectives. Analogously, the reconstructed dataset supports both cross-sectional and vehicular detection.

Numerical stationary detection

For stationary observation, traditional loop data can be simulated by counting the points where the trajectory curves cross the horizontal line at the loop location (blue dashed line in Fig. 6). Moreover, the occupancy and velocity can be measured according to the loop’s length. Additionally, segmental measurement can be employed, which detects the instantaneous density (on the orange line) and the space-mean speed (in the orange frame) of the traffic flow, as marked by the orange dashed line in Fig. 6. A missing rate is introduced in the loop data resampling process to simulate systematic detection error under realistic circumstances. Each vehicle count is treated as a Bernoulli trial with the missing rate as the probability of failure. By changing the aggregation interval of the loop detectors, we can observe different characteristics of the evolving traffic flow. Under a short interval, the short-term characteristics of the traffic flow appear, showing dynamic state-changing phenomena. In contrast, under a long interval, the detected flow-density states are more concentrated, revealing the equilibrium of the traffic flow.
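A virtual loop detector of this kind can be sketched in a few lines. The code below is an illustration under simplifying assumptions, not the released resampling software: each trajectory is a list of `(t, x)` points on one segment, crossings are found by linear interpolation, and each crossing is dropped with probability `missing_rate`. All names and sample trajectories are hypothetical.

```python
import random


def crossing_time(traj, position):
    """Time at which a piecewise-linear trajectory crosses `position`, or None."""
    for (t0, x0), (t1, x1) in zip(traj, traj[1:]):
        if x0 < position <= x1:
            return t0 + (position - x0) * (t1 - t0) / (x1 - x0)
    return None


def virtual_loop_counts(trajs, position, interval, missing_rate=0.0, seed=0):
    """Count crossings per aggregation interval with Bernoulli missed detections."""
    rng = random.Random(seed)
    counts = {}
    for traj in trajs:
        t = crossing_time(traj, position)
        if t is None:
            continue
        if rng.random() < missing_rate:    # detection fails for this vehicle
            continue
        bin_start = int(t // interval) * interval
        counts[bin_start] = counts.get(bin_start, 0) + 1
    return counts


# Three hypothetical vehicles on a 400 m segment; loop at x = 200 m,
# aggregation interval of 300 s (5 minutes).
trajs = [
    [(0, 0), (20, 400)],
    [(100, 0), (140, 400)],
    [(310, 0), (330, 400)],
]
print(virtual_loop_counts(trajs, position=200, interval=300))
print(virtual_loop_counts(trajs, position=200, interval=300, missing_rate=0.1))
```

Changing `interval` while keeping the trajectories fixed is one way to compare short-term and long-term aggregation on identical underlying traffic.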

Virtual floating car detection

The sample rate controls the penetration of the resampled vehicular trajectories, which correspond to the red trajectories in Fig. 6. To balance data utility and personal privacy protection, only the trajectories of commercial vehicles are included in the dataset. The proportion of commercial vehicles is about 4.5% to 7%, depending on the time. Moreover, all license numbers are replaced with unique and irreversible hash codes.
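The text does not state which hash function is used for the license numbers. As one possible approach, the sketch below uses a keyed hash (HMAC-SHA-256 from the Python standard library). The secret key, the function name, and the sample plate strings are all hypothetical.

```python
import hashlib
import hmac


def anonymize_plate(plate, secret_key):
    """Map a license number to a stable pseudonymous ID.

    A keyed hash is assumed here because plate numbers come from a small,
    enumerable space, so an unkeyed hash could be reversed by brute force.
    """
    return hmac.new(secret_key, plate.encode("utf-8"), hashlib.sha256).hexdigest()


key = b"replace-with-a-private-random-key"   # hypothetical secret
id_a = anonymize_plate("PLATE-0001", key)
id_b = anonymize_plate("PLATE-0002", key)
print(id_a)
print(id_a == anonymize_plate("PLATE-0001", key))  # same plate, same ID
print(id_a == id_b)                                # different plates differ
```

The same plate always maps to the same ID, so trajectories stay linkable across records without exposing the plate itself.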

Data Records

We provide three types of data to support different research interests:

- Short-term anonymized original LPR data
- Long-term encrypted reconstructed holographic trajectory data
- Long-term resampled traffic data, including loop data and FCD based on the holographic trajectories

All of the data are available at the Figshare22 repository.

We limit the original LPR data because of the risk of personal information leaking, even if the data are anonymized. With the travel characteristics revealed in long-term holographic trajectories, one could still identify individuals using additional data, such as parking lot data. Hence, it is necessary to encrypt the trajectory data.

However, the long-term resampled traffic data could be used as the primary support for the related research, which could meet most of the needs. For supplemental use, others can customize their detectors’ settings and implement virtual traffic flow detection using the attached resampling software and the encrypted holographic trajectories. To those interested in the reconstruction method, the short-term anonymized original LPR data could be used for validation. Details of the three types of data are described as follows.

1) The city-scale loop data and FCD are the one-month resampling results of the Xuancheng holographic data for Sept. 2020. The link-based graph describing the road network is given in Table 3, including all 578 road segments of the city. The loop dataset provides the 5-minute aggregated flow-speed data, as shown in Table 4. The FCD, described in Table 5, includes the trajectories of 500 commercial vehicles sampled every 10 seconds. Their unique IDs can be found in the data repositories.

Table 3 Road network data attributes.

Table 4 Loop data attributes.

Table 5 FCD attributes.

2) The encrypted holographic trajectories cannot be accessed directly; however, one can obtain customized results by using the attached resampling software. The usage is described in the Usage Notes, and the source code of the software is available (see Code Availability).

3) The short-term original LPR data for reconstruction validation are described in Table 6, while the source code of the reconstruction can be found in Code Availability. The LPR data were collected from 7:00 to 8:00 on a workday morning in Xuancheng.

Table 6 LPR data attributes.

Technical Validation

The generated traffic flow profile of morning peak is revealed in Fig. 7. The number of passing vehicles is visualized by the heat map. It presents the radial distribution of the traffic flow. To demonstrate the validity of the generated data, we compared the data with different sources to test the consistency in between. Also, the characteristics of the generated data are analyzed. Several data profiles are drawn from the flow-based perspective and trip-based perspective, respectively.

Flow-based perspective

The flow-based validation includes comparing the traffic flow data on red-marked roads against another observation and analyzing the generated fundamental diagram.

Figure 8a depicts the resampled counts and the manual counts of the southern incoming stream at intersection N4724. The resampled data at intersections N4694 and N4724 are compared with on-site manual observations, considering vehicles from each incoming road segment from 11:00 to 12:00 on Sept. 15th, 2020. The correlation coefficient is 0.748 with RMSE = 4.3 veh/min, which indicates consistency.
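The two agreement measures used here are straightforward to compute from paired per-minute counts. The sketch below shows the Pearson correlation coefficient and the RMSE in plain Python; the count series are made-up placeholders, not the observations behind Fig. 8a.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equally long series."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


def rmse(xs, ys):
    """Root-mean-square error between two equally long series."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(xs, ys)) / len(xs))


# Hypothetical per-minute counts (veh/min): manual vs. resampled.
manual = [12, 15, 9, 20, 14, 11]
resampled = [10, 17, 8, 18, 16, 12]
print(round(pearson_r(manual, resampled), 3))
print(round(rmse(manual, resampled), 3))
```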

Furthermore, the network-wide travel time data are compared with the dynamically estimated results from the Amap API and the Baidu Map API. Because the two services follow different strategies, we design the comparisons accordingly. The Amap API provides travel time estimates for specific paths, with a limit on the total number of paths. We therefore compare the results on Aofeng Rd., Zhaoting Rd., Baocheng Rd., and Xunhua Rd., which are the main roads of the network (see Fig. 7). The Baidu API, on the other hand, allows speed queries for each road segment in a specific area. However, only the speed data under congested traffic conditions are recorded, so we compare the results during peak hours. Since smoothing filters and intersection delays are usually applied in travel time estimation algorithms, the estimated results are likely to differ from the raw detected ones.

For the Amap API, weekly averaged and zero-mean normalized travel time series are used. Figure 8b shows the result on Zhaoting Rd., demonstrating the daily deviation of travel time from the average. Generally speaking, the overall averaged daily travel time is similar to the Amap estimate, with a correlation coefficient of 0.749.

For the Baidu API, hourly averaged and zero-mean normalized travel time series are used. The average speed is weighted by the length and the number of lanes of the road segments. The correlation coefficient is 0.738 (see Fig. 8c).

Because the number of lanes differs between segments and the green occupancy ratio varies between signalized intersections, the fundamental diagram is adapted into a space-integrated form to describe the network-wide characteristics of the traffic (Fig. 8d,e). The fundamental diagram is integrable over the time and space dimensions because macroscopic traffic characteristics are aggregated measures that can be taken over vehicles, time, and space23. Therefore, the density term is changed from the number of vehicles per kilometer (veh/km) to the number of vehicles on a road segment (veh), and the flow term (veh/h) is changed to hourly vehicle-kilometers (veh·km/h). For both the number of vehicles on the road and the hourly vehicle-kilometers, the network-wide quantity is the sum of the segment-level quantities over all parts of the road network.

However, since the speed is an averaging quantity, the ratio of the vehicle-kilometer and the number of vehicles en route keeps the same physical meaning as the average speed of the whole traffic flow. We take a snapshot of the whole network every 30 seconds to count the number of vehicles on the roads and their average speed. Then the vehicle-kilometer could be calculated, which is the product of the number of vehicles and the average speed. Figure 8d,e show the 10-minute moving average of the snapshots’ samples, in which the white color area indicates a denser cluster. As shown in Fig. 8d, the speed-density diagram during the day shift (from 5 A.M. to 7 P.M.) differs from the night shift (from 7 P.M. to 5 A.M.). Note that under the same average speed, the number of vehicles at night is less than that at day. Similarly, with the same amount of vehicles on the network, the travel speed is lower at night. It is implied that under a dimmer lighting condition, vehicles might move slower, and the performance of AVI equipment might be affected. Furthermore, the numbers of vehicles are around 200 and 1000 at night, while the numbers are around 1800 at day. As for the flow-density diagram in Fig. 8e, the vehicle-kilometer at day is slightly above that at night, which is consistent with the results in the speed-density diagram.
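The network-wide quantities described above can be sketched as a simple aggregation over one snapshot. The code assumes each segment reports its vehicle count and the mean speed of those vehicles; the function name and the sample values are hypothetical.

```python
def network_state(snapshot):
    """Aggregate one snapshot into network-wide quantities.

    snapshot: list of (vehicles_on_segment, mean_speed_kmh) per segment.
    Returns (vehicles, vehicle_km_per_hour, average_speed_kmh).
    """
    vehicles = sum(n for n, _ in snapshot)
    vehicle_km_per_hour = sum(n * v for n, v in snapshot)
    average_speed = vehicle_km_per_hour / vehicles if vehicles else 0.0
    return vehicles, vehicle_km_per_hour, average_speed


# Hypothetical snapshot with two segments.
snapshot = [(10, 30.0), (30, 20.0)]
print(network_state(snapshot))  # (40, 900.0, 22.5)
```

The ratio of hourly vehicle-kilometers to vehicles on the network recovers the vehicle-weighted average speed, which is why the speed keeps its physical meaning in the space-integrated diagram.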

Trip-based perspective

The trip-based analysis focuses on the spatial-temporal distribution of the travel demands. The trip-based analysis is mainly according to the spatial-temporal concentration of the individual trips. In this paper, the level of spatial concentration of individual travelers is evaluated by the number of different origin-destination zones (ODZ) in a month. Meanwhile, the level of time concentration is determined by the number of different departure time sections (DTS). As the individual trip is related to the specific traffic zone surrounded by the road segments, the number of different ODZ is easily counted. Since departure time is a continuous variable, we conduct a DBSCAN clustering algorithm on each trip to spontaneously generate discrete departure time sections. Note that some vehicles, such as taxis, have random origin-destination points and departure time, which lead to a long tail distribution on ODZ and DTS, as depicted on Fig. 9a. To avoid the long tail phenomena of spatial-temporal distribution, we take the 85th percentile of the number of DTS and ODZ as the indicators of spatial-temporal concentrating characteristics.
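To illustrate how departure time sections could be derived, the sketch below clusters one vehicle's departure times with a small pure-Python DBSCAN for one-dimensional data. The parameters `eps` and `min_samples` are not given in the text and are chosen arbitrarily here, and the departure times are invented.

```python
def dbscan_1d(values, eps, min_samples):
    """Minimal DBSCAN for 1-D data; returns a label per value (-1 = noise)."""
    n = len(values)
    neighbors = [[j for j in range(n) if abs(values[i] - values[j]) <= eps]
                 for i in range(n)]
    labels = [None] * n                    # None means not yet visited
    cluster = -1
    for i in range(n):
        if labels[i] is not None:
            continue
        if len(neighbors[i]) < min_samples:
            labels[i] = -1                 # provisional noise
            continue
        cluster += 1
        labels[i] = cluster
        queue = list(neighbors[i])
        while queue:
            j = queue.pop()
            if labels[j] == -1:
                labels[j] = cluster        # noise becomes a border point
            if labels[j] is not None:
                continue
            labels[j] = cluster
            if len(neighbors[j]) >= min_samples:
                queue.extend(neighbors[j])  # expand from core points only
    return labels


# Hypothetical departure times of one vehicle (hours of day) over several days.
departures = [7.5, 7.6, 7.4, 7.55, 17.5, 17.6, 17.4, 17.7, 12.0]
labels = dbscan_1d(departures, eps=0.5, min_samples=3)
n_dts = len({label for label in labels if label != -1})
print(labels)
print(n_dts)  # number of departure time sections for this vehicle
```

In this toy case the morning and evening departures form two sections and the isolated midday departure is treated as noise, giving DTS = 2.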

Figure 9c shows the weekday departure time distribution of travelers with different DTS. One can recognize a typical “Work-Home” commute pattern for those with DTS = 2, which has much higher peaks during commute time. The curve of DTS = 4 resembles a “Work-Other-Work-Home” pattern and leads to a midday traffic peak that does not exist in the DTS = 2 or DTS = 3 curves. For DTS = 3, there is a noticeable peak at around 20:00, indicating a “Work-Other-Home” pattern. For DTS = other, there are four comparable peaks at around 7:30, 11:30, 14:00, and 17:30, representing generally frequent departure times. Since DTS = 2, 3, 4 show the comprehensive mobility patterns, the temporally concentrated travelers are defined as those whose 85th-percentile DTS is in {2, 3, 4}. Note that these patterns have up to four different OD zones. Likewise, the spatially concentrated travelers are defined as those whose 85th-percentile ODZ is less than 5.

Figure 9d shows the Lorenz curve of travel distance in a month for all travelers, where the cumulative proportion of the travel distance is plotted against the cumulative proportion of individuals24. It reveals that mobility distribution on the road network is of the same pattern as other business behaviors. Among all travelers, the commercial vehicles at the top 1% of the population share nearly 20% of the cumulative travel distances.
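A Lorenz curve and the share held by the top fraction of travelers can be computed as sketched below. The monthly distances are synthetic and chosen only so that the top 1% holds roughly a fifth of the total; they are not taken from the dataset.

```python
def lorenz_curve(distances):
    """Return (cumulative share of travelers, cumulative share of distance) points."""
    xs = sorted(distances)
    total = sum(xs)
    cum = 0.0
    points = [(0.0, 0.0)]
    for i, d in enumerate(xs, 1):
        cum += d
        points.append((i / len(xs), cum / total))
    return points


def top_share(distances, fraction):
    """Share of total distance travelled by the top `fraction` of travelers."""
    xs = sorted(distances, reverse=True)
    k = max(1, round(len(xs) * fraction))
    return sum(xs[:k]) / sum(xs)


# Synthetic monthly distances: 99 ordinary travelers and one heavy user.
monthly_km = [1] * 99 + [25]
print(lorenz_curve(monthly_km)[-1])            # curve ends at (1.0, 1.0)
print(round(top_share(monthly_km, 0.01), 3))   # share of the top 1%
```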

Some of the trips are predictable due to the traveler’s comprehensive characteristics, such as the commuters, the spatially concentrated ones, and the temporally concentrated ones. Furthermore, we can estimate the movements of commercial vehicles since they are under surveillance. These four types of travelers are defined as regular travelers whose patterns are recognizable.

Figure 9b is a pie chart of population and travel distance for the different traveler types, including commuters, commercial vehicles, temporally concentrated travelers, and spatially concentrated travelers. In summary, the regular travelers account for 37% of all travelers but 45% of the total travel distance. Thus, once these 37% regular travelers are well modeled, we can reproduce nearly half of the trips, and the other half might be generated with random methods.

Usage Notes

As mentioned above, we provide three types of data. The short-term LPR data and the long-term resampled traffic data can be downloaded for static use. The encrypted holographic trajectories, on the other hand, can be used for interactive measurement of the traffic flow. Users can modify the virtual detecting environment and get customized virtual detection results. In this way, we can offer user-customized, round-the-clock, long-term traffic flow data at the resolution that best suits the user, without exposing personal trajectories.

Static dataset usage

The road network file can be imported into a PostGIS database or other supported GIS systems through QGIS. The loop data of each road segment can be used for studying large-scale traffic data prediction. By combining the FCD with the loop data, users can examine various data fusion models. Moreover, the FCD data processing script helps aggregate individual floating car samples into segment travel times. As for the LPR data, each row of the dataset is a pair of consecutive records captured by the AVI detectors. One can rebuild the route between these two records with the road network.
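
The route rebuilding step can be illustrated with a small sketch. It assumes, in the spirit of the full-sensing theorem in Appendix A, that the route between two consecutive AVI records is the unique path whose intermediate intersections carry no AVI device. The adjacency-dictionary graph format, the node labels, and the function names `paths_without_avi_interior` and `rebuild_route` are hypothetical and are not taken from the published scripts.

```python
from typing import Dict, List, Optional, Set

Graph = Dict[str, List[str]]  # node -> list of downstream neighbour nodes


def paths_without_avi_interior(
    graph: Graph, avi_nodes: Set[str], origin: str, destination: str
) -> List[List[str]]:
    """Enumerate simple paths origin -> destination whose interior nodes are all non-AVI."""
    found: List[List[str]] = []

    def extend(path: List[str]) -> None:
        for nxt in graph.get(path[-1], []):
            if nxt == destination:
                found.append(path + [nxt])
            elif nxt not in avi_nodes and nxt not in path:
                # Only continue through intersections without AVI devices.
                extend(path + [nxt])

    extend([origin])
    return found


def rebuild_route(
    graph: Graph, avi_nodes: Set[str], origin: str, destination: str
) -> Optional[List[str]]:
    """Return the route if it is uniquely determined, otherwise None."""
    candidates = paths_without_avi_interior(graph, avi_nodes, origin, destination)
    return candidates[0] if len(candidates) == 1 else None


# Toy network (hypothetical): A, B, C are AVI intersections; x and y are not.
avi = {"A", "B", "C"}
network: Graph = {"A": ["x", "C"], "x": ["B"], "C": ["B"], "B": []}
unique_route = rebuild_route(network, avi, "A", "B")

# Adding a second non-AVI detour makes the route ambiguous.
ambiguous_network: Graph = {"A": ["x", "y", "C"], "x": ["B"], "y": ["B"], "C": ["B"], "B": []}
ambiguous_route = rebuild_route(ambiguous_network, avi, "A", "B")
print(unique_route, ambiguous_route)
```

The path through C is excluded in both cases because a vehicle taking it would have produced an additional record at C.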

Interactive measurement usage

The resampling software is a command-line tool that performs virtual traffic flow detection on the encrypted trajectories. Users can adjust the settings in the running properties file and get the resampled traffic data directly in local output files.

In the properties file, users can set the road sections (“ftNode”) and time range (“fTime”, “tTime”) of the measurement and define the parameters of loop and floating car detection. Floating car detection is switched on or off by setting the “needFCD” property to “true” or “false”, and “fcdSamplingSec” denotes the FCD sampling period in seconds. Loop detectors are identified by an ID (“loopId”) and detect on the specified road segment (“ftNode”). The loop’s position is determined by the property “position”, which denotes the distance from the downstream end of the road. The missing rate (“missingRate”) and the aggregating interval (“interval”) can also be set.
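
To make the role of these settings concrete, the following sketch mimics what a virtual loop detector does with a set of trajectories. It is an illustration under stated assumptions, not the resampling software itself: the function names, the trajectory format (time in seconds, distance from the upstream end in metres), and the choice to drop each interval independently with probability `missing_rate` are all hypothetical. Only the meaning of the parameters (position measured from the downstream end, start and end time, aggregating interval, missing rate) follows the description above.

```python
import random
from typing import List, Optional, Tuple

Trajectory = List[Tuple[float, float]]  # (time in s, distance from upstream end in m)


def crossing_time(traj: Trajectory, loop_x: float) -> Optional[float]:
    """Linearly interpolate the time at which a trajectory passes loop_x."""
    for (t0, x0), (t1, x1) in zip(traj, traj[1:]):
        if x0 < loop_x <= x1:
            return t0 + (loop_x - x0) / (x1 - x0) * (t1 - t0)
    return None  # the vehicle never reaches the loop


def virtual_loop_counts(
    trajs: List[Trajectory],
    segment_length: float,
    position: float,  # distance of the loop from the downstream end
    f_time: float,
    t_time: float,
    interval: float,
    missing_rate: float = 0.0,
    seed: int = 0,
) -> List[Optional[int]]:
    """Count loop passages per interval; None marks an interval lost to missing data."""
    loop_x = segment_length - position
    n_bins = int((t_time - f_time) // interval)
    counts = [0] * n_bins
    for traj in trajs:
        t = crossing_time(traj, loop_x)
        if t is not None and f_time <= t < f_time + n_bins * interval:
            counts[int((t - f_time) // interval)] += 1
    rng = random.Random(seed)  # fixed seed keeps the sketch reproducible
    return [None if rng.random() < missing_rate else c for c in counts]


# Three toy vehicles on a 500 m segment, each travelling at 10 m/s.
toy = [
    [(0.0, 0.0), (50.0, 500.0)],
    [(100.0, 0.0), (150.0, 500.0)],
    [(310.0, 0.0), (360.0, 500.0)],
]
flow = virtual_loop_counts(toy, segment_length=500.0, position=100.0,
                           f_time=0.0, t_time=600.0, interval=300.0)
print(flow)
```

Moving the loop or changing the interval only requires re-running the detection on the same trajectories, which is the idea behind the interactive measurement.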

The software runs on Linux, Windows, and macOS using different launchers. The command is as simple as “osLauncher java -jar /path/to/resampling_software -d /path/of/holoData -c /path/of/properties_file”.

Other details can be found in the “README” file.

A. Full-sensing theorem

Among all the paths between any two different AVI intersections in the study area, if there is no more than one path whose intermediate intersections are all non-AVI-equipped, then the trip path for the LPR record is determined, i.e.,

Theorem A.1. \(\forall i,j\in {N}^{A}\), let \({R}_{i,j}=\{{r}_{i,j}| {r}_{i,j}\cap {N}^{A}=\{i,j\}\}\). If \(n\left({R}_{i,j}\right)\in \{0,1\}\), then \(\forall p,q\in {N}^{\ast }\), \(m\left({A}_{p,q}\right)\in \{0,1\}\).

Proof.

B. Closed zone theorem

If the traffic zone area is bounded by full-sensing road network (FSRN) segments, and for any non-FSRN segment in the zone, its connected segments are also within the zone area, then a trip on the physical road network (PRN) can be represented as parts on the FSRN separated by inner-zone activities, i.e.,

Theorem B.1. Let \({r}_{o,d}^{\ast }=\left\{o,i,i+1,i+2,...,i+m,d\right\}\) be a trip on a physical road network, and \(Z\) a closed traffic zone on the corresponding full-sensing road network such that \({s}_{i,i+1}\subset \bar{Z}\). If, \(\forall m\ge 1\), \({s}_{i+m-1,i+m}\) is on non-FSRN, then \(\forall m\ge 1\), \({s}_{i+m-1,i+m}\subset \bar{Z}\). (\(\bar{Z}\) denotes the closure of area \(Z\).)

Proof. Suppose \({s}_{i+k-1,i+k}\subset \bar{Z}\). According to Definition 0.2,

C. Passing-time inference algorithm

Algorithm 1

Passing Time Inference

D. Details of trajectory reconstruction

As shown in Fig. 10a, queuing discrimination has to deal with two different circumstances. The common idea is that a low reconstructed travel speed implies queuing behavior, since the vehicle does not move during the queuing process. For vehicles leaving \(x_j\), the travel speed is simply determined by the slope between the entry point (A) and the leaving point (B). For vehicles from former iterations, the exit point (G) remains unknown, so the intersection (F) of the wave μτ and the stopping position \(\overline{FH}\) is chosen as the reference point. Hence the adapted travel speed is determined by Point E and Point F. In particular, when only the green light period is available instead of the exact entry time, the end of the green light period is used as the reference point.

After the independent queuing discrimination, the result might show that several vehicles are assumed to be queuing before the current green light period. For instance, let Vehicles 1 and 3 be the low-speed vehicles, as depicted in Fig. 10b. There is no more than one stop wave during one signal period. Thus, the queuing vehicles must be in front of the other ones. Considering this one-wave constraint, let the last low-speed vehicle be the last queuing vehicle. In this case, Vehicles 1, 2, and 3 would be marked as the queuing vehicles. Their stopped positions are calculated according to their leaving orders. The stop position of the i-th vehicle is formulated as follows,

where \(k_j\) is the jam density. The passing speed is related to the stopped position and the exit point. On the other hand, the travel speed of the non-queued vehicles is calculated according to the passing information. For vehicles with specific entry and leaving points, such as Vehicle 4, the reconstructed trajectory is the straight line between these two points. For vehicles with one exact passing point, such as Vehicles 5 and 7, the travel speed is formulated by the speed-density model26,

where \(v_f\) is the free-flow speed, and α = 1.0, β = 0.05 according to related research27,28. In this way, the travel speed is given based on the local density, representing the traffic dynamics of the road segment. Their trajectories are then fixed by the one passing point and the running speed. Finally, for vehicles without exact observations, the speed is also calculated by the same speed-density model, and the endpoint is given randomly within the constraints of the preceding and following vehicles. (See Vehicle 4 in Fig. 10b.)
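
The one-wave constraint and the ordering of stop positions can be sketched as follows. This is a hedged illustration, not the published reconstruction code. The function names are hypothetical, and because the stop-position equation is not reproduced in this text, the sketch assumes a uniform jam spacing of 1/\(k_j\) between consecutive queued vehicles, measured upstream from the stop line.

```python
from typing import List


def mark_queuing(low_speed: List[bool]) -> List[bool]:
    """Apply the one-wave constraint to vehicles listed in leaving order.

    The last low-speed vehicle is taken as the last queuing vehicle, so every
    vehicle ahead of it is marked as queuing as well.
    """
    last = max((i for i, flag in enumerate(low_speed) if flag), default=-1)
    return [i <= last for i in range(len(low_speed))]


def stop_positions(n_queued: int, jam_density: float, stop_line: float) -> List[float]:
    """Stop positions of queued vehicles in leaving order.

    Assumption of this sketch: consecutive queued vehicles are spaced
    1 / jam_density apart, starting at the stop line.
    """
    spacing = 1.0 / jam_density  # metres per vehicle at jam density
    return [stop_line - i * spacing for i in range(n_queued)]


# Vehicles 1 to 4 in leaving order; Vehicles 1 and 3 were flagged as low-speed.
flags = [True, False, True, False]
queued = mark_queuing(flags)

# Hypothetical values: jam density 0.125 veh/m, stop line at 500 m.
positions = stop_positions(sum(queued), jam_density=0.125, stop_line=500.0)
print(queued, positions)
```

With these toy inputs, Vehicles 1, 2, and 3 are marked as queuing, matching the example in the text, while Vehicle 4 is treated as a non-queued vehicle.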

Code availability

To further describe the details of data processing in our method, we also provide code and instructions for reproducing the presented results25. In general, files that end with “.py” are supporting Python module files, files that end with “.ipynb” are Jupyter Notebook instructions, and the files under the folder “measurement” are the source code of the resampling software. The instruction files demonstrate the whole data processing workflow in Fig. 1, including trip measurement, trajectory reconstruction, virtual traffic flow detection, and data validation. These files can be used to better understand the modeling and validation steps.

This study proposes a resampling method for vehicular trajectories using LPR data. A city-scale dataset of holographic, unbiased trajectories is reconstructed. It is then validated by its consistency with other data sources on travel time results and demonstrated with the macroscopic characteristics of the fundamental diagram. The correlation coefficient of travel time ranges from about 0.688 to 0.749. Moreover, with the anonymous interactive measurement, users can acquire multiple types of traffic data at the individual level without the risk of personal information abuse. This dataset and the tool could support related research goals such as data fusion, mobility pattern recognition, and sensor network optimization.

References

Gabor, D. A new microscopic principle. Nature 161, 777–778, https://doi.org/10.1038/161777a0 (1948).

U.S. Department of Transportation Federal Highway Administration. Next Generation Simulation (NGSIM) vehicle trajectories and supporting data. [Dataset]. Provided by ITS DataHub through data.transportation.gov. https://doi.org/10.21949/1504477 (2016).

Punzo, V., Borzacchiello, M. T. & Ciuffo, B. On the assessment of vehicle trajectory data accuracy and application to the Next Generation Simulation (NGSIM) program data. Transportation Research Part C: Emerging Technologies 19, 1243–1262 (2011).

Zhang, T. & Jin, P. J. A longitudinal scanline based vehicle trajectory reconstruction method for high-angle traffic video. Transportation Research Part C: Emerging Technologies 103, 104–128 (2019).

Montanino, M. & Punzo, V. Trajectory data reconstruction and simulation-based validation against macroscopic traffic patterns. Transportation Research Part B: Methodological 80, 82–106 (2015).

Sun, Z. & Ban, X. J. Vehicle trajectory reconstruction for signalized intersections using mobile traffic sensors. Transportation Research Part C: Emerging Technologies 36, 268–283 (2013).

Wang, Y., Wei, L. & Chen, P. Trajectory reconstruction for freeway traffic mixed with human-driven vehicles and connected and automated vehicles. Transportation Research Part C: Emerging Technologies 111, 135–155 (2020).

Chen, X., Yin, J., Tang, K., Tian, Y. & Sun, J. Vehicle trajectory reconstruction at signalized intersections under connected and automated vehicle environment. IEEE Transactions on Intelligent Transportation Systems (2022).

Bernstein, D. & Kanaan, A. Y. Automatic vehicle identification: technologies and functionalities. Journal of Intelligent Transportation System 1, 191–204 (1993).

Sun, Z., Jin, W.-L. & Ritchie, S. G. Simultaneous estimation of states and parameters in Newell’s simplified kinematic wave model with Eulerian and Lagrangian traffic data. Transportation Research Part B: Methodological 104, 106–122 (2017).

Yu, R., Abdel-Aty, M. A., Ahmed, M. M. & Wang, X. Utilizing microscopic traffic and weather data to analyze real-time crash patterns in the context of active traffic management. IEEE Transactions on Intelligent Transportation Systems 15, 205–213 (2013).

Zhan, X., Li, R. & Ukkusuri, S. V. Lane-based real-time queue length estimation using license plate recognition data. Transportation Research Part C: Emerging Technologies 57, 85–102 (2015).

Asakura, Y., Hato, E. & Kashiwadani, M. Origin-destination matrices estimation model using automatic vehicle identification data and its application to the Han-Shin expressway network. Transportation 27, 419–438, https://doi.org/10.1023/A:1005239823771 (2000).

Zhou, X. & Mahmassani, H. S. Dynamic origin-destination demand estimation using automatic vehicle identification data. IEEE Transactions on Intelligent Transportation Systems 7, 105–114, https://doi.org/10.1109/TITS.2006.869629 (2006).

Mo, B., Li, R. & Zhan, X. Speed profile estimation using license plate recognition data. Transportation Research Part C: Emerging Technologies 82, 358–378 (2017).

Rao, W., Wu, Y.-J., Xia, J., Ou, J. & Kluger, R. Origin-destination pattern estimation based on trajectory reconstruction using automatic license plate recognition data. Transportation Research Part C: Emerging Technologies 95, 29–46 (2018).

Khare, V. et al. A novel character segmentation-reconstruction approach for license plate recognition. Expert Systems with Applications 131, 219–239 (2019).

Tong, P., Li, M., Li, M., Huang, J. & Hua, X. Large-scale vehicle trajectory reconstruction with camera sensing network. Proceedings of the Annual International Conference on Mobile Computing and Networking, MOBICOM 188–200, https://doi.org/10.1145/3447993.3448617 (2021).

Li, Y., Yu, R., Shahabi, C. & Liu, Y. Diffusion convolutional recurrent neural network: Data-driven traffic forecasting. In International Conference on Learning Representations (2018).

Wang, Y., Yang, X., Liang, H. & Liu, Y. A review of the self-adaptive traffic signal control system based on future traffic environment. Journal of Advanced Transportation 2018 (2018).

Robertson, D. TRANSYT: A Traffic Network Study Tool. RRL report (Road Research Laboratory, 1969).

Wang, Y. et al. City-scale holographic traffic flow data based on vehicular trajectory resampling. Figshare https://doi.org/10.6084/m9.figshare.c.5796776.v1 (2022).

Ni, D. Traffic flow theory: Characteristics, experimental methods, and numerical techniques (Butterworth-Heinemann, 2015).

Wittebolle, L. et al. Initial community evenness favours functionality under selective stress. Nature 458, 623–626 (2009).

Wang, Y., Li, G., Lu, Y., He, Z. & Yu, Z. City-scale holographic traffic flow data set of Xuancheng. https://github.com/sysuits/City-Scale-Holographic-Traffic-Flow-Data-based-on-Vehicular-Trajectory-Resampling (2021).

May, A. & Keller, H. E. Non-integer car-following models. Highway Research Record 199, 19–32 (1967).

Ben-Akiva, M., Bierlaire, M., Burton, D., Koutsopoulos, H. N. & Mishalani, R. Network State Estimation and Prediction for Real-Time Traffic Management. Networks and Spatial Economics 1, 293–318 (2001).

Xu, Y., Song, X., Weng, Z. & Tan, G. An Entry Time-based Supply Framework (ETSF) for mesoscopic traffic simulations. Simulation Modelling Practice and Theory 47, 182–195, https://doi.org/10.1016/j.simpat.2014.06.006 (2014).

Acknowledgements

The work was done at the SYSU Research Center of ITS in the context of the collaboration with the Joint Research and Development Laboratory of Smart Policing in Xuancheng Public Security. The research was also supported by the National Natural Science Foundation of China (No. U1811463).

Author information

Contributions

Z.Y. conceived of the presented idea. Y.W. developed the theoretical framework and performed the computations. Y.C. and Y.L. contributed to the technical details of the theory. G.L. conducted part of the experiments. Z.H. supervised the findings of this work. All authors discussed the results and contributed to the final manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Wang, Y., Chen, Y., Li, G. et al. City-scale holographic traffic flow data based on vehicular trajectory resampling. Sci Data 10, 57 (2023). https://doi.org/10.1038/s41597-022-01850-0

DOI: https://doi.org/10.1038/s41597-022-01850-0
