Abstract

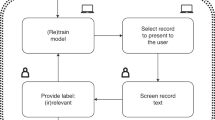

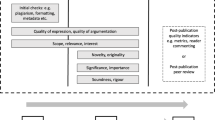

While ancient scientists often had patrons to fund their work, peer review of proposals for the allocation of resources is a foundation of modern science. Most commonly, proposals are evaluated by a small panel of experts nominated by the grant-giving institutions, with the panel size constrained by logistics and funding. The expert-panel process introduces several issues, most notably: (1) biases may be introduced in the selection of the panel and (2) experts have to read a very large number of proposals. Distributed peer review promises to alleviate several of these problems by distributing the task of reviewing among the proposers: each proposer is given a limited number of proposals to review and rank. We present the results of an experiment running a machine-learning-enhanced distributed peer-review process for the allocation of telescope time at the European Southern Observatory. We show that distributed peer review is statistically the same as a ‘traditional’ panel, that our machine-learning algorithm can predict the expertise of reviewers with a high success rate, and that seniority and reviewer expertise influence review quality. The participating community, responding through an anonymous feedback mechanism, overwhelmingly praised the experience.

Data availability

The anonymized data are available at https://zenodo.org/record/2634598.

Acknowledgements

This paper is the result of independent research and is not to be considered as expressing the position of the ESO on proposal review and telescope time allocation procedures and policies.

We thank the 167 volunteers who participated in the DPR experiment for their work and enthusiasm. We also thank M. Kissler-Patig for promoting the DPR experiment following his experience at Gemini; ESO’s Director General X. Barçons and ESO’s Director for Science R. Ivison for their support; and H. Schütze for several suggestions on the natural language processing. We thank J. Linnemann for help with some of the statistical tests.

W.E.K. is part of SNYU, and the SNYU group is supported by the NSF CAREER award AST-1352405 (PI Modjaz) and the NSF award AST-1413260 (PI Modjaz). W.E.K. was also supported by an ESO Fellowship and the Excellence Cluster Universe, Technische Universität München, for part of this work. W.E.K. thanks the Flatiron Institute. G.v.d.V. acknowledges funding from the European Research Council under the European Union’s Horizon 2020 research and innovation programme with grant agreement 724857 (consolidator grant ArcheoDyn).

Author information

Contributions

We use the CRediT (Contributor Roles Taxonomy) standard (see https://casrai.org/credit/) for reporting the author contributions. Conceptualization: W.E.K., F.P., G.v.d.V. Data curation: W.E.K., F.P. Formal analysis: W.E.K., F.P. Investigation: W.E.K., F.P. Methodology: W.E.K., G.v.d.V., T.A.P. Software: W.E.K., D.B. Supervision: W.E.K., F.P. Validation: W.E.K., F.P., G.v.d.V., T.A.P. Visualization: W.E.K., F.P., T.A.P. Writing – original draft: W.E.K., F.P., T.A.P. Writing – review & editing: W.E.K., F.P., G.v.d.V., T.A.P.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Peer review information: Nature Astronomy thanks Morten Andersen, Anna Severin and the other, anonymous, reviewer(s) for their contribution to the peer review of this work.

Publisher’s note: Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary information

Supplementary Sections 1–3 and Appendices 1 and 2.

About this article

Cite this article

Kerzendorf, W.E., Patat, F., Bordelon, D. et al. Distributed peer review enhanced with natural language processing and machine learning. Nat Astron 4, 711–717 (2020). https://doi.org/10.1038/s41550-020-1038-y

DOI: https://doi.org/10.1038/s41550-020-1038-y
