Abstract

Diagnosing asthma is challenging. Misdiagnosis can lead to untreated symptoms, incorrect treatment and avoidable deaths. The best combination of clinical features and tests to achieve a diagnosis of asthma is unclear. As asthma is usually diagnosed in non-specialist settings, a clinical prediction model to aid the assessment of the probability of asthma in primary care may improve diagnostic accuracy. We aimed to identify and describe existing prediction models to support the diagnosis of asthma in children and adults in primary care. We searched Medline, Embase, CINAHL, TRIP and US National Guidelines Clearinghouse databases from 1 January 1990 to 23 November 2017. We included prediction models designed for use in primary care or equivalent settings to aid the diagnostic decision-making of clinicians assessing patients with symptoms suggesting asthma. Two reviewers independently screened titles, abstracts and full texts for eligibility, extracted data and assessed risk of bias. From 13,798 records, 53 full-text articles were reviewed. We included seven modelling studies; all were at high risk of bias. Model performance varied, and the area under the receiver operating characteristic curve ranged from 0.61 to 0.82. Patient-reported wheeze, symptom variability and history of allergy or allergic rhinitis were associated with asthma. In conclusion, clinical prediction models may support the diagnosis of asthma in primary care, but existing models are at high risk of bias and thus unreliable for informing practice. Future studies should adhere to recognised standards, conduct model validation and include a broader range of clinical data to derive a prediction model of value for clinicians.


Introduction

Making an accurate diagnosis of asthma is fundamental to improving asthma care and outcomes. However, asthma is commonly misdiagnosed, with over- and under-diagnosis of asthma in children and adults reported.1,2,3 Over-diagnosis leads to costly, potentially harmful treatment and unnecessary health care, whilst under-diagnosis risks inadequate treatment and avoidable morbidity and mortality.

Accurately diagnosing asthma is challenging. Asthma is a heterogeneous disease comprising different genotypes, endotypes and phenotypes.4 There is no ‘gold’ reference standard that can categorically confirm or refute the diagnosis. Asthma is thus a clinical diagnosis, but individual symptoms, signs and tests have poor sensitivity/specificity for the diagnosis. Uncertainty about the best combination of clinical features and tests for asthma diagnosis is reflected in conflicting recommendations between national5,6 and international7 guidelines and highlighted in commentaries seeking to reduce confusion for clinicians.8,9

One solution could be to use a clinical prediction model, a data-driven algorithm that combines at least two predictors, such as elements from a clinical history, physical examination, test results and/or response to treatment, to estimate the probability that an outcome is present.10 Clinical prediction models can assist healthcare professionals to weigh up the probability of a diagnosis, enhance shared decision-making and aid patient stratification into subtypes.11,12 As most asthma diagnoses occur in non-specialist settings,4 where health problems typically present in an undifferentiated manner, and assessment is often based on probability,13 a prediction model could increase the accuracy of asthma diagnosis by supporting the appraisal of available clinical information and guiding next steps.
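To make the idea concrete, the sketch below shows how a logistic-regression-based prediction model turns several predictors into a single probability. It is a minimal illustration only: the predictor names, the intercept and the coefficients are hypothetical and are not taken from any model in this review.

```python
import math

# Hypothetical model for illustration only: the intercept and coefficients
# (log odds ratios) are invented and do not come from any reviewed study.
INTERCEPT = -2.0
COEFFICIENTS = {
    "wheeze": 1.2,
    "symptom_variability": 0.9,
    "allergic_rhinitis": 0.7,
}


def predicted_probability(predictors: dict[str, int]) -> float:
    """Combine binary predictors (1 = present, 0 = absent) into a probability.

    The linear predictor is the intercept plus the sum of coefficient x value;
    the logistic function 1 / (1 + exp(-lp)) maps it onto the 0-1 scale.
    Predictors missing from the dict are treated as absent.
    """
    linear_predictor = INTERCEPT + sum(
        beta * predictors.get(name, 0) for name, beta in COEFFICIENTS.items()
    )
    return 1.0 / (1.0 + math.exp(-linear_predictor))


patient = {"wheeze": 1, "symptom_variability": 1, "allergic_rhinitis": 0}
print(round(predicted_probability(patient), 3))  # 0.525
```

With wheeze and symptom variability present, the linear predictor is −2.0 + 1.2 + 0.9 = 0.1, which the logistic function maps to a probability of about 0.525.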

We aimed to identify, compare and synthesise existing clinical prediction models designed to support the diagnosis of asthma in children and adults presenting with symptoms suggestive of asthma in primary care or equivalent settings.

Results

Study selection

Our searches identified 13,798 records. Following the removal of duplicates, 13,180 titles and abstracts were screened (Fig. 1). Fifty-three articles were reviewed in full text, with 45 articles excluded (Supplementary Table 1). Eight articles from seven studies met the review criteria and were included.14,15,16,17,18,19,20,21
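The flow of records can be checked with simple arithmetic. The sketch below uses only the counts reported above; the derived counts (duplicates removed and records excluded at title and abstract screening) are computed here rather than quoted, assuming that every record not carried forward was excluded at that stage.

```python
# Counts reported in the text (Fig. 1).
records_identified = 13_798
records_screened = 13_180      # titles and abstracts screened after de-duplication
full_text_reviewed = 53
full_text_excluded = 45

# Derived counts, assuming each record not carried forward was excluded at that stage.
duplicates_removed = records_identified - records_screened
excluded_on_title_abstract = records_screened - full_text_reviewed
articles_included = full_text_reviewed - full_text_excluded

print(duplicates_removed, excluded_on_title_abstract, articles_included)  # 618 13127 8
```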

Study characteristics

The included studies all derived new clinical prediction models (Table 1). Each study presented a model that could be used to aid the diagnosis of asthma; however, study rationale varied, and this was reflected in the design and approach to modelling used. Six studies used multivariable logistic regression to derive their prediction models.14,15,17,18,20,21 One study developed a decision tree.19 Six models were derived from adults,14,17,18,19,20,21 and one from children.15 The three studies14,18,21 that recruited exclusively from out-patient departments were conducted in countries without established primary care services, where patients commonly presented with undifferentiated symptoms to secondary care.22,23

Risk of bias

All included studies were judged to be at high risk of bias. Bias was introduced by various means, though certain limitations were shared by several studies (Table 2). Most notable was the lack of model validation. See Supplementary Note 1 for detailed risk of bias assessment.

Model performance and validation

Three studies reported model performance using classification measures (Table 1),15,19,21 whilst three reported model discrimination using the area under the receiver operating characteristic curve (AUROC), which ranged from 0.61 to 0.82.14,18,20 None of the studies reported model calibration.
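For readers less familiar with discrimination measures, the AUROC can be read as the probability that a randomly chosen person with asthma receives a higher predicted score than a randomly chosen person without asthma. The sketch below computes it directly from that definition (the Mann-Whitney form, with ties counted as one half); the scores and outcomes are invented toy data, and the pairwise loop is chosen for clarity rather than speed.

```python
def auroc(scores: list[float], outcomes: list[int]) -> float:
    """Area under the ROC curve: the proportion of (asthma, no asthma) pairs in
    which the person with asthma has the higher score; ties count as one half."""
    positives = [s for s, y in zip(scores, outcomes) if y == 1]
    negatives = [s for s, y in zip(scores, outcomes) if y == 0]
    if not positives or not negatives:
        raise ValueError("need at least one participant in each outcome group")
    concordant = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                concordant += 1.0
            elif p == n:
                concordant += 0.5
    return concordant / (len(positives) * len(negatives))


# Invented toy data: predicted probabilities and reference-standard outcome (1 = asthma).
scores = [0.9, 0.8, 0.4, 0.7, 0.3, 0.2]
outcomes = [1, 1, 1, 0, 0, 0]
print(round(auroc(scores, outcomes), 3))  # 0.889
```

An AUROC of 0.5 corresponds to no discrimination and 1.0 to perfect separation, which frames the reported range of 0.61 to 0.82.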

Hirsch et al.17 conducted internal validation but did not report model performance. Metting et al.19 conducted internal validation (10-fold cross-validation) and external validation of the final decision tree, using data from a different asthma/COPD referral service within the Netherlands. Model performance (derived from available data; no confidence intervals (CIs) available) showed similar sensitivity in the derivation and validation datasets (0.79 and 0.78, respectively), although specificity was lower in the validation dataset (0.60, compared with 0.75 in the derivation dataset).19 Five studies reported no validation, so model performance is likely to be over-estimated in these cases.14,15,18,20,21
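Classification measures such as sensitivity and specificity come from a 2×2 table comparing the model's classification with the reference standard. A minimal sketch, using invented counts that do not correspond to any included study:

```python
from dataclasses import dataclass


@dataclass
class ConfusionMatrix:
    """2x2 table comparing model classification with the reference standard."""
    true_positive: int   # model: asthma;    reference standard: asthma
    false_negative: int  # model: no asthma; reference standard: asthma
    true_negative: int   # model: no asthma; reference standard: no asthma
    false_positive: int  # model: asthma;    reference standard: no asthma

    @property
    def sensitivity(self) -> float:
        """Proportion of people with asthma whom the model classifies as asthma."""
        return self.true_positive / (self.true_positive + self.false_negative)

    @property
    def specificity(self) -> float:
        """Proportion of people without asthma whom the model classifies as not asthma."""
        return self.true_negative / (self.true_negative + self.false_positive)


# Invented counts for illustration.
cm = ConfusionMatrix(true_positive=40, false_negative=10, true_negative=30, false_positive=20)
print(cm.sensitivity, cm.specificity)  # 0.8 0.6
```

Because the two measures are calculated in separate groups, a fall in specificity between derivation and validation datasets means that a larger share of people without asthma were classified as having it, even if sensitivity is unchanged.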

Model presentation

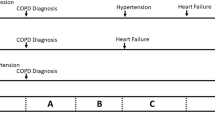

Of the six studies that derived a prediction model using logistic regression, four presented a scoring system,14,17,18,21 one a web-based clinical calculator20 and one presented model output from which a probability could be calculated.15 The decision tree had six ‘branches’ of predictors that led to a probability of asthma, though this approach limited the number of predictor combinations.19
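Scoring systems are often derived from logistic regression by rescaling and rounding the coefficients. One simple way of doing this is sketched below, assuming hypothetical coefficients; it is not necessarily the method used by any of the included studies, and rounding discards some of the information in the original model.

```python
# Hypothetical logistic regression coefficients (log odds ratios); invented
# for illustration and not taken from any reviewed study.
coefficients = {
    "wheeze": 1.2,
    "symptom_variability": 0.9,
    "allergic_rhinitis": 0.6,
    "current_smoker": -0.3,
}


def to_points(coefs: dict[str, float]) -> dict[str, int]:
    """Scale each coefficient by the smallest absolute coefficient and round,
    so that the weakest predictor is worth one point (positive or negative)."""
    reference = min(abs(beta) for beta in coefs.values())
    return {name: round(beta / reference) for name, beta in coefs.items()}


def total_score(points: dict[str, int], findings: dict[str, bool]) -> int:
    """Sum the points for every predictor recorded as present."""
    return sum(p for name, p in points.items() if findings.get(name, False))


points = to_points(coefficients)
print(points)
print(total_score(points, {"wheeze": True, "current_smoker": True}))
```

A higher total score corresponds to a higher linear predictor and hence a higher probability of asthma; a cut-off on the score would then need to be chosen and validated.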

Model outcome measures

Four studies based their outcome measure on bronchial challenge testing;14,18,20,21 an asthma diagnosis was indicated by a 20% fall in forced expiratory volume in 1 s (FEV1) from baseline after stepwise inhalation of methacholine up to a maximum of 8 mg/ml (ref. 21) or 16 mg/ml.14,18,20
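The bronchial challenge outcome can be expressed as PC20, the methacholine concentration that provokes a 20% fall in FEV1. The sketch below uses log-linear interpolation between the last two concentrations, as described in the American Thoracic Society guidance (ref. 36); the concentration series and FEV1 readings are hypothetical. It also shows why the maximum concentration matters: the same participant is classified differently with an 8 mg/ml and a 16 mg/ml ceiling.

```python
import math
from typing import Optional, Sequence


def percent_fall(baseline_fev1: float, fev1: float) -> float:
    """Percentage fall in FEV1 relative to the baseline (post-diluent) value."""
    return 100.0 * (baseline_fev1 - fev1) / baseline_fev1


def pc20(
    baseline_fev1: float,
    concentrations: Sequence[float],
    fev1_values: Sequence[float],
    max_concentration: float,
) -> Optional[float]:
    """Interpolated methacholine concentration (mg/ml) causing a 20% fall in FEV1.

    Returns None if no 20% fall occurs at or below max_concentration.
    Interpolation is linear on the log10 concentration scale between the last
    concentration with a fall below 20% and the first with a fall of 20% or more.
    """
    previous_c: Optional[float] = None
    previous_fall = 0.0
    for c, fev1 in zip(concentrations, fev1_values):
        if c > max_concentration:
            break
        fall = percent_fall(baseline_fev1, fev1)
        if fall >= 20.0:
            if previous_c is None:
                return c  # 20% fall at the first concentration: no interpolation
            log_pc20 = math.log10(previous_c) + (
                (math.log10(c) - math.log10(previous_c))
                * (20.0 - previous_fall)
                / (fall - previous_fall)
            )
            return 10 ** log_pc20
        previous_c, previous_fall = c, fall
    return None


# Hypothetical doubling-concentration series (mg/ml) and FEV1 readings (litres).
doses = [0.25, 0.5, 1, 2, 4, 8, 16]
fev1_readings = [2.95, 2.90, 2.90, 2.85, 2.80, 2.70, 2.30]
print(pc20(3.0, doses, fev1_readings, max_concentration=8))   # None: no 20% fall up to 8 mg/ml
print(pc20(3.0, doses, fev1_readings, max_concentration=16))  # about 13.5 mg/ml
```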

Expert opinion informed the outcome in two studies.17,19 Hirsch et al.17 used a panel of three experts, whereas in the study by Metting et al.19 each diagnosis was made by one of ten respiratory specialists. In one study, healthcare providers made an asthma diagnosis when a child demonstrated reversible episodic symptoms, indicated by spirometry or symptom resolution.15

Description of predictor variables

The clinical prediction models combined between 4 (ref. 15) and 22 (ref. 19) predictors to estimate the probability of asthma. Three studies collected data from questionnaires only.14,15,18 The remaining studies collected a wider range of clinical data, though not all of the information was included in model development (Table 3). Figure 2 illustrates the strength of association between predictors included in the prediction models and the outcome, asthma. The most common predictors were wheeze, cough, symptom variability and allergy. Estimates for individual predictors were unavailable from two studies.17,19

Fig. 2: Forest plots showing the strength of association between predictor variables and the outcome, asthma. Not all studies had extractable data. PP = private practice, Co = combined dataset (private practice and primary care), OPD = out-patient department, PC = primary care. Confidence intervals were not reported for all estimates; estimates without them are marked [NR]. No overall estimates were produced, as meta-analysis was not possible.

Participant age was collected in all studies, but only considered in the model development of two studies.17,20 The decision tree used age of onset of respiratory symptoms in five of six branches.19 Male sex was associated with asthma in one model.17

Wheeze as a symptom was used in five clinical prediction models,14,15,17,18,19 though six different questions were used. Despite wide variation in how wheeze was recorded between studies, the magnitude of association between wheeze variables and the asthma outcome was similar in four of five studies (Fig. 2). The exception was Hall et al.,15 whose reported estimates were much greater than those of other studies.

Cough was included in five of seven prediction models and asked about in three different ways (Fig. 2).14,15,17,18,20 Variables for cough were not clearly predictive for asthma in four studies.14,17,18,20 In contrast, Hall et al.15 reported that a cough lasting beyond 10 days after a cold was associated with asthma (odds ratio (OR) 5.8 (outpatients); OR 3.1 (primary care), CIs not reported), despite cough in children commonly taking over 10 days to settle.24

Respiratory tract infection was included in four prediction models,14,17,18,20 though its predictive value was unclear because all of these studies were judged to be at high risk of bias.

Being woken by chest tightness was associated with asthma in one study.17 Waking up because of cough in the past year was associated with asthma in one study at high risk of bias, though the lack of CIs makes the precision of estimates unclear.15 Symptoms disturbing sleep were not predictive in two other models.19,20

Episodic symptoms and diurnal variation were associated with asthma in one study,21 yet Choi et al.14 found ‘fluctuation of exacerbation and improvement’ was not associated with asthma (OR 1.24, 95% CI 0.75–2.05). Exercise-induced symptoms were associated with asthma in three studies.14,15,18 However, ‘dyspnoea on exertion’ was not significant in one study.20
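Whether a predictor is 'associated' with asthma usually rests on whether the 95% CI for its odds ratio excludes 1; the interval of 0.75–2.05 reported by Choi et al. does not. The sketch below computes an odds ratio and a Woolf (log-based) 95% CI from a 2×2 table; the counts are invented, and the function raises an error rather than applying a continuity correction when a cell is zero.

```python
import math


def odds_ratio_ci(a: int, b: int, c: int, d: int, z: float = 1.96):
    """Odds ratio and Woolf (log-based) confidence interval from a 2x2 table.

    a = predictor present, asthma     b = predictor present, no asthma
    c = predictor absent, asthma      d = predictor absent, no asthma
    """
    if min(a, b, c, d) == 0:
        raise ValueError("zero cell: a continuity correction would be needed")
    odds_ratio = (a * d) / (b * c)
    se_log_or = math.sqrt(1 / a + 1 / b + 1 / c + 1 / d)
    lower = math.exp(math.log(odds_ratio) - z * se_log_or)
    upper = math.exp(math.log(odds_ratio) + z * se_log_or)
    return odds_ratio, lower, upper


def excludes_one(lower: float, upper: float) -> bool:
    """True if the interval lies entirely above or entirely below 1."""
    return lower > 1 or upper < 1


# Invented counts for illustration.
or_, lower, upper = odds_ratio_ci(a=40, b=20, c=20, d=40)
print(f"OR {or_:.2f} (95% CI {lower:.2f}-{upper:.2f}); excludes 1: {excludes_one(lower, upper)}")
```

Applied to the interval reported by Choi et al., `excludes_one(0.75, 2.05)` returns `False`, consistent with the statement that this predictor was not associated with asthma.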

The presence of allergy/atopic disease was predictive of asthma in five studies.17,18,19,20,21 Five of six decision tree branches included the presence/absence of allergy;19 past allergic disease, respiratory symptoms triggered by aeroallergens/pollutants and nasal allergy were significantly associated with asthma (Fig. 2).18,20,21

Current use of asthma medication was asked about, and found to be predictive, in two studies,17,20 whilst past asthma attack was recorded by one study.17

Participants who smoked scored ‘−1’ in the prediction model by Hirsch et al.17 In the decision tree, ‘never smoked’ combined with a ratio of FEV1 to forced vital capacity (FEV1/FVC) <70% formed one of six branches leading to asthma.19 Four studies collected smoking data but did not include it in their analysis.14,18,20,21

Family history of asthma was included by one study,17 but having a ‘close relative with allergic diseases’ was not associated with asthma in another (OR 1.19, 95% CI 0.73–1.93).21

Only Tomita et al.21 incorporated information from clinical examination. Wheeze heard on auscultation was associated with asthma (OR 3.68, 95% CI 1.78–7.62).21

FEV1/FVC was included in all branches that led to asthma in the decision tree.19 Bronchodilator reversibility was used in four of six branches, though, in contrast to guideline recommendations,5,6,7 two branches included reversibility of <7%.19 Schneider et al.20 included fractional exhaled nitric oxide (FeNO) as the main predictor in their clinical prediction models. Tomita et al.21 collected relevant data but did not include them in model development.
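Bronchodilator reversibility is usually expressed as the change in FEV1 from the pre-bronchodilator value, both in millilitres and as a percentage. The sketch below assumes a commonly cited adult criterion of at least 12% and at least 200 ml; the exact thresholds should be checked against the relevant guideline,5,6,7 and the FEV1 values are hypothetical. The last example is a response below 7%, the kind of value that two of the decision tree branches accepted.

```python
def bronchodilator_response(pre_fev1_l: float, post_fev1_l: float) -> tuple[float, float]:
    """Return (absolute change in ml, percentage change from pre-bronchodilator FEV1)."""
    change_l = post_fev1_l - pre_fev1_l
    return change_l * 1000.0, 100.0 * change_l / pre_fev1_l


def meets_threshold(pre_fev1_l: float, post_fev1_l: float,
                    min_pct: float = 12.0, min_ml: float = 200.0) -> bool:
    """Assumed adult criterion: both the percentage and the absolute change must be met."""
    change_ml, change_pct = bronchodilator_response(pre_fev1_l, post_fev1_l)
    return change_pct >= min_pct and change_ml >= min_ml


# Hypothetical (pre, post) FEV1 values in litres.
for pre, post in [(2.0, 2.3), (1.0, 1.15), (1.5, 1.6)]:
    ml, pct = bronchodilator_response(pre, post)
    print(f"{pre} -> {post} L: {ml:.0f} ml, {pct:.1f}%, meets threshold: {meets_threshold(pre, post)}")
```

The second example shows why both parts of the criterion matter: a 15% change in someone with low lung function may still be less than 200 ml.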

Discussion

This systematic review identified seven clinical prediction models to support the diagnosis of asthma in primary care. All studies were judged to be at high risk of bias and cannot be recommended for diagnosing asthma in routine clinical practice. Wheeze, allergy, allergic rhinitis, symptom variability and exercise-induced symptoms were associated with asthma and should be considered as predictors in future prediction models. Cough, respiratory tract infection and nocturnal respiratory symptoms were inconsistently associated with asthma.

The use of Checklist for critical Appraisal and data extraction for systematic Reviews of prediction Modelling Studies (CHARMS) and Prediction model Risk Of Bias ASsessment Tool (PROBAST), systematic review frameworks specific for prediction models, in undertaking this review ensured each step was conducted to international standards. PROBAST was yet to be published, but we used it for risk of bias assessment as it was purposefully developed for reviews of prediction models by the Cochrane Prognosis Group, and had been successfully piloted.25,26 We reduced the possibility of reporting bias by duplicate, independent data extraction and risk of bias assessment. We planned to evaluate the overall quality of evidence using the Grading of Recommendations Assessment, Development and Evaluation (GRADE) system. Originally designed for reviews of intervention studies, GRADE has been adapted for reviews of prognostic studies, though not specifically for prediction models.27,28 Consequently, in its current form we did not find GRADE to be a suitable tool for our systematic review and decided not to use it. Future research should consider how to adapt GRADE so that it can be used for reviews of clinical prediction models.

We searched databases from 1 January 1990, having found no relevant literature before this date in preliminary searches. Our decision to search five databases was informed by the strategies of similar systematic reviews,29,30 but despite this we may have missed some relevant studies.

Restricting the population of interest to primary care (or equivalent) populations limited the number of studies we could include. Asthma may be diagnosed in both primary and secondary care, and current guidelines present diagnostic algorithms irrespective of clinical setting.5,6,7 However, the diagnostic value of symptoms, signs and tests varies depending on the setting in which they are used,31 and the general approach to making a diagnosis differs, as secondary care services tend to see referred patients.13 As most diagnoses occur in non-specialist settings,4 we opted to focus on clinical prediction models derived from primary care participants. The degree to which study participants presented with undifferentiated symptoms was unclear in some studies; to mitigate this uncertainty, we sought additional information about the country of origin and made decisions based on team discussion.

National and international guidelines are consistent in their advice to build up evidence to support a diagnosis of asthma based on history, examination, investigations and, when necessary, a monitored trial of treatment.5,6,7 The Global Initiative for Asthma describes a characteristic pattern of symptoms (wheezing, shortness of breath, cough, chest tightness varying over time and in intensity) as indicative of asthma.7 Our included clinical prediction models endorse wheeze and symptom variability as potentially valuable predictors; however, cough and breathlessness were inconsistently associated with asthma. This inconsistency may in part have arisen from the different ways in which predictors were defined. For example, participants were asked about coughs that were variously ‘paroxysmal’, ‘nocturnal’, ‘daytime’ or ‘often’ in the different studies, limiting the comparison between prediction models and preventing meta-analysis. Additionally, patients and parents understand and describe symptoms differently from clinicians (and researchers),32 and future studies should choose reliable terms when phrasing questions about symptoms.33

Another reason for the inconsistent association between predictors and asthma observed in the included studies may be the imperfect nature of the outcome measure (reference standard) available for asthma. There is no universally accepted method to deal with an imperfect reference standard.34 Consequently, it is not uncommon in asthma diagnostic research for different studies to use different reference standards. For instance, a systematic review of the accuracy of FeNO for asthma diagnosis found substantial heterogeneity in the reference standards used by the included studies.35 In this review, four studies used methacholine bronchial provocation, which is considered the best available reference standard for asthma, although it is known to be better at ruling out than ruling in the diagnosis.36 The remaining studies used clinician judgement to classify those with and without asthma; this is a valid solution in the face of an imperfect reference standard,34 but it is highly dependent on the performance, consistency and agreement of the clinicians. Because the performance of a prediction model for asthma diagnosis depends so heavily on the outcome measure chosen, future studies should consider recommendations to move away from the umbrella term ‘asthma’ and instead focus on identifying ‘treatable traits’; failure to recognise asthma as an aggregate diagnosis is likely to limit any improvement in diagnostic accuracy gained from a clinical prediction model.4,37
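The point that a test can be better at ruling out than ruling in follows from Bayes' theorem. The sketch below uses hypothetical values for sensitivity, specificity and prevalence (they are not the measured accuracy of methacholine challenge) to show how a highly sensitive but less specific test yields a high negative predictive value and only a modest positive predictive value.

```python
def predictive_values(sensitivity: float, specificity: float, prevalence: float):
    """Positive and negative predictive values via Bayes' theorem."""
    true_pos = sensitivity * prevalence
    false_neg = (1 - sensitivity) * prevalence
    true_neg = specificity * (1 - prevalence)
    false_pos = (1 - specificity) * (1 - prevalence)
    ppv = true_pos / (true_pos + false_pos)
    npv = true_neg / (true_neg + false_neg)
    return ppv, npv


def likelihood_ratios(sensitivity: float, specificity: float):
    """Positive and negative likelihood ratios."""
    return sensitivity / (1 - specificity), (1 - sensitivity) / specificity


# Hypothetical test: highly sensitive, moderately specific, 30% pre-test probability.
ppv, npv = predictive_values(sensitivity=0.95, specificity=0.60, prevalence=0.30)
lr_pos, lr_neg = likelihood_ratios(sensitivity=0.95, specificity=0.60)
print(f"PPV {ppv:.2f}, NPV {npv:.2f}, LR+ {lr_pos:.2f}, LR- {lr_neg:.2f}")
```

With these assumed values the PPV is about 0.50 and the NPV about 0.97: a negative result is far more informative than a positive one.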

This review highlights the paucity of current evidence to inform diagnostic algorithms. A validated clinical prediction model for asthma diagnosis could help healthcare professionals improve the accuracy of a diagnosis by guiding decision-making and reducing variability between clinicians. That only two studies considered diagnostic tests as candidate predictors was disappointing, given the potential for prediction models to combine information from a clinical history, physical examination and tests. Failure to confirm the presence of asthma with objective tests has been implicated in widespread misdiagnosis.1 So, on a practical level, a validated prediction model that guides a clinician in the questions to ask, and the test(s) required to confirm or refute an asthma diagnosis, is likely to be most useful.

Future attempts at model derivation for asthma diagnosis should be informed by recognised standards such as the Transparent Reporting of a multivariable prediction model for Individual Prognosis Or Diagnosis (TRIPOD).38 Prediction models should undergo internal and external validation and report model performance using calibration and discrimination measures.39 In this review, none of the included studies reported model calibration. Model validation was completed by only two studies, a finding that matches the wider literature.40 Finally, strategies to implement the validated model in routine clinical practice need to be developed, piloted and evaluated,41 to assess impact on clinical outcomes.40
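Calibration asks whether predicted probabilities agree with observed outcome rates. A minimal sketch of two simple checks, the overall observed-to-expected (O:E) ratio and a grouped calibration table, is shown below with invented data; a formal assessment would usually add a calibration plot and a calibration slope.

```python
def observed_expected_ratio(predicted: list[float], observed: list[int]) -> float:
    """Calibration-in-the-large: observed events divided by expected (summed predicted) events."""
    return sum(observed) / sum(predicted)


def calibration_table(predicted: list[float], observed: list[int], n_groups: int = 2):
    """Group participants by predicted risk and compare mean predicted risk
    with the observed proportion in each group: (mean predicted, observed, n)."""
    pairs = sorted(zip(predicted, observed))
    size = len(pairs) / n_groups
    rows = []
    for g in range(n_groups):
        group = pairs[round(g * size): round((g + 1) * size)]
        if group:
            mean_predicted = sum(p for p, _ in group) / len(group)
            observed_rate = sum(y for _, y in group) / len(group)
            rows.append((round(mean_predicted, 3), round(observed_rate, 3), len(group)))
    return rows


# Invented predictions and outcomes (1 = asthma according to the reference standard).
predicted = [0.1, 0.2, 0.3, 0.4, 0.6, 0.7, 0.8, 0.9]
observed = [0, 0, 1, 0, 1, 1, 1, 1]
print(observed_expected_ratio(predicted, observed))
print(calibration_table(predicted, observed))
```

Here the O:E ratio is about 1.25, so this hypothetical model under-predicts overall, and the table shows the shortfall lies in the higher-risk group.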

In conclusion, existing clinical prediction models to support clinicians in making a diagnosis of asthma in primary care are at high risk of bias and thus of limited clinical value. Wheeze, symptom variability and the presence of other allergic disease were associated with asthma diagnosis. Informed by this review, future studies should address the limitations identified and follow established methods to derive and validate a prediction model of value to clinicians. Establishing a data-driven approach to asthma diagnosis could resolve current discrepancies in guidelines and enable the unacceptable level of asthma misdiagnosis to be reduced.

Methods

The systematic review was registered with PROSPERO (CRD42018078418). Detailed methods were described in the published protocol,42 with salient points presented here. We followed the CHARMS checklist39 and the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) statement.43

Study eligibility criteria

Population

Children or adults presenting with symptoms suggestive of asthma in primary care.

Intervention

Any clinical prediction model designed to aid the diagnostic decision-making of a healthcare professional during the assessment of an individual with symptoms suggestive of asthma.

Comparator

Not applicable.

Outcome

The primary outcome to be predicted was the probability of an asthma diagnosis. We included studies that presented a prediction model, or equivalent statistical method, that allowed the probability of asthma to be calculated for an individual. To be included, the study had to use an outcome based on an internationally recognised definition of asthma (for instance, as defined by the Global Initiative for Asthma7).

Timing

Any diagnostic prediction model that provides an estimate for the probability that asthma is present at the time of clinical assessment.

Setting

We included any clinical prediction model designed for use in a primary care population or equivalent (defined as any setting where undifferentiated health problems are presented to healthcare professionals).13

Study type

We included prediction model derivation studies (with or without external validation) and external model validation studies.39 Randomised controlled trials, cohort studies (prospective or retrospective), cross-sectional, nested case–control and case–cohort studies were eligible for inclusion.39

Exclusion criteria

Studies were excluded if:

1. Variables were not combined to produce a diagnostic estimate
2. Publication occurred before 1 January 1990 (preliminary searches identified no relevant citations before this date)
3. Variables used in the clinical prediction model were not clearly reported, or were unavailable in routine clinical practice (for example, genetic tests)
4. Separate outcomes for asthma were not reported, or the asthma outcome was not extractable
5. The prediction model was derived to predict the future risk of asthma
6. Over half of study participants were children <5 years old (because of the overlap between asthma and viral-associated wheeze in this age group)
7. The study was not original research, such as editorials and expert views.

Information sources and search strategy

We searched Medline, Embase, CINAHL, TRIP (https://www.tripdatabase.com) and US National Guidelines Clearinghouse (https://www.guideline.gov) databases from 1 January 1990 to 23 November 2017. The search strategy (Supplementary Table 2) combined published searches for prediction models44,45 with Cochrane Airways asthma search terms.46 Forward and backward citation searching was completed. No language restrictions were used. Studies were translated when necessary.

Study selection

Retrieved records were de-duplicated, screened and managed using Covidence (https://www.covidence.org). Two reviewers (L.D., A.B.) independently screened titles and abstracts. Full-text copies of all relevant records were obtained. Two reviewers (L.D., S.McL.) independently assessed each full-text record for eligibility. Discrepancies were resolved by discussion, with arbitration by H.P., S.L. and A.S.

Data collection process

A standardised data extraction form was developed using CHARMS and piloted.39 Two reviewers (L.D., S.McL.) independently extracted data from included studies, with disagreements resolved by a third reviewer (H.P., S.L. or A.S.). Study authors were contacted if further information or clarification was required. Data were summarised in descriptive tables (Supplementary Table 3).

Critical appraisal of individual studies

Two reviewers (L.D., S.McL.) used PROBAST to independently evaluate risk of bias and concerns about applicability before reaching a consensus for each included study.25 According to PROBAST, risk of bias assessment is guided by 20 signalling questions across four domains: participant selection, predictors, outcome and analysis. Each domain is rated as low, high or unclear risk of bias, and the domain ratings are combined to provide an overall assessment for each study. If a study is rated high risk of bias in any domain, PROBAST advises that it be rated high risk of bias overall. The extent to which each study matched the review question was assessed using the PROBAST applicability concern questions across three domains (participant selection, predictors and outcome), leading to an overall rating for applicability.
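The rule for combining domain judgements can be written as a small function. The sketch assumes the usual PROBAST convention that a study is low risk overall only when all four domains are low, high when any domain is high, and unclear otherwise; the full guidance contains further considerations (for example, about models developed without external validation) that are not modelled here, and the domain keys are illustrative names.

```python
from enum import Enum


class Risk(Enum):
    LOW = "low"
    HIGH = "high"
    UNCLEAR = "unclear"


# Illustrative keys for the four PROBAST risk-of-bias domains.
DOMAINS = ("participants", "predictors", "outcome", "analysis")


def overall_risk_of_bias(domain_ratings: dict[str, Risk]) -> Risk:
    """Combine the four domain judgements into an overall judgement."""
    missing = [d for d in DOMAINS if d not in domain_ratings]
    if missing:
        raise ValueError(f"missing domain ratings: {missing}")
    ratings = [domain_ratings[d] for d in DOMAINS]
    if Risk.HIGH in ratings:
        return Risk.HIGH  # any high-risk domain makes the study high risk overall
    if all(r is Risk.LOW for r in ratings):
        return Risk.LOW
    return Risk.UNCLEAR  # no high-risk domain, but at least one unclear


example = {
    "participants": Risk.LOW,
    "predictors": Risk.UNCLEAR,
    "outcome": Risk.LOW,
    "analysis": Risk.HIGH,
}
print(overall_risk_of_bias(example))  # Risk.HIGH
```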

Data synthesis and summary measures

Results were summarised by narrative synthesis as between-study heterogeneity precluded meta-analyses. We summarised the final model presentation and available measures of overall performance, including calibration, discrimination and classification parameters, from each included study. We appraised the strength of association of predictors used in each model against the outcome (asthma) by comparing regression coefficients and odds ratios.
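Comparing regression coefficients and odds ratios across studies relies on the relationship OR = exp(β). When a study reports only an odds ratio and its 95% CI, the standard error of β can be approximated from the width of the interval on the log scale. The sketch below shows both directions; the back-calculation uses the estimate reported by Choi et al. (OR 1.24, 95% CI 0.75–2.05) and assumes a Wald-type interval that is symmetric on the log scale, so results from rounded published values are approximate.

```python
import math

Z = 1.96  # normal quantile for a two-sided 95% interval


def coefficient_to_odds_ratio(beta: float, se: float, z: float = Z):
    """Odds ratio with Wald 95% CI from a logistic regression coefficient."""
    return math.exp(beta), math.exp(beta - z * se), math.exp(beta + z * se)


def odds_ratio_to_coefficient(odds_ratio: float, lower: float, upper: float, z: float = Z):
    """Approximate coefficient and standard error from an OR and its 95% CI,
    assuming the interval is symmetric on the log scale."""
    beta = math.log(odds_ratio)
    se = (math.log(upper) - math.log(lower)) / (2 * z)
    return beta, se


# Estimate reported by Choi et al. for 'fluctuation of exacerbation and improvement'.
beta, se = odds_ratio_to_coefficient(1.24, 0.75, 2.05)
print(f"beta {beta:.3f}, SE {se:.3f}")
```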

Evaluating confidence in cumulative evidence

We planned to report the overall quality of evidence using GRADE. However, in a change from our protocol, we decided to omit the use of GRADE, as without an adaptation for prediction modelling studies, we did not find it to be a suitable tool.27 Assessment of publication bias was not completed due to heterogeneity between studies.

Data availability

Any data generated or analysed are included in this article and the Supplementary files. Additional data may be available from the corresponding author on reasonable request.

References

Aaron, S. D. et al. Reevaluation of diagnosis in adults with physician-diagnosed asthma. JAMA 317, 269–279 (2017).

Looijmans-Van den Akker, I., van Luijn, K. & Verheij, T. Overdiagnosis of asthma in children in primary care: a retrospective analysis. Br. J. Gen. Pract. 66, e152–e157 (2016).

José, B. P. D. S. et al. Diagnostic accuracy of respiratory diseases in primary health units. Rev. Assoc. Méd. Bras. 60, 599–612 (2014).

Pavord, I. D. et al. After asthma: redefining airways diseases. Lancet 391, 350–400 (2018).

National Institute for Health and Care Excellence. Asthma: Diagnosis, Monitoring and Chronic Asthma Management. NICE Guideline NG80 (2017). https://www.nice.org.uk/guidance/ng80. Accessed Dec 2018.

Healthcare Improvement Scotland. BTS/SIGN British Guideline for the Management of Asthma. SIGN 153 (2016). https://www.sign.ac.uk/assets/sign153.pdf. Accessed Dec 2018.

Global Initiative for Asthma. Global Strategy for Asthma Management and Prevention (2018). http://www.ginasthma.org. Accessed Dec 2018.

White, J., Paton, J. Y., Niven, R. & Pinnock, H. Guidelines for the diagnosis and management of asthma: a look at the key differences between BTS/SIGN and NICE. Thorax 1–5 (2018).

Keeley, D. & Baxter, N. Conflicting asthma guidelines cause confusion in primary care. BMJ 360, k29 (2018).

Toll, D. B., Janssen, K. J., Vergouwe, Y. & Moons, K. G. Validation, updating and impact of clinical prediction rules: a review. J. Clin. Epidemiol. 61, 1085–1094 (2008).

Steyerberg, E. Clinical Prediction Models: A Practical Approach to Development, Validation, and Updating (Springer Science & Business Media, Singapore, 2009).

Plüddemann, A. et al. Clinical prediction rules in practice: review of clinical guidelines and survey of GPs. Br. J. Gen. Pract. 64, e233–e242 (2014).

Knottnerus, J. A. Between iatrotropic stimulus and interiatric referral: the domain of primary care research. J. Clin. Epidemiol. 55, 1201–1206 (2002).

Choi, B. W. et al. Easy diagnosis of asthma: computer-assisted, symptom-based diagnosis. J. Korean Med. Sci. 22, 832–838 (2007).

Hall, C. B., Wakefield, D., Rowe, T. M., Carlisle, P. S. & Cloutier, M. M. Diagnosing pediatric asthma: validating the Easy Breathing Survey. J. Pediatr. 139, 267–272 (2001).

Hirsch, S. et al. Using a neural network to screen a population for asthma. Ann. Epidemiol. 11, 369–376 (2001).

Hirsch, S., Frank, T. L., Shapiro, J. L., Hazell, M. L. & Frank, P. I. Development of a questionnaire weighted scoring system to target diagnostic examinations for asthma in adults: a modelling study. BMC Fam. Pract. 5, 30 (2004).

Lim, S. Y., Jo, Y. J. & Chun, E. M. The correlation between the bronchial hyperresponsiveness to methacholine and asthma like symptoms by GINA questionnaires for the diagnosis of asthma. BMC Pulm. Med. 14, 161 (2014).

Metting, E. I. et al. Development of a diagnostic decision tree for obstructive pulmonary diseases based on real-life data. ERJ Open Res. 2, 00077-2015 (2016).

Schneider, A., Wagenpfeil, G., Jörres, R. A. & Wagenpfeil, S. Influence of the practice setting on diagnostic prediction rules using FENO measurement in combination with clinical signs and symptoms of asthma. BMJ Open. 5, e009676 (2015).

Tomita, K. et al. A scoring algorithm for predicting the presence of adult asthma: a prospective derivation study. NPJ Prim. Care Respir. Med. 22, 51 (2013).

Sakamoto, H. et al. Japan Health System Review (World Health Organization, Regional Office for South-East Asia, New Delhi, 2018).

Kwon, S., Lee, T.-J. & Kim, C.-Y. Republic of Korea health system review. Health Syst. Trans. 5, 4 (2015).

Thompson, M. et al. Duration of symptoms of respiratory tract infections in children: systematic review. BMJ 347, f7027 (2013).

Moons, K. G. M. et al. PROBAST: a tool to assess risk of bias and applicability of prediction model studies: explanation and elaboration. Ann. Intern. Med. 170, W1–W33 (2019).

Ensor, J. et al. Systematic review of prognostic models for recurrent venous thromboembolism (VTE) post-treatment of first unprovoked VTE. BMJ Open. 6, e011190 (2016).

Schünemann, H. J. et al. Rating Quality of Evidence and Strength of Recommendations: GRADE: grading quality of evidence and strength of recommendations for diagnostic tests and strategies. BMJ 336, 1106–1110 (2008).

Iorio, A. et al. Use of GRADE for assessment of evidence about prognosis: rating confidence in estimates of event rates in broad categories of patients. BMJ 350, h870 (2015).

Smit, H. A. et al. Childhood asthma prediction models: a systematic review. Lancet Respir. Med. 3, 973–984 (2015).

Damen, J. A. et al. Prediction models for cardiovascular disease risk in the general population: systematic review. BMJ 353, i2416 (2016).

Schneider, A., Ay, M., Faderl, B., Linde, K. & Wagenpfeil, S. Diagnostic accuracy of clinical symptoms in obstructive airway diseases varied within different health care sectors. J. Clin. Epidemiol. 65, 846–854 (2012).

Cane, R. S., Ranganathan, S. C. & McKenzie, S. A. What do parents of wheezy children understand by “wheeze”? Arch. Dis. Child. 82, 327–332 (2000).

Netuveli, G., Hurwitz, B. & Sheikh, A. Lineages of language and the diagnosis of asthma. J. R. Soc. Med. 100, 19–24 (2007).

Reitsma, J. B., Rutjes, A. W., Khan, K. S., Coomarasamy, A. & Bossuyt, P. M. A review of solutions for diagnostic accuracy studies with an imperfect or missing reference standard. J. Clin. Epidemiol. 62, 797–806 (2009).

Harnan, S. E. et al. Measurement of exhaled nitric oxide concentration in asthma: a systematic review and economic evaluation of NIOX MINO, NIOX VERO and NObreath. Health Technol. Assess. 19, 1–330 (2015).

Crapo, R. O. et al. Guidelines for methacholine and exercise challenge testing-1999. This official statement of the American Thoracic Society was adopted by the ATS Board of Directors, July 1999. Am. J. Respir. Crit. Care Med. 161, 309 (2000).

Belgrave, D. et al. Disaggregating asthma: big investigation versus big data. J. Allergy Clin. Immunol. 139, 400–407 (2017).

Collins, G. S., Reitsma, J. B., Altman, D. G. & Moons, K. G. Transparent reporting of a multivariable prediction model for individual prognosis or diagnosis (TRIPOD): the TRIPOD statement. BMC Med. 13, 1 (2015).

Moons, K. G. et al. Critical appraisal and data extraction for systematic reviews of prediction modelling studies: the CHARMS checklist. PLoS Med. 11, e1001744 (2014).

Wallace, E. et al. Framework for the impact analysis and implementation of Clinical Prediction Rules (CPRs). BMC Med. Inform. Decis. Mak. 11, 62 (2011).

Pinnock, H. et al. Standards for reporting implementation studies (StaRI) statement. BMJ 356, i6795 (2017).

Daines, L. et al. Clinical prediction models to support the diagnosis of asthma in primary care: a systematic review protocol. NPJ Prim. Care Respir. Med. 28, 1–4 (2018).

Moher, D., Liberati, A., Tetzlaff, J. & Altman, D. G. Preferred reporting items for systematic reviews and meta-analyses: the PRISMA statement. Ann. Intern. Med. 151, 264–269 (2009).

Ingui, B. J. & Rogers, M. A. Searching for clinical prediction rules in MEDLINE. J. Am. Med. Inform. Assoc. 8, 391–397 (2001).

Geersing, G. J. et al. Search filters for finding prognostic and diagnostic prediction studies in Medline to enhance systematic reviews. PLoS ONE 7, e32844 (2012).

Welsh, E. J. & Carr, R. Pulse oximeters to self monitor oxygen saturation levels as part of a personalised asthma action plan for people with asthma. Cochrane Database Syst. Rev. 9, CD011584 (2015).

Acknowledgements

We are grateful to Marshall Dozier, Academic Librarian at the University of Edinburgh, for her help in developing the search strategy and Asthma UK for their support of the Asthma UK Centre for Applied Research. L.D. is supported by a clinical academic fellowship from the Chief Scientist Office, Edinburgh (CAF/17/01). A.S. is supported by the Farr Institute and Health Data Research UK. Neither funder nor sponsor (University of Edinburgh) contributed to protocol development.

Author information

Contributions

L.D. and H.P. conceived the idea for this work supported by A.S. and S.L. L.D. and A.B. completed title and abstract screening. L.D. and S.M. completed full-text screening, data extraction and risk of bias assessment. All authors contributed to analysis and interpretation of the findings. L.D. wrote the first draft, and all authors contributed to the manuscript.

Ethics declarations

Competing interests

A.S. is Editor-in-Chief of npj Primary Care Respiratory Medicine, but was not involved in the editorial review of, nor the decision to publish, this article. The other authors declare no competing interests.

Additional information

Publisher’s note: Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Daines, L., McLean, S., Buelo, A. et al. Systematic review of clinical prediction models to support the diagnosis of asthma in primary care. npj Prim. Care Respir. Med. 29, 19 (2019). https://doi.org/10.1038/s41533-019-0132-z

DOI: https://doi.org/10.1038/s41533-019-0132-z