Abstract

The identification of generalizable treatment response classes (TRC[s]) in major depressive disorder (MDD) would facilitate comparisons across studies and the development of treatment prediction algorithms. Here, we investigated whether such stable TRCs can be identified and predicted by clinical baseline items. We analyzed data from an observational MDD cohort (Munich Antidepressant Response Signature [MARS] study, N = 1017), treated individually by psychopharmacological and psychotherapeutic means, and a multicenter, partially randomized clinical/pharmacogenomic study (Genome-based Therapeutic Drugs for Depression [GENDEP], N = 809). Symptoms were evaluated up to week 16 (or discharge) in MARS and week 12 in GENDEP. Clustering was performed on 809 MARS patients (discovery sample) using a mixed model with the integrated completed likelihood criterion for the assessment of cluster stability, and validated through a distinct MARS validation sample and GENDEP. A random forest algorithm was used to identify prediction patterns based on 50 clinical baseline items. From the clustering of the MARS discovery sample, seven TRCs emerged ranging from fast and complete response (average 4.9 weeks until discharge, 94% remitted patients) to slow and incomplete response (10% remitted patients at week 16). These proved stable representations of treatment response dynamics in both the MARS and the GENDEP validation sample. TRCs were strongly associated with established response markers, particularly the rate of remitted patients at discharge. TRCs were predictable from clinical items, particularly personality items, life events, episode duration, and specific psychopathological features. Prediction accuracy improved significantly when cluster-derived slopes were modelled instead of individual slopes. 
In conclusion, model-based clustering identified distinct and clinically meaningful treatment response classes in MDD that proved robust with regard to capturing response profiles of differently designed studies. Response classes were predictable from clinical baseline characteristics. Conceptually, model-based clustering is translatable to any outcome measure and could advance the large-scale integration of studies on treatment efficacy or the neurobiology of treatment response.

Introduction

Developing a major depressive disorder (MDD) and recovering from it is a dynamic process. While consensus definitions of MDD include core symptoms such as anhedonia and a depressed mood1, multiple additional symptoms may co-occur during an episode, each with individual patterns and variability throughout the episode2,3. During the development of an MDD, patients may go through sub-clinical phases with areas of preserved functioning in daily life, yet already show impaired psychosocial stress tolerance4,5. Strong inter-individual differences in the sensitivity towards psychosocial stress, a major risk factor for MDD6, may underlie such symptom plurality. Similarly, the regression of symptoms under treatment shows strong inter-individual differences. However, it is hypothesized that stable subgroups7,8,9 and predictive clinical patterns8,9,10,11,12,13 exist.

The latter is important for the successful clinical management of MDD. Treatment should ideally lead to full remission, as the persistence of residual symptoms increases the likelihood of a relapse14. Accordingly, delays in treatment intensification or in switching medication further increase the risk of treatment resistance and chronification15. Early treatment response (e.g., within 2 weeks) is particularly predictive of the longer course16, an established finding that also applies to outpatients and patients receiving a first-time antidepressant treatment17. Similarly, distinct psychopathological profiles reflect differences in the sensitivity of functional domains to stress and may be predictive of response patterns. For example, a patient suffering from severe anhedonia as a core symptom may respond particularly well to a treatment that addresses the dopaminergic system18.

Due to the heterogeneous symptomatology of depression, treatment response classes are typically based on compound scores to which relative change criteria or absolute thresholds are then applied (e.g., depression severity below a certain threshold over a defined time period). Different multivariate statistical techniques have been employed to identify predictive patterns for such conventional treatment response classes10,12,13. Chekroud et al.10 used an elastic net to identify 25 out of 164 patient-reportable variables of the Sequenced Treatment Alternatives to Relieve Depression (STAR*D) study that predicted response to citalopram. These variables were used to train a machine learning model, which could be validated with significant, yet low accuracy (59.7%) in an external sample. Nie et al.12, using data from the STAR*D study, trained five different machine learning algorithms on the full (700) or differently reduced (30 and 22, respectively) sets of clinical features to predict treatment resistance and non-resistance in STAR*D (at week 12) and an independent study (at week 6). Predictions were carried by early response markers and reached moderate accuracy. Wardenaar et al.13 reported that information on comorbidities significantly improved the prediction of depression persistence and severity. Yet, while the response classes predicted in these studies are mostly rooted in the long-known importance of early response and full remission, they are not data-based and may thus not represent all patterns contained in the data. Here, clustering analysis may be useful to dissect the data space into subspaces based on features that are shared within a subgroup and distinct between subgroups19.
Clustering analysis has so far mainly been applied to cross-sectional markers to identify subgroups based on clinical symptom profiles20,21,22,23, cognitive markers24, or functional imaging markers25, assuming that clusters could indirectly reflect distinct pathophysiological components. Here, we attempt to cluster the treatment response space based on (total) symptom severity trajectories, i.e., the patients’ clinical development over a defined observation period. Longitudinal latent class analysis has reported five26 or nine such prototypical trajectories27 based on 12 weeks of observation. More specifically, the first study26 demonstrated rather limited prediction from ~13 clinical baseline items and polygenic scores formed from five literature-based, treatment-associated genetic variants. The second study27 reported weak associations of response trajectories with the type of medication, yet investigated no clinical predictors. Another study revealed seven trajectories based on 1 year of observation28, yet no prediction models were tested. One limitation of these studies, however, is their narrow generalizability, as data from single-center studies were used.

In order to understand whether treatment response classes (TRC[s]) are specific to the patient selection and treatment approach of a particular study site or whether they represent a generalizable dynamical fingerprint, we included two types of cohort studies in our work: First, the Munich Antidepressant Response Signature (MARS) study, a prospective, open, observational trial performed at the Max Planck Institute of Psychiatry (MPIP), Munich, and collaborating hospitals29. Second, the Genome-based Therapeutic Drugs for Depression (GENDEP) study, a partially randomized, multicenter clinical and pharmacogenomic study30. In both studies, the Hamilton Depression Rating Scale (HAM-D), which achieves good test-retest and interrater reliabilities31, was used to assess current symptom levels, covering most domains that define MDD such as depressed mood, suicidality, anhedonia, lack of drive, circadian symptom changes, and autonomous nervous system disturbances.

The aims of this study were (i) to establish TRCs in a data-driven fashion, based on serial depression ratings as acquired during studies with naturalistic or partially randomized treatment, and (ii) to assess the generalizability and clinical validity of the resulting TRCs. For the first aim, we applied a mixed model-based, non-linear longitudinal clustering technique to detect TRCs (also referred to as clusters) in MDD in our discovery sample, a subsample of the MARS cohort. The core feature of this clustering technique is that it assigns individuals to a cluster (here: a TRC) while borrowing information from all other individuals, thereby improving cluster stability, which is often critical for generalizability and clinical applications. For the second aim, we assessed cluster generalizability empirically in a second subsample of MARS (MARS validation sample) and in the GENDEP sample, and employed random forest analyses to explore whether clinical characteristics at baseline can predict the TRCs in the MARS discovery and validation samples.

Methods and materials

General study samples characterization

Both the MARS and the GENDEP study protocols were approved by the respective local ethics committees. All participants gave their written informed consent before participation. MARS patients were admitted to the hospital of the MPIP, Munich, Germany, or collaborating hospitals in southern Bavaria and Switzerland for the treatment of different depressive disorders. Started in 2000, the study aimed at generating a large database of longitudinal observations with weekly ratings along with sociodemographic, psychopathological, and biological data from in-patients with all types of depressive disorders including MDD, bipolar depression, and schizoaffective disorder29. Diagnoses according to ICD-1032 were obtained from trained psychiatrists using patient interviews and clinical documentation29. Of 1286 available patients, only patients with either a single episode of MDD (ICD-10 F32, N = 373) or a recurrent (unipolar) depressive episode (ICD-10 F33, N = 698) were eligible. Patients with bipolar depression (N = 175), chronic depression (ICD-10 F34, N = 3), or patients with a baseline HAM-D score <14 (N = 37) were excluded. Of the remaining 1071 datasets suitable for this study, 834 patients (recruited 2002–2011) formed the discovery sample and 236 patients (recruited 2012–2016) the MARS validation sample. The split point reflected an organizational cut-off related to genotyping activity unrelated to this study. The age range was 18–87 years (see Table 1 for demographic and clinical details). Patients were treated psychopharmacologically according to the attending doctor’s choice and received therapeutic drug monitoring to optimize plasma medication levels. Depression symptoms were evaluated weekly using the 21-item version of the HAM-D until week 6 and, after that, bi-weekly until discharge or, if not discharged, until week 16 as the latest assessment. During the first six weeks, 7.1% of the HAM-D scores were accidentally missing due to organizational reasons.
Accidentally missing HAM-D scores of the first 6 weeks and bi-weekly skipped HAM-D scores between week 6 and 16 were linearly interpolated from the previous and subsequent scores to obtain complete time series. Eighty-eight percent of patients of the discovery and 99% of the MARS validation samples were discharged before week 16 and thus provided HAM-D time series with fewer than 17 data points.
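
The interpolation step described above can be sketched as follows. This is a minimal illustration, not the authors' code: the function name, the use of `None` for a missing rating, and the example series are assumptions made here. Gaps at the start or end of a series are left untouched, because no previous or subsequent score is available to interpolate from.

```python
from typing import List, Optional


def interpolate_hamd(scores: List[Optional[float]]) -> List[Optional[float]]:
    """Linearly fill interior gaps (None) between observed HAM-D scores."""
    filled = list(scores)
    observed = [i for i, s in enumerate(scores) if s is not None]
    # Walk over pairs of neighbouring observed time points.
    for left, right in zip(observed, observed[1:]):
        gap = right - left
        for i in range(left + 1, right):
            weight = (i - left) / gap
            filled[i] = scores[left] + weight * (scores[right] - scores[left])
    return filled


# Hypothetical series: weekly ratings with one missing week, then
# bi-weekly ratings (skipped weeks marked as None).
series = [24, 22, None, 18, 17, 15, 14, None, 12, None, 9]
completed = interpolate_hamd(series)
```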

The GENDEP study represents a partially randomized, multicenter clinical and pharmacogenomic study on depression33 into which 826 subjects were enrolled between July 2004 and December 2007. The main inclusion criterion was the diagnosis of a major depressive episode of at least moderate severity as defined by DSM-V1 and ICD-10 criteria32 and as established by the Schedules for Clinical Assessment in Neuropsychiatry (SCAN, version 2.1)34. Exclusion criteria were a first-degree relative with bipolar affective disorder or schizophrenia, a history of a hypomanic or manic episode, mood incongruent psychotic symptoms, primary substance misuse, primary organic disease, current treatment with an antipsychotic or a mood stabilizer, and pregnancy or lactation. Patients eligible for both antidepressants were randomly allocated to receive either nortriptyline (50–150 mg/day) or escitalopram (10–30 mg/day) for 12 weeks with clinically informed dose titration. Patients with a history of adverse effects, non-response or contraindications to one of these drugs were non-randomly allocated to the other drug. Patients who could not tolerate the initially allocated medication or who did not experience sufficient improvement with adequate dosage within 8 weeks were offered the other antidepressant. Depression symptoms were evaluated weekly until week 12 by psychiatrists or psychologists using the 17-item version of the HAM-D score35. The age range of all subjects was between 18 and 72 years; all patients were of European ethnicity. A total of 15 subjects who had missing data on all three suicidality items at baseline were excluded, as were patients with a baseline HAM-D score <14, leaving 809 patients for analysis35. Demographic data are given in Supplementary Table S1. Different biological aspects of treatment response36,37 and psychopathological predictor schemes have been reported from this study27.

Clustering algorithm

A mixed model approach was used to describe the course of the individual HAM-D score time series, after (natural-) logarithm (ln) transformation (ln of [HAM-D scores +0.5]), considering information not only from the individual trajectory, but combining trajectories of several patients to identify TRCs. For a first organization of HAM-D responses into TRCs, we applied the FlexMix38,39 clustering algorithm in R (version 3.3) on the HAM-D trajectories of the MARS discovery sample. FlexMix provides an infrastructure for the flexible fitting of finite mixture models using the expectation-maximization algorithm to cluster individual trajectories. The algorithm iterates between computing the expectation of the log-likelihood and maximizing it to find the parameters of the TRCs. To achieve a stable cluster solution, we ran the clustering model with 200 repetitions and determined the optimal number of TRCs based on the Integrated Completed Likelihood (ICL) criterion generated by the model.
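
To make the model-selection step more concrete, the sketch below shows how the ln transformation and an ICL value could be computed once a mixture model has been fitted. It is not the FlexMix implementation. It assumes a common formulation in which ICL equals −2 times the classification log-likelihood plus the number of free parameters times ln(n), so that lower values indicate a better solution, and it takes n to be the number of patients. All function names and the input layout (`joint_logs[i][k]` as the log of prior times density for patient i under class k) are hypothetical.

```python
import math
from typing import List, Tuple


def ln_transform(hamd: float) -> float:
    """ln(HAM-D + 0.5), the transformation described in the text."""
    return math.log(hamd + 0.5)


def logsumexp(values: List[float]) -> float:
    """Numerically stable ln(sum(exp(v)))."""
    m = max(values)
    return m + math.log(sum(math.exp(v - m) for v in values))


def posteriors(joint_log: List[float]) -> List[float]:
    """E-step for one patient: P(class k | trajectory)."""
    total = logsumexp(joint_log)
    return [math.exp(v - total) for v in joint_log]


def bic_and_icl(joint_logs: List[List[float]], n_params: int) -> Tuple[float, float]:
    """Return (BIC, ICL) for a fitted mixture; lower is better for both."""
    n = len(joint_logs)
    # Mixture log-likelihood: every class contributes to every patient.
    log_lik = sum(logsumexp(row) for row in joint_logs)
    # Classification log-likelihood: each patient counts under the MAP class only.
    class_log_lik = sum(max(row) for row in joint_logs)
    penalty = n_params * math.log(n)
    return -2.0 * log_lik + penalty, -2.0 * class_log_lik + penalty
```

Because the classification log-likelihood can never exceed the mixture log-likelihood, ICL is at least as large as BIC for the same fit; the difference grows when patients are assigned to classes with low certainty, which is why ICL tends to favour well-separated solutions.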

To validate the stability and generalizability of the clustering solution, the coefficients of the model of the discovery sample were projected onto a second, later acquired subsample of the same cohort, referred to as MARS validation sample (N = 236). Here, the hypothesis was that the patients are classifiable into the defined TRCs with approximately equal proportions and similar cluster-wise median HAM-D courses as had been observed for the discovery sample. In addition, we projected the same clustering model onto 12-week HAM-D courses of the GENDEP sample, hypothesizing similar median HAM-D courses per class, yet not necessarily similar cluster proportions due to differences in the patient population and the study design. For both projection experiments, the resulting proportions of classes were compared with the original distribution of the discovery sample using a χ² test. In order to assess the suitability of the clustering solution for the validation samples, posterior likelihood values, classification log-likelihoods and, finally, ICL values were calculated on the basis of the clustering model of the discovery sample.
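
The comparison of class proportions can be illustrated with a short sketch. The text does not state whether the χ² test was run as a test of homogeneity on a 2 × 7 table or as a goodness-of-fit test against the discovery proportions; the code below assumes the former, and the function names are hypothetical. Both variants have 6 degrees of freedom for seven classes. Under that assumption, the statistics reported in the Results (6.157 and 177.13) correspond to p-values of approximately 0.41 and 1.4 × 10⁻³⁵, in line with the reported values.

```python
import math
from typing import Sequence


def chi_square_homogeneity(counts_a: Sequence[int], counts_b: Sequence[int]) -> float:
    """Pearson chi-square statistic for a 2 x K table of class counts."""
    total_a, total_b = sum(counts_a), sum(counts_b)
    grand = total_a + total_b
    stat = 0.0
    for a, b in zip(counts_a, counts_b):
        column_total = a + b
        for observed, row_total in ((a, total_a), (b, total_b)):
            expected = row_total * column_total / grand
            stat += (observed - expected) ** 2 / expected
    return stat


def chi_square_sf_even_df(stat: float, df: int) -> float:
    """Upper-tail p-value of the chi-square distribution.

    Uses the closed form exp(-x/2) * sum_{k < df/2} (x/2)^k / k!,
    which is valid for even degrees of freedom only.
    """
    if df <= 0 or df % 2:
        raise ValueError("closed form requires a positive, even df")
    half = stat / 2.0
    term, total = 1.0, 1.0
    for k in range(1, df // 2):
        term *= half / k
        total += term
    return math.exp(-half) * total


# Seven classes give df = 6 for a 2 x 7 table.
p_mars = chi_square_sf_even_df(6.157, 6)
p_gendep = chi_square_sf_even_df(177.13, 6)
```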

To assess the applicability of the original clustering coefficients to samples with a shorter observation interval, we systematically lowered the number of applied coefficients down to 1 and, for each observation interval, compared the resulting classification with the classification based on all coefficients (i.e., the full observation interval). The agreement between the two solutions was quantified by the Pearson correlation between the model-based slope values of the respective TRCs.
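
The reduced-interval experiment can be sketched as follows. This is a simplified illustration under strong assumptions: each class is represented by a straight line on the ln scale with a Gaussian residual, whereas the fitted model is non-linear, and the class parameters in `CLASSES` are invented for the example and are not the fitted MARS coefficients. A patient is assigned to the class with the highest posterior given only the first `n_obs` ratings, and agreement with the full-interval assignment is measured by the Pearson correlation of the class slopes.

```python
import math
from typing import List, Sequence, Tuple

# Hypothetical class parameters on the ln(HAM-D + 0.5) scale:
# (intercept, slope per week, residual SD, prior probability).
CLASSES: List[Tuple[float, float, float, float]] = [
    (3.2, -0.20, 0.35, 0.3),
    (3.2, -0.08, 0.35, 0.4),
    (3.2, -0.01, 0.35, 0.3),
]


def assign_class(ln_scores: Sequence[float], n_obs: int) -> int:
    """Index of the MAP class using only the first n_obs weekly ratings."""
    best, best_score = 0, -math.inf
    for k, (intercept, slope, sd, prior) in enumerate(CLASSES):
        score = math.log(prior)
        for week, y in enumerate(ln_scores[:n_obs]):
            resid = y - (intercept + slope * week)
            score += -0.5 * (resid / sd) ** 2 - math.log(sd * math.sqrt(2 * math.pi))
        if score > best_score:
            best, best_score = k, score
    return best


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation; NaN if either input has zero variance."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return float("nan")
    return sxy / math.sqrt(sxx * syy)


def agreement(trajectories: Sequence[Sequence[float]], n_obs: int) -> float:
    """Correlate class slopes from a reduced window with the full window."""
    reduced = [CLASSES[assign_class(t, n_obs)][1] for t in trajectories]
    full = [CLASSES[assign_class(t, len(t))][1] for t in trajectories]
    return pearson(reduced, full)
```

With these invented parameters all classes share one intercept, so a single baseline rating cannot separate them and the correlation is undefined for `n_obs = 1`; it becomes informative only once the trajectories start to diverge.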

Multivariate prediction analyses

We then conducted a multivariate analysis using a random forest algorithm as implemented in the R package Ranger40 to detect associations between clinical variables and the previously obtained TRCs in the MARS sample.

Clinical predictors

All 72 clinical variables are explained in Table 1. Their selection was based on two rationales: first, availability in both MARS subsamples and, second, a preference for variables that are based on broadly available measurement instruments. The main model (model 0) comprised 50 clinical variables strictly from the baseline assessment, covering the domains of sociodemographic data, clinical diagnosis, history of the MDD, the current episode, psychiatric family history, basic laboratory data, life events, the current psychopathology (Symptom Checklist [SCL-90R])41, and personality questionnaires (Eysenck Personality Questionnaire [EPQ]42, Tridimensional Personality Questionnaire [TPQ]43). As random forest models require complete datasets, missing data were filled by the respective median of the total sample (for details see Supplementary Table S2). Extended models were: Model 1, which is model 0 expanded by 21 baseline HAM-D single items to investigate the effect of unfolding the baseline psychopathology; model 2, which is model 0 expanded by the partial response at week 2 to investigate the influence of early longitudinal observations; model 3, the combination of both expansions (Supplementary Fig. S1).
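
The median imputation mentioned above is simple enough to show in a few lines. The sketch assumes one numeric predictor stored as a list with `None` for missing entries; the function name is hypothetical, and how categorical items were handled is not specified in the text.

```python
from statistics import median
from typing import List, Optional


def impute_median(column: List[Optional[float]]) -> List[float]:
    """Replace missing entries with the median of the observed values."""
    observed = [v for v in column if v is not None]
    fill = median(observed)
    return [fill if v is None else v for v in column]
```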

Random forest-based prediction models

The basic algorithm used in the Ranger package is a fast implementation of random forests for high dimensional data. In a random forest, each node is split using the best among a subset of predictors randomly chosen at that node44. Two parameters were used to control this process: the number of prediction trees (bagging) and the number of features to search across to find the best feature (mtry). Mtry is the square root of D, which is the number of independent predictors used for classification. Predictions were obtained by aggregating the prediction trees (i.e., the majority votes for classification and the average for regression models). We calculated adjusted coefficients of multiple correlation R² (to quantify the explained variance and predictive quality of the entire model) and corresponding p-values. To characterize feature importance, a permutation-based method that exploits the distribution of measured importance for each variable in a non-informative setting was applied45 (10,000 permutations); predictors with p < 0.05 are reported in more detail. Further, differences in R² between competing models were compared after Fisher’s Z-transformation of the respective r values.
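
Two of the quantities in this paragraph can be written out explicitly. The sketch below computes mtry as the square root of the number of predictors (rounding down is an assumption made here) and compares two correlation coefficients after Fisher's Z-transformation. The comparison uses the textbook formula for two independent samples; whether the authors used this variant or one for dependent correlations is not stated, so the function should be read as an illustration only. Function names are hypothetical.

```python
import math


def default_mtry(n_predictors: int) -> int:
    """Square root of the number of predictors, rounded down."""
    return math.isqrt(n_predictors)


def fisher_z_compare(r1: float, n1: int, r2: float, n2: int) -> float:
    """Two-sided p-value for H0: rho1 == rho2 (independent samples assumed)."""
    z1, z2 = math.atanh(r1), math.atanh(r2)  # Fisher's Z-transformation
    se = math.sqrt(1.0 / (n1 - 3) + 1.0 / (n2 - 3))
    z = (z1 - z2) / se
    # Two-sided standard normal tail probability.
    return math.erfc(abs(z) / math.sqrt(2.0))


mtry_model0 = default_mtry(50)  # 50 baseline items in model 0
```

For model 0 with 50 predictors this gives an mtry of 7. To compare two models by their explained variance, the r values passed to `fisher_z_compare` would be the square roots of the respective R² values.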

Prediction models were estimated on the pooled discovery and validation MARS sample. For each set of predictors, two ways of modeling the HAM-D time series were considered: first, the patient’s individual treatment response slope, a simple linear regression on ln-transformed HAM-D values, and, second, the slope derived from the clustering model. The rationale for this comparison was to determine the quality of the clustering method to generate meaningful and generalizable outcome classes. Further, class specific classification accuracy values (i.e., [true positives + true negatives]/[true positives + false positives + true negatives + false negatives]), were calculated on the basis of respective confusion matrices in which the class of interest was defined as true class, and the remaining six other classes as false class.
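
The class-specific accuracy defined above can be computed directly from true and predicted class labels. The following sketch treats one class as the positive class and pools all other classes as negative; the function name and the label encoding are illustrative assumptions.

```python
from typing import Sequence


def one_vs_rest_accuracy(true: Sequence[int], predicted: Sequence[int], cls: int) -> float:
    """(TP + TN) / (TP + FP + TN + FN) with `cls` as the class of interest."""
    tp = fp = tn = fn = 0
    for t, p in zip(true, predicted):
        if t == cls and p == cls:
            tp += 1
        elif t != cls and p == cls:
            fp += 1
        elif t == cls and p != cls:
            fn += 1
        else:
            tn += 1
    return (tp + tn) / (tp + fp + tn + fn)
```

Note that with seven classes the pooled negative class is large, so true negatives contribute heavily to this measure; class-specific accuracies should therefore be read alongside the explained variance of the models.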

Results

Clustering of HAM-D time courses

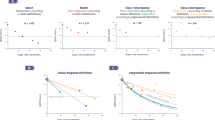

When applied to the HAM-D time courses of the discovery sample, the FlexMix clustering algorithm did not converge for any number of clusters k < 4 or k > 10. We therefore assessed cluster stability in more detail for 4 ≤ k ≤ 10, using 200 repetitions of the algorithm for each k. The lowest value of the ICL criterion, representing an optimal model fit, was found for seven clusters (Supplementary Fig. S2A). Figure 1 shows the resulting TRCs (C1 to C7), sorted by their model-derived slope. C1 showed the fastest symptom improvement, whereas C2 and C3 were characterized by improvements at slower rates. Cluster C4 reflected a more volatile symptom development, while C5, C6, and C7 were characterized by low improvement rates, with C7 showing practically no improvement over at least 16 weeks. Mean baseline HAM-D scores differed slightly between clusters (ANOVA, p = 0.009); mean average HAM-D scores of the episode differed strongly (ANOVA, p = 4.022 × 10⁻¹¹⁶). Cluster-derived slopes correlated weakly with baseline HAM-D (r = 0.09, p = 0.002) and strongly with average HAM-D scores of the episode (r = 0.57, p = 8.270 × 10⁻⁷⁶) (Supplementary Table S3).

X-axis: observation time in weeks; Y-axis: natural logarithm-transformed HAM-D values (purple: raw values, black: cluster-specific median, pink: model-based linear fit). Slope and intercept values of all clusters are given on the right. Clusters are sorted from C1 to C7 according to the cluster-specific slope. Absolute and relative cluster sizes in all samples are given within the subplots. Green borders represent the limits in which 95% of HAM-D values of the discovery sample were contained. These were transferred to columns 2 and 3 to allow for comparison with the validation samples. S slope, I intercept, ln natural logarithm-transformed

To examine whether the TRCs represent stable and generalizable entities, we assigned the patients of the MARS and GENDEP validation samples to clusters, using the coefficients of the model estimated in the discovery sample. Figure 1 compares the individual trajectories across the three samples and shows the respective cluster-specific median time courses along with boundaries that include 95% of values of the discovery sample. Supplementary Fig. S2B shows ICL values for both validation samples, separately and combined. All samples showed an ICL minimum for seven clusters except for the MARS validation sample. The latter showed a flat ICL profile with a relative minimum at five clusters, most likely due to the relatively small sample size of about 30% compared with the MARS discovery and the GENDEP validation sample. For the MARS validation sample we observed that median HAM-D courses were highly similar to the discovery sample and cluster proportions were not different (χ² = 6.157, p = 0.40). The GENDEP validation sample exhibited very similar median HAM-D courses compared with the discovery sample, except for C4, which had lower median values compared with MARS, caused by several patients with high volatility between week 4 and ~10 and HAM-D values below the 95% threshold. Compared with the MARS discovery sample, GENDEP clusters had different proportions (χ² = 177.13, p = 1.38 × 10⁻³⁵), showing fewer fast responders (e.g., in C1, average 4.9 weeks to discharge) and more slow responders (e.g., in C7, average 20.8 weeks to discharge).

Next, we analyzed to what degree a lower number of sequential observations would suffice to predict the TRCs instead of using the full observation interval. Here, we detected an almost linear increase of the correlation coefficient between the reduced and full solutions from week 0 to week 4. Correlations were already high at week 8 for the MARS validation and the combined MARS sample (0.96–0.98) (Fig. 2). For GENDEP, as a fully independent sample, the slope was generally lower, reaching 0.77 at week 8 and remaining linear until its maximum.

Correlation of prediction results achieved from reduced observation intervals, ranging from one observation (baseline HAM-D) to the full set of either 17 HAM-D values (baseline through week 16, for MARS-derived samples) or 13 HAM-D values (baseline through week 12, for the GENDEP sample). Pearson correlations were calculated between the clusters predicted from the reduced and from the full observation interval, using the model-based HAM-D slope of the respective cluster. Note that a positive linear correlation of ≈0.50 was reached at week 2 and a correlation of ≈0.96 (for the MARS samples) and ≈0.77 (for GENDEP) was reached at week 8.

Strong correlations between the TRCs and established response markers (weeks until discharge, response [50% relative symptom decrease at discharge] and remission [HAM-D < 10 at discharge]) were confirmed (Supplementary Table S4). These differences were significant between ~80% of neighboring clusters, particularly for remission as a conservative criterion (Supplementary Table S5), highlighting an ecological importance of the cluster differences. Clusters also differed regarding the psychopharmacological treatment administered throughout the episode for three of nine medication classes (benzodiazepines, tricyclic antidepressants, and antipsychotics) (Supplementary Table S4).
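
The two conventional outcome criteria named above can be stated as small functions. The thresholds follow the definitions in the text; treating a decrease of exactly 50% as a response is an assumption, and the function names are hypothetical.

```python
def is_responder(baseline: float, discharge: float) -> bool:
    """Response: at least 50% relative HAM-D decrease at discharge."""
    return (baseline - discharge) / baseline >= 0.5


def is_remitted(discharge: float) -> bool:
    """Remission: HAM-D score below 10 at discharge."""
    return discharge < 10
```

A patient can meet one criterion without the other: a decrease from 30 to 14 counts as a response but not as remission, which is one reason remission is described as the more conservative criterion.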

Predicting TRCs from clinical characteristics

We assessed whether the attribution of patients to the TRCs can be predicted from clinical characteristics. While explorative, the analysis served mainly as a general cluster validation step by probing whether the TRCs associate with clinically plausible and previously reported prediction patterns. To this end, we analyzed four models with a focus on model 0 that comprised 50 clinical baseline items. Model 1 comprised additional baseline HAM-D single items, model 2 contained the early partial response at week 2, and model 3 combined models 1 and 2. All four models predicted treatment response in the combined MARS sample for both alternatives of modelling the slope (individual and cluster-derived) (both p < 2.17 × 10⁻²¹, Table 2). Overall, two performance levels (A and B) were observed for models using the cluster-derived slope: (A) Models 0 and 1 both explained 13% of the variance, which means that no gain was achieved by inclusion of the baseline HAM-D single items. (B) Models 2 and 3 explained 20% and 21% of the variance, respectively, with the improvement over (A) induced by the early partial response item; as observed in the first comparison (A), no added effect of the baseline HAM-D single items was seen for model 3. Predictions were also significant for all four models when analyzing the two MARS subsamples (p < 1.30 × 10⁻¹⁷ and p < 8.71 × 10⁻⁵ for the discovery and validation sample, respectively). It is worth mentioning that for the MARS validation sample the prediction analysis was entirely independent from the clustering procedure. Across all models, using the cluster-derived slope explained significantly more of the variance than using the individual slopes (Table 2). Classification accuracies as calculated from cluster-specific confusion matrices ranged between 75.0% and 95.2% (Supplementary Table S6 for details).

Table 3 lists 10 (out of 50) predictors of model 0 that gained significance based on a multivariate comparison of the respective single item against all other competing items45. We also analyzed univariate associations of these items with the TRCs (likelihood ratio test on a generalized linear model). Concordantly, both types of comparison revealed the strongest effects for the personality items neuroticism, extraversion, and harm avoidance. Furthermore, we investigated the cluster-specific averages of each clinical item, comparing them to the 95% confidence interval (CI) of the entire sample (Table 3): Clusters with fast improvement (C1 and C2) showed below-average values of all predictors except for the personality trait of extraversion. By contrast, the treatment resistance cluster C7 showed above-average values of all items except for the personality items extraversion and psychoticism. More generally, except for extraversion, there was a tendency that lower clinical scores (i.e., a shorter duration of the current episode, fewer SCL-90R symptoms, fewer stress-weighted life events, and lower scores for the personality items neuroticism and harm avoidance) were found in clusters with good treatment response, and higher scores in clusters C6 or C7. Deviations from this pattern, mostly in the intermediate clusters C3–C5 (see, for example, the stress-weighted life events), may point towards non-linear relationships or complex interactions. No demographic variables were selected by the random forest algorithm. Still, to not overlook demographic variables that could have driven the clustering, we compared these between the clusters, particularly of the MARS discovery sample, finding no relevant differences (Supplementary Table S7).

Supplementary Table S8 summarizes significant predictors of the three extended models. In brief, model 1, compared to model 0, was characterized by prioritizing three baseline HAM-D single items; model 2 identified, as expected, early partial response as a strong predictor, along with minor other shifts; model 3 produced a combined pattern with baseline HAM-D single items, early partial response, and current psychotic symptoms as additional predictors over model 0.

Discussion

We employed model-based non-linear clustering38,39 on symptom courses of 834 in-patients treated for MDD and identified seven TRCs. These classes were already distinct at the visual level and ranged from fast, unambiguous response to severe treatment resistance. The average HAM-D decrease differed strongly between classes, and classes were strongly associated with established response markers, highlighting that they represent clinically meaningful entities. Baseline severity was only weakly correlated with the response slope over a small HAM-D range, contradicting the intuitive expectation that a high initial disease severity is closely coupled to a steep symptom decline. Classification of 236 patients of the MARS validation sample and 809 patients of the GENDEP validation sample demonstrated that the patients’ response dynamics can be captured by these clusters, yet study-specific differences in the response profiles are also reflected.

Construct validity of the clustering solution

Similar cluster sizes and shape characteristics emerged when the discovery sample coefficients were applied to the validation samples (Fig. 1). The consistency observed in this validation is superior to previous latent variable analyses not using machine learning, which did not produce stable, symptom-based subtypes of depression3. Still, a major difference that limits the comparability is that the mentioned analyses (factor analyses, principal component analyses, latent class analyses) built their grouping on cross-sectional symptom spectra and not on trajectories of symptom changes.

Here, we applied a machine learning strategy to identify MDD subtypes based on longitudinal data collected over up to 16 weeks. Our results indicate that significant latent subtypes for MDD indeed exist in the MARS cohort. One advantage of our approach may have been the identification of the best model through the ICL criterion, which appears more robust to the violation of some of the mixture model assumptions than the commonly used Bayesian Information Criterion. Therefore, the use of the ICL may have led to a better choice for the number of clusters and, accordingly, to a more sensible data partitioning46,47.

Within each model, the use of slopes derived from the linear mixed model characterizing each TRC led to higher R² coefficients than the use of individual slopes, particularly in the validation sample (Table 2). This observation strengthens the validity of the classes and highlights that the individual information of the HAM-D time courses was indeed assessed by the clustering algorithm. Moreover, this emphasizes that the average slope of the class is a good approximation of the response behavior, helping to denoise individual observations.

Clustering independent patient groups and simulating reduced observation intervals

To facilitate the translation of our clustering scheme to other cohorts and to understand the degree of generalizability of our clustering solution, we analyzed two aspects:

First, we projected the clustering coefficients onto an independent MARS subsample and found that these patients were assigned to classes with group plots and median HAM-D courses similar in shape to those of the discovery sample. The classes formed from the MARS validation sample were also proportioned similarly to those in the discovery sample, confirming that a stable solution had been obtained within the MARS cohort. The additional projection onto the GENDEP sample was also informative: here, patients were captured equally well by the seven TRCs, except for a small proportion of patients that exceeded the lower boundary of one (discovery) cluster due to volatile courses between week 4 and ~10. More relevant, however, significantly different cluster proportions emerged compared with MARS. We speculate that the limited options to intensify treatment in the GENDEP study with its defined treatments, or generally different patient characteristics, could underlie the proportional shift towards clusters that represent a slower treatment response. The combination of these two observations led us to conclude that the seven TRCs indeed seem to describe generalizable response patterns. However, different cluster stability criteria may lead to different solutions, as pointed out, for example, by a longitudinal latent class analysis that used the Bayesian Information Criterion and detected nine clusters in GENDEP27. Comparability with our solution is hampered, though, by the use of a different depression rating scale (Montgomery-Åsberg Depression Rating Scale).
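The projection of a fixed clustering solution onto new patients can be sketched as follows. This is a simplified illustration and not the mixed-model implementation used in this study: each class is reduced to a polynomial mean HAM-D curve with a common residual standard deviation and a mixing proportion, random effects are ignored, and all coefficients and patient scores are hypothetical.

```python
import math

# Hypothetical class parameters (polynomial coefficients in weeks, intercept first).
CLASSES = [
    {"name": "fast", "coefs": [25.0, -4.0, 0.15], "sd": 3.0, "prior": 0.5},
    {"name": "slow", "coefs": [25.0, -0.8, 0.0], "sd": 3.0, "prior": 0.5},
]


def class_mean(coefs, week):
    """Mean HAM-D score of a class at a given week."""
    return sum(c * week ** power for power, c in enumerate(coefs))


def log_gauss(x, mu, sd):
    """Log density of a normal distribution."""
    return -0.5 * math.log(2.0 * math.pi * sd * sd) - (x - mu) ** 2 / (2.0 * sd * sd)


def posterior(weeks, scores, classes=CLASSES):
    """Posterior class probabilities for one patient under fixed class parameters."""
    log_joint = []
    for cls in classes:
        value = math.log(cls["prior"])
        for week, score in zip(weeks, scores):
            value += log_gauss(score, class_mean(cls["coefs"], week), cls["sd"])
        log_joint.append(value)
    top = max(log_joint)  # subtract the maximum for numerical stability
    weights = [math.exp(v - top) for v in log_joint]
    total = sum(weights)
    return [w / total for w in weights]


def assign_class(weeks, scores, classes=CLASSES):
    """Index of the class with the highest posterior probability."""
    post = posterior(weeks, scores, classes)
    return max(range(len(post)), key=post.__getitem__)


weeks = [0, 2, 4, 6, 8]
patient_a = [26, 18, 11, 6, 3]     # hypothetical fast responder
patient_b = [24, 23, 22, 21, 19]   # hypothetical slow responder
print(CLASSES[assign_class(weeks, patient_a)]["name"])
print(CLASSES[assign_class(weeks, patient_b)]["name"])
```

Because the class parameters stay fixed, the class proportions in a new cohort are free to differ from those of the discovery sample, which is the kind of shift described above for GENDEP.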

Second, in a simulation, we reduced the observation interval to probe whether studies with shorter observation windows could also benefit from the current clustering solution. We found that a correlation of r ≈ 0.96 was reached after eight weeks of HAM-D measurements in the MARS-based samples and r ≈ 0.77 in the independent GENDEP sample (Fig. 2). Of note, the remaining increase in prediction accuracy between weeks 8 and 12 was stronger in GENDEP. This indicates that observation windows of 8 weeks generally seem sufficient but that, as expected, differences in study characteristics play a role and can render more observations advisable. One such difference that could explain the gap at week 8 is the higher flexibility in the MARS study to adjust the treatment to the individual patient. Overall, the generalizability of our clustering solution could be higher for observational than for controlled studies.
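The logic of such a truncation simulation can be sketched in a few lines. The example below is a toy illustration under strong assumptions: class mean curves are linear with a floor, patients are assigned to the nearest class curve by squared distance, and class labels (ordered from fast to slow response) are correlated between truncated and full windows as a stand-in for the quantity reported in Fig. 2. Curves and patients are hypothetical.

```python
import math

WEEKS = list(range(13))     # weeks 0..12
SLOPES = [3.0, 1.5, 0.5]    # hypothetical classes, ordered fast -> slow


def curve(slope, week):
    """Linear decline from a baseline of 25 with a floor of 2 HAM-D points."""
    return max(25.0 - slope * week, 2.0)


def assign(scores, last_week):
    """Nearest class curve using only observations up to last_week."""
    def distance(slope):
        return sum((scores[w] - curve(slope, w)) ** 2 for w in WEEKS if w <= last_week)
    return min(range(len(SLOPES)), key=lambda k: distance(SLOPES[k]))


def pearson(x, y):
    """Pearson correlation of two equally long sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


# Three prototypical patients plus one late responder (slow until week 4, then fast).
late = [25.0 - 0.5 * w if w <= 4 else max(23.0 - 3.0 * (w - 4), 2.0) for w in WEEKS]
patients = [[curve(s, w) for w in WEEKS] for s in SLOPES] + [late]

full = [assign(p, 12) for p in patients]
agreement = {cut: pearson([assign(p, cut) for p in patients], full) for cut in (4, 8, 12)}
print(agreement)
```

In this toy data the late responder is misclassified when only four weeks are observed and is classified as in the full window once eight weeks are available, mirroring the idea that agreement with the full solution grows with the length of the observation window.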

Prediction of TRCs from clinical baseline features

We next investigated the clinical usefulness of the TRCs by testing whether they can be predicted from clinical baseline characteristics in a multivariate model (random forest algorithm)40. Rather than as a separate study, we conceptualized this analysis as an additional clinical validation of the clusters, which primarily represent statistical constructs. Several machine learning techniques have previously been used to predict treatment outcome in MDD48,49,50, yet these models were directed towards classical categories of remission or non-remission10, treatment resistance12, or persistence-severity13. In brief, we found that 50 clinical baseline variables, obtained through interviews, symptom self-reports, and standard physical or laboratory tests, predicted about 13% of the variance of the TRCs. While seemingly low, this is in the range of previous multivariate analyses that focused on the prediction of two outcome categories and reported low to medium accuracy values from receiver operating characteristic analyses10,12,13. In contrast to using pre-defined cutoff thresholds for these categories, clustering as exemplified here for the HAM-D measure can reveal more fine-grained, yet still sparse and data-driven classification systems. Of our clinical predictors, nine carried significantly more weight than the others: (i) the duration of the index episode, (ii–iv) symptom checklist-based scores for psychosocial self-assuredness, psychoticism, and phobic anxiety, (v–viii) the personality traits neuroticism, extraversion, psychoticism, and harm avoidance, and (ix) sum scores for life events (weighted for their straining impact). Although all items support the overall prediction, a review of these nine items strengthened the clinical validity in several ways:

A longer duration of time in depression before initiation of antidepressant treatment has previously been identified as a negative predictor of treatment outcome51. In contrast, no consistent predictive value was found for the total duration of the current episode including periods with and without treatment52,53. As the period without treatment was not quantified in our sample, we speculate that our current episode duration marker incorporated the untreated period and reached significance owing to the large statistical power. Furthermore, baseline symptom profiles made a relevant contribution to the model. Several reports emphasized that strong anxiety symptoms during a depressive episode increase the risk for non-remission54. Of the predictive symptom items (phobic anxiety, psychosocial self-assuredness, and psychoticism), at least two reflect aspects of anxiety, corroborating that high anxiety levels in MDD impede treatment response. Of note, in an analysis of a MARS subsample, patients with high anxiety levels showed structural brain differences in areas involved in the processing of social cues55, critically overlapping with areas that predict treatment response over six weeks56.

While the symptom checklist covers state-related items, personality questionnaires target more stable characteristics of a person. Here, harm avoidance and neuroticism, which both represent similar concepts of developing feelings of anxiety and avoidance behavior in the face of challenges, were confirmed as predictors. Such an association has been reported before57,58, which constitutes an indirect validation of the TRCs. Extraversion has so far mainly been found to protect against developing clinical symptoms in the face of chronic stress59. We report a clearer direct impact on treatment response, a finding possibly facilitated by the random forest approach, which integrates multiple interaction effects. Finally, weighted life events emerged as a negative predictor, as reported previously60,61. Life events, particularly early adverse events, represent episodes of prolonged adaptation, stress, and liability that increase the risk for MDD but also influence recovery chances51,52,53,62,63. Information on early childhood adversity was only available in a subsample (≈35%), disqualifying it for the full model. We speculate that the inclusion of additional details on the type and timing of life events could improve the model.

In an earlier representative MARS sample29, previous treatment resistance (usually defined by at least two unsuccessful trials with different antidepressants in adequate dosages for at least six weeks64) was identified as a strong univariate predictor of non-remission. In this study, treatment resistance was encoded by the Antidepressant Treatment Response Questionnaire (ATRQ), which did not reach a significant importance p-value (yet showed a significant univariate association [data not shown]). Results based on the ATRQ may differ because this measure tends to underreport failed trials65. Similarly, the BMI, previously reported to be associated with remission rates29 and treatment response66, was not associated with the TRCs in our study. One explanation is the use of a binary cutoff (25 kg/m²) in the positive report66, which may point to a non-linear relationship. Of note, the number of previous depressive episodes, a lifetime disease burden marker, did not emerge as a predictor, confirming other negative reports64. Similarly, age at onset (AAO), which is often inversely correlated with the number of episodes, was not predictive. Reports on this marker are mixed, with some finding no correlation67,68 and others reporting an influence on remission speed69 or treatment resistance70. Hidden interactions of AAO with subgroups (as reported for comorbid alcohol dependency)71 or non-linear relationships may explain this variability. Baseline cortisol as a simple HPA axis marker was also not predictive; stimulation tests, particularly when obtained longitudinally, are most likely more sensitive72. TRCs also differed by the type of psychopharmacological treatment (Supplementary Table S2), yet, due to the observational study design, this likely reflects either disease acuity (anxiolytic medication), treatment escalation following non-response (e.g., tricyclic antidepressants), or episode severity (antipsychotic medication for psychotic depression). Similar confounding co-correlations between medication variables and disease severity have been reported for biological markers, e.g., in meta-analyses of brain structure73,74.
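The notion of an importance p-value, as used above for individual predictors, can be illustrated with a minimal permutation sketch in the spirit of the permutation importance approach listed in the references (Altmann et al.): the outcome is shuffled repeatedly, the importance is recomputed each time, and the p-value is the share of null importances at least as large as the observed one. As a loudly labeled simplification, the absolute Pearson correlation stands in here for a random forest importance measure, and all data are hypothetical.

```python
import math
import random


def abs_pearson(x, y):
    """Stand-in importance measure (assumption): absolute Pearson correlation."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return 0.0
    return abs(sxy / math.sqrt(sxx * syy))


def permutation_p_value(feature, outcome, importance=abs_pearson, n_perm=200, seed=0):
    """Share of outcome permutations whose importance reaches the observed one."""
    rng = random.Random(seed)
    observed = importance(feature, outcome)
    shuffled = list(outcome)
    exceed = 0
    for _ in range(n_perm):
        rng.shuffle(shuffled)
        if importance(feature, shuffled) >= observed:
            exceed += 1
    # Add-one correction keeps the estimate strictly above zero.
    return (exceed + 1) / (n_perm + 1)


# Hypothetical data: one informative item and one uninformative item.
informative_item = list(range(30))
outcome = [2.0 * v + (v % 3) for v in informative_item]
noise_item = [(7 * i) % 11 for i in range(30)]

p_informative = permutation_p_value(informative_item, outcome)
p_noise = permutation_p_value(noise_item, outcome)
print(p_informative, p_noise)
```

A predictor can thus show a univariate association and still fail to reach a significant importance p-value in a multivariate model if its contribution does not clearly exceed what is obtained under permuted outcomes.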

We explored two different strategies for improving our base model 0 (Table 1), by adding either single baseline HAM-D items or information on the partial early response after 2 weeks. Interestingly, the inclusion of single baseline HAM-D items did not improve the model (Table 2), possibly because the current symptomatology was already reflected in the symptom checklist items. This does not imply that primary clustering of single item trajectories would not result in additional clusters. While this represents an important follow-up question and would add clinical detail, such a conceptual modification would increase the number of observations per case and could lead to model instability. Finally, including the partial early response increased the model fit markedly, confirming similar reports from both observational and controlled studies12,16,48,49,50,62,63. Notably, personality items were among the strongest predictors in all models (Table 3).

Limitations

Our study has several limitations. First, due to a necessary tradeoff between higher statistical power through a large sample size and the use of powerful, specific single predictors, clinical variables like neurocognitive results, complex endocrine tests, or neuroimaging markers were not included, despite reports of their potential usefulness72,75. Second, while psychopharmacological treatments are well documented in MARS, no formalized assessment of previous non-pharmacological treatments, including psychotherapy, was available, preventing the inclusion of these factors. Third, the MARS discovery and validation samples differed significantly in six clinical baseline items, which may explain minor differences in the prediction results. However, these six items showed no overlap with the most informative predictors of model 0 or with predictors emerging from the other models.

Conclusions

By applying model-based non-linear clustering to clinical ratings of a large cohort of MDD patients, we detected seven distinct treatment response classes that proved stable in two validation samples. In a multivariate prediction analysis, these classes could be predicted from 50 clinical baseline variables, with personality items, life events, duration of the episode, and psychopathological baseline characteristics carrying particular weight. Overall, the construct and clinical validity of these treatment response classes in MDD encourages an exploration of their neurobiological underpinnings; more generally, the classes effectively describe response patterns across multiple clinical cohorts.

References

American Psychiatric Association. Diagnostic and statistical manual of mental disorders: DSM-5. 5th ed. American Psychiatric Association, Washington, D.C, 2013.

Rush, A. J. The varied clinical presentations of major depressive disorder. J. Clin. Psychiatry 68(Suppl 8), 4–10 (2007).

van Loo, H.M., de Jonge, P., Romeijn, J-W., Kessler, R.C., Schoevers, R.A. Data-driven subtypes of major depressive disorder: a systematic review. BMC Med. https://doi.org/10.1186/1741-7015-10-156 (2012).

Leyro, T. M., Zvolensky, M. J. & Bernstein, A. Distress tolerance and psychopathological symptoms and disorders: a review of the empirical literature among adults. Psychol. Bull. 136, 576–600 (2010).

Nelson, B., McGorry, P. D., Wichers, M., Wigman, J. T. W. & Hartmann, J. A. Moving from static to dynamic models of the onset of mental disorder: a review. JAMA Psychiatry 74, 528 (2017).

McEwen, B.S. Neurobiological and systemic effects of chronic stress. Chronic Stress (Thousand Oaks) 1, https://www.ncbi.nlm.nih.gov/pubmed/28856337 (2017).

Wardenaar, K. J., Monden, R., Conradi, H. J. & de Jonge, P. Symptom-specific course trajectories and their determinants in primary care patients with Major Depressive Disorder: evidence for two etiologically distinct prototypes. J. Affect Disord. 179, 38–46 (2015).

Bühler, J., Seemüller, F. & Läge, D. The predictive power of subgroups: an empirical approach to identify depressive symptom patterns that predict response to treatment. J. Affect Disord. 163, 81–87 (2014).

Fava, M. et al. Clinical correlates and symptom patterns of anxious depression among patients with major depressive disorder in STAR*D. Psychol. Med. 34, 1299–1308 (2004).

Chekroud, A. M. et al. Cross-trial prediction of treatment outcome in depression: a machine learning approach. Lancet Psychiatry 3, 243–250 (2016).

Gili, M. et al. Clinical patterns and treatment outcome in patients with melancholic, atypical and non-melancholic depressions. PLoS ONE 7, e48200 (2012).

Nie, Z., Vairavan, S., Narayan, V. A., Ye, J. & Li, Q. S. Predictive modeling of treatment resistant depression using data from STAR*D and an independent clinical study. PLoS ONE 13, e0197268 (2018).

Wardenaar, K. J. et al. The effects of co-morbidity in defining major depression subtypes associated with long-term course and severity. Psychol. Med. 44, 3289–3302 (2014).

Verhoeven, F. E. A., Wardenaar, K. J., Ruhé, H. G. E., Conradi, H. J. & de Jonge, P. Seeing the signs: using the course of residual depressive symptomatology to predict patterns of relapse and recurrence of major depressive disorder. Depress Anxiety 35, 148–159 (2018).

Habert, J. et al. Functional recovery in major depressive disorder: focus on early optimized treatment. Prim. Care Companion CNS Disord. https://doi.org/10.4088/PCC.15r01926 (2016).

Szegedi, A. et al. Early improvement under mirtazapine and paroxetine predicts later stable response and remission with high sensitivity in patients with major depression. J. Clin. Psychiatry 64, 413–420 (2003).

Nierenberg, A. A. et al. Residual symptoms after remission of major depressive disorder with citalopram and risk of relapse: a STAR*D report. Psychol. Med. 40, 41 (2010).

Peciña, M. et al. Striatal dopamine D2/3 receptor-mediated neurotransmission in major depression: implications for anhedonia, anxiety and treatment response. Eur. Neuropsychopharmacol. 27, 977–986 (2017).

Xu, D. & Tian, Y. A comprehensive survey of clustering algorithms. Ann. Data Sci. 2, 165–193 (2015).

Rhoades, H. The Hamilton Depression Scale: factor scoring and profile classification. Psychopharmacol. Bull. 19, 91–96 (1983).

Maier, W. Dimensions of the Hamilton-Depression-Scale (HAMD), a factor analytical study. Eur. Arch. Psychiatry Neurol. Sci. 234, 417–422 (1985).

Monden, R., Wardenaar, K. J., Stegeman, A., Conradi, H. J. & de Jonge, P. Simultaneous decomposition of depression heterogeneity on the person-, symptom- and time-level: the use of three-mode principal component analysis. PLoS ONE 10, e0132765 (2015).

Hybels, C. F., Blazer, D. G., Pieper, C. F., Landerman, L. R. & Steffens, D. C. Profiles of depressive symptoms in older adults diagnosed with major depression: latent cluster analysis. Am. J. Geriatr. Psychiatry 17, 387–396 (2009).

Cotrena, C., Damiani Branco, L., Ponsoni, A., Milman Shansis, F. & Paz Fonseca, R. Neuropsychological clustering in bipolar and major depressive disorder. J. Int Neuropsychol. Soc. 23, 584–593 (2017).

Zeng, L.-L., Shen, H., Liu, L. & Hu, D. Unsupervised classification of major depression using functional connectivity MRI. Hum. Brain Mapp. 35, 1630–1641 (2014).

Kelley, M. E. et al. Response rate profiles for major depressive disorder: characterizing early response and longitudinal nonresponse. Depress Anxiety 35, 992–1000 (2018).

Uher, R. et al. Early and delayed onset of response to antidepressants in individual trajectories of change during treatment of major depression: a secondary analysis of data from the genome-based therapeutic drugs for depression (GENDEP) study. J. Clin. Psychiatry 72, 1478–1484 (2011).

Hartmann, A., von Wietersheim, J., Weiss, H. & Zeeck, A. Patterns of symptom change in major depression: classification and clustering of long term courses. Psychiatry Res. 267, 480–489 (2018).

Hennings, J. M. et al. Clinical characteristics and treatment outcome in a representative sample of depressed inpatients - findings from the Munich Antidepressant Response Signature (MARS) project. J. Psychiatr. Res. 43, 215–229 (2009).

Uher, R. et al. Differential efficacy of escitalopram and nortriptyline on dimensional measures of depression. Br. J. Psychiatry 194, 252–259 (2009).

Zimmerman, M., Chelminski, I. & Posternak, M. A review of studies of the Hamilton depression rating scale in healthy controls: implications for the definition of remission in treatment studies of depression. J. Nerv. Ment. Dis. 192, 595–601 (2004).

Dilling H, Weltgesundheitsorganisation (eds). Internationale Klassifikation psychischer Störungen: ICD-10 Kapitel V (F); klinisch-diagnostische Leitlinien. 6., vollst. überarb. Aufl. unter Berücksichtigung der Änderungen entsprechend ICD-10-GM 2004/2008. Huber, Bern, 2008.

Uher, R. et al. Genome-wide pharmacogenetics of antidepressant response in the GENDEP project. Am. J. Psychiatry 167, 555–564 (2010).

Wing JK, Sartorius N, Üstün TB. Diagnosis and clinical measurement in psychiatry: a reference for SCAN. Cambridge University Press, Cambridge, 2006.

Uher, R. et al. Measuring depression: comparison and integration of three scales in the GENDEP study. Psychol. Med. https://doi.org/10.1017/S0033291707001730 (2008).

Uher, R. et al. An inflammatory biomarker as a differential predictor of outcome of depression treatment with escitalopram and nortriptyline. Am. J. Psychiatry 171, 1278–1286 (2014).

Powell, T. R. et al. DNA methylation in interleukin-11 predicts clinical response to antidepressants in GENDEP. Transl. Psychiatry 3, e300–e300 (2013).

Leisch F. FlexMix: A General Framework for Finite Mixture Models and Latent Class Regression in R. J. Stat. Softw. https://doi.org/10.18637/jss.v011.i08 (2004).

Grün B, Leisch F. FlexMix Version 2: Finite mixtures with concomitant variables and varying and constant parameters. J. Stat. Softw. https://doi.org/10.18637/jss.v028.i04 (2008).

Wright MN, Ziegler A. ranger: A Fast Implementation of Random Forests for High Dimensional Data in C++ and R. J. Stat. Softw. https://doi.org/10.18637/jss.v077.i01 (2017).

Derogatis, L. R. SCL-90-R, administration, scoring & procedures manual-I for the R(evised) version. Johns Hopkins University, School of Medicine, Baltimore, MD, 1977.

Eysenck, S. B. G., Eysenck, H. J. & Barrett, P. A revised version of the psychoticism scale. Pers. Individ Differ. 6, 21–29 (1985).

Cloninger, C. R. A systematic method for clinical description and classification of personality variants: a proposal. Arch. Gen. Psychiatry 44, 573 (1987).

Breiman, L. Random forests. Mach. Learn. 45, 5–32 (2001).

Altmann, A., Toloşi, L., Sander, O. & Lengauer, T. Permutation importance: a corrected feature importance measure. Bioinformatics 26, 1340–1347 (2010).

Biernacki, C., Celeux, G. & Govaert, G. Assessing a mixture model for clustering with the integrated completed likelihood. IEEE Trans. Pattern Anal. Mach. Intell. 22, 719–725 (2000).

Baudry, J.-P. Estimation and model selection for model-based clustering with the conditional classification likelihood. Electron J. Stat. 9, 1041–1077 (2015).

Kudlow, P. A., Cha, D. S. & McIntyre, R. S. Predicting treatment response in major depressive disorder: the impact of early symptomatic improvement. Can. J. Psychiatry 57, 782–788 (2012).

McIntyre, R. S. et al. Early symptom improvement as a predictor of response to extended release quetiapine in major depressive disorder. J. Clin. Psychopharmacol. 35, 706–710 (2015).

Henkel, V. et al. Does early improvement triggered by antidepressants predict response/remission?—Analysis of data from a naturalistic study on a large sample of inpatients with major depression. J. Affect Disord. 115, 439–449 (2009).

Hung, C.-I., Liu, C.-Y. & Yang, C.-H. Untreated duration predicted the severity of depression at the two-year follow-up point. PLoS ONE 12, e0185119 (2017).

Gilmer, W. S. et al. Does the duration of index episode affect the treatment outcome of major depressive disorder? A STAR*D report. J. Clin. Psychiatry 69, 1246–1256 (2008).

Sung, S. C. et al. The impact of chronic depression on acute and long-term outcomes in a randomized trial comparing selective serotonin reuptake inhibitor monotherapy versus each of 2 different antidepressant medication combinations. J. Clin. Psychiatry 73, 967–976 (2012).

Otte, C. Incomplete remission in depression: role of psychiatric and somatic comorbidity. Dialogues Clin. Neurosci. 10, 453–460 (2008).

Inkster, B. et al. Structural brain changes in patients with recurrent major depressive disorder presenting with anxiety symptoms. J. Neuroimaging 21, 375–382 (2011).

Sämann, P. G. et al. Prediction of antidepressant treatment response from gray matter volume across diagnostic categories. Eur. Neuropsychopharmacol. 23, 1503–1515 (2013).

Quilty, L. C., Meusel, L.-A. C. & Bagby, R. M. Neuroticism as a mediator of treatment response to SSRIs in major depressive disorder. J. Affect Disord. 111, 67–73 (2008).

Katon, W., Unützer, J. & Russo, J. Major depression: the importance of clinical characteristics and treatment response to prognosis. Depress Anxiety 27, 19–26 (2010).

Uliaszek, A. A. et al. The role of neuroticism and extraversion in the stress–anxiety and stress–depression relationships. Anxiety Stress Coping 23, 363–381 (2010).

Bulmash, E., Harkness, K. L., Stewart, J. G. & Bagby, R. M. Personality, stressful life events, and treatment response in major depression. J. Consult Clin. Psychol. 77, 1067–1077 (2009).

Mazure, C. M. Adverse life events and cognitive-personality characteristics in the prediction of major depression and antidepressant response. Am. J. Psychiatry 157, 896–903 (2000).

van Calker et al. Time course of response to antidepressants: predictive value of early improvement and effect of additional psychotherapy. J. Affect Disord. 114, 243–253 (2009).

Joel, I. et al. Dynamic prediction of treatment response in late-life depression. Am. J. Geriatr. Psychiatry 22, 167–176 (2014).

Souery, D. et al. Treatment resistant depression: methodological overview and operational criteria. Eur. Neuropsychopharmacol. 9, 83–91 (1999).

Chandler, G. M., Iosifescu, D. V., Pollack, M. H., Targum, S. D. & Fava, M. Validation of the Massachusetts General Hospital Antidepressant Treatment History Questionnaire (ATRQ). CNS Neurosci. Ther. 16, 322–325 (2010).

Kloiber, S. et al. Overweight and obesity affect treatment response in major depression. Biol. Psychiatry 62, 321–326 (2007).

Reynolds, C. F. et al. Effects of age at onset of first lifetime episode of recurrent major depression on treatment response and illness course in elderly patients. Am. J. Psychiatry 155, 795–799 (1998).

Zisook, S. et al. Effect of age at onset on the course of major depressive disorder. Am. J. Psychiatry 164, 1539–1546 (2007).

Park, S.-C. et al. Does age at onset of first major depressive episode indicate the subtype of major depressive disorder?: The clinical research center for depression study. Yonsei Med J. 55, 1712 (2014).

Kloiber, S. et al. Clinical risk factors for weight gain during psychopharmacologic treatment of depression: results from 2 large German observational studies. J. Clin. Psychiatry 76, e802–e808 (2015).

Muhonen, L. H., Lönnqvist, J., Lahti, J. & Alho, H. Age at onset of first depressive episode as a predictor for escitalopram treatment of major depression comorbid with alcohol dependence. Psychiatry Res. 167, 115–122 (2009).

Ising, M. et al. Combined dexamethasone/corticotropin releasing hormone test predicts treatment response in major depression–a potential biomarker? Biol. Psychiatry 62, 47–54 (2007).

Schmaal, L. et al. Cortical abnormalities in adults and adolescents with major depression based on brain scans from 20 cohorts worldwide in the ENIGMA Major Depressive Disorder Working Group. Mol. Psychiatry 22, 900–909 (2017).

Rentería, M. E. et al. Subcortical brain structure and suicidal behaviour in major depressive disorder: a meta-analysis from the ENIGMA-MDD working group. Transl. Psychiatry 7, e1116 (2017).

Zobel, A. W. et al. Cortisol response in the combined dexamethasone/CRH test as predictor of relapse in patients with remitted depression. a prospective study. J. Psychiatr. Res. 35, 83–94 (2001).

Acknowledgements

We are grateful to all patients for their participation and thank all clinical raters and study assistants of the MARS study and the GENDEP study for their support.

Funding

The MARS project was supported by the German Federal Ministry of Education and Research (BMBF) through the NGFN and NGFN-Plus programs (FKZ 01GS0481), the Molecular Diagnostics program (FKZ 01ES0811), the Research Network for Mental Diseases program (FKZ 01EE1401D), by the Bavarian Ministry of Commerce, and by the Excellence Foundation for the Advancement of the Max Planck Society. GENDEP was funded by the European Commission Framework 6 grant (EC Contract Ref.: LSHB-CT-2003-503428). H. Lundbeck provided nortriptyline and escitalopram for the GENDEP study. R.P. reports funding by BMBF (Title: IntegraMent: Data integration and systems modeling in mental disorders), the DFG Munich Cluster for Systems Neurology (SyNergy) (Title: Core 6) and the Max Planck Institute of Psychiatry, Munich. T.F.M.A. reports funding by the BMBF through the Integrated Network IntegraMent, under the auspices of the e:Med Programme (01ZX1614J). C.M.L. is partly funded by the National Institute for Health Research (NIHR) Biomedical Research Centre at South London and Maudsley NHS Foundation Trust and King’s College London. C.M.L. has received support from RGA UK Services Ltd. B.M.M. reports funding from the German Research Foundation (DFG MU 1315/8-2, EXC 1010), the EU (EU ITN MLPM) and the German Federal Ministry of Education and Research (BMBF, 01ZX1614J), and is a consultant to HMNC Brain Health, Munich. M.I. reports funding by the German Federal Ministry of Education and Research (BMBF, FKZ 01EE1401D) and the German Research Foundation (DFG, GZ IS196/2-1), and is consultant to HMNC Brain Health, Munich. P.G.S. reports funding by the German Research Foundation (DFG, SA 1358/2-1) and the Max Planck Institute of Psychiatry, Munich.

Ethics declarations

Conflict of interest

The authors declare that they have no conflicts of interest.

Additional information

Publisher’s note: Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Paul, R., Andlauer, T.F.M., Czamara, D. et al. Treatment response classes in major depressive disorder identified by model-based clustering and validated by clinical prediction models. Transl Psychiatry 9, 187 (2019). https://doi.org/10.1038/s41398-019-0524-4
