Abstract

Objective

To clarify the screening potential of the Amsler grid and preferential hyperacuity perimetry (PHP) in detecting or ruling out wet age-related macular degeneration (AMD).

Evidence acquisition

Medline, Scopus and Web of Science (by citation of reference) were searched. Checking of reference lists of review articles and of included articles complemented electronic searches. Papers were selected, assessed, and extracted in duplicate.

Evidence synthesis

Systematic review and meta-analysis. Twelve included studies enrolled 903 patients and allowed constructing 27 two-by-two tables. Twelve tables reported on the Amsler grid and its modifications, twelve tables reported on the PHP, one table assessed the MCPT and two tables assessed the M-charts. All but two studies had a case–control design. The pooled sensitivity of studies assessing the Amsler grid was 0.78 (95% confidence intervals; 0.64–0.87), and the pooled specificity was 0.97 (95% confidence intervals; 0.91–0.99). The corresponding positive and negative likelihood ratios were 23.1 (95% confidence intervals; 8.4–64.0) and 0.23 (95% confidence intervals; 0.14–0.39), respectively. The pooled sensitivity of studies assessing the PHP was 0.85 (95% confidence intervals; 0.80–0.89), and specificity was 0.87 (95% confidence intervals; 0.82–0.91). The corresponding positive and negative likelihood ratios were 6.7 (95% confidence intervals; 4.6–9.8) and 0.17 (95% confidence intervals; 0.13–0.23). No pooling was possible for MCPT and M-charts.

Conclusion

Results from small preliminary studies show promising test performance characteristics both for the Amsler grid and PHP to rule out wet AMD in the screening setting. To what extent these findings can be transferred to real clinical practice still needs to be established.

Introduction

With the availability of highly effective anti-VEGF therapies in the treatment of wet age-related macular degeneration (AMD),1, 2 the role of early detection of the disease as well as early detection of changes in macular function during treatment and follow-up has greatly gained in importance.3, 4

To date, the Amsler grid and preferential hyperacuity perimetry (PHP) are two frequently used tests in the diagnostic work-up in clinical practice.5, 6 Besides them, several additional modifications and new tests, such as the shape discrimination hyperacuity test,7, 8 have been proposed, but their diagnostic value has not yet been systematically studied. In particular, their role as a screening tool needs to be established.

Early targeted treatment is a key component of successful AMD management. A recent study by Lim and co-workers showed that delayed intervention leads to insufficient treatment, irreversible macular damage, and a poorer visual outcome.9 In their study, a delay of 14 weeks doubled the likelihood of worsening vision after treatment.

To clarify the diagnostic potential of the Amsler grid, PHP, and other tests, we performed a systematic review and meta-analysis of diagnostic test accuracy studies investigating the concordance of index test results with the presence or absence of wet age-related macular degeneration.

Materials and methods

This review was conducted according to the PRISMA statement recommendations.10

Literature search

Electronic searches were performed without any language restriction on MEDLINE (PubMed interface), Scopus (from inception until August 28th, 2013), and Web of Science (by citation of reference). The full search algorithm is available on request.

Eligibility criteria

The minimum requirement was the availability of original data and the possibility to construct a two-by-two table. We accepted the following reference tests classifying presence or absence of AMD: optical coherence tomography (OCT), color fundus photographs, fluorescein angiography (FA), and scanning laser ophthalmoscopy (SLO).

Study selection, data extraction, and quality assessment

The methodological quality of all eligible papers was assessed based on published recommendations.11 We refrained from rating or ranking findings, based on recommendations by Whiting et al.12 Quality assessment involved scrutinizing the methods of data collection and patient selection, and descriptions of the test and reference standard. Blinding was fulfilled if the person(s) classifying presence or absence of AMD did not know the results of the index examination or alternative reference standard investigations. Two reviewers independently assessed papers and extracted data using a standardized form (the data extraction form is available on request). Discrepancies were resolved by consensus between the two reviewers.

Statistical analysis

For each study, we constructed a two-by-two contingency table consisting of true-positive (TP), false-positive (FP), false-negative (FN), and true-negative (TN) results. For the analysis, we called a result a true positive if a positive Amsler grid or PHP finding agreed with a positive reference standard finding. We calculated sensitivity as TP/(TP+FN) and specificity as TN/(FP+TN). We estimated and plotted summary receiver operating characteristics (ROC) curves using a unified model for meta-analysis of diagnostic test accuracy studies.13 We also indicated the confidence and prediction regions on the ROC figures. The advantage of this approach is that it provides estimates of average sensitivity and specificity across studies, together with a 95 percent confidence region for this summary point and a prediction region within which we expect the sensitivity and specificity of 95 percent of future studies to lie.
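The per-study calculation described above can be sketched in a few lines. This is a minimal illustration, not the analysis code used in the review (which was run in Stata); the function name and the cell counts are hypothetical and chosen only to make the arithmetic easy to follow.

```python
def sensitivity_specificity(tp, fp, fn, tn):
    """Return (sensitivity, specificity) from the four cells of a two-by-two table.

    sensitivity = TP / (TP + FN)
    specificity = TN / (FP + TN)
    """
    if tp + fn == 0 or fp + tn == 0:
        raise ValueError("table needs at least one diseased and one non-diseased subject")
    sensitivity = tp / (tp + fn)
    specificity = tn / (fp + tn)
    return sensitivity, specificity


# Hypothetical counts, not taken from any included study:
# 50 eyes with wet AMD (40 test-positive), 50 without (45 test-negative).
sens, spec = sensitivity_specificity(tp=40, fp=5, fn=10, tn=45)
print(f"sensitivity={sens:.2f}, specificity={spec:.2f}")
# prints: sensitivity=0.80, specificity=0.90
```

Note that this gives only the crude per-table estimates; the pooled values reported in this review come from the hierarchical model cited above, which this sketch does not attempt to reproduce.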

For methodological reasons, the minimum requirement for inclusion in the meta-analysis was at least four studies providing a two-by-two table. Thus, a meta-analysis was not possible for M-charts (two studies) and the macular computerized psychophysical test (MCPT) (one study).

Following recent recommendations, we did not pool positive and negative likelihood ratios because these are not sensible parameters to pool statistically in a meta-analysis.14 Instead, we calculated the likelihood ratios from the estimated pooled sensitivities and specificities.
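As an illustration of this step, the standard definitions LR+ = sensitivity/(1 − specificity) and LR− = (1 − sensitivity)/specificity can be applied to the pooled estimates. The sketch below is an assumption about how such a calculation looks, not the authors' code, and the function name is hypothetical. It uses the rounded pooled values reported in this paper, so its output only approximates the published likelihood ratios, which were presumably derived from unrounded model estimates.

```python
def likelihood_ratios(sensitivity, specificity):
    """Return (LR+, LR-) derived from a sensitivity/specificity pair."""
    if not (0.0 < specificity <= 1.0 and 0.0 <= sensitivity <= 1.0):
        raise ValueError("sensitivity must be in [0, 1] and specificity in (0, 1]")
    if specificity == 1.0:
        raise ValueError("LR+ is undefined when specificity equals 1")
    lr_pos = sensitivity / (1.0 - specificity)
    lr_neg = (1.0 - sensitivity) / specificity
    return lr_pos, lr_neg


# Rounded pooled estimates as reported in this review.
pooled = {
    "Amsler grid": (0.78, 0.97),
    "PHP": (0.85, 0.87),
}

for test_name, (sens, spec) in pooled.items():
    lr_pos, lr_neg = likelihood_ratios(sens, spec)
    print(f"{test_name}: LR+ = {lr_pos:.1f}, LR- = {lr_neg:.2f}")
```

With these rounded inputs the negative likelihood ratios reproduce the reported 0.23 and 0.17, while the positive likelihood ratios come out near 26 and 6.5 rather than the reported 23.1 and 6.7. LR+ is very sensitive to small changes in specificity when specificity is close to 1, which is why rounding matters here.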

All analyses were done using the Stata 11.2 statistical software package (StataCorp 2009, Stata Statistical Software: Release 11, StataCorp LP, College Station, TX, USA).

Results

Study selection

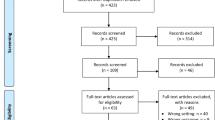

Electronic searches retrieved 1422 records. After excluding duplicates, 1319 records remained and were screened based on title and abstract. Subsequently, 1289 records were excluded because they did not investigate the diagnostic accuracy of tests, contained no primary data, or investigated other conditions. The remaining 30 articles were retrieved and read in full text to be considered for inclusion. Of these, 10 studies fulfilled our inclusion criteria. In addition, two studies were included via screening of reference lists and the science citation index database. Thus, 12 studies were included for quantitative analyses. The study selection process is outlined in Figure 1.

Patients’ characteristics, design features

The 12 studies enrolled 903 patients. Among studies reporting this, 58 percent of participants were women on average. The included studies also involved other diagnoses, ie, geographic atrophy and dry AMD, but only patients with wet AMD were included in the analysis. In nine studies patients were included consecutively. Patients’ characteristics are summarized in Table 1.

Index and reference tests

Seven studies each investigated the diagnostic accuracy of the Amsler grid test (or modifications of it) and of the PHP, while two studies evaluated the M-charts and one study investigated the Macular Computerized Psychophysical Test (MCPT).

Color fundus photography, fluorescein angiography, and OCT were the most commonly used reference tests, while SLO was used in only two studies and the Amsler grid served as the reference in only one study, against the M-chart. Table 2 shows the index and reference tests that were applied in each study.

Test performance

The 12 studies allowed constructing 27 two-by-two tables. Twelve tables reported on the Amsler grid and its modifications, twelve tables reported on the PHP, one table assessed the MCPT, and two tables assessed the M-charts. Across the twelve Amsler grid tables, sensitivity ranged from 0.34 to 1.0 and specificity ranged from 0.85 to 1.0. Across the twelve PHP tables, sensitivity ranged from 0.68 to 1.0 and specificity ranged from 0.71 to 0.97. The reported sensitivity of MCPT was 0.94 and specificity was 0.94. The mean sensitivity of the two studies reporting on the M-chart was 0.81 and specificity was 1. Detailed results are provided in Table 3.

Results from HSROC analysis

The pooled sensitivity of studies assessing the Amsler grid was 0.78 (95% confidence intervals; 0.64–0.87) and the pooled specificity was 0.97 (95% confidence intervals; 0.91–0.99). The corresponding positive and negative likelihood ratios were 23.1 (95% confidence intervals; 8.4–64.0) and 0.23 (95% confidence intervals; 0.14–0.39), respectively.

The pooled sensitivity of studies assessing PHP was 0.85 (95% confidence intervals; 0.80–0.89) and specificity was 0.87 (95% confidence intervals; 0.82–0.91). The corresponding positive and negative likelihood ratios were 6.7 (95% confidence intervals; 4.6–9.8) and 0.17 (95% confidence intervals; 0.13–0.23), respectively (see Figures 2 and 3).

Assuming a one percent prevalence of wet AMD in the screening setting and using Bayes' theorem (pre-test odds × likelihood ratio (LR) = post-test odds), a positive Amsler grid test would increase the probability of AMD presence to 18.9 percent (pre-test odds at 1% prevalence = 1%/(100%−1%) = 0.0101; multiplied by the positive LR (23.1) = post-test odds of 0.2333; probability of wet AMD after a positive test result = 0.2333/(1+0.2333) = 18.9%). That is, in a (mass) screening population with a 1 percent probability of wet AMD, approximately every fifth person with a positive Amsler grid test result would have wet AMD. Correspondingly, a negative test would decrease the probability to 0.23 percent. In the case of PHP, the probability given a positive test would be 6.3 percent. Given a negative test result, the probability would decrease to 0.17 percent.
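The odds-based calculation in this paragraph can be reproduced with a short script. This is a minimal sketch for checking the arithmetic; the function name is hypothetical, and the inputs are the 1 percent prevalence assumed above and the likelihood ratios reported in this review.

```python
def post_test_probability(prevalence, likelihood_ratio):
    """Convert a pre-test probability to a post-test probability via odds.

    pre-test odds  = p / (1 - p)
    post-test odds = pre-test odds * LR
    post-test prob = post-test odds / (1 + post-test odds)
    """
    if not 0.0 < prevalence < 1.0:
        raise ValueError("prevalence must lie strictly between 0 and 1")
    pre_odds = prevalence / (1.0 - prevalence)
    post_odds = pre_odds * likelihood_ratio
    return post_odds / (1.0 + post_odds)


PREVALENCE = 0.01  # assumed screening prevalence of wet AMD, as in the text

# Likelihood ratios as reported in this review: (LR+, LR-).
reported_lrs = {
    "Amsler grid": (23.1, 0.23),
    "PHP": (6.7, 0.17),
}

for test_name, (lr_pos, lr_neg) in reported_lrs.items():
    p_pos = post_test_probability(PREVALENCE, lr_pos)
    p_neg = post_test_probability(PREVALENCE, lr_neg)
    print(f"{test_name}: positive test -> {p_pos * 100:.1f}%, "
          f"negative test -> {p_neg * 100:.2f}%")
# Amsler grid: positive test -> 18.9%, negative test -> 0.23%
# PHP: positive test -> 6.3%, negative test -> 0.17%
```

Changing `PREVALENCE` shows how strongly the yield of a positive test depends on the assumed prevalence in the screened population.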

Discussion

Main findings

A meta-analysis of the two commonly used screening tests for wet AMD, the Amsler grid and the PHP, each assessed in small patient samples, showed both to be promising candidates for ruling out the illness. However, most of the studies were so-called diagnostic case–control studies, ie, test results of patients with diagnosed wet AMD were compared with test results of healthy subjects or another sampled group of patients. Although this design may be appropriate in the early, proof-of-concept phase of evaluation, it must be noted that such studies are prone to exaggerate test performance.11 For MCPT and the M-chart, data were too scarce to perform a meta-analysis.

Results in light of existing literature

To our knowledge, this is the first comprehensive assessment of studies examining the diagnostic value of various screening tests in age-related macular degeneration. We are aware of one systematic review by the US Preventive Services Task Force examining the evidence on tools to screen for impaired visual acuity in elderly adults. That review did not quantify the performance of the various tests in a selected population of patients with age-related macular degeneration but in a broader spectrum, including patients with other ocular conditions leading to impaired vision, such as cataract, strabismus, and amblyopia.15 In 2007, Crossland and Rubin16 provided an unsystematic overview of the diagnostic value of the Amsler grid and PHP. In their comprehensive paper, they found a low sensitivity and specificity for the Amsler grid. For the PHP, they reported a higher sensitivity but a lower specificity than for the Amsler grid. Our findings partly disagree with theirs. Although we found large variability in both sensitivity and specificity, expressed by large confidence regions in the meta-analysis, the average performance of these tests in preliminary studies was clearly higher than that reported by Crossland and Rubin. Whether or not these findings translate into real clinical practice still needs to be investigated. We envision that the ideal screening test has excellent test performance in the relevant clinical setting and is easy to handle, apply, and interpret. It might also be useful to adopt the model of home blood pressure monitoring for AMD screening and therapeutic management. Given that disease progression may occur before visual distortion, the test should identify very early phases of AMD progression.

Strength and limitations

Our study applied up-to-date systematic review methodology and used state-of-the-art statistical methods for quantitative summaries.13 A stratified pooled analysis was not possible because of the limited number of studies overall and per clinical subgroup. We therefore refrained from exploring factors explaining heterogeneity and did not formally test for heterogeneity. A further important limitation of our meta-analysis is that many studies used different, arguably sub-optimal, reference tests. This limits not only the validity of these studies but also the validity of the meta-analysis, because an inappropriate reference test leads to biased estimates of test performance.17 Most of the included studies used a so-called diagnostic case–control design. Again, we were unable to perform a stratified analysis based on this item. Arguably, mixing the effects found in prospective cohorts and case–control studies introduced bias in our results.11 However, given the ‘proof of concept’ purpose of this review, we believe that our decision is acceptable, but conclusions drawn from our analysis must be made very cautiously. We agree with other authors that the ideal test for AMD screening still needs to be found.16 Even for the Amsler grid, which has been around for over 60 years and is broadly used in clinical practice, there is as yet no compelling evidence supporting its usefulness. The PHP, which seems to be a valid alternative, likewise needs to confirm its potential in daily practice. Finally, we excluded various papers describing new tests due to lack of data to construct a two-by-two table. Thus, it might be justified to repeat our analysis in a couple of years when additional data emerge.

Implications for practice

The Amsler grid test has been in use for over 60 years. From the time of Amsler’s publication in 1947 until the late 1990s, however, it played only a minor role as a screening test in patient management. This might be a reason for the relatively weak body of evidence assessing its diagnostic usefulness. Only recently, with the availability of various effective anti-VEGF treatments, has its possible role in patient management become apparent. The development of the PHP, and particularly of the home-testing ForeseeHome device (Notal Vision Ltd, Tel Aviv, Israel) using this technology, also needs to be seen in this context.6 Very recently, the HOME study showed an advantage of PHP home monitoring in patients with choroidal neovascularisations (CNV), because regular home testing discovered a new CNV development at an earlier stage.18 If confirmed in cost-effectiveness analyses, this method could be promoted for clinical use in these patients. A second line of research focuses on shape discrimination hyperacuity testing.8, 19 Very recently, a mobile phone version of this test has become available, and its feasibility and usability are currently being assessed.7, 20 Whether the Amsler grid or the PHP should be used in a (mass) screening context still needs to be examined. From our preliminary analysis, we extrapolate that approximately one out of five patients with a positive Amsler grid test actually does have wet AMD needing treatment. This figure might actually be too low. However, once this figure is validly established, it must be assessed whether one early, otherwise undiscovered case of wet AMD out of five patients referred to an ophthalmologist is a sufficient yield to use the test in a screening context. In the case of the PHP, our study showed a somewhat lower yield. However, again, we still require a valid number before assessing its usefulness in screening.

Implication for further research

As stated above, our results need confirmation in carefully designed clinical studies. Moreover, issues of practicability need to be considered. For example, the ease of application and interpretation of a screening test is another important aspect. It has been argued that Amsler grid testing is difficult to perform correctly and thus often leads to uncertain test results. For example, in 1986, Fine et al21 raised awareness that screening with an Amsler grid is not fully self-explanatory. Only about 10 percent of patients spontaneously complained about distorted vision when using it on their own. This figure rose substantially under proper instruction and supervision. This review of test accuracy studies was unable to address this important aspect of testing. Arguably, test performance will drop in clinical practice if the Amsler grid test is performed without clear instructions and monitoring of patients. To assess this issue, further studies, particularly ones examining the clinical impact of screening on patient-relevant outcomes, are needed.

Conclusion

Results from small preliminary studies show promising test performance characteristics both for the Amsler grid and PHP in the diagnostic work-up of wet AMD. On the basis of test performance, the Amsler grid showed some advantages in ruling in wet AMD and could thus help in monitoring disease, but data were very heterogeneous. The PHP in turn had small advantages over the Amsler grid in ruling out wet AMD and could thus be useful in the screening context. However, to what extent our findings can be transferred to real clinical practice still needs to be established. Moreover, new promising technologies with theoretical advantages over the Amsler grid and the PHP are currently emerging that need careful clinical examination to confirm their usefulness in a screening and monitoring context. If their usefulness is confirmed, further studies need to quantify their impact on patient management.

References

Heier JS, Boyer DS, Ciulla TA, Ferrone PJ, Jumper JM, Gentile RC et al. Ranibizumab combined with verteporfin photodynamic therapy in neovascular age-related macular degeneration: year 1 results of the FOCUS Study. Arch Ophthalmol 2006; 124 (11): 1532–1542.

Rosenfeld PJ, Brown DM, Heier JS, Boyer DS, Kaiser PK, Chung CY et al. Ranibizumab for neovascular age-related macular degeneration. N Engl J Med 2006; 355 (14): 1419–1431.

Martin DF, Maguire MG, Ying GS, Grunwald JE, Fine SL, Jaffe GJ . Ranibizumab and bevacizumab for neovascular age-related macular degeneration. N Engl J Med 2011; 364 (20): 1897–1908.

Ying GS, Huang J, Maguire MG, Jaffe GJ, Grunwald JE, Toth C et al. Baseline predictors for one-year visual outcomes with ranibizumab or bevacizumab for neovascular age-related macular degeneration. Ophthalmology 2013; 120 (1): 122–129.

Amsler M . L'Examen qualitatif de la fonction maculaire. Ophthalmologica 1947; 114: 248–261.

Goldstein M, Loewenstein A, Barak A, Pollack A, Bukelman A, Katz H et al. Results of a multicenter clinical trial to evaluate the preferential hyperacuity perimeter for detection of age-related macular degeneration. Retina 2005; 25 (3): 296–303.

Kaiser PK, Wang YZ, He YG, Weisberger A, Wolf S, Smith CH . Feasibility of a novel remote daily monitoring system for age-related macular degeneration using mobile handheld devices: results of a pilot study. Retina 2013; 33 (9): 1863–1870.

Wang YZ, Wilson E, Locke KG, Edwards AO . Shape discrimination in age-related macular degeneration. Invest Ophthalmol Vis Sci 2002; 43 (6): 2055–2062.

Lim JH, Wickremasinghe SS, Xie J, Chauhan DS, Baird PN, Robman LD et al. Delay to treatment and visual outcomes in patients treated with anti-vascular endothelial growth factor for age-related macular degeneration. Am J Ophthalmol 2012; 153 (4): 678–686 686 e671-672.

Moher D, Liberati A, Tetzlaff J, Altman DG . Preferred reporting items for systematic reviews and meta-analyses: the PRISMA statement. BMJ 2009; 339: b2535.

Lijmer JG, Mol BW, Heisterkamp S, Bonsel GJ, Prins MH, van der Meulen JH et al. Empirical evidence of design-related bias in studies of diagnostic tests. JAMA 1999; 282 (11): 1061–1066.

Whiting PF, Rutjes AW, Westwood ME, Mallett S, Deeks JJ, Reitsma JB et al. QUADAS-2: a revised tool for the quality assessment of diagnostic accuracy studies. Ann Intern Med 2011; 155 (8): 529–536.

Harbord RM, Whiting P, Sterne JA, Egger M, Deeks JJ, Shang A et al. An empirical comparison of methods for meta-analysis of diagnostic accuracy showed hierarchical models are necessary. J Clin Epidemiol 2008; 61 (11): 1095–1103.

Zwinderman AH, Bossuyt PM . We should not pool diagnostic likelihood ratios in systematic reviews. Stat Med 2008; 27 (5): 687–697.

Chou R, Dana T, Bougatsos C . Screening older adults for impaired visual acuity: a review of the evidence for the U.S. Preventive Services Task Force. Ann Intern Med 2009; 151 (1): 44–58 W11-20.

Crossland M, Rubin G . The Amsler chart: absence of evidence is not evidence of absence. Br J Ophthalmol 2007; 91 (3): 391–393.

Bachmann LM, Juni P, Reichenbach S, Ziswiler HR, Kessels AG, Vogelin E . Consequences of different diagnostic "gold standards" in test accuracy research: Carpal Tunnel Syndrome as an example. Int J Epidemiol 2005; 34 (4): 953–955.

Chew EY, Clemons TE, Bressler SB, Elman MJ, Danis RP, Domalpally A et al. Randomized trial of a home monitoring system for early detection of choroidal neovascularization home monitoring of the eye (HOME) study. Ophthalmology 2014; 121 (2): 535–544.

Chhetri AP, Wen F, Wang Y, Zhang K . Shape discrimination test on handheld devices for patient self-test. Proceedings of the 1st ACM International Health Informatics Symposium 2010 ACM New York, NY, USA 2010: 502–506.

Wang YZ, He YG, Mitzel G, Zhang S, Bartlett M . Handheld shape discrimination hyperacuity test on a mobile device for remote monitoring of visual function in maculopathy. Invest Ophthalmol Vis Sci 2013; 54 (8): 5497–5505.

Fine AM, Elman MJ, Ebert JE, Prestia PA, Starr JS, Fine SL . Earliest symptoms caused by neovascular membranes in the macula. Arch Ophthalmol 1986; 104 (4): 513–514.

Nowomiejska K, Oleszczuk A, Brzozowska A, Grzybowski A, Ksiazek K, Maciejewski R et al. M-charts as a tool for quantifying metamorphopsia in age-related macular degeneration treated with the bevacizumab injections. BMC Ophthalmol 2013; 13: 13.

Alster Y, Bressler NM, Bressler SB, Brimacombe JA, Crompton RM, Duh YJ et al. Preferential Hyperacuity Perimeter (PreView PHP) for detecting choroidal neovascularization study. Ophthalmology 2005; 112 (10): 1758–1765.

Isaac DL, Avila MP, Cialdini AP . Comparison of the original Amsler grid with the preferential hyperacuity perimeter for detecting choroidal neovascularization in age-related macular degeneration. Arq Bras Oftalmol 2007; 70 (5): 771–776.

Lai Y, Grattan J, Shi Y, Young G, Muldrew A, Chakravarthy U . Functional and morphologic benefits in early detection of neovascular age-related macular degeneration using the preferential hyperacuity perimeter. Retina 2011; 31 (8): 1620–1626.

Loewenstein A, Malach R, Goldstein M, Leibovitch I, Barak A, Baruch E et al. Replacing the Amsler grid: a new method for monitoring patients with age-related macular degeneration. Ophthalmology 2003; 110 (5): 966–970.

Loewenstein A, Ferencz JR, Lang Y, Yeshurun I, Pollack A, Siegal R et al. Toward earlier detection of choroidal neovascularization secondary to age-related macular degeneration: multicenter evaluation of a preferential hyperacuity perimeter designed as a home device. Retina 2010; 30 (7): 1058–1064.

Mathew R, Sivaprasad S . Environmental Amsler test as a monitoring tool for retreatment with ranibizumab for neovascular age-related macular degeneration. Eye 2012; 26 (3): 389–393.

Robison CD, Jivrajka RV, Bababeygy SR, Fink W, Sadun AA, Sebag J . Distinguishing wet from dry age-related macular degeneration using three-dimensional computer-automated threshold Amsler grid testing. Br J Ophthalmol 2011; 95 (10): 1419–1423.

Arimura E, Matsumoto C, Nomoto H, Hashimoto S, Takada S, Okuyama S et al. Correlations between M-CHARTS and PHP findings and subjective perception of metamorphopsia in patients with macular diseases. Invest Ophthalmol Vis Sci 2011; 52 (1): 128–135.

Klatt C, Sendtner P, Ponomareva L, Hillenkamp J, Bunse A, Gabel VP et al. Diagnostics of metamorphopsia in retinal diseases of different origins. Ophthalmologe 2006; 103 (11): 945–952.

Kampmeier J, Zorn MM, Lang GK, Botros YT, Lang GE . [Comparison of Preferential Hyperacuity Perimeter (PHP) test and Amsler grid test in the diagnosis of different stages of age-related macular degeneration]. Klin Monbl Augenheilkd 2006; 223 (9): 752–756.

Ethics declarations

Competing interests

The authors declare no conflict of interest.

About this article

Cite this article

Faes, L., Bodmer, N., Bachmann, L. et al. Diagnostic accuracy of the Amsler grid and the preferential hyperacuity perimetry in the screening of patients with age-related macular degeneration: systematic review and meta-analysis. Eye 28, 788–796 (2014). https://doi.org/10.1038/eye.2014.104

DOI: https://doi.org/10.1038/eye.2014.104
