Abstract

Predicting the properties of a material from the arrangement of its atoms is a fundamental goal in materials science. While machine learning has emerged in recent years as a new paradigm to provide rapid predictions of materials properties, its practical utility is limited by the scarcity of high-fidelity data. Here, we develop multi-fidelity graph networks as a universal approach to achieve accurate predictions of materials properties with small data sizes. As a proof of concept, we show that the inclusion of low-fidelity Perdew–Burke–Ernzerhof band gaps greatly enhances the resolution of latent structural features in materials graphs, leading to a 22–45% decrease in the mean absolute errors of experimental band gap predictions. We further demonstrate that learned elemental embeddings in materials graph networks provide a natural approach to model disorder in materials, addressing a fundamental gap in the computational prediction of materials properties.

Data availability

Multi-fidelity band gap data and molecular data are available at https://doi.org/10.6084/m9.figshare.13040330 (ref. 48). The data for all figures and extended data figures are available in Source Data.

Code availability

Model fitting and results plotting codes are available at https://github.com/materialsvirtuallab/megnet/tree/master/multi-fidelity. MEGNet is available at https://github.com/materialsvirtuallab/megnet. The specific version of the package can be found at https://doi.org/10.5281/zenodo.4072029 (ref. 50).

References

Chevrier, V. L., Ong, S. P., Armiento, R., Chan, M. K. Y. & Ceder, G. Hybrid density functional calculations of redox potentials and formation energies of transition metal compounds. Phys. Rev. B 82, 075122 (2010).

Heyd, J. & Scuseria, G. E. Efficient hybrid density functional calculations in solids: assessment of the Heyd–Scuseria–Ernzerhof screened Coulomb hybrid functional. J. Chem. Phys. 121, 1187–1192 (2004).

Zhang, Y. et al. Efficient first-principles prediction of solid stability: towards chemical accuracy. npj Comput. Mat. 4, 9 (2018).

Butler, K. T., Davies, D. W., Cartwright, H., Isayev, O. & Walsh, A. Machine learning for molecular and materials science. Nature 559, 547–555 (2018).

Chen, C., Ye, W., Zuo, Y., Zheng, C. & Ong, S. P. Graph networks as a universal machine learning framework for molecules and crystals. Chem. Mat. 31, 3564–3572 (2019).

Xie, T. & Grossman, J. C. Crystal graph convolutional neural networks for an accurate and interpretable prediction of material properties. Phys. Rev. Lett. 120, 145301 (2018).

Behler, J. & Parrinello, M. Generalized neural-network representation of high-dimensional potential-energy surfaces. Phys. Rev. Lett. 98, 146401 (2007).

Bartók, A. P., Payne, M. C., Kondor, R. & Csányi, G. Gaussian approximation potentials: the accuracy of quantum mechanics, without the electrons. Phys. Rev. Lett. 104, 136403 (2010).

Zuo, Y. et al. Performance and cost assessment of machine learning interatomic potentials. J. Phys. Chem. A 124, 731–745 (2020).

Perdew, J. P., Burke, K. & Ernzerhof, M. Generalized gradient approximation made simple. Phys. Rev. Lett. 77, 3865–3868 (1996).

Jain, A. et al. Commentary: The Materials Project: a materials genome approach to accelerating materials innovation. APL Mat. 1, 011002 (2013).

Kirklin, S. et al. The Open Quantum Materials Database (OQMD): assessing the accuracy of DFT formation energies. npj Comput. Mat. 1, 15010 (2015).

Heyd, J., Scuseria, G. E. & Ernzerhof, M. Hybrid functionals based on a screened Coulomb potential. J. Chem. Phys. 118, 8207–8215 (2003).

Hachmann, J. et al. The Harvard Clean Energy Project: large-scale computational screening and design of organic photovoltaics on the World Community Grid. J. Phys. Chem. Lett. 2, 2241–2251 (2011).

Hellwege, K. H. & Green, L. C. Landolt-Börnstein, numerical data and functional relationships in science and technology. Am. J. Phys. 35, 291–292 (1967).

Meng, X. & Karniadakis, G. E. A composite neural network that learns from multi-fidelity data: application to function approximation and inverse PDE problems. J. Comput. Phys. 401, 109020 (2020).

Kennedy, M. C. & O’Hagan, A. Predicting the output from a complex computer code when fast approximations are available. Biometrika 87, 1–13 (2000).

Pilania, G., Gubernatis, J. E. & Lookman, T. Multi-fidelity machine learning models for accurate bandgap predictions of solids. Comput. Mat. Sci. 129, 156–163 (2017).

Batra, R., Pilania, G., Uberuaga, B. P. & Ramprasad, R. Multifidelity information fusion with machine learning: a case study of dopant formation energies in hafnia. ACS Appl. Mat. Interfaces 11, 24906–24918 (2019).

Ramakrishnan, R., Dral, P. O., Rupp, M. & von Lilienfeld, O. A. Big data meets quantum chemistry approximations: the Δ-machine learning approach. J. Chem. Theory Comput. 11, 2087–2096 (2015).

Zaspel, P., Huang, B., Harbrecht, H. & von Lilienfeld, O. A. Boosting quantum machine learning models with a multilevel combination technique: Pople diagrams revisited. J. Chem. Theory Comput. 15, 1546–1559 (2019).

Dahl, G. E., Jaitly, N. & Salakhutdinov, R. Multi-task neural networks for QSAR predictions. Preprint at https://arxiv.org/abs/1406.1231 (2014).

Battaglia, P. W. et al. Relational inductive biases, deep learning, and graph networks. Preprint at https://arxiv.org/abs/1806.01261 (2018).

Schütt, K. T., Sauceda, H. E., Kindermans, P.-J., Tkatchenko, A. & Müller, K.-R. SchNet – a deep learning architecture for molecules and materials. J. Chem. Phys. 148, 241722 (2018).

Gritsenko, O., van Leeuwen, R., van Lenthe, E. & Baerends, E. J. Self-consistent approximation to the Kohn-Sham exchange potential. Phys. Rev. A 51, 1944–1954 (1995).

Kuisma, M., Ojanen, J., Enkovaara, J. & Rantala, T. T. Kohn-Sham potential with discontinuity for band gap materials. Phys. Rev. B 82, 115106 (2010).

Castelli, I. E. et al. New light-harvesting materials using accurate and efficient bandgap calculations. Adv. Energy Mat. 5, 1400915 (2015).

Sun, J., Ruzsinszky, A. & Perdew, J. P. Strongly constrained and appropriately normed semilocal density functional. Phys. Rev. Lett. 115, 036402 (2015).

Borlido, P. et al. Large-scale benchmark of exchange-correlation functionals for the determination of electronic band gaps of solids. J. Chem. Theory Comput. 15, 5069–5079 (2019).

Jie, J. et al. A new MaterialGo database and its comparison with other high-throughput electronic structure databases for their predicted energy band gaps. Sci. China Technol. Sci. 62, 1423–1430 (2019).

Zhuo, Y., Mansouri Tehrani, A. & Brgoch, J. Predicting the band gaps of inorganic solids by machine learning. J. Phys. Chem. Lett. 9, 1668–1673 (2018).

Perdew, J. P. & Levy, M. Physical content of the exact Kohn-Sham orbital energies: band gaps and derivative discontinuities. Phys. Rev. Lett. 51, 1884–1887 (1983).

Davies, D. W., Butler, K. T. & Walsh, A. Data-driven discovery of photoactive quaternary oxides using first-principles machine learning. Chem. Mat. 31, 7221–7230 (2019).

Morales-García, Á., Valero, R. & Illas, F. An empirical, yet practical way to predict the band gap in solids by using density functional band structure calculations. J. Phys. Chem. C 121, 18862–18866 (2017).

van der Maaten, L. & Hinton, G. Visualizing data using t-SNE. J. Mach. Learn. Res. 9, 2579–2605 (2008).

Hellenbrandt, M. The Inorganic Crystal Structure Database (ICSD)–present and future. Crystallogr. Rev. 10, 17–22 (2004).

Chen, H., Chen, K., Drabold, D. A. & Kordesch, M. E. Band gap engineering in amorphous AlxGa1–xN: experiment and ab initio calculations. Appl. Phys. Lett. 77, 1117–1119 (2000).

Santhosh, T. C. M., Bangera, K. V. & Shivakumar, G. K. Band gap engineering of mixed Cd(1–x)Zn(x) Se thin films. J. Alloys Compd. 703, 40–44 (2017).

Rana, N., Chand, S. & Gathania, A. K. Band gap engineering of ZnO by doping with Mg. Phys. Scr. 90, 085502 (2015).

Fasoli, M. et al. Band-gap engineering for removing shallow traps in rare-earth Lu3Al5O12 garnet scintillators using Ga3+ doping. Phys. Rev. B 84, 081102 (2011).

Harun, K., Salleh, N. A., Deghfel, B., Yaakob, M. K. & Mohamad, A. A. DFT+U calculations for electronic, structural, and optical properties of ZnO wurtzite structure: a review. Results Phys. 16, 102829 (2020).

Kamarulzaman, N., Kasim, M. F. & Chayed, N. F. Elucidation of the highest valence band and lowest conduction band shifts using XPS for ZnO and Zn0.99Cu0.01O band gap changes. Results Phys. 6, 217–230 (2016).

Shao, Z. & Haile, S. M. A high-performance cathode for the next generation of solid-oxide fuel cells. Nature 431, 170–173 (2004).

Nordheim, L. The electron theory of metals. Ann. Phys. 9, 607 (1931).

Ong, S. P. et al. Python Materials Genomics (pymatgen): a robust, open-source Python library for materials analysis. Comput. Mat. Sci. 68, 314–319 (2013).

Ong, S. P. et al. The Materials Application Programming Interface (API): a simple, flexible and efficient API for materials data based on REpresentational State Transfer (REST) principles. Comput. Mat. Sci. 97, 209–215 (2015).

Huck, P., Jain, A., Gunter, D., Winston, D. & Persson, K. A community contribution framework for sharing materials data with Materials Project. In 2015 IEEE 11th International Conference on E-Science 535–541 (2015).

Chen, C., Zuo, Y., Ye, W., Li, X. & Ong, S. P. Learning properties of ordered and disordered materials from multi-fidelity data. Figshare https://doi.org/10.6084/m9.figshare.13040330 (2020).

Abadi, M. et al. TensorFlow: a system for large-scale machine learning. In 12th USENIX Symposium on Operating Systems Design and Implementation (OSDI 16) 265–283 (2016).

Chen, C., Ong, S. P., Ward, L. & Himanen, L. materialsvirtuallab/megnet v.1.2.3 https://doi.org/10.5281/zenodo.4072029 (2020).

Acknowledgements

This work was primarily supported by the Materials Project, funded by the US Department of Energy, Office of Science, Office of Basic Energy Sciences, Materials Sciences and Engineering Division under contract no. DE-AC02-05-CH11231: Materials Project program KC23MP. The authors also acknowledge support from the National Science Foundation SI2-SSI Program under award no. 1550423 for the software development portions of the work. C.C. thanks M. Horton for his assistance with the GLLB-SC data set.

Author information

Contributions

C.C. and S.P.O. conceived the idea and designed the work. C.C. implemented the models and performed the analysis. S.P.O. supervised the project. Y.Z., W.Y. and X.L. helped with the data collection and analysis. C.C. and S.P.O. wrote the manuscript. All authors contributed to discussions and revisions.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Peer review information: Nature Computational Science thanks Keith Tobias Butler and the other, anonymous, reviewer(s) for their contribution to the peer review of this work. Fernando Chirigati was the primary editor on this article and managed its editorial process and peer review in collaboration with the rest of the editorial team.

Publisher’s note: Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Extended data

Extended Data Fig. 1 Five-fidelity model test error distributions.

a, The model errors decomposed into metals versus non-metals. b, The test error distributions. The ‘metal-clip’ category means that predicted negative band gaps are clipped at zero.
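
The ‘metal-clip’ treatment can be illustrated with a minimal sketch. The function names and the sample values here are hypothetical and are not taken from the MEGNet code base; the sketch only assumes that a band gap cannot be negative, so a negative prediction is replaced by zero before the mean absolute error (MAE) is computed.

```python
def clip_band_gaps(predictions):
    """Replace negative predicted band gaps (eV) with zero."""
    return [max(0.0, p) for p in predictions]


def mean_absolute_error(targets, predictions):
    """Mean absolute error between two equal-length sequences."""
    if len(targets) != len(predictions):
        raise ValueError("targets and predictions must have the same length")
    return sum(abs(t - p) for t, p in zip(targets, predictions)) / len(targets)


# Hypothetical values for three metals (true gap 0 eV), for illustration only.
true_gaps = [0.0, 0.0, 0.0]
raw_predictions = [-0.2, 0.1, -0.05]

clipped = clip_band_gaps(raw_predictions)
mae_raw = mean_absolute_error(true_gaps, raw_predictions)
mae_clipped = mean_absolute_error(true_gaps, clipped)
```

For a material whose true gap is zero, clipping can only reduce or preserve the absolute error, which is why a separate ‘metal-clip’ category is informative for metals.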

Extended Data Fig. 2 Band gap data distribution and correlation.

Plots of the pairwise relationship between band gaps from different fidelity sources. The band gap distribution in each data set is presented along the top diagonal, and the Pearson correlation coefficient r between each pair of data sets is annotated in each plot.
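
A pairwise Pearson correlation of this kind could be computed along the following lines. This is a sketch under stated assumptions: the data layout (a dictionary keyed by fidelity label and material identifier), the identifiers and the values are all hypothetical, and the sketch assumes that each pair of fidelities is compared only on the materials that both data sets contain.

```python
import math


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)


def pairwise_pearson(gaps_by_fidelity):
    """Pearson r for every pair of fidelities, using only shared materials.

    gaps_by_fidelity maps a fidelity label to {material_id: band gap in eV}.
    """
    labels = sorted(gaps_by_fidelity)
    result = {}
    for i, a in enumerate(labels):
        for b in labels[i + 1:]:
            shared = sorted(set(gaps_by_fidelity[a]) & set(gaps_by_fidelity[b]))
            xs = [gaps_by_fidelity[a][m] for m in shared]
            ys = [gaps_by_fidelity[b][m] for m in shared]
            result[(a, b)] = pearson_r(xs, ys)
    return result


# Hypothetical band gaps (eV); identifiers and values are illustrative only.
example = {
    "PBE": {"m1": 0.5, "m2": 1.0, "m3": 2.0, "m4": 3.0},
    "Exp": {"m1": 1.0, "m2": 2.0, "m3": 4.0, "m5": 5.0},
}
correlations = pairwise_pearson(example)
```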

Extended Data Fig. 3 Predicted experimental band gaps of Ba0.5Sr0.5CoxFe1−xO3−δ using 4-fi models.

Both the Co ratio x and the oxygen non-stoichiometry δ are varied to chart the two-dimensional band gap space.

Extended Data Fig. 4 Multi-fidelity modeling of energies of molecules.

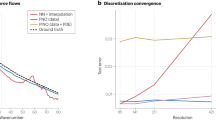

a, Average MAE in G4MP2 energy predictions for the QM9 data set using 1-fi G4MP2 models and 2-fi B3LYP/G4MP2 models trained with different G4MP2 data sizes. b, Average MAE in CCSD(T) energy predictions for the QM7b data set using 1-fi CCSD(T) models, 2-fi HF/CCSD(T) and MP2/CCSD(T) models, and 3-fi HF/MP2/CCSD(T) models. s is the ratio of data sizes. s = 1 and 2 correspond to CCSD(T):MP2:HF ratios of 1:2:4 and 1:4:16, respectively. The error bars indicate one standard deviation.
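
The two ratios quoted for s are consistent with each successively lower fidelity having 2^s times as much data as the one above it. That general pattern is an inference from the two cases given in the caption, not something the caption states, and the function names and the high-fidelity data size of 100 in this minimal sketch are hypothetical.

```python
def fidelity_size_ratios(s, n_fidelities=3):
    """Relative data sizes, highest fidelity first, assuming a 2**(s*k) pattern."""
    return [2 ** (s * k) for k in range(n_fidelities)]


def fidelity_data_sizes(n_high, s, labels=("CCSD(T)", "MP2", "HF")):
    """Absolute data sizes per fidelity for a given high-fidelity data size."""
    ratios = fidelity_size_ratios(s, len(labels))
    return {label: n_high * r for label, r in zip(labels, ratios)}


# Hypothetical example: 100 CCSD(T) data points with s = 2.
sizes = fidelity_data_sizes(100, 2)
```

With s = 1 the helper returns the ratio 1:2:4 and with s = 2 it returns 1:4:16, matching the CCSD(T):MP2:HF ratios given in the caption.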

Supplementary information

Supplementary Information

Supplementary Fig. 1, Tables 1–6 and discussion.

Supplementary Data 1

Data statistics and average MAEs with standard deviations of multi-fidelity graph network models trained on different combinations of fidelities. The data size Nd and the MAD are listed for each fidelity. For the model error section, the leftmost columns indicate the combination of fidelities used to train the model and the other columns are the model MAEs in eV on the corresponding test data fidelity. The errors are reported as the mean and standard deviation of the MAEs over six random data splits.
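
The reporting convention of a mean and standard deviation of MAEs over six random splits can be sketched as follows. Several details are assumptions rather than the authors' procedure: the 20% test fraction, the use of the population standard deviation, the seeds, the sample values and the trivial stand-in model that predicts the training mean are all hypothetical placeholders.

```python
import random
import statistics


def mean_absolute_error(targets, predictions):
    """Mean absolute error between two equal-length sequences."""
    return sum(abs(t - p) for t, p in zip(targets, predictions)) / len(targets)


def random_split(n_items, test_fraction, seed):
    """Return (train_indices, test_indices) for one seeded random split."""
    indices = list(range(n_items))
    random.Random(seed).shuffle(indices)
    n_test = max(1, int(round(n_items * test_fraction)))
    return indices[n_test:], indices[:n_test]


def mae_over_splits(targets, fit_predict, n_splits=6, test_fraction=0.2):
    """Mean and standard deviation of test MAEs over repeated random splits.

    fit_predict(train_idx, test_idx) must return predictions for test_idx.
    """
    maes = []
    for seed in range(n_splits):
        train_idx, test_idx = random_split(len(targets), test_fraction, seed)
        preds = fit_predict(train_idx, test_idx)
        maes.append(mean_absolute_error([targets[i] for i in test_idx], preds))
    return statistics.mean(maes), statistics.pstdev(maes)


# Hypothetical band gaps (eV) and a stand-in model, for illustration only.
targets = [0.0, 0.5, 1.1, 1.8, 2.4, 3.0, 3.3, 4.1, 5.2, 6.0]


def predict_train_mean(train_idx, test_idx):
    """Stand-in model: predict the mean of the training targets."""
    mean_gap = statistics.mean(targets[i] for i in train_idx)
    return [mean_gap for _ in test_idx]


mean_mae, std_mae = mae_over_splits(targets, predict_train_mean)
```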

Supplementary Data 2

Model test MAE comparisons for transfer learning, 2-fi models and 5-fi models. The first column is the model category and the other columns are the average model MAEs with standard deviation on the corresponding test data fidelity.

Supplementary Data 3

Average MAEs of 2-fi and 4-fi graph network models trained using a non-overlapping-structure data split. The first column shows the data fidelity combinations in training the models, and the other columns are the average MAEs with standard deviations on the corresponding test data fidelity.
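
One way to realize a split of this kind is to divide the data by structure rather than by individual data point. The sketch below assumes that "non-overlapping-structure" means no structure contributes data, at any fidelity, to both the training and the test set; the record layout, identifiers, values and 20% test fraction are hypothetical.

```python
import random


def split_by_structure(records, test_fraction=0.2, seed=0):
    """Split (structure_id, fidelity, value) records so no structure is shared.

    All records belonging to one structure end up on the same side of the split.
    """
    structure_ids = sorted({r[0] for r in records})
    random.Random(seed).shuffle(structure_ids)
    n_test = max(1, int(round(len(structure_ids) * test_fraction)))
    test_ids = set(structure_ids[:n_test])
    train = [r for r in records if r[0] not in test_ids]
    test = [r for r in records if r[0] in test_ids]
    return train, test


# Hypothetical multi-fidelity records: (structure id, fidelity, band gap in eV).
records = [
    ("s1", "PBE", 0.6), ("s1", "Exp", 1.1),
    ("s2", "PBE", 1.4), ("s2", "HSE", 2.0),
    ("s3", "PBE", 0.0),
    ("s4", "PBE", 2.2), ("s4", "Exp", 3.4),
    ("s5", "PBE", 3.1),
]
train, test = split_by_structure(records)
```

Splitting on structure identifiers prevents a model from seeing the low-fidelity value of a structure during training and then being tested on the high-fidelity value of the same structure.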

Source data

Source Data Fig. 2

Statistical Source Data

Source Data Fig. 3

Statistical Source Data

Source Data Fig. 4

Statistical Source Data

Source Data Extended Data Fig. 1

Statistical Source Data

Source Data Extended Data Fig. 2

Statistical Source Data

Source Data Extended Data Fig. 3

Statistical Source Data

Source Data Extended Data Fig. 4

Statistical Source Data

About this article

Cite this article

Chen, C., Zuo, Y., Ye, W. et al. Learning properties of ordered and disordered materials from multi-fidelity data. Nat Comput Sci 1, 46–53 (2021). https://doi.org/10.1038/s43588-020-00002-x

DOI: https://doi.org/10.1038/s43588-020-00002-x
