Abstract

With the rise of artificial intelligence and automation, moral decisions that were formerly the preserve of humans are being put into the hands of algorithms. In autonomous driving, a variety of such decisions with ethical implications are made by algorithms for behaviour and trajectory planning. Therefore, here we present an ethical trajectory planning algorithm with a framework that aims at a fair distribution of risk among road users. Our implementation incorporates a combination of five ethical principles: minimization of the overall risk, priority for the worst-off, equal treatment of people, responsibility and maximum acceptable risk. To the best of our knowledge, this is the first ethical algorithm for trajectory planning of autonomous vehicles in line with the 20 recommendations from the European Union Commission expert group and with general applicability to various traffic situations. We showcase the ethical behaviour of our algorithm in selected scenarios and provide an empirical analysis of the ethical principles in 2,000 scenarios. The code used in this research is available as open-source software.

Data availability

All data gathered in this research are available via figshare at https://doi.org/10.6084/m9.figshare.21195817.v1. This includes the evaluation files for the simulated scenarios with the three shown algorithms and a sample log file for one scenario. All remaining log files are available upon request. Source data are provided with this paper.

Code availability

The algorithm for trajectory planning (ref. 40) and the corresponding tools for analysis and visualization are available as open-source software at https://github.com/TUMFTM/EthicalTrajectoryPlanning.

References

Lin, P. in Autonomous Driving: Technical, Legal and Social Aspects (eds Maurer, M. et al.) 69–85 (Springer, 2016); https://doi.org/10.1007/978-3-662-48847-8

Kriebitz, A., Max, R. & Lütge, C. The German Act on Autonomous Driving: why ethics still matters. Phil. Technol. 35, 29 (2022).

Vehicle Automation Report #HWY18MH010 (NTSB, 2018); https://www.ntsb.gov/investigations/Pages/HWY18MH010.aspx

Gill, T. Ethical dilemmas are really important to potential adopters of autonomous vehicles. Ethics Inf. Technol. 23, 657–673 (2021).

Thornton, S. M., Pan, S., Erlien, S. M. & Gerdes, J. C. Incorporating ethical considerations into automated vehicle control. IEEE Trans. Intell. Transp. Syst. 18, 1429–1439 (2017).

Wang, H., Huang, Y., Khajepour, A., Cao, D. & Lv, C. Ethical decision-making platform in autonomous vehicles with lexicographic optimization based model predictive controller. IEEE Trans. Veh. Technol. 69, 8164–8175 (2020).

Geisslinger, M., Poszler, F., Betz, J., Lütge, C. & Lienkamp, M. Autonomous driving ethics: from trolley problem to ethics of risk. Phil. Technol. 34, 1033–1055 (2021).

Hübner, D. & White, L. Crash algorithms for autonomous cars: how the trolley problem can move us beyond harm minimisation. Ethical Theory Moral Pract. 21, 685–698 (2018).

Bhargava, V. & Kim, T. W. in Robot Ethics 2.0: From Autonomous Cars to Artificial Intelligence (eds Lin, P. et al.) 5–19 (Oxford Academic, 2017); https://doi.org/10.1093/oso/9780190652951.003.0001

Keeling, G., Evans, K., Thornton, S. M., Mecacci, G. & Santoni de Sio, F. Four perspectives on what matters for the ethics of automated vehicles. Road Veh. Autom. 6, 49–60 (2019).

Goodall, N. J. Away from trolley problems and toward risk management. Appl. Artif. Intell. 30, 810–821 (2016).

Reuel, A. K., Koren, M., Corso, A. & Kochenderfer, M. J. Using adaptive stress testing to identify paths to ethical dilemmas in autonomous systems. In Proc. Workshop on Artificial Intelligence Safety 2022 (SafeAI 2022), CEUR Workshop Proc. Vol-3087 (2022).

Bonnefon, J. F., Shariff, A. & Rahwan, I. The trolley, the bull bar, and why engineers should care about the ethics of autonomous cars. Proc. IEEE 107, 502–504 (2019).

Horizon 2020 Commission Expert Group Ethics of Connected and Automated Vehicles: Recommendations on Road Safety, Privacy, Fairness, Explainability and Responsibility (Publication Office of the European Union, 2020); https://doi.org/10.2777/035239

Luetge, C. The German ethics code for automated and connected driving. Phil. Technol. 30, 547–558 (2017).

Xiao, W., Cassandras, C. G. & Belta, C. A. Bridging the gap between optimal trajectory planning and safety-critical control with applications to autonomous vehicles. Automatica 129, 109592 (2021).

Nyberg, T., Pek, C., Dal Col, L., Noren, C. & Tumova, J. Risk-aware motion planning for autonomous vehicles with safety specifications. In IEEE Intelligent Vehicles Symposium 1016–1023 (IEEE, 2021).

Zheng, L., Zeng, P., Yang, W., Li, Y. & Zhan, Z. Bézier curve‐based trajectory planning for autonomous vehicles with collision avoidance. IET Intell. Transp. Syst. 14, 1882–1891 (2020).

Jasour, A., Huang, X., Wang, A. & Williams, B. C. Fast nonlinear risk assessment for autonomous vehicles using learned conditional probabilistic models of agent futures. Auton. Robot. 46, 269–282 (2021).

Blake, A. et al. FPR—Fast Path Risk algorithm to evaluate collision probability. IEEE Robot. Autom. Lett. 5, 1–7 (2019).

Bonnefon, J. F., Shariff, A. & Rahwan, I. The social dilemma of autonomous vehicles. Science 352, 1573–1576 (2016).

Nida-Rümelin, J., Schulenburg, J. & Rath, B. Risikoethik (De Gruyter, 2012); https://doi.org/10.1515/9783110219982

Rawls, J. A Theory of Justice (Harvard Univ. Press, 1971).

Awad, E. et al. The moral machine experiment. Nature 563, 59–64 (2018).

Contissa, G., Lagioia, F. & Sartor, G. The ethical knob: ethically-customisable automated vehicles and the law. Artif. Intell. Law 25, 365–378 (2017).

Applin, S. Autonomous vehicle ethics: stock or custom? IEEE Consum. Electron. Mag. 6, 108–110 (2017).

Goodall, N. Ethical decision making during automated vehicle crashes. Transp. Res. Rec. 2424, 58–65 (2014).

Hansson, S. O., Belin, M. Å. & Lundgren, B. Self-driving vehicles—an ethical overview. Phil. Technol. 34, 1383–1408 (2021).

Trautman, P. & Krause, A. Unfreezing the robot: navigation in dense, interacting crowds. In IEEE/RSJ International Conference on Intelligent Robots and Systems 797–803 (IEEE, 2010); https://doi.org/10.1109/IROS.2010.5654369

World Forum for Harmonization of Vehicle Regulations Framework Document on Automated/autonomous Vehicles (UNECE, 2020); https://www.unece.org/fileadmin/DAM/trans/doc/2020/wp29grva/FDAV_Brochure.pdf

Shariff, A., Bonnefon, J. F. & Rahwan, I. How safe is safe enough? Psychological mechanisms underlying extreme safety demands for self-driving cars. Transp. Res. Part C 126, 103069 (2021).

Liu, P., Yang, R. & Xu, Z. How safe is safe enough for self-driving vehicles? Risk Anal. 39, 315–325 (2019).

Harsanyi, J. C. Bayesian decision theory and utilitarian ethics. Am. Econ. Rev. 68, 223–228 (1978).

Faulhaber, A. K. et al. Human decisions in moral dilemmas are largely described by utilitarianism: virtual car driving study provides guidelines for autonomous driving vehicles. Sci. Eng. Ethics 25, 399–418 (2019).

Pek, C., Manzinger, S., Koschi, M. & Althoff, M. Using online verification to prevent autonomous vehicles from causing accidents. Nat. Mach. Intell. 2, 518–528 (2020).

Shalev-Shwartz, S., Shammah, S. & Shashua, A. On a formal model of safe and scalable self-driving cars. Preprint at https://arxiv.org/abs/1708.06374 (2017).

Maierhofer, S., Moosbrugger, P. & Althoff, M. Formalization of intersection traffic rules in temporal logic. In IEEE Intelligent Vehicles Symposium 1135–1144 (IEEE, 2022).

Yoshida, J. Robotaxi priorities: avoid crashes or avoid Blame? Ojo-Yoshida Report https://ojoyoshidareport.com/robotaxi-priorities-avoid-crashes-or-avoid-blame/?utm_source=rss&utm_medium=rss&utm_campaign=robotaxi-priorities-avoid-crashes-or-avoid-blame (2022).

Kauppinen, A. Who should bear the risk when self-driving vehicles crash? J. Appl. Phil. 38, 630–645 (2020).

Geisslinger, M. & TUM - Institute of Automotive Technology. TUMFTM/EthicalTrajectoryPlanning: initial release. Zenodo https://doi.org/10.5281/zenodo.6684625 (2022).

Althoff, M. Reachability analysis and its application to the safety assessment of autonomous cars. Ph.D. Thesis. Fak. für Elektrotechnik und Informationstechnik 221 (2010); https://mediatum.ub.tum.de/doc/1287517

Evans, K., de Moura, N., Chauvier, S., Chatila, R. & Dogan, E. Ethical decision making in autonomous vehicles: the AV ethics project. Sci. Eng. Ethics 26, 3285–3312 (2020).

Althoff, M., Koschi, M. & Manzinger, S. CommonRoad: composable benchmarks for motion planning on roads. In IEEE Intelligent Vehicles Symposium 719–726 (IEEE, 2017); https://doi.org/10.1109/IVS.2017.7995802

Gogoll, J. & Müller, J. F. Autonomous cars: in favor of a mandatory ethics setting. Sci. Eng. Ethics 23, 681–700 (2017).

De Freitas, J. et al. From driverless dilemmas to more practical commonsense tests for automated vehicles. Proc. Natl. Acad. Sci. USA 118, e2010202118 (2021).

Werling, M., Ziegler, J., Kammel, S. & Thrun, S. Optimal trajectory generation for dynamic street scenarios in a Frenét frame. In Proc. IEEE International Conference on Robotics and Automation 987–993 (IEEE, 2010); https://doi.org/10.1109/ROBOT.2010.5509799

Hansson, S. O. The Ethics of Risk (Palgrave Macmillan, 2013); https://doi.org/10.1057/9781137333650

Geisslinger, M., Karle, P., Betz, J. & Lienkamp, M. Watch-and-learn-net: self-supervised online learning for probabilistic vehicle trajectory prediction. In IEEE International Conference on Systems, Man, and Cybernetics 869–875 (IEEE, 2021); https://doi.org/10.1109/smc52423.2021.9659079

WHOQOL: Measuring Quality of Life (WHO, 2012); https://www.who.int/toolkits/whoqol

Lütge, C. et al. AI4people: ethical guidelines for the automotive sector-fundamental requirements and practical recommendations. Int. J. Technoethics 12, 101–125 (2021).

Crash Report Sampling System. National Highway Traffic Safety Administration https://www.nhtsa.gov/crash-data-systems/crash-report-sampling-system

Gennarelli, T. A. & Wodzin, E. AIS 2005: a contemporary injury scale. Injury 37, 1083–1091 (2006).

Acknowledgements

M.G. and F.P. received financial support from the Technical University of Munich—Institute for Ethics in Artificial Intelligence (IEAI). Any opinions, findings and conclusions or recommendations expressed in this material are those of the authors and do not necessarily reflect the views of the IEAI or its partners.

Author information

Contributions

M.G., as the first author, initiated the idea for this paper and made essential contributions to its conception, implementation, content and experimental results. F.P. contributed to the conception and revised the paper critically. M.L. made an essential contribution to the conception of the research project. He revised the paper critically for important intellectual content. He gave final approval of the version to be published and agrees to all aspects of the work. As a guarantor, he accepts the responsibility for the overall integrity of the paper.

Ethics declarations

Competing interests

The authors declare no competing interests.

Peer review

Peer review information

Nature Machine Intelligence thanks Anthony Corso and the other, anonymous, reviewer(s) for their contribution to the peer review of this work.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Extended data

Extended Data Fig. 1 Our ethical trajectory planning algorithm in four steps.

The small orange balls symbolize the trajectories that are sampled in the first step. Next, the trajectories are subjected to validity checks, visualized here as a filter screen (Step 2). Only the trajectories of the highest available validity level (here: five trajectories from ‘valid’) are assigned costs, where higher costs are represented by higher transparency (Step 3). In the last step, the trajectory with the lowest cost is selected.

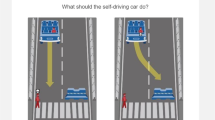
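The four steps in this caption can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the implementation from the authors' repository: the `Trajectory` class, the integer `validity` scale, the `plan` function and the toy cost values are all hypothetical, and the sampling step is replaced by a hand-written list.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Trajectory:
    name: str
    validity: int                 # hypothetical scale: higher means "more valid"
    cost: Optional[float] = None  # stays None unless the trajectory survives the filter


def plan(candidates: List[Trajectory],
         cost_fn: Callable[[Trajectory], float]) -> Trajectory:
    """Steps 2 to 4: filter by validity, assign costs, select the cheapest."""
    if not candidates:
        raise ValueError("no trajectories were sampled")
    # Step 2: keep only trajectories of the highest validity level that occurs.
    best_level = max(t.validity for t in candidates)
    survivors = [t for t in candidates if t.validity == best_level]
    # Step 3: assign a cost to each surviving trajectory only.
    for t in survivors:
        t.cost = cost_fn(t)
    # Step 4: select the trajectory with the lowest cost.
    return min(survivors, key=lambda t: t.cost)


# Step 1 stand-in: a hand-written list instead of a real trajectory sampler.
sampled = [
    Trajectory("a", validity=2),
    Trajectory("b", validity=3),
    Trajectory("c", validity=3),
    Trajectory("d", validity=1),
]
toy_costs = {"a": 0.1, "b": 0.7, "c": 0.4, "d": 0.0}  # made-up numbers
chosen = plan(sampled, lambda t: toy_costs[t.name])
print(chosen.name)
```

In this toy run, trajectories "a" and "d" would be cheaper, but they are discarded before costs are assigned because a higher validity level is available.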

Extended Data Fig. 2 The usage of different ethical principles for risk distribution leads to different choices.

Three fictitious example scenarios, each simplified to a choice between two options (A and B), showcase the trade-offs in risk distribution regarding these principles. In every option, two fictitious persons are each assigned a collision probability p and an estimated harm H. While option A corresponds to each of the three principles in every case, option B might be an intuitive alternative choice for many people, showing that there are good reasons to incorporate all three principles instead of relying on a single one.
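To make the trade-off concrete, the sketch below scores two made-up options under three simple metrics. It rests on assumptions that the caption does not state: that a person's risk is the product p · H, and that the three principles can be approximated as total risk, the risk of the worst-off person, and the spread between the highest and lowest risk. The numbers and function names are hypothetical and are not taken from the figure.

```python
from typing import Callable, List, Tuple

Person = Tuple[float, float]  # (collision probability p, estimated harm H)
Option = List[Person]


def risks(option: Option) -> List[float]:
    """Assumed risk model: risk = p * H for every person."""
    return [p * h for p, h in option]


def total_risk(option: Option) -> float:
    """Overall risk, summed over all persons."""
    return sum(risks(option))


def worst_off_risk(option: Option) -> float:
    """Risk carried by the person who is worst off."""
    return max(risks(option))


def risk_inequality(option: Option) -> float:
    """Spread between the highest and the lowest individual risk."""
    r = risks(option)
    return max(r) - min(r)


def preferred(metric: Callable[[Option], float], a: Option, b: Option) -> str:
    """Return the option with the lower metric value (ties go to A)."""
    return "A" if metric(a) <= metric(b) else "B"


# Made-up values, chosen so that the metrics disagree.
option_a: Option = [(0.5, 0.5), (0.5, 0.5)]   # individual risks: 0.25 and 0.25
option_b: Option = [(0.5, 0.75), (0.0, 1.0)]  # individual risks: 0.375 and 0.0

for metric in (total_risk, worst_off_risk, risk_inequality):
    print(metric.__name__, preferred(metric, option_a, option_b))
```

With these values, the total-risk metric prefers option B, while the worst-off and inequality metrics prefer option A, which illustrates why a single metric can give a choice that the others reject.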

Extended Data Fig. 3 Runtime analysis of the proposed algorithm.

Computation times are broken down into Prediction (bottom) and Planning (top) and shown over the number of sampled trajectories. Our extensions compared to the state of the art, namely the Risk assessment and the Responsibility analysis, together require about 2 ms of computing time per trajectory. The analysis was performed on a prototype implementation without parallelization, running on a single thread of an Intel Core i7 (9th generation) laptop CPU.

Supplementary information

Supplementary Data 1

Evaluation files for the simulated scenarios with the three shown algorithms and a sample log file for an exemplary scenario.

Source data

Source Data Fig. 4

Statistical source data.

Source Data Fig. 5

Statistical source data.

Source Data Fig. 6

Statistical source data.

Source Data Extended Data Fig. 3

Statistical source data.

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Geisslinger, M., Poszler, F. & Lienkamp, M. An ethical trajectory planning algorithm for autonomous vehicles. Nat Mach Intell 5, 137–144 (2023). https://doi.org/10.1038/s42256-022-00607-z

DOI: https://doi.org/10.1038/s42256-022-00607-z

This article is cited by

- Online legal driving behavior monitoring for self-driving vehicles. Nature Communications (2024)

- Formalizing ethical principles within AI systems: experts’ opinions on why (not) and how to do it. AI and Ethics (2024)

- Taking ethics seriously in AV trajectory planning algorithms. Nature Machine Intelligence (2023)