Abstract

Camera trapping is increasingly being used to monitor wildlife, but this technology typically requires extensive data annotation. Recently, deep learning has substantially advanced automatic wildlife recognition. However, current methods are hampered by a dependence on large static datasets, whereas wildlife data are intrinsically dynamic and involve long-tailed distributions. These drawbacks can be overcome through a hybrid combination of machine learning and humans in the loop. Our proposed iterative human and automated identification approach is capable of learning from wildlife imagery data with a long-tailed distribution. Additionally, it includes self-updating learning, which facilitates capturing the community dynamics of rapidly changing natural systems. Extensive experiments show that our approach can achieve ~90% accuracy using only ~20% of the human annotations required by existing approaches. Our synergistic collaboration of humans and machines transforms deep learning from a relatively inefficient post-annotation tool into a collaborative, ongoing annotation tool that vastly reduces the burden of human annotation and enables efficient and constant model updates.

Acknowledgements

We thank T. Gu, A. Ke, H. Rosen, A. Wu, C. Jurgensen, E. Lai, M. Levy and E. Silverberg for annotating the images used in this study, as well as everyone else involved in this project. Data collection was supported by J. Brashares and through grants to K.M.G. from HHMI BioInteractive, the Rufford Foundation, Idea Wild, the Explorers Club and the UC Berkeley Center for African Studies. We are grateful for the support of Gorongosa National Park, especially M. Stalmans, in permitting and facilitating this research. Z.L. is supported by NTU NAP. K.M.G. is supported by Schmidt Science Fellows in partnership with the Rhodes Trust, and the National Center for Ecological Analysis and Synthesis Director’s Postdoctoral Fellowship. M.S.P. is funded by National Science Foundation grant no. PRFB #1810586.

Author information

Contributions

This study was conceived by Z.M., Z.L., K.M.G. and M.S.P. The methods were designed by Z.M. and Z.L. Code was written by Z.M., and the computations were undertaken by Z.M. with help from Z.L. The main text was drafted by Z.M. and Z.L., with contributions, editing and comments from all authors. K.M.G. and M.S.P. collected all data and oversaw annotation. Z.M. created all figures and tables in consultation with W.M.G., Z.L. and S.X.Y.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Peer review information Nature Machine Intelligence thanks Dan Morris and the other, anonymous, reviewer(s) for their contribution to the peer review of this work.

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Extended data

Extended Data Fig. 1 The distribution of images across species in the entire camera trap data set.

There are 55 categories in total. Fourteen categories were tagged as “unknown” (colored in orange) and used to improve and validate our model’s sensitivity to novel and difficult samples.

Extended Data Fig. 2 The distribution of species across the two groups of data.

We split the data set into two groups to mimic two sequential data collection seasons. In the first group, there are 26 categories (colored in blue). The second group has 41 categories. Group 1 is used in the first period experiment to train a baseline model, and Group 2 is used in the second period experiment to test and update the model.

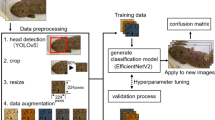

Extended Data Fig. 3 The overall experimental workflow of our framework.

In the first time step, a baseline model is trained using Group 1 training data, which contain only 26 categories. Next, the classifier is fine-tuned using the 14 unknown categories and an energy-based loss to increase its sensitivity to out-of-distribution categories. The fine-tuned classifier is then used to predict classifications for the Group 2 training data. Here, high-confidence predictions are trusted, while low-confidence predictions are flagged for human annotation. In the final step, both machine and human annotations are used to update the previous model with open long-tailed recognition (OLTR) and semi-supervised techniques. Once the model is updated, the classifier is again fine-tuned using the energy-based loss for out-of-distribution sensitivity.
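The routing step in this workflow, where confident predictions are trusted and the rest go to human annotators, can be illustrated with a minimal sketch. It is not the authors' implementation. The caption does not say how confidence is measured or which cut-off is used, so the maximum softmax probability, the 0.9 threshold, and the names `route_predictions` and `energy_score` are all assumptions made for illustration. The energy function is the log-sum-exp score commonly used in energy-based out-of-distribution detection, shown only to indicate what an energy-based loss operates on.

```python
import numpy as np


def softmax(logits):
    """Row-wise softmax, shifted by the row maximum for numerical stability."""
    shifted = logits - logits.max(axis=1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=1, keepdims=True)


def energy_score(logits, temperature=1.0):
    """Free-energy score E(x) = -T * log(sum_k exp(logit_k / T)).

    Higher (less negative) values suggest a sample the classifier is
    less familiar with. The temperature default is an assumption.
    """
    scaled = logits / temperature
    row_max = scaled.max(axis=1)
    log_sum_exp = row_max + np.log(np.exp(scaled - row_max[:, None]).sum(axis=1))
    return -temperature * log_sum_exp


def route_predictions(logits, threshold=0.9):
    """Split predictions into trusted and human-review sets.

    `threshold` is a hypothetical cut-off on the maximum softmax
    probability; the source does not specify the confidence measure.
    """
    probs = softmax(logits)
    confidence = probs.max(axis=1)
    labels = probs.argmax(axis=1)
    trusted = confidence >= threshold
    return labels, confidence, trusted


# Toy logits for three images over three categories (made-up values).
logits = np.array([
    [8.0, 0.5, 0.1],   # clearly category 0
    [1.0, 1.2, 0.9],   # ambiguous, should go to a human
    [0.2, 0.1, 6.0],   # clearly category 2
])

labels, confidence, trusted = route_predictions(logits)
for i, (label, conf, keep) in enumerate(zip(labels, confidence, trusted)):
    target = "machine label" if keep else "human annotation"
    print(f"image {i}: predicted {label}, confidence {conf:.3f} -> {target}")
```

In a pipeline of this kind, the images marked for human annotation would be labeled by people and then combined with the trusted machine labels when the model is next updated.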

About this article

Cite this article

Miao, Z., Liu, Z., Gaynor, K.M. et al. Iterative human and automated identification of wildlife images. Nat Mach Intell 3, 885–895 (2021). https://doi.org/10.1038/s42256-021-00393-0

DOI: https://doi.org/10.1038/s42256-021-00393-0