Abstract

The spread of online misinformation has gained mainstream attention in recent years. This paper approaches this phenomenon from a cultural evolution and cognitive anthropology perspective, focusing on the idea that some cultural traits can be successful because their content taps into general cognitive preferences. This research involves 260 articles from media outlets included in two authoritative lists of websites known for publishing hoaxes and ‘fake news’, tracking the presence of negative content, threat-related information, presence of sexually related material, elements associated to disgust, minimally counterintuitive elements (and a particular category of them, i.e., violations of essentialist beliefs), and social information, intended as presence of salient social interactions (e.g., gossip, cheating, formation of alliances), and as news about celebrities. The analysis shows that these features are, to a different degree, present in most texts, and thus that general cognitive inclinations may contribute to explain the success of online misinformation. This account can elucidate questions such as whether and why misinformation online is thriving more than accurate information, or the role of ‘fake news’ as a weapon of political propaganda. Online misinformation, while being an umbrella term covering many different phenomena, can be characterised, in this perspective, not as low-quality information that spreads because of the inefficiency of online communication, but as high-quality information that spreads because of its efficiency. The difference is that ‘quality’ is not equated to truthfulness but to psychological appeal.

Introduction

At the beginning of 2018, more than half of the world population had Internet access (Wikipedia, 2018). In Europe and North America, nine-in-ten adults use the Internet daily. This figure rises to practically ten-in-ten for individuals between 18 and 50 years old (Pew Research Centre, 2018a). In parallel, the growth rate of social media has been extraordinary. Facebook, opened to the public in 2006, declared 2.20 billion monthly users worldwide twelve years later. In the first quarter of 2018, Facebook reported 185 million ‘daily active users’ in the US and Canada, which is roughly 85% of the population between 18 and 69 years old (Facebook, 2018). An increasing fear that the new information ecosystem would prove fertile ground for the spread of misinformation, hoaxes, and false or ‘fake’ news has accompanied the diffusion of the Internet and social media, especially in recent years.

I approach the spread of online misinformation from a cultural evolution and cognitive anthropology perspective. In particular, I draw on the idea that certain general evolved cognitive preferences make some cultural traits more likely to succeed with respect to others, making them more appealing, attention-grabbing and memorable (Sperber and Hirschfeld, 2004; Morin, 2016). The spread of online misinformation can be ascribed to several different reasons, but one is that misinformation has the obvious advantage, with respect to correct information, of not being constrained by reality. We can shape misinformation to be appealing, attention-grabbing and memorable more than what we can do with real information. Notice this does not need to be a conscious process: creators of fake news can deliberately tailor their content to be appealing, but it could also be that, amongst the misinformation websites, the ones that publish non-attractive content are rarely visited and they end up disappearing.

To illustrate this possibility, I analysed the content of 260 articles, published in 26 websites included in two authoritative lists of ‘suspect’ outlets, for the presence of seven factors likely to enhance the cultural success of narratives (a methodology similar to Stubbersfield et al., 2017), plus a more general assessment of the content as negative, positive, or neutral.

A general preference for negative content has been identified in cultural evolutionary studies as a feature favoring the recall of stories, with negative events being better recalled than positive ones, and ambiguous events being more likely to be transformed into negative events when the stories are reported (Bebbington et al., 2017). Information framed negatively (‘When civil litigation cases go to trial, 60% of plaintiffs lose, winning no money, and often having to pay attorney fees’) is considered more truthful than information framed positively (‘When civil litigation cases go to trial, 40% of plaintiffs succeed and win money’) even when the content is exactly the same (Fessler et al., 2014). These experimental results are paralleled by similar findings in real-life cultural dynamics. English language literary fiction has become less ‘emotional’ in the last two centuries (i.e., books contain fewer words that are associated with emotions), and this trend is entirely driven by a decrease in positive emotion content, while the number of words associated with negative emotions remained roughly constant (Morin and Acerbi, 2017). A negative bias in news reporting has been documented repeatedly (see e.g., Niven, 2001; Hester and Gibson, 2003; van der Meer et al., 2018). Various hypotheses have been put forward to explain why a negative bias could be adaptive. To start with, there is a fundamental asymmetry between negative and positive events, with avoidance of dangers having a greater effect on fitness than the pursuit of advantages (Rozin and Royzman, 2001). Some researchers have also suggested that negative information could be adaptive in fictional narratives (as misinformation can be considered): according to the hypothesis that artistic expressions provide hypothetical scenarios to simulate social interactions (Mar and Oatley, 2008), simulating negative events would be more beneficial than simulating positive ones (Clasen, 2007).

The first specific cognitive preference considered is for threat-related information. Blaine and Boyer (2018) proposed that the preference for negative content described above is not related to negativity per se, but is targeted at threat-related information, which would explain its evolutionary rationale. In addition, they showed that the threats described in the rumours do not need to be directly relevant to the individuals to enhance the success of the rumours. This seems indeed to be the case for the majority of online misinformation, where threats and hazards are, in fact, hardly credible (the fifth most successful ‘fake news’ on Facebook in 2017 was titled Morgue employee cremated by mistake while taking a nap, and was liked, shared, or commented on more than one million times according to BuzzFeed, 2017, one of the sources used for the articles sampled in this research).

The second element coded was the presence of sexually related information. While I am not aware of any specific study in cultural evolution dealing with the hypothesis that sexually related information enhances the success of narratives (but see Mesoudi et al., 2006, who showed that ‘gossip’ content, which involved a sexual affair between a student and her professor, was better transmitted than non-social content, see also below), references to sexual activities are intuitively common in the popular press. Sexual themes were present in five of the top-10 ‘fake news’ by Facebook engagement in the same BuzzFeed list (BuzzFeed, 2017). On the other hand, perhaps contrary to intuition, the effect of the presence of erotic scenes, nudity, and sexual innuendos on the success of movies (Cerridwen and Simonton, 2009) or advertisements (Wirtz et al., 2018) is unclear.

I then considered a preference for elements associated with disgust. A disgust-bias is probably, in cultural evolution, the most well-studied factor favouring the success of narratives. Psychological research shows that disgust is especially provoked by information about food contaminated, usually by animals (everybody will have heard the decades-old story about the presence of worm meat or rats in McDonald’s hamburgers) or by body products. Diseases, mutilations, body products in general, and sexual acts considered ‘unnatural’ are also primary disgust evokers. The fact that disgust elicitors are often associated with pathogens and infectious threats explains why disgust-related information grabs our attention (Curtis, 2007). Urban legends, rumours, and children’s stories often have motifs that elicit disgust. Analysing a sample of urban legends, Stubbersfield et al. (2017) found that 13% of them contained disgust-evoking content. Heath et al. (2001) similarly found that being high in a ‘disgust-scale’ (a quantitative measure of how disgusting a story is) was a good predictor of online success of urban legends. Stories with elements associated with disgust were shown to be more successful, in an experimental setting, than the same stories without those elements (Eriksson and Coultas, 2014), even though cross-cultural differences have also been found (Eriksson et al., 2016).

Another widely studied cognitive preference is for minimally counterintuitive (MCI) content. MCI content refers to content that violates (a few) intuitive expectations about basic properties of ontological categories. For example, supernatural beings behave in many expected, non-surprising, ways (they are jealous, they can get angry, etc.) but they also breach other expectations (they may be immortal, they may read our thoughts, etc.). It has been proposed that entities that violate few intuitive expectations represent a cognitive optimum, being at the same time memorable, because of the violations, and still allowing inferences, because of the confirmed intuitions (Boyer, 1994). This cognitive advantage may determine the success of certain cultural traits: Norenzayan et al. (2006) found for example that, amongst the Grimm brothers’ folktales, the successful ones (the likes of Cinderella or Hansel and Gretel) tend to have around two or three counterintuitive elements, whereas unsuccessful folktales are more or less uniformly distributed amongst all possible numbers of counterintuitive elements.

The analysis also focused on a specific category of minimally counterintuitive elements, that is, elements concerning the violation of ordinary essentialist thinking. Essentialist thinking refers broadly to the intuition that living beings, contrary to artifacts, have a hidden essence that does not change, and that is responsible for their physical appearance and for their behaviour (Keil, 1992). It has been suggested that the opposition to genetically modified organisms can stem, among other things (such as intuitive disgust, or breach of intuitive teleological thinking that results in the idea that we should not ‘interfere with nature’), from a violation of our intuitive essentialist expectations (Blancke et al., 2015).

The last element coded was related to social interactions and gossip. Given their importance for human beings, possibly in relation to the Machiavellian intelligence/social brain hypothesis (Mesoudi et al., 2006), it is not surprising that the presence of elements related to social interactions has been considered a factor enhancing the cultural success of narratives. Previous studies have differentiated between ‘social’ content, defined as containing ‘everyday interactions and relationship’, and ‘gossip’ content, defined as containing ‘particularly intense and salient social interactions and relationships’ (Mesoudi et al. 2006; Stubbersfield et al., 2015, 2017). In my study, the ‘social’ category refers instead to the latter as, from an explorative analysis, practically all the articles in the sample had some form of ‘everyday interactions and relationship’. Thus, the ‘social’ category here is similar to the ‘gossip’ category in those studies. In addition, the articles contained actual material about celebrities like pop stars, politicians, and actors, so I also quantified how many news items reported this kind of social information, as opposed to news about unknown people: this is coded as ‘presence of celebrities’.

Finally, since the diffusion of online misinformation has been considered especially worrying when related to politics (see discussion in Allcott and Gentzkow, 2017), I additionally coded whether the articles concerned political matters or not.

Methods

I used two authoritative lists of outlets known for publishing hoaxes and misinformation, provided by the websites Snopes.com and BuzzFeed. The two websites were chosen because they had already been used in other comparable analyses (see e.g., Stubbersfield et al., 2017; Pew Research Centre, 2018b; Vosoughi et al., 2018). I excluded other lists, such as the ones produced by FactCheck.org and PolitiFact, as they only take into account (mainly US) politics. Both lists used were updated in 2017. I excluded from the lists outlets that, at the time of data collection, were not active or had manifestly changed their activity, e.g., they were not publishing anything recognisable as news. I also excluded a few outlets that were not in English, obtaining a final sample of 26 websites.

I extracted 10 articles from each of the 26 websites, for a total of 260 articles. The articles chosen were the ones appearing prominently on the homepage of the websites. To collect the articles, the pages were visually scanned starting from the top-left corner and moving toward the right; when the border of the page was reached, the procedure was repeated at a lower level, until 10 titles linking to full articles were found. This procedure may have selected different kinds of articles from different outlets, as some websites highlighted ‘trending’ recent articles, others the overall ‘most viewed’ articles, and others again what they considered the actual ‘last’ news. Taking this into account, this simple procedure should have picked the articles that were considered more appealing by the websites themselves. The texts were extracted in August and September 2018.

Each text was manually coded for the features described in the Introduction. The texts were coded as having a general positive, negative, or neutral content, and for the presence or absence of the following specific content: (i) threat-related information, (ii) sexually related information, (iii) elements associated to disgust, (iv) minimally counterintuitive (MCI) elements, (v) anti-essentialist elements, (vi) social information, and (vii) presence of celebrities. In addition, the articles were coded for whether or not they concerned political matters. Supplementary Table S1 summarises the elements that were coded, and the short descriptions used and provided to the additional coders.

The articles were coded by the author and by four additional independent coders. Each of them was provided with 50 randomly selected articles. The agreement among coders was high and consistent, with 85, 87, 89 and 86% of the overall elements coded in the same way (cumulative 87 ± 1.7). Supplementary Table S2 shows the agreements by category. The general content was the only category with three possible outcomes (positive, negative, or neutral), so Supplementary Table S2 also reports a broader version of the agreement, which fails only when texts were coded as positive versus negative or vice versa (this broader agreement was not used in the calculation of the overall agreements). In this case, only 1 out of 200 articles coded by the additional coders differed from the main coding.
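The agreement figures above can be reproduced with a short sketch. The function name `percent_agreement` and the toy codings are hypothetical and only illustrate how a simple percent agreement is obtained; the four per-coder values are the ones reported in the text. Treating the ± 1.7 as a sample standard deviation is an assumption, although it is consistent with the reported figure.

```python
from statistics import mean, stdev


def percent_agreement(main_codes, other_codes):
    """Share (in %) of coded elements on which two coders agree."""
    if len(main_codes) != len(other_codes):
        raise ValueError("both coders must code the same elements")
    matches = sum(a == b for a, b in zip(main_codes, other_codes))
    return 100 * matches / len(main_codes)


# Hypothetical toy codings (not from the dataset): 1 = present, 0 = absent.
toy_main = [1, 0, 0, 1, 1, 0, 0, 1]
toy_other = [1, 0, 1, 1, 1, 0, 0, 0]
toy_agreement = percent_agreement(toy_main, toy_other)  # 6 of 8 elements match

# Per-coder agreements (in %) as reported in the text.
reported = [85, 87, 89, 86]
overall_mean = mean(reported)
overall_sd = stdev(reported)  # sample standard deviation (n - 1), an assumption

print(f"{overall_mean:.0f} +/- {overall_sd:.1f}")
```

Simple percent agreement does not correct for agreement expected by chance; it is used here only because it is the measure the text reports.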

Results

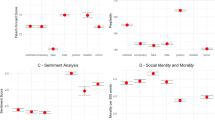

The general content was negative for the largest share of the articles. Out of 260, 128 articles had a negative content (49%), 110 a neutral (42%) and only 22 (9%) a positive one (see Fig. 1). In other words, negative articles were between five and six times more frequent than articles with a positive content.

The majority of articles, 224 of them (86%), contained information that could be traced back to at least one of the seven specific elements of content analysed. The majority of articles had one or two cognitive preferences (81 and 82 articles, respectively, representing cumulatively the 63% of the total). 31 articles (12%) had three cognitive preferences, 24 (9%) had four, and 4 (1.5%) had five. Only 2 articles had more than five coded elements (one article had six and one had seven).
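As a quick consistency check, the counts reported above can be tallied in a few lines. The variable names are illustrative; the numbers are taken directly from the text.

```python
# Number of articles by count of coded elements, as reported in the text.
articles_by_factor_count = {1: 81, 2: 82, 3: 31, 4: 24, 5: 4, 6: 1, 7: 1}
total_articles = 260

with_any = sum(articles_by_factor_count.values())
with_none = total_articles - with_any
one_or_two = articles_by_factor_count[1] + articles_by_factor_count[2]


def pct(part, whole=total_articles):
    """Percentage of `part` over `whole`."""
    return 100 * part / whole


print(with_any, round(pct(with_any)))      # 224 86
print(with_none, round(pct(with_none)))    # 36 14
print(one_or_two, round(pct(one_or_two)))  # 163 63
```

The per-count figures sum to the 224 articles with at least one element, which leaves 36 articles (about 14%) with none of the seven elements.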

Threat-related information was present in 74 articles out of 260 (28%) and it represented, behind social information and celebrities, which I will consider below, the most widespread element (see Fig. 2). Sexually related information came next, being present in 45 articles (17%). 40 articles (15%) were coded as containing elements evoking disgust. 33 articles (13%) had content that was classified as containing MCI elements. The majority of MCI elements present were more specifically violating intuitive essentialist beliefs, with 21 out of 33 articles being coded as such (making for 8% of the total articles, and 64% of the articles containing MCI elements in general). Social information and presence of celebrities were the two most common elements, and they were found in 50 and 48% of the articles, respectively.

There are a few things to notice when looking at the co-occurrence of the preferences (see Table 1). First, threat, sexual and disgust-related information tended, not surprisingly, to be found together. However, there is an asymmetry between threat-related information, on one side, and sexual and disgust-related information on the other. The majority of articles that contained threat information (57%) did not also contain sexual or disgust-eliciting elements. On the contrary, only 27% of articles coded as containing sexual information, and 13% of articles coded as containing elements evoking disgust, were not coded as also containing at least one of the other two elements. Second, both social information and presence of celebrities are evenly distributed among the other categories, but while the total amount of both is similar, articles about celebrities tend to be associated less frequently with other factors.

Finally, 40% of the articles (105 out of 260) were identified as concerning political matters. Figure 3 shows the proportion of cognitive preferences for political and non-political articles. Notice that the majority of articles coded as regarding ‘celebrities’ were in fact about well-known political figures. There were 43 articles about non-political celebrities, that is, around 16% of the entire sample. The two categories ‘social’ and ‘celebrities’ are overrepresented in political articles, whereas the other cognitive factors are more present in non-political articles. Political articles identified as containing any category other than ‘social’ or ‘celebrities’ were 20 out of 105 (19%), whereas this figure was 88 out of 155 (57%) for non-political ones.
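The comparison between political and non-political articles comes down to a ratio of two proportions, sketched below. The variable names are illustrative and the counts are those given in the text.

```python
total = 260
political = 105
non_political = total - political  # 155

# Articles with at least one element other than 'social' or 'celebrities'.
political_other = 20
non_political_other = 88

share_political = political_other / political
share_non_political = non_political_other / non_political

# How many times more likely a non-political article is to carry such an element.
ratio = share_non_political / share_political

print(f"{share_political:.0%} {share_non_political:.0%} {ratio:.1f}")
```

The ratio of roughly three corresponds to the statement in the Discussion that political articles were three times less likely to contain a cognitive preference other than ‘social’ or ‘presence of celebrities’.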

Discussion

Overall, the analysis presented here suggests that one of the factors that could explain the success of online misinformation is that it appeals to general cognitive preferences. Consistent with previous research, ‘suspect’ articles were found to lean heavily towards negative content. The various cognitive factors coded were present to different degrees. Descriptions of threats were prominent, with almost 30% of the articles containing them. Elements eliciting disgust and sexual details were also present, but they were generally co-occurring with threat-related information (the single most successful ‘fake news’ on Facebook in 2017 is a good example of this combination: Babysitter transported to hospital after inserting a baby in her vagina, BuzzFeed, 2017). An interpretation is that disgust and sexual details were mainly used to produce threatening narratives, giving some support to the hypothesis that threat-related information would have a primary role amongst the factors that make stories culturally successful (Blaine and Boyer, 2018), but further research is needed to substantiate this link. On the other hand, elements eliciting disgust and sexual details seem rarely present in mainstream reporting, unlike threats (accidents, violent acts, etc.). In this respect, while the absolute amount of threat-related information in online misinformation is higher than the absolute amount of disgust- and sexually-related information, it is possible that the relative importance of disgust and sex, when compared with true information, will be higher. An explicit comparison with truthful reporting (an obvious extension of this study) could address this question.

Articles with minimally counterintuitive elements were less common than articles with threat-, sex-, and disgust-related information. In addition, violations of intuitions that could be considered ‘supernatural’ in the common sense of the term were even fewer, making for around 5% of the articles (the other articles consisted of violations of essentialist intuitions, see below). This is partly surprising, given the importance attributed to MCI elements in the cultural evolution literature. The result is consistent with the analysis of Stubbersfield et al. (2017), who also found, analysing contemporary urban legends, a similarly low number of MCI elements (only 6% of the urban legends they analysed contained MCI elements). On the other hand, it could simply be that online misinformation needs to maintain some level of credibility, so that it is a sensible strategy (whether conscious or not) to limit the number of stories about aliens and Bigfoots. Interestingly, more than 60% of the MCI elements coded consisted of violations of essentialist intuitions, such as ‘experimental’ transplants of organs from one species to another, inter-species sex, surgical sex changes, or clones and GMOs.

Social information and presence of celebrities were quantitatively the most important elements. Two remarks need to be made. First, although in experimental conditions social information can be clearly separated from non-social information (Mesoudi et al., 2006), the task proved more difficult when using real content, and ‘social’ was the category with the lowest inter-coder agreement (see Supplementary Table S2). As for the categories above, in addition, one should compare this result with the proportion of social information in a sample of ‘real’ news, where it would also seem prominent. Second, the high number of articles coded as concerning ‘celebrities’ is linked to the presence of political articles. For the large majority, celebrities were indeed politicians, and articles about actors, musicians, and sport stars were around 16%, a figure comparable with the articles about sex or eliciting disgust, and lower than the articles containing descriptions of threats, going against the common intuition of the popular press being obsessed with celebrities. The relatively low proportion of articles concerning celebrities could also be due, as mentioned above for MCI elements, to a trade-off between cognitive attraction and plausibility. Many stories with high levels of disgust or threat information, for example, would be scarcely credible (or quickly debunked) when associated with public figures.

The majority of articles (63% of the total) contained 1 or 2 factors, an amount largely compatible, again, with the finding of Stubbersfield et al. (2017) for contemporary urban legends. If it is sensible both that too many factors would make narratives too convoluted and that different factors could conflict with each other, it is also difficult to generalise from this result, as it may depend on how the lists of factors are compiled by the researchers (for example Stubbersfield et al. 2017, considered ‘emotional bias’ as one of the possible features of urban legends, while here ‘negativity’ and ‘positivity’ were considered as a general property of the content).

This analysis suggests a few general considerations on the spread of online misinformation. First, articles concerning political misinformation, while abundant, were still technically a minority in the sample considered (40% of the articles). Different sampling methods could, of course, give different results, but this figure is consistent with the idea that online misinformation is not necessarily political misinformation. While there may be good reasons to focus the attention to the possible risks that the spread of political ‘fake news’ online entails, it may also be conceivable that the danger of misinformation online has been overstated by previous research, by artificially limiting the breadth of the phenomenon on explicitly malicious political articles (similar conclusions on the overestimation of the effect of political misinformation are reached, for example, in Allcott and Gentzkow, 2017 and Guess et al. 2018).

In addition, political misinformation seems to have peculiar differences with respect to non-political one. Political misinformation can thrive online also because it possesses some of the features considered here cognitively attractive (think about the notorious ‘Pizzagate’, a debunked conspiracy theory according to which several high-level officials of US Democratic Party were involved in a pedophilia ring in a pizza parlor, Snopes, 2016). In the sample analysed, however, an article classified as being about politics was three times less likely than a non-political article to have a cognitive preference other than ‘presence of celebrities’ or ‘social’.

Second, is there anything specific about the spreading of online misinformation? Various reasons have been proposed to explain why misinformation should thrive online (as opposed to offline), including the fact that everybody can quickly and cheaply spread information, that digital media make it easier to find other individuals confirming incorrect information, that online interactions can preserve anonymity (Allcott and Gentzkow, 2017) and that search engines, and especially social media algorithms, are optimised for (shallow) engagement, giving disproportionate weight to ‘likes’ and previous traffic (Chakraborty et al. 2016). This analysis points, however, to the fact that the same features that make urban legends, fiction, and in fact any narrative, culturally attractive also operate for online misinformation. While this does not exclude that specific mechanisms favour the online spreading of misinformation, it suggests that, to better understand them, some knowledge of why some narratives are attractive and others are not can be useful.

An interesting aspect of digital media is however that they provide a cheap, fast, and easy support for high fidelity transmission. In the majority of cases—think about a social media ‘share’—transmission is virtually replication of a message that hardly requires an active role from the individuals involved, besides a motivation to share it (Acerbi, 2016). The experiments considered in cultural evolution and cognitive anthropology generally involve the so-called ‘transmission chain’ methodology, where participants listen to a narrative, and then they need to remember and reproduce it. Few studies have investigated transmission chains with features comparable to online transmission and considered situations in which participants did not need to recall and reproduce the transmitted material, but simply choose what they wanted to read or what they wanted to transmit (see e.g., Eriksson and Coultas, 2014; Stubbersfield et al. 2015; Mercier et al., 2018; Stubbersfield et al. 2018; van Leeuwen et al., 2018). A review of the differences between the habitual modality of cultural transmission, which involves cognitive aspects like comprehension, memory, and verbal or written reproductions, and digitally-mediated cultural transmission represents an interesting topic for future studies.

In this analysis I considered ‘misinformation’ as anything that was published by websites identified as spreading misinformation. Of course, misinformation is a general label that covers various phenomena. Non-truthful news can be anything from poor and unintentionally misleading reporting, to ‘fake news’ explicitly aimed at political propaganda, or even satire. The motivations to share political conspiracies are different from the motivations to share a ‘news’ story about a morgue employee cremated by mistake (Petersen et al., 2018). Individuals can be aware of sharing satirical news, so that quantitative assessments of the diffusion of fake news could possibly be inflated by the conscious diffusion of satire. The unifying theme here is that misinformation is less constrained than truthful information by the need to be consistent with reality. In this respect, misinformation can be designed (as commented before, this does not need to be a conscious process) to appeal to cognitive preferences more than truthful information can. If there is any advantage for misinformation, this could be one. This perspective differs starkly from the widespread idea that misinformation is low-quality information that succeeds in spreading because of shortcomings of digital media (Del Vicario et al. 2016; Qiu et al. 2017). Quite the opposite, misinformation, or at least some of it, is very high-quality information. The difference is that ‘quality’ is not about truthfulness, but about how it fits with our cognitive predispositions.

Finally, this study represents an exploratory, descriptive analysis, mainly aimed at illustrating the possibility that cognitive factors can contribute to our understanding of the spreading of misinformation. Future work should test specific hypotheses, such as comparing false and real news with respect to the presence of cognitively appealing features, or examining how these features contribute to the relative success of each article. In addition, topic modelling techniques could be used to investigate whether the same attractive features could be automatically detected, making it possible to expand the sample studied.

Data availability

The full coding results and the dataset used for this analysis, including (i) the list of the 26 websites sampled, (ii) the 260 links to the articles coded, and (iii) the full texts of the 260 articles are available in an Open Science Framework repository: https://osf.io/78vhn/

References

Acerbi A (2016) A cultural evolution approach to digital media. Front Human Neurosci 10:636

Allcott H, Gentzkow M (2017) Social media and fake news in the 2016 election. J Econ Perspect 31(2):211–36

Bebbington K, MacLeod C, Ellison TM, Fay N (2017) The sky is falling: evidence of a negativity bias in the social transmission of information. Evol Human Behav 38(1):92–101

Blaine T, Boyer P (2018) Origins of sinister rumors: a preference for threat-related material in the supply and demand of information. Evol Human Behav 39(1):67–75

Blancke S, Van Breusegem F, De Jaeger G, Braeckman J, Van Montagu M (2015) Fatal attraction: the intuitive appeal of gmo opposition. Trends Plant Sci 20(7):414–418

Boyer P (1994) The naturalness of religious ideas: A cognitive theory of religion. University of California Press, Berkeley and Los Angeles

BuzzFeed (2017) These are 50 of the biggest fake news hits on facebook in 2017. https://www.buzzfeednews.com/article/craigsilverman/these-are-50-of-the-biggest-fake-news-hits-on-facebook-in. Accessed 17 Dec 2018

Cerridwen A, Simonton DK (2009) Sex doesn’t sell—nor impress! Content, box office, critics, and awards in mainstream cinema. Psychol Aesthet Creat Arts 3(4):200

Chakraborty A, Paranjape B, Kakarla S, Ganguly N (2016) Stop clickbait: detecting and preventing clickbaits in online news media. In: Proceedings of the 2016 IEEE/ACM international conference on advances in social networks analysis and mining. IEEE Press, Piscataway, New Jersey, United States, pp. 9–16

Clasen M (2007) Why horror seduces. Oxford University Press, Oxford

Curtis VA (2007) Dirt, disgust and disease: a natural history of hygiene. J Epidemiol Community Health 61(8):660–664

Del Vicario M, Bessi A, Zollo F, Petroni F, Scala A, Caldarelli G, Stanley HE, Quattrociocchi W (2016) The spreading of misinformation online. Proc Natl Acad Sci 113(3):554–559

Eriksson K, Coultas JC (2014) Corpses, maggots, poodles and rats: emotional selection operating in three phases of cultural transmission of urban legends. J Cogn Cult 14(1-2):1–26

Eriksson K, Coultas JC, De Barra M (2016) Cross-cultural differences in emotional selection on transmission of information. J Cogn Cult 16(1-2):122–143

Facebook (2018) Facebook reports first quarter 2018 results. https://investor.fb.com/investor-news/press-release-details/2018/Facebook-Reports-First-Quarter-2018-Results/default.aspx. Accessed 17 Dec 2018

Fessler DM, Pisor AC, Navarrete CD (2014) Negatively-biased credulity and the cultural evolution of beliefs. PloS One 9(4):e95167

Guess A, Nyhan B, Reifler J (2018) Selective exposure to misinformation: Evidence from the consumption of fake news during the 2016 US presidential campaign. European Research Council, Brussels, Belgium

Heath C, Bell C, Sternberg E (2001) Emotional selection in memes: the case of urban legends. J Personal Social Psychol 81(6):1028

Hester JB, Gibson R (2003) The economy and second-level agenda setting: A time-series analysis of economic news and public opinion about the economy. J Mass Commun Q 80(1):73–90

Keil FC (1992) Concepts, kinds, and cognitive development. MIT Press, Cambridge

Mar RA, Oatley K (2008) The function of fiction is the abstraction and simulation of social experience. Perspect Psychol Sci 3(3):173–192

Mercier H, Majima Y, Miton H (2018) Willingness to transmit and the spread of pseudoscientific beliefs. Appl Cogn Psychol 32(4):499–505

Mesoudi A, Whiten A, Dunbar R (2006) A bias for social information in human cultural transmission. Br J Psychol 97(3):405–423

Morin O (2016) How traditions live and die. Oxford University Press, Oxford

Morin O, Acerbi A (2017) Birth of the cool: a two-centuries decline in emotional expression in Anglophone fiction. Cognition Emotion 31(8):1663–1675

Niven D (2001) Bias in the news: Partisanship and negativity in media coverage of presidents George Bush and Bill Clinton. Harv Int J Press/Polit 6(3):31–46

Norenzayan A, Atran S, Faulkner J, Schaller M (2006) Memory and mystery: the cultural selection of minimally counterintuitive narratives. Cogn Sci 30(3):531–553

Petersen M, Osmundsen M, Arceneaux K (2018) A ‘need for chaos’ and the sharing of hostile political rumors in advanced democracies. PsyArXiv preprint, https://doi.org/10.31234/osf.io/6m4ts

Pew Research Centre (2018a) Internet/broadband fact sheet. http://www.pewinternet.org/fact-sheet/internet-broadband/. Accessed 17 Dec 2018

Pew Research Centre (2018b) Sources shared on Twitter: a case study on immigration. http://www.journalism.org/2018/01/29/sources-shared-on-twitter-a-case-study-on-immigration/. Accessed 17 Dec 2018

Qiu X, Oliveira DF, Shirazi AS, Flammini A, Menczer F (2017) Limited individual attention and online virality of low-quality information. Nat Human Behav 1(7):0132

Rozin P, Royzman EB (2001) Negativity bias, negativity dominance, and contagion. Personal Social Psychol Rev 5(4):296–320

Snopes (2016) Comet ping pong pizzeria home to child abuse ring led by Hillary Clinton. https://www.snopes.com/fact-check/pizzagate-conspiracy/. Accessed 17 Dec 2018

Sperber D, Hirschfeld LA (2004) The cognitive foundations of cultural stability and diversity. Trends Cogn Sci 8(1):40–46

Stubbersfield JM, Flynn EG, Tehrani JJ (2017) Cognitive evolution and the transmission of popular narratives: a literature review and application to urban legends. Evolut Stud Imaginative Cult 1(1):121–136

Stubbersfield JM, Tehrani JJ, Flynn EG (2015) Serial killers, spiders and cybersex: Social and survival information bias in the transmission of urban legends. Br J Psychol 106(2):288–307

Stubbersfield JM, Tehrani JJ, Flynn EG (2018) Faking the news: Intentional guided variation reflects cognitive biases in transmission chains without recall. Cult Sci J 10(1):54

van der Meer TG, Kroon AC, Verhoeven P, Jonkman J (2018) Mediatization and the disproportionate attention to negative news: The case of airplane crashes. Journal Stud. https://doi.org/10.1080/1461670X.2018.1423632

van Leeuwen F, Parren N, Miton H, Boyer P (2018) Individual choose-to-transmit decisions reveal little preference for transmitting negative or high-arousal content. J Cogn Cult 18(1-2):124–153

Vosoughi S, Roy D, Aral S (2018) The spread of true and false news online. Science 359(6380):1146–1151

Wikipedia (2018) Global internet usage. https://en.wikipedia.org/wiki/Global_Internet_usage. Accessed 17 Dec 2018

Wirtz JG, Sparks JV, Zimbres TM (2018) The effect of exposure to sexual appeals in advertisements on memory, attitude, and purchase intention: a meta-analytic review. Int J Advert 37(2):168–198

Acknowledgements

This research was supported by a grant from The Netherlands Organisation for Scientific Research (NWO VIDI-grant 016.144312). I thank Christa Emmett, Siobhan Kaus, Lucy Mason, and Amy Staff for their work as additional coders, and Daphné Kerhoas for introducing them to me. Sacha Yesilaltay spotted an imprecision in the preprint.

Ethics declarations

Competing interests

The author declares no competing interests.

Additional information

Publisher’s note: Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Acerbi, A. Cognitive attraction and online misinformation. Palgrave Commun 5, 15 (2019). https://doi.org/10.1057/s41599-019-0224-y
