Abstract

We applied detrended fluctuation analysis, power spectral density, and eigenanalysis of detrended cross-correlations to investigate fMRI data representing a diurnal variation of working memory in four visual tasks: two verbal and two nonverbal. We show that the degree of fractal scaling is regionally dependent on the engagement in cognitive tasks. A particularly apparent difference was found between memorisation in verbal and nonverbal tasks. Furthermore, the detrended cross-correlations between brain areas were predominantly indicative of differences between resting state and other tasks, between memorisation and retrieval, and between verbal and nonverbal tasks. The fractal and spectral analyses presented in our study are consistent with previous research related to visuospatial and verbal information processing, working memory (encoding and retrieval), and executive functions, but they were found to be more sensitive than Pearson correlations and showed the potential to obtain other subtler results. We conclude that regionally dependent cognitive task engagement can be distinguished based on the fractal characteristics of BOLD signals and their detrended cross-correlation structure.

Similar content being viewed by others

Introduction

Working memory is the foundation for goal-directed behaviours in which short-term storage and manipulation of sensory information are crucial for successful task execution. In understanding working memory as a core cognitive process it is essential to investigate the selection, initiation, and termination of information-processing, in other words, stages of the memory process. In our study we utilised one of the most widely used experimental method for memory investigation—the Deese–Roediger–McDermott (DRM) paradigm1,2 in which the temporal subprocesses of working memory such as encoding and retrieval are separated. This paradigm is a popular research tool because of its methodological simplicity and controllability. Originally used to study long-term memory, it was later adapted to the working memory and functional magnetic resonance imaging (fMRI) environment3,4, allowing measurement of neural activity while a person memorises and retrieves information. In the classical DRM paradigm participants are presented with a list of semantically related words at the encoding stage but the paradigm has been successfully used with other stimuli which allowed us to employ the modified versions of the DRM paradigm in four experimental visual tasks: two verbal and two nonverbal. Studies on neural correlates of working memory revealed mainly activations of the prefrontal or visual regions5. In recent years, functional activity in general has been intensely analysed with a variety of methods6. However, blood oxygen-level-dependent (BOLD) signals have a nontrivially associated autocorrelation and cross-correlation structure7 and remain notoriously challenging to analyse due to their very low temporal resolution. Motivated by prior work, we applied the fractal methodology to test for regional differences in BOLD scaling properties between the tasks and experimental phases of a working memory experiment.

Many natural systems representing diverse scientific disciplines such as physics, chemistry, biology, economics, and social sciences can be considered complex systems8. These systems consist of numerous nonlinearly interacting elements and reveal extremely complex dynamical behaviour challenging to characterise by the standard analytic tools. Despite their complexity, the systems reveal some universal features, with fractality being one of the most intriguing effects among them. It is the consequence of scale-free—multiscale—data/system organisation and, thus, the system’s complexity can be quantitatively measured by fractal dimensions. Moreover, recognising the fractal structure can manifest the system’s underlying process, like self-organised criticality or cascade processes9,10. A clear example is a human brain, for which fractal organisation has been identified in morphological and physiological properties11,12. Moreover, in recent years this methodology, as very effective in detecting long-range temporal correlations, has also been developed in the cross-correlation case and used to quantify the nonlinear coupling between time series in economic systems13.

However, the proper quantification of the temporal organisation of the data is a subtle task, especially when the data are nonstationary14. For fractals, the considered data’s correlation structure obeys a power law which denotes long-range temporal or spatial correlations. Thus, the system (or signal) properties are evaluated on different scales to detect such correlations. To do this, one can use the autocorrelation function with correlations estimated at different time lags. However, determining the correlation in this way is problematic due to the noise superimposed on the data collected15,16. As a result, such data nonstationarity can lead to inaccurate estimation of the scaling exponents. Thus, one is interested in methodology, eliminating the trends without knowing their origin and shape. In this respect, two approaches are commonly used15: one related to the investigation of the signal in the frequency domain and the other directly in the time domain. In the former case, analysis of the signal wavelet transform reveals the correlation structure of the data at different levels of temporal resolution. One can also use Fourier transform methods to assess the scaling exponents as a complementary method. However, in this case, the nonstationarity can affect the calculations. In the latter approach, through estimating the detrended variance on different time scales, one can evaluate the Hurst exponents and quantify the level of data persistency.

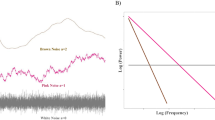

Fractal-like or spectral methods can also help capture the information hidden in physiological signals. These methods are used successfully in the study of EEG signals17,18,19, identifying the signature of scale-free dynamics. The temporal characteristics of the fMRI signals have also been demonstrated to follow the power-law relationship expressed in the frequency domain f in the form \(P(f) \propto 1/f^\beta\) with \(\beta >0\)20 and the spatiotemporal functional correlations have been related to the dynamics of a critical system21. Recently, also the fractal methodology was also applied to analyse fMRI data22. Moreover, several studies showed that the fractal properties can vary between brain regions as well as during task activation23,24,25.

Our study focused on the temporal organisation of data related to involvement in various cognitive tasks. To this end, we applied the fractal methodology to investigate the correlation structure of the time series related to four different experimental visual tasks: nonverbal (abstract objects requiring global or local information processing) and verbal (words or pseudowords), phases (information encoding and retrieval) and times of day of a working memory experiment. Two well-established methods of estimating the self-affinity parameter representing power-law autocorrelation signal properties, namely detrended fluctuation analysis and power spectrum density, have been applied in this context. Moreover, the nonlinear cross-correlation coefficient has been used to quantify the strength of the coupling between the time series under investigation. Our results confirm that both fractal characteristics of the time series organization and cross-correlation between brain areas are strongly associated with the brain activity related to engagement in a given task.

The organisation of the paper is as follows; in “Data” we provide a summary of data specification and preprocessing. Section “Methods” presents the methods of fractal, spectral, and cross-correlation analysis; a brief summary of the consecutive steps of data preprocessing and analysis is depicted in Fig. 1. Results of the analyses are presented in “Results”. Finally, in “Discussion” the findings are discussed and conclusions presented.

Results

Analysis of the fractal organisation of the time series

At the beginning, we estimated the scaling properties of the time series extracted from the specific brain regions (ROIs; regions of interest) in four experimental tasks (visual-verbal: semantic, SEM, and phonological, PHO, and visual-nonverbal: local information processing, LOC, and global information processing, GLO) by applying both the DFA and PSD presented in “Methods”. Examples of the analysed data are depicted in “Data”. For each session, we estimated the fluctuation function F(s) and the power spectral density S(f) of the time series in each ROI in a given task. The corresponding quantities were averaged over all sessions, yielding the averages \(\langle F(s)\rangle\) and \(\langle S(f) \rangle\) for each ROI and task separately. Based on the power-law dependence they clearly follow, as shown in detail in “Detrended fluctuation analysis”, the Hurst and \(\beta\) exponents were estimated for both tasks and the resting state and depicted in Fig. 2. The difference in these exponents between the resting state and the tasks is clear. The extreme values of the scaling exponents were obtained for the resting state with \(\beta \in [0.1, 1.4]\) and \(H\in [0.9, 1.2]\). In most cases, the theoretical relation between \(\beta\) and H (7) was fulfilled. Still, we note that in some ROIs the \(\beta\) exponent dropped and assumed low values, which was not observed for the Hurst exponent. This is due to the nonstationarity of the signal, which is removed in the DFA-based methods but can strongly influence the \(\beta\) values.

The estimated exponents indicate strongly correlated signals similar to the 1/f process, which is a ubiquitous phenomenon in physical and biological processes. However, the signals corresponding to task performance were much less autocorrelated and characterised by exponents \(\beta\) and H within the range between [0.2, 0.8] and [0.49, 0.9], respectively. Moreover, the variation of the exponents for different ROIs was similar for all kinds of task. In figures such as Fig. 2, we group ROIs belonging to the same resting-state networks (RSN) for greater interpretability. The Hurst exponent assumed the highest values—suggesting a strong persistence of the signals—for the ROIs belonging to the Auditory RSN. On the other hand, the smallest H values characterise Visual II RSN.

Plots of the spectral, \(\beta\), and Hurst, H, scaling exponents estimated for the entire time series. The exponents were calculated for each ROI and ordered on the plot according to the AAL atlas (top labels) and resting-state networks (bottom labels). For evening session results, see Supplementary Fig. A.2. visual-verbal tasks (SEM semantic, PHO phonological), visual-nonverbal tasks (LOC local information processing, GLO global information processing), resting-state (rest).

To investigate possible differences in the fractal signal properties recorded in subsequent experimental phases, we extracted the time series separately from the memory encoding and retrieval phases, as described in Fig. 1 (see also examples in “Data preprocessing”). The PSD and DFA characteristics were then estimated and compared with respect to the time of day when the experiment was performed (morning, evening), the tasks and the phases of each task.

In Figs. 3 and 4, the estimated exponents \(\beta\) and H in the encoding and retrieval phases are presented. There are clear differences from the results obtained for the entire time series. The H values in the segmented tasks are higher than in the entire ones. On the other hand, the values of the resting state H obtained from similarly preprocessed data (cf. “Data preprocessing”) reveal a slightly weaker correlation level than for the original data. However, the most exciting observations come from comparing the scaling properties of different experimental phases. All tasks generally decreased H exponents with respect to the resting state (by 0.049 on average, PHO and SEM more than GLO and LOC), and even more so in the encoding phase (by another 0.020, especially PHO and SEM, then GLO but without interaction for LOC). For the encoding phase, the Hurst exponent variation is greater than those estimated for the retrieval phase. The variance of H due to ROIs is more than twice the residual variance of the mixed model, indicating that finding interactions between tasks and individual ROIs should be the next step.

For the AAL regions belonging to the Visual and Sensorymotor RSNs, the values of H drop significantly. This effect is especially strong for verbal tasks (SEM and PHO) for which \(H \approx 0.5\) (Visual II) and \(H \approx 0.7\) (Motor), which is characteristic of uncorrelated and moderately positively correlated data, respectively. It is not as conspicuous in the case of visuospatial tasks (GLO and LOC) where a significant difference between resting state and task is mainly visible for Visual RSN. The Hurst exponent’s highest values indicate the 1/f process identified for the Auditory RSN, similarly to the resting state data. The H values in Visual RSN are higher in the memory retrieval phase than in encoding; however, there is still a noticeable decrease with respect to the resting state. Moreover, in retrieval, there is hardly any difference between verbal and visuospatial tasks. We have found no significant effect of time-of-day (TOD, morning and evening sessions), but there was one significant positive interaction of TOD with the PHO task. The code for and full output of the statistical model are provided in the Supplementary Listing 1 and Table C.1.

(Left panel) Plots of the spectral, \(\beta\), and Hurst, H, scaling exponents estimated for time series in the encoding phase. The exponents were calculated for each ROI and ordered on the plot according to the AAL atlas (top labels) and resting-state networks (bottom labels). (Right panel) Glass brain plot of \(\langle H \rangle\) differences between the GLO and PHO tasks rendered with nilearn26,27,28. For evening session results, see Supplementary Fig. A.3. visual-verbal tasks (SEM semantic, PHO phonological), visual-nonverbal tasks (LOC local information processing, GLO global information processing), resting-state (rest).

(Left panel) Plots of the spectral, \(\beta\), and Hurst, H, scaling exponents estimated for time series in the retrieval phase. The exponents were calculated for each ROIs and ordered on the plot according to the AAL atlas (top labels) and resting-state networks (bottom labels). (Right panel) Glass brain plot of \(\langle H \rangle\) differences between the GLO and PHO tasks rendered with nilearn26,27,28. For evening session results, see Supplementary Fig. A.4. visual-verbal tasks (SEM semantic, PHO phonological), visual-nonverbal tasks (LOC local information processing, GLO global information processing), resting-state (rest).

To illustrate the difference in the hierarchical structure of the analysed signals, we applied the wavelet transform. This method is capable of revealing the self-similar properties of the signals, which can be presented as a heat map on a space-scale half-plane called a scalogram. The scalogram in Fig. 5 represents the sample signals (ROI no. 54, Visual II RSN, TOD evening) for which the most evident differences between the scaling properties of two tasks, GLO and SEM, appear with respect to the experimental phase. The \(W_{\psi }\) amplitude is coded by 256 colours. It is easy to notice the hierarchical structure underlying the fluctuations. The wavelet transform produces a kind of branching structure with large regions of high amplitude on a large scale and a much denser distribution of singularities on the smaller one. Moreover, for the semantic task in the encoding phase, the concentration of the high amplitude on a small scale is also noticeable. It indicates the high volatility of the signal and the strong singularities quantified by small values of scaling exponents H.

Scalogram of the signals from ROI no. 54 (Visual II RSN) in evening (eve.) for two tasks, GLO and SEM, in memory encoding and retrieval phases. The \(W_{\psi }\) amplitude is coded by 256 colours. Experimental phases (ENC encoding, RET retrieval), visual-verbal tasks (SEM semantic), visual-nonverbal tasks (GLO global information processing).

Cross-correlations study

From the perspective of cross-correlation analysis, we can identify which ROIs are the most correlated with the other ones. To measure it, we averaged \(\rho (q=1,s=10)\) over all subjects; next, we calculated the absolute difference of each element of the correlation matrix between the four tasks and resting-state; finally, for a given ROI we averaged the difference over all the other ROIs, \(\langle \rho (q=1,s=10)\rangle\); the results are presented in Fig. 6.

In the case of visuospatial tasks (GLO and LOC), the largest difference from the resting state is reached for Visual I and Visual II RSNs. For the verbal tasks (PHO and SEM), again the largest difference is for the Visual II region, but also for the sensory-motor and part of the executive areas, especially in the encoding phase of the experiment.

We also checked whether the largest eigenvalues calculated for the correlation matrix of 116 ROIs in the task were significantly different from the resting state. As expected, naturally ordered eigenvalues, \(\lambda _i\), showed much weaker differences, since they were associated with noisier eigenvectors than the clustered eigenvalues, as visible in Fig. 7. Henceforth, we focus only on the clustered eigenvalues, \(\tilde{\lambda }_i\). The eigenvalues of Pearson correlations showed fewer differences, which appeared dispersed among several eigenvalues and had smaller magnitudes (for instance, when testing for all pairwise differences between GLO, LOC, PHO, SEM and resting-state, \(\tilde{\lambda }_i\) with \(i=5, 11, 13\) showed 2-4 significant differences each and their magnitudes lied between 0.9 and 2.5). From the perspective of \(\rho (q=1,s=10)\) coefficient, taking into account also nonlinear correlations, more differences appeared, they were localised in larger eigenvalues and had larger magnitudes (in the same pairwise comparisons as above, \(\tilde{\lambda }_1\) contained six differences of magnitude between 4.0 and 8.8, \(\tilde{\lambda }_2\) four differences ranging between 3.4 and 4.6, and many smaller ones for \(i=3,4,5,7,9,10,15\)). There were significant differences between morning and evening; however, they appeared only in three eigenvalues for Pearson and two for \(\rho (q=1,s=10)\) correlations. For details, see Supplementary Tables B.2–B.7.

In particular, Fig. 7 (bottom) shows that the largest clustered eigenvalue indicates differences not only between the resting state and the tasks, but also between the encoding and retrieval phases. Similarly, the largest clustered eigenvalue shows differences not only between the resting state and GLO, LOC, PHO, and SEM (4.5***, 4.0**, 6.6*** and 8.8***, respectively), but also between SEM and both GLO and LOC (− 4.3**, − 4.8***). Such differences (either the tasks vs resting state or GLO vs PHO and SEM, and LOC vs PHO) repeat also in other eigenvalues, although they are fewer per eigenvalue.

In Fig. 7 we show an example of an eigenvector associated with the largest eigenvalue of the \(\rho\) correlation matrix. Clustering of the eigenvectors was effective, as reflected by the smaller error bars compared to the unclustered principal eigenvectors. This eigenvector encodes, for instance, opposite activations of such RSNs as Executive Function and DMN versus Sensorymotor and Visual II, with some other RSNs visibly divided, e.g., Visual III or Basal Ganglia. The principal eigenvector also shows less variance in the resting state than in encoding and retrieval phases. Particularly more active in tasks are the Executive Function, DMN, Sensorymotor, Visual II and III RSNs. Even though the pattern between encoding and retrieval is almost identical, the minima and maxima have a systematically greater spread in encoding, reflecting, among others, their greater consistency across subjects and tasks. Naturally, the eigenvectors corresponding to the other results in Supplementary Tables B.2–B.5 encode subtler effects. They show different patterns of ROI contributions, and each will need a separate interpretation and validation.

Average elements of (top left) the principal eigenvector and (bottom left) the first aligned eigenvector of \(\rho\) correlation matrices for encoding, retrieval, and resting state. The bars mark standard errors over all subjects, tasks, and conditions. Histograms of eigenvalues of (top right) the principal eigenvector and (bottom right) the first aligned eigenvector of nonlinear correlation matrices for encoding, retrieval and resting state pooled over all subjects, tasks and conditions. The values below the legend indicate the location differences between pairs of distributions. Experimental phases (ENC encoding, RET retrieval), resting-state (rest).

Discussion

Analysis of BOLD signal scaling properties reveals significant differences between task performance and resting state. Resting-state signals are more regular than those related to task phases (see Fig. 2). This observation is quantitatively described by the Hurst exponents \(\langle H \rangle \approx 1.1\) for resting state and \(\langle H \rangle \approx 0.7\) for tasks. The most distinct BOLD signals are related to Auditory (highly correlated), AAL ROI 83-84 (left and right superior temporal poles), and 87-88 (left and right middle temporal poles), and Visual II (minimally correlated), ROI 53-54 (left and right inferior occipital gyrus). The temporal pole is a heterogeneous structure and many studies have demonstrated its role in various cognitive functions29. It is engaged in both visual memory processing30 and semantic functions31,32. Previous research confirmed the dense connections between the dorsolateral temporal pole and the medial temporal cortex and proved the involvement of the temporal pole in memory33. The inferior occipital gyrus belonging to the Visual II network participates in primary visual processing, as well as word identification as part of the visual word form area34. These results are also consistent with the study by Churchill et al. (2016)25, who showed that cognitive effort decreases fractal scaling throughout the brain. It should be noted that the value of H in resting-state indicates 1/f process commonly identified in nature.

However, the most intriguing results come from the analysis of the encoding and retrieval phases. The Hurst exponents estimated especially for the Visual II network are low compared to the other ROIs, whereby the differences are the most significant for the encoding phase. Visual cortex activity is associated with the processing of stimuli in working memory tasks and has been shown to distinguish correct and incorrect stimuli at both encoding and retrieval5. In retrieval, parts of the early visual processing areas had been found to be preferentially linked to correct recognition when compared to false ones, and these differences were linked to a sensory reactivation of memorised stimuli3,35, namely, true recognitions were associated with more sensory details than false ones. Similarly, visual cortex activity had also been linked to imagery retrieval of sensory details when recognising information from memory36. Taking these findings into consideration, the differences in H values between our tasks (the lowest H in visual-verbal tasks and particularly high differences during encoding) could be linked to a larger amount of sensory details (to be encoded and then retrieved) in visuospatial stimuli than in verbal material. Based on the results obtained, it is easy to distinguish between the experimental phase (encoding/retrieval) and the task type (visual-verbal/nonverbal). The recent EEG study37 provides evidence for dynamics of encoding and maintenance processes of working memory. The results show a temporally stable coding scheme with possible dynamics during working memory maintenance. The results of our study using fMRI and fractal and spectral analysis indicate differences in dynamics within encoding and retrieval phases. Both studies confirm a dynamic-processing model of working memory and demonstrate the necessity of applying the new methodological and computational approaches.

According to the detrended cross-correlation analysis, \(\rho (q)\), the most significant differences in the level of correlation as compared to resting-state are in the areas of Visual I and Visual II for the visuospatial tasks and in the Visual II and Sensorymotor networks for the verbal tasks. The above differences are more visible when correlations are measured with the coefficient \(\rho (q,s)\), which, in addition to linear correlations, can identify nonlinear relationships. From this perspective, the most outstanding networks are DMN (default mode network), Sensorymotor, Cerebellum, and Visual II (ROI 53,54—inferior occipital gyrus). DMN consists of the posterior cingulate cortex, angular gyrus, and precuneus and is traditionally thought to be active while mind-wandering at wakeful rest. However, more recent studies showed its involvement during thoughts about task performance38. The precuneus had been found to be involved in the encoding and retrieval processes of episodic memory, executive functions, and visuospatial imaginary (for a review, see: Cavanna and Trimble, 200639). Rao and colleagues (2003)40 showed that both the angular gyrus and the precuneus are activated during the spatial location information processing. Furthermore, some studies confirmed the involvement of the precuneus in attention41. The cerebellum, which is similar in structure to the cerebral cortex, has its own functional architecture, where individual lobules are involved in various motor and cognitive functions (for a review, see: Stoodley and Schmachmann, 200942). Among others, lobule VI and vermis had been reported to be activated during spatial, attentional, and working memory tasks43,44. Our results are in line with the studies mentioned above, showing differences in correlations in lobule VI and vermis of the cerebellum. Sensorimotor network (ROI: 1, 2—left and right precentral gyrus, 57, 58—left and right postcentral gyrus, and 69, 70—left and right paracentral lobules) is activated primarily during processing of sensory and motor information. However, there is evidence that the precentral gyrus is involved in the retrieval of false memories45. Both the precentral and postcentral gyri were also previously reported in a similar visuospatial working memory to show correctness-related differences in activity when younger adults processed a lure46. The one limitation of the presented analysis is that it does not allow distinguishing between positive responses and false memories. Another technique based on extracting short significant fMRI events can be used for this purpose47.

Conclusions

To our knowledge, this is the first time that spectral and fractal analysis were used to investigate the encoding and retrieval processes of the working memory. The presented results clearly show that the temporal organisation of the fMRI time series contains distinctive information about brain activity related to different types of tasks and phases of working memory. Analysis of the scaling properties of the signals revealed that those corresponding to task performance are significantly different from those in the resting state. The differences in signals between encoding and retrieval phases showed that the used methods of analysis are sensitive to dynamic changes in these processes. The results of the fractal and spectral analysis presented in our study—consistent with previous research related to visuospatial and verbal information processing, working memory (encoding and retrieval), and executive functions—shed new light on these processes.

Data

Below, in “Participants” and “Tasks” we provide a summary of the data from the experiment described at length by Lewandowska et al. (2018)48. Details on fMRI data acquisition and preprocessing can be found in Fafrowicz et al. (2019)49. In “Data preprocessing” we provide processing details specific to the current paper.

Participants

The volunteers first underwent an online sleep-wake assessment that included night sleep quality—Pittsburgh Sleep Quality Index (PSQI)50and daytime sleepiness—Epworth Sleepiness Scale (ESS)51, and completed sleep-wake assessment diurnal preference as measured by the Chronotype Questionnaire52. Exclusion criteria were: age below 19 or above 35, left-handedness, psychiatric or neurological disorders, dependence on drugs, alcohol or nicotine, shift work or travel involving moving between more than two time zones within the past two months, ESS score above 10 points. Next, they were qualified for the study with genetic testing for the polymorphism of the clock gene PER3, which was considered a hallmark of extreme diurnal preferences53. Finally, 54 subjects were selected (32 females, mean age: 24.17±3.56 y.o.) divided into 26 morning-oriented and 28 evening-oriented types based on the Chronotype Questionnaire and PER3 genotyping.

The volunteers signed an informed consent form and were remunerated for their participation in the experiment. The study was carried out in accordance with the Declaration of Helsinki and approved by the Research Ethics Committee at the Institute of Applied Psychology at the Jagiellonian University.

Tasks

Data were collected at two times of the day (TOD; morning and evening), four experimental visual tasks (verbal: semantic and phonological, ‘SEM’ and ‘PHO’, and nonverbal: processing of global or local characteristics of abstract objects, ‘GLO’ and ‘LOC’), two phases of each task (encoding, ‘ENC’, and retrieval, ‘RET’) and resting state (‘rest’). The four tasks were based on the Deese-Roediger-McDermott paradigm1,2 and specifically the version dedicated to studying short-term/working memory distortions4. In the semantic task, the stimuli were Polish words matched by semantic similarity. In the phonological task, meaningless pseudowords characterised by phonological similarity were used. On the other hand, in the global processing task, the stimuli were abstract figures requiring holistic processing (differing in the number of overlapping similarities). In the local processing task, the stimuli differed in one specific detail and thus required local processing.

In each task, participants were asked to memorise sets of stimuli (encoding phase, 1.2–1.8 s) which were followed by either simple distractors (in verbal tasks, 2.2 s) or masks (in visuospatial tasks, 1.2 s). Then (after about 6.1 s), another single stimulus, called probe, appeared on the screen (2 s). The probe stimulus came in one of three possible types: positive (it had appeared in encoding), negative (it was new and dissimilar to the encoding ones) or lure (it was new but associated with or similar to one of the encoding stimuli). At this point (retrieval phase), participants were asked to answer whether the stimulus had appeared in the preceding set (with the right-hand key for ”yes” and the left-hand key for ”no” responses). After an 8.4-s intertrial interval, a fixation point (450 ms) and a blank screen (100 ms) were presented, followed by a new set.

There were 60 sets of stimuli followed by 25 positive probes, 25 lures, and 10 negative probes in each task. Sample experimental tasks are depicted in Supplementary Fig. A.1. The behavioural data on the response accuracy and reaction times for each task and probe type are presented in Supplementary Table B.1.

Each participant performed the tasks twice: during morning and evening sessions, two versions of each task were created and presented in a random way, and the order of the presented stimuli was randomised as well. The resting state with eyes open was recorded for 10 minutes. One day before the study was conducted, participants were familiarised with the experimental procedure and underwent a training session. Further details of the experimental paradigm can be found in48.

Data preprocessing

BOLD signals were collected at repetition time TR \(= 1.8\text { s}\) with a 3T Siemens Skyra MR System and underwent the standard preprocessing described in49. The signals were then averaged within 116 brain regions (Regions of Interest, ROIs) from the Automated Anatomical Labeling (AAL1) brain atlas54. For a given experimental session, one can form the data in a matrix with 116 columns corresponding to the ROIs and the number of rows corresponding to the length of the time series. Examples of the time series are shown in Fig. 8 (left panel).

To highlight possible differences between the encoding and retrieval phases, we additionally preprocessed the time series as follows. For each experimental session, from the ROI time series matrix, all data segments related to the encoding (retrieval) phase were extracted and concatenated, in order of appearance, into a new matrix. Each segment started at the stimulus onset and was 10 s long (6–7 TRs), which approximately corresponds to the time width of the peak of the haemodynamic response function. In the case of encoding, the segments included presenting the distractor, and in the case of retrieval, they included the intertrial interval. For a given session, concatenation of segments from all 60 stimuli resulted in 400-TR long time series. To evaluate the statistical significance of the results, we also analysed the time series of resting state with eyes open preprocessed similarly to the task data. Therefore, in this case, the time series length of 400 time points was cut into nonoverlapping segments of \(~10\text { s}\) (the same length as in the task), which were then randomly shuffled, see Fig. 8 (right panel). Thus, we considered the time series corresponding to the memorisation, retrieval, and resting-state phases separately.

Methods

Analysis of the time series’ correlation structure is one of the primary methods for identifying patterns or dependencies in the data and inferring the system’s organisation responsible for generating the signal. We decided on applying two techniques of autocorrelation time series analysis, namely, detrended fluctuation analysis (DFA)55 and power spectral density (PSD)56. These two complementary methods can quantitatively describe the temporal multiscale organisation of the data and, hence, its fractal properties. Moreover, to qualitatively present the hierarchical structure of the time series, we applied wavelet-based multiresolution analysis. To characterise cross-correlations between the time series, matrices of the q-dependent detrended correlation coefficients (DCC) were analysed57. The diagram representing the consecutive steps of the data preprocessing and analysis is depicted in Fig. 1.

Fractal analysis of the time series

Detrended fluctuation analysis

One of the most reliable and commonly used methods to detect the self-affinity of the signal is the detrended fluctuation analysis proposed by Peng et al.55 to analyse DNA. The algorithm consists of the following steps. For a time series \(x_i\) of length N (\(i=1,2,\ldots ,N\)), first, calculate the profile

where \(\langle . \rangle\) denotes the average of \(x_i\) over the entire time series. Next, the profile is divided into \(2N_s\) nonoverlapping segments of length s (where \(N_s=\lfloor N/s \rfloor\) and \(\lfloor . \rfloor\) denotes the floor function), numbered by an index \(\nu\), starting from the beginning and the end of the time series. The local trend is estimated in each part \(\nu\) by fitting a polynomial \(P^{(m)}_{\nu }\) of the order m to the data and then is subtracted. The polynomial order m is a parameter of the method. According to the study14 a reasonable choice is \(m=2\), which we used in our analysis. In each segment, the detrended variance is calculated

Finally, the scale-dependent fluctuation function is estimated

For a fractal time series, one observes the power-law behaviour

where H denotes the Hurst exponent. Therefore, if the time series is only short-range correlated, the Hurst exponent assumes the value \(\sim\) 0.5. In the case of long-range fractal-correlated time series, a deviation of H from 0.5 is observed, respectively,

-

\(0.5<H<1\) for positively autocorrelated data (persistent signal),

-

\(0<H<0.5\) for negatively autocorrelated one (antipersistent signal).

The results for the GLO and SEM tasks (visuospatial and verbal, respectively) and resting-state are presented in Fig. 9. Since the present study focuses on short-term dependencies in the signals, the plots are restricted to a range of small scales. The presented characteristics clearly follow power laws within the scale range, s, considered. Furthermore, the scaling properties depend on the ROI, which can be easily seen by their different slopes of \(\langle F(s)\rangle\) and \(\langle S(f) \rangle\) in Fig. 9 (left panels). These quantities were recomputed after dividing the tasks into the experimental phase (encoding and retrieval) averaged over all participants, as shown in Fig. 9 (right panels). Even though the scaling length after the additional processing is shorter than the results for the full-length data, the scaling proprieties can still be identified.

The average of PSD, \(\langle S(f) \rangle\) and fluctuation functions, \(\langle F(s)\rangle\), estimated for two tasks (verbal semantic, SEM, and nonverbal global information processing, GLO) and resting-state (RES). (Left) Computed on entire time series. (Right) Computed after dividing time series into the experimental phases: encoding and retrieval. Each line corresponds to a different ROI.

Power spectrum and wavelet transform analysis

One of the main mathematical tools used to identify quasiperiodicity in time series is power spectrum analysis. It is able to characterise the linear correlations of the time series via the distribution of its variance (energy) at specific frequencies. The spectrum of the time series \(x_j\) of length N (\(j=0,1,\ldots ,N-1\)) is estimated via the square of the Fourier transform modulus:

where \(f=0,1,\ldots ,N-1\). In the case of fractal signals, the scaling relation is observed

where \(\beta\) is a spectral scaling exponent related to the Hurst exponent via the relation

Thus, DFA and PSD can be considered complementary methods of quantifying linear long-range correlations in fractal time series.

To emphasise differences in the hierarchical organisation of the time series analysed, we applied wavelet analysis58. By its virtue, the time series is decomposed into different periodic components taking into account the time of their occurrence. Thus, the wavelet transform can be considered a mathematical microscope that provides insight into the intrinsic organisation of the time series. The wavelet transform for discrete signals is defined as

where \(\psi\) is the wavelet well localised in the time and frequency domain, s denotes the scale parameter, and k is the wavelet’s position in time. In this work, we used the Mexican hat (the second derivative of the Gaussian) as a wavelet.

Cross-correlation analysis

To describe the nonlinear cross-correlations, in addition to the standard Pearson correlation coefficient59, we used q-dependent DCC, \(\rho (q,s)\)57. It allows one to quantify the cross-correlations between two time series \(x_i\) and \(y_i\) both at particular time scales s and with respect to the amplitude of fluctuations filtered by q. The procedure of estimating \(\rho (q,s)\) extends the formulas (2) and (3) to the case of the covariance function. Given the profiles \(X\left( j\right)\) and \(Y\left( j\right)\) of the time series [cf. (1)] the covariance is estimated as

for segments \(\nu =1,\ldots ,N_s\) and

for \(\nu =N_s+1,\ldots ,2N_s\), where \(P^{(m)}_{X,\nu }\) and \(P^{(m)}_{Y,\nu }\) denote polynomials fitted to the X(j) and Y(j) profiles, respectively. Then, q-th order covariance function13 is

where \(\mathrm{sign}(F_{xy}^{2}(\nu ,s))\) denotes the sign of \(F_{xy}^{2}(\nu ,s)\). The parameter q plays the role of a signal amplitude filter. Finally, the q-dependent DCC, \(\rho (q,s)\), is estimated as follows

where \(F_{xx}^{q}\), \(F_{yy}^{q}\) are calculated by means of (9) and (10) when only X(j) or Y(j) is separately considered. Furthermore, in our calculations we used \(q=1\), which ensures that the coefficient \(\rho (q,s)\) assumes values within the range \([-1,1]\), making its interpretation analogous to the Pearson correlation coefficient57.

Eigenvector alignment

Having computed the correlation matrix and its eigendecomposition for each configuration of the independent variables (subject, TOD, task, phase), one would like to statistically compare the distributions of eigenvalues between these configurations. Naïvely, the groups of all the largest eigenvalues could be compared, then the groups of all the second largest eigenvalues, and so on. However, the order of eigenvalues corresponding to a given correlation pattern may be contingent on interindividual variability. If the effect of, say, TOD were a shift in the distribution location of a given eigenvalue, then we might as well expect this shift to cause a change in the order of neighbouring eigenvalues. In such a case, the naïve approach would compare the eigenvalue distributions of different correlation patterns.

For these reasons, the eigenvalues were first grouped into sets corresponding to the same correlation pattern. The grouping was obtained by agglomerative hierarchical clustering of the eigenvectors. We used Ward’s method with squared Euclidean distance d(u, v) modified to allow reflection \(\tilde{d}(u,v)=\min (d(u,v),d(-u,v))\), since the eigenvector opposites remain eigenvectors. The clustering was performed on eigenvectors coming from all possible (subject, TOD, task, phase) configurations associated with large eigenvalues \(\lambda > 2\). This arbitrary cut-off lies above the standard upper fence for outliers (\(Q_3+1.5 IQR =1.5\) on average) and results in \(13.5\pm 1.1\) valid eigenvalues per configuration. Consequently, the clustering was set to produce 15 clusters. Additionally, when in a given cluster more than one eigenvector belonging to a given subject had the same (TOD, task, phase) configuration, only the one associated with the largest eigenvalue was kept, and the others were deleted from further analysis (\(24\%\) in total). The clusters were then ordered according to the average of member eigenvalues.

Statistical tests

To assess the statistical reliability of the results, we performed an analysis of the randomly shuffled data. This procedure destroys all temporal correlations in the time series but preserves its statistical distribution. The scaling properties of the surrogates can be quantified by the Hurst exponent \(H = 0.5\). We also tested a mixed linear model for the global effects of TOD, task, task phase and their interactions, with ROIs as random effects. We performed a stepwise model selection by dropping single terms based on \(\chi ^2\) tests.

We report tests that compare the distributions of correlation matrix eigenvalues in natural order and clustered eigenvalues. Individual data points corresponded to single subjects in a given condition. We used linear models with eigenvalues as the dependent variable and the independent variables: TOD (morning and evening), task (GLO, LOC, PHO, SEM, and resting-state), and task phase (encoding and retrieval; for resting-state we used three independent random samples) and all pairwise interactions. Next, we performed a stepwise model selection by dropping single terms based on F-tests. To assess the differences between levels within the remaining factors, due to the exploratory nature of the study, post-hoc we utilised the more conservative Scheffé method60. These results are provided below the legends in Fig. 7, right panels. We use the following significance codes: p<0.001 ‘***’, 0.01 ‘**’, 0.05 ‘*’. The R code and the data needed to reproduce all statistical tests’ results are provided in the Supplementary Information S2-S6.

Data availability

The functional MRI data analysed are available from Magdalena Fafrowicz (email: magda.fafrowicz@uj.edu.pl) on reasonable request.

References

Deese, J. On the prediction of occurrence of particular verbal intrusions in immediate recall. J. Exp. Psychol. 58, 17–22. https://doi.org/10.1037/h0046671 (1959).

Roediger, H. L. & McDermott, K. B. Creating false memories: Remembering words not presented in lists. J. Exp. Psychol. Learn. Mem. Cogn. 21, 803–814. https://doi.org/10.1037/0278-7393.21.4.803 (1995).

Slotnick, S. D. & Schacter, D. L. A sensory signature that distinguishes true from false memories. Nat. Neurosci. 7, 664–672. https://doi.org/10.1038/nn1252 (2004).

Atkins, A. S. & Reuter-Lorenz, P. A. Neural mechanisms of semantic interference and false recognition in short-term memory. Neuroimage 56, 1726–1734. https://doi.org/10.1016/j.neuroimage.2011.02.048 (2011).

Luiz, P., Gutierrez, E., Bandettini, P. & Ungerleider, L. Neural correlates of visual working memory: fMRI amplitude predicts task performance. Neuron 35, 975–87. https://doi.org/10.1016/s0896-6273(02)00817-6 (2002).

Lurie, D. J. et al. Questions and controversies in the study of time-varying functional connectivity in resting fMRI. Netw. Neurosci. 4, 30–69. https://doi.org/10.1162/netn_a_00116 (2020).

Ochab, J. K., Tarnowski, W., Nowak, M. A. & Chialvo, D. R. On the pros and cons of using temporal derivatives to assess brain functional connectivity. Neuroimage 184, 577–585. https://doi.org/10.1016/j.neuroimage.2018.09.063 (2019).

Kwapień, J. & Drożdż, S. Physical approach to complex systems. Phys. Rep. 515, 115. https://doi.org/10.1016/j.physrep.2012.01.007 (2012).

Chialvo, D. R. Critical brain networks. Phys. A Stat. Mech. Appl. 340, 1. https://doi.org/10.1016/j.physa.2004.05.064 (2004).

Hesse, J. & Gross, T. Self-organized criticality as a fundamental property of neural systems. Front. Syst. Neurosci. 8, 1. https://doi.org/10.3389/fnsys.2014.00166 (2014).

Johnson, J. K., Wright, N. C., Xià, J. & Wessel, R. Is the brain cortex a fractal?. J. Neurosci. 39, 1. https://doi.org/10.1523/JNEUROSCI.3163-18.2019 (2019).

Kiselev, V. G., Hahn, K. R. & Auer, D. P. Is the brain cortex a fractal?. Neuroimage 20, 1. https://doi.org/10.1016/s1053-8119(03)00380-x (2003).

Oświȩcimka, P., Drożdż, S., Forczek, M., Jadach, S. & Kwapień, J. Detrended cross-correlation analysis consistently extended to multifractality. Phys. Rev. E 89, 023305. https://doi.org/10.1103/PhysRevE.89.023305 (2014).

Oświȩcimka, P., Drożdż, S., Kwapień, J. & Górski, A. Effect of detrending on multifractal characteristics. Acta Phys. Pol., A 123, 597–603 (2013).

Kantelhardt, J. W., Koscielny-Bunde, E., Rego, H. H., Havlin, S. & Bunde, A. Detecting long-range correlations with detrended fluctuation analysis. Phys. A 295, 441–454 (2001).

Holl, M. & Kantz, H. The relationship between the detrendend fluctuation analysis and the autocorrelation function of a signal. Eur. Phys. J. B 88, 327 (2015).

Finotello, F., Scarpa, F. & Zanon, M. EEG signal features extraction based on fractal dimension. Annu. Int. Conf. IEEE Eng. Med. Biol. Soc. 4154–7, 2015. https://doi.org/10.1109/EMBC.2015.7319309 (2015).

Marino, M. et al. Neuronal dynamics enable the functional differentiation of resting state networks in the human brain. Hum. Brain Mapp. 40, 1445–1457 (2019).

Van De Ville, D., Britz, J. & Michel, C. M. EEG microstate sequences in healthy humans at rest reveal scale-free dynamics. Proc. Natl. Acad. Sci. 107, 18179–18184. https://doi.org/10.1073/pnas.1007841107 (2010).

Zarahn, E., Aguirre, G. K. & D’Esposito, M. Empirical Analyses of BOLD fMRI Statistics. Neuroimage 5, 179–197. https://doi.org/10.1006/nimg.1997.0263 (1997).

Fraiman, D. & Chialvo, D. What kind of noise is brain noise: anomalous scaling behavior of the resting brain activity fluctuations. Front. Physiol. 3, 1. https://doi.org/10.3389/fphys.2012.00307 (2012).

Porcaro, C., Mayhew, S. D., Marino, M., Mantini, D. & Bagshaw, A. P. Characterisation of haemodynamic activity in resting state networks by fractal analysis. Int. J. Neural Syst. 30, 2050061 (2020).

He, B. J. Scale-free properties of the functional magnetic resonance imaging signal during rest and task. J. Neurosci. 31, 13786–13795. https://doi.org/10.1523/jneurosci.2111-11.2011 (2011).

Barnes, A., Bullmore, E. T. & Suckling, J. Endogenous human brain dynamics recover slowly following cognitive effort. PLoS ONE 4, e6626. https://doi.org/10.1371/journal.pone.0006626 (2009).

Churchill, N. W. et al. The suppression of scale-free fMRI brain dynamics across three different sources of effort: Aging, task novelty and task difficulty. Sci. Rep. 6, 30895. https://doi.org/10.1038/srep30895 (2016).

Abraham, A. et al. Machine learning for neuroimaging with scikit-learn. Front. Neuroinf. 8, 1. https://doi.org/10.3389/fninf.2014.00014 (2014).

Gramfort, A. et al. nilearn. Version 0.9.0. URL: https://github.com/nilearn/nilearn (2022).

Pedregosa, F. et al. Scikit-learn: Machine learning in Python. J. Mach. Learn. Res. 12, 2825–2830 (2011).

Herlin, B., Navarro, V. & Dupont, S. The temporal pole: From anatomy to function-a literature appraisal. J. Chem. Neuroanat. 113, 1–11. https://doi.org/10.1016/j.jchemneu.2021.101925 (2021).

Roland, P. E., Gulyás, B., Seitz, R. J., Bohm, C. & Stone-Elander, S. Functional anatomy of storage, recall, and recognition of a visual pattern in man. NeuroReport 1, 53–56. https://doi.org/10.1097/00001756-199009000-00015 (1990).

Jouen, A. L. et al. Beyond the word and image: Characteristics of a common meaning system for language and vision revealed by functional and structural imaging. Neuroimage 106, 72–85. https://doi.org/10.1016/j.neuroimage.2014.11.024 (2015).

Jouen, A. L. et al. Beyond the word and image: II - structural and functional connectivity of a common semantic system. Neuroimage 166, 185–197. https://doi.org/10.1016/j.neuroimage.2017.10.039 (2018).

Córcoles-Parada, M. et al. Frontal and insular input to the dorsolateral temporal pole in primates: Implications for auditory memory. Front. Neurosci. 13, 1–21. https://doi.org/10.3389/fnins.2019.01099 (2019).

Vigneau, M., Jobard, G., Mazoyer, B. & Tzourio-Mazoyer, N. Word and non-word reading: What role for the visual word form area?. Neuroimage 27, 694–705. https://doi.org/10.1016/j.neuroimage.2005.04.038 (2005).

Slotnick, S. D. & Schacter, D. L. The nature of memory related activity in early visual areas. Neuropsychologia 44, 2874–2886. https://doi.org/10.1016/j.neuropsychologia.2006.06.021 (2006).

Dennis, N. A., Johnson, C. E. & Peterson, K. M. Neural correlates underlying true and false associative memories. Brain Cogn. 88, 65–72. https://doi.org/10.1016/j.bandc.2014.04.009 (2014).

Wolff, M. J., Jochim, J., Akyürek, E. G., Buschman, T. J. & Stokes, M. G. Drifting codes within a stable coding scheme for working memory. PLoS Biol. 18, 1–19. https://doi.org/10.1371/journal.pbio.3000625 (2020).

Sormaz, M. et al. Default mode network can support the level of detail in experience during active task states. Proc. Natl. Acad. Sci. 115, 9318–9323. https://doi.org/10.1073/pnas.1721259115 (2018).

Cavanna, A. E. & Trimble, M. The precuneus: A review of its functional anatomy and behavioural correlates. Brain 129, 564–583. https://doi.org/10.1093/brain/awl004 (2006).

Rao, H., Zhou, T., Zhuo, Y., Fan, S. & Chen, L. Spatiotemporal activation of the two visual pathways in form discrimination and spatial location: A brain mapping study. Hum. Brain Mapp. 18, 79–89. https://doi.org/10.1002/hbm.10076 (2003).

Simon, O., Mangin, J.-F., Cohen, L., Le Bihan, D. & Dehaene, S. Topographical layout of hand, eye, calculation, and language-related areas in the human parietal lobe. Neuron 133, 475–487. https://doi.org/10.1016/s0896-6273(02)00575-5 (2002).

Stoodley, C. & Schmachmann, J. Functional topography in the human cerebellum: A meta-analysis of neuroimaging studies. Neuroimage 44, 489–501. https://doi.org/10.1016/j.neuroimage.2008.08.039 (2009).

Küper, M. et al. Cerebellar fMRI activation increases with increasing working memory demands. The Cerebellum 15, 322–335. https://doi.org/10.1007/s12311-015-0703-7 (2015).

Brissenden, J. & Somers, D. Cortico-cerebellar networks for visual attention and working memory. Curr. Opin. Psychol. 29, 239–247. https://doi.org/10.1016/j.copsyc.2019.05.003 (2019).

Kurkela, K. & Dennis, N. Event-related fMRI studies of false memory: An activation likelihood estimation meta-analysis. Neuropsychologia 81, 149–167. https://doi.org/10.1016/j.neuropsychologia.2015.12.006 (2016).

Sikora-Wachowicz, B. et al. False recognitions in short-term memory—age-differences in neural activity. Brain Cogn. 151, 105728. https://doi.org/10.1016/j.bandc.2021.105728 (2021).

Ceglarek, A. et al. Non-linear functional brain co-activations in short-term memory distortion tasks. Front. Neurosci. 15, 778242. https://doi.org/10.3389/fnins.2021.778242 (2021).

Lewandowska, K., Wachowicz, B., Marek, T., Oginska, H. & Fafrowicz, M. Would you say “yes” in the evening? Time-of-day effect on response bias in four types of working memory recognition tasks. Chronobiol. Int. 35, 80–89. https://doi.org/10.1080/07420528.2017.1386666 (2018).

Fafrowicz, M. et al. Beyond the low frequency fluctuations: Morning and evening differences in human brain. Front. Hum. Neurosci. 13, 288. https://doi.org/10.3389/fnhum.2019.00288 (2019).

Buysse, D. J., Reynolds, C. F., Monk, T. H., Berman, S. R. & Kupfer, D. J. The Pittsburgh sleep quality index: A new instrument for psychiatric practice and research. Psychiatry Res. 28, 193–213. https://doi.org/10.1016/0165-1781(89)90047-4 (1989).

Johns, M. W. A new method for measuring daytime sleepiness: The Epworth sleepiness scale. Sleep 14, 540–545. https://doi.org/10.1093/sleep/14.6.540 (1991).

Oginska, H., Mojsa-Kaja, J. & Mairesse, O. Chronotype description: In search of a solid subjective amplitude scale. Chronobiol. Int. 34, 1388–1400. https://doi.org/10.1080/07420528.2017.1372469 (2017).

Archer, S. N. et al. A length polymorphism in the circadian clock gene Per3 is linked to delayed sleep phase syndrome and extreme diurnal preference. Sleep 26, 413–415. https://doi.org/10.1093/sleep/26.4.413 (2003).

Tzourio-Mazoyer, N. et al. Automated anatomical labeling of activations in SPM using a macroscopic anatomical parcellation of the MNI MRI single-subject brain. Neuroimage 15, 273–289. https://doi.org/10.1006/nimg.2001.0978 (2002).

Peng, C.-K. et al. Mosaic organization of DNA nucleotides. Phys. Rev. E 49, 1685 (1994).

Welch, P. The use of fast fourier transform for the estimation of power spectra: A method based on time averaging over short, modified periodograms. IEEE Trans. Audio Electroacoust. 15, 70 (1967).

Kwapień, J., Oświȩcimka, P. & Drożdż, S. Detrended fluctuation analysis made flexible to detect range of cross-correlated fluctuations. Phys. Rev. E 92, 052815. https://doi.org/10.1103/PhysRevE.92.052815 (2015).

Arneodo, A., Bacry, E.,Muzy, J.F. The thermodynamics of fractals revisited with wavelets. Phys. A 213, 232–275. https://doi.org/10.1016/0378-4371(94)00163-N (1995).

Pearson, K. Note on Regression and Inheritance in the Case of Two Parents. Proc. R. Soc. Lond. 58, 240–242 (1895).

Scheffe, H. The analysis of variance Vol. 72 (John Wiley & Sons, USA, 1999).

Acknowledgements

We would like to thank Prof. Patricia Reuter-Lorenz for her constructive suggestions during the planning and development of the Harmonia project and her valuable support. We also thank Anna Beres and Monika Ostrogorska for their assistance in data collection, Piotr Faba for his technical support on the project and help in data acquisition, and Aleksandra Zyrkowska and Halszka Oginska for help in the process of participant selection. The authors thank Ignacio Cifre for providing the AAL to RSN mapping.

Funding

The fMRI data analysis was funded by the Foundation for Polish Science co-financed by the European Union under the European Regional Development Fund in the POIR.04.04.00-00-14DE/18-00 project carried out within the Team-Net programme. The experimental study was funded by the Polish National Science Centre through grant Harmonia (2013/08/M/HS6/00042).

Author information

Authors and Affiliations

Contributions

M.F., K.L., B.S.-W., and T.M. conceptualized and established the fMRI study, M.F., K.L., and B.S.-W. collected the fMRI and behavioural data. A.C. performed behavioural data and fMRI preprocessing. J.O., M.W. and P.O. managed the statistical analysis, analysed the results, and prepared the figures. J.O., M.W., P.O., A.C., and M.F. interpreted the results, wrote and edited the manuscript. All authors reviewed the manuscript.

Corresponding authors

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher's note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Ochab, J.K., Wątorek, M., Ceglarek, A. et al. Task-dependent fractal patterns of information processing in working memory. Sci Rep 12, 17866 (2022). https://doi.org/10.1038/s41598-022-21375-1

Received:

Accepted:

Published:

DOI: https://doi.org/10.1038/s41598-022-21375-1

This article is cited by

-

Multifractal signal generation by cascaded chaotic systems and their analog electronic realization

Nonlinear Dynamics (2024)

-

Age- and Severity-Specific Deep Learning Models for Autism Spectrum Disorder Classification Using Functional Connectivity Measures

Arabian Journal for Science and Engineering (2023)

Comments

By submitting a comment you agree to abide by our Terms and Community Guidelines. If you find something abusive or that does not comply with our terms or guidelines please flag it as inappropriate.