Abstract

Head Start is a federally funded, nation-wide program in the U.S. for enhancing school readiness of children aged 3–5 from low-income families. Understanding heterogeneity in treatment effects (HTE) is an important task when evaluating programs, but most attempts to explore HTE in Head Start have been limited to subgroup analyses that rely on average treatment effects by subgroups. This study applies an extension of multilevel modelling, complex variance modelling, to data from a randomized controlled trial of Head Start, Head Start Impact Study (HSIS). The treatment effects on the variance, in addition to the mean, of nine cognitive and social-emotional outcomes were assessed for 4,442 children aged 3–4 years who were followed until their 3rd grade year. Head Start had positive short-term effects on the means of multiple cognitive outcomes while having no effect on the means of social-emotional outcomes. Head Start reduced the variances of multiple cognitive and one social-emotional outcomes, meaning that substantial HTE exists. In particular, the increased mean and decreased variance reflect the ability of Head Start to improve the outcomes and reduce their variability. Exploratory secondary analyses suggested that larger benefits for children with Spanish as a primary language and low parental educational level partly explained the reduced variability, but the HTE remained and the variability was reduced even within these subgroups. Routinely monitoring the treatment effects on the variance, in addition to the mean, would lead to a more comprehensive program evaluation that describes how a program performs on average and on the entire distribution.

Similar content being viewed by others

Introduction

Program evaluations generally focus on assessing average treatment effect (ATE) which is estimated by the difference in an outcome variable between those who are treated versus not treated. However, reporting of ATE as a single number summary of all individual treatment effects can be misleading as it dismisses the heterogeneity around the group average.1,2 If the heterogeneity in the treatment effects (HTE) were meaningfully large, the ATE would be insufficient in describing how well and for whom the intervention worked.3,4 Policies and interventions guided by such an ATE estimate would be ineffective, especially when deciding to scale up the intervention, as they would not meet heterogeneous needs of individuals.

Head Start is one example of a governmental program scaled up without an adequate understanding of its HTE. Initiated in 1965 in the U.S., the federally funded child developmental program aims to enhance school readiness of children aged 3–5 from low-income families by providing educational, health, nutritional, and social services.5 Across the country, it has served more than 37 million children and their families since. Head Start and its expanded version to infants and toddlers, Early Head Start, have been successful in receiving bipartisan support and saw an $890 million increase in funding between fiscal year 2016 and 2019, and its funding was set at $10.61 billion in 2020. Understanding HTE is especially important for such a nation-wide program with many recipients. In 2002, the Head Start Impact Study (HSIS), a nationally representative randomized controlled trial (RCT), was launched to evaluate the effectiveness of Head Start. Official reports of the HSIS documented positive short-term effects on some cognitive, social-emotional, health, and parenting outcomes, but null long-term effects for most outcomes.6,7 Recognizing that child development interventions like Head Start may have meaningfully large HTE,8,9,10 subsequent studies have moved beyond the assessment of ATE. They further examined for which subgroups of children Head Start was effective and found that the program in general had compensatory effects, or greater benefits for those with greater needs.11 Head Start benefitted several subgroups with more disadvantages, such as children with Spanish as a primary language,12,13 those who had lower cognitive skills at baseline,12,13 and those with home-based or non-parental care.14,15,16 However, further examination of HTE in Head Start is needed because findings on the treatment effects were mixed for many other disadvantaged subgroups, such as children with low parental education level,17,18 special needs,19 single parents,20 or caregivers with depressive symptoms.21.

A common methodological approach of the previous studies on HTE was a subgroup analysis which restricts the analysis to a subgroup or tests for statistical interactions between the treatment and covariates of interest (e.g., gender, race/ethnicity).11 However, such an approach has been shown to be insufficient in capturing HTE because it still relies on ATE.22 While one can test whether ATE estimates are heterogeneous across selected subgroups, heterogeneity around those estimates remains masked. Indeed, using the HSIS data, Ding, Feller, & Miratrix23,24 found substantial HTE beyond what the observed covariates and treatment noncompliance can explain, suggesting that different approaches are necessary to better understand HTE in Head Start.

Complex variance modelling, an extension of multilevel modelling, is one way to examine HTE.25,26,27,28 Instead of making the common assumption of constant variance (i.e., homoscedasticity), it explicitly models the variance, in addition to the mean, of an outcome as a function of covariates. By analyzing the treatment effect on the entire outcome distribution, individual variability is a main estimand of interest without being sidelined by the simple average. At baseline of a well-designed RCT with sufficient sample size, the variance, as well as the mean, of an outcome is expected to be comparable across treatment and control groups. In turn, a substantial difference in post-treatment variance between the two groups would be a systematic phenomenon and could be attributed to HTE.1 Such treatment effect on the variance would indicate that the ATE estimate alone does not sufficiently describe for whom the treatment worked and warrant further investigation.

Another important information that complex variance modelling provides is the magnitude and direction of the effect on variance. In many cases, societal-level governmental programs and policies aim to not only improve an outcome on the average, but also reduce social inequality in the outcome.29,30 Head Start, for example, helps low-income children, who generally score lower on school readiness than the country average, to ultimately pull them up towards the mean, reducing the variance in addition to increasing the overall mean. Even among low-income children that are targeted by the program, the academic performance gap can exist, and under the spirit of Head Start, it would be ideal to benefit every child but more for those at the lower part of the outcome distribution, thereby increasing the mean and reducing the variance. In such a way, when statistical analyses consider the mean and variance simultaneously, the treatment effect can be described in nine possible scenarios.1 The mean can increase, decrease, or be left unchanged, and for each of these cases, the variance can increase, decrease, or be left unchanged. For example, the increased (improved, in this case) mean with the decreased variance could mean that those who were lower at the outcome distribution were able to benefit from the intervention and perhaps for those at the higher tail of the distribution, to a lesser degree or not at all. No change for the mean with the increased variance may mean some were benefitted, some were harmed, or both. If the treatment effect is evaluated in these nine scenarios, our understanding of the impact of an intervention would be more comprehensive.

One study has applied complex variance modelling on the HSIS data and found reduced variance of cognitive outcomes among the Head Start children, but the effect on the variance was not interpreted with the effect on the mean.13 Other methodological approaches to the distributional effect, such as quantile regressions, were also implemented and found that Head Start benefitted those at the lower tail of the outcome distribution more for cognitive outcomes.12,14 However, both of these studies analyzed only the small number of outcomes at the 1st follow-up year of the 6-year-long study.

Using the HSIS data, the present study applied complex variance modelling on nine child developmental outcomes (six cognitive and three social-emotional outcomes) at four time points (1st, 2nd, 3rd, and the 3rd grade follow-up years) to comprehensively analyze the effect of Head Start. We visualized the treatment effect on the entire outcome distribution and interpreted the treatment effect based on the ATE (i.e., the effect on the mean) and the individual variability (i.e., the effect on the variance). Then, to further investigate HTE, we conducted exploratory subgroup analyses with complex variance modelling. The subgroups were specified post-hoc and based on a primary language (English or Spanish) and a parental education level (high school graduates, less, or more).

Methods

Sample

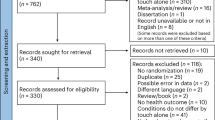

The HSIS utilized multi-stage sampling to select Head Start programs, centers, and participants (Fig. 1).6,7 First, all Head Start programs that operated less than two years, those that only served a special population (e.g., migrant, seasonal, tribal), and Early Head Start programs were excluded. The remaining 1,715 programs were grouped into 161 geographic clusters to easily monitor random assignment and obtain high-quality data. After stratifying the clusters by contextual criteria (i.e., state pre-K and childcare policy, child race/ethnicity, urban/rural location, and region), one cluster per stratum was randomly selected, resulting in 261 programs. Programs that were closed, merged, or saturated (i.e., being able to serve all applicants)were excluded. Only programs that had more applicants than available spots (i.e., not saturated) were included so that a control group could be formed. Small programs in the same geographic cluster were grouped to ensure a comparable probability of being selected across programs, resulting in 184 programs. These programs were once again stratified by the contextual criteria considered above to create strata, and three programs were randomly selected per cluster. In the selected 87 programs, there were 1,427 centers potentially eligible for the study. These centers were stratified into strata based on the same contextual criteria, and three centers per stratum were randomly sampled. All Head Start applicant children in the selected centers were included in the final sample, which consisted of 4,442 children in 378 centers out of 84 programs. Additional details are available in the HSIS official reports6,7.

Treatment

The Head Start intervention included educational, health, nutritional, and social services with the goal of improving school readiness and child development. All Head Start centers must adhere to the Head Start Performance Standards, which are federally regulated to ensure the comprehensiveness and quality of the services provided by the centers.6 Thus, the treatment is a mixture of various services with the pre-specified standards. With such multidimensional treatment, a precise mechanism through which Head Start affects children is challenging to uncover. Nonetheless, the overall impact of the national-level program and its heterogeneity can be evaluated.

Randomization of Head Start occurred within each Head Start center in the first year of the HSIS. The treatment group (or the Head Start children) were offered to participate in Head Start, while the control group (or the Control children) were not. The randomization was designed to yield a higher proportion of children having access to Head Start in order to allow as many children as possible to be potentially benefitted from the program.

For both the 3- and 4-year-old cohorts, the treatment of interest is the offer of one year of Head Start. Unlike the 4-year-old cohort who had only one eligible year for Head Start (i.e., the first year, or the randomization year), the 3-year-old cohort had one more eligible year (i.e., the second year, or the year after the randomization year) when they turned age 4. However, for that year, both the Head Start children and the Control children were free to enroll in Head Start. It was not reasonable to prevent 3-year-old children from enrolling in Head Start for two years. Therefore, the treatment is the same for both cohorts in that it offers one year of Head Start. One important difference is that the 3-year-old cohort has an opportunity to enroll again in the next year, while the 4-year-old cohort does not have an opportunity to enroll again.

The Control children were prevented from enrolling in the Head Start center where they applied, but their alternative experiences were not controlled. Therefore, their experiences range widely from non-Head Start childcare programs to home care. About 60% of the Control children participated in non-Head Start childcare programs. In addition, as with any RCT, there was noncompliance to the random assignment; 12% of the Control children enrolled in Head Start, and 19% of the Head Start children did not actually enroll in Head Start. In summary, the causal question of this RCT is whether one year of Head Start had an impact on children’s developmental outcomes when compared against a mixture of alternative experiences that low-income children would have had if Head Start did not exist.

Outcomes

The participating children were followed up and assessed for multiple cognitive and social-emotional outcomes at preschool years, kindergarten year, the 1st grade year, and the 3rd grade year. Since all outcomes measured in the HSIS have theoretical reasons to believe that they may be influenced by Head Start, we would ideally analyze as many outcomes as possible so that we can identify unexplored HTE to better understand the effects of Head Start and demonstrate the utility of complex variance modelling. However, based on the following criteria, only outcomes with reliable data quality and that are compatible with our analytical approach are selected. Outcomes were excluded if there were: (1) no or limited evidence on reliability of the measure, (2) problems raised in the HSIS official reports on scoring and interpreting results, (3) subjective academic performance measures in the presence of comparable objective measures, (4) measures not available for both 3- and 4- year-old cohorts at a given follow-up assessment, and( 5) in a categorical form. The final outcome selections were six cognitive outcomes (Peabody Picture Vocabulary Test (PPVT),31 Woodcock-Johnson (WJ) III Letter-Word Identification, WJ III Applied Problems, WJ III Oral Comprehension, WJ III Spelling, WJ III Pre-Academic; "WJ III" is omitted hereafter for brevity)32 measured by child assessments, and three social-emotional outcomes (Behavior Problems, Social Skills, Social Competency) measured by parent interviews.

Cognitive outcomes were measured by one-on-one child assessments for 45 to 60 min.6,7,33 PPVT measures receptive vocabulary in standard English (Cronbach's α = 0.62–0.84). Oral Comprehension measures an ability to comprehend a short passage by listening and provide a missing word through reasoning (α = 0.76–0.89). Letter-Word Identification measures the ability to identify letters and words from a picture or isolated letters and words (α = 0.82–0.94). Spelling measures the ability to correctly spell spoken words (α = 0.70–0.94). Applied Problems measures an ability to analyze and solve math problems (α = 0.85–0.90). Pre-Academic is a composite measure of Letter-Word Identification, Applied Problems, and Spelling (α = 0.67–0.85). To reduce the time required to test the participating children, PPVT was adapted to create a shortened version using item response theory, and WJ III tests were subject to a rule that stopped the test when three consecutive items were incorrect. PPVT was scored with a marginal maximum likelihood estimation that is based on each child’s actual test scores and a prior distribution separately by the age cohorts estimated from all children in each cohort. The WJ III tests were measured in W-ability scores, a mathematical transformation of the Rasch model, which is based on item response theory. These scores for PPVT and WJ III were provided with the HSIS dataset.

Parent interviews were conducted for primary caregivers.6,7,33 Social Skills assesses social skills such as cooperative and emphatic behaviors and approaches to learning such as openness to new concepts, curiosity, and positive attitudes towards gaining knowledge (α = 0.57–0.85). Social Competency measures the ability to have social interactions (α = 0.50–0.94). Behavior Problems is a composite measure of aggressive, withdrawn, and hyperactive behaviors (α = 0.74–0.96). A more detailed description and a measurement method of each outcome are available in the HSIS official reports.6,7,33.

Covariates

Although the HSIS was an RCT with no expected confounding, the HSIS official reports recommended covariate adjustment for two reasons6,7,33: 1) strong predictors of the outcome, such as sociodemographic variables and baseline outcomes, were included to enhance statistical precision; 2) baseline outcomes were included to account for any systematic bias at baseline. Following these recommendations, we adjusted for children’s sociodemographic variables and HSIS-related variables. Children’s sociodemographic variables included gender (male, female), race/ethnicity (White/other, Black, Hispanic), primary language at baseline (English, Spanish), special needs (yes, no), primary caregiver’s age (continuous), teen mom at birth (yes, no), living with a single parent (yes, no), recent immigrant parents (yes, no), parents’ marital status (not married, married, separated/divorced/widowed), parental education level (less than high school, high school graduates, beyond high school), urbanicity (urban, rural), household risk (low, moderate, high). Household risk index was developed by the researchers of the HSIS official reports based on five characteristics6: 1) receipt of TANF or Food Stamps, 2) both parents with education level less than high school, 3) both parents unemployed or not in education, 4) living with a single parent, 5) teen mom at birth. Three categories (low, moderate, high) were created by the number of these characteristics reported in the parent interview. HSIS-related variables included age cohort (age 3, age 4) and baseline outcomes (PPVT, Pre-Academic, Behavior Problems, Social Skills, and Social Competency).

Statistical analysis

Sample characteristics were presented for the total sample and by treatment status. Primary analyses were performed on the 3-year-old cohort, the 4-year-old cohort, and the pooled cohort. Three-level multilevel models were fitted by specifying Head Start programs at level-3, centers at level-2, and children at level-1 to account for clustering at Head Start programs and centers. While multilevel models are generally fitted with the assumption that level-1 residuals are normally distributed with constant variance (i.e., homoscedasticity), we applied an extended version that models level-1 variance as a function of level-1 covariates. Such a variance modelling approach is called a complex (level-1) variance model.27,34 The primary analyses (Model 1) were specified as,

Model 1:

Model 1 residual distribution:

where \({Y}_{ijk}\) is an outcome variable for child \(i\) in center \(j\) in program \(k\), \({X}_{ijk}^{^{\prime}}\) is a vector of child-level covariates, \({T}_{ijk}\) is an indicator variable for the treatment group (i.e., Head Start), and \({C}_{ijk}\) is an indicator variable for the control group. All continuous covariates (baseline outcomes, primary caregiver’s age) were centered at their means for interpretability of regression coefficients. Total variance is partitioned into the program-level (\({\sigma }_{{v}_{0}}^{2}\)), the center-level (\({\sigma }_{{u}_{0}}^{2}\)), the child-level, and the child-level variance is further partitioned into treatment group variance (\({\sigma }_{{e}_{1}}^{2}\)) and control group variance (\({\sigma }_{{e}_{2}}^{2}\)). These two variance estimates are the main parameters of interest, and the equality of the variances was tested by F-test for normally distributed outcomes (PPVT, Letter-Word Identification, Applied Problems, Oral Comprehension, Spelling, Pre-Academics) and Levene’s test for the rest (Behavior Problems, Social Skills, Social Competency). A statistically significant difference between the two variances indicates that there may be a substantial amount of HTE, and more exploration should follow. The variance estimates were visualized in the 95% variation bounds, which indicate that 95% of the observations lie between the lower and upper bounds.35 They were calculated with the complex variance model estimates as follows: \(mean \pm 1.96*\sqrt {child - level~variance~}\)

Exploratory secondary analyses were conducted on the pooled cohort to investigate for which subgroups the treatment effects were meaningfully differential, and whether there remains HTE even after accounting for these treatment-subgroup interactions. Model 2 and 3 tested for the interactions between the treatment and a child’s primary language, parental education level, respectively, and for the difference in the treatment group variance and control group variance within each subgroup. Model 2 was specified as,

Model 2:

Model 2 residual distribution:

where \({S}_{ijk}\) is an indicator variable for Spanish as a primary language, \({S(T)}_{ijk}\) and \({S(C)}_{ijk}\) are indicator variables for treatment and control groups among children with Spanish as a primary language, and \({E(T)}_{ijk}\) and \({E(C)}_{ijk}\) are indicator variables for treatment and control groups among children with English as a primary language. The parameter for interaction, \({\beta }_{3}\), between the treatment and the subgroup (i.e., Spanish as a primary language) is included to test for HTE across the subgroups, and the treatment group variance and control group variance are now separated into each subgroup (Spanish-Treatment: \({\sigma }_{{e}_{1}}^{2}\); Spanish-Control: \({\sigma }_{{e}_{2}}^{2}\); English-Treatment: \({\sigma }_{{e}_{3}}^{2}\); English-Control: \({\sigma }_{{e}_{4}}^{2}\)). Within each subgroup, the treatment group variance and the control group variance are compared to check whether there is remaining HTE after accounting for the interactions between the treatment the subgroups. There are one more interaction parameter and two more variance parameters in Model 3 because the parental education level has three subgroups, one more than Model 2.

Loss to follow-ups occurred as with any longitudinal study. After applying list-wise deletions for children with missing data, we applied weights provided by the HSIS dataset to control for potential bias from differential loss to follow-ups by treatment status. The weights included the nonresponse probability to adjust for different response rates across demographic groups and the selection probability at every stage of sampling to ensure the model estimates reflect the parameters for a nationally representative Head Start sample. The weights were also used in the HSIS official reports. Descriptions of the weight construction are detailed in the HSIS official technical report.33 All models were fitted in R 4.0.0 using the R2MLwiN package to access MLwiN 3.0436 for multilevel modelling.

Ethical approval

The HSIS data were not collected specifically for this study and no one on the study team has access to identifiers linked to the data. These activities do not meet the regulatory definition of human subject research. As such, an Institutional Review Board (IRB) review is not required. The Harvard Longwood Campus IRB allows researchers to self-determine when their research does not meet the requirements for IRB oversight via guidance online regarding when an IRB application is required using an IRB Decision Tool.

Results

At baseline, the treatment group (n = 2,646) had a larger sample size than the control group (n = 1,796), which is consistent with the randomization design described above (Table 1). The percentage of missing data for each variable in the analyses ranged from 0 to 1.5%. A slightly higher proportion of children were Hispanics/other (36.0%) than White (33.7%) and Black (30.3%). About a quarter (25.7%) used Spanish as a primary language. Approximately half (50.4%) of children lived with a single biological parent, 84.3% lived in an urban setting, 16.9% had teen mothers at birth, and 12.8% had special needs. The average primary caregiver’s age was about 29, 38.0% of the children had mothers who did not graduate from high school, and 19.2% were recent immigrants. The treatment group was more likely to have special needs (13.8% vs. 11.4%; p = 0.018) and less likely to have had teen mothers at birth (15.9% vs. 18.4%; p = 0.038). Baseline means and variances were comparable between treatment and control groups for all outcomes except PPVT, which had a slightly lower mean score among the treatment group (p = 0.020). The response rates varied across the outcomes, ranging from 80.2 to 81.8% in 2003, 78.8 to 79.4% in 2004, 76.5 to 79.1% in 2005, and 71.3 to 75.3% in 2007–8 (Table A1).

Three combinations of the effect on the mean and variance (i.e., mean and variance for the Head Start children vs. the Control children) are observed from the complex variance model results: 1) increase in the mean, decrease in the variance (Fig. 2a); 2) increase in the mean, no change in the variance (Fig. 2c, d); 3) no change in the mean, decrease in the variance (Fig. 2b). An increase in the mean reflects improvement for the outcomes except in the case of Behavior Problems for which a decrease would mean improvement. In both scenario 1) and 2) for the main analysis (i.e., Model 1), Head Start increased the mean, indicating that Head Start improves the outcomes on average. In scenario 1), a decrease in the variance that was accompanied with an increase in the mean suggests that the improvement may have been larger for those at the lower tail of the outcome distribution. In scenario 3), Head Start did not change the mean on average, but a decrease in the variance suggests that some were benefitted or harmed. Further exploration of HTE is needed. In the subgroup analyses (i.e., Model 2, 3, and 4), if the variance change observed in Model 1 disappeared with statistically significant interactions, the treatment-subgroup interactions may have explained away the HTE observed in Model 1. If the variance change persisted, on the other hand, further stratification within the subgroups with the variance change may be able to explain the HTE.

Visualized examples of the outcome distribution comparison between the Head Start and Control groups. The plot (a), (b), (c), and (d) visualize the outcome distributions for PPVT in the first year after Head Start, Oral Comprehension in the first year, Behavior Problems in the third grade year, and Spelling in the first year, respectively. The centered line is the mean of the outcome, and the surrounding bars are the 95% variation bounds, describing how variable the data are.

Outcomes with increased mean and decreased variance

The pooled cohort analyses showed that PPVT, Letter-Word Identification, Applied Problems, and Pre-Academic had the pattern of increased mean and decreased variance for the Head Start children compared to the Control children (Table 2). For the four cognitive outcomes, Head Start had short-term effects that did not last beyond the third year. For example, the Head Start children scored higher on PPVT until the third year after Head Start but the effect was attenuated with time (1st year: β [SE] = 5.69 [0.90], p < 0.001; 2nd year: β [SE] = 2.09 [1.08], p = 0.051; 3rd year: β [SE] = 2.00 [0.77], p = 0.009). The effects on the mean were often accompanied with the effects on the variance. For example, the Head Start children had smaller variance of PPVT until the second year after Head Start (1st year: δ = − 21.90, p < 0.001; 2nd year: δ = − 13.65, p = 0.051). The visualization suggests that those at the lower part of the outcome distribution may have benefitted more (Fig. 2a). When the cohorts were analyzed separately, the pattern of increased mean and decreased variance persisted for the four cognitive outcomes at most follow-ups (Tables A2 and A3). At a few time points, the change in variance was statistically insignificant, but had the consistent direction and magnitude, indicating loss of power. At second and third year of follow-ups, the increased mean was only observed for the 3-year-old cohort.

For PPVT, Applied Problems, and Pre-Academic, subgroup analyses revealed that larger effects for children with Spanish as a primary language or with low parental education level can partly explain the Head Start effect on the variance in Model 1. For example, Head Start had a consistently larger effect on PPVT for children with Spanish as a primary language, which was statistically significant even in the third grade year (β [SE] = 4.89 [1.85], p = 0.008). After taking the interactions into account, the variance for the Spanish-Head Start group was smaller in the first and second years after Head Start (1st year: δ = − 21.70, p = 0.032; 2nd year: δ = − 34.00, p < 0.001) compared to the Spanish-Control group, whereas the variance for the English-Head Start group was 21.06% smaller only in the first year (p < 0.001) (Table A1). No statistically significant interactions were observed across parental education levels, but Head Start reduced the variance of the Head Start group with parents with high school as the highest education level in the first year (δ = − 27.96, p < 0.001) and those with less than high school in the first and second years (1st year: δ = − 23.23, p = 0.003; 2nd year: δ = − 20.37, p = 0.008) (Table A2).

Outcomes with no change in the mean and decreased variance

For Oral Comprehension and Behavior Problems, Head Start did not change the mean but reduced the variance of children’s scores (Table 2). In the first year after Head Start, the Head Start children had the variance of Oral Comprehension that was 10.47% lower than the Control children (p = 0.045). Both tails of the outcome distribution shrunk toward the mean (Fig. 2c). No interactions explained the reduced variance in the first year, but the reduced variance was observed only for the children that had parents with less than high school education (δ = − 17.97, p < 0.044) (Table A4). In the second year, Head Start had a negative effect for children that had parents with high school as the highest education (β [SE] = − 2.25 [0.97], p = 0.020). For Behavior Problems, in the third grade year, the Head Start children had the variance 10.70% lower than the Control children (p = 0.04) (Table 2). Because the scores of Behavior Problems cannot be lower than zero, the reduced variance was due to the higher tail of the outcome distribution shifted down (Fig. 2d). The reduced variance was not explained by the tested interactions and found even within children who use Spanish as a primary language (δ = − 15.17, p < 0.043) (Table A5) or had parents with high school as the highest education (δ = − 19.80, p < 0.010) (Table A4).

For Oral Comprehension and Behavioral Problems, the pattern for the mean and variance was consistent at most follow-ups when the cohorts were analyzed separately (Tables A2 and A3). For Oral Comprehension at the first follow-up, the variance change for the 3-year-old cohort was not statistically significant, but its direction and magnitude was consistent, indicating loss of power. For Behavioral Problems at the first and second follow-ups, the 3-year-old cohort experienced decreased mean (i.e., reduced behavioral problems; positive effect), which was masked in the pooled cohort analyses.

Outcomes with no change in the variance

For Spelling, there was a pattern of an increased mean for the Head Start children without a change in the variance. In the first year after Head Start, the Head Start children scored higher on average (β [SE] = 2.96 [0.69], p < 0.001), but the effect faded away in the later years (Table 2). The entire outcome distribution shifted upwards without a substantial change in the variance (Fig. 2b). For Social Skills and Social Competency, there was no consistent pattern of change in either the mean or the variance across all follow-up years (Table 2). For Spelling, Social Skills, and Social Competency, the pattern for the mean and variance was consistent when the cohorts were analyzed separately (Tables A2 and A3).

Discussion

We applied complex variance modelling using the HSIS data to examine HTE of Head Start, in addition to ATE. Head Start had positive short-term effects on the means of multiple cognitive outcomes, while having no effect on the means of social-emotional outcomes. Modelling variance by treatment status revealed that Head Start reduced the variances of multiple cognitive and one social-emotional outcomes, meaning that substantial HTE exits. In particular, the increased mean and the decreased variance reflect the ability of Head Start to improve the outcomes while reducing their variability. The reduced variances were partly explained by the larger benefits for children with Spanish as a primary language or low parental education level, suggesting that at least some parts of the reduced variances reflect the reduced social inequalities in the outcomes. Interestingly, even after accounting for these treatment-subgroup interactions, the HTE remained for some outcomes, and their variances were reduced even within these subgroups. For multiple outcomes at certain follow-up years, the effects on the variance were present even when the effects on the mean were null. Without modelling variance, such an HTE is likely to have been masked by the non-significant effect on average.

Consistent with the HSIS official reports, Head Start improved several cognitive outcomes at the first and second years, but the effects faded away at later follow-ups.6,7 We additionally showed that the variances of these outcomes were also reduced for the Head Start children compared to the Control children. With the comparable variances at baseline, the difference in the post-treatment variances suggests that there was a meaningful amount of HTE that should be further investigated. In particular, the reduction in the variance with the increased mean may mean that Head Start was able to pull those at the lower part of the outcome distribution upwards to the mean. Indeed, previous studies found that Head Start was more effective at improving cognitive outcomes for many high-risk subgroups, including children with Spanish as a primary language,12,13 lower cognitive test scores at baseline,12 non-parental care at baseline,15 low and moderate parental pre-academic stimulation,37 or special needs.19 Similarly, we found that larger benefits for children with Spanish as a primary language or a low parental education level appeared to explain away some of the effects on the variance. Head Start may have been more effective on cognitive outcomes for these children because it offered academic resources, which their home environments may have lacked, for developing English language skills and cognitive abilities. However, even after accounting for these treatment-subgroup interactions, the Head Start children within these subgroups had smaller variability than the Control children. After Head Start, in other words, the outcome distributions of even these high-risk subgroups shrunk, indicating that substantial HTE exists within these subgroups. Particularly, those scored lower within these subgroups appeared to have benefitted more, further suggesting the compensatory effects of Head Start. If statistical power allows, finer stratification may be able to uncover for whom Head Start was effective among children with Spanish as a primary language or a low parental education level.

No clear pattern of the effects on the mean were observed for the social-emotional outcomes, except that the 3-year-old cohort experienced short-term positive effects on Behavioral Problems. Even the subgroup analyses did not find a clear pattern for the effects on the mean. Previous studies have also investigated heterogeneous effects on social-emotional outcomes for children who had foster care at baseline38 and who had experienced violence,39 but found no effects on the mean. Despite the absence of meaningful ATE, the Head Start children had smaller variances for one social-emotional outcome, Behavior Problems, and one cognitive outcome, Oral Comprehension, suggesting there are subgroups with heterogeneous effects for these outcomes. In this case, since the ATE was null, comparing the outcome distributions of the Head Start and Control groups by visualization helped understand the effects. For Oral Comprehension, the distribution shrunk from both tails, suggesting that there may have been subgroups that experienced negative impacts, as well as subgroups with positive impacts. For Behavior Problems, the distribution shrunk from the higher tail, meaning that there were positive effects for certain subgroups because a lower score means a better outcome for Behavior Problems. The positive effect in the 3-year-old cohort may explain such a distributional shift. The smaller variances were observed within children with Spanish as a primary language or children of parents with high school as the highest education level. Further exploration among these subgroups may reveal for which subgroup Head Start worked well.

Findings that Head Start improved multiple outcomes on average and reduced their variance are especially important because the program had an additional goal of shrinking the outcome distribution. The reduced variance on cognitive outcomes may be transferred further to academic performances. Indeed, previous observational studies found that Head Start decreased grade repetition rates, while increasing high school graduation rate and college attendance, which are signs of reduced outcome distribution by improving at the lower tail.40,41 If the HSIS participants were tracked in their adulthood, the Head Start effect on the mean and variance of their adulthood outcomes such as income also could be evaluated.

One strength of our study is the use of multilevel models to adjust for clustering among Head Start programs and centers. Partitioning variance at program-, center-, and child-level gives more valid estimates of variance and is especially important when variance estimates are the parameters of primary interest. Another strength is the use of the RCT data. While analytical approaches to modelling individual variability have been extended to quasi-experimental28 and cross-sectional observational studies,42 a well-designed RCT remains the most appropriate setting to estimate the treatment effect on variance because treatment and control groups are expected to be exchangeable at baseline. In HSIS, the treatment group had a larger sample size than the control group, but this difference does not alone explain the observed variance differences; no identical pattern was found across all outcomes. When the sample size is large enough to represent the population variance, the difference in sample size between the two groups would not drive the difference in variance estimates.

Our study has limitations. First, our analysis excluded categorical outcomes because only continuous outcomes fit with our framework of comparing variances and visualizing them as distributions. Especially for binary outcomes, extending this complex level-1 variance modelling approach is not very straightforward because level-1 variance in a multilevel logistic regression model is assumed to come from a logistic distribution with a fixed variance of π2/3.25 Nonetheless, future studies should utilize methods that can reveal HTE for categorical outcomes beyond what is possible with a single covariate interaction analysis, such as latent class analysis43 and intersectional multilevel analysis.44,45 Second, the treatment effect on variance is a summary statistic of the overall outcome distribution and does not identify for whom exactly Head Start worked. For example, when Head Start increased a cognitive outcome on average and reduced variance by shifting up those at the lower tail of the outcome distribution, we interpreted that Head Start improved those at the lower tail more than others. This is only true under the rank preservation assumption, in which children keep their ranks in the outcome distribution regardless of the treatment status. Although the assumption is untestable, we found that some subgroups that scored lower before were benefitted more, which provide support for our interpretation.

Given that children experience multiple social identities and environments simultaneously, it is no surprise to see HTE even within subgroups like children with low parental education level.46,47 However, analysis of HTE often terminates at a single covariate stratification, offering a limited aspect of HTE. Individual variability around the averages is often disregarded. In an RCT setting, we demonstrated that modelling post-treatment variances can enrich interpretations of a treatment effect in two major ways. First, a substantial difference in variances between treatment and control groups can motivate further investigation to better understand for whom the treatment works. Second, the magnitude and direction of the effect on variance can suggest which part of the outcome distribution had heterogeneous effects. Routinely monitoring the treatment effects on variances of the outcomes, in addition to the means, would lead to a more comprehensive program evaluation that describes how a program performs on average and on the entire distribution.

Data availability

The Head Start Impact Study data are hosted by Inter-university Consortium for Political and Social Research. Restrictions apply to the availability of these datasets. All methods were carried out in accordance with relevant guidelines and regulations.

References

Subramanian, S., Kim, R. & Christakis, N. A. The “average” treatment effect: a construct ripe for retirement. A commentary on deaton and cartwright. Soc. Sci. Med. 210, 77–82 (2018).

Merlo, J., Mulinari, S., Wemrell, M., Subramanian, S. & Hedblad, B. The tyranny of the averages and the indiscriminate use of risk factors in public health: the case of coronary heart disease. SSM Popul. Health 3, 684–698 (2017).

Pepe, M. S., Janes, H., Longton, G., Leisenring, W. & Newcomb, P. Limitations of the odds ratio in gauging the performance of a diagnostic, prognostic, or screening marker. Am. J. Epidemiol. 159, 882–890 (2004).

Wald, N., Hackshaw, A. & Frost, C. When can a risk factor be used as a worthwhile screening test?. BMJ 319, 1562–1565 (1999).

Fund, F. F. Y. Head Start & Early Head Start, <https://www.ffyf.org/issues/head-start-early-head-start/?mc_cid=4c8abeeea8&mc_eid=e63ec363fd> (2020).

Puma, M. et al. Head Start Impact Study. Final Report. Administration for Children & Families (2010).

Puma, M. et al. Third Grade Follow-Up to the Head Start Impact Study: Final Report. OPRE Report 2012-45. Administration for Children & Families (2012).

Brand, J. E. & Thomas, J. S. Causal effect heterogeneity. Handbook of causal analysis for social research pp. 189–213 (Springer, 2013).

Kravitz, R. L., Duan, N. & Braslow, J. Evidence-based medicine, heterogeneity of treatment effects, and the trouble with averages. Milbank Q. 82, 661–687 (2004).

Plewis, I. Modelling impact heterogeneity. J. R. Stat. Soc. A. Stat. Soc. 165, 31–38 (2002).

Lee, S. Y., Kim, R., Rodgers, J. & Subramanian, S. Treatment effect heterogeneity in the head start impact study: a systematic review of study characteristics and findings. SSM Popul. Health 16, 100916 (2021).

Bitler, M. P., Hoynes, H. W. & Domina, T. Experimental evidence on distributional effects of head start (National Bureau of Economic Research, 2014).

Bloom, H. S. & Weiland, C. Quantifying variation in Head Start effects on young children's cognitive and socio-emotional skills using data from the National Head Start Impact Study. Available at SSRN 2594430 (2015).

Feller, A., Grindal, T., Miratrix, L. & Page, L. C. Compared to what? Variation in the impacts of early childhood education by alternative care type. Ann. Appl. Stat. 10, 1245–1285 (2016).

Lipscomb, S. T., Pratt, M. E., Schmitt, S. A., Pears, K. C. & Kim, H. K. School readiness in children living in non-parental care: impacts of head start. J. Appl. Dev. Psychol. 34, 28–37 (2013).

Zhai, F., Brooks-Gunn, J. & Waldfogel, J. Head Start’s impact is contingent on alternative type of care in comparison group. Dev. Psychol. 50, 2572 (2014).

Long, C. Promoting family economic self-sufficiency: the impact of head start on maternal human capital investment (University of Illinois at Chicago, 2016).

Sabol, T. J. & Chase-Lansdale, P. L. The influence of low-income children’s participation in Head Start on their parents’ education and employment. J. Policy Anal. Manag. 34, 136–161 (2015).

Lee, K. & Rispoli, K. Effects of individualized education programs on cognitive outcomes for children with disabilities in Head Start programs. J. Soc. Serv. Res. 42, 533–547 (2016).

Gelber, A. & Isen, A. Children’s schooling and parents’ behavior: evidence from the head start impact study. J. Public Econ. 101, 25–38 (2013).

Cooper, B. R. & Lanza, S. T. Who benefits most from Head Start? Using latent class moderation to examine differential treatment effects. Child Dev. 85, 2317–2338 (2014).

Bitler, M. P., Gelbach, J. B. & Hoynes, H. W. Can variation in subgroups’ average treatment effects explain treatment effect heterogeneity? Evidence from a social experiment. Rev. Econ. Stat. 99, 683–697. https://doi.org/10.1162/REST_a_00662 (2017).

Ding, P., Feller, A. & Miratrix, L. Randomization inference for treatment effect variation. J. R. Stat. Soc. Ser. B Stat. Methodol. 78(3), 655–671 (2016).

Ding, P., Feller, A. & Miratrix, L. Decomposing treatment effect variation. J. Am. Stat. Assoc. 114, 304–317 (2019).

Browne, W. J., Subramanian, S. V., Jones, K. & Goldstein, H. Variance partitioning in multilevel logistic models that exhibit overdispersion. J. R. Stat. Soc. A. Stat. Soc. 168, 599–613 (2005).

Bryk, A. S. & Raudenbush, S. W. Heterogeneity of variance in experimental studies: a challenge to conventional interpretations. Psychol. Bull. 104, 396 (1988).

Goldstein, H. Heteroscedasticity and complex variation. Encycl. Stat. Behave. Sci. 2, 790–795 (2005).

Kim, J. & Seltzer, M. Examining heterogeneity in residual variance to detect differential response to treatments. Psychol. Methods 16, 192 (2011).

Benach, J., Malmusi, D., Yasui, Y., Martínez, J. M. & Muntaner, C. Beyond rose’s strategies: a typology of scenarios of policy impact on population health and health inequalities. Int. J. Health Serv. 41, 1–9. https://doi.org/10.2190/HS.41.1.a (2011).

Benach, J., Malmusi, D., Yasui, Y. & Martínez, J. M. A new typology of policies to tackle health inequalities and scenarios of impact based on Rose’s population approach. J. Epidemiol. Community Health 67, 286–291. https://doi.org/10.1136/jech-2011-200363 (2013).

Dunn, L. M. & Dunn, L. Peabody picture vocabulary test (American Guidance Service, 1997).

Woodcock, R. W., McGrew, K. S. & Mather, N. Woodcock-Johnson III tests of achievement. (2001).

U.S. Department of Health and Human Services, A. f. C. a. F. Head Start Impact Study Technical Report. 207 (2011).

Browne, W. J., Draper, D., Goldstein, H. & Rasbash, J. Bayesian and likelihood methods for fitting multilevel models with complex level-1 variation. Comput. Stat. Data Anal. 39, 203–225 (2002).

Subramanian, S. & Jones, K. Multilevel statistical models: concepts and applications. Center for Society and Health, Harvard School of Public Health and Bristol, United Kingdom: Centre for Multilevel Modeling, University of Bristol (2006).

Charlton, C., Rasbash, J., Browne, W., Healy, M. & Cameron, B. MLwiN (Version 3.04)[Computer software. University of Bristol, Centre for Multilevel Modelling (2019).

Miller, E. B., Farkas, G., Vandell, D. L. & Duncan, G. J. Do the effects of head start vary by parental preacademic stimulation?. Child Dev. 85, 1385–1400 (2014).

Lee, K. & Lee, J.-S. Parental book reading and social-emotional outcomes for Head Start children in foster care. Soc. Work Public Health 31, 408–418 (2016).

Lee, K. & Ludington, B. Head start’s impact on socio-emotional outcomes for children who have experienced violence or neighborhood crime. J. Fam. Violence 31, 499–513 (2016).

Deming, D. Early childhood intervention and life-cycle skill development: evidence from Head Start. Am. Econ. J. Appl. Econ. 1, 111–134 (2009).

Garces, E., Thomas, D. & Currie, J. Longer-term effects of Head Start. Am. Econ. Rev. 92, 999–1012 (2002).

Kim, R., Kawachi, I., Coull, B. A. & Subramanian, S. V. Patterning of individual heterogeneity in body mass index: evidence from 57 low-and middle-income countries. Eur. J. Epidemiol. 33, 741–750 (2018).

Lanza, S. T. & Rhoades, B. L. Latent class analysis: an alternative perspective on subgroup analysis in prevention and treatment. Prev. Sci. 14, 157–168 (2013).

Evans, C. R., Williams, D. R., Onnela, J.-P. & Subramanian, S. A multilevel approach to modeling health inequalities at the intersection of multiple social identities. Soc. Sci. Med. 203, 64–73 (2018).

Jones, K., Johnston, R. & Manley, D. Uncovering interactions in multivariate contingency tables: a multi-level modelling exploratory approach. Methodol. Innov. 9, 2059799116672874 (2016).

Collins, P. H. & Bilge, S. Intersectionality (Polity, 2016).

Crenshaw, K. Demarginalizing the intersection of race and sex: a black feminist critique of antidiscrimination doctrine, feminist theory and antiracist politics. u. Chi. Legal f., 139 (1989).

Funding

This research was funded by Robert Wood Johnson Foundation, ID 75602.

Author information

Authors and Affiliations

Contributions

R.K. and S.V.S. conceptualized the study. S.L. performed the analysis and wrote the main manuscript text. R.K., J.R., and S.V.S. contributed to data analysis and interpretation of results. All authors reviewed and provided critical revision to the manuscript.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher's note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary Information

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Lee, S.Y., Kim, R., Rodgers, J. et al. Assessment of heterogeneous Head Start treatment effects on cognitive and social-emotional outcomes. Sci Rep 12, 6411 (2022). https://doi.org/10.1038/s41598-022-10192-1

Received:

Accepted:

Published:

DOI: https://doi.org/10.1038/s41598-022-10192-1

Comments

By submitting a comment you agree to abide by our Terms and Community Guidelines. If you find something abusive or that does not comply with our terms or guidelines please flag it as inappropriate.