Abstract

Prosthetic arms can significantly increase the upper limb function of individuals with upper limb loss. However, despite the development of various multi-DoF prosthetic arms, the rate of prosthesis abandonment is still high. One of the major challenges is to design a multi-DoF controller that has high precision, robustness, and intuitiveness for daily use. The present study demonstrates a novel framework for developing a controller that leverages machine learning algorithms and movement synergies to implement natural control of a 2-DoF prosthetic wrist for activities of daily living (ADL). Data were collected from ten individuals performing ADL tasks while wearing a wrist brace that emulated the absence of wrist function. Using these data, a neural network classifies the movement and a random forest regression then computes the desired velocity of the prosthetic wrist. The models were trained and tested on ADL data, and their robustness was assessed using cross-validation and holdout data sets. The proposed framework demonstrated high accuracy (F-1 score of 99% for the classifier and Pearson’s correlation of 0.98 for the regression). Additionally, the interpretable nature of random forest regression was used to verify the targeted movement synergies. The present work provides a novel and effective framework to develop an intuitive control for multi-DoF prosthetic devices.


Introduction

Myoelectric prostheses use a pair of surface electromyography (EMG) sensors to drive electric motors that actuate the terminal device. The most widely used transradial myoelectric prosthesis can only actuate power grasping and typically the wrist is fixed1. Limited mobility of these prosthetic devices causes compensatory trunk movement and unnatural upper body posture when using the terminal device to manipulate objects2,3. Furthermore, repeated excessive upper body movements generate early fatigue and pain, which naturally leads to overuse of the intact limb4,5. Multiple survey studies attribute dissatisfaction with upper limb prostheses to limited function, control strategy, and higher weight6,7,8,9. To increase the functionality of prosthetic devices in particular, state-of-the-art prosthetic designs have been developed to restore lost limb functions with multiple active degrees of freedom (DoF)10,11,12.

To actuate multi-DoF prosthetic devices, various control methods using EMG signals have been developed. One of the widely explored methods is the state machine approach, which used two EMG signals to control a single joint but allowed for switching between different joints by co-activation of both muscles13. However, these approaches lacked intuitive and simultaneous control of multiple DoF, which hindered the dexterity of hand movement during daily living tasks. To overcome the limitations of the state machine approach, pattern recognition based solutions used machine learning to identify the patterns present in the EMG signals generated during different motor tasks13,14,15. However, because of their low degree of intuitiveness and increased cognitive burden16, their transition from the lab environment to daily use has been challenging. Furthermore, the performance of EMG based controllers is limited by electrode shift, variation in force across different poses, and transient changes in EMG due to muscle fatigue from long-term use17. These limitations have rekindled the search for alternative approaches to control multi-DoF prosthetic devices. Numerous studies have investigated alternative control strategies such as using ultrasound signals18,19, mapping the muscle deformation to the intended joint angle20, myokinetic control21, using the tongue as a joystick22, and movement based approaches23. Movement based control methods are shown to be intuitive by controlling the prosthesis using other joint movements instead of muscle signals24.

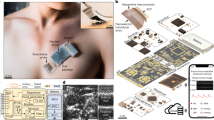

Overview of the proposed framework for modelling multi-DoF wrist control. Data collected from activities of daily living is used to train neural network classifier and regression models of radial/ulnar deviation (RUD) & pronation/supination (PS). Regression models and the control modules predict the angular velocity for controlling motors in a robotic prosthetic wrist. \(\dot{\theta }_{M\_RUD}\) and \(\dot{\theta }_{M\_PS}\) represent the measured angular velocities for RUD and PS computed using a motion capture system. \(\dot{\theta }_{R\_RUD}\) and \(\dot{\theta }_{R\_PS}\) represent angular velocities for RUD and PS predicted by regression models. \(\dot{\theta }_{C\_RUD}\) and \(\dot{\theta }_{C\_PS}\) are angular velocities generated by the controller modules for RUD and PS.

Movement based control approaches exploit the residual joint movements for the control of prosthetic joints using inertial measurement unit (IMU) sensors. Compensatory movement of the trunk and the arm was used to actuate prosthetic wrist pronation/supination to reduce these compensatory movements25. Other movement based control used existing movement synergies to control the distal joints using the proximal joints of the residual limb24,26. Using the movement synergies between the wrist and the shoulder, the controller allowed not only natural and intuitive control of prosthetic wrist pronation/supination but also reduced the cognitive burden on the prosthesis user24.

In particular, regression models utilizing movement synergies between proximal and distal upper limb joints have been widely explored for the control of robotic prostheses. Merad et al. modeled elbow flexion/extension by leveraging the natural co-ordination between the elbow and the shoulder27. Popovic et al.23,28,29,30 demonstrated the efficacy of controlling the wrist pronation/supination and elbow flexion/extension using shoulder movement. This was achieved by leveraging radial basis function networks (RBFN) to learn and map the movement synergies between the proximal and distal joints during reaching movements towards different target locations. Although the control approach was intuitive, manual switching between synergies for different target directions made the control inefficient. To avoid manual switching, multiple artificial neural networks were trained using all three DoF of the shoulder and scapuloclavicular movement31. These previous studies showed improved intuitive control; however, they had several limitations. First, they were limited to using the movement synergies for modeling only a single DoF of each upper limb joint. Employing movement synergies for modeling multi-DoF joint control has not been widely investigated yet. Second, the movement synergies were mostly modeled during simple reaching movements. However, tasks carried out during daily living do not consist only of reaching movements. Last, the previous regression based approaches were modelled based on the movement synergies from non-amputee participants. When such a model was transferred to an amputee participant, the performance decreased because the residual limb movement of amputees is kinematically different from that of non-amputees32.

This study proposes solutions to overcome the limitations discussed above. First, we propose a novel framework that leverages multiple joint synergies for modeling control of a multi-DoF wrist and demonstrate its effectiveness. Second, the framework proposes to use the data obtained during ADL tasks to span different work spaces and improve the performance of the controller. Third, the inclusion of a wrist brace during the data collection is proposed to simulate the kinematics of amputees3. Fourth, the process of manually modeling complex joint synergies for multi-DoF control is eliminated as the framework incorporates machine learning. Furthermore, the interpretable nature of the machine learning algorithm enables quantifying the contribution of each component of the IMU sensors to the angular velocity of the wrist. Lastly, the proposed framework does not apply feature extraction on the IMU signals. This eliminates the time-consuming process of identifying the optimal features of the signals and minimizes the post-processing which typically leads to the integration of error from long-term use of the prosthetic arm and degrades the performance of the controller.

The core idea behind the proposed framework is to develop a controller using machine learning which leverages the residual upper limb movement to allow for intuitive control of a multi-DoF prosthetic wrist. Therefore, movement synergies between the wrist and proximal joints are modeled using IMU sensors placed on the residual limb. For training the machine learning models, ADL tasks such as Drinking from a cup, Hammering a nail, Twisting screws, and Turning a pulley are used. These tasks are chosen because they are commonly used in ADL performance measures such as the Arm Motor Ability Test (AMAT), Activities Measure for Upper Limb Amputees (AM-ULA), and Southampton Hand Assessment Protocol (SHAP)33,34,35,36,37. To record the synergistic relationship, the wrist of the participants is braced during the data collection. This allows the machine learning models to be trained using data acquired from non-amputee participants emulating the absence of active wrist movement. Due to its interpretable nature, random forest regression is used to map the residual limb movement to the intended velocity for radial/ulnar deviation and pronation/supination of the wrist. Rigorous off-line testing involving unknown participants’ data is conducted to assess the efficacy of the controller developed using the proposed framework. The residual upper limb movement is mapped to the angular velocity of the respective wrist movement by the machine learning models. This angular velocity can be used as a control signal for a robotic prosthesis to actuate a multi-DoF wrist. Because it uses intuitive movement synergies for volitional control, we envision that this novel framework for developing a prosthetic controller will contribute towards reduced compensatory movement and reduced mental burden on the prosthesis user. Furthermore, the framework can be easily extended to incorporate additional upper limb movements, including wrist flexion/extension.

Results

The upper limb kinematics of ten non-amputee subjects were collected while they performed four activities of daily living (ADL). The ADL tasks required the subjects to perform wrist radial/ulnar deviation or pronation/supination. Motion capture data and IMU signals were used to train and test the machine learning models. Notably, the IMU signals were used to train the machine learning models without computing the joint angles or extracting any features from the signals.

As shown in Fig. 1, the presented framework for developing the controller consists of three main components: a neural network classifier, random forest regression models, and control modules. The neural network classifier was trained to identify the intent for either radial/ulnar deviation (RUD) or pronation/supination (PS) based on the IMU signals. For training the regression models, the measured angular velocities of RUD and PS were obtained using a motion capture system. Random forest regression models were trained with IMU signals as inputs and the measured angular velocity as outputs. Two regression models were individually trained to predict the angular velocities for RUD and PS movements, respectively. Next, two control modules received the predicted velocity from the regression models for each wrist movement. The control modules compute angular velocities which will be used to control the velocity of the motors in a prosthetic arm. Each control module comprises Algorithm 1, which augments the angular velocity to produce increased wrist movement. The control modules augment the wrist movement because the brace restrained the movement of the wrist. Since the control modules can modulate the magnitude of the predicted angular velocities, the importance of achieving a low root mean squared error (RMSE) is reduced if the correlation coefficient is high38.
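The data flow through the three components can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the classifier and regressors are hypothetical stand-in callables, the fixed `gain` and the `limit` clamp are assumed placeholders for Algorithm 1 (whose details are not reproduced here), and driving only the classified DoF is likewise an assumption.

```python
from typing import Callable, Dict, Sequence

# Hypothetical stand-in types; the trained models from the paper are not reproduced here.
Classifier = Callable[[Sequence[float]], str]   # returns "RUD" or "PS"
Regressor = Callable[[Sequence[float]], float]  # returns a predicted velocity in deg/s


def control_module(predicted_velocity: float, gain: float = 1.5,
                   limit: float = 200.0) -> float:
    """Assumed augmentation: scale the predicted velocity, then clamp it.

    Both `gain` and `limit` are illustrative values, not taken from the paper.
    """
    amplified = gain * predicted_velocity
    return max(-limit, min(limit, amplified))


def wrist_command(imu_sample: Sequence[float], classifier: Classifier,
                  regressors: Dict[str, Regressor],
                  gain: float = 1.5) -> Dict[str, float]:
    """Map one IMU sample to controller output velocities for RUD and PS."""
    movement = classifier(imu_sample)             # intent: "RUD" or "PS"
    theta_r = regressors[movement](imu_sample)    # predicted angular velocity
    theta_c = control_module(theta_r, gain)       # controller output velocity
    command = {"RUD": 0.0, "PS": 0.0}             # assumption: other DoF stays idle
    command[movement] = theta_c
    return command
```

In this sketch the regressor output plays the role of \(\dot{\theta }_{R}\) and the value returned by `control_module` plays the role of \(\dot{\theta }_{C}\).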

In the following sections, \(\dot{\theta }_{M}\) will be used to represent the angular velocities observed using a motion capture system during ADL tasks (measured angular velocity) , \(\dot{\theta }_{R}\) will be used to represent the angular velocity predicted by the regression models (predicted angular velocity), and \(\dot{\theta }_{C}\) will be used to represent the angular velocity generated by the controller (controller output angular velocity).

(a) Neural network classifier performance with precision, recall, and F-1 score averaged across all the test sets. Variation 1 involved testing on two unknown participants to the model, variation 2 involved testing on three unknown participants, and variation 3 involved testing on four unknown participants. (b) Confusion matrices depicting the classification performance of neural network models for all 3 variations of testing.

Neural network classifier

The validation process of neural network classifier focused primarily on the ability of the model to accurately classify and generalize between subjects. Therefore, the model was trained using a set of randomly selected participants and tested on remaining participants that were unknown to the model. Figure 2a shows the observed classifier performance in the three variations of testing that were conducted. Each variation was repeated 30 times, each time generating a unique combination of participants. The performance of the model on each combination was averaged to indicate overall performance for the corresponding variation of testing. A detailed description of this process is discussed in the methods section. The performance of the model was evaluated using F-1 score which is the harmonic mean of precision and recall39. Precision evaluates the fraction of correctly classified instances among the ones classified as positive. The recall is a metric that quantifies the number of correct positive predictions made out of all positive predictions that could have been made. Figure 2a shows the precision, recall, and F-1 score averaged across all combinations for each variation of testing.
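The participant-level hold-out procedure described above can be sketched as follows. This is an illustrative sketch only: the function name, the seed, and the integer participant IDs are assumptions, and the exact procedure used in the study is the one given in its methods section.

```python
import math
import random
from typing import List, Sequence, Tuple


def holdout_splits(participants: Sequence[int], n_test: int,
                   n_repeats: int = 30,
                   seed: int = 0) -> List[Tuple[List[int], List[int]]]:
    """Draw unique train/test splits in which test participants are unseen in training."""
    if n_repeats > math.comb(len(participants), n_test):
        raise ValueError("not enough unique participant combinations")
    rng = random.Random(seed)
    pool = list(participants)
    seen = set()
    splits = []
    while len(splits) < n_repeats:
        test = tuple(sorted(rng.sample(pool, n_test)))
        if test in seen:          # skip combinations that were already drawn
            continue
        seen.add(test)
        train = [p for p in pool if p not in test]
        splits.append((train, list(test)))
    return splits


# Variations 1 to 3: hold out two, three, or four of the ten participants.
variations = {n: holdout_splits(range(1, 11), n) for n in (2, 3, 4)}
```

Splitting by participant rather than by sample keeps every sample from a held-out participant out of training, which is what lets the test score reflect generalization to unknown users.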

In the first variation of testing, an average F-1 score, precision, and recall of 0.99 were observed for different combinations of participants. Although a slight drop from 0.99 to 0.987 and 0.988 was observed in average precision and recall during the second variation of testing, the model’s performance was similar to the first variation of testing. Furthermore, the model’s performance in the third variation of testing was analogous to the second variation of testing even though it was trained with fewer participants. Across all three variations of testing, the smallest F-1 score, precision, and recall of 0.95 were observed in the second variation for one of the combinations of participants. Further analysis of the model’s performance is shown in Fig. 2b, which presents the confusion matrices indicating the number of correctly and incorrectly classified instances averaged across all combinations of the participants for each variation of testing.
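For reference, precision, recall, and the F-1 score follow directly from confusion-matrix counts. The sketch below treats one class as positive; the counts in the usage line are made-up illustrative numbers, not values from the study.

```python
from typing import Tuple


def precision_recall_f1(tp: int, fp: int, fn: int) -> Tuple[float, float, float]:
    """Compute precision, recall, and F-1 from true/false positive and false negative counts."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0   # correct among predicted positives
    recall = tp / (tp + fn) if (tp + fn) else 0.0      # correct among actual positives
    if precision + recall == 0.0:
        return precision, recall, 0.0
    f1 = 2.0 * precision * recall / (precision + recall)  # harmonic mean
    return precision, recall, f1


# Illustrative counts only (not from the paper).
precision, recall, f1 = precision_recall_f1(tp=90, fp=10, fn=30)
```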

Random forest regression model

The validation process of the regression models focused primarily on quantifying how far the regression models’ predictions were from the measured angular velocity. To assess the robustness of the models, they were not only trained and tested using the grouped data of all participants (generic model) but also trained and tested using each individual’s data separately (individual model). Furthermore, to assess the models’ performance on unknown participants, the three variations of testing discussed in the neural network classifier section were also conducted. Figure 3 illustrates the comparison between the measured angular velocity and the predicted angular velocity from the generic regression models for one representative participant during ADL tasks. Table 1a shows the performance of the regression models trained using all participants’ data and Table 1b shows the performance of the regression models in the three variations of testing.

In Table 1a, models trained using all participants’ data had an overall root mean squared error (RMSE) of 7.45 deg/s when averaged over all ADL tasks. The highest RMSE of 11.67 deg/s was observed for the pulley task. For radial/ulnar deviation related tasks, an RMSE of 4.84 deg/s was observed, whereas for pronation/supination related tasks the RMSE was 9.82 deg/s. Mean absolute error (MAE) between the peaks in the measured angular velocity and the predicted angular velocity was also computed. The average peak MAE for all tasks was 2.34 deg/s with the highest MAE of 3.04 deg/s for the pulley task. Pearson’s correlation coefficient was also computed between the measured (\(\dot{\theta }_M\)) and the predicted (\(\dot{\theta }_R\)) angular velocity for each ADL task to evaluate the similarity in the patterns of the two signals. In Table 1a, the highest correlation of 0.99 was observed for the Pulley task whereas the lowest correlation of 0.97 was observed for the Hammer task. Lastly, the coefficient of determination for each trained tree was computed using the 37% of the training data points that are unknown to the trees40 and was aggregated across the trees to indicate the performance of the random forest regression model; this aggregate is called the Out-of-Bag score41. An Out-of-Bag score of 0.99 was observed for both RUD and PS related tasks. As shown in Table 1b, when the models were tested on unknown participants in the three variations of testing, the RMSE values were in the range of 13.22 to 13.53 deg/s. Furthermore, Table 2 summarizes the RMSE values observed for the models that were trained and tested on the data of each individual participant. The average RMSE of individual models was in the range of 4.4 to 12.4 deg/s, similar to the generic model trained with all participants’ data.
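The figure of roughly 37% of training points being unseen by each tree follows from bootstrap sampling. Assuming each tree is trained on \(n\) points drawn with replacement from the \(n\) training points (the standard bagging setup; the sampling settings used in the study are not stated here), the probability that a given point is never drawn for a tree is

\[
P(\text{point not drawn}) = \left(1 - \frac{1}{n}\right)^{n} \xrightarrow{\; n \to \infty \;} e^{-1} \approx 0.368 .
\]

Each tree can therefore be scored on the points it did not see, which is what makes the Out-of-Bag score an internal estimate of generalization.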

Controller output

The brace limited the wrist movement and the controller utilizes the synergistic movement of the residual proximal joints to create wrist movement. To reduce the movement of proximal joints in the residual limb, the wrist movement needs to be amplified. Therefore, the control module was implemented, comprising of Algorithm 1, to augment the angular velocities predicted by the regression models. The validation process focused on assessing the similarity between the angular velocity measured by the motion capture system (\(\dot{\theta }_{M}\)) and the angular velocity generated by the controller (\(\dot{\theta }_{C}\)). Figure 3 depicts the angular velocity generated by the controller for one of the subjects during all four tasks.

An overall correlation of 0.9974 was observed between the measured and the controller generated angular velocity for all the ADL tasks. An average correlation of 0.9998 was observed for RUD and 0.9979 was observed for PS related tasks. These high correlation values indicate that the angular velocity generated by the controller closely follows the angular velocity measured by the motion capture system. But higher peaks were observed in the angular velocities generated by the controller when comparing with the magnitude of the measured angular velocities. This is expected because the angular velocities, predicted based on the movement synergies, are amplified by the controller with the intention of reducing the movement of the proximal joints in the residual limb.
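The effect described above, where amplification raises the peaks but leaves the correlation high, can be illustrated with a small sketch. The sinusoidal signal and the gain of 1.5 are assumptions chosen for illustration; they are not data or parameters from the study.

```python
import math
from typing import Sequence


def rmse(a: Sequence[float], b: Sequence[float]) -> float:
    """Root mean squared error between two equally long signals."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)) / len(a))


def pearson(a: Sequence[float], b: Sequence[float]) -> float:
    """Pearson's correlation coefficient between two equally long signals."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)


# Synthetic "measured" velocity (deg/s) and an amplified copy (assumed gain of 1.5).
measured = [30.0 * math.sin(2.0 * math.pi * t / 50.0) for t in range(100)]
amplified = [1.5 * v for v in measured]

correlation = pearson(measured, amplified)  # stays at 1 up to rounding
error = rmse(measured, amplified)           # grows with the gain
```

Because Pearson's correlation is invariant to positive scaling, it captures agreement in the pattern of the two signals, while RMSE also penalizes the intended difference in magnitude.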

Discussion

The aim of this study is to demonstrate the feasibility of controlling multi-DoF wrist of a prosthetic arm by using the residual upper limb motion. A methodical off-line investigation was performed to present the robustness of the developed controller. A novel framework, illustrated by Fig. 1, is proposed where a neural network classifier and two random forest regression models were trained using IMU signals to model the movement synergies and predict the user’s intent and angular velocities for the wrist movement. The result of our study shows that the controller developed using the framework can be successfully used to generate the angular velocity for both RUD and PS, using only residual upper limb motion. Furthermore, the proposed framework has the advantage of not requiring feature extraction or joint angle computations. Since joint angles were not used to train the models, accumulation of errors due to integration was also minimized. Moreover, the models were designed with the data acquired from ADL tasks, instead of using simple reaching tasks as in previous studies23,27,28,29,30,31,32.

(a) IMU sensor locations on the upper limb. The faded sensor is attached on the posterior side of the upper limb. (b) Illustration of the important features contributing to predicting angular velocities for radial/ulnar deviation of the wrist. (c) Illustration of the important features for pronation/supination. The solid circles in the outer rim indicate features with highest contribution towards prediction of angular velocities.

The neural network classifier not only showed good classification performance but also generalized across unknown participants, as shown by the F-1 scores and confusion matrices in Fig. 2. The neural network classifier, trained using only six participants’ data, was able to decode the intent of the four unknown participants to perform either radial/ulnar deviation or pronation/supination with a high accuracy of 98%. Augmenting the training data with more participant data only increased the classification accuracy, as shown in Fig. 2a. This performance is comparable to the results reported in previous literature where finger and wrist posture were classified with accuracy ranging from 80 to 99.99% using various machine learning methods such as linear discriminant analysis (LDA), support vector machines (SVM) or artificial neural networks (ANN)42,43.

The random forest regression models were able to map the accelerometer and gyroscope signals to the angular velocity of RUD and PS with high precision, as shown in Fig. 3 and Table 1a. Furthermore, the models’ success in exploiting the postural synergies and their robustness were demonstrated by the comparison of generic and individual models, in addition to the validation with holdout data sets. Among the four different ADLs, the pulley task showed a higher RMSE than the other tasks. We believe that this observation is due to the complex movement involved in the pulley task, which spans a three dimensional workspace. However, a high correlation coefficient of 0.99 was observed for the Pulley task, which indicates a high degree of similarity between the measured and predicted angular velocities, as shown in Table 1a. Because the control modules can change the magnitude of the predicted angular velocities, a high correlation coefficient is sufficient even though high RMSE values were observed38.

An overall correlation coefficient of 0.98 for random forest regression and 0.99 for the control modules was observed in our study. Kaliki et al. reported a correlation coefficient of 0.97 by using shoulder movement as an input to a neural network for predicting wrist pronation/supination angles during reaching tasks31. Merad et al. modeled the postural synergies when the participants performed reaching movements using radial basis function network to control elbow flexion/extension which resulted in a correlation coefficient of 0.88. Another study using time delayed neural network and EMG signals observed a correlation coefficient of 0.68 between the measured and predicted joint angles for wrist pronation/supination44. Other previous studies that used EMG signals and regression methods for wrist flexion/extension and radial/ulnar deviation reported correlation coefficients in the range of 0.70 to 0.9845,46,47,48,49. We believe that using common synergistic patterns present in different tasks and individuals improved the prediction capability of our random forest regression models50.

Designing a prosthetic controller with multiple DoFs requires identifying multiple movement synergies and manually mapping them for each DoF, which can be challenging. The proposed framework allows identifying the synergistic relationship between the residual and prosthetic joints by using the data collected from different ADLs. The random forest regression algorithm was adopted to identify features that contributed to the prediction. In Fig. 4b,c, the important features identified by the random forest regression models were used to validate the movement synergies. The features identified as important by the random forest regression were consistent with the previously published movement synergies3,26,51. As shown in Fig. 5, radial/ulnar deviation related ADL tasks involved elbow flexion/extension. For pronation/supination related ADL tasks, shoulder ab/adduction instigated the axial rotation in the forearm. Figure 4b,c demonstrates the framework’s capability to successfully learn these movement synergies to predict the angular velocities for multi-DoF wrist movements. Additionally, the information about the sensor contribution can be used to determine the optimal number of sensors for a prosthetic controller with high DoF or to assist in troubleshooting the controller in case the model’s behaviour is unexpected. Lastly, as the angular velocity and the acceleration measured by the IMU sensors contribute to the predicted angular velocity of the wrist, the controller is less sensitive to different upper arm postures.
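One way the sensor-contribution information could be used is sketched below: per-feature importances are summed per sensor, and sensors are kept until a chosen share of the total importance is covered. This is a hypothetical sketch only. The feature names, the importance values, the `sensor_channel` naming scheme, and the 0.85 threshold are all assumptions made for illustration, and the study itself did not evaluate removing sensors or features.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def importance_by_sensor(importances: Dict[str, float]) -> List[Tuple[str, float]]:
    """Sum feature importances per sensor, assuming names like '<sensor>_<channel>'."""
    totals: Dict[str, float] = defaultdict(float)
    for feature, value in importances.items():
        sensor = feature.split("_", 1)[0]
        totals[sensor] += value
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)


def sensors_for_coverage(ranked: List[Tuple[str, float]],
                         threshold: float = 0.85) -> List[str]:
    """Keep the highest-ranked sensors until their summed importance reaches the threshold."""
    chosen: List[str] = []
    running = 0.0
    for sensor, value in ranked:
        chosen.append(sensor)
        running += value
        if running >= threshold:
            break
    return chosen


# Made-up importances for illustration; they sum to 1 like normalized forest importances.
example = {
    "forearm_gyro_x": 0.40, "forearm_acc_y": 0.20,
    "upperarm_gyro_z": 0.25, "upperarm_acc_x": 0.05,
    "trunk_gyro_y": 0.10,
}
ranked = importance_by_sensor(example)
selected = sensors_for_coverage(ranked)
```

Any sensor set chosen this way would still need to be validated by retraining and retesting the models on the reduced inputs.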

Although the proposed framework was shown to yield a high level of off-line performance, the study was limited to off-line evaluation. Therefore, future studies will focus on empirical analysis in a real-time virtual environment and on assessing the reliability of the controller. Real-time testing will also be conducted to gather in-depth user experience of operating the suggested controller. We will also explore long-term use of our controller and validate its performance under repeated use. This study did not analyze the effects of discarding less significant features on the performance of the regression and classification models. Further studies are required to analyze the effects of removing such features on the performance of the models and how the explainable nature of the models can be used to reduce the number of sensors.

Using this study as a starting point, we plan to further analyze the efficacy of the framework by recruiting both amputee and non-amputee participants. A comparison between the models developed on non-amputee participants and the models developed on the amputee participants using the suggested framework would provide more insight into the efficacy of the framework. This comparison will test our hypothesis that developing machine learning models using movement synergy data, acquired by emulating amputee participants with a brace, has better prediction capabilities and higher chances of success with amputees compared to the models developed on data acquired without using the brace. Furthermore, we plan on extending the proposed framework for modeling 3-DoF active wrist movements by including flexion/extension. Lastly, if the framework is found to be successful in amputee trials, we plan to implement the developed controller in a robotic prosthesis and conduct experiments with amputee participants to assess the performance of the framework using standard clinical measures.

Conclusion

In this paper, a novel framework for the control of a multi-DoF prosthetic wrist was presented. The framework uses movement synergies present in the upper limbs by leveraging a neural network classifier and random forest regression to allow multi-DoF control of a prosthetic wrist. This movement synergy based actuation of the prosthetic limb has a higher chance of creating natural wrist movements during ADL tasks and reducing compensatory movement. The model was trained using raw IMU data without any post-processing such as converting IMU data into global coordinates to compute joint angles or extracting features. Furthermore, the random forest algorithm allows for easy inclusion of sensor data with different physical metrics due to its robust structure. In the present study, the models performed well on the dataset recorded during trials involving four different ADL tasks. Classification accuracy of 99% was achieved by the neural network classifier. The random forest regression models had an RMSE of 7.44 deg/s with a correlation coefficient of 0.98 between the measured and predicted angular velocity. The observed results are promising and support the use of the proposed framework in developing a controller for a multi-DoF prosthetic wrist. The proposed framework can be easily extended to additional upper limb movements. Future work will involve real-time testing of the proposed framework using a virtual reality environment and amputee participants.

Methods

Human experiment set-up

The experimental protocol was approved by the Institutional Review Board (IRB) of University at Buffalo. All participants were over 18 years old. They were informed about the research procedures and signed a written consent form approved by the IRB before participating in the study. All experiments were performed in accordance with relevant guidelines and regulations. Ten healthy subjects (three females and seven males with an average weight of 70.20 ± 17.31 kg and an average height of 173.29 ± 12.07 cm) performed tasks that were designed to emulate activities of daily living (ADL). An off-the-shelf brace was modified to restrict the wrist motion so that the movements made by the proximal joints will be emphasized. A motion capture system with ten infrared cameras (Vicon, UK) sampling at 100 Hz was used to record the upper limb movements. In addition, five Trigno Avanti IMU sensors (Delsys, US) sampling at 148 Hz were used to acquire movement data.

(a) Location of reflective markers attached on the upper limb during the experiment. (b) Illustration of basis vectors used for computation of angular velocity for radial/ulnar deviation and pronation/supination of the wrist. (c) A participant performing four different ADL tasks with the wrist brace.

Experimental procedure

Fifteen reflective markers were attached on bony landmarks as shown in Fig. 6a. Five IMU sensors were placed on the participants as shown in Fig. 4a. The Y-axes of the IMUs were aligned with the long bones of the arm and the Z-axes of the IMUs were perpendicular to the skin. The ADL tasks were primarily designed to acquire data pertaining to two wrist motions: radial/ulnar deviation and pronation/supination52,53. Drinking from a cup and hammering a nail were designed for acquiring wrist radial/ulnar deviation data. Twisting the screws and turning the pulley were designed to acquire pronation/supination data. For each ADL task, five trials were conducted, and each trial started and ended with participants maintaining a default pose known as a T-Pose, where participants stand with both shoulders abducted at 90\(^{\circ }\), elbows completely extended, and palms facing down. Furthermore, for the tasks involving clockwise and anti-clockwise movement, participants transitioned to T-Pose for a brief period after performing the clockwise movement and before initiating the anti-clockwise movement. A metronome was used to maintain a uniform speed throughout the trials. The experiment took an average of 2.5 h to complete. The four ADL tasks in each trial were as follows:

- Drinking from a cup: This task was adopted from the AM-ULA performance assessment measure where participants mimicked the action of drinking from a cup34. The participants had to pick up the cup placed on the table, bring it closer to the mouth and then try to tilt the cup to simulate drinking. The participants mimicked the drinking action for 10 times.

- Hammering a nail: This task is similar to ‘Use a hammer and nail’ task from the AM-ULA performance assessment measure and involved the participant hitting a nail with a hammer at a constant speed34. The participant picked up the hammer and positioned the hammer on top of the nail mounted on a wooden board. The participants hit the nail ten times.

- Twisting screws: This task was designed to simulate ‘Rotate a Screw’ task from SHAP performance assessment protocol36, and consisted of twisting 3 screws in the clockwise and anti-clockwise directions in the transverse plane. Participants picked up the screwdriver and first twisted each screw three times in a clockwise direction, and then twisted the three screws in an anti-clockwise direction.

- Turning a pulley: This activity was designed to simulate turning a doorknob or a bulb which are activities performed on a daily basis. These tasks are a part of multiple performance assessment measures34, 36, 54. This task consisted of turning a pulley in the clockwise and anti-clockwise directions in the frontal plane. Participants reached the pulley and turned the pulley five times in a clockwise direction and then turned the pulley five times in an anti-clockwise direction.

Data pre-processing

To compute the angle representing radial/ulnar deviation, two vectors, one from RSHO to LSHO and the other from RSHO to C7, were used to form a plane. A vector perpendicular to this plane, with its origin at RSHO, was used as the reference axis. Another vector from RSHO to RFIN was created, and the angle between the reference axis and this vector was taken as the radial/ulnar deviation angle. For pronation/supination of the wrist, joint angles of the distal segment (forearm) relative to the proximal segment (upper arm) were computed. The coordinate system described by the ISB standards was used to define the coordinates for the forearm segment55. For the forearm, the Y-axis was defined by the vector from the wrist midpoint to the elbow midpoint. The Z-axis was a vector from the wrist midpoint to RWRLAT, and the X-axis was the vector perpendicular to the plane formed by the Z- and Y-axes. For the proximal segment, the Y-axis was defined by a vector from the elbow midpoint to the RSHO marker. The Z-axis was a vector from the elbow midpoint to RELBLAT, and the X-axis was the vector perpendicular to the plane formed by the Z- and Y-axes. Using the Z-X-Y Euler rotation sequence, the rotation of the forearm segment about the Y-axis was used as the wrist pronation/supination angle. Figure 6b illustrates the coordinate axes used for computing both wrist angles.
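The radial/ulnar deviation angle described above can be illustrated with a minimal Python sketch. The marker names follow the text; the function names, the order of the cross product (which fixes the sign of the reference axis), and the example coordinates are assumptions made for illustration only.

```python
import math

def sub(a, b):
    """Component-wise difference a - b of two 3-D points."""
    return tuple(x - y for x, y in zip(a, b))

def cross(a, b):
    """Cross product of two 3-D vectors."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def norm(a):
    return math.sqrt(dot(a, a))

def deviation_angle_deg(rsho, lsho, c7, rfin):
    """Angle between the normal of the RSHO-LSHO-C7 plane and the RSHO->RFIN vector."""
    # Reference axis: perpendicular to the plane spanned by RSHO->LSHO and RSHO->C7.
    # Assumption: the cross-product order below defines which way the axis points.
    reference_axis = cross(sub(lsho, rsho), sub(c7, rsho))
    arm_vector = sub(rfin, rsho)
    # atan2 of |u x v| and u . v is numerically stable for small and large angles.
    return math.degrees(math.atan2(norm(cross(reference_axis, arm_vector)),
                                   dot(reference_axis, arm_vector)))

# Hypothetical marker positions (arbitrary units), not measured data.
RSHO, LSHO, C7 = (0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)
angle_example = deviation_angle_deg(RSHO, LSHO, C7, rfin=(1.0, 0.0, 1.0))
```

Applying the function frame by frame to the marker trajectories would give the angle time series from which the angular velocity is later derived.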

The kinematic data captured by the motion capture system were filtered using a 4th-order low-pass Butterworth filter with a cutoff frequency of 6 Hz. The radial/ulnar deviation and pronation/supination angles were filtered with a low-pass Butterworth filter with a cutoff frequency of 1 Hz. Angular velocities were computed numerically and then passed through the same low-pass filter. The signals generated by the IMU sensors were filtered using a 3rd-order low-pass Butterworth filter with a cutoff frequency of 1 Hz.
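A minimal sketch of this filtering chain, using SciPy, is given below. Several details are assumptions rather than reported settings: zero-phase filtering with `filtfilt` (which doubles the effective filter order), a 4th-order filter for the angle signals (the order is not stated for them), central-difference differentiation, and the helper names `lowpass` and `angular_velocity`.

```python
import numpy as np
from scipy.signal import butter, filtfilt

def lowpass(x, cutoff_hz, fs_hz, order):
    """Low-pass Butterworth filter applied forward and backward (zero phase)."""
    b, a = butter(order, cutoff_hz, btype="low", fs=fs_hz)
    return filtfilt(b, a, x)

def angular_velocity(angle_deg, fs_hz, cutoff_hz=1.0, order=4):
    """Filter the angle, differentiate numerically, then filter the velocity again."""
    smooth_angle = lowpass(angle_deg, cutoff_hz, fs_hz, order)
    velocity = np.gradient(smooth_angle, 1.0 / fs_hz)  # deg/s
    return lowpass(velocity, cutoff_hz, fs_hz, order)

# Synthetic example: a 0.2 Hz wrist oscillation sampled at the 100 Hz mocap rate.
FS_MOCAP = 100.0
t = np.arange(0.0, 10.0, 1.0 / FS_MOCAP)
angle = 30.0 * np.sin(2.0 * np.pi * 0.2 * t)
velocity = angular_velocity(angle, FS_MOCAP)

# The other two filters in the text would be called as
# lowpass(marker_xyz, 6.0, 100.0, 4) for marker data and
# lowpass(imu_signal, 1.0, 148.0, 3) for IMU data.
```

Samples close to the start and end of a recording are affected by filter edge transients, so in practice they are best interpreted with care.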

ML model training

Machine learning was employed for two purposes. First, to identify the user’s intent to perform either radial/ulnar deviation or pronation/supination. Second, to predict the angular velocity that provides the desired motor speed for the respective wrist movement. To achieve this, a neural-network-based binary classifier was first developed to classify the user’s intent as either radial/ulnar deviation or pronation/supination of the wrist. Then two random forest regression models were trained to predict the angular velocity, one for radial/ulnar deviation and the other for pronation/supination. Figure 1 illustrates the training process along with the inputs to the ML models and their outputs. The trained machine learning models were systematically evaluated off-line by excluding some participants’ data from the training set.
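The two-stage structure can be summarized in a short Python sketch: the classifier output selects which of the two regressors is evaluated. The function and label names are hypothetical, and the stand-in models below are placeholders rather than trained models.

```python
from typing import Callable, Dict, Sequence, Tuple

Features = Sequence[float]

def predict_wrist_velocity(
    features: Features,
    classify: Callable[[Features], Dict[str, float]],
    regressors: Dict[str, Callable[[Features], float]],
) -> Tuple[str, float]:
    """Pick the most probable intent, then run only that intent's regressor."""
    probabilities = classify(features)
    intent = max(probabilities, key=probabilities.get)
    return intent, regressors[intent](features)

# Placeholder models for illustration only (not the trained networks or forests).
def dummy_classifier(features: Features) -> Dict[str, float]:
    return {"deviation": 0.2, "rotation": 0.8}

dummy_regressors = {
    "deviation": lambda f: 0.5 * f[0],
    "rotation": lambda f: 2.0 * f[0],
}

intent, velocity_cmd = predict_wrist_velocity([10.0], dummy_classifier, dummy_regressors)
```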

Classification model

A densely connected neural network with two hidden layers was created using Python 3.7 and Keras56 (version 2.4.3) to accomplish the task of binary classification. The input to the neural network classifier was the IMU signals from the sensors shown in Fig. 4a, and the output was the probability of each class. The class with the highest probability was taken as the user’s intent. The first hidden layer consisted of ten neurons and the second hidden layer consisted of five neurons. The hidden layers used ReLU (rectified linear unit)57 as the activation function and the output layer used Softmax as the activation function58. Binary cross entropy was used as the loss function with Adam (adaptive moment estimation) as the optimizer59. The number of hidden layers and neurons was determined by trial and error to avoid overfitting of the model. Neural networks trained using smaller batch sizes have been shown to generalize well60. Therefore, through trial and error, a small batch size of eight was used for training the classifier.
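To make the layer structure concrete, the following NumPy sketch implements only the forward pass of a network of this shape (inputs, 10 ReLU units, 5 ReLU units, 2 softmax outputs). It is not the Keras model used in the study: the input size of 30 features is an assumption, the weights are random rather than trained, and the helper names are illustrative. For a two-way softmax output with one-hot labels, binary cross entropy averaged over both outputs equals the categorical cross entropy computed here.

```python
import numpy as np

N_FEATURES = 30  # assumption: e.g. five IMUs with six channels each

def relu(z):
    return np.maximum(z, 0.0)

def softmax(z):
    z = z - z.max(axis=1, keepdims=True)  # subtract row max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=1, keepdims=True)

def init_params(rng, sizes=(N_FEATURES, 10, 5, 2)):
    """Random (untrained) weights and zero biases for each dense layer."""
    return [(rng.normal(0.0, 0.1, (m, n)), np.zeros(n))
            for m, n in zip(sizes[:-1], sizes[1:])]

def forward(params, x):
    """ReLU on the two hidden layers, softmax on the two-class output layer."""
    h = x
    for w, b in params[:-1]:
        h = relu(h @ w + b)
    w, b = params[-1]
    return softmax(h @ w + b)

def cross_entropy(probs, onehot):
    """Mean negative log-likelihood of the true class."""
    return float(-np.mean(np.sum(onehot * np.log(probs + 1e-12), axis=1)))

rng = np.random.default_rng(0)
params = init_params(rng)
batch = rng.normal(size=(8, N_FEATURES))       # one batch of eight samples
probs = forward(params, batch)
labels = np.eye(2)[rng.integers(0, 2, size=8)]  # random one-hot labels
loss = cross_entropy(probs, labels)
predicted_intent = probs.argmax(axis=1)         # class with the highest probability
```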

To validate the efficacy of the model, three variations of testing were performed. In the first variation, 30 unique combinations of eight participants were generated by random selection. For each of the 30 combinations, the eight participants’ data were used to train the model and the two excluded participants’ data were used to assess the quality of the fit. The second variation followed the same procedure, but with 30 unique combinations of seven participants used for training, leaving three participants for testing. The third variation followed the same procedure with six participants used for training.
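The participant-level splits can be sketched with the Python standard library. The participant labels, the seed, and the function name are hypothetical; the sketch only assumes that the 30 training combinations are drawn without repetition from all possible combinations.

```python
import itertools
import random

def sample_splits(participants, n_train, n_splits=30, seed=0):
    """Draw n_splits distinct training combinations; the rest form the test set."""
    all_combinations = list(itertools.combinations(participants, n_train))
    rng = random.Random(seed)
    chosen = rng.sample(all_combinations, n_splits)  # sampling without replacement
    return [(train, tuple(p for p in participants if p not in train))
            for train in chosen]

PARTICIPANTS = [f"P{i:02d}" for i in range(1, 11)]  # ten hypothetical participant IDs
splits_8 = sample_splits(PARTICIPANTS, n_train=8)   # drawn from C(10, 8) = 45
splits_7 = sample_splits(PARTICIPANTS, n_train=7)   # drawn from C(10, 7) = 120
splits_6 = sample_splits(PARTICIPANTS, n_train=6)   # drawn from C(10, 6) = 210
```

Splitting by participant rather than by sample keeps every test participant entirely unseen during training.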

Macro-averaged F-1 score, precision, and recall, along with the confusion matrix, provided quantitative measures of the performance of the trained classifier models across the different variations. The F-1 score is the harmonic mean of precision and recall; it indicates the accuracy with which the classifier identifies a class and is robust to class imbalance in the dataset61. Precision is the fraction of correctly classified instances among those classified as positive. Recall is the fraction of correctly classified instances among all instances that are actually positive. A detailed description of these assessment methods can be found in ref. 39.
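As a worked example of these definitions, the sketch below computes the macro-averaged metrics from a confusion matrix whose rows are true classes and whose columns are predicted classes. The matrix values are made up for illustration and are not results from the study.

```python
def macro_metrics(confusion):
    """Return macro-averaged (precision, recall, F-1) for a square confusion matrix.

    confusion[i][j] counts samples of true class i predicted as class j.
    """
    n_classes = len(confusion)
    precisions, recalls, f1_scores = [], [], []
    for k in range(n_classes):
        true_positive = confusion[k][k]
        predicted_as_k = sum(confusion[i][k] for i in range(n_classes))
        actually_k = sum(confusion[k])
        precision = true_positive / predicted_as_k if predicted_as_k else 0.0
        recall = true_positive / actually_k if actually_k else 0.0
        f1 = (2 * precision * recall / (precision + recall)
              if precision + recall else 0.0)
        precisions.append(precision)
        recalls.append(recall)
        f1_scores.append(f1)
    # Macro averaging: every class contributes equally, regardless of its size.
    return (sum(precisions) / n_classes,
            sum(recalls) / n_classes,
            sum(f1_scores) / n_classes)

# Hypothetical two-class confusion matrix.
example_confusion = [[90, 10],
                     [5, 95]]
macro_precision, macro_recall, macro_f1 = macro_metrics(example_confusion)
```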

Regression model

When evaluated against a variety of supervised learning algorithms using different performance criteria and data sets, random forest performed better than most of the other popular learning algorithms62. Random forests have also been fairly successful in drawing inferences from different biosignals63 and have been used in modeling controllers for prostheses64,65,66.

Random forest is an ensemble learning algorithm based on the ‘divide and conquer’ strategy and consists of two core components: the CART (classification and regression trees) split criterion and bagging41. The CART split criterion governs the construction of each individual tree in the forest. Bagging is a method in which bootstrapped samples are generated from the original data set and each sample is used to fit a different tree in the forest. The random forest algorithm comprises three main steps. First, for a given training set D, T sets of n elements are sampled from D with replacement. Second, for each subsample, a decision tree is constructed using CART. In random forest, CART is modified so that only a fixed number of randomly selected features is considered when splitting the data. This number is held constant throughout the process. The quality of a split is assessed using mean squared error (MSE), and among the randomly selected features, the one that yields the best split is chosen. Furthermore, the trees are not pruned and are allowed to grow to their largest possible extent or to some predefined threshold. Lastly, the prediction p for a new input r is computed by aggregating the outputs of the trained regression trees \(R_1,R_2 ... R_T\) in the forest as indicated by Eq. (1)67.
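Assuming the standard aggregation for regression forests, in which the forest prediction is the unweighted mean of the individual tree outputs, Eq. (1) takes the form

\[ p(r) = \frac{1}{T}\sum_{i=1}^{T} R_i(r) \]

where \(R_i(r)\) is the output of the ith regression tree for the input r and T is the number of trees in the forest.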

Another advantage of random forest is the computation of feature importance, which helps in identifying the features that have a strong influence on the predicted angular velocity, \(\dot{\theta }_R\). The feature importance is measured by the overall decrease in variance obtained when splitting on a feature, averaged across all the trees. Thus, the weighted decrease in variance corresponding to splits on the feature \(f_j\) is computed and averaged over all trees, where \(f_j\) is the jth feature in dataset D. A detailed description of the algorithm can be found in refs. 41 and 68. The feature importance was used to identify the IMU sensor signals that most strongly influenced the prediction of the angular velocity \(\dot{\theta }_R\). Furthermore, it also allowed us to validate the movement synergies by checking whether the sensors identified as important were consistent with the observed movement synergies.
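The quantity underlying this importance measure is the variance decrease produced by a single split, which the following sketch computes for one feature and one threshold. In a full forest, each node's decrease would additionally be weighted by the fraction of samples reaching that node, summed per feature, and averaged over trees; that bookkeeping is omitted here. The data values and function names are made up for illustration.

```python
def variance(values):
    """Population variance; defined as 0.0 for an empty list."""
    if not values:
        return 0.0
    mean = sum(values) / len(values)
    return sum((v - mean) ** 2 for v in values) / len(values)

def variance_decrease(feature, target, threshold):
    """Parent variance minus the size-weighted variance of the two children."""
    left = [y for x, y in zip(feature, target) if x <= threshold]
    right = [y for x, y in zip(feature, target) if x > threshold]
    n = len(target)
    return (variance(target)
            - len(left) / n * variance(left)
            - len(right) / n * variance(right))

# Hypothetical target (angular velocity) and two candidate features.
target_velocity = [0.0, 0.0, 10.0, 10.0]
informative_feature = [0.0, 1.0, 2.0, 3.0]    # separates low from high velocities
uninformative_feature = [0.0, 1.0, 0.0, 1.0]  # unrelated to the target

decrease_informative = variance_decrease(informative_feature, target_velocity, 1.5)
decrease_uninformative = variance_decrease(uninformative_feature, target_velocity, 0.5)
```

A feature whose splits repeatedly produce large decreases accumulates a high importance, which is how influential IMU signals would stand out.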

The random forest regression models were developed using Python 3.7 and Scikit-learn69. Using GridSearchCV from Scikit-learn, it was found that a forest of 50 trees with a maximum depth of 40 performed well in predicting the angular velocities for both radial/ulnar deviation and pronation/supination. Two training data sets containing IMU data collected during ADLs were created, one for radial/ulnar deviation and the other for pronation/supination. To assess the models’ predictions, three different approaches were used. The first approach used four trials from all participants to train the model and the fifth trial to test it. In the second approach, a model was trained and tested for each individual, instead of one generic model trained on all participants’ data. The third approach was similar to the three variations of testing described for the neural network classifier, where the regression models were trained on a subset of participants and tested on the remaining, unseen participants. The models’ performance was judged by the similarity between the measured (\(\dot{\theta }_M\)) and predicted (\(\dot{\theta }_R\)) angular velocity of the wrist, using root mean squared error (RMSE) and the mean absolute difference between the peaks. Pearson’s correlation coefficient R was also computed because it is independent of the unit, enabling comparison with previous studies70. Furthermore, because of bagging, each individual tree is trained without seeing around 37% of the training data points40. These unseen data points are used to assess the performance of each trained tree, and the results are aggregated across trees to indicate the performance of the entire model, known as the Out-of-Bag score41. Performance on Out-of-Bag samples was also tracked by computing the coefficient of determination, R\({^2}\).
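The evaluation metrics and the origin of the 37% figure can be illustrated with a short standard-library sketch. The function names and the sample values are illustrative assumptions. The out-of-bag fraction follows from bootstrap sampling: the probability that a given point is never drawn in n draws with replacement is \((1-1/n)^n\), which approaches \(e^{-1} \approx 0.368\) for large n.

```python
import math

def rmse(measured, predicted):
    """Root mean squared error between two equally long sequences."""
    squared = [(m - p) ** 2 for m, p in zip(measured, predicted)]
    return math.sqrt(sum(squared) / len(squared))

def pearson_r(measured, predicted):
    """Pearson's correlation coefficient (unit independent)."""
    n = len(measured)
    mean_m = sum(measured) / n
    mean_p = sum(predicted) / n
    covariance = sum((m - mean_m) * (p - mean_p) for m, p in zip(measured, predicted))
    spread_m = math.sqrt(sum((m - mean_m) ** 2 for m in measured))
    spread_p = math.sqrt(sum((p - mean_p) ** 2 for p in predicted))
    return covariance / (spread_m * spread_p)

def expected_oob_fraction(n_samples):
    """Probability that one sample is left out of a bootstrap of size n_samples."""
    return (1.0 - 1.0 / n_samples) ** n_samples

# Hypothetical measured and predicted angular velocities (deg/s).
theta_measured = [1.0, 2.0, 3.0]
theta_predicted = [1.0, 2.0, 5.0]
error = rmse(theta_measured, theta_predicted)
correlation = pearson_r(theta_measured, [2.0, 4.0, 6.0])
oob_fraction = expected_oob_fraction(1000)
```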

Control module design and testing

As shown in Fig. 1, two control modules were developed, one each for radial/ulnar deviation and pronation/supination of the wrist, in order to increase the wrist movement and reduce the range of motion of the residual limb required to control the wrist. Each control module receives the predicted angular velocity (\(\dot{\theta }_{R}\)) from the corresponding regression model. The control module comprises an algorithm that augments the predicted angular velocity (\(\dot{\theta }_{R}\)) by a gain to increase the wrist movement. Instead of keeping the gain constant, we increase or decrease it by \(\lambda\) at time t by comparing the predicted angular velocity (\(\dot{\theta }_{R}\)) at times t and \(t-1\). The gain has upper (\(gain_{max}\)) and lower (\(gain_{min}\)) bounds to ensure that the controller generates an angular velocity (\(\dot{\theta }_{C}\)) that is neither too large nor too small. From trial and error, \(\lambda =0.00008\), \(gain_{max}=2\) and \(gain_{min}=1\) were used to compute the desired motor velocity in the controller algorithm. Furthermore, the maximum angular velocity is limited by \(\dot{\theta }_{max}\). Algorithm 1 depicts the pseudo-code of the iterative gain algorithm.
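As an illustration only, one plausible reading of the iterative gain rule is sketched below in Python. The text specifies that the gain changes by \(\lambda\) based on a comparison of \(\dot{\theta }_{R}\) at t and \(t-1\), that it is bounded by \(gain_{min}\) and \(gain_{max}\), and that the output is limited by \(\dot{\theta }_{max}\). The rest is assumed: the gain is taken to rise when the predicted speed magnitude increases and to fall otherwise, the gain is applied multiplicatively, the gain starts at \(gain_{min}\), and the value of \(\dot{\theta }_{max}\) and the function names are hypothetical.

```python
def update_gain(gain, vel_now, vel_prev, lam=0.00008, gain_min=1.0, gain_max=2.0):
    """Step the gain by lam and keep it within [gain_min, gain_max]."""
    # Assumption: a growing predicted speed raises the gain, otherwise it decays.
    if abs(vel_now) > abs(vel_prev):
        gain += lam
    else:
        gain -= lam
    return min(max(gain, gain_min), gain_max)

def control_velocity(predicted, vel_max, lam=0.00008, gain_min=1.0, gain_max=2.0):
    """Map predicted velocities (theta_R) to commanded velocities (theta_C)."""
    gain = gain_min      # assumption: start from the lower bound
    previous = 0.0       # assumption: the wrist is at rest before the first sample
    commands = []
    for velocity in predicted:
        gain = update_gain(gain, velocity, previous, lam, gain_min, gain_max)
        command = gain * velocity                          # assumed multiplicative gain
        command = max(-vel_max, min(vel_max, command))     # saturate at +/- theta_max
        commands.append(command)
        previous = velocity
    return commands

# Exaggerated step size (lam=0.1) so the gain change is visible in four samples.
demo_commands = control_velocity([0.0, 10.0, 20.0, 30.0], vel_max=25.0, lam=0.1)
```

With the reported \(\lambda =0.00008\), the gain would need 12,500 consecutive increments to move from \(gain_{min}\) to \(gain_{max}\), so under this reading it adapts slowly relative to individual movements.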

Data availability

The data from IMU sensors and motion capture system during four different ADLs will be available upon request to the corresponding author (jiyeonk@buffalo.edu).

References

Dalley, S., Wiste, T., Withrow, T. & Goldfarb, M. Design of a multifunctional anthropomorphic prosthetic hand with extrinsic actuation. IEEE/ASME Trans. Mech. 14, 699–706. https://doi.org/10.1109/TMECH.2009.2033113 (2009).

Cowley, J., Resnik, L., Wilken, J., Smurr Walters, L. & Gates, D. Movement quality of conventional prostheses and the deka arm during everyday tasks. Prosthet. Orthot. Int. 41, 33–40. https://doi.org/10.1177/0309364616631348 (2017).

Carey, S. L., Jason Highsmith, M., Maitland, M. E. & Dubey, R. V. Compensatory movements of transradial prosthesis users during common tasks. Clin. Biomech. 23, 1128–1135. https://doi.org/10.1016/j.clinbiomech.2008.05.008 (2008).

Gambrell, C. R. Overuse syndrome and the unilateral upper limb amputee: Consequences and prevention. J. Prosth. Orthot. 20, 126–132. https://doi.org/10.1097/JPO.0b013e31817ecb16 (2008).

Østlie, K., Franklin, R. J., Skjeldal, O. H., Skrondal, A. & Magnus, P. Musculoskeletal pain and overuse syndromes in adult acquired major upper-limb amputees. Arch. Phys. Med. Rehabil. 92, 1967–1973. https://doi.org/10.1016/j.apmr.2011.06.026 (2011).

Biddiss, E., Beaton, D. & Chau, T. Consumer design priorities for upper limb prosthetics. Disabil. Rehabil. Assist. Technol. 2, 346–357. https://doi.org/10.1080/17483100701714733 (2007).

Cordella, F. et al. Literature review on needs of upper limb prosthesis users. Front. Neurosci. 10, 209. https://doi.org/10.3389/fnins.2016.00209 (2016).

Stephens-Fripp, B., Jean Walker, M., Goddard, E. & Alici, G. A survey on what Australians with upper limb difference want in a prosthesis: Justification for using soft robotics and additive manufacturing for customized prosthetic hands. Disabil. Rehabil. Assist. Technol. 15, 342–349. https://doi.org/10.1080/17483107.2019.1580777 (2020).

Resnik, L. J., Borgia, M. L. & Clark, M. A. A national survey of prosthesis use in veterans with major upper limb amputation: Comparisons by gender. PM and R 12, 1086–1098. https://doi.org/10.1002/pmrj.12351 (2020).

Bennett, D. A., Mitchell, J. E., Truex, D. & Goldfarb, M. Design of a myoelectric transhumeral prosthesis. IEEE/ASME Transact. Mechatron. 21, 1868–1879. https://doi.org/10.1109/TMECH.2016.2552999 (2016).

Lenzi, T., Lipsey, J. & Sensinger, J. W. The ric arm-a small anthropomorphic transhumeral prosthesis. IEEE/ASME Transact. Mechatron. 21, 2660–2671. https://doi.org/10.1109/TMECH.2016.2596104 (2016).

Bandara, D., Gopura, R., Hemapala, K. & Kiguchi, K. Development of a multi-dof transhumeral robotic arm prosthesis. Med. Eng. Phys. 48, 131–141. https://doi.org/10.1016/j.medengphy.2017.06.034 (2017).

Vujaklija, I., Farina, D. & Aszmann, O. C. New developments in prosthetic arm systems. Orthoped. Res. Rev. 8, 31–39. https://doi.org/10.2147/ORR.S71468 (2016).

Hudgins, B., Parker, P. & Scott, R. N. A new strategy for multifunction myoelectric control. IEEE Transact. Biomed. Eng. 40, 82–94. https://doi.org/10.1109/10.204774 (1993).

Graupe, D., Salahi, J. & Kohn, K. H. Multifunctional prosthesis and orthosis control via microcomputer identification of temporal pattern differences in single-site myoelectric signals. J. Biomed. Eng. 4, 17–22. https://doi.org/10.1016/0141-5425(82)90021-8 (1982).

Jiang, N., Dosen, S., Muller, K. & Farina, D. Myoelectric control of artificial limbs-is there a need to change focus? [in the spotlight]. IEEE Signal Process. Mag. 29, 150–152. https://doi.org/10.1109/MSP.2012.2203480 (2012).

Scheme, E. & Englehart, K. Electromyogram pattern recognition for control of powered upper-limb prostheses: State of the art and challenges for clinical use. J. Rehabil. Res. Dev. 48, 643–660. https://doi.org/10.1682/JRRD.2010.09.0177 (2011).

Dhawan, A. S. et al. Proprioceptive sonomyographic control: A novel method for intuitive and proportional control of multiple degrees-of-freedom for individuals with upper extremity limb loss. Sci. Rep. 9. https://doi.org/10.1038/s41598-019-45459-7 (2019).

Guo, J. Y., Zheng, Y. P., Xie, H. B. & Koo, T. K. Towards the application of one-dimensional sonomyography for powered upper-limb prosthetic control using machine learning models. Prosth. Orthot. Int. 37, 43–49. https://doi.org/10.1177/0309364612446652 (2013).

Kato, A. et al. Continuous wrist joint control using muscle deformation measured on forearm skin. in IEEE International Conference on Robotics and Automation (ICRA), 1818–1824. https://doi.org/10.1109/ICRA.2018.8460491 (2018).

Tarantino, S., Clemente, F., Barone, D., Controzzi, M. & Cipriani, C. The myokinetic control interface: Tracking implanted magnets as a means for prosthetic control. Sci. Rep. 7. https://doi.org/10.1038/s41598-017-17464-1 (2017).

Palsdottir, A. A., Dosen, S., Mohammadi, M. & Andreasen Struijk, L. N. Remote tongue based control of a wheelchair mounted assistive robotic arm—A proof of concept study. in IEEE International Conference on Mechatronics and Automation (ICMA), 1300–1304. https://doi.org/10.1109/ICMA.2019.8816415 (2019).

Popović, D. B., Popović, M. B. & Sinkjær, T. Life-like control for neural prostheses: “Proximal controls distal”. in Annual International Conference of the IEEE Engineering in Medicine and Biology Society (EMBC), 7648–7651. https://doi.org/10.1109/iembs.2005.1616283 (2005).

Bennett, D. A. & Goldfarb, M. IMU-based wrist rotation control of a transradial myoelectric prosthesis. IEEE Transact. Neural Syst. Rehabil. Eng. 26, 419–427. https://doi.org/10.1109/TNSRE.2017.2682642 (2018).

Legrand, M., Jarrassé, N., Richer, F. & Morel, G. A closed-loop and ergonomic control for prosthetic wrist rotation. in IEEE International Conference on Robotics and Automation (ICRA), 2763–2769. https://doi.org/10.1109/ICRA40945.2020.9197554 (2020).

Montagnani, F., Controzzi, M. & Cipriani, C. Exploiting arm posture synergies in activities of daily living to control the wrist rotation in upper limb prostheses: A feasibility study. in Annual International Conference of the IEEE Engineering in Medicine and Biology Society (EMBC), 2462–2465. https://doi.org/10.1109/EMBC.2015.7318892 (2015).

Merad, M., De Montalivet, É., Roby-Brami, A. & Jarrassé, N. Intuitive prosthetic control using upper limb inter-joint coordinations and IMU-based shoulder angles measurement: A pilot study. in IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), 5677–5682. https://doi.org/10.1109/IROS.2016.7759835 (2016).

Popovic, D. & Popovic, M. Tuning of a nonanalytical hierarchical control system for reaching with FES. IEEE Transact. Biomed. Eng. 45, 203–212. https://doi.org/10.1109/10.661268 (1998).

Popovic, M. & Popovic, D. Cloning biological synergies improves control of elbow neuroprostheses. IEEE Eng. Med. Biol. Mag. 20, 74–81. https://doi.org/10.1109/51.897830 (2001).

Iftime, S. D., Egsgaard, L. L. & Popović, M. B. Automatic determination of synergies by radial basis function artificial neural networks for the control of a neural prosthesis. IEEE Transact. Neural Syst. Rehabil. Eng. 13, 482–489. https://doi.org/10.1109/TNSRE.2005.858458 (2005).

Kaliki, R. R., Davoodi, R. & Loeb, G. E. Evaluation of a noninvasive command scheme for upper-limb prostheses in a virtual reality reach and grasp task. IEEE Trans. Biomed. Eng. 60, 792–802. https://doi.org/10.1109/TBME.2012.2185494 (2013).

Merad, M. et al. Can we achieve intuitive prosthetic elbow control based on healthy upper limb motor strategies?. Front. Neurorobot. 12, 1. https://doi.org/10.3389/fnbot.2018.00001 (2018).

Kopp, B. et al. The arm motor ability test: Reliability, validity, and sensitivity to change of an instrument for assessing disabilities in activities of daily living. Arch. Phys. Med. Rehabil. 78, 615–620. https://doi.org/10.1016/S0003-9993(97)90427-5 (1997).

Resnik, L. et al. Development and evaluation of the activities measure for upper limb amputees. Arch. Phys. Med. Rehabil. 94, 488–494. https://doi.org/10.1016/j.apmr.2012.10.004 (2013).

Light, C. M., Chappell, P. H. & Kyberd, P. J. Establishing a standardized clinical assessment tool of pathologic and prosthetic hand function: Normative data, reliability, and validity. Arch. Phys. Med. Rehabil. 83, 776–783. https://doi.org/10.1053/apmr.2002.32737 (2002).

Burgerhof, J. G., Vasluian, E., Dijkstra, P. U., Bongers, R. M. & van der Sluis, C. K. The Southampton hand assessment procedure revisited: A transparent linear scoring system, applied to data of experienced prosthetic users. J. Hand Ther. 30, 49–57. https://doi.org/10.1016/j.jht.2016.05.001 (2017).

Wang, S. et al. Evaluation of performance-based outcome measures for the upper limb: A comprehensive narrative review. PM and R 10, 951–962. https://doi.org/10.1016/j.pmrj.2018.02.008 (2018).

Pan, L., Crouch, D. L. & Huang, H. Comparing emg-based human-machine interfaces for estimating continuous, coordinated movements. IEEE Transact. Neural Syst. Rehabil. Eng. 27, 2145–2154. https://doi.org/10.1109/TNSRE.2019.2937929 (2019).

Tharwat, A. Classification assessment methods. Appl. Comput. Inform. https://doi.org/10.1016/j.aci.2018.08.003 (2018).

Breiman, L. Out-of-bag estimation. https://www.stat.berkeley.edu/pub/users/breiman/OOBestimation.pdf (1996).

Biau, G. & Scornet, E. A random forest guided tour. TEST 25, 197–227. https://doi.org/10.1007/s11749-016-0481-7 (2016).

Patel, G. K., Castellini, C., Hahne, J. M., Farina, D. & Dosen, S. A classification method for myoelectric control of hand prostheses inspired by muscle coordination. IEEE Trans. Neural Syst. Rehabil. Eng. 26, 1745–1755. https://doi.org/10.1109/TNSRE.2018.2861774 (2018).

Peerdeman, B. Myoelectric forearm prostheses: State of the art from a user-centered perspective. J. Rehabil. Res. Dev. 48, 719–738. https://doi.org/10.1682/JRRD.2010.08.0161 (2011).

Pulliam, C. L., Lambrecht, J. M. & Kirsch, R. F. Electromyogram-based neural network control of transhumeral prostheses. J. Rehabil. Res. Dev. 48, 739–754. https://doi.org/10.1682/JRRD.2010.12.0237 (2011).

Kim, Y., Stapornchaisit, S., Kambara, H., Yoshimura, N. & Koike, Y. Muscle synergy and musculoskeletal model-based continuous multi-dimensional estimation of wrist and hand motions. J. Healthc. Eng. 2020, 13. https://doi.org/10.1155/2020/5451219 (2020).

Smith, L. H., Kuiken, T. A. & Hargrove, L. J. Evaluation of linear regression simultaneous myoelectric control using intramuscular EMG. IEEE Trans. Biomed. Eng. 63, 737–746. https://doi.org/10.1109/TBME.2015.2469741 (2016).

Ameri, A., Kamavuako, E. N., Scheme, E. J., Englehart, K. B. & Parker, P. A. Support vector regression for improved real-time, simultaneous myoelectric control. IEEE Trans. Neural Syst. Rehabil. Eng. 22, 1198–1209. https://doi.org/10.1109/TNSRE.2014.2323576 (2014).

Hahne, J. M. et al. Linear and nonlinear regression techniques for simultaneous and proportional myoelectric control. IEEE Trans. Neural Syst. Rehabil. Eng. 22, 269–279. https://doi.org/10.1109/TNSRE.2014.2305520 (2014).

Nielsen, J. L. et al. Simultaneous and proportional force estimation for multifunction myoelectric prostheses using mirrored bilateral training. IEEE Trans. Biomed. Eng. 58, 681–688. https://doi.org/10.1109/TBME.2010.2068298 (2011).

Desmurget, M. & Prablanc, C. Postural control of three-dimensional prehension movements. J. Neurophysiol. 77, 452–464. https://doi.org/10.1152/jn.1997.77.1.452 (1997).

Montagnani, F., Controzzi, M. & Cipriani, C. Is it finger or wrist dexterity that is missing in current hand prostheses?. IEEE Trans. Neural Syst. Rehabil. Eng. 23, 600–609. https://doi.org/10.1109/TNSRE.2015.2398112 (2015).

Gates, D. H., Walters, L. S., Cowley, J., Wilken, J. M. & Resnik, L. Range of motion requirements for upper-limb activities of daily living. Am. J. Occup. Ther. 70, 7001350010p1–7001350010p10. https://doi.org/10.5014/ajot.2016.015487 (2016).

Kaufman-Cohen, Y., Portnoy, S., Levanon, Y. & Friedman, J. Does object height affect the dart throwing motion angle during seated activities of daily living?. J. Motor Behav. 52, 456–465. https://doi.org/10.1080/00222895.2019.1645638 (2020).

PJ, R. A list of everyday tasks for use in prosthesis design and development. Bull. Prosth. Res. 10, 135–145 (1970).

Wu, G. et al. ISB recommendation on definitions of joint coordinate systems of various joints for the reporting of human joint motion—Part II: Shoulder, elbow, wrist and hand. J. Biomech. 38, 981–992. https://doi.org/10.1016/j.jbiomech.2004.05.042 (2005).

Chollet, F. et al. Keras. https://github.com/fchollet/keras (2015).

Nair, V. & Hinton, G. E. Rectified linear units improve restricted Boltzmann machines. in International Conference on Machine Learning (ICML), 807–814. https://icml.cc/Conferences/2010/papers/432.pdf (2010).

Goodfellow, I., Bengio, Y. & Courville, A. Deep Learning, Vol. 1. http://www.deeplearningbook.org (MIT Press, 2016).

Kingma, D. P. & Ba, J. L. Adam: A method for stochastic optimization. in International Conference on Learning Representations (ICLR). https://arxiv.org/abs/1412.6980 (2015).

Smith, S. L., Kindermans, P. J., Ying, C. & Le, Q. V. Don’t decay the learning rate, increase the batch size. in International Conference on Learning Representations (ICLR). https://arxiv.org/abs/1711.00489 (2018).

Lipton, Z. C., Elkan, C. & Naryanaswamy, B. Optimal thresholding of classifiers to maximize F1 measure. Mach. Learn. Knowl. Discov. Databases 225–239. https://doi.org/10.1007/978-3-662-44851-9_15 (2014).

Caruana, R. & Niculescu-Mizil, A. An empirical comparison of supervised learning algorithms. in International Conference on Machine Learning, 161–168. https://doi.org/10.1145/1143844.1143865 (2006).

Chen, W., Wang, Y., Cao, G., Chen, G. & Gu, Q. A random forest model based classification scheme for neonatal amplitude-integrated EEG. BioMed. Eng. Online 13. https://doi.org/10.1186/1475-925X-13-S2-S4 (2014).

Palermo, F. et al. Repeatability of grasp recognition for robotic hand prosthesis control based on sEMG data. in IEEE International Conference on Rehabilitation Robot (ICORR), 1154–1159. https://doi.org/10.1109/ICORR.2017.8009405 (2017).

Burtsev, N. I., Shagdurov, V. C. & Demkin, I. O. Application of the random forest machine learning algorithm for recognizing patient arm movements while using a bionic prosthesis. AIP Conf. Proc. 2140, 020010. https://doi.org/10.1063/1.5121935 (2019).

Dey, S., Yoshida, T., Ernst, M., Schmalz, T. & Schilling, A. F. A random forest approach for continuous prediction of joint angles and moments during walking: An implication for controlling active knee-ankle prostheses/orthoses. in IEEE International Conference on Cyborg and Bionic Systems (CBS), 66–71. https://doi.org/10.1109/CBS46900.2019.9114439 (2019).

König, I. R. et al. Patient-centered yes/no prognosis using learning machines. Int. J. Data Min. Bioinform. 2, 289–341. https://doi.org/10.1504/IJDMB.2008.022149 (2008).

Breiman, L. Random forests. Mach. Learn. 45, 5–32. https://doi.org/10.1023/A:1010933404324 (2001).

Pedregosa, F. et al. Scikit-learn: Machine learning in Python. J. Mach. Learn. Res. 12, 2825–2830. http://jmlr.org/papers/v12/pedregosa11a.html (2011).

Xiloyannis, M., Gavriel, C., Thomik, A. A. & Faisal, A. A. Gaussian process autoregression for simultaneous proportional multi-modal prosthetic control with natural hand kinematics. IEEE Transact. Neural Syst. Rehabil. Eng. 25, 1785–1801. https://doi.org/10.1109/TNSRE.2017.2699598 (2017).

Acknowledgements

The study was partially supported by the SUNY Multidisciplinary Small Team Grant (RFP #20-02-RSG), 240202-06. We would like to thank all the trial subjects for participating in the trials.

Author information

Contributions

J.K. led the research, provided subject matter expertise, and support throughout all the stages of the research and wrote the manuscript. C.P.S. designed the experiment, conducted the trials, processed the trial data, developed the Machine learning scripts, developed the Euler angle scripts, wrote the manuscript, and fabricated the trial hardware. N.L. created a base version of the Euler angle script for pronation/supination, designed the trials, and modified the pulley experiment hardware.

Ethics declarations

Competing interests

The authors declare no competing interests.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Swami, C.P., Lenhard, N. & Kang, J. A novel framework for designing a multi-DoF prosthetic wrist control using machine learning. Sci Rep 11, 15050 (2021). https://doi.org/10.1038/s41598-021-94449-1

DOI: https://doi.org/10.1038/s41598-021-94449-1
