Abstract

Early admission to the neurosciences intensive care unit (NSICU) is associated with improved patient outcomes. Natural language processing offers new possibilities for mining free text in electronic health record data. We sought to develop a machine learning model using both tabular and free text data to identify patients requiring NSICU admission shortly after arrival to the emergency department (ED). We conducted a single-center, retrospective cohort study of adult patients at the Mount Sinai Hospital, an academic medical center in New York City. All patients presenting to our institutional ED between January 2014 and December 2018 were included. Structured (tabular) demographic, clinical, bed movement record data, and free text data from triage notes were extracted from our institutional data warehouse. A machine learning model was trained to predict likelihood of NSICU admission at 30 min from arrival to the ED. We identified 412,858 patients presenting to the ED over the study period, of whom 1900 (0.5%) were admitted to the NSICU. The daily median number of ED presentations was 231 (IQR 200–256) and the median time from ED presentation to the decision for NSICU admission was 169 min (IQR 80–324). A model trained only with text data had an area under the receiver-operating curve (AUC) of 0.90 (95% confidence interval (CI) 0.87–0.91). A structured data-only model had an AUC of 0.92 (95% CI 0.91–0.94). A combined model trained on structured and text data had an AUC of 0.93 (95% CI 0.92–0.95). At a false positive rate of 1:100 (99% specificity), the combined model was 58% sensitive for identifying NSICU admission. A machine learning model using structured and free text data can predict NSICU admission soon after ED arrival. This may potentially improve ED and NSICU resource allocation. Further studies should validate our findings.

Similar content being viewed by others

Introduction

Critically ill neurological patients benefit from care in the neurosciences intensive care unit (NSICU)1,2,3,4. Delays in reaching the NSICU are associated with adverse clinical outcomes5. Moreover, ED overcrowding is a well-recognized hindrance to patient care that is associated with increased length of stay and hospitalization costs6,7,8,9,10. NSICU care is both time sensitive and costly11, 12, and use of the NSICU requires prompt and judicious decision-making. However, identification of patients requiring NSICU care may occur downstream from initial triage (as in Fig. 1), potentially contributing to harmful and costly care delays. Timely identification of patients requiring NSICU admission may improve ED overcrowding and resource allocation.

Diagram of patient flow through our institutional emergency department. Patient movement occurs from far left (arrival) to far right (final disposition). Current-state triage point to the neuroscience intensive care unit appears to the right. Proposed triage point and predictive time window appear in red. EMS emergency medical services, ESI emergency severity index, NSICU neuroscience intensive care unit.

You cannot alter accepted Supplementary Information files except for critical changes to scientific content. If you do resupply any files, please also provide a brief (but complete) list of changes. If these are not considered scientific changes, any altered Supplementary files will not be used, only the originally accepted version will be published.No Supplementary Information files need to be altered at the current time.

Driven by increases in electronic health data availability13 and computing power, machine learning is increasingly used to automate processes in healthcare14,15,16,17,18,19,20. While many such approaches make use of structured, or “tabular”, data, free text constitutes a large proportion of electronic health record (EHR) data21, and may capture greater clinical expressivity than tabular data22,23,24. Despite its richness, free text is vulnerable to the ambiguities of human language and is thus challenging to analyze. Natural language processing (NLP) can assist with such challenges by providing structure to free text25. While previous tabular data models26,27,28,29,30,31,32 have addressed the problems of reducing ED crowding, early disposition prediction, and unplanned ICU readmission33, other models have been developed using either a combination of free-text and tabular data34 or exclusively free-text data sources35,36,37. To our knowledge, NLP methods have not been employed to predict NSICU admission. We sought to develop a predictive model of NSICU admission at 30 min after arrival to the ED, using a combination of tabular and free text data.

Methods

Design, patient population and measurements

All research was performed in accordance with relevant guidelines and regulations of the Institutional Review Board of the Icahn School of Medicine at Mount Sinai, which approved this retrospective study under protocol number 19-02333, and waived the requirement of informed consent. We retrieved patient data from the Mount Sinai Hospital, an urban academic tertiary-care medical center located in New York City. Using our institutional data warehouse, we identified patients that presented to our ED between January 2014 and December 2018. From this cohort, we extracted tabular clinical and demographic data, which included age, sex, home address ZIP code, ethnicity, means of arrival, chief complaint, triage vital signs, patient escort, Emergency Severity Index38, medical history, number of previous hospitalization and ED visits, time since previous ED presentation, and admission/discharge/transfer records, which we used to identify patient bed movements. All variables were available in the EHR within 30 min of the patient’s arrival in the ED.

From the same data source, we also extracted all nurse and physician notes recorded up to 30 min from ED arrival. Upon a patient’s arrival in the Mount Sinai ED, the first clinical documentation recorded in a patient’s chart is a triage note consisting of structured chief complaint field and an abbreviated patient history written by a triage nurse. Therefore, the triage note time was used to designate ED arrival time. We derived continuous variables from tabular and free text data, including time from ED arrival to triage vital signs. Admission/discharge/transfer records were used to identify NSICU admission. We excluded all patients that were 17 years of age or younger at the time of ED presentation, or died within 30 min of arrival to the ED. We also excluded erroneously created or duplicate patient records, as well as visits that lacked an initial triage note.

Model development

Using both tabular and free text data, we built a model to predict NSICU admission at 30 min from ED arrival time. While they constituted tabular data, admission/discharge/transfer records were used to identify the outcome of interest and therefore were not included as model predictors. Tabular data used in model training is represented in Supplemental Table 1. A bag-of-words (BOW) approach was used to represent the free text data. In a BOW model, a free text paragraph is represented as an unordered collection (or “bag”) of words. Words in the “bag” are organized into tabular representation according to frequency and number. A statistical classifier is then trained to classify each paragraph based on word frequency and number.

We first cleaned the free text data by lower-casing all words and removing punctuation and rare words. We then applied the bag-of-words (BOW) model to the clean text corpus. Both tabular data and BOW vectors were combined and incorporated into a gradient boosting model (XGBoost)39 to predict NSICU admission. All vectors were represented using sparse vector representations and the structured tabular vectors were directly concatenated to the unstructured vectors. Although the dimensionality of the structured vectors was much smaller than the unstructured vectors, due to the sparseness of the unstructured vectors, the latter occupied a relatively small amount of memory. Missing values were handled by the XGBoost model. Cohort imbalance was handled by the XGBoost weight scaling feature.

Model training was performed using data from January 1st, 2014 to December 31st, 2017. Model testing was performed using a hold-out dataset, which included data from January 1st, 2018 to December 31st, 2018. This was done to minimize model over-fitting and provide a pseudo-prospective estimate of model performance. We also separately determined the classifying ability of models exclusively trained using tabular data and free text, respectively. To ensure that our study exclusion criteria did not introduce selection bias, we performed a sensitivity analysis in which we derived all 3 models using the same methodology as the main analysis, but with a cohort population that included all patients who died within 30 min of ED arrival. To investigate whether using chronological testing-training data split introduced potential bias, we performed a second sensitivity analysis where all patient data was randomly split into 90% training and 10% testing sets. The same hyper-parameters were used in this sensitivity analysis as those used in the main analysis.

Statistical analysis

To analyze word importance, we determined the mutual information (MI) between each word in the free text corpus and admission to the NSICU. MI measures the statistical dependence between two random variables40,41,42, such as an outcome (A) and a given word (w), according to the formula

where P(A,w) represents the joint probability of (A) and (w), P(A) represents the probability of (A), and P(w) represents the probability of (w). To contrast the words associated with admission to the NSICU, we also determined the MI between each word and admission to non-NSICU services or discharge from the ED. For each word, we also determined the odds ratios (OR) associated with NSICU admission a swell as admission to non-NSICU services or ED discharge. We used the chi-squared test to evaluate the statistical significance of the associations between specific words and NSICU admission.

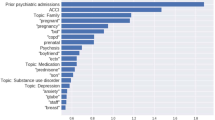

For all models, we constructed receiver operating characteristic (ROC) curves and evaluated the area under the ROC curve (AUC). We determined sensitivity, false-positive rate (FPR), positive predictive value (PPV), negative predictive value (NPV), F1 score, and Matthews correlation coefficient (MCC) for fixed model specificities of 0.90, 0.95, and 0.99. We characterized the relationship between all tabular variables and admission to the NSICU using univariate logistic regression, and determined AUCs corresponding to each variable. We reported the variables with the 10 highest AUC values. We determined the words with the 20 highest MIs with admission to the NSICU, and separately with admission to either non-NSICU services or discharge from the ED. Bootstrapping validations with 1,000 bootstrap re-samples were used to calculate 95% confidence intervals (CI) for all metrics. We used Youden's index to identify optimal sensitivity–specificity cutoff points for each ROC curve. Due to multiple comparisons, a Bonferroni correction was applied for controlling Type I error and Bonferroni-corrected alpha was set to 0.001.

Results

Over the 5-year study period, we identified 412,858 patients after applying exclusions (Fig. 2), of whom 1900 (0.5%) were admitted to the NSICU (Fig. 3). The median number of ED visits was 231 (IQR 200–256), and the median time difference between ED arrival to the time of decision for NSICU admission was 169 min (IQR 80–324). Patients that were admitted to the NSICU were significantly more likely to be older, female, present with an “Immediate” (Level 1) or “Emergent” (Level 2) ESI, be transported to the ED by ambulance or emergency medical services, and have fewer preceding ED presentations than patients who were admitted to non-NSICU services or discharged from the ED. Patients admitted to the NSICU were also more likely to have higher triage systolic blood pressures, respiratory rates, and heart rates, and were also more likely to have a history of stroke, hypertension, cardiovascular disease, and neoplastic disease than patients who were admitted to non-NSICU services or discharged from the ED (Table 1).

Free text words that had the highest MI with NSICU admission were related to stroke, including treatment (e.g. “tpa”), first-line neuroimaging (e.g. “ct”), symptoms (e.g. “droop”), alert notification (e.g. “activated”), and transfer from other hospitals (e.g. “transferred”) (Table 2). Words associated with the highest MI values with admission to a non-NSICU service or hospital discharge were related to pain, subacute complaints (e.g., “days”), or complaints referable to non-neurological organ systems (e.g., “sob”, “swelling”), as well as denial of symptoms (e.g., “denies”) (Supplemental Table 2). In the univariate analysis, the tabular variables with the highest AUC were chief complaint (AUC 0.85), followed by acuity (AUC 0.77), age (AUC 0.74), and means of arrival (AUC 0.73) (Table 3).

Using tabular data alone, the model demonstrated an AUC of 0.92 (95% CI 0.91–0.94) (Supplemental Table 3), whereas the model trained solely with text generated an AUC of 0.90 (95% CI 0.88–0.92) (Supplemental Table 4). The final, combined model demonstrated an AUC of 0.93 (95% CI 0.92–0.95) (Fig. 4). At a false positive rate of 1:100 (99% specificity), the combined model was 58% sensitive for identifying NSICU admission, whereas at a false negative rate of 1:5 (80% sensitivity), the model was 88% specific for identifying NSICU admission (Table 4). In the first sensitivity analysis including patients that died within 30 min of ED arrival, the text data model showed an AUC of 0.89 (95% CI 0.87–0.91), the tabular data only model had an AUC of 0.92 (95% CI 0.91–0.94), and the combined model had an AUC of 0.94 (95% CI 0.92–0.95). In the second sensitivity analysis using a random data split, the text data model had an AUC 0.90 (95% CI 0.87–0.92), the tabular-only model showed an AUC 0.90 (95% CI 0.87–0.93), and the combined model showed 0.93 (95% CI 0.91–0.95).

Discussion

We developed a machine learning model based on free text and tabular data to predict NSICU admission with good discriminatory performance. The model performance did not significantly change after we used a random training–testing data split and included patients that had been excluded from the study cohort, both of which suggest robust internal validity. Such a model could be used by neurocritical care experts and clinical stakeholders, such as ED clinicians and nursing managers, to identify patients that might need a NSICU bed early in the ED triage process. More importantly, this could also permit providers to administer timely neurocritical care therapies, accelerate patient movement into the NSICU, and potentially reduce ED boarding time.

We found that the NSICU admission rate from our ED was low, which likely explains the low positive predictive value of our model. As such, the task of distinguishing patients that require NSICU admission from other patients in the ED constitutes a “needle in a haystack” problem. Nonetheless, by using EHR data and machine learning approaches, our model can predict the need for NSICU admission within 30 min of arrival in triage with a reasonable tradeoff between false-positive and false-negative rates. Furthermore, considering that the median time from ED arrival to the decision for NSICU admission was 169 min over the study period, our model’s ability to generate a disposition prediction within 30 min of ED arrival could potentially translate to operational and clinical benefits.

The free text data corpus for our model consisted of all physician and nursing notes documented up to 30 min from ED arrival. Our model’s short predictive time window and extent of included text data builds on prior NLP-based approaches aimed at predicting patient disposition from the ED. For example, Lucini et al.36 incorporated provider notes available several hours after patient arrival, and Zhang et al.37 used text taken from patients’ chief complaints. Sterling et al. demonstrated that triage notes were useful predictors of ED disposition when used as the sole data source35. Our findings suggest that prediction of NSICU admission is feasible within 30 min of ED arrival, and that combining tabular and free text data can slightly improve overall model performance over tabular data alone. However, incorporating text data into a predictive model for NSICU admission provided limited incremental benefit in performance over a tabular-only model, suggesting that tabular data may represent overlapping predictive features with those denoted by highly predictive keywords identified using NLP. From an implementation perspective, this modest benefit in model performance should be weighed against any computing requirements or technical costs of using NLP to incorporate textual data into a combined predictive model.

Our analysis of ED notes suggests that words most highly associated with NSICU admission related directly to acute cerebrovascular events, such as stroke or symptoms thereof, and hospital transfers. By contrast, words associated with admission to non-NSICU services or ED discharge suggested non-acute illnesses and non-neurological complaints. While many neurological and non-neurological emergencies are managed in NSICUs43, these findings are expected. Acute cerebrovascular diseases are commonly encountered in the ED and frequently require NSICU care, especially in cases of large-vessel arterial occlusion that necessitate mechanical thrombectomy. Our institutional ED is a frequent referral destination in a New York City-wide network for emergent endovascular thrombectomy in acute ischemic stroke, thereby also explaining some of the findings from our text analysis44, 45. However, because clinical factors such as neurological examination findings may be the main driver for identifying patients who require NSICU admission, the keywords we have identified may provide limited predictive benefit over existing decision making approaches.

One potential implementation of such a model could be a clinical decision support tool that identifies patients requiring NSICU admission, and immediately delivers a notification to specialized neurocritical care teams. In such an implementation, selection of an optimal alert threshold necessitates careful evaluation of model performance, and likely depends on multiple factors, including healthcare institution needs, clinical stakeholder preferences, and NSICU resource availability. In many institutions, such as ours, the costliness of NSICU resource mobilization may justify a model threshold that seeks to minimize false positive notifications.

At a false positive rate of 1:100, the false-negative rate of our model was 42%. Consistent with stroke care system guidelines46, and like many other stroke-capable hospitals, Mount Sinai possesses a stroke notification paging system that alerts clinicians of patients with suspected stroke in the ED and hospital floors. This system is frequently used for patients with acute neurological signs or symptoms irrespective of cerebrovascular etiology. This system could therefore be used in combination with our predictive model as to potentially minimize type II error in identifying patients that require NSICU admission.

Limitations

Our study was limited by a number of notable factors. First, this was a single-center, retrospective study. Although our medical center treats a diverse urban population, our analysis incorporated multiple, potentially non-generalizable factors, such as a specific staffing configurations, resource availability, triage procedures, and practice styles. For instance, in many institutions such as ours, admission to a dedicated stroke unit is standard practice following administration of tissue plasminogen activator for acute ischemic stroke, whereas in other institutions lacking a stroke unit, the same clinical scenario requires admission to the NSICU for post-thrombolysis monitoring. Therefore, it is important to consider that the classifying ability of our model for NSICU admission may decrease when tested or deployed in settings comprising different setting-specific factors. Second, biases exist in the documentation technique and content of triage notes used in the model. Third, we did not compare the performance of our model to that of trained clinicians, and we did not operationalize our model, which prevented us from assessing the feasibility of implementing our model and supporting care decisions. Fourth, we handled the free text data in our model by using BOW modeling, which does not capture word order. Alternative NLP methods, such as distributional embeddings or neural networks, may provide better results than those in our study. However, despite its limitations, BOW has the advantages of being simple to use, infer, implement, and integrate with tabular data.

Conclusions

We developed a model to predict admission to the NSICU within 30 min of ED arrival using free text and tabular data with good discriminatory performance. Our results suggest that NLP can be used to combine text with tabular data, although such a combination only afforded a marginal improvement in overall discriminatory performance over tabular-only models. Despite the discriminatory performance demonstrated in this study, specificity and sensitivity thresholds should be guided by institutional priorities and preferences, and our findings should be validated in other patient cohorts. Future plans include implementation of this model as a clinical decision support tool, and prospectively comparing the triage our model’s performance against that of unassisted neurocritical care experts.

References

Diringer, M. N. & Edwards, D. F. Admission to a neurologic/neurosurgical intensive care unit is associated with reduced mortality rate after intracerebral hemorrhage. Crit. Care Med. 29, 635–640. https://doi.org/10.1097/00003246-200103000-00031 (2001).

Varelas, P. N. et al. Impact of a neurointensivist on outcomes in patients with head trauma treated in a neurosciences intensive care unit. J. Neurosurg. 104, 713–719. https://doi.org/10.3171/jns.2006.104.5.713 (2006).

Suarez, J. I. Outcome in neurocritical care: advances in monitoring and treatment and effect of a specialized neurocritical care team. Crit. Care Med. 34, S232-238. https://doi.org/10.1097/01.CCM.0000231881.29040.25 (2006).

Suarez, J. I. et al. Length of stay and mortality in neurocritically ill patients: impact of a specialized neurocritical care team. Crit. Care Med. 32, 2311–2317. https://doi.org/10.1097/01.ccm.0000146132.29042.4c (2004).

Rincon, F. et al. Impact of delayed transfer of critically ill stroke patients from the Emergency Department to the Neuro-ICU. Neurocrit. Care 13, 75–81. https://doi.org/10.1007/s12028-010-9347-0 (2010).

Derlet, R. W. & Richards, J. R. Emergency department overcrowding in Florida, New York, and Texas. South. Med. J. 95, 846–849 (2002).

Chalfin, D. B. et al. Impact of delayed transfer of critically ill patients from the emergency department to the intensive care unit. Crit. Care Med. 35, 1477–1483. https://doi.org/10.1097/01.CCM.0000266585.74905.5A (2007).

Di Somma, S. et al. Overcrowding in emergency department: An international issue. Intern. Emerg. Med. 10, 171–175. https://doi.org/10.1007/s11739-014-1154-8 (2015).

Rabin, E. et al. Solutions to emergency department “boarding” and crowding are underused and may need to be legislated. Health Aff (Millwood) 31, 1757–1766. https://doi.org/10.1377/hlthaff.2011.0786 (2012).

Forero, R., McCarthy, S. & Hillman, K. Access block and emergency department overcrowding. Crit. Care 15, 216. https://doi.org/10.1186/cc9998 (2011).

Lefrant, J. Y. et al. The daily cost of ICU patients: A micro-costing study in 23 French Intensive Care Units. Anaesth. Crit. Care Pain Med. 34, 151–157. https://doi.org/10.1016/j.accpm.2014.09.004 (2015).

McLaughlin, A. M., Hardt, J., Canavan, J. B. & Donnelly, M. B. Determining the economic cost of ICU treatment: a prospective “micro-costing” study. Intensive Care Med. 35, 2135–2140. https://doi.org/10.1007/s00134-009-1622-1 (2009).

Pivovarov, R. & Elhadad, N. Automated methods for the summarization of electronic health records. J. Am. Med. Inform. Assoc. 22, 938–947. https://doi.org/10.1093/jamia/ocv032 (2015).

Deo, R. C. Machine learning in medicine. Circulation 132, 1920–1930. https://doi.org/10.1161/circulationaha.115.001593 (2015).

Cabitza, F. & Banfi, G. Machine learning in laboratory medicine: Waiting for the flood?. Clin. Chem. Lab. Med. 56, 516–524. https://doi.org/10.1515/cclm-2017-0287 (2018).

Handelman, G. S. et al. eDoctor: Machine learning and the future of medicine. J. Intern. Med. 284, 603–619. https://doi.org/10.1111/joim.12822 (2018).

Saber, H., Somai, M., Rajah, G. B., Scalzo, F. & Liebeskind, D. S. Predictive analytics and machine learning in stroke and neurovascular medicine. Neurol. Res. 41, 681–690. https://doi.org/10.1080/01616412.2019.1609159 (2019).

Obermeyer, Z. & Emanuel, E. J. Predicting the future: Big data, machine learning, and clinical medicine. N. Engl. J. Med. 375, 1216–1219. https://doi.org/10.1056/NEJMp1606181 (2016).

Klug, M. et al. A gradient boosting machine learning model for predicting early mortality in the emergency department triage: Devising a nine-point triage score. J. Gen. Intern. Med. 35, 220–227. https://doi.org/10.1007/s11606-019-05512-7 (2020).

Klang, E. et al. Promoting head CT exams in the emergency department triage using a machine learning model. Neuroradiology 62, 153–160. https://doi.org/10.1007/s00234-019-02293-y (2020).

Meystre, S. M., Savova, G. K., Kipper-Schuler, K. C. & Hurdle, J. F. Extracting information from textual documents in the electronic health record: A review of recent research. Yearb. Med. Inf. 1, 128–144 (2008).

Jensen, K. et al. Analysis of free text in electronic health records for identification of cancer patient trajectories. Sci. Rep. 7, 46226. https://doi.org/10.1038/srep46226 (2017).

Kreimeyer, K. et al. Natural language processing systems for capturing and standardizing unstructured clinical information: A systematic review. J. Biomed. Inform. 73, 14–29. https://doi.org/10.1016/j.jbi.2017.07.012 (2017).

Kehl, K. L. et al. Assessment of deep natural language processing in ascertaining oncologic outcomes from radiology reports. JAMA Oncol. https://doi.org/10.1001/jamaoncol.2019.1800 (2019).

Yim, W. W., Yetisgen, M., Harris, W. P. & Kwan, S. W. Natural language processing in oncology: A review. JAMA Oncol. 2, 797–804. https://doi.org/10.1001/jamaoncol.2016.0213 (2016).

Hong, W. S., Haimovich, A. D. & Taylor, R. A. Predicting hospital admission at emergency department triage using machine learning. PLoS ONE 13, e0201016. https://doi.org/10.1371/journal.pone.0201016 (2018).

Lee, S. Y., Chinnam, R. B., Dalkiran, E., Krupp, S. & Nauss, M. Prediction of emergency department patient disposition decision for proactive resource allocation for admission. Health Care Manag. Sci. https://doi.org/10.1007/s10729-019-09496-y (2019).

Raita, Y. et al. Emergency department triage prediction of clinical outcomes using machine learning models. Crit Care 23, 64. https://doi.org/10.1186/s13054-019-2351-7 (2019).

Kong, G. et al. Current state of trauma care in China, tools to predict death and ICU admission after arrival to hospital. Injury 46, 1784–1789. https://doi.org/10.1016/j.injury.2015.06.002 (2015).

Sun, Y., Heng, B. H., Tay, S. Y. & Seow, E. Predicting hospital admissions at emergency department triage using routine administrative data. Acad. Emerg. Med. 18, 844–850. https://doi.org/10.1111/j.1553-2712.2011.01125.x (2011).

Barak-Corren, Y., Israelit, S. H. & Reis, B. Y. Progressive prediction of hospitalisation in the emergency department: Uncovering hidden patterns to improve patient flow. Emerg. Med. J. 34, 308–314. https://doi.org/10.1136/emermed-2014-203819 (2017).

Dinh, M. M. et al. The Sydney Triage to Admission Risk Tool (START) to predict Emergency Department Disposition: A derivation and internal validation study using retrospective state-wide data from New South Wales Australia. BMC Emerg. Med. 16, 46. https://doi.org/10.1186/s12873-016-0111-4 (2016).

Desautels, T. et al. Prediction of early unplanned intensive care unit readmission in a UK tertiary care hospital: A cross-sectional machine learning approach. BMJ Open 7, e017199. https://doi.org/10.1136/bmjopen-2017-017199 (2017).

Choi, S. W., Ko, T., Hong, K. J. & Kim, K. H. Machine learning-based prediction of Korean triage and acuity scale level in emergency department patients. Healthc. Inform. Res. 25, 305–312. https://doi.org/10.4258/hir.2019.25.4.305 (2019).

Sterling, N. W., Patzer, R. E., Di, M. & Schrager, J. D. Prediction of emergency department patient disposition based on natural language processing of triage notes. Int. J. Med. Inform. 129, 184–188. https://doi.org/10.1016/j.ijmedinf.2019.06.008 (2019).

Lucini, F. R. et al. Text mining approach to predict hospital admissions using early medical records from the emergency department. Int. J. Med. Inform. 100, 1–8. https://doi.org/10.1016/j.ijmedinf.2017.01.001 (2017).

Zhang, X. et al. Prediction of emergency department hospital admission based on natural language processing and neural networks. Methods Inf. Med. 56, 377–389. https://doi.org/10.3414/me17-01-0024 (2017).

Gilboy, N., Tanabe, T., Travers, D. & Rosenau, A. M. Emergency Severity Index (ESI): A Triage Tool for Emergency Department Care, Version 4. Implementation Handbook 2012 Edition. Publication No. 12–0014. (Agency for Healthcare Research and Quality., Rockville, MD).

Chen, T. & Guestrin, C. in Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining 785–794 (Association for Computing Machinery, San Francisco, California, USA, 2016).

Shannon, C. E. A mathematical theory of communication. Bell Syst. Tech. J. 27, 379–423. https://doi.org/10.1002/j.1538-7305.1948.tb01338.x (1948).

Steuer, R., Kurths, J., Daub, C. O., Weise, J. & Selbig, J. The mutual information: detecting and evaluating dependencies between variables. Bioinformatics (Oxford, England) 18(Suppl 2), S231-240. https://doi.org/10.1093/bioinformatics/18.suppl_2.s231 (2002).

Kinney, J. B. & Atwal, G. S. Equitability, mutual information, and the maximal information coefficient. Proc. Natl. Acad. Sci. USA 111, 3354–3359. https://doi.org/10.1073/pnas.1309933111 (2014).

Moheet, A. M. et al. Standards for neurologic critical care units: A statement for healthcare professionals from the neurocritical care society. Neurocrit. Care 29, 145–160. https://doi.org/10.1007/s12028-018-0601-1 (2018).

Wei, D. et al. Mobile interventional stroke teams lead to faster treatment times for thrombectomy in large vessel occlusion. Stroke 48, 3295–3300. https://doi.org/10.1161/STROKEAHA.117.018149 (2017).

Morey, J. R. et al. Major causes for not performing endovascular therapy following inter-hospital transfer in a complex urban setting. Cerebrovasc. Dis. 1, 1–6. https://doi.org/10.1159/000503716 (2019).

Higashida, R. et al. Interactions within stroke systems of care: A policy statement from the American Heart Association/American Stroke Association. Stroke 44, 2961–2984. https://doi.org/10.1161/STR.0b013e3182a6d2b2 (2013).

Author information

Authors and Affiliations

Contributions

All authors reviewed and approved the manuscript. B.R.K. drafted the manuscript, interpreted the data, and substantively revised the manuscript. E.K. acquired, analyzed and interpreted the data, and substantively revised the manuscript. N.S.D. conceived the study and substantively revised the manuscript. A.Z. prepared Figs. 1, 2, 3 and 4 and substantively revised the manuscript. P.T., M.A.K., and I.C. substantively revised the manuscript. A.B.C. acquired the data and substantively revised the manuscript. M.A.L. and E.K.O conceived the study, interpreted the data, and substantively revised the manuscript.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher's note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary Information

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Klang, E., Kummer, B.R., Dangayach, N.S. et al. Predicting adult neuroscience intensive care unit admission from emergency department triage using a retrospective, tabular-free text machine learning approach. Sci Rep 11, 1381 (2021). https://doi.org/10.1038/s41598-021-80985-3

Received:

Accepted:

Published:

DOI: https://doi.org/10.1038/s41598-021-80985-3

This article is cited by

-

An ensemble model for predicting dispositions of emergency department patients

BMC Medical Informatics and Decision Making (2024)

-

Application of artificial intelligence technology in the field of orthopedics: a narrative review

Artificial Intelligence Review (2024)

Comments

By submitting a comment you agree to abide by our Terms and Community Guidelines. If you find something abusive or that does not comply with our terms or guidelines please flag it as inappropriate.