Abstract

Music is organised both spectrally and temporally, determining musical structures such as musical scale, harmony, and sequential rules in chord progressions. A number of human neuroimaging studies have investigated the neural processes associated with emotional responses to music by examining the influence of musical valence (pleasantness/unpleasantness), comparing responses to music with responses to unpleasantly manipulated counterparts in which harmony and sequential rules were varied. Interactions between these alterations to harmony and sequential rules, in terms of emotional experience and the corresponding neural activity, have not been systematically studied, although such interactions are at the core of how music affects the listener. The current study investigates the interaction between such alterations in harmony and sequential rules by using data sets from two functional magnetic resonance imaging (fMRI) experiments. While replicating the previous findings, we found a significant interaction between the spectral and temporal alterations in the fronto-limbic system, including the ventromedial prefrontal cortex (vmPFC), nucleus accumbens, caudate nucleus, and putamen. We further revealed that the functional connectivity between the vmPFC and the right inferior frontal gyrus (IFG) was reduced when listening to excerpts with alterations in both domains compared to the original music. As it has been suggested that the vmPFC operates as a pivotal point that mediates between the limbic system and the frontal cortex in reward-related processing, we propose that this fronto-limbic interaction might be related to the involvement of cognitive processes in the emotional appreciation of music.

Introduction

Music has been ubiquitous in human cultures for more than 40,000 years1, presumably, at least partly, for its hedonic value2,3,4. As a form of art that uses sound as a medium, music embodies unique spectral structures (e.g. musical scale systems, tonal hierarchy, timbres of musical instruments) and temporal structures (e.g. sequential rules in the progression of chords, voice leading, motif development, dynamic rhythms, regular tempo). In the discourse on aesthetical experience of music from the perspective of cognitive neuroscience, the affective influence of harmony (either consonant or dissonant) was suggested to render sensory pleasantness, or “core liking5,6”, whereas temporal structures of music, or sequential rules, were proposed to rather be evaluated via “cognitive processing”, which extracts rules from a sequence of sound and creates expectancy.

Dissonant harmony was associated with decreased functional signal (e.g. regional cerebral blood flow [rCBF] or blood oxygen level dependent [BOLD] signal) in the temporal and frontal cortices including the bilateral superior temporal gyrus (STG), frontal opercula, and insulae7,8,9,10,11. A violation of expectancy based on a sequential rule evoked synchronised neural responses in the inferior frontal gyrus (IFG) mostly in the right hemisphere12,13,14,15,16. Furthermore, scrambling the temporal order of musical excerpts resulted in decreased BOLD signal in the ventral striatum and the orbitofrontal cortex17, which seems to support the notion that a major underlying mechanism of music-induced pleasure is based on tension that is built up by violation of expectation and its prolonged fulfilment18,19.

While a number of previous studies used disruption in either a spectral or temporal structure to create unpleasant counterparts to investigate music-induced emotions, the interaction between these musical structures remains largely unknown. If aesthetical appreciation of music were indeed a holistic process as has been suggested6, one would expect that the processing of both structures is closely related and that disrupting the processing of one would disrupt the processing of the other. In other words, a disruption of spectral structure, for example, would alter neural responses differently depending on the temporal structure. As suggested in previous publications3,20, it is probable that these spectral and temporal structures of music are integrated in association cortices (i.e. ventromedial prefrontal cortex [vmPFC] or IFG) corresponding to a type of “higher-order” emotional processing distinct from a more “lower-order” auditory signal processing along the auditory pathway (e.g. inferior colliculus or STG). To test this conjecture, we investigated an interaction between spectral and temporal structures in music using functional magnetic resonance imaging (fMRI) data from two human experiments that differed in MR sequences and thus provided complementary views of brain activity related to music perception. We were especially interested in areas that showed interaction effects in integrating spectral and temporal dimensions in music.

Materials and Methods

Stimuli

Musical excerpts were extracted from instrumental music from the last four centuries that is or was popular with general audiences. Musical styles included classical (e.g. J. S. Bach), swing (e.g. Benny Goodman), and tango (e.g. Francisco Canaro) as used in previous studies11,21 (see Supplementary Table S1 for a full list of excerpts). The musical excerpts were manipulated in the spectral structure (i.e. harmony) and the temporal structure (i.e. play direction), resulting in an orthogonal 2 × 2 factorial design. To alter the spectral structure, the original excerpt was transposed two semitones up (i.e. major second) and six semitones down (i.e. diminished fifth), and the transposed versions were subsequently mixed together, resulting in added dissonant intervals throughout the excerpts that affect local harmony and tonal context. To alter the temporal structure, the excerpt was played backward. This resulted in changes in musical timbre, locally, and direction of chord progressions, more globally. All stimuli across conditions were controlled for loudness by equalizing the root-mean-square of the waveforms.
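The interval arithmetic and the two simpler signal operations described above can be sketched as follows. This is a minimal illustration in plain Python, not the stimulus-generation code used in the study: the function names and the toy waveform are hypothetical, equal temperament is assumed for the semitone ratios, and the pitch-shifting step itself is omitted because the text does not specify how it was performed.

```python
import math

def semitone_ratio(n_semitones):
    """Frequency ratio for a shift of n semitones (12-tone equal temperament assumed)."""
    return 2.0 ** (n_semitones / 12.0)

def reverse(samples):
    """Play an excerpt backward by reversing the sample order."""
    return samples[::-1]

def rms(samples):
    """Root-mean-square amplitude of a waveform."""
    return math.sqrt(sum(x * x for x in samples) / len(samples))

def match_rms(samples, target_rms):
    """Scale a waveform so that its RMS equals target_rms."""
    gain = target_rms / rms(samples)
    return [x * gain for x in samples]

# Frequency ratios of the two transposed copies relative to the original pitch.
up_two = semitone_ratio(2)      # two semitones up
down_six = semitone_ratio(-6)   # six semitones down

# Hypothetical toy waveform: 1 s of a 440 Hz tone sampled at 8 kHz.
fs = 8000
tone = [math.sin(2 * math.pi * 440 * n / fs) for n in range(fs)]

# Backward version, loudness-matched to the forward version via RMS.
backward = match_rms(reverse(tone), rms(tone))
```

Reversal leaves the RMS unchanged, so the loudness-matching step matters mainly for the mixed (dissonant) versions, where summing two transposed copies changes the overall amplitude.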

It is important to note that these physical manipulations were orthogonal in the sense that the change of spectral content (or temporal order) does not alter temporal order (or spectral content), but the results of manipulations are not musically independent. For example, the sequential rule of chord progression (e.g. frequent use of a tonic [the first chord of a diatonic scale] chord after a dominant [the fifth chord of a diatonic scale] at the end of a musical phrase) is based on tonal context (because it defines a tonic function of a triad [i.e. a major triad can be either a tonic, subdominant, or dominant of a major key]) as well as local harmony (because it forms simultaneous tones as a chord). Therefore, when the spectral manipulation makes the chords dissonant, expectation based on tonal context can be weakened, which would make the effect of reversal less salient.

It is also noteworthy that these manipulations do not “completely” abolish musical organisation but proportionally degrade it. For illustration, spectrograms of four versions of a representative musical excerpt by J. S. Bach, played by Glenn Gould, are shown in Fig. 1 (download Supplementary File S1 for an audio file of the example). The conditions are labelled as “forward-consonant” (FC), “backward-consonant” (BC), “forward-dissonant” (FD), “backward-dissonant” (BD) for the play direction and the dissonance level. In general, the “dissonant” conditions (Fig. 1c,d) show more unresolved spectral components (thus perceived as dissonant) compared to “consonant” conditions (Fig. 1a,b). However, it is clear that the local spectral density in the dissonant excerpts fluctuates proportionally to the original harmony. Note that the condition labels (“consonant” and “dissonant”) are only relative descriptions; the original excerpts included both consonant and dissonant chords but the excerpts in “dissonant” conditions always had dissonant chords.

Magnetic resonance imaging sequences

Functional neuroimaging data were adopted from two of our fMRI experiments, where the same stimuli but different MR sequences were used, thus providing us with complementary views of the brain activities (see Table 1 for an overview of the datasets). The first data set (“Experiment I”) has not been published elsewhere. The second data set (“Experiment II”) was used in two of our previous papers that revealed certain aspects of music perception that are different from the focus of the current study7,10; both studies addressed an investigation of the temporal dynamics of the ventral striatum, with respect to response to pleasant music7 and inter-subject correlation between the inferior colliculus response and subjective disliking of dissonant harmony10. The key difference between the two experiments was a silent delay between acquisition of fMRI volumes. In Experiment I, an fMRI volume was taken every 12 s (2 s to take one volume), allowing a silent period of 10 s without acoustic scanner noise, which was used to present auditory stimuli in the absence of acoustic noise from the MR sequence. This kind of MR sequence is known as “(temporally) sparse” scanning22. In Experiment II, fMRI volumes were “continuously” taken every second. Thus, in this experiment, subjects listened to musical stimuli in the presence of acoustic scanner noise.

Contamination of auditory stimuli by the acoustic noise of the MRI sequence is a serious problem in auditory fMRI experiments. In principle, given the delay of canonical hemodynamic function, acoustic contamination would be minimal with a non-scanning interval of 8 s, particularly when studying the primary auditory cortex23. However, this is an inefficient way to acquire fMRI data in terms of the number of volumes per given experiment time. Shortening the duration of a silent delay may increase statistical power by the increased number of samples (i.e. volumes), although it may also increase the interference by the acoustic scanner noise. A technical report study systematically compared sparse, alternating, and continuous sampling and reported non-linear alteration of auditory processing due to the presence of scanning noise24. Moreover, there is evidence that the effect of scanner noise is not limited to the primary auditory cortex but also to non-primary auditory cortices when processing spoken language25.

While the acoustic scanner noise is an issue that is not to be dealt with lightly, recent studies demonstrated that tonotopy experiments using continuous scanning can be successful with phase-encoded stimuli (i.e. frequency sweeping) and modern sound delivery systems26,27. Moreover, fMRI data at a higher temporal resolution enable us to investigate dynamic aspects of brain responses, particularly to dynamically evolving stimuli such as music. In our previous report of Experiment II28, we showed that continuous scanning yielded results comparable to those of sparse scanning: a number of structures, including the cortical limbic areas and striatal regions, were involved in emotional appreciation. Thus, we aimed to use the advantages of both MR sequences: (1) a precise localisation of involved brain regions without scanner noise using sparse sampling data (i.e. Experiment I) and (2) investigation of temporal dynamics and functional connectivity of the identified brain regions using continuous sampling data (i.e. Experiment II).

In Experiment I, twenty-four axial slices of echo planar imaging (EPI) that cover the whole brain were acquired with an in-plane resolution of 3 × 3 mm², a thickness of 4 mm, and an inter-slice gap of 1 mm, resulting in a resolution of 3 × 3 × 5 mm³. Functional and T1-weighted images (1 × 1 × 1 mm³) were obtained using a 3-T Magnetom Tim Trio scanner (Siemens, Erlangen, Germany).

In Experiment II, fifteen axial slices of EPI that cover the ventral half of the brain were acquired with an in-plane resolution of 2.5 × 2.5 mm², a thickness of 4 mm, and an inter-slice gap of 0.5 mm, resulting in a resolution of 2.5 × 2.5 × 4.5 mm³. EPI images were acquired using a 3-T MedSpec 30/100 scanner (Bruker, Ettlingen, Germany) and a birdcage head coil, and T1-weighted images at unit-mm isotropic resolution were acquired using a 3-T Magnetom Tim Trio scanner (Siemens, Erlangen, Germany).

Experimental paradigms

Experiment paradigms of the two experiments are depicted in Fig. 2.

Schematic drawings depicting experimental paradigms of Experiment I (a) and Experiment II (b). Blue rectangles indicate acquisition of one fMRI volume. Grey shades with musical notations indicate presentation of musical stimuli. White rectangles with a pictogram of a hand pressing a button indicate the response time-window. Note that the duration of stimulus presentation and silent delay varied across trials in Experiment I (a).

In Experiment I, one trial consisted of a silent period without scanning for 10 s and a scanning period for 2 s. The musical excerpts were presented during the silent period at pseudorandom timing (from 3.6 to 10 s with a step of 0.7 s before the acquisition of each volume) to sample different phases of the hemodynamic response to musical excerpts (namely, event-related design). Participants were instructed to press a button to rate subjective pleasantness of each excerpt (1 = very unpleasant, 2 = unpleasant, 3 = pleasant, 4 = very pleasant) during the 2-s scanning period. Twenty-five instrumental tunes were used to create 4 versions (FC, FD, BC, BD) and played twice, resulting in 25 × 4 × 2 = 200 trials.

In Experiment II, one trial consisted of a 30-s period for presentation of musical excerpts and a 6-s period for subjective rating of the presented excerpts (36 s in total). Acoustic scanner noise was present throughout the whole experiment. Participants were instructed to press a button to rate subjective unpleasantness (1 = very pleasant, 2 = pleasant, 3 = unpleasant, 4 = very unpleasant) of each excerpt during the final 6-s period. Twenty instrumental tunes were played only once in each of the 4 versions, resulting in 20 × 4 = 80 trials.
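The trade-off between the two paradigms can be made concrete with a rough calculation from the trial counts and trial lengths stated above. The sketch below is illustrative only: the helper `nominal_run` is hypothetical, and the totals are nominal values that ignore any breaks, dummy scans, or session splits, none of which are described in the text. The figure of 36 volumes per trial in Experiment II assumes one volume per second across the whole 36-s trial.

```python
def nominal_run(n_trials, trial_s, volumes_per_trial):
    """Nominal totals for one experiment, ignoring breaks and dummy scans."""
    total_s = n_trials * trial_s
    return {
        "trials": n_trials,
        "minutes": total_s / 60,
        "volumes": n_trials * volumes_per_trial,
    }

# Experiment I: 12-s trials (10 s silent + 2 s acquisition), one volume per trial.
exp1 = nominal_run(n_trials=25 * 4 * 2, trial_s=12, volumes_per_trial=1)

# Experiment II: 36-s trials scanned continuously at one volume per second.
exp2 = nominal_run(n_trials=20 * 4, trial_s=36, volumes_per_trial=36)
```

Under these assumptions, the sparse design yields one volume per trial, whereas the continuous design yields 36 volumes per trial at the cost of scanner noise during listening.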

Participants

Overall, 39 healthy volunteers participated in one of the two experiments (n = 16, 14 females, mean age 25.8 ± 2.8 years in Experiment I; n = 23, 13 females, mean age 25.9 ± 2.9 years in Experiment II). The studies were conducted strictly following guidelines approved by the Ethics Committee of the University of Leipzig. Informed written consent was obtained before the fMRI experiments. One participant in Experiment II was studying for a Bachelor’s degree in music, and the other participants were students or professionals in non-musical fields. Some participants (four in Experiment I, sixteen in Experiment II) reported experience in playing musical instruments. However, the differences in gender proportion, age, and musical experience were not statistically significant between the datasets (gender: Z = 0.29, p = 0.77; age: T(37) = −0.48, p = 0.64; musical experience: Z = 1.59, p = 0.11).

Image processing

Using SPM12 (v6225; Wellcome Trust Centre for Neuroimaging, University College London, London, UK) and MATLAB (v8.6, R2015b; MathWorks, Natick, Massachusetts, USA), anatomical and functional images were processed, including unwarping and realignment, unified segmentation, spatial normalisation, and spatial smoothing. Because of the different TRs, slice-timing correction was done only for Experiment II. Also, for the same reason, we used 6 rigid body motion parameters and the lengths (i.e. L2-norms) of the temporal derivatives of translation and rotation, respectively (i.e. 8 regressors in total), to regress out head movement artefacts in Experiment II. We resampled the functional data at isotropic resolutions that are close to the original resolutions29: 3-mm isotropic resolution for Experiment I; 2.5-mm for Experiment II. Different smoothing kernel sizes (full width at half maximum [FWHM] of 5 mm for Experiment I; 4 mm for Experiment II) were chosen to approximately match the effective smoothness of the two data sets at an isotropic FWHM of 8 mm. See Table 1 for detailed parameters.
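The construction of the 8 head-motion regressors can be sketched as below. This is one plausible reading of the description rather than the authors' code: the function name `motion_regressors` is hypothetical, the temporal derivative is approximated by a backward difference with the first volume set to zero, and the input is assumed to be one row of six realignment parameters (three translations, then three rotations) per volume.

```python
import math

def motion_regressors(params):
    """Build 8 nuisance regressors per volume.

    params: list of rows [tx, ty, tz, rx, ry, rz], one row per volume.
    Returns rows of 8 values: the 6 rigid-body parameters followed by the
    L2-norm of the temporal derivative of translation and of rotation.
    """
    out = []
    prev = params[0]
    for row in params:
        # Backward difference; all zeros for the first volume (assumption).
        diff = [a - b for a, b in zip(row, prev)]
        d_trans = math.sqrt(sum(d * d for d in diff[:3]))
        d_rot = math.sqrt(sum(d * d for d in diff[3:]))
        out.append(list(row) + [d_trans, d_rot])
        prev = row
    return out

# Hypothetical two-volume example: a 3-4-0 translation step gives a norm of 5.
example = motion_regressors([
    [0.0, 0.0, 0.0, 0.0, 0.0, 0.0],
    [3.0, 4.0, 0.0, 0.0, 0.0, 0.1],
])
```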

Functional activation analysis

A subject-level autoregressive general linear model (GLM) was carried out by encoding onsets and durations of four conditions (i.e. FC, FD, BC, and BD) for both data sets. Effects of conditions were estimated after adjustment for non-sphericity of the functional data using SPM12. Although the TR of Experiment I was very long (i.e. 12 s), because the images were obtained at various post-stimulus-onset times (from 3.6 to 10 s; namely event-related design), modelling hemodynamics in Experiment I is a valid approach. Because we previously reported that the ventral striatal response attenuated over time in Experiment II7, we modelled a 30-s condition into three 10-s segments and used the first segment to compute contrasts to match Experiment I. High-pass filter cut-off was 1/128 Hz for both experiments.
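The segmentation of each 30-s presentation in Experiment II into three 10-s segments can be illustrated as follows. The helper `split_block` is hypothetical, and the example assumes that each excerpt starts at the onset of its 36-s trial, which the text does not state explicitly.

```python
def split_block(onset_s, duration_s=30.0, n_segments=3):
    """Split one stimulus block into equal segments as (onset, duration) pairs."""
    seg = duration_s / n_segments
    return [(onset_s + i * seg, seg) for i in range(n_segments)]

# Hypothetical onsets for the first three 36-s trials.
trial_length_s = 36.0
trial_onsets = [i * trial_length_s for i in range(3)]

# Only the first 10-s segment of each trial enters the activation contrasts.
first_segments = [split_block(t)[0] for t in trial_onsets]
```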

We computed multiple contrasts to test various effects: a partial effect of dissonance when excerpts were forward (FD - FC) or backward (BD - BC), a partial effect of reversal when excerpts were consonant (BC - FC) or dissonant (BD - FD), a joint effect of dissonance and reversal (BD - FC), and an interaction between the dissonance and reversal: (BD - BC) - (FD - FC).
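The contrasts listed above can be written as weight vectors over the four conditions. The sketch below is illustrative: the condition order (FC, FD, BC, BD) and the helper names are assumptions, not taken from the analysis scripts. It also shows that the interaction contrast is the same whether it is built from the two dissonance effects or from the two reversal effects.

```python
CONDITIONS = ["FC", "FD", "BC", "BD"]  # assumed column order

def contrast(plus, minus):
    """Weight vector for (plus - minus) over CONDITIONS."""
    return [int(c == plus) - int(c == minus) for c in CONDITIONS]

def subtract(a, b):
    """Element-wise difference of two weight vectors."""
    return [x - y for x, y in zip(a, b)]

dissonance_forward = contrast("FD", "FC")    # FD - FC
dissonance_backward = contrast("BD", "BC")   # BD - BC
reversal_consonant = contrast("BC", "FC")    # BC - FC
reversal_dissonant = contrast("BD", "FD")    # BD - FD
joint = contrast("BD", "FC")                 # BD - FC

# Interaction: (BD - BC) - (FD - FC)
interaction = subtract(dissonance_backward, dissonance_forward)

# The same weights result from (BD - FD) - (BC - FC).
interaction_alt = subtract(reversal_dissonant, reversal_consonant)
```

With this condition order, the interaction weights are +1 for FC and BD and −1 for FD and BC, so a positive interaction means that the two singly disrupted conditions lie below the average of the intact and doubly disrupted conditions.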

A group-level one-sample T-test was carried out on subject-level contrast images. The Gaussian assumption was tested by carrying out a Kolmogorov–Smirnov test at each voxel with false discovery rate correction. Because all corrected p-values within the brain mask were one, we used parametric inference with random field theory (RFT)30 to control the family-wise error rate (FWER) at less than 0.05, as implemented in SPM12. The cluster-forming height-threshold was 0.001, and the extent-threshold was determined by the minimal extent of a cluster with a cluster-wise p-value less than 0.05, which was approximately 640 mm³ (24 voxels in Experiment I; 44 voxels in Experiment II). As pointed out by Flandin and Friston31, a recent criticism of cluster-extent thresholding by Eklund, et al.32 was based on results with liberal thresholds in height (uncorrected p = 0.01) and extent (80 mm³; only 3 voxels at 3-mm isotropic resolution). In the replication with a stringent height-threshold (uncorrected p = 0.001) by Flandin and Friston31, the resulting FWERs were between 4 and 6%, demonstrating that cluster-wise thresholding using SPM does not critically inflate the FWER when employed carefully. Thus, we used conservative thresholds in both height and extent in the present study.
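The relation between the voxel counts and the cluster volumes quoted above follows from the resampled voxel sizes (3-mm isotropic in Experiment I; 2.5-mm isotropic in Experiment II). The short sketch below works through that arithmetic; the function names are hypothetical.

```python
def voxel_volume_mm3(edge_mm):
    """Volume of one isotropic voxel."""
    return edge_mm ** 3

def cluster_volume_mm3(n_voxels, edge_mm):
    """Volume of a cluster of n_voxels isotropic voxels."""
    return n_voxels * voxel_volume_mm3(edge_mm)

# Extent thresholds used in the present study.
exp1_extent = cluster_volume_mm3(24, 3.0)   # 24 voxels at 3 mm
exp2_extent = cluster_volume_mm3(44, 2.5)   # 44 voxels at 2.5 mm

# The liberal extent of 80 mm^3 expressed in 3-mm voxels.
liberal_voxels = 80 / voxel_volume_mm3(3.0)
```

The two thresholds come to 648 mm³ and 687.5 mm³, consistent with the stated "approximately 640 mm³", while 80 mm³ corresponds to just under three 3-mm voxels.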

Psychophysiological interaction analysis

In the functional activation analysis, the vmPFC showed similar BOLD activation to the most pleasant and the most unpleasant stimuli. Since the region showed sensitivity to disruptions in either spectral or temporal organisation of music in the current data, it is possible that what was altered was the functional connectivity of the vmPFC instead of the local activity. To test this idea, an analysis of psychophysiological interaction (PPI)33 was performed on the functional connectivity of the vmPFC using an SPM-based MATLAB toolbox for Generalized PPI34 (https://www.nitrc.org/projects/gppi). It is known that a correlation between two BOLD time series is highly sensitive to abrupt and simultaneous changes in image intensities over many voxels, unlike BOLD activation analysis. Head motions during scanning may induce such signal changes, leading to spurious correlation35. Thus, for the PPI analysis, we employed 6 “anatomical CompCor” regressors, which are eigenvariates extracted from white matter and cerebrospinal fluid voxels to model non-neural global fluctuation in BOLD time series36. Also, for reliable estimation of functional connectivity, we used the whole 30-s trial for the PPI analysis. For group-level statistical inference, RFT was again used to control the FWER at less than 0.05.
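The core idea of a PPI term, a seed time series multiplied by a psychological variable, can be sketched in a few lines. This is a deliberately simplified illustration and not the procedure of the gPPI toolbox: the function name, the toy data, and the FC-versus-BD weighting are hypothetical, and the sketch forms the product directly on the measured signal, whereas actual PPI implementations typically form it on an estimate of the underlying neural signal and then re-convolve it with a hemodynamic response function.

```python
from statistics import mean

def ppi_regressor(seed, labels, weights):
    """Product of the mean-centred seed series and a psychological vector.

    seed:    seed-region signal, one value per volume
    labels:  condition label per volume (e.g. "FC", "BD")
    weights: mapping from condition label to psychological weight;
             conditions not listed receive a weight of 0
    """
    seed_mean = mean(seed)
    centred = [s - seed_mean for s in seed]
    psych = [weights.get(label, 0.0) for label in labels]
    return [s * p for s, p in zip(centred, psych)]

# Hypothetical toy data: six volumes with their condition labels.
seed = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
labels = ["FC", "FC", "FD", "BD", "BD", "BC"]

# Psychological variable contrasting FC (+1) with BD (-1).
ppi = ppi_regressor(seed, labels, {"FC": 1.0, "BD": -1.0})
```

In a regression of a target voxel's time series on the seed series, the psychological vector, and this product term, the coefficient of the product term indicates how the seed-target coupling differs between the two conditions.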

Results

Experiment I

Partial effects of disrupted musical structures

We found decreases in the BOLD signal due to the dissonance when the excerpts were played forwards (i.e. FD – FC; Fig. 3a) in a number of brain regions, including the bilateral superior temporal gyri (STGs) and planum temporale (PT), the right planum polare (PP), the left amygdala and nucleus accumbens (NAc), the bilateral putamina (Ptm) and globus pallidi (GPs), and the medial parts of thalami (significant clusters are listed in Table 2). We also found a positive effect in the right superior frontal gyrus (SFG). Interestingly, the partial effect of dissonance when the excerpts were played backwards (i.e. BD - BC; Fig. 3b) was only significant in the auditory cortices (i.e. decreased BOLD signal in the bilateral STGs and the right PP), but not in the limbic areas (i.e. NAc, Ptm, or GPs).

T-statistic maps (degrees of freedom of 15) of the partial effect of dissonance when forward (a) or backward (b), the partial effect of reversal when consonant (c) or dissonant (d), and the joint effect of disruption in both domains (e) in Experiment I. Zoomed views are given with contours of subcortical structures in white. Abbreviations: E1, Experiment I; Diss/D, dissonance; F, forward; Back/B, backward, C, consonant; Ptm, putamen; NAc, nucleus accumbens; GP, globus pallidus; CdN, caudate nucleus; Thal, thalamus.

The reversal of play direction when the excerpts were consonant (i.e. BC - FC; Fig. 3c) was associated with decreases in the BOLD signal in the bilateral Ptm, GP, and anterior cingulate cortex (ACC). We also found an increase in the right SFG similar to the partial effect of dissonance when played forwards. Similar to the analysis above, the partial effect of reversal when dissonant (i.e. BD - FD; Fig. 3d) was different from that when consonant. For the contrast BD – FD, we found decreases in the BOLD signal in the right PT and the right lateral orbital frontal cortex (OFC), but no change in the BOLD signal in the auditory cortices (i.e. STG, PT, and PP). We also found increases in the BOLD signal in a number of cortical regions, including the bilateral angular gyri, right ACC, left middle frontal gyrus (MFG), and the left frontal pole (FP). See Fig. S1 for all slices over the whole brain.

The joint effect of disruptions in spectral and temporal domains (i.e. BD - FC; Fig. 3e) was found as decreased BOLD signals in the bilateral STGs, the left GP, the anterior part of the left amygdala, and the medial parts of the bilateral thalami. Compared to the partial effects of either dissonance or reversal alone (Fig. 3a,c), the joint effect was weaker (i.e. less decrease in the BOLD signal) in the limbic system (i.e. NAc, Ptm, GP) and stronger (i.e., more decrease) in the auditory cortices.

Importantly, we found that the partial effect of one domain was dependent on the other domain. By definition, this implies an interaction between the two domains. We further quantitatively tested this observation in the following section.

Effect of interaction between disrupted musical structures in spectral vs. temporal domains

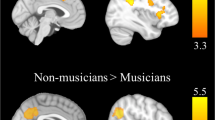

We tested the interaction by subtracting the partial effect of dissonance when played backwards from that when played forwards; that is, (BD - BC) - (FD - FC). This is equivalent to the subtraction of the partial effect of reversal when dissonant from that when consonant because (BD - BC) - (FD - FC) = (BD - FD) - (BC - FC). We found a positive interaction in the ventromedial prefrontal cortex (vmPFC), ACC, and the subcortical limbic system including the NAc, the GPs, and thalami (Fig. 4a). This confirmed that the partial effect of a disruption in one domain was nullified when the other domain was disrupted in the cortical and subcortical limbic areas. In other words, a disruption in addition to a stimulus already with another disruption did not produce a further decrease in BOLD activation in the fronto-limbic areas. This was not the case in auditory regions.

T-statistic maps (degrees of freedom of 15) for the interaction between dissonance and reversal (a) and boxplots showing effect sizes (i.e. beta coefficients) of four conditions averaged within clusters in the ventromedial prefrontal cortex (b), the left nucleus accumbens and caudate nucleus (c), and the right nucleus accumbens and caudate nucleus (d). Abbreviations: E1, Experiment I; DxB, interaction between dissonance and backward; vmPFC, ventromedial prefrontal cortex; NAc, nucleus accumbens; Ptm, putamen; CdN, caudate nucleus; Thal, thalamus; Fwd, forward; Bwd, backward; Cons., consonant; Diss., dissonant.

To illustrate the GLM result in terms of effect size, beta coefficients averaged within each significant cluster are plotted in Fig. 4b–d. Interestingly, the positive interaction was so strong that the signs of marginal effects were flipped in the vmPFC and the bilateral striata. Indeed, the beta coefficients of the FC and BD conditions were not significantly different in any of the three clusters (max T(15) = 1.01; p > 0.10), which is surprising given the sensitivity of these regions to disrupted musical structures and the widely different acoustics and related emotional valences of the conditions. We address this issue later in the PPI analysis.

Experiment II

Replication of the functional activation analysis

We analysed the Experiment II data set using the same processing pipeline except for the temporal processing (i.e. slice-timing correction and head motion covariates). The results from Experiment II were in good agreement with Experiment I, as shown in Fig. 5 and Table 3 (see Fig. S2 for all slices). Specifically, we observed (1) deactivation in the bilateral STGs, vmPFC, and GPs due to dissonance alone (Fig. 5a,h), (2) selective deactivation only in the limbic area but not in the STGs due to reversal alone (Fig. 5c,j), (3) deactivation in the STGs due to joint disruption (Fig. 5e,j), and (4) the positive interaction in the vmPFC (Fig. 5f,m). There were also noticeable differences between the experiments. However, significant differences between the experiments were mainly found in the auditory cortices (i.e., HG and PT), presumably related to the acoustic noise from gradient coils during continuous scanning, but not the left NAc in the interaction (see Fig. S3 for all slices).

Psychophysiological interaction

In the vmPFC, the effect size (i.e. beta coefficient) of the FC condition was not significantly different from that of the BD condition in Experiment I (T(15) = 1.01; p > 0.10) or Experiment II (T(22) = 1.22, p = 0.24). As mentioned earlier, this may look incongruent because the two conditions were widely different in terms of acoustics and subjective rating. It may look more puzzling given that the vmPFC showed a strong decrease in BOLD activation due to the disruption of either a spectral or temporal structure. However, it is known that the vmPFC is engaged in a wide variety of cognitive sub-processes37. Recent studies demonstrated reconfiguration of functional networks of the vmPFC due to external inputs38,39 and the level of arousal40. Given that, we suspected that the vmPFC might work similarly in terms of the activation level during the FC and BD conditions but as a part of different functional networks. To test this idea, we analysed PPI between the vmPFC time series and the contrast between FC and BD on the Experiment II data, which were acquired continuously.

The results of the PPI analysis are shown in Fig. 6. The physiological factor (i.e. correlation with the vmPFC time series) was found in extensive areas of the frontal cortices, temporal cortices, and subcortical structures (Fig. 6a). This functional connectivity of the vmPFC was reduced by the BD condition compared to the FC condition in the right IFG and FP (Fig. 6b; see Table 3g for statistics), supporting our conjecture on the vmPFC.

Behavioural measures

In correspondence to the observed changes in BOLD signal, we also found significant effects of disruptions and their interactions in the pleasantness ratings during scanning, as shown in Fig. 7 and Table 4. Notably, most participants rated the partially disrupted conditions (i.e. FD and BC) as “unpleasant” (mean rating of 2) without any particular preference for either (min p = 0.270). The interaction was also positive, but unlike the BOLD signal, the direction of effect was not changed so that the jointly disrupted condition (i.e. BD) was not more preferred than the partially disrupted conditions (i.e. FD or BC).

Since it was confirmed that the interaction between spectral and temporal structures is significant in terms of both BOLD signal and behavioural response, we further explored whether there is a correlation between individual differences in neural and behavioural effects, as recently demonstrated in our previous study10. In other words, we examined whether individuals who showed a small (or large) effect of the interaction in the pleasantness ratings also exhibited a small (or large) effect of the interaction in the BOLD signal. We tested the inter-subject correlation between contrast coefficients of the BOLD signal and the behavioural response for the interaction, but no significant correlation was found in either experiment (min p = 0.676).

Discussion

In the current study, we found decreased BOLD signal in auditory and limbic systems (i.e. the bilateral STGs, vmPFC, NAc, Ptm, GP, amygdala, and thalami) due to partial disruption of both spectral and temporal organisation, which corresponded to decreased subjective ratings of pleasantness. These anatomical structures have been reported to be involved in the processing of music-induced emotions in previous studies3,8,9,11,20,41,42,43,44,45. As we hypothesised, we found a significant, positive interaction between the disruptions in the temporal and spectral organisation of the music, which was localised in the fronto-limbic system (i.e. vmPFC, NAc, CdN, Ptm). Furthermore, we found a significant modulation of functional connectivity of the vmPFC by the combined disruption of the temporal and spectral organisation. Major findings were consistently observed in both fMRI data sets. In the following sections, we will discuss the relevance of our findings to neural mechanisms that contribute to the aesthetic appreciation of music.

Partial effect of alteration of spectral and temporal structures

Dissonance is very often associated with unpleasant emotions not only by those who are familiar with Western music but also by infants46,47, people from an autochthonous African ethnic group with no prior exposure to polyphonic Western music21, in a documented case a non-human primate (i.e. a chimpanzee)48, and even chickens49. This suggests that an association between harmony and emotional valence is to some degree universal and innate, presumably related to the physical properties of tonal sounds and the network characteristics of the low-level auditory stream; for instance, encoding of beating and sensory consonance/dissonance by the neurons in the inferior colliculus50. An fMRI study51 revealed that certain acoustic information of aversive sound goes from the auditory cortex to the amygdala instead of directly from thalamic inputs, supporting the notion that certain complex aversive sounds need to be analysed at the cortical level to induce negative emotional responses. A similar pathway was implicated by an intracranial recording of an epileptic patient, showing a cascade of information of dissonant harmony from the auditory cortex to the orbitofrontal cortex, ACC, and amygdala52, supporting the view that certain aversive acoustic information reaches the amygdala via the auditory cortex, presumably followed by feedback from the amygdala to the auditory cortex. We believe our finding of decreased BOLD activation in the auditory cortex and the limbic system also reflects such a communication between the auditory cortex and the limbic system that is related to “core liking”.

Constant alteration of a temporal structure in music seems to decrease activation in limbic areas, such as the ventral striatum, hypothalamus, and the orbitofrontal cortex17, unlike a focal alteration that evokes a prediction error response in the IFG20. We also found reliable decreases in the bilateral putamina, which are known to be sensitive to emotional and motivational information53 and have been extensively studied in the context of decision-making54. It was theorised that the dorsal striatum (including the Ptm) contributes to action selection in the context of decision-making, whereas the ventral striatum encodes reward value and prediction error55. More relevantly, a similar distinction was reported in the context of musical pleasure: the dorsal striatum encoded anticipation of musical pleasure, whereas the ventral striatum encoded the experience of pleasure43. Therefore, the current finding of decreased BOLD signal in the Ptm in response to the reversed excerpts seems to be related to impaired reward anticipation processes.

Interaction between spectral and temporal structures of music

We found an interaction between the spectral and temporal domains in areas including the ventral and dorsal striata, vmPFC, and ACC (prominently in Experiment I), which have been consistently associated with reward processing6 and emotional appraisal56,57,58. In particular, we demonstrated that the direction of the effect of dissonance (or reversal) on the beta coefficients can be switched by the presence of reversal (or dissonance). In other words, physically identical manipulations (e.g. dissonance) can produce opposite effects in those regions depending on the context (e.g. forward or backward).

However, such an interaction (i.e. a change in the direction of effects) does not indicate that the disruption of the harmonic structure was perceived as more pleasant when the temporal rules were already disrupted. In fact, the behavioural ratings showed that both types of manipulation contributed to rendering the musical excerpts more unpleasant. That is, a given manipulation of a musical structure (e.g. dissonance) remained unfavourable when the other musical structure (e.g. a sequential rule) was disrupted, even though it increased the BOLD signal in the vmPFC in that case, whereas it decreased the BOLD signal in the same region when the other musical structure was intact.
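To make the notion of a sign-changing (crossover) interaction concrete, the following minimal sketch computes the simple effects of dissonance and the 2×2 interaction contrast for a single region. The four conditions are labelled FC, FD, BC and BD (forward or backward, consonant or dissonant). The beta values are hypothetical and chosen only to reproduce the sign pattern described above; they are not values from the present data, and the function names are illustrative.

```python
# Hypothetical beta estimates (arbitrary units) for one region.
# These are illustrative numbers, NOT results from the study.
betas = {"FC": 1.0, "FD": -0.5, "BC": -0.5, "BD": 1.0}


def simple_effect_dissonance(betas, direction):
    """Effect of dissonance within one temporal context ('F' or 'B')."""
    return betas[direction + "D"] - betas[direction + "C"]


def interaction(betas):
    """2x2 interaction: difference between the two simple effects.

    Algebraically equal to the contrast FC - FD - BC + BD.
    """
    forward = simple_effect_dissonance(betas, "F")
    backward = simple_effect_dissonance(betas, "B")
    return backward - forward


def is_crossover(betas):
    """True if dissonance has opposite signs in the two temporal contexts."""
    forward = simple_effect_dissonance(betas, "F")
    backward = simple_effect_dissonance(betas, "B")
    return forward * backward < 0


print("dissonance effect, forward :", simple_effect_dissonance(betas, "F"))
print("dissonance effect, backward:", simple_effect_dissonance(betas, "B"))
print("interaction contrast       :", interaction(betas))
print("crossover                  :", is_crossover(betas))
```

With these made-up numbers, dissonance lowers the signal in the forward context and raises it in the backward context, so the interaction contrast is positive even though neither simple effect says anything about how pleasant the stimulus is rated.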

One possible explanation for this might be a specific function of the vmPFC in integrating positive and negative emotions59, which was also the subject of a computational imaging study60. Both human lesion and imaging studies point towards such an emotional function of the vmPFC. For example, patients with lesions in the vmPFC showed impairment in processing negative emotions61,62. In the current study, musical excerpts in their original forms (i.e. FC) and in the most disrupted forms (i.e. BD) were rated as either very pleasant or very unpleasant, respectively, while partially disrupted forms (i.e. FD and BC) were rated as (mildly) unpleasant. That is, relatively more (or less) intense emotional valence might have been related to decreased (or increased) BOLD signal in the vmPFC. In fact, in a neuroimaging study63 in which various types of musical emotions (e.g. peacefulness, joy, sadness and so on) were used, the vmPFC showed higher BOLD signal when a certain group of emotions (of both positive and negative valence) was intensified, which also supports an integrative role of the vmPFC.

Modulated functional connectivity of the fronto-limbic network

Another important finding concerning the vmPFC was that its functional connectivity with the IFG/FP differed between the condition in which both musical structures were intact and the condition in which both were disrupted, although the BOLD activation levels were similar. We interpret this finding as suggesting that the vmPFC interacts with both emotional processing (presumably in limbic areas) and cognitive processing (presumably in the IFG/FP) related to musical structures. The following findings support this notion:

- (1) The vmPFC has been mostly found to be involved in higher-order cognitive processing of emotional information. For instance, the vmPFC was found to be necessary in reward evaluation in decision-making64,65, nullifying learned conditioning66,67, emotional judgment of affective pictures68, and intensely pleasant emotions induced by music4. It has also been suggested that the vmPFC is involved in modulating autonomic processes69, which accompany emotional responses70,71.

- (2) The right IFG has been implicated in processing a series of chords13, melodies72, or even a periodic loop of random tones73, suggesting that the IFG is highly relevant for extracting regularity from sequential auditory inputs and forming expectations74.

- (3) Anatomically, in non-human primate models, direct connections between the frontal operculum and the basoventral PFC were found using a tracing technique75, suggesting a close relationship between the vmPFC and IFG.

- (4) The functional connectivity between the vmPFC and right IFG has been implicated in studies where emotional regulation is crucial. In a human study64, the functional connectivity between the vmPFC and IFG correlated with the performance level in a self-control task that required successful inhibition of emotional responses. In another human study76, an unstable interaction between the vmPFC and IFG was found in patients with anxiety disorder. This is suggestive of the IFG delivering higher-order sensory information to the vmPFC so that it can modulate the activity of the limbic system. That is, the vmPFC seems to work as a pivotal point that mediates between the limbic system and the frontal cortex in the regulation of emotion.

Taken together, it seems plausible that the vmPFC was engaged in cognitive processes that modulate emotional responses by differentially communicating with the right IFG and FP when listening to musical excerpts with varying musical structures.
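What "condition-dependent functional connectivity" means operationally can be illustrated with a toy interaction regressor in the spirit of a psychophysiological interaction (PPI) analysis. The sketch below is a deliberately simplified assumption, not the pipeline used in this study: it multiplies a mean-centred seed signal by a contrast-coded condition vector directly at the level of the measured signal, whereas established PPI implementations usually form the product after estimating the underlying neural signal and include the seed and task regressors in the same model. The time series, the coding (+1 for original music, −1 for doubly altered music, 0 otherwise) and the function name are all hypothetical.

```python
from statistics import mean


def ppi_regressor(seed, psych):
    """Element-wise product of the mean-centred seed signal and a
    contrast-coded condition vector (simplified PPI-style term)."""
    if len(seed) != len(psych):
        raise ValueError("seed and psych must have the same length")
    centre = mean(seed)
    return [(s - centre) * p for s, p in zip(seed, psych)]


# Hypothetical seed time series (e.g. from a vmPFC region of interest).
seed = [1.0, 2.0, 3.0, 4.0, 5.0, 3.0]
# Hypothetical coding: +1 = original (FC), -1 = doubly altered (BD), 0 = other.
psych = [1, 1, 0, -1, -1, 0]

ppi = ppi_regressor(seed, psych)
print(ppi)
```

A target region whose signal loads on such a regressor, over and above the seed signal and the task effect themselves, couples differently with the seed in the two conditions; this is the sense in which connectivity can differ even when mean activation levels are similar.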

Technical limitation

It is worth noting that our manipulation in the temporal domain has some limitations. In this study, we focused on sequential order among the various temporal organisations of music; reversal was therefore one possible choice. In a study investigating phoneme encoding in EEG signals77, a similar manipulation (i.e. reversed speech) was used to disrupt the intelligibility of speech while preserving the overall acoustic structure. However, in our experiments, reversing entire waveforms also altered musical timbre, whereas it did not alter beats or the temporal intervals between notes, and it remains unclear how these factors interact with sequential order. Thus, more sophisticated temporal manipulations such as local reversal78 or a quilting algorithm79 should be considered in future studies for more precise control of temporal structures.

Conclusion

In the current study, we found a significant interaction between disruptions in the spectral and temporal structures of music in the brain activity of the fronto-limbic network. In particular, the vmPFC exhibited distinctive functional connectivity with the right IFG in response to altered spectral and temporal organisation of music. This is indicative of cognitive involvement in emotional processes, with the vmPFC as a pivotal node of a functional network mediating the integration of cognition and emotion during music listening.

Data availability

The datasets analysed in the current study are available upon reasonable request to the corresponding author.

References

Conard, N. J., Malina, M. & Munzel, S. C. New flutes document the earliest musical tradition in southwestern Germany. Nature 460, 737–740, https://doi.org/10.1038/nature08169 (2009).

Salimpoor, V. N., Benovoy, M., Longo, G., Cooperstock, J. R. & Zatorre, R. J. The rewarding aspects of music listening are related to degree of emotional arousal. PLoS One 4, e7487, https://doi.org/10.1371/journal.pone.0007487 (2009).

Zatorre, R. J. & Salimpoor, V. N. From perception to pleasure: music and its neural substrates. Proc. Natl. Acad. Sci. USA 110(Suppl 2), 10430–10437, https://doi.org/10.1073/pnas.1301228110 (2013).

Salimpoor, V. N. et al. Interactions between the nucleus accumbens and auditory cortices predict music reward value. Science 340, 216–219, https://doi.org/10.1126/science.1231059 (2013).

Brattico, E., Bogert, B. & Jacobsen, T. Toward a neural chronometry for the aesthetic experience of music. Front. Psychol. 4, 206, https://doi.org/10.3389/fpsyg.2013.00206 (2013).

Brattico, E. & Pearce, M. The neuroaesthetics of music. Psychology of Aesthetics, Creativity, and the Arts 7, 48 (2013).

Mueller, K. et al. Investigating the dynamics of the brain response to music: A central role of the ventral striatum/nucleus accumbens. Neuroimage 116, 68–79, https://doi.org/10.1016/j.neuroimage.2015.05.006 (2015).

Koelsch, S., Fritz, T., Von Cramon, D. Y., Muller, K. & Friederici, A. D. Investigating emotion with music: An fMRI study. Hum. Brain Mapp. 27, 239–250, https://doi.org/10.1002/hbm.20180 (2006).

Blood, A. J. & Zatorre, R. J. Intensely pleasurable responses to music correlate with activity in brain regions implicated in reward and emotion. Proc. Natl. Acad. Sci. USA 98, 11818–11823, https://doi.org/10.1073/pnas.191355898 (2001).

Kim, S.-G., Lepsien, J., Fritz, T. H., Mildner, T. & Mueller, K. Dissonance encoding in human inferior colliculus covaries with individual differences in dislike of dissonant music. Sci. Rep. 7, 5726, https://doi.org/10.1038/s41598-017-06105-2 (2017).

Sammler, D., Grigutsch, M., Fritz, T. & Koelsch, S. Music and emotion: electrophysiological correlates of the processing of pleasant and unpleasant music. Psychophysiology 44, 293–304 (2007).

Koelsch, S. Music‐syntactic processing and auditory memory: Similarities and differences between ERAN and MMN. Psychophysiology 46, 179–190 (2009).

Maess, B., Koelsch, S., Gunter, T. C. & Friederici, A. D. Musical syntax is processed in Broca’s area: an MEG study. Nat. Neurosci. 4, 540–545 (2001).

Koelsch, S., Fritz, T., Schulze, K., Alsop, D. & Schlaug, G. Adults and children processing music: an fMRI study. Neuroimage 25, https://doi.org/10.1016/j.neuroimage.2004.12.050 (2005).

Koelsch, S. et al. Bach speaks: a cortical “language-network” serves the processing of music. Neuroimage 17, https://doi.org/10.1006/nimg.2002.1154 (2002).

Kim, S.-G., Kim, J. S. & Chung, C. K. The effect of conditional probability of chord progression on brain response: an MEG study. PLoS One 6, e17337, https://doi.org/10.1371/journal.pone.0017337 (2011).

Menon, V. & Levitin, D. J. The rewards of music listening: response and physiological connectivity of the mesolimbic system. Neuroimage 28, 175–184, https://doi.org/10.1016/j.neuroimage.2005.05.053 (2005).

Meyer, L. B. Emotion and meaning in music. (University of Chicago Press, 1956).

Huron, D. B. Sweet anticipation: music and the psychology of expectation. (MIT Press, 2006).

Koelsch, S. Towards a neural basis of music-evoked emotions. Trends Cogn. Sci. 14, 131–137, https://doi.org/10.1016/j.tics.2010.01.002 (2010).

Fritz, T. et al. Universal recognition of three basic emotions in music. Curr. Biol. 19, 573–576 (2009).

Hall, D. A. et al. ‘Sparse’ temporal sampling in auditory fMRI. Hum. Brain Mapp. 7, doi:3.0.co;2-n (1999).

Humphries, C., Liebenthal, E. & Binder, J. R. Tonotopic organization of human auditory cortex. Neuroimage 50, 1202–1211 (2010).

Langers, D. R., Sanchez-Panchuelo, R. M., Francis, S. T., Krumbholz, K. & Hall, D. A. Neuroimaging paradigms for tonotopic mapping (II): the influence of acquisition protocol. Neuroimage 100, 663–675, https://doi.org/10.1016/j.neuroimage.2014.07.042 (2014).

Gaab, N., Gabrieli, J. D. E. & Glover, G. H. Assessing the influence of scanner background noise on auditory processing. II. An fMRI study comparing auditory processing in the absence and presence of recorded scanner noise using a sparse design. Hum. Brain Mapp. 28, 721–732, https://doi.org/10.1002/hbm.20299 (2007).

Da Costa, S. et al. Human primary auditory cortex follows the shape of Heschl’s gyrus. J. Neurosci. 31, 14067–14075, https://doi.org/10.1523/JNEUROSCI.2000-11.2011 (2011).

Da Costa, S., Saenz, M., Clarke, S. & Van Der Zwaag, W. Tonotopic gradients in human primary auditory cortex: concurring evidence from high-resolution 7 T and 3 T fMRI. Brain Topogr. 28, 66–69 (2015).

Mueller, K. et al. Investigating brain response to music: A comparison of different fMRI acquisition schemes. Neuroimage 54, 337–343, https://doi.org/10.1016/j.neuroimage.2010.08.029 (2011).

Mueller, K., Lepsien, J., Moller, H. E. & Lohmann, G. Commentary: Cluster failure: Why fMRI inferences for spatial extent have inflated false-positive rates. Front. Hum. Neurosci. 11, 345, https://doi.org/10.3389/fnhum.2017.00345 (2017).

Worsley, K. J., Taylor, J. E., Tomaiuolo, F. & Lerch, J. Unified univariate and multivariate random field theory. Neuroimage 23(Suppl 1), S189–195, https://doi.org/10.1016/j.neuroimage.2004.07.026 (2004).

Flandin, G. & Friston, K. J. Analysis of family-wise error rates in statistical parametric mapping using random field theory. ArXiv e-prints 1606, http://adsabs.harvard.edu/abs/2016arXiv160608199F (2016).

Eklund, A., Nichols, T. E. & Knutsson, H. Cluster failure: Why fMRI inferences for spatial extent have inflated false-positive rates. Proc. Natl. Acad. Sci. USA 113, 7900–7905, https://doi.org/10.1073/pnas.1602413113 (2016).

Friston, K. J. et al. Psychophysiological and modulatory interactions in neuroimaging. Neuroimage 6, 218–229, https://doi.org/10.1006/nimg.1997.0291 (1997).

McLaren, D. G., Ries, M. L., Xu, G. & Johnson, S. C. A generalized form of context-dependent psychophysiological interactions (gPPI): A comparison to standard approaches. Neuroimage 61, 1277–1286, https://doi.org/10.1016/j.neuroimage.2012.03.068 (2012).

Power, J. D., Schlaggar, B. L. & Petersen, S. E. Recent progress and outstanding issues in motion correction in resting state fMRI. Neuroimage 105, 536–551, https://doi.org/10.1016/j.neuroimage.2014.10.044 (2015).

Behzadi, Y., Restom, K., Liau, J. & Liu, T. T. A component based noise correction method (CompCor) for BOLD and perfusion based fMRI. Neuroimage 37, 90–101, https://doi.org/10.1016/j.neuroimage.2007.04.042 (2007).

Yeo, B. T. et al. Functional Specialization and Flexibility in Human Association Cortex. Cereb. Cortex 25, 3654–3672, https://doi.org/10.1093/cercor/bhu217 (2015).

Cole, M. W., Bassett, D. S., Power, J. D., Braver, T. S. & Petersen, S. E. Intrinsic and task-evoked network architectures of the human brain. Neuron 83, 238–251, https://doi.org/10.1016/j.neuron.2014.05.014 (2014).

Braun, U. et al. Dynamic reconfiguration of frontal brain networks during executive cognition in humans. Proc. Natl. Acad. Sci. USA 112, 11678–11683, https://doi.org/10.1073/pnas.1422487112 (2015).

Young, C. B. et al. Dynamic Shifts in Large-Scale Brain Network Balance As a Function of Arousal. The Journal of Neuroscience 37, 281–290, https://doi.org/10.1523/jneurosci.1759-16.2016 (2017).

Peretz, I., Aubé, W. & Armony, J. L. Towards a neurobiology of musical emotions. The evolution of emotional communication: from sounds in nonhuman mammals to speech and music in man 277 (2013).

Blood, A. J., Zatorre, R. J., Bermudez, P. & Evans, A. C. Emotional responses to pleasant and unpleasant music correlate with activity in paralimbic brain regions. Nat. Neurosci. 2, 382–387 (1999).

Salimpoor, V. N., Benovoy, M., Larcher, K., Dagher, A. & Zatorre, R. J. Anatomically distinct dopamine release during anticipation and experience of peak emotion to music. Nat. Neurosci. 14, 257–262, https://doi.org/10.1038/nn.2726 (2011).

Flores-Gutierrez, E. O. et al. Metabolic and electric brain patterns during pleasant and unpleasant emotions induced by music masterpieces. Int. J. Psychophysiol. 65, 69–84, https://doi.org/10.1016/j.ijpsycho.2007.03.004 (2007).

Green, A. C. et al. Music in minor activates limbic structures: a relationship with dissonance? Neuroreport 19, 711–715, https://doi.org/10.1097/WNR.0b013e3282fd0dd8 (2008).

Zentner, M. R. & Kagan, J. Infants’ perception of consonance and dissonance in music. Infant Behavior and Development 21, 483–492, https://doi.org/10.1016/S0163-6383(98)90021-2 (1998).

Trainor, L. J. & Heinmiller, B. M. The development of evaluative responses to music. Infant Behavior and Development 21, 77–88, https://doi.org/10.1016/S0163-6383(98)90055-8 (1998).

Sugimoto, T. et al. Preference for consonant music over dissonant music by an infant chimpanzee. Primates 51, 7–12, https://doi.org/10.1007/s10329-009-0160-3 (2010).

Chiandetti, C. & Vallortigara, G. Chicks Like Consonant Music. Psychol. Sci. 22, 1270–1273, https://doi.org/10.1177/0956797611418244 (2011).

McKinney, M., Tramo, M. & Delgutte, B. Neural correlates of musical dissonance in the inferior colliculus. Physiological and psychophysical bases of auditory function (Breebaart DJ, Houtsma AJM, Kohlrausch A, Prijs VF, Schoonhoven R, eds), 83–89 (2001).

Kumar, S., von Kriegstein, K., Friston, K. & Griffiths, T. D. Features versus feelings: dissociable representations of the acoustic features and valence of aversive sounds. J. Neurosci. 32, 14184–14192, https://doi.org/10.1523/JNEUROSCI.1759-12.2012 (2012).

Dellacherie, D. et al. The birth of musical emotion: a depth electrode case study in a human subject with epilepsy. Ann. N. Y. Acad. Sci. 1169, 336–341, https://doi.org/10.1111/j.1749-6632.2009.04870.x (2009).

Vytal, K. & Hamann, S. Neuroimaging support for discrete neural correlates of basic emotions: a voxel-based meta-analysis. J. Cogn. Neurosci. 22, 2864–2885, https://doi.org/10.1162/jocn.2009.21366 (2010).

Delgado, M. R., Locke, H. M., Stenger, V. A. & Fiez, J. A. Dorsal striatum responses to reward and punishment: effects of valence and magnitude manipulations. Cogn. Affect. Behav. Neurosci. 3, 27–38 (2003).

Balleine, B. W., Delgado, M. R. & Hikosaka, O. The role of the dorsal striatum in reward and decision-making. J. Neurosci. 27, 8161–8165, https://doi.org/10.1523/JNEUROSCI.1554-07.2007 (2007).

Mohanty, A. et al. Differential engagement of anterior cingulate cortex subdivisions for cognitive and emotional function. Psychophysiology 44, 343–351, https://doi.org/10.1111/j.1469-8986.2007.00515.x (2007).

Etkin, A., Egner, T. & Kalisch, R. Emotional processing in anterior cingulate and medial prefrontal cortex. Trends Cogn. Sci. 15, 85–93, https://doi.org/10.1016/j.tics.2010.11.004 (2011).

Bush, G., Luu, P. & Posner, M. I. Cognitive and emotional influences in anterior cingulate cortex. Trends Cogn. Sci. 4, 215–222, https://doi.org/10.1016/S1364-6613(00)01483-2 (2000).

Talmi, D. & Frith, C. Feeling right about doing right. Nature 446, 865, https://doi.org/10.1038/446865a (2007).

Basten, U., Biele, G., Heekeren, H. R. & Fiebach, C. J. How the brain integrates costs and benefits during decision making. Proceedings of the National Academy of Sciences 107, 21767–21772, https://doi.org/10.1073/pnas.0908104107 (2010).

Rolls, E. T., Hornak, J., Wade, D. & McGrath, J. Emotion-related learning in patients with social and emotional changes associated with frontal lobe damage. Journal of Neurology, Neurosurgery & Psychiatry 57, 1518–1524, https://doi.org/10.1136/jnnp.57.12.1518 (1994).

Koenigs, M. et al. Damage to the prefrontal cortex increases utilitarian moral judgements. Nature 446, 908, https://doi.org/10.1038/nature05631 (2007).

Trost, W., Ethofer, T., Zentner, M. & Vuilleumier, P. Mapping aesthetic musical emotions in the brain. Cereb. Cortex 22, 2769–2783, https://doi.org/10.1093/cercor/bhr353 (2012).

Hare, T. A., Camerer, C. F. & Rangel, A. Self-control in decision-making involves modulation of the vmPFC valuation system. Science 324, 646–648, https://doi.org/10.1126/science.1168450 (2009).

Damasio, A. R. Descartes’ error: emotion, reason, and the human brain. (Putnam, 1994).

Phelps, E. A., Delgado, M. R., Nearing, K. I. & LeDoux, J. E. Extinction learning in humans: role of the amygdala and vmPFC. Neuron 43, 897–905, https://doi.org/10.1016/j.neuron.2004.08.042 (2004).

Apergis-Schoute, A. M., Schiller, D., LeDoux, J. E. & Phelps, E. A. Extinction resistant changes in the human auditory association cortex following threat learning. Neurobiol. Learn. Mem. 113, 109–114, https://doi.org/10.1016/j.nlm.2014.01.016 (2014).

Northoff, G. et al. Reciprocal modulation and attenuation in the prefrontal cortex: an fMRI study on emotional-cognitive interaction. Hum. Brain Mapp. 21, 202–212, https://doi.org/10.1002/hbm.20002 (2004).

Hansel, A. & von Kanel, R. The ventro-medial prefrontal cortex: a major link between the autonomic nervous system, regulation of emotion, and stress reactivity? Biopsychosoc. Med. 2, 21, https://doi.org/10.1186/1751-0759-2-21 (2008).

Phillips, M. L., Drevets, W. C., Rauch, S. L. & Lane, R. Neurobiology of emotion perception I: The neural basis of normal emotion perception. Biol. Psychiatry 54, 504–514 (2003).

Critchley, H. D., Corfield, D. R., Chandler, M. P., Mathias, C. J. & Dolan, R. J. Cerebral correlates of autonomic cardiovascular arousal: a functional neuroimaging investigation in humans. J. Physiol. 523(Pt 1), 259–270 (2000).

Koelsch, S. & Jentschke, S. Differences in Electric Brain Responses to Melodies and Chords. J. Cogn. Neurosci. 22, 2251–2262, https://doi.org/10.1162/jocn.2009.21338 (2010).

Barascud, N., Pearce, M. T., Griffiths, T. D., Friston, K. J. & Chait, M. Brain responses in humans reveal ideal observer-like sensitivity to complex acoustic patterns. Proceedings of the National Academy of Sciences 113, E616–E625, https://doi.org/10.1073/pnas.1508523113 (2016).

Salimpoor, V. N., Zald, D. H., Zatorre, R. J., Dagher, A. & McIntosh, A. R. Predictions and the brain: how musical sounds become rewarding. Trends Cogn. Sci. 19, 86–91, https://doi.org/10.1016/j.tics.2014.12.001 (2015).

Barbas, H. & Pandya, D. N. Architecture and intrinsic connections of the prefrontal cortex in the rhesus monkey. J. Comp. Neurol. 286, 353–375, https://doi.org/10.1002/cne.902860306 (1989).

Cha, J. et al. Clinically Anxious Individuals Show Disrupted Feedback between Inferior Frontal Gyrus and Prefrontal-Limbic Control Circuit. J. Neurosci. 36, 4708–4718, https://doi.org/10.1523/JNEUROSCI.1092-15.2016 (2016).

Di Liberto, G. M., O’Sullivan, J. A. & Lalor, E. C. Low-frequency cortical entrainment to speech reflects phoneme-level processing. Curr. Biol. 25, 2457–2465 (2015).

Saberi, K. & Perrott, D. R. Cognitive restoration of reversed speech. Nature 398, 760 (1999).

Overath, T., McDermott, J. H., Zarate, J. M. & Poeppel, D. The cortical analysis of speech-specific temporal structure revealed by responses to sound quilts. Nat. Neurosci. 18, 903 (2015).

Acknowledgements

This study was supported by the Max Planck Society and the International Max Planck Research School (IMPRS) on Neuroscience of Communication. We thank the anonymous reviewers for valuable comments and suggestions to improve the earlier version of the manuscript.

Author information

Contributions

S.-G.K. and K.M. conceived the analysis ideas together. J.L. and T.M. designed and conducted the experiments together. S.-G.K. carried out the analysis. S.-G.K. and T.H.F. wrote the manuscript together.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Kim, SG., Mueller, K., Lepsien, J. et al. Brain networks underlying aesthetic appreciation as modulated by interaction of the spectral and temporal organisations of music. Sci Rep 9, 19446 (2019). https://doi.org/10.1038/s41598-019-55781-9

DOI: https://doi.org/10.1038/s41598-019-55781-9
