Abstract

Quantifying the nodal spreading abilities and identifying the potential influential spreaders has been one of the most engaging topics recently, which is essential and beneficial to facilitate information flow and ensure the stabilization operations of social networks. However, most of the existing algorithms just consider a fundamental quantification through combining a certain attribute of the nodes to measure the nodes’ importance. Moreover, reaching a balance between the accuracy and the simplicity of these algorithms is difficult. In order to accurately identify the potential super-spreaders, the CumulativeRank algorithm is proposed in the present study. This algorithm combines the local and global performances of nodes for measuring the nodal spreading abilities. In local performances, the proposed algorithm considers both the direct influence from the node’s neighbourhoods and the indirect influence from the nearest and the next nearest neighbours. On the other hand, in the global performances, the concept of the tenacity is introduced to assess the node’s prominent position in maintaining the network connectivity. Extensive experiments carried out with the Susceptible-Infected-Recovered (SIR) model on real-world social networks demonstrate the accuracy and stability of the proposed algorithm. Furthermore, the comparison of the proposed algorithm with the existing well-known algorithms shows that the proposed algorithm has lower time complexity and can be applicable to large-scale networks.

Similar content being viewed by others

Introduction

Spreading process is a ubiquitous phenomenon in the nature1,2. In most of the real-world networks, the spreading process is the results of the interactions between infected individuals and uninfected individuals. Some spreading processes, including virus propagation and rumor spreading have profound negative economical and social impacts3,4. In order to prevent potential disruptions and ensure social stability, external intervention is widely essential as the most effective way to control epidemic transmission or rumor spreading5,6. Specifically, influential users, which are source spreaders, can obtain higher information diffusion levels on the network than non-influential users7. Thus, the optimized solution for the influence maximization problem is the most common and effective intervention method. However, quantifying the individual spreading abilities in complex networks, especially identifying the potential super-spreaders in a large-scale social network, is still a big challenge nowadays8,9,10,11.

The term “super-spreaders” refers to those who are particularly effective in transmitting infectious diseases or spreading information. In epidemiology, a super-spreader is an infected organism that infects disproportionally more secondary contacts than others who are also infected with the same disease12. Similarly, in information diffusion, super-spreaders play a more important role than normal individuals in promoting the information diffusion or determining the emergence of hot topics, including opinion leaders who have the ability to influence others to share and retweet their messages13, information brokers who connect different groups of users or have strong ties with the influential followers14. In fact, identifying individuals with the ability to be super-spreaders during virus propagation and rumor spreading can help us to better prevent the epidemic or public events bursting15,16.

The ranking algorithms were proposed initially to investigate the influence or prestige of individuals in social networks by several social scientists17,18. Nowadays, the ranking algorithms are introduced to study real-world issues. Moreover, they are utilized for novel applications, including optimizing communication networks19, finding social leaders20, and assessing network vulnerability21. However, these conventional centrality indices just consider a fundamental quantification through combing geodesics between individuals and they are not suitable to describe the pivotal positions of individuals from multiple angles. Thus, some researchers have attempted to redefine the concept of the individual influence. Stephenson and Zelen introduced the concept of the information centrality to capture the information contained in all possible paths of a connected network22. Kitsak et al.proposed the k-shell decomposition algorithm to evaluate the nodal spreading capabilities8. Wang and Zhao suggested a multi-attribute integrated index based on the degree centrality, the closeness centrality, the clustering coefficient and the topology potential23. Sheikhahmadi and Nematbakhsh introduced a hybrid algorithm called the MCDE algorithm16. Ahajjam and Badir provided a new centrality for the identification of influential nodes in networks based on the improved coreness centrality and the eigenvector centrality7.

Furthermore, with the explosive data growth, designing efficient and effective ranking algorithms on large-scale networks has attracted considerable attentions. Some diffusion algorithms based on random-walk were proposed, including the well-known PageRank24, HITS scores25, LeaderRank20, ClusterRank26 and other improved PageRank algorithms27,28. These representative algorithms have a common assumption that a node is expected to be of high influence if it points to many highly influential neighbours. So, they work well for directed networks, however they have a poor performance for undirected networks.

In fact, many researches regarding artificial network dataset and real social networks have demonstrated that when investigating the effectiveness of a ranking algorithm, it must be combined with the structural properties of networks and a certain functional goal29,30. For example, identifying influential nodes according to their roles in maintaining the network connectivity or facilitating information flow31. In addition, the existing ranking algorithms are difficult to reach a balance between accuracy and simplicity. In other words, some measures perform very simple but limit the accuracy, such as the local centrality indices, whereas others with a high computational complexity perform accurately but are incapable to be applied in large-scale networks, such as the global centrality indices. Thus, in the present study it is intended to fill this gap by exploring a new algorithm to quantify the nodal spreading abilities and identify potential super-spreaders in real-world social networks.

In the present study, a new ranking algorithm named CumulativeRank is proposed to quantify the nodal spreading abilities, which combines the local and global performances of each node in a given social network. Finally, the SIR model is applied to simulate the spreading processes in real social networks. The experimental results show that the proposed algorithm outperforms other well-known ranking algorithms in terms of accuracy and simplicity. The contributions of this study can be summarized as the following:

-

The CumulativeRank algorithm is a three-step implementation algorithm that combined the local and global performances of each node.

-

The improved network constraint coefficient (INCC) is proposed to assess the local performances of each node.

-

The concept of the tenacity is introduced to measure the node’s prominent position in maintaining the network’s connectivity.

-

The experimental results verify the outperformance of the proposed algorithm in terms of accuracy and simplicity.

The rest of the paper is organized as the following: In section 2, several widely-used ranking algorithms are reviewed briefly and the SIR model is introduced as the evaluation metric. In Section 3, the proposed algorithm is described in detail. In Section 4, the SIR model is applied to compare the performances of the proposed algorithm and six well-known ranking algorithms in real-world datasets. Finally, conclusions are given in Section 5.

Background

Ranking algorithms

A social network is a social structure made up of social members and their relationships. From the view of graph theory, most of social networks can be written as a graph G = (V, E), where \(V=\{{v}_{1},{v}_{2},\cdots \cdots \,{v}_{n}\}\) represents a node set and \(E=\{{e}_{1},{e}_{2},\cdots \cdots \,{e}_{m}\}\) represents an edge set. A node vi ∈ V denotes an individual or organizations in the social network. Moreover, an edge ei ∈ E denotes a possible social interaction between individuals or organizations, including communication or collaboration between members of a social group. The number of elements in V and E are presented by n and m, respectively. Nowadays, with the emergence of web2.0, people can share their opinions, communicate and relate to one another anytime and anywhere. In fact, some individuals play an important role in facilitating information flow and ensuring the stabilization operations of the whole network. In this section, five widely-used ranking algorithms, including degree centrality, betweenness centrality, eigenvector centrality, LocalRank, PageRank and a hybrid algorithm defined by Fu et al.32 are introduced as benchmark algorithms.

Degree centrality (DC)

The DC is obtained by calculating the ratio of the number of edges of node i to the maximum possible number of edges, which reflects the ability of a node to connect directly with other nodes.

Betweenness centrality (BC)

The BC measures a node’s influence through the ratio of the shortest path over the nodes to the number of all paths. The BC considers the global structure information of a given graph. The higher the BC value of a node, the stronger its controlling or spreading abilities.

Eigenvector centrality (EC)

Another important index in the category of global centrality indices is the EC, which considers that the centrality of a node depends not only on the number of its neighbours, but also on the centrality of its neighbours.

LocalRank (LR)

Most of the global centrality indices have rather high computational complexity, which restricts their applications to large-scale networks. To overcome this problem, Chen et al.33 proposed a semi-local centrality, named the LocalRank, as a tradeoff between the local centrality indices and the global centrality indices. The LR value of node i is written as the following:

where Γ(i) and N(w) are the total number of first-degree neighbours of the node i and the total number of first- and second-degree neighbours of node w, respectively.

PageRank (PR)

The PR is an application of the random walker model on a Markov chain24, where nodes are the web pages and edges represent the links from one page to another. The PR value of a page i at t step is described as the following:

Where the damping factor a ∈ [0, 1] is usually set to be around 0.85. Moreover, \({\Gamma }_{i}^{in}\), \({k}_{j}^{out}\) and n are the set of pages that link to page i, the out-degree of page j and is the total number of pages, respectively.

Fu et al.’ algorithm (FA)

Fu et al.32 proposed a hybrid algorithm that combines global diversity and local features to identify the most influential network nodes. They used the k-shell entropy to measure the global connecting capability of each node and considered the local degree values of its neighbours. The FA value of node i is defined as the following:

Where Ei and Li are the k-shell entropy value of node i and the sum of all neighbours’ degree centrality values within the two-step distance of node i, respectively.

SIR model

Centrality measures provide a method to quantify the nodal influence values, however the numeric values may not be directly interpretable34. As the information diffusion is similar to the epidemic spreading, a series of epidemic models are proposed to track the information spreading process and identify the influential spreaders. In the present study, the standard SIR model is utilized to estimate the spreading abilities of selected individuals and illustrate competitive advantages of the proposed algorithm over the conventional well-known algorithms. It should be noted that the standard SIR model assumes that nodes in a network can be in one of three possible states, including susceptible (denoted by S), infected (denoted by I) and recovered (denoted by R). Only a few individuals are set to be infective initially, while other individuals are in the susceptible state. The initial infected individuals are the originators of diseases, which can be obtained by various ranking indices. Once susceptible nodes get in contact with one or more infected neighbours, they become infected with the infection probability of β. Meanwhile, the infected individuals can be cured with the recovery probability of γ. The epidemic spreading is repeated until there are no infected individuals in the network and the network reaches a stable state. Since the large infection probability β makes the spreading cover almost all of the network, where the role of the individual is no longer important, the β value is set to be slightly larger than the epidemic threshold \({\beta }_{th}\approx \langle k\rangle /\langle {k}^{2}\rangle \), where 〈k〉 and 〈k2〉 represent the average degree and the second order average degree35, respectively. Moreover, the recovery probability is γ = 0.3.

In order to characterize the spreading abilities of individuals, the spreading scope F(t) at time t is presented as the following:

Where n is the number of individuals in a given network. Moreover, nI(t) and nR(t) represent the number of infected and recovered nodes at time t, respectively. It should be noted that all of the simulations are carried out on the real social networks. When all infected individuals are converted to the recovered state, the spreading process ends and the final spreading scope F(tc) is equal to the maximum values of the recovered individuals. Generally, within the same network, the larger the final spreading scope \(F({t}_{c})\) triggered by initial spreaders, the stronger their spreading abilities.

The Proposed Algorithm

From the previous section, it is resulted that a reasonable algorithm for the identification of the key individuals should focus on two aspects, including accuracy and simplicity. The accuracy is mainly reflected in the computational accuracy and stability, while simplicity is mainly correlated to the computational runtime. Especially for a social network, which usually contains millions of nodes, the key issue is how to reduce the complexity and improve the computational efficiency. In order to fill this gap, a novel algorithm is required to effectively reach a balance between the accuracy and the simplicity. According to Burt’s structural holes theory36, the individuals’ structural position in the social network is more important than the corresponding external relationship strength.Certain positional advantages indicate that the individuals occupying these positions have more information, resources and power than others. The positional advantages in the social network include local and global advantages as the following: The former advantages can be quantified by the local structural information9,37, while the latter advantages should consider global topological connections8,38. In this regard, it is intended to propose a three-step algorithm, named the CumulativeRank,in the present study. It is expected that the proposed algorithm can sufficiently combine node’s local and global performances. The details of the proposed algorithm are described as the following:

Step 1:Quantifying the local advantage of each node

The structural hole theory provides a novel perspective for understanding the local performance of individuals. In fact, a structural hole is a gap between two unconnected nodes. When these two unconnected nodes are connected by a third node, the bridging node usually has more information advantages and control advantages since it acts as a mediator between different nodes. In order to quantify the control advantage of bridging nodes, Burt introduced the network constraint coefficient (NCC). The NCC for ith node is described as the following:

Where pij is the proportion of a given node i’s energy invested directly related to node j, which is written as the following:

where zij is equal to 1 when a path from i to j exists, and it is zero otherwise. Γ(i) is the set of the nearest neighbours i. \(\mathop{\sum }\limits_{k=1,k\ne i,j}^{N}{p}_{ik}{p}_{kj}\) measures the strength of the indirect connection from i to j. The NCC value of a node generally has a negative association with its influence in a given network. Therefore, it is found that as the NCC values reduce, the formation of structural holes is enhanced and subsequently the influence of nodes increases.

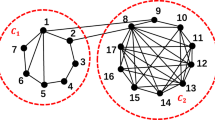

Equation (6) indicates that the NCC value of a node is calculated based on its neighbourhood topology, including the number of neighbours and the the the corresponding closeness between them. However, the NCC has similar disadvantages to the DC. It only collects information of the nearest neighbours, while the structural information from farther neighbours is ignored. In fact, the NCC is ineffective when it faces the nodes bridging the same number of non-redundant contacts. For example, Fig. 1 illustrates that nodes C and F act as bridges between nodes G and H, and between nodes L and G, respectively. Based on Eq. (6), nodes C and F have the same value (i.e. NCCC = NCCF = 0.46). In other words, the two nodes have the same local influence. However, Fig. 1 shows that in addition to the common neighbour node G and its neighbours, node C has a high-order neighbour like node H, while node F only has one-order neighbour, which is entitled by node L. In fact, it is found that the spreading ability of each node highly depends on its neighbours39. For example, although nodes C and F have the same NCC values, node C has stronger spreading ability, which originates from wide range of contacts of high-order neighbours. Therefore, it is concluded that the NCC cannot accurately quantify the difference between nodes C and F in the abovementioned sample network.

This analysis shows that the NCC only collects information from the nearest neighbours, which leads to low resolution. In order to increase the accuracy of the method, more local structural information should be considered. Therefore, an improved network constraint coefficient (INCC) is proposed in the present study. It should be indicated that the INCC scheme is inspired by Chen’ semi-local centrality index33. In the proposed method, the local influence of a node combines the direct and indirect influences on its nearest and next nearest neighbours. Compared with the Burt’s NCC, INCC can provide richer connection information that individual has established, which gives a full understanding of the node influence in facilitating the information flow. The INCC value of node i is defined as the following:

Where \({p^{\prime} }_{ij}=\frac{{Q}_{j}}{L{R}_{i}}\), LRi and Qj are calculated by the Eqs (1) and (2), respectively. Take node A as an example, \(INC{C}_{A}={(\frac{{Q}_{F}}{L{R}_{A}}+\frac{{Q}_{G}}{L{R}_{A}}\times \frac{{Q}_{F}}{L{R}_{G}})}^{2}+{(\frac{{Q}_{G}}{L{R}_{A}}+\sum _{i\in (E,F)}\frac{{Q}_{i}}{L{R}_{A}})}^{2}+{(\frac{{Q}_{E}}{L{R}_{A}}+\frac{{Q}_{G}}{L{R}_{A}}\times \frac{{Q}_{E}}{L{R}_{G}})}^{2}\).

As shown in Fig. 1, node C has three nearest neighbours, including nodes B, G and H and seven next nearest neighbours, including nodes A, D, E, F, I, J and K, thus N(C) = 10. The N values of the other nodes are presented in the third column of Table 1. According to Eqs (1) and (2), we can calculate QC = NB + NG + NH = 24, LRC = QB + QG + QH = 92. Similarly, QF = NA + NG + NL = 18 and LRF = QA + QG + QL = 71. Thus, it is easy to know the difference between node C and F by the corresponding INCC values, where INCCC = 0.566 and INCCF = 0.771. INCCF > INCCC, that indicates node C has more spreading influence than node F. Table 1 shows that, node H has the lowest INCC value, which indicates that it has the largest local influence in the example network, while nodes L, M, N and O have the largest INCC values, indicating that they have the lowest influence. In a descending order of the nodal influence, the ranking result is H, G, K, C, F, B, A, D, E, I = J, L = M = N = O. It is found that the INCC can more accurately quantify the differences between node C and F, node A and B, node D and E, in comparison to the performance of the NCC method.

Step 2: Quantifying the global advantages of each node

Generally, the influential nodes also play a crucial role in maintaining the network connectivity. If these top influential nodes are removed or not involved in the spreading process, the final spreading scope and the spreading efficiency are reduced40. Consequently, the global performances of nodes are considered on maintaining the network connectivity and facilitating the information flow. In general, if the removal of a node leads to the network remaining more components and smaller connected components, the removed node is important in maintaining the network connectivity. The inherent attachment mechanism of social networks often leads to their excessive sensitivity to the removal of key nodes. In order to measure the vulnerability of a given network, Cozzens et al. proposed the concept of the tenacity41. As a vulnerability parameter of graph, the tenacity integrates three criteria, including the cost of the network breakage, the number of components and the size of largest connected component.

In this paper, the tenacity are introduced to assess the individual prominent position in maintaining the network connectivity. Due to the inhomogeneity of general networks, most nodes don’t belong to the cut-set of a given network, so random removal of these nodes cannot change the balance of the network structure or directly trigger the collapse of the network42. Thus, we redefine the tenacity of each node, which is denoted as the following:

Where \(|i|\) is the cost of node i removed, when the targeted removal obeys one by one model, \(|i|=1\). \(m(G-i)\) and \(w(G-i)\) denote the giant component size after destruction and the number of components of the remaining network \(G-i\). In Supplementary Note, an iterative algorithm is proposed for evaluating the nodes’ global performances. If node i plays a key role in maintaining the network connectivity, it either is the root with at least two descendants, or has a descendant u whose lowest depth-first number (low) is not less than its depth-first number (dfn). According to the above rule, we can identify five key nodes in the example network, which are H, G, K, C and F, respectively. The identification process is shown in Fig. 2.

Step 3: Identifying the influential spreaders in the network

Based on the basic definitions mentioned above, a new ranking algorithm named the CumulativeRank algorithm is presented in this study, which measures the node spreading ability from two layers, including locally, the proposed algorithm considers both the local influence of nodes on their neighbours and globally, the algorithm considers the nodes’ prominent position in maintaining the network connectivity. The precise definition of the CumulativeRank is defined as the following:

Where INCCi is calculated by the Eq. (8). Moreover, TCi is the normalized tenacity value of a given node i, \(0\le T{C}_{i}\le 1\) and it is defined as the following:

According to the above algorithm, nodes with the lowest CR values have the largest influence in facilitating information flow and maintaining the network connectivity. In a descending order of the nodal spreading ability, the ranking result of the example network is H, K, G, C, F, B, A, D, E, I = J, L = M = N = O.

Experimental Evaluation

Dataset

To validate the effectiveness of the proposed algorithm, the algorithm is evaluated in nine real social networks. All of the data except for OClinks can be downloaded from the Stanford network dataset43. Table 2 indicates that the six real social network include:(1) OClinks, which is a representative online community network, where users are from the University of California, Irvine44; (2)Ego-Facebook, which is an ego network consisting of friends lists from Facebook; (3)Soc-Epinions, which is a who-trust-whom online social network of a general consumer review site Epinions.com; (4)Wiki-Vote, which is a network containing all of the Wikipedia voting data from the inception of Wikipedia till January 2008; (5)Ca-HepPh, which is a collaboration network covering scientific collaborations between authors papers submitted to High Energy Physics from January 1993 to April 2003; (6) Email-Enron, which is an email communication network from Enron posted to the web by the Federal Energy Regulatory Commission during its investigation;(7) Ca-CondMat,which is a collaboration network covering scientific collaborations between authors papers submitted to Condense Matter category;(8) Ca-GrQc,which is a collaboration network covering scientific collaborations between authors papers submitted to General Relativity and Quantum Cosmology category;(9) Email-Eu-core,which is an email communication network from a large European research institution.

Experimental results

In general, the super-spreader in a social network is regarded as an individual that has greater spreading abilities. In other words, it is capable of widely spreading the message to other recipient individuals infinitely. In this section, the standard SIR model is utilized to simulate the spreading processes in real social networks.

The ranking order of each node is calculated in the above mentioned six networks initially according to DC, BC, EC, LR, PR, FA and CR, respectively. In a sample network, if nodes have the same calculated scores according to the same ranking algorithm, they will have the same rank. In the present study, we are more interested in the super-spreaders instead of all nodes in the network. Thus, only the top nodes of the ranking list are considered. For this purpose, the top-10 nodes of each ranking algorithm are selected as the initial spreaders and all of the other nodes in the network are marked as susceptible nodes.

Table 3 shows that the ranking results of the proposed algorithm are quite different from those of other ranking algorithms. In Wiki-Vote, it is observed that the proposed algorithm suggests node 326 as the most influential node, followed by the nodes 656 and in the third position comes node 488. The same data is not found for the other ranking algorithms, indeed DC, BC and LR suggest node 699 as the most influential node, followed by nodes 286 and 2374 (third for DC and LR) or 409(third for BC). It is indicated that the CR gives the ranking results with the most significant difference from other five well-known ranking algorithms in Ca-HepPh and Ca-CondMat, as none of the 10 key nodes identified by CR appear in the top 10 nodes for DC, BC, EC, PR and LR. In addition, it is observed that both the proposed algorithm and the FA algorithm combine the global and local attributes of the network, however there are great differences between the two algorithms in terms of ranking results.

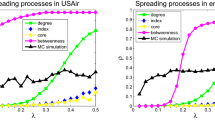

In order to evaluate the performances of the proposed algorithm, the all-contact SIR model is applied to simulate the information spreading process. In the all-contact SIR model each infected node can contact all of its susceptible neighbours at per time step. Figure 3 shows the simulation result of the spreading scopes, F (t), as a function of time for nine networks. For each initial infective node set, the SIR process is repeated 100 times to ensure the stability of the results (see the Supplementary Note). By comparing the changes of F (t) under different spreading sources, it is observed that in almost all of the networks, the initial spreaders obtained by the CR algorithm spread the information faster and the final spreading scopes F(tc) always reach the highest value (see Table 4). In the cases of OClinks, Ego-Facebook, Ca-HepPh,Ca-GrQc and Email-Eu-core, it is observed that the performance of the CR is much better than those of other algorithms. Even though in Soc-Epinions, the initial infection sources determined by different ranking algorithms are very similar and the changes of F (t) under different spreading sources perform nearly the same, but the proposed algorithm is slightly better and the final spreading scope still reaches the highest value. Relatively, other six classical ranking algorithms do not show significant consistency in the change tendency of F (t). Although the performance of the EC can outperform the other five algorithms except the CR in Wiki-Vote and Email-Enron, DC and LR perform better than EC in OClinks, Ego-Facebook, Ca-HepPh and Email-Eu-core. Experimental results show that the ranking of the proposed algorithm is more accurate and stable.

Plot of the spreading scope of the top-10 nodes ranked by different ranking algorithms in (a) OClinks, (b)Ego-Facebook, (c) Soc-Epinions, (d)Wiki-Vote, (e) Ca-HepPh, (f) Email-Enron, (g) Ca-CondMat, (h) Ca-GrQc and (i) Email-Eu-core. The infection probability β are 0.02 in (a), 0.01 in (b–f,i), 0.05 in (g) and 0.06 in (h), the recovery rate γ is 0.3. The results are averaged over 100 independent runs.

The SIR model is based on discrete step iterations to demonstrate the spreading process of information. If information cannot continue to flow between nodes according to the model rules, iterations end and the network reaches its stable state. The final time required for the end of the spreading process is obtained from the number of iterations. In general, the higher the number of iterations, the longer information propagates within a given network. Table 4 shows that in the same network, the difference in the spreading sources can increase the number of iterations and prolong the time of information propagation in reaching a steady state. For example, in Ego-Facebook, when β = 0.01, the spreading sources selected by the CR have the least number of iterations before convergence and the time steps are 33. The PR performs next above the CR, and then LR, BC, DC and EC show ascending order of the number of iterations. In contrast, the CR has the average lesser number of iterations before convergence, however it achieves the largest final spreading scope in all sample networks, which means that the spreading sources selected by the CR effectively accelerate the spreading process of information.

The total runtime of the CR consists of two parts, including the time of computing INCC values for all nodes and the time of quantifying the tenacity of each node. For the former, as Nw requires traversing node v’s neighbourhood within two steps and takes O(〈k〉2) time on average, the time complexity is O(n〈k〉2), where n and 〈k〉 are the total number of nodes and the average degree in a given network, respectively. After each iteration, the number of components \(w(G-i)\) and the size of the largest connected component \(m(G-i)\) are re-calculated, which takes the complexity O(n2). Totally, the whole time complexity of the proposed algorithm is O(n〈k〉2 + n2). In contrast, the time complexity of the CR is much lower than that of the BC and the EC, which have the complexity of \(O({n}^{2}\,\log \,n+nm)\) and O(n3) respectively. These are close to the PR with the complexity of O(n2 + n). Analysis of time complexity demonstrates that the proposed algorithm has relatively less computational burden in identifying potential super-spreaders and can be applicable to large-scale networks.

Conclusion

In the present study, a three-step ranking algorithm, named the CumulativeRank, is proposed in order to identify and quantify potential super-spreaders in a social network. Previous studies have shown that the nodal spreading ability originate from the local prominent position and the total connectivity strength that the node obtains. The proposed algorithm sufficiently combines the node’s local and global performances. Locally, inspired by Burt’s structural holes theory, the improved network constraint coefficient is proposed based on the semi-local centrality index. Compared with the conventional network constraint coefficient, the improved network constraint coefficient provides richer connection information for evaluating the local performance of each node. Globally, the concept of the tenacity are introduced to evaluate the nodes’ global connectivity strengths. Furthermore, extensive experiments on real-world social networks show explicitly that the proposed algorithm outperforms the existing well-known ranking algorithms and can be applicable to large-scale networks.

References

Goh, K. I. et al. The human disease network. PNAS 104, 8685–8690 (2007).

Wang, P., Gonzalez, M. C., Hidalgo, C. A. & Barabasi, A. L. Understanding the spreading patterns of mobile phone viruses. Science 324, 1071–1076 (2009).

Funk, S., Gilad, E., Watkins, C. & Jansen, V. A. The spread of awareness and its impact on epidemic outbreaks. PNAS 106, 6872–6877 (2009).

Wang, Q. S., Yang, X. & Xi, W. Y. Effects of group arguments on rumor belief and transmission in online communities: An information cascade and group polarization perspective. Information and Management 55, 441–449 (2018).

Tian, R., Zhang, X. & Liu, Y. SSIC model: A multi-layer model for intervention of online rumors spreading. Physica A 427, 181–191 (2015).

Jiang, J. & Zhou, T. S. Resource control of epidemic spreading through a multilayer network. Scientific Reports 8, 1629 (2018).

Ahajjam, S. & Badir, H. Identification of influential spreaders in complex networks using HybridRank algorithm. Scientific Reports 8, 11932 (2018).

Kitsak, M., Gallos, L. K., Havlin, S. & Makse, H. A. Identification of Influential Spreaders in Complex Networks. Nature Physics 6, 888–893 (2010).

Hu, Y. et al. Local structure can identify and quantify influential global spreaders in large scale social networks. PNAS 115, 7468–7472 (2018).

Liu, J., Ren, Z. & Guo, Q. Ranking the spreading influence in complex networks. Phys. A 392, 4154–4159 (2014).

Xu, S. & Wang, P. Identifying important nodes by adaptive LeaderRank. Phys. A 469, 654–664 (2017).

Liu, Y. et al. Characterizing super-spreading in microblog: An epidemic-based information propagation model. Phys. A 463, 202–218 (2016).

Aleahmad, A., Karisani, P., Rahgozar, M. & Oroumchian, F. OLFinder: Finding opinion leaders in online social networks. Journal of Information Science 42, 659–674 (2016).

Araujo, T., Neijens, P. & Vliegenthart, R. Getting the word out on Twitter: the role of influentials, information brokers and strong ties in building word-of-mouth for brands. International Journal of Advertising 36, 496–513 (2016).

Liu, Y., Tang, M., Zhou, T. & Do, Y. Core-like groups result in invalidation of identifying super- spreader by k-shell decomposition. Scientific Reports 5, 9602 (2015).

Sheikhahmadi, A. & Nematbakhsh, M. A. Identification of multi-spreader users in social networks for viral marketing. Journal of Information Science 43, 412–423 (2017).

Freeman, L. C. Centrality in social networks conceptual clarification. Social Networks 1, 215–239 (1979).

Bonacich, P. Power and centrality: a family of measures. Am. J. Sociol. 92, 1170–1182 (1987).

Tizghadam, A. & Leon-Garcia, A. Autonomic Traffic Engineering for Network Robustness. IEEE J. Sel. Area. Comm. 28, 39–50 (2010).

Lü, L. Y. et al. Leaders in social networks, the delicious case. PLoS One 6, e21202 (2011).

Crucitti, P., Latora, V., Marchiori, M. & Rapisarda, A. Error and attack tolerance of complex networks. Phys. A 340, 388–394 (2004).

Stephenson, K. & Zelen, M. Rethinking centrality: methods and applications. Social Networks 11, 1–37 (1989).

Wang, S. & Zhao, J. Multi-attribute integrated measurement of node importance in complex networks. Chaos 25, 113105 (2015).

Brin, S. & Page, L. The anatomy of a large-scale hypertextual web search engine. Computer Networks and ISDN Systems 30, 107–117 (1998).

Kleinberg, J. M. Authoritative sources in a hyperlinked environment. J.ACM 46, 604–632 (1999).

Chen, D. B. et al. Identifying influential nodes in large-scale directed networks: The role of clustering. PLoS One 8, e77455 (2013).

Rizos, G., Papadopoulos, S. & Kompatsiaris, Y. Multilabel user classification using the community structure of online networks. Plos One 12, e0173347 (2017).

Alp, Z. Z. & Oguducu, S. G. Identifying topical influencers on twitter based on user behavior and network topology. Knowledge-based Systems 141, 211–221 (2018).

Wang, X., Zhang, X., Yi, D. & Zhao, C. Identifying influential spreaders in complex networks through local effective spreading paths. J.Stat. Mech-Theory E. 5, 053402 (2017).

Yu, E.-Y., Chen, D.-B. & Zhao, J.-Y. Identifying critical edges in complex networks. Scientific Reports 8, 14469 (2018).

Moreno, Y., Nekovee, M. & Vespignani, A. Efficiency and reliability of epidemic data dissemination in complex networks. Phys. Rev. E 69, 055101 (2004).

Fu, Y. H., Huang, C.Y. & Sun, C.T. Identifying super-spreader nodes in complex networks. Math. Probl. Eng., 675713 (2015).

Chen, D. B. et al. Identifying influential nodes in complex networks. Phys.A 391, 1777–1787 (2012).

Ibnoulouafi, A. & El Haziti, M. Density centrality: identifying influential nodes based on area density formula. Chaos, Solitons and Fractals 114, 69–80 (2018).

Castellano, C. & Pastor-Satorras, R. Thresholds for epidemic spreading in networks. Phys. Rev. Lett. 105, 218701 (2010).

Burt, R. S. Structural Holes: The Social Structure of Competition.(Harvard University Press,1992).

Gao, S. et al. Ranking the spreading ability of nodes in complex networks based on local structure. Phys. A 403, 130–147 (2014).

Wen, S. et al. Using epidemic betweenness to measure the influence of users in complex networks. J. Netw. Comput. Appl. 78, 288–299 (2017).

Xu, S., Wang, P. & Lü, J. Iterative neighbour-information gathering for ranking nodes in complex networks. Scientific Reports 7, 41321 (2017).

Saito, K., Kimura, M., Ohara, K. & Motoda, H. Super mediator-A new centrality measure of node importance for information diffusion over social network. Information Sciences 329, 985–1000 (2016).

Cozzens, M. B., Moazzami, D. & Stueckle, S. Tenacity of Harary Graphs. JCMCC 16, 33–56 (1994).

Boccaletti, S., Latora, V., Moreno, Y., Chavez, M. & Hwang, D.-U. Complex networks: structure and dynamics. Physics Reports 424, 175–308 (2006).

Panzarasa, P., Opsahl, T. & Carley, K. M. Patterns and Dynamics of users’ behavior and interaction: network analysis of an online community. J. Am. Soc. Inf. Sci.Tec. 60, 911–932 (2009).

Acknowledgements

This research was supported by the National Science and Technology Major Project under Grant No. 2017YFB0803001,the National Natural Science Foundation of China No. 61571144, No. 61370215 and No. 61370211, and Humanities and Social Sciences Founds of Ministry of Education of China No. 19YJA630106.

Author information

Authors and Affiliations

Contributions

Dayong Zhang conceived and designed the experiments and wrote the manuscript; Yang Wang performed the numerical analysis; Zhaoxin Zhang is supervisor.

Corresponding author

Ethics declarations

Competing Interests

The authors declare no competing interests.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary information

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Zhang, D., Wang, Y. & Zhang, Z. Identifying and quantifying potential super-spreaders in social networks. Sci Rep 9, 14811 (2019). https://doi.org/10.1038/s41598-019-51153-5

Received:

Accepted:

Published:

DOI: https://doi.org/10.1038/s41598-019-51153-5

This article is cited by

-

Dynamic identification of important nodes in complex networks based on the KPDN–INCC method

Scientific Reports (2024)

-

Quantifying agent impacts on contact sequences in social interactions

Scientific Reports (2022)

-

Identifying influential spreaders in complex networks for disease spread and control

Scientific Reports (2022)

-

Identifying spreading influence nodes for social networks

Frontiers of Engineering Management (2022)

-

Spatial super-spreaders and super-susceptibles in human movement networks

Scientific Reports (2020)

Comments

By submitting a comment you agree to abide by our Terms and Community Guidelines. If you find something abusive or that does not comply with our terms or guidelines please flag it as inappropriate.