Abstract

Humans interpret others’ behaviour as intentional and expect them to take the most energy-efficient path to achieve their goals. Recent studies show that these expectations of efficient action take the form of a prediction of an ideal “reference” trajectory, against which observed actions are evaluated, distorting their perceptual representation towards this expected path. Here we tested whether these predictions depend upon the implied intentionality of the stimulus. Participants saw videos of an actor reaching either efficiently (straight towards an object or arched over an obstacle) or inefficiently (straight towards an obstacle or arched over empty space). The hand disappeared mid-trajectory and participants reported the last seen position on a touch-screen. As in prior research, judgments of inefficient actions were biased toward efficiency expectations (straight trajectories upwards to avoid obstacles, arched trajectories downward towards goals). In two further experimental groups, intentionality cues were removed by replacing the hand with a non-agentive ball (group 2), and by removing the action’s biological motion profile (group 3). Removing these cues substantially reduced perceptual biases. Our results therefore confirm that the perception of others’ actions is guided by expectations of efficient actions, which are triggered by the perception of semantic and motion cues to intentionality.

Introduction

Humans see others’ behaviour as purposeful and goal directed1,2,3,4. A key signature of this “intentional stance”5 is the assumption that other people generally act rationally: they take the most energy-efficient path to achieve their goal, and expend additional energy only when an obstacle has to be overcome3,6. This simple heuristic of action efficiency arises early in development and allows children to attribute intentionality to observed behaviours, even when carried out by inanimate objects7,8,9. Human infants (and some non-human primates) show surprise, for example, when actors that are believed to be intentional violate these assumptions, such as when they do not try to avoid an obstacle or exert additional unnecessary energy to reach their goal4,10. Once established, this simple heuristic may form a stepping-stone for more sophisticated abilities for reasoning about others4,11. For example, observing an inefficient action (e.g. reaching directly towards an object despite an obstacle in the way) can help people realize that others act according to beliefs and not objective reality (i.e. they may not have sight of the obstacle), forming the basis of a prototypical theory of mind.

We have argued that these expectations of efficient action are, to some extent, perceptually represented, in the form of an ideal “reference” trajectory that a rational actor would take through a given environment, against which observed actions can be judged12,13. This proposal emerges from recent predictive processing accounts of social perception12,14,15,16,17 which argue that perception of others’ actions – like perception in general – is always hypothesis-driven. Any assumption about the external world (and the people within it) is translated into the perceptual input that would result from such a state. These expectations of future input can guide perception and be tested against actual stimulation18,19,20. In non-social perception, such expectations explain several visual illusions (e.g., dress illusion21), the switch between different bi-stable percepts22, or why the same objects can appear convex or concave depending on prior assumptions about light sources23. In social perception, simply attributing a goal to another person could similarly elicit predictions about how this individual would realise such a goal, specifying which action they may soon carry out14,15,16,24,25 (for theoretical arguments, see26,27). The principle of efficient action can make a direct contribution here, specifying the ideal “reference” trajectory that achieves the actor’s goals with minimum energy expenditure, given the current environmental constraints, such as potential obstacles in the way12,26.

In a recent series of studies, we attempted to reveal these expectations of efficient action13. These studies relied on the well-established phenomenon that the uncertainty during motion perception is perceptually sharpened using top-down information28,29, filling in missing information30,31,32 in a predictive manner33,34. The resulting perceptual biases can be reliably measured by suddenly removing the moving object from view, and asking participants to report its disappearance point, either on a touch screen35,36,37 or by comparing it to probe stimuli presented shortly after38,39,40,41,42. In such a paradigm, people generally over-estimate the movement they have seen, reporting the moving stimulus to have disappeared further along its trajectory than it really did (i.e. the representational momentum effect38,39). These displacements have been shown to reflect not only a simple extrapolation of motion based on the previously seen trajectory43, but also prior knowledge about its causes, such as how the motion would be affected by one’s own actions44, by physical forces such as friction or gravity45, or the most likely behaviours of the other person41,42,46,47,48.

In the case of observed actions, the perceptual biases reflect the predictions derived from the assumption of efficient action13. In a recent series of experiments, participants observed a hand starting to reach for an object with a straight or arched trajectory. The actions were either efficient (reaching straight when the path was clear or arched over an obstacle) or inefficient (straight towards an obstacle or arched over empty space). The movement disappeared before it was completed and participants reported the hand’s last seen position on a touch screen, or by comparing it to probe stimuli presented immediately after. Both measures revealed that perceptual judgements were reliably biased by expectations of efficient action. Straight reaches were reported to have reached higher when an obstacle was blocking its path, in line with the expectation that the hand would soon lift to avoid it. Conversely, high arched reaches were reported lower when no obstacle was present, and corrected towards the straighter, more energy-efficient trajectory. These biases were present automatically, but increased when participants explicitly predicted–prior to action onset–the most efficient trajectory through the scene, or when attention was drawn to the environmental constraints. Moreover, they could be disrupted by dynamic visual noise masks presented directly after stimulus offset, suggesting that the biases emerge during ongoing perception or directly after the sudden offset, when the visual system “fills in” the expected future path49,50.

Together, these results indicate that the teleological stance is at least partly perceptually represented, providing an ideal reference trajectory that informs the action that was indeed perceived. Here, we test on what stimulus features these predictions of efficient action depend. In children, as well as in adults, intention attribution – and the resulting surprise when seeing an inefficient action–depends on the presence of cues to intention51,52,53, such as seeing an agentive stimulus (such as a hand relative to a ball54), or observing movements with biological motion trajectories55,56,57,58,59. If such cues indeed trigger attributions of intentionality to others, and the expectation of efficient action is tied to such intentionality attribution, then they should also determine to what extent perceptual biases towards efficient actions are observed.

In the first experimental group, we replicated the original experiment by Hudson and colleagues13, in which participants saw efficient and inefficient reach trajectories (arched/straight over an obstacle vs. empty space) and, after the hand had suddenly disappeared, indicated its last location on a touch screen. In two further experimental groups, we progressively removed intentional cues. First, as in prior research on infant intention attribution54, we replaced the hand with a non-agentive stimulus, a ball, which nevertheless followed the same characteristic biological motion trajectories and profiles as the hands in the first experimental group, showing the classical bell-shaped velocity profile of reaches towards objects60. Second, humans are sensitive to motion cues that distinguish intentional biological agents from inanimate objects, such as self-propulsion and change of direction55,58, or a trajectory and speed of movement that is similar to one’s own movement56,57,59. In a third group, participants therefore saw the same ball, but it no longer followed a biological motion profile, removing all kinematic cues to intention. If biases toward efficient action emerge from cues that signal intentionality, then they should be substantially reduced in group 2, and further reduced in group 3, as cues to intentionality are removed.

Method

Participants

Eighty-two participants took part in the experiment: twenty-nine participants in group 1 (hand stimuli, mean age = 21 years, SD = 4.7, 25 females), twenty-seven in group 2 (balls with biological motion, mean age = 20 years, SD = 4.1, 21 females), and twenty-six in group 3 (balls with non-biological motion, mean age = 21 years, SD = 4.2, 20 females). Nine additional participants (two from group 1, three from group 2, four from group 3) were excluded based on previously established exclusion criteria (see Results). All participants in all groups were tested in the same three-week period. Neither the gender mix nor the age distribution differed between groups (ps > 0.39). All participants were right-handed, had normal/corrected-to-normal vision, gave informed consent, and were recruited from the University of Plymouth or the wider community for course credit or payment. The study was approved by the University of Plymouth’s ethics board, in line with the ESRC and the Declaration of Helsinki. A power analysis revealed that a sample size of 26 provides 0.80 power to detect two-sided within-subjects effects in each of the groups with Cohen’s d = 0.57. Our prior study investigating the same effect13 (“report obstacle” condition) and pilot data revealed consistently larger effect sizes, d = 0.76 to d = 1.29. For the between-subjects effects, a power analysis revealed that a sample size of 26 per group provides 0.80 power to detect effects in either direction with Cohen’s d = 0.79. This should provide enough power to detect reductions of the original effect to about 40% of the original size (assuming that standard deviations remain the same).

Apparatus

Presentation (NeuroBS) software was used to present the experiment via an HP EliteDisplay S230tm 23-inch widescreen (1920 × 1080) Touch Monitor. Verbal responses were recorded with Presentation’s sound threshold logic via a Logitech PC120 combined microphone and headphone set.

Stimuli

Example stimuli can be seen in Fig. 1A. To derive a set of stimuli of efficient actions, videos were filmed of an arm at rest to the right of the screen, which then reached for one of four objects (an apple, a packet of crisps, a glue stick, or a stapler) on the left of the screen. The reaches were either directed straight for the target object (Straight/Efficient), or arched over one of three obstacles (an iPad, lamp or pencil holder; Arched/Efficient). Each video clip was then converted into individual frames, and the first 22 frames, from frame 1 (initial rest position) to frame 22 (mid-way through the action), were used as stimuli. For each efficient action, an inefficient action sequence was created by digitally removing the obstacles from the Arched/Efficient videos (Arched/Inefficient), or by inserting the obstructing objects into the Straight/Efficient videos (Straight/Inefficient). The inefficient actions were therefore identical to the efficient actions in terms of movement kinematics, and differed only by the presence/absence of the obstacle. Finally, response stimuli were created by taking one frame from each action sequence and digitally removing the actor’s arm from the scene, so that only the objects and background remained. Presenting this frame immediately after the action sequence gave the impression of the hand disappearing from the scene, and participants indicated the last seen location of the tip of the index finger on this frame with a touch response on screen.

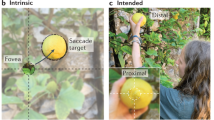

Stimulus conditions and trial sequence. The stimulus conditions used in all three experimental groups are depicted in Panel A. The four panels show the hand in the starting position and the possible action trajectories. These Action Trajectories were either straight or arched and were rendered either efficient or inefficient by the presence or absence of an obstructing object. Panel B depicts an equivalent example of a Straight/Inefficient trial in the Biological Ball group (top) and the Non-Biological Ball group (bottom). The white markers depict the disappearance point of the index finger tip/ball in each of the four final frames. Panel C shows an example of a trial sequence in the Arched/Efficient condition of group 1. This trial sequence is equivalent across all experimental groups.

For experimental group 2 (balls with biological motion), the forty videos of hand movements used in experimental group 1 were digitally manipulated so that the actor’s hand was replaced with a ball, coloured using the same tones as the hand. The ball was the same size as the tip of the index finger that participants had to touch in experimental group 1 (30 px. diameter) and was positioned at the same coordinates in each frame. An additional frame was created by positioning the ball mid-air before the first frame (where the ball contacts the table) creating an illusory “bounce” motion, providing a realistic context for the ball movement in order to reduce impressions of self-propelled movement that could also cue the observer that the motion is intentional61.

For experimental group 3 (balls with non-biological motion), the forty videos from group 2 were digitally manipulated so that the ball now appeared to move in a straight line and at a constant speed after the bounce frame, eliminating the biological motion profile. To ensure comparability of disappearance points between experimental groups, the line of best fit was calculated through the last four frames of each sequence of experimental group 1 (i.e. all possible disappearance points). The constant speed of the ball was created by recalculating the Y coordinates at equal distances along this line, between the first and last frame.
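The construction of the non-biological trajectory can be illustrated with a minimal sketch. It assumes an ordinary least-squares fit through the four possible disappearance points and equal steps along the X axis between the first and last frame; the coordinates, the function names `fit_line` and `constant_speed_path`, and the start position are hypothetical and are not taken from the stimulus files.

```python
def fit_line(points):
    """Ordinary least-squares line y = slope * x + intercept through (x, y) points."""
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in points)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in points)
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def constant_speed_path(first_x, last_x, n_frames, slope, intercept):
    """Place n_frames positions at equal steps along the fitted line."""
    step = (last_x - first_x) / (n_frames - 1)
    xs = [first_x + i * step for i in range(n_frames)]
    # Equal steps in x along a straight line give equal distances, i.e. constant speed.
    return [(x, slope * x + intercept) for x in xs]


# Hypothetical disappearance points (px) from the last four shown frames of one hand video.
last_four = [(400.0, 300.0), (370.0, 310.0), (340.0, 320.0), (310.0, 330.0)]
slope, intercept = fit_line(last_four)

# Hypothetical start position (x = 700 px); 22 frames as in the original clips.
path = constant_speed_path(first_x=700.0, last_x=310.0, n_frames=22,
                           slope=slope, intercept=intercept)
```

Because every position lies on the fitted line, the ball passes close to the same disappearance points as the hand while losing the bell-shaped velocity profile.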

Procedure

An example trial sequence can be seen in Fig. 1C. Participants completed four blocks of 48 trials in which each condition was presented an equal number of times (Straight/Efficient, Straight/Inefficient, Arched/Efficient, Arched/Inefficient). At the start of each trial, participants saw an instruction to “Hold the spacebar”, to which they pressed the spacebar with their right hand and kept it depressed. This ensured that they did not track the observed motion with their finger and could only initiate their response once the action sequence had disappeared. Participants then saw the first frame of the action sequence as a static image (the hand at rest in experimental group 1 and the “bouncing ball” frame in experimental groups 2 and 3) and were required to say “yes” into the microphone if there was an obstructing object present, and “no” if there was not.

The action sequence began 1000 ms after a verbal response had been detected. Every third frame of the action sequence was presented for 80 ms each, with a randomly selected sequence length of 5, 6, 7 or 8 frames (e.g. trials with a length of 8 frames showed frames 1-4-7-10-13-16-19-22). The final frame was then immediately replaced with the response frame, which showed the same scene without the moving object, creating the impression that the hand/ball had simply disappeared. Participants released the spacebar and, with their right hand, touched the screen where they thought the final position of the tip of the observed index finger was in group 1, or the final ball position in groups 2 and 3. As soon as a response was registered, the next trial began.
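The frame sampling described above can be written out as a short sketch. The step of three frames, the 80 ms frame duration and the sequence lengths come from the text; the function names are illustrative only.

```python
import random

FRAME_STEP = 3                 # every third frame of the 22-frame clip is shown
FRAME_DURATION_MS = 80         # presentation time of each shown frame
SEQUENCE_LENGTHS = (5, 6, 7, 8)


def shown_frames(sequence_length):
    """Return the clip frame numbers presented for a given sequence length."""
    return [1 + FRAME_STEP * i for i in range(sequence_length)]


def motion_duration_ms(sequence_length):
    """Total presentation time of the action sequence in milliseconds."""
    return sequence_length * FRAME_DURATION_MS


# One randomly selected sequence length per trial.
length = random.choice(SEQUENCE_LENGTHS)
frames = shown_frames(length)
```

With these values, the final shown frame is frame 13, 16, 19 or 22, which gives four possible disappearance points per video.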

Note that the presentation of every third frame of the videos resulted in illusory “apparent” motion between the steps in the trajectory62. Such non-smooth motion retains the relevant characteristics of intentional biological motion (e.g. parabolic path, bell-shaped velocity profile) and provides ideal conditions to measure predictive influences in motion perception, which are larger with apparent motion than smooth motion63. This is in line with the notion that top-down influences that govern everyday perception become apparent the more the bottom-up sensory input becomes ambiguous or uncertain (e.g. through bi-stable images64; visual noise65). For motion, non-smooth step-wise presentation is assumed to disrupt low-level motion detectors, prompting a stronger weighting of top-down influences that compensate and “fill in” the intervening steps in the trajectory30,32,66.

Results

Data filtering was identical to our original study13. In all three experimental groups, trials were excluded if the correct response procedure was not followed (e.g. lifting the spacebar too early; 3.5%), or if response initiation or execution times were shorter than 200 ms or more than 3 SDs above the sample mean (2.2%, Initiation: mean = 393.7 ms, SD = 173.3; Execution: mean = 571.9 ms, SD = 203.3). Participants were excluded if too few trials remained after trial exclusions (<50% valid trials, 3 participants), if the distance between the real and selected positions exceeded 3 SD of the sample mean (mean = 39.9 pixels, SD = 18.9, 2 participants excluded), or if the correlation between the real and selected positions was more than 3 SD below the median r value (X axis: median r = 0.940, SD = 0.041; Y axis: median r = 0.908, SD = 0.063, 4 participants excluded).
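The response-time criteria can be sketched as follows. This is a minimal illustration that assumes the cut-off is computed from the mean and SD of all response times in the sample; the example times and function names are hypothetical.

```python
from statistics import mean, stdev

MIN_TIME_MS = 200   # responses faster than this are excluded
SD_CUTOFF = 3       # responses more than 3 SDs above the sample mean are excluded
MIN_VALID_PROPORTION = 0.5


def valid_trial_mask(times_ms):
    """Flag each trial as valid (True) or excluded (False)."""
    upper = mean(times_ms) + SD_CUTOFF * stdev(times_ms)
    return [MIN_TIME_MS <= t <= upper for t in times_ms]


def keep_participant(mask):
    """Keep a participant only if at least 50% of their trials remain valid."""
    return sum(mask) / len(mask) >= MIN_VALID_PROPORTION


# Hypothetical initiation times: one anticipation (150 ms) and one very slow trial.
times = [150] + [400] * 18 + [2000]
mask = valid_trial_mask(times)
```

In the study the same logic was applied separately to initiation and execution times; a trial would be dropped if either measure failed.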

Analysis was conducted on the predictive perceptual bias by subtracting the real final coordinates of the tip of the index finger/ball from the participant’s selected coordinates on each trial. This resulted in separate “difference” scores along the X and Y axis where positive X and Y scores represented a rightward and upward displacement respectively, and negative X and Y scores represented a leftward and downward displacement respectively. A score of 0 on both axes indicated that the participant selected the real final position exactly. These difference scores were entered into a 2 × 2 × 3 ANOVA for the X and Y axis separately, with Trajectory (straight vs arched) and Efficiency (efficient vs inefficient) as repeated-measures factors, and Experimental group as a between-subjects factor.
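The difference scores can be illustrated with a brief sketch. It assumes coordinates are expressed with Y increasing upwards, as in the sign convention above; touch screens often report Y increasing downwards, so a hypothetical helper for flipping the axis on the 1080-pixel-high display is included. All names and example values are illustrative.

```python
SCREEN_HEIGHT_PX = 1080  # display height reported in the Apparatus section


def to_y_up(y_screen, screen_height=SCREEN_HEIGHT_PX):
    """Convert a top-down screen Y coordinate to a Y-up coordinate (assumed convention)."""
    return screen_height - y_screen


def difference_score(selected, real):
    """Selected minus real position; positive = rightward (X) and upward (Y)."""
    return selected[0] - real[0], selected[1] - real[1]


def mean_by_condition(trials):
    """Average (dx, dy) per condition from (condition, dx, dy) tuples."""
    grouped = {}
    for condition, dx, dy in trials:
        grouped.setdefault(condition, []).append((dx, dy))
    return {
        condition: (sum(d[0] for d in scores) / len(scores),
                    sum(d[1] for d in scores) / len(scores))
        for condition, scores in grouped.items()
    }


# Hypothetical trial: the touch lands 4 px left of and 7 px above the real position.
dx, dy = difference_score(selected=(636, 507), real=(640, 500))
```

The per-condition means of these scores are the values that would enter the ANOVA for each axis.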

The data from the original experiments13, as well as further pilot studies in our lab, have shown that expectations of efficient action primarily induce biases on the Y-axis, but not the X-axis. This is consistent with the view that rather than viewing the current trajectory relative to the trajectory that was initially predicted (e.g. an arched trajectory when an obstacle was present), expectations of action efficiency reflect expectations about how the current trajectory will further develop. In other words, when seeing a straight reach towards an obstacle, one expects the hand to be merely lifted upwards to avoid the obstacle (rather than it being also displaced backwards to its corresponding location had it followed the arched trajectory from the outset). Similarly, when seeing an arched reach over empty space one expects the current reach would straighten downwards towards the goal object (rather than also being displaced forwards to where the hand would be had it followed the alternative straight trajectory). If the current results replicate this established pattern13, displacement should therefore again primarily affect the Y axis (capturing this lifting or lowering of the hand towards the target or away from the obstacle), but not the X-axis (indexing a displacement forwards/backwards to the alternative trajectory).

Y axis

If intentionality is perceptually instantiated, we predicted (1) that inefficient actions would be perceptually “corrected” towards the more efficient action alternative, and (2) that these biases should be strongest in experimental group 1 (hand stimuli) but weaker when cues to intentionality are removed in groups 2 (balls with biological motion) and 3 (balls with non-biological motion). Indeed, the analysis revealed the predicted interaction of Trajectory and Efficiency (F(1,79) = 45.0, p < 0.001, ηp2 = 0.363), replicating our prior study13. Across groups, the disappearance points for straight trajectories were reported higher when the actions were inefficient (i.e. reaching towards an obstacle, 2.26 px) than when the actions were efficient (no obstacle, −0.967 px; t(81) = 5.46, p < 0.001, d = 0.60). Conversely, the disappearance points for arched reaches were reported lower for inefficient actions (7.87 px) than for efficient actions (11.6 px; t(81) = 4.81, p < 0.001, d = 0.53).
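The two simple effects can be combined into a single correction score. The sketch below is one plausible reading, assuming that the size of the interaction is the sum of the upward shift for inefficient straight reaches and the downward shift for inefficient arched reaches; it uses only the across-group means reported above, and the variable names are illustrative.

```python
# Across-group mean Y displacement (px) per condition, as reported in the text.
reported_y_means = {
    ("straight", "efficient"): -0.967,
    ("straight", "inefficient"): 2.26,
    ("arched", "efficient"): 11.6,
    ("arched", "inefficient"): 7.87,
}

# Straight reaches towards an obstacle are reported higher than with a clear path.
upward_shift = (reported_y_means[("straight", "inefficient")]
                - reported_y_means[("straight", "efficient")])

# Arched reaches over empty space are reported lower than over an obstacle.
downward_shift = (reported_y_means[("arched", "efficient")]
                  - reported_y_means[("arched", "inefficient")])

# Assumed definition: total correction towards the efficient trajectory.
total_correction = upward_shift + downward_shift
```

Under this assumption the across-group correction is roughly 7 px, with the straight and arched trajectories contributing similar amounts.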

Importantly, as predicted, these biases differed between experimental groups, as indicated by an interaction of Trajectory, Efficiency and Experimental group (F(2,79) = 6.47, p = 0.002, ηp2 = 0.141). Pairwise step-down comparisons showed that the interaction between Trajectory and Efficiency was smaller in the Non-biological Ball group (group 3) than in the Biological Hand group (group 1; F(1,53) = 11.7, p = 0.001, ηp2 = 0.181), and the Biological Ball group (group 2; F(1,51) = 4.00, p = 0.051, ηp2 = 0.073). No difference was found between the Biological Hand group and the Biological Ball group (F(1,54) = 2.77, p = 0.102, ηp2 = 0.049), although a Two One-Sided Tests (TOST) procedure67 indicated that the observed effect size (d = 0.45) was not significantly within the equivalence bounds of ΔL = −0.53 and ΔU = 0.53, t(53.85) = −0.31, p = 0.38 (equivalence bounds calculated as critical Cohen’s d-values from our prior study13 investigating the same effect67). When experimental groups were analysed separately, the interaction between Trajectory and Efficiency was only present for the groups seeing Hands and Balls on biological motion trajectories (Biological Hand: F(1,28) = 41.7, p < 0.001, ηp2 = 0.598; Biological Ball: F(1,26) = 21.0, p < 0.001, ηp2 = 0.447), but not in the group viewing balls on a non-biological motion trajectory (Non-biological Ball: F(1,25) = 1.20, p = 0.284, ηp2 = 0.046). Indeed, the TOST procedure indicated that the observed effect size in the latter group (d = 0.21) was significantly within the equivalence bounds of ΔL = −0.55 and ΔU = 0.55, t(25) = −1.71, p = 0.05.
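The reported effect sizes can be cross-checked against the F statistics with the standard identity for partial eta squared, ηp2 = (F × df_effect) / (F × df_effect + df_error). The sketch below applies it to the three within-group interactions reported above; the function name is illustrative.

```python
def partial_eta_squared(f_value, df_effect, df_error):
    """Partial eta squared recovered from an F statistic and its degrees of freedom."""
    return (f_value * df_effect) / (f_value * df_effect + df_error)


# Trajectory x Efficiency interaction within each group (F, df_effect, df_error).
within_group_tests = {
    "Biological Hand": (41.7, 1, 28),
    "Biological Ball": (21.0, 1, 26),
    "Non-biological Ball": (1.20, 1, 25),
}

effect_sizes = {
    group: round(partial_eta_squared(*test), 3)
    for group, test in within_group_tests.items()
}
```

The recovered values match the reported ηp2 of 0.598, 0.447 and 0.046, which makes the identity a quick consistency check on degrees of freedom elsewhere in the analysis.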

As unpredicted effects are subject to alpha inflation in an ANOVA due to multiple testing68, all additional results in the analysis of the Y axis and X axis should be interpreted with caution, and considered relative to a Bonferroni-adjusted alpha of 0.004. The analysis revealed an additional main effect of Trajectory that passed this threshold (F(1,79) = 197.5, p < 0.001, ηp2 = 0.714), with perceived disappearance points of stimuli on arched trajectories being displaced further upwards (9.76 px, t(81) = 9.72, p < 0.001, d = 1.1) than for straight trajectories (0.67 px). This bias is consistent with the well-known predictive displacement in the direction of motion (e.g. further upwards for arched trajectories, but not for straight ones), known as the representational momentum effect. Interestingly, this forward displacement again differed between experimental groups, as indicated by an interaction of Trajectory and Experimental group that passed corrected thresholds (F(2,79) = 40.4, p < 0.001, ηp2 = 0.506). Direct comparisons showed that the upwards displacements for arched trajectories were larger in the Non-biological Ball group (group 3) than the Biological Ball group (group 2; F(1,51) = 12.9, p = 0.001, ηp2 = 0.203), which in turn were larger than in the Biological Hand group (group 1; F(1,54) = 31.9, p < 0.001, ηp2 = 0.371). When analysing each experimental group separately, the upwards shift of straight trajectories was only present with ball stimuli, both when following biological (F(1,26) = 100.5, p < 0.001, ηp2 = 0.794) and non-biological trajectories (F(1,25) = 147.7, p < 0.001, ηp2 = 0.855), but not with moving hands (F(1,28) = 2.35, p = 0.136, ηp2 = 0.077). While not explicitly predicted, these displacements may reflect further changes to motion prediction depending on the presence of intentional cues. Balls, especially those that do not follow a biological motion trajectory, would be expected to continue on their upwards path, but hands would not when the goal of the reach is located towards the bottom, such as here. Nevertheless, due to the post-hoc nature of these findings, they should be treated with caution.

X axis

We did not have any prediction for the X axis. All effects are therefore subject to alpha inflation in an ANOVA68 and should be interpreted with caution, relative to a Bonferroni-adjusted alpha of 0.004. A main effect of Trajectory (F(1,79) = 199.0, p < 0.001, ηp2 = 0.716) passed this threshold, and was further qualified by an interaction of Trajectory and Experimental group (F(2,79) = 112.9, p < 0.001, ηp2 = 0.741). As can be seen in Fig. 2, perceptual judgments of hands – but not balls – were generally biased leftwards on arched trajectories and rightwards on straight trajectories. This difference replicates previous results13 and simply reflects stimulus differences between the hand shapes of the naturally recorded reaches on straight and arched trajectories, specifically the further rightwards centre of gravity for hands on straight trajectories, which biases location judgments69. No other effects passed the Bonferroni-adjusted threshold of 0.004. Specifically, there was no main effect of Efficiency (F(1,79) = 4.66, p = 0.034, ηp2 = 0.056), no interaction between Efficiency and Experimental group (F(2,79) = 5.42, p = 0.006, ηp2 = 0.121), no interaction between Trajectory and Efficiency (F(1,79) = 5.15, p = 0.026, ηp2 = 0.061) and no three-way interaction between Trajectory, Efficiency and Experimental group (F(2,79) = 1.25, p = 0.293, ηp2 = 0.031).
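The screening of unpredicted effects against the adjusted threshold can be made explicit with a small sketch. It uses only the p values and the alpha of 0.004 reported above; the dictionary keys are shorthand labels chosen for illustration.

```python
BONFERRONI_ALPHA = 0.004  # adjusted threshold stated in the text

# Exact p values reported for the remaining X-axis effects.
x_axis_p_values = {
    "Efficiency": 0.034,
    "Efficiency x Group": 0.006,
    "Trajectory x Efficiency": 0.026,
    "Trajectory x Efficiency x Group": 0.293,
}

# An effect is only interpreted if its p value falls below the adjusted alpha.
passes_threshold = {
    effect: p < BONFERRONI_ALPHA for effect, p in x_axis_p_values.items()
}

# Effects that would have counted as significant at the conventional 0.05 level.
nominal_only = [
    effect for effect, p in x_axis_p_values.items()
    if BONFERRONI_ALPHA <= p < 0.05
]
```

Three of these effects fall below 0.05 but above the adjusted alpha, which is why they are not interpreted further.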

The Trajectory X Efficiency interactions for the Biological Hand (A), Biological Ball (B), and Non-biological Ball (C) groups. The difference between the real final position and the selected final position is plotted for the X axis and Y axis. The real final position on any given trial is at point 0,0, as indicated on each plot. Panel D depicts a comparison of the size of the Y axis interaction in pixels, equivalent to the total amount by which inefficient actions were corrected towards a more efficient trajectory. Error bars depict 95% confidence intervals.

Testing for general differences in attention between groups

In an exploratory analysis, we tested whether the observed differences between groups can be explained by more general differences in attention towards the biological and non-biological stimuli. In particular, it is well-established that agentive stimuli with a biological motion profile attract attention70,71,72. To ensure that our results cannot be explained simply by more attentive perception of the more biological stimuli, we used the across-trial correlations between actual disappearance points and participants’ judgments that we used to identify participants that did not follow the task (i.e. if the reported x coordinates did not bear enough relationship to the actual coordinates). If participants attend more strongly to biological stimuli than to non-biological stimuli, one would expect their judgements to be more accurate and to more closely follow what was observed, resulting in smaller deviations for biological hand stimuli than for the other, less intentional stimulus types. We found no evidence for this prediction. While these correlations were generally high, they were, if anything, higher in the ball conditions in which participants’ judgments are less affected by their expectations (Hands, mean x r = 0.91, mean y r = 0.88; biological ball, mean x r = 0.95, mean y r = 0.93; non-biological ball, mean x r = 0.92, mean y r = 0.90). While this runs counter to the argument for decreased attention in the non-biological conditions, it is fully in line with our proposal of a stronger reliance on prior expectations as soon as intentions can be attributed to these stimuli. Indeed, as predicted from this hypothesis, participants’ across-trial correlations between actual and selected coordinates correlated negatively with how much they are affected by their expectations (r = −0.30, p = 0.006), even when gross between-groups differences are factored out via z standardization in each group (r = −0.31, p = 0.005). Thus, across all participants in the three groups, differences in the ability to track the actual disappearance points do not provide evidence for higher attention in the hand conditions but show, if anything, better accuracy in the non-biological groups, which can be explained by an (over-) reliance on prior expectations for stimuli that provide intentional cues.
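The within-group standardisation step can be sketched as follows. This is a minimal illustration, assuming that both measures are z-scored against their own group's mean and SD before being correlated across all participants; the data are invented and the function names are hypothetical.

```python
from statistics import mean, stdev


def pearson_r(xs, ys):
    """Pearson correlation between two equally long lists."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


def z_within_group(values, groups):
    """z-standardise each value relative to its own group's mean and SD."""
    by_group = {}
    for value, group in zip(values, groups):
        by_group.setdefault(group, []).append(value)
    stats = {g: (mean(vs), stdev(vs)) for g, vs in by_group.items()}
    return [(v - stats[g][0]) / stats[g][1] for v, g in zip(values, groups)]


# Invented per-participant data: tracking accuracy (r) and size of the efficiency bias (px).
groups = ["hand"] * 3 + ["bio_ball"] * 3 + ["nonbio_ball"] * 3
tracking = [0.88, 0.91, 0.93, 0.92, 0.95, 0.96, 0.89, 0.91, 0.94]
bias = [9.0, 7.5, 6.0, 5.0, 3.5, 3.0, 2.0, 1.0, 0.5]

r_raw = pearson_r(tracking, bias)
r_within = pearson_r(z_within_group(tracking, groups), z_within_group(bias, groups))
```

Standardising within groups removes differences in group means, so any remaining correlation reflects the relationship between tracking accuracy and bias among participants of the same group.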

Discussion

Previous studies have shown that perceptual representations of observed actions are predictively biased towards the goals attributed to them37,41,42,46,47,48 and that these predictions are informed by the assumption of efficient action, reflecting the specific trajectories that would allow an actor to efficiently reach the inferred goal13. To investigate if these prior expectations emerge from assumptions about action intentionality, we asked participants to watch moving stimuli and to accurately report the object’s last seen position after it suddenly disappeared. We tested whether perceptual reports would again be predictively biased towards the expected trajectory13,37,41,42 but varied whether the stimulus was a hand with biological motion kinematics (i.e. bell-shaped velocity profile of reaching60), a non-agentive ball that travelled the same biological motion trajectory as the hand, or a ball travelling a non-biological trajectory.

Replicating our prior studies, perceptual reports of hand disappearance points were not veridical, but “corrected” towards the expected action kinematics of a rational, efficient actor. The disappearance points of hands reaching straight towards an obstacle were reported higher than if the path was clear. Similarly, the disappearance point of arched reaches was reported lower if there was no obstacle to reach across, compared to when there was an obstacle. Importantly, our new data now show that these biases towards efficient action depend on cues to intentionality. The biases were numerically reduced when participants watched a non-intentional object – a ball – travel on the same biological motion trajectory, starting slowly and speeding up along the way, as if self-propelled. They were almost completely eliminated when the same ball was seen travelling with a non-biological trajectory that nevertheless traversed, on average, the same path of motion as the hands, but did not show the characteristic bell-shaped velocity profile of goal-directed reaches60.

These results confirm first that, as in our prior studies, observers predict the ideal action trajectory that a rational actor who is fully aware of all relevant environmental constraints would take. Second, they show that these predictions influenced the perceptual judgments of observed actions, subtly biasing them towards the most efficient trajectory. These findings are therefore in line with predictive processing models of social perception12,15,16,17,26,41,42, which assume that the perceptual experience of others’ actions emerges from an integration of bottom-up sensory information and prior assumptions about others’ goals and how they would (best) realise them. Our data now show, third, that when observing the behaviour of others these predictions of efficient action depend on bottom-up cues to intentionality derived from the object’s semantics and its trajectory and motion profile. Both types of cues have been previously identified as a basis for attributing intentionality to observed agents in children55,56,57,58. The finding that these cues also modulate predictive biases towards efficient action in adult action observation directly supports the proposal that these predictions emerge from the attribution of intention to the observed actions13,37,41,42, which then inform their perceptual representation.

During everyday action observation these top-down influences can fulfil several important functions. First, they can disambiguate perception by compensating for the perceptual “blurring” during motion perception (i.e. motion sharpening28,29), or filling in missing steps of the input30. Second, they can support planning of one’s own actions, allowing them to be coordinated with others’ future behaviour or the end-state of their actions73. Finally, they can be compared to actual behaviour, triggering revisions of prior assumptions if prediction errors become too large18,20, signalling, for example, that a behaviour may not be intentional after all, or that the actor is not aware of all relevant environmental constraints (e.g., they may not have seen an obstacle). As such, they may underlie the proposed link between teleological perception of others’ behaviour and more sophisticated theory of mind and mentalizing processes3.

Further work now needs to resolve via which mechanisms cues of intentionality induce predictive biases towards efficient action. One possibility is that the biases emerge via predictive mechanisms in one’s own motor system14,15,16,74,75. On such views, people make higher-level “cognitive” attributions of intentions of others and then feed these goals into their own motor system to predict the kinematics they would need to achieve if they were in the actor’s place. Indeed, the perceptual effects observed here bear a striking similarity to motoric effects that can be measured when people watch others’ behaviour. Both behavioural and neuroimaging studies suggest that, during action observation, one’s own motor system does not only mirror the actually seen behaviour (e.g. a finger being depressed) but also the behaviour that is only predicted from the goals attributed to the actor, even if it is not actually observed24,26 (e.g., finger held up by a clamp76). Even if one watches an inanimate ball that one has experience of controlling oneself, one’s motor behaviour subtly captures both the ball’s actual trajectory and the trajectory one intended for it to travel on77,78. These motoric changes might therefore index the recruitment of such predictive (forward modelling) mechanisms that have evolved for the control of one’s own actions but are applied to the actions of others.

An alternative possibility is that attributions of intentionality are made within the (higher-level) perceptual system itself. It is well-known that the perceptual system itself can make sophisticated “unconscious inferences” about objects, extracting, for example, the real colour of a stimulus by subtracting out cues to shading and illumination79. In the same way, the perceptual system could use object and motion information (e.g., balls vs. hands; biological vs. non-biological motion profiles) to make inferences about the intentionality of a moving object80. Indeed, several imaging studies suggest that such cues to intentionality act on lower-level regions within higher-level visual cortex, such as the superior temporal sulcus81,82. Moreover, it is well known that children can attribute intentionality to stimuli which are unlikely to engender motor activation, such as abstract geometric shapes or biomechanically impossible actions83,84, or that they process action efficiency before they have competence in the observed action85,86. Local interactions within the perceptual system could explain such observations. On such views, the motoric activation measured during action observation described above does not reflect the origin of the perceptual effects, but a mere passive “motor resonance” that instead captures the changes to the action’s perceptual representation that have already occurred.

While we are sympathetic to both explanations12, and we do not deem them mutually exclusive, our prior data seem to be more consistent with the latter, perceptual locus of effects. In our original study42, we observed that while attributing goals (e.g. to reach or withdraw) to others reliably biased perceptual measures towards these goals, the same was not true when these action possibilities were motorically activated (i.e. by asking participants to make a forward or backwards movement with their own hand). While this conclusion is certainly preliminary, and needs to be supported by further studies, it makes a strong causal role of motoric processes unlikely.

Another question is how the present effects on perceptual judgments emerge. Several studies, both psychophysical and based on neuroimaging, have shown that predictions can exert downstream effects on early perceptual processes, across different modalities (e.g., vision30,33, audition22), providing sensory “templates” of expected stimulation33, or filling in missing information during apparent motion30,34. Others, however, argue that expectations influence primarily decision-related processes that integrate bottom-up with top-down information on all levels of the hierarchy87,88, or that they reflect attentional modulations of the response properties of neurons in early sensory areas89,90. Still others argue that many of the psychophysical effects of expectation may in fact reflect testing artefacts or demand effects, when participants realise what is being tested91,92.

While the precise mechanism has to be confirmed, several aspects of prior studies13 imply a role in the action’s perceptual representation. First, when asked during piloting of the original set of studies, participants were unaware of the experimental hypotheses, arguing against demand effects. Second, the effects were present already very briefly (250 ms) after action offset, in psychophysical probe judgment tasks13,37,41,42 (for a review of similar findings in non-biological motion perception, see40) that have been shown to be relatively robust against cognitive control processes93,94. Third, and most importantly, the biases towards efficient action were disrupted by brief (560 ms) dynamic visual noise masks that interfere with the re-entrant feedback from higher cortical areas to visual cortex that is required for the stabilisation of percepts for conscious access, during both perception49,50,95,96 and imagery97. The observed biases in perceptual judgments are therefore unlikely to stem from unspecific perceptual changes in memory or motor control92 (see98 for an example of perceptual changes in action memory). Instead, we propose that they play a role in ongoing motion perception, emerging from the recurrent interactions between lower and higher visual regions involved in stabilising percepts and compensating for the substantial blurring during motion perception.

Conclusions

The principle of efficient action allows observers to predict ideal reference trajectories that intentional actions will follow, given that the agent is fully aware of all relevant environmental constraints. The data presented here confirm that these predictions are at least partially perceptually represented and influence perceptual judgments of others’ actions, biasing them towards these expectations. They show that these predictions emerge from attributions of intentionality to the observed actor, triggered by the perception of biological “agentive” objects and kinematics that follow biological motion profiles.

Data Availability

Data, code and materials are available at https://osf.io/x53uj/.

References

Baillargeon, R., Scott, R. M. & Bian, L. Psychological Reasoning in Infancy. Annual Review of Psychology 67, 159–186, https://doi.org/10.1146/annurev-psych-010213-115033 (2016).

Baker, C. L., Saxe, R. & Tenenbaum, J. B. Action understanding as inverse planning. Cognition 113, 329–349, https://doi.org/10.1016/j.cognition.2009.07.005 (2009).

Csibra, G. & Gergely, G. ‘Obsessed with goals’: Functions and mechanisms of teleological interpretation of actions in humans. Acta Psychologica 124, 60–78, https://doi.org/10.1016/j.actpsy.2006.09.007 (2007).

Gergely, G. & Csibra, G. Teleological reasoning in infancy: the naïve theory of rational action. Trends in Cognitive Sciences 7, 287–292, https://doi.org/10.1016/s1364-6613(03)00128-1 (2003).

Dennett, D. C. The intentional stance, (MIT Press, 1987).

Hunnius, S. & Bekkering, H. What are you doing? How active and observational experience shape infants’ action understanding. Philosophical Transactions of the Royal Society B: Biological Sciences 369, 20130490–20130490, https://doi.org/10.1098/rstb.2013.0490 (2014).

Gergely, G., Bekkering, H. & Király, I. Developmental psychology: Rational imitation in preverbal infants. Nature 415, 755–755, https://doi.org/10.1038/415755a (2002).

Gergely, G., Nádasdy, Z., Csibra, G. & Bíró, S. Taking the intentional stance at 12 months of age. Cognition 56, 165–193, https://doi.org/10.1016/0010-0277(95)00661-h (1995).

Liu, S. & Spelke, E. S. Six-month-old infants expect agents to minimize the cost of their actions. Cognition 160, 35–42, https://doi.org/10.1016/j.cognition.2016.12.007 (2017).

Rochat, M. J., Serra, E., Fadiga, L. & Gallese, V. The Evolution of Social Cognition: Goal Familiarity Shapes Monkeys’ Action Understanding. Current Biology 18, 227–232, https://doi.org/10.1016/j.cub.2007.12.021 (2008).

Wellman, H. M. & Brandone, A. C. Early intention understandings that are common to primates predict children’s later theory of mind. Current Opinion in Neurobiology 19, 57–62, https://doi.org/10.1016/j.conb.2009.02.004 (2009).

Bach, P. & Schenke, K. C. Predictive social perception: Towards a unifying framework from action observation to person knowledge. Social and Personality Psychology Compass 11, e12312, https://doi.org/10.1111/spc3.12312 (2017).

Hudson, M., McDonough, K. L., Edwards, R. & Bach, P. Perceptual Teleology: Expectations of Action Efficiency Bias Social Perception. Proceedings of the Royal Society: B. 20180638, https://doi.org/10.1098/rspb.2018.0638 (2018).

Csibra, G. In Sensorimotor foundations of higher cognition. Attention and performance XXII (eds P. Haggard, Y. Rosetti, & M. Kawato) 435–459 (Oxford University Press, 2008).

Kilner, J. M., Friston, K. J. & Frith, C. D. The mirror-neuron system: a Bayesian perspective. NeuroReport 18, 619–623, https://doi.org/10.1097/wnr.0b013e3281139ed0 (2007).

Kilner, J. M., Friston, K. J. & Frith, C. D. Predictive coding: an account of the mirror neuron system. Cognitive Processing 8, 159–166, https://doi.org/10.1007/s10339-007-0170-2 (2007).

Zaki, J. Cue integration: A common framework for social cognition and physical perception. Perspectives on Psychological Science 8, 296–312, https://doi.org/10.1177/1745691613475454 (2013).

Clark, A. Whatever next? Predictive brains, situated agents, and the future of cognitive science. Behavioral and Brain Sciences 36, 181–204, https://doi.org/10.1017/s0140525x12000477 (2013).

Friston, K. & Kiebel, S. Cortical circuits for perceptual inference. Neural Networks 22, 1093–1104, https://doi.org/10.1016/j.neunet.2009.07.023 (2009).

Hohwy, J. The Predictive Mind. (Oxford University Press, 2013).

Schlaffke, L. et al. The brain’s dress code: How The Dress allows to decode the neuronal pathway of an optical illusion. Cortex 73, 271–275, https://doi.org/10.1016/j.cortex.2015.08.017 (2015).

Kondo, H. M., Farkas, D., Denham, S. L., Asai, T. & Winkler, I. Auditory multistability and neurotransmitter concentrations in the human brain. Philosophical Transactions of the Royal Society B: Biological Sciences 372, 20160110, https://doi.org/10.1098/rstb.2016.0110 (2017).

Adams, W. J., Graf, E. W. & Ernst, M. O. Experience can change the ‘light-from-above’ prior. Nature Neuroscience 7, 1057–1058, https://doi.org/10.1038/nn1312 (2004).

Bach, P., Bayliss, A. P. & Tipper, S. P. The predictive mirror: interactions of mirror and affordance processes during action observation. Psychonomic Bulletin & Review 18, 171–176, https://doi.org/10.3758/s13423-010-0029-x (2011).

Bach, P., Knoblich, G., Gunter, T. C., Friederici, A. D. & Prinz, W. Action Comprehension: Deriving Spatial and Functional Relations. Journal of Experimental Psychology: Human Perception and Performance 31, 465–479, https://doi.org/10.1037/0096-1523.31.3.465 (2005).

Bach, P., Nicholson, T. & Hudson, M. The affordance-matching hypothesis: how objects guide action understanding and prediction. Frontiers in Human Neuroscience 8, https://doi.org/10.3389/fnhum.2014.00254 (2014).

Bach, P., Nicholson, T. & Hudson, M. Pattern completion does not negate matching: a response to Uithol and Maranesi. Frontiers in Human Neuroscience 9, https://doi.org/10.3389/fnhum.2015.00685 (2015).

Bex, P. J., Edgar, G. K. & Smith, A. T. Sharpening of drifting, blurred images. Vision Research 35, 2539–2546, https://doi.org/10.1016/0042-6989(95)00060-d (1995).

Hammett, S. T. Motion blur and motion sharpening in the human visual system. Vision Research 37, 2505–2510, https://doi.org/10.1016/s0042-6989(97)00059-x (1997).

Muckli, L., Kohler, A., Kriegeskorte, N. & Singer, W. Primary Visual Cortex Activity along the Apparent-Motion Trace Reflects Illusory Perception. PLoS Biology 3, e265, https://doi.org/10.1371/journal.pbio.0030265 (2005).

Shiffrar, M. & Freyd, J. J. Timing and Apparent Motion Path Choice With Human Body Photographs. Psychological Science 4, 379–384, https://doi.org/10.1111/j.1467-9280.1993.tb00585.x (1993).

Yantis, S. & Nakama, T. Visual interactions in the path of apparent motion. Nature Neuroscience 1, 508–512, https://doi.org/10.1038/2226 (1998).

Ekman, M., Kok, P. & de Lange, F. P. Time-compressed preplay of anticipated events in human primary visual cortex. Nature Communications 8, 15276, https://doi.org/10.1038/ncomms15276 (2017).

Avenanti, A., Annella, L., Candidi, M., Urgesi, C. & Aglioti, S. M. Compensatory plasticity in the action observation network: virtual lesions of STS enhance anticipatory simulation of seen actions. Cerebral cortex 23, 570–580 (2012).

Pozzo, T., Papaxanthis, C., Petit, J. L., Schweighofer, N. & Stucchi, N. Kinematic features of movement tunes perception and action coupling. Behavioural Brain Research 169, 75–82, https://doi.org/10.1016/j.bbr.2005.12.005 (2006).

Saunier, G., Papaxanthis, C., Vargas, C. D. & Pozzo, T. Inference of complex human motion requires internal models of action: behavioral evidence. Experimental Brain Research 185, 399–409, https://doi.org/10.1007/s00221-007-1162-2 (2008).

Hudson, M., Bach, P. & Nicholson, T. You said you would! The predictability of other’s behavior from their intentions determines predictive biases in action perception. Journal of Experimental Psychology: Human Perception and Performance 44, 320–335, https://doi.org/10.1037/xhp0000451 (2017).

Freyd, J. J. & Finke, R. A. Representational momentum. Journal of Experimental Psychology: Learning, Memory, and Cognition 10, 126–132, https://doi.org/10.1037//0278-7393.10.1.126 (1984).

Hubbard, T. L. Representational momentum and related displacements in spatial memory: A review of the findings. Psychonomic Bulletin & Review 12, 822–851, https://doi.org/10.3758/bf03196775 (2005).

Hubbard, T. L. The varieties of momentum-like experience. Psychological Bulletin 141, 1081–1119, https://doi.org/10.1037/bul0000016 (2015).

Hudson, M., Nicholson, T., Ellis, R. & Bach, P. I see what you say: Prior knowledge of other’s goals automatically biases the perception of their actions. Cognition 146, 245–250, https://doi.org/10.1016/j.cognition.2015.09.021 (2016).

Hudson, M., Nicholson, T., Simpson, W. A., Ellis, R. & Bach, P. One step ahead: The perceived kinematics of others’ actions are biased toward expected goals. Journal of Experimental Psychology: General 145, 1–7, https://doi.org/10.1037/xge0000126 (2016).

Kessler, K., Gordon, L., Cessford, K. & Lages, M. Characteristics of motor resonance predict the pattern of flash-lag effects for biological motion. PloS one 5, e8258 (2010).

Jordan, J. S. & Hunsinger, M. Learned patterns of action-effect anticipation contribute to the spatial displacement of continuously moving stimuli. Journal of Experimental Psychology: Human Perception and Performance 34, 113–124, https://doi.org/10.1037/0096-1523.34.1.113 (2008).

Hubbard, T. L. Cognitive representation of motion: Evidence for friction and gravity analogues. Journal of Experimental Psychology: Learning, Memory, and Cognition 21, 241–254, https://doi.org/10.1037//0278-7393.21.1.241 (1995).

Hudson, M., Burnett, H. G. & Jellema, T. Anticipation of Action Intentions in Autism Spectrum Disorder. Journal of Autism and Developmental Disorders 42, 1684–1693, https://doi.org/10.1007/s10803-011-1410-y (2012).

Hudson, M. & Jellema, T. Resolving ambiguous behavioral intentions by means of involuntary prioritization of gaze processing. Emotion 11, 681–686, https://doi.org/10.1037/a0023264 (2011).

Hudson, M., Liu, C. H. & Jellema, T. Anticipating intentional actions: The effect of eye gaze direction on the judgment of head rotation. Cognition 112, 423–434, https://doi.org/10.1016/j.cognition.2009.06.011 (2009).

Fahrenfort, J. J., Scholte, H. S. & Lamme, V. A. F. Masking Disrupts Reentrant Processing in Human Visual Cortex. Journal of Cognitive Neuroscience 19, 1488–1497, https://doi.org/10.1162/jocn.2007.19.9.1488 (2007).

Lamme, V. A. F., Zipser, K. & Spekreijse, H. Masking Interrupts Figure-Ground Signals in V1. Journal of Cognitive Neuroscience 14, 1044–1053, https://doi.org/10.1162/089892902320474490 (2002).

Johnson, S. C. The recognition of mentalistic agents in infancy. Trends in Cognitive Sciences 4, 22–28, https://doi.org/10.1016/s1364-6613(99)01414-x (2000).

Johnson, S. C. Detecting agents. Philosophical Transactions of the Royal Society B: Biological Sciences 358, 549–559, https://doi.org/10.1098/rstb.2002.1237 (2003).

Sartori, L., Becchio, C. & Castiello, U. Cues to intention: The role of movement information. Cognition 119, 242–252, https://doi.org/10.1016/j.cognition.2011.01.014 (2011).

Falck-Ytter, T., Gredebäck, G. & von Hofsten, C. Infants predict other people’s action goals. Nature Neuroscience 9, 878–879, https://doi.org/10.1038/nn1729 (2006).

Leslie, A. M. In Mapping the mind: Domain specificity in cognition and culture (eds L. Hirschfeld & S. Gelman) 119–148 (Cambridge University Press, 1994).

Morewedge, C. K., Preston, J. & Wegner, D. M. Timescale bias in the attribution of mind. Journal of Personality and Social Psychology 93, 1–11, https://doi.org/10.1037/0022-3514.93.1.1 (2007).

Rakison, D. H. & Poulin-Dubois, D. Developmental origin of the animate-inanimate distinction. Psychological Bulletin 127, 209–228, https://doi.org/10.1037//0033-2909.127.2.209 (2001).

Baron-Cohen, S. Mindblindness: An essay on autism and theory of mind. (MIT press, 1997).

Press, C. Action observation and robotic agents: Learning and anthropomorphism. Neuroscience & Biobehavioral Reviews 35, 1410–1418 (2011).

Beggs, W. D. A. & Howarth, C. I. The Movement of the Hand towards a Target. Quarterly Journal of Experimental Psychology 24, 448–453, https://doi.org/10.1080/14640747208400304 (1972).

Luo, Y. & Baillargeon, R. Can a Self-Propelled Box Have a Goal?: Psychological Reasoning in 5-Month-Old Infants. Psychological Science 16, 601–608, https://doi.org/10.1111/j.1467-9280.2005.01582.x (2005).

Wertheimer, M. Experimentelle Studien über das Sehen von Bewegung. Zeitschrift für Psychologie 61 (1912).

Kerzel, D. Mental extrapolation of target position is strongest with weak motion signals and motor responses. Vision Research 43, 2623–2635 (2003).

Hohwy, J., Roepstorff, A. & Friston, K. Predictive coding explains binocular rivalry: An epistemological review. Cognition 108, 687–701 (2008).

Gordon, N., Koenig-Robert, R., Tsuchiya, N., van Boxtel, J. J. & Hohwy, J. Neural markers of predictive coding under perceptual uncertainty revealed with Hierarchical Frequency Tagging. Elife 6, e22749 (2017).

Kok, P., Brouwer, G. J., van Gerven, M. A. & de Lange, F. P. Prior expectations bias sensory representations in visual cortex. Journal of Neuroscience 33, 16275–16284 (2013).

Lakens, D. Equivalence tests: a practical primer for t tests, correlations, and meta-analyses. Social Psychological and Personality Science 8, 355–362 (2017).

Cramer, A. O. J. et al. Hidden multiplicity in exploratory multiway ANOVA: Prevalence and remedies. Psychonomic Bulletin & Review 23, 640–647, https://doi.org/10.3758/s13423-015-0913-5 (2015).

Coren, S. & Hoenig, P. Effect of Non-Target Stimuli upon Length of Voluntary Saccades. Perceptual and Motor Skills 34, 499–508, https://doi.org/10.2466/pms.1972.34.2.499 (1972).

Pratt, J., Radulescu, P. V., Guo, R. M. & Abrams, R. A. It’s alive! Animate motion captures visual attention. Psychological science 21, 1724–1730 (2010).

Guerrero, G. & Calvillo, D. P. Animacy increases second target reporting in a rapid serial visual presentation task. Psychonomic bulletin & review 23, 1832–1838 (2016).

Lindemann, O., Nuku, P., Rueschemeyer, S.-A. & Bekkering, H. Grasping the other’s attention: The role of animacy in action cueing of joint attention. Vision Research 51, 940–944 (2011).

Sebanz, N., Bekkering, H. & Knoblich, G. Joint action: bodies and minds moving together. Trends in Cognitive Sciences 10, 70–76, https://doi.org/10.1016/j.tics.2005.12.009 (2006).

Ansuini, C., Cavallo, A., Bertone, C. & Becchio, C. Intentions in the Brain. The Neuroscientist 21, 126–135, https://doi.org/10.1177/1073858414533827 (2015).

Otten, M., Seth, A. K. & Pinto, Y. A social Bayesian brain: How social knowledge can shape visual perception. Brain and Cognition 112, 69–77, https://doi.org/10.1016/j.bandc.2016.05.002 (2017).

Liepelt, R., Von Cramon, D. Y. & Brass, M. How do we infer others’ goals from non-stereotypic actions? The outcome of context-sensitive inferential processing in right inferior parietal and posterior temporal cortex. Neuroimage 43, 784–792 (2008).

De Maeght, S. & Prinz, W. Action induction through action observation. Psychological Research 68, 97–114 (2004).

Knuf, L., Aschersleben, G. & Prinz, W. An analysis of ideomotor action. Journal of Experimental Psychology: General 130, 779 (2001).

Bloj, M. G., Kersten, D. & Hurlbert, A. C. Perception of three-dimensional shape influences colour perception through mutual illumination. Nature 402, 877–879, https://doi.org/10.1038/47245 (1999).

Scholl, B. J. & Gao, T. In Social Perception: Detection and interpretation of animacy, agency, and intention, (eds M.D. Rutherford & V. A. Kuhlmeier) 197–230 (The MIT Press, 2013).

Grossman, E. et al. Brain Areas Involved in Perception of Biological Motion. Journal of Cognitive Neuroscience 12, 711–720, https://doi.org/10.1162/089892900562417 (2000).

Saygin, A. P. Superior temporal and premotor brain areas necessary for biological motion perception. Brain 130, 2452–2461, https://doi.org/10.1093/brain/awm162 (2007).

Heider, F. & Simmel, M. An experimental study of apparent behavior. The American journal of psychology 57, 243–259 (1944).

Southgate, V., Johnson, M. H. & Csibra, G. Infants attribute goals even to biomechanically impossible actions. Cognition 107, 1059–1069 (2008).

Gredebäck, G. & Melinder, A. Infants’ understanding of everyday social interactions: A dual process account. Cognition 114, 197–206 (2010).

Sodian, B., Schoeppner, B. & Metz, U. Do infants apply the principle of rational action to human agents? Infant Behavior and Development 27, 31–41 (2004).

Bang, J. W. & Rahnev, D. Stimulus expectation alters decision criterion but not sensory signal in perceptual decision making. Scientific Reports 7, https://doi.org/10.1038/s41598-017-16885-2 (2017).

Rungratsameetaweemana, N., Itthipuripat, S., Salazar, A. & Serences, J. T. Expectations do not alter early sensory processing during perceptual decision making. The Journal of Neuroscience, 3638–3617, https://doi.org/10.1523/jneurosci.3638-17.2018 (2018).

Desimone, R. & Duncan, J. Neural Mechanisms of Selective Visual Attention. Annual Review of Neuroscience 18, 193–222, https://doi.org/10.1146/annurev.neuro.18.1.193 (1995).

Serences, J. T. & Kastner, S. In Oxford Handbooks Online (Oxford University Press, 2014).

Durgin, F. H. et al. Who is being deceived? The experimental demands of wearing a backpack. Psychonomic Bulletin & Review 16, 964–969, https://doi.org/10.3758/pbr.16.5.964 (2009).

Firestone, C. & Scholl, B. J. Cognition does not affect perception: Evaluating the evidence for “top-down” effects. Behavioral and Brain Sciences 39, https://doi.org/10.1017/s0140525x15000965 (2016).

Courtney, J. R. & Hubbard, T. L. Spatial Memory and Explicit Knowledge: An Effect of Instruction on Representational Momentum. Quarterly Journal of Experimental Psychology 61, 1778–1784, https://doi.org/10.1080/17470210802194217 (2008).

Ruppel, S. E., Fleming, C. N. & Hubbard, T. L. Representational momentum is not (totally) impervious to error feedback. Canadian Journal of Experimental Psychology/Revue canadienne de psychologie expérimentale 63, 49–58, https://doi.org/10.1037/a0013980 (2009).

Kinsbourne, M. & Warrington, E. K. The Effect of an After-coming Random Pattern on the Perception of Brief Visual Stimuli. Quarterly Journal of Experimental Psychology 14, 223–234, https://doi.org/10.1080/17470216208416540 (1962).

Breitmeyer, B. & Öğmen, H. Visual masking: Time slices through conscious and unconscious vision. (Oxford University Press, 2006).

Dijkstra, N., Mostert, P., de Lange, F., Bosch, S. E. & van Gerven, M. (eLife, 2018).

Ianì, F., Mazzoni, G. & Bucciarelli, M. The role of kinematic mental simulation in creating false memories. Journal of Cognitive Psychology 30, 292–306 (2018).

Acknowledgements

This work was supported by the Economic and Social Research Council [Grant Number ES/J019178/1] awarded to Patric Bach, and a University of Plymouth doctoral student grant awarded to Katrina L. McDonough.

Author information

Contributions

K.L.M. and P.B. devised the experiments, with advice from M.H. Stimuli were created by K.L.M. and the computer program was developed by M.H. and K.L.M. Data were collected by K.L.M. Data were analysed by K.L.M. and P.B., with help from M.H. The manuscript was written by K.L.M., P.B. and M.H. All authors gave final approval for publication.

Ethics declarations

Competing Interests

The authors declare no competing interests.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

McDonough, K.L., Hudson, M. & Bach, P. Cues to intention bias action perception toward the most efficient trajectory. Sci Rep 9, 6472 (2019). https://doi.org/10.1038/s41598-019-42204-y

DOI: https://doi.org/10.1038/s41598-019-42204-y
