Abstract

It is increasingly recognized that Alzheimer’s disease (AD) exists before dementia is present and that shifts in amyloid beta occur long before clinical symptoms can be detected. Early detection of these molecular changes is a key aspect for the success of interventions aimed at slowing down rates of cognitive decline. Recent evidence indicates that of the two established methods for measuring amyloid, a decrease in cerebrospinal fluid (CSF) amyloid β1−42 (Aβ1−42) may be an earlier indicator of Alzheimer’s disease risk than measures of amyloid obtained from Positron Emission Tomography (PET). However, CSF collection is highly invasive and expensive. In contrast, blood collection is routinely performed, minimally invasive and cheap. In this work, we develop a blood-based signature that can provide a cheap and minimally invasive estimation of an individual’s CSF amyloid status using a machine learning approach. We show that a Random Forest model derived from plasma analytes can accurately predict subjects as having abnormal (low) CSF Aβ1−42 levels indicative of AD risk (0.84 AUC, 0.78 sensitivity, and 0.73 specificity). Refinement of the modeling indicates that only APOEε4 carrier status and four plasma analytes (CGA, Aβ1−42, Eotaxin 3, APOE) are required to achieve a high level of accuracy. Furthermore, we show across an independent validation cohort that individuals with predicted abnormal CSF Aβ1−42 levels transitioned to an AD diagnosis over 120 months significantly faster than those with predicted normal CSF Aβ1−42 levels and that the resulting model also validates reasonably across PET Aβ1−42 status (0.78 AUC). This is the first study to show that a machine learning approach, using plasma protein levels, age and APOEε4 carrier status, is able to predict CSF Aβ1−42 status, the earliest risk indicator for AD, with high accuracy.

Similar content being viewed by others

Introduction

Alzheimer’s disease (AD) is a terminal neurodegenerative disease that has historically been diagnosed based on “clinically significant” cognitive decline of an individual and exclusion of other conditions. However, it is increasingly recognized that AD is a decades-long neurodegenerative process, with shifts in amyloid β1–42 (Aβ1–42) providing the first indicators of disease development, long before “Alzheimer’s dementia” (significant cognitive decline) is clinically apparent1,2,3,4,5.

There is currently no cure or disease-modifying therapy for this terminal illness despite hundreds of clinical trials being conducted since 20026,7. It is hypothesized that the high failure rate of AD trials is in part due to the trials targeting AD patients with significant cognitive impairment, who are therefore in the late stages of the disease and likely have suffered a level of brain tissue loss that cannot be compensated for8. Compounding this is the discovery that many patients enrolled in clinical trials were retrospectively found to have normal levels of amyloid and hence did not have AD9, with this number as high as 20%10. Given these findings, there is a great interest in amyloid screening for clinical trial enrichment in order to recruit individuals at the earliest stages of AD, where intervention is thought to have the greatest chance of success8. It would also ensure that included individuals are amyloid positive (i.e. have abnormal levels of amyloid), a necessary precondition for the development of AD. This sort of selective screening is an important precursor for the longer-term goal of population screening for AD11.

There are currently two established methods to measure an individual’s amyloid burden: either in vivo in the form of reduced levels of Aβ1–42 in the cerebrospinal fluid (CSF) or increased uptake of radioactive tracers that bind selectively to the Aβ fibrillary aggregates by PET imaging. Unfortunately, existing methodologies for measuring an individual’s amyloid levels suffer drawbacks that limit their utility for screening. Lumbar punctures are highly invasive, with this factor alone limiting the applicability of CSF biomarkers for screening. While PET scans are less invasive, they are far more expensive and access to PET scanning facilities is limited in some regions. Despite this, many current trials that target amyloid now require positive amyloid imaging at baseline to ensure accurate diagnosis, a cost-intensive process7.

Despite their invasiveness, recent studies have found evidence that changes in CSF Aβ1–42 may indicate AD risk long before these same changes are reflected in PET Aβ imaging12,13,14. Palmqvist et al.13 have shown compelling evidence that changes in CSF Aβ1–42 occur up to a decade before the same signal is found by PET Aβ imaging. These results indicate that CSF may be a more suitable measure for early detection, whereas Aβ PET contributes independent information that is more related to disease progression and downstream pathology.

To bypass the invasiveness of CSF collection, there is a strong interest in finding blood-based markers that yield the same information about amyloid status as would be obtained from CSF. There have been a number of studies which have shown that a blood protein signature can be found that reflects AD brain pathology as measured by PET15,16,17,18,19,20,21,22,23,24. Of particular interest is the recent study by Nakamura et al.25, whereby levels of Aβ1–40, Aβ1–42 and APP669−771 in plasma, measured using specialised immunoprecipitation (IP) coupled with Matrix Assisted Laser Desorption/Ionization (MALDI) time-of-flight (TOF) mass spectrometry (MS) (henceforth referred to as IP-MALDI-TOF-MS), were shown in combination to have strong performance (>0.94 area under the receiver operating characteristic curve (AUC)) in predicting PET Aβ1–42 status across two cohorts. This combination of biomarkers was also found to be predictive of CSF Aβ1–42 status, with an AUC of 0.88 on a smaller subset of patients (n = 46). The novel IP-MALDI-TOF-MS method employed by Nakamura et al.25 is still in its infancy and it is unclear how easily this will be translated into a clinical setting. Thus, there is still strong interest in finding blood markers for CSF Aβ1–42 using alternative approaches that rely on more established assays.

Here, we evaluated the ability of proteomic and metabolomic data to predict the levels of CSF Aβ1–42 using a Random Forest (RF) approach and explore which types of measurements lead to the strongest predictive performance. We then determine the minimal set of features required to achieve comparable predictive performance. Finally, we evaluate the robustness and utility of these predictive models across a held-out validation cohort of individuals with mild cognitive impairment (MCI), demonstrating that subjects with predicted abnormal CSF Aβ1–42 levels showed a faster rate of cognitive decline (measured by the transition to a clinical AD diagnosis) than those with predicted normal CSF Aβ1–42 levels.

Methods

Overview of cohort and measurements

The Alzheimer’s Disease Neuroimaging Initiative (ADNI) is a large, multicenter, longitudinal neuroimaging study, launched in 2004 by the National Institute on Aging, the National Institute of Biomedical Imaging and Bioengineering, the Food and Drug Administration, private pharmaceutical companies, and non-profit organizations.

ADNI is a longitudinal study of older adults, designed to test whether serial magnetic resonance imaging (MRI), positron emission tomography (PET), other biological markers, and clinical and neuropsychological assessment can be combined to measure the progression of MCI and early AD.

The data used in this study were obtained from the ADNI database (http://adni.loni.usc.edu/), downloaded 10th October 2017 as the R package, ADNIMERGE.

The ADNI study protocols were approved by the institutional review boards of all participating sites (http://www.adni-info.org/) and written informed consent was obtained from all participants or authorized representatives. All the analytical methods were performed on the de-identified data and were carried out in accordance with the approved guidelines. Study inclusion criteria and definitions for each diagnosis class have been previously reported in detail26. Briefly, individuals diagnosed as AD had to meet the National Institute of Neurological and Communicative Disorders and Stroke-Alzheimer’s Disease and Related Disorders Association criteria for probable AD27. These individuals had issues with global cognition and memory function and they, or their caretakers, reported significant concerns about their memory. In contrast, individuals with MCI exhibited subjective memory loss (CDR of 0.5 and were at least one standard deviation(SD) below the normal mean of the delayed recall of the Wechsler Memory Scale Logical Memory II) but showed preserved activities of daily living, the absence of dementia and scored 24–30 on the MMSE.

Data preparation

We examined 566 individuals in the ADNI cohort who had baseline measures of age, APOEε4 carrier status, 193 protein levels (including homocysteine, Aβ1–40, and Aβ1–42) and a further 190 proteins measured on a Rules-Based Medicine (RBM) platform) and 186 LC-MS/MS metabolites and lipids. After applying previously documented quality control procedures (Supplementary Methods) and removing analytes with more than 15% missingness, 149 proteins and 138 metabolites remained. No samples were removed from the analysis as missingness levels were less than 5%. Any remaining missing data points were imputed using an unsupervised RF approach28, with the resulting 289 analytes listed in Supplementary Table 5, showing means, SD, and association across different CSF Aβ1–42 status. After quality control, a total of 566 individuals, each with measures for 289 analytes, age, and APOEε4 carrier status, were present in the ADNI cohort (Table 1).

Training and Validation cohorts

The 566 individuals in this study ranged from 54.4–89.6 years of age and could be categorized at baseline by their AD clinical diagnosis as cognitively normal (CN; n = 58), amnestic MCI (n = 396) or probable AD (n = 112). A breakdown of the demographics of the 566 individuals by baseline diagnosis and CSF availability is shown in Table 1.

This cohort was split into training and validation cohorts with 356 and 210 individuals with and without measures of CSF Aβ1–42, respectively. CSF Aβ1–42 was measured using the Luminex xMAP platform. The training set was used to build predictive models and evaluate their performance directly using the measured Aβ1–42 levels while the validation cohort was used to evaluate the generalizability and utility of the model’s predictions. For each cohort, we also considered a subset of individuals for whom Aβ1–42 status from PET was available at least one-time point (not just at baseline), either using 11C-Pittsburgh compound B (PiB) and 18F-AV-45 (florbetapir, AV45) tracers, for further validation of our modeling. Further demographic information for these cohorts can be found in Supplementary Tables 1, 2 and 3.

Binary and regression modeling tasks

The primary aim of this work was to produce a model that predicts if an individual’s CSF Aβ1–42 levels are below the recognized clinical threshold of 192 pg/ml for the Luminex platform, indicating an abnormal CSF Aβ1–42 level, and hence increased AD risk. Given the continuous CSF Aβ1–42 measures in the ADNI cohort, two approaches were considered

-

a ‘regression’ task: learning the continuous CSF Aβ1–42 levels and thresholding these post-prediction

-

a ‘binary’ task: learning the dichotomized CSF Aβ1–42 status based on clinical thresholds directly.

While both tasks result in a binary classifier, they face different trade-offs. The regression task makes use of the full information in the CSF levels but needs to learn a suitable threshold to convert its continuous predictions into suitable binary labels whereas the binary task only learns from the dichotomized CSF levels. Given these trade-offs, we have investigated both modeling approaches throughout this work.

Statistical modeling

We made use of Random Forests (RF) as the modeling approach to predict CSF Aβ1–42 levels for both the binary and regression tasks. RFs are a widely-used machine-learning ensemble method that have a number of advantages for the small sample size and disparate types of features observed in the ADNI dataset. RFs are invariant to the scale of the observed features and make few assumptions about the distributions of observed data allowing them to be applied to multiple data modalities easily. It can also detect non-additive relationships between variables without needing them to be included explicitly29.

All analysis in this work made use of the RF implementation in the R package ranger30. Each forest contained 2000 individual trees, each making use of a random selection of p3/4 features, where p was the total number of variables used in a given model. These parameter choices were based on recommendations provided in Ishwaran et al.31. All other parameters in the ranger implementation were set to their default values. To get an estimate of the performance of our models, we have made use of a nested cross-validation (CV) framework, whereby an inner CV was used to determine model parameters, and the outer CV was used to gain an estimate of the model’s performance on unseen data32. In this study, we used 3 repetitions of 3 fold CV for the inner loop and 10 repetitions of 10 fold CV for the outer loop.

As the RF used pre-determined parameter values, only a single parameter had to be determined: the threshold on the continuous regression predictions necessary to generate binary labels. This threshold was selected based on performance in the inner CV loop, using the R package OptimalCutoffs33 to evaluate six potential cutoff metrics (Supplementary Methods) and selecting the method which maximized the accuracy over all of the test folds from the inner cross validation loops. The best performing cutoff criterion was then used in the current iteration of the outer cross-validation loop and the accuracy, sensitivity, and specificity derived from this threshold was recorded for that fold. While this approach means that a different method could be used to derive the regression threshold for each fold in the outer CV loop, the resulting estimate of performance is unbiased and hence is likely to be more representative of performance on unseen data compared with selecting a threshold based on the entire set of training data.

Measures of model performance

Model performance was summarized by the mean and standard deviation of the area under the Receiver Operating Characteristic (ROC) curve (AUC), accuracy, sensitivity, and specificity from the testing performance across the different cross-validation runs. R2 values were also calculated for the regression task. Increases in AUC between models were tested for significance using a one-tailed Wilcoxon signed-rank test. Receiver operating curves were constructed by aggregating all of the test predictions from the outer cross-validation.

Evaluating the importance of different input modalities

The input variables were separated into three classes: a commonly used baseline model (B) including age and APOEε4 carrier status; Proteomics (P), which included the 146 analytes measured on the RBM panel as well as homocysteine and plasma Aβ1–40 and Aβ1–42; Metabolomics (M), including 138 metabolites and lipids. Four separate random forests were created using different subsets of these features to determine which were most useful for modeling CSF Aβ1–42. We denoted these models by the combination of features they included; for example ‘BPM’ refers to a model built using all three classes of features. The best performing model was selected for all subsequent analysis.

Discovery of the smallest set of markers needed for strong predictive performance

After evaluating the impact of the different input modalities, we determine the minimum set of individual analytes necessary to achieve high predictive performance. This was done by treating the number of included features as a parameter to be determined in our nested CV framework. Within each fold of the inner CV loop, we used a recursive feature elimination approach, ranking features according to their Variable Importance, the difference in the prediction error on the out-of-bag data when a given feature was permuted and unpermuted29 and removed the lowest ranking features in a stepwise fashion. The AUC of the resulting RF was recorded, and the procedure was repeated over increasingly smaller subsets of features until no features were left to be removed. After the inner CV loop finishes, we determine the number of features that achieved the optimal trade-off between model complexity and performance by selecting the smallest subset of features that achieved within 4% of the maximal observed AUC. A model using this subset of features was then trained on all training folds of the outer CV loop and evaluated on the test fold. Again, by determining the number of features to include within our nested cross-validation framework, we are able to determine an unbiased estimate of the model’s expected performance over unseen data.

Survival analysis

Survival analysis was conducted to determine if the rate of conversion from MCI to AD was different between those with predicted low and normal CSF Aβ1–42 levels, enabling us to determine if our predictions lead to useful clinical outcomes in the validation cohort.

Four separate analyses were performed, using the:

-

1.

measured CSF status on the training set (n = 198)

-

2.

predicted CSF status from B model, the standard baseline, in the validation set (n = 198)

-

3.

predicted CSF status from BP model, the best performing model, in the validation set (n = 198)

-

4.

predicted CSF status from BPfs model, the most parsimonious model, in the validation set (n = 198),

where BPfs is the feature selected model with the smallest set of features. For each analysis, we have examined the hazard ratios using Cox regression and used log-rank tests to compare the survival distribution of low/normal CSF Aβ1–42 stratifications in the four analyses, as well to compare equivalence between the actual and predicted stratifications.

Validation performance over PET Aβ 1–42 status

In order to further validate our model, we have examined the ability of our model to differentiate PET Aβ1–42 abnormal and normal status. While it is known that Aβ1–42 status from PET can differ from that observed in CSF, measurements from the two modalities are correlated and should be very similar for individuals who are not close to the cutoff indicating pathology. This provides analysis provides further evidence of our model’s ability to determine Aβ1–42 status in individuals in the validation cohort, where CSF measurements are not available.

Given that only a limited number of individuals had associated measures of PET imaging at baseline (n = 18 and 27 for training and validation cohorts respectively), we have made use of the earliest PET image available, leaving us with 108 and 68 individuals in the training and validation cohort to evaluate. The threshold for abnormality was defined as an SUVR of 1.5 and 1.11 for PET images using PiB and AV45 tracers respectively. The mean number of years past baseline that a scan was taken was 3.07 and 2.97 years for training and validation cohorts respectively.

The use of imaging at non-baseline times assumes that differences between the baseline and the time that the image was taken are relatively small (which may be reasonable assuming a slow rate of Aβ1–42 accumulation) and that few individuals are close to the defined threshold for abnormality. If these assumptions do not hold, it is likely to worsen predictive performance, making this analysis somewhat conservative.

Results

Models utilizing protein levels accurately predict CSF positivity

We evaluated the ability of blood-based biomarkers to predict CSF Aβ1–42 normal/abnormal status using RFs trained using different subsets of input variables, treating the modeling of CSF Aβ as either a regression or binary task. Summaries of the performance metrics from the resulting models are shown in Table 2 with their corresponding ROC curves shown in Fig. 1.

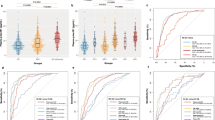

ROC curves comparing different sets of features to determine predictive value for the (a) regression, and (b) binary tasks. Different colours of lines correspond to different feature sets, (B) baseline model (age and APOEε4 carrier status), (P) Proteomics, (M) Metabolomics, with corresponding AUCs shown in the legend under each plot. The model BPfs in subplot (a) indicates the performance of the RF using feature selection.

We observed strong overall predictive performance for both the regression and binary tasks within our cross-validation framework. All sets of features outperformed the base model of age and APOEε4 carrier status with BP based models leading to the highest AUC of 0.84 and 0.83 for the regression and binary tasks respectively. The standard deviation for the AUC was relatively high (7–8%), likely due to the noise inherent in both the analytes being used for prediction as well as in the CSF Aβ1–42 measurements.

The BP models resulted in a mean R2 of 0.29 for the regression task. The automatically derived threshold for this regression RF yielded a mean accuracy of 0.77, with a sensitivity of 0.78 and a specificity of 0.73. Across the 100 cross-validation runs, the chosen threshold ranged from 164 pg/ml to 194 pg/ml with a median of 185 pg/ml. Similar AUC and accuracy could be observed for learning the dichotomized CSF labels directly (e.g. 0.83 AUC, 0.77 accuracy for the BP model). For the binary task, a slight drop in both AUC, as well as an altered trade-off between sensitivity and specificity, was observed across all different feature sets compared to the regression task. Given this, we chose to focus on the regression model for much of the follow-up analysis.

While all models making use of blood analytes outperformed the base model of age and APOEε4, models that made use of the protein level measurements consistently achieved the strongest predictive performance, whereas metabolites appeared to be of limited utility. In both the regression and binary tasks, models containing metabolites and proteomic data (BPM) achieved equivalent or worse AUCs than models containing only the proteomic data (BP). Furthermore, we observed that the use of the base features and metabolites alone (BM) lead to decreased performance compared to the baseline model, indicating that the set of measured metabolites may have contributed little predictive information or may have been too noisy to be useful for predicting CSF status. These findings are in contrast to the previously reported utility of metabolites in predicting PET Aβ positivity22.

While the results presented in this section include clinically diagnosed AD individuals, who are almost all CSF Aβ positive, it is worth considering only ‘pre-clinical’ individuals as this may be more relevant for selective screening in drug trials. Evaluating our model’s performance on CN and MCI individuals only, we find that similarly strong predictive performance can be obtained (Supplementary Table 4, Fig. 1, 0.80 AUC, 0.77 accuracy for the BP model) supporting our primary findings that plasma protein levels can be utilized to predict amyloid pathology status.

To ensure that our imputation procedure did not bias our results, we also built similar models using only complete cases after applying more stringent quality control (removal of plasma analytes where more than 1% of measurements were missing), obtaining similar AUCs of 0.81 for the regression and binary tasks (Supplementary Fig. 2).

Strong predictive performance is maintained using only four proteins

The models described so far used all (>140) available features in this dataset. In practice, measuring hundreds of analytes is costly, negating a key advantage of using blood biomarkers for screening. Given this, we have applied feature selection to the BP regression model to identify the smallest number of features that still achieved high predictive performance. Within cross-validation, we find that the average performance of this feature selection approach, denoted BPfs, yields an AUC, sensitivity, and specificity of 0.81, 0.81 and 0.63. The number of features selected in the model ranges from 2 to 15, with a median of 5 features included.

When applying this feature selection procedure to the entire set of training data, we identified a subset of four plasma analytes as well as APOE4 genotype status critical for model performance: Chromogranin-A (CGA), Aβ1–42 (AB42), Eotaxin 3, and Apolipoprotein E (APOE). This combination of protein levels, together with APOEε4 is denoted as BP5. Figure 2 indicates how each variable influences the model predictions after we have accounted for the influence of the other four variables. As expected, the strongest relationship with CSF Aβ1–42 is with APOEε4 carrier status, where being a carrier (APOEε4 = 1) leads to a low predicted Aβ level. While the relationships between the proteins and CSF Aβ1–42 are non-linear (a common outcome given the nature of RFs), the overall correlation with CSF Aβ1–42 is positive for CGA, Plasma Aβ1–42, and APOE protein levels and negative for Eotaxin 3.

Validation of clinical utility

To demonstrate the utility of our modeling on unseen data, we conducted a survival analysis over the validation cohort (n = 198), evaluating the probability of baseline MCI individuals transitioning to AD diagnosis over 120 months, stratified by predicted CSF Aβ1–42 status from either the B, BP or reduced BP5 model. These survival distributions could then be compared to those of the real Aβ status observed over the training cohort. Given the demographic similarity of the two cohorts, we would expect to see strong similarities in rates of conversion.

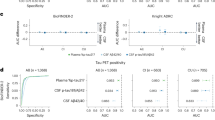

From Fig. 3, we observed that in all cases, the predicted low CSF Aβ1–42 group transitioned to AD significantly faster than the Aβ normal group. Comparing the predictions from the BP, BP5 and B models on the validation cohort to the actual CSF Aβ status on the training cohort, we find that there is no significant difference between the survival distributions for either the normal (log-rank test p = 0.19, 0.2, 0.21) or abnormal (log-rank test p = 0.97, 0.31, 0.23) survival distributions, respectively, reflecting the overlapping confidence intervals of the hazard ratios. However, it can be observed that due to differences in the thresholding of the Aβ levels, fewer individuals are deemed as CSF Aβ1–42 ‘normal’ in the actual data (n = 53), compared with any of the three models applied to the validation datasets (n = 95, 73, and 71 for BP, BP5, and B models respectively), highlighting the well-recognized issues of defining standardized cutoff values across studies34. The significant differences in conversion rates between the predicted normal/low strata, especially from the more parsimonious BP5 model, together with their similarity to the survival distributions of the actual CSF measures, provide strong evidence that our blood-based model can help stratify individuals based on their risk of developing clinical AD (Table 1).

Kaplan-Meier curves for (a) the training cohort stratified by actual CSF Aβ status, and the validation cohort stratified by predicted CSF Aβ1–42 status from the (b) B model, (c) BP model and (d) BP5 model. The bands along the curves represent the 95% confidence intervals. Hazards ratios and 95% confidence intervals for the abnormal group compared to the normal are shown in the bottom left of each subplot. In all cases, the low CSF Aβ1–42 group transitioned to AD diagnosis significantly faster then the normal group (p = 3.97 × 10−7, 7.89 × 10−6, 9.96 × 10−4, 1.65 × 10−3 for the four plots left-right). CN individuals were not included in this analysis because there were no CN individuals present in the validation cohort.

Concordance with PET Aβ status

To further validate and quantify our model’s performance, we have explored the relationship between the predicted CSF Aβ scores and PET imaging status. Confirming that the PET Aβ and CSF Aβ1–42 status are correlated, we find that they differed in only 7 out of 108 individuals for whom both CSF and PET amyloid status were available. As such, evaluating our model against the PET Aβ status should provide a conservative estimate for the AUC on the validation cohort, despite the lack of CSF measures.

The resulting ROC curves in Fig. 4 provide further evidence that the BP and BP5 models are able to predict Aβ status, with AUCs against PET Aβ on the validation cohort of 0.78 and 0.8 for the BP and BP5 models respectively. These results are similar to those from predicting CSF status from the training data (Fig. 1), with a small expected drop in AUC due to the inherent differences between CSF and PET amyloid. Interestingly, we observe stronger performance for the reduced BP5 model (AUC 0.80) compared to the full BP model (AUC 0.78), with both models significantly improving upon the baseline model of age and APOEε4 status (AUC 0.71).

ROC curves comparing different sets of features to determine PET-based Aβ status on the (a) training and (b) validation cohorts. Different colours of lines correspond to different feature sets with corresponding AUCs shown in the legend under each plot. Results in the training cohort are more useful as a measure of similarity between the tasks of predicting CSF and PET Aβ status given that this was the data used to train the CSF model and hence the AUC are upwardly biased, especially for more complex models (e.g. BP).

Discussion

The most positive results from AD trials to date have been found in patients with early forms of the disease, leading to an increasing awareness that treatments are likely to be most successful if applied at the earliest stages of AD8. Some AD clinical trials are enriching pre-symptomatic AD individuals with PET screening. However, recent findings that shifts in CSF amyloid can be observed up to a decade before those from PET may indicate that CSF positive individuals are even more suitable for clinical trial enrichment35. Direct measurement of CSF biomarkers is too invasive to be used in such a screening test36 motivating the development of a minimally-invasive, low-cost solution that provides the same type of information to overcome these limitations.

This current study evaluates the utility of a blood-based signature of CSF Aβ1–42 status using a Random Forest approach. We demonstrated that CSF Aβ1–42 normal/abnormal status using age, APOEε4 carrier status, and protein levels can be predicted with a high AUC, sensitivity and specificity of 0.84, 0.78 and 0.73 respectively. Compared to the baseline model (age and APOEε4 genotype) the inclusion of the plasma analytes improved the performance (AUC) by 6%. To make the model more suitable for clinical application, we identified four plasma analytes which, together with APOEε4 carrier status, still achieved a high AUC, sensitivity, and specificity of 0.81, 0.81 and 0.64 respectively. These predictive models were then validated on a separate cohort of individuals to demonstrate that MCI subjects with predicted abnormal CSF Aβ1–42 (low) levels transitioned to an AD diagnosis at a significantly higher rate than those predicted with normal CSF Aβ1–42 levels. Furthermore, these rates were similar to those observed in a demographically similar cohort of MCIs using actual CSF Aβ1–42 levels. This is a strong validation of our modeling as the blood-based biomarkers for CSF Aβ1–42 status is only useful if they can replicate the behavior of the actual Aβ1–42 status for clinically relevant endpoints for individuals that were not used to build the predictive model. Strong predictive power of PET Aβ1–42 status on the validation cohort provides further evidence for the generalizability and robustness of our modeling.

A number of studies have previously investigated the use of blood analytes to predict the burden of amyloid in the neocortex, as measured by PET15,16,18,19,20,22,23. Some of these studies showed similar performance metrics to those reported in this work (>0.80 AUCs15,23,24,25 or >0.78 accuracy17), indicating that prediction of PET Aβ and CSF Aβ1–42 status are of similar difficulty. PET Aβ is directly related to brain fibrillar amyloid, whereas CSF amyloid is a marker of soluble Aβ1–42 and they may, therefore, give different insights into AD progression and mechanisms. For example, CSF Aβ1–42 has been shown to be associated with APOEε4 whereas PET Aβ has been shown to have a greater association with tau37. Thus, the development of a blood-based screening test for CSF Aβ1–42 levels is a complementary approach to existing blood-based biomarkers of PET amyloid status.

Of the above studies, the study by Nakamura et al.25 showed a very high AUC in discovery and validation datasets for PET Aβ1–42 status (AUC 0.94 and 0.96 respectively) as well as a strong performance for predicting abnormalities in CSF Aβ1–42 levels (AUC 0.88%), in a small cohort (n = 46) of their validation set. While these results are promising, the automation of the novel technique used (IP-MALDI-TOF-MS), and hence transfer to a clinical setting, is non-trivial, motivating the search for complementary approaches. The protein signature presented in this study, based on a multiplex immunoassay, is likely to require a far shorter timeframe for clinical translation given the high level of automation that already exists for multiplex immunoassays, and that biomarkers from such platform have already been used in commercially available diagnostic tests that have been approved by the FDA.

The use of metabolites appeared to be of limited utility for predicting CSF Aβ1–42. In both the regression and binary tasks, models containing metabolites achieved equivalent or worse AUCs than models without. These findings can be contrast with the utility of metabolites in predicting PET Aβ positivity22 and their association with AD more broadly38. Alternative methods for integrating this source of data39 may be required in order to find robust associations with CSF Aβ1–42 status.

The subset of features used in our BP5 model included APOEε4 genotype and plasma levels of Chromogranin-A (CGA), Eotaxin 3, Aβ1–42 (AB42), and Apolipoprotein E (APOE). Several of these identified proteins have known associations with Alzheimer’s disease. Unsurprisingly, the levels of plasma APOE are associated with CSF amyloid levels. APOEε4 is the strongest genetic risk factor for AD. APOE is involved in the clearance of Aβ1–4240,41,42 and there is a strong relationship between APOEε4 genotype and APOE plasma levels, where APOEε4 carriers have lower plasma levels43,44. Plasma Aβ1–42 showed a positive relationship in our model for CSF Aβ1–42, in line with a prior observation45. This is interesting as the link between alterations of Aβ1–42 levels in the blood and the progression of the disease is still controversial and studies assessing the Aβ1–42 concentration in blood of AD patients have produced conflicting results45,46,47,48,49,50,51. Chromogranin A (CGA) is associated with synaptic function and has traditionally been used as an indicator of neuroendocrine tumors52. More recent work has shown that CGA has a degree of co-localisation with amyloid plaques in the brain53,54. However, levels of CGA in the CSF and blood serum do not appear to be correlated55 and serum CGA has not previously been linked to AD. Eotaxin 3, also known as C-C chemokine ligand 26 (CCL26), plays an important role in the innate immune system and has been found to be dysregulated in AD patients56. CSF Eotaxin 3 has been shown to be significantly elevated in patients with prodromal AD, however, Eotaxin 3 levels in plasma or the CSF has not been shown to correlate with rates of disease progression56,57.

This study has several limitations. The training and validation cohorts are both composed of individuals in the ADNI study and thus all measures were conducted on the same platforms. Hence further cross-cohort and cross-platform replication is required. This remains an ongoing issue within the development of all AD biomarkers relating to early screening and requires significant future investment58. Furthermore, the current cohort is neuropathology biased, i.e. 84% of the cohort have MCI or AD, and thus likely to have neuronal damage, potentially confounding the analysis of CSF Aβ1–42 status. Finally, it needs to be noted that there are other medical conditions that are known to affect CSF Aβ1–42 levels and it is unclear whether these affect any of the patients in our cohort.

The early identification of AD disease is paramount and a major global focus as the success of disease-modifying or preventative therapies in AD may depend on detecting the earliest signs of abnormal amyloid-beta load. The differences between CSF Aβ1–42 and PET Aβ in preclinical stages of AD are likely to have implications for clinical trial enrichment. Blood-based biomarkers of amyloid can serve as the first step in a multistage screening procedure, similar to those that have been clinically-implemented in cancer, cardiovascular disease, and infectious diseases58. Inconjunction with biomarkers for neocortical amyloid burden, the CSF Aβ1–42 biomarkers presented in this work may help yield a cheap, non-invasive tool for both improving clinical trials targeting amyloid and for population screening.

Data Availability

The dataset analysed during the current study is available from the ADNI repository, (http://adni.loni.usc.edu/).

References

Villemagne, V. L. et al. Amyloid β deposition, neurodegeneration, and cognitive decline in sporadic Alzheimer’s disease: a prospective cohort study. The Lancet. Neurol. 12, 357–367 (2013).

Berti, V. et al. Rethinking on the concept of biomarkers in preclinical alzheimer’s disease. Neurol. Sci. 37, 663–672 (2016).

Dubois, B. et al. Revising the definition of Alzheimer’s disease: a new lexicon. The Lancet. Neurol (2010).

Dubois, B. et al. Preclinical Alzheimer’s disease: Definition, natural history, and diagnostic criteria. Alzheimer’s & Dementia 12, 292–323 (2016).

Alzheimers Association. Alzheimer’s disease facts and figures. Alzheimer’s & Dementia 13, 325–373 (2017).

Cummings, J. L., Morstorf, T. & Zhong, K. Alzheimer’s disease drug-development pipeline: few candidates, frequent failures. Alzheimer’s research & therapy 6, 37 (2014).

Cummings, J., Lee, G., Mortsdorf, T., Ritter, A. & Zhong, K. Alzheimer’s disease drug development pipeline: 2017. Alzheimer’s & Dementia 3, 367–384 (2017).

Godyń, J., Jończyk, J., Panek, D. & Malawska, B. Therapeutic strategies for alzheimer’s disease in clinical trials. Pharmacol. Reports 68, 127–138 (2016).

Sevigny, J. et al. Amyloid pet screening for enrichment of early-stage alzheimer disease clinical trials: Experience in a phase 1b clinical trial. Alzheimer Dis. & Assoc. Disord. 30, 1–7 (2016).

Salloway, S. et al. Two phase 3 trials of bapineuzumab in mild-to-moderate alzheimer’s disease. New Engl. J. Medicine 370, 322–333 (2014).

Scheltens, P. et al. Alzheimer’s disease. The Lancet 388, 505–517 (2016).

Blennow, K., Mattsson, N., Schöll, M., Hansson, O. & Zetterberg, H. Amyloid biomarkers in alzheimer’s disease. Trends Pharmacol. Sci. 36, 297–309 (2015).

Palmqvist, S., Mattsson, N., Hansson, O. & Neuroimaging, A. D. Cerebrospinal fluid analysis detects cerebral amyloid-beta accumulation earlier than positron emission tomography. Brain 139, 1226–1236 (2016).

Rabinovici, G. D. Amyloid biomarkers: pushing the limits of early detection. Brain 139, 1008–1010 (2016).

Burnham, S. C. et al. A blood-based predictor for neocortical Ab burden in Alzheimer’s disease: results from the AIBL study. Mol. Psychiatry 19, 519–526 (2014).

Burnham, S. C. et al. Predicting Alzheimer disease from a blood-based biomarker profile: A 54-month follow-up. Neurol. 87, 1093–1101 (2016).

Voyle, N. et al. Blood Protein Markers of Neocortical Amyloid-b Burden: A Candidate Study Using SOMAscan Technology. J. Alzheimers Dis. 46, 947 (2015).

Ashton, N. J. et al. Blood protein predictors of brain amyloid for enrichment in clinical trials? Alzheimer’s & Dementia 1, 48–60 (2015).

Kiddle, S. J. et al. Plasma Based Markers of [C-11] PiB-PET Brain Amyloid Burden. PLoS One 7 (2012).

Westwood, S. et al. Blood-Based Biomarker Candidates of Cerebral Amyloid Using PiB PET in Non-Demented Elderly. J. Alzheimers Dis. 52, 561–572 (2016).

Thambisetty, M. et al. Proteome-Based Plasma Markers of Brain Amyloid-beta Deposition in Non-Demented Older Individuals. J. Alzheimers Dis. 22, 1099–1109 (2010).

Voyle, N. et al. Blood metabolite markers of neocortical amyloid-b burden: Discovery and enrichment using candidate proteins. Transl. Psychiatry 6, e719 (2016).

Gupta, V. B. et al. Follow-up plasma apolipoprotein e levels in the australian imaging, biomarkers and lifestyle flagship study of ageing (aibl) cohort. Alzheimer’s research & therapy 7, 16 (2015).

Haghighi, M., Smith, A., Morgan, D., Small, B. & Huang, S. Identifying cost-effective predictive rules of amyloid-b level by integrating neuropsychological tests and plasma-based markers. J. Alzheimers Dis. 43, 1261–1270 (2015).

Nakamura, A. et al. High performance plasma amyloid-b biomarkers for Alzheimer’s disease. Nat. 554 (2018).

Petersen, R. C. et al. Alzheimer’s disease neuroimaging initiative (adni) clinical characterization. Neurol. 74, 201–209 (2010).

McKhann, G. et al. Clinical diagnosis of Alzheimer‘s disease: report of the NINCDS-ADRDA Work Group under the auspices of Department of Health and Human Services Task Force on Alzheimer’s Disease. Neurology 34, 939–944 (1984).

Ishwaran, H. & Kogalur, U. Randomforestsrc: Random forests for survival, regression and classification (rf-src). R package version 1 (2014).

Breiman, L. Random forests. Mach. learning 45, 5–32 (2001).

Wright, M. N. & Ziegler, A. ranger: A fast implementation of random forests for high dimensional data in c++ and r. J. Stat. Softw. 77 (2017).

Ishwaran, H., Kogalur, U. B., Chen, X. & Minn, A. J. Random survival forests for high-dimensional data. Stat. Analysis Data Mining: The ASA Data Sci. J. 4, 115–132 (2011).

Cawley, G. C. & Talbot, N. L. On over-fitting in model selection and subsequent selection bias in performance evaluation. J. Mach. Learn. Res. 11, 2079–2107 (2010).

López-Ratón, M. et al. Optimalcutpoints: an r package for selecting optimal cutpoints in diagnostic tests. J Stat Softw 61, 1–36 (2014).

Blennow, K. et al. Clinical utility of cerebrospinal fluid biomarkers in the diagnosis of early alzheimer’s disease. Alzheimer’s & dementia: journal Alzheimer’s Assoc. 11, 58–69 (2015).

Palmqvist, S., Mattsson, N. & Hansson, O. Reply: Do we still need positron emission tomography for early alzheimer’s disease diagnosis? Brain 139, e61–e61 (2016).

Hane, F. T. et al. Recent progress in alzheimer’s disease research, part 3: Diagnosis and treatment. J. Alzheimer’s Dis. 1–21 (2017).

Mattsson, N. et al. Independent information from cerebrospinal fluid amyloid-b and florbetapir imaging in Alzheimer’s disease. Brain 138, 772–783 (2015).

Toledo, J. B. et al. Metabolic network failures in alzheimer’s disease—a biochemical road map. Alzheimer’s & Dementia (2017).

Wang, B. et al. Similarity network fusion for aggregating data types on a genomic scale. Nat. Met. 11, 333–337 (2014).

Jiang, Q. et al. ApoE Promotes the Proteolytic Degradation of A? Neuron 58, 681–693 (2008).

Verghese, P. B. et al. ApoE influences amyloid-b (Ab) clearance despite minimal apoE/Ab association in physiological conditions. Proc. Natl. Acad. Sci. USA 110, E1807–16 (2013).

Bachmeier, C. et al. A multifaceted role for apoE in the clearance of beta-amyloid across the blood-brain barrier. Neurodegener. Dis. 11, 13–21 (2013).

Simon, R. et al. Total ApoE and ApoE4 isoform assays in an Alzheimer’s disease case-control study by targeted mass spectrometry (n=669): a pilot assay for methionine-containing proteotypic peptides. Mol. & cellular proteomics: MCP 11, 1389–1403 (2012).

Martínez-Morillo, E. et al. Total apolipoprotein E levels and specific isoform composition in cerebrospinal fluid and plasma from Alzheimer’s disease patients and controls. Acta Neuropathol. (Berl.) 127, 633–643 (2014).

Janelidze, S. et al. Plasma b-amyloid in Alzheimer’s disease and vascular disease. Sci. Rep. 6, 26801 (2016).

Lewczuk, P. et al. Amyloid beta peptides in plasma in early diagnosis of Alzheimer’s disease: A multicenter study with multiplexing. Exp. Neurol. 223, 366–370 (2010).

Lui, J. K. et al. Plasma amyloid-beta as a biomarker in Alzheimer’s disease: the AIBL study of aging. J. Alzheimers Dis. 20, 1233–1242 (2010).

Rembach, A. et al. Changes in plasma amyloid beta in a longitudinal study of aging and Alzheimer’s disease. Alzheimer’s & Dementia 10, 53–61 (2014).

Park, J.-C. et al. Chemically treated plasma Ab is a potential blood-based biomarker for screening cerebral amyloid deposition. Alzheimer’s research & therapy 9, 20 (2017).

Pesaresi, M. et al. Plasma levels of beta-amyloid (1–42) in Alzheimer’s disease and mild cognitive impairment. Neurobiol. Aging 27, 904–905 (2006).

Tzen, K.-Y. et al. Plasma Ab but not tau is related to brain PiB retention in early Alzheimer’s disease. ACS Chem. Neurosci. 5, 830–836 (2014).

Campana, D. et al. Chromogranin A: is it a useful marker of neuroendocrine tumors? J Clin Oncol 25, 1967–1973 (2007).

Lechner, T. et al. Chromogranin peptides in alzheimer’s disease. Exp. gerontology 39, 101–113 (2004).

Brion, J.-P., Couck, A.-M., Bruce, M., Anderton, B. & Flament-Durand, J. Synaptophysin and chromogranin a immunoreactivities in senile plaques of alzheimer’s disease. Brain research 539, 143–150 (1991).

Blennow, K. et al. Tau protein in cerebrospinal fluid. Mol. Chem. Neuropathol. 26, 231–245 (1995).

Huber, A. K., Giles, D. A., Segal, B. M. & Irani, D. N. An emerging role for eotaxins in neurodegenerative disease. Clin. Immunol. (2016).

Westin, K. et al. Ccl2 is associated with a faster rate of cognitive decline during early stages of alzheimer’s disease. PLoS One 7, e30525 (2012).

O’Bryant, S. E. et al. Blood-based biomarkers in alzheimer disease: Current state of the science and a novel collaborative paradigm for advancing from discovery to clinic. Alzheimer’s & Dementia 13, 45–58 (2017).

Acknowledgements

Data collection and sharing for this project was funded by the Alzheimer’s Disease Neuroimaging Initiative (ADNI) (National Institutes of Health Grant U01 AG024904) and DOD ADNI (Department of Defense award number W81XWH-12-2-0012). ADNI is funded by the National Institute on Aging, the National Institute of Biomedical Imaging and Bioengineering, and through generous contributions from the following: AbbVie, Alzheimer’s Association; Alzheimer’s Drug Discovery Foundation; Araclon Biotech; BioClinica, Inc.; Biogen; Bristol-Myers Squibb Company; CereSpir, Inc.; Cogstate; Eisai Inc.; Elan Pharmaceuticals, Inc.; Eli Lilly and Company; EuroImmun; F. Hoffmann-La Roche Ltd and its affiliated company Genentech, Inc.; Fujirebio; GE Healthcare; IXICO Ltd.; Janssen Alzheimer Immunotherapy Research & Development, LLC.; Johnson & Johnson Pharmaceutical Research & Development LLC.; Lumosity; Lundbeck; Merck & Co., Inc.; Meso Scale Diagnostics, LLC.; NeuroRx Research; Neurotrack Technologies; Novartis Pharmaceuticals Corporation; Pfizer Inc.; Piramal Imaging; Servier; Takeda Pharmaceutical Company; and Transition Therapeutics. The Canadian Institutes of Health Research is providing funds to support ADNI clinical sites in Canada. Private sector contributions are facilitated by the Foundation for the National Institutes of Health (www.fnih.org). The grantee organization is the Northern California Institute for Research and Education, and the study is coordinated by the Alzheimer’s Therapeutic Research Institute at the University of Southern California. ADNI data are disseminated by the Laboratory for Neuro Imaging at the University of Southern California. This work was supported by IBM. We would like to thank Dr. Matthew Downton, Dr. Annalisa Swan and Dr. Anna Trigos for helpful feedback on the manuscript.

Author information

Authors and Affiliations

Author notes

A complete listing of ADMC investigators can be found at: https://sites.duke.edu/adnimetab/who-we-are/.

A complete listing of ADNI investigators can be found at: http: //adni.loni.usc.edu/wp-content/uploads/how_to_apply/ADNI_Acknowledgement_List.pdf

Consortia

Contributions

B.G., B.F. and N.F. designed the study, B.G., C.S. and B.F. analyzed the data and ran all experiments, B.G. made the figures, B.G., C.S. and N.F. wrote the manuscript. All authors interpreted the data and critically revised the manuscript.

Corresponding author

Ethics declarations

Competing Interests

The authors declare no competing interests.

Additional information

Publisher’s note: Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary information

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Goudey, B., Fung, B.J., Schieber, C. et al. A blood-based signature of cerebrospinal fluid Aβ1–42 status. Sci Rep 9, 4163 (2019). https://doi.org/10.1038/s41598-018-37149-7

Received:

Accepted:

Published:

DOI: https://doi.org/10.1038/s41598-018-37149-7

This article is cited by

Comments

By submitting a comment you agree to abide by our Terms and Community Guidelines. If you find something abusive or that does not comply with our terms or guidelines please flag it as inappropriate.