Abstract

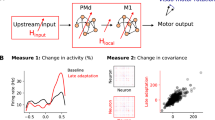

Motor cortex (M1) exhibits a rich repertoire of neuronal activities to support the generation of complex movements. Although recent neuronal-network models capture many qualitative aspects of M1 dynamics, they can generate only a few distinct movements. Additionally, it is unclear how M1 efficiently controls movements over a wide range of shapes and speeds. We demonstrate that modulation of neuronal input–output gains in recurrent neuronal-network models with a fixed architecture can dramatically reorganize neuronal activity and thus downstream muscle outputs. Consistent with the observation of diffuse neuromodulatory projections to M1, a relatively small number of modulatory control units provide sufficient flexibility to adjust high-dimensional network activity using a simple reward-based learning rule. Furthermore, it is possible to assemble novel movements from previously learned primitives, and one can separately change movement speed while preserving movement shape. Our results provide a new perspective on the role of modulatory systems in controlling recurrent cortical activity.
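The core idea can be shown with a toy model: fixed connectivity, and learning only the neuronal gains. The sketch below is not the paper's equation (1) or its learning rule. It rests on several assumptions:

- a rate network dx/dt = −x + W tanh(g ⊙ x), in which each gain g_i rescales neuron i's input–output curve;
- a fixed linear readout C that stands in for muscles;
- a simple reward-driven random search in place of the paper's reward-based rule.

Only g is learned. W, the initial condition x0 and C never change. All sizes, the target and the hyperparameters are hypothetical.

```python
import numpy as np


def simulate(W, g, x0, C, dt=0.1, steps=200):
    """Euler-integrate dx/dt = -x + W tanh(g * x); return the readout C tanh(g * x) per step."""
    x = x0.copy()
    out = np.empty((steps, C.shape[0]))
    for t in range(steps):
        x = x + dt * (-x + W @ np.tanh(g * x))  # gains rescale each neuron's input-output curve
        out[t] = C @ np.tanh(g * x)             # linear "muscle" readout of firing rates
    return out


def normalized_error(z, target):
    """Squared error divided by the target's sum of squares (assumed error definition)."""
    return np.sum((z - target) ** 2) / np.sum(target ** 2)


def train_gains(W, x0, C, target, n_iter=300, sigma=0.05, seed=0):
    """Reward-driven random search over gains only; W, x0 and C are never modified."""
    rng = np.random.default_rng(seed)
    steps = target.shape[0]
    g = np.ones(W.shape[0])                                  # start with all gains set to 1
    err = normalized_error(simulate(W, g, x0, C, steps=steps), target)
    history = [err]
    for _ in range(n_iter):
        g_try = g + sigma * rng.standard_normal(g.shape)     # exploratory gain perturbation
        err_try = normalized_error(simulate(W, g_try, x0, C, steps=steps), target)
        if err_try < err:                                    # scalar "reward": error decreased
            g, err = g_try, err_try
        history.append(err)
    return g, history


# Hypothetical setup: random recurrent weights, fixed initial state, two readout channels.
rng = np.random.default_rng(1)
N, steps = 100, 200
W = 1.5 * rng.standard_normal((N, N)) / np.sqrt(N)
x0 = rng.standard_normal(N)
C = rng.standard_normal((2, N)) / np.sqrt(N)
t = np.linspace(0.0, 2.0 * np.pi, steps)
target = np.stack([np.sin(t), 0.5 * np.cos(2.0 * t)], axis=1)

g, history = train_gains(W, x0, C, target)
print(f"error: {history[0]:.3f} -> {history[-1]:.3f}")
```

A perturbation is kept only when the scalar error drops, so the error history never increases. Like the abstract's reward-based rule, the scheme needs only a global scalar signal rather than per-neuron error gradients.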


Change history

19 December 2018

In the version of this article initially published, equations (2) and (4) in the PDF erroneously displayed a curly bracket on their right-hand sides. The errors have been corrected in the PDF version of the article; the equations appear correctly in the HTML.


Acknowledgements

We thank the members of the Vogels lab (particularly E. J. Agnes, R. P. Costa, W. F. Podlaski, and F. Zenke) for their insightful comments and Y. T. Kimura for creating the monkey illustration. We also thank O. Barak, T. E. J. Behrens, R. Bogacz, M. Jazayeri, and L. Susman for their helpful comments. Our work was supported by grants from the Wellcome Trust (T.P.V. and J.P.S. through WT100000, and G.H. through 202111/Z/16/Z) and the Engineering and Physical Sciences Research Council through the Life Sciences Interface Doctoral Training Centre at the University of Oxford (EP/F500394/1 to J.P.S.).

Author information


Contributions

J.P.S., G.H., and T.P.V. conceived the study and developed the model. J.P.S. performed simulations for Figs. 1–5 and Supplementary Figs. 1–4, and J.P.S. and G.H. performed simulations for Figs. 6–8 and Supplementary Figs. 5–8. J.P.S. analyzed the results, produced the figures, and wrote the first draft of the manuscript. J.P.S., M.A.P., G.H., and T.P.V. discussed and iterated on the analysis and its results, and all authors also revised the final manuscript.


Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note: Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Integrated supplementary information

Supplementary Figure 1 Further effects of neuron-specific gain modulation.

a, Changes in the largest real part of the spectrum of W × diag(g) that result from 10 different training sessions (see our simulation details). Although this change seems substantial, the resulting firing-rate activity does not change dramatically (for example, see b and the far-left panel of Fig. 2b). b, Pearson correlation matrices of the firing rates for all pairs of neurons with (left) all gains set to 1 and (centre and right) two (of the 10) trained gain patterns for the task in Fig. 1d. The order of neurons is the same in all three matrices. Training does not substantially reorganize the Pearson correlations between pairs of neurons. c, Histogram of the Pearson correlation coefficients between the 45 pairs of the 10 trained gain patterns for the task in Fig. 1d. d, Mean error between the network output with white Gaussian noise added to the initial condition x0 and the network output without noise added to x0, for various signal-to-noise ratios and 1,000 different noise samples. We plot results with all gains set to 1 (blue) and with the 10 trained gain patterns (red) for the task in Fig. 1d. Shading indicates 1 standard deviation. e, Left: The mean Pearson correlation coefficient between the neuronal firing rates and the target increases after training (we show 10 training sessions; a one-sided paired Wilcoxon signed-rank test gives p ≈ 0.002). Bottom right: Example change in the Pearson correlation coefficients between the 200 neurons’ firing rates and the target after training, for the trial shown in gray in the left panel. Top right: Example of a substantial change in the dynamics of one neuron after training. f, Box plots of the errors after training independently on 10 different target movements using back-propagation for four different scenarios. In these examples, we train either the neuronal gains, the initial condition, the recurrent synaptic weight matrix or a rank-1 perturbation of the synaptic weight matrix (see our full simulation descriptions in the supplementary material). The dashed black line is the mean error over the 10 target movements before training. (Center lines indicate median errors, boxes indicate 25th to 75th percentiles, whiskers indicate ± 1.5× the interquartile range, and dots indicate training sessions whose error lies outside the whiskers.)
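Panel a tracks the largest real part of the spectrum of W × diag(g). The NumPy sketch below computes that quantity for random placeholder weights and gains, not the paper's trained values.

It also assumes a rate model dx/dt = −x + W tanh(g ⊙ x). Under that model the Jacobian at x = 0 is −I + W diag(g), so the origin is stable exactly when this largest real part is below 1. The last line checks the pair count used in panel c.

```python
import numpy as np
from math import comb


def max_real_eigenvalue(W, g):
    """Largest real part of the eigenvalues of W diag(g)."""
    # Scaling column j by g[j] equals W @ np.diag(g) without building the diagonal matrix.
    return np.linalg.eigvals(W * g[np.newaxis, :]).real.max()


def stable_at_origin(W, g):
    """Assumed model dx/dt = -x + W tanh(g*x): Jacobian at 0 is -I + W diag(g)."""
    return max_real_eigenvalue(W, g) < 1.0


rng = np.random.default_rng(0)
N = 200
W = 1.5 * rng.standard_normal((N, N)) / np.sqrt(N)   # placeholder random weights
g_default = np.ones(N)                                 # "all gains set to 1"
g_trained = 1.0 + 0.2 * rng.standard_normal(N)         # placeholder, not a trained pattern

shift = max_real_eigenvalue(W, g_trained) - max_real_eigenvalue(W, g_default)
print(f"change in largest real part: {shift:+.3f}")

# Panel c compares every pair among 10 trained gain patterns.
print(comb(10, 2))  # 45
```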

Supplementary Figure 2 Additional results for grouped gain modulation.

a, Mean error over 10 training sessions (shading indicates one standard deviation) using (left) random and (right) specialized groupings for 2, 10, 20 and 200 (i.e., neuron-specific) groups (see our simulation details). The target output is the same as in Fig. 1. b, Relative improvement in performance compared with neuron-specific modulation for each of 5 movements. We use specialized groups that are either shared across all 5 movements (squares) or specific to each movement (circles), with either 10 (blue) or 20 (black) groups. A value of 2 implies that the error after training is 2 times smaller than with neuron-specific modulation, whose performance we indicate with the red line. c, Mean error over 10 training sessions (shading indicates one standard deviation) when learning 5 movements using 20 specialized groups (shared across all 5 movements), 20 random groups or neuron-specific modulation. d, Mean error over 10 training sessions when learning 10 novel movements using the specialized grouping (with 20 groups) shared across the 5 previously trained movements from c. e, The firing rates of 50 inhibitory and 50 excitatory neurons for each of the three network sizes. f, The curves give the mean error over 10 training sessions and across the 3 networks for each of 5 targets. The circles represent the mean error for each network, and the colours indicate the 5 different target outputs. (See our simulation details and our full simulation descriptions in the supplementary material.) g, Outputs for all five targets from the trial that produces the median error for the 400-neuron network, for the cases of 10 and 20 groups. h, Box plots (in blue) of the minimum error after training for different numbers of groups and the 3 network sizes. (These are the same data that we plotted in f.) We also include box plots (in red) of the minimum number of iterations required before the error is within 1% of the minimum error. (Center lines indicate median errors, boxes indicate 25th to 75th percentiles, whiskers indicate ± 1.5× the interquartile range, and dots indicate training sessions whose error (or number of iterations) lies outside the whiskers.)
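Grouped modulation ties neurons together, so that a few modulatory control units set many gains. The sketch below covers only the random-grouping case. It assumes that each neuron belongs to exactly one group and that groups are equal in size. This caption does not describe how the specialized groupings are built, so they are not reproduced.

With 200 groups for 200 neurons, the membership matrix M is a permutation matrix and the scheme reduces to neuron-specific modulation.

```python
import numpy as np


def random_groups(n_neurons, n_groups, rng):
    """One-hot membership matrix M (n_neurons x n_groups) with balanced random groups."""
    # Cycling labels 0..n_groups-1 and shuffling them gives equal group sizes
    # whenever n_neurons is divisible by n_groups.
    labels = rng.permutation(np.arange(n_neurons) % n_groups)
    M = np.zeros((n_neurons, n_groups))
    M[np.arange(n_neurons), labels] = 1.0
    return M


def neuron_gains(M, group_gains):
    """Each neuron inherits the gain of its modulatory group: g = M @ c."""
    return M @ group_gains


rng = np.random.default_rng(0)
N = 200
for K in (2, 10, 20, 200):
    M = random_groups(N, K, rng)
    c = 1.0 + 0.1 * rng.standard_normal(K)   # only K learnable parameters instead of N
    g = neuron_gains(M, c)
    print(K, "groups ->", len(np.unique(g)), "distinct gains")
```

Learning then acts on the K-dimensional vector c rather than on all N gains.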

Supplementary Figure 3 Additional results for gain patterns providing motor primitives.

a, The resulting distribution of gains from training independently on each of 100 target outputs (see our simulation details). The distribution of the gain patterns resembles a normal distribution (blue curve) with the same mean and variance as those in Fig. 1e. b, Each output from the 100 trained gain patterns. c, Outputs of 100 randomly generated gain patterns drawn from the distribution in a (see our simulation details and our full simulation descriptions in the supplementary material for further details). These outputs are substantially more homogeneous than those in b and likely would not constitute a good library for movement generation. d, The same plot as in Fig. 4d, but for up to l = 50 library elements. e, The distributions of errors across 100 different libraries for (left) l = 5 and (right) l = 20. (Note the different horizontal-axis scales in the two plots.) f, The error between the output and the fit from d, with a different vertical-axis scale. g, The same plot as in Fig. 4c, but for l = 1, …, 50 and with extended axes. Each point represents the 50th-smallest error between the output and the fit across 100 novel target movements for each of 100 randomly generated combinations of l library elements. We show the identity line in gray. h, The same as in g, but each point represents the 50th-smallest error between the output and the fit across the 100 libraries for each of the 100 novel target movements. We plot these data in the square [0, 1] × [0, 1] and for l = 1, …, 20. i, For the data in g, we plot the Pearson correlation coefficient between the output and the fit errors over the 100 randomly generated libraries for each number of library elements (up to l = 50). j, For the data in h, we plot the Pearson correlation coefficient between the output and the fit errors over the 100 novel target movements for each number of library elements (up to l = 50).
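In panels d–j, a "fit" approximates a novel target by weighting the outputs of l library elements. The "output" is what the network produces from a correspondingly combined gain pattern. The sketch below covers the fit step under three assumptions:

- the weights come from least squares;
- the same weights combine the library gain patterns (a hypothetical rule, not confirmed by this caption);
- the error is normalized by the target's sum of squares.

Computing the output error would also require running the network with g_new, which is omitted here. The library arrays are random placeholders.

```python
import numpy as np


def fit_library(library_outputs, target):
    """Least-squares weights w so that sum_i w[i] * library_outputs[i] approximates target.

    library_outputs: shape (l, T, m) for l library elements, T time steps, m muscles.
    target: shape (T, m).
    """
    A = library_outputs.reshape(library_outputs.shape[0], -1).T   # (T*m, l) design matrix
    w, *_ = np.linalg.lstsq(A, target.ravel(), rcond=None)
    fit = (A @ w).reshape(target.shape)
    return w, fit


def combine_gain_patterns(library_gains, w):
    """Hypothetical combination rule: reuse the fit weights on gain patterns of shape (l, N)."""
    return w @ library_gains


def normalized_error(z, target):
    """Squared error divided by the target's sum of squares (assumed error definition)."""
    return np.sum((z - target) ** 2) / np.sum(target ** 2)


rng = np.random.default_rng(0)
l, T, m, N = 5, 100, 2, 200
library_outputs = rng.standard_normal((l, T, m))           # placeholder network outputs
library_gains = 1.0 + 0.2 * rng.standard_normal((l, N))    # placeholder gain patterns
target = 0.6 * library_outputs[0] + 0.4 * library_outputs[3]

w, fit = fit_library(library_outputs, target)
g_new = combine_gain_patterns(library_gains, w)
print(np.round(w, 3), normalized_error(fit, target))
```

Because this toy target lies exactly in the span of the library, the fit error is essentially zero. For real targets the fit error is positive, and the panels compare it with the error of the actual network output.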

Supplementary Figure 4 Gain patterns as motor primitives with r0 = 5 Hz.

a, Example target (gray), fit (dashed red) and output (orange) that produces the 50th-smallest output error over 100 randomly generated combinations of l library elements (see our simulation details for a description of the generation process), for l = 2, 4, 8 and 16. b, Fit error versus output error for 100 randomly generated combinations of l library elements for l = 1, …, 20. Each point represents the 50th-smallest error between the output and the fit across 100 novel target movements. We show the identity line in gray. c, For the data in b, we plot the Pearson correlation coefficient between the output and the fit errors over the 100 randomly generated combinations of library elements for each number of library elements (up to l = 50). d, The same as c, but for data corresponding to the 50th-smallest error for each of the 100 novel target movements, rather than for each randomly generated combination of library elements (up to l = 50) (see our simulation details). Compare c and d of this figure with i and j in Supplementary Fig. 3.
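Panels b–d summarize each set of 100 errors by a 50th-smallest value and then correlate fit and output errors. The sketch below computes these two statistics.

It assumes that each summary point pairs the fit and output errors of the same item, namely the one with the 50th-smallest output error. The caption does not state this pairing explicitly. The error arrays are random placeholders, not simulation results.

```python
import numpy as np


def kth_smallest(errors, k):
    """k-th smallest value (1-indexed); k = 50 of 100 values is the lower median."""
    return np.sort(np.asarray(errors))[k - 1]


def point_at_rank(fit_row, output_row, k=50):
    """(fit, output) errors of the item whose output error is the k-th smallest."""
    idx = np.argsort(output_row)[k - 1]
    return fit_row[idx], output_row[idx]


def pearson(a, b):
    """Pearson correlation coefficient between two equal-length vectors."""
    return np.corrcoef(a, b)[0, 1]


rng = np.random.default_rng(0)
# Placeholder errors: rows = 100 random library combinations, columns = 100 novel targets.
fit_err = rng.uniform(0.0, 1.0, size=(100, 100))
output_err = fit_err + 0.1 * rng.standard_normal(fit_err.shape)

points = np.array([point_at_rank(f, o) for f, o in zip(fit_err, output_err)])
print(f"Pearson r between fit and output errors: {pearson(points[:, 0], points[:, 1]):.3f}")
```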

Supplementary Figure 5 Additional results for controlling movement speeds through gain modulation.

a, Mean error over 10 training sessions for each of 10 different movements when learning gain patterns for slow-movement variants using our reward-based learning rule (see our simulation details). b, Mean error over 10 training sessions for the same 10 movements when instead learning gain patterns for slow-movement variants using a back-propagation algorithm (see our simulation details). c, Distribution of gains for the slow-movement variants across all training sessions using our reward-based learning rule. d, Distribution of gains for the slow-movement variants across all training sessions using back-propagation. e, Histograms of the real and imaginary parts of the eigenvalues of the linearization of equation (1) around x = 0, before and after training with our reward-based rule, for the example in Fig. 6b. f, Histograms of the real and imaginary parts of the eigenvalues of the linearization of equation (1) around x = 0, before and after training with the back-propagation algorithm, for the example in Fig. 6c. g, Left and right: the same outputs that we plotted in Figs. 6b and 6c, respectively, but with white Gaussian noise (signal-to-noise ratio of 4 dB) added to the initial condition of the neuronal activity. (See our full simulation descriptions in the supplementary material.) h, Box plot of the slow-variant errors across 10 training sessions after training for different numbers of initial conditions. (Center lines indicate median errors, boxes indicate 25th to 75th percentiles, whiskers indicate ± 1.5× the interquartile range, and dots indicate training sessions whose error lies outside the whiskers.) (See our full simulation descriptions in the supplementary material for further details.) i, Mean error during training over 10 training sessions for m = 1, …, 10 initial conditions. j, For the case of 6 initial conditions in h, we plot 4 example outputs that correspond to the 5th-smallest error across the 10 training sessions. (For each simulation in this figure, we train a 400-neuron network using 40 random modulatory groups; see our simulation details.)
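Panel g, like Supplementary Fig. 1d, perturbs the initial condition with white Gaussian noise at a stated signal-to-noise ratio. The sketch below assumes the standard power-ratio definition of SNR in decibels, with signal power taken as the mean square of x0. This caption does not give the paper's exact convention. The 400-dimensional x0 is a placeholder.

```python
import numpy as np


def add_noise_at_snr(x0, snr_db, rng):
    """Add white Gaussian noise so that 10*log10(P_signal / P_noise) equals snr_db on average."""
    p_signal = np.mean(x0 ** 2)                   # mean power of the initial condition
    p_noise = p_signal / 10.0 ** (snr_db / 10.0)  # convert dB to a power ratio
    return x0 + np.sqrt(p_noise) * rng.standard_normal(x0.shape)


rng = np.random.default_rng(0)
x0 = rng.standard_normal(400)                     # placeholder initial state, 400 neurons
x0_noisy = add_noise_at_snr(x0, 4.0, rng)
print(10.0 ** (4.0 / 10.0))                       # 4 dB is a power ratio of roughly 2.5
```

At 4 dB the noise power is still about 40% of the signal power, which is a substantial perturbation of the initial state.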

Supplementary Figure 6 Additional results for smooth control of movement speeds through gain modulation.

a, Outputs that result from the 7 trained gain patterns from Fig. 6e (which we also reproduce here in b) for both initial conditions (see our simulation details). b, Top: We reproduce the 7 optimized gain patterns for all 40 modulatory groups when training at 7 evenly spaced speeds from Fig. 6e. We call this the ‘speed manifold’ in the main text. Bottom: We linearly interpolate between the fast and the slow gain patterns. We use this interpolation for the outputs that we show in Fig. 6f.
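Panel b (bottom) replaces the separately trained gain patterns with a straight line between the fast and slow endpoints. The sketch below performs that interpolation over the 40 modulatory groups.

The endpoint gains are random placeholders. The choice of 7 evenly spaced points simply mirrors the top panel; the number of interpolation points used for Fig. 6f is not stated in this caption.

```python
import numpy as np


def interpolate_gains(g_fast, g_slow, n_points):
    """Evenly spaced straight-line path from g_fast (alpha = 0) to g_slow (alpha = 1)."""
    alpha = np.linspace(0.0, 1.0, n_points)[:, np.newaxis]   # column vector for broadcasting
    return (1.0 - alpha) * g_fast + alpha * g_slow             # shape (n_points, n_groups)


rng = np.random.default_rng(0)
n_groups = 40
g_fast = 1.0 + 0.2 * rng.standard_normal(n_groups)   # placeholder, not trained values
g_slow = 1.0 + 0.2 * rng.standard_normal(n_groups)
path = interpolate_gains(g_fast, g_slow, 7)
print(path.shape)  # (7, 40)
```

Each row of path is a full gain pattern. Intermediate rows are candidates for intermediate movement speeds.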

Supplementary Figure 7 Additional results for learning gain-pattern primitives to control movement shape and speed.

Histograms of the errors over the 100 target movements from Fig. 8 at both fast (blue) and slow (orange) speeds (see our simulation details).

Supplementary Figure 8 Learning slow-movement variants when scaling both the amplitude and duration of target movements.

We can perform the same task as in Fig. 6b when we also scale the amplitude of the slow-variant target movement by a factor of 1/25 (dashed curves). With this amplitude scaling, the slow-variant target corresponds to the same movement lasting 5 times longer (see “Creating target muscle activity” in Methods). For comparison, we also reproduce the results from the top panel of Fig. 6b (solid curves). During training, the error decreases from approximately 128 to 0.9 (i.e., a 99.3% reduction) for the amplitude-scaled task and from approximately 1.22 to 0.02 (i.e., a 98.36% reduction) for the amplitude-fixed task. The error for the amplitude-scaled task is larger than that for the amplitude-fixed task because we normalize the error by the total sum of squares of the target, which is much smaller for the amplitude-scaled target. (See equation (7) for the definition of error that we use.)
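The factor 1/25 follows if target muscle activity scales like the second time derivative (acceleration) of the movement. That is an assumption here, not a statement of the Methods. For a slow variant y(t) = x(t/s), the chain rule gives y''(t) = x''(t/s)/s², so s = 5 yields 1/25.

The sketch below checks this numerically with a hypothetical smooth trajectory. It also reproduces the percentage reductions quoted in the caption.

```python
import numpy as np


def acceleration(position, dt):
    """Second time derivative estimated with nested central differences."""
    return np.gradient(np.gradient(position, dt), dt)


def trajectory(u):
    """Hypothetical smooth movement profile for u in [0, 1]."""
    return np.sin(2.0 * np.pi * u) ** 2


s, dt, n_fast = 5, 1e-3, 1000
t_fast = np.arange(n_fast) * dt          # fast movement lasts 1 time unit
t_slow = np.arange(s * n_fast) * dt      # slow variant lasts s times longer
a_fast = acceleration(trajectory(t_fast), dt)
a_slow = acceleration(trajectory(t_slow / s), dt)

# Compare at matched phases (slow time s*t versus fast time t), skipping edge samples
# where np.gradient falls back to one-sided differences.
matched = a_slow[::s]
inner = slice(10, -10)
ratio = np.max(np.abs(matched[inner])) / np.max(np.abs(a_fast[inner]))
print(f"peak acceleration ratio: {ratio:.4f}")  # close to 1/25 = 0.04


def percent_reduction(before, after):
    """Relative decrease from before to after, in percent."""
    return 100.0 * (before - after) / before


print(round(percent_reduction(128, 0.9), 1), round(percent_reduction(1.22, 0.02), 2))  # 99.3 98.36
```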


About this article

Cite this article

Stroud, J.P., Porter, M.A., Hennequin, G. et al. Motor primitives in space and time via targeted gain modulation in cortical networks. Nat Neurosci 21, 1774–1783 (2018). https://doi.org/10.1038/s41593-018-0276-0


DOI: https://doi.org/10.1038/s41593-018-0276-0

This article is cited by

- Temporal scaling of motor cortical dynamics reveals hierarchical control of vocal production. Nature Neuroscience (2024)

- The concepts of muscle activity generation driven by upper limb kinematics. BioMedical Engineering OnLine (2023)

- Initial conditions combine with sensory evidence to induce decision-related dynamics in premotor cortex. Nature Communications (2023)

- The role of population structure in computations through neural dynamics. Nature Neuroscience (2022)

- Linking task structure and neural network dynamics. Nature Neuroscience (2022)