Abstract

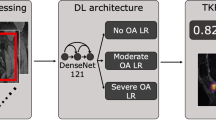

Underserved populations experience higher levels of pain. These disparities persist even after controlling for the objective severity of diseases like osteoarthritis, as graded by human physicians using medical images, raising the possibility that underserved patients’ pain stems from factors external to the knee, such as stress. Here we use a deep learning approach to measure the severity of osteoarthritis, by using knee X-rays to predict patients’ experienced pain. We show that this approach dramatically reduces unexplained racial disparities in pain. Relative to standard measures of severity graded by radiologists, which accounted for only 9% (95% confidence interval (CI), 3–16%) of racial disparities in pain, algorithmic predictions accounted for 43% of disparities, or 4.7× more (95% CI, 3.2–11.8×), with similar results for lower-income and less-educated patients. This suggests that much of underserved patients’ pain stems from factors within the knee not reflected in standard radiographic measures of severity. We show that the algorithm’s ability to reduce unexplained disparities is rooted in the racial and socioeconomic diversity of the training set. Because algorithmic severity measures better capture underserved patients’ pain, and severity measures influence treatment decisions, algorithmic predictions could potentially redress disparities in access to treatments like arthroplasty.
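
One common way to express how much of a pain disparity a severity measure "accounts for" is to compare the between-group gap in pain before and after controlling for that measure. The sketch below assumes that definition and uses ordinary least squares; the function names and the toy data are hypothetical, and this is not necessarily the authors' exact procedure (their code is linked under Code availability).

```python
import numpy as np

def group_gap(pain, is_group, severity=None):
    """Coefficient on the group indicator from an OLS fit of pain on the
    indicator, optionally controlling for a severity measure."""
    pain = np.asarray(pain, dtype=float)
    columns = [np.ones_like(pain), np.asarray(is_group, dtype=float)]
    if severity is not None:
        columns.append(np.asarray(severity, dtype=float))
    design = np.column_stack(columns)
    coef, *_ = np.linalg.lstsq(design, pain, rcond=None)
    return coef[1]

def fraction_of_gap_explained(pain, is_group, severity):
    """Share of the raw group gap that disappears once severity is controlled for
    (assumed definition, for illustration only)."""
    raw = group_gap(pain, is_group)
    adjusted = group_gap(pain, is_group, severity)
    return 1.0 - adjusted / raw

# Toy data: pain is fully determined by severity, so the gap is fully explained.
is_group = np.array([0, 0, 0, 1, 1, 1])
severity = np.array([1.0, 2.0, 3.0, 3.0, 4.0, 5.0])
pain = 2.0 * severity
explained = fraction_of_gap_explained(pain, is_group, severity)
```

Under this definition, a severity measure that captures more of the pain-relevant signal in the knee leaves a smaller residual gap and therefore a larger explained fraction.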

Data availability

Anonymized imaging and clinical data to reproduce results of this study are available online at https://nda.nih.gov/oai/.

Code availability

Code to reproduce the results of this study is available online at https://github.com/epierson9/pain-disparities.

Acknowledgements

We thank K. Blumer, J. Duryea, J. Irvin, P.W. Koh, S. Lamb, G. Lester, S. Li, K. Lin, B. McCann, A. Miller, L. Pierson, C. Olah, M. Raghu, P. Rajpurkar, N. Roth, C. Ruiz, C. Sabatti and participants at several seminars and meetings for helpful comments. We acknowledge financial support from Hertz and NDSEG graduate fellowships and the US Social Security Administration (SSA) grant RDR18000003, funded as part of the Retirement and Disability Research Consortium.

Author information

Contributions

E.P., D.M.C., J.L., S.M. and Z.O. jointly analyzed the results and wrote the paper.

Ethics declarations

Competing interests

E.P. is employed by Microsoft Research. S.M. and Z.O. have equity interests in LookDeep Health (healthcare services), Dandelion (healthcare services) and Spur Labs (healthcare services). Z.O. has equity interests in Berkeley Data Ventures (consulting).

Additional information

Peer review information: Jennifer Sargent was the primary editor on this article and managed its editorial process and peer review in collaboration with the rest of the editorial team.

Publisher’s note: Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Extended data

Extended Data Fig. 1 The effect of dataset diversity on model performance.

Each row of plots shows the effect of removing one minority group from the training set: from top, Black, lower-income, and lower-education patients. Each column of plots shows one metric: from left, R² in predicting KOOS pain score, and the reductions in the education, income, and racial pain disparities (relative to KLG). In each subplot, the blue dot shows, as a baseline, the performance of KLG. The red dot shows the performance of a neural network trained on a non-diverse training set, with all minority patients removed. The five black dots show the performance of neural networks trained on five diverse training sets of equal size, with five random subsets of non-minority patients removed; in all cases, the diverse training sets yield superior performance to non-diverse training sets of equal size.
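
The construction of the equal-size training sets described in this caption can be sketched as follows. This is an illustrative reading of the caption, not the authors' code: the function name, the one-row-per-patient input, and the random seed are assumptions, and the sketch requires at least as many non-minority as minority patients.

```python
import numpy as np

def build_training_sets(patient_ids, is_minority, n_diverse=5, seed=0):
    """Return one non-diverse training set and several diverse sets of equal size.

    Assumes one entry per patient. The non-diverse set drops every minority
    patient; each diverse set instead drops an equally sized random subset of
    non-minority patients, so all sets contain the same number of patients.
    """
    patient_ids = np.asarray(patient_ids)
    is_minority = np.asarray(is_minority, dtype=bool)
    rng = np.random.default_rng(seed)

    minority = patient_ids[is_minority]
    majority = patient_ids[~is_minority]
    everyone = set(patient_ids.tolist())

    non_diverse = set(majority.tolist())
    diverse_sets = []
    for _ in range(n_diverse):
        dropped = rng.choice(majority, size=len(minority), replace=False)
        diverse_sets.append(everyone - set(dropped.tolist()))
    return non_diverse, diverse_sets

# Toy cohort: 100 patients, the first 20 flagged as belonging to the minority group.
ids = np.arange(100)
flags = ids < 20
non_diverse, diverse_sets = build_training_sets(ids, flags)
```

Holding the training set size fixed is what lets the comparison isolate the effect of diversity from the effect of simply having more data.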

Extended Data Fig. 2 Analysis pipeline.

Prior to conducting any analysis, 1,295 patients (red box) were reserved as a held-out validation set to assess final results. In the exploratory phase, the remaining patients were analyzed as follows: a training set was used to optimize model weights, and a development set to select model hyperparameters and conduct early stopping to avoid overfitting. The main analyses to run on the held-out validation set were determined prior to examining it, and the hyperparameters were finalized. In the final analysis, all models were retrained using the hyperparameters chosen in the exploratory phase, and model predictions were assessed on the 1,295 patients in the held-out validation set.
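
A minimal sketch of a patient-level split in the spirit of this pipeline is shown below. The held-out set is carved out first, and the remaining patients are divided into training and development sets. The function name, the `dev_fraction` value, and the seed are hypothetical; the caption does not state the train/development proportions.

```python
import numpy as np

def split_patients(patient_ids, n_holdout, dev_fraction=0.2, seed=0):
    """Split unique patient IDs into train, development, and held-out sets.

    Splitting by patient rather than by image keeps all images from one
    patient in the same set. dev_fraction is an assumed value.
    """
    rng = np.random.default_rng(seed)
    unique_ids = rng.permutation(np.unique(patient_ids))

    # Reserve the held-out patients before anything else is done.
    holdout = set(unique_ids[:n_holdout].tolist())
    remaining = unique_ids[n_holdout:]

    n_dev = int(round(dev_fraction * len(remaining)))
    dev = set(remaining[:n_dev].tolist())
    train = set(remaining[n_dev:].tolist())
    return train, dev, holdout

# Toy cohort: 100 patients with two images (rows) each.
image_patient_ids = np.repeat(np.arange(100), 2)
train, dev, holdout = split_patients(image_patient_ids, n_holdout=30)
```

In this arrangement the development set is used for hyperparameter selection and early stopping, while the held-out set is touched only once, for the final assessment.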

Extended Data Fig. 3 Pain levels among overlapping racial and socioeconomic subgroups.

Race and socioeconomic status are correlated: among Black patients, 61% were lower-education and 63% were lower-income, while among non-Black patients, 34% were lower-education and 34% were lower-income.

Extended Data Fig. 4 Predictive performance for pain.

95% CIs are computed by cluster bootstrapping at the patient level.
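
A cluster bootstrap at the patient level resamples whole patients with replacement, so that multiple observations from one patient always enter or leave a resample together. The sketch below applies a percentile bootstrap to R²; the function names, the number of resamples, and the percentile method are assumptions rather than details taken from the paper.

```python
import numpy as np

def r_squared(y_true, y_pred):
    """Coefficient of determination of predictions against observed values."""
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - np.mean(y_true)) ** 2)
    return 1.0 - ss_res / ss_tot

def cluster_bootstrap_ci(patient_ids, y_true, y_pred, n_boot=1000, alpha=0.05, seed=0):
    """Percentile CI for R^2, resampling patients (clusters) with replacement."""
    patient_ids = np.asarray(patient_ids)
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    rng = np.random.default_rng(seed)

    unique_ids = np.unique(patient_ids)
    rows_by_patient = {pid: np.flatnonzero(patient_ids == pid) for pid in unique_ids}

    stats = np.empty(n_boot)
    for b in range(n_boot):
        sampled = rng.choice(unique_ids, size=len(unique_ids), replace=True)
        # A patient drawn twice contributes all of their rows twice.
        rows = np.concatenate([rows_by_patient[pid] for pid in sampled])
        stats[b] = r_squared(y_true[rows], y_pred[rows])

    lower, upper = np.quantile(stats, [alpha / 2, 1 - alpha / 2])
    return lower, upper

# Toy data: 50 patients with two observations each and slightly noisy predictions.
demo_rng = np.random.default_rng(1)
demo_ids = np.repeat(np.arange(50), 2)
demo_true = demo_rng.normal(size=100)
demo_pred = demo_true + demo_rng.normal(scale=0.1, size=100)
lower, upper = cluster_bootstrap_ci(demo_ids, demo_true, demo_pred, n_boot=200)
```

Resampling individual observations instead of patients would treat correlated observations from the same patient as independent and would tend to produce intervals that are too narrow.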

Cite this article

Pierson, E., Cutler, D.M., Leskovec, J. et al. An algorithmic approach to reducing unexplained pain disparities in underserved populations. Nat Med 27, 136–140 (2021). https://doi.org/10.1038/s41591-020-01192-7

DOI: https://doi.org/10.1038/s41591-020-01192-7
