Abstract

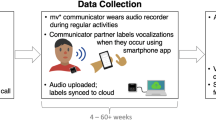

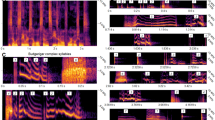

Human social life is rich with sighs, chuckles, shrieks and other emotional vocalizations, called ‘vocal bursts’. Nevertheless, the meaning of vocal bursts across cultures is only beginning to be understood. Here, we combined large-scale experimental data collection with deep learning to reveal the shared and culture-specific meanings of vocal bursts. A total of n = 4,031 participants in China, India, South Africa, the USA and Venezuela mimicked vocal bursts drawn from 2,756 seed recordings. Participants also judged the emotional meaning of each vocal burst. A deep neural network tasked with predicting the culture-specific meanings people attributed to vocal bursts while disregarding context and speaker identity discovered 24 acoustic dimensions, or kinds, of vocal expression with distinct emotion-related meanings. The meanings attributed to these complex vocal modulations were 79% preserved across the five countries and three languages. These results reveal the underlying dimensions of human emotional vocalization in remarkable detail.

Data availability

The data associated with this manuscript are available upon reasonable request to the corresponding authors.

Code availability

Code associated with this study, including the functions to perform PPCA, is available in the following Zenodo repository: https://doi.org/10.5281/zenodo.7111972.

Acknowledgements

This work was supported by Hume AI as part of its effort to advance emotion research using computational methods. The funders had no role in study design, data collection and analysis, decision to publish or preparation of the manuscript.

Author information

Contributions

A.S.C. and D.K. designed the experiment. L.K., M.O., X.F., M.M., R.C., J.M. and A.S.C. implemented the study design and collected data. J.A.B., P.T., A.B. and A.S.C. analysed data. J.A.B. and A.S.C. interpreted results and created figures. J.A.B. and A.S.C. drafted the manuscript. All authors provided critical revisions and approved the final manuscript for submission.

Ethics declarations

Competing interests

The authors declare no competing interests.

Peer review

Peer review information

Nature Human Behaviour thanks the anonymous reviewers for their contribution to the peer review of this work.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary information

Supplementary Figs. 1–7 and Tables 1–3.

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Brooks, J.A., Tzirakis, P., Baird, A. et al. Deep learning reveals what vocal bursts express in different cultures. Nat Hum Behav 7, 240–250 (2023). https://doi.org/10.1038/s41562-022-01489-2

DOI: https://doi.org/10.1038/s41562-022-01489-2