Abstract

Detecting and learning temporal regularities is essential to accurately predict the future. A long-standing debate in cognitive science concerns the existence in humans of a dissociation between two systems, one for handling statistical regularities governing the probabilities of individual items and their transitions, and another for handling deterministic rules. Here, to address this issue, we used finger tracking to continuously monitor the online build-up of evidence, confidence, false alarms and changes-of-mind during sequence processing. All these aspects of behaviour conformed tightly to a hierarchical Bayesian inference model with distinct hypothesis spaces for statistics and rules, yet linked by a single probabilistic currency. Alternative models based either on a single statistical mechanism or on two non-commensurable systems were rejected. Our results indicate that a hierarchical Bayesian inference mechanism, capable of operating over distinct hypothesis spaces for statistics and rules, underlies the human capability for sequence processing.

Data availability

The dataset presented in the current study is available on GitHub (https://github.com/maheump/Emergence).

Code availability

The MATLAB code used to run simulations of the different models, analyse the results and reproduce all the figures is available on GitHub (https://github.com/maheump/Emergence).

Acknowledgements

M.M. was supported by a ‘Frontières du Vivant’ doctoral fellowship involving the Ministère de l’Enseignement Supérieur et de la Recherche and the Fondation Bettencourt Schueller, as well as a Fondation pour la Recherche Médicale doctoral fellowship. This research was funded by Institut National de la Santé et de la Recherche Médicale (to S.D.), Commissariat à l’Energie Atomique (to S.D. and F.M.), Collège de France (to S.D.) and a European Research Council grant ‘NeuroSyntax’ ID 695403 funded under H2020-E.U.1.1. (to S.D.). The funders had no role in study design, data collection and analysis, decision to publish or preparation of the manuscript. We thank the people who participated in the study as well as I. Brunet for her help in data acquisition. We thank L. Berkovitch and J. Pesnot-Lerousseau for help in piloting the experiment. We also thank A. Akrami and her group, M. Chait, P. Domenech, S. Fleming and his group, L. Mallet and his group, K. N’Diaye, E. Procyk, J. Sackur, M. Sigman, V. Wyart, and the members of the Cognitive Neuroimaging Unit for useful discussions.

Author information

Contributions

Conceptualization: M.M., F.M., S.D.; formal analysis: M.M., F.M.; funding acquisition: S.D.; investigation: M.M.; methodology: M.M.; project administration: M.M.; software: M.M.; supervision: F.M., S.D.; visualization: M.M.; writing—original draft: M.M.; writing—review and editing: M.M., F.M., S.D.

Ethics declarations

Competing interests

The authors declare no competing interests.

Peer review

Peer review information

Nature Human Behaviour thanks the anonymous reviewers for their contribution to the peer review of this work. Peer reviewer reports are available.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Extended data

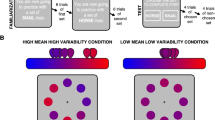

Extended Data Fig. 1 Experimental conditions.

H corresponds to the Shannon entropy of generative transition probabilities (in bits). In the case of statistical biases, the type of bias (that is, repetition-, alternation- and/or frequency bias) and its strength (that is, weak, intermediate or strong) is specified. Superscripts indicate sequences with matched (apparent) transition probabilities, also illustrated by the tree on the right border.
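As a rough illustration of the quantity H, the sketch below computes an entropy (in bits) from a pair of generative transition probabilities. It assumes a first-order binary chain over items A and B and reads H as the entropy rate weighted by the stationary distribution; the caption does not state the exact formula, so this reading and the names `binary_entropy` and `transition_entropy` are assumptions.

```python
import math


def binary_entropy(p):
    """Shannon entropy (bits) of a Bernoulli(p) variable."""
    if p in (0.0, 1.0):
        return 0.0
    return -(p * math.log2(p) + (1 - p) * math.log2(1 - p))


def transition_entropy(p_a_given_b, p_b_given_a):
    """Entropy rate (bits per item) of a first-order binary chain over {A, B}.

    Assumption: H is the average of the two conditional entropies,
    weighted by the stationary probabilities of A and B.
    """
    total = p_a_given_b + p_b_given_a
    if total == 0:  # the chain never leaves its current item: fully predictable
        return 0.0
    p_a = p_a_given_b / total  # stationary probability of item A
    return (p_a * binary_entropy(p_b_given_a)
            + (1 - p_a) * binary_entropy(p_a_given_b))
```

Under this reading, a fully random sequence (both transition probabilities equal to 1/2) gives 1 bit, and a strict alternation (both equal to 1) gives 0 bits.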

Extended Data Fig. 2 Categorization of sequences by participants and models.

Proportion of generative processes reported by participants and the different models for fully random sequences (a, averaged across the 10 fully random sequences), sequences with statistical biases (b), or a deterministic rule (c). Participants report the generative process using post-sequence questions. Models identify the generative process based on the maximum a posteriori probability over hypotheses at the end of the sequence. When the model is undecided (for example, when p(Hrule|y) ≈ p(Hstat|y) ≈ 1/2; with a precision of 0.001), the model chooses randomly among hypotheses. H corresponds to the Shannon entropy of generative transition probabilities (in bits). The probabilities p(A) and p(alternation) are analytically computed from generative transition probabilities. Stars denote significance of an exact binomial one-tailed test of the proportion of correct categorization larger than 1/3: *** P < 0.005, ** P < 0.01, * P < 0.05.
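The model's categorization step described above can be sketched as follows. This is a minimal illustration, not the authors' MATLAB code: the function name `categorize`, the dictionary layout and the way near-ties are detected (any hypothesis within `tol` of the maximum) are assumptions; only the tolerance of 0.001 and the random tie-break come from the caption.

```python
import random


def categorize(posteriors, tol=0.001, rng=random):
    """Return the maximum a posteriori hypothesis.

    `posteriors` maps hypothesis names to posterior probabilities at the
    end of the sequence. Hypotheses within `tol` of the maximum are
    treated as tied, and one of them is drawn at random (assumption).
    """
    best = max(posteriors.values())
    tied = [h for h, p in posteriors.items() if best - p <= tol]
    return tied[0] if len(tied) == 1 else rng.choice(tied)
```

For example, `categorize({"random": 0.1, "stat": 0.2, "rule": 0.7})` returns `"rule"`, whereas a posterior split evenly between `"stat"` and `"rule"` yields either label with equal probability.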

Extended Data Fig. 3 Normative causes of undetected statistical biases.

a, No later change-point occurrence. Sequences with undetected statistical biases were not characterized by a later occurrence of the change-point. b, Weaker evidence. Sequences with undetected statistical biases were characterized by a lower posterior probability of the statistical bias hypothesis estimated by the model at the end of the sequence. c, Less frequent detection of alternation biases. For both the participants and the model, alternation biases are more often missed than repetition and frequency biases (after controlling for the strength of statistical bias, by design). This is a signature of transition probability learning. Stars denote significance: *** P < 0.005, ** P < 0.01, * P < 0.05; ns stands for non-significant.

Extended Data Fig. 4 Different detection slope for statistical biases and deterministic rules.

The distribution of mean squared error (MSE) is plotted as a function of a grid of values of the sigmoid slope parameter (after minimizing the MSE over the other parameters of the sigmoid functions). Analyses were restricted to non-random sequences that were correctly identified by participants and shaded areas correspond to the standard error of the mean computed over participants. Stars denote significance: *** P < 0.005.
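The profiling procedure described above (an MSE curve over a grid of slopes, each point minimized over the remaining sigmoid parameters) can be sketched as follows. The two-parameter sigmoid (slope and midpoint), the grid search over the midpoint and all function names are assumptions made for illustration; the paper's sigmoid may have further parameters.

```python
import math


def sigmoid(x, slope, midpoint):
    """Two-parameter logistic function (assumed form)."""
    return 1.0 / (1.0 + math.exp(-slope * (x - midpoint)))


def mse(xs, ys, slope, midpoint):
    """Mean squared error between the sigmoid and the observed values."""
    return sum((sigmoid(x, slope, midpoint) - y) ** 2
               for x, y in zip(xs, ys)) / len(xs)


def profile_mse(xs, ys, slopes, midpoints):
    """For each slope on the grid, the lowest MSE over the midpoint grid."""
    return {s: min(mse(xs, ys, s, m) for m in midpoints) for s in slopes}
```

Comparing the resulting curves for statistical biases and deterministic rules then shows whether the best-fitting slopes differ between the two kinds of regularity.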

Extended Data Fig. 5 Exhaustive list of models.

The normative two-system model uses distinct hypothesis spaces for statistics and rules, and normatively arbitrates between them using a common probabilistic currency. By contrast, the normative single-system model uses the same hypothesis space for both statistics and rules: in this case, rules are detected based on the apparent bias in (high-order) transition probabilities they induce. For these models, the arbitration remains normative, except for the version in which the statistics and rule hypotheses are identical (that is, same prior distribution and same likelihood function), in which case several (linear and non-linear) arbitration functions based on item predictability are compared. Finally, the non-commensurable two-system model uses distinct hypothesis spaces for statistics and rules (as the normative two-system model), but does not normatively arbitrate between them. Instead, it uses one of several arbitration functions (linear, sigmoid, selection of maximum) based on the hypothesis (pseudo-)posterior probabilities. Several versions of those three classes of models are parameterized; the different parameters are: α, the order of transition probabilities which are estimated; d, the degree of bias towards predictable transition probabilities in the prior distribution; δ, the non-linearity in the function mapping item predictability to hypothesis posterior probabilities; β, the non-linearity (sigmoid) in the weighting of hypothesis (pseudo-)posterior probabilities.

Extended Data Fig. 6 Error metrics for model comparison.

a, Error measured on categorization profile. Model error is measured by comparing the model’s and participants' categorization of sequences. Participants report the generative process using post-sequence questions. Models identify the most likely generative process based on the posterior probability of each hypothesis at the end of the sequence. When models were undecided (that is, when two hypotheses have probability ½ ± 0.001, or three hypotheses have probability 1/3 ± 0.001; see bottom part of the matrix), the resulting error is divided among the equally likely hypotheses. b, Error measured on detection dynamics of deterministic rule. Model error is measured by comparing the model’s and the participants' detection dynamics of deterministic rules. A metric reflecting the smoothness of the dynamics is used: the absolute second-order derivative of the posterior probability of the deterministic rule hypothesis averaged in a period ranging from the change-point position to the end of the sequence. Each sequence (with a deterministic rule) is thus characterized by such a metric. c, Error measured on graded weighing of non-random hypotheses in random sequences. Model error is the residual error of the linear regression relating participants' belief difference in random sequences to the model’s, constrained to non-negative regression coefficients to prevent flips of data points between models and participants. The effect of observation number, together with its interaction with belief difference (Fig. 7c), is removed prior to this analysis (using a linear regression).
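The smoothness metric in panel b can be sketched as follows, approximating the second-order derivative by a discrete second difference. The function name `smoothness`, the 0-based `change_point` index and the finite-difference approximation are assumptions for illustration, not the authors' implementation.

```python
def smoothness(posterior, change_point):
    """Mean absolute second-order difference of a posterior time course.

    `posterior` is the sequence of posterior probabilities of the
    deterministic rule hypothesis, one value per observation;
    `change_point` is the 0-based index of the change-point (assumption).
    Only the period from the change-point to the end of the sequence
    enters the average.
    """
    segment = posterior[change_point:]
    if len(segment) < 3:
        raise ValueError("need at least three observations after the change-point")
    second = [segment[i + 1] - 2 * segment[i] + segment[i - 1]
              for i in range(1, len(segment) - 1)]
    return sum(abs(d) for d in second) / len(second)
```

A gradual, linear build-up of belief yields a value of 0, while an abrupt jump yields a larger value, so the metric separates graded from all-or-none detection dynamics.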

Extended Data Fig. 7 Normative single-system model arbitrating between non-random hypotheses using non-linear functions of predictability strength.

a, Non-linear functions of predictability strength. In the version of the normative single-system model in which Hstat and Hrule monitor the same transition order (which we varied) using the same prior beliefs regarding predictability (uniform prior), we explored several variants. Because, in this case, the two non-random hypotheses are strictly identical, the strength of predictability is used to arbitrate among non-random hypotheses: the further p(yk|y1:k–1) is from ½, where y is the sequence and k the current observation, the more likely Hrule. In the main text, we report results for posterior probabilities estimated as a linear function of predictability (called balanced). Here, we explored non-linear relationships: extremes, stat-preference and rule-preference. b/c, Error of alternative models with respect to participants’ categorization profile/detection dynamics of deterministic rules. Same as in Fig. 8.

Extended Data Fig. 8 Detection dynamics by participants and models.

a,b, Detection dynamics of statistical biases from the normative single-system model/non-commensurable two-system model. The posterior probability of the statistical bias hypothesis is aligned on the change-point position. Detection dynamics is plotted for each deterministic rule (columns), for each version of the models (rows), and across a range of parameter values (color-coded lines). Detection dynamics from the normative two-system model and the participants are overlaid on all plots as dotted and dashed lines respectively. c/d, Detection dynamics of deterministic rules from the normative single-system model/non-commensurable two-system model. The posterior probability of the deterministic rule hypothesis is aligned on detection-point. Same convention as in a/b.

Extended Data Fig. 9 Similarity of model inference for different maximum pattern lengths.

a, Correlation across all types of sequences. Correlation between posterior probabilities (after conversion to Cartesian coordinates to ensure an appropriate number of degrees of freedom) from different versions of the normative two-system model considering different maximum lengths for pattern detection. Versions of the model which use a maximum pattern length larger than the longest patterns (that is, 10 observations) used in the experiment make very similar inferences. b, Correlation across each type of sequence. Same as in a but separately for each type of sequence. The difference in inference between versions of the model using small maximum pattern lengths arises solely for sequences entailing deterministic rules, thereby suggesting that the difference solely originates from the longest patterns remaining undetected.

Supplementary information

Supplementary Information

Supplementary Notes 1 and 2, including Figs. 1–4.

About this article

Cite this article

Maheu, M., Meyniel, F. & Dehaene, S. Rational arbitration between statistics and rules in human sequence processing. Nat Hum Behav 6, 1087–1103 (2022). https://doi.org/10.1038/s41562-021-01259-6

DOI: https://doi.org/10.1038/s41562-021-01259-6
