Abstract

Governments worldwide have implemented countless policies in response to the COVID-19 pandemic. We present an initial public release of a large hand-coded dataset of over 13,000 such policy announcements across more than 195 countries. The dataset is updated daily, with a 5-day lag for validity checking. We document policies across numerous dimensions, including the type of policy, national versus subnational enforcement, the specific human group and geographical region targeted by the policy, and the time frame within which each policy is implemented. We further analyse the dataset using a Bayesian measurement model, which shows the quick acceleration of the adoption of costly policies across countries beginning in mid-March 2020 through 24 May 2020. We believe that these data will be instrumental for helping policymakers and researchers assess, among other objectives, how effective different policies are in addressing the spread and health outcomes of COVID-19.

Similar content being viewed by others

Main

Governments around the world have implemented a substantial number and variety of policies in reaction to the COVID-19 pandemic over a period of a few months. However, policymakers and researchers have, to date, lacked access to quality, up-to-date data they need for conducting rigorous analyses of whether, how and to what degree these fast changing policies have worked in mitigating the health, political and economic effects of the pandemic. To address this concern, we present the CoronaNet COVID-19 Government Response Event Dataset, which provides fine-grained, monadic and dyadic data on policy actions taken by governments across the world since the Chinese government first reported the COVID-19 outbreak on 31 December 2019. At the time of writing, the dataset covers the policy actions of 198 countries up until 24 May 2020 for a total of 13,398 events.

With the help of a team of over 260 research assistants (RAs) in 18 time zones, we are releasing the data on a daily basis. We are implementing a 5-day lag between data collection and release to evaluate and validate ongoing coding efforts for random samples of the data to ensure the best possible quality given the considerable time constraints. More specifically, the CoronaNet dataset collects daily data on government policy actions taken in response to COVID-19 across the following dimensions:

-

The type of government policy implemented (for example, quarantine or closure of schools (16 total)).

-

The level of government initiating the action (for example, national or provincial).

-

The geographical target of the policy action, if applicable (for example, national, provincial or municipal).

-

The human or material target of the policy action, if applicable (for example, travellers or masks).

-

The directionality of the policy action, if applicable (for example, inbound, outbound or both).

-

The mechanism of travel that the policy action targets, if applicable (for example, flights or trains).

-

The enforcement of the policy action (for example, mandatory or voluntary).

-

The enforcer of the policy action (for example, national government or military).

-

The timing of the policy action (for example, date announced and date implemented).

Although government responses to the COVID-19 pandemic have inaugurated considerable changes in how billions of people live their lives, they draw on the lessons learned from the long history of pandemics and epidemics that came before. Indeed, the earliest written sources document how ancient Mesopotamians responded to the constant threat of epidemic by, on the one hand, drawing on spiritual practices and, on the other hand, isolating people showing the first symptoms of a disease from others1,2. As time has marched forwards, pandemics and epidemics have consistently and significantly affected the course of human history3,4,5,6,7, and governments have continued to implement a variety of policies in response1,8,9. Throughout it all, the collection of reliable data has helped advance a collective understanding of which policies are effective in curbing the effects of a given disease outbreak10,11. This is no trivial task. Indeed, previous research on pandemics and epidemics suggests that a policy that is effective in one context may be ineffective in another due to a whole host of potentially conditioning factors, including the pathogenesis of the particular disease12,13, the characteristics of the underlying population14,15,16,17 and the available medical18,19 and communication20,21,22,23 technology at the time.

We believe that the data presented in this paper can similarly help policymakers and researchers assess how effective different policies are in addressing the spread and health outcomes of COVID-19 (ref. 24). While available research is necessarily preliminary, it suggests that the type of policies that governments have implemented in response to COVID-19 (refs. 25,26,27,28), when they decided to implement them29,30, who they were targeted towards31,32 and what state capacity they possessed to do so33,34 have all significantly influenced how the virus has affected health outcomes both within and across different country contexts35,36,37, all of which are readily captured by this dataset38,39. Equally important is understanding why countries adopt different policies, with early analyses suggesting that institutional and political factors, for example, the authoritarian or democratic nature of the institutions of a country40 or its level of political partisanship41, play an important role. These findings will not only help improve the global response to the current crisis but can also build an influential foundation of knowledge for responding to future outbreaks42,43.

Meanwhile, given the unexpected nature of the initial outbreak in Wuhan, China, the government policies that were made in reaction to the COVID-19 pandemic constitute the single largest natural experiment in recent memory, allowing researchers to improve causal inference in any number of fields. Indeed, government reactions to the COVID-19 pandemic may advance our understanding of a wide range of social phenomena, from the evolution of political institutions44,45,46,47,48 to the progression of economic development49,50,51,52,53 and the stability of financial markets54,55, to say nothing of what we might learn about environmental economics56,57, mental health58,59, disaster response60,61 and disaster preparedness62,63,64. One early analysis has already made use of our data to explore how lockdown policies affect political attitudes65. Other initial analyses suggest that the COVID-19 pandemic has already led to authoritarian backsliding in some countries66, unprecedented shocks to economies around the world67,68,69,70 and serious negative mental health effects for millions of people71,72. While scholars have always sought to understand how large-scale historical events have shaped contemporary phenomena, modern technological tools allow us to document such events more quickly and precisely than ever before.

Detailed documentation of such policies is all the more important given that policy choices made by one government often depend on the policy choices of other governments. The structure of the data we present in this paper allows researchers and policymakers not only to examine monadic policy information—that is, policies targeted to the same political unit that enacted it—but also directed, dyadic policy information—that is, policies targeted to a political unit that is different from the unit that enacted it. The dyadic data are not limited to only capturing foreign policy dynamics, such as when country A implements a policy that affects citizens of country B, but can also document dynamics within countries, such as when central governments enact policies targeted to subnational political entities. Given its dyadic structure, the dataset further enables critical analyses of the links and interdependencies between and within countries, including patterns of policy learning and diffusion across governments, as well as of cooperative and antagonistic relationships in global crisis governance.

In the following sections, we provide a description of the data and then an application of the data, in which we model policy activity of countries over time. Using a Bayesian dynamic item–response theory model, we produce a statistically valid index that categorizes countries in terms of their responses to the pandemic and show how quickly policy responses have changed over time. We document clear evidence of rapid policy diffusion of harsh measures in response to the virus. In the Methods section, we provide a thorough discussion of the methodology used to collate the dataset and to manage more than 260 RAs coding this data around the world in real time and to create this index.

Results

In this section, we first present some descriptive statistics that illustrate how government policy towards COVID-19 has varied across key variables. We then present our index for tracking how active governments have been with regard to announcing policies targeting COVID-19 across countries and over time.

Descriptive statistics

Here, descriptive statistics for key variables available in the dataset are discussed. In Table 1, for each policy type we present cumulative totals for the number of policies and the number of countries that have implemented that policy, an average value for the number of countries a policy targets and percentages in terms of how stringently a policy is enforced. While we highlight the number of targeted countries in this table, we note that our data also captures other potential geographical targets not shown in the table. For instance, it is possible for a national policy to be targeted towards one or more subnational provinces or for a provincial policy to be targeted towards one or more subprovincial regions. Table 1 shows that the policy most governments have implemented in reaction to COVID-19 is external border restrictions; that is, policies that seek to limit entry or exit across different sovereign jurisdictions. We found that 188 countries made 1,122 policy announcements about such restrictions since 31 December 2019. The second policy that most countries, by our count 171, have implemented is closure of schools, of which we document 1,441 such policies.

Meanwhile, the policy that has been implemented the most number of times, at 2,638, is health resources; that is, policies that seek to secure the availability of health-related materials (for example, masks), infrastructure (for example, hospitals) or personnel (for example, doctors) to address the pandemic. The next most common policy in terms of the number of times it has been implemented, at 1,833, includes those that impose restrictions on non-essential businesses.

However, we note that a strict comparison of policy types by this metric is not perfect given that, for example, there may be a need for more individualized policies regarding external border restrictions (given the number of countries that a government can restrict travel access to) as opposed to closing schools. We also note that we have more possible subtypes for documenting health resources in the survey (21 subtypes) than restrictions of non-essential businesses (7 subtypes). In the next subsection, we provide a more rigorous method of comparing policies while taking into account their depth.

Our dataset also shows that the majority of countries in the world are a target of an external border restriction, quarantine measure or health-monitoring measure from another country. Moreover, a high percentage of policies documented in our dataset have mandatory enforcement.

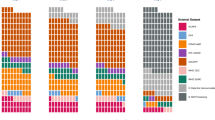

In Fig. 1, we present the cumulative incidence of different types of policies in our data over time. The figure shows that arguably relatively easy-to-implement policies, such as external border restrictions, the forming of task forces, public awareness campaigns and efforts to increase health resources, came relatively early in the course of the pandemic. Relatively more difficult policies to implement, such as curfews, closures of schools, restrictions of non-essential businesses and restrictions of mass gatherings, arrived later.

We also explored the extent to which other countries are affected by policies that can have a geographical target outside the policy initiator (for example, external border restrictions, quarantine) across time. For example, in Fig. 2, we map a network of bans on inbound flights initiated by four European countries as of 15 March 2020: Italy, Greece, Albania and Romania. In the plot, each horizontal line represents a particular country (called a ‘node’ in network terminology). The vertical lines denote whether there was such a flight ban between two countries (an ‘edge’ or ‘link’ in network terminology) and the arrow of the vertical line indicates the direction in which the ban is applied (in network terminology, this allows us to capture directed dyads). For instance, the purple horizontal line represents Greece, and the vertical line connecting Greece to Italy shows that there was a flight ban between these two countries. The arrow pointing upwards towards Italy shows that it was Greece that directed the flight ban against Italy. See ref. 73 for more information on how to interpret this plot.

This figure shows a network of bans on inbound flights initiated by four European countries (Italy, Greece, Albania and Romania) as of 15 March 2020. In the plot, each horizontal line represents a particular country. The vertical lines denote whether there was such a flight ban between two countries, and the arrow of the vertical line indicates the direction in which the ban is applied.

Extended Data Fig. 1 depicts travel bans initiated by all European countries as of 15 March 2020. It shows that the governments of Poland and San Marino had banned all flights into Poland and San Marino, respectively, while the government of Italy banned incoming flights from China, Hong Kong, Macau and Taiwan. Additionally, the governments of Greece and Romania both banned flights from Italy, while the government of Albania banned incoming flights from Greece. According to our data, up until this point in time, no other European government at the national level had banned inbound flights from other countries. The availability of such dyadic data in this dataset may improve inference for any number of analyses that seek to investigate how actions undertaken by different governmental units are linked, including, for example, on how policies in one country affect health outcomes in another country.

Government policy activity index

In this section, we briefly present our index for tracking the relative government activity with regard to policies targeting COVID-19 across countries and over time. The model is a version of item–response theory known as ideal point modelling, which incorporates over-time trends74,75,76,77,78,79 and permits inference on how a latent construct, in this case total policy activity, responds to changes in the pandemic. To fit the model, the different policy types shown in Table 1, and subpolicies within them, were coded in terms of ordinal values, with lower values for subnational targets of policies and higher values for policies applying to the entire country or, in the case of external border restrictions, to one or more external countries. For instance, internal country policies can take on three possible values: no policy, subnational policy or policy covering the whole country. Meanwhile external border restrictions can take on four possible values: no policy, policy targeting one other country, policy targeting multiple countries or policy targeting all countries in the world (that is, border closure).

We employed ideal point modelling because it can be given a latent utility interpretation75. We assumed that each country has an unobserved ‘ideal point’ on a unidimensional space representing its willingness to impose policies, while each policy likewise has a position on the same space. The relative cost of different policies can be thought of as the distance between the ideal point of a country and the ideal point of the policy relative to other policies. While the meaning of this implied cost will vary from country to country, it is probably a combination of the social, political and economic costs of implementing the policy at a given time point.

As countries become more willing to pay the implied cost (that is, the latent distance between country and policy decreases), the ideal points/policy activity score of that country will rise and they will implement more policies. This interpretation is similar to the traditional item–response theory approach for analysing test questions in which students who correctly answer more questions on a test are considered to have higher ‘ability’80,81. Following this logic, we are able to estimate latent country scores that represent the readiness of a country to impose a set number of policies. The implied cost of policies is estimated via discrimination parameters, which indicate how strongly policies discriminate between countries.

The country-level policy activity score is further allowed to vary over time in a random-walk process with a country-specific variance parameter to incorporate heteroskedasticity77. Incorporating over-time trends explicitly is important for capturing the nuances of policy implementation over time. For example, countries that impose more restrictive policies at an earlier date will be rewarded with higher policy activity scores compared with those who impose such policies at a later date. Imposing a given policy when most countries have already imposed them will result in little, if any, change in the policy activity score.

The advantage of employing a statistical model rather than simply summing across policies is that the index ends up as a weighted average, whereby the weights are derived from the probability that a certain policy is implemented. In other words, while many countries set up task forces, relatively few imposed curfews at an early stage. As a result, the model adjusts for these distinctions, producing a score that aggregates across the patterns in the data.

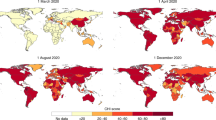

Furthermore, because the model is stochastic, it is robust against some of the coding errors that often occur in these types of datasets. As we discuss in the validation section, while we are continuing to validate the data on a daily basis, the massive speed and scope of data collection means that we cannot identify all issues with the data in real time. However, the measurement model employed only requires us to assume that on average the policy codings are correct, not that they are correct for each instance. Coding error, such as incorrectly selecting a policy type, will propagate through the model as higher uncertainty intervals, but will not affect average posterior estimates. As our data quality improves and we are able to collect more data over time, the model will produce more variegated estimates with smaller uncertainty intervals. Figure 3 shows the estimated index scores for the 198 countries in our dataset up until 24 May 2020, and suggests strong evidence of policy diffusion effects. While information about COVID-19 existed at least as early as January 2020, we do not see large-scale changes occurring in activity scores until March 2020. Furthermore, the trajectories are highly nonlinear, with a large number of countries quickly transitioning from relatively low to relatively high scores. This nonlinear movement could be due to a variety of factors, including the rapid spread of the virus and policy learning as states observe policy actions from other states. For an interactive version of this figure, please see our website (https://coronanet-project.org). We note that the country that appeared to take the quickest action in the shortest amount of time is New Zealand, as can be seen in Fig. 4, where we show over-time variance parameters for each country. We further validate the model’s over-time process by estimating a static item–response theory model for each day in the sample. In Fig. 5, we plot the results for six countries separately; a fuller explanation of our model validation strategy and of Fig. 5 is provided in the Methods section.

Estimates are derived from Stan, a MCMC sampler. Median posterior estimates are shown. a, Full distribution of countries. b, Each month is shown separately, with the top three countries for that month highlighted in terms of increases in activity scores from start of the month to the end of the month.

This figure shows the country-level variance (over-time change) parameters from the estimation of the policy activity index. Each estimate is the median posterior country-specific variance (heteroskedasticity) parameter reflecting the average amount of over-time change in the estimates. Scale is the original (logit) scale. Error bars represent the 5–95% high posterior density interval.

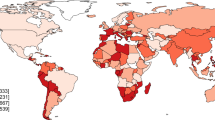

Of course, a caveat with the index is that we may be missing some policy measures that have occurred, which is due to difficulty in finding them in published sources. However, there is still clear differentiation within the index in terms of when policies were imposed, with some countries starting to impose policies much earlier than others. Furthermore, there is a clear break around 1 March 2020, when countries began to impose more stringent policies across the world. Table 2 shows the discrimination parameters from the underlying Bayesian model for each policy type. These parameters suggest which policies governments find relatively difficult or costly to implement, and for that reason, tends to separate more active from less active states in terms of responses to COVID-19. Two of these policies (closure of restaurants and quarantine at home) were given fixed values to identify the direction and rotation of the latent scale; therefore, their discrimination parameters are not informative. These policies were chosen as a priori, so we can identify them as being relatively high cost. However, the rest of the parameters were allowed to float, which provides inference as to which policies appear to be the most difficult or costly to implement.

We note that these are average values for the sample. Imposing these policies may be less costly for certain countries or for countries that share certain characteristics, such as having smaller numbers of enrolled students or relatively healthy economies. However, it is important to note that we can see these patterns on a worldwide scale.

At the top of the index, we see various business closure policies as the most difficult to implement, while school closures are the next most difficult. Closure of pre-schools, though, as opposed to other school types, appears to be relatively less costly for states to undertake, perhaps because pre-schools do not operate on a full-time basis. Internal border restrictions are considered more difficult to implement than external border restrictions, while relatively straightforward policies such as public awareness campaigns, health monitoring and opening new task forces or bureaus are near the bottom of the index. Quarantines placing people in external facilities, such as hotels or government quarantine centres, are also estimated as being less costly than quarantine at home (stay-at-home orders).

Given this distribution of discrimination parameters, we believe that the index is a valid representation of the underlying process by which governments progressively impose more difficult policies. As states relax policies, we will further gain information about which policies appear to be more costly, as we will be able to factor in the duration for which these policies were implemented. Consistent with our findings, we observe that the announced relaxation policies happening at the time of writing in European countries primarily centre on businesses and school openings, which suggests that these policies are uniquely costly to keep in place compared to travel restrictions82.

Discussion

As policymakers, researchers and the broader public debate and compare how to succeed against the novel threats posed by COVID-19, they need real-time, traceable data of government policies to understand which of these policies are effective and under what conditions. This requires specific knowledge of the variation of such policies and how widely implemented they are across countries and time. The goal of the dataset and policy action index presented here is to provide this information.

We have tried to match our data collection efforts to keep up with the exponential speed at which COVID-19 has already upended global public health and the international economy while also maintaining high levels of quality. However, we will inevitably be refining, revising and updating our data to reflect new knowledge and trends as the pandemic unfolds. The data that we present here represent an initial release; we will continue to validate and release data as long as governments continue to develop policies in response to COVID-19.

In future work, we intend to analyse the policy combinations that are best able to stymie the pandemic to contribute to the research community and provide urgently needed knowledge for policymakers and the wider global public.

Methods

In this section, we first describe the variables that our dataset provides and how they are organized. We then provide detail on the methodology we employed to collect the data. Following this, we provide more detail on the methodology we employed to estimate our government policy action index.

Data schema

Each unique record documents at the minimum the following information: the policy type; the name of the country from which a policy originates (if the policy originates from a province or state, that information is also documented; future versions of the dataset will also include information on whether a policy was initiated from a city or municipality or another level of government); the degree to which a policy must be complied with; the entity enforcing the policy; and the date a policy is announced, implemented and ends. Note that sometimes policies are announced without a predetermined end date. In these cases, this field is left blank.

For all policies, the database further documents information about the geographical target of the policy and the human or material target of a policy. Note, however, for some policies, the geographical target may be the same as the policy initiator, and in these cases can be considered monadic. Where applicable, we also document the directional flow of the policy and what mechanism of travel (for example, flights or trains) a policy targets.

All of the above-mentioned information is also qualitatively provided via a textual event description. Additional meta-data that are available for all policies include when the record entered the database and a link for the information source for the policy. See Supplementary Methods (appendix A) for a list of currently available fields in the data, along with a list of external data variables such as country-level covariates that are added to daily releases, including COVID-19 tests and cases.

There is a unique record identity (ID) for each unique policy announcement per initiating country, which we code at the policy subtype. That is, some policy types are further categorized into subtypes. For example, ‘quarantine’ can be further classified into one or more of the following subtypes: ‘self-quarantine’, ‘government quarantine’, ‘quarantine outside the home or government facility’ and ‘other’. Of the 13,398 such events in the dataset, we identified 11,172 unique events. That is, some events in the database are updates or changes to existing policies. We link such events over time using a unique ID, which we term the policy ID as opposed to the record ID. An event counts as an update if it deals with a change in the following parameters:

-

1.

The time duration (for example, a country lengthens its quarantine to 28 days from 14 days.).

-

2.

The quantitative ‘amount’ of the policy (for example, a restriction of mass gatherings was previously set at 100 people and now it is set at 50 people).

-

3.

A set of other policy dimensions, such as the following:

-

a.

Who the policy applies to (for example, the quarantine used to apply to people of all ages and now it only applies to the elderly).

-

b.

The directionality of the policy (for example, a travel ban previously banned inbound flights from country X and now bans both inbound and outbound flights to and from country X).

-

c.

The travel mechanism (for example, a travel ban was previously applied to all types of travel but now only applies to flights).

-

d.

The compliance rules for the policy (for example, the quarantine used to be voluntary but is now mandatory).

-

e.

The enforcer of a policy (for example, the policy was previously under the purview of the ministry of health but changed to the ministry of the interior).

A policy counts as a new entry and not an update if it deals with a change in any other dimension, for example, the qualitative policy type (for example, a quarantine used to mandate a stay in a government facility but now quarantine at home is allowed) or the targeted country (for example, a quarantine upon arrival was mandated for people travelling from China but now these rules also apply to people travelling from Italy). In these cases, or when a policy is completely cancelled or annulled, the policy is coded as having ended.

Data collection methodology

As researchers learn more about the various health, economic and social effects of the COVID-19 pandemic, it is crucial that, to the greatest extent possible, they have access to data that are reliable, valid and timely. We adopted a data collection methodology that we believe optimizes all three of these constraints.

To collect the data, we recruited more than 260 RAs from colleges and universities around the world, representing 18 out of the 24 time zones. Large social scientific datasets typically rely on experts, coders or crowd-sourcing to input data. The literature has shown that common coding tasks can be completed via crowd-sourcing83,84, but that there are also limitations to the wisdom of crowds when specific contextual or subject knowledge is required85,86. To address these trade-offs, we decided to train current RAs to code our entries, leveraging the benefits of widespread recruitment and a diverse pool of country-specific knowledge from across the globe. Data collection started on 28 March 2020 and has proceeded rapidly, reaching 13,398 records as of the date of writing this article. Each RA is responsible for tracking government policy actions for at least one country. RAs were allocated on the basis of their background, language skills and expressed interest in certain countries87. Note that depending on the level of policy coordination at the national level, certain countries were assigned multiple RAs, for example, the United States, Germany and France.

We have also partnered with the machine-learning company Jataware to automate the collection of more than 200,000 news articles from around the world related to COVID-19. Jataware employs a natural language processing classifier using bidirectional encoder representations from transformers to detect whether a given article is indicative of a governmental policy intervention related to COVID-19. They then apply a secondary natural language processing classifier to categorize the type of policy intervention based on the definitions in our codebook (for example, ‘declaration of emergency’ and ‘quarantine’, among others). Next, Jataware extracts the geospatial and temporal extent of the policy intervention (for example, ‘Washington DC’ and ‘March 15, 2020’) whenever possible. The resulting list of news sources is then provided to our RAs as an additional source for manual coding and further data validation.

In the following sections, we describe in greater detail how RAs document the policies that they identify using our data collection software instrument, and our post data-collection validation procedure. Please refer to the Supplementary Methods (appendix B) for more information on our procedure for on-boarding and training RAs and our system for communicating with and organizing RAs.

Data collection software instrument

We designed a Qualtrics survey with survey questions to systematize and streamline the documentation of a given government policy over a wide range of dimensions. With this tool, RAs can easily and efficiently document information about different policy actions by answering the relevant questions posed in the survey (Büthe, T., Minhas, S. and Lieu, T., unpublished manuscript). For example, instead of entering the country that initiated a policy action into a spreadsheet, RAs answer the following question in the survey: ‘From what country does this policy originate?’. They then choose from the available options given in the survey.

By using a survey instrument to collect data, we are able to systematize the collection of very fine-grained data while minimizing coding errors common to tools such as shared spreadsheets. The value of this approach of course depends on the comprehensiveness of the questions posed in the survey, especially in terms of the universe of policy actions that countries have implemented for COVID-19. For example, if the survey only allowed RAs to select ‘quarantines’ as a government policy, it would not capture any data on ‘external border restrictions’, which would seriously reduce the value of the resulting data.

As such, to ensure the comprehensiveness of the data, before designing the survey, we collected in-depth, over-time data on policy actions taken by one country since the beginning of the outbreak, Taiwan, as well as cross-national data on travel bans implemented by most countries for a total of 245 events. The specific data source we cross-referenced for this effort was the 20 March 2020 version of a New York Times article on travel restrictions across the globe88.

We chose to focus on Taiwan because of its relative success, as of 28 March 2020, in limiting the negative health consequences of COVID-19 within its borders89. As such, it seemed probable at the time that other countries would choose to emulate some of the policy measures that Taiwan had implemented, thereby bolstering the comprehensiveness of the questions we ask in our survey. Indeed at the time of writing, it would appear that some countries have indeed sought to mirror some parts of the response implemented by Taiwan90.

Meanwhile, by also investigating variations in how different countries around the world have implemented travel restrictions, we have helped ensure that our survey is able to comprehensively document variations in how an important and commonly used policy tool is applied; for example, restrictions on different methods of travel (for example, flights and cruises), restrictions across borders and within borders, restrictions targeted towards people of different statuses (for example, citizens and travellers).

There are many additional benefits of using a survey instrument for data collection, especially in terms of ensuring the reliability and validity of the resulting data, including the following reasons:

-

1.

Preventing unforced measurement error: RAs are prevented from entering data into incorrect fields or unknowingly overwriting existing data—as would be possible with manual data entry into a spreadsheet—because RAs can only document one policy action at a time in a given iteration of a survey and do not have access to the full spreadsheet when they are entering in the data.

-

2.

Standardizing responses: we are able to ensure that RAs can only choose among standardized responses to the survey questions, which increases the reliability of the data and reduces the likelihood of measurement error. For example, when RAs choose different dates that we would like them to document (for example, the date a policy was announced), they are forced to choose from a calendar embedded in the survey that systematizes the day, month and year format that the date is recorded in.

-

3.

Minimizing measurement error: a survey instrument allows coding of different conditional logics for when certain survey questions are posed. This technique obviates the occurrence of logical fallacies in our data. For example, we are able to avoid situations whereby a RA might accidentally code the United States as having closed all schools in another country.

-

4.

Reduction of missing data: we are able to reduce the amount of missing data in the dataset by using the forced response option in Qualtrics. Where there is truly missing data, there is a text entry at the end of the survey where RAs can describe what difficulties they encountered in collecting information for a particular policy event.

-

5.

Reliability of the responses: we increase the reliability of the documentation for each policy by embedding descriptions of different possible responses within the survey. For example, in the survey question where RAs are asked to identify the policy type (‘type’ variable, see Supplementary Methods (appendix A)), the survey question includes pop-up buttons that allow RAs to easily access descriptions and examples of each possible policy type. Such pop-up buttons were also made available for the survey questions that code for the people or materials a policy was targeted at (‘target_who_what’) and whether the policy was inbound, outbound or both (‘target_direction’). Embedding such information in the dataset both clarifies the distinction between different answer choices and increases the efficiency of the policy documentation process (as RAs are not obliged to refer back and forth from the survey to the codebook).

-

6.

Linking observations: the use of a survey instrument facilitates the linking of policy events together over time should there be updates to existing policies. Once coded, each policy is given a unique record ID, which RAs can easily look up, reference and link to if they need to update a particular policy.

Post-data collection validation checks

We further implement the following processes to validate the quality of the dataset:

-

1.

Cleaning: before validation, we use a team of RAs to check the raw data for logical inconsistencies and typographical errors. The data will also become part of a larger effort commissioned by the World Health Organization to collate different datasets on government actions taken in response to COVID-19. To that end, future versions of the data will be further cleaned with resources from this collaborative effort91.

-

2.

Multiple coding for validation: others have shown that the random allocation of tasks and the validation of labels by more than one coder are among the best ways to improve the quality of a dataset92,93. We randomly sample 10% of the new entries in the dataset using the source of the data (for example, newspaper article, government press release) as our unit of randomization. We use the source as our unit of randomization because one source may detail many different policy types. We then provide this source to a fully independent RA and ask her to code for the government policy contained in the sampled source in a separate, but identical, survey instrument. If the source is in a language the RA cannot read, then a new source is drawn. The RA then codes all policies in the given source. This practice is repeated a third time by a third independent coder. Given the fact that each source in the sample is coded three times, we can assess the reliability of our measures and report the reliability score of each coder.

-

3.

Evaluation and reconciliation: we then check for discrepancies between the originally coded data and the second and third coding of the data through two primary methods. First, we use majority voting to establish a consensus for policy labels. Using the majority label as an estimate of the ‘hidden true label’ is a common method to address classification problems94. One issue with this approach is that it assumes that all coders are equally competent95. This criticism is generally levied at data creation with crowd-sourced labourers. We mitigate this problem by training our RAs in the data collection process and prioritizing the country-knowledge and language skills of RAs, therefore ensuring a more equal baseline for RA quality. In addition, we will provide RA identification codes that will allow users to evaluate coder accuracy.

If the majority achieves consensus, then we consider the entry valid. If a discrepancy exists, a fourth RA or principal investigator makes an assessment of the three entries to determine whether one, some or a combination of all three is most accurate. Reconciled policies are then entered in the dataset as a correction for full transparency. If a RA was found to have made a coding mistake, then we sample six of their previous entries: three entries that correspond to the type of mistake made (for example, if the RA incorrectly codes an external border restriction as a quarantine, we sample three entries for which the RA has coded a policy as being about a quarantine) and randomly sample three more entries to ascertain whether the mistake was systematic or not. If systematic errors are found, entries coded by that individual will be entirely re-coded by a new RA.

At the time of writing, we are in the process of completing our second coding of the validation sample. Thus far, 297 policies have been double coded—276 double-coded policies after excluding the category ‘other policies’ from the analysis—out of the original 500 randomly selected policies included in our validation set. This is equivalent to 10% of the first 5,000 policies in the dataset. We will gradually expand the validation set until we cover 10% of all observations.

We provide several measures in Table 3 to evaluate the inter-coder reliability at this early stage of validation. We find remarkable heterogeneity in the inter-coder reliability across types of policies. Our coders show a substantial level of agreement on policies such as restrictions of mass gatherings (n = 21, k = 0.95), closure of schools (n = 14, k = 0.92), restrictions of non-essential businesses (n = 19, k = 0.89), external border restrictions (n = 52, k = 0.83), curfew (n = 6, k = 0.82) and internal border restrictions (n = 11, k = 0.80). However, we also observe poor inter-rater agreement scores in other policies such as social distancing (n = 14, k = 0.38), public awareness measures (n = 15, k = 0.49) and new task force, bureau or administrative configuration (n = 9, k = 0.52). Overall, these statistics indicate substantial levels of overall agreement between coders, with inter-coder reliability scores between 0.71 and 0.74 (n = 276).

Our initial assessment of miscodings suggests that our coders have difficulties in distinguishing social distancing policies from quarantine/lockdowns and public awareness campaigns. We have taken some steps to ameliorate these issues. First, we have recently separated quarantine from lockdowns in our codebook and survey. Second, we have added branching logic to the Qualtrics survey that also clarifies the specific subpolicies that fall under quarantine, lockdowns and social distancing. Additionally, we have added several subtypes of public awareness campaigns in the survey that should provide conceptual clarity to this policy category. Furthermore, the creation of a new task force, bureau or administrative configuration often goes together with a number of additional policies. In these cases, some of our coders seem to focus on these additional policies rather than on the creation of administrative units, which lowers the reliability of the coding system for this policy. We have provided RAs with better guidance on this category and have added several subtypes for this question to help improve conceptual clarity for this policy category. Finally, we detected extremely poor reliability for the health-related policies of health monitoring and health testing. We have clarified the distinction across the three health-related policies—namely, health resources, health monitoring and health testing—in the codebook and we combine them under the category of health measures in this ongoing validation.

In the subsequent weeks, we expect inter-coder reliability scores to improve as a consequence of three processes: (1) our coders are becoming more experienced with the codebook and the coding tasks in general; (2) we are cleaning the dataset of obvious errors and logical inconsistencies; and (3) we are working on clarifying and improving the codebook and the coding system. Notwithstanding these processes, we acknowledge that some ambiguities will unavoidably remain, which provides evidence for the utility of our planned majority-voting validation strategy.

Time-varying item response model

Our time-varying item response model follows the specification in Kubinec96. We review that notation here to show how it relates to classical item–response theory and the ideal point modelling literature.

The likelihood function for the model is as follows for a set of countries i ∈ I, items j ∈ J, time points t ∈ T and ordinal categories k ∈ K:

In this equation, the time-varying country parameters αit, also called person abilities or ideal points, are our estimate of policy activity scores. They are jointly estimated with the item (policy type) discrimination parameters γj and item difficulty (intercept) parameters βj. To address the ordinal nature of the outcome Yijtk, ordinal cutpoints ck are used to model the varying levels of enforcement and geographical targets in the data. The logit function, represented by ζ (⋅), maps the latent scale to the probability that a given ordinal outcome is chosen. Because we have two separate types of ordered measures (domestic versus international policies) with either three or four ordered categories, we jointly estimate the model as two ordered logit specifications.

The likelihood in equation (1) is not fully identified due to possible scaling issues with the latent variable αit (that is, it has no natural units) and due to potential sign reflection (also called multimodality) where L(Yijtk) could be unchanged even if αit is multiplied by –1. These identification issues are well known in the literature76, and we resolve them with standard practices. First, we assign a reasonably informative prior distribution on the t = 1 ideal points as follows:

We also fix the discrimination parameters γj for two items, quarantines and restriction of restaurants and bars, to opposite ends of the latent scale (+1 and –1). Because both of these variables load on the same side of the scale (that is, both indicate more policy activity), we reverse the order of the categories for restriction of restaurants and bars. We note that these types of restrictions are not commonly used in traditional item–response theory, whereby a sign restriction is imposed on all discrimination parameters. We employ the more flexible ideal point specification, which also allows us to test the assumption that all the discrimination parameters load on the same sign (as Table 2 shows, this is true for all of the parameters). The rest of the parameters are given weakly informative prior distributions (note a prior is put over the difference of cutpoints, rather than the cutpoints themselves, to reflect the fact that only the differences between cutpoints have any natural scale):

Finally, to model the policy scores αit as a random walk, we assign a prior that is equal to the prior period policy score plus normally distributed noise as follows:

The over-time dimension induces a new source of identifiability issues, which we resolve by fixing the variance σi of one of the countries (the United States) to 0.1 so that the over-time variance is relative to this constant. This constraint has a similar identification effect to the informative prior on the first period policy activity scores in equation (2).

Model convergence

For estimation, we sample from four Markov chain Monte Carlo (MCMC) chains with over-dispersed starting values using Stan, a Hamiltonian Markov chain Monte Carlo (HMC) sampler97. We run the sampler for 800 iterations, 400 of which are discarded as a warm-up. While this number of iterations is far fewer than other MCMC samplers, HMC is far more efficient at exploring the posterior density, and we were able to achieve convergence using this number of iterations.

We assess convergence using split-\(\hat{R}\) by fitting four independent chains with over-dispersed starting values. \(\hat{R}\) values for all parameters (which totalled more than 40,000) were 1.01 or less (see plot A in Extended Data Fig. 2). Plot B in Extended Data Fig. 2 shows the distribution of effective number of samples for the parameters, which is a way of comparing the autocorrelation in MCMC draws to independent draws without autocorrelation, such as we might obtain from a Monte Carlo simulation. Again, the number of effective samples is high, often exceeding the total number of empirical draws. This occurred because Hamiltonian Monte Carlo can produce more informative samples than even a Monte Carlo simulation because it can generate negatively correlated draws that explore the posterior space much more quickly. We also assess convergence using trace plots, one of which is shown below for the time-varying country policy activity scores for the United States. Strong mixing between chains can be observed in the plot. Finally, we report no divergent transitions or iterations where the sampler reached its maximum tree depth, which are both signs of poor mixing in the chains. For these reasons, we are confident that the sampler reached a stationary distribution and was able to adequately explore the high-density regions of the joint posterior.

Model validity

While employing a measurement model ensures robustness against arbitrary data-coding errors, it is still necessary to validate the over-time process of the model, which imposes some assumptions on how policy activity scores change over time. The use of a random walk implies that policy differences will be relatively stable from one day to the next, which could limit the ability of scores to encompass quick, discontinuous changes98. While we employ this particular specification because it has been previously applied to a variety of empirical phenomena and because of its relative parsimony, we can partially test for whether it captures changes by estimating a static item–response theory model for each day in the sample. The corresponding estimates represent cross-sections without any time process imposed.

Due to the complexity of comparing the estimates, we plot the results for six countries separately in Fig. 5. This figure shows that the cross-sectional estimates can show much more discontinuous jumps, although we note at the same time that there appears to be substantial noise in the estimates as they only incorporate information available at a single day. Nonetheless, while the random-walk estimates certainly exhibit less discontinuous change, they do still allow for quick divergence in policy activity scores, with France and Russia moving from the bottom to the top of the index in the space of only a few weeks.

This figure provides a comparison of cross-sectional estimates of policy activity scores to the random-walk time series estimates. Lines show median posterior estimates from MCMC estimation with Stan. Each cross-sectional model was re-fitted to each day’s data independently using MCMC. High variance in cross-sectional estimates is a result of limited data per day and distortion due to latent scaling effects.

We note as well that the model is parameterized so that each country has its own variance parameter. This permits the rate of change to vary by country, reducing the concern that the model may be overly restricting change. These variance parameters are shown in Fig. 4, sorted in order of increasing over-time variance. These estimates are themselves substantively interesting, as the United States, which was used as the reference category, has actually one of the lowest rates of over-time change, while some countries such as New Zealand, Spain and San Marino witnessed the highest variance in policy activity scores. Because, at this time, the index only captures increasing numbers of policies, the variance parameters can be given the interpretation of which countries responded in the shortest period of time across a broad array of policy indicators.

Reporting Summary

Further information on research design is available in the Nature Research Reporting Summary linked to this article.

Data availability

For the most current, up-to-date version of the dataset, please visit https://coronanet-project.org or our Github page at https://github.com/saudiwin/corona_tscs. For more information on the exact variables collected, please see our publicly available codebook here and visit our website at https://www.coronanet-project.org.

Code availability

Interested readers may also find our code for collecting the data and maintaining the database at our Github page: https://github.com/saudiwin/corona_tscs.

References

Porter, D. Health, Civilization and the State: a History of Public Health from Ancient to Modern Times (Routledge, 2005).

Scott, J. C. Against the Grain: a Deep History of the Earliest States (Yale Univ. Press, 2017).

Behbehani, A. M. The smallpox story: life and death of an old disease. Microbiological Rev. 47, 455–509 (1983).

Duncan-Jones, R. P. The impact of the antonine plague. J. Rom. Archaeol. 9, 108–136 (1996).

Crosby, A. W. The Columbian Exchange: Biological and Cultural Consequences of 1492 Vol. 2 (Greenwood Publishing Group, 2003).

Ziegler, P. The Black Death (Faber & Faber, 2013).

Jannetta, A. B. Epidemics and Mortality in Early Modern Japan (Princeton Univ. Press, 2014).

Hatchett, R. J., Mecher, C. E. & Lipsitch, M. Public health interventions and epidemic intensity during the 1918 influenza pandemic. Proc. Natl Acad. Sci. USA 104, 7582–7587 (2007).

Shah, S. Pandemic: Tracking Contagions, from Cholera to Ebola and Beyond (Macmillan, 2016).

Snow, J. The cholera near Golden-Square, and at Deptford. Med. Gaz. 9, 321–322 (1854).

Paneth, N. Assessing the contributions of John Snow to epidemiology: 150 years after removal of the Broad Street pump handle. Epidemiology 15, 514–516 (2004).

Taubenberger, J. K. & Morens, D. M. 1918 Influenza: the mother of all pandemics. Rev. Biomed. 17, 69–79 (2006).

Kilbourne, E. D. Influenza pandemics of the 20th century. Emerg. Infect. Dis. 12, 9–14 (2006).

Farmer, P. Social inequalities and emerging infectious diseases. Emerg. Infect. Dis. 2, 259–269 (1996).

van Bavel, J. J. et al. Using social and behavioural science to support COVID-19 pandemic response. Nat. Hum. Behav. 4, 460–471 (2020).

Bootsma, M. C. & Ferguson, N. M. The effect of public health measures on the 1918 influenza pandemic in US cities. Proc. Natl Acad. Sci. USA 104, 7588–7593 (2007).

Hunter, M. The changing political economy of sex in South Africa: the significance of unemployment and inequalities to the scale of the AIDS pandemic. Soc. Sci. Med. 64, 689–700 (2007).

Bandayrel, K., Lapinsky, S. & Christian, M. Information technology systems for critical care triage and medical response during an influenza pandemic: a review of current systems. Disaster Med. Public Health Prep. 7, 287–291 (2013).

Abelin, A., Colegate, T., Gardner, S., Hehme, N. & Palache, A. Lessons from pandemic influenza A (H1N1): the research-based vaccine industry’s perspective. Vaccine 29, 1135–1138 (2011).

Chew, C. & Eysenbach, G. Pandemics in the age of Twitter: content analysis of tweets during the 2009 H1N1 outbreak. PLoS ONE 5, e14118 (2010).

Jarynowski, A., Wójta-Kempa, M., Płatek, D. & Czopek, K. Attempt to understand public health relevant social dimensions of COVID-19 outbreak in Poland. Soc. Reg. 4, 7–44 (2020).

Boyd, T., Savel, T., Kesarinath, G., Lee, B. & Stinn, J. The use of public health grid technology in the United States Centers for Disease Control and Prevention H1N1 pandemic response. In Proc. 2010 IEEE 24th International Conference on Advanced Information Networking and Applications Workshops 974–978 (IEEE, 2010).

Galaz, V. Pandemic 2.0: can information technology help save the planet? Environ. Sci. Policy Sustain. Dev. 51, 20–28 (2009).

Flaxman, S. et al. Estimating the number of infections and the impact of non-pharmaceutical interventions on COVID-19 in 11 European countries. Preprint at arXiv https://arxiv.org/abs/2004.11342 (2020).

Ferguson, N. et al. Report 9: impact of non-pharmaceutical interventions (NPIs) to reduce COVID19 mortality and healthcare demand (Imperial College London, 2020).

Goumenou, M. et al. COVID-19 in Northern Italy: an integrative overview of factors possibly influencing the sharp increase of the outbreak. Mol. Med. Rep. 22, 20–32 (2020).

Singh, R. & Adhikari, R. Age-structured impact of social distancing on the COVID-19 epidemic in India. Preprint at https://arxiv.org/abs/2003.12055 (2020).

Prem, K. et al. The effect of control strategies to reduce social mixing on outcomes of the COVID-19 epidemic in Wuhan, China: a modelling study. Lancet Public Health 5, e261–e270 (2020).

Chen, S., Yang, J., Yang, W., Wang, C. & Bärnighausen, T. COVID-19 control in China during mass population movements at New Year. Lancet 395, 764–766 (2020).

Koo, J. R. et al. Interventions to mitigate early spread of SARS-COV-2 in Singapore: a modelling study. Lancet Infect. Dis. 20, 678–688 (2020).

Arriola, L. & Grossman, A. Ethnic marginalization and (non)compliance in public health emergencies. J. Politics (in the press).

Yancy, C. W. COVID-19 and African Americans. JAMA 323, 1891–1892 (2020).

Anwar, S., Nasrullah, M. & Hosen, M. COVID-19 and Bangladesh: challenges and how to address them. Front. Public Health 8, 154 (2020).

Corburn, J. et al. Slum health: arresting COVID-19 and improving well-being in urban informal settlements. J. Urban Health 24, 1–10 (2020).

Anderson, R. M., Heesterbeek, H., Klinkenberg, D. & Hollingsworth, T. D. How will country-based mitigation measures influence the course of the COVID-19 epidemic? Lancet 395, 931–934 (2020).

Dorn, A., van Cooney, R. E. & Sabin, M. L. COVID-19 exacerbating inequalities in the US. Lancet 395, 1243–1244 (2020).

Barceló, J. & Sheen, G. Voluntary adoption of social welfare-enhancing behavior: mask-wearing in Spain during the COVID-19 outbreak. Preprint at OSF https://osf.io/preprints/socarxiv/6m85q/ (2020).

Büthe, T., Messerschmidt, L. & Cheng, C. Policy responses to the coronavirus in Germany. In the world before and after COVID-19: intellectual reflections on politics, diplomacy and international relations, edited by Gian Luca Gardini. Stockholm—Salamanca: European Institute of International Relations, 2020. Preprint at SSRN https://doi.org/10.2139/ssrn.3614794 (2020).

Kubinec, R. The CoronaNet Database. POMEPS Studies 39: The COVID-19 Pandemic in the Middle East and North Africa https://pomeps.org/wp-content/uploads/2020/04/POMEPS_Studies_39_Web.pdf (2020).

Cronert, A. Democracy, state capacity, and COVID-19 related school closures. Preprint at APSA Preprints https://doi.org/10.33774/apsa-2020-jf671 (2020).

Allcott, H. et al. Polarization and public health: partisan differences in social distancing during the coronavirus pandemic. NBER Working Paper No. 26946 https://www.nber.org/papers/w26946 (2020).

Barrett, R., Kuzawa, C. W., McDade, T. & Armelagos, G. J. Emerging and re-emerging infectious diseases: the third epidemiologic transition. Annu. Rev. Anthropol. 27, 247–271 (1998).

Miller, M. A., Viboud, C., Balinska, M. & Simonsen, L. The signature features of influenza pandemics—implications for policy. N. Engl. J. Med. 360, 2595–2598 (2009).

Pierson, P. Increasing returns, path dependence, and the study of politics. Am. Political Sci. Rev. 94, 251–267 (2000).

Przeworski, A., Stokes, S. C. S., Stokes, S. C. & Manin, B. (eds) Democracy, Accountability, and Representation Vol. 2 (Cambridge Univ. Press, 1999).

Svolik, M. W. The Politics of Authoritarian Rule (Cambridge Univ. Press, 2012).

Kitschelt, H., Wilkinson, S. I. (eds) Patrons, Clients and Policies: Patterns of Democratic Accountability and Political Competition (Cambridge Univ. Press, 2007).

Gailmard, S. & Patty, J. W. Preventing prevention. Am. J. Political Sci. 63, 342–352 (2019).

Meltzer, M. I., Cox, N. J. & Fukuda, K. The economic impact of pandemic influenza in the United States: priorities for intervention. Emerg. Infect. Dis. 5, 659–671 (1999).

Nunn, N. The importance of history for economic development. Annu. Rev. Econ. 1, 65–92 (2009).

Kilian, L. Not all oil price shocks are alike: disentangling demand and supply shocks in the crude oil market. Am. Econ. Rev. 99, 1053–1069 (2009).

Noy, I. The macroeconomic consequences of disasters. J. Dev. Econ. 88, 221–231 (2009).

Correia, S., Luck, S. & Verner, E. Pandemics depress the economy, public health interventions do not: evidence from the 1918 flu. Preprint at https://doi.org/10.2139/ssrn.3561560 (2020).

Kindleberger, C. P. & Aliber, R. Z. Manias, Panics and Crashes: a History of Financial Crises (Palgrave Macmillan, 2011).

Peckham, R. Economies of contagion: financial crisis and pandemic. Econ. Soc. 42, 226–248 (2013).

Dasgupta, S., Laplante, B., Wang, H. & Wheeler, D. Confronting the environmental kuznets curve. J. Econ. Perspect. 16, 147–168 (2002).

Folke, C. Resilience The emergence of a perspective for social–ecological systems analyses. Glob. Environ. Change 16, 253–267 (2006).

Galea, S. et al. Trends of probable post-traumatic stress disorder in New York city after the September 11 terrorist attacks. Am. J. Epidemiol. 158, 514–524 (2003).

Gifford, R. Environmental psychology matters. Annu. Rev. Psychol. 65, 541–579 (2014).

Baekkeskov, E. & Rubin, O. Why pandemic response is unique: powerful experts and hands-off political leaders. Disaster Prev. Manag. 23, 81–93 (2014).

Boin, A., Stern, E., Hart, P. & Sundelius, B. The Politics of Crisis Management: Public Leadership Under Pressure (Cambridge Univ. Press, 2016).

Tierney, K. J. From the margins to the mainstream? Disaster research at the crossroads. Annu. Rev. Sociol. 33, 503–525 (2007).

Blaikie, P., Cannon, T., Davis, I. & Wisner, B. At Risk: Natural Hazards, People’s Vulnerability and Disasters (Routledge, 2014).

Burby, R. J. Hurricane Katrina and the paradoxes of government disaster policy: bringing about wise governmental decisions for hazardous areas. Ann. Am. Acad. Polit. Soc. Sci. 604, 171–191 (2006).

Bol, D., Giani, M., Blais, A. & Loewen, P. J. The effect of covid-19 lockdowns on political support: some good news for democracy? Eur. J. Pol. Res. https://doi.org/10.1111/1475-6765.12401 (2020).

Lührmann, A., Edgell, A. B. & Maerz, S. F. Pandemic backsliding: does Covid-19 put democracy at risk? (V-DEM Institute, 2020).

Coibion, O., Gorodnichenko, Y. & Weber, M. Labor markets during the Covid-19 crisis: a preliminary view. NBER Working Paper No. 27017 https://www.nber.org/papers/w27017 (2020).

Atkeson, A. What will be the economic impact of Covid-19 in the US? Rough estimates of disease scenarios. NBER Working Paper No. 26867 https://www.nber.org/papers/w26867 (2020).

McKibbin, W. J. & Fernando, R. The global macroeconomic impacts of Covid-19: seven scenarios. CAMA working paper no. 19/2020 https://doi.org/10.2139/ssrn.3547729 (2020).

Fernandes, N. Economic effects of coronavirus outbreak (Covid-19) on the world economy. Preprint at SSRN https://doi.org/10.2139/ssrn.3557504 (2020).

Zandifar, A. & Badrfam, R. Iranian mental health during the Covid-19 epidemic. Asian J. Psychiatr. 51, 101990 (2020).

Qiu, J. et al. A nationwide survey of psychological distress among Chinese people in the Covid-19 epidemic: implications and policy recommendations. Gen. Psychiatr. 33, e100213 (2020).

Longabaugh, W. J. Combing the hairball with biofabric: a new approach for visualization of large networks. BMC Bioinformatics 13, 275 (2012).

Kubinec, R. Generalized ideal point models for time-varying and missing-data inference. Preprint at OSF https://osf.io/8j2bt/ (2019).

Clinton, J., Jackman, S. & Rivers, D. The statistical analysis of rollcall data. Am. Polit. Sci. Rev. 98, 355–370 (2004).

Bafumi, J., Gelman, A., Park, D. K. & Kaplan, N. Practical issues in implementing and understanding Bayesian ideal point estimation. Polit. Anal. 13, 171–187 (2005).

Martin, A. D. & Quinn, K. M. Dynamic ideal point estimation via Markov chain Monte Carlo for the U.S. Supreme Court, 1953–1999. Polit. Anal. 10, 134–153 (2002).

Barberá, P. Birds of the same feather tweet together: Bayesian ideal point estimation using Twitter data. Polit. Anal. 23, 76–91 (2015).

Bonica, A. Mapping the ideological marketplace. Am. J. Polit. Sci. 58, 367–386 (2014).

Takane, Y. & de Leeuw, J. On the relationship between item response theory and factor analysis of discretized variables. Psychometrika 52, 393–408 (1986).

Reckase, M. D. Multidimensional Item Response Theory (Springer, 2009).

Doherty, B. The exit strategy: how countries around the world are preparing for life after Covid-19. The Guardian (18 April 2020).

Benoit, K., Conway, D., Lauderdale, B. E., Laver, M. & Mikhaylov, S. Crowd-sourced text analysis: reproduceable and agile production of political data. Am. Polit. Sci. Rev. 110, 278–295 (2016).

Sumner, J. L., Farris, E. M. & Holman, M. R. Crowdsourcing reliable local data. Polit. Anal. 28, 244–262 (2019).

Marquardt, K. L. et al. Experts, coders, and crowds: an analysis of substitutability. V-Dem working paper 2017:53. https://doi.org/10.2139/ssrn.3046462 (2017).

Urlacher, B. R. Opportunities and obstacles in distributed or crowdsourced coding. Qual. Multi-Method Res. 15, 15–21 (2017).

Horn, A. Can the online crowd match real expert judgments? How task complexity and coder location affect the validity of crowd-coded data. Eur. J. Polit. Res. 58, 236–247 (2019).

Salcedo, A., Yar, S. & Cherelus, G. Coronavirus travel restrictions and bans globally. The New York Times (8 May 2020).

Beech, H. Tracking the coronavirus: how crowded Asian cities tackled an epidemic. The New York Times (17 March 2020).

Aspinwall, N. Taiwan is exporting its coronavirus successes to the world. Foreign Policy (9 April 2020).

Gibney, E. Whose coronavirus strategy worked best? Scientists hunt most effective policies. Nature 581, 15–16 (2020).

Sheng, V. S., Provost, F. & Ipeirotis, P. G. Get another label? Improving data quality and data mining using multiple, noisy labelers. In Proc. 14th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining 614–622 (Association for Computing Machinery, 2008).

Amazon.com. Amazon Mechanical Turk Requester Best Practices Guide. Amazon Web Services https://mturkpublic.s3.amazonaws.com/docs/MTURK_BP.pdf (2011).

Raykar, V. C. et al. Supervised learning from multiple experts: whom to trust when everyone lies a bit. In Proc. 26th Annual International Conference on Machine Learning 889–896 (Association for Computing Machinery, 2009).

Raykar, V. C. et al. Learning from crowds. J. Mach. Learn. Res. 11, 1297–1322 (2010).

Kubinec, R. Politically-connected firms and the military–clientelist complex in North Africa. Preprint at OSF https://osf.io/xbyh9/ (2019).

Carpenter, B. et al. Stan: a probabilistic programming language. J. Stat. Softw. 76, 1–32 (2017).

Reunig, K., Kenwick, M. R. & Fariss, C. J. Exploring the dynamics of latent variable models. Polit. Anal. 27, 503–517 (2019). https://scholar.google.com/scholar?hl=en&as_sdt=0%2C33&q=Reunig%2C+K.%2C+Kenwick%2C+M.+R.+%26+Fariss%2C+C.+J.+Exploring+the+dynamics+of+latent+variable+models.+Polit.+Anal.+27%2C+503%E2%80%93517+%282019%29.&btnG=.

Acknowledgements

We deeply thank the very large number of RAs who coded these data. Their affiliations and vita are listed in Supplementary Table 1. Our RAs include the following individuals:

A. Ibn Abdelouahab, A. Tyagi, A. Clemonds, A. Poppe, A. Arya, A. Akhand, A. Baglanova, A. Mengerink, A. Pachanov, A. Michaelsen, A. Taufik, A. Widmann, A. Udodik, A. Tlegenova, A. Albrecht, A. Panella, A. Acero, A. Belén Perianes, A. McElroy, A. Steinbrunner, A. Khripunova, A. Duncan, A. Lopez Schrader, A. Petrova, A. Johar, A. Herz, A. Kanyangi, A. Horn, A. Mohan, A. S. Körner, A. Vaughan-Pow, A. Kaiser, A. Thakre, A. Pérez, A. Barrenechea, A. Schouten, A. Mwombeki, A. Edelman, A. Maria, B. Kushwaha, B. Bromová, B. Di Giulio, B. von Braunschweig, B. Selvi, B. Hecking, B. Grizhar, B. Lee, B. Arrue-Astrain, B. Ouerghi, B. C. Quartey, B. Ciccarini, C. Kaleel, C. Kim, C. Schenk, C. P. Dybwad, C. Velez, C. Kimmett, C. Alija, C. Beale, C. Anderson, C. Vorbauer, C.-H. Shen, C. Fraser, C. Ekengren, C. Wang, C. M. Dybwad, C. Martin, C. Horvath, D. Downes, D. Wu, D. A. Stasiukynas, D. Boey, D. Martínek, D. Nguyen, D. Abilova, D. Jintcharadzé, D. Agboola, D. McCord, D. Fennelly, D. Duba, D. P. Ouko, D. G. Arciniega, D. Calvo, D. Shallal, D. Nadella, D. Takenov, D. Juling, D. Obeth, D. Kamel, D. Quelle, D. Wilstic, D. Jankunaite, M. King-Okoye, D. Ollivier, E. Landaeta, E. Lin, E. Volpe, E. Derrer-Merk, E. Çalışkan, E. Seith, E. Jones, E. Pettersen, E. Weir, E. Jacobo, E. Westropp, E. Hutchinson, E. Repetto, E. Walczyk, E. Muro, E. Ollivier, E. Kwizera, E. Parker, E. Si, E. Lewis, F. Lind, F. Kadner, F. F. Primandari, F. Liu, F. Sadek, F. Wang, F. Willuweit, F. Werneck, F. Bono, F. Yoon, F. Y. Sun, F. Nguyen, F. Denker, G. B. Freitas, G. Wood, G. Mutheu, G. Katiambo, G. Makaoko, H.-N. Yu, H. Ahmed, H. C. Eddine, H. Zhang, H. Garfinkel, H. Shepard-Moore, H. Scheir, H. Paul, H. Felappi, H. Asibuo, H. Richter, H. Okwatch, H. Haider, I. Rebouças Batista, I. Koch, I. Rickert, I. Böhret, I. Sæle Helland, I. Ravnanger, I. Aldama, I. Conti, I. Russo, I. Smith, I. J. Ait Hmitti, J. Kubinec, J. Lindahl, J. Berg, J. Menzer, J. Murutu, J. Li, J. Klaiber, J. L. Piper, J. Sowa, J. Patel, J. von Agris, J. Noguera Barrera, J. Johansson, J. Yoo, J. Lencastre Morais, J. L. D. R. Hilger, J. Gräff, J. Kleinknecht, J. Bürkner, J. Pollig, Josef Montag, J. Diversi, J. Scholten, J. Dröge, J. Nassl, J. Smakman, J. Wießmann, K. Nisa Başkan, K. Su, K. Kartawira, K. L. Båsund, K. Schultz, K. Klaunig, K. Hermann, K. Schwoerer, K. Ratnasingham, K. Tran, K. Vogli, K. Vandyck, K. Schönfeld, K. Anklewicz, K. Burghartz, L. Cadena, L. Williamson, L. Hannig, L.Frick, L. C. Frömchen-Zwick, L. Wiedmann, L. Ankliss, L. Kolb, L. Kohrt, L. Imberger, L. Cheng, L. T. Albrecht, L. Zandstra, L. Dow, L. Kulp, L. Chen, L. Illert, L. B. Freitas, L. Linares, L. Modrakowski, L. Cuéllar, M. Strebling, M. Zahra, M. Sheikh, M. Nasirova, M. Spel, M. Winking, M. A. Kwao, M. Ellemunt, M. Förster, M. (M.) Papageorgiou, M. Sievers, M. Deierl, M. Siliankina, M. Hofmann, M. Hellmich, M. Nussbaumer, M. AlHammadi, M. Hotopp, M. Jensen, M. Cottrell, M. Hargreaves, M. Tan, M. Dirks, M. Rollberg, M. Sugden, M. Bhouri, M. Balluff, M. Limpe, M. Chen, M. Moskovljevic, M. T. Janowski, M. Witte, M. Muller, M. Murad, M. Horn, M. Masood, M. Alramlawi, M. Moghis, M. Nasery, N. Grossenbacher, N. K. Meichle, N. Elmore, N. Filkina-Spreizer, N. Baratashvili, N. Ruhde, N. Mukhlissova, N. Allgeier, N. Göller, N. Oubre, N. Hasan, N. Fent, N. Illenseer, N. Danevski, N. Klatt, N. D. Bholah, N. Fröhlich, N. Kubinec, N. Altunaiji, N. Assanbay, O. Mauffrey, O. J. Anyango, O. Pollex, O. Weber, O. Kalandaridis, O. Renda, O. Gibatov, O. Syed, O. Durhan, O. Pehlivanoglu, O. Courtier, P. Robles, P. Groome, P. Heysch, P. Ganga, P. Germana, P. Chen, P. Weber, P. Bansagi, P. Neupane, R. Hanine, R. Dada, R. Böhning, R. d. M. Baldrighi, R. Demirkol, R. Karl, R. Beigel, R. Al-Ameri, R. Winn, R. Buitrago, R. S. Baye, R. Lipinski, R.Whittle, R. Fischer, R. Rodiriguez-Ramirez, R. Fayazzadh, R. Pollack, R. Wang, R. Kim, S. Khan, S. Ixcaragua, S. Soliman, S. Law, S. Reinard, S. Moghis, S. Torres Hernandez, S. Ahmad, S. Edmonds, S. Sleigh, S. K. Chan, S. Bhardwaj, S.-M. Pigeon, S.-A. Paik, S. Corea, S. Guo, S. Shukla, S. S. Thosar, S. Hüttemann, S. Müller, S. Tomany, S. Mallow, S. Dold, S. Ülkenli, S. Kim, S. Belbase, S. Razaque, T. White, T. Matheis, T. Goodsir, T. Wagner, T. Davronov, T. Thornton, T. de Rooij, T. Martin, T. Nilsson Gige, T. Model, T. Seiler, T. Xu, T. Brömsen, U. Okoye, U. Barteczko, V. Cheng, V. Grujic, V. Singh, V. K. Kigwiru, V. Velasquez Mesa, V. Bartáková, V. Abuor, V. Pedrero, V. Atanasov, V. Han, V. Kalandaridis, V. Rajesekhar, W. Ruan, W. Yoh, W. Njuguna, X. Jin, Y. Fu, Y. Plumpe, Y. Mussatayev, Y. Ma, Y. Zhu, Y. Sun, Y. Ke, Y. C. Kenawas, Y. Shi, Z. Tuyakpayeva, Z. Ybrayev, Z. Mukatay, Z. Umarova.

We also thank B. Rose and Jataware for making the news database available to this project. We thank T. Büthe, T. Rommel, the Chair for International Relations, the Hochschule für Politik at the Technical University of Munich (TUM), as well as the TUM School of Governance, and the TUM School of Management for the many ways they have supported this project. We further thank the Chair of International Relations, the Hochschule für Politik and New York University Abu Dhabi for their funding in support of this endeavour. Moreover, we appreciate the support by Slack Technologies and RStudio who provided access to their technical infrastructure. The funders had no role in study design, data collection and analysis, decision to publish or preparation of the manuscript.

Author information

Authors and Affiliations

Contributions

All authors contributed to the supervision of the project, project administration and the writing of the paper. Additionally C.C. contributed to the conceptualization (lead), software, data curation, visualization and funding acquisition of the project. J.B. contributed to the conceptualization, validation (lead), formal analysis and funding acquisition of the project. A.S.H. contributed to the validation (lead) and formal analysis of the project. R.K. contributed to the conceptualization, software, formal analysis (lead), data curation, visualization and funding acquisition of the project. L.M. contributed to the conceptualization, software, visualization, project administration (lead) and funding acquisition of the project.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Peer review information Peer reviewer reports are available. Primary Handling Editor: Aisha Bradshaw.

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Extended data

Extended Data Fig. 1 Network Map of Bans on Inbound Flights of all European countries as of March 15, 2020.

This figure is an expansion of Fig. 2. It shows a network of bans on inbound flights initiated by all European countries as of March 15, 2020 as opposed to the subset of European countries shown in Fig. 2. The vertical lines denote whether there was such a flight ban between two countries, and the arrow of the vertical line indicates the direction in which the ban is applied.

Extended Data Fig. 2 Convergence Diagnostics for Random-Walk HMC Fit.

Plot A shows the distribution of split-Rhat values for all 40,000 parameters in the model, revealing most parameters are close to 1, which indicates strong convergence. The effective number of samples for parameters in plot B is also very high, often exceeding the total number of posterior draws. Plots C and D show strong mixing across chains for the intercept and over-time parameter for the United States for January 30th.

Supplementary information

Supplementary Information

Supplementary Methods and Supplementary Table 1.

Rights and permissions

About this article

Cite this article

Cheng, C., Barceló, J., Hartnett, A.S. et al. COVID-19 Government Response Event Dataset (CoronaNet v.1.0). Nat Hum Behav 4, 756–768 (2020). https://doi.org/10.1038/s41562-020-0909-7

Received:

Accepted:

Published:

Issue Date:

DOI: https://doi.org/10.1038/s41562-020-0909-7

This article is cited by

-

Harmonizing government responses to the COVID-19 pandemic

Scientific Data (2024)

-

Epidemic outbreaks and the optimal lockdown area: a spatial normative approach

Economic Theory (2024)

-

Religious Identity and its Relation to Health-Related Quality of Life and COVID-Related Stress of Refugee Children and Adolescents in Germany

Journal of Religion and Health (2024)

-

A time-space integro-differential economic model of epidemic control

Economic Theory (2024)

-

A synthesis of evidence for policy from behavioural science during COVID-19

Nature (2024)