Abstract

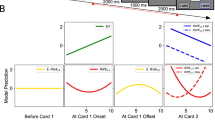

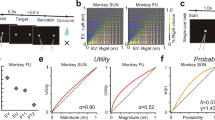

A fundamental but rarely contested assumption in economics and neuroeconomics is that decision-makers compute subjective values of risky options by multiplying functions of reward probability and magnitude. By contrast, an additive strategy for valuation allows flexible combination of reward information required in uncertain or changing environments. We hypothesized that the level of uncertainty in the reward environment should determine the strategy used for valuation and choice. To test this hypothesis, we examined choice between risky options in humans and rhesus macaques across three tasks with different levels of uncertainty. We found that whereas humans and monkeys adopted a multiplicative strategy under risk when probabilities are known, both species spontaneously adopted an additive strategy under uncertainty when probabilities must be learned. Additionally, the level of volatility influenced relative weighting of certain and uncertain reward information, and this was reflected in the encoding of reward magnitude by neurons in the dorsolateral prefrontal cortex.
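The contrast between the two valuation strategies can be made concrete with a small sketch. The functional forms below are illustrative assumptions, not the models fitted in the study: the multiplicative rule uses identity transforms of probability and magnitude, the additive rule uses a single hypothetical `weight` parameter on probability with magnitude normalized to the range 0 to 1, and choice is modeled with a logistic function whose `inverse_temperature` is likewise hypothetical.

```python
import math


def multiplicative_value(prob, magnitude):
    """Subjective value as the product of probability and magnitude.

    Assumption: identity transforms; a fitted model could use nonlinear
    functions of each attribute instead.
    """
    return prob * magnitude


def additive_value(prob, magnitude, weight=0.5, max_magnitude=1.0):
    """Subjective value as a weighted sum of probability and magnitude.

    Assumption: magnitude is divided by max_magnitude so both terms lie
    in [0, 1]; `weight` sets the relative influence of probability.
    """
    return weight * prob + (1.0 - weight) * (magnitude / max_magnitude)


def choice_probability(value_a, value_b, inverse_temperature=5.0):
    """Logistic probability of choosing option A over option B."""
    return 1.0 / (1.0 + math.exp(-inverse_temperature * (value_a - value_b)))


# Two hypothetical gambles: A is likely but small, B is unlikely but large.
option_a = (0.9, 0.2)  # (probability, normalized magnitude)
option_b = (0.3, 0.8)

mult_a = multiplicative_value(*option_a)
mult_b = multiplicative_value(*option_b)
add_a = additive_value(*option_a, weight=0.7)
add_b = additive_value(*option_b, weight=0.7)

print("multiplicative P(choose A):", choice_probability(mult_a, mult_b))
print("additive       P(choose A):", choice_probability(add_a, add_b))
```

With these example numbers the multiplicative rule favors B while the additive rule, weighted toward probability, favors A. Diverging predictions of this kind are what allow choice data to discriminate between the two strategies. Changing `weight` shifts the additive rule's reliance between probability and magnitude without altering its form.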

Data availability

The data that support the findings of this study are available from the corresponding author upon request.

Code availability

Custom computer codes that support the findings of this study are available from the corresponding author upon request.

Acknowledgements

We thank E. Chu, S. Nichols-Worley and L. Tran for collecting human data, and C. Strait and M. Mancarella for collecting monkey data in the gambling task. This work is supported by the National Science Foundation (CAREER Award no. BCS1253576 to B.Y.H. and EPSCoR Award no. 1632738 to A.S.), and the National Institutes of Health (grant no. R01 DA038615 to B.Y.H., grant nos. R01 DA029330 and R01 MH108629 to D.L., and grant no. R01 DA047870 to A.S.). The funders had no role in study design, data collection and analysis, decision to publish or preparation of the manuscript.

Author information

Contributions

A.S. conceived the project. C.H.D., B.Y.H. and D.L. designed the experiments in monkeys. S.F. and A.S. designed the human experiments. S.F. and A.S. performed model simulations and analysed the data. C.H.D. and S.F. conducted the experiments. C.H.D., S.F., D.L., B.Y.H. and A.S. analysed and interpreted the experimental data. D.L., B.Y.H. and A.S. wrote the manuscript, and all authors contributed to revising the manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Peer review information: Primary Handling Editor: Marike Schiffer.

Publisher’s note: Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary information

Supplementary Notes 1 and 2 and Figs. 1–7.

About this article

Cite this article

Farashahi, S., Donahue, C.H., Hayden, B.Y. et al. Flexible combination of reward information across primates. Nat Hum Behav 3, 1215–1224 (2019). https://doi.org/10.1038/s41562-019-0714-3

DOI: https://doi.org/10.1038/s41562-019-0714-3
