Abstract

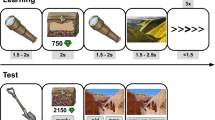

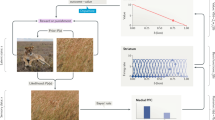

Dopamine is thought to provide reward prediction error signals to temporal lobe memory systems, but the role of these signals in episodic memory has not been fully characterized. Here we developed an incidental memory paradigm to (i) estimate the influence of reward prediction errors on the formation of episodic memories, (ii) dissociate this influence from surprise and uncertainty, (iii) characterize the role of temporal correspondence between prediction error and memoranda presentation and (iv) determine the extent to which this influence is dependent on memory consolidation. We found that people encoded incidental memoranda more strongly when they gambled for potential rewards. Moreover, the degree to which gambling strengthened encoding scaled with the reward prediction error experienced when memoranda were presented (and not before or after). This encoding enhancement was detectable within minutes and did not differ substantially after 24 h, indicating that it is not dependent on memory consolidation. These results suggest a computationally and temporally specific role for reward prediction error signalling in memory formation.
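The three quantities that the abstract sets apart (signed reward prediction error, surprise and uncertainty) can be illustrated for a single two-outcome gamble. The sketch below uses common textbook definitions and is not the authors' computational model, which is not described in this section. The function names, the example probability and the reward magnitude are all assumptions made for illustration.

```python
# Illustrative only: textbook quantities for a gamble that pays
# `magnitude` with probability `p_reward` and 0 otherwise.
# These definitions are assumptions, not the model used in the paper.

def expected_value(p_reward: float, magnitude: float) -> float:
    """Expected payoff of the gamble before the outcome is revealed."""
    return p_reward * magnitude


def reward_prediction_error(outcome: float, p_reward: float, magnitude: float) -> float:
    """Signed prediction error: obtained outcome minus expected payoff."""
    return outcome - expected_value(p_reward, magnitude)


def surprise(outcome: float, p_reward: float, magnitude: float) -> float:
    """Unsigned prediction error: how far the outcome was from expectation."""
    return abs(reward_prediction_error(outcome, p_reward, magnitude))


def outcome_uncertainty(p_reward: float, magnitude: float) -> float:
    """Variance of the payoff, known before the outcome is revealed."""
    return p_reward * (1.0 - p_reward) * magnitude ** 2


# Hypothetical gamble: 25% chance of winning 10 points.
p, m = 0.25, 10.0
win_rpe = reward_prediction_error(m, p, m)     # positive error on a win
loss_rpe = reward_prediction_error(0.0, p, m)  # negative error on a loss
```

Under these definitions a win and a loss on the same gamble share the same uncertainty but differ in the sign of the prediction error, and surprise ignores that sign. This is one way to see why the three quantities can be dissociated in principle.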

Data availability

The behavioural data from both experiments are available from the corresponding author on request.

Code availability

Custom code used to analyse and model the data is available from the corresponding author on request.

Acknowledgements

We thank A. Collins for comments on the experimental design and thank J. Helmers and D. Rogers for their help in setting up and collecting data through Amazon Mechanical Turk. This work was funded by NIH grant numbers F32MH102009 and K99AG054732 (M.R.N.), NIMH R01 MH080066-01 and NSF Proposal number 1460604 (M.J.F.), and R00MH094438 (D.G.D.). The funders had no role in study design, data collection and analysis, decision to publish or preparation of the manuscript.

Author information

Contributions

A.I.J., M.R.N., D.G.D. and M.J.F. designed the experiment and wrote the manuscript. A.I.J. collected the data and M.R.N. developed the computational models. M.R.N. and A.I.J. designed and performed behavioural analysis.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note: Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary Information

Supplementary Figs. 1–8 and Supplementary Tables 1 and 2.

About this article

Cite this article

Jang, A.I., Nassar, M.R., Dillon, D.G. et al. Positive reward prediction errors during decision-making strengthen memory encoding. Nat Hum Behav 3, 719–732 (2019). https://doi.org/10.1038/s41562-019-0597-3

DOI: https://doi.org/10.1038/s41562-019-0597-3
