Abstract

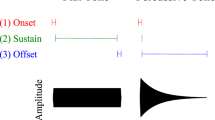

The principles underlying functional asymmetries in cortex remain debated. For example, it is accepted that speech is processed bilaterally in auditory cortex, but a left hemisphere dominance emerges when the input is interpreted linguistically. The mechanisms, however, are contested: it remains unclear which sound features or processing principles underlie laterality. Recent findings across species (humans, canines and bats) provide converging evidence that spectrotemporal sound features drive asymmetrical responses. Typically, accounts invoke models wherein the hemispheres differ in time–frequency resolution or integration window size. We develop a framework that builds on and unifies prevailing models, using spectrotemporal modulation space. Using signal processing techniques motivated by neural responses, we test this approach, employing behavioural and neurophysiological measures. We show how psychophysical judgements align with spectrotemporal modulations and then characterize the neural sensitivities to temporal and spectral modulations. We demonstrate differential contributions from both hemispheres, with a left lateralization for temporal modulations and a weaker right lateralization for spectral modulations. We argue that representations in the modulation domain provide a more mechanistic basis to account for lateralization in auditory cortex.
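To make the notion of a spectrotemporal modulation space concrete, the following is a minimal sketch, not the authors' stimulus or analysis code. It assumes one common construction: the two-dimensional Fourier transform of a spectrogram, whose axes are temporal modulation (Hz) and spectral modulation (cycles per kHz). The function name `modulation_spectrum`, the synthetic ripple, and all parameter values are illustrative assumptions.

```python
import numpy as np


def modulation_spectrum(spec, dt, df):
    """Return the modulation power spectrum of a spectrogram.

    spec: 2D array, shape (frequency bins, time frames).
    dt:   time step between frames, in seconds.
    df:   spacing between frequency bins, in kHz.
    """
    # Remove the mean so the DC component does not dominate the result.
    centered = spec - spec.mean()
    # The 2D FFT maps (frequency, time) to (spectral mod., temporal mod.).
    mps = np.abs(np.fft.fftshift(np.fft.fft2(centered))) ** 2
    spectral_mod = np.fft.fftshift(np.fft.fftfreq(spec.shape[0], d=df))  # cycles/kHz
    temporal_mod = np.fft.fftshift(np.fft.fftfreq(spec.shape[1], d=dt))  # Hz
    return mps, spectral_mod, temporal_mod


# Hypothetical test signal: a ripple "spectrogram" built directly,
# modulated at 4 Hz in time and 0.5 cycles/kHz along frequency.
dt = 0.01                      # 10 ms frames (assumed)
df = 0.05                      # 50 Hz bins, expressed in kHz (assumed)
t = np.arange(200) * dt        # 2 s of frames
f = np.arange(160) * df        # 0 to just under 8 kHz
rate, scale = 4.0, 0.5
ripple = 1.0 + 0.9 * np.cos(2 * np.pi * (rate * t[None, :] + scale * f[:, None]))

mps, smod, tmod = modulation_spectrum(ripple, dt, df)
i, j = np.unravel_index(np.argmax(mps), mps.shape)
peak_scale, peak_rate = abs(smod[i]), abs(tmod[j])
print(f"peak at {peak_rate:.2f} Hz, {peak_scale:.2f} cycles/kHz")
```

In this representation a single ripple collapses to one point (plus its mirror image), so the energy of the synthetic example should concentrate at 4 Hz and 0.5 cycles/kHz. Filtering a sound along either axis of this space is one way to manipulate temporal and spectral modulations independently.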

Code availability

All stimulus-construction code is available in a public repository: https://github.com/flinkerlab/SpectroTemporalModulationFilter. Experiment and analysis code is available from the corresponding author upon reasonable request.

Data availability

The data that support the findings of this study are available from the corresponding author upon reasonable request.

Acknowledgements

This work was supported by NIH F32 DC011985 and Charles H. Revson Senior Fellowships in Biomedical Science 15–28 to A.F., by NIH 2R01DC05660 to D.P. and by NIMH R21 MH114166-01 to A.D.M. The funders had no role in study design, data collection and analysis, decision to publish or preparation of the manuscript. We would like to thank I. T. Kim and N. Mei, who assisted in the setup and acquisition of psychophysical dichotic data, B. Mahmood and M. Hofstradter, who assisted in NYU ECoG data acquisition and setup, D. Groppe, who assisted in North Shore ECoG data acquisition and electrode reconstruction, and H. Wang, who provided electrode reconstruction at NYU.

Author information

Contributions

A.F. and D.P. designed the study and hypotheses, and wrote the manuscript. A.F. constructed the stimuli and filtering techniques and collected and analysed the data. A.D.M., O.D. and W.K.D. recruited clinical patients and performed clinical care.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note: Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary information

Supplementary Information

Supplementary Figures 1–17 and Supplementary Table 1.

SI Guide

A pdf that explains what the Auditory files are.

About this article

Cite this article

Flinker, A., Doyle, W.K., Mehta, A.D. et al. Spectrotemporal modulation provides a unifying framework for auditory cortical asymmetries. Nat Hum Behav 3, 393–405 (2019). https://doi.org/10.1038/s41562-019-0548-z

DOI: https://doi.org/10.1038/s41562-019-0548-z
