Abstract

This work provides a data-oriented overview of the rapidly growing research field covering machine learning (ML) applied to predicting electrochemical corrosion. Our main aim was to determine which ML models have been applied and how well they performed depending on the corrosion topic considered. From an extensive review of corrosion articles presenting comparable performance metrics, a ‘Machine learning for corrosion database’ was created, guiding corrosion experts and model developers in their applications of ML to corrosion. Potential research gaps and recommendations are discussed, and a broad perspective for future research paths is provided.

Introduction

The data-centric analysis presented in this review article derives from refs. 1,2,3,4,5,6,7,8,9,10,11,12,13,14,15,16,17,18,19. The Materials Genome Initiative (MGI)20 has recently resulted in concentrated efforts to collect materials properties in the form of shared databases21. As a result, data-driven approaches have provided effective tools for driving the prediction of properties and the discovery of new materials21,22,23. In the era of big materials data24, machine learning (ML) has led to a conceptual leap in the field (especially in energy storage applications)21,23,24,25,26,27. Although ML has been gradually applied to corrosion research28, the corrosion community has benefited far less from the progress in Big Data technologies.

As with battery research data21,29, corrosion data is typically incomplete, noisy, heterogeneous and large in volume (low-value density). Moreover, the in-service corrosive scenarios are complex and changeable, constituting a highly nonlinear system that is hardly approachable by traditional statistical methods.

ML is the specific area of artificial intelligence (AI) that allows computers to learn from data in order to solve a given task. It consists of a flexible fitting function approach that, in comparison to traditional computational methods, can provide cheap and accurate simulation processes21,24,30. ML aims to acquire knowledge from (very) large datasets by continuously improving its own performance. Despite the lack of prior knowledge of the system, knowledge mining based on ML methods could help the domain expert link outcomes to underlying physical laws or confirm some of the already established concepts24,27,31.

A variety of electrochemical techniques has been employed for the prediction of the corrosion behaviour of metals. Accelerated corrosion tests and weathering tests have been historically used to simulate the working conditions of various systems. However, the simulated environmental factors in accelerated tests are distributed in ranges that often differ from those of the actual application, whereas in weathering tests the environmental factors vary over time with both periodicity and randomness32,33. As there are no clear principles on setting fully representative test profiles, the extrapolation of short-term corrosion test results to field exposures in different environments is a challenging topic32,33.

Despite having huge volumes of testing data at its disposal, the corrosion community still relies on inaccurate predictive models (log-linear34, time-varying function35, dose-response function36, power function36,37, Eyring and Arrhenius38) of the in-service corrosion rate (CR). To the best of our knowledge, only one review referring to ML and corrosion is available39 (this work focused on image recognition and pattern classification).
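As a point of reference for the conventional models listed above, the power function can be sketched in a few lines. The form C = A·t^n (corrosion loss C after exposure time t) is the commonly used one and is assumed here; the symbols, the function name `fit_power_law` and the loss values are illustrative and are not taken from the reviewed papers. The fit is an ordinary least-squares line in log-log space.

```python
from math import exp, log


def fit_power_law(times, losses):
    """Fit C = A * t**n by least squares on log C = log A + n * log t."""
    log_t = [log(t) for t in times]
    log_c = [log(c) for c in losses]
    n_points = len(log_t)
    mean_t = sum(log_t) / n_points
    mean_c = sum(log_c) / n_points
    # Slope of the log-log line is the exponent n.
    exponent = (
        sum((x - mean_t) * (y - mean_c) for x, y in zip(log_t, log_c))
        / sum((x - mean_t) ** 2 for x in log_t)
    )
    # Intercept of the log-log line is log A.
    prefactor = exp(mean_c - exponent * mean_t)
    return prefactor, exponent


# Synthetic, noise-free losses (assumed A = 30, n = 0.5) at typical exposure years.
years = [1, 2, 4, 8, 16]
losses = [30.0 * t ** 0.5 for t in years]

A, n = fit_power_law(years, losses)
predicted_32 = A * 32 ** n  # extrapolation beyond the fitted range
```

With real exposure data the two fitted parameters absorb every environmental and material effect, which is one reason such a model may extrapolate poorly from one site or alloy to another.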

The selection of the most suitable ML approach is a multifaceted question, depending on the amount of data available, the quality of results desired and the necessity of physical interpretation of the results27. Therefore, the present investigation focuses on assessing the predictive power of different machine learning approaches and elaborates on the current status of regression modelling for various corrosion topics.

The data mining approach proposed here also aims at identifying unseen patterns from the intersectional ML and corrosion literature (knowledge mining). We encourage new experimental and computer-oriented scientists to apply ML to corrosion prediction studies by discussing challenges and providing recommendations.

Bibliometric data mining

The main tool used for identifying relevant literature for this Review was the Web of Science search engine, a large abstract/citation database covering mainly peer-reviewed journals40. The queries employed in the search engine were the following: ‘machine learning’ AND ‘corrosion’ (‘Topic’ search, all types of documents). The 164 records returned from the queries (sent on 1 March 2021) were carefully reviewed and filtered based on domain knowledge.

A co-citation network of publications and their citation relations is presented in Fig. 1 for the 2017–2020 period (the size of the nodes is scaled by the number of citations of each source)41,42. The two largest nodes (‘machine learning’ and ‘corrosion’) are highly interconnected and are more strongly related to the ‘prediction’ node than to the ‘design’ or ‘classification’ nodes. The obtained cluster illustrates that research has been conducted on distinct corrosion topics (atmospheric corrosion, pitting corrosion) and materials (alloys, carbon steel, reinforced concrete), using different models (artificial neural network (ANN), random forest (RF), support vector machine (SVM)).

Citation relations from 2017 to 2020 (VOSviewer41).

Initially, 35 papers in which supervised learning was explicitly used for corrosion prediction were considered (classification modelling investigations were excluded). From this preselection, 16 references in which regression models were employed and comparable metrics were provided were selected1,2,3,4,5,6,7,8,9,10,13,14,15,16,17,18. Finally, 3 other works on artificial neural networks (not designated as ML)11,12,19 were also included.

Machine learning for corrosion database

The final dataset used for this Review consisted of 19 papers comprising 34 ML works whose models were evaluated by comparable metrics (mean absolute percentage error (MAPE) and/or the correlation coefficient (R²)). The dataset was loaded in a Jupyter Notebook (Python language), and an ML and corrosion database was built into a Pandas DataFrame (further details in the Supplementary information).
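For readers less familiar with the two metrics, the following minimal sketch shows how they are typically computed. It assumes the standard definitions (MAPE as the mean of |measured − predicted| / |measured|, in percent, and R² as the coefficient of determination); individual papers in the database may have used slightly different conventions, and the function names and example values are illustrative only.

```python
def mape(y_true, y_pred):
    """Mean absolute percentage error, in percent (measured values must be non-zero)."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("inputs must be non-empty and of equal length")
    return 100.0 * sum(abs((t - p) / t) for t, p in zip(y_true, y_pred)) / len(y_true)


def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


# Illustrative values only (not taken from any reviewed paper).
measured = [100.0, 200.0]
predicted = [110.0, 180.0]
example_mape = mape(measured, predicted)  # each point is off by 10 %
```

MAPE is scale-free and therefore convenient for comparing studies with different targets, but it is undefined for zero-valued measurements and penalises errors on small values heavily, which is worth keeping in mind when reading the figures.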

The database43 comprises 47 features and 1 indexing column, the ‘Reference’, which follows the reference numbering of this article. The features list describes the investigation approach (‘Orientation-strategy’, ‘Targets’). It includes essential descriptors of the data (‘Data collection’, ‘Data availability’), corrosion (‘Corrosion topic’, ‘Material’) and ML (‘Models description’, ‘Evaluation method’, ‘Train-Test split’). All plots presented in this publication were derived from this database43, which is available to download at Mendeley data (related codes available at GitHub).

Based on the ‘Selected features’ attribute in our ML for corrosion database43, Table 1 displays the predictors organised by different categories (environment, material, etc.) for all selected papers1,2,3,4,5,6,7,8,9,10,11,12,13,14,15,16,17,18,19.

It is essential to remember that our reviewing exercise was conducted with 34 investigations coming from at least 9 broad corrosion topics. A case-by-case judgement of the validity of the predictions was out of our scope. This approach would require in-depth expertise related to all addressed topics and much broader access to the corresponding datasets (including the ‘bad training data’, which is traditionally seldom reported).

Data dimension vs publication year

Figure 2 displays the ‘Dataset size’ and the ‘Initial N° of attributes’ features as a function of the publication year. Corrosion has always been a multi-dimensional problem, which is reflected in the plot by the relatively constant ‘Initial N° of attributes’ (number of corrosion variables considered before data reduction) from 1999 to 2021. Nonetheless, from 2018 onwards, the growing trend in ‘Dataset size’ identified could be attributed to the ‘Big Data Boom’40. In parallel to the Big Data Boom, the rise in popularity of ML is expected to reduce the contradiction of high-dimensional problems based on small sample sizes21.

Performance of the ML models

The effect of data dimension

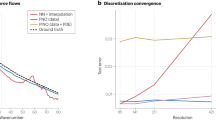

From Fig. 3, it can be seen that the larger the dataset, the lower the mean percentage errors tend to be, during both training and testing. The associated mean R² values were, whenever possible, included next to the mean MAPE data points, indicating the good correlation between both metrics. Although this cannot be confirmed without the corresponding mean R², the low mean MAPE values obtained for dataset sizes smaller than 101 might result from overfitting.

Corresponding mean R² values were included whenever possible next to data points. This plot considered all corrosion topics from the ML for corrosion database43. The error bars represent the standard deviation of the mean.

The effect of the Time variable

Figure 4 reveals that the models’ performance (expressed by R²; refs. 1,2,3,4,6,7,8,9,10,11,12,13,14,15,16,17,19) increased considerably when Time was considered as an input (refer to the ‘Selected features’ and ‘Time selected?’ features in the ML for corrosion database (Supplementary information)). This trend generally held for both the training and testing datasets and was particularly remarkable for the ANN model type. It is worth noting that Linear models are not suitable for predicting corrosion, which is essentially characterised by time-series data.

Performance per corrosion topic

The performance of the models (Machine learning for corrosion database43) was evaluated by R² (refs. 1,2,3,4,6,17,19) and/or by MAPE (refs. 3,5,9,10,11,13,19). With the models’ names and corresponding references, Figs. 5 and 6, respectively, display the achieved R² and MAPE values organised by corrosion topic. Moreover, the data points are subdivided by orientation strategy (Fig. 5) or by type of ML model (Fig. 6).

MAPE values are distinguished for training (‘O’) and testing (‘X’) performances. The models’ names were included right next to the respective ‘X’ points (other useful descriptors were also included whenever possible). Part of the results for the Ensemble models is magnified in the inset image (green borders).

These evaluation metrics derive from models with differences in structure/hyperparameters and represent optimum modelling conditions for each case. Therefore, the reader should be aware that the proposed performance comparisons refer to the modelling approaches in the broad sense (not the models solely).

As displayed in Figs. 5 and 6, atmospheric corrosion has been the most studied corrosion topic1,2,3,4,6,9,11. Marine corrosion seems to be a straightforward research topic: not only were relatively high predictive accuracies achieved8,18, but the models also demonstrated a high generalisation ability (low difference between training and testing MAPE values (Fig. 6)). In contrast, the prediction of rebar corrosion has been more scattered, with the highest errors in cases where localised corrosion (pitting) occurred (Fig. 6)18.

The ‘Material & environment’ orientation strategy has been the most popular approach, encompassing corrosion-resistant alloys (CRAs)7, inhibitors16 and atmospheric corrosion1,2,3,6,9 investigations. Regardless of the corrosion subject, Fig. 5 shows that diversifying types of variables leads to increased performances. For example, in the case of rebar corrosion, investigations simultaneously considering material, system-related and environmental descriptors19 clearly outperformed both material14 and environment-oriented12,18 approaches. A system-orientated strategy has been required for pipeline corrosion (including geometrical pipeline characteristics13), with increased goodness of fit when instrumental variables (e.g. operating pressures10) were also considered. Atmospheric corrosion benefited the most when material features were also included in environment-oriented strategies1,2,3,6,9.

Concerning the types of ML models (Fig. 6), ANN (including BPNN (back propagation neural network), DNN (deep neural network)3,8,9,10) and tree-based (RF, cForest, etc.3,9,10) have generally modelled atmospheric corrosion3,9, marine corrosion8 and pipeline corrosion10 with reasonable training errors (mostly below 30%). Comparatively, Kernel-based methods (SVR (support vector regression), SVMs) have generated higher prediction scattering across different corrosion topics3,5,9,18. Although only a few works employed Linear (logistic regression3, linear regression18) or genetic algorithms5, these methods tend to produce high and low MAPE values, respectively. Overall, ensemble methods (combination of individual learners) have yielded the best predictive performances8,9,10,19, except when modelling the pitting of rebar corrosion18.

More details from each investigation, including a further description of the models employed, are disclosed in the corrosion topic sections hereafter.

Reviewing ML per corrosion topic

Atmospheric corrosion

Atmospheric corrosion data, traditionally acquired by coupon exposure tests, is often associated with small sample sizes and high dimensionality defined by multiple corrosion-influencing factors. The complexity of the corrosive environment makes it highly challenging for traditional predictive models to make optimal decisions in short timescales26.

In 1999, in the pioneering work performed by Cai et al.11, a worldwide dataset on the atmospheric corrosion of steel and zinc was used to train and test neural networks. In contrast to previous works using a linear regression technique, the ANN seemed to be a promising modelling tool for addressing nonlinear/complex systems based on interpolation from past experience.

Through sensitivity analysis, ANN could also demonstrate that the CRs of zinc were most likely affected by changes in corrosion products. As the corrosion rates slowed at high TOW (time of wetness), it was suggested that moisture inhibited corrosion due to the formation of protective carbonates. Meanwhile, corrosion significantly increased at high SO2 concentrations due to sparingly soluble basic sulphates shifting towards soluble sulphates11. Concerning steel, knowledge mining showed that the relation between TOW, SO2 concentration and atmospheric corrosion was almost linear11.

Regarding the prediction of corrosion depths, ANN achieved the following (testing) performances for steel and Zn, respectively: R² = 0.548 (MAPE = 39%) and R² = 0.608 (MAPE = 53%)11. These testing performance values are displayed in Figs. 6 and 7.

Data points were segmented by ‘Targets’ (current I, CR, depth) and by ‘ML models’. R² values are distinguished for the training (‘O’) and testing (‘X’) performances. Meaningful entries from the following features were included in the plot: the ‘Material’ (Zn, steel11, steels6); the ‘Selected features’ (elements, phys. features1). QACM indicates the RF with charge correction4. The purple and green arrows, respectively, indicate the data points from refs. 3 and 9 (modelling on the same dataset). The difference between values a, b, c and a′, b′, c′ highlights a low generalisation ability2.

The high variances obtained were mainly due to the neglect of other important influencing variables and to the inherent scatter in data from several sources. As the datasets were collected from multiple publications (42), some degree of noise or bias is typically unavoidable24.

Although the ANN was not suitable to predict very long-term corrosion (lack of datasets), it was expected that its modelling capability could increase by considering more affecting factors and with the availability of more accurate data11.

Nearly a decade later, Kamrunnahar et al.6 employed a neural network backpropagation method as a data mining tool to predict the CRs of carbon and alloy steels. According to the authors6, the trends that govern long-term behaviour are among the most critical challenges in corrosion studies. Notably, the effect of environmental variables on the outputs is often not well understood.

The ANN employed data organised according to the differences in the environmental conditions, including temperature, pH, etc. (each condition was unique). The supervised neural network could achieve a high prediction accuracy for long periods, despite being trained with short time period data only6. The achieved testing performance (R² = 0.987) is indicated by ‘steels’ and ‘ANN (steels)’ in Figs. 7 and 5, respectively.

More recently, Zhi et al.3 developed ML tools to forecast the outdoor atmospheric CR of low alloy steels (LAS). They also applied corrosion-knowledge mining by using a Random Forests (RF) algorithm. By applying this approach on corrosion data of 17 low alloy steels under 6 atmospheric corrosion test stations in China over 16 years44, the authors aimed at tackling the three main challenges with atmospheric corrosion datasets from multiple materials/environments: (1) their non-linearity, (2) their steep-manifold structure and (3) their (often) small sample size.

In their study, Logistic Regression (LR) and support vector regression (SVR) were not accurate enough (MAPE (testing) values of 45.63% and 41.35%, respectively) to deal with the steep-manifold structure of the data (at least for the number of samples considered). On the other hand, unstable classifiers (tree-based, ANN) produced acceptable performances using datasets of the same size (MAPE (testing) = 22.12%, for ANN). In particular, RF was the least affected by the relatively small number of training samples (MAPE (testing) = 16.08%). The MAPE values3 are indicated in Fig. 6, while the corresponding R² (training) values3 are indicated by purple arrows in Fig. 7.

Furthermore, RF was applied separately to 5 sub-datasets with different exposure times: 1, 2, 4, 8 and 16 years (the corresponding performances are present in the ‘Sub-datasets with different times’ feature in the ML for corrosion database (Supplementary Notes)).

From knowledge mining applied to these sub-datasets, it was revealed that the environmental factor was more important than the material factor, with pH being the most important feature at the two corrosion stages (time split at Year 5). At the initial stage, the ‘environment’ importance was always greater than 80%. Notably, the SO2 and Cl− concentrations had higher importance indices than the temperature (T) and the Relative Humidity (RH), while the rainfall was the second most important feature only in Year 1. At the stationary stage (more than 5 years), the corrosion products shielded the LAS from environments, decreasing the importance of the environment factor (the SO2 importance was much less than Cl−, T and RH)3.

In another work, Zhi and co-authors9 mentioned the current difficulty of making predictions for various materials in diverse and dynamic outdoor environments from modelling approaches based on indoor corrosion tests with just single materials (at least for the commonly used ML models, such as ANN, SVM, power-linear function, hidden Markov). Therefore, the authors proposed a new deep structure, called Densely Connected Cascade Forest-Weighted K-Nearest Neighbours (DCCF-WKNNs), to implement the modelling of LAS atmospheric corrosion from the same dataset previously used in ref. 3. The different models employed in these two works3,9 are not directly comparable because the latter9 had one additional input (Fe element). The DCCF-WKNNs inherited the high generalisation ability (MAPE (testing) = 12.95%) from RF for modelling the small-size LAS outdoor atmospheric corrosion data, also demonstrating a better feature extraction ability as a representation learning algorithm with a deep structure. The testing performances (MAPE and R²) obtained with ANN, SVR, RF, RF-WKNNs, cForest (cascade forests), and DCCF-WKNNs9 are highlighted in Fig. 6 and indicated by green arrows in Fig. 7. For more information on the developed ensemble model, the reader is referred to the ‘Models description’ column of the ML for corrosion database (Supplementary Notes).

In addition, knowledge mining could provide quantitative thresholds for the environmental variables. For instance, the CR decreased when pH was higher than ~6.3 or the rainfall was lower than ~320 mm per month, and the CR increased when the SO2 concentration was higher than ~0.067 mg cm−3 or the Cl− concentrations were higher than 0.8 and 1.9 mg cm−3. RH values of 67% and 86% marked, respectively, whether the electrolyte was continuous and whether the film was saturated9.

In the same direction, Yan et al.2 proposed a ML modelling method to simulate the atmospheric corrosion behaviour of LAS. While evaluating the correlations between material, environmental factors and CRs, the impact of rust layer formation as a function of the exposure periods was addressed.

The Pearson correlation coefficient (PCC) was used to evaluate the degree of correlation between multiple variables. Features with significant correlation were grouped into one same cluster and were considered multicollinear features. The dominating factors from each cluster were closely correlated with the output (CR) and independent. The achieved Pearson correlation map demonstrated a positive correlation for all the variables analysed (PCC > 0), highlighting their interdependence (refer to the ‘Attributes interdependence’ feature in the ML for corrosion database43).
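The grouping step described above can be sketched as follows. This is a minimal illustration and not the authors' implementation: the greedy clustering rule, the 0.9 threshold, the function names and the feature values are all assumptions made for the example.

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation coefficient between two equally long sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    std_x = sqrt(sum((a - mean_x) ** 2 for a in x))
    std_y = sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (std_x * std_y)


def cluster_collinear(features, threshold=0.9):
    """Greedy grouping: a feature joins the first cluster whose seed it correlates with."""
    clusters = []
    for name, values in features.items():
        for cluster in clusters:
            if abs(pearson(values, features[cluster[0]])) >= threshold:
                cluster.append(name)
                break
        else:
            clusters.append([name])
    return clusters


def pick_representatives(features, target, clusters):
    """Keep, per cluster, the feature most strongly correlated with the target."""
    return [max(c, key=lambda n: abs(pearson(features[n], target))) for c in clusters]


# Synthetic values for illustration; feature names are hypothetical.
features = {
    "RH_mean": [60, 65, 70, 75, 80],
    "RH_min": [40, 46, 50, 56, 60],
    "SO2": [5, 1, 4, 2, 3],
}
corrosion_rate = [1.0, 1.2, 1.5, 1.7, 2.0]

clusters = cluster_collinear(features)
selected = pick_representatives(features, corrosion_rate, clusters)
```

In this toy case the two humidity features fall into one cluster and only one of them is retained, which mirrors the idea of keeping a single dominating, mutually independent factor per group.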

The RF, GBDT (gradient boosted decision trees), and XGBoost models showed high predictive accuracy from the training set, with R² values of 0.94, 0.96 and 0.93, respectively2 (points a, b and c in Fig. 7). Next, feature importance analysis revealed that the ELEMENTS (total content of alloying elements) feature was among the most significant during the whole exposure period (the SO2 feature was selected based on domain knowledge). Concerning the environmental features, the CHLORIDE (chloride deposition rate) and PRECIPIT (precipitation) variables were the most significant ones in the first 3 years, while the RH_MIN (minimum relative humidity) became the most significant factor after 5 years2.

From this work, the main shortcoming of the RF, GBDT and XGBoost models was their low generalisation ability: R² values (testing) of 0.73, 0.69 and 0.77, respectively2 (points a′, b′ and c′ in Fig. 7). The authors believed that the data amount was insufficient to reflect the impact of all factors fully. In particular, the low amount of data with high CR values generated a detrimental effect: the models were only accurate for samples with low CR values (limited extrapolation ability)2.
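The generalisation gap discussed here is simply the difference between training and testing scores. A short sketch using the R² values quoted above for ref. 2 makes the comparison explicit; the variable names are illustrative.

```python
# (training R², testing R²) as reported in the text for ref. 2.
scores = {
    "RF": (0.94, 0.73),
    "GBDT": (0.96, 0.69),
    "XGBoost": (0.93, 0.77),
}

# A large positive gap suggests the model fits the training set
# much better than it predicts unseen samples.
gaps = {model: round(train - test, 2) for model, (train, test) in scores.items()}
largest_gap_model = max(gaps, key=gaps.get)
```

On these figures GBDT has both the best training fit and the largest drop on the test set, a pattern consistent with overfitting on a small dataset.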

Traditional methods to predict atmospheric corrosion require the yearly accumulation of environmental data. These annual averages could generate inaccurate predictions, especially in the short term4.

Based on this premise, Pei et al.4 monitored the atmospheric corrosion of carbon steel by a Fe/Cu type galvanic corrosion sensor for 34 days. Upon application of RF for the ranking of feature importance, the T (temperature), RH and rainfall status were considered the most important features (pollutants were not significant due to their low concentrations). The authors recommend that the deposition of chlorides (monthly recording) be included as a feature in future works, especially for modelling longer-term corrosion in marine atmospheres4.

The sensor ended up almost fully covered with corrosion products, which affected its response and thus the models’ accuracy over time. This issue was dealt with by considering the rust formation on the sensor with the integral value of instantaneous corrosion current (QACM) as an input parameter, resulting in improved prediction accuracy for all models (QACM was considered the most crucial factor in the second half of the test). Remarkably, the RF model with charge correction produced the highest accuracy, with an R² of 0.940, for both training/testing sets4 (indicated as ‘QACM’ and ‘RF-QACM’ in Figs. 7 and 5, respectively).

The application of CR prediction models is often limited to alloys with similar compositions to those used for training (inputs with a fixed number of elements content). Aiming at overcoming this limitation, Diao et al.1 proposed a feature creation method to convert the chemical composition features from LAS into a set of atomic and physical property features. Thereby, these material-oriented features were used together with environmental factors as inputs, and RF was selected as the modelling algorithm. But firstly, feature reduction methods were applied to select the dominating factors on the data target (GBDT + Kendall provided the best results).

By applying the GBDT + Kendall method, the key factors influencing atmospheric corrosion were identified: Cr, C, Si, P, and S contents, O2_max (maximum dissolved oxygen content), S_max (maximum salinity value of the seawater), pH_min (minimum pH), T_mean (mean temperature). Next, the feature creation methods proposed an effective way to simulate the influence of elemental contents and their interactions with the steel corrosion resistance1. Although the feature creation methods (based on physical properties simulating elements) could extend the model’s applicability to other alloys, it should be mentioned that modelling with the top 4 most important physical features produced lower performance than with the top 5 elements1. This difference in testing performance (R² equal to 0.90 or 0.92)1 is depicted in Fig. 7 as ‘elements’ and ‘phys. features’.

As summarised in Fig. 7, the same predictive tools have successfully modelled either I or CR, namely SVR2,3,4,9, RF1,2,3,4,9 and ANN3,9,11. Although more extensive statistics would be needed to confirm this hypothesis, a higher goodness of fit was generally observed with I as the data target (CR is an indirect measure often derived from I).

Finally, Zhi et al.5 combined a nonlinear grey Bernoulli model (NGBM(1,1)) with genetic algorithm (GA) for long-term prediction on a specific monotonic data series of atmospheric CR. The collected corrosion data of carbon steels exposed in various outdoor atmospheric environments was provided by the China Gateway to Corrosion and Protection44.

NGBM(1,1) combined with GA had been previously used to model small-sample datasets with high performance45,46. Nevertheless, the authors reported that when trying to apply GANGBM(1,1) to CR series data, the prediction accuracy was only high for historical data, but not for future data (model overfitting)5.

A new RGANGBM(1,1) (regularization genetic algorithm nonlinear grey Bernoulli model) was proposed for the long-term prediction of CR vs time series, from the 1st, 2nd, 4th, 8th and 16th year. Despite training the model with data from only one station while testing it on five other datasets, the mean prediction accuracy of this method was relatively high (for instance, MAPE (testing) = 9.15% (16th year)). Such an outcome was attributed to the overfitting optimisation via the regularisation parameter η (knowledge mining showed that η could reflect the corrosivity of the environment)5.

BPNN and SVR were shown to be unreliable when applied to comparable datasets (same sizes), yielding MAPE (testing) values of 28.02% and 83.55% (16th year), respectively, as indicated for ref. 5 in Fig. 6.

Corrosion-resistant alloys

Corrosion prediction is challenging for CRAs, as corrosion tests require considerable time periods for reaching steady states and appreciable weight losses47. Kamrunnahar et al.7 proposed a data mining approach based on weight loss measurements of Alloy 22 in Basic Saturated Water at 60–105 °C (this environment intended to mimic the Yucca Mountain area of the United States, which was of great interest for high-level nuclear waste disposal and storage). The authors were able to predict the general corrosion behaviour of Alloy 22 (canister candidates for nuclear waste disposal) by employing ANN on the training set7. The achieved performance (R² = 0.8)7 is indicated in Fig. 5 as ‘ANN (Alloy 22)’. One of the main outcomes was the observed validity of the Arrhenius rule: the model would be applicable outside the ranges considered for the Arrhenius parameters, temperature and CR. This work7 was elaborated before the Big Data Boom: more data points would be valuable to test the robustness of this ANN approach.

In their early data mining approach6, Kamrunnahar et al. also employed ANN to investigate the general corrosion of metallic glasses with variable compositions. Their objective was to mimic polarisation curves and then calculate corrosion potentials and polarisation resistances. The developed ANN could learn the underlying functions in mapping corrosion variables, alloy characteristics, and environments (HCl, H2SO4) to the different corrosion metrics. Although the train-test split procedure used was not specified, the model showed good agreement with experimental data as a function of the changing environment (average R² between 0.87 and 1, depending on the data target)6. The achieved performances, with corresponding targets (i, E, Rp) and solutions (HCl, H2SO4), are indicated in Fig. 5 as a green ‘ANN’6.

Marine corrosion

As an early example of ML work, Wen et al.8 combined SVR with particle swarm optimisation (PSO) for predictive modelling of marine corrosion. Before 2008, Artificial Neural Networks were the main ML modelling tool employed for corrosion studies and had proven to be effective for predicting atmospheric corrosion of steel and zinc11.

Nonetheless, the weak generalisation ability and tendency to overfit were already known for ANN modelling with small-sample datasets. For instance, this work8 demonstrated that the higher the number of training samples (41 or 42 out of 46), the better the regression performance of BPNN (MAPE (testing) of 5.001% or 4.215%, respectively). In comparison to BPNN, a superior generalisation ability was verified for the SVR-PSO, which resulted in MAPE (testing) values of 3.840%, 3.180% and 1.360% (for 41, 42 and 45 (LOOCV) training samples, respectively). Nonetheless, from a goodness-of-fit point of view, it is worth noting that the SVR-PSO (LOOCV) produced the lowest R² (testing) value reported: 0.836. All reported metrics (MAPE and R² (testing)) for the corresponding models8 are highlighted in Figs. 5 and 6.
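Leave-one-out cross-validation (LOOCV), as used above with 45 training samples out of 46, holds out each sample once and trains on all the others. The sketch below shows the procedure with a one-variable least-squares line as a deliberately simple stand-in model; it is not SVR-PSO or BPNN, and the data are synthetic.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / sxx
    return slope, mean_y - slope * mean_x


def loocv_mape(xs, ys):
    """Testing MAPE (%) from leave-one-out cross-validation."""
    errors = []
    for i in range(len(xs)):
        # Train on every sample except the i-th one.
        train_x = xs[:i] + xs[i + 1:]
        train_y = ys[:i] + ys[i + 1:]
        slope, intercept = fit_line(train_x, train_y)
        prediction = slope * xs[i] + intercept
        errors.append(abs((ys[i] - prediction) / ys[i]))
    return 100.0 * sum(errors) / len(errors)


# Synthetic data: an exactly linear series and a perturbed copy of it.
x = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
y_exact = [2.0 * v + 1.0 for v in x]
y_noisy = [3.3, 4.8, 7.4, 8.7, 11.2, 12.9]

mape_exact = loocv_mape(x, y_exact)
mape_noisy = loocv_mape(x, y_noisy)
```

Because every sample serves as test data exactly once, LOOCV makes full use of a small dataset, at the cost of fitting the model as many times as there are samples.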

Using sample datasets considered small by today’s standards, SVR-PSO presented a high accuracy for predicting the CR of 3C steel under different seawater environments. Nonetheless, the model also showed a limited extrapolation ability. The collection of more experimental data (with larger data ranges) could enhance the model regression accuracy8.

More recently, using the same dataset as in ref. 8, Chou et al.18 also attempted the ML modelling of marine corrosion on carbon steel. The prediction included single and ensemble models constructed from four well-known machine learners, including SVR/SVMs, classification and regression tree (CART), and linear regression (LR). Various ML models are typically used to construct single classifiers, but ensemble systems compensate for errors made by the individual classifiers. The tiering ensemble models were shown to improve further the predictive accuracy of SVR (best individual model)18.
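The error-compensation idea behind ensembles can be illustrated with the simplest possible combination rule, an unweighted average of two learners. This is a swapped-in simplification, not the tiering ensemble of ref. 18, and the predictions below are invented so that one learner over-predicts and the other under-predicts.

```python
def mape(y_true, y_pred):
    """Mean absolute percentage error, in percent."""
    return 100.0 * sum(abs((t - p) / t) for t, p in zip(y_true, y_pred)) / len(y_true)


# Hypothetical measured corrosion rates and predictions from two learners.
measured = [10.0, 20.0, 30.0]
learner_a = [11.0, 22.0, 33.0]   # consistently 10 % too high
learner_b = [9.5, 19.0, 28.5]    # consistently 5 % too low

# Averaging ensemble: errors of opposite sign partly cancel.
ensemble = [(a + b) / 2.0 for a, b in zip(learner_a, learner_b)]

mape_a = mape(measured, learner_a)
mape_b = mape(measured, learner_b)
mape_ensemble = mape(measured, ensemble)
```

The benefit depends on the individual learners making different errors; if both were biased in the same direction, averaging would not beat the better of the two.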

Nature-inspired metaheuristic optimisation algorithms such as the firefly algorithm (FA) have effectively solved difficult optimisation problems48. A hybrid model was recently implemented by integrating a smart firefly algorithm (SFA) with a least-squares SVR49. Notably, this hybrid SFA-LSSVR model was shown to be effective for modelling CRs of 3C steel, outperforming all other models18. The MAPE (testing) values achieved for SFA-LSSVR (1.26%) and for all other tested models (SVR, CART + LR, LR, CART + SVR + LR, SVMs+SVR, SVMs+CART)18 are highlighted in Fig. 6.

Pipeline corrosion

Understanding and predicting the risk of pipeline corrosion is essential for maintaining oil operations healthy and safe. The first step in the Corrosion Risk Assessment of oil and gas companies is selecting the input parameters for estimating the corrosion defect depth amongst many parameters routinely measured10.

In a quest to estimate the defect depth growth of aged pipelines, Ossai et al.10 implemented a data-driven ML approach relying on PSO, Feed-Forward Artificial Neural Network (FFANN), Gradient Boosting Machine (GBM), RF and Deep Neural Network (DNN). The influence of PCA-transformed (Principal Component Analysis) variables on the accuracy of the models was also investigated.

Before the application of the models mentioned above, a multivariate polynomial regression was used to fit the entire dataset coming from 60 wells (R² (training) = 0.9819, as included in Fig. 5 as ‘MP’10). The objective was to avoid data bias by having new datasets comprising variable scenarios of corrosion severity. Thus, the multivariate polynomial was used for data generation and provided a larger training dataset for the ML algorithms.

As reported in ref. 10, the PCA transformation markedly improved the prediction of future states of corrosion defect depth growth. Without PCA, the PSO-FFANN, GBM, RF and DNN models produced training MAPE values of 34.1329%, 31.9266%, 32.4267% and 23.647%, respectively; with PCA-transformed inputs, the corresponding values were 7.8588%, 6.0082%, 7.7421% and 6.6813%. All these models/metrics relationships10 are displayed in Fig. 6.
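As a reminder of what a PCA transformation does to the inputs, the sketch below extracts the first principal component of a small two-feature dataset by power iteration on the covariance matrix. It is an illustrative, dependency-free sketch with hypothetical data, not the preprocessing pipeline of ref. 10.

```python
import math


def first_principal_component(rows, iterations=200):
    """Return (unit direction, projected scores) of the first principal component."""
    n, d = len(rows), len(rows[0])
    means = [sum(r[j] for r in rows) / n for j in range(d)]
    centred = [[r[j] - means[j] for j in range(d)] for r in rows]
    # Sample covariance matrix (d x d)
    cov = [[sum(c[i] * c[j] for c in centred) / (n - 1) for j in range(d)]
           for i in range(d)]
    # Power iteration converges to the eigenvector with the largest eigenvalue
    v = [1.0] * d
    for _ in range(iterations):
        w = [sum(cov[i][j] * v[j] for j in range(d)) for i in range(d)]
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    scores = [sum(c[j] * v[j] for j in range(d)) for c in centred]
    return v, scores


# Hypothetical, strongly correlated inputs (e.g. two operating parameters)
data = [[1.0, 2.1], [2.0, 3.9], [3.0, 6.2], [4.0, 7.8]]
direction, scores = first_principal_component(data)
print(direction, scores)
```

The scores can replace the two correlated inputs with a single decorrelated one; in practice the features would normally be standardised first and several components retained.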

Supported by the operating parameters of pipelines, this work contributed to understanding the time-dependent changes in the defect depth growth trajectories (over a 10 year period)10.

Since internal corrosion of pipelines results from an interaction of different mechanisms, a large degree of uncertainty is associated with the prediction of the damage evolution.

De Masi et al.13 developed a predictive model to identify pipeline sections exposed to higher corrosion risks (existing approaches have not reproduced behaviours observed during gauging activity). The authors’ premise was that combining data with different natures (geometrical profile of pipelines, fluid dynamic multiphase variables and deterministic models) could enormously improve the performance of predictive tasks13.

Thereby, by considering several network inputs, an ANN ensemble model could correctly identify the regions of pipelines where corrosion was most likely. This strategy improved not only the prediction performance obtained with deterministic models but also with single ANN models. For instance, the R² (testing) values achieved for the ANN and the ANN ensemble were respectively: 0.689 and 0.884 (for CR) or 0.336 and 0.672 (for volume loss). All these performance metrics13 are mapped in Fig. 5.

Rebar corrosion

The highly nonlinear nature of embedded steel corrosion and the lack of theoretical basis for some corrosion phenomena render the predictive modelling in this topic difficult. In particular, the corrosion initiation of reinforced concrete is a crucial service life parameter, which depends upon the material, the environment, and the duration of exposure. The early detection of corrosion initiation of embedded steel could save cost and time.

To optimise the predictive modelling of corrosion initiation time, Salami et al.14 compared the performances of different ML techniques with the corrosion potential (measured in 5% NaCl) as the data target. Before the regression modelling, various dataset transformations were applied, revealing no significant effect on the resulting performance (the raw dataset was considered)14.

This low sensitivity towards feature engineering techniques is aligned with the more recent models, such as deep learning (provided that the amount of training data is high enough)14.

This finding is in contradiction with the results reported in ref. 10, where the performance of the DNN model clearly increased with PCA transformation.

Next, RF was shown to combine high flexibility with the ability to generalise the corrosion initiation times with high accuracy (R² (testing) = 0.897). The effect of split percentage on the performance of RF revealed that the model variance decreased further as the training dataset grew, with the highest performance achieved with an 85/15 dataset percentage split (R² (testing) = 0.974). These models and corresponding metrics14 are displayed in Fig. 5.

The modelling of rebar corrosion becomes even more complex when localised corrosion (pitting) occurs, which is often the case in chloride-laden media. The chloride threshold (Clth) is defined as the chloride content necessary to sustain the breakdown of the passive film and is an essential parameter for assessing the service life of reinforced concrete12. The literature reports that the current lack of understanding of the Clth value of steel in concrete is due to the numerous factors affecting pitting corrosion (pH, E of the steel, physical condition of the steel/concrete interface)12.

Seeking to establish a phenomenological model correlating the pitting risk of steel (characterised by Ecorr - Epit) and environmental factors, Shi et al.12 applied a modified back propagation (BP) algorithm for ANN training with laboratory data from simulated concrete pore solutions. Only the training performance of the back propagation ANN was reported12 (R² = 0.8741), as indicated by ‘BP ANN’ in Fig. 5.

From knowledge mining, the authors elaborated the following measures to enhance the Clth of rebar: the initial [Cl], as well as the DO, should be limited, while the percentage of bound Cl− and the pH of concrete should be maximised. However, they cautioned that only the overall trends were observed with independent variables maintained at specific levels12.

Six years after the publication of the ‘pitting corrosion risk of steel rebar’ dataset12, Chou et al.18 derived this data for testing their advanced SFA-LSSVR model. Unfortunately, no performance comparison could be elaborated between both works12,18, as different evaluation metrics were used.

The SFA-LSSVR model presented a lower predictive performance for rebar pitting risk than that obtained for marine CR reported in the same work (shown in the Marine corrosion topic)18. Here, as illustrated in Fig. 6 as ‘SFA-LSSVR (pit)’, the model produced a MAPE (testing) value of 5.60%18. As in the marine corrosion case, although the tiering ensemble models outperformed all individual models, none of them reached percentage errors as low as the one achieved by the hybrid model18. Further investigations would be needed to test the prediction accuracy of SFA-LSSVR applied to different scenarios.

In 2021, Zhu et al.19 combined ANN with Kohonen Self-Organized Mapping (KSOM) for modelling the chloride threshold (CT) in reinforced concrete from a sparse literature database. Their KSOM-ANN approach was first applied to find the most probable values for missing data, including primary (pH, corrosion potential, breakdown potential, temperature) and secondary independent variables (cement composition, concrete porosity, water/cement ratio) (‘Attributes interdependence’ feature in the ML for corrosion database43). The trained KSOM-ANN was validated by comparing the predicted values with the experimental ones in the evaluation database for CR (%TotalCl/cem). Modelling a dataset of 1242 vectors yielded a high training performance (R² = 0.99, as indicated by ‘K-ANN’ in Fig. 5)19.

Next, the network revealed quantitative correlations between the major dependent (CT in three possible definitions) and the corresponding independent variables. For instance, it was found that CT was only poorly correlated with porosity, water/cement ratio, and the Al2O3, Fe2O3, MgO, K2O and SO3 oxide contents of the cement. These findings are generally not supported by experimental investigations, thus claiming that CT is a highly scattered property and ambiguous in capturing all variables concerned. Particularly in the case of porosity, the weak correlation seemed to result from an interplay between the volume available to accommodate the same amount of Cl− and the migration/diffusion effect, which increases with porosity. Nonetheless, for a comprehensive understanding of the correlations obtained, one should consider that they depend on the definition of CT used. Indeed, a given definition of CT does not provide a unique measure of the aggressiveness of a particular concrete system because of simultaneous changes in pH and pKw (this issue was further addressed in Part II of the series50). Interestingly, the CT was independent of the w/cem ratio in all three definitions, for which the lack of correlation was attributed to the weak effect of the w/cem ratio on the bound Cl−.

Crevice corrosion

Besides being effective when dealing with atmospheric and general corrosion, the ANN model developed by Kamrunnahar et al.6 also proved to be efficient for modelling localised corrosion. Based on crevice corrosion data on Ti-2, the ANN predicted the alloy’s penetration depth over much longer times than the timescale of the experimental data available. The ANN could perfectly fit the datasets for the 4 outputs considered6. The corresponding performance values (R² (training) = 1) are illustrated in Fig. 5 as ‘ANN (Ti-2)’6.

The ANN model developed by Kamrunnahar et al. was not only able to predict the weight loss of the corrosion-resistant Alloy 22 (Nuclear corrosion topic) but also the alloy’s localised behaviour7. Remarkably, the model mapped the environment (pH, [Cl−]), the alloy condition, and the sample treatment to the crevice repassivation potential. Through ANN weighting, the input variable with the highest importance was the chloride concentration, which was a very realistic outcome based on domain knowledge. As only the average R squared value was provided (2-fold cross-validation (CV))7, this was considered as a training (R²) performance point in Fig. 5 (indicated by ‘ANN’7).

Stress corrosion cracking

In 2014, Shi et al.15 derived a database from the literature to train an ANN to predict the crack growth rate (CGR) in 304 SS. The primary motivation of the authors was to reduce the data dispersion (often higher than two orders of magnitude) associated with the modelling estimation of CGR from sensitised stainless steels. First, ANN sensitivity analysis was done to evaluate the contribution of each independent variable to the dependent variable. Next, the ANN-predicted CGR values were shown to be virtually identical to the ones obtained by the (deterministic) extended Coupled Environment Fracture Model (CEFM) (R² (training) = 0.95, as shown in Fig. 5 by ‘ANN’15). Moreover, both the AI model and the deterministic one predicted that CGR increased strongly with increasing O2 concentration.

Corrosion inhibitors

Benign and efficient corrosion inhibitors are needed to overcome the restrictions on toxic chromate-based technologies in the aerospace industry.

Winkler et al.16 combined high throughput experiments with ML to determine the inhibition score (0–10) of corrosion inhibitors for aluminium alloys. The prediction of inhibition performance was conducted over an extensive library (100) of organic molecules tested on AA2024 and AA7075, at pH 4 and 10.

First, ML showed a negligible correlation between quantum chemical properties (calculated by DFT) and inhibition, suggesting that published structure-inhibition models available in the literature were incorrect (these were presumably based on minimum numbers of molecules).

Then, different robust models (including the BRANNGP (feed forward Bayesian regularised neural networks using a Gaussian prior)) were developed, linking molecular properties calculated by non-quantum chemical methods and inhibition. The BRANNGP model outperformed the MLREM model (multiple linear regression), increasing the R² (training, 80/20 split) values from 0.79, 0.56, 0.75 to 0.82, 0.60, 0.82, for AA7075 (pH 4), AA7075 (pH 10), AA2024 (pH 4), respectively. The only exception was the AA2024 (pH 10) system, where the MLREM produced a higher R² (training, 80/20 split) than the BRANNGP (0.76 and 0.74, respectively)16. All these metric relationships are exhibited in Fig. 5 by the models’ names (with respective alloy and solution)16.

In terms of the number and diversity of inhibitors, range of alloys and pHs, this work16 stands out as one of the most comprehensive corrosion modelling studies. Besides, many descriptors (∼2000) were obtained, and the ones made available were employed in a subsequent ML work on the classification of inhibitors51. Nevertheless, the authors referred to the relatively opaque or arcane nature of the ensemble methods, which makes the mechanistic interpretation of the phenomena difficult16.

Conversion coatings

In a recent design-oriented work17, a new design strategy, i.e. the ratio of the total acidity (TA) to the pH value (TA/pH), was proposed to validate the chemical formulation of a phosphate-based conversion coating. The results indicated that ANN could not fit the corrosion performance with the pH or the TA predictor alone, whereas it gave a relatively accurate prediction based on TA/pH17.

In Fig. 5, the average R² values obtained are presented as R² (training) values, with 0.19, 0.49 and 0.86 for pH, TA and TA/pH, respectively17. Furthermore, the corrosion performance of the phosphate conversion coatings (PCC) increased with the decrease in TA/pH. However, it was impossible to produce a coating with good corrosion performance in pure water (TA/pH equal to 0). The authors explained that a critical value of TA/pH should be the boundary of the proposed theory of TA/pH. They also pointed out the complexity of decreasing TA/pH under the premise of maintaining the stability of the conversion bath and concluded that the validity of using TA/pH in large pH ranges should be further discussed17.

High-temperature oxidation

Although the data analysis conducted in this Review is entirely based on research papers focusing on electrochemical corrosion, two recent articles covering a related topic (ML applied to high-temperature oxidation)52,53 are worth assessing.

In the first of these two publications52, ML was used to predict the parabolic rate constant (kp) for high-temperature oxidation of Ti alloys. Three different regression ML models (Gradient Boosting, Random Forest, k-Nearest Neighbours) were applied to a dataset of kp values (115 data points) built from experimental studies reported in the literature. Several descriptors (alloys’ composition, constituent phase, temperature of oxidation, time for oxidation, O2 content, moisture content, remaining atmosphere, mode of oxidation) were selected as independent variables. The gradient boosting model produced the highest achieved accuracy (R² = 0.92). The learning from this work could be extended to design novel Ti alloys with enhanced resistance against high-temperature oxidation52.
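For readers less familiar with the target property, kp is usually defined through the parabolic rate law. The block below states the common mass-gain form, assumed here for illustration (refs 52 and 53 may use a thickness-based or differently scaled definition); Δm/A is the mass gain per unit area and t the exposure time.

```latex
\left(\frac{\Delta m}{A}\right)^{2} = k_p \, t
```

Under this assumption, kp is the slope of the squared specific mass gain plotted against time, which is why a single value can summarise an entire oxidation curve.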

In the second publication53, 237 unique kp values were extracted from publications, calculated from corrosion product data and structured in a comprehensive database. The high-temperature oxidation dataset comprised entries from various environments (exposed temperatures between ~500 and 1700 °C) from 75 alloys, including low- and high-Cr ferritic/austenitic steels, nickel superalloys, aluminide materials. Several modelling strategies (including supervised ML) were implemented to explore the relationships between composition and oxidation kinetics. The modelling of materials based on their kp values presented the lowest errors achieved when supervised ML (logistic regression, linear regression, RF, decision tree, neural network) was employed. Furthermore, the dominant elements encountered to control the oxidation kinetics were Ni, Cr, Al and Fe (Mo and Co were also considered relevant descriptors)53.

Modelling approaches: critical analysis

One of the most commonly encountered departures from good practices in ML is the use of small training datasets, which potentially leads to overfitting. For instance, BPNN and SVR were unreliable when applied to small datasets5. In ref. 7 (‘Crevice corrosion’ section), the small sample dataset was the main drawback of the study. The small size of the training sample for the network modelling was also critical in ref. 13. Conversely, the MP regression was most likely only efficient because it dealt with such a large input dataset (8300 samples)10. Regrettably, the final data dimension upon application of PCA was not presented10.

Another issue with small datasets is that appropriate data splitting methods become less feasible. For example, due to the small sample size (only 8 samples), the data was not divided into training and testing sets in ref. 6 (‘Crevice corrosion’ section). The training-testing split method was unfortunately not clear6 (‘Atmospheric corrosion’ section): only one type of steel seems to have been included in the testing set (the training performance was not presented). In ref. 7 (‘Corrosion-resistant alloys’ section), the same data was used for training-testing (the employed cross-validation method was not specified).
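For small datasets, k-fold cross-validation (with leave-one-out as the limiting case k = n) lets every sample serve once for testing. The helper below is a minimal, dependency-free sketch; the function name is hypothetical, and the 46-sample size merely mirrors the dataset mentioned for ref. 8.

```python
def k_fold_indices(n_samples, k):
    """Yield (train_indices, test_indices) for k contiguous folds."""
    indices = list(range(n_samples))
    # Distribute the remainder so fold sizes differ by at most one
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        test = indices[start:start + size]
        train = indices[:start] + indices[start + size:]
        yield train, test
        start += size


# Leave-one-out is the special case k == n_samples
loocv = list(k_fold_indices(46, 46))
print(len(loocv), len(loocv[0][0]), len(loocv[0][1]))  # 46 folds, 45 train, 1 test
```

If the samples are stored in a meaningful order (by time or by material, for example), they would normally be shuffled or grouped before splitting, and the chosen scheme should be reported alongside the metrics.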

Not only is the model’s generalisation ability essential, but so is its extrapolation ability. Hence, to improve the prediction accuracy, more relevant data within appropriate ranges would be needed. In the case of ref. 2, this would imply more samples with corrosion rates higher than 0.050 mm per year. As reported in ref. 13, the prediction accuracy could be enhanced by considering larger datasets comprising several pipeline cases and flow conditions. In ref. 14, tests were performed under laboratory conditions only: validating the RF method for the prediction of concrete corrosion on in-field samples would be recommended. Similarly, further study would be required to explore the broader applicability of the SFA-LSSVR18. Unfortunately, the electrochemical methods employed for data acquisition in ref. 8 are unknown.

In ref. 1, features such as the flow velocity or microorganisms, which also seriously influence the corrosion rate of LAS in marine environments, were not explored. The investigation3 lacks features describing the material microstructure. Unfortunately, the paper9 did not discuss the effects of the composition or the microstructure. An illustrative example is the pitting risk of steel in simulated concrete pore solutions12: modelling from the referred predictors would be more complex than initially conceived. Although the flow velocity was not included in this analysis15, theory indicates that this operational variable should be considered in SCC modelling. When assumptions are oversimplified, the model might be susceptible to underfitting. Increasing the data dimension with properly selected variables (based on domain knowledge) might further improve the model performance.

Finally, only one referenced paper19 formally addressed the critical property of attribute interdependence. There, as the corrosion potential (ECP) is also a function of T, pH and [Cl−], the trends in CT with these independent variables must consider their interdependence with ECP19. Input independence leads to a more accurate prediction when there is enough information to describe data distribution in feature space. However, adding variables that depend on others and contain newer and richer information would improve the model accuracy and robustness.

Performance of the ML models: conclusions

Not all articles used the same training, validation, and testing datasets, bringing extra uncertainty to comparing performances. As clearly visible in the plots dividing the training and testing performances, the testing performances are sometimes not reported, contrary to the good practices in the field. For the sake of easing comparisons, it would also be recommended to clearly report the number of learnable parameters/hyperparameters between models54.

Nonetheless, one could infer from Figs. 6 and 7 that the linear regression algorithms have difficulties in dealing with nonlinear datasets and/or with high dimensionality data. Kernel-based algorithms are effective when dealing with nonlinear and/or sparse data but have a low training efficiency with large datasets26,27. The SVR models’ performances are associated with points with relatively high scatter, reflecting their sensitivity to the kernel selection (which could lead to training failure).

On the other hand, neural networks are more expensive to train (vast number of tuning parameters) but, provided there is enough data available, they might yield the best possible quality fitting27. This observation corresponds well with the performances observed for BPNN and/or ANN (Figs. 6 and 7). Neural networks were developed for modelling sequence data, and the recurrent method (most of the ANN models in the ML for corrosion database43) is especially suitable for time series data (Fig. 4). For example, in Fig. 7, ANN presented a lower predictive performance on corrosion depth (static data)11 than on CR (temporal data)3,9.

The tree-based methods can handle missing data and datasets with multiple features but can be prone to instabilities24,26,40. As is evident in Figs. 6 and 7, the considerable divergence between training and testing performances might reflect their tendency to overfit.

Deep learning models (for example, ANN with multiple hidden layers) have high robustness, accuracy, and reliability when dealing with highly nonlinear and complex relationships between the input and output data26,40. The main drawback of deep learning, which requires a large amount of training data, is most likely to be surmounted as we enter the Big Data and the internet of things (IoT) era40.

The ensemble (hybrid) methods cited accomplished predictive tasks with superior performance by combining various weak learners. It is worth mentioning that regression trees (weak learners) were efficiently used to build models (GBDT, XGBoost) for predicting the weight loss of LAS2.

Modelling with ensemble methods (SVR-PSO, RF-WKNNs, PSO-FFANN, SFA-LSSVR, KSOM-ANN) or deep learning (cForest, DCCF-WKNNs, DNN, and most of the ANNs addressed here) generally produced the highest observed performances. Both approaches could certainly solve possible contradictions between the high dimensionality and the small sample often observed in electrochemical data21,40.

Challenges

A central challenge in machine learning is the conflict between the complexity and accuracy of the models21. This point is particularly true in the case of deep learning because these models usually have more complicated structures (structural design becomes vital)26. As illustrated in Fig. 7, hybrid models with high complexity (XGBoost, RF-WKNNs, cForest, DCCF-WKNNs) could yield better performance than all the others. However, concerns related to their scalability exist, especially when non-parametric methods (tree-based, CARTs, SVMs, KNNs) are included40.

A Review on ML approaches for energy systems40 claims the need to develop models that are generalisable to wider settings. The authors also point out the lack of modelling details across a vast part of the reviewed literature. Notably, the number of times the models were trained is rarely reported54.

As it is complicated to translate the ML black box mechanics to formulas, many efforts have been devoted to developing more interpretable algorithms24,55, including the rule extraction (express the implied knowledge with patterns that are easy to understand)21 and argument based machine learning (ABML), which improves the comprehensibility of the learned concepts31.

The learning achieved by ML frequently conflicts with domain expert knowledge. As in the battery field, it is also imperative to establish AI approaches encompassing domain knowledge in the corrosion field21. For example, in this work on atmospheric corrosion2, each feature’s significance to the CR was ranked based on the Pearson correlation coefficient and MIC (maximal information coefficient) methods. Although the SO2 feature was not indicated as a dominating factor, the authors decided to include it based on domain knowledge.
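A correlation-based feature ranking of the kind mentioned above can be sketched as follows. Only the Pearson part is shown (MIC needs a dedicated implementation); the feature names and values are hypothetical and are not taken from ref. 2.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient between two equally long sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)


def rank_features(features, target):
    """Sort feature names by decreasing absolute correlation with the target."""
    scores = {name: pearson(values, target) for name, values in features.items()}
    return sorted(scores.items(), key=lambda item: abs(item[1]), reverse=True)


# Hypothetical exposure data: features and corrosion rate (arbitrary units)
features = {
    "humidity": [60.0, 70.0, 80.0, 90.0],
    "temperature": [15.0, 25.0, 18.0, 22.0],
    "SO2": [5.0, 3.0, 6.0, 4.0],
}
corrosion_rate = [1.0, 2.1, 2.9, 4.2]
for name, r in rank_features(features, corrosion_rate):
    print(f"{name}: {r:+.2f}")
```

A low score measures only a weak linear association in the sampled range; it does not by itself justify dropping a feature that domain knowledge identifies as relevant.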

Although ML is a powerful tool for finding statistical patterns hidden behind multi-dimensional data, condensing the extracted knowledge into scientific laws for general cases is challenging24. When the expertise from the corrosion field is incorporated into the modelling already during the definition of problems, it often leads to the best results31.

Typical algorithms that could integrate domain knowledge and ML methods include Bayesian network, fuzzy learning21 and argument based machine learning (ABML enables the knowledge to be articulated easily)31.

The availability of high-quality datasets is a primary challenge in ML for corrosion and is separately addressed in the Data sharing section.

Guidelines

The success of ML modelling most likely relies on the use of large datasets (Fig. 3), but even more on the proper selection of features23,24. Incorporating basic descriptors based on domain knowledge often simplifies the representation and improves the interpretability of machine learning modelling21. As highlighted in this Review (Fig. 4), the selection of temporal variables positively affects the overall models performance. Alternatively, ML could be applied to capture the remaining divergence between the target properties and the outputs from deterministic modelling27.
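The last idea, letting ML learn only what a deterministic model misses, can be sketched as residual fitting. Everything below is hypothetical: the 'deterministic' rate law, the data, and the choice of a straight line as the corrective learner.

```python
def fit_line(x, y):
    """Ordinary least-squares fit y ~ a + b*x; returns (a, b)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    b = (sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
         / sum((xi - mx) ** 2 for xi in x))
    return my - b * mx, b


def deterministic_model(t):
    """Hypothetical physics-based estimate of corrosion depth at time t."""
    return 0.5 * t


# Hypothetical observations that the deterministic model underestimates
times = [1.0, 2.0, 3.0, 4.0]
observed = [0.7, 1.4, 2.1, 2.8]

# Train the data-driven part on the residuals only
residuals = [obs - deterministic_model(t) for t, obs in zip(times, observed)]
a, b = fit_line(times, residuals)


def hybrid_model(t):
    """Deterministic estimate plus the learned correction."""
    return deterministic_model(t) + a + b * t


print(hybrid_model(5.0))
```

The design choice is that the physics-based part carries the known behaviour, so the learner only has to represent a smaller and usually smoother discrepancy.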

As in the study addressed above13, where data of different natures were considered (in-field measurements, software simulation and deterministic models), ML should be coupled more tightly with other approaches (experiments, numerical simulations, deterministic models)26. For instance, as previously described6, ANN could validate the high fidelity prediction of a deterministic model (CEFM) in capturing the electrochemical/mechanical/microstructural phenomena of crack growth rates.

In a Review paper on energy materials published in 202126, the authors emphasised that the application of ML should not be limited to the predictive tasks but should encompass all data-related stages, including data-preprocessing and data mining. Text mining technologies, including full-text publisher application programming interfaces (APIs) and natural language processing (NLP)56, have been extensively used in materials science and chemistry24.

Moreover, ML could be an intermediate step for processing the collected datasets, thus reducing redundant experiments (or simulations)26. Ensemble learning strategies could be applied in the training phase to increase the model efficiency and robustness.

Data sharing: initiatives and recommendations

Unlike traditional hard-coded modelling based on human expertise, ML approaches rely on available datasets for building reliable models. For accelerated progress in corrosion predictive modelling, a collaborative culture of sharing knowledge and resources (data, models, software) between the computer science and corrosion fields should be fostered57.

In the era of big materials data, considerable efforts have been made to collect and build materials properties databases24,58. In their review on machine learning for molecular and materials science, Butler et al.59 listed the publicly accessible machine learning resources and tools. The available ML tools related to molecules and materials might be of great interest for corrosion research. The Materials Project and the Open Quantum Materials Database (OQMD) (referred to in ref. 27) also provide an ideal platform for machine learning.

The ‘Data availability’ feature of the Machine learning for corrosion database (Supplementary Notes) provides links to high quality publicly available datasets that can be downloaded for ML modelling. Atmospheric corrosion is the most studied topic, thanks to the good availability of atmospheric/climatic data obtained following standardised approaches.

Besides sharing datasets, researchers should ideally open their modelling codes and libraries as much as possible, thus providing a viable path for the transferability of their models25,30. Among the papers considered in the Machine learning for corrosion database43, only one investigation16 made available related codes (see the ‘Libraries’ feature (Supplementary information)).

However, electrochemical data is often not shared in a systematic and machine-readable way25,27. The research community is also concerned with how to build/optimise such databases in a more automated fashion.

Data standards and tools for automated organisation are needed to structure the data in a reusable fashion. Such initiatives have paved the way for innovation in battery technologies (through wider availability of high-quality databases)25,27,30. It would be recommended not to leave corrosion data buried in figures only but also to present it within structured tables that are readily accessible. In particular, reporting more failed data used during training and data from negative experiments would accelerate this process24,27.

Research evolution and perspectives

Training ML models with large datasets presenting comparable features across wide ranges of values is paramount for accurate prediction2,24,26,54.

In a Review paper on rechargeable batteries21, virtual sample generation (VSG) methods are cited as a solution for filling in data gaps. As a similar example from the selected papers on pipeline corrosion, the multivariate polynomial employed by Ossai et al.10 generated defect depth values to complement their training dataset.
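A virtual sample generation step in this spirit can be sketched as fitting a simple regression to the measured points and then sampling new points from it with added noise. The sketch uses a univariate straight line rather than the multivariate polynomial of ref. 10, and all data, names and the noise level are hypothetical.

```python
import random


def fit_line(x, y):
    """Ordinary least-squares fit y ~ a + b*x; returns (a, b)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    b = (sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
         / sum((xi - mx) ** 2 for xi in x))
    return my - b * mx, b


def generate_virtual_samples(x, y, n_new, noise_sd, seed=0):
    """Draw n_new synthetic (x, y) pairs from the fitted line plus Gaussian noise."""
    rng = random.Random(seed)
    a, b = fit_line(x, y)
    lo, hi = min(x), max(x)
    samples = []
    for _ in range(n_new):
        xv = rng.uniform(lo, hi)          # stay within the measured range
        yv = a + b * xv + rng.gauss(0.0, noise_sd)
        samples.append((xv, yv))
    return samples


# Hypothetical measurements: exposure time (years) vs defect depth (mm)
years = [1.0, 3.0, 5.0, 7.0]
depth = [0.4, 1.1, 2.1, 2.8]
virtual = generate_virtual_samples(years, depth, n_new=100, noise_sd=0.05)
print(len(virtual))
```

Synthetic samples of this kind inherit the assumptions of the generating model and add no new physical information, so performance measured on them can look more favourable than performance on independent measurements.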

Another method for constructing training sets that might be particularly useful for corrosion studies based on small sample data is active learning (selecting effective samples by iterative sampling that ensures the highest possible accuracy21). Active learning has been considered a promising approach for learning the high-dimensional data of battery materials21. Similarly, in a recent review paper on ML and energy storage, Gao et al.26 refer to an unsupervised learning (generative adversarial network, GAN) capable of reconstructing/repairing datasets, thus enhancing their quality. The GAN approach might be beneficial for in-field corrosion measurements, where the quality and integrity of the data are often compromised.
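One iteration scheme for active learning is to query, at each step, the candidate that the current training set covers least well. The sketch below uses distance to the nearest labelled sample as a crude uncertainty proxy; this is an assumption made for illustration (model-based uncertainty estimates are more common), and the candidate conditions are hypothetical.

```python
def nearest_distance(point, labelled):
    """Euclidean distance from point to its nearest labelled sample."""
    return min(sum((p - q) ** 2 for p, q in zip(point, other)) ** 0.5
               for other in labelled)


def select_next(candidates, labelled):
    """Pick the unlabelled candidate farthest from all labelled samples."""
    return max(candidates, key=lambda c: nearest_distance(c, labelled))


# Hypothetical test conditions: (pH, chloride concentration in mol/L)
labelled = [(7.0, 0.1), (8.0, 0.2)]
pool = [(7.5, 0.15), (12.0, 0.5), (9.0, 0.3)]

for _ in range(2):                      # two acquisition rounds
    chosen = select_next(pool, labelled)
    pool.remove(chosen)
    labelled.append(chosen)             # in practice: run the experiment here
    print(chosen)
```

In this toy example the pH axis dominates the distance because the features are not scaled; a practical implementation would normalise the inputs before comparing candidates.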

Finally, transfer learning technology has been demonstrated to improve the applicable range of predictive models23,26. In their review on deep learning for materials degradation39, Nash et al. mentioned the capability of transfer learning in fine-tuning models for tasks other than the ones they were trained on.

Although not the focus of this paper, machine learning has accelerated the discovery of new materials21,22,23. In the development of batteries, Attia et al.52 employed a ML method that could reduce the time of performance tests by 98%. A similar approach would be instrumental in corrosion research because testing in simulated environments usually lasts weeks or even months (corrosion acceleration tests). This time-consuming aspect has become a limiting factor for the long-term study of corrosion, particularly atmospheric corrosion, where testing protocols often take years to complete.

Seeking to discover the links between material composition, structure, properties and performance, scientists have input various property features to machine learning21,28. For example, ML methods have been applied to property prediction for energy conversion materials, potentially overcoming the shortcomings of DFT (high consumption of computational resources)24. For instance, ML of quantum-mechanical reference data has been used for fast and accurate interatomic potentials of battery materials30. ML indeed has the potential to create new synergies between experimental data and DFT modelling.

In their comprehensive study on the machine learning of corrosion inhibitors, Winkler et al.16 assumed that molecular properties permitted more reliable models than quantum chemical properties of the individual compounds. In an ensuing investigation based on classification algorithms51, the structure-property relationships in aluminium alloy inhibitors were further elucidated. Galvão et al.51 employed new descriptors related to the self-association between inhibitors (dimerisation enthalpies and Gibbs energies), gaining further insights into the properties that distinguish these molecules.

This bibliometric data mining not only revealed the low number of ML works focusing on corrosion inhibitors (or other vital topics such as protective coatings or localised corrosion) but also identified several research gaps in which ML could be envisaged: EIS, local electrochemical techniques, microbial corrosion, corrosion of electronics and tribocorrosion.

To decrease the usage complexity of ML, automated ML (AutoML) attempts to limit human intervention in model implementation21. In this context, meta-learning or ‘learning to learn’ methods have emerged and have been shown to be particularly useful in image recognition24. Optimising the hyperparameters of nonlinear models (ANN, SVR) is complex and often relies on trial and error. Hyperparameter optimisation methods have been proposed to simplify these tuning processes21, including AutoML60. Among the selected papers, two investigations implemented distinct approaches to address this issue: the SFA proved effective in dynamically optimising the LSSVR hyperparameters18, and the genetic-algorithm-based NGBM(1,1) model included a regularisation term η to avoid the overfitting problem5.
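As an illustration of how hyperparameter tuning can be automated instead of being done by trial and error, the sketch below runs a random search over a single regularisation hyperparameter, scored by k-fold cross-validation. Note that this is a generic random search, swapped in for simplicity; it is neither the SFA of ref. 18 nor the genetic algorithm of ref. 5. The ridge model and all function names (`fit_ridge`, `cv_error`, `random_search`) are assumptions made for the example.

```python
import random


def fit_ridge(xs, ys, lam):
    """1-D ridge regression on centred data; the slope shrinks as lam grows."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / (sxx + lam)
    return slope, mean_y - slope * mean_x


def cv_error(xs, ys, lam, k=4):
    """Mean squared validation error averaged over k interleaved folds."""
    n = len(xs)
    fold_errors = []
    for fold in range(k):
        val = [i for i in range(n) if i % k == fold]
        train = [i for i in range(n) if i % k != fold]
        slope, intercept = fit_ridge(
            [xs[i] for i in train], [ys[i] for i in train], lam
        )
        fold_mse = sum(
            (ys[i] - (slope * xs[i] + intercept)) ** 2 for i in val
        ) / len(val)
        fold_errors.append(fold_mse)
    return sum(fold_errors) / k


def random_search(xs, ys, n_trials=30, seed=0):
    """Sample lam log-uniformly in [1e-4, 1e2] and keep the best CV score."""
    rng = random.Random(seed)
    best_lam, best_err = None, float("inf")
    for _ in range(n_trials):
        lam = 10 ** rng.uniform(-4, 2)
        err = cv_error(xs, ys, lam)
        if err < best_err:
            best_lam, best_err = lam, err
    return best_lam, best_err
```

Metaheuristics such as firefly or genetic algorithms replace the blind sampling step with a guided search, but the surrounding loop (propose hyperparameters, score them on held-out data, keep the best) stays the same.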

A variant of the ensemble method called Temporal Ensemble Learning (TEL), in which temporal features partition the dataset for forecasting at specific time ranges40, might be a promising alternative for time series data in corrosion.
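A loose reading of this partitioning idea can be sketched as follows: records are grouped by a temporal feature, one simple model is fitted per group, and each query is routed to the model of its own time range. This is only an interpretation of the one-sentence description above, not the TEL algorithm of ref. 40; the quarterly grouping and the names `time_bucket`, `fit_temporal_models` and `predict` are hypothetical.

```python
from collections import defaultdict


def time_bucket(month):
    """Hypothetical temporal feature: map a month (1-12) to a quarter (0-3)."""
    return (month - 1) // 3


def fit_line(points):
    """Least-squares fit y = a*x + b; constant model if x does not vary."""
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    if len({x for x, _ in points}) < 2:
        return 0.0, mean_y
    sxx = sum((x - mean_x) ** 2 for x, _ in points)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in points)
    a = sxy / sxx
    return a, mean_y - a * mean_x


def fit_temporal_models(records):
    """records: iterable of (month, x, y). Fits one line per time bucket."""
    groups = defaultdict(list)
    for month, x, y in records:
        groups[time_bucket(month)].append((x, y))
    return {bucket: fit_line(points) for bucket, points in groups.items()}


def predict(models, month, x):
    """Route the query to the model trained on the matching time bucket."""
    slope, intercept = models[time_bucket(month)]
    return slope * x + intercept
```

For corrosion time series, the bucket could correspond to a season or an exposure stage, so that each sub-model only has to describe the behaviour within its own time range.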

Although probabilistic (Gaussian process regression) models have shown promising forecasting results in the energy and battery domains26,40, to the best of the authors’ knowledge, probabilistic regression tools have not yet been considered for corrosion research. All in all, hybrid models and holistic modelling approaches transcending time, length and mechanism scales point towards fruitful directions.
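For readers unfamiliar with Gaussian process regression, its standard predictive equations show why it is called probabilistic: each prediction comes with a variance. The notation is an assumption introduced here and does not appear in the cited works: a zero-mean prior with kernel $k$, training targets $\mathbf{y}$, kernel matrix $K_{ij} = k(x_i, x_j)$, vector $(\mathbf{k}_*)_i = k(x_i, x_*)$ for a new input $x_*$, and Gaussian noise variance $\sigma_n^2$.

```latex
\begin{aligned}
\mu(x_*) &= \mathbf{k}_*^{\top}\left(K + \sigma_n^{2} I\right)^{-1}\mathbf{y},\\
\sigma^{2}(x_*) &= k(x_*, x_*) - \mathbf{k}_*^{\top}\left(K + \sigma_n^{2} I\right)^{-1}\mathbf{k}_*.
\end{aligned}
```

The predictive variance $\sigma^{2}(x_*)$ grows for inputs far from the training data, which could be valuable for sparse and noisy corrosion datasets.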

Unlike supervised and unsupervised learning, Reinforcement Learning (RL) can consider the trade-off between exploration and exploitation. RL is one of the most promising ML approaches: it learns by interacting with an unknown environment while explicitly focusing on goal-oriented learning40.
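The exploration/exploitation trade-off can be illustrated with the simplest RL setting, a multi-armed bandit solved with an epsilon-greedy policy. The sketch below is a textbook example rather than a method from ref. 40; the function name `epsilon_greedy`, the reward functions and the parameter values are assumptions.

```python
import random


def epsilon_greedy(reward_fns, n_steps=2000, epsilon=0.1, seed=0):
    """Estimate the mean reward of each action while acting in the environment.

    reward_fns: list of callables taking a random.Random and returning a reward.
    Returns (estimated mean reward per action, number of pulls per action).
    """
    rng = random.Random(seed)
    n_arms = len(reward_fns)
    counts = [0] * n_arms
    values = [0.0] * n_arms
    for _ in range(n_steps):
        if rng.random() < epsilon:
            arm = rng.randrange(n_arms)  # explore: try a random action
        else:
            arm = max(range(n_arms), key=lambda a: values[a])  # exploit
        reward = reward_fns[arm](rng)
        counts[arm] += 1
        # Incremental update of the running mean reward of the chosen action.
        values[arm] += (reward - values[arm]) / counts[arm]
    return values, counts
```

A larger `epsilon` spends more interactions exploring unknown actions, whereas a smaller one exploits the current best estimate sooner; in a corrosion context, the actions could stand for candidate test conditions or mitigation strategies.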

Conclusions

This data-driven review work depicts the current status of the emerging research on predictive machine learning applied to corrosion.

A bibliometric data mining approach led to the creation of the ‘Machine learning for corrosion database’, covering 34 works in which performance was evaluated using relative metrics. Exploratory data analysis demonstrated the positive effects of increasing data dimension and including time as an input on the models’ performance. A comparative performance analysis was conducted, segmenting the references by corrosion topic, orientation strategy, type of ML model and data targets. In particular, expanding the types of variables (orientation strategies comprising data from multiple relevant sources) likely increases the models’ performance. The plots bridging the multi-dimensional data to the references serve as a roadmap for future regression modelling of corrosion. More studies focusing on the prediction of localised corrosion and inhibition efficiency should be considered in the future.

Accurate and reliable modelling requires large sets of high-quality training (and validation) data. Moreover, the process of labelling corrosion features should be based on the experience and intuition of domain researchers. Despite the challenge of establishing a direct comparative analysis of the performances, this review made it possible to identify research gaps and evaluate common practices in the field. For more systematic incorporation of ML within the corrosion community, concerted international efforts are needed to promote data sharing practices. Domain experts would benefit far more from the literature if the modelling datasets were made available alongside the related publications.

Data availability

The processed data required to reproduce these findings are available to download from Bertolucci Coelho, Leonardo (2021), ‘Machine learning for corrosion database’, Mendeley Data, V1, https://doi.org/10.17632/jfn8yhrphd.1 (ref. 43), permanently stored at the repository via the link https://data.mendeley.com/datasets/jfn8yhrphd/1.

Code availability

The code required to reproduce these findings is available to download from GitHub: https://github.com/bcoelho-leonardo/Machine-learning-for-corrosion/blob/77c05253447d4bca737a126f678391e0f98108df/Machine%20learning%20for%20corrosion%20database_codes.ipynb.

Change history

25 August 2022

A Correction to this paper has been published: https://doi.org/10.1038/s41529-022-00284-8

References

Diao, Y., Yan, L. & Gao, K. Improvement of the machine learning-based corrosion rate prediction model through the optimization of input features. Mater. Des. 198, 109326 (2021).

Yan, L., Diao, Y., Lang, Z. & Gao, K. Corrosion rate prediction and influencing factors evaluation of low-alloy steels in marine atmosphere using machine learning approach. Sci. Technol. Adv. Mater. 21, 359–370 (2020).

Zhi, Y., Fu, D., Zhang, D., Yang, T. & Li, X. Prediction and knowledge mining of outdoor atmospheric corrosion rates of low alloy steels based on the random forests approach. Metals 9, 383 (2019).

Pei, Z. et al. Towards understanding and prediction of atmospheric corrosion of an Fe/Cu corrosion sensor via machine learning. Corros. Sci. 170, 108697 (2020).

Zhi, Y. et al. Long-term prediction on atmospheric corrosion data series of carbon steel in China based on NGBM(1,1) model and genetic algorithm. Anti-Corros. Methods Mater. 66, 403–411 (2017).

Kamrunnahar, M. & Urquidi-Macdonald, M. Prediction of corrosion behavior using neural network as a data mining tool. Corros. Sci. 52, 669–677 (2010).

Kamrunnahar, M. & Urquidi-Macdonald, M. Prediction of corrosion behaviour of Alloy 22 using neural network as a data mining tool. Corros. Sci. 53, 961–967 (2011).

Wen, Y. F. et al. Corrosion rate prediction of 3C steel under different seawater environment by using support vector regression. Corros. Sci. 51, 349–355 (2009).

Zhi, Y., Yang, T. & Fu, D. An improved deep forest model for forecast the outdoor atmospheric corrosion rate of low-alloy steels. J. Mater. Sci. Technol. 49, 202–210 (2020).

Ossai, C. I. A data-driven machine learning approach for corrosion risk assessment—a comparative study. Big Data Cogn. Comput. 3, 28 (2019).

Cai, J., Cottis, R. A. & Lyon, S. B. Phenomenological modelling of atmospheric corrosion using an artificial neural network. Corros. Sci. 41, 2001–2030 (1999).

Shi, X., Anh Nguyen, T., Kumar, P. & Liu, Y. A phenomenological model for the chloride threshold of pitting corrosion of steel in simulated concrete pore solutions. Anti-Corros. Methods Mater. 58, 179–189 (2011).

De Masi, G., Gentile, M., Vichi, R., Bruschi, R. & Gabetta, G. Machine learning approach to corrosion assessment in subsea pipelines. in OCEANS 2015 - Genova 1–6 (IEEE, 2015). https://doi.org/10.1109/OCEANS-Genova.2015.7271592

Salami, B. A., Rahman, S. M., Oyehan, T. A., Maslehuddin, M. & Al Dulaijan, S. U. Ensemble machine learning model for corrosion initiation time estimation of embedded steel reinforced self-compacting concrete. Measurement 165, 108141 (2020).

Shi, J., Wang, J. & Macdonald, D. D. Prediction of crack growth rate in Type 304 stainless steel using artificial neural networks and the coupled environment fracture model. Corros. Sci. 89, 69–80 (2014).

Winkler, D. A. et al. Using high throughput experimental data and in silico models to discover alternatives to toxic chromate corrosion inhibitors. Corros. Sci. 106, 229–235 (2016).

Chunyan, Z. et al. Ratio of total acidity to pH value of coating bath: a new strategy towards phosphate conversion coatings with optimized corrosion resistance for magnesium alloys. Corros. Sci. 150, 279–295 (2019).

Chou, J., Ngo, N. & Chong, W. K. The use of artificial intelligence combiners for modeling steel pitting risk and corrosion rate. Eng. Appl. Artif. Intell. 65, 471–483 (2017).

Zhu, Y., Macdonald, D. D., Qiu, J. & Urquidi-Macdonald, M. Corrosion of rebar in concrete. Part III: Artificial Neural Network analysis of chloride threshold data. Corros. Sci. 185, 109438 (2021).

Materials Genome Initiative. https://www.mgi.gov/ (2021).

Liu, Y., Guo, B., Zou, X., Li, Y. & Shi, S. Machine learning assisted materials design and discovery for rechargeable batteries. Energy Storage Mater. 31, 434–450 (2020).

Scully, J. R. & Balachandran, P. V. Future frontiers in corrosion science and engineering, part III: the next “Leap Ahead” in corrosion control may be enabled by data analytics and artificial intelligence. Corrosion 75, 1395–1397 (2019).

Luo, Z. et al. A survey of artificial intelligence techniques applied in energy storage materials R&D. Front. Energy Res. 8, 1–12 (2020).

Chen, A., Zhang, X. & Zhou, Z. Machine learning: accelerating materials development for energy storage and conversion. InfoMat 2, 553–576 (2020).

Aykol, M., Herring, P. & Anapolsky, A. Machine learning for continuous innovation in battery technologies. Nat. Rev. Mater. 5, 725–727 (2020).

Gao, T. & Lu, W. Machine learning toward advanced energy storage devices and systems. iScience 24, 1–33 (2021).

Ng, M.-F., Zhao, J., Yan, Q., Conduit, G. J. & Seh, Z. W. Predicting the state of charge and health of batteries using data-driven machine learning. Nat. Mach. Intell. 2, 161–170 (2020).

Wei, X. et al. Data mining to effect of key alloying elements on corrosion resistance of low alloy steels in Sanya seawater environment. J. Mater. Sci. Technol. https://doi.org/10.1016/j.jmst.2020.01.040 (2020).

Li, S., Li, J., He, H. & Wang, H. Lithium-ion battery modeling based on Big Data. Energy Procedia 159, 168–173 (2019).

Deringer, V. L. Modelling and understanding battery materials with machine-learning-driven atomistic simulations. J. Phys. Energy 2, 041003 (2020).

Mozina, M., Guid, M., Krivec, J., Sadikov, A. & Bratko, I. Fighting Knowledge Acquisition Bottleneck With Argument Based Machine Learning. 234–238, https://doi.org/10.3233/978-1-58603-891-5-234 (2008).

Cai, Y., Xu, Y., Zhao, Y. & Ma, X. Atmospheric corrosion prediction: a review. Corros. Rev. 38, 299–321 (2020).

Cai, Y., Xu, Y., Zhao, Y. & Ma, X. Extrapolating short-term corrosion test results to field exposures in different environments. Corros. Sci. 186, 109455 (2021).

Feliu, S., Morcillo, M. & Feliu, S. The prediction of atmospheric corrosion from meteorological and pollution parameters—I. Annual corrosion. Corros. Sci. 34, 403–414 (1993).

Chan, V. Degradation-based reliability in outdoor environments. https://doi.org/10.31274/rtd-180813-12114 (Iowa State University, Digital Repository, 2001).

Mikhailov, A. A., Tidblad, J. & Kucera, V. The classification system of ISO 9223 standard and the dose–response functions assessing the corrosivity of outdoor atmospheres. Prot. Met. 40, 541–550 (2004).

Klinesmith, D. E., McCuen, R. H. & Albrecht, P. Effect of environmental conditions on corrosion rates. J. Mater. Civ. Eng. 19, 121–129 (2007).

David, P. K. & Montanari, G. C. Compensation effect in thermal aging investigated according to Eyring and Arrhenius models. Eur. Trans. Electr. Power 2, 187–194 (2007).

Nash, W., Drummond, T. & Birbilis, N. A review of deep learning in the study of materials degradation. npj Mater. Degrad. 2, 37 (2018).

Antonopoulos, I. et al. Artificial intelligence and machine learning approaches to energy demand-side response: a systematic review. Renew. Sustain. Energy Rev. 130, 109899 (2020).

van Eck, N. J. & Waltman, L. Software survey: VOSviewer, a computer program for bibliometric mapping. Scientometrics 84, 523–538 (2010).

Hashemi, S. J. et al. Bibliometric analysis of microbiologically influenced corrosion (MIC) of oil and gas engineering systems. Corrosion 74, 468–486 (2018).

Bertolucci Coelho, L. Machine learning for corrosion database, Mendeley Data. https://doi.org/10.17632/jfn8yhrphd.1 (2021).

China Gateway to Corrosion and Protection. http://data.ecorr.org/ (2021).

Hsu, L.-C. A genetic algorithm based nonlinear grey Bernoulli model for output forecasting in integrated circuit industry. Expert Syst. Appl. 37, 4318–4323 (2010).

Ma, D. & Bai, H. Groundwater inflow prediction model of karst collapse pillar: a case study for mining-induced groundwater inrush risk. Nat. Hazards 76, 1319–1334 (2015).

Donaldson, L. Metallic glass-based materials in wearable energy storage devices. Mater. Today 36, 3 (2020).

Yang, X.-S. Firefly Algorithms for Multimodal Optimization. 169–178, https://doi.org/10.1007/978-3-642-04944-6_14 (2009).

Chou, J.-S. & Pham, A.-D. Smart artificial firefly colony algorithm-based support vector regression for enhanced forecasting in civil engineering. Comput. Civ. Infrastruct. Eng. 30, 715–732 (2015).

Zhu, Y., Macdonald, D. D., Yang, J., Qiu, J. & Engelhardt, G. R. Corrosion of rebar in concrete. Part II: Literature survey and statistical analysis of existing data on chloride threshold. Corros. Sci. 185, 109439 (2021).

Galvão, T. L. P., Novell-Leruth, G., Kuznetsova, A., Tedim, J. & Gomes, J. R. B. Elucidating structure-property relationships in aluminum alloy corrosion inhibitors by machine learning. J. Phys. Chem. C 124, 5624–5635 (2020).

Attia, P. M. et al. Closed-loop optimization of fast-charging protocols for batteries with machine learning. Nature 578, 397–402 (2020).

Bhattacharya, S. K., Sahara, R. & Narushima, T. Predicting the parabolic rate constants of high-temperature oxidation of Ti alloys using machine learning. Oxid. Met. 94, 205–218 (2020).

Vidal, C., Malysz, P., Kollmeyer, P. & Emadi, A. Machine learning applied to electrified vehicle battery state of charge and state of health estimation: state-of-the-art. IEEE Access 8, 52796–52814 (2020).

Yang, L., Wang, P., Jiang, Y. & Chen, J. Studying the explanatory capacity of artificial neural networks for understanding environmental chemical quantitative structure−activity relationship models. J. Chem. Inf. Model. 45, 1804–1811 (2005).

Kim, E. et al. Materials synthesis insights from scientific literature via text extraction and machine learning. Chem. Mater. 29, 9436–9444 (2017).