Abstract

Human nonverbal communication tools are very ambiguous and difficult to transfer to machines or artificial intelligence (AI). If the AI understands the mental state behind a user’s decision, it can learn more appropriate decisions even in unclear situations. We introduce the Brain–AI Closed-Loop System (BACLoS), a wireless interaction platform that enables human brain wave analysis and transfers results to AI to verify and enhance AI decision-making. We developed a wireless earbud-like electroencephalography (EEG) measurement device, combined with tattoo-like electrodes and connectors, which enables continuous recording of high-quality EEG signals, especially the error-related potential (ErrP). The sensor measures the ErrP signals, which reflect the human cognitive consequences of an unpredicted machine response. The AI corrects or reinforces decisions depending on the presence or absence of the ErrP signals, which is determined by deep learning classification of the received EEG data. We demonstrate the BACLoS for AI-based machines, including autonomous driving vehicles, maze solvers, and assistant interfaces.

Introduction

The recent development of bio-integrated technology based on wearable and implantable electronics has made it possible to continuously measure bioinformation, such as physiological, electrophysiological, and chemical signals1,2,3,4,5. Such devices can be utilized in a human–machine interface (HMI) integrated with advanced Internet of Things (IoT) and artificial intelligence (AI) technologies6,7,8,9,10,11,12,13. In particular, the brain–machine interface (BMI), which enables interaction between the brain and machines14,15,16, can be established using electrophysiological sensors, such as brain-surface17, brain-penetrating18,19, and skin-surface electrodes20,21, to assess electrocorticography (ECoG), local field potentials (LFPs), and electroencephalography (EEG), respectively. Even with heavy signal processing, the signals from the brain are usually converted into simplified and unreciprocated orders for the machine and involve uncomfortable use (for instance, by watching flickering displays to guide the direction of a wheelchair)20,21,22.

An ideal approach for expanding these interactions is the premise of Steven Spielberg’s film A.I. Artificial Intelligence (2001), which features a robot that attempts to understand human emotions and independently revises or reinforces its behavior through bidirectional interactions23. Although such interaction has not yet been realized, basic collaborations with machines have recently been suggested24,25,26,27,28,29,30,31,32. In these approaches, the AI recognizes the error-related potential (ErrP), one of the human brain wave patterns, and uses it to accelerate the reinforcement of its decisions and improve the yield of its decision-making. The ErrP is an event-related potential elicited by an error stimulus. It is a complex pattern consisting of error-related negativity (ERN), N200/feedback-related negativity (FRN), and P300/error positivity (Pe)33. Systems that continuously reinforce the functions of AI by accepting the ErrP as human guidance are very convenient because, unlike methods that use hands, voice, or facial expressions, they do not require conscious effort34,35,36. This approach is inevitable in the current era, in which AI-powered machines are developing rapidly, replacing human decision-making, and requiring continuous feedback37,38. However, current technologies are being researched and applied only under very limited conditions. In most studies, (1) wet electrolyte-based electrodes are used, which dry over time and change impedance, making long-term measurement difficult24,25,26,27,28,29,30,31,32; (2) multiple electrodes are fixed in the form of a helmet or headset, which inconveniences users and generates noise because of the heavy weight24,25,26,27,28,29,30,31,32; (3) the EEG measurement device does not support wireless communication, so noise is generated from the connector and the user cannot move away from the data collection device or target agent24,25,27,28,29,31,32; (4) accordingly, experiments are conducted only with a monitor rather than with autonomous machines, which is unrealistic24,25,28,29,30; (5) only theoretical studies are conducted, without a closed-loop demonstration that enables continuous learning from ErrP feedback24,25,28 (Supplementary Table 1). For a practical and convenient BMI system, it will be necessary to integrate the electrodes, connectors, and wireless system while optimizing the geometries for the fixation method and even the closed-loop configuration39.

Thus, we introduce a wireless, neuroadaptable (adaptable in relation to the subject’s mindset), intuitive Brain–AI Closed-Loop System (BACLoS) based on an earbud-like wireless EEG device (e-EEGd) composed of tattoo-like electrodes, connectors, and a wireless EEG earbud. The neuroadaptable BACLoS can revise and reinforce the autonomous decision of the AI-based machine based on the error-related potential (ErrP) in the volunteer’s brain signals, which results from undesired AI decisions33,40. In this study, (1) the tattoo-like dry electrodes require no wet electrolyte, making them suitable for long-term, high-quality EEG measurements; (2) the 8.24 g single-channel e-EEGd conformally adheres to the skin on the head, and its fixation method does not cause discomfort; (3) because the e-EEGd supports Bluetooth Low Energy (BLE) communication, it can acquire high-quality EEG signals away from the target agent with minimized artifacts. The conformal tattoo-like electrodes, unswaying tattoo-like connectors, lightweight earbud device, and the engineering that connects and integrates them are the reasons the e-EEGd generates 8.5 times less artifactual noise than a commercial device. The e-EEGd can continuously record EEG signals, even while the volunteer is walking, driving, or engaged in other activities, which expands the variety of situations in which the BACLoS can be used; (4) accordingly, it was possible to conduct an ErrP study with autonomous driving remote-controlled (RC) cars in a situation where volunteers were not restricted. As a result, we identified the ErrP elicited by unexpected autonomous machine behaviors in the EEG signals transmitted from the e-EEGd. We also conducted a signal processing and classifier optimization study to enable reasonable and fast real-time classification on AI-based machines; (5) finally, we built the trained classifier into the RC car to demonstrate that the RC car automatically corrects or reinforces its decisions within a closed loop.

Results

Concept of the BACLoS

Figure 1a shows an overview of the BACLoS in which humans and AI interact in a closed loop. In the BACLoS, the human subject observes the AI’s autonomous decision-making (① in Fig. 1a) without any behavioral restrictions. Human satisfaction (yes) or dissatisfaction (no) concerning the AI’s previous decision (② in Fig. 1a) is reflected in the EEG as ErrP (③ in Fig. 1a), which are automatically transmitted to the AI in real time. Depending on the deep learning classification of the EEG, the AI corrects or reinforces the existing decision-making process (④ in Fig. 1a). As this closed-loop iterates, the AI is increasingly able to make accurate decisions without ErrP feedback from humans.

a Conceptual illustration of the BACLoS. Humans and artificial intelligence (AI) interact through an EEG measured by the e-EEGd, forming a wireless closed loop. Erroneous AI decisions result in error-related potential (ErrP) in the EEG signals. The AI recognizes the ErrP feedback through deep learning and corrects/reinforces the next decision. Compared to the unidirectional commands of conventional machines, this machine can automatically learn appropriate decisions and revise inaccurate decisions using the EEG signals. b Photo of a volunteer who has the e-EEGd (left), i.e., a wireless EEG measurement earbud and three open-mesh, structured, tattoo-like electrodes and connectors attached on recording sites. Images (right) show recording (top), ground (middle), and reference (bottom) electrodes mounted on the mastoid, temple, and forehead of the volunteer, respectively. c Zoomed-in illustration of the e-EEGd. d Flowchart of the BACLoS implemented in the closed-loop feedback algorithm based on ErrP signals achieved by the e-EEGd for reinforcement and correction of the machine’s autonomous decision-making. The e-EEGd only transmits the EEG wirelessly and does not process classification or filtering. Receiving EEG, performing preprocessing, and checking the existence of ERP patterns through deep learning are all done on the AI side.

The e-EEGd measures the EEG through a single channel consisting of working, ground, and reference electrodes attached to the forehead, temple, and mastoid, respectively (Fig. 1b). The working electrode sits over the frontal area, which avoids hairy sites and is therefore practical to use, and P300 and ErrP patterns are known to be measurable there33. Tattoo-like electrodes (900 nm) and connectors (2.8 μm) are conformally attached to the skin, and their ultrathin geometries and method of attachment minimize mechanical stress caused by skin deformation and motion artifacts (Supplementary Fig. 1). An optimized design that gradually increases in thickness from thin tattoo electrodes to thick earbuds allows thin and soft electrodes to be connected to thick and rigid electronics with minimal mechanical stress (Supplementary Figs. 2 and 3). The wireless EEG earbud is encapsulated in 3D-printed plastic, in which electronic components and batteries are mounted around a miniature (12.5 × 34.5 mm), multilayered printed circuit board (Fig. 1c and Supplementary Fig. 4). The e-EEGd is lightweight (8.24 g) compared with other miniaturized single-channel EEG devices (Supplementary Tables 2 and 3) and tightly fixed in the ear so that motion artifacts are reduced and the user is comfortable when the EEG is measured. The e-EEGd is time synchronized with the AI via wireless communication, which is essential for time-domain analysis of ErrP signals and deep learning classification. A flowchart for the closed loop, which improves the decision-making system between humans and AI, is shown in Fig. 1d. The AI automatically generates new output using an independent decision-making process based on input that is perceived from the environment (Supplementary Table 4). The human compares the output created by the AI with the input recognized from the environment. Depending on whether the AI output is unexpected or expected, the ErrP signals will (red EEG record in Fig. 1d) or will not (blue EEG record in Fig. 1d) be present. EEG signals are wirelessly transmitted to the AI and classified using deep learning. Based on these results, an emergency interrupting system or a user-customized reinforcement system is activated. This configuration is repeated in a closed loop, and humans can use the BACLoS naturally by wearing the e-EEGd and observing the AI output (Supplementary Fig. 5).
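
The branch at the heart of the flowchart in Fig. 1d can be sketched as a single function. This is only an illustration under stated assumptions: `closed_loop_step`, `LoopLog`, and `toy_detector` are hypothetical names, and the amplitude threshold merely stands in for the deep learning classifier described later.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Sequence


@dataclass
class LoopLog:
    """Records which decisions were corrected and which were reinforced."""
    corrected: List[str] = field(default_factory=list)
    reinforced: List[str] = field(default_factory=list)


def closed_loop_step(decision: str,
                     epoch: Sequence[float],
                     has_errp: Callable[[Sequence[float]], bool],
                     log: LoopLog) -> str:
    """Run one iteration: classify the EEG epoch that followed `decision`."""
    if has_errp(epoch):
        # ErrP present: the output was unexpected, so correct or interrupt it.
        log.corrected.append(decision)
        return "correct"
    # ErrP absent: the output was expected, so reinforce it.
    log.reinforced.append(decision)
    return "reinforce"


def toy_detector(epoch: Sequence[float], threshold_uv: float = 20.0) -> bool:
    """Hypothetical stand-in for the classifier: flags a large positive peak."""
    return max(epoch) > threshold_uv


log = LoopLog()
print(closed_loop_step("cross stop line", [2.0, 31.5, 4.0], toy_detector, log))
print(closed_loop_step("stop at line", [1.0, 3.2, -2.0], toy_detector, log))
```

Passing the detector in as a callable keeps the loop logic independent of whichever classifier is eventually used.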

Quantitative comparison of the motion artifacts

The tattoo-like electrode has a low bending stiffness similar to that of human skin and can make effective conformal contact with rough and uneven skin41,42. This conformal contact minimizes the gaps between the skin and the electrodes, which gives the tattoo-like electrode a low electrode–skin impedance comparable to that of the commercial electrode without using wet electrolytes. It also minimizes the inconsistent gaps induced by human motion or external vibration, which is very effective in reducing motion artifacts41,42,43,44. The connector is a further source of motion artifacts. The main problem with unfixed, dangling connectors is that they generate indirect noise through random swinging motions across different frequency bands of vibration45,46,47. The tattoo-like connectors are very light and securely fixed to the skin, so they do not swing and are negligibly affected by vibration, which considerably reduces noise. However, the most critical issue in using tattoo-like bioelectronics is the thickness mismatch between the extremely thin components and a thick, heavy measurement system, which creates a risk of adhesion failure due to stress at the connection interface. To solve this problem, we increased the thickness in several steps: from the tattoo-like electrode (900 nm) to the tattoo-like connector (2.8 μm), the anisotropic conductive film (ACF) cable (12 μm), the ACF contact pad of the flexible printed circuit (FPC) connector (80 μm), and the SMD contact pad of the FPC connector (295 μm). As a result, it was possible to maintain adhesion and reduce overall motion artifacts while connecting 900 nm electrodes to a 1 cm earbud.

Figure 2a shows the two wireless EEG measurement systems used to compare motion artifacts. The tattoo-like sensor-based EEG device (e-EEGd) can be seen on the left side of the subject and a commercial sensor-based EEG device (c-EEGd) on the right. To isolate the differences due to the electrodes (tattoo-like vs. wet commercial) and connectors (tattoo-like vs. swaying commercial), the c-EEGd uses a measurement module based on the same RHD2216 amplifier chip as the e-EEGd. Figure 2b shows EEG measurements while walking; significant EEG signal fluctuation can be seen in the c-EEGd (bottom of Fig. 2b) compared with the e-EEGd (top of Fig. 2b). The average root mean square (RMS) values of the EEG signals with the volunteer sitting, shown in Fig. 2c, were 11.16 μV and 11.28 μV for the e-EEGd and c-EEGd, respectively. However, when the subject walked around, the average RMS values were 18.57 μV and 45.49 μV for the e-EEGd and c-EEGd, respectively.
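
For readers who want to reproduce the comparison, the RMS metric and the ratio between the two devices can be computed as in the short sketch below. The function names are illustrative; the numbers are the average RMS values quoted above.

```python
import math


def rms(samples):
    """Root mean square of EEG samples (same unit as the input, e.g. microvolts)."""
    if not samples:
        raise ValueError("samples must not be empty")
    return math.sqrt(sum(x * x for x in samples) / len(samples))


# Average RMS values reported in the text, in microvolts.
REPORTED_RMS_UV = {
    "sitting": {"e-EEGd": 11.16, "c-EEGd": 11.28},
    "walking": {"e-EEGd": 18.57, "c-EEGd": 45.49},
}


def device_ratio(condition):
    """How many times larger the c-EEGd RMS is than the e-EEGd RMS."""
    values = REPORTED_RMS_UV[condition]
    return values["c-EEGd"] / values["e-EEGd"]


for condition in REPORTED_RMS_UV:
    print(condition, round(device_ratio(condition), 2))
```

The ratio is close to 1 while sitting and roughly 2.4 while walking, which is the contrast the figure is meant to convey.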

a A photo of a subject wearing both wireless EEG devices. The left portion of the image shows the wireless, earbud-like EEG device (e-EEGd) with tattoo-like electrodes and connectors, and the right portion of the image shows a conventional wireless EEG device with commercial electrodes and connectors (c-EEGd). b 60 overlaid EEG recordings for 0.75 s while the subject was walking on the floor from the e-EEGd (top) and c-EEGd (bottom). c Root mean square values of the recorded EEG signals from both devices while sitting (left) and walking (right). d Skin–electrode impedance of the tattoo-like electrode in the e-EEGd and commercial electrode in the c-EEGd. e, f Fast Fourier transform (FFT) spectra of recorded EEG signals during walking with different electrode and connector combinations; commercial connector with commercial electrode (top) used in c-EEGd, tattoo-like connector with commercial electrode (middle), and both tattoo-like connector and electrode (bottom) used in the e-EEGd. The subject walked 2–3 steps per second (~2 Hz). e FFT data between 0 and 2 Hz. f FFT data between 2 and 4 Hz. g, h EEG signal and artifact comparisons between the e-EEGd and c-EEGd during walking, riding a bike, riding a scooter, and driving a car in daily-life conditions.

To verify the motion artifacts of the electrodes and connectors, the EEG was measured while the volunteer walked at a pace of 2–3 steps per second using different combinations of electrodes and connectors (Supplementary Fig. 6). Figure 2e and f show the results of a fast Fourier transform (FFT) analysis in the 0–2 Hz and 2–4 Hz frequency bands, respectively. When the long, dangling connectors were replaced with tattoo-like connectors (red) while the commercial electrodes were retained, the noise in the 0–2 Hz band was reduced, but the noise in the 2–4 Hz band was not. On the other hand, when both the connectors and electrodes were changed to tattoo-like versions (purple), the noise in the 2–4 Hz band was also significantly reduced. Taken together, these results indicate that the tattoo-like electrodes were effective in reducing direct noise from motion artifacts (2–4 Hz) and that the tattoo-like connector was effective in reducing indirect noise (0–2 Hz). We demonstrated that the e-EEGd, with its short, tightly fixed connectors combined with tattoo-like electrodes on the skin, reduced both direct (2–4 Hz) and indirect (0–2 Hz) motion-artifact noise (Supplementary Video 1).
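
The band-wise comparison can be illustrated with a synthetic signal. The sketch below is not the analysis code used in the study: it uses a plain discrete Fourier transform, and the 1 Hz and 2.5 Hz components, their amplitudes, and the 32 Hz sampling rate are assumptions chosen only to place one component in each band.

```python
import cmath
import math


def amplitude_spectrum(signal, fs):
    """Single-sided amplitude spectrum from a direct DFT (fine for short signals)."""
    n = len(signal)
    freqs, amps = [], []
    for k in range(n // 2 + 1):
        acc = sum(x * cmath.exp(-2j * math.pi * k * i / n)
                  for i, x in enumerate(signal))
        # DC and Nyquist bins are not doubled in a single-sided spectrum.
        scale = 1.0 if k == 0 or (n % 2 == 0 and k == n // 2) else 2.0
        freqs.append(k * fs / n)
        amps.append(scale * abs(acc) / n)
    return freqs, amps


def band_peak(signal, fs, f_lo, f_hi):
    """Largest spectral amplitude with f_lo <= frequency < f_hi."""
    freqs, amps = amplitude_spectrum(signal, fs)
    return max(a for f, a in zip(freqs, amps) if f_lo <= f < f_hi)


FS = 32   # Hz, assumed for this toy example
N = 128   # 4 s of data, giving 0.25 Hz resolution
walk = [5.0 * math.sin(2 * math.pi * 1.0 * i / FS)      # slow sway, 0-2 Hz band
        + 2.0 * math.sin(2 * math.pi * 2.5 * i / FS)    # step-rate term, 2-4 Hz band
        for i in range(N)]

print(round(band_peak(walk, FS, 0.0, 2.0), 3))
print(round(band_peak(walk, FS, 2.0, 4.0), 3))
```

Because both test frequencies fall exactly on DFT bins, each band peak recovers the amplitude of the component placed in it.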

The noise-reduction effect of the e-EEGd allowed EEG measurement in noisy outdoor environments while a participant was walking, riding a bicycle, riding a scooter, and driving a car (Fig. 2g). Figure 2h shows the EEG results during outdoor activities measured by the e-EEGd (colored) and c-EEGd (gray). The EEG fluctuations were very severe with the c-EEGd, whereas they were negligible with the e-EEGd. The average RMS value of the continuous EEG across the four activities was 23.10 μV and 124.23 μV for the e-EEGd and c-EEGd, respectively (Supplementary Fig. 7). In addition, it was possible to measure alpha waves in the noisy walking condition (Supplementary Fig. 8). We also investigated ocular artifacts caused by eye movement. The electrooculogram (EOG) produced by closing and opening the eyelids can act as an artifact and make EEG analysis difficult. We confirmed that eye-blink artifacts were observed with both the e-EEGd and c-EEGd. The blinking artifact follows a specific pattern: it rises rapidly as the eyes close, descends as the eyes open, and then returns to baseline. This pattern differs clearly in shape and size from the P300 and ErrP patterns measured later, so incorrect interpretation can be avoided (Supplementary Fig. 9a, b). We also tested artifacts caused by gaze movement while the subject observed the RC car driving along a circular track (Supplementary Fig. 9c). No significant artifacts were observed with either the e-EEGd or c-EEGd, and the signals were comparable to the baseline measured in sitting, inactive subjects (Supplementary Fig. 9d, e, ‘sit’ of Fig. 2c). These results formed the basis for our ErrP research using the e-EEGd and an autonomous driving RC car.

P300 event-related potential and error-related potential (ErrP)

With the e-EEGd, we obtained a high-SNR EEG signal with less noise from motion artifacts. To confirm whether the e-EEGd can be used for ErrP research, we measured the P300 (related to Pe), the largest and best-known component of the ErrP complex. We measured the ERP when subjects (n = 10, Supplementary Table 5) participated in three auditory ‘oddball’ tests with single-channel tattoo-like electrodes (Fig. 3a and Supplementary Video 2). Each auditory oddball test presented a frequently repeated sound interspersed at random with a rare, unusual sound. The expected stimuli were a low-pitch tone (red) and a human voice saying ‘no’ (green and blue). The unexpected stimuli consisted of a high-pitch tone (black) and a human voice saying ‘yes’ (gray and white). The P300 peak was observed for all subjects approximately 300 ms after the unexpected sound (Supplementary Fig. 10), with an average P300 peak at 392, 414, and 371 ms in tests 1, 2, and 3, respectively (Fig. 3b and Supplementary Table 6). The difference in the average EEG results measured by repeated trials in several participants clearly showed the P300 pattern caused by the target stimulus (Fig. 3c). Also, there was no significant difference in the P300 signal patterns when they were measured simultaneously with the c-EEGd. This confirmed that, in a low-noise environment, the e-EEGd based on tattoo-like electrodes exhibits reliable characteristics comparable to those of the commercial device based on wet commercial electrodes (Supplementary Fig. 11 and Table 6). Compared to the RMS value at baseline, the measured P300 peaks in each subject showed a significant amplitude, even with single-channel electrodes (Supplementary Fig. 12). The dominant P300 frequency band overlaps the dominant frequency band of motion artifacts below 4 Hz48,49; thus, motion artifacts can easily distort the P300 signal (Fig. 3d). The e-EEGd, which effectively blocks motion artifacts, is therefore well suited for P300 measurements in noisy conditions. 
To confirm this effect, we measured P300 with the auditory test when walking in place. As a result, it was confirmed that P300 measurement was difficult for c-EEGd, which is susceptible to motion artifact, unlike e-EEGd (Supplementary Fig. 8c, d).
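
The averaging step behind an oddball analysis can be sketched as follows. This is a generic illustration, not the study's pipeline: the function names, the 250 to 500 ms search window, and the 100 Hz sampling rate in the usage example are all assumptions.

```python
def average_epochs(epochs):
    """Point-by-point mean of equally long, stimulus-locked epochs."""
    count = len(epochs)
    return [sum(column) / count for column in zip(*epochs)]


def difference_wave(target_epochs, standard_epochs):
    """Average response to rare stimuli minus average response to frequent ones."""
    target = average_epochs(target_epochs)
    standard = average_epochs(standard_epochs)
    return [t - s for t, s in zip(target, standard)]


def peak_latency_ms(wave, fs, t_min_ms=250, t_max_ms=500):
    """Latency of the largest positive sample inside the search window."""
    lo = int(t_min_ms * fs / 1000)
    hi = min(int(t_max_ms * fs / 1000), len(wave) - 1)
    best = max(range(lo, hi + 1), key=lambda i: wave[i])
    return 1000.0 * best / fs


# Toy usage: a single positive deflection placed at sample 39 of an 80-sample epoch.
fs = 100  # Hz, assumed
toy_wave = [0.0] * 80
toy_wave[39] = 5.0
print(peak_latency_ms(toy_wave, fs))
```

Averaging many epochs suppresses activity that is not time-locked to the stimulus, which is why the P300 becomes visible in the difference between the two averages.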

a–d P300 measurements using the wireless earbud-like encephalography device (e-EEGd) with different auditory oddball tests. a Three different auditory oddball tests used to record P300. b A scatter plot of the amplitude and latency of recorded P300. P300 ERPs evoked approximately 300 ms after recognition of unexpected events (high pitch in test 1, ‘no’ voice in tests 2 and 3), which is the general latency for P300 (n = 360 recordings; ten subjects). c P300 based on three different oddball tests: test 1 (top, n = 190 cases), test 2 (middle, n = 120 cases), and test 3 (bottom, n = 228 cases). Each average result from ten subjects is overlaid on the background. d Fast Fourier transform (FFT) spectra of recorded EEG from tests from ten subjects. e ErrP measurement setup using a remote-controlled (RC) car. The probability (2%, 5%, 10%, 20%, and 50%) that the RC car unexpectedly moves without pausing at the stop line was manipulated. f Average ErrP (n = 295 cases) based on probabilities of the unexpected ‘malfunction’ (i.e., failure to stop) of the car. The lowest probability of the unexpected result caused the highest ErrP intensity. g Deep neural network (DNN) architecture with fully connected and multiple layers. h Long short-term memory (LSTM) architecture of recurrent neural network with multiple layers. i Single-trial classification accuracies by time range of the input EEG data for training and validation using deep learning and traditional machine learning algorithms: logistic regression (LR), linear discriminant analysis (LDA), random forest (RF), and support vector machine (SVM). j Classification accuracy based on probability of an unexpected response.

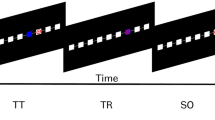

Subsequently, we experimented using the e-EEGd and an autonomous driving RC car to determine whether the unexpected behavior of the AI-based machine caused the ErrP signal (Fig. 3e and Supplementary Fig. 13). The RC car drives on the track line and immediately pauses when the stop line is electronically recognized. However, we programmed the RC car to drive without stopping at the stop line with a specified probability (violation probabilities of 2%, 5%, 10%, 20%, and 50%). We assessed whether the ErrP signal was detected with the e-EEGd after the RC car continued or stopped. The ErrP pattern was detected after the RC car committed a violation and crossed the stop line (Supplementary Video 3). When the RC car stopped at the stop line, the ErrP signal pattern could not be identified. Among the various peaks of the ErrP, the ERN peak is observed between 50 and 100 ms, the N200/FRN peak around 200 ms, and the P300/Pe peak around 300 ms. Additionally, as the probability of the RC car crossing the stop line decreased, the subjects were less likely to expect the violation, and the amplitude of the P300/Pe peak increased when a violation occurred (Fig. 3f). We also measured ErrP patterns while the subject walked alongside a moving RC car using the two devices (e-EEGd and c-EEGd). With the c-EEGd, it was difficult to observe the ErrP pattern because of the excessive noise (about 50 times higher) caused by vibration, whereas with the e-EEGd, the ErrP pattern was confirmed, demonstrating its resistance to motion artifacts (Supplementary Fig. 14).

Deep learning classification of the ErrP patterns

The ErrP component follows a specific pattern, but the peak amplitude and latency change according to various conditions, such as the subject, situation, and violation probability33,40. This variability makes it difficult to determine whether the EEG contains the ErrP pattern with simple conditional statements, such as if and else. Thus, we propose a deep learning classification to determine whether the transmitted complex EEG contains ErrP patterns50,51,52,53. The most common deep learning networks today are fully connected (FC) deep neural networks (DNNs), convolutional neural networks (CNNs), and recurrent neural networks (RNNs)50,51. They can be used individually but are usually combined for more effective classification51,52,53. Among these three networks, we tested DNNs and long short-term memory (LSTM) RNNs but not CNNs, because CNNs, although excellent at reducing features, are less effective in dealing with single-channel EEG signals51. We compared several classifiers with fivefold cross-validation to find a high-performance classifier and an optimized input dimension, which is the time interval of the input EEG data. There are two reasons for finding an efficient input dimension: (1) an immediate response is only possible if the AI can classify the presence or absence of an ErrP in the shortest possible time after the EEG is received, and (2) higher input dimensions could worsen the performance of a classification model by fitting to random noise rather than the trend of the input data. A lower input dimension could help the classification model generalize, which may alleviate the curse of dimensionality and overfitting problems. Input EEG data for classification are preprocessed with a finite impulse response (FIR) bandpass filter of 1–8 Hz, chosen on the basis of a simple optimization study (Supplementary Fig. 15), and parsed according to specific input dimensions. As a result, the FC DNN and the LSTM RNN with FC layers (Fig. 3g, h) allow binary detection of the ErrP with higher accuracy and area under the curve (AUC) than traditional machine learning classifiers (Fig. 3i, Supplementary Fig. 16 and Table 7). Linear discriminant analysis (LDA) and support vector machine (SVM) are representative machine learning algorithms that are very widely used for ErrP pattern classification24,26,27,31,32. The highest accuracy of 83.81% and AUC of 0.849 were achieved when single-trial EEG was classified using the LSTM with 30 input dimensions spanning 0.05 s to 0.35 s of the EEG. This seems to be a valid result given that the ERN, N200/FRN, and P300/Pe peaks are located within 0.05 s to 0.35 s and that the LSTM network is well suited to time-series data. This result suggests that ErrP classification is possible even with a single-channel, dry electrode-based e-EEGd at a level similar to the accuracy seen in other multi-channel, wet electrode-based studies24,25,26,27,28,29,30,31,32. Additionally, as the probability of unexpected behavior by the RC car decreased, the P300/Pe peak intensity and the classification accuracy increased. When the chance of an unexpected response (i.e., not stopping) was 2%, the classifier accuracy increased to 100% for LSTM and 92% for DNN. When the probability of stopping was 50%, the P300/Pe peak intensity was small, which made classification challenging; however, the accuracy was maintained at 81% for LSTM and 77% for DNN (Fig. 3j). Then, we tested classification on the signals measured with the e-EEGd and c-EEGd while moving. The signals measured with the e-EEGd showed a slight decrease in accuracy but could still be classified for ErrP patterns, whereas those measured with the c-EEGd could not (Supplementary Table 8). AI-based machines, which the BACLoS is intended to improve, are mostly highly accurate. However, they still exhibit unexpected automation behaviors with low probability, causing inconvenience and safety risks54. We believe that the higher-intensity ErrP produced by a low probability of unexpected operation results in high classification accuracy, which makes the approach well suited to compensating for AI malfunctions.
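
The preprocessing described above (a 1–8 Hz FIR bandpass followed by cutting the 0.05–0.35 s window into 30 input values) can be sketched in plain Python. Several details here are assumptions rather than facts from the study: a 100 Hz sampling rate is assumed because it yields 30 samples in 0.3 s, and the Hamming-windowed sinc design with 201 taps is one common choice, not necessarily the filter the authors used. With this tap count the 1 Hz edge is only approximately realized.

```python
import cmath
import math


def fir_bandpass(num_taps, f_lo, f_hi, fs):
    """Hamming-windowed sinc band-pass taps (num_taps must be odd)."""
    if num_taps % 2 == 0:
        raise ValueError("num_taps must be odd for a symmetric filter")
    mid = (num_taps - 1) // 2
    taps = []
    for n in range(num_taps):
        m = n - mid
        if m == 0:
            ideal = 2.0 * (f_hi - f_lo) / fs
        else:
            ideal = (math.sin(2 * math.pi * f_hi * m / fs)
                     - math.sin(2 * math.pi * f_lo * m / fs)) / (math.pi * m)
        window = 0.54 - 0.46 * math.cos(2 * math.pi * n / (num_taps - 1))
        taps.append(ideal * window)
    return taps


def gain_at(taps, freq, fs):
    """Magnitude of the filter's frequency response at `freq` (Hz)."""
    return abs(sum(h * cmath.exp(-2j * math.pi * freq * n / fs)
                   for n, h in enumerate(taps)))


def apply_fir(signal, taps):
    """Zero-padded 'same' convolution; symmetric taps add no net delay."""
    mid = (len(taps) - 1) // 2
    out = []
    for i in range(len(signal)):
        acc = 0.0
        for k, h in enumerate(taps):
            j = i + mid - k
            if 0 <= j < len(signal):
                acc += h * signal[j]
        out.append(acc)
    return out


def extract_window(signal, fs, t_start=0.05, t_end=0.35):
    """Samples between t_start and t_end (s), with the event at index 0."""
    return signal[round(t_start * fs):round(t_end * fs)]


FS = 100  # Hz, assumed (not stated in the text)
taps = fir_bandpass(201, 1.0, 8.0, FS)
epoch = [math.sin(2 * math.pi * 4 * i / FS) for i in range(FS)]  # 1 s of a 4 Hz tone
features = extract_window(apply_fir(epoch, taps), FS)
print(len(features))
```

The resulting 30-value vector is the kind of input that would then be passed to the DNN or LSTM classifier.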

Real-time demonstration of the BACLoS

The BACLoS, including the e-EEGd, was used to demonstrate the following two autonomous and reinforced systems for AI-based machines. The first is an emergency interrupting system (EIS) that urgently blocks an operation when an ErrP signal occurs due to an AI malfunction that induces ERP signals. The second system is a user-customized reinforcement system (UCRS) that predicts a user’s satisfaction based on past decisions and is influenced by ErrP feedback that reinforces particular decisions. The AI-based machine receives an EEG from the e-EEGd, processes the signal through a finite impulse response (FIR) filter (1–8 Hz), and then checks the presence/absence of the ErrP component in real time through the built-in pretrained classification model. Supplementary Fig. 17a shows a flowchart for the EIS. The AI requests EEG data from the e-EEGd immediately after an instance of decision-making. The e-EEGd sends EEG data in real time to the AI after receiving the request. The AI system determines whether there is an ErrP component in the EEG using deep learning classification. If the ErrP component is found, which indicates that previous decision-making may cause danger to the user, the AI operation is urgently stopped or modified. We conducted a demonstration of the EIS with an RC car (Supplementary Video 4). We tested the emergency braking of an RC car using the EIS and a remote controller to compare the performance of the BACLoS to the physical reaction of the human subject (Fig. 4a). The braking distance with the BACLoS was shorter, and there was less braking distance variation than when braking the RC car by hand (Fig. 4b, c). We analyzed the reaction time between ErrP feedback and the physical response using a normal distribution graph (Fig. 4d and Supplementary Fig. 18). The average reaction time using ErrP feedback was 0.35 s, which was 0.13 s faster than the physical reaction time. 
If someone is driving at 110 km/h, 0.13 s can shorten the braking distance by 4 m and possibly prevent accidents. In addition, the overall standard deviation using ErrP feedback was 1.8 times smaller than that using the hand directly. The response distribution from the ErrP feedback peaked at ~350 ms, without significant variation. In contrast, the physical responses exhibited wide variation.
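
The 4 m figure follows from multiplying the vehicle speed by the time saved. A small check, with an illustrative function name:

```python
def distance_saved_m(speed_kmh, time_saved_s):
    """Distance covered during the saved reaction time at constant speed."""
    speed_m_per_s = speed_kmh / 3.6  # 1 m/s equals 3.6 km/h
    return speed_m_per_s * time_saved_s


print(round(distance_saved_m(110, 0.13), 2))
```

At 110 km/h (about 30.6 m/s), a 0.13 s head start corresponds to just under 4 m.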

a–d Demonstration of an emergency interrupting system (EIS) based on ErrP feedback with the BACLoS with an autonomous remote-controlled (RC) car. a Subject stops the vehicle using the EIS when it unexpectedly crosses the stop line (left) and uses a remotely operated stop button (right). b Overlaid photos of RC cars stopped by ErrP feedback from human neural activity. c Overlaid photo of RC cars stopped by a stop button via human physical activity. d Normal distributions of time required to stop after passing the stop line. Difference in the mean braking time using ErrP feedback and the manual stop button is 0.13 s. e–h User-customized reinforcement system (UCRS) on a GPS-like navigation system of an autonomous RC car. e An autonomous navigation system must make a decision at a fork in the road. ErrP is detected when the navigation system chooses a route that the user did not prefer. Using ErrP signals, the machine reinforces the navigating system for the most preferable route. f Overlaid photos of a vehicle repeating the track before the reinforcement, with 50% probability for either decision at each fork in the road. g Overlaid photos of a vehicle repeating the track after user ErrP reinforcement. h Classification accuracies based on intensification sequences. The ErrP feedback data for chosen routes overlap as the vehicle repeatedly traverses the track. i, j An autonomous maze solver with the BACLoS. i A subject wearing the e-EEGd observes the maze solver in the display. j Maze results using different strategies: right-hand rule, ErrP feedback based on EIS, and reinforcement based on UCRS.

Supplementary Fig. 17b shows a flowchart of the UCRS. The process by which the AI received ErrP feedback from human users was the same as the process for the EIS. The ErrP feedback was input to the AI as a reward or punishment. If an ErrP signal was found in the user’s EEG, it functioned as a punishment for the AI. Conversely, if an ErrP signal was not found in the EEG, it functioned as a reward for the AI. Then, the AI matched and stored the previous decision, action, and reward based on the ErrP feedback. As a result, the AI was able to predict the reward for making new decisions based on the stored information. Finally, the AI was able to reinforce decision-making (i.e., an appropriate response) that did not result in ErrP feedback. We also conducted a demonstration of the UCRS using an RC car and a track that included three forked roads (Supplementary Video 5). If the RC car encountered a fork in the track and proceeded on a path that the user did not want, the RC car received ErrP feedback, which acted as a penalty. On the other hand, if the RC car continued on the path the user wanted, the RC car did not receive ErrP feedback, which served as a reward (Fig. 4e). When the RC car encountered a forked road, it was constantly reinforced by the UCRS to behave as desired by the user. This result is shown in Fig. 4f, g as superimposed photographs of a moving RC car on the track. In the initial attempt, the RC car, without any information about the route, determined the course with a 50:50 probability of making a particular choice at a fork in the road (Fig. 4f). After learning the user’s preferred choices at the forks in the road through the UCRS, the RC car selected the route that the user wanted with a high probability (Fig. 4g). Classification accuracy also increased when classification was based on the average ErrP data obtained by overlapping the ErrP feedback. 
The DNN classification accuracy increased to 92.29 ± 5.3% and 98.57 ± 1.7% when the ErrP feedback overlapped in three and five trials, respectively (Fig. 4h). This result suggests that the AI can more accurately decide what the user expects and prefers using UCRS.
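
The benefit of overlapping several ErrP epochs can be illustrated with ordinary trial averaging: a waveform that is time-locked to the event is preserved, whereas noise that is independent across trials shrinks roughly with the square root of the number of trials. The following minimal Python sketch uses synthetic data only. The template waveform, the noise level, and the function names (average_epochs, residual_noise) are illustrative assumptions and are not taken from the recorded data or the released code.

```python
import math
import random


def average_epochs(epochs):
    """Average equally long epochs sample by sample."""
    n = len(epochs)
    length = len(epochs[0])
    return [sum(e[i] for e in epochs) / n for i in range(length)]


def rms(values):
    """Root mean square of a sequence."""
    return math.sqrt(sum(v * v for v in values) / len(values))


# Synthetic illustration (assumption): a fixed time-locked waveform
# of 30 samples (0.3 s at 100 Hz) plus independent Gaussian noise.
random.seed(0)
TEMPLATE = [math.sin(math.pi * i / 30) for i in range(30)]


def noisy_trial(noise_sd=1.0):
    """One simulated single-trial epoch."""
    return [s + random.gauss(0.0, noise_sd) for s in TEMPLATE]


def residual_noise(n_trials):
    """RMS deviation of the n-trial average from the noise-free template."""
    avg = average_epochs([noisy_trial() for _ in range(n_trials)])
    return rms([a - s for a, s in zip(avg, TEMPLATE)])
```

Under these assumptions, residual_noise(1), residual_noise(3), and residual_noise(5) tend to decrease, which is in line with the higher accuracy reported for three and five overlapped trials.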

We also adopted an autonomous maze solver, which is a common application in robot and game algorithms. Figure 4i shows a participant with an e-EEGd observing a maze solver. The autonomous maze solver used the right-hand rule to determine the exit after entering the maze. The right-hand rule solves almost all mazes, but it does not guarantee the shortest path because it advances by trial and error and often explores wrong directions first. By providing ErrP feedback through the BACLoS to the AI that used the right-hand rule, we reduced the time required to finish the maze from 36.47 s to 14.12 s. After the AI learned the shortest path through the maze based on ErrP feedback, it solved the maze in 13.37 s (Fig. 4j and Supplementary Video 6). In addition, we demonstrated the user interface of the AI assistant for BACLoS (Supplementary Fig. 19 and Supplementary Video 7).

Discussion

The integration strategy presented here includes tattoo-like electronics, wearable devices, wireless technology, bioelectric signal measurement, deep learning, and embedded systems. Careful engineering enabled a reliable connection between the tattoo-like electronics and rigid wearable devices while maintaining the low-noise performance of the tattoo-like electronics. This enables a neuroadaptable, interactive BACLoS that is based on high-SNR EEG signals with minimal motion artifacts in real-life conditions. The device can continuously monitor EEG signals in response to a diverse range of auditory and visual stimuli and identify ErrP signals among the EEG signals. With the BACLoS platform and wireless devices, we offer a perspective on advanced brain–machine interfaces in real life, which can be helpful for autonomous AI-based machines. We believe that a personalized AI system can use human electrophysiological signals to optimize machine decisions through reinforcement. Further development will require broader interest from various fields and multifaceted research. The performance of the BACLoS can be further improved by adopting recently explored conductive, soft, and functional nanomembrane or hydrogel/polymer-based electrodes. Another approach is to apply a low-noise, high-precision amplifier and a high-resolution (>16 bit) analog-to-digital converter (ADC). More realistic studies with AI-based machines and the BACLoS, such as on-road experiments with self-driving cars, should be conducted. Notably, because the BACLoS allows continuous biosignal collection in harsh conditions, it can be used for advanced diagnosis using miniaturized electronics and AI for ‘human-in-the-loop’ machine learning. We also believe that the strategies and results from this study will provide inspiration and guidelines for experts in many fields.

Methods

Tattoo-like electrode and interconnector

Temporary tattoo paper (Silhouette) served as the base substrate for fabrication of the tattoo-like electrode. A parylene layer (300 nm thick) was coated onto the tattoo paper by high-vacuum chemical vapor deposition (CVD). A 5 nm chromium layer for adhesion and a 100 nm gold layer were deposited by thermal evaporation through a serpentine open-mesh patterned shadow mask. The tattoo paper was cut using a mechanical cutter to obtain the desired size and shape. The total thickness of the tattoo-like electrodes transferred onto the skin was approximately 900 nm. The flexible interconnectors included tattoo-like connectors, anisotropic conductive film (ACF) cables, and flexible printed circuit (FPC) connectors. The tattoo-like connector was prepared using conventional microfabrication techniques, including thermal evaporation and reactive ion etching. The details of the microfabrication and encapsulation methods are provided in the Supplementary Note. The tattoo-like connector was fabricated with a polyimide (PI, 1.2 μm)/gold (100 nm)/PI (1.2 μm) sandwich structure to avoid mechanical mismatch by locating the metal part on the neutral mechanical plane. The total thickness of the tattoo-like connector on the skin is 2.8 μm. An ACF cable was used to bond the tattoo-like connector to the FPC connector. Bonding with the ACF cable requires high temperature (>150 °C) and high pressure (30–40 kg cm⁻²) to ensure adhesion. The thickness and width of the conducting area of the ACF cable were 30 and 250 μm, respectively. The female FPC connector was manufactured considering the width of the conducting area of the ACF cable and the thickness of the male surface mount device (SMD)-type FPC connector on the EEG measurement earbud (Supplementary Fig. 3). The thickness of the FPC connector varied from 80 to 300 μm. 
Consequently, the final interconnector has a step-up thickness gradient, which makes it more stable, even if it is connected to a conventional rigid connector (Supplementary Fig. 2).

Preparation of the wireless EEG measurement earbud

We used a four-layer printed circuit board (PCB) manufactured by JLCPCB (Shenzhen, China). An electrophysiology amplifier chip (RHD2216, INTAN), 8-bit microcontroller (ATMEGA328P-MMH, Microchip), Bluetooth low energy (BLE) module (Bot-NLE522, CHIPSEN), FPC connector (IMSA-11501S-08C, IRISO), linear voltage regulator (TLV70033QDDCRQ1, Texas Instruments), rechargeable 3.3 V lithium polymer battery, and four capacitors (0603 size, Samsung) were soldered onto the PCB using solder paste (SMD291SNL10T5, Chip Quick) (Supplementary Fig. 4 and Supplementary Table 9). Polyethylene resin (FLDUCL02, Formlabs) was used for the 3D-printed case of the soldered PCB. The 3D-printed case is composed of upper and lower parts that are designed to match the shape of the soldered PCB and battery. Atmel Studio 7.0 (Atmel) and Arduino IDE 1.8.13 (Arduino) were used to write the bootloader and upload the software to the microcontroller. The programmable sampling rate used for the EEG measurement was 100 Hz for the basic setup. The resolution of the integrated analog-to-digital converter (ADC) was 16 bit, and the differential gain of the amplifier was 192. Continuous wireless transmission of EEG signals is possible for 8 h with a fully charged battery (Supplementary Fig. 20).

Fabrication of the autonomously driving RC car

All components were mechanically fixed to the main acrylic frame and electrically connected using breadboard and jumper wires. A motor driver (L9110s, SMG), artificial intelligence (AI)-embedded microcontroller (Arduino Nano 33 BLE, Arduino), 8-bit microcontrollers (Arduino UNO R3, Arduino), BLE module (Bot-NLE522D, CHIPSEN), microSD card socket (SZH-EKBZ-005, SMG), microSD card (SDSQUAR-016G, Sandisk), DC motors (NP01D-288, OEM), reflective photointerrupter (TCRT5000, Vishay), passive components, and batteries were used (Supplementary Fig. 13 and Supplementary Table 10). The outer frame was generated using a 3D printer and polyamide (nylon 12) powder. There was a black line on the track located between the two floor-facing reflective photointerrupters of the RC car. When the photointerrupter was placed on the black line, the light could not be reflected, and the photointerrupter reading changed. The RC car minutely adjusted the driving direction according to the photointerrupter values and always maintained the black line between the two photointerrupters. As a result, the RC car could follow the track without leaving the line.
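
The line-following behavior described above can be sketched as a small decision rule on the two photointerrupter readings. This is a minimal illustration and not the firmware used on the RC car. The threshold value, the polarity of the sensor reading (dark_is_high), and the interpretation of both sensors seeing black as a track marker such as a stop line are assumptions made for the example.

```python
def on_line(reading, threshold=500, dark_is_high=True):
    """Return True if a sensor reading indicates the black line.

    The threshold and the polarity depend on the wiring of the
    reflective sensor; both values here are assumptions.
    """
    return reading > threshold if dark_is_high else reading < threshold


def steer(left_on_line, right_on_line):
    """Choose a steering action that keeps the line between the sensors."""
    if left_on_line and right_on_line:
        # Assumption: a line under both sensors is a perpendicular marker,
        # for example a stop line or a fork.
        return "marker"
    if left_on_line:
        return "left"    # the line drifted under the left sensor: turn left
    if right_on_line:
        return "right"   # the line drifted under the right sensor: turn right
    return "straight"    # the line is still between the two sensors
```

For example, steer(on_line(820), on_line(130)) returns "left" under the assumed threshold and polarity.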

Measurement of skin–electrode impedance

Two tattoo-like electrodes with tattoo-like connectors were attached to the skin with 10 cm between them. One electrode was the working (cathode) electrode, and the other was the reference/counter (anode) electrode for the electrochemical impedance spectroscopy measurement. Impedance was measured with a single-channel potentiostat (PalmSens4, PalmSens B.V.) and software (PSTrace, PalmSens B.V.) from 1 to 1000 Hz every 30 min for 10 h.

Continuous EEG recording while moving

Two volunteers participated in the EEG recording under several different motion conditions. The tattoo-like electrodes were attached to the side of the forehead as the working electrode, the temple as the ground electrode, and the mastoid as the reference electrode. The tattoo-like connectors between the electrodes and interconnector were attached to the side of the face, and the EEG measurement earbud was plugged into one ear. The electrodes, connectors, and measurement systems were connected (e-EEGd) to transmit EEG data wirelessly. EEG data were received wirelessly via Arduino Nano BLE (Arduino) and stored on a computer. Volunteers performed various motions such as sitting, standing, walking, cycling, riding, and driving. The same measurement was also performed with the volunteer’s eyes closed and open to measure the alpha waves under these conditions. For a noise comparison analysis, additional experiments were conducted in which the following components were replaced with conventional components (c-EEGd): the electrodes (2223H, 3M), lead cable (MIKROE-2457, MikroElektronika), and measurement system (RHD2216 Arduino shield, INTAN). MATLAB R2019a (MathWorks) was used as the platform for the EEG signal processing toolbox Brainstorm (Tadel et al. 2011). An FIR bandpass filter (8–12 Hz) and Morlet wavelet transform (5–15 Hz) were used to analyze the alpha waves. The noise characteristics analysis included calculation of the root mean square (RMS) value and fast Fourier transform (FFT) of continuous EEG data.
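
As an illustration of the alpha-band filtering and RMS calculation, the following minimal Python sketch designs a Hamming-windowed-sinc FIR bandpass filter and evaluates its gain. It is not the Brainstorm implementation used in this study. The number of taps (101), the window type, and the function names are assumptions. Only the 8–12 Hz passband and the 100 Hz sampling rate come from the text.

```python
import cmath
import math


def fir_bandpass(numtaps, f_lo, f_hi, fs):
    """Hamming-windowed-sinc bandpass taps (numtaps should be odd)."""
    mid = (numtaps - 1) / 2
    lo, hi = f_lo / fs, f_hi / fs  # band edges in cycles per sample
    taps = []
    for n in range(numtaps):
        k = n - mid
        if k == 0:
            ideal = 2 * (hi - lo)
        else:
            # Difference of two ideal low-pass impulse responses.
            ideal = (math.sin(2 * math.pi * hi * k)
                     - math.sin(2 * math.pi * lo * k)) / (math.pi * k)
        window = 0.54 - 0.46 * math.cos(2 * math.pi * n / (numtaps - 1))
        taps.append(ideal * window)
    return taps


def apply_fir(signal, taps):
    """Causal FIR filtering (output has the same length as the input)."""
    out = []
    for n in range(len(signal)):
        acc = 0.0
        for k, h in enumerate(taps):
            if n - k < 0:
                break
            acc += h * signal[n - k]
        out.append(acc)
    return out


def gain(taps, freq, fs):
    """Magnitude of the filter frequency response at one frequency."""
    return abs(sum(h * cmath.exp(-2j * math.pi * freq * n / fs)
                   for n, h in enumerate(taps)))


def rms(values):
    """Root mean square, as used for the noise characteristics."""
    return math.sqrt(sum(v * v for v in values) / len(values))


ALPHA_TAPS = fir_bandpass(101, 8.0, 12.0, 100.0)
```

With these assumed settings, gain(ALPHA_TAPS, 10, 100) is close to one and gain(ALPHA_TAPS, 30, 100) is close to zero, so rms(apply_fir(eeg, ALPHA_TAPS)) mainly reflects alpha-band activity in a recording eeg.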

P300 ERP and ErrP signal acquisition and analysis

Twelve volunteers participated in an experiment to obtain P300 event-related potential measurements. The experiment included three different setups: auditory oddball tests (test 1, test 2, and test 3) for P300 measurement; a visual oddball test with an autonomously driving RC car that moves in various unexpected ways for ErrP measurement; and a test of the assistant interface, which randomly gave undesirable answers for ErrP measurement. All data were recorded for 1 s after each stimulus. FIR bandpass filters (1–30 Hz and 1–8 Hz) were applied as a preprocessing step for the analysis. The average amplitudes of the P300 peak from each subject were derived from the average ERP waveforms. The signal-to-noise ratio was calculated by dividing the P300 peak amplitude by the RMS value of the baseline before the target stimuli. FFT analysis was performed to reveal the dominant frequency band of the P300 pattern.
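
The signal-to-noise ratio defined above (peak amplitude divided by baseline RMS) can be written as a short function. This is a minimal sketch. The peak search window of 0.25–0.5 s after the stimulus and the function name p300_snr are assumptions made for the example, because the text does not specify how the peak was located.

```python
import math


def p300_snr(erp, baseline, fs=100, search_window=(0.25, 0.5)):
    """Peak amplitude in the search window divided by the baseline RMS.

    erp: averaged post-stimulus waveform, sampled at fs (Hz).
    baseline: pre-stimulus samples in the same unit as erp.
    search_window: assumed latency range (s) in which the P300 peak is sought.
    """
    start = int(round(search_window[0] * fs))
    stop = int(round(search_window[1] * fs))
    peak = max(erp[start:stop])
    baseline_rms = math.sqrt(sum(v * v for v in baseline) / len(baseline))
    return peak / baseline_rms
```

If a logarithmic scale is preferred, 20 * math.log10(p300_snr(erp, baseline)) expresses the same ratio in decibels.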

P300 measurement using auditory oddball tests (tests 1, 2, and 3)

Both the e-EEGd and c-EEGd were used for these tests. The wet commercial electrodes of the c-EEGd were attached right next to the tattoo-like electrodes. The duration and interval of the stimuli were 0.1 and 0.9 s, respectively. The target stimuli were randomly organized and presented with a 10% probability. All subjects were asked to count the number of target stimuli to ensure that their attention remained on the test and to keep their eyes closed to avoid ocular artifacts. Test 1 used a high-pitched sound (1000 Hz) for the target stimuli and a low-pitched sound (250 Hz) for nontarget stimuli. Test 2 included a human voice saying ‘no’ for the target stimuli and ‘yes’ for the nontarget stimuli. Test 3 included a human voice saying ‘no’ for the target stimuli and silence for the nontarget stimuli. We also measured P300 in an auditory test while subjects walked slowly. To acquire the P300 data wirelessly in the oddball tests, an 8-bit microcontroller (Arduino UNO R3, Arduino), BLE module (Bot-NLE522D, CHIPSEN), MP3 player module (KE0092, KEYES), and mini speaker were connected to a computer. CoolTermWin (Roger Meier) was used for serial communication between the wireless receiver and the computer to save the data transmitted wirelessly by the EEG measurement system.
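
The stimulus timing of the oddball tests can be illustrated with a short schedule generator. This is a minimal sketch and not the program that drove the MP3 player module. It assumes that the 10% target probability is realized as a fixed proportion of randomly shuffled trials; the function name oddball_schedule and the seed parameter are also assumptions.

```python
import random


def oddball_schedule(n_trials, target_prob=0.10, duration=0.1,
                     interval=0.9, seed=None):
    """Return a list of (onset_time_s, label) pairs for an oddball test.

    Each stimulus lasts `duration` seconds and is followed by `interval`
    seconds of silence, so onsets are spaced duration + interval apart.
    """
    rng = random.Random(seed)
    n_targets = round(n_trials * target_prob)
    labels = ["target"] * n_targets + ["nontarget"] * (n_trials - n_targets)
    rng.shuffle(labels)  # random order of target and nontarget stimuli
    period = duration + interval
    return [(i * period, label) for i, label in enumerate(labels)]
```

For instance, oddball_schedule(100, seed=1) yields 100 onsets spaced 1 s apart, 10 of which are labeled as targets.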

ErrP measurement using autonomously driving RC car: various unexpected motions

To measure EEG signals that reflect more realistic situations, a wireless recording was obtained as the volunteer observed the wireless autonomous driving RC car on a custom-designed track (black line). The RC car was designed to follow the black line on the track using reflective infrared sensors and to stop immediately when it reached the stop line (crossroad). However, with a certain programmed probability (2%, 5%, 10%, 20%, and 50%), the RC car did not stop at the stop line and exhibited abnormal behavior. Whenever the RC car detected a stop line, it wirelessly sent a timing cue for temporal synchronization to the EEG earbud. The e-EEG system then measured the EEG signal for 1 s from this cue point while simultaneously transmitting the signal back to the RC car. The EEG data transmitted by the earbud were saved to the SD card that was connected to the RC car. If the RC car operated abnormally, the EEG was stored as ErrP measurements according to the target stimulus; if it operated normally, the EEG was stored as ErrP measurements according to the nontarget stimulus.

ErrP measurement using assistant interface: undesirable answers at random

The assistant interface answered the user’s request for a phone call. The assistant interface had a preprogrammed probability (10%) of malfunction, similar to the autonomous driving RC car. When the assistant interface answered the user, it sent a wireless timing cue to the EEG earbud. The EEG earbud system recorded the EEG signal for 1 s after receiving the cue from the assistant interface and sent EEG data back to the assistant interface in real time. This EEG data was saved on a computer connected to the assistant interface.

Machine learning classification

The input EEG data comprised 295 cases of ErrP pattern data recorded in the experiment with the autonomous driving RC car and the e-EEGd. Because the RC car operated normally far more often than it malfunctioned, the measured data contained more nontarget data than target data. Training a classifier on such imbalanced data is likely to bias the training. For classifier training, we therefore configured the input data with equal proportions of target and nontarget data. We adopted five classification models for ErrP pattern detection: logistic regression (LR), linear discriminant analysis (LDA), k-nearest neighbors algorithm (k-NN), random forest (RF), and support vector machine (SVM). For logistic regression, a limited-memory Broyden–Fletcher–Goldfarb–Shanno solver with a ridge penalty was applied. Singular value decomposition was used to fit the input data for the LDA model. For the k-NN model, the number of neighbors was set to five, and the Minkowski distance was applied. In the RF model, 128 trees were used, and the Gini impurity was used as the splitting criterion for each decision tree. The SVM model was implemented based on libsvm with the penalty parameter set to 1.0. A polynomial kernel with a degree of three was used to boost the classification performance by embedding the EEG signal into a higher-dimensional space. To optimize the input dimensionality, which refers to the size and starting point of the input data, we repeatedly conducted training and 5-fold cross-validation of each classifier. The accuracy was determined according to the dimensions and starting points of the input data. We also calculated the area under the receiver operating characteristic curve, which is one of the most widely used metrics for representing the performance of a classifier.
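
One simple way to obtain equal proportions of the two classes is random undersampling of the majority class. The following minimal Python sketch illustrates this idea. The text does not state which balancing procedure was used, so undersampling, the label convention (1 for target, 0 for nontarget), and the function name balance_classes are assumptions.

```python
import random


def balance_classes(epochs, labels, seed=0):
    """Randomly undersample so that both classes have the same size.

    epochs: list of feature vectors (one per recorded EEG epoch).
    labels: list of 1 (target, ErrP) or 0 (nontarget) values.
    Returns shuffled (epochs, labels) with equal class counts.
    """
    rng = random.Random(seed)
    targets = [e for e, y in zip(epochs, labels) if y == 1]
    nontargets = [e for e, y in zip(epochs, labels) if y == 0]
    n = min(len(targets), len(nontargets))
    picked = ([(e, 1) for e in rng.sample(targets, n)]
              + [(e, 0) for e in rng.sample(nontargets, n)])
    rng.shuffle(picked)
    balanced_epochs = [e for e, _ in picked]
    balanced_labels = [y for _, y in picked]
    return balanced_epochs, balanced_labels
```

The balanced set can then be passed to any of the five classifiers and split into folds for cross-validation.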

Deep learning classification

The input EEG data is the same as the data used for training machine learning classifiers. We adopted deep neural networks (DNNs) and long short-term memory (LSTM) models for ErrP pattern detection. These models were optimized using the Keras platform with a TensorFlow (Google) backend. The input EEG data from a single recording channel were preprocessed with an FIR bandpass filter and parsed into data of 30 input dimensions (0.3 s interval). We used two dense layers, including 16 and 8 units with ReLU activation, and one output layer with sigmoid activation for the DNN model. In addition, we used one LSTM layer including 90 units with tanh activation, one dense layer including 18 units with ReLU activation, and one output layer with sigmoid activation for the LSTM model. We repeatedly conducted training and 5-fold cross-validation of each classifier with a constant learning rate (Adam, learning rate = 0.001, beta_1 = 0.9, beta_2 = 0.999)55 and computed the mean of the squares of errors between labels and predictions to optimize classifier performance.
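
To make the DNN architecture concrete, the following minimal Python sketch reproduces only the layer structure described above (30 inputs, dense layers of 16 and 8 units with ReLU, and one sigmoid output) together with the mean squared error. It is written in plain Python rather than Keras, uses randomly initialized weights, and performs no training. The initialization range and all function names are assumptions.

```python
import math
import random

LAYER_SIZES = [30, 16, 8, 1]  # input, two hidden layers, output


def init_params(sizes, seed=0):
    """Create small random weights and zero biases for each dense layer."""
    rng = random.Random(seed)
    params = []
    for n_in, n_out in zip(sizes[:-1], sizes[1:]):
        weights = [[rng.uniform(-0.1, 0.1) for _ in range(n_in)]
                   for _ in range(n_out)]
        biases = [0.0] * n_out
        params.append((weights, biases))
    return params


def forward(params, x):
    """Forward pass: ReLU on hidden layers, sigmoid on the output layer."""
    a = list(x)
    for idx, (weights, biases) in enumerate(params):
        z = [sum(w * v for w, v in zip(row, a)) + b
             for row, b in zip(weights, biases)]
        if idx == len(params) - 1:
            a = [1.0 / (1.0 + math.exp(-v)) for v in z]
        else:
            a = [max(0.0, v) for v in z]
    return a[0]


def count_params(params):
    """Total number of trainable weights and biases."""
    return sum(len(w) * len(w[0]) + len(b) for w, b in params)


def mse(labels, predictions):
    """Mean of the squared errors between labels and predictions."""
    return sum((y - p) ** 2
               for y, p in zip(labels, predictions)) / len(labels)
```

With these layer sizes, count_params(init_params(LAYER_SIZES)) gives 641 trainable parameters (496 + 136 + 9), which indicates how compact the model is for deployment on a microcontroller.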

Real-time deep learning classification

TensorFlow (Google) and TensorFlow Lite (Google) were used to train the classification models with a deep learning algorithm and to deploy the pretrained models for the BACLoS, respectively. The recorded ErrP data were used to train the classification model. The time interval of the input EEG data for training and testing of the classification model was fixed at 0.3 s based on the optimization study. The pretrained classification model was converted to the TensorFlow Lite format without quantization. The converted TensorFlow Lite file was then encoded in an Arduino header file and uploaded to the Arduino Nano 33 BLE (Arduino) with the Arduino IDE (Arduino). When the Arduino Nano 33 BLE with the built-in trained model received EEG data from the e-EEGd wirelessly, it processed the data with an FIR filter (1–8 Hz) in the same format as the training data, parsed the data into a 0.3 s input window, and fed this window to the trained model to output the classification result in real time.
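
Parsing a 1 s epoch into a 0.3 s input corresponds to selecting 30 consecutive samples at the 100 Hz sampling rate. The following minimal Python sketch shows this step. The starting point of the window was optimized in this study but its value is not given here, so start_s is left as a parameter, and the function name extract_window is an assumption.

```python
def extract_window(epoch, start_s, length_s=0.3, fs=100):
    """Cut a fixed-length window out of a recorded epoch.

    epoch: samples recorded after the timing cue (1 s, i.e., 100 samples).
    start_s: window start relative to the cue in seconds (assumed parameter).
    length_s: window length in seconds (0.3 s gives 30 samples at 100 Hz).
    """
    start = int(round(start_s * fs))
    length = int(round(length_s * fs))
    if start < 0 or start + length > len(epoch):
        raise ValueError("window falls outside the recorded epoch")
    return epoch[start:start + length]
```

Rounding is used instead of truncation so that products such as 0.3 * 100 always map to the intended sample count.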

EIS and UCRS for an RC car via BACLoS with the e-EEGd

Two controllers, the Arduino Nano 33 BLE and Arduino Uno, were built into the RC car to control its movements. The Arduino Nano wirelessly received EEG signals and performed classification using a deep learning algorithm in real time. The Arduino Uno controlled all the components of the RC car, including sensors, motors, and drivers. The digital ports of the Arduino Nano and Uno were connected by commercial jumper cables. The Arduino Uno sent timing cue information to the Arduino Nano through a digital port when it detected a stop line or a fork in the track. The Arduino Nano then wirelessly received the EEG signal from the e-EEGd and performed ErrP classification simultaneously. For the EIS demonstration, the output of the ErrP classification was sent to the Arduino Uno through the digital port to control the RC car instantly. For the UCRS demonstration, the classification result was used to choose the appropriate path when the RC car encountered the same fork in the road for a second time. Before this point, there was no information on the user’s preference at a particular fork, so the route was selected at random. After the route was selected, the presence or absence of ErrP feedback indicated whether the selected route was the preferred one. The Arduino Uno then reinforced the internal programs for route selection at the fork according to the user’s preference information. The RC car reflected the user’s preference more accurately with repeated reinforcement.
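
The route reinforcement at a fork can be illustrated with a small tabular learner that stores the rewards received for each choice and prefers the choice with the highest average reward. This is a minimal sketch under stated assumptions and not the program running on the Arduino Uno. The reward values (+1 without ErrP, −1 with ErrP), the greedy selection with random tie-breaking, and the class name ForkPolicy are all hypothetical.

```python
import random


class ForkPolicy:
    """Route selection reinforced by ErrP feedback (illustrative only)."""

    def __init__(self, actions=("left", "right"), seed=None):
        self.actions = tuple(actions)
        self.rng = random.Random(seed)
        self.totals = {}  # (fork_id, action) -> summed reward
        self.counts = {}  # (fork_id, action) -> number of updates

    def expected_reward(self, fork_id, action):
        """Average stored reward; 0.0 if the action was never tried."""
        key = (fork_id, action)
        n = self.counts.get(key, 0)
        return self.totals.get(key, 0.0) / n if n else 0.0

    def choose(self, fork_id):
        """Pick the best-rated action; ties are broken at random."""
        scores = [self.expected_reward(fork_id, a) for a in self.actions]
        best = max(scores)
        candidates = [a for a, s in zip(self.actions, scores) if s == best]
        return self.rng.choice(candidates)

    def update(self, fork_id, action, errp_detected):
        """ErrP acts as a punishment, its absence as a reward."""
        reward = -1.0 if errp_detected else 1.0
        key = (fork_id, action)
        self.totals[key] = self.totals.get(key, 0.0) + reward
        self.counts[key] = self.counts.get(key, 0) + 1
```

With no stored information, choose returns either route with equal probability, which mirrors the 50:50 behavior before reinforcement. Averaging the rewards rather than keeping only the latest one gives some tolerance to occasional misclassified feedback.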

Demonstration of the BACLoS for the maze solver

The maze solver was composed of an AI-embedded microcontroller (Arduino Nano 33 BLE, Arduino) and Processing, the software that creates the maze interface. Before reinforcement, the maze solver solved all the mazes using the right-hand rule algorithm. The maze was generated by an internal program (Processing). Users wearing an e-EEGd thought about the shortest path through the maze and observed how the maze solver solved it. The maze solver sent a timing cue to the e-EEGd whenever it determined the direction of progress using the right-hand rule algorithm. The e-EEGd measured and transmitted the EEG signals from the user in real time after receiving the cue. The maze solver then analyzed the transmitted EEG signals using the trained classifier to determine whether an ErrP pattern was present. If an ErrP pattern was identified in the EEG signal through classification, the maze solver deemed the previous decision inefficient. In that case, the maze solver immediately reversed the direction of progress, returned to the previous decision point, and took the alternative decision. The maze solver saved the decision information and proceeded with reinforcement to revise the internal path-finding algorithm. After repeated reinforcement, the maze solver was able to find the shortest path through the maze.
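
The interplay between the right-hand rule and ErrP feedback can be illustrated on a small grid maze. The following Python code is a minimal sketch and not the Processing program used in the demonstration. The maze layout is invented, and the ErrP classifier is replaced by a simulated user who reacts whenever a move does not bring the solver closer to the goal. Rejecting a flagged move stands in for reversing to the previous decision point, and all names are hypothetical.

```python
from collections import deque

DIRS = [(-1, 0), (0, 1), (1, 0), (0, -1)]  # north, east, south, west


def is_open(maze, cell):
    """True if the cell lies inside the maze and is not a wall."""
    r, c = cell
    return 0 <= r < len(maze) and 0 <= c < len(maze[0]) and maze[r][c] != "#"


def distances_to(maze, goal):
    """Breadth-first distance from every reachable cell to the goal."""
    dist = {goal: 0}
    queue = deque([goal])
    while queue:
        r, c = queue.popleft()
        for dr, dc in DIRS:
            nxt = (r + dr, c + dc)
            if is_open(maze, nxt) and nxt not in dist:
                dist[nxt] = dist[(r, c)] + 1
                queue.append(nxt)
    return dist


def solve(maze, start, goal, heading=1, errp=None, max_steps=10_000):
    """Right-hand rule; moves flagged by errp(pos, nxt) are skipped."""
    pos, path = start, [start]
    while pos != goal and len(path) <= max_steps:
        for turn in (1, 0, -1, 2):  # try right, straight, left, back
            d = (heading + turn) % 4
            nxt = (pos[0] + DIRS[d][0], pos[1] + DIRS[d][1])
            if not is_open(maze, nxt):
                continue
            if errp is not None and errp(pos, nxt):
                continue  # simulated ErrP: undo and try the next option
            pos, heading = nxt, d
            path.append(pos)
            break
        else:
            raise RuntimeError("no acceptable move from this cell")
    if pos != goal:
        raise RuntimeError("goal not reached within max_steps")
    return path


# Invented example maze with one dead end below the start cell.
MAZE = [
    "#########",
    "#S  #   #",
    "# # # # #",
    "# #   #G#",
    "#########",
]
START, GOAL = (1, 1), (3, 7)
DIST = distances_to(MAZE, GOAL)


def simulated_errp(pos, nxt):
    """Assumed user model: ErrP whenever a move does not approach the goal."""
    return DIST[nxt] >= DIST[pos]
```

In this example, solve(MAZE, START, GOAL) first explores the dead end, whereas solve(MAZE, START, GOAL, errp=simulated_errp) follows the shortest route. The right-hand rule in this sketch relies on the maze having no closed loops around isolated walls.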

Demonstration of the BACLoS for the assistant interface

The speech recognition kit (SZH-KI001, SMG), BLE module (Bot-NLE522D, CHIPSEN), AI-embedded microcontrollers (Arduino Nano 33 BLE, Arduino), and 8-bit microcontrollers (Arduino UNO R3, Arduino) were the main components of the assistant interface hardware. Processing Software was used to create a user interface for the assistant interface. Whenever the assistant interface responded to a user’s request, the assistant interface processed the transmitted EEG signal through the trained classifier to determine whether the ErrP pattern was included. If an ErrP pattern was identified in the EEG through classification, the assistant interface determined that the previous response was incorrect and presented another, more suitable, response to the user.

IRB approval for the study of human subjects

All measurements were conducted following the protocol approved by the Institutional Review Board (IRB) of Sungkyunkwan University (ISKKU 2019-06-011). All participants were given a comprehensive set of instructions regarding the tests, agreed to the testing procedures, and provided written informed consent to take part in the study.

Data availability

All data are available in the main text or supplementary materials. All information can be requested from one of the corresponding authors.

Code availability

The code for the machine learning and deep learning models is available on GitHub (https://github.com/JooHwanS/ErrP). Additional information can be requested from one of the corresponding authors.

References

Ray, T. R. et al. Bio-integrated wearable systems: a comprehensive review. Chem. Rev. 119, 5461–5533 (2019).

Jung, Y. H. et al. Injectable biomedical devices for sensing and stimulating internal body organs. Adv. Mater. 32, 1907478 (2020).

Kim, D. H. et al. Epidermal electronics. Science 333, 838–843 (2011).

Sanderson, K. Electronic skin: from flexibility to a sense of touch. Nature 591, 685–687 (2021).

Someya, T. & Amagai, M. Toward a new generation of smart skins. Nat. Biotechnol. 37, 382–388 (2019).

Yu, X. et al. Skin-integrated wireless haptic interfaces for virtual and augmented reality. Nature 575, 473–479 (2019).

Tian, L. et al. Large-area MRI-compatible epidermal electronic interfaces for prosthetic control and cognitive monitoring. Nat. Biomed. Eng. 3, 194–205 (2019).

Kwak, J. W. et al. Wireless sensors for continuous, multimodal measurements at the skin interface with lower limb prostheses. Sci. Transl. Med. 12, 574 (2020).

Jeong, J. W. et al. Materials and optimized designs for human-machine interfaces via epidermal electronics. Adv. Mater. 25, 6839–6846 (2013).

Kwon, Y. T. et al. All-printed nanomembrane wireless bioelectronics using a biocompatible solderable graphene for multimodal human-machine interfaces. Nat. Commun. 11, 1–11 (2020).

Xu, Y. et al. Real-time monitoring system of automobile driver status and intelligent fatigue warning based on triboelectric nanogenerator. ACS Nano 15, 7271–7278 (2021).

Ding, W. B., Wang, A. C., Wu, C. S., Guo, H. Y. & Wang, Z. L. Human-machine interfacing enabled by triboelectric nanogenerators and tribotronics. Adv. Mater. Technol. 4, 1800487 (2019).

Kim, J. et al. Stretchable silicon nanoribbon electronics for skin prosthesis. Nat. Commun. 5, 5747 (2014).

Thakor, N. V. Translating the brain-machine interface. Sci. Transl. Med. 5, 210ps217 (2013).

Lebedev, M. A. & Nicolelis, M. A. Brain-machine interfaces: past, present and future. Trends Neurosci. 29, 536–546 (2006).

Xu, M. et al. A brain–computer interface based on miniature-event-related potentials induced by very small lateral visual stimuli. IEEE Trans. Biomed. Eng. 65, 1166–1175 (2018).

Silversmith, D. B. et al. Plug-and-play control of a brain–computer interface through neural map stabilization. Nat. Biotechnol. 39, 326–335 (2021).

Musk, E. An integrated brain-machine interface platform with thousands of channels. J. Med. Internet Res. 21, e16194 (2019).

Willett, F. R., Avansino, D. T., Hochberg, L. R., Henderson, J. M. & Shenoy, K. V. High-performance brain-to-text communication via handwriting. Nature 593, 249–254 (2021).

Norton, J. J. et al. Soft, curved electrode systems capable of integration on the auricle as a persistent brain–computer interface. Proc. Natl Acad. Sci. USA 112, 3920–3925 (2015).

Mahmood, M. et al. Fully portable and wireless universal brain-machine interfaces enabled by flexible scalp electronics and deep learning algorithm. Nat. Mach. Intell. 1, 412–422 (2019).

Hong, K.-S. & Khan, M. J. Hybrid brain–computer interface techniques for improved classification accuracy and increased number of commands: a review. Front. Neurobot 11, 35 (2017).

Spielberg, S., Kennedy, K. & Curtis, B. AI: Artificial Intelligence. (Warner Bros. Entertainment Australia Pty Limited, 2007).

Lin, C., Hasan, S. S. & Bai, O. Robotic navigation with human brain signals and deep reinforcement learning. IEEE International Conference on Robotics, Control and Automation Engineering (RCAE). 278–283 (IEEE, Wuhan, 2021).

Akinola, I. et al. Accelerated robot learning via human brain signals. IEEE https://doi.org/10.1109/ICRA40945.2020.9196566 (2020).

Chakraborti, T., Sreedharan, S., Kulkarni, A. & Kambhampati, S. Alternative modes of interaction in proximal human-in-the-loop operation of robots. Preprint at https://arxiv.org/abs/1703.08930 (2017).

Ehrlich, S. K. & Cheng, G. Human-agent co-adaptation using error-related potentials. J. Neural Eng. 15, 066014 (2018).

Luo, T.-J., Fan, Y.-C. & Lv, J.-T. Deep reinforcement learning from error-related potentials via an EEG-based brain-computer interface. IEEE International Conference on Bioinformatics and Biomedicine (BIBM). 697–701 (IEEE, Spain, 2018).

Wang, Z., Shi, J., Akinola, I. & Allen, P. Maximizing BCI human feedback using active learning. IEEE https://doi.org/10.1109/IROS45743.2020.9341669 (2020).

Xu, D., Agarwal, M., Gupta, E., Fekri, F. & Sivakumar, R. Accelerating Reinforcement Learning using EEG-based implicit human feedback. Neurocomputing 460, 139–153 (2021).

Salazar-Gomez, A. F., DelPreto, J., Gil, S., Guenther, F. H. & Rus, D. Correcting robot mistakes in real time using EEG signals. IEEE https://doi.org/10.1109/ICRA.2017.7989777 (2017).

Kim, S. K., Kirchner, E. A., Stefes, A. & Kirchner, F. Intrinsic interactive reinforcement learning–using error-related potentials for real world human-robot interaction. Sci. Rep. 7, 1–16 (2017).

Ullsperger, M., Fischer, A. G., Nigbur, R. & Endrass, T. Neural mechanisms and temporal dynamics of performance monitoring. Trends Cogn. Sci. 18, 259–267 (2014).

Christiano, P. F. et al. Deep reinforcement learning from human preferences. Adv. Neural Inf. Process. Syst. 30, 4299–4307 (2017).

Arakawa, R., Kobayashi, S., Unno, Y., Tsuboi, Y. & Maeda, S.-I. Dqn-tamer: Human-in-the-loop reinforcement learning with intractable feedback. Preprint at https://arxiv.org/abs/1810.11748 (2018).

Lin, J. et al. A review on interactive reinforcement learning from human social feedback. IEEE Access 8, 120757–120765 (2020).

Littman, M. L. Reinforcement learning improves behaviour from evaluative feedback. Nature 521, 445–451 (2015).

Zhang, R., Torabi, F., Guan, L., Ballard, D. H. & Stone, P. Leveraging human guidance for deep reinforcement learning tasks. Preprint at https://arxiv.org/abs/1909.09906 (2019).

Xu, M., He, F., Jung, T.-P., Gu, X. & Ming, D. Current Challenges for the Practical Application of Electroencephalography-Based Brain–Computer Interfaces. Engineering 7, 1710–1712 (2021).

Luck, S. J. An Introduction to the Event-Related Potential Technique. (MIT Press, 2014).

Nawrocki, R. A. et al. Self‐adhesive and ultra‐conformable, Sub‐300 nm dry thin‐film electrodes for surface monitoring of biopotentials. Adv. Funct. Mater. 28, 1803279 (2018).

Kabiri Ameri, S. et al. Graphene electronic tattoo sensors. ACS Nano 11, 7634–7641 (2017).

Stauffer, F. et al. Skin conformal polymer electrodes for clinical ECG and EEG recordings. Adv. Healthc. Mater. 7, 1700994 (2018).

Zhang, L. et al. Fully organic compliant dry electrodes self-adhesive to skin for long-term motion-robust epidermal biopotential monitoring. Nat. Commun. 11, 1–13 (2020).

Reis, P., Hebenstreit, F., Gabsteiger, F., von Tscharner, V. & Lochmann, M. Methodological aspects of EEG and body dynamics measurements during motion. Front. Hum. Neurosci. 8, 156 (2014).

Castermans, T., Duvinage, M., Cheron, G. & Dutoit, T. About the cortical origin of the low-delta and high-gamma rhythms observed in EEG signals during treadmill walking. Neurosci. Lett. 561, 166–170 (2014).

Kline, J. E., Huang, H. J., Snyder, K. L. & Ferris, D. P. Isolating gait-related movement artifacts in electroencephalography during human walking. J. Neural Eng. 12, 046022 (2015).

Antonsson, E. K. & Mann, R. W. The frequency content of gait. J. Biomech. 18, 39–47 (1985).

Lee, K. et al. Mechano-acoustic sensing of physiological processes and body motions via a soft wireless device placed at the suprasternal notch. Nat. Biomed. Eng. 4, 148–158 (2020).

Roy, Y. et al. Deep learning-based electroencephalography analysis: a systematic review. J. Neural Eng. 16, 051001 (2019).

Sainath, T. N., Vinyals, O., Senior, A. & Sak, H. Convolutional, long short-term memory, fully connected deep neural networks. IEEE. https://doi.org/10.1109/ICASSP.2015.7178838 (2015).

Ditthapron, A., Banluesombatkul, N., Ketrat, S., Chuangsuwanich, E. & Wilaiprasitporn, T. Universal joint feature extraction for P300 EEG classification using multi-task autoencoder. IEEE Access 7, 68415–68428 (2019).

Liu, M. et al. Deep learning based on batch normalization for P300 signal detection. Neurocomputing 275, 288–297 (2018).

Dikmen, M. & Burns, C. M. Autonomous driving in the real world: experiences with Tesla autopilot and summon. In Proc. 8th International Conference on Automotive User Interfaces and Interactive Vehicular Applications. 225–228 (ACM, 2016).

Kingma, D. P. & Ba, J. Adam: a method for stochastic optimization. Preprint at https://arxiv.org/abs/1412.6980 (2014).

Acknowledgements

The authors would like to thank Dr. Soonkwon Paik (Hyundai Motors) for helpful discussions concerning the devices. The authors thank Sungho Gong for participating in the measurement experiment and providing photos. This research was proposed by the Hyundai-NGV project but was mainly supported by the National Research Foundation (NRF) funded by the Korean government (MSIT) (NRF-2019M3C7A1032076 and NRF-2020M3C1B8016137). The work was also partially funded by the SKKU Research Project (S-2021-2151-000 International A).

Author information

Contributions

J.H.S. and T.-i.K. designed the experiments; J.H.S., H.R., and J.O. performed the experiments; J.H.S. and T.-i.K. led this work; J.K., S.J.K., and H.P. analyzed the EEG data; and J.H.S., J.U.K., and T.-i.K. wrote the paper.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Shin, J.H., Kwon, J., Kim, J.U. et al. Wearable EEG electronics for a Brain–AI Closed-Loop System to enhance autonomous machine decision-making. npj Flex Electron 6, 32 (2022). https://doi.org/10.1038/s41528-022-00164-w

DOI: https://doi.org/10.1038/s41528-022-00164-w
