Abstract

Optical imaging through diffusive, visually opaque barriers and around corners is an important challenge in many fields, ranging from defense to medical applications. Recently, novel techniques that combine time-of-flight (TOF) measurements with computational reconstruction have allowed breakthrough imaging and tracking of objects hidden from view. These light detection and ranging (LiDAR)-based approaches require active short-pulsed illumination and ultrafast time-resolved detection. Here, bringing notions from passive radio detection and ranging (RADAR) and passive geophysical mapping approaches, we present an optical TOF technique that allows passive localization of light sources and reflective objects through diffusive barriers and around corners. Our approach retrieves TOF information from temporal cross-correlations of scattered light, via interferometry, providing temporal resolution that surpasses state-of-the-art ultrafast detectors by three orders of magnitude. While our passive approach is limited by signal-to-noise considerations to relatively sparse scenes, we demonstrate passive localization of multiple white-light sources and reflective objects hidden from view using a simple setup.


Introduction

In recent years there have been great advancements in techniques that enable non-line-of-sight (NLOS) optical imaging for a variety of applications, ranging from microscopic imaging through scattering tissue1,2,3,4,5,6,7,8 to around-the-corner imaging1,3,5,9,10,11,12,13,14,15,16,17,18,19. The enabling principle behind these techniques is the use of scattered light for computational reconstruction of objects that are hidden from direct view. This has been achieved in a variety of approaches, such as wavefront shaping3, inverse-problem solutions based on intensity-only imaging18,19, speckle correlations4,5,20, phase-space measurements17, and time-resolved measurements8,9,10,11,12,13,14. While wavefront-shaping approaches allow diffraction-limited resolution, they require the presence of a guide-star at the target position21 or long iterative optimization procedures. Inverse-problem solutions based on intensity-only imaging do not require prior mapping of the scattering barrier, but suffer from a drastically lower resolution, dictated by the dimensions of the diffusive halo. Phase-space based approaches17, which localize hidden sources by measuring the positions and angles of the scattered light, also suffer from a localization resolution that is dictated by the scattering angle of the barrier: large scattering angles blur the k-space traces of objects that are not adjacent to the barrier.

A considerably higher resolution that is insensitive to the scattering angle of the barrier was recently obtained using speckle correlations4,5,6,7 or by time-of-flight (TOF)8,9,10,11,12,13,14,15,16 based approaches. The former rely on angular correlations of scattered light, known as the memory-effect22, and allow diffraction-limited, single shot, passive imaging, using a simple setup. However, memory-effect based approaches suffer from an extremely limited field of view (FOV), and are limited to planar objects and to narrow spectral bandwidths. TOF-based approaches have recently allowed three-dimensional (3D) tracking and reconstruction of macroscopic scenes hidden from view9,13,15,16. These approaches utilize the principle of light detection and ranging (LiDAR) to obtain 3D spatial information from temporal measurements of reflected light that experienced several reflections (bounces). This is achieved by using ultrafast detectors to measure the time it takes a short light pulse to travel from an illumination point on the diffusive barrier, to the target object and back to the barrier. The scene is then computationally reconstructed from multiple TOF measurements at different spatial positions on the barrier.

Intuitively, one may assume that in order to measure time of flight, a pulsed source is mandatory, as is indeed used in most common LiDAR, radio detection and ranging (RADAR), and sound navigation and ranging (SONAR) systems. However, short-pulsed illumination is not a fundamental requirement: it is possible to obtain high-resolution temporal information from temporal cross-correlations of ambient broadband noise, without any active or controlled source. This principle is put to use in helioseismology23, ultrasound24, geophysics25, passive RADAR26, and recently in optical studies of complex media27.

Here, we adapt these principles of passive temporal-correlation-based imaging for 3D localization of hidden broadband light sources and reflective objects through diffusive barriers and around a corner. We retrieve high-resolution TOF information from scattered light using a simple, completely passive setup based on a conventional camera. In our approach, temporal cross-correlations of scattered light are measured in a single shot via low-coherence (white-light) interferometry, using controllable masks. Unlike conventional active TOF/LiDAR, where the temporal resolution is dictated by the pulse duration or by the detectors' response time, in our approach the temporal resolution is dictated by the coherence time of the scene illumination. For natural white-light illumination, as used in our experiments, the TOF temporal resolution is approximately 10 fs, three orders of magnitude better than that of state-of-the-art ultrafast detectors9,13,16. However, while the localization resolution of our approach is superior to what is possible with ultrafast detectors, the passive nature of our approach and its reliance on low-coherence interferometry fundamentally limit it, through signal-to-noise ratio (SNR) considerations, to the localization of a relatively small number of sources or reflectors, and it requires relatively long exposure times. Unlike intensity-only18,19 and phase-space17 approaches, the localization resolution in our approach is dictated by the TOF temporal resolution and not by the scattering angle of the barrier. We thus present localization results obtained through highly scattering diffusers having a scattering angle of 80°, and using light scattered off a white-painted surface.

Results

Principle

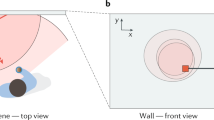

The principle of our approach is described in Fig. 1. Consider a small light source, or a reflective object hidden behind a diffusive barrier (Fig. 1a). For a source transmitting short pulses (or an object reflecting a short pulse), the source position can be determined by measuring the time of arrival of light from the source to different points on the barrier. Such TOF-based spatial localization is straightforward, as was recently demonstrated using ultrafast detectors9,13,16. However, when the illumination source is an uncontrolled continuous broadband noise source, such as natural white-light illumination, measuring the relative TOF is still surprisingly possible by temporally cross-correlating the random time-varying fields arriving at the barrier (Fig. 1b, c). Such passive correlation-imaging28 is the underlying principle of our approach.

Passive TOF by temporal cross-correlations. a–c Principle: a white-light source or a reflective object is hidden behind a diffusive barrier. a The random noise field from the source is measured at two points on the barrier by detectors 1 (blue) and 2 (orange). b For a thin barrier, the measured fields are replicas of the same broadband noise, shifted by the time-of-flight (TOF) difference Δτ = ΔL/c. c Cross-correlating the measured fields reveals the TOF difference as a sharp cross-correlation peak. d Optical implementation: light from two points on the barrier is selected by a controllable mask and interferes on a high-resolution camera. A single long-exposure camera shot, Icam(x), provides the fields' temporal cross-correlation at different time delays, t(x) = ΔL(x)/c. e–g Numerical example of the measured intensity patterns on a single camera row. e For a mask with a single aperture, a speckle intensity pattern is measured. f For a double-aperture mask, a cross-correlation peak appears as white-light interference fringes on top of the speckle pattern, providing the TOF difference Δτ. g Zoom-in on (f); the TOF resolution is given by the source coherence time

A numerical example of this principle is shown in Fig. 1a–c: the random time-varying fields from a broadband white-light source are measured at two positions on the barrier by two detectors (Fig. 1a). For a single hidden point source, the fields arriving at the barrier, E1(t) and E2(t), are identical, delayed versions of the source random field Es(t): E1(t) = Es(t − τ1) and E2(t) = Es(t − τ2) (Fig. 1b). Assuming free-space propagation between the source and the barrier, τi = Li/c, where Li is the optical path length between the source and the i-th measurement point, and c is the speed of light. The TOF difference Δτ = ΔL/c = (L1 − L2)/c can be determined by temporally cross-correlating the two arriving random fields (Fig. 1c). For a sufficiently thin scattering barrier, the cross-correlation of the measured fields exiting the barrier is identical to the cross-correlation of the arriving fields, i.e., it possesses a sharp peak, with a temporal width equal to the coherence time of the illumination, centered at the relative time delay Δτ (see Fig. 1c and Supplementary Note 11). The source position can be determined in three dimensions (3D) from three or more such TOF measurements taken at different points on the barrier, in a manner similar to the principle of the global positioning system (GPS) and to recent NLOS imaging works in optics9,13,16.
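The claim that cross-correlating two delayed copies of the same broadband noise recovers Δτ can be checked numerically. The sketch below is a minimal illustration, not the authors' simulation code; it assumes a complex baseband noise field with a Gaussian spectral amplitude of width ~10^14 Hz, a 1 fs sampling grid, and hypothetical delays τ1 = 120 fs and τ2 = 40 fs. The cross-correlation uses the convention (E1 ⋆ E2)(t) = ∫E1(t' + t)E2*(t')dt', for which the peak sits at Δτ = τ1 − τ2.

```python
import numpy as np

DT = 1e-15  # sampling interval (s); assumed 1 fs grid

def delayed_noise_fields(tau1, tau2, n=2**16, bandwidth=1e14, seed=0):
    """Two copies of the same random broadband field, delayed by tau1 and tau2 (circularly)."""
    rng = np.random.default_rng(seed)
    f = np.fft.fftfreq(n, d=DT)
    # Assumed noise model: Gaussian spectral amplitude with uniformly random spectral phases
    spectrum = np.exp(-(f / bandwidth) ** 2) * np.exp(2j * np.pi * rng.random(n))
    # A delay tau multiplies the spectrum by exp(-2*pi*i*f*tau), giving E_i(t) = E_s(t - tau_i)
    e1 = np.fft.ifft(spectrum * np.exp(-2j * np.pi * f * tau1))
    e2 = np.fft.ifft(spectrum * np.exp(-2j * np.pi * f * tau2))
    return e1, e2

def cross_correlation(e1, e2):
    """Circular (E1 ⋆ E2)[k] = sum_n E1[n + k] * conj(E2[n]), via the correlation theorem."""
    return np.fft.ifft(np.fft.fft(e1) * np.conj(np.fft.fft(e2)))

e1, e2 = delayed_noise_fields(tau1=120e-15, tau2=40e-15)
corr = cross_correlation(e1, e2)
lags = np.fft.fftfreq(corr.size) * corr.size * DT   # time lag of each correlation sample (s)
delta_tau = lags[np.argmax(np.abs(corr))]
print(f"estimated TOF difference: {delta_tau * 1e15:.1f} fs")  # 80.0 fs = tau1 - tau2
```

The random spectral phases cancel in the product of the two spectra, which is why the correlation peak appears at Δτ regardless of the particular noise realization.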

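The spatial localization accuracy is dictated by the TOF temporal resolution, δt, i.e., by the temporal width of the cross-correlation peak. For a broadband source, this width is the source coherence time δt ≈ tc ≈ 1/Δf, where Δf is the source spectral bandwidth. This is easily shown by noting that the cross-correlation of the two fields, \(E_1(t) \star E_2(t)\), is the autocorrelation of the source field, shifted by Δτ:

\[ (E_1 \star E_2)(t) = \left[E_{\mathrm{s}}(t - \tau_1)\right] \star \left[E_{\mathrm{s}}(t - \tau_2)\right] = (E_{\mathrm{s}} \star E_{\mathrm{s}})(t - \Delta\tau), \]

where the cross-correlation is defined as the time average \((E_1 \star E_2)(t) = \langle E_1(t' + t)\,E_2^*(t') \rangle_{t'}\).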

For a broadband source, the autocorrelation \((E_{\mathrm{s}} \star E_{\mathrm{s}})(t)\) is a narrow function, sharply peaked around t = 0, with a temporal width given by δt ≈ 1/Δf according to the Wiener-Khinchin theorem. Thus, the fields' cross-correlation displays a sharp peak at the time delay t = Δτ = (L1 − L2)/c, providing the TOF difference from the hidden source to the two points on the barrier (Fig. 1c). Such a single TOF difference measurement localizes the source to a hyperboloid surface whose foci are the two detectors. Repeating this passive TOF measurement at two additional positions on the barrier localizes the source in 3D.
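The scaling δt ≈ 1/Δf follows from the Wiener-Khinchin theorem: the autocorrelation is the inverse Fourier transform of the power spectrum. The sketch below evaluates this directly for an assumed Gaussian power spectrum (the real LED spectrum is not Gaussian, so the prefactor is illustrative only). For a Gaussian spectrum the product FWHM·Δf equals 4 ln 2/π ≈ 0.88, consistent with the order-of-magnitude estimate δt ≈ 1/Δf used in the text.

```python
import numpy as np

def autocorrelation_fwhm(delta_f, n=2**16, dt=0.1e-15):
    """FWHM (s) of |(Es ⋆ Es)(t)| for a Gaussian power spectrum whose FWHM is delta_f (Hz)."""
    f = np.fft.fftfreq(n, d=dt)
    power = np.exp(-4 * np.log(2) * (f / delta_f) ** 2)   # S(f), FWHM = delta_f
    # Wiener-Khinchin: the autocorrelation is the inverse Fourier transform of S(f)
    gamma = np.abs(np.fft.fftshift(np.fft.ifft(power)))
    t = (np.arange(n) - n // 2) * dt                       # zero lag sits at index n // 2
    above_half = t[gamma >= gamma.max() / 2]
    return above_half.max() - above_half.min()

for delta_f in (5e13, 1e14, 2e14):
    width = autocorrelation_fwhm(delta_f)
    print(f"Δf = {delta_f:.0e} Hz: FWHM = {width * 1e15:.1f} fs, FWHM·Δf ≈ {width * delta_f:.2f}")
```

Doubling the bandwidth halves the correlation-peak width, which is the sense in which broader illumination spectra give finer TOF resolution.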

Direct implementation of such a field correlation approach using two optical detectors is not straightforward since measuring the temporal variations in optical fields requires phase-sensitive ultrafast detection with sub-femtosecond temporal response. Conventional optical detectors measure only the optical intensity averaged over response times that are several orders of magnitude longer. However, access to the optical fields’ temporal cross-correlation is directly possible via interference, even with very slow detectors. Our approach utilizes this principle to implement passive optical TOF measurements, as presented in Fig. 1d–g and as explained below.

Figure 1d depicts a simplified optical setup that enables spatially resolved temporal cross-correlation measurements via interferometry, using a conventional high-resolution camera. First, the light from two chosen positions on the barrier is selected by a mask having two apertures. The mask is placed adjacent to the barrier or imaged onto its surface. The light fields passing through the two apertures, E1(t) and E2(t), interfere on a camera placed at a sufficiently large distance from the mask. In a single long-exposure image, each camera pixel intensity value, Icam(x), at a transverse position x, is the time-integrated optical intensity resulting from the interference of the two fields:

\[ I_{\mathrm{cam}}(x) = \left\langle \left| E_1(t) + E_2\!\left(t - \frac{\Delta L(x)}{c}\right) \right|^2 \right\rangle_t = I_1 + I_2 + 2\,\mathrm{Re}\left\{ (E_1 \star E_2)\!\left(\frac{\Delta L(x)}{c}\right) \right\}, \]

where Ij is the time-averaged intensity passing through the j-th aperture. Thus, a single camera row provides the fields' temporal cross-correlation, \(E_1 \star E_2\), sampled at thousands of different time delays given by the positions of the camera pixels, x, along that row: t(x) = ΔL(x)/c, where ΔL(x) is the optical path difference from the two apertures to the pixel at x. The sampled time delays are dictated by the system's geometry (see Supplementary Fig. 6). Using a single aperture pair, the number of time delays that can be sampled in a single exposure is limited by the number of pixels in a camera row, which was 2160 in our experiments.
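How a single camera row samples the cross-correlation can be sketched by summing two-beam interference over the source spectrum, which is what a slow detector records. The sketch assumes a small-angle geometry, ΔL(x) ≈ D·x/z_cam, with hypothetical values (aperture separation D = 1.2 mm, mask-to-camera distance z_cam = 0.2 m, 6.5 µm pixels, a Gaussian spectrum centered at 550 nm with 100 nm FWHM) and ignores speckle by taking I1 = I2 = 1. The brightest (zero-delay) fringe appears where ΔL(x) equals the source path difference ΔL.

```python
import numpy as np

C = 299_792_458.0  # speed of light (m/s)

def camera_row(delta_L_source, n_pix=2160, pitch=6.5e-6, D=1.2e-3, z_cam=0.2,
               lam0=550e-9, dlam=100e-9, n_lambda=400):
    """Time-integrated two-aperture interference along one camera row (no speckle, I1 = I2 = 1)."""
    x = (np.arange(n_pix) - n_pix // 2) * pitch          # pixel positions (m)
    dL_x = D * x / z_cam                                  # assumed small-angle ΔL(x) after the mask
    lam = np.linspace(lam0 - 2 * dlam, lam0 + 2 * dlam, n_lambda)
    weights = np.exp(-4 * np.log(2) * ((lam - lam0) / dlam) ** 2)
    weights /= weights.sum()                              # normalized source spectrum
    k = 2 * np.pi / lam
    # Each wavelength contributes I1 + I2 + 2 sqrt(I1 I2) cos(k [ΔL(x) - ΔL]); a slow
    # detector sums these incoherently, which gives the time-integrated intensity.
    fringes = 2.0 + 2.0 * np.cos(np.outer(k, dL_x - delta_L_source))
    return dL_x, weights @ fringes

dL_x, row = camera_row(delta_L_source=15e-6)             # hypothetical ΔL = 15 µm
dL_est = dL_x[np.argmax(row)]                            # zero-delay fringe marks ΔL(x) = ΔL
print(f"ΔL ≈ {dL_est * 1e6:.2f} µm, Δτ ≈ {dL_est / C * 1e15:.1f} fs")  # ≈ 15 µm, ≈ 50 fs
```

Away from the zero-delay point, the wavelengths dephase and the fringes wash out over roughly one coherence length, which is what localizes the fringe packet on the camera.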

The source TOF difference can be easily determined from the position of the cross-correlation peak, manifested as low-coherence interference fringes (Fig. 1f, g). This bears similarity to white-light interferometry29 and optical coherence tomography (OCT)30. However, in contrast to these techniques, in our approach no reference arm or external source is used, and the measurements are self-referenced, i.e., cross-correlated.

In practice, for a strongly scattering barrier, the light from each of the apertures on the mask produces a spatially varying random speckle intensity pattern on the camera pixels (Fig. 1e). However, the speckle intensity patterns do not mask the low-coherence interference fringes, since we design the fringe period to be considerably smaller than the speckle grain size (Figs. 1f, g and 2b, c).

Experimental TOF measurement of a hidden source through a diffusive barrier. a–c Raw camera images: a The speckle pattern measured with a single-aperture mask (top). b With a double-aperture mask (top), localized interference fringes (highlighted by a red square) provide the TOF difference from the hidden source to the two apertures. c Zoom-in on (b). d–o Elevation and horizontal information are multiplexed using a mask with two pairs of apertures. 3D localization is obtained by shifting the mask for multiple TOF measurements: d–f Mask positions as imaged on the barrier, including horizontal (e) and vertical (f) shifts. g–i Raw camera images for each mask position. j–l Vertical-fringe envelope amplitude as detected by a filtered Hilbert transform, providing the TOF difference between the two horizontally separated apertures (bottom: sum over rows). m–o Same as (j–l) for the horizontal-fringe envelope amplitude (right: sum over columns). Scale bars: 100 fs

3D passive TOF measurement through a diffusive barrier

Figure 2 presents experimental results of passive TOF measurement using a setup based on the design of Fig. 1d. The full experimental setup is given in Supplementary Fig. 1. As a first demonstration, a single small white-light light-emitting diode (LED) source was hidden behind a highly scattering diffusive barrier having an 80° scattering angle and no measurable ballistic component (80° light shaping diffuser, Newport). In this experiment, a movable mask composed of four small apertures (Fig. 2d) is used to interfere light from two pairs of points on the barrier. The pairs are spaced vertically and horizontally to obtain TOF information on the elevation and transverse position of the hidden source, respectively, in a single camera shot.

When a mask with only a single aperture is used, the camera image is a random speckle pattern (Fig. 2a), which provides no useful spatial information on the source position. However, when a mask with two horizontally spaced apertures is used, low-coherence interference fringes appear on top of the random speckle pattern at a specific horizontal position on the camera image (Fig. 2b, c). This white-light fringe pattern results from the interference between the speckle patterns transmitted through each of the two apertures. Since the hidden source is a broadband white-light source, the interference fringes are located only around zero path-delay difference, i.e., where the path difference accumulated after the diffuser, ΔL(x), equals the path difference accumulated before the diffuser: ΔL(x) = ΔL, where x is the camera pixel transverse position (Fig. 1d), similar to Young's double-slit experiment with low-coherence light. Thus, the optical path length (or TOF) difference of the light from the source to each of the two apertures can be extracted from the fringe position in a single camera image (Fig. 2c), with a resolution given by the coherence length (or coherence time) of the source. The practical TOF resolution is also limited by any additional path-delay spread inside the diffusive barrier (see Discussion section and Supplementary Note 11).

A single TOF difference, measured from a single camera image, localizes the source to a hyperboloid surface. To localize the source to a single point, additional measurements are required: two additional measurements, with the mask shifted to two different positions, provide two additional hyperboloids, and the intersection of the three hyperboloids localizes a single source to a single point in 3D.
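The intersection of three hyperboloids can be computed numerically. The sketch below is one possible solver, not the procedure used in the paper (which relies on back-projection): it assumes three hypothetical aperture pairs on the barrier plane z = 0 (a horizontal pair, a vertical pair, and the horizontal pair after a mask shift), simulates noiseless TOF differences for a hypothetical source, and solves |r − a_i| − |r − b_i| = cΔτ_i by a coarse grid search followed by Levenberg-Marquardt refinement. Because ΔL is mirror-symmetric about the barrier plane, the solution with z > 0 is reported.

```python
import numpy as np

C = 299_792_458.0  # speed of light (m/s)

# Hypothetical aperture pairs (a_i, b_i) on the barrier plane z = 0, in metres
PAIRS = np.array([
    [[-0.10, 0.00, 0.0], [0.00, 0.00, 0.0]],   # horizontal pair
    [[0.00, -0.05, 0.0], [0.00, 0.05, 0.0]],   # vertical pair
    [[0.05, 0.00, 0.0], [0.15, 0.00, 0.0]],    # horizontal pair after shifting the mask
])

def path_differences(points):
    """ΔL_i = |r - a_i| - |r - b_i| for points r of shape (..., 3); returns shape (..., n_pairs)."""
    r = np.asarray(points, dtype=float)[..., None, :]
    return np.linalg.norm(r - PAIRS[:, 0], axis=-1) - np.linalg.norm(r - PAIRS[:, 1], axis=-1)

def localize(delta_tau, n_grid=41, n_iter=100):
    """Intersect the hyperboloids ΔL_i = c Δτ_i: coarse grid search, then Levenberg-Marquardt."""
    target = C * np.asarray(delta_tau, dtype=float)
    axes = (np.linspace(-0.2, 0.2, n_grid), np.linspace(-0.2, 0.2, n_grid),
            np.linspace(0.05, 0.8, n_grid))               # assumed search volume behind the barrier
    grid = np.stack(np.meshgrid(*axes, indexing="ij"), axis=-1).reshape(-1, 3)
    r = grid[np.argmin(((path_differences(grid) - target) ** 2).sum(axis=1))]
    lam = 1e-3                                            # damping parameter
    for _ in range(n_iter):
        res = path_differences(r) - target
        ua = (r - PAIRS[:, 0]) / np.linalg.norm(r - PAIRS[:, 0], axis=1)[:, None]
        ub = (r - PAIRS[:, 1]) / np.linalg.norm(r - PAIRS[:, 1], axis=1)[:, None]
        jac = ua - ub                                     # Jacobian d(ΔL_i)/dr
        step = np.linalg.solve(jac.T @ jac + lam * np.eye(3), -jac.T @ res)
        new_res = path_differences(r + step) - target
        if new_res @ new_res < res @ res:                 # accept step, trust the model more
            r, lam = r + step, lam * 0.3
        else:                                             # reject step, damp more strongly
            lam *= 10.0
        if np.linalg.norm(step) < 1e-12:
            break
    return np.array([r[0], r[1], abs(r[2])])              # mirror symmetry: report z > 0

source = np.array([0.05, -0.03, 0.40])                    # hypothetical hidden source (m)
delta_tau = path_differences(source) / C                 # simulated noiseless TOF differences (s)
estimate = localize(delta_tau)
print(np.round(estimate, 4))                              # should recover the source position
```

With noisy TOF data, more than three pairs and a least-squares fit (or back-projection, as in the paper) would be needed, since the depth direction is poorly conditioned for small aperture baselines.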

Using more complex masks can reduce the required number of camera shots. Inspired by aperture masking interferometry31,32, we designed a mask that multiplexes two TOF measurements in a single camera shot. This mask, presented in Fig. 2d, comprises two pairs of apertures, simultaneously providing two TOF measurements by generating both vertical and horizontal fringes. Figure 2g presents an example of a raw camera image acquired using this mask.

The positions of the horizontal and vertical high-spatial-frequency fringes can be easily extracted using spatial band-pass filtering and a Hilbert transform of the camera image (or alternatively by a spectrogram analysis of the camera image rows and columns, as detailed in Supplementary Fig. 3). The results of such filtering of the camera image of Fig. 2g are shown in Fig. 2j, m, revealing the vertical and horizontal fringe amplitudes, respectively. The additional required TOF measurements are obtained by shifting the mask horizontally (Fig. 2e, h) and/or vertically (Fig. 2f, i). When the mask is shifted horizontally, only the position of the vertical fringes changes (Fig. 2k), while the position of the horizontal fringes remains fixed, as expected (Fig. 2n). When the mask is shifted vertically, the horizontal fringes shift upward (Fig. 2o), and the vertical ones remain fixed (Fig. 2l).
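The band-pass plus Hilbert-transform step can be sketched along a single camera row (applied to rows for vertical fringes and to columns for horizontal fringes). The sketch keeps only positive spatial frequencies near an assumed fringe frequency and doubles them, which yields the analytic signal of the band-passed row; its magnitude is the fringe envelope. The fringe period (14 pixels), filter bandwidth, and synthetic speckle background are hypothetical choices, and `scipy.signal.hilbert` applied after a separate band-pass filter would be an equivalent alternative.

```python
import numpy as np

def fringe_envelope(row, period_px, rel_bandwidth=0.3):
    """Envelope of fringes of a known period along a 1D camera row (FFT band-pass + Hilbert)."""
    spectrum = np.fft.fft(row - row.mean())
    f = np.fft.fftfreq(row.size)                          # spatial frequency (cycles / pixel)
    f0 = 1.0 / period_px
    # Keep only the positive-frequency band around f0; doubling it and inverting yields
    # the analytic signal of the band-passed row, whose magnitude is the fringe envelope.
    band = (f > f0 * (1 - rel_bandwidth)) & (f < f0 * (1 + rel_bandwidth))
    return np.abs(np.fft.ifft(2.0 * spectrum * band))

# Synthetic row: slowly varying "speckle" background (grain >> fringe period) plus one fringe packet
rng = np.random.default_rng(1)
n_pix, period, center = 2160, 14.0, 1200
px = np.arange(n_pix)
kernel = np.exp(-0.5 * (np.arange(-150, 151) / 50.0) ** 2)
speckle = np.convolve(rng.random(n_pix + 300), kernel / kernel.sum(), mode="valid")
row = 2.0 * speckle + 0.3 * np.exp(-0.5 * ((px - center) / 60.0) ** 2) * np.cos(2 * np.pi * px / period)
envelope = fringe_envelope(row, period_px=period)
print(int(np.argmax(envelope)))   # should land at (or next to) pixel 1200
```

Because the speckle grain is much larger than the fringe period, the background has almost no energy in the pass band, which is the same design condition stated earlier for the fringe period and speckle grain size.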

Localization of multiple light sources

In the case of multiple hidden sources, each single camera image contains several interference fringe patterns at different positions. Each fringe pattern position provides the TOF for a single hidden source. For independent spatially incoherent sources, such as natural light sources, the number of interference fringe patterns is equal to the number of hidden sources, as the light from different sources does not interfere.

As in active TOF-based NLOS imaging approaches, localizing multiple hidden sources without ambiguity requires a larger number of TOF measurements. This can be achieved by shifting a single mask to multiple positions and measuring the TOF difference at each mask position. An experimental example of such localization of two and three hidden sources in two dimensions is shown in Fig. 3. Figure 3b displays the measured fringe amplitude envelope at 40 different mask positions: each row in Fig. 3b displays the fringe amplitude extracted from a single camera image (see Supplementary Fig. 3), where the horizontal axis is the TOF delay (camera pixel) and the vertical axis is the mask position. Inspection of Fig. 3b clearly reveals that two hidden sources are present in the hidden scene.

Experimental localization of multiple hidden sources. a Simplified sketch of the setup with two hidden sources (white-light LEDs). b Detected envelope of the interference fringes as a function of the mask position (vertical axis). The fringe positions (horizontal axis) mark the TOF differences. c Hidden scene reconstructed from (b) by back-projection. Each fringe position in a row of (b) localizes the sources to a hyperboloid. The sources' real positions are marked by ‘x’. d Back-projection reconstruction of a scene containing three hidden sources. Scale bar: 100 fs

Each intensity peak in each row of Fig. 3b localizes a source to a hyperboloid. The sources are unambiguously localized from the intersections of multiple back-projected hyperboloids (see Supplementary Note 4), as demonstrated in Fig. 3c, d.
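A minimal 2D back-projection in the spirit of Fig. 3c can be sketched as follows; the exact weighting used in Supplementary Note 4 may differ. Each measured TOF difference at each mask position adds a Gaussian-weighted hyperbola, ΔL(x, z) = cΔτ, to an (x, z) image, and true sources appear where many hyperbolas overlap. The aperture separation D = 5 mm, the 40 mask positions, the two source positions, and the Gaussian width (20 µm, broadened beyond the coherence length so that a 1 mm grid does not miss the hyperbolas) are all hypothetical.

```python
import numpy as np

C = 299_792_458.0                              # speed of light (m/s)
D = 5e-3                                       # hypothetical aperture separation (m)
MASK_CENTERS = np.linspace(-0.05, 0.05, 40)    # hypothetical mask scan positions on the barrier (m)

def delta_L(x, z, xm):
    """ΔL = |r - a| - |r - b| for apertures a, b at (xm - D/2, 0) and (xm + D/2, 0); barrier at z = 0."""
    return np.hypot(x - (xm - D / 2), z) - np.hypot(x - (xm + D / 2), z)

def back_project(tofs, xs, zs, sigma=20e-6):
    """Sum Gaussian-weighted hyperbolas; tofs[m] lists the TOF differences found at mask position m."""
    X, Z = np.meshgrid(xs, zs, indexing="ij")
    image = np.zeros_like(X)
    for xm, taus in zip(MASK_CENTERS, tofs):
        dL = delta_L(X, Z, xm)
        for tau in taus:
            image += np.exp(-((dL - C * tau) ** 2) / (2 * sigma ** 2))
    return image

sources = [(-0.03, 0.30), (0.04, 0.40)]       # hypothetical hidden sources (x, z) in metres
tofs = [[delta_L(sx, sz, xm) / C for sx, sz in sources] for xm in MASK_CENTERS]
xs, zs = np.linspace(-0.1, 0.1, 201), np.linspace(0.1, 0.6, 251)
image = back_project(tofs, xs, zs)
ix, iz = np.unravel_index(np.argmax(image), image.shape)
print(f"brightest pixel: x = {xs[ix]:.3f} m, z = {zs[iz]:.3f} m")
```

In this noiseless sketch each source pixel collects one unit of weight from every mask position, while other pixels are crossed by only a few hyperbolas, which is why the sources stand out without any prior on their number.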

In the experimental results of Fig. 3, the mask position was mechanically scanned. A similar acquisition can be performed without any moving parts by replacing the mask with a computer-controlled spatial light modulator (SLM). An experimental demonstration using such a programmable mask for passive TOF measurements is presented in Supplementary Fig. 2. The advantages of an SLM-based mask are its versatility and speed, in particular for advanced multiplexing using complex multi-aperture masks (see Supplementary Note 2).

Localization around a corner

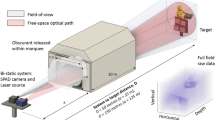

Our passive TOF approach can also be used to localize sources around a corner, using light scattered off diffusive white-painted walls9,13,16. Figure 4 presents a proof-of-principle experiment for around-the-corner passive localization. Figure 4a shows the experimental arrangement, which is conceptually identical to the design of Fig. 2a, except that the diffusive barrier is replaced by a white-painted wall and the wall's surface is imaged onto the movable mask.

Localizing multiple light sources around a corner. a Setup (top view): hidden white-light sources illuminate a white-painted surface. The wall surface is imaged by a 4-f telescope onto a movable double-aperture mask. The light from the two apertures is interfered on the camera by a lens. b, c Photographs of the wall surface. The imaged aperture positions are marked by ‘x’. The mask scan trajectory is depicted by a dashed line. d Interference fringe envelope as a function of the double-aperture mask position, revealing the two hidden sources. e Scene reconstructed from (d) by back-projection. The sources' real positions are marked by ‘x’. f Fringe envelope as a function of the time delay for a thin diffuser (blue) and the white-painted barrier (orange). Scale bar: 100 fs

In order to localize multiple light sources that are placed around the corner, the same measurement protocol described above (Fig. 3) was performed. The measured spatio-temporal (x–t) trace of fringes’ position on camera (i.e., TOF, t) vs. mask position (x) is shown in Fig. 4d. The scene reconstructed via back-projection is shown in Fig. 4e.

As can be observed in Fig. 4d, the TOF traces from the reflective wall display larger TOF fluctuations than those observed through the optical diffuser (Fig. 3b). This effect results from the rough nature of the white-painted wall. It can be observed and quantified thanks to the femtosecond-scale temporal resolution of our approach, whereas the picosecond pulses used in previous works would mask these effects. To show that the barrier roughness indeed induces measurable TOF variations with our approach, we calculated the standard deviations of the measured TOF for the diffusive barrier in transmission and for the white-painted wall in reflection, obtaining ~4.6 fs and ~20 fs, respectively (see Supplementary Fig. 12).

The surface roughness and the multiple-scattering nature of the reflections from the white-painted barrier induce not only fluctuations in the TOF differences but also a temporally broader impulse response. This temporal broadening is also measurable by our system, as shown in Fig. 4f, which compares the fringe envelope as a function of the time delay for a thin diffuser and for the white-painted barrier. Compared with the diffuser case, for the white-painted wall we observe a correlation peak that is broader by a factor of ~2, accompanied by a broader pedestal. This broadening, together with the TOF fluctuations due to the surface roughness, effectively lowers the TOF resolution and reduces the localization accuracy. Nonetheless, the TOF resolution is still generally better than that obtainable with picosecond pulses, and our approach still successfully localizes the source positions in reflection in our proof-of-principle experiments.

Interestingly, the changes in the TOF curves induced by the nature of the barrier, which are resolvable with our system, carry information on the barrier. For sufficiently small, point-like hidden sources, the surface and scattering properties of the barrier, such as its transport mean free path and/or roughness, can in principle be estimated from the temporal broadening and fluctuations of the low-coherence fringe envelope27.

Localization of reflective objects

The approach can also be used to localize small reflective objects. Figure 5 presents such a demonstration using the same experimental system, with the only difference that two small metallic objects are placed in the scene, and the dark-background scene is illuminated by a halogen lamp (Thorlabs OSL2). The measured spatio-temporal (x–t) TOF trace and scene reconstruction are presented in Fig. 5b, c.

Localizing hidden reflective objects. a Setup (top view): two hidden metallic objects are illuminated by a halogen lamp (Thorlabs OSL2). The reflected light passing through a highly scattering diffuser is interfered on a camera by a movable double-aperture mask. b Interference fringe envelope as a function of the mask position, providing the TOF information. c Scene reconstructed from (b), revealing the objects' positions. The objects' real positions are marked by ‘x’. Scale bar: 100 fs

Discussion

We have demonstrated an approach that allows passive localization of incoherent light sources and reflective objects through diffusive barriers and around corners using standard cameras. As in conventional (active) TOF approaches9,13,16, the spatial localization resolution is determined by the TOF temporal resolution and the setup's geometry. However, unlike conventional TOF approaches, in our approach the TOF resolution is given by the light-source coherence time rather than by the detector response time. For the white-light sources used in our experiments, the coherence time is of the order of 10 fs (see Supplementary Note 5), more than three orders of magnitude shorter than the response time of state-of-the-art streak cameras or single-photon avalanche diode (SPAD) detectors9,13,16. This temporal resolution may be an advantage in, e.g., microscopic imaging applications. However, as we discuss in detail below, while the localization resolution is better than that of active techniques, the passive nature of our approach and its reliance on low-coherence interferometry fundamentally limit its use to localizing a relatively small number of small sources or reflectors. This limitation does not occur in active TOF-based approaches, where the measurement background is low compared with the active pulsed illumination intensity.

Beyond the TOF resolution, the main advantages of our approach are that it is passive and simple to implement, requiring neither fast detectors nor streak cameras. Our technique makes use of natural incoherent light, present in many natural scenes, in a fundamentally new fashion.

The passive nature of our TOF approach is also its main disadvantage: since light from natural light sources is used, the detected intensity levels are inherently low. In our implementation, integration times of several seconds per camera shot were required to obtain sufficiently high-contrast fringes from the white-light LED sources in the diffusive-barrier case (Fig. 3), under dark conditions. In the experiments with reflective objects (Fig. 5), exposure times of 30 s per shot were used. In the around-the-corner experiments, exposure times of 15 min per camera shot were required (Fig. 4). These single-shot exposure times yielded total acquisition times of minutes to hours for the full sets of measurements presented. Shorter exposure times lead to lower fringe visibility and signal-to-noise ratio (SNR), but can still be useful for TOF measurements, as we study in Supplementary Note 8. A lower number of measurements can still be used for localization, at a lower accuracy (see Supplementary Fig. 9). Acquisition times and the number of acquisitions may be reduced by multiplexed detection with masks having a large number of apertures, and by more advanced reconstruction algorithms. In our proof-of-principle experiments we chose simple masks comprising two pairs of circular apertures to multiplex two TOF measurements in a single camera exposure. More complex masks can be used to allow scene reconstruction from a single camera exposure, in a manner similar to aperture masking interferometry in astronomy32.

To achieve a sufficiently high fringe contrast, the experiments presented in Figs. 2–5 were performed under dark conditions. The effects of ambient light and a bright reflecting background on the fringe contrast are studied in Supplementary Note 8. For the hidden white-light LED sources, similar localization results can be obtained under both dark and ambient-light conditions. For the reflective objects, the quality of the results depends on the illumination intensity of the objects relative to the background. Bright sources or reflectors over a relatively dark background are a fundamental requirement for obtaining high-contrast fringes. However, as in OCT, low-contrast fringes may be resolved with longer exposure times, achieved by averaging multiple exposures or by using cameras with a large well depth.

Similar to other TOF-based NLOS imaging approaches, the localization resolution is determined by the geometry of the system and the TOF temporal measurement resolution. The transverse (dx) and axial (depth, dz) localization resolutions from a single camera shot in our approach are analyzed theoretically in Supplementary Note 6. They are given by \(dx \approx \frac{l_{\mathrm{c}}\, z}{D}\) and \(dz \approx \frac{l_{\mathrm{c}}\, z^2}{D(x + x_{\mathrm{slits}})}\) (Supplementary Eqs. (9) and (8), respectively), where z is the distance of the source from the barrier, x is the source/object transverse position with respect to the center of the measurement, xslits is the center position of the moving slits, lc is the coherence length of the light source, and D is the slit separation. Substituting our experimental parameters of lc = 6.3 μm, x = 0, xslits = 10 mm, and z = 80 cm yields dz ≈ 32 cm and dx ≈ 4 mm for the single-shot localization resolution. To verify these theoretical predictions, we performed a set of experiments with two sources separated by various transverse and axial distances. The results of these experiments, which validate our theoretical estimates, are presented in Supplementary Note 6.
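The quoted single-shot numbers can be reproduced from the two formulas. The slit separation D is not listed among the parameters above, so the sketch backs out the value implied by dx ≈ 4 mm (about 1.26 mm); this D is an inference, not a reported experimental value. The ratio dz/dx = z/(x + xslits) = 80 is independent of D and lc, which gives a quick consistency check on dz ≈ 32 cm. The coherence time lc/c is also printed for comparison with the ~10 fs scale quoted earlier.

```python
C = 299_792_458.0  # speed of light (m/s)

def single_shot_resolution(l_c, z, D, x, x_slits):
    """Return (dx, dz) from dx ≈ l_c z / D and dz ≈ l_c z^2 / (D (x + x_slits))."""
    dx = l_c * z / D
    dz = l_c * z ** 2 / (D * (x + x_slits))
    return dx, dz

l_c, z, x, x_slits = 6.3e-6, 0.80, 0.0, 10e-3   # parameters quoted in the text (m)
D = l_c * z / 4e-3   # assumption: D inferred from dx ≈ 4 mm, not stated in the text
dx, dz = single_shot_resolution(l_c, z, D, x, x_slits)
print(f"D ≈ {D * 1e3:.2f} mm, dx ≈ {dx * 1e3:.1f} mm, dz ≈ {dz * 1e2:.0f} cm, t_c ≈ {l_c / C * 1e15:.0f} fs")
# prints: D ≈ 1.26 mm, dx ≈ 4.0 mm, dz ≈ 32 cm, t_c ≈ 21 fs
```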

The final localization accuracy is considerably better than the single-shot localization resolution, since it is obtained from multiple TOF measurements. An experimental study of the localization accuracy as a function of the number of TOF measurements is presented in Supplementary Note 7.

The theoretical localization resolution improves for larger aperture separation, D. However, when extended objects are concerned, the aperture separation must be smaller than the source coherence size on the barrier, rcoh, to obtain high-contrast fringes. This limits the aperture separation when large extended sources or objects are considered, and limits the single-shot localization resolution to the source dimensions (see Supplementary Note 6). Improved localization accuracy can be obtained by scanning the double-aperture mask over larger distances (xslits).

In our experiments with thin diffusive barriers and highly scattering walls, the fringe visibility was high. However, when transmission through thick diffusive barriers is considered, the fringe visibility, as well as speckle contrast, will be reduced due to the narrower speckle spectral correlation width, resulting from the larger spread in optical paths33,34 (see Supplementary Notes 9–11).

The presented technique is conceptually similar to Michelson stellar interferometry, where the fringe contrast (visibility) as a function of the aperture separation is used to reconstruct the shape of a distant star, as was also recently demonstrated for NLOS object reconstruction35. However, in our approach the aperture separation is fixed, and only the positions of the low-coherence fringes are used to extract the TOF information. Our approach can be combined with such stellar-interferometry-inspired approaches to also reconstruct the hidden source's shape, and not just its position. Interestingly, the same information that was obtained in our approach via interferometry can in principle be obtained from intensity-only correlations using the Hanbury Brown and Twiss (HBT) approach36.
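To illustrate how a TOF difference is read off from the position of the low-coherence fringes, the sketch below synthesizes a noise-free white-light interferogram as a function of path difference. It computes the fringe envelope with an FFT-based Hilbert transform (the same construction as `scipy.signal.hilbert`, reimplemented with NumPy) and takes the envelope peak. The Gaussian envelope shape, the 0.55 μm centre wavelength, the visibility and the 12.3 μm delay are assumptions; only lc = 6.3 μm comes from the text. This is an illustration, not the processing pipeline used in this work.

```python
import numpy as np

def analytic_envelope(signal):
    """Magnitude of the analytic signal, via an FFT-based Hilbert transform."""
    n = signal.size
    weights = np.zeros(n)
    weights[0] = 1.0
    if n % 2 == 0:
        weights[n // 2] = 1.0
        weights[1:n // 2] = 2.0
    else:
        weights[1:(n + 1) // 2] = 2.0
    return np.abs(np.fft.ifft(np.fft.fft(signal) * weights))

def fringe_delay(delta, intensity):
    """Path difference at which the low-coherence fringe envelope peaks."""
    envelope = analytic_envelope(intensity - intensity.mean())
    return delta[np.argmax(envelope)]

# Hypothetical noise-free white-light interferogram versus path difference (micrometres).
lam0 = 0.55        # ASSUMED centre wavelength [um]
l_c = 6.3          # coherence length [um], as quoted in the text
delta0 = 12.3      # hypothetical path difference to be recovered [um]
visibility = 0.8   # hypothetical fringe visibility
delta = np.linspace(-60.0, 60.0, 6001)                 # 0.02 um sampling
envelope = np.exp(-(((delta - delta0) / l_c) ** 2))    # ASSUMED Gaussian envelope
intensity = 1.0 + visibility * envelope * np.cos(2 * np.pi * (delta - delta0) / lam0)

estimate = fringe_delay(delta, intensity)
c = 299_792_458.0                                      # speed of light [m/s]
print(f"{estimate:.2f} um -> {estimate * 1e-6 / c * 1e15:.1f} fs")  # about 12.30 um -> 41.0 fs
```

Dividing a path difference by c converts it into a time delay; the coherence length lc = 6.3 μm corresponds to about 21 fs, which sets the scale of the temporal resolution.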

In our approach, differences in TOF are measured and not the total round-trip TOF, which is measured in active TOF approaches9,13,16. This leads to localization of the hidden sources on spatial hyperboloids in 3D, rather than ellipsoids9,13 or spheres16 of previous works.
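The hyperboloid geometry follows from the fact that a fixed difference of distances to two foci defines a hyperbola (a hyperboloid of revolution in 3D). The sketch below works in the x–z plane, with the two apertures at x_centre ∓ D/2 on the barrier (z = 0). The slit centre, separation and source position are hypothetical. It computes the path difference for one source position and checks that points sampled along the corresponding hyperbola branch share the same value.

```python
import numpy as np

def path_difference(px, pz, x_centre, D):
    """|s - a1| - |s - a2| for a point s = (px, pz) and apertures a1, a2 at
    x = x_centre - D/2 and x = x_centre + D/2 on the barrier plane z = 0."""
    d1 = np.hypot(px - (x_centre - D / 2), pz)
    d2 = np.hypot(px - (x_centre + D / 2), pz)
    return d1 - d2

def hyperbola_branch(delta, x_centre, D, t):
    """Points (x, z >= 0) in the x-z plane whose path difference equals delta.

    The foci are the two apertures; requires |delta| < D. t >= 0 parametrizes the branch."""
    a = abs(delta) / 2.0          # semi-major axis
    c = D / 2.0                   # half the focal distance
    b = np.sqrt(c**2 - a**2)      # semi-minor axis
    x = x_centre + np.sign(delta) * a * np.cosh(t)
    z = b * np.sinh(t)
    return x, z

x_c, D = 10e-3, 1.26e-3                       # hypothetical slit centre and separation [m]
delta = path_difference(0.0, 0.8, x_c, D)     # hypothetical source at x = 0, z = 0.8 m
x, z = hyperbola_branch(delta, x_c, D, np.linspace(0.5, 9.0, 6))
print(delta)                                  # roughly -1.575e-05 (metres)
print(path_difference(x, z, x_c, D) - delta)  # all ~0: every point shares the same delta
```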

As in recent NLOS works, we have chosen to reconstruct the complex scenes via back-projection. Alternatively, when a single localized source is present, its position can also be determined from the slope and position of its high-brightness measured spatio-temporal curve (see, e.g., Fig. 3b), in a manner similar to parallax-based localization, a principle that was also recently used for localization based on phase-space measurements17.
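One simple variant of back-projection is sketched below. Each measurement (a slit-pair centre and its measured path difference) votes for every grid point with a Gaussian weight of width lc in path-difference mismatch, and the votes are summed. The Gaussian weighting, the 2D x–z grid, the scan positions, D and the source position are all assumptions for illustration. The measurements are simulated without noise, and this is not necessarily the reconstruction used in this work.

```python
import numpy as np

def path_difference(px, pz, x_centre, D):
    """|s - a1| - |s - a2| for apertures at x_centre -/+ D/2 on the barrier (z = 0)."""
    d1 = np.hypot(px - (x_centre - D / 2), pz)
    d2 = np.hypot(px - (x_centre + D / 2), pz)
    return d1 - d2

def back_project(x_grid, z_grid, x_centres, measured, D, sigma):
    """Sum over measurements of exp(-(predicted - measured)^2 / (2 sigma^2))."""
    X, Z = np.meshgrid(x_grid, z_grid)              # shape (nz, nx)
    image = np.zeros_like(X)
    for xc, meas in zip(x_centres, measured):
        predicted = path_difference(X, Z, xc, D)
        image += np.exp(-((predicted - meas) ** 2) / (2 * sigma**2))
    return image

D, l_c = 1.26e-3, 6.3e-6                   # hypothetical separation; quoted coherence length [m]
x_centres = np.linspace(-20e-3, 20e-3, 9)  # hypothetical scan of the slit pair [m]
source = (5e-3, 0.8)                       # hypothetical hidden source (x, z) [m]
measured = [path_difference(*source, xc, D) for xc in x_centres]  # noise-free

x_grid = np.linspace(-50e-3, 50e-3, 201)   # 0.5 mm steps
z_grid = np.linspace(0.3, 1.1, 161)        # 5 mm steps
image = back_project(x_grid, z_grid, x_centres, measured, D, sigma=l_c)
iz, ix = np.unravel_index(np.argmax(image), image.shape)
print(x_grid[ix], z_grid[iz])              # peak at the simulated source position
```

Because the hyperbolas from nearby slit positions intersect at shallow angles, the summed image is strongly elongated in depth; widening the range of `x_centres` narrows it, in line with the remark that scanning the mask over larger xslits improves localization.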

Unlike memory-effect-based works5, the FOV of our technique is not limited by the memory effect, since each source position is measured independently. However, for our technique to work well through thick diffusive barriers, the aperture separation, D, must be larger than the thickness of the barrier (in transmission) or its transport mean free path (in reflection), to minimize mixing between the signals in the two apertures induced by the light spreading inside the barrier.

Methods

Experiments

The full experimental setups are presented in detail in Supplementary Fig. 1. The hidden light sources were generated by splitting the light from a white-light LED (Thorlabs MWWHF1) into four using a fiber bundle (Thorlabs BF42HS01), effectively producing white-light sources of 200-μm diameter with a numerical aperture of NA = 0.39 and an average power of ~1.5 mW. The diffusive barrier was a Newport light shaping diffuser with a scattering angle of 80° (40° for the experiments with reflective objects (Fig. 5)) and a transmission of >90% in the visible range.

The scattering wall was a metal plate painted with white matte spray (Tambour 465-024). The light sources were placed at various positions with distances of 30–110 cm from the diffuser and 56–70 cm from the wall. For the object localization experiment, the light source was a white-light halogen lamp (Thorlabs OSL2) collimated with its collimation package (Thorlabs OSL2COL). The object was a metallic nut covered with black tape, leaving a reflective area 3 mm high and 0.7 mm wide. The diffusive barrier/wall was imaged onto the aperture masks using a 4-f telescope. The light collection aperture diameter on the first lens was 5 cm. For the measurements shown in Figs. 2 and 5 and for the around-the-corner measurements, the aperture was limited to 2.5 cm. The aperture masks were fabricated by drilling 0.25-mm-diameter circular holes in ~2-mm-thick black Delrin plates. The separation between the apertures was 3.2 mm. For the experiments with reflective objects (Fig. 5) and the measurements analyzing the system's resolution and accuracy (Supplementary Figs. 5, 7, 8 and 10), a mask with 0.6-mm-diameter holes and a separation of 1.5 mm was used. The light passing through the mask was focused onto an sCMOS camera (Andor Neo 5.5) with an f = 10 cm lens in an f–f configuration (see Supplementary Fig. 1). Each TOF measurement was acquired as a single long-exposure (15 s to 15 min) image.
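In the f–f configuration the camera sits in the Fourier plane of the aperture mask, so each transverse camera coordinate corresponds to a different relative delay between the two apertures. Under the paraxial approximation, a camera coordinate u adds a path difference D·u/f, producing Young's fringes of period λf/D. The sketch below evaluates these scalings for the 3.2 mm mask and the f = 10 cm lens described above. The 0.55 μm centre wavelength and the 6.5 μm pixel pitch are assumptions, not values stated in the text.

```python
SPEED_OF_LIGHT = 299_792_458.0  # [m/s]

def ff_mapping(D, f, wavelength, pixel_pitch):
    """Paraxial scalings for a two-aperture mask (separation D) whose far field is
    recorded in the back focal plane of a lens of focal length f (f-f configuration).

    A camera coordinate u adds a path difference D*u/f between the two apertures.
    Returns (fringe period [m], path difference per pixel [m], delay per pixel [s])."""
    fringe_period = wavelength * f / D
    path_per_pixel = D * pixel_pitch / f
    return fringe_period, path_per_pixel, path_per_pixel / SPEED_OF_LIGHT

D = 3.2e-3            # aperture separation in the mask [m] (from Methods)
f = 0.10              # lens focal length [m] (from Methods)
wavelength = 0.55e-6  # ASSUMED centre wavelength [m]
pixel_pitch = 6.5e-6  # ASSUMED camera pixel pitch [m]
l_c = 6.3e-6          # coherence length [m] (quoted in the text)

period, dpath, dt = ff_mapping(D, f, wavelength, pixel_pitch)
print(f"fringe period {period * 1e6:.1f} um ({period / pixel_pitch:.1f} px)")
print(f"{dpath * 1e6:.3f} um of path ({dt * 1e15:.2f} fs) per pixel")
print(f"l_c spans about {l_c / dpath:.0f} pixels")
```

With these assumed numbers each pixel corresponds to roughly 0.2 μm of path (about 0.7 fs), so the 6.3 μm coherence envelope spans about 30 pixels while each fringe period covers roughly 2.6 pixels.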

Data availability

All relevant data are available from the authors upon request.

Code availability

All relevant codes are available from the authors upon request.

Change history

14 August 2019

The original version of this Article was updated shortly after publication to correct an error in the name of the Reviewer ‘Amaury Badon’ in the Peer review information section, which was previously incorrectly given as ‘Amoury Badon’.

References

1. Freund, I. Looking through walls and around corners. Phys. A: Stat. Mech. Appl. 168, 49–65 (1990).

2. Wang, L., Ho, P. P., Liu, C., Zhang, G. & Alfano, R. R. Ballistic 2-D imaging through scattering walls using an ultrafast optical Kerr gate. Science 253, 769–771 (1991).

3. Katz, O., Small, E. & Silberberg, Y. Looking around corners and through thin turbid layers in real time with scattered incoherent light. Nat. Photonics 6, 549–553 (2012).

4. Bertolotti, J. et al. Non-invasive imaging through opaque scattering layers. Nature 491, 232–234 (2012).

5. Katz, O., Heidmann, P., Fink, M. & Gigan, S. Non-invasive single-shot imaging through scattering layers and around corners via speckle correlations. Nat. Photonics 8, 784–790 (2014).

6. Takasaki, K. T. & Fleischer, J. W. Phase-space measurement for depth-resolved memory-effect imaging. Opt. Express 22, 31426–31433 (2014).

7. Edrei, E. & Scarcelli, G. Optical imaging through dynamic turbid media using the Fourier-domain shower-curtain effect. Optica 3, 71–74 (2016).

8. Kirmani, A., Hutchison, T., Davis, J. & Raskar, R. Looking around the corner using transient imaging. in 2009 IEEE 12th International Conference on Computer Vision 159–166 (IEEE, 2009).

9. Velten, A. et al. Recovering three-dimensional shape around a corner using ultrafast time-of-flight imaging. Nat. Commun. 3, 745 (2012).

10. Gupta, O., Willwacher, T., Velten, A., Veeraraghavan, A. & Raskar, R. Reconstruction of hidden 3D shapes using diffuse reflections. Opt. Express 20, 19096–19108 (2012).

11. Heide, F., Xiao, L., Heidrich, W. & Hullin, M. B. Diffuse mirrors: 3D reconstruction from diffuse indirect illumination using inexpensive time-of-flight sensors. in Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition 3222–3229 (2014).

12. Buttafava, M., Zeman, J., Tosi, A., Eliceiri, K. & Velten, A. Non-line-of-sight imaging using a time-gated single photon avalanche diode. Opt. Express 23, 20997–21011 (2015).

13. Gariepy, G., Tonolini, F., Henderson, R., Leach, J. & Faccio, D. Detection and tracking of moving objects hidden from view. Nat. Photonics 10, 23–26 (2016).

14. Chan, S., Warburton, R., Gariepy, G., Leach, J. & Faccio, D. Non-line-of-sight tracking of people at long range. Opt. Express 25, 10109–10117 (2017).

15. Satat, G., Tancik, M., Gupta, O., Heshmat, B. & Raskar, R. Object classification through scattering media with deep learning on time resolved measurement. Opt. Express 25, 17466–17479 (2017).

16. O’Toole, M., Lindell, D. & Wetzstein, G. Confocal non-line-of-sight imaging based on the light-cone transform. Nature 555, 338–341 (2018).

17. Liu, H. Y. et al. 3D imaging in volumetric scattering media using phase-space measurements. Opt. Express 23, 14461–14471 (2015).

18. Klein, J., Peters, C., Martín, J., Laurenzis, M. & Hullin, M. B. Tracking objects outside the line of sight using 2D intensity images. Sci. Rep. 6, 32491 (2016).

19. Bouman, K. L. et al. Turning corners into cameras: principles and methods. in Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition 2270–2278 (2017).

20. Salhov, O., Weinberg, G. & Katz, O. Depth-resolved speckle-correlations imaging through scattering layers via coherence gating. Opt. Lett. 43, 5528–5531 (2018).

21. Horstmeyer, R., Ruan, H. & Yang, C. Guidestar-assisted wavefront-shaping methods for focusing light into biological tissue. Nat. Photonics 9, 563–571 (2015).

22. Freund, I., Rosenbluh, M. & Feng, S. Memory effects in propagation of optical waves through disordered media. Phys. Rev. Lett. 61, 2328–2331 (1988).

23. Duvall, T. L. Jr., Jefferies, S., Harvey, J. & Pomerantz, M. Time–distance helioseismology. Nature 362, 430–432 (1993).

24. Weaver, R. L. & Lobkis, O. I. Ultrasonics without a source: thermal fluctuation correlations at MHz frequencies. Phys. Rev. Lett. 87, 134301 (2001).

25. Snieder, R., Grêt, A., Douma, H. & Scales, J. Coda wave interferometry for estimating nonlinear behavior in seismic velocity. Science 295, 2253–2255 (2002).

26. Davy, M., Fink, M. & De Rosny, J. Green’s function retrieval and passive imaging from correlations of wideband thermal radiations. Phys. Rev. Lett. 110, 203901 (2013).

27. Badon, A., Lerosey, G., Boccara, A. C., Fink, M. & Aubry, A. Retrieving time-dependent Green’s functions in optics with low-coherence interferometry. Phys. Rev. Lett. 114, 023901 (2015).

28. Garnier, J. & Papanicolaou, G. Passive Imaging with Ambient Noise. (Cambridge University Press, 2016).

29. Häusler, G., Herrmann, J. M., Kummer, R. & Lindner, M. W. Observation of light propagation in volume scatterers with 10¹¹-fold slow motion. Opt. Lett. 21, 1087–1089 (1996).

30. Huang, D. et al. Optical coherence tomography. Science 254, 1178–1181 (1991).

31. Haniff, C. A. et al. The first images from optical aperture synthesis. Nature 328, 694–696 (1987).

32. Tuthill, P. G., Monnier, J. D., Danchi, W. C., Wishnow, E. H. & Haniff, C. A. Michelson interferometry with the Keck I telescope. Publ. Astron. Soc. Pac. 112, 555–565 (2000).

33. Curry, N. et al. Direct determination of diffusion properties of random media from speckle contrast. Opt. Lett. 36, 3332–3334 (2011).

34. Small, E., Katz, O. & Silberberg, Y. Spatiotemporal focusing through a thin scattering layer. Opt. Express 20, 5189–5195 (2012).

35. Batarseh, M. et al. Passive sensing around the corner using spatial coherence. Nat. Commun. 9, 3629 (2018).

36. Hanbury Brown, R. & Twiss, R. Q. Correlation between photons in two coherent beams of light. Nature 177, 27–29 (1956).

Acknowledgements

This material is based upon work supported by the Defense Advanced Research Projects Agency (DARPA) under Contract No. HR0011-16-C-0027, the ISRAEL SCIENCE FOUNDATION (Grant no. 1361/18), and the European Research Council (ERC) under the European Union Horizon 2020 research and innovation program (Grant no. 677909). O.K. acknowledges support from the Azrieli Faculty Fellowship, Azrieli Foundation. The information presented in this work does not necessarily reflect the position or policy of either DARPA or the U.S. Government, and no such official endorsement should be inferred.

Author information

Contributions

O.K. conceived the idea, J.B.L. and O.K. designed the experiments and performed numerical simulations. J.B.L. conducted the experiments and analyzed the data. J.B.L. and O.K. wrote the paper.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Peer review information: Nature Communications thanks Andreas Velten, Amaury Badon and other anonymous reviewers for their contribution to the peer review of this work. Peer reviewer reports are available.

Publisher’s note: Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Boger-Lombard, J., Katz, O. Passive optical time-of-flight for non line-of-sight localization. Nat Commun 10, 3343 (2019). https://doi.org/10.1038/s41467-019-11279-6

DOI: https://doi.org/10.1038/s41467-019-11279-6
