Abstract

The role of serotonin in human brain function remains elusive due, at least in part, to our inability to measure rapidly the local concentration of this neurotransmitter. We used fast-scan cyclic voltammetry to infer serotonergic signaling from the striatum of 14 brains of human patients with Parkinson’s disease. Here we report these novel measurements and show that they correlate with outcomes and decisions in a sequential investment game. We find that serotonergic concentrations transiently increase as a whole following negative reward prediction errors, while reversing when counterfactual losses predominate. This provides initial evidence that the serotonergic system acts as an opponent to dopamine signaling, as anticipated by theoretical models. Serotonin transients on one trial were also associated with actions on the next trial in a manner that correlated with decreased exposure to poor outcomes. Thus, the fluctuations observed for serotonin appear to correlate with the inhibition of over-reactions and promote persistence of ongoing strategies in the face of short-term environmental changes. Together these findings elucidate a role for serotonin in the striatum, suggesting it encodes a protective action strategy that mitigates risk and modulates choice selection particularly following negative environmental events.

Introduction

The neurotransmitter serotonin influences a broad range of brain functions, including mood, sleep, learning, and decision-making (Portas et al, 2000; Must et al, 2007; Diekhof et al, 2008), and is thus implicated in a diverse range of diseases, including obsessive compulsive disorder (Hu et al, 2006), anorexia nervosa (Kaye et al, 1998), and depression (Whittington et al, 2004; Risch et al, 2009). However, there is much debate as to what it actually encodes. Various data and arguments favor aspects of it as being opponent to dopamine, thus suggesting a role in punishment and loss (Deakin, 1983; Graeff and Deakin, 1991; Daw et al, 2002; Cools et al, 2008; Crockett et al, 2009; Tanaka et al, 2009; Boureau and Dayan, 2011). Other evidence suggests a role in patience while waiting for a reward (Miyazaki et al, 2012; Worbe et al, 2014; Fonseca et al, 2015; Li et al, 2016), disengagement (Tops et al, 2009), motor activity (Jacobs, 1994), or even reward (Dölen et al, 2013; Liu et al, 2014; Li et al, 2016). Recent optogenetically tagged recordings from serotonergic neurons in the raphé have not resolved these debates (Cohen et al, 2015), perhaps partly because of heterogeneity among serotonin neurons (Lowry, 2002). A voltammetric approach in awake, behaving humans may offer new insights into the role of serotonin at a temporal resolution that reveals dynamic events several orders of magnitude faster than positron emission tomography (Yao et al, 2009), whilst also observing concentrations of the neurotransmitter at downstream structures directly, rather than assuming linearity with firing rates in the raphé (Montague et al, 2004).

Recently, we developed a fast-scan cyclic voltammetry procedure to identify dopamine fluctuations in patients with Parkinson’s disease who were undergoing surgery for deep brain stimulator implantation (Kishida et al, 2016). These unique multi-subject recordings provided millisecond-resolved estimates of relative fluctuations in the concentration of this neurotransmitter whilst patients played an investment task that was designed to elicit adaptive behavior in the face of rewards and punishments. Serotonin also produces redox reactions around this range; thus, were it possible to distinguish serotonin from dopamine, we could measure relative serotonergic responses from exactly these same signals, thus addressing its involvement in the same task. There are approaches to perform this separation or boost serotonin signals in slices or in vivo in other animals such as picking peaks in the voltammetric recordings visually (John and Jones, 2007), using drugs (John and Jones, 2007; Hashemi et al, 2011) or even optogenetics (Xiao et al, 2014). However, these do not readily extend to studies in humans.

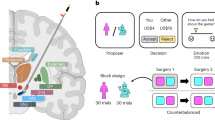

Here we derived a principled supervised learning method to apply to these same human recordings to measure trial-by-trial fluctuations in extracellular concentrations of serotonin, distinguished from dopamine. We used this to measure serotonin transients in 16 hemispheres from patients as they performed the investment task (12 of these recordings were used in the previous dopamine assessment). In the task, patients selected an investment level on each trial as market prices unfolded according to real historical financial markets (Lohrenz et al, 2007). Dopamine transients in this task were found to encode not the typical ‘reward prediction error (RPE)’ but rather a signal that combined actual and counterfactual RPEs (the latter when subjects invested little, and so experienced regret or rejoicing when the market rose or fell, respectively) (Kishida et al, 2016). We hypothesized that serotonin may function in a similar way but in the loss domain, with the potential to represent counterfactual losses from foregone gains akin to a regret signal (a positive RPE when investments are low). Moreover, given the theorized role of serotonin in active avoidance (Dayan and Huys, 2008), we investigated whether fluctuations in serotonin correlated with avoidance behavior in the task (ie reducing one’s level of exposure) to actual or counterfactual losses.

Materials and methods

Participants and Fast-Scan Cyclic Voltammetry

Fourteen patients (aged 61.1±9.7 years, 2 female) undergoing surgery for the implantation of deep brain stimulation (DBS) electrodes participated in the experiment. Two patients participated on separate days during right and left implantation to give a total of n=16 recordings. All patients had a diagnosis of Parkinson’s disease. Participants provided informed consent and were instructed that they could opt out of the experiment at any time. Procedures were approved by the Virginia Tech and Wake Forest Baptist Medical Center Institutional Review Boards (IRB #11-078). No adverse effect of the extended surgical procedure was reported. Eleven of our participants (12 hemispheres) formed part of the cohort reported in Kishida et al (2016). In Supplementary Table 1 we provide details of disease duration, medication, and comorbidities. During the surgery and during our test period, all patients were off their dopamine-related medications but remained on all other pharmacological treatments. For details of the surgical and voltammetry procedure see Supplementary Materials and Methods. Our voltammetric electrochemical assays relied upon redox reactions of serotonin along a carbon fiber (at 10 Hz), which we assumed would produce measurable current changes in proportion to the concentration of the chemical species in the extracellular space (for full details of the carbon fiber and FSCV protocol see Supplementary Materials and Methods and Kishida et al, 2011).

Investment Task

Participants played a decision-making game in a simulated ‘stock market’. Participants were first endowed with 100 points, and on each trial they had to decide an amount to invest in the stock market. This amount could be 0, 10, 20, 30, 40, 50, 60, 70, 80, 90, or 100% of their points. At the beginning of a game with a single market, participants were first shown a trajectory of previous market moves indicating changes in the value of the market (Figure 3a). They were then asked to make their first investment decision (submit bet), and following a delay (840±12 ms), the market value change was revealed and players lost or gained points in accordance with market returns. Following this outcome, participants then submitted a further 19 investment or ‘bet’ decisions in a self-timed manner. On average, players waited 4.5 s to submit their next decision after the reveal event. In all, 6 markets of 20 decisions were played. Our markets were consistent with real historical markets (eg, mimicking events before and after the 1929 Wall St crash and 1987’s ‘black Monday’).

We chose this decision-making task as it is engaging and it could be used to interrogate the serotonergic response to ‘what is’ as well as ‘what could have been’. The latter involve so-called counterfactual or fictive RPEs. These errors are ecologically important as learning signals and have been previously shown to activate the human striatum (Lohrenz et al, 2007). For the details of how we computed RPE on each trial see Supplementary Materials and Methods.

The game was designed with 6 markets of 20 decisions (120 decisions). In 14 of our 16 experiments, the participants completed the full game. For two patients, recordings were stopped before experimental completion with 5 markets (100 decisions) and 2 markets (40 decisions) completed. Thus, a total of 1820 trials were played. From those 1820 trials, a total of 1729 trials could be associated with prospective actions within a market (ie, where (bet(t+1)−bet(t)) can be computed within a given market). In Figure 4 we present the concentration differences in serotonin for negative compared to positive RPEs at high (60–100%) and low (0–50%) betting levels. Here 1724 trials are included as 5 trials resulted in RPEs equal to zero. In Figures 5 and 6 we examine these 1724 bet decisions parametrically and in terms of the adjustments in bet made on the next trial. We show the transients in serotonin associated with lowering, holding or raising one’s bet, from investment levels of (0%), (10 and 20%), (30 and 40%), (50 and 60%), (70 and 80%), and (90 and 100%).
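
The trial counts above follow from simple arithmetic, which the short sketch below reproduces. The function and variable names are illustrative only; the only inputs are the figures stated in the text (16 recordings, 20 decisions per market, and the number of markets completed in each recording).

```python
# Markets completed in each of the 16 recordings, as stated in the text:
# 14 full games (6 markets), one stopped after 5 markets, one after 2.
MARKETS_PER_RECORDING = [6] * 14 + [5, 2]
DECISIONS_PER_MARKET = 20


def count_trials(markets_per_recording, decisions_per_market):
    """Return (total trials, trials that have a next bet in the same market)."""
    n_markets = sum(markets_per_recording)
    total = n_markets * decisions_per_market
    # The last decision of each market has no bet(t+1) within that market,
    # so one trial per market drops out of the prospective-action analysis.
    with_next_bet = n_markets * (decisions_per_market - 1)
    return total, with_next_bet


total, with_next_bet = count_trials(MARKETS_PER_RECORDING, DECISIONS_PER_MARKET)
print(total)              # 1820
print(with_next_bet)      # 1729
print(with_next_bet - 5)  # 1724, after excluding the 5 trials with RPE equal to zero
```

The 1724 trials also match the split reported in the figure legends (842 negative-RPE and 882 positive-RPE transients).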

Testing RPEs and Action Control

For each voltammogram we applied the model’s serotonin coefficients to predict concentration levels of serotonin at 100 ms temporal resolution. For each trial we collected transient responses of 700 ms duration around the time of market reveal (0 ms) from −100 to 600 ms. Data were Z-scored over an individual market and baseline corrected within their cell to zero at either −100 (Figure 4) or 0 ms (Figures 5 and 6).
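
As a rough illustration of this preprocessing, the sketch below Z-scores one market's worth of 10 Hz concentration estimates and cuts a baseline-corrected window from −100 to 600 ms around the reveal. It is a minimal sketch under stated assumptions, not the authors' code: the function names, the use of the population standard deviation, and the list-based data layout are all assumptions.

```python
from statistics import mean, pstdev


def zscore(samples):
    """Z-score a list of concentration estimates from one market.

    Assumption: population SD is used; the source does not say which.
    """
    mu, sd = mean(samples), pstdev(samples)
    if sd == 0:
        raise ValueError("constant signal cannot be z-scored")
    return [(x - mu) / sd for x in samples]


def extract_transient(z, reveal_idx, baseline_offset=-1, pre=1, post=6):
    """Cut the -100..600 ms window around the reveal (one sample per 100 ms)
    and subtract the value at the baseline sample.

    baseline_offset=-1 puts the baseline at -100 ms; 0 puts it at 0 ms.
    """
    if reveal_idx - pre < 0 or reveal_idx + post >= len(z):
        raise IndexError("window extends beyond the recording")
    window = z[reveal_idx - pre: reveal_idx + post + 1]  # 8 samples
    baseline = z[reveal_idx + baseline_offset]
    return [x - baseline for x in window]


# Toy signal: ten samples, with the reveal at index 2.
z = zscore([0.0, 1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0, 9.0])
t_pre = extract_transient(z, reveal_idx=2, baseline_offset=-1)  # zero at -100 ms
t_zero = extract_transient(z, reveal_idx=2, baseline_offset=0)  # zero at 0 ms
```

The two baseline choices differ only by a constant offset within a trial, but that offset varies from trial to trial, so condition averages can differ between the two.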

To test for responses that corresponded with RPEs (Figure 4), we tested the fluctuations in serotonin transients at the time of market reveal (the outcome of their decision) from 0 to 600 ms. For this analysis we split our trials according to the outcome-related RPE and according to the bet size (high or low). To test whether these prediction error-related responses (Figure 4) further corresponded with prospective action encoding (Figures 5 and 6) we performed a second analysis. Specifically we tested, again, the fluctuations in serotonin transients at the time of market reveal from 0 to 600 ms. For this analysis, however, we split our trials according to the outcome-related RPE (negative, Figure 5; and positive, Figure 6), and according to the decision to ‘raise’, ‘hold’, or ‘lower’ the current bet from levels (0%), (10–20%), (30–40%), (50–60%), (70–80%), and (90–100%). We aimed to examine differences in serotonin transients associated with negative compared to positive RPEs. Given our previous findings for dopamine (Figure 2), we were also interested in the bet dependence on the prediction error since, for example, positive RPEs might be coded as a poor outcome if the bet decision on that trial was low. We also aimed to determine whether these transients predicted decision-making on the next trial (ie, what adjustment might be applied from current investment levels). For full details of the statistical analysis applied to test for RPE-related serotonin responses and to test for action control-related responses please see Supplementary Materials and Methods.
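
The trial labeling used in the second analysis can be sketched as follows. The six bet bins and the three next actions come from the text; the function names and the tuple representation are hypothetical.

```python
# The six current-bet levels used in Figures 5 and 6 (percent invested).
BET_BINS = [(0, 0), (10, 20), (30, 40), (50, 60), (70, 80), (90, 100)]


def bet_bin(bet_percent):
    """Return the (low, high) bin that contains the current bet."""
    for low, high in BET_BINS:
        if low <= bet_percent <= high:
            return (low, high)
    raise ValueError(f"bet {bet_percent} is not a valid investment level")


def next_action(bet_t, bet_next):
    """Classify the adjustment from bet(t) to bet(t+1)."""
    if bet_next < bet_t:
        return "lower"
    if bet_next > bet_t:
        return "raise"
    return "hold"


def label_market(bets):
    """Label every trial in one market except the last, which has no bet(t+1)."""
    return [(bet_bin(b), next_action(b, nb)) for b, nb in zip(bets, bets[1:])]


labels = label_market([50, 50, 100, 0])
print(labels)
# [((50, 60), 'hold'), ((50, 60), 'raise'), ((90, 100), 'lower')]
```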

Results

Serotonin Concentrations: Estimation Results

We followed a supervised-learning approach for extracting serotonin signals (Kishida et al, 2016). We created a training set of voltammograms taken from a set of probes constructed identically to those used in the patient recordings. The probes were exposed to analytes in a flow cell containing a known range of serotonin concentrations (from 0 to 8000 nM) confounded with various concentrations of dopamine and pH levels. The concentration dependence of the shape and magnitude of the serotonin redox current was directly apparent (Figure 1a). We therefore trained a multivariate penalized regression model (Tibshirani, 1996) to extract serotonin concentration estimates from all points of each trace (1000 samples over 10 ms, Figure 1a). We aimed to produce a dual-transmitter model that could predict both serotonin and dopamine, as we had previously observed task-related dopamine fluctuations in these samples.

Serotonin concentration prediction from dual transmitter model. (a) An illustration of voltammograms acquired for varying levels of serotonin concentration (left) and dopamine concentration (right) in the flow cell. We see that low to high concentration levels produce changes in current magnitude around the oxidation potentials (insets). Concentration is denoted by []. (b) The flow cell predictions are illustrated for serotonin under varying concentrations of dopamine in the mixture. Serotonin was sampled at each level of concentration from 0.1 to 8 μM in 0.1 μM increments. For each of these 80 concentrations we computed the serotonin prediction over different levels of dopamine. Not all concentration mixtures for dopamine and serotonin were acquired; acquired mixtures are denoted by the asterisk. Over this test grid we interpolated (using linear triangulation) across the acquired test samples to produce a three-dimensional heat map of serotonin predictions. This plot shows that serotonin predictions do not vary systematically with increasing dopamine levels. We illustrate one outlying serotonin prediction, which was observed but not included in the interpolated plot for visualization purposes. (c) Flow cell predictions for dopamine in the mixture, plotted as a function of increasing dopamine and increasing serotonin as per b. Again, dopamine predictions using our model do not appear to be systematically affected by the level of serotonin in the sample. (d) We tested 200 out-of-sample voltammograms for each concentration level to quantify the error in generalizability. Illustrated in gray are predictions for different concentration ranges and their 99.99% confidence intervals. Red crosses denote the mean of the (correct) test range. For each range we randomly selected 20 predictions from the full test set.

We used L1-penalized regression to create a generalizable dual-transmitter regression model that estimated the concentrations of dopamine (DA) independent of the ambient levels of serotonin (5-HT) and pH, and of 5-HT independent of the level of DA and pH (Supplementary Figure S1). We found that regression coefficients were distributed throughout the trace, indicating that concentration levels were best predicted by considering not only the peaks of oxidation and reduction but also other points distributed across the voltage sweep (Figure 1a). Crucially, we were able to estimate the concentrations of serotonin in each mixture independent of dopamine (Figure 1b) and we could predict dopamine concentration levels independent of serotonin (Figure 1c). Moreover, our predictions for serotonin were trained to ignore altering pH levels (Supplementary Figure S2). This model estimated the true serotonin levels within 90% confidence intervals of the estimated levels in absolute terms (Figure 1d), even in the presence of dopamine and at differing pH. We also tested whether at very low concentrations we could differentiate 5-HT concentrations and found a resolution of ~100 nM (Supplementary Figure S2).
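
To make the idea of L1-penalized regression concrete, the toy sketch below fits a lasso by cyclic coordinate descent. It is not the authors' model: each row stands in for a voltammogram with only 3 current samples rather than 1000, there is a single output rather than the dual-transmitter output, and the data, penalty value, and function names are invented for illustration.

```python
def soft_threshold(x, lam):
    """Shrink x toward zero by lam; values within [-lam, lam] become exactly 0."""
    if x > lam:
        return x - lam
    if x < -lam:
        return x + lam
    return 0.0


def lasso_coordinate_descent(X, y, lam, n_iter=200):
    """Minimise (1/2n) * ||y - Xw||^2 + lam * ||w||_1 (no intercept)."""
    n, p = len(X), len(X[0])
    w = [0.0] * p
    col_sq = [sum(X[i][j] ** 2 for i in range(n)) / n for j in range(p)]
    residual = list(y)  # equals y - Xw while w is all zeros
    for _ in range(n_iter):
        for j in range(p):
            if col_sq[j] == 0:
                continue
            # Correlation of column j with the residual that excludes j's own fit.
            rho = sum(X[i][j] * (residual[i] + X[i][j] * w[j]) for i in range(n)) / n
            new_w = soft_threshold(rho, lam) / col_sq[j]
            delta = new_w - w[j]
            if delta != 0.0:
                for i in range(n):
                    residual[i] -= X[i][j] * delta
                w[j] = new_w
    return w


# Toy "voltammograms": only the first sample carries concentration information.
X = [[1.0, 0.0, 1.0], [2.0, 1.0, 0.0], [3.0, 0.0, 1.0], [4.0, 1.0, 0.0]]
y = [2.0, 4.0, 6.0, 8.0]  # hypothetical known concentrations (y = 2 * first sample)
w = lasso_coordinate_descent(X, y, lam=0.1)
```

In this toy case the penalty sets the two uninformative coefficients exactly to zero and shrinks the informative one slightly below its true value of 2, which is the characteristic behavior of an L1 penalty.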

The low cross-contamination between the two transmitters in the model is difficult to confirm by visual inspection, as the voltammograms for changing dopamine concentrations appear similar to those for serotonin (Figure 1a). For additional validation of our procedure, we compared the dopamine predictions to our previously published findings (Figure 2). We confirmed that on the identical data sets to those previously published (17 recordings in total), we could replicate previous results on transient fluctuations in dopamine from the dorsal striatum (Kishida et al, 2016) (Figure 2). We also show in a supplemental analysis (Supplementary Figure S2) the correlation structure amongst our DA and 5-HT estimates, where small positive correlations were found to exist.

Dopamine replication in mixture model. We performed an in vivo validation by replicating the previous dopamine findings using our new multivariate mixture model. We split trials into low (0–50%), medium (60–80%), and high (90–100%) bets and examined dopamine transients at these different bet levels in response to positive and negative reward prediction errors. As per the findings reported previously for a univariate model (Kishida et al, 2016), dopamine estimates from the dual transmitter model predict dopamine encoding of prediction errors. Using two-way ANOVAs with factors RPE (negative RPE and positive RPE) and bet levels (high, medium, low) we found a significant interaction at 200 (p=0.005), 300 (p=0.0001), 400 (p=0.0016), 500 (p=0.0079), and 600 ms (p=0.02). Post hoc two-sample t-test results for each bet level and time point are illustrated (*p<0.05, ***p⩽0.001). For validation purposes, we report here those measurements from the original cohort of 17 patients reported in Kishida et al, 2016. Data were baseline corrected to zero at 0 ms, and bar graphs depict the mean and SEM.

Behavior on the Sequential Investment Task

Figure 3a shows the sequence of events in the investment game (Lohrenz et al, 2007), which participants played during voltammetric recordings from dorsal striatum. The game was designed to elicit prediction-, prediction error-, reward-, and future investment-related signals associated with revelation of market price movements on 120 separate trials over 6 historical markets (20 moves per market). On each trial participants chose a level of investment for their current endowment with possible choices from 0 to 100% with 10% increments (Figure 3a) and submitted their choices. Then participants were shown the market move (its change in value, Figure 3a) to end a trial. Our behavioral data showed that over all subjects, bets were distributed bimodally across these 11 possible investment choices (Figure 3b), with investment levels distributed around 50% and also peaking at 100%. RPEs measure the difference between the return on a trial and a prediction. We defined the return as the fractional change in wealth (combining the current bet size and market change), and the prediction as an average of recent previous returns. We also scaled this difference by the SD of those previous returns (see Supplementary Materials and Methods). Across the cohort, this led to a spectrum of positive and negative RPEs (Figure 3c). Further, based on our previous results (Kishida et al, 2016) we considered counterfactual as well as real outcomes depending on the current betting level. That study suggested that negative outcomes could be experienced in two ways: the first were those outcomes where negative RPEs were experienced and so events were ‘worse than expected’ (as bets were high). The second were counterfactual negative events, in which positive RPEs occurred when bets were low and thus produced regret over a foregone gain. We correlated the whole collection of events and choices with relative fluctuations in transmitter concentrations.
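
The RPE definition given here (return minus the average of recent returns, scaled by their SD) can be sketched as below. This is a minimal sketch, not the procedure from the Supplementary Materials: the window of 5 previous trials, the use of the population SD, the minimum history of 2 trials, and all names are assumptions made for illustration.

```python
from statistics import mean, pstdev


def reward_prediction_errors(bets, market_returns, window=5):
    """Compute a scaled RPE for each trial of one market.

    bets: fraction of wealth invested on each trial (0.0 to 1.0).
    market_returns: fractional market change revealed on each trial.
    window: number of previous returns used for the prediction (assumed value).
    Returns None where the history is too short or has zero spread.
    """
    # Fractional change in wealth: wealth goes from W to W * (1 + bet * market_return).
    returns = [b * r for b, r in zip(bets, market_returns)]
    rpes = []
    for t, ret in enumerate(returns):
        history = returns[max(0, t - window):t]
        if len(history) < 2:
            rpes.append(None)
            continue
        sd = pstdev(history)
        rpes.append((ret - mean(history)) / sd if sd > 0 else None)
    return rpes


bets = [0.5, 0.5, 1.0, 0.2]
market_returns = [0.10, -0.10, -0.20, 0.30]
rpes = reward_prediction_errors(bets, market_returns)
# Trial 3: fully invested in a falling market, giving a negative RPE (actual loss).
# Trial 4: a positive RPE with only 20% invested, ie a counterfactual loss.
```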

Investment game and distributions of bets and RPEs over trials. (a) In this figure we provide an illustration of the overall task design. To investigate the role of serotonin we used an investment game (Lohrenz et al, 2007) where participants were endowed with an initial 100 ‘points’ and were instructed to invest a percentage of this amount in a stock market (historic markets, eg, the 1929 Wall St crash). Participants could choose to invest 0–100% (color bar) in 10% increments (blue arrow, Bet(t)). On each trial participants submitted their investment (upper panel) and 840 ms later (±12 ms std) were shown the market return (middle panel). On the outcome, participants either lost or gained in accordance with their investment. From these market moves we calculated the reward prediction error on that trial. Following this outcome, participants submitted their next investment (blue arrow, Bet(t+1)) at their own pace (lower panel). (b) Distribution of investment choices over all participants. (c) Distribution of reward prediction errors, calculated over each market move over all participants.

Serotonin Encodes Loss Prediction Errors

We assessed serotonin responses in voltammograms at a repetition frequency of 10 Hz using the penalized regression models developed above. We examined fluctuations in estimated concentrations at the time of trial outcomes (as the market move is revealed) and tested for the serotonergic encoding of prediction errors. Figure 4a displays the serotonin transients associated with positive and negative RPEs. Remarkably, when considering all betting levels, serotonin displayed an upward fluctuation to negative prediction errors and a downward fluctuation to positive prediction errors (Figure 4a and see also Supplementary Figure S3). Given the potential difference in response to negative RPEs at high and low betting levels (loss and counterfactual loss/foregone gain, respectively), we examined serotonin fluctuations across a median split of bet levels. Figure 4b shows that this encoding reversed for the lower half of bets with upward serotonin fluctuations encoding positive errors and downward fluctuations encoding negative errors. The inversion of the encoding can be understood as the presence of a counterfactual term for serotonin, which responds to negative outcomes both in the context of a surprising loss when one was highly invested in the market and a surprising gain when one was not. The difference between Figure 4b and Supplementary Figure S3 is the baseline normalization of the signals to 100 ms before revelation of the outcome (Figure 4b) or at the time of revelation (Supplementary Figure S3). We present both as a form of exploratory result. They suggest that the dynamics of how the prediction component of the prediction error is represented in serotonin concentration would be worth exploring in future studies; in particular, higher temporal resolution in the voltammetric signal could elucidate early dynamics that alter baseline properties (Schmidt et al, 2013; Supplementary Figure S3). Here the interaction of RPE and bet amount was significant for the earlier baseline (Figure 4b). In our Supplementary Information (Supplementary Figure S3) we also include a random effects analysis across patients to ensure that our results are not driven by only a few subjects. These additional analyses support our findings when accounting for individual differences.

Serotonin encodes negative reward prediction errors at high bets. (a) Testing across all outcomes and separating according to either a concomitant positive or negative reward prediction error, we found that serotonin fluctuated significantly more positively for negative (black line) compared to positive (cyan line) reward prediction errors. Six two-sample t-tests were performed over temporal bins (100–600 ms) comparing concentration levels; significant effects of RPE were observed at 300 and 400 ms (*p<0.05). Here we baseline corrected at −100 ms. (b) Two-way analyses of variance of serotonin’s transient response at presentation of the outcome or market move were performed for six temporal bins (100–600 ms), with factors reward prediction error polarity (positive and negative) and bet level (low, 0–50%; high, 60–100%). These revealed a significant interaction of reward prediction error and bet level at 100 (F=14.34, p=0.0002) and 500 ms (F=4.89, p=0.027). Post hoc two-sample t-tests were performed using permutation testing to assess within bet range differences in the response to negative compared to positive reward prediction errors. For the high bet range (60–100% invested), serotonin transients were significantly greater for negative compared to positive reward prediction errors at 100 ms, p=0.001; 300 ms, p=0.011; 400 ms, p=0.005; and 500 ms, p=0.01. For the low bet range (0–50% invested), responses were significantly greater for positive compared to negative reward prediction errors at 100 ms (p=0.016). Only the differential response at 100 ms in the high bet case survived FWE-correction p=0.005 (**p⩽0.005, *p⩽0.05, (**)FWE-corrected). We also applied one-sample, two-sided t-tests in order to investigate the effects of RPE and bet size on 5-HT responses as compared to baseline. We find that the difference is driven by significant decreases in 5-HT following positive reward prediction errors at high bets, and following negative reward prediction errors at low bets («p<0.005, <p<0.05). Bar graphs depict the mean and SEM. Comparisons of transients with an alternate baseline are presented in Supplementary Figure S3. (c) The area under the curve in b revealed a significant interaction (F=7.13, p=0.0077) of RPE and bet level with larger (in time and amplitude) negative-going transients for positive reward prediction error responses in the high bet condition. (d) We tested the serotonin response at 100 ms and its correlation with the sign and polarity of the RPE. After omitting 65 outliers (~3% of trials) that may drive the effect (outliers defined as RPEs with an absolute magnitude >3 and Z-scores with an absolute magnitude >5) we see a small but significant correlation for the different bet levels. Serotonin transients are negatively correlated with the RPE for high bets (R=−0.0714; p=0.0113) and positively correlated with the RPE for low bets (R=0.0653; p=0.0494). To explore these results more granularly, we examined individual bins. We found that the only significant individual bins were at (20 and 30%), (60 and 70%), and (80 and 90%) with correlation coefficients and p-values of (R=0.19; p=0.01), (R=−0.08; p=0.06), and (R=−0.14; p=0.009), respectively. This suggests that a putative ‘indifference point’ for counterfactual and actual losses occurs around 40–50%.

To allow for the duration of serotonergic signaling to be altered in response to RPEs, we computed the area under the curve to indicate ‘cumulative serotonin’ responses. For this analysis the interaction of prediction error (positive or negative) and bet invested (high or low) was significant (Figure 4c). In particular, the response to positive RPEs at high bets seemed to induce a depression in serotonin that was more prolonged than in the low bet condition. Further, a parametric analysis revealed a small but significant negative correlation between the serotonin response and RPE at high bets and a small but significant positive correlation between serotonin response and RPE at low bets (Figure 4d). To examine the subjective effects of actual and counterfactual gains and losses, rather than to RPE per se, we conducted a further supplemental analysis (Supplementary Figure S4). This revealed a lack of a parametric effect in gains or losses (Supplementary Figure S4).
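
One way to compute such a ‘cumulative serotonin’ measure is the trapezoidal rule over the six samples from 100 to 600 ms. The source does not state which integration rule was used, so the rule, the function name, and the toy transients below are assumptions.

```python
def area_under_curve(transient, dt_ms=100):
    """Trapezoidal area under equally spaced samples (units: Z-score x ms)."""
    return sum((a + b) / 2 * dt_ms for a, b in zip(transient, transient[1:]))


# Hypothetical Z-scored transients sampled at 100, 200, ..., 600 ms.
brief_rise = [0.5, 0.2, 0.0, 0.0, 0.0, 0.0]          # large but short-lived
prolonged_dip = [-0.2, -0.2, -0.2, -0.2, -0.2, -0.2]  # small but sustained

auc_rise = area_under_curve(brief_rise)    # about 45
auc_dip = area_under_curve(prolonged_dip)  # about -100
```

The example shows why the measure is sensitive to duration: the sustained dip has a smaller peak amplitude than the brief rise but a larger absolute area.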

Serotonin Protects Investors from Loss

Given these bet-dependent prediction error transients, we sought to establish serotonin’s influence on investment decisions. The effect of counterfactual outcomes on both dopamine (Kishida et al, 2016) and serotonin (Figure 4) suggests that it is crucial to perform the analysis of action encoding (betting more or less) at different bet levels as a bet of 0% could result in large foregone gains (ie, counterfactual losses), while a bet of 100% could result in large actual losses. In other words, in the context of this task, one’s next move carries two distinct risks of loss on the upcoming trial. We tested whether fluctuations in 5-HT could be used to predict the bet level on the next trial. Using a multiple linear regression we tested for serotonin and game factors in predicting the next decision. Specifically, our independent variables included the area under the curve of the 5-HT transient from 100 to 600 ms, the bet level, the polarity of the RPE at trial (t), as well as their interactions. Our dependent variable was the change in bet at trial (t+1). Our regression model revealed significant predictive power for the upcoming decision (F-statistic vs constant model: 32.4, p-value <0.00001; Supplementary Table 2). Importantly, the regressor describing the interaction of serotonin and current bet level was a significant predictor of the upcoming decision (p=0.04). This was a negative interaction, indicating that for large serotonin responses and large bets, participants tended to decrease their bet, and for large serotonin responses and small current bets, participants tended to increase their bets. We found that the three-way interaction of serotonin, bet level, and RPE sign was at trend level significance (p=0.13; Supplementary Table 2).
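
The structure of such a regression (three predictors plus all of their interactions) can be sketched with ordinary least squares on synthetic data. Everything below is illustrative: the coefficient ordering, the scaling of the bet to 0–1, the RPE sign coded as −1 or +1, and the noise-free synthetic data are assumptions, and the sketch does not reproduce the statistics in Supplementary Table 2.

```python
from itertools import product


def design_row(auc, bet, rpe_sign):
    """Intercept, three main effects, three two-way and one three-way interaction."""
    return [1.0, auc, bet, rpe_sign,
            auc * bet, auc * rpe_sign, bet * rpe_sign,
            auc * bet * rpe_sign]


def solve_least_squares(X, y):
    """Solve the normal equations (X'X) b = X'y by Gauss-Jordan elimination."""
    p = len(X[0])
    A = [[sum(r[i] * r[j] for r in X) for j in range(p)]
         + [sum(r[i] * t for r, t in zip(X, y))] for i in range(p)]
    for c in range(p):
        pivot = max(range(c, p), key=lambda r: abs(A[r][c]))  # partial pivoting
        A[c], A[pivot] = A[pivot], A[c]
        for r in range(p):
            if r != c:
                f = A[r][c] / A[c][c]
                A[r] = [a - f * b for a, b in zip(A[r], A[c])]
    return [A[i][p] / A[i][i] for i in range(p)]


# Synthetic, noise-free trials with a built-in negative AUC x bet interaction.
trials = list(product([-1.0, 0.0, 1.0, 2.0], [0.0, 0.5, 1.0], [-1.0, 1.0]))
X = [design_row(auc, bet, sign) for auc, bet, sign in trials]
y = [0.05 - 0.3 * auc * bet for auc, bet, _ in trials]  # change in bet at t+1
coef = solve_least_squares(X, y)
# coef[4] is the AUC x bet term; it recovers the built-in value of -0.3.
```

With a negative coefficient on this term, a large positive transient predicts a larger reduction in bet the higher the current bet is, which is the direction of the effect described in the text.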

To examine and illustrate these regression effects, we first separated out ranges of current bet levels (Figure 5a), and examined how serotonin transients were associated with decisions following a negative RPE (Figure 5). We tested the relationship between serotonin responses and current bet levels at trial (t) for decisions to ‘lower’ and for decisions to ‘hold or raise’ the bet on trial (t+1) (Figure 5b). These analyses are a recapitulation of the negative RPE responses in Figure 4 but separated according to what the subject decides to do next. We found that under the conditions of the decision to withdraw from the market following negative RPEs there was a strong positive correlation between 5-HT and current betting levels (Figure 5c). This is important given that withdrawal from the market (ie, lowering one’s bet) is consistent with the hypothesized role for serotonin in forms of avoidance (Dayan and Huys, 2008). This striking parametric effect is indicated in the serotonin time courses of Figure 5b. We can see that reducing the bet from a high amount implies reducing the risk of actual loss, and is associated with positive serotonin fluctuations (Figure 5b). Reducing the bet from an already low amount implies increasing the risk of counterfactual losses, and is associated with negative fluctuations. A trend toward a significant positive correlation for serotonin and decisions to hold or raise one’s bets was also observed. In the time courses we can see that particularly at 10–20% bet levels, serotonin rises following a negative RPE and is associated with a subsequent raise-or-hold bet decision. This direction is again consistent with serotonin protecting against counterfactual losses on the next trial.

Serotonin and active avoidance following negative reward prediction errors. (a) Depiction of ‘next actions’. Responses to trial (t) were analyzed for bet(t)=(0%), (10–20%), (30–40%), (50–60%), (70–80%), and (90–100%). For each of these six levels we examined ‘lower bet’ next actions (black arrows) and ‘hold-or-raise’ bet next actions (gray arrows). (b) The n=842 negative RPE transients presented in Figure 4a and b are represented here but separated according to next-bet decision and current betting level. These results are provided in order to explore the significant negative interaction between serotonin and current bet on predicting change in bet (Supplementary Table 2). Consistent with this negative interaction in the regression analysis, we observe that large positive 5-HT transients at large bets predict a lowering of the bet at trial t+1 (black line, right panels), while dips in 5-HT are associated with reducing one’s bet at low bet levels to even lower levels (black line, left panels). The opposite effect is observed for holding or raising one’s bets (gray lines), with 0% not showing any significant transient effects. Significance here is indicated for uncorrected t-tests against zero (*p<0.05, **p<0.01, ***p<0.005). (c) We applied a correlation analysis to examine the relationship between serotonin and current bet levels when the next decision is to lower one’s bet. We find that over all ‘lower bet’ decisions, serotonin and the current bet level were positively correlated (R=0.3; p<0.00001). This indicates that market withdrawal is affected by serotonin following poor outcomes. More specifically, it indicates that serotonin may prevent further withdrawal (when investment is already low) and promote withdrawal (when investment is high). (d) Correlating decisions to hold or raise bets suggests the opposite effect (but at only trend-level significance).

In Figure 6 we explore the same dependencies but following positive RPEs. Here at low bets, again at the 10–20% levels, we observe an upgoing serotonin transient (Figure 6b) that dominates the low bet regime (Figure 4b). At this betting level both ‘lower’ and ‘raise-hold’ decisions are associated with a positive transient. Significant negative-going fluctuations are observed at the lowest betting level of 0%. No parametric effects are observed for either decision following positive RPEs (Figure 6c and d).

Serotonin and active avoidance following positive reward prediction errors. (a) Depiction of ‘next actions’ as per negative RPE analysis in Figure 5. (b) Serotonin transients (n=882) following positive reward prediction errors as per Figure 4a and b but shown here separated according to decision on next trial and current bet level (lower bet on trial (t+1): cyan line; hold-or-raise bet on trial (t+1): dark green line). Only at low bets (where counterfactual losses dominate) did we observe large transients; the direction of the transient, however, did not discriminate between next-bet decisions. (c) Unlike following negative RPEs, no parametric effect, in terms of current bet level and serotonin response, was observed for the decision to lower the bet following positive reward prediction errors. (d) Similarly, no parametric effects were observed for serotonin responses preceding a decision to hold or raise bet levels (following a positive reward prediction error).

To investigate the timing of these decision-related transients further, we extracted the peak 5-HT response from every trial and tested whether a faster time to peak corresponded with investment decisions on the next trial. For responses to negative RPEs we found no timing effects in an analysis of decision × bet level. However, in response to positive RPEs we saw a significant effect of time to peak on the decision to lower, hold, or raise one’s bets following the outcomes. Specifically, the decision to raise one’s bets was associated with slower 5-HT transient peaks as compared to decisions to hold or reduce current betting levels. No effect of bet level or interaction was observed (Supplementary Figure S5). This may suggest that fast serotonin signals are associated with withdrawal from market investment, even when the ‘going is good’.
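
The peak-extraction step can be illustrated with a short sketch. Everything specific here is an assumption made for illustration: the text does not state how the peak was defined, so the code takes it as the sample with the largest absolute deviation from the first (outcome-time) sample; the sampling interval, function names, and toy traces are likewise hypothetical.

```python
def time_to_peak(trace, sample_interval_s):
    """Return (peak_value, time_to_peak_s) for one post-outcome trace.

    Assumption: the peak is the sample with the largest absolute deviation
    from the first sample; ties resolve to the earliest such sample.
    """
    baseline = trace[0]
    deviations = [abs(value - baseline) for value in trace]
    peak_index = max(range(len(trace)), key=lambda i: deviations[i])
    return trace[peak_index], peak_index * sample_interval_s

def mean_time_to_peak_by_decision(trials, sample_interval_s):
    """Average time to peak for each next-trial decision label."""
    times = {}
    for decision, trace in trials:
        _, t_peak = time_to_peak(trace, sample_interval_s)
        times.setdefault(decision, []).append(t_peak)
    return {decision: sum(ts) / len(ts) for decision, ts in times.items()}

# Hypothetical traces (arbitrary units) sampled every 0.1 s after the outcome.
toy_trials = [
    ("lower", [0.0, 0.5, 0.2, 0.1]),
    ("hold", [0.0, 0.2, 0.4, 0.1]),
    ("raise", [0.0, 0.1, 0.3, 0.6]),
]
mean_times = mean_time_to_peak_by_decision(toy_trials, sample_interval_s=0.1)
```

A comparison of `mean_times` across decision labels would then feed whatever statistical test is chosen; the sketch stops short of that because the test itself is not specified here.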

Discussion

We used a modern statistical method to extract serotonin signals from fast-scan cyclic voltammetric data. We showed in vitro using a flow cell that we could extract separate 5-HT and dopamine signals from a single voltammogram, even at variable pH, and then applied our method to recordings taken in vivo from human patients with Parkinson’s disease playing an investment game. We found that at the time that the outcome of a round was revealed, serotonin encoded a prediction error for actual reward when subjects were substantially invested, and an inverted prediction error for counterfactual reward (ie, regret) when subjects had failed to invest. Moreover, these serotonin concentration fluctuations on a trial were positively correlated with protective choices made by subjects in the subsequent trial following negative RPEs.

Our findings provide novel evidence that serotonin encodes loss-related prediction errors. This finding ratifies and extends previous theoretical accounts, which hypothesized a role for serotonin in aversive prediction and learning (Deakin, 1983; Daw et al, 2002). For the high bet case, our findings demonstrate the opposite of standard accounts of the activity of dopamine neurons (Schultz et al, 1997; Kishida et al, 2016) or transient fluctuations of dopamine concentrations (Flagel et al, 2011) recorded in conventional Pavlovian or instrumental paradigms in animals other than humans. For the low bet case, our results for serotonin are the mirror image of those for dopamine on the same task, showing a sensitivity to counterfactual as well as actual outcomes (Kishida et al, 2016).

Our second major finding was an emergent action code in serotonin that could be used to predict the change in bet following negative RPEs. In particular, our findings related to betting less or holding on the next trial are consistent with computational accounts of serotonin in active avoidance (Dayan and Huys, 2008; Dayan and Huys, 2009). However, here too the signaling was not unidirectional. Positive fluctuations to lower bets and negative ones to holding predominated when current bet levels were high. They flipped polarity at 50%, however, suggesting that serotonin drops when avoidance is overall detrimental and likely to expose the player to foregone gains. Colloquially this might be deemed a signal that both ‘secures in place’ when risk is already low and ‘retreats’ from dangerous high-risk investments. The dependencies on prior sensory experience and the sensitivity to context (previous bet amounts) may help clarify some formerly confusing and seemingly contradictory findings with respect to learning signals and reward error processing (Yacubian et al, 2006; Figures 5b and c, and 6a).

Recently, serotonergic firing in rodents has been associated with patience (Miyazaki et al, 2012). Optogenetic stimulation of rodent serotonergic neurons in the dorsal raphé has been found to enable waiting for delayed reward (Miyazaki et al, 2014). Similarly, fiber photometry recordings from the dorsal raphé nucleus have recently demonstrated increased tonic firing of serotonergic neurons during a reward-related anticipatory period, and phasic firing on reward acquisition (Li et al, 2016). In future work, an experimental manipulation of the time from bet submission to outcome in our task would enable us to formally test the role of serotonin in signaling patience for anticipated reward. With regard to the outcome acquisition-related activity (Li et al, 2016), we also find increased 5-HT in response to positive RPEs, but only in the context of low bets (when outcomes could have been better). Studies that systematically vary actual and counterfactual gains and losses might be required to unravel these effects in animals.

Our findings are more directly comparable to studies that investigated lose-shift and win-stay behaviors. For example, Bari et al (2010) have shown that an acute dose of SSRIs in rodents can increase lose-shift behavior but that longer-term chronic administration increased win-stay behaviors. Similar effects on lose-shift behaviors in humans have been associated with genetic polymorphisms in the serotonin transporter gene (SERT), which was dissociated from dopamine transporter polymorphisms on perseveration (den Ouden et al, 2013) in the same task. We show that serotonin estimates from our participants are associated with lose-shift behaviors. Interestingly, however, the associated shift behavior is not a simple ‘withdrawal’ from the game. Rather, the shift behavior associated with serotonin increases (Figure 5b) is toward the center of our betting levels. These effects are observed following negative RPEs and might mean that the player is not exposed to ‘too much’ risk while also ensuring that they do not ‘miss out’ on future gains.

Pharmacological manipulations using tryptophan depletion in humans support this idea of behavioral effects of 5-HT in the face of aversive outcomes, with reports of both Pavlovian and instrumental predictions of negative events reduced following dietary depletion (Crockett et al, 2012), as well as reports of a specific model-based or goal-directed deficit (Worbe et al, 2016). Overall, our methodology provides a unique opportunity to understand the role of serotonin in the human brain in computational terms. Relating these findings to our previous work, we provide evidence for serotonin’s loss-opponency to dopamine’s gain-dependent signals (Kishida et al, 2016) and further extend this valence-dependent activity with evidence for a role in subsequent action selection. Our analysis could be further extended to examine, for example, individual differences in RPEs or in decision-making. This may reflect altered model-based goals. Testing such a proposal is beyond the scope of the present work, but would start from developing a quantitative parametrized computational account of the full task. For example, the proposed role of serotonin in regulating temporal discounting and impulsivity (Doya, 2007) could be explored with a temporal difference model of the task (and manipulations of cue timings, for example). Our results, at least informally, are consistent with a role for serotonin in controlling impulsivity. In particular, following negative RPEs, the impulsive choice may be to further lower one’s investment. However, at low bet levels, serotonin increases are associated with decisions to ‘hold’ or even ‘raise’ the bet. This may be a protective signal that guards against over-reactions to negative outcomes. Further, in our supplementary analysis (Supplementary Figure S5), we show that after outcomes that elicit positive RPEs, the serotonin transient is faster for lower or hold decisions, perhaps preventing an impulsive raise in betting levels.
Our reward-prediction error and action encoding transients might also be considered in light of models of risk assessment. Risk, typically modeled as predicted outcome variance, has been mapped to serotonin function in the basal ganglia (eg, in Balasubramani et al, 2014). Our transient serotonin increases to negative RPEs at high bets and low bets are associated with decisions to lower the bet and to hold or raise bets, respectively. Thus, 5-HT may seek a mid-point where the potential variability in actual and counterfactual losses is balanced (eg, minimizing maximal losses).
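
The ‘minimizing maximal losses’ intuition can be made concrete with a toy calculation. This is a sketch under simplifying assumptions that are not part of the reported analysis: the market is taken to move up or down by the same hypothetical fraction, the actual loss is the invested share times a downward move, and the counterfactual loss is the uninvested share times an upward move. The function and variable names are illustrative only.

```python
def worst_case_losses(bet_fraction, market_move):
    """Worst-case actual and counterfactual loss for a single bet.

    bet_fraction: share of the portfolio invested, between 0 and 1.
    market_move: size of an assumed symmetric up-or-down move (> 0).
    """
    actual_loss = bet_fraction * market_move                   # market falls
    counterfactual_loss = (1.0 - bet_fraction) * market_move   # market rises
    return actual_loss, counterfactual_loss

def minimax_bet(bet_levels, market_move):
    """Bet level whose larger worst-case loss is smallest."""
    return min(bet_levels, key=lambda b: max(worst_case_losses(b, market_move)))

bet_levels = [i / 10 for i in range(11)]  # 0%, 10%, ..., 100%
best_bet = minimax_bet(bet_levels, market_move=0.2)
```

Under these assumptions the two worst-case losses are equal only at a 50% bet, so the minimax choice sits at the mid-point of the betting range; asymmetric market moves would shift that point.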

Limitations of the current study are related to both the human brains from which the signals were acquired and the specificity of voltammetric signal extraction. Our cohort comprised 14 patients with Parkinson’s disease. This pathology has been associated with aberrations in some decision-making parameters, including impulsivity (Voon et al, 2014). Though our paradigm did not address learning per se, the effect of valence on learning and subsequent decision-making has been shown to be affected by dopamine medication. In patients on medication positive outcomes tend to affect learning more prominently, while patients off dopamine medication show a greater sensitivity to negative outcomes, effects thought to be controlled by a high dopamine ‘tone’ or ‘floor’ on medication (Frank et al, 2004). In the absence of learning, a similar off/on study showed that decision-making tends to be improved by dopamine medications in patients generally (Shiner et al, 2012), though here the loss domain was not explicitly investigated. Other studies have corroborated Frank et al (2004), showing reduced sensitivity to negative feedback when patients are on medications (Euteneuer et al, 2009). Our task did not require learning. In other decision-making studies without learning, it has been shown that patients with Parkinson’s disease and age-matched controls perform comparably (unlike Parkinson’s patients with dementia, who are not in our cohort) (Delazer et al, 2009). Hence, the type of game we chose for our participants has been shown to engender near-normal performance. Nevertheless, the pattern of choice selection may not fully reflect the statistics of choice in people without neurological disease. Second, patients with Parkinson’s disease may exhibit cross-loading of these particular neurotransmitters into the alternate axonal terminals.
Specifically, levodopa is thought to induce a ‘false transmission’ of dopamine via serotonin axons, and may contribute to the dyskinesias associated with long-term L-Dopa use (Mathur and Lovinger, 2012; Politis et al, 2014). This cross-loading would reduce the distinct effects of dopamine and serotonin in the striatum (Montague et al, 2016). These caveats deserve study in their own right using this type of protocol. Here we show the feasibility of dissociating dopamine from serotonin, and thus the procedures may be extended to test particular cross-talk hypotheses that might contribute to movement and decision-making impairments. Our measurements were restricted to the caudate and putamen, according to each patient’s pre-planned surgical trajectory for the eventual placement of the DBS electrode (which followed after our recordings had been completed along the same guide tube). These regions have been shown to activate in response to real and counterfactual RPEs in previous fMRI studies of this task: caudate (Lohrenz et al, 2007) and putamen (Chiu et al, 2008). However, we could not access the ventral striatum with our probe, where RPEs are pronounced (Pagnoni et al, 2002), and thus cannot rule out a role for 5-HT in modulating this or other regions, such as the orbitofrontal cortex (Knutson and Cooper, 2005), during this task. Furthermore, our extraction model only accounted for dopamine, serotonin, and pH changes, and does not account for systematic voltammetric changes induced by other neuromodulators or metabolites. For example, serotonin’s metabolite, 5-hydroxyindoleacetic acid, has been observed at levels higher than serotonin in voltammetric recordings in vivo in rodents, with similar oxidation and reduction characteristics.
Though we cannot directly rule out the role of a metabolite, its systematic (and speeded) fluctuation in concert with decision variables would still suggest a role for the serotonergic system in reacting to negative outcomes and pose important questions for future experimental and theoretical study.

Funding and disclosure

This work was supported by the following: a Wellcome Trust Principal Research Fellowship (PRM, TL), The Gatsby Charitable Foundation (PD), NINDS R01NS092701 (PRM, KTK, TL), NIH 5KL2TR001421 (KTK), Virginia Tech (PRM, RJM, KTK, TL), Wake Forest School of Medicine (KTK). The authors report no conflict of interest.

References

Bari A, Theobald DE, Caprioli D, Mar AC, Aidoo-Micah A, Dalley JW et al (2010). Serotonin modulates sensitivity to reward and negative feedback in a probabilistic reversal learning task in rats. Neuropsychopharmacology 35: 1290–1301.

Balasubramani PP, Chakravarthy VS, Ravindran B, Moustafa AA (2014). An extended reinforcement learning model of basal ganglia to understand the contributions of serotonin and dopamine in risk-based decision making, reward prediction, and punishment learning. Front Comput Neurosci 8: 47.

Boureau Y-L, Dayan P (2011). Opponency revisited: competition and cooperation between dopamine and serotonin. Neuropsychopharmacology 36: 74–97.

Chiu PH, Lohrenz TM, Montague PR (2008). Smokers' brains compute, but ignore, a fictive error signal in a sequential investment task. Nat Neurosci 11: 514–520.

Cohen JY, Amoroso MW, Uchida N (2015). Serotonergic neurons signal reward and punishment on multiple timescales. Elife 4: e06346.

Cools R, Roberts AC, Robbins TW (2008). Serotoninergic regulation of emotional and behavioural control processes. Trends Cogn Sci 12: 31–40.

Crockett MJ, Clark L, Apergis-Schoute AM, Morein-Zamir S, Robbins TW (2012). Serotonin modulates the effects of Pavlovian aversive predictions on response vigor. Neuropsychopharmacology 37: 2244–2252.

Crockett MJ, Clark L, Robbins TW (2009). Reconciling the role of serotonin in behavioral inhibition and aversion: acute tryptophan depletion abolishes punishment-induced inhibition in humans. J Neurosci 29: 11993–11999.

Daw ND, Kakade S, Dayan P (2002). Opponent interactions between serotonin and dopamine. Neural Netw 15: 603–616.

Dayan P, Huys QJ (2008). Serotonin, inhibition, and negative mood. PLoS Comput Biol 4: e4.

Dayan P, Huys QJ (2009). Serotonin in affective control. Annu Rev Neurosci 32: 95–126.

Deakin J (1983). Roles of serotonergic systems in escape, avoidance and other behaviours. Theory Psychopharmacol 2: 149–193.

Delazer M, Sinz H, Zamarian L, Stockner H, Seppi K, Wenning G et al (2009). Decision making under risk and under ambiguity in Parkinson’s disease. Neuropsychologia 47: 1901–1908.

den Ouden HE, Daw ND, Fernandez G, Elshout JA, Rijpkema M, Hoogman M et al (2013). Dissociable effects of dopamine and serotonin on reversal learning. Neuron 80: 1090–1100.

Diekhof EK, Falkai P, Gruber O (2008). Functional neuroimaging of reward processing and decision-making: a review of aberrant motivational and affective processing in addiction and mood disorders. Brain Res Rev 59: 164–184.

Dölen G, Darvishzadeh A, Huang KW, Malenka RC (2013). Social reward requires coordinated activity of nucleus accumbens oxytocin and serotonin. Nature 501: 179–184.

Doya K (2007). Reinforcement learning: computational theory and biological mechanisms. HFSP J 1: 30–40.

Euteneuer F, Schaefer F, Stuermer R, Boucsein W, Timmermann L, Barbe MT et al (2009). Dissociation of decision-making under ambiguity and decision-making under risk in patients with Parkinson's disease: a neuropsychological and psychophysiological study. Neuropsychologia 47: 2882–2890.

Flagel SB, Clark JJ, Robinson TE, Mayo L, Czuj A, Willuhn I et al (2011). A selective role for dopamine in stimulus-reward learning. Nature 469: 53–57.

Fonseca MS, Murakami M, Mainen ZF (2015). Activation of dorsal raphe serotonergic neurons promotes waiting but is not reinforcing. Curr Biol 25: 306–315.

Frank MJ, Seeberger LC, O'Reilly RC (2004). By carrot or by stick: cognitive reinforcement learning in parkinsonism. Science 306: 1940–1943.

Göthert M (2016). 5-HT receptors mediating pre-synaptic autoinhibition in central serotoninergic nerve. Serotonin 56.

Graeff F, Deakin J (1991). 5-HT and mechanisms of defence. J Psychopharmacol 5: 305–315.

Hashemi P, Dankoski EC, Wood KM, Ambrose RE, Wightman RM (2011). In vivo electrochemical evidence for simultaneous 5‐HT and histamine release in the rat substantia nigra pars reticulata following medial forebrain bundle stimulation. J Neurochem 118: 749–759.

Hu X-Z, Lipsky RH, Zhu G, Akhtar LA, Taubman J, Greenberg BD et al (2006). Serotonin transporter promoter gain-of-function genotypes are linked to obsessive-compulsive disorder. Am J Hum Genet 78: 815–826.

Jacobs BL (1994). Serotonin, motor activity and depression-related disorders. Am Sci 82: 456–463.

John CE, Jones SR (2007). Fast scan cyclic voltammetry of dopamine and serotonin in mouse brain slices. In: Michael AC, Borland LM (eds). Electrochemical Methods for Neuroscience. CRC Press/Taylor & Francis: Boca Raton, FL. Chapter 4.

John CE, Jones SR (2007). Voltammetric characterization of the effect of monoamine uptake inhibitors and releasers on dopamine and serotonin uptake in mouse caudate-putamen and substantia nigra slices. Neuropharmacology 52: 1596–1605.

Kaye W, Gendall K, Strober M (1998). Serotonin neuronal function and selective serotonin reuptake inhibitor treatment in anorexia and bulimia nervosa. Biol Psychiatry 44: 825–838.

Kishida KT, Saez I, Lohrenz T, Witcher MR, Laxton AW, Tatter SB et al (2016). Subsecond dopamine fluctuations in human striatum encode superposed error signals about actual and counterfactual reward. Proc Natl Acad Sci USA 113: 200–205.

Kishida KT, Sandberg SG, Lohrenz T, Comair YG, Sáez I, Phillips PE et al (2011). Sub-second dopamine detection in human striatum. PLoS ONE 6: e23291.

Knutson B, Cooper JC (2005). Functional magnetic resonance imaging of reward prediction. Curr Opin Neurol 18: 411–417.

Li Y, Zhong W, Wang D, Feng Q, Liu Z, Zhou J et al (2016). Serotonin neurons in the dorsal raphe nucleus encode reward signals. Nat Commun 7: 10503.

Liu Z, Zhou J, Li Y, Hu F, Lu Y, Ma M et al (2014). Dorsal raphe neurons signal reward through 5-HT and glutamate. Neuron 81: 1360–1374.

Lohrenz T, McCabe K, Camerer CF, Montague PR (2007). Neural signature of fictive learning signals in a sequential investment task. Proc Natl Acad Sci USA 104: 9493–9498.

Lowry C (2002). Functional subsets of serotonergic neurones: implications for control of the hypothalamic‐pituitary‐adrenal axis. J Neuroendocrinol 14: 911–923.

Mathur BN, Lovinger DM (2012). Serotonergic action on dorsal striatal function. Parkinsonism Relat Disord 18: S129–S131.

Miyazaki K, Miyazaki KW, Doya K (2012). The role of serotonin in the regulation of patience and impulsivity. Mol Neurobiol 45: 213–224.

Miyazaki KW, Miyazaki K, Doya K (2012). Activation of dorsal raphe serotonin neurons is necessary for waiting for delayed rewards. J Neurosci 32: 10451–10457.

Miyazaki KW, Miyazaki K, Tanaka KF, Yamanaka A, Takahashi A, Tabuchi S et al (2014). Optogenetic activation of dorsal raphe serotonin neurons enhances patience for future rewards. Curr Biol 24: 2033–2040.

Montague PR, Kishida KT, Moran RJ, Lohrenz TM (2016). An efficiency framework for valence processing systems inspired by soft cross-wiring. Curr Opin Behav Sci 11: 121–129.

Montague PR, McClure SM, Baldwin P, Phillips PE, Budygin EA, Stuber GD et al (2004). Dynamic gain control of dopamine delivery in freely moving animals. J Neurosci 24: 1754–1759.

Must A, Juhász A, Rimanóczy Á, Szabó Z, Kéri S, Janka Z (2007). Major depressive disorder, serotonin transporter, and personality traits: why patients use suboptimal decision-making strategies? J Affect Disord 103: 273–276.

Pagnoni G, Zink CF, Montague PR, Berns GS (2002). Activity in human ventral striatum locked to errors of reward prediction. Nat Neurosci 5: 97–98.

Politis M, Wu K, Loane C, Brooks DJ, Kiferle L, Turkheimer FE et al (2014). Serotonergic mechanisms responsible for levodopa-induced dyskinesias in Parkinson’s disease patients. J Clin Invest 124: 1340–1349.

Portas CM, Bjorvatn B, Ursin R (2000). Serotonin and the sleep/wake cycle: special emphasis on microdialysis studies. Prog Neurobiol 60: 13–35.

Risch N, Herrell R, Lehner T, Liang K-Y, Eaves L, Hoh J et al (2009). Interaction between the serotonin transporter gene (5-HTTLPR), stressful life events, and risk of depression: a meta-analysis. JAMA 301: 2462–2471.

Schmidt R, Leventhal DK, Mallet N, Chen F, Berke J (2013). Canceling actions involves a race between basal ganglia pathways. Nat Neurosci 16: 1118–1124.

Schultz W, Dayan P, Montague PR (1997). A neural substrate of prediction and reward. Science 275: 1593–1599.

Shiner T, Seymour B, Wunderlich K, Hill C, Bhatia KP, Dayan P et al (2012). Dopamine and performance in a reinforcement learning task: evidence from Parkinson’s disease. Brain 135: 1871–1883.

Tanaka SC, Shishida K, Schweighofer N, Okamoto Y, Yamawaki S, Doya K (2009). Serotonin affects association of aversive outcomes to past actions. J Neurosci 29: 15669–15674.

Tibshirani R (1996). Regression shrinkage and selection via the lasso. J R Stat Soc Series B Methodol 58: 267–288.

Tops M, Russo S, Boksem MA, Tucker DM (2009). Serotonin: modulator of a drive to withdraw. Brain Cogn 71: 427–436.

Voon V, Irvine MA, Derbyshire K, Worbe Y, Lange I, Abbott S et al (2014). Measuring ‘waiting’ impulsivity in substance addictions and binge eating disorder in a novel analogue of rodent serial reaction time task. Biol Psychiatry 75: 148–155.

Whittington CJ, Kendall T, Fonagy P, Cottrell D, Cotgrove A, Boddington E (2004). Selective serotonin reuptake inhibitors in childhood depression: systematic review of published versus unpublished data. Lancet 363: 1341–1345.

Worbe Y, Palminteri S, Savulich G, Daw N, Fernandez-Egea E, Robbins T et al (2016). Valence-dependent influence of serotonin depletion on model-based choice strategy. Mol Psychiatry 21: 624–629.

Worbe Y, Savulich G, Voon V, Fernandez-Egea E, Robbins TW (2014). Serotonin depletion induces ‘waiting impulsivity’ on the human four-choice serial reaction time task: cross-species translational significance. Neuropsychopharmacology 39: 1519–1526.

Xiao N, Privman E, Venton BJ (2014). Optogenetic control of serotonin and dopamine release in Drosophila larvae. ACS Chem Neurosci 5: 666–673.

Yacubian J, Gläscher J, Schroeder K, Sommer T, Braus DF, Büchel C (2006). Dissociable systems for gain-and loss-related value predictions and errors of prediction in the human brain. J Neurosci 26: 9530–9537.

Yao H, Li S, Tang Y, Chen Y, Chen Y, Lin X (2009). Selective oxidation of serotonin and norepinephrine over eriochrome cyanine R film modified glassy carbon electrode. Electrochim Acta 54: 4607–4612.

Acknowledgements

We thank the patients who took part in this study.

Additional information

Supplementary Information accompanies the paper on the Neuropsychopharmacology website

Cite this article

Moran, R., Kishida, K., Lohrenz, T. et al. The Protective Action Encoding of Serotonin Transients in the Human Brain. Neuropsychopharmacol. 43, 1425–1435 (2018). https://doi.org/10.1038/npp.2017.304

DOI: https://doi.org/10.1038/npp.2017.304
