Abstract

Quantifying image distortions caused by strong gravitational lensing (the formation of multiple images of distant sources due to the deflection of their light by the gravity of intervening structures) and estimating the corresponding matter distribution of these structures (the ‘gravitational lens’) have primarily been performed using maximum likelihood modelling of observations. This procedure is typically time- and resource-consuming, requiring sophisticated lensing codes, several data preparation steps, and a computationally expensive search for the maximum likelihood model parameters with downhill optimizers¹. Accurate analysis of a single gravitational lens can take up to a few weeks and requires expert knowledge of the physical processes and methods involved. Tens of thousands of new lenses are expected to be discovered with the upcoming generation of ground and space surveys²,³. Here we report the use of deep convolutional neural networks to estimate lensing parameters in an extremely fast and automated way, circumventing the difficulties that are faced by maximum likelihood methods. We also show that the removal of lens light can be made fast and automated using independent component analysis⁴ of multi-filter imaging data. Our networks can recover the parameters of the ‘singular isothermal ellipsoid’ density profile⁵, which is commonly used to model strong lensing systems, with an accuracy comparable to the uncertainties of sophisticated models but about ten million times faster: 100 systems in approximately one second on a single graphics processing unit. These networks can provide a way for non-experts to obtain estimates of lensing parameters for large samples of data.
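For readers unfamiliar with the ‘singular isothermal ellipsoid’ mentioned above, the following is a minimal sketch of its dimensionless surface density (convergence) in one commonly used normalization. The function name, the parameter names (`theta_e`, `q`, `phi`, `x0`, `y0`) and the choice of normalization are assumptions made for illustration; the abstract does not state which parametrization the networks were trained to predict.

```python
import numpy as np

def sie_convergence(x, y, theta_e, q, phi=0.0, x0=0.0, y0=0.0):
    """Convergence of a singular isothermal ellipsoid (illustrative sketch).

    Assumed convention: kappa = theta_e / (2 * sqrt(q * x'^2 + y'^2 / q)),
    where (x', y') are coordinates centred on (x0, y0) and rotated by phi,
    theta_e is the Einstein radius and q is the axis ratio (0 < q <= 1).
    The profile is singular at the centre, so avoid evaluating it there.
    """
    dx, dy = np.asarray(x) - x0, np.asarray(y) - y0
    c, s = np.cos(phi), np.sin(phi)
    # Rotate into the frame aligned with the lens axes.
    xr = c * dx + s * dy
    yr = -s * dx + c * dy
    r_ell = np.sqrt(q * xr**2 + yr**2 / q)  # elliptical radius
    return 0.5 * theta_e / r_ell

# Usage: evaluate on a pixel grid offset by half a pixel so that no
# sample falls exactly on the central singularity.
grid = (np.arange(64) - 31.5) * 0.05
xx, yy = np.meshgrid(grid, grid)
kappa_map = sie_convergence(xx, yy, theta_e=1.2, q=0.7, phi=0.3)
```

With `q = 1` this reduces to the singular isothermal sphere, for which the convergence equals 1/2 at a radius of exactly one Einstein radius.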

References

Lefor, A. T., Futamase, T. & Akhlaghi, M. A systematic review of strong gravitational lens modeling software. New Astron. Rev. 57, 1–13 (2013)

LSST Science Collaborations. LSST Science Book, Version 2.0. Preprint at https://arxiv.org/abs/0912.0201 (2009)

Collett, T. E. The population of galaxy-galaxy strong lenses in forthcoming optical imaging surveys. Astrophys. J. 811, 20 (2015)

Hyvärinen, A., Karhunen, J. & Oja, E. Independent Component Analysis (Wiley, 2001)

Kormann, R., Schneider, P. & Bartelmann, M. Isothermal elliptical gravitational lens models. Astron. Astrophys. 284, 285–299 (1994)

Russakovsky, O. et al. ImageNet Large Scale Visual Recognition Challenge. Int. J. Comput. Vision 115, 211–252 (2015)

Petrillo, C. E. et al. Finding strong gravitational lenses in the Kilo Degree Survey with convolutional neural networks. Preprint at https://arxiv.org/abs/1702.07675 (2017)

Jacobs, C., Glazebrook, K., Collett, T., More, A. & McCarthy, C. Finding strong lenses in CFHTLS using convolutional neural networks. Mon. Not. R. Astron. Soc. 471, 167–181 (2017)

Lanusse, F. et al. CMU DeepLens: deep learning for automatic image-based galaxy-galaxy strong lens finding. Preprint at https://arxiv.org/abs/1703.02642 (2017)

Ravanbakhsh, S., Lanusse, F., Mandelbaum, R., Schneider, J. & Poczos, B. Enabling dark energy science with deep generative models of galaxy images. In Proc. 31st AAAI Conf. on Artificial Intelligence 1488–1494 (AAAI, 2017)

Szegedy, C., Ioffe, S., Vanhoucke, V. & Alemi, A. A. Inception-v4, Inception-ResNet and the impact of residual connections on learning. In Proc. 31st AAAI Conf. on Artificial Intelligence 4278–4284 (AAAI, 2017)

Krizhevsky, A., Sutskever, I. & Hinton, G. E. ImageNet classification with deep convolutional neural networks. In Advances in Neural Information Processing Systems 25 (eds Pereira, F. et al.) 1097–1105 (Curran Associates, 2012)

Sermanet, P. et al. OverFeat: integrated recognition, localization and detection using convolutional networks. Preprint at https://arxiv.org/abs/1312.6229 (2014)

Willett, K. W. et al. Galaxy Zoo: morphological classifications for 120 000 galaxies in HST legacy imaging. Mon. Not. R. Astron. Soc. 464, 4176–4203 (2017)

Mandelbaum, R. et al. The Third Gravitational Lensing Accuracy Testing (GREAT3) challenge handbook. Astrophys. J. Suppl. Ser. 212, 5 (2014)

Bolton, A. S. et al. The Sloan Lens ACS Survey. V. The full ACS strong-lens sample. Astrophys. J. 682, 964–984 (2008)

Sonnenfeld, A., Gavazzi, R., Suyu, S. H., Treu, T. & Marshall, P. J. The SL2S galaxy-scale lens sample. III. Lens models, surface photometry, and stellar masses for the final sample. Astrophys. J. 777, 97 (2013)

Sonnenfeld, A. et al. The SL2S galaxy-scale lens sample. V. Dark matter halos and stellar IMF of massive early-type galaxies out to redshift 0.8. Astrophys. J. 800, 94 (2015)

Warren, S. J. & Dye, S. Semilinear gravitational lens inversion. Astrophys. J. 590, 673–682 (2003)

Suyu, S. H., Marshall, P. J., Hobson, M. P. & Blandford, R. D. A Bayesian analysis of regularized source inversions in gravitational lensing. Mon. Not. R. Astron. Soc. 371, 983–998 (2006)

Gal, Y. & Ghahramani, Z. Dropout as a Bayesian approximation: representing model uncertainty in deep learning. In Proc. 33rd Int. Conf. on Machine Learning (ICML-16) Vol. 48, 1050–1059 (PMLR, 2016)

Gal, Y. & Ghahramani, Z. Bayesian convolutional neural networks with Bernoulli approximate variational inference. Preprint at https://arxiv.org/abs/1506.02158 (2016)

Johnson, T. L. et al. Lens models and magnification maps of the six Hubble Frontier Fields clusters. Astrophys. J. 797, 48 (2014)

Cybenko, G. Approximation by superpositions of a sigmoidal function. Math. Contr. Signals Syst. 2, 303–314 (1989)

Baldassi, C. et al. Unreasonable effectiveness of learning neural networks: from accessible states and robust ensembles to basic algorithmic schemes. Proc. Natl Acad. Sci. USA 113, E7655–E7662 (2016)

Acknowledgements

We thank R. Keisler, G. Holder, R. Blandford, R. Wechsler and W. Morningstar for discussions and comments on the manuscript. We also thank G. P. Maher and A. Dwaraknath for comments about neural networks, leading to improved performance. We thank Stanford Research Computing Center and their staff for providing computational resources (Sherlock Cluster) and support. Support for this work was provided by NASA through Hubble Fellowship grant HST-HF2-51358.001-A awarded by the Space Telescope Science Institute, which is operated by the Association of Universities for Research in Astronomy, Inc., for NASA, under contract NAS 5-26555. P.J.M. acknowledges support from the US Department of Energy under contract number DE-AC02-76SF00515. Y.D.H. is a Hubble Fellow.

Author information

Contributions

Y.D.H. and L.P.L. contributed equally to all aspects of this project, including design and implementation of the networks and the text of the paper. L.P.L. developed the use of ICA for lens removal. P.J.M. contributed to various aspects of this project, including the choice of tensor libraries and tests on real and simulated data.

Ethics declarations

Competing interests

The authors declare no competing financial interests.

Additional information

Reviewer Information: Nature thanks Y. Gal, A. Sonnenfeld and the other anonymous reviewer(s) for their contribution to the peer review of this work.

Publisher's note: Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Extended data figures and tables

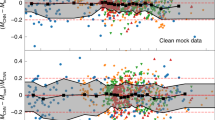

Extended Data Figure 1: A selection of the test samples used to evaluate the performance of the network.

These examples are chosen to illustrate the variations of different effects, including cosmic rays (for example, panels 11 and 12), masks (for example, panels 6 and 23), Einstein radii (for example, panels 7 and 9), noise levels and PSF blurring strengths, and a mixture of lensing image configurations including some unfavourable morphologies (for example, panels 10 and 21).

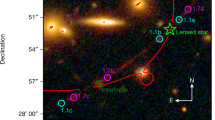

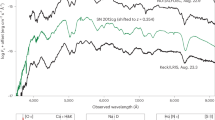

Extended Data Figure 2: Examples of the inputs and outputs of the ICA.

For each row, the first two panels show the HST images in F475X and F600LP filters. The third and fourth columns show the outputs of the ICA. For comparison, lens-removed arcs using a Sérsic model are shown in the last column. Cosmic rays and the brightest central parts of the lensing galaxies have been masked.
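The two-filter separation described in this caption can be illustrated with a small, self-contained sketch. It is not the authors' implementation: it is a generic FastICA (symmetric variant, tanh nonlinearity) written in NumPy, the function name `fast_ica_two_band` is hypothetical, and the inputs in the usage lines are synthetic stand-ins rather than HST data. The idea is that each filter image is treated as a different linear mixture of two underlying components, and ICA looks for the unmixing that makes the recovered components as statistically independent as possible.

```python
import numpy as np

def fast_ica_two_band(band_a, band_b, n_iter=200, tol=1e-8, seed=0):
    """Unmix two same-shape images into two independent components.

    Generic symmetric FastICA with a tanh nonlinearity (illustrative only).
    The sign, scale and ordering of the outputs are arbitrary, as in any ICA.
    """
    shape = band_a.shape
    X = np.vstack([band_a.ravel(), band_b.ravel()]).astype(float)
    X -= X.mean(axis=1, keepdims=True)           # centre each band
    n = X.shape[1]

    # Whiten: rotate and rescale so the two rows have identity covariance.
    d, E = np.linalg.eigh(X @ X.T / n)
    Z = (E / np.sqrt(d)).T @ X

    # Start from a random orthogonal unmixing matrix.
    rng = np.random.default_rng(seed)
    U, _, Vt = np.linalg.svd(rng.standard_normal((2, 2)))
    W = U @ Vt

    for _ in range(n_iter):
        Y = np.tanh(W @ Z)
        # Fixed-point update: E[z g(w.z)] - E[g'(w.z)] w, for each row w of W.
        W_new = (Y @ Z.T) / n - np.diag((1.0 - Y**2).mean(axis=1)) @ W
        # Symmetric decorrelation keeps the rows orthonormal.
        U, _, Vt = np.linalg.svd(W_new)
        W_new = U @ Vt
        converged = np.max(np.abs(np.abs(np.sum(W_new * W, axis=1)) - 1.0)) < tol
        W = W_new
        if converged:
            break

    S = W @ Z
    return S[0].reshape(shape), S[1].reshape(shape)

# Usage with synthetic stand-ins: two independent non-Gaussian "components"
# are mixed with different weights in each "filter", then unmixed again.
demo_rng = np.random.default_rng(1)
comp_1 = demo_rng.laplace(size=(64, 64))
comp_2 = demo_rng.uniform(-1.0, 1.0, size=(64, 64))
filter_a = 1.0 * comp_1 + 0.5 * comp_2
filter_b = 0.3 * comp_1 + 1.0 * comp_2
out_1, out_2 = fast_ica_two_band(filter_a, filter_b)
```

In a real application the inputs would be aligned images of the same field in two filters, and masked pixels (such as the cosmic rays and bright galaxy centres mentioned in the caption) would need to be excluded before unmixing; that step is omitted here.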

About this article

Cite this article

Hezaveh, Y., Levasseur, L. & Marshall, P. Fast automated analysis of strong gravitational lenses with convolutional neural networks. Nature 548, 555–557 (2017). https://doi.org/10.1038/nature23463

DOI: https://doi.org/10.1038/nature23463
