NEWS FEATURE

How COVID broke the evidence pipeline

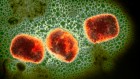

Illustration by Jasiek Krzysztofiak/Nature

Nature 593, 182–185 (2021)

doi: https://doi.org/10.1038/d41586-021-01246-x

Evidence-based medicine: how COVID can drive positive change

International COVID-19 trial to restart with focus on immune responses

The race for antiviral drugs to beat COVID — and the next pandemic

Face masks: what the data say

The epic battle against coronavirus misinformation and conspiracy theories