NEWS FEATURE

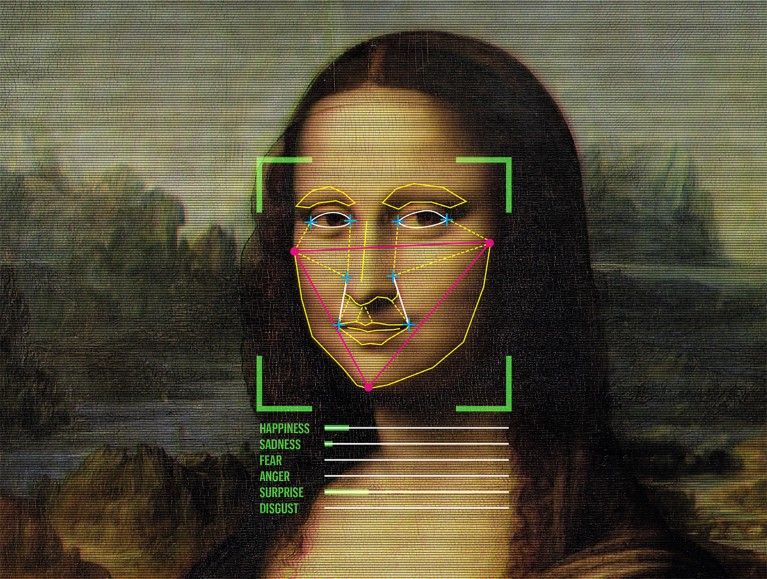

Why faces don’t always tell the truth about feelings

Credit: Adapted from GL Archive/Alamy

Nature 578, 502–504 (2020)

doi: https://doi.org/10.1038/d41586-020-00507-5

The learning machines

Neuroscientists rethink how the brain recognises faces
